1. Introduction

The volume of digital data generated worldwide is increasing at an unprecedented pace, driven by the rise of cloud computing, social media, online commerce, Internet of Things (IoT) technologies, and large-scale multimedia applications [1,2,3]. Industry estimates put the global datasphere at around 149 zettabytes (1 zettabyte = $10^{21}$ bytes) in 2024 and expect it to surpass 180 zettabytes by the end of 2025. If the same growth trend continues, data volumes could reach roughly 230–240 zettabytes in 2026 [4,5,6]. With this continuous surge in data, keeping storage cost-effective has become one of the most urgent challenges for today’s data centers and cloud storage systems, as reflected both in architectural discussions of warehouse-scale computing [7] and in recent studies on cloud storage cost complexity [8].

Large-scale workload measurements reported by major industry players, including Microsoft, Google, and enterprise storage vendors, show that redundancy is common across primary and secondary storage systems, with practical deployments frequently exceeding 50% redundant data [9,10,11]. This redundancy not only increases storage demand but also reduces retrieval efficiency and degrades downstream applications such as image search, classification, clustering, and recommendation [12,13,14]. Consequently, data deduplication has become a widely adopted strategy in production storage systems. Industry reports suggest widespread enterprise adoption of data deduplication to lower storage overhead and operating costs [15], while academic evaluations emphasize its importance for improving storage efficiency [16].

This study focuses on large-scale image deduplication methods. Early research on image deduplication mostly relied on perceptual hashing techniques. Venkatesan et al. [17] proposed a robust hashing framework that converts an image into a compact binary code, enabling efficient similarity comparison while remaining stable under common image processing operations such as compression and small photometric changes. In [18], the authors used multi-resolution models from the Haar transform to derive feature vectors from images. They showed that the energy distribution of these transform coefficients across scales captures the features of images for content-based image retrieval. Zauner’s benchmark study [19] evaluated various perceptual hashing algorithms that compare low-frequency discrete cosine transform (DCT) coefficients to their median value to create a compact binary hash for precise image similarity comparison. Owing to their low computational complexity and minimal memory footprint, perceptual hashing approaches (AHash, DHash, PHash, and WHash) have been widely adopted for detecting exact duplicates and near-duplicates under mild photometric variations [20]. In addition to proposing specific hashing algorithms, several studies have provided broader analyses and applications of perceptual hashing techniques. Monga and Evans [21] provided one of the earlier surveys on perceptual image hashing, outlining core design principles and robustness-efficiency trade-offs. In [22], Swaminathan et al. proposed a robust and secure image hashing approach. Their method generates hashes that withstand regular signal processing operations such as compression, filtering, and minor geometric distortions, while secret key randomization protects against malicious attacks. In addition, Khelifi and Jiang [23] proposed a perceptual hashing method based on virtual watermark detection. The technique produces reliable hash values that maintain perceptual similarity by using the detector response to pseudo-random watermark patterns inside a decision-theoretic framework, thereby supporting the application of perceptual hashing for image similarity and duplicate detection tasks.

Although perceptual hashing methods are efficient and popular, several studies have reported that they exhibit limited robustness when images are affected by geometric transformations. In a recent study [24], Sharma et al. highlighted this limitation by examining distance distributions. They showed that common hashing methods fail to match images modified by basic geometric changes such as rotation, scaling, and cropping. In [25], McKeown et al. demonstrated that perceptual hashes struggle with spatial consistency; specifically, they showed that shifting an object’s layout often results in unreliable similarity scores. A consistent trend was also observed by Kotzer et al. [26], who found that standard perceptual hashes were insufficient for modern image editing and that neural network-based features performed better. These findings suggest that classical hashing methods often perform poorly in real-world conditions, where duplicate images are frequently altered by different viewing angles, recompression, editing, and background clutter.

Motivated by the limitations of perceptual hashing methods under geometric transformations, several recent studies have focused on deep learning-based features for image similarity and deduplication. Deep convolutional neural networks (CNNs) can learn sophisticated, hierarchical visual representations, as demonstrated by models such as AlexNet [27] and VGG [28]. Further work found that these learned embeddings capture high-level semantic details and are inherently more resilient to geometric and visual variations than handcrafted features, making them better suited to matching tasks [29]. To this end, Xia et al. [30] introduced deep feature learning strategies designed for near-duplicate detection and demonstrated that they were significantly more robust than classical hashing methods. This advantage is supported by recent domain-specific benchmarks. For instance, Truong et al. [31] ran an extensive test on medical image datasets and found that CNN-based embeddings are much more reliable than classical hashing methods when dealing with real-world issues such as structural changes or varied imaging conditions.

To address the trade-off between robustness and efficiency, recent studies have started looking into hybrid and transformer-based models. Jakhar et al. [32] incorporated deep learning features into a perceptual hashing framework to improve near-duplicate detection under geometric distortions at a moderate computational cost. At the same time, transformer architectures have become a powerful means of learning highly contextual and invariant representations. These architectures are based on the self-attention mechanism presented by Vaswani et al. [33]. Chen et al. [34], for instance, used this mechanism to create a transformer-based hashing technique. Recent developments, such as the work of Mahmud et al. [35], show that attention remains relevant for producing effective, compact representations when its use is optimized for particular tasks such as lossless compression. Despite their strengths, learning-based techniques carry a much higher computational overhead than simpler perceptual hashing methods, which can create scalability bottlenecks in large-scale systems [36].

Nonetheless, the absence of consistent benchmarks makes it difficult to compare a large collection of hashing techniques objectively. Fair, direct comparisons between traditional and deep learning approaches are challenging because researchers use heterogeneous evaluation settings, which include different datasets, preprocessing steps, and performance metrics [31]. Furthermore, many studies only assess performance on one type of duplicate (e.g., exact copies), failing to jointly evaluate the entire spectrum of adversarially transformed copies, near-duplicates, and exact duplicates that are typical of real-world image collections.

To overcome these challenges, we propose a unified evaluation framework that allows for a fair and systematic comparison between traditional perceptual hashing and more recent deep learning-based deduplication techniques. Analyzing all three duplicate types under a single framework allows us to demonstrate how different design decisions influence an algorithm’s accuracy and efficiency in real-world scenarios. This analysis provides a roadmap for navigating the practical challenges of deduplication. The comparative study also helps determine when simple hashing is sufficient and when more complex, learning-based models are warranted.

2. Materials and Methods

In this section, we outline the experimental setup and evaluation procedure used to test various image deduplication methods under consistent, reproducible conditions. The objective is to examine how different algorithms respond to increasing visual diversity, ranging from exact duplicates to substantially altered versions of the original image. Five popular techniques are included in our selection, which is split into two groups: traditional perceptual hashing techniques (AHash, DHash, PHash, and WHash) and a deep learning technique based on CNN embeddings. These particular hashing algorithms were selected as typical examples of efficient, handcrafted descriptors that are frequently benchmarked in the literature [19,20]. This choice allows robustness-oriented feature learning and efficiency-oriented hashing to be compared side by side.

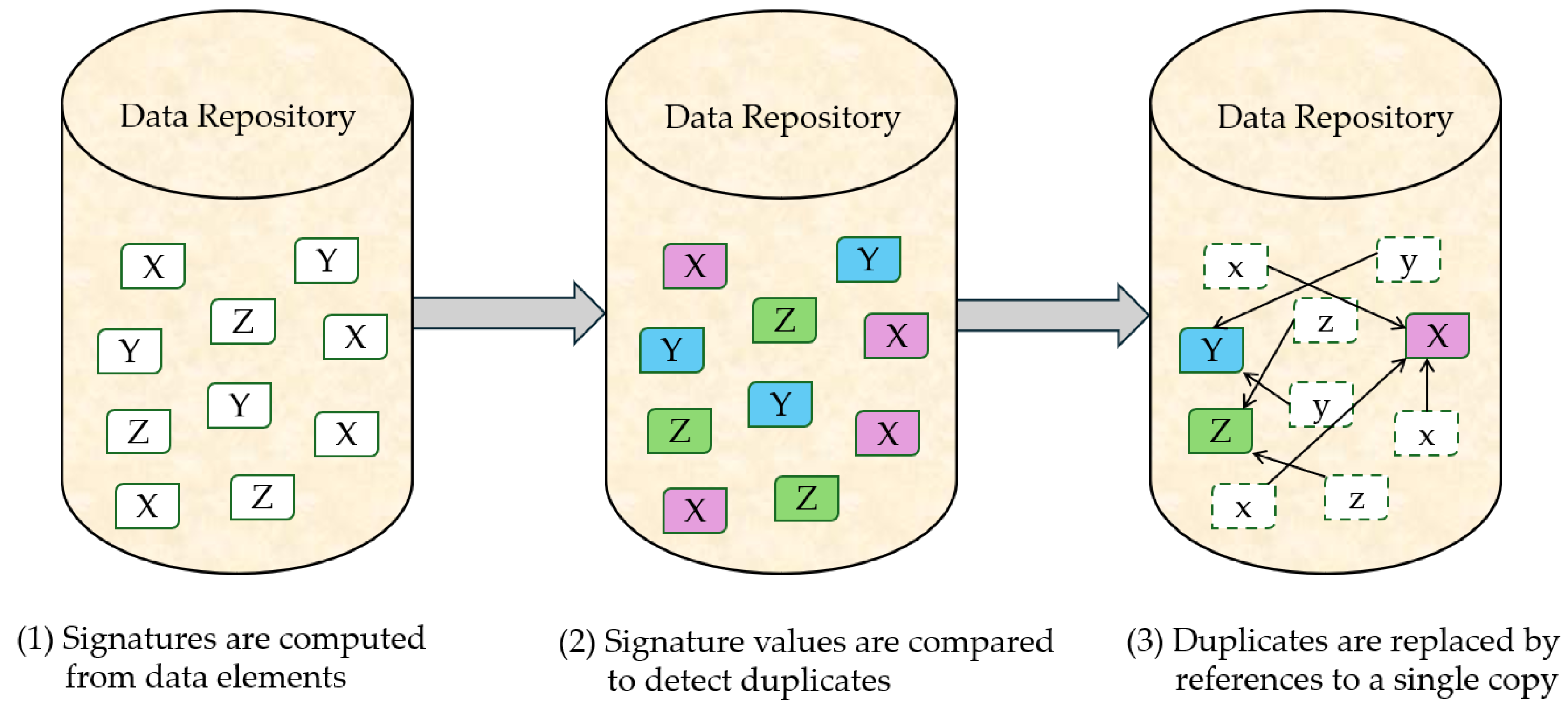

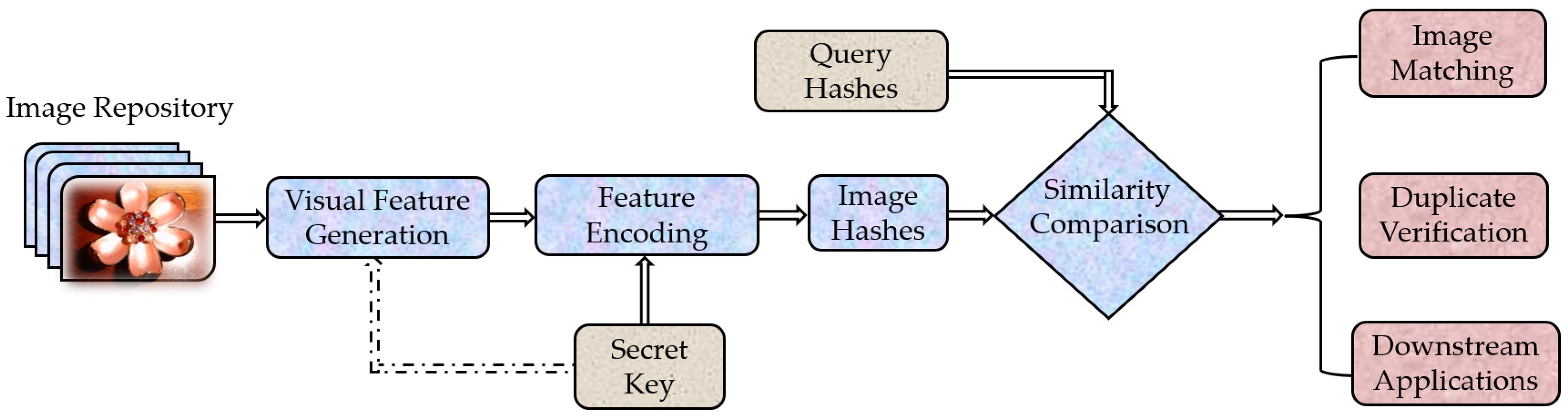

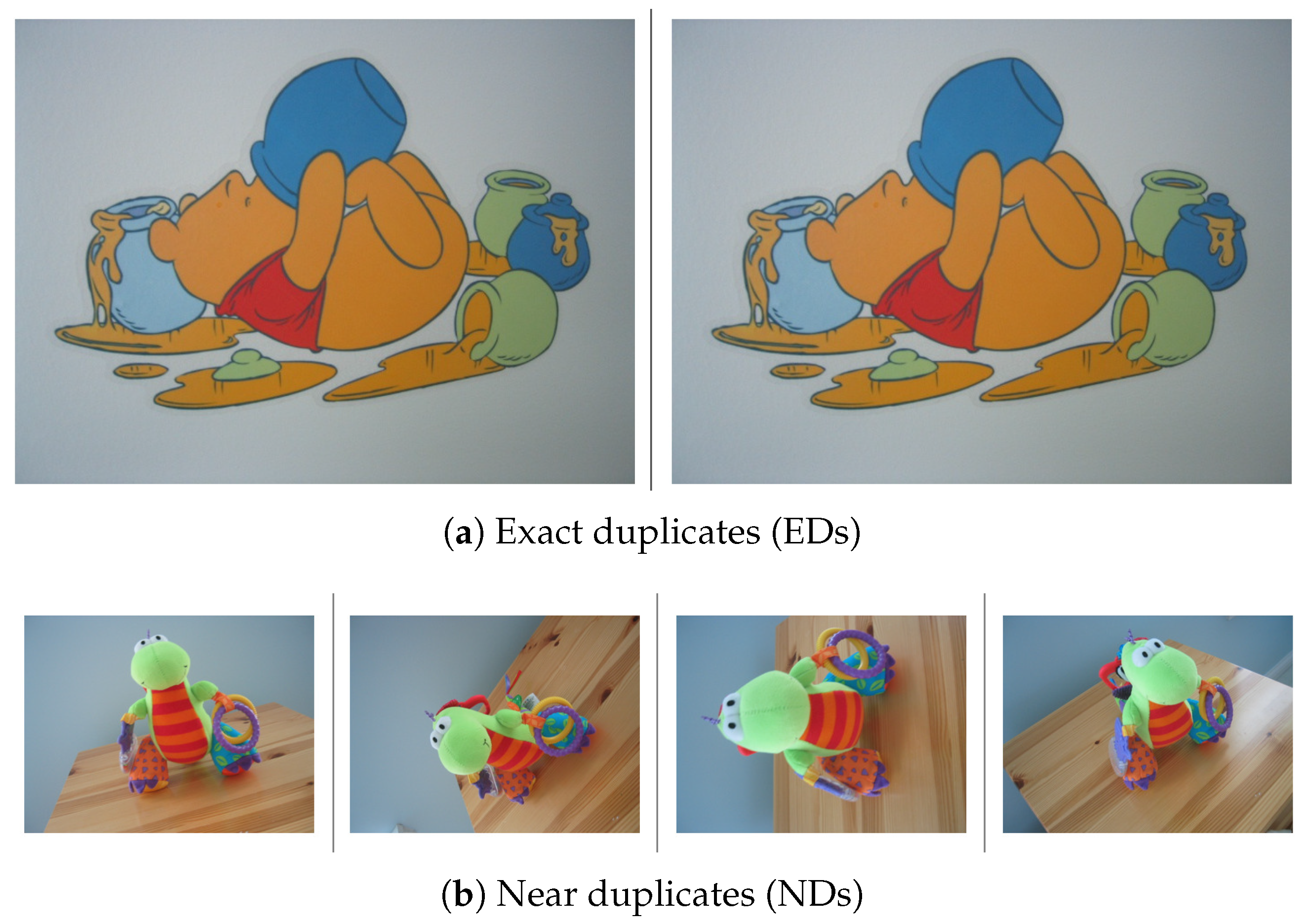

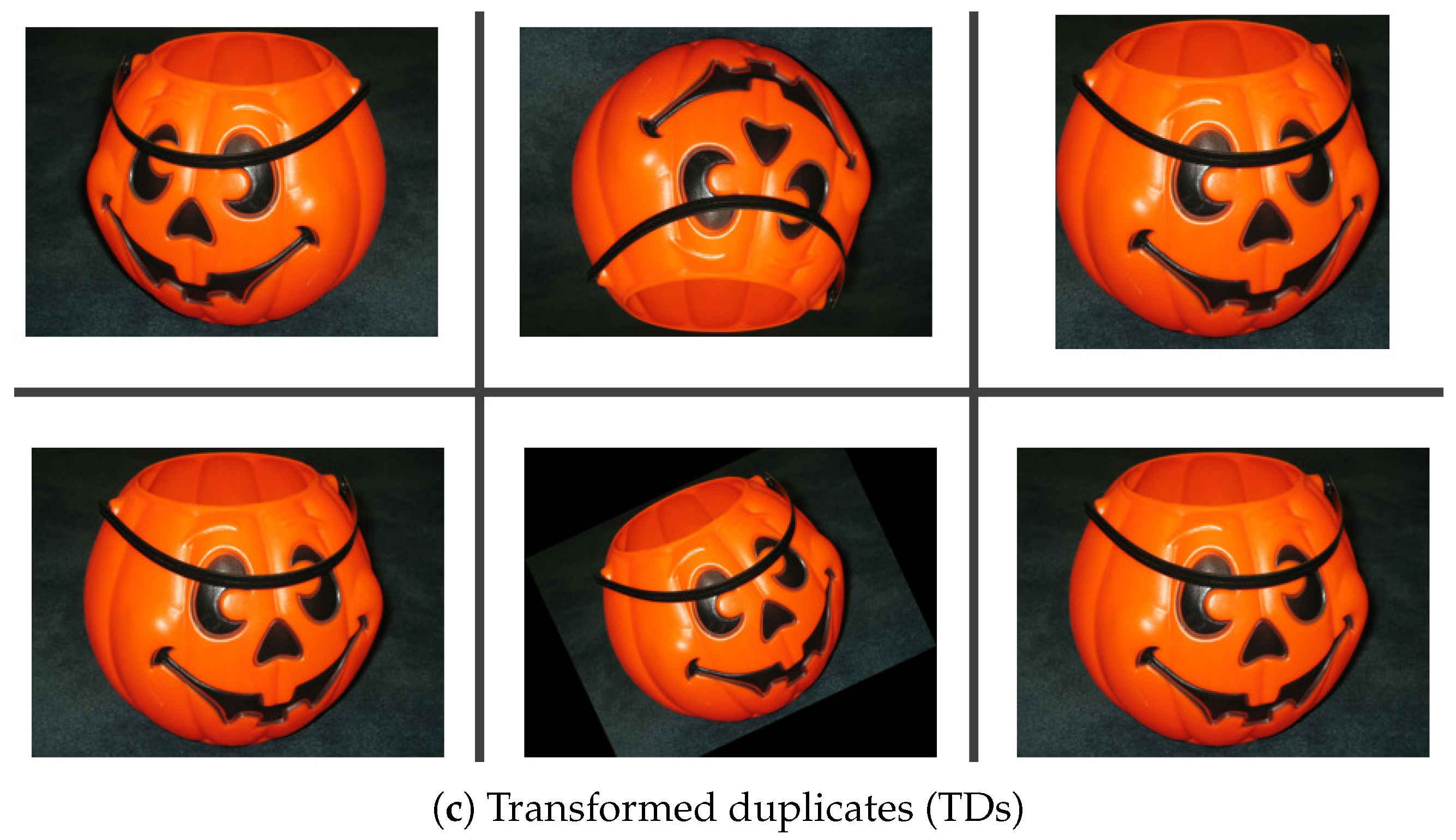

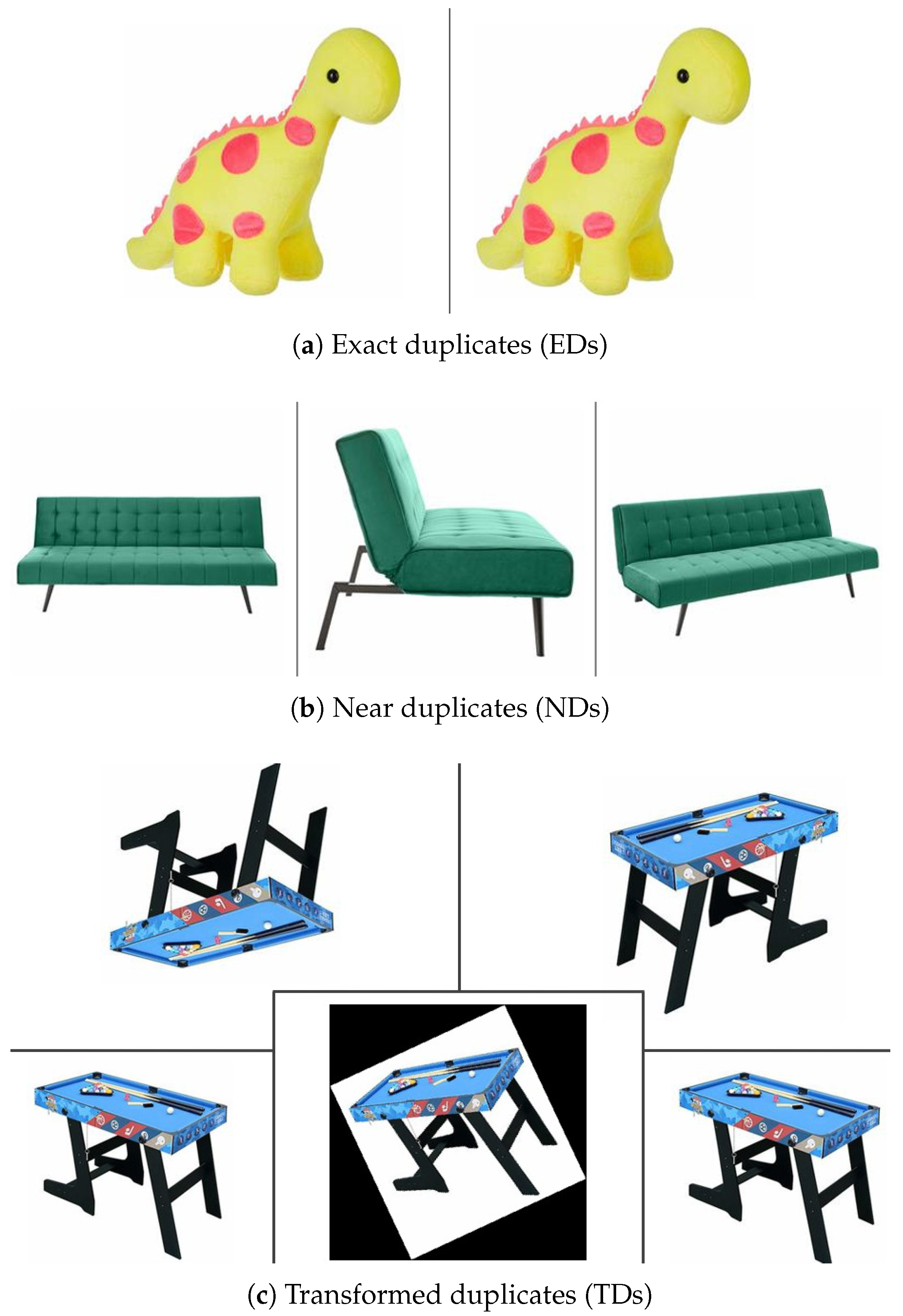

Evaluation was conducted using benchmark image collections derived from the UKBench (UKB) and Amazon Berkeley Objects (ABO) datasets. We produced three subsets for each dataset that were intended to mimic real-world duplication: exact copies, near duplicates with slight photometric changes, and transformed duplicates featuring geometric shifts like cropping, flipping, and rotation. To ensure consistency, we followed a four-step pipeline: (i) building duplicate-specific datasets, (ii) extracting image representations using either hashing or CNN embeddings, (iii) computing similarities with the appropriate distance measure for each method, and (iv) evaluating performance quantitatively. Our evaluation concentrates on four key areas: retrieval precision, ranking effectiveness, set overlap, and speed. For accuracy, we use Precision–Recall and ROC (Receiver Operating Characteristic) analysis; for ranking quality, we use MAP (Mean Average Precision) and NDCG (Normalized Discounted Cumulative Gain); for set comparisons, we use Jaccard similarity; runtime data offers insights into computational efficiency. A general overview of the data deduplication process is illustrated in Figure 1.

The following subsections will discuss how we set up our study. This includes the techniques we chose, our way of extracting features, the math behind our comparisons, and the data and metrics we used to measure the overall performance.

2.1. Overview of the Methods Evaluated

To benchmark classical hashing and deep-learning-based representations within a single evaluation setup, we included four perceptual hashing approaches (AHash, DHash, PHash, and WHash), as well as a pretrained CNN embedding approach.

2.1.1. Average Hash (AHash)

Average Hash (AHash) is commonly used in perceptual hashing-based deduplication [37] and serves as a lightweight baseline in comparative studies [38]. AHash is computationally cheap and deterministic: it reduces an image’s global luminance structure to a compact 64-bit binary descriptor through a fixed, non-learning pipeline of resizing, grayscaling, and mean-based thresholding [39].

The algorithm is depicted in Figure 2 and the processing steps are summarized as follows:

Computing the Average Intensity: To set a single global threshold, AHash computes the mean intensity over all $N$ pixels in the resized image, as per its standard formulation [39]. The mean luminance is determined using the following equation:

$$\mu = \frac{1}{N} \sum_{i=1}^{N} I_i$$

where $I_i$ is the grayscale intensity of the $i$th pixel.

Generating the binary hash: After computing the mean intensity, the algorithm thresholds the grayscale matrix pixel by pixel. If a pixel’s intensity is greater than or equal to the average, the bit is set to 1, or to 0 otherwise. Arranging these bits in row-major order produces a 64-bit descriptor that captures the overall luminance pattern of the image.

Although AHash is extremely fast and computationally lightweight, its dependence on a global intensity pattern makes it sensitive to illumination changes, contrast shifts, and geometric transformations [21]. Nonetheless, due to its low complexity and high speed, AHash remains valuable as a baseline method in duplicate detection pipelines and is often used as an initial screening step to filter obvious duplicates before applying stronger hashing methods or deep learning models.
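The mean-thresholding procedure described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation used in the experiments: it assumes the image has already been resized and converted to an 8 × 8 grayscale matrix, and the helper names `ahash_bits` and `bits_to_int` as well as the toy input are hypothetical.

```python
def ahash_bits(gray):
    """Return the AHash bits of an already resized grayscale matrix."""
    pixels = [p for row in gray for p in row]        # flatten in row-major order
    mean = sum(pixels) / len(pixels)                 # single global threshold
    return [1 if p >= mean else 0 for p in pixels]   # 1 when pixel >= mean


def bits_to_int(bits):
    """Pack a bit list into one integer, first bit most significant."""
    value = 0
    for b in bits:
        value = (value << 1) | b
    return value


# Hypothetical 8 x 8 input: dark left half, bright right half.
gray = [[10] * 4 + [200] * 4 for _ in range(8)]
bits = ahash_bits(gray)
print(f"{bits_to_int(bits):016x}")  # prints 0f0f0f0f0f0f0f0f
```

Because every row of the toy image is four dark pixels followed by four bright ones, each row contributes the byte `0x0f`, which makes the global-luminance nature of the descriptor easy to see.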

2.1.2. Difference Hash (DHash)

Difference Hash (DHash) focuses on image structure by comparing neighboring pixels and encoding their relative intensity differences [19]. Unlike AHash, which uses a single global mean as a threshold, DHash encodes local brightness transitions (gradients), which generally makes it less sensitive to illumination changes. The algorithm proceeds through the following steps as shown in Figure 3:

Resizing the image: DHash begins by resizing the image to a fixed size that is one column wider than it is tall (commonly 9 × 8 pixels). Adding one extra column makes it possible to compare horizontal pixel pairs within each row. This resizing suppresses high-frequency content and ensures consistent dimensions across all images.

Converting to grayscale: The resized image is then mapped to a grayscale representation, yielding a 9 × 8 matrix of luminance values $G(x, y)$. This lowers computation because only one channel is processed.

Computing horizontal differences: At its core, DHash represents an image by comparing the intensities of horizontally neighboring pixels and encoding their relative differences [19]. Within each row, the algorithm performs pairwise comparisons between each pixel and the pixel immediately to its right. Let $D(x, y)$ denote the binary difference field produced by these comparisons, where $G(x, y)$ is the grayscale intensity at column $x$ and row $y$:

$$D(x, y) = \begin{cases} 1, & G(x, y) > G(x + 1, y) \\ 0, & \text{otherwise} \end{cases}$$

This step turns the 9 × 8 matrix into an 8 × 8 gradient matrix that captures structural transitions.

Generating the binary hash: Next, each entry in the 8 × 8 difference field is read out as a binary bit. A value of 1 is assigned when the left pixel intensity exceeds the right pixel intensity; otherwise, a value of 0 is assigned. The direction of the comparison is a convention: hashes remain comparable as long as the same direction is used for all images. The values of $D(x, y)$ are concatenated row-wise to form a 64-bit DHash fingerprint, representing the dominant horizontal gradient pattern of the image.

DHash is more resistant to illumination shifts than AHash because it is based on the relative intensity between neighboring pixels, which tends to remain stable even when the brightness shifts uniformly [19]. DHash strikes a practical balance between computational efficiency and structural encoding, making it suitable for detecting nearly identical images [13]. However, under geometric transformations, DHash degrades because operations such as rotation, cropping, and perspective shifts change pixel adjacency and distort the underlying gradient relationships [21]. It therefore performs best when the image layout does not change much.
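The horizontal-comparison step and its stability under a uniform brightness shift can be illustrated with a short sketch. It assumes an input that is already a 9-column by 8-row grayscale matrix; the function name `dhash_bits` and the synthetic image are hypothetical and chosen only to make the behavior visible.

```python
def dhash_bits(gray):
    """gray: 8 rows of 9 grayscale values. Returns 64 bits in row-major order."""
    bits = []
    for row in gray:
        # Pair each pixel with its right-hand neighbor: 9 pixels give 8 bits.
        bits.extend(1 if left > right else 0 for left, right in zip(row, row[1:]))
    return bits


# Hypothetical input: intensity falls left-to-right in the top half of the
# image and rises left-to-right in the bottom half.
falling = list(range(90, 0, -10))          # 90, 80, ..., 10 (9 values)
rising = falling[::-1]
gray = [falling] * 4 + [rising] * 4

# A uniform brightness shift leaves every left/right ordering unchanged.
brighter = [[p + 40 for p in row] for row in gray]

bits = dhash_bits(gray)
print(bits[:8], bits[-8:])
print(dhash_bits(brighter) == bits)  # prints True
```

Adding a constant to every pixel preserves each pairwise ordering, so the two hashes coincide; the same shift could change AHash bits only through rounding or clipping, but a non-uniform change would affect the two methods differently.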

2.1.3. Perceptual Hash (PHash)

Unlike AHash and DHash, which operate in the spatial domain, Perceptual Hash (PHash) works in the frequency domain by applying the Discrete Cosine Transform (DCT) to break the image into frequency components. It then relies on low-frequency DCT coefficients, which tend to remain stable under compression, mild rotations, and illumination changes, giving PHash stronger robustness than spatial-domain hashes [19]. The algorithm proceeds through the following steps:

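Resizing and grayscale conversion: First, the image is normalized to a fixed size of $N \times N$ pixels and then mapped to grayscale. This results in a consistent luminance representation that supports DCT-based frequency analysis while retaining the necessary structure for perceptual hashing.

Applying the 2D Discrete Cosine Transform (DCT): A two-dimensional DCT is applied to the grayscale image $I(x, y)$ to convert it into a frequency-domain representation $F(u, v)$. The DCT formula utilized is the standard type-II 2D DCT [40]:

$$F(u, v) = \alpha(u)\,\alpha(v) \sum_{x=0}^{N-1} \sum_{y=0}^{N-1} I(x, y) \cos\left[\frac{(2x + 1)u\pi}{2N}\right] \cos\left[\frac{(2y + 1)v\pi}{2N}\right]$$

where $\alpha(u)$ and $\alpha(v)$ are normalization factors defined as:

$$\alpha(k) = \begin{cases} \sqrt{1/N}, & k = 0 \\ \sqrt{2/N}, & k \neq 0 \end{cases}$$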

Extracting the 8 × 8 low-frequency block: To capture global structure, PHash retains only low-frequency DCT coefficients while discarding higher-frequency detail. In practice, the top-left 8 × 8 block of the DCT matrix is extracted to create a compact feature set that can withstand minor image changes.

Median thresholding and hash generation: The median of the 64 DCT coefficients $F(u, v)$, $0 \le u, v \le 7$, in the low-frequency block is calculated as:

$$m = \operatorname{median}\{F(u, v) : 0 \le u, v \le 7\}$$

A 64-bit hash is then created by comparing each coefficient to this median:

$$h(u, v) = \begin{cases} 1, & F(u, v) \ge m \\ 0, & \text{otherwise} \end{cases}$$

Concatenating these values row-wise yields the final PHash fingerprint.

Comparing hashes using Hamming distance: Similarity between two images is calculated using the Hamming distance between their 64-bit hash vectors $h_1$ and $h_2$:

$$d_H(h_1, h_2) = \sum_{i=1}^{64} h_1(i) \oplus h_2(i)$$

where ⊕ denotes the XOR operation. A smaller Hamming distance indicates greater similarity between images. This comparison method is standard for perceptual hash fingerprints [19].

PHash exhibits strong resilience to changes in illumination and to JPEG compression [41,42]. The JPEG standard itself preserves low-frequency DCT coefficients, which PHash leverages to maintain hash stability [19]. Thus, it provides significantly better discriminative capability than AHash and DHash. However, its performance degrades under large rotations, heavy cropping, or significant distortions that change the global frequency structure [43]. Additionally, PHash requires more processing power than basic spatial hashing techniques.
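The DCT, median-thresholding, and Hamming-comparison steps can be sketched with the standard library alone. The sketch below is illustrative rather than the implementation used in this study: it evaluates the type-II DCT sum directly (fast enough for small inputs), assumes a square grayscale matrix as input, and assumes a coefficient equal to the median maps to 1. The names `dct2_low_block`, `phash_bits`, and `hamming`, and the synthetic 32 × 32 image, are hypothetical.

```python
import math
from statistics import median


def dct2_low_block(gray, k=8):
    """Type-II 2D DCT with orthonormal scaling; returns the top-left k x k block."""
    n = len(gray)  # assumes a square n x n grayscale matrix with n >= k
    # Cosine basis for the k lowest frequencies only.
    basis = [[math.cos((2 * x + 1) * u * math.pi / (2 * n)) for x in range(n)]
             for u in range(k)]

    def alpha(u):
        return math.sqrt(1 / n) if u == 0 else math.sqrt(2 / n)

    block = []
    for u in range(k):
        row = []
        for v in range(k):
            total = sum(gray[x][y] * basis[u][x] * basis[v][y]
                        for x in range(n) for y in range(n))
            row.append(alpha(u) * alpha(v) * total)
        block.append(row)
    return block


def phash_bits(gray):
    """64-bit PHash: threshold the 8 x 8 low-frequency block at its median."""
    coeffs = [c for row in dct2_low_block(gray) for c in row]
    m = median(coeffs)
    return [1 if c >= m else 0 for c in coeffs]  # tie handling is an assumption


def hamming(h1, h2):
    """Number of positions at which two bit lists differ."""
    return sum(a != b for a, b in zip(h1, h2))


# Hypothetical 32 x 32 image and a uniformly brightened copy.
image = [[(3 * x + 7 * y * y) % 256 for y in range(32)] for x in range(32)]
brighter = [[p + 20 for p in row] for row in image]
print(hamming(phash_bits(image), phash_bits(brighter)))
```

A uniform brightness offset only changes the DC coefficient $F(0, 0)$ (up to floating-point error), which is why the printed distance is expected to be small for this pair.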

2.1.4. Wavelet Hash (WHash)

Wavelet Hash (WHash) uses a 2D Haar wavelet transform to create a perceptual fingerprint that captures the structure of the image at various resolutions [18,44]. While PHash depends on DCT frequency coefficients, and AHash and DHash operate directly in the spatial domain, WHash employs wavelet decomposition to capture both spatial layout and frequency content. Robustness to minor geometric and photometric distortions is usually enhanced by this mixed representation [17]. The foundation of our WHash implementation is the imagededup library [45]. In this method, the Haar wavelet transform’s approximation coefficients are used to generate the hash. Below is the processing flow:

Resizing and grayscale conversion: The image is first converted to grayscale and resized to a fixed size. Because of this uniform input, the wavelet decomposition is consistent for every image.

Applying the 2D Haar wavelet transform: Next, a separable two-dimensional Haar wavelet transform is applied to the grayscale image $I$. This decomposition produces one approximation sub-band, $A$, and three detail sub-bands, $H$, $V$, and $D$, which capture horizontal, vertical, and diagonal variations, respectively. The transformation can be expressed as:

$$I \xrightarrow{\ \text{Haar DWT}\ } \{A, H, V, D\}$$

where $A$ represents the low-frequency (coarse) content of the image, and the detail sub-bands capture the high-frequency variations.

Selecting approximation coefficients: We use the approximation coefficients $A$, which are stable under minor changes like illumination shifts, compression, or blur. The resulting coefficient set is then used to generate the perceptual hash.

Median thresholding and hash generation: Next, we compute the median of the approximation coefficients and denote it by $m_A$:

$$m_A = \operatorname{median}\{A(i, j)\}$$

Each coefficient is compared to the threshold to produce a binary value:

$$b(i, j) = \begin{cases} 1, & A(i, j) \ge m_A \\ 0, & \text{otherwise} \end{cases}$$

Finally, the thresholded bits are concatenated to form the 64-bit WHash fingerprint.

WHash uses wavelet features to retain information from both the spatial and frequency domains, improving robustness to blur, filtering, and small rotations compared to AHash and DHash [17]. At the same time, it maintains a favorable balance of computational cost and perceptual stability. Despite its improved stability, WHash can degrade under large geometric transformations, as major rotations and heavy cropping disrupt the underlying multi-resolution representation [23]. It has a longer runtime than basic spatial hashes, but it is still much less expensive computationally than deep learning methods.
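The approximation-band idea can be illustrated without a wavelet library. The sketch below is a simplified stand-in, not the imagededup implementation used in this study: it assumes the Haar decomposition is repeated until an 8 × 8 approximation band remains, uses the orthonormal Haar scaling (each 2 × 2 block summed and divided by 2), and assumes a coefficient equal to the median maps to 1. The names `haar_approx` and `whash_bits` are hypothetical.

```python
from statistics import median


def haar_approx(mat):
    """One level of the 2D Haar transform, keeping only the approximation band A."""
    half = len(mat) // 2
    return [[(mat[2 * i][2 * j] + mat[2 * i][2 * j + 1]
              + mat[2 * i + 1][2 * j] + mat[2 * i + 1][2 * j + 1]) / 2
             for j in range(half)]
            for i in range(half)]


def whash_bits(gray, hash_size=8):
    """gray: square matrix whose side is hash_size times a power of two."""
    approx = gray
    while len(approx) > hash_size:      # decompose until A is hash_size x hash_size
        approx = haar_approx(approx)
    coeffs = [c for row in approx for c in row]
    m = median(coeffs)
    return [1 if c >= m else 0 for c in coeffs]


# Hypothetical 32 x 32 input: dark left half, bright right half.
gray = [[0] * 16 + [255] * 16 for _ in range(32)]
bits = whash_bits(gray)
print(bits[:8])  # prints [0, 0, 0, 0, 1, 1, 1, 1]
```

Only the coarse layout survives two levels of averaging, which is the property that gives the approximation band its stability under blur and mild compression.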

2.1.5. Convolutional Neural Network (CNN)-Based Embedding Model

The CNN-based method runs images through a pretrained deep convolutional network to obtain high-dimensional feature vectors, leveraging hierarchical feature abstractions learned from large-scale image datasets [28,46]. VGG-16 was selected as the representative CNN baseline because it is a widely used reference architecture for feature extraction in the image retrieval literature. In contrast to hashing methods that use handcrafted pixel or frequency-based descriptors, CNN embeddings are learned features that represent higher-level semantics like shapes, textures, and object structure. These learned representations are more robust than traditional approaches for detecting duplicates, as they remain stable under a wider range of image transformations [26,30].

2.2. Feature Extraction Pipeline

Using the algorithmic definitions in Section 2.1, we describe the feature-generation procedure used in our experiments. For each image in the Exact Duplicate, Near Duplicate, and Transformed datasets, feature descriptors were extracted using all five approaches: AHash, DHash, PHash, WHash, and the CNN-based embedding model.

2.2.1. Hash-Based Feature Generation

Each image was encoded as a 64-bit binary descriptor using the four perceptual hashing techniques (AHash, DHash, PHash, and WHash), as described in Section 2.1. The similarity between image pairs was determined using the Hamming distance, which makes it possible to compare these compact fingerprints efficiently. To facilitate score-based evaluation for Precision–Recall (PR), ROC, and F1-score curves, the Hamming distance between two hash vectors was transformed into a normalized similarity score in the interval $[0, 1]$, as shown in Equation (12):

$$S = 1 - \frac{d_H}{64} \tag{12}$$

where $d_H$ is the Hamming distance between two 64-bit hash descriptors. For tabulated results, we additionally assessed performance at three fixed Hamming distance thresholds (0, 10, and 32), which correspond to strict, moderate, and relaxed matching conditions. The entire hash-based feature extraction and similarity comparison procedure is shown in Figure 4.
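As a small worked example of the distance-to-score conversion and the three fixed thresholds, the sketch below stores each 64-bit hash as a Python integer. The function names and the two example hash values are hypothetical; only the thresholds 0, 10, and 32 come from the text.

```python
HASH_BITS = 64


def hamming_distance(h1, h2):
    """Count differing bits between two hashes stored as integers."""
    return bin(h1 ^ h2).count("1")


def similarity(h1, h2):
    """Map a Hamming distance in [0, 64] to a similarity score in [0, 1]."""
    return 1.0 - hamming_distance(h1, h2) / HASH_BITS


def is_duplicate(h1, h2, max_distance):
    """Fixed-threshold decision: match when the distance is within the limit."""
    return hamming_distance(h1, h2) <= max_distance


# Two hypothetical hashes that differ in their 8 lowest bits.
a = 0x0F0F0F0F0F0F0F0F
b = a ^ 0xFF

print(hamming_distance(a, b), similarity(a, b))   # prints 8 0.875
print({t: is_duplicate(a, b, t) for t in (0, 10, 32)})
# prints {0: False, 10: True, 32: True}
```

A pair at distance 8 is rejected under the strict setting but accepted under the moderate and relaxed ones, which is the behavior the three thresholds are meant to contrast.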

2.2.2. CNN-Based Feature Generation

Images were resized and normalized before being passed through a pretrained CNN to produce high-dimensional embedding vectors (see Section 2.1). Cosine similarity, which generates continuous similarity scores in the interval $[-1, 1]$, was used to calculate the similarity between embedding vectors. For score-based Precision–Recall (PR), ROC, and F1-score evaluation, these raw cosine similarity scores were used directly.

For tabulated results, three fixed cosine similarity thresholds (0.5, 0.9, and 1.0) were used to further analyze performance under relaxed, moderate, and strict matching conditions. The entire CNN-based feature extraction and matching pipeline is shown in Figure 5.
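The matching step on embedding vectors reduces to a cosine similarity followed by a threshold test. The sketch below covers only that step: the embedding extraction itself (a forward pass through the pretrained network) is outside its scope, and the three-dimensional toy vectors are hypothetical stand-ins for real high-dimensional embeddings.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical toy embeddings.
emb_a = [1.0, 2.0, 2.0]
emb_b = [2.0, 4.0, 4.0]    # same direction as emb_a
emb_c = [2.0, 1.0, -2.0]   # orthogonal to emb_a

for threshold in (0.5, 0.9, 1.0):
    print(threshold,
          cosine_similarity(emb_a, emb_b) >= threshold,
          cosine_similarity(emb_a, emb_c) >= threshold)
```

Because cosine similarity ignores vector length, a rescaled embedding still matches its original. With real floating-point embeddings, an exact comparison against 1.0 is fragile, so a small tolerance may be preferable in practice.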

2.2.3. Evaluation Strategy

The extracted hash descriptors and CNN embeddings were used to calculate the Precision–Recall (PR) curves, ROC curves, F1-scores, MAP, NDCG, Jaccard similarity, and runtime, as reported in Section 3.

Two complementary evaluation strategies were used because different metrics require different evaluation principles. Accuracy and ranking-based metrics such as Jaccard similarity, MAP, and NDCG were computed using fixed, method-specific thresholds, which is common practice in image deduplication. In contrast, PR, ROC, and F1-score curves were obtained using score-based evaluation by sweeping over normalized similarity scores, enabling threshold-independent evaluation. This separation allows fair comparison for tabulated results while providing a clear view of ranking behavior through curve-based analysis.

2.3. Evaluation Metrics

Multiple evaluation metrics were used to objectively evaluate the performance of the five deduplication methods across the Exact Duplicate, Near Duplicate, and Transformed datasets. These metrics measure accuracy, ranking quality, robustness, and computational efficiency at various similarity thresholds. The definitions of the evaluation metrics used in this study are provided in the following subsections.

2.3.1. Precision and Recall

In this study, precision is defined as the fraction of retrieved items that are true duplicates, whereas recall is the fraction of all true duplicates that the method retrieves. These measures are the standard for information retrieval and classification [51,52], including related tasks like copy detection [53]. Equations (13) and (14) calculate precision and recall, respectively.

$$\text{Precision} = \frac{TP}{TP + FP} \tag{13}$$

$$\text{Recall} = \frac{TP}{TP + FN} \tag{14}$$

where $TP$, $FP$, and $FN$ denote true positives, false positives, and false negatives, respectively. Precision–Recall (PR) curves depict the trade-off between these two quantities at various thresholds.
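A brief worked example may help make the two definitions concrete. The sketch assumes duplicates are represented as sets of image-pair identifiers; the function name and the example pairs are hypothetical, and returning 0.0 for an empty denominator is a convention chosen here.

```python
def precision_recall(predicted, truth):
    """predicted / truth: sets of pairs flagged / labeled as duplicates."""
    tp = len(predicted & truth)   # flagged and truly duplicate
    fp = len(predicted - truth)   # flagged but not duplicate
    fn = len(truth - predicted)   # duplicate but missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


truth = {("a", "b"), ("a", "c"), ("b", "c"), ("d", "e"), ("f", "g")}
predicted = {("a", "b"), ("a", "c"), ("d", "e"), ("x", "y")}

print(precision_recall(predicted, truth))  # prints (0.75, 0.6)
```

Here three of the four flagged pairs are correct (precision 0.75), while three of the five true duplicate pairs are found (recall 0.6).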

2.3.2. ROC Components: TPR and FPR

The Receiver Operating Characteristic (ROC) curve summarizes binary classification behavior by showing how the True Positive Rate (TPR) varies with the False Positive Rate (FPR) [54,55]. These quantities are defined as:

$$\text{TPR} = \frac{TP}{TP + FN}, \qquad \text{FPR} = \frac{FP}{FP + TN}$$

where $TN$ denotes true negatives.
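Tracing a ROC curve amounts to sweeping a threshold over the similarity scores and recording one (FPR, TPR) point per threshold. The following sketch is a simple quadratic-time illustration under the assumption that both duplicate and non-duplicate pairs are present; the function name, scores, and labels are hypothetical.

```python
def roc_points(scores, labels):
    """Return (FPR, TPR) points, thresholding at each distinct score (descending)."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]                      # threshold above every score
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


# Hypothetical similarity scores with ground-truth labels (1 = duplicate pair).
scores = [0.95, 0.90, 0.60, 0.40, 0.20]
labels = [1, 1, 0, 1, 0]

for fpr, tpr in roc_points(scores, labels):
    print(f"FPR={fpr:.2f}  TPR={tpr:.2f}")
```

A method that separates duplicates well reaches a high TPR while the FPR is still near zero, which is the "rising sharply near the origin" shape referred to in ROC analyses.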

2.3.3. F1-Score

The F1-score summarizes performance in a single value by calculating the harmonic mean of precision and recall [53,56]. It is defined as:

$$F_1 = \frac{2 \cdot P \cdot R}{P + R}$$

where $P$ and $R$ denote Precision and Recall, respectively.

2.3.4. Mean Average Precision (MAP)

Mean Average Precision (MAP) assesses the quality of a ranked list of results by averaging the precision at each position where a relevant item is retrieved. It has become the standard metric for evaluating ranking performance in information retrieval [51], and its estimation properties are well-studied [57]. It is defined as:

$$\mathrm{MAP} = \frac{1}{Q} \sum_{q=1}^{Q} \mathrm{AP}(q)$$

where $Q$ denotes the total number of queries, and $\mathrm{AP}(q)$ represents the Average Precision for the $q$th query, defined as:

$$\mathrm{AP}(q) = \frac{1}{R_q} \sum_{i=1}^{n} P(i)\,\mathrm{rel}(i)$$

In this definition, $R_q$ represents the number of relevant duplicates for the query $q$, $n$ is the length of the ranked list, $P(i)$ is the precision computed at rank $i$, and $\mathrm{rel}(i)$ is an indicator function that specifies whether the image at rank $i$ is relevant.
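The computation of Average Precision and MAP can be followed on a small example. The sketch assumes each query is given as a ranked list of binary relevance flags together with its number of relevant duplicates; the function names and the two toy queries are hypothetical.

```python
def average_precision(ranked_relevance, num_relevant):
    """AP for one query: mean of the precision values at each relevant rank."""
    hits = 0
    total = 0.0
    for rank, rel in enumerate(ranked_relevance, start=1):
        if rel:
            hits += 1
            total += hits / rank      # precision at this rank
    return total / num_relevant if num_relevant else 0.0


def mean_average_precision(queries):
    """queries: list of (ranked_relevance, num_relevant) tuples."""
    return sum(average_precision(r, n) for r, n in queries) / len(queries)


# Hypothetical rankings: 1 marks a true duplicate at that rank.
queries = [
    ([1, 0, 1, 0], 2),   # AP = (1/1 + 2/3) / 2 = 5/6
    ([0, 1, 0, 0], 1),   # AP = (1/2) / 1 = 1/2
]
print(round(mean_average_precision(queries), 4))  # prints 0.6667
```

The second query is penalized because its only duplicate appears at rank 2 rather than rank 1, illustrating how MAP rewards placing duplicates early.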

2.3.5. Normalized Discounted Cumulative Gain (NDCG)

NDCG is a standard ranking metric that prioritizes relevant duplicates retrieved at higher ranks, making it well suited for assessing ordered retrieval lists in modern information retrieval systems [51,58]. The notation “@$i$” evaluates the metric on only the top-$i$ retrieved images, emphasizing the quality of the highest-ranked results:

$$\mathrm{NDCG@}i = \frac{\mathrm{DCG@}i}{\mathrm{IDCG@}i}$$

where the Discounted Cumulative Gain (DCG) at rank $i$ is computed as:

$$\mathrm{DCG@}i = \sum_{k=1}^{i} \frac{rel_k}{\log_2(k + 1)}$$

Here, $rel_k$ denotes the relevance of the item at rank $k$, where $rel_k = 1$ for a true duplicate and $rel_k = 0$ otherwise. The ideal DCG (IDCG) is computed using the same expression as DCG but with an ideal ordering in which all relevant images appear before any irrelevant images [59,60].
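For binary relevance, NDCG can be computed in a few lines. The sketch below uses the logarithmic discount $1/\log_2(k+1)$ and builds the ideal ordering by sorting the same relevance list, which assumes every relevant image appears somewhere in the ranking. The function names and the example ranking are hypothetical.

```python
import math


def dcg_at(relevance, i):
    """Discounted cumulative gain over the top-i entries of a relevance list."""
    return sum(rel / math.log2(k + 1)
               for k, rel in enumerate(relevance[:i], start=1))


def ndcg_at(relevance, i):
    """DCG normalized by the DCG of the ideal (relevant-first) ordering."""
    ideal = sorted(relevance, reverse=True)
    idcg = dcg_at(ideal, i)
    return dcg_at(relevance, i) / idcg if idcg else 0.0


ranking = [1, 0, 1, 0]              # duplicates retrieved at ranks 1 and 3
print(dcg_at(ranking, 4))           # 1/log2(2) + 1/log2(4) = 1.5
print(round(ndcg_at(ranking, 4), 4))
```

Moving the second duplicate from rank 3 to rank 2 would raise the score to 1.0, since the ranking would then coincide with the ideal ordering.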

2.3.6. Jaccard Similarity

The Jaccard similarity index measures the overlap between the predicted duplicate set ($P$) and the ground-truth set ($Q$). Originally proposed for set comparison in ecology, it has since become widely used in data mining applications [61,62]. It is defined as:

$$J(P, Q) = \frac{|P \cap Q|}{|P \cup Q|}$$

which represents the ratio between the number of elements common to both sets and the total number of unique elements in either set.
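With Python sets the Jaccard index is a one-line computation. In this sketch the image identifiers are hypothetical, and returning 1.0 when both sets are empty is a convention chosen here rather than part of the definition.

```python
def jaccard(p, q):
    """Jaccard index of two sets; two empty sets are treated as identical."""
    union = p | q
    return len(p & q) / len(union) if union else 1.0


predicted = {"img1", "img2", "img3"}
truth = {"img2", "img3", "img4", "img5"}

print(jaccard(predicted, truth))  # 2 shared / 5 unique = 0.4
```

Unlike precision or recall taken alone, the index drops both when spurious images are flagged and when true duplicates are missed.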

2.3.7. Runtime Measurement

The total runtime was calculated as follows to assess computational efficiency:

In this notation,

is the per-image feature extraction time, while

represents the time spent computing similarity over the dataset. Runtime is commonly reported alongside accuracy-based measures in large-scale retrieval and duplicate detection studies because scalability and computational cost are critical in real-world deployment [63,64,65].
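One way to collect the two timing components is sketched below. It assumes the total runtime is the feature-extraction time accumulated over all images plus the time spent on similarity computation; that decomposition, the function `timed_pipeline`, and the toy `extract` and `compare_all` stand-ins are assumptions made for illustration, not the measurement code used in this study.

```python
import time


def timed_pipeline(images, extract, compare_all):
    """Run extraction and matching, returning results and the two time totals."""
    features = []
    extraction_time = 0.0
    for img in images:
        start = time.perf_counter()
        features.append(extract(img))
        extraction_time += time.perf_counter() - start   # accumulate per image

    start = time.perf_counter()
    matches = compare_all(features)
    similarity_time = time.perf_counter() - start
    return matches, extraction_time, similarity_time


# Toy stand-ins: the "feature" is the pixel sum, and equal sums count as a match.
def extract(img):
    return sum(img)


def compare_all(feats):
    return [(i, j) for i in range(len(feats))
            for j in range(i + 1, len(feats)) if feats[i] == feats[j]]


images = [[1, 2, 3], [1, 2, 3], [9, 9, 9]]
matches, t_feat, t_sim = timed_pipeline(images, extract, compare_all)
print(matches, f"total = {t_feat + t_sim:.6f} s")
```

`time.perf_counter` is used because it is a monotonic, high-resolution clock intended for measuring short durations; reported values depend on the hardware and should be averaged over repeated runs.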

2.4. Dataset Description

Two benchmark datasets were used in this study: the UKBench (UKB) dataset [66] and the Amazon Berkeley Objects (ABO) dataset [67]. For each dataset, we created three subsets corresponding to Exact Duplicate, Near Duplicate, and Transformed cases. Sample images from UKB and ABO are shown in Figure 6 and Figure 7, respectively.

2.4.1. UKB Dataset

The UKB dataset [66] was created for image retrieval and includes 10,200 images of 2550 objects. Each object is photographed four times, typically from different angles and occasionally with minor lighting changes. Because of this four-image grouping, UKB naturally provides near-duplicate sets and has become a standard benchmark in content-based image retrieval.

This work used the following subsets for large-scale quantitative evaluation:

Exact Duplicate: 5100 images

Near Duplicate: 10,200 images

Transformed: 10,200 images

All tabulated results were computed on the full Exact Duplicate, Near-Duplicate, and Transformed subsets. For graphical analysis, including Precision–Recall (PR), ROC, and F1-score curves, a curated subset of 1000 Near-Duplicate (ND) images and 1002 Transformed-Duplicate (TD) images from the UKB dataset was used (Figure 6). The selected images form groups of visually similar instances captured from different viewpoints and subjected to geometric variations such as flipping, rotation, cropping, and changes in aspect ratio.

2.4.2. ABO Dataset

The Amazon Berkeley Objects (ABO) dataset [67] is composed of actual Amazon.com product listings. The catalog includes 147,702 products, 398,212 unique images, 360-degree turntable sequences, and artist-created 3D models for thousands of items. Because each product contains extensive metadata (such as category, brand, and physical attributes), ABO serves as a large-scale benchmark for object understanding and retrieval.

This study only used 2D catalog images. The subsets constructed for quantitative evaluation include:

Exact Duplicate: 2000 images

Near Duplicate: 3000 images

Transformed: 3000 images

To demonstrate PR, ROC, and F1-score behavior, we chose 660 representative images from the Near-Duplicate (ND) and Transformed-Duplicate (TD) subsets. Each ND group contains three images with similar visual content, whereas each TD group contains five variations of the same image, such as flipped, resized, and rotated versions. These transformed samples were created using the same augmentation scheme as in the UKB experiments, but without cropping. This choice preserves the underlying dataset structure while allowing for clear visual comparison across duplicate categories.

2.5. Experimental Setup

We conducted all experiments in Python 3.10 on a dedicated workstation using JupyterLab. All methods were evaluated under identical hardware and software conditions to ensure fairness. The system included an Intel® Core™ i7-5930K processor at 3.50 GHz, 64 GB RAM, and an NVIDIA GeForce GTX TITAN X GPU (12 GB) running Ubuntu 22.04 LTS. In this environment, we completed all pipeline steps, including dataset loading, descriptor generation for each method, duplicate-search operations, and metric computation. The runtime values reported in Section 3 were measured on the same hardware configuration. Using a single, consistent setup improves reproducibility and comparability of all results.

3. Results and Analysis

3.1. Graphical Evaluation on the UKB Dataset

This section presents the graphical performance comparison of the five methods on the UKB dataset. PR, ROC, and F1-score plots were created using a curated set of 1000 Near-Duplicate (ND) images and 1002 Transformed-Duplicate (TD) images. For perceptual hashing methods, the curves were generated by sweeping similarity scores normalized from Hamming distance over the range $[0, 1]$. In contrast, the CNN-based approach used cosine similarity scores swept over their full range for score-based evaluation.

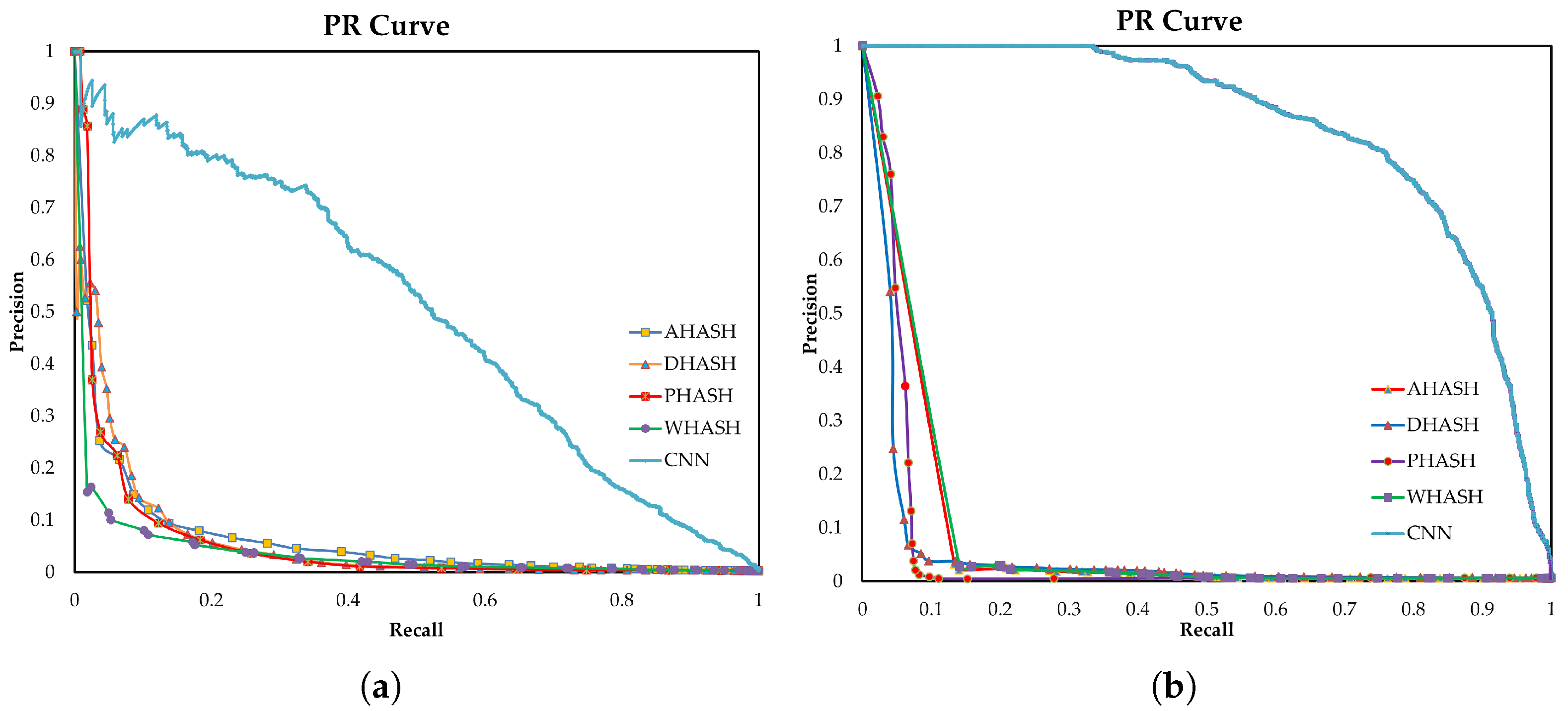

3.2. Precision–Recall Curve Analysis (UKB)

Figure 8a presents the Precision–Recall (PR) curves for the UKB Near-Duplicate (ND) subset. All perceptual hashing methods show a sharp drop in precision as recall increases, suggesting that their effectiveness is limited once recall moves beyond very low levels. While PHash and DHash achieve high precision at the initial points, their performance declines rapidly, and AHash and WHash follow a similar trend with slightly slower but still notable decreases.

The same behavior is more pronounced for the Transformed-Duplicate (TD) subset in Figure 8b. Here, the PR curves of all hashing methods fall quickly at very low recall values, reflecting their sensitivity to geometric transformations. In contrast, the CNN-based method consistently maintains high precision over a broad range of recall in both subsets, with performance dropping only near full recall. This highlights the stronger robustness of learned deep features compared to handcrafted hash methods for image deduplication.
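To make the sensitivity to geometric transformations concrete, the toy sketch below computes a simplified average hash on an 8×8 grid and compares an image with its horizontal flip. It is not the AHash implementation used in the experiments; the function names and the synthetic pixel values are assumptions chosen for illustration.

```python
def average_hash(pixels):
    """Toy average hash: one bit per pixel, set when the pixel exceeds the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]


def hamming(a, b):
    """Number of positions at which two equal-length bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


# Synthetic 8x8 grayscale grid: bright left half, dark right half.
image = [[200] * 4 + [50] * 4 for _ in range(8)]
flipped = [list(reversed(row)) for row in image]

d_same = hamming(average_hash(image), average_hash(image))    # 0 bits differ
d_flip = hamming(average_hash(image), average_hash(flipped))  # all 64 bits differ
```

This example is deliberately extreme: the flip inverts every bit, so the pair would be rejected at any Hamming threshold below 64 even though the visual content is the same.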

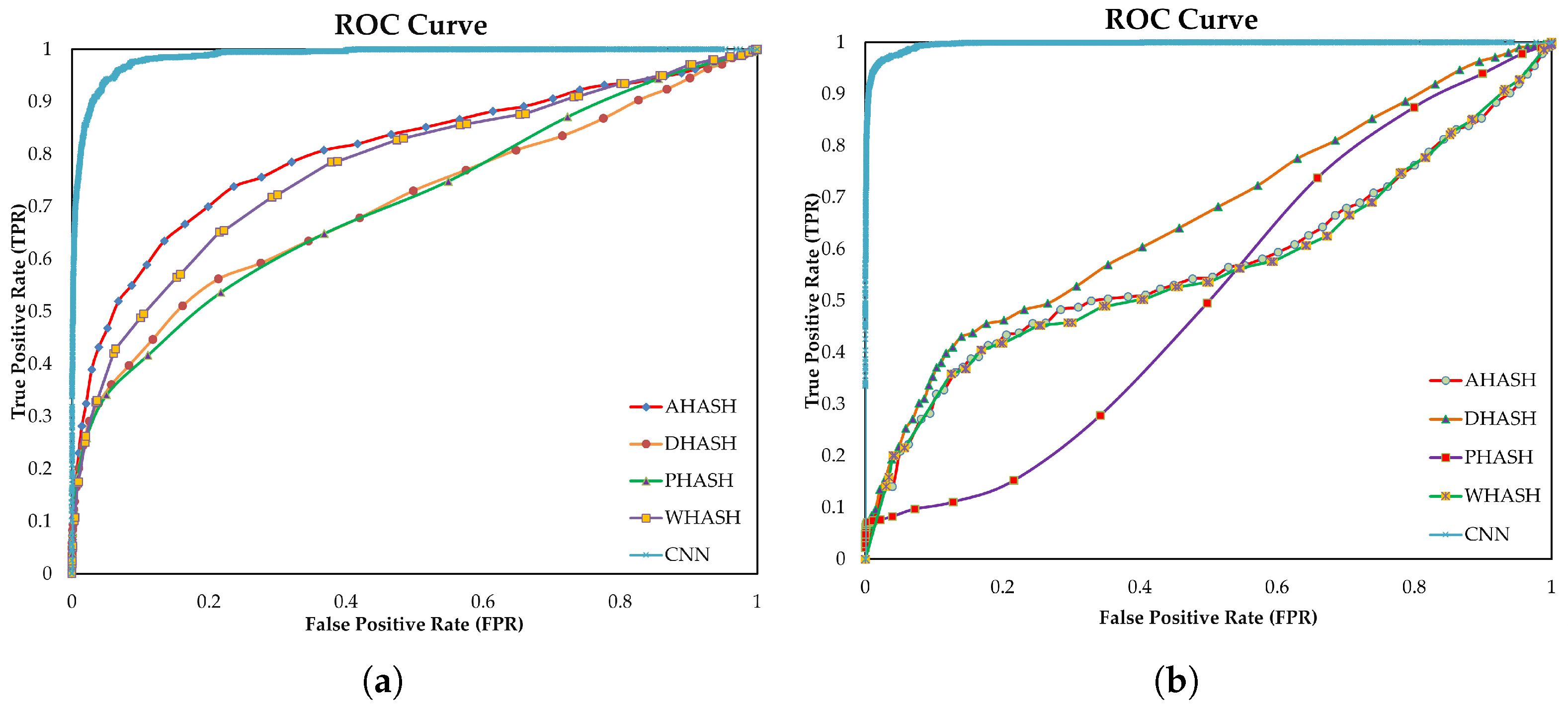

3.2.1. ROC Curve Analysis (UKB)

The ROC curve for the UKB Near-Duplicate (ND) subset is illustrated in Figure 9a. All perceptual hashing methods follow a similar pattern, with the true positive rate increasing gradually as the false positive rate rises. AHash and WHash perform slightly better at low false positive rates, while DHash and PHash lag behind by a small margin. Overall, the differences among the hashing methods are minor, indicating limited ability to clearly separate duplicates from non-duplicates.

The Transformed-Duplicate (TD) results in Figure 9b further emphasize this limitation. All hashing methods suffer a noticeable drop in performance under geometric transformations, with PHash showing the largest decline, especially at moderate false positive rates. In contrast, the CNN-based method achieves a near-ideal ROC curve in both subsets, rising sharply near the origin and maintaining a high true positive rate across the full range of false positives. This confirms the strong and stable discriminative power of deep features, consistent with the behavior observed in the PR curves.
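For reference, the points of an ROC curve can be derived from pairwise scores as in the sketch below. The scores and labels are made-up toy values and `roc_points` is a hypothetical helper, not part of the paper's pipeline.

```python
def roc_points(scores, labels, thresholds):
    """Return (threshold, fpr, tpr); a pair is positive when score >= threshold."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = []
    for t in thresholds:
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        tpr = tp / positives if positives else 0.0
        fpr = fp / negatives if negatives else 0.0
        points.append((t, fpr, tpr))
    return points


# Toy cosine similarities for six pairs (1 = true duplicate pair).
scores = [0.98, 0.95, 0.90, 0.60, 0.40, 0.20]
labels = [1, 1, 0, 1, 0, 0]
roc = roc_points(scores, labels, thresholds=[0.93, 0.5, 0.0])
```

A curve that "rises sharply near the origin" corresponds to thresholds at which the true positive rate is already high while the false positive rate is still close to zero, as at the first toy threshold here.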

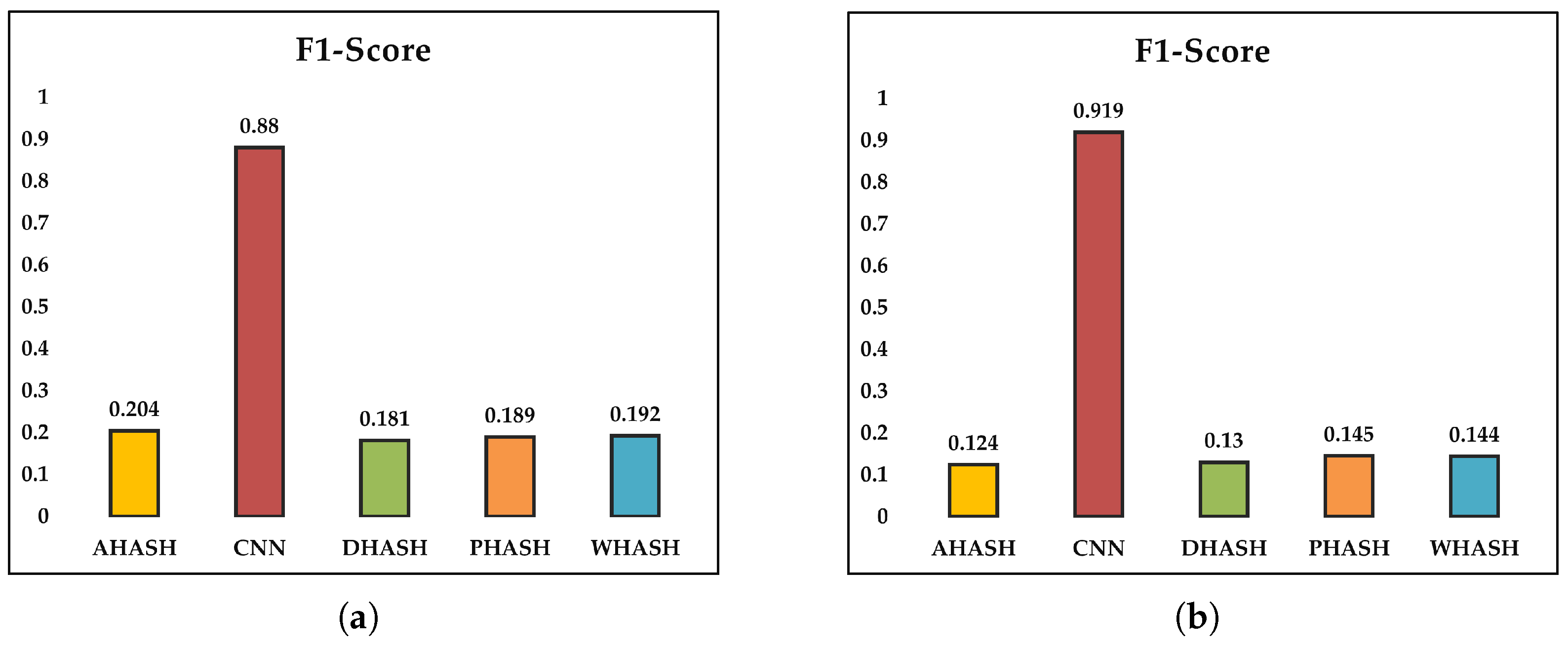

3.2.2. F1-Score Analysis (UKB)

Figure 10 shows the F1-score results for the UKB dataset. For the Near-Duplicate (ND) subset (Figure 10a), all perceptual hashing methods produce low F1-scores, indicating a weak balance between precision and recall. Among the hashing techniques, AHash performs best, with WHash and PHash close behind, while DHash shows slightly lower performance. In contrast, the CNN-based method achieves a much higher F1-score of about 0.88, demonstrating a significantly better precision–recall balance.

A similar trend is observed for the Transformed-Duplicate (TD) subset in Figure 10b. The F1-scores of hashing methods remain low, with PHash and WHash performing slightly better than AHash and DHash, reflecting their limited robustness to geometric transformations. The CNN-based method further improves in this setting, reaching an F1-score of approximately 0.92, which highlights its strong robustness and consistent discriminative performance under more challenging visual variations.
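The F1-score is the harmonic mean of precision and recall, so a method with high precision but very low recall still scores poorly. The sketch below shows this with hypothetical precision and recall values that are not taken from the reported results.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical operating points (illustrative only, not measured values).
high_precision_low_recall = f1_score(1.0, 0.1)  # about 0.18
balanced = f1_score(0.9, 0.9)                   # about 0.9
```

This is why high precision at the first few points of a PR curve does not translate into a high F1-score when recall stays near zero.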

3.3. Graphical Evaluation on the ABO Dataset

The ABO dataset presents a more challenging evaluation scenario than UKB due to higher intra-class variability and more diverse object appearances and backgrounds. To capture these effects, PR, ROC, and F1-score plots were created with a curated set of 660 images from the Near-Duplicate (ND) and Transformed-Duplicate (TD) subsets. The ND subset is made up of visually similar images, whereas the TD subset contains multiple transformed versions of each image. These graphical results demonstrate how each method responds to increased appearance diversity and transformation complexity, as well as the performance gap between perceptual hashing techniques and CNN-based embeddings under more realistic deduplication conditions.

3.3.1. Precision–Recall Curve Analysis (ABO)

The Precision–Recall (PR) curve for the ABO Near-Duplicate (ND) subset is illustrated in Figure 11a. All perceptual hashing methods experience a rapid drop in precision with only a small increase in recall, indicating difficulty in handling the high visual diversity and complex backgrounds of ABO product images. While some hashing methods achieve reasonable precision at very low recall levels, their performance quickly degrades as more duplicate pairs are retrieved.

This trend becomes even more pronounced for the Transformed-Duplicate (TD) subset in Figure 11b. When images are subjected to transformations such as flipping, resizing, and rotation, the PR curves of all hashing methods collapse at very low recall values, revealing poor robustness to appearance changes. In contrast, the CNN-based method maintains much higher precision over a broad range of recall in both subsets, highlighting its stronger ability to capture semantic similarity in visually complex and transformed product images.

3.3.2. ROC Curve Analysis (ABO)

Figure 12a shows the ROC curves for the ABO Near-Duplicate subset. All four hashing methods display a gradual increase in true positive rate as the false positive rate rises, indicating limited but meaningful ability to distinguish duplicates under near-duplicate conditions. Among the hashing approaches, AHash and WHash perform slightly better, while PHash and DHash show weaker discrimination.

For the Transformed subset in Figure 12b, the performance of hashing methods declines further. The ROC curves become flatter, especially at low false positive rates, highlighting their reduced robustness to geometric transformations such as flipping and rotation.

In contrast, the CNN-based method exhibits a steep rise near the origin and stays close to the upper-left corner in both subsets. This indicates strong discriminative capability even at very low false positive rates, underscoring the superior robustness of learned deep embeddings in the presence of high visual diversity and complex transformations in the ABO dataset.

3.3.3. F1-Score Analysis (ABO)

Figure 13 summarizes the F1-score results for the ABO dataset. For the Near-Duplicate subset (Figure 13a), all perceptual hashing methods produce low F1-scores, showing that they struggle to balance precision and recall in the presence of high visual variability. Among the hashing approaches, DHash performs slightly better than the others, followed by AHash, PHash, and WHash, although the overall differences are small and the scores remain low.

A similar pattern is observed for the Transformed subset in Figure 13b. Under stronger geometric and appearance changes, such as rotation and aspect-ratio modification, the F1-scores of all hashing methods drop further, indicating very limited robustness.

In contrast, the CNN-based method achieves much higher F1-scores in both subsets, with particularly strong performance on the transformed images. This highlights that learned deep embeddings are far more effective than perceptual hashing methods at preserving discriminative information in visually diverse and heavily transformed product images.

3.4. Quantitative Evaluation on the UKB Dataset

This subsection summarizes UKB quantitative results on the complete Exact, Near Duplicate, and Transformed subsets. To ensure comparability, each method is evaluated using three threshold settings: Hamming distance thresholds of 0, 10, and 32 for perceptual hashes and cosine similarity thresholds of 0.5, 0.9, and 1.0 for the CNN-based approach. These thresholds allow for direct comparisons of MAP, NDCG, Jaccard Index, and runtime among all five approaches. The values were chosen to simulate three real-world scenarios: Strict/Exact Matching (Hamming 0/CNN 1.0) for finding identical files; Moderate Matching (Hamming 10/CNN 0.9) for finding near-duplicates with minor changes; and Relaxed Matching (Hamming 32/CNN 0.5) to test the limits of the models against heavy transformations. The value of 32 for hashing corresponds to 50% of the bits in the 64-bit hash being flipped, serving as a logical boundary for testing model failure.
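The three operating points can be written down as a small configuration, as in the sketch below. The dictionary layout, the function names, and the choice of inclusive comparisons (`<=` for Hamming distance, `>=` for cosine similarity) are assumptions; the paper does not state whether its thresholds are inclusive.

```python
HASH_BITS = 64  # hash length stated in the text

# Paired operating points: Hamming threshold for hashes, cosine threshold for the CNN.
OPERATING_POINTS = {
    "strict": {"hamming_max": 0, "cosine_min": 1.0},
    "moderate": {"hamming_max": 10, "cosine_min": 0.9},
    "relaxed": {"hamming_max": 32, "cosine_min": 0.5},
}


def is_duplicate_hash(distance, mode):
    """Hash-based decision: accept when the Hamming distance is within the limit."""
    return distance <= OPERATING_POINTS[mode]["hamming_max"]


def is_duplicate_cnn(cosine_similarity, mode):
    """CNN-based decision: accept when the cosine similarity reaches the limit."""
    return cosine_similarity >= OPERATING_POINTS[mode]["cosine_min"]


def flipped_fraction(mode):
    """Fraction of hash bits allowed to differ at a given operating point."""
    return OPERATING_POINTS[mode]["hamming_max"] / HASH_BITS


relaxed_fraction = flipped_fraction("relaxed")    # 32 / 64 = 0.5
moderate_fraction = flipped_fraction("moderate")  # 10 / 64 = 0.15625
```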

3.4.1. Mean Average Precision (MAP) Results on the UKB Dataset

Table 1 summarizes MAP results for the three UKB subsets with the chosen thresholds. On the Near Duplicate subset, the hashing methods produce very low MAP at the strict threshold, but improve at the moderate threshold, with AHash and WHash slightly outperforming DHash and PHash. In contrast, the CNN approach achieves a much larger gain, with a MAP of 0.4182 at the moderate threshold, indicating significantly higher ranking quality.

Among the hashing techniques, PHash achieves the highest MAP for the Transformed subset at the moderate threshold; however, the absolute values are still low, suggesting poor robustness to geometric distortions. Once more, the CNN approach outperforms all other approaches at the mid-range threshold, reaching 0.6080 MAP.

For Exact Duplicates, all hashing methods under the strict threshold produce near-perfect MAP, consistent with identical image content. When the threshold is loosened, MAP falls significantly. In contrast, the CNN method achieves 0.9958 MAP at the moderate threshold and maintains moderate ranking performance even in the relaxed condition, indicating greater stability across duplicate types.
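As a reminder of what MAP measures, the sketch below implements the standard definition: average precision per query, averaged over queries. How the thresholds are applied before ranking in the paper's pipeline is not shown here, so the function names and the toy queries are illustrative assumptions only.

```python
def average_precision(ranked_ids, relevant_ids):
    """Average of precision values at the ranks where relevant items appear."""
    relevant = set(relevant_ids)
    if not relevant:
        return 0.0
    hits = 0
    precision_sum = 0.0
    for rank, item in enumerate(ranked_ids, start=1):
        if item in relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / len(relevant)


def mean_average_precision(queries):
    """Mean of average precision over (ranked_ids, relevant_ids) pairs."""
    return sum(average_precision(r, g) for r, g in queries) / len(queries)


# Toy queries: each pairs a ranked result list with its true duplicates.
queries = [
    (["a", "b", "c", "d"], {"a", "c"}),  # AP = (1/1 + 2/3) / 2
    (["x", "y", "z"], {"z"}),            # AP = (1/3) / 1
]
map_score = mean_average_precision(queries)  # 7/12
```

Because each hit is weighted by the precision at its rank, duplicates that appear late in the ranking or are not retrieved at all pull MAP down quickly.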

3.4.2. Normalized Discounted Cumulative Gain (NDCG) Results on the UKB Dataset

The NDCG scores on the UKB dataset are listed in Table 2, and they mostly follow the MAP trends. Hashing-based techniques yield very low NDCG for Near Duplicates at the strict threshold, followed by a moderate improvement at the mid-range setting. The CNN model shows a substantially larger gain at the moderate threshold, reflecting improved ranking performance under mild visual variation.

The NDCG for the hashing techniques is marginally higher for the Transformed subset than in the Near Duplicate setting, but the gains are still small and differ depending on the method. Once again, the CNN approach leads at the mid-range threshold, indicating that it more consistently preserves the ranking structure under geometric transformations.

For Exact Duplicates, all hashing techniques achieve near-perfect NDCG at the strict threshold, which is expected for identical images. Their scores drop significantly when the threshold is loosened, whereas the CNN remains strong at the moderate setting. Overall, these NDCG results support the MAP trend: CNN embeddings offer more consistent ranking across both minor and significant variations, while perceptual hashes perform best for identical content.
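NDCG rewards rankings that place true duplicates near the top. The sketch below uses the common log2-discount formulation with binary relevance; whether the paper uses binary or graded relevance is not stated, so this is an assumption, as are the function names.

```python
import math


def dcg(relevances):
    """Discounted cumulative gain with a log2(rank + 1) discount."""
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevances, start=1))


def ndcg(relevances):
    """DCG normalized by the DCG of the ideal (sorted) ranking."""
    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal > 0 else 0.0


# Binary relevance of the top four results for two hypothetical rankings.
duplicates_first = ndcg([1, 1, 0, 0])  # ideal ordering, so 1.0
duplicates_last = ndcg([0, 0, 1, 1])   # same items ranked late, lower score
```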

The Jaccard Index results for UKB are shown in Table 3, which exhibits the same general pattern as MAP and NDCG. The hashing techniques yield extremely low Jaccard values on the Near Duplicate subset at the strict threshold and only slightly improve at the mid-level setting. The scores show little overlap between the retrieved sets and the actual duplicate sets, even at their best. The CNN approach, on the other hand, shows a much more dependable match between predicted and ground-truth duplicates and increases significantly at the moderate threshold.

For transformed duplicates, Jaccard scores for hashing methods rise slightly but remain low, indicating a lack of tolerance for geometric changes. The CNN model continues to outperform all others, with the highest overlap at the mid-level threshold and a clear advantage in transformed duplicate detection.

For exact duplicates, the hashing methods achieve near-perfect Jaccard at the strict threshold, as expected given that the images are identical. When the threshold is relaxed, their scores drop dramatically, whereas the CNN remains strong at the moderate setting. Overall, these findings support the same conclusion: hashing is most reliable for truly identical images, whereas the CNN has a more consistent overlap with the ground truth across all UKB duplicate conditions. It should be noted that certain scores (such as 1.0 or 0.0) in Table 1, Table 2, and Table 3 are identical across methods. This is not accidental; rather, it reflects the algorithms' mathematical structure. All correctly functioning hashes produce identical bits for exact duplicates, yielding a score of 1.0. Because a Hamming threshold of 0 accepts only bit-identical hashes, any change that flips even one hash bit is rejected, so all hashing methods score 0.0 for near-duplicates at that threshold. When competing approaches find precisely the same number of correct pairs from the fixed ground-truth set at a high threshold, they obtain identical fractional scores (such as 0.5851).
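The observation about identical scores follows directly from the set-based definition of the Jaccard Index. In the sketch below, two hypothetical methods retrieve different pairs but the same number of correct ones, and neither retrieves a false positive, so their scores coincide. The pair identifiers are invented for illustration.

```python
def jaccard(predicted, truth):
    """Jaccard Index: size of the intersection divided by size of the union."""
    predicted, truth = set(predicted), set(truth)
    union = predicted | truth
    if not union:
        return 1.0  # convention (assumed): two empty sets match perfectly
    return len(predicted & truth) / len(union)


# Fixed ground-truth duplicate pairs (hypothetical identifiers).
truth = {("a", "b"), ("c", "d"), ("e", "f")}

# Two methods that each recover two correct pairs and no false positives.
method_1 = {("a", "b"), ("c", "d")}
method_2 = {("a", "b"), ("e", "f")}

score_1 = jaccard(method_1, truth)  # 2/3
score_2 = jaccard(method_2, truth)  # 2/3
score_exact = jaccard(truth, truth)  # 1.0
score_none = jaccard(set(), truth)   # 0.0
```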

3.4.3. Runtime Results (UKB)

Table 4 summarizes the runtime breakdowns for the UKB dataset, including encoding, searching, and total time. Hashing methods have consistently low runtimes across all three subsets, ranging from 40 to 60 s. AHash is the most efficient, while WHash incurs a small overhead due to its wavelet-based processing.

In contrast, the CNN-based method has a significantly longer runtime. For the Near Duplicate and Transformed subsets, its feature-extraction (encoding) time is more than 90 s, and its overall runtime is roughly 240 s (four to six times longer than the hash-based approaches). The CNN is still noticeably slower than the handcrafted methods, even on the Exact Duplicate subset.

Overall, these findings demonstrate a clear trade-off: although CNN consistently yields higher accuracy, this comes at the cost of significantly longer computation times when compared to lightweight hashing methods.
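A runtime breakdown of this kind can be collected by timing the encoding and search stages separately, as in the sketch below. The `encode` and `search` functions are hypothetical stand-ins (a built-in string hash and an all-pairs equality check), not the descriptors or search procedure used in the experiments.

```python
import time


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


def encode(items):
    """Stand-in encoder: maps each item to a 64-bit integer code."""
    return [hash(item) & 0xFFFFFFFFFFFFFFFF for item in items]


def search(codes):
    """Stand-in search: all index pairs whose codes are equal."""
    return [(i, j)
            for i in range(len(codes))
            for j in range(i + 1, len(codes))
            if codes[i] == codes[j]]


items = ["img1", "img2", "img1"]  # toy inputs; the third repeats the first
codes, t_encode = timed(encode, items)
pairs, t_search = timed(search, codes)
t_total = t_encode + t_search
```

Reporting the stages separately shows where the cost lies; for the CNN, the text attributes more than 90 s of the roughly 240 s total to encoding alone on the Near Duplicate and Transformed subsets.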

3.5. Quantitative Evaluation on the ABO Dataset

Quantitative results for each of the five approaches using the ABO dataset are shown in this subsection. We assess each method at three fixed operating points to match the UKB setup and maintain fair comparisons: cosine similarity thresholds of 0.5, 0.9, and 1.0 for the CNN model, and Hamming distance thresholds of 0, 10, and 32 for the hashing methods. Direct comparison of MAP, NDCG, Jaccard Index, and runtime under the increased visual variability of ABO product images is made possible by these shared settings.

3.5.1. Mean Average Precision (MAP) Results on the ABO Dataset

The MAP results for ABO across the Exact Duplicate, Near Duplicate, and Transformed subsets are compiled in Table 5. The hashing techniques only slightly improve on the Near Duplicate subset when the threshold is loosened; AHash and PHash are slightly higher at the mid-level setting, but overall MAP values stay low because of the significant appearance variability in ABO product images. The CNN approach, on the other hand, exhibits a more pronounced increase at the moderate threshold (roughly 0.32), indicating that deep embeddings are better at ranking visually similar products in this dataset.

A similar pattern follows in the Transformed subset. Even with relaxed thresholds, the hashing methods produce low MAP values because common ABO edits (such as flips and aspect-ratio changes) frequently break the handcrafted descriptors. The CNN approach outperforms them, with a stronger rise at the moderate threshold (approximately 0.44), indicating greater resilience to geometric variation.

For Exact Duplicates, hashing methods produce high MAP under the strict threshold, as expected for truly identical images, but performance suffers significantly when the threshold is relaxed. In contrast, the CNN model achieves a significantly higher MAP at the mid-level threshold (approximately 0.91) while maintaining relatively stable ranking performance under the relaxed condition. Taken together, the ABO findings mirror UKB results, where CNN-based embeddings outperform hashing methods for ranking, especially when duplicates have higher appearance diversity or geometric transformations.

3.5.2. Normalized Discounted Cumulative Gain (NDCG) Results on the ABO Dataset

Table 6 summarizes the NDCG performance on the ABO dataset. For Near Duplicates, hash-based methods produce low NDCG at the strict threshold and only modest gains at the moderate setting. The CNN approach results in a significant improvement (roughly 0.47), indicating higher ranking quality even for subtle visual differences between products.

A similar pattern is observed in the Transformed subset. The hash-based methods improve only slightly as thresholds are relaxed, indicating their sensitivity to common ABO edits like flips and other geometric changes. The CNN performs best at the moderate threshold (approximately 0.69), indicating better preservation of ranking quality during transformation.

For Exact Duplicates, hash-based methods produce near-perfect NDCG under the strict threshold, followed by a sharp decline as the threshold rises. In contrast, the CNN approach achieves a high mid-level score (approximately 0.93) and is more stable under relaxed conditions. Consistent with the MAP analysis, these findings show that perceptual hashes are most effective for truly identical images, whereas CNN embeddings maintain more reliable ranking across minor and major variations.

3.5.3. Jaccard Index Results on the ABO Dataset

Table 7 reports the Jaccard Index values for the ABO dataset. On the Near Duplicate subset, all hashing approaches produce very low scores under the strict threshold, with only minor gains at the moderate setting. PHash and DHash improve slightly more than the others, but overall overlap remains low, implying that the retrieved sets correspond to only a small fraction of the ground-truth duplicates. The CNN model shows a more pronounced improvement at the mid-level threshold (approximately 0.30), indicating better duplicate retrieval under ABO’s higher variability.

On the Transformed subset, Jaccard overlap for the hashing methods is low even at relaxed thresholds, indicating their sensitivity to flips, rotations, and aspect-ratio changes. The CNN again outperforms, reaching around 0.44 at the moderate threshold and maintaining a clear advantage in transformed duplicate detection.

In the Exact Duplicate subset, all hashing methods achieve very high Jaccard overlap at the strict threshold, as expected given that identical images are easy to match. When the threshold is relaxed, their scores decrease significantly, while the CNN remains strong at the moderate setting (roughly 0.85). Overall, the Jaccard Index confirms that hashes work best for perfect matches, whereas CNN retrieval remains more consistent across variations in the ABO dataset, as was also the case for the UKB dataset.

3.5.4. Runtime Results (ABO)

Table 8 reports the runtime breakdowns (encoding, search, and total time) for the ABO dataset. Consistent with the UKB findings, hashing methods remain fast across all three subsets. Total runtime for Near Duplicate and Transformed images is usually in the 9–12 s range, with AHash and DHash being the most efficient and WHash incurring a minor overhead due to wavelet processing. The Exact Duplicate subset is faster overall, with total times typically less than 7 s.

In contrast, the CNN approach remains significantly more expensive. Encoding alone takes over 30 s on the Near Duplicate subset and more than 50 s on the Transformed subset, for total runtimes of approximately 46 s and 65 s, respectively. Even for Exact Duplicates, where hashing takes only a few seconds, the CNN is significantly slower.

Overall, the ABO runtime results reflect the same trade-off as on UKB: CNN embeddings provide much higher retrieval accuracy but require significantly more computation, whereas perceptual hashing is much faster but less accurate.