Author Contributions

Conceptualization, S.W.B. and J.-H.H.; methodology, S.W.B.; software, S.W.B.; validation, S.W.B. and J.-H.H.; formal analysis, S.W.B.; investigation, J.-H.H.; resources, J.-H.H.; data curation, S.W.B.; writing—original draft preparation, S.W.B.; writing—review and editing, J.-H.H.; visualization, S.W.B.; supervision, J.-H.H.; project administration, J.-H.H.; funding acquisition, J.-H.H. All authors have read and agreed to the published version of the manuscript.

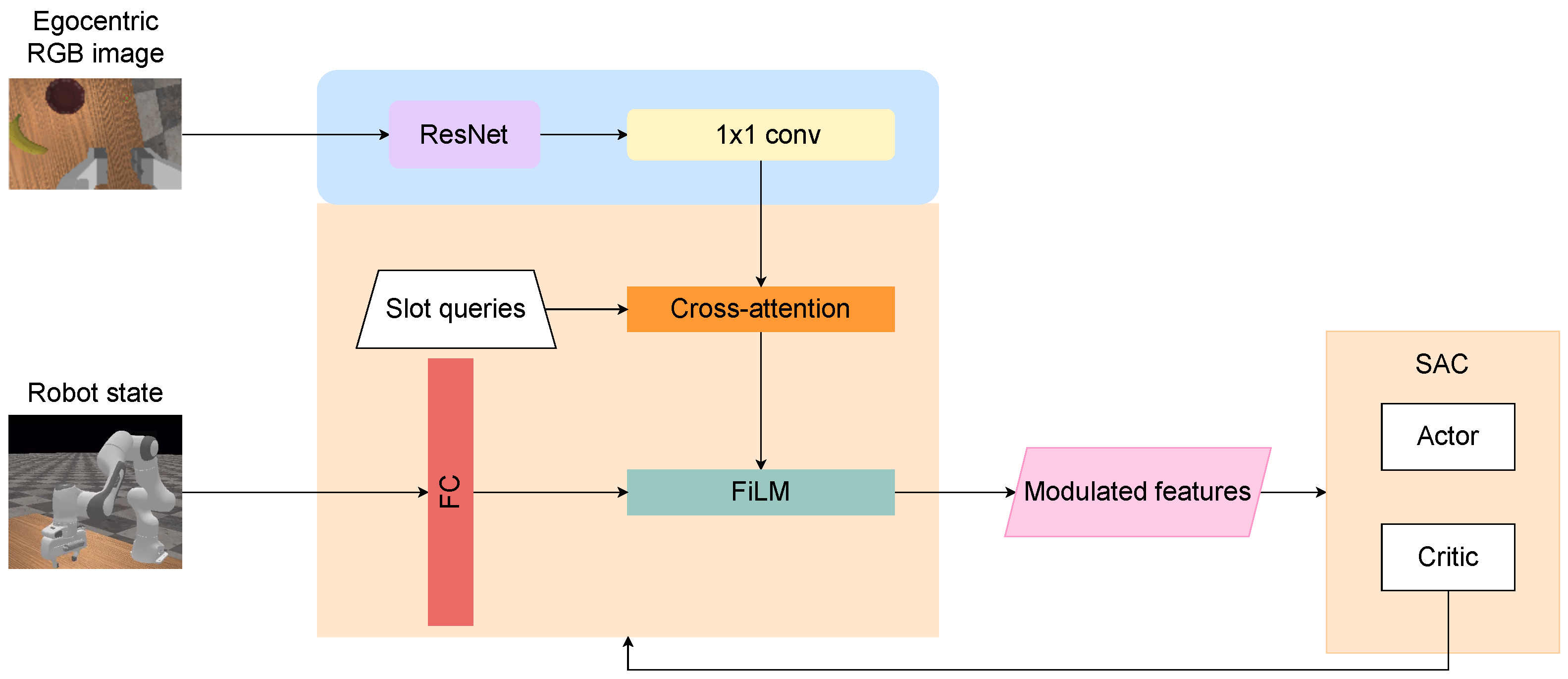

Figure 1.

Overview of the proposed proprioception-modulated architecture. Egocentric RGB input is encoded via ResNet and tokenized. Learnable slot queries attend to the tokens using a cross-attention mechanism. The resulting slot features are modulated by the robot states via FiLM and shared across all SAC components for policy learning. During reinforcement learning, the beige background modules, including FiLM conditioning, state projection, cross-attention, and slot heads, remain trainable and receive gradients from the SAC critic, while the blue background modules are frozen.
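The slot-query step in this overview can be illustrated with a minimal sketch. It assumes plain scaled dot-product cross-attention with a softmax over tokens for each slot and omits the learned key/value projections; the function names and toy dimensions are hypothetical, not the authors' implementation.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def slot_cross_attention(queries, tokens):
    """Attend K slot queries (K x d) over N image tokens (N x d).

    Returns (slots, attn): slots is K x d, attn is K x N with rows summing to 1.
    Assumption: tokens serve directly as keys and values (no projections).
    """
    d = len(tokens[0])
    scale = 1.0 / math.sqrt(d)
    slots, attn = [], []
    for q in queries:
        scores = [scale * sum(qi * ti for qi, ti in zip(q, t)) for t in tokens]
        weights = softmax(scores)
        attn.append(weights)
        # Each slot feature is the attention-weighted average of the tokens.
        slots.append([
            sum(w * t[j] for w, t in zip(weights, tokens)) for j in range(d)
        ])
    return slots, attn


# Toy example: two slot queries, three 2-D tokens.
tokens = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
queries = [[4.0, 0.0], [0.0, 4.0]]
slots, attn = slot_cross_attention(queries, tokens)
```

In this toy example each query places most of its attention on the token it aligns with, which is the behavior the per-slot heatmaps visualize.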

Figure 2.

PickBanana egocentric manipulation setup in ManiSkill. A seven-DoF robotic arm operates above a tabletop with a banana and a bowl placed near the target region (green dot). The task objective is to grasp and place the banana at the target location. The bowl is a non-goal movable object present at the target area.

Figure 3.

Pre-training loss components for the egocentric slot-based encoder. (a) Swap-invariant pixel-space UV regression error (pos), (b) attention alignment loss (attnNLL), and (c) visibility prediction BCE (vis loss) over pre-training steps on PickBanana. All losses decrease rapidly and stabilize, indicating accurate pixel localization, attention centered on projected object tokens, and robust visibility handling in the wrist camera view.
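A swap-invariant regression error of the kind named in (a) can be sketched as follows. The sketch assumes two slots, two objects, and a squared-error distance, and it ignores visibility masking; the exact loss used in the paper may differ, and the function names are hypothetical.

```python
def sq_dist(p, q):
    """Squared Euclidean distance between two (u, v) pixel coordinates."""
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2


def swap_invariant_uv_loss(pred, target):
    """Return (loss, swapped) for two predicted and two target UV points.

    The loss is the smaller of the two possible slot-to-object assignments,
    so a slot is not penalized for tracking the "other" object.
    """
    direct = sq_dist(pred[0], target[0]) + sq_dist(pred[1], target[1])
    swapped = sq_dist(pred[0], target[1]) + sq_dist(pred[1], target[0])
    if swapped < direct:
        return 0.5 * swapped, True
    return 0.5 * direct, False


# Slots predict the objects in the opposite order from the labels.
loss, was_swapped = swap_invariant_uv_loss(
    pred=[(10.0, 20.0), (30.0, 40.0)],
    target=[(30.0, 40.0), (10.0, 20.0)],
)
```

Taking the minimum over assignments is what allows slot identities to permute without increasing the error.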

Figure 4.

Slot specialization from egocentric attention. Random frames illustrate the original observation (orig) and the attention heatmaps for Slot 0 and Slot 1. Across diverse viewpoints and object configurations, the two slots form a consistent decomposition in which one slot attends to the banana and the other to the bowl, with rare identity permutations expected from permutation-invariant slot learning without explicit slot–object binding. When both objects are fully occluded, attention reverts to the end-effector region as an agent-centric fallback cue.

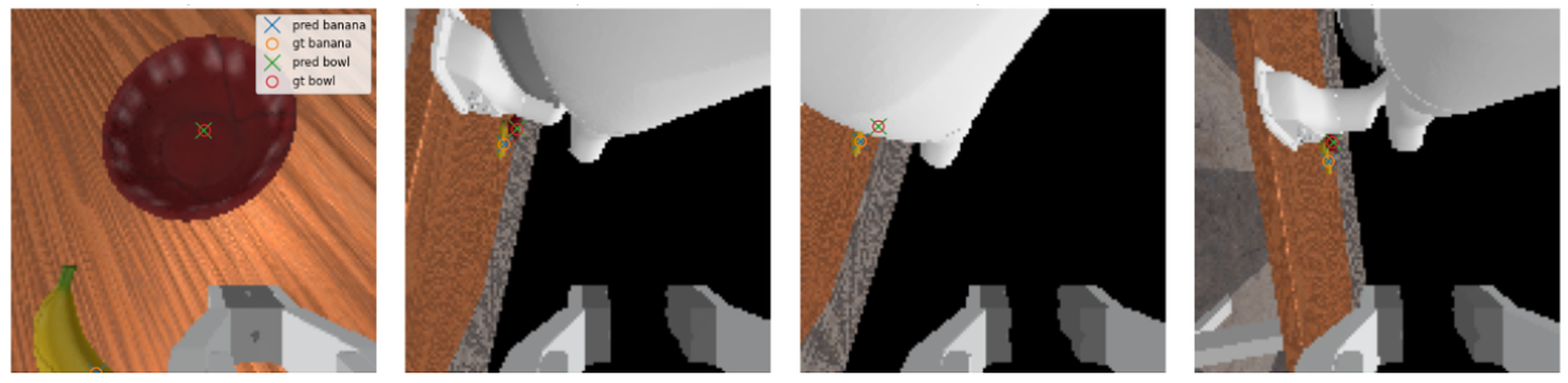

Figure 5.

Qualitative evaluation of the auxiliary pixel heads after pre-training. Predicted object UV locations (blue “x” for banana, green “x” for bowl) are overlaid with simulator-projected ground-truth pixels (orange “o” for banana, red “o” for bowl) on egocentric frames. Samples (a–d) show accurate localization when objects are visible, while sample (e) illustrates a difficult occlusion/out-of-view case handled via visibility/validity masking.

Figure 6.

Egocentric qualitative check of slot attention. A phone-mounted camera on a human arm mimics the wrist viewpoint, observing printed banana and bowl targets. Each pair of panels shows Slot-0 and Slot-1 attention heatmaps. Across the shown samples, the two slots remain spatially localized and track the banana and bowl under viewpoint changes, with occasional permutation (slot swapping), reflecting exchangeable slot identities rather than loss of tracking.

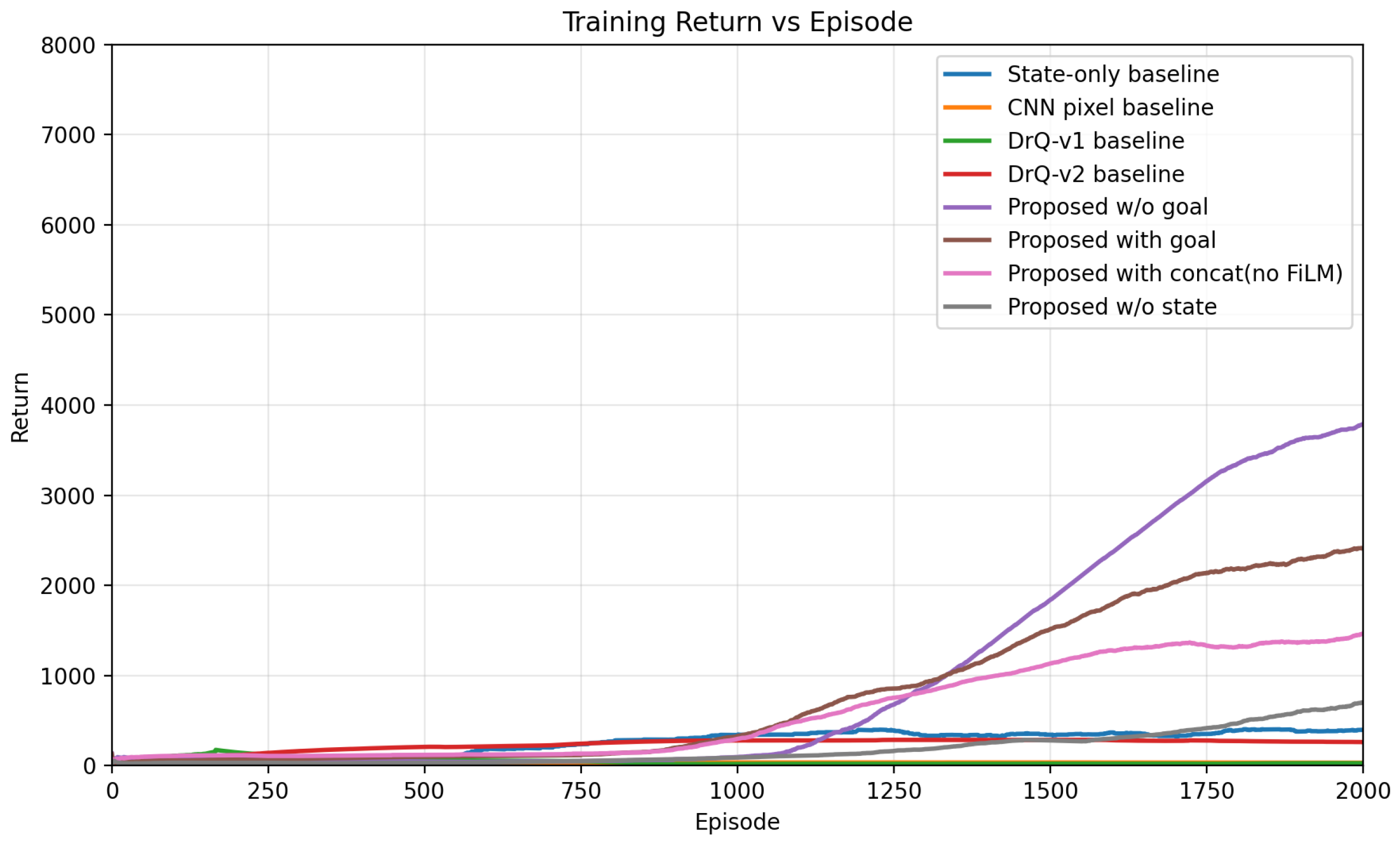

Figure 7.

Training return over 3000 episodes for all baselines and ablations under the same reward scaling. The proposed multi-slot attention agent consistently outperforms pixel-only and state-only alternatives, and the ablations isolate which design choices drive this gap.

Figure 8.

Manipulation behavior (left to right): (a) reaching toward the banana; (b) grasping; (c) lifting, transporting, and pushing the bowl; (d) placing at the target position; (e) holding at the target position.

Figure 9.

Evolution of normalized reward components during training. The x-axis shows environment steps, and the y-axis shows the normalized component magnitude. Each curve corresponds to one reward component logged during training and smoothed with an exponential moving average.
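The smoothing mentioned in this caption can be sketched as a standard exponential moving average. The smoothing factor `alpha` and the function name are assumptions, since the caption does not state the value used.

```python
def ema_smooth(values, alpha=0.1):
    """Exponential moving average: s_t = alpha * x_t + (1 - alpha) * s_{t-1}.

    The first output equals the first input; alpha is a hypothetical default.
    """
    smoothed = []
    prev = None
    for v in values:
        prev = v if prev is None else alpha * v + (1.0 - alpha) * prev
        smoothed.append(prev)
    return smoothed


# A step from 0 to 1 is followed gradually rather than instantly.
curve = ema_smooth([0.0, 1.0, 1.0], alpha=0.5)
```

A smaller `alpha` gives smoother curves at the cost of a longer lag behind the raw signal.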

Figure 10.

Training curves comparing FiLM with tanh modulation (proposed), FiLM with linear+clamp, concatenation with projection, and concatenation without FiLM.
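The difference between the first two variants can be illustrated with a per-feature sketch. It assumes a residual FiLM form, `(1 + g) * x + beta`, where the gain `g` is bounded either by `tanh` or by clamping a linear output; this parameterization, the clamp limit, and the function names are assumptions for illustration only.

```python
import math


def film_tanh(feature, gamma_raw, beta):
    """FiLM with a smooth, tanh-bounded gain in (0, 2) around the identity."""
    return [
        (1.0 + math.tanh(g)) * f + b
        for f, g, b in zip(feature, gamma_raw, beta)
    ]


def film_linear_clamp(feature, gamma_raw, beta, limit=1.0):
    """FiLM with a linear gain hard-clamped to [-limit, limit]."""
    out = []
    for f, g, b in zip(feature, gamma_raw, beta):
        g_clamped = max(-limit, min(limit, g))
        out.append((1.0 + g_clamped) * f + b)
    return out


slot = [2.0, -1.0]
identity = film_tanh(slot, gamma_raw=[0.0, 0.0], beta=[0.0, 0.0])
saturated = film_linear_clamp(slot, gamma_raw=[100.0, -100.0], beta=[0.0, 0.0])
```

Both variants bound the gain, but the clamp has zero gradient outside its limits whereas `tanh` saturates smoothly.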

Figure 11.

Training return across 2000 episodes for different velocity penalty scaling terms. The proposed setting (StaticTerm = 5) achieves faster learning and higher stable return compared to StaticTerm = 1 and StaticTerm = 10.

Figure 12.

Training return across episodes comparing the proposed method with the static reward term and the variant where the static term is removed.

Figure 13.

Training return across 2000 episodes for different reward scaling factors. The proposed variant (RewardScale:10) achieves faster performance improvement and a higher final return compared to RewardScale:5 and RewardScale:15.

Figure 14.

Slot attention visualization when more slots are available than objects; the environment contains two objects. For clarity, we visualize the two slots that exhibit the strongest localized attention responses.

Figure 15.

Position prediction under the surplus-slot configuration. Predicted and ground-truth keypoints for the banana and bowl are overlaid across different viewpoints.

Figure 16.

Training return for end-to-end learning, where the visual encoder and policy are optimized jointly without representation pre-training. The agent improves its return but never reaches successful task completion.

Table 1.

Environment and observation specifications for the PickBanana task.

| Component | Specification |
|---|---|
| Robot model | 7-DOF Franka Emika Panda |
| Camera setup | Egocentric RGB camera mounted on the end-effector (Pandawrist) |
| Observation vector | 35-D proprioceptive state (joint positions, velocities, gripper pose) |
| Action space | 7-D continuous action space |
| Objects | Banana, target location, and a bowl |
| Simulator | SAPIEN engine via ManiSkill framework (panda_wristcam agent) |

Table 2.

Percentage contribution of reward components across training phases (mean ± standard deviation over environment steps).

| Phase | Reach | Post-Grasp | Place |
|---|---|---|---|
| Early | | | |
| Mid | | | |
| Late | | | |
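One plausible way to obtain per-step percentage contributions like those summarized in Table 2 is to normalize the absolute component magnitudes so they sum to 100. This is an assumption about the procedure, and the function name and dictionary keys are hypothetical.

```python
def contribution_percentages(components):
    """Map each reward component to its share (in %) of the total magnitude.

    Assumption: shares are computed from absolute values at a single step;
    if every component is zero, all shares are reported as 0.
    """
    total = sum(abs(v) for v in components.values())
    if total == 0.0:
        return {name: 0.0 for name in components}
    return {name: 100.0 * abs(v) / total for name, v in components.items()}


step_shares = contribution_percentages(
    {"reach": 1.0, "post_grasp": 1.0, "place": 2.0}
)
```

Averaging such per-step shares within a training phase would give the mean and standard deviation described in the table caption.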

Table 3.

Representation probing on PickBanana latents (mean ± std over three seeds). Contact AUROC measures interaction alignment. Δdist measures progress encoding. ΔObj is averaged over object displacement probes. Occ/Noise ΔAUROC reports the change in Contact AUROC under occlusion and noise (closer to 0 indicates higher robustness).

| Variant | Contact AUROC ↑ | Δdist ↑ | ΔObj (avg) ↑ | Occ ΔAUROC | Noise ΔAUROC |
|---|---|---|---|---|---|
| FiLM (full) | 0.879 ± 0.019 | 0.841 ± 0.011 | 0.552 ± 0.029 | −0.002 ± 0.026 | −0.001 ± 0.006 |
| State-only | 0.852 ± 0.009 | 0.813 ± 0.008 | 0.570 ± 0.025 | | |
| Image-only | 0.638 ± 0.142 | 0.821 ± 0.022 | 0.529 ± 0.024 | | |
| Concat (no FiLM) | 0.531 ± 0.206 | 0.846 ± 0.014 | 0.577 ± 0.035 | | |
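For reference, the AUROC and ΔAUROC quantities in Table 3 can be computed as in the sketch below. It uses the standard pairwise-ranking definition of AUROC and takes ΔAUROC as perturbed minus clean; the probe scores and labels are made-up toy values, not data from the paper.

```python
def auroc(scores, labels):
    """AUROC as the probability that a positive outranks a negative.

    Ties count as half a win. Labels must be 0 or 1, with both present.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy contact-probe outputs (hypothetical values).
labels = [1, 1, 0, 0]
clean = auroc([0.9, 0.8, 0.3, 0.1], labels)
perturbed = auroc([0.9, 0.1, 0.8, 0.3], labels)
delta_auroc = perturbed - clean  # closer to 0 means higher robustness
```

An AUROC of 0.5 corresponds to chance-level ranking, which gives context for the Concat (no FiLM) contact score in the table.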