Leveraging MCP and Corrective RAG for Scalable and Interoperable Multi-Agent Healthcare Systems

Abstract

1. Introduction

2. Scientific Background

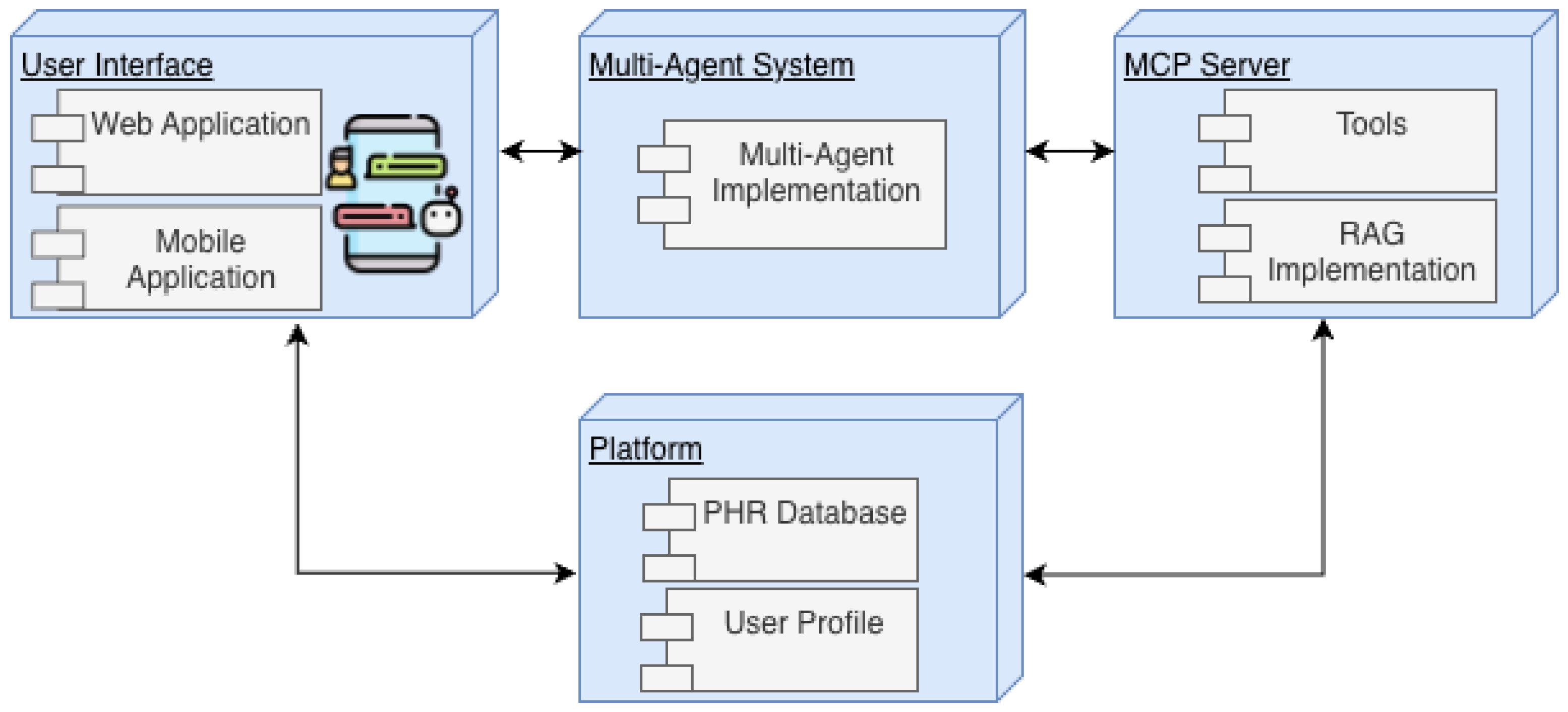

3. Design and Implementation

3.1. User Interface

3.2. Platform

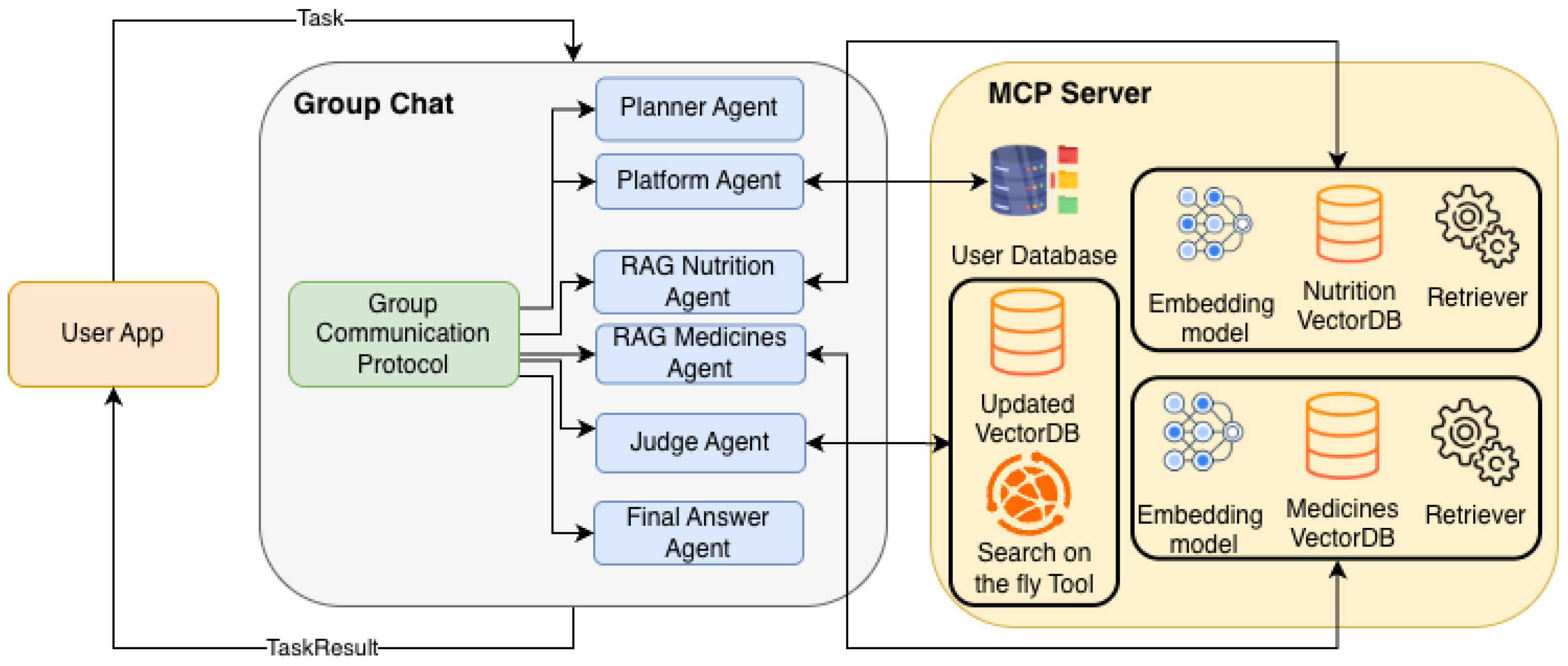

3.3. Multi-Agent System

3.4. Model Context Protocol

3.5. Implementation of MAS

| Algorithm 1 Process_User_Query() |
|---|
| Input: User Query (U) |
| Output: Final Response (R) |
| 1. Initialization Phase |
| 1: ▹ Initialize empty conversation history |
| 2: ▹ Register Agent Ensemble |
| 3: ▹ Establish MCP Tool Connections |
| 2. Context Ingestion |
| 4: ▹ Inject user query into context |
| 3. Main Orchestration Loop |
| 5: while Process_Is_Active do |
| 6: # A. Next Speaker Selection |
| 7: ▹ Model-based dynamic routing |
| 8: # B. Agent Execution Logic |
| 9: if then |
| 10: ▹ Decompose query into sub-tasks |
| 11: |
| 12: |
| 13: else if then |
| 14: if query_requires_personal_data then |
| 15: # Secure retrieval of Patient Health Records (PHR) |
| 16: |
| 17: |
| 18: |
| 19: end if |
| 20: else if then |
| 21: if query_requires_domain_knowledge then |
| 22: # 1. Standard Retrieval via MCP |
| 23: ▹ Retrieve top-k documents |
| 24: # 2. Judge Validation (CRAG Logic) |
| 25: |
| 26: if then ▹ If context is insufficient |
| 27: # 3. Corrective Web Search |
| 28: |
| 29: ▹ Enrich context with web data |
| 30: else |
| 31: |
| 32: end if |
| 33: |
| 34: |
| 35: end if |
| 36: else if then |
| 37: ▹ Synthesize all agent outputs |
| 38: if then ▹ Validate relevance and safety |
| 39: |
| 40: ▹ Terminate loop |
| 41: else |
| 42: ▹ Trigger re-evaluation |
| 43: end if |
| 44: end if |
| 45: end while |
| 4. Output Generation |
| 46: return R |
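The control flow of Algorithm 1 can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the agent names (`planner`, `platform`, `rag`, `final`), the `Context` structure, the rule-based `select_next_speaker` (standing in for the model-based router), and every stub string are hypothetical. The CRAG branch and the final relevance and safety check are reduced to placeholders.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple


@dataclass
class Context:
    """Shared conversation state passed between agents (hypothetical structure)."""
    history: List[Tuple[str, str]] = field(default_factory=list)
    done: Set[str] = field(default_factory=set)
    response: Optional[str] = None


def select_next_speaker(ctx: Context) -> str:
    """Stand-in for model-based routing: pick the first agent that has not run yet."""
    for name in ("planner", "platform", "rag", "final"):
        if name not in ctx.done:
            return name
    return "final"


def process_user_query(query: str, needs_personal: bool, needs_domain: bool,
                       max_turns: int = 10) -> str:
    ctx = Context()                         # 1. initialization: empty history
    ctx.history.append(("user", query))     # 2. context ingestion
    for _ in range(max_turns):              # 3. orchestration loop, bounded for safety
        speaker = select_next_speaker(ctx)
        if speaker == "planner":
            ctx.history.append(("planner", f"sub-tasks for: {query}"))
        elif speaker == "platform":
            if needs_personal:
                ctx.history.append(("platform", "PHR record (stub)"))
        elif speaker == "rag":
            if needs_domain:
                ctx.history.append(("rag", "validated context (stub)"))
        else:  # final agent: synthesize all agent outputs
            draft = " | ".join(text for role, text in ctx.history if role != "user")
            if draft:                       # placeholder for relevance and safety validation
                ctx.response = draft
                break                       # terminate loop
            ctx.done.clear()                # trigger re-evaluation
            continue
        ctx.done.add(speaker)
    return ctx.response or "No answer produced."  # 4. output generation


if __name__ == "__main__":
    print(process_user_query("Is grapefruit safe with statins?",
                             needs_personal=False, needs_domain=True))
```

The loop is bounded by `max_turns` so that a router that never selects the final agent cannot run indefinitely; the algorithm itself only specifies the `Process_Is_Active` condition.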

3.6. End-to-End Architecture Workflow

3.6.1. Phase 1: Query Decomposition (The Planner)

3.6.2. Phase 2: Retrieval via MCP

3.6.3. Phase 3: Active Validation (The Judge-Loop)

3.6.4. Phase 4: Final Synthesis

4. System in Practice

4.1. User Requirements

4.2. Prototype

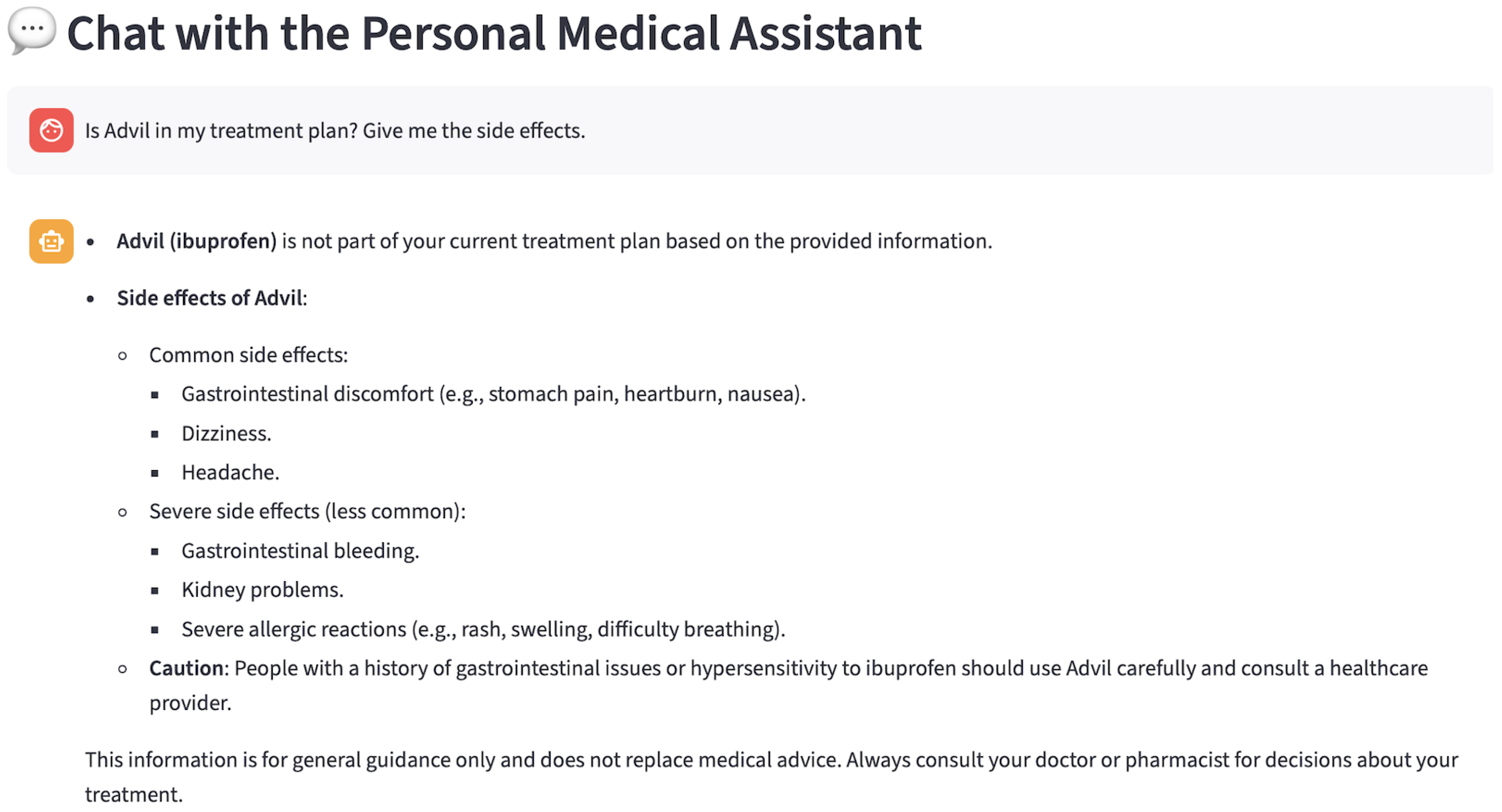

4.2.1. User Scenario

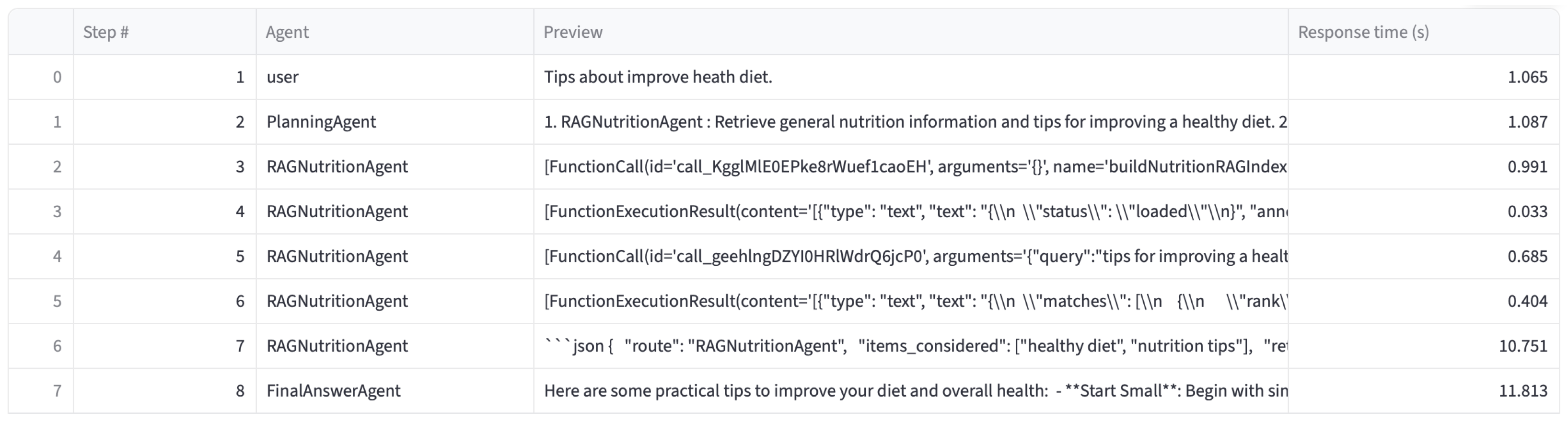

4.2.2. Monitoring Scenario

5. Evaluation and Results

5.1. Evaluation Methodology

- Presentation of Personal Data: The MAS retrieves the user’s PHR and profile information and presents only the attributes the query requires, without exposing other sensitive details such as nutrition logs, medication history, or physiological measurements.

- Personal Nutrition Information with Additional Knowledge: The MAS accesses the user’s nutrition records from the PHR and enriches them with knowledge retrieved by the RAG module, so that the answer is validated against retrieved sources.

- Personal Drug Treatment Information with Additional Knowledge: The MAS fetches medication-related information from the PHR and applies RAG-based augmentation to provide validated, evidence-informed, and up-to-date insights.

- General Nutrition Information with Additional Knowledge: For general, non-personal nutrition queries, the MAS answers from the nutrition knowledge base through the RAG module, without referencing the user’s personal PHR data.

- General Drug Information with Additional Knowledge: The MAS responds to general medication-related questions by leveraging RAG to generate validated knowledge, again without adding any personal health information from the user’s PHR.

- Combined Nutrition and Pharmacological Analysis: In this advanced scenario, the MAS integrates data from both the Nutrition RAG Agent and the Medicine RAG Agent simultaneously. This allows the system to identify potential drug-nutrient interactions (e.g., verifying if a prescribed diet is safe given the user’s current medication) and provide comprehensive safety warnings that neither agent could generate in isolation.

- Real-Time Corrective Retrieval (AC-RAG): This scenario addresses cases where the internal vector database contains insufficient, ambiguous, or outdated information. The Judge Agent evaluates the quality of the initial retrieval; if the relevance score falls below a predefined threshold of 0.7 [48], it autonomously triggers a Web Drug Search (e.g., against NLM databases) via the MCP server. This corrective mechanism fetches the latest clinical data on the fly and synthesizes it with the internal context so that the final response is factually current and accurate. The system also implements a dynamic update loop: validated external findings are automatically indexed into the vector database, which lets the assistant incrementally expand its reusable knowledge base and avoids redundant external searches for future queries.
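The corrective path in the last scenario (judge score, 0.7 threshold, web fallback, re-indexing) can be sketched as below. This is an illustration under assumptions rather than the system's actual code: `retrieve`, `judge_score`, and `web_search` are hypothetical callables, a plain Python list stands in for the vector database, and the toy keyword retriever and overlap-based judge replace the embedding search and the LLM judge. Validation of external findings is assumed to happen inside `web_search`.

```python
from typing import Callable, List

RELEVANCE_THRESHOLD = 0.7  # threshold stated in the text


def corrective_retrieve(
    query: str,
    index: List[str],
    retrieve: Callable[[str, List[str]], List[str]],
    judge_score: Callable[[str, List[str]], float],
    web_search: Callable[[str], List[str]],
) -> List[str]:
    """Return context for `query`, falling back to web search when the judge
    scores the initial retrieval below the threshold."""
    docs = retrieve(query, index)
    if judge_score(query, docs) >= RELEVANCE_THRESHOLD:
        return docs
    external = web_search(query)
    # Dynamic update loop: index external findings so later queries can reuse them.
    for doc in external:
        if doc not in index:
            index.append(doc)
    return docs + external


def keyword_retrieve(query: str, index: List[str]) -> List[str]:
    """Toy retriever: return documents sharing at least one word with the query."""
    terms = set(query.lower().split())
    return [d for d in index if terms & set(d.lower().split())]


def overlap_judge(query: str, docs: List[str]) -> float:
    """Toy judge: fraction of query words that appear in any retrieved document."""
    terms = set(query.lower().split())
    if not docs or not terms:
        return 0.0
    covered = {t for t in terms if any(t in d.lower().split() for d in docs)}
    return len(covered) / len(terms)


if __name__ == "__main__":
    knowledge = ["warfarin interacts with vitamin k"]

    def stub_web_search(query: str) -> List[str]:
        return [f"{query} guidance"]  # placeholder for an MCP web-search tool call

    print(corrective_retrieve("metformin dosage", knowledge,
                              keyword_retrieve, overlap_judge, stub_web_search))
    print(len(knowledge))  # the index grew after the corrective search
```

Because the external result is appended to the index, repeating the same query is served from the local index and does not trigger a second web search, which is the behaviour the dynamic update loop is meant to provide.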

5.2. MCP Evaluation

5.3. Comparative RAG Evaluation: Standard vs. CAG vs. AC-RAG

5.3.1. Results Analysis: The Accuracy-Efficiency Trade-Off

5.3.2. Detailed Breakdown of the Proposed Approach

5.4. System Architecture Validation

6. Discussion

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Kalathas, D.; Koulouris, D.; Menychtas, A.; Tsanakas, P.; Maglogiannis, I. Continuous machine learning for assisting AR indoor navigation. SN Comput. Sci. 2024, 5, 913.

2. Bulla, C.; Parushetti, C.; Teli, A.; Aski, S.; Koppad, S. A review of AI based medical assistant chatbot. Res. Appl. Web Dev. Des. 2020, 3, 1–14.

3. Pap, I.A.; Oniga, S. eHealth assistant AI chatbot using a large language model to provide personalized answers through secure decentralized communication. Sensors 2024, 24, 6140.

4. Ferrag, M.A.; Tihanyi, N.; Debbah, M. From LLM reasoning to autonomous AI agents: A comprehensive review. arXiv 2025, arXiv:2504.19678.

5. Hadfield, G.K.; Koh, A. An economy of AI agents. arXiv 2025, arXiv:2509.01063.

6. Masterman, T.; Besen, S.; Sawtell, M.; Chao, A. The landscape of emerging AI agent architectures for reasoning, planning, and tool calling: A survey. arXiv 2024, arXiv:2404.11584.

7. Tang, Q.; Xiang, H.; Yu, L.; Yu, B.; Lu, Y.; Han, X.; Sun, L.; Zhang, W.; Wang, P.; Liu, S.; et al. Beyond turn limits: Training deep search agents with dynamic context window. arXiv 2025, arXiv:2510.08276.

8. Khatami, S.; Frantz, C. Prompt Engineering Guidance for Conceptual Agent-based Model Extraction using Large Language Models. arXiv 2024, arXiv:2412.04056.

9. Li, X.; Wang, S.; Zeng, S.; Wu, Y.; Yang, Y. A survey on LLM-based multi-agent systems: Workflow, infrastructure, and challenges. Vicinagearth 2024, 1, 9.

10. He, J.; Treude, C.; Lo, D. LLM-Based Multi-Agent Systems for Software Engineering: Literature Review, Vision, and the Road Ahead. ACM Trans. Softw. Eng. Methodol. 2025, 34, 124.

11. Ehtesham, A.; Singh, A.; Gupta, G.K.; Kumar, S. A survey of agent interoperability protocols: Model context protocol (MCP), agent communication protocol (ACP), agent-to-agent protocol (A2A), and agent network protocol (ANP). arXiv 2025, arXiv:2505.02279.

12. Angert, T.; Suzara, M.; Han, J.; Pondoc, C.; Subramonyam, H. Spellburst: A node-based interface for exploratory creative coding with natural language prompts. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology, San Francisco, CA, USA, 29 October–1 November 2023; pp. 1–22.

13. Zhang, B.; Liu, Z.; Cherry, C.; Firat, O. When scaling meets LLM finetuning: The effect of data, model and finetuning method. arXiv 2024, arXiv:2402.17193.

14. Sawarkar, K.; Mangal, A.; Solanki, S.R. Blended RAG: Improving RAG (retriever-augmented generation) accuracy with semantic search and hybrid query-based retrievers. In Proceedings of the 2024 IEEE 7th International Conference on Multimedia Information Processing and Retrieval (MIPR), San Jose, CA, USA, 7–9 August 2024; pp. 155–161.

15. Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.; Rocktäschel, T.; et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Adv. Neural Inf. Process. Syst. 2020, 33, 9459–9474.

16. Joshi, S. Introduction to Vector Databases for Generative AI: Applications, Performance, Future Projections, and Cost Considerations. Int. Adv. Res. J. Sci. Eng. Technol. 2025, 12, 79–93.

17. Yu, W.; Iter, D.; Wang, S.; Xu, Y.; Ju, M.; Sanyal, S.; Zhu, C.; Zeng, M.; Jiang, M. Generate rather than retrieve: Large language models are strong context generators. arXiv 2022, arXiv:2209.10063.

18. Singh, A.; Ehtesham, A.; Kumar, S.; Khoei, T.T. Agentic retrieval-augmented generation: A survey on agentic rag. arXiv 2025, arXiv:2501.09136.

19. Yan, S.-Q.; Gu, J.-C.; Zhu, Y.; Ling, Z.-H. Corrective retrieval augmented generation. arXiv 2024, arXiv:2401.15884.

20. Joshi, S. Review of autonomous systems and collaborative AI agent frameworks. Int. J. Sci. Res. Arch. 2025, 14, 961–972.

21. Chan, B.J.; Chen, C.-T.; Cheng, J.-H.; Huang, H.-H. Don’t do rag: When cache-augmented generation is all you need for knowledge tasks. In Companion Proceedings of the ACM on Web Conference 2025; Association for Computing Machinery: New York, NY, USA, 2025; pp. 893–897.

22. Gilson, A.; Safranek, C.W.; Huang, T.; Socrates, V.; Chi, L.; Taylor, R.A.; Chartash, D. How does ChatGPT perform on the United States Medical Licensing Examination (USMLE)? The implications of large language models for medical education and knowledge assessment. JMIR Med. Educ. 2023, 9, e45312.

23. Kung, T.H.; Cheatham, M.; Medenilla, A.; Sillos, C.; De Leon, L.; Elepaño, C.; Madriaga, M.; Aggabao, R.; Diaz-Candido, G.; Maningo, J.; et al. Performance of ChatGPT on USMLE: Potential for AI-assisted medical education using large language models. PLoS Digit. Health 2023, 2, e0000198.

24. Koulouris, D.; Kalathas, D.; Menychtas, A.; Pasias, A.; Athanasiou, V.; Tsanakas, P.; Maglogiannis, I. A Web-Based Information System for Medical Forensics Incorporating Generative AI in Reports Compilation. In Proceedings of the IFIP International Conference on Artificial Intelligence Applications and Innovations, Limassol, Cyprus, 26–29 June 2025; Springer: Cham, Switzerland, 2026; pp. 342–352.

25. Singhal, K.; Azizi, S.; Tu, T.; Mahdavi, S.S.; Wei, J.; Chung, H.W.; Scales, N.; Tanwani, A.; Cole-Lewis, H.; Pfohl, S.; et al. Large language models encode clinical knowledge. Nature 2023, 620, 172–180.

26. Singhal, K.; Tu, T.; Gottweis, J.; Sayres, R.; Wulczyn, E.; Amin, M.; Hou, L.; Clark, K.; Pfohl, S.R.; Cole-Lewis, H.; et al. Toward expert-level medical question answering with large language models. Nat. Med. 2025, 31, 943–950.

27. Li, Y.; Li, Z.; Zhang, K.; Dan, R.; Jiang, S.; Zhang, Y. ChatDoctor: A medical chat model fine-tuned on a large language model Meta-AI (LLaMA) using medical domain knowledge. Cureus 2023, 15, e40895.

28. Han, T.; Adams, L.C.; Papaioannou, J.M.; Grundmann, P.; Oberhauser, T.; Löser, A.; Truhn, D.; Bressem, K.K. MedAlpaca—An open-source collection of medical conversational AI models and training data. arXiv 2023, arXiv:2304.08247.

29. Luo, R.; Sun, L.; Xia, Y.; Qin, T.; Zhang, S.; Poon, H.; Liu, T.Y. BioGPT: Generative pre-trained transformer for biomedical text generation and mining. Brief. Bioinform. 2022, 23, bbac409.

30. Samdani, G.; Dixit, Y.; Viswanathan, G. Leveraging LangGraph and AutoGen for Agentic AI Frameworks. World J. Adv. Eng. Technol. Sci. 2023, 8, 402–411.

31. Abbasian, M.; Azimi, I.; Rahmani, A.M.; Jain, R. Conversational health agents: A personalized LLM-powered agent framework. arXiv 2023, arXiv:2310.02374.

32. Qian, C.; Liu, W.; Liu, H.; Chen, N.; Dang, Y.; Li, J.; Yang, C.; Chen, W.; Su, Y.; Cong, X.; et al. ChatDev: Communicative agents for software development. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Bangkok, Thailand, 2024; Association for Computational Linguistics: Stroudsburg, PA, USA, 2024; pp. 15174–15186.

33. Zhang, C.; Yang, Z.; Liu, J.; Li, Y.; Han, Y.; Chen, X.; Huang, Z.; Fu, B.; Yu, G. AppAgent: Multimodal agents as smartphone users. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, Yokohama, Japan, 26 April–1 May 2025; pp. 1–20.

34. Liu, X.; Yu, H.; Zhang, H.; Xu, Y.; Lei, X.; Lai, H.; Gu, Y.; Ding, H.; Men, K.; Yang, K.; et al. AgentBench: Evaluating LLMs as agents. arXiv 2023, arXiv:2308.03688.

35. Kalathas, D.; Menychtas, A.; Maglogiannis, I.; Tsanakas, P. Incorporating Medical Assistants in eHealth Environments Using an Agentic RAG Approach. In Proceedings of the IFIP International Conference on Artificial Intelligence Applications and Innovations, Limassol, Cyprus, 26–29 June 2025; Springer: Cham, Switzerland, 2026; pp. 154–167.

36. Alghamdi, H.M.; Mostafa, A. Towards reliable healthcare LLM agents: A case study for pilgrims during Hajj. Information 2024, 15, 371.

37. Jagatap, A.; Merugu, S.; Comar, P.M. RxLens: Multi-Agent LLM-powered Scan and Order for Pharmacy. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 3: Industry Track), Online, 2025; Association for Computational Linguistics: Stroudsburg, PA, USA, 2025; pp. 822–832.

38. Agrawal, R.; Kumar, H. Enhancing Cache-Augmented Generation (CAG) with Adaptive Contextual Compression for Scalable Knowledge Integration. arXiv 2025, arXiv:2505.08261.

39. Gangavarapu, R.; Srinivasan, A.R.A.; Moparthi, V. Evaluating Accuracy in Large Language Models: Benchmarking Corrective Rag vs. Naive Retrieval Augmented Generation Approach. In Proceedings of the 2025 IEEE International Conference on AI and Data Analytics (ICAD), Medford, MA, USA, 24 June 2025; pp. 1–7.

40. Marcondes, F.S.; Gala, A.; Magalhães, R.; Perez de Britto, F.; Durães, D.; Novais, P. Using Ollama. In Natural Language Analytics with Generative Large-Language Models: A Practical Approach with Ollama and Open-Source LLMs; Springer: Cham, Switzerland, 2025; pp. 23–35.

41. Şakar, T.; Emekci, H. Maximizing RAG efficiency: A comparative analysis of RAG methods. Nat. Lang. Process. 2025, 31, 1–25.

42. Douze, M.; Guzhva, A.; Deng, C.; Johnson, J.; Szilvasy, G.; Mazaré, P.E.; Lomeli, M.; Hosseini, L.; Jégou, H. The Faiss library. IEEE Trans. Big Data 2025, 1–17.

43. World Health Organization. Healthy Diet. Available online: https://www.who.int/news-room/fact-sheets/detail/healthy-diet (accessed on 10 December 2025).

44. U.S. Department of Agriculture, Agricultural Research Service. FoodData Central. Available online: https://fdc.nal.usda.gov/ (accessed on 10 December 2025).

45. National Library of Medicine (US). DailyMed. Available online: https://dailymed.nlm.nih.gov/dailymed/ (accessed on 10 December 2025).

46. National Library of Medicine (US). MedlinePlus XML Files. Available online: https://medlineplus.gov/xml.html (accessed on 10 December 2025).

47. Ray, P.P. A survey on model context protocol: Architecture, state-of-the-art, challenges and future directions. TechRxiv 2025.

48. Han, S.; Junior, G.T.; Balough, T.; Zhou, W. Judge’s Verdict: A Comprehensive Analysis of LLM Judge Capability Through Human Agreement. arXiv 2025, arXiv:2510.09738.

49. Alabdulwahab, A.; Japic, C.; Le, C.; Dubey, D.; Trivedi, D.; Hope, J.; Stone, P.; Srivastava, S.; Tashman, A.; Zhang, A. Comparative Study of Large Language Model Evaluation Frameworks with a Focus on NLP vs. LLM-As-A-Judge Metrics. In Proceedings of the 2025 Systems and Information Engineering Design Symposium (SIEDS), Charlottesville, VA, USA, 2 May 2025; pp. 410–415.

50. Es, S.; James, J.; Anke, L.E.; Schockaert, S. Ragas: Automated evaluation of retrieval augmented generation. In Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations, St. Julian’s, Malta, 2024; Association for Computational Linguistics: Stroudsburg, PA, USA, 2024; pp. 150–158.

| Scenario | Queries | Planner | Platform | RAG Nutri | RAG Med | Judge | Final |
|---|---|---|---|---|---|---|---|
| 1 | 10 | X | X | X | | | |
| 2 | 15 | X | X | X | X | X | |
| 3 | 15 | X | X | X | X | X | |
| 4 | 20 | X | X | X | X | | |
| 5 | 20 | X | X | X | X | | |
| 6 | 15 | X | X | X | X | X | |
| 7 | 15 | X | X | X | X | | |

| Scenario | Number of Queries | MCP Use Metric | Duration (s) |
|---|---|---|---|
| 1 | 10 | Very High | 2.97 |
| 2 | 15 | Moderate | 7.44 |
| 3 | 15 | High | 8.20 |
| 4 | 20 | High | 5.24 |
| 5 | 20 | High | 5.10 |
| 6 | 15 | High | 9.25 |
| 7 | 15 | Very High | 11.50 |
| Overall | 110 | High | 7.06 |

| Approach | Faithfulness | Answer Relevancy | Context Relevance | Avg. Latency (s) | Tokens per Request |
|---|---|---|---|---|---|
| Typical RAG | 0.85 | 0.64 | 0.55 | 30.30 | 37,057 |
| CAG (Cache) | 0.82 | 0.66 | 0.56 | 26.54 | 68,593 |
| AC-RAG | 0.88 | 0.86 | 0.78 | 33.40 | 52,500 |
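The accuracy-efficiency trade-off in the comparison of Typical RAG, CAG, and AC-RAG can be made concrete by expressing each AC-RAG value as a relative change against the Typical RAG baseline. The snippet below only re-expresses the tabulated numbers; the dictionary keys and the helper `relative_change` are names chosen for this illustration.

```python
# Values copied from the comparison table (Typical RAG vs. CAG vs. AC-RAG).
results = {
    "Typical RAG": {"faithfulness": 0.85, "answer_relevancy": 0.64,
                    "context_relevance": 0.55, "latency_s": 30.30, "tokens": 37057},
    "CAG (Cache)": {"faithfulness": 0.82, "answer_relevancy": 0.66,
                    "context_relevance": 0.56, "latency_s": 26.54, "tokens": 68593},
    "AC-RAG": {"faithfulness": 0.88, "answer_relevancy": 0.86,
               "context_relevance": 0.78, "latency_s": 33.40, "tokens": 52500},
}


def relative_change(new: float, old: float) -> float:
    """Percentage change of `new` relative to `old`."""
    return (new - old) / old * 100.0


baseline = results["Typical RAG"]
proposed = results["AC-RAG"]
for metric, base_value in baseline.items():
    delta = relative_change(proposed[metric], base_value)
    print(f"{metric}: {delta:+.1f}%")
```

Read this way, AC-RAG raises answer relevancy by roughly 34% and context relevance by roughly 42% over Typical RAG, while adding about 10% latency and about 42% more tokens per request.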

| Scenario | Faithfulness | Answer Relevancy | Context Relevance | Latency (s) |
|---|---|---|---|---|
| Nutrition Agent | 0.75 | 0.98 | 0.49 | 31.20 |
| Medicine Agent | 0.72 | 0.83 | 0.81 | 32.50 |
| Combined (Nutri + Med) | 0.82 | 0.91 | 0.85 | 33.50 |

| Feature | Generic LLM | Single Agent | Std. MAS | Std. RAG | CAG | Proposed (AC-RAG) |
|---|---|---|---|---|---|---|
| Memory | None | Tool-based | Local | Vector Only | Pre-loaded Context | Shared + Vector + Web |
| Context | Short | Extended | Distributed | Retrieval Dependent | Fixed/Cached | Active & Verified |
| Reasoning | Generic | Tool-aided | Fragmented | Fact-based | Context-based | Self-Correcting |
| Accuracy | Low | Moderate | Inconsistent | Variable | High (Static) | Very High |
| Scalability | Low | Moderate | Moderate | High | Limited | High (MCP) |
| Data Type | Static Weights | External Tools | Local Data | Vector Snapshot | Cached Context | Real-Time Hybrid |
| Correction | None | Manual | None | None | None | Auto (Judge) |
| Healthcare Fit | Low | Moderate | Moderate | Moderate | Low (Static) | Very High |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Kalathas, D.; Menychtas, A.; Tsanakas, P.; Maglogiannis, I. Leveraging MCP and Corrective RAG for Scalable and Interoperable Multi-Agent Healthcare Systems. Electronics 2026, 15, 888. https://doi.org/10.3390/electronics15040888
