1. Introduction

Infrared small target detection has attracted increasing attention because infrared imaging is inherently robust to illumination and weather conditions, which makes it highly valuable for a wide range of critical applications, such as remote sensing surveillance, fire early warning, maritime security, and precision guidance. As a core component of infrared target detection and tracking systems, it aims to capture and identify small targets in diverse scenarios using infrared imaging, thereby providing reliable information for subsequent decision-making and execution. However, compared with detection in visible-light images, infrared small target detection faces substantial challenges arising from complex imaging environments, unique imaging mechanisms, and demanding application requirements. First, in long-range imaging scenarios, infrared small targets usually occupy only a few pixels and lack clear shape, fixed scale, and rich texture information, resulting in extremely limited discriminative features. Second, interference from complex backgrounds, such as clouds, birds, and other clutter, often overwhelms weak targets, leading to high false alarm rates. Third, real-world applications such as precision guidance and real-time surveillance impose strict requirements on computational efficiency, whereas improving detection reliability typically increases model complexity, making real-time performance even harder to achieve. Therefore, designing infrared small target detection algorithms that achieve high detection accuracy, low false alarm rates, and high efficiency remains a fundamental and challenging research problem.

In the early stages of infrared small target detection, numerous traditional methods were proposed, mainly including sparse matrix-based methods [1,2,3], filter-based methods [4,5,6], and local contrast-based methods [7,8,9]. Despite their effectiveness in specific scenarios, these methods suffer from inherent limitations. On the one hand, their performance heavily relies on handcrafted priors and manually tuned parameters, making them difficult to generalize across diverse and complex environments. On the other hand, their robustness to noise and clutter is limited, which restricts their ability to suppress background interference and accurately extract weak target features, leading to degraded detection performance in practical applications.

In recent years, deep learning has achieved remarkable success in computer vision and related fields, providing powerful end-to-end feature learning capabilities. Compared with traditional approaches, deep learning-based infrared small target detection methods can automatically learn discriminative representations without handcrafted features, offering superior robustness and generalization. However, due to the extremely small target size and complex backgrounds, target features are still prone to attenuation and loss during deep network propagation, which limits further performance improvements. Existing methods have attempted to alleviate these issues but remain constrained by notable drawbacks. For example, Li et al. [10] proposed a Densely Nested Attention Network (DNANet), which employs densely nested U-shaped architectures and channel-spatial attention to enhance feature interaction, but suffers from slow inference speed and limited edge localization accuracy. Li et al. [11] introduced a Multi-Directional Learnable Edge Information-Assisted Dense Nested Network, where edge information of small targets is explicitly extracted and fused with hierarchical features via dense connections, thereby strengthening the network’s perception of target boundary structures. Sun et al. [12] introduced a Receptive Field and Direction Induced Attention Network (RDIAN), which leverages receptive field and directional attention to mitigate target-background imbalance, achieving high efficiency at the cost of reduced feature representation capability.

To overcome these limitations, this paper proposes a Gradient Compensation-Based Feature Learning Network (GCFLNet) for infrared small target detection. The term “gradient-compensated” emphasizes the design philosophy of continuously reinforcing edge and structural cues during multi-stage feature learning, rather than introducing a standalone gradient operator. GCFLNet integrates the local modeling capability of convolutional neural networks with Transformer-inspired global context modeling, enabling effective representation of infrared small targets under complex backgrounds. By exploiting infrared gradient vector fields, the proposed network enhances target feature representation, enabling cleaner and more detailed feature extraction. In the encoder stage, an Edge Enhancement Module (EEM) is designed to suppress background noise while accurately capturing fine-grained target edge information. Subsequently, a Global–Local Feature Interaction (GLFI) module is introduced to cooperate with EEM, further reducing noise interference and achieving effective fusion of local details and global semantic information. In the decoder stage, a Multi-Scale Information Compensation (MSIC) module is proposed to promote deep interaction and fusion of multi-scale features by optimizing skip connections and up-sampling operations, thereby jointly improving localization accuracy and segmentation quality. The main contributions of this work are summarized as follows:

To address the issues of blurred edges and background noise interference in infrared small targets, an Edge Enhancement Module (EEM) is proposed. By leveraging infrared gradient vector field characteristics, EEM accurately captures fine target contours while adaptively suppressing background clutter and noise, effectively enhancing target–background discrimination.

To jointly model local details and global semantic dependencies, a novel Global–Local Feature Interaction (GLFI) module is designed. By combining the local correlation modeling of CNNs with an attention-inspired global context modeling mechanism, and using edge-enhanced features as guidance, GLFI enables effective fusion of local and global features while further suppressing residual noise.

To fully exploit the complementary characteristics of multi-scale features, a Multi-Scale Information Compensation (MSIC) module is introduced. This module explores spatial and channel-wise differences across feature scales and facilitates adaptive interaction between high-level and low-level features, generating more discriminative representations for infrared small target detection.

3. Methodology

In this work, the term “compensation” refers to a feature enhancement strategy that supplements structural or spatial information weakened during deep feature extraction, rather than a specific mathematical operator. The proposed gradient-compensated framework aims to continuously reinforce edge-aware and multi-scale information across different stages of the network.

Figure 1 illustrates the overall pipeline of the proposed GCFLNet. The network follows the encoder–decoder architecture of U-Net, fully exploiting its strengths in feature fusion and spatial information recovery. First, the infrared image is fed into the encoder for feature extraction. During this process, the encoder is jointly assisted by the Edge Enhancement Module (EEM), which suppresses background noise and accurately extracts fine target edge contours, providing effective edge information compensation for the encoded features. Specifically, the encoder first performs preliminary feature encoding through basic convolutional blocks. The resulting features are then processed by the Global–Local Feature Interaction (GLFI) module to capture long-range global dependencies and local detailed representations. Subsequently, the features extracted by GLFI are deeply fused with the edge-enhanced features generated by the EEM, producing enhanced representations that simultaneously preserve semantic information and fine edge details. During feature transmission and spatial reconstruction, the encoder and decoder are connected via skip connections. Along these pathways, the Multi-Scale Information Compensation (MSIC) module is employed to adaptively integrate high-level and low-level features, effectively compensating for the spatial information loss caused by down-sampling in the encoder and progressively restoring the spatial resolution of feature maps. Finally, the fused features are fed into the segmentation head, where pointwise convolution layers are used to adjust the channel dimensions and generate the final prediction, producing the segmentation results of infrared small targets.
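To make the data flow above concrete, the following NumPy sketch traces tensor shapes through a four-stage U-Net-style encoder–decoder. It is not GCFLNet itself: the stage widths in `WIDTHS`, the stride-2 down-sampling, the nearest-neighbor up-sampling, and every module function (`glfi`, `eem`, `fuse`, `msic`, `head`) are hypothetical shape-preserving placeholders chosen only to show where each component sits in the pipeline.

```python
import numpy as np

# Hypothetical stage widths; the actual channel counts of GCFLNet are not stated here.
WIDTHS = [16, 32, 64, 128]


def conv_block(x, c_out):
    """Placeholder for a basic convolutional block: only changes the channel count."""
    return np.zeros((c_out,) + x.shape[1:]) + x.mean(axis=0)


def glfi(x):
    return x  # placeholder for GLFI (shape-preserving)


def eem(x):
    return x  # placeholder for EEM edge features at the same scale


def fuse(semantic, edges):
    return semantic + edges  # placeholder fusion of GLFI and EEM outputs


def down(x):
    return x[:, ::2, ::2]  # 2x spatial down-sampling


def up(x):
    return x.repeat(2, axis=1).repeat(2, axis=2)  # nearest-neighbor 2x up-sampling


def msic(high, low):
    # Placeholder: up-sample the high-level map and use it to modulate the skip features.
    return low * up(high).mean(axis=0)


def head(x):
    return x.mean(axis=0, keepdims=True)  # placeholder for the point-wise prediction head


def forward(img):
    """Return the prediction map and the feature shapes after each stage."""
    x, skips, trace = img[None], [], []
    for i, c in enumerate(WIDTHS):  # encoder
        feat = conv_block(x, c)
        x = fuse(glfi(feat), eem(feat))
        trace.append(x.shape)
        skips.append(x)
        if i < len(WIDTHS) - 1:
            x = down(x)
    for low in reversed(skips[:-1]):  # decoder with MSIC on each skip connection
        x = msic(x, low)
        trace.append(x.shape)
    return head(x), trace


out, trace = forward(np.ones((64, 64)))
print(out.shape)  # (1, 64, 64)
```

The trace shows the resolution halving three times in the encoder (down to 8 × 8 here) and being restored stage by stage as each skip connection passes through the MSIC placeholder.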

3.1. Edge Enhancement Module

Edge contours in images represent the intrinsic boundary characteristics between targets and the background or between different homogeneous regions. They not only convey fundamental geometric shape and spatial location information of targets, but also serve as a critical discriminative cue for distinguishing targets from complex backgrounds in infrared small target detection. Unlike visible-light images, which are dominated by rich texture information, infrared images are formed based on thermal radiation differences between targets and backgrounds. As a result, infrared imagery typically exhibits blurred textures, a narrow gray-level dynamic range, and low contrast, rendering traditional texture-dependent detection methods ineffective. In contrast, edge contours generated by abrupt radiation changes between targets and backgrounds can clearly delineate the true shape and spatial extent of targets, effectively compensating for the lack of texture information. Therefore, edge features constitute a crucial entry point for infrared small target detection.

The distribution patterns of gradient vectors show significant variations across different infrared imaging scenes. In smooth background regions or cluttered noise areas, gradient vectors tend to be randomly distributed in both direction and magnitude, showing no clear structural regularity. In contrast, within regions containing infrared small targets, gradient vectors display a strong convergent pattern toward the target center. This distinctive distribution accurately characterizes the target’s edge locations and contour structures. Motivated by this observation, we propose an Edge Enhancement Module (EEM), which deeply integrates multi-scale gradient magnitude maps with features from the main encoder branch. By embedding gradient-based edge information into multi-level network features, EEM effectively compensates for the loss of fine edge details caused by progressive down-sampling in the encoder, guiding the network to focus on the structural boundaries of infrared small targets and significantly reducing the risk of targets being overwhelmed by complex backgrounds due to their small size and low contrast.
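The convergence pattern can be checked numerically on a toy example. The sketch below assumes a synthetic Gaussian blob (`gaussian_target`) as a stand-in for an infrared small target and measures the fraction of non-flat pixels whose gradient vector points toward the image center, then repeats the measurement on uniform noise. The helper names, blob size, and width are illustrative assumptions, not part of the original method.

```python
import numpy as np


def gaussian_target(size=15, sigma=1.5):
    """Synthetic bright blob used as a stand-in for an infrared small target."""
    y, x = np.mgrid[0:size, 0:size]
    c = size // 2
    return np.exp(-((x - c) ** 2 + (y - c) ** 2) / (2.0 * sigma ** 2))


def convergence_ratio(img, eps=1e-6):
    """Fraction of non-flat pixels whose gradient vector points toward the image center."""
    gy, gx = np.gradient(img)  # derivatives along rows (y) first, then columns (x)
    h, w = img.shape
    y, x = np.mgrid[0:h, 0:w]
    to_cy, to_cx = h // 2 - y, w // 2 - x  # vector from each pixel to the center
    dot = gx * to_cx + gy * to_cy  # positive when the gradient points at the center
    valid = np.hypot(gx, gy) > eps  # ignore (near-)flat pixels
    return float(np.mean(dot[valid] > 0))


rng = np.random.default_rng(0)
print(convergence_ratio(gaussian_target()))  # 1.0: every gradient points at the center
print(convergence_ratio(rng.random((15, 15))))  # much lower for unstructured noise
```

For the blob, intensity increases monotonically toward the peak, so every gradient vector converges on it; for noise, the direction is essentially random relative to the center.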

The EEM consists of two core components, an Edge Feature Extraction Unit (EFEU) and Central Difference Convolution (CDC) [28], as shown in Figure 2. In practice, multi-scale feature maps are fed into the EEM, where EFEU and CDC work collaboratively to extract accurate edge information while smoothing background noise. Specifically, EFEU employs an adaptive gradient vector computation mechanism to estimate the gradient direction and magnitude at each pixel location. By amplifying gradient differences between small targets and background clutter, EFEU extracts highly discriminative edge features from the original representations, enabling effective separation of targets from noise and faithfully preserving fine edge details. The EFEU employs a 3 × 3 convolution kernel to compute image gradients, where the Sobel operator is used to initialize the convolution weights. Specifically, the central column of the kernel in the x-direction and the central row of the kernel in the y-direction are fixed to zero, while the remaining parameters are dynamically optimized during training to adapt to diverse image characteristics and application scenarios. After obtaining the horizontal and vertical gradient responses at each spatial location, the gradient magnitude is calculated using the Euclidean norm.
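A minimal NumPy sketch of the gradient computation described for EFEU follows, assuming single-channel input, zero padding, and the cross-correlation convention used by CNN convolution layers. The class `GradientUnitSketch` and its `step` method are hypothetical: `step` only mimics a masked parameter update (real gradients would come from backpropagation) to show how the Sobel-initialized kernels keep their central column (x) and central row (y) fixed at zero while the other weights change.

```python
import numpy as np

SOBEL_X = np.array([[-1.0, 0.0, 1.0],
                    [-2.0, 0.0, 2.0],
                    [-1.0, 0.0, 1.0]])
SOBEL_Y = SOBEL_X.T.copy()

MASK_X = np.ones((3, 3))
MASK_X[:, 1] = 0.0  # central column of the x-kernel stays fixed at zero
MASK_Y = np.ones((3, 3))
MASK_Y[1, :] = 0.0  # central row of the y-kernel stays fixed at zero


def correlate3x3(img, k):
    """'Same'-size 3x3 cross-correlation with zero padding."""
    h, w = img.shape
    p = np.pad(img, 1)
    out = np.zeros((h, w))
    for i in range(3):
        for j in range(3):
            out += k[i, j] * p[i:i + h, j:j + w]
    return out


class GradientUnitSketch:
    """Hypothetical EFEU-style gradient unit with Sobel-initialized, partially fixed kernels."""

    def __init__(self):
        self.kx = SOBEL_X.copy()
        self.ky = SOBEL_Y.copy()

    def step(self, grad_kx, grad_ky, lr=0.01):
        # Masked update: only the non-fixed entries of each kernel can change.
        self.kx -= lr * grad_kx * MASK_X
        self.ky -= lr * grad_ky * MASK_Y

    def __call__(self, img):
        gx = correlate3x3(img, self.kx)
        gy = correlate3x3(img, self.ky)
        return np.sqrt(gx ** 2 + gy ** 2)  # Euclidean norm of (gx, gy)


img = np.zeros((5, 5))
img[:, 3:] = 1.0  # vertical step edge between columns 2 and 3
unit = GradientUnitSketch()
print(unit(img)[2])  # zero in the flat region, large response at the step
```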

However, while EFEU enhances fine target edges, it may introduce excessive smoothing of gradient information, potentially leading to the loss of subtle edge details. To address this limitation, CDC serves as a complementary component. Although central difference convolution was originally introduced for texture-sensitive tasks, in this work it is employed to emphasize local intensity variations and edge transitions rather than fine-grained texture patterns. This property is particularly suitable for infrared imagery, where structural gradients dominate over texture cues. By performing differential operations through weighted neighborhood aggregation, CDC effectively highlights abrupt intensity variations and exhibits strong responses to the overall location and orientation of target edges. Although CDC can be sensitive to background clutter in noisy scenes, its high computational efficiency and robust representation of global contours compensate well for the shortcomings of EFEU. Within the EEM, fine-grained gradient features extracted by EFEU are adaptively fused with robust contour features produced by CDC. This fusion strategy alleviates the over-smoothing issue inherent in single gradient-based methods while suppressing noise interference in edge detection. Consequently, the proposed EEM enhances discriminative edge structures without relying on texture characteristics and preserves detailed target boundaries without sacrificing robustness, producing stable and high-quality edge-enhanced features. These features provide reliable and discriminative guidance for subsequent detection stages, ultimately improving the overall performance of infrared small target detection.
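Central difference convolution is commonly written as a blend of a difference term and a vanilla convolution: θ · Σ w(pₙ)[x(p₀ + pₙ) − x(p₀)] + (1 − θ) · Σ w(pₙ) x(p₀ + pₙ), which simplifies to a vanilla convolution minus θ · x(p₀) · Σ w. The NumPy sketch below, assuming single-channel 3 × 3 kernels, zero padding, and a hypothetical default `theta = 0.7`, checks that the explicit and simplified forms agree. With `theta = 1` it returns zero on flat regions, which illustrates why CDC responds to intensity transitions rather than absolute intensity.

```python
import numpy as np


def correlate3x3(img, k):
    """'Same'-size 3x3 cross-correlation with zero padding."""
    rows, cols = img.shape
    p = np.pad(img, 1)
    out = np.zeros((rows, cols))
    for i in range(3):
        for j in range(3):
            out += k[i, j] * p[i:i + rows, j:j + cols]
    return out


def cdc_explicit(img, w, theta=0.7):
    """Central difference convolution written out term by term."""
    rows, cols = img.shape
    p = np.pad(img, 1)
    out = np.zeros((rows, cols))
    for i in range(3):
        for j in range(3):
            neighbor = p[i:i + rows, j:j + cols]
            out += w[i, j] * (theta * (neighbor - img) + (1.0 - theta) * neighbor)
    return out


def cdc_fast(img, w, theta=0.7):
    """Equivalent form: vanilla convolution minus theta * x(p0) * sum(w)."""
    return correlate3x3(img, w) - theta * img * w.sum()


rng = np.random.default_rng(0)
img, w = rng.random((8, 8)), rng.standard_normal((3, 3))
print(np.allclose(cdc_explicit(img, w), cdc_fast(img, w)))  # True
```

The simplified form is the one usually implemented in practice, because it costs one ordinary convolution plus an element-wise correction.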

3.2. Global–Local Feature Interaction Module

Existing infrared small target detection networks often suffer from an imbalance in feature modeling: low-frequency global information is overemphasized, while high-frequency local information is handled in a relatively coarse manner. This limitation makes it difficult to effectively capture the edge details and spatial correlations of infrared small targets. To address this issue, we revisit the complementary relationship between local and global features, as well as the intrinsic differences between convolutional neural networks (CNNs) and self-attention-inspired global context reasoning. Accordingly, we propose a Global–Local Feature Interaction Module (GLFI), which organically integrates globally shared convolutional weights with context-aware attention weights. This design enables precise modeling of high-frequency local details while efficiently capturing long-range dependencies across different spatial locations, thereby enhancing the model’s perception capability for infrared small targets.

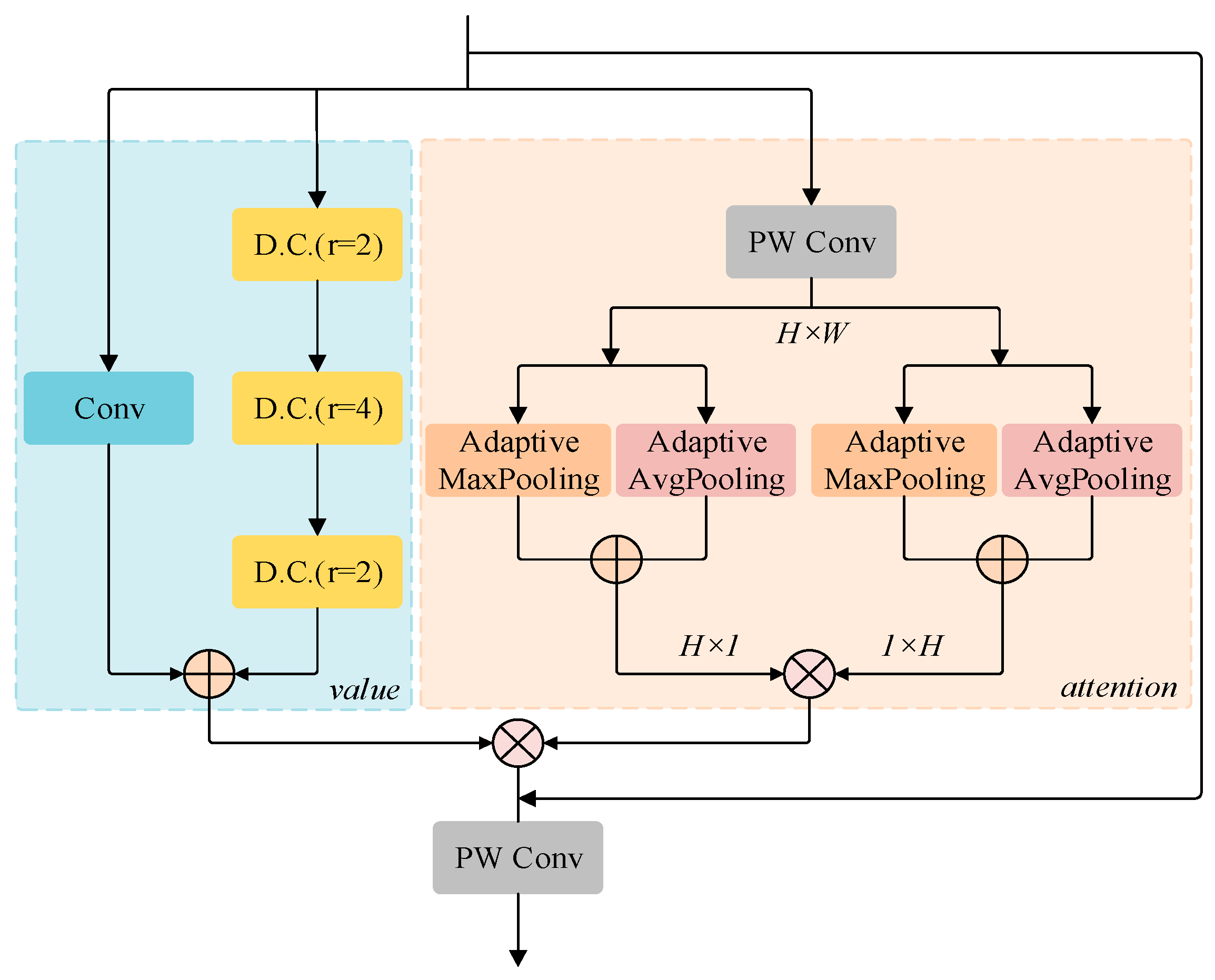

As illustrated in Figure 3, the GLFI module simulates global attention via pooling-based weighting to achieve deep interaction between global and local features. Its core architecture consists of convolution layers, dilated convolution layers, and fully connected layers. Specifically, GLFI extracts local features through a parallel combination of standard convolution and dilated convolution with different dilation rates. Through dilated sampling, dilated convolution significantly enlarges the receptive field without increasing the number of parameters or the computational cost, allowing more effective modeling of long-range dependencies. However, the sparse sampling pattern of dilated convolution skips the pixels between sampled positions, so fine local information may be lost, which is particularly harmful for infrared small targets that occupy only a few pixels. To mitigate this issue, standard convolution is introduced as a complementary branch. Its dense sampling characteristic compensates for the information loss caused by dilated convolution, ensuring the integrity and richness of local feature representations.
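The receptive-field argument can be made concrete with a short calculation. For a k × k kernel with dilation rate d, the covered span is k + (k − 1)(d − 1) while the parameter count stays k². The sketch below uses hypothetical dilation rates 1, 2, and 3 (the rates used in GLFI are not stated here) and also lists which offsets each kernel actually reads along one axis.

```python
def effective_kernel_size(k, d):
    """Span (in pixels) covered by a k x k kernel with dilation rate d."""
    return k + (k - 1) * (d - 1)


def sampled_offsets(k, d):
    """1-D offsets, relative to the center, that a dilated kernel actually reads."""
    r = k // 2
    return [d * t for t in range(-r, r + 1)]


for d in (1, 2, 3):  # hypothetical dilation rates
    print(d, effective_kernel_size(3, d), sampled_offsets(3, d), "params:", 3 * 3)
# 1 3 [-1, 0, 1] params: 9
# 2 5 [-2, 0, 2] params: 9
# 3 7 [-3, 0, 3] params: 9

# Union of the dense (d=1) and dilated (d=2) branches: no gaps near the center.
print(sorted(set(sampled_offsets(3, 1)) | set(sampled_offsets(3, 2))))  # [-2, -1, 0, 1, 2]
```

The d = 2 kernel never reads offsets ±1, which is exactly the gap the dense standard-convolution branch fills.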

While extracting local features, GLFI further introduces point-wise convolution (PWConv) to efficiently fuse the input features with the edge-enhanced features extracted by EEM. Leveraging globally shared weights, PWConv reinforces the guiding role of edge information in feature representation and enhances the sensitivity of the network to target boundaries. Subsequently, a multi-dimensional feature aggregation strategy combining adaptive max pooling and adaptive average pooling is employed to aggregate fused features along different spatial dimensions. Adaptive max pooling highlights the most salient local responses, strengthening critical gradient cues of infrared small targets, whereas adaptive average pooling smooths background clutter while preserving global statistical characteristics. By performing matrix multiplication on the outputs of these two pooling operations, GLFI generates context-aware attention weights that dynamically characterize the importance of features at different spatial locations.

Finally, the refined local feature maps are multiplied with the learned attention weights to achieve weighted fusion of local details and global contextual information, thereby establishing feature dependencies across the entire spatial domain. The GLFI module remains lightweight while enabling the network to jointly model global context and high-frequency local details. This enhances attention to potential small target regions and provides more discriminative feature representations for subsequent detection tasks.
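The pooling-based attention in GLFI admits more than one reading, so the NumPy sketch below implements one plausible interpretation; it is an assumption, not the authors’ implementation. Point-wise convolution fuses the input and EEM edge features, max pooling to an H × 1 map and average pooling to a 1 × W map are combined by a per-channel matrix product into an H × W attention map, and a sigmoid (also assumed) bounds the weights before they re-weight the local features. The `local` tensor is a stand-in for the output of the parallel standard and dilated convolution branches.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def pwconv(x, weight):
    """Point-wise (1x1) convolution: the same channel-mixing matrix at every pixel.

    x: (C_in, H, W), weight: (C_out, C_in) -> (C_out, H, W)
    """
    return np.einsum("oc,chw->ohw", weight, x)


def pooled_attention(fused):
    """Assumed two-pooling attention: (C, H, 1) @ (C, 1, W) -> (C, H, W)."""
    row_max = fused.max(axis=2, keepdims=True)  # strongest response in each row
    col_avg = fused.mean(axis=1, keepdims=True)  # average statistic of each column
    return sigmoid(np.matmul(row_max, col_avg))  # values in (0, 1)


def glfi_sketch(x, edge, local, weight):
    """Fuse input and edge features, derive attention, re-weight the local features."""
    fused = pwconv(np.concatenate([x, edge], axis=0), weight)
    return local * pooled_attention(fused)


rng = np.random.default_rng(0)
C, H, W = 4, 6, 5
x = rng.standard_normal((C, H, W))
edge = rng.standard_normal((C, H, W))  # stand-in for EEM edge features
local = rng.standard_normal((C, H, W))  # stand-in for the conv + dilated-conv branches
weight = rng.standard_normal((C, 2 * C))  # hypothetical 1x1 conv weights
print(glfi_sketch(x, edge, local, weight).shape)  # (4, 6, 5)
```

Because the attention map is an outer product of a row descriptor and a column descriptor, every spatial position receives a weight that depends on its whole row and column, which is one inexpensive way to approximate global context.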

3.3. Multi-Scale Information Compensation Module

In deep learning-based feature extraction frameworks, high-level and low-level features exhibit pronounced complementarity. High-level features, obtained through successive layers of abstraction and aggregation, encode rich semantic information and category-discriminative cues, enabling strong high-level semantic understanding and accurate characterization of a target’s intrinsic attributes. However, their spatial resolution is relatively low, leading to sparsification and loss of spatial information, which limits their ability to represent fine-grained edges and precise positional details. In contrast, low-level features preserve abundant low-level visual details and local spatial information, allowing accurate localization of subtle target structures. Nevertheless, these features primarily describe primitive data attributes, exhibit weak inter-channel semantic correlations, and contain substantial redundant information due to the lack of effective global contextual constraints. Conventional skip-connection mechanisms simply concatenate or add low-level encoder features to high-level decoder features, without adequately modeling the intrinsic relationships across different feature hierarchies. As a result, the fused representations often fail to jointly preserve semantic richness and spatial detail.

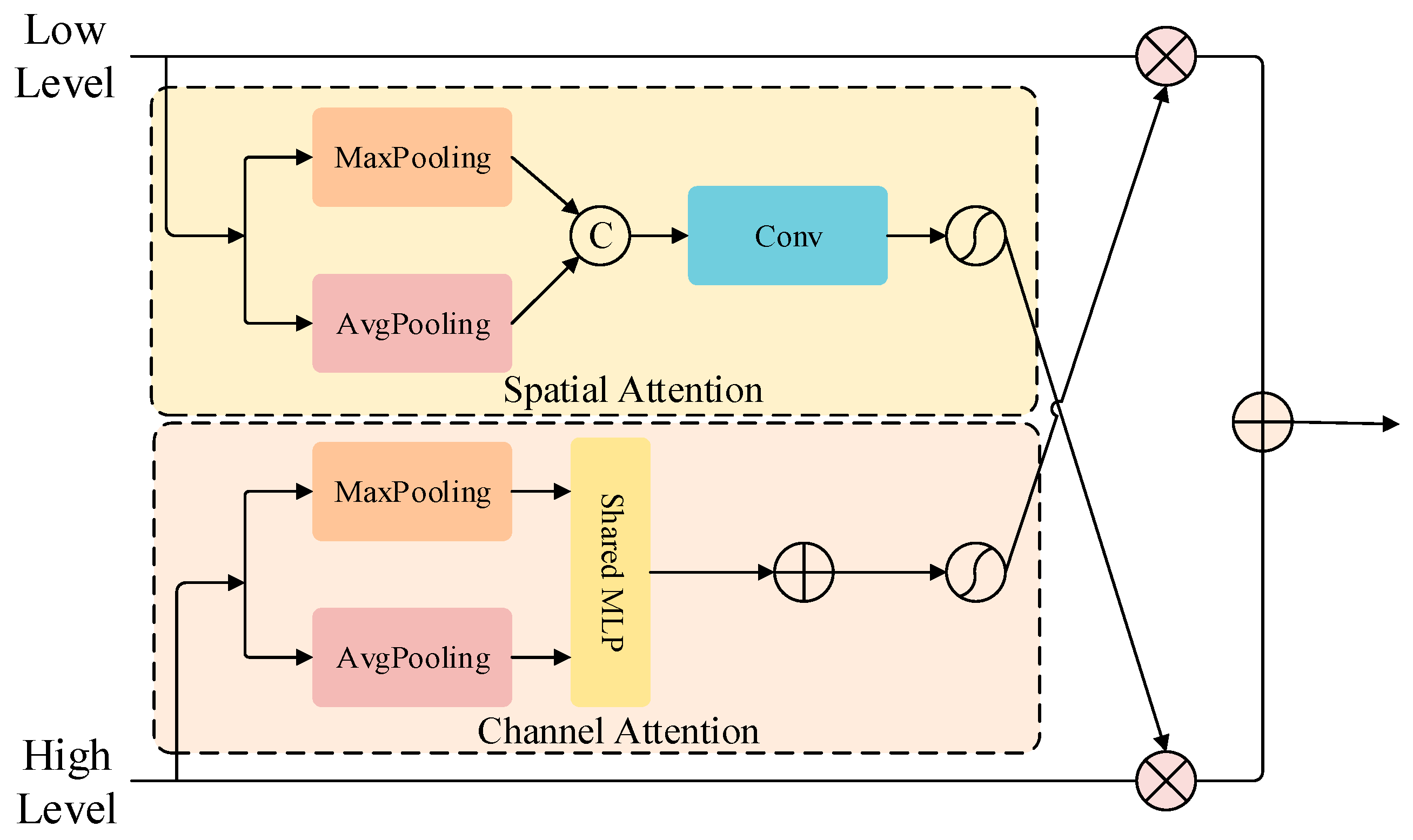

To address this limitation, we propose a Multi-Scale Information Compensation Module (MSIC), which enables efficient fusion of high- and low-level features through differentiated feature enhancement strategies. As illustrated in Figure 4, MSIC designs dedicated optimization paths tailored to the characteristics of features at different scales. For high-level features, a spatial attention mechanism is introduced to reweight spatial dimensions, enhancing spatial details and local structural information, thereby compensating for the inherent spatial resolution deficiency and improving the model’s perception of target location and contour structure. For low-level features, a channel attention mechanism is employed to adaptively learn the importance of each channel, emphasizing discriminative channel responses while suppressing redundant and irrelevant information, thus strengthening inter-channel semantic correlations and endowing low-level features with enhanced global contextual awareness. In MSIC, information compensation is implemented through attention-based feature modulation rather than direct concatenation or residual addition. Through this differentiated attention-driven enhancement and fusion strategy, the MSIC module adaptively exploits the complementary relationships between high- and low-level features, producing feature representations that simultaneously preserve rich semantic information and fine-grained spatial details. Moreover, it effectively suppresses redundant information and background noise, significantly improving the purity, discriminability, and effectiveness of the fused features.
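The two attention paths can be sketched with the standard squeeze-and-excitation form of channel attention and the CBAM form of spatial attention. Both choices, the reduction ratio, the 3 × 3 spatial kernel, and nearest-neighbor up-sampling are assumptions, since the exact MSIC layers are not specified here. How the two modulated maps are finally merged is also left open, so the sketch simply returns both.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def channel_attention(low, w1, w2):
    """SE-style channel attention on low-level features of shape (C, H, W)."""
    squeeze = low.mean(axis=(1, 2))  # global average pooling -> (C,)
    excite = sigmoid(w2 @ np.maximum(w1 @ squeeze, 0.0))  # FC -> ReLU -> FC -> sigmoid
    return low * excite[:, None, None]  # one weight per channel


def spatial_attention(high, kernel):
    """CBAM-style spatial attention on (up-sampled) high-level features (C, H, W)."""
    desc = np.stack([high.mean(axis=0), high.max(axis=0)])  # (2, H, W) descriptors
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(desc, ((0, 0), (pad, pad), (pad, pad)))
    _, h, w = high.shape
    logits = np.zeros((h, w))
    for c in range(2):  # k x k convolution over the two descriptor maps
        for i in range(k):
            for j in range(k):
                logits += kernel[c, i, j] * padded[c, i:i + h, j:j + w]
    return high * sigmoid(logits)[None]  # one weight per spatial position


def upsample2x(x):
    return x.repeat(2, axis=1).repeat(2, axis=2)


rng = np.random.default_rng(0)
low = rng.random((8, 16, 16)) + 0.1  # low-level skip features
high = rng.random((16, 8, 8)) + 0.1  # high-level decoder features
w1, w2 = rng.standard_normal((2, 8)), rng.standard_normal((8, 2))  # reduction ratio 4
kernel = rng.standard_normal((2, 3, 3))
low_ref = channel_attention(low, w1, w2)
high_ref = spatial_attention(upsample2x(high), kernel)
print(low_ref.shape, high_ref.shape)  # (8, 16, 16) (16, 16, 16)
```

Channel attention rescales each low-level channel uniformly, whereas spatial attention rescales every position of the high-level map by the same factor across channels, which matches the division of labor described above.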

4. Experiment

4.1. Experimental Data and Experimental Settings

Comprehensive experiments are conducted on two public infrared small target detection and segmentation benchmarks, IRSTD-1K and NUDT, to thoroughly evaluate the detection performance and generalization capability of the proposed GCFLNet. The IRSTD-1K dataset contains 1000 real-world infrared images collected under diverse scenarios, covering various target shapes, scale distributions, and complex cluttered backgrounds. All samples are provided with pixel-level accurate annotations, offering highly reliable supervision for model training and evaluation. The NUDT dataset is a large-scale single-frame infrared small target detection benchmark, consisting of 1327 images rendered by combining real infrared backgrounds with synthetic infrared targets. It includes representative complex scenes such as urban areas, fields, oceans, and cloud layers, enabling effective assessment of model robustness across different environments.

For comparative experiments, traditional methods are implemented on a workstation equipped with an Intel® Core™ i5-8300H @ 2.30 GHz CPU and an NVIDIA GeForce GTX 1060 GPU, while deep learning–based methods are evaluated on a high-performance server with an Intel® Xeon® E5-2620 v4 @ 2.10 GHz CPU and an NVIDIA TITAN XP 12 GB GPU. For methods without publicly available code, the official results reported in their original publications are directly adopted for comparison. For methods with open-source implementations, retraining is performed without modifying network architectures or hyperparameter settings to ensure fair and objective comparisons. For the proposed GCFLNet, the batch size is set to 16 and the maximum number of training epochs is 1500. SoftIoULoss is employed to accommodate the pixel-level supervision requirements of infrared small target segmentation. The AdamW optimizer is adopted to improve training stability and mitigate overfitting. A multi-stage learning rate decay strategy is applied to dynamically adjust the learning rate during training. In addition, a deep supervision scheme is introduced by imposing supervisory signals at multiple network levels, further accelerating convergence and enhancing detection accuracy.
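The training objective can be sketched as follows. `soft_iou_loss` follows the usual soft-IoU formulation with a smoothing constant (an assumed value of 1). `deep_supervision_loss` averages it over several side outputs with equal weights, which is also an assumption, and it assumes the side outputs are already resized to the mask resolution. `multistep_lr` shows a multi-stage step decay whose milestones and decay factor are hypothetical because they are not reported here.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def soft_iou_loss(logits, target, smooth=1.0):
    """1 - soft IoU between sigmoid probabilities and a binary mask."""
    prob = sigmoid(logits)
    inter = (prob * target).sum()
    union = prob.sum() + target.sum() - inter
    return 1.0 - (inter + smooth) / (union + smooth)


def deep_supervision_loss(side_logits, target):
    """Average the loss over outputs taken at several network levels (equal weights)."""
    return float(np.mean([soft_iou_loss(logits, target) for logits in side_logits]))


def multistep_lr(base_lr, epoch, milestones, gamma):
    """Multi-stage decay: multiply by gamma once for every milestone already passed."""
    return base_lr * gamma ** sum(epoch >= m for m in milestones)


target = np.zeros((8, 8))
target[2:4, 2:4] = 1.0  # a 2x2 target
good = np.where(target > 0, 20.0, -20.0)  # confident, correct logits
bad = np.full((8, 8), -20.0)  # predicts background everywhere
print(round(soft_iou_loss(good, target), 4))  # 0.0
print(round(soft_iou_loss(bad, target), 4))  # 0.8
```

With the smoothing constant, an all-background prediction on a 4-pixel target gives 1 − 1/5 = 0.8 rather than a loss of exactly 1.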

4.2. Performance Evaluation

Performance evaluation for infrared small target detection should jointly consider shape representation accuracy and localization reliability, as a single metric is insufficient to comprehensively reflect the overall capability of a model. Conventional pixel-level metrics widely used in object detection and segmentation, such as Intersection over Union (IoU), Precision, and Recall, mainly focus on quantifying the geometric similarity between predicted results and ground-truth annotations. However, infrared small targets are typically characterized by blurred contours, weak texture cues, and extremely limited spatial extent. Relying solely on shape-oriented metrics is therefore inadequate for accurately assessing a model’s localization performance. Additional task-specific metrics are required to establish a more complete evaluation framework. To this end, a multi-dimensional evaluation protocol is adopted in this work. IoU is used as the fundamental metric to quantify the pixel-wise overlap between the predicted segmentation and the ground-truth mask, reflecting the model’s ability to represent and fit the target shape. Meanwhile, Probability of Detection (Pd) and False Alarm Rate (Fa) are introduced as key localization-oriented metrics. Pd measures the effectiveness of target detection, while Fa evaluates the model’s resistance to background clutter and noise. Furthermore, Receiver Operating Characteristic (ROC) curves are plotted to illustrate the trade-off between Pd and Fa under different decision thresholds, enabling a more intuitive and objective comparison of detection performance across different methods.

IoU is a core shape evaluation metric for infrared small target segmentation. It quantifies the pixel-level overlap between the predicted segmentation region and the ground-truth annotation, reflecting the consistency between the predicted target shape and the true target shape. IoU is calculated as follows:

$$\mathrm{IoU} = \frac{A_{inter}}{A_{union}}$$

where $A_{inter}$ and $A_{union}$ denote the intersection and the union of the predicted target pixels and the ground-truth target pixels, respectively.

Pd is a core metric for evaluating localization accuracy in infrared small target detection. It is defined as the ratio of correctly detected targets to the total number of ground-truth targets and quantifies the model’s ability to effectively identify infrared small targets. A predicted target is typically regarded as correctly detected when its centroid deviates from that of a ground-truth target by less than a predefined distance threshold. Pd is calculated as follows:

$$P_d = \frac{T_{correct}}{T_{all}}$$

where $T_{correct}$ and $T_{all}$ denote the number of correctly predicted targets and the number of all ground-truth targets, respectively.

Fa is a critical metric for measuring robustness against background clutter. It represents the proportion of background pixels incorrectly classified as target pixels over the entire image. Fa is calculated as follows:

$$F_a = \frac{P_{false}}{P_{all}}$$

where $P_{false}$ and $P_{all}$ denote the number of falsely predicted target pixels and the number of all image pixels, respectively.
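The three metrics can be computed on binary masks as in the NumPy sketch below. IoU and Fa follow the definitions above directly. For Pd, the sketch assumes the widely used target-level protocol: connected components are extracted with 8-connectivity, and a ground-truth target counts as detected when an unused predicted component’s centroid lies within a distance threshold, here a hypothetical `max_dist = 3` pixels. The function names are illustrative.

```python
import numpy as np
from collections import deque


def component_centroids(mask):
    """Centroids of 8-connected foreground components, found by breadth-first search."""
    h, w = mask.shape
    seen = np.zeros((h, w), dtype=bool)
    centroids = []
    for r in range(h):
        for c in range(w):
            if not mask[r, c] or seen[r, c]:
                continue
            seen[r, c] = True
            queue, pixels = deque([(r, c)]), []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and not seen[ny, nx]:
                            seen[ny, nx] = True
                            queue.append((ny, nx))
            centroids.append(np.mean(pixels, axis=0))
    return centroids


def iou(pred, gt):
    inter = np.logical_and(pred, gt).sum()
    union = np.logical_or(pred, gt).sum()
    return inter / union if union else 1.0


def detection_probability(pred, gt, max_dist=3.0):
    """Fraction of ground-truth targets matched by a predicted component centroid."""
    gt_c, pred_c = component_centroids(gt), component_centroids(pred)
    used, correct = set(), 0
    for g in gt_c:
        for i, p in enumerate(pred_c):
            if i not in used and np.linalg.norm(g - p) < max_dist:
                used.add(i)
                correct += 1
                break
    return correct / len(gt_c) if gt_c else 1.0


def false_alarm_rate(pred, gt):
    """Fraction of all image pixels that are background predicted as target."""
    return np.logical_and(pred, np.logical_not(gt)).sum() / pred.size


gt = np.zeros((8, 8), dtype=bool)
gt[1:3, 1:3] = True  # one 2x2 target
pred = gt.copy()
pred[6, 6] = True  # one spurious pixel
print(iou(pred, gt), detection_probability(pred, gt), false_alarm_rate(pred, gt))
# 0.8 1.0 0.015625
```

The example shows why the metrics are complementary: the single false pixel leaves Pd untouched, lowers IoU from 1.0 to 0.8, and appears in Fa as 1/64.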

4.3. Performance Comparison with Previous Methods

To comprehensively evaluate the detection performance of the proposed GCFLNet, extensive comparisons are conducted with representative infrared small target detection methods, including filter-based approaches (Top-Hat, Max–Median), low-rank based methods (RIPT, IPI), local contrast-based methods (TLLCM, WSLCM), and recent deep learning-based approaches (UIUNet, DNANet, RDIAN, etc.). All comparative methods strictly follow the experimental settings and parameter configurations reported in their original papers to ensure fairness and reliability.

As shown in Table 1, GCFLNet achieves the best or highly competitive results on all core metrics. In terms of IoU, GCFLNet attains 71.93% on the IRSTD-1K dataset, outperforming the second-best method, MSHNet (67.16%), by 4.77 percentage points and showing an order-of-magnitude improvement over the traditional local contrast-based methods. On the NUDT dataset, GCFLNet achieves the highest IoU of 86.49%, further demonstrating its strong capability in accurate target shape modeling and contour preservation. For detection probability, GCFLNet achieves a Pd of 93.27% on IRSTD-1K, slightly lower than MSHNet but markedly higher than the traditional methods and most deep learning methods. On the NUDT dataset, GCFLNet reaches the best Pd of 98.60%, indicating excellent target detection capability under complex background conditions. In terms of false alarm rate, GCFLNet achieves the lowest Fa of 4.17 on NUDT, outperforming AGPCNet and substantially reducing false detections compared with DNANet and RDIAN. On IRSTD-1K, GCFLNet also maintains competitive Fa performance, resulting in more balanced overall detection behavior. Unlike lightweight approaches that trade detection reliability for higher frame rates, GCFLNet leverages the parallelism of its multi-branch architecture to achieve competitive inference speed while improving detection accuracy and reducing false alarms, yielding a better balance between performance and efficiency. Although NUDT contains synthetic targets, the consistent gains on both the synthetic and the real dataset suggest that GCFLNet captures robust gradient-based structural cues rather than dataset-specific generation patterns.

To further analyze the trade-off between detection probability and false alarm rate, ROC curves of different methods are plotted on both the IRSTD-1K and NUDT datasets, as illustrated in Figure 5. The ROC curve of GCFLNet rapidly approaches the ideal upper-left region, and its AUC value remains highly competitive, confirming its advantage in achieving high detection accuracy with low false alarms.

Although GCFLNet achieves robust performance in most challenging scenarios, it still exhibits limitations under certain extreme conditions. Specifically, when infrared small targets are embedded in backgrounds with highly similar gradient and intensity distributions, the edge responses may become indistinct, leading to occasional missed detections. In addition, under extremely low signal-to-clutter ratios or in the presence of strong structural clutter (e.g., dense cloud boundaries or stripe-like noise), gradient enhancement may introduce weak false responses. Moreover, targets approaching single-pixel scale remain challenging due to the lack of sufficient spatial context. These cases indicate potential directions for future improvement, such as incorporating stronger cross-scale context modeling or temporal information.

Figure 6 presents qualitative detection results of different methods. Traditional approaches suffer from limited handcrafted features and often fail under low-contrast targets or heavy background clutter, leading to frequent missed detections and false alarms. In contrast, GCFLNet accurately localizes small targets and produces precise segmentation results even in challenging scenarios with tiny targets and complex backgrounds. This performance gain mainly stems from the collaborative effect of internal modules. The EEM enhances target boundaries while suppressing background noise. The GLFI captures both global dependencies and local representations, preventing small target features from being diluted. The MSIC further enables adaptive fusion of high- and low-level features, improving detection accuracy and segmentation precision, and effectively reducing both missed detections and false alarms.

Overall, by jointly leveraging EEM, GLFI, and MSIC, GCFLNet significantly enhances feature representation and localization capability for infrared small targets, achieving superior detection accuracy and robustness under complex backgrounds.

4.4. Ablation Study

To investigate the individual contributions and synergistic effects of the core components in GCFLNet, ablation experiments are conducted on the NUDT dataset using U-Net as the baseline. By progressively incorporating EEM, GLFI, and MSIC into the baseline model, the impact of each module on detection performance is quantitatively analyzed. The experimental results are summarized in Table 2.

When only EEM is introduced, the model achieves notable improvements in IoU, Pd, and Fa compared with the baseline. This indicates that EEM effectively enhances the extraction of target boundary information while suppressing background clutter, thereby reducing both missed detections and false alarms. These results demonstrate that EEM plays a critical role in strengthening edge-aware representations and providing more discriminative features for subsequent processing.

When only GLFI is added, the improvement in IoU is relatively limited, whereas Pd and Fa exhibit clear optimization. This suggests that the primary strength of GLFI lies in capturing global–local relational features, which improves target localization accuracy and suppresses background-induced false alarms rather than directly refining target shape fitting. When EEM and GLFI are jointly integrated, the edge-enhanced features provided by EEM effectively complement the feature interaction process in GLFI, further reinforcing target representations and mitigating noise interference. As a result, all evaluation metrics are significantly improved, validating the strong complementarity and synergistic interaction between these two modules.

After incorporating MSIC into the baseline model, all metrics show consistent performance gains, with particularly notable improvements in Pd and Fa. This demonstrates that MSIC possesses strong multi-scale feature fusion capability, effectively compensating for spatial information loss caused by encoder down-sampling and enabling adaptive integration of high- and low-level features.

Further analysis of the pairwise module combinations provides deeper insight into their interactions. The integration of EEM and GLFI yields more evident overall improvements compared with the use of each module individually. The explicit boundary cues introduced by EEM offer reliable guidance for the global modeling process in GLFI, thereby facilitating more effective interaction between local structural details and global semantic information. The combination of EEM and MSIC preserves edge sensitivity while promoting cross-level information propagation, leading to more complete and discriminative feature representations. In contrast, the GLFI and MSIC configuration contributes more to structural coherence and semantic stability. However, its ability to suppress low-level background noise is relatively weaker than that of combinations involving EEM.

When all three modules are incorporated, the network achieves the best overall performance. Improvements are consistently observed across IoU, Pd and Fa compared with any single-module or dual-module configuration. These results indicate that the three components complement each other in terms of feature enhancement, dependency modeling, and multi-scale compensation.