Efficient and Controllable Image Generation on the Edge: A Survey on Algorithmic and Architectural Optimization

Abstract

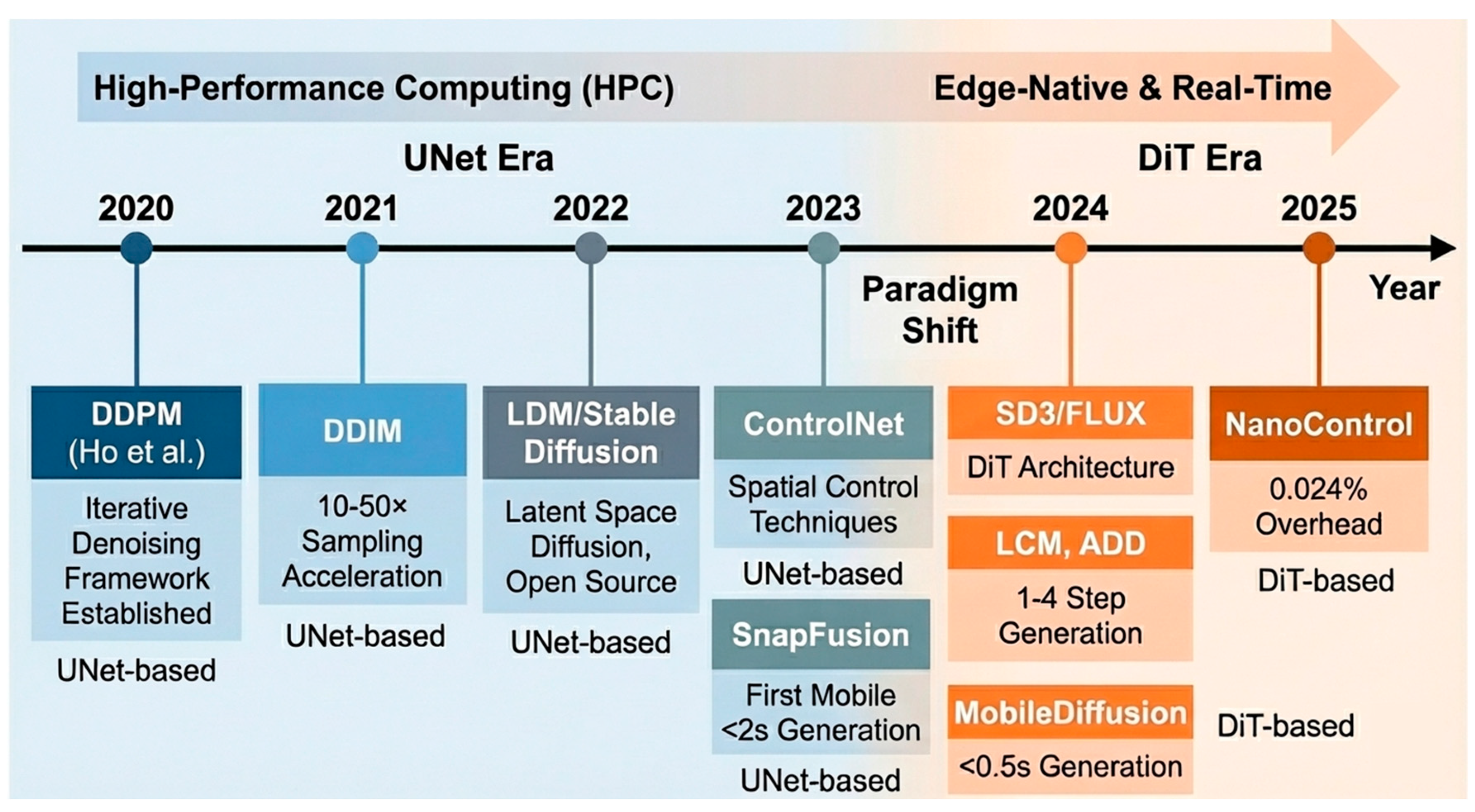

1. Introduction

1.1. Motivation: The Gap Between GenAI Demands and Edge Constraints

1.2. Problem Definition: The “Efficiency–Quality–Control” Trilemma

1.3. Scope and Contribution: The Four-Layer Software Optimization Stack

- Layer I (model architecture optimization): Design-time structural decisions made before or during initial training, including backbone selection (UNet vs. DiT), block configuration, and macro-architecture design (e.g., channel width, attention placement).

- Layer II (efficient controllability): Integration of control mechanisms into the base architecture, ranging from high-overhead encoder replication (ControlNet) to minimalist attention injection (NanoControl).

- Layer III (sampling and algorithmic acceleration): Algorithmic techniques that reduce the number of sampling steps or computational cost per step, independent of the underlying architecture (e.g., LCM, rectified flow).

- Layer IV (model compression): Post-training optimizations applied to pre-trained models prior to deployment, including quantization (FP16 to INT8), pruning, and memory optimization techniques.
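
As a rough illustration of what the Layer IV bit-width reduction involves, the following sketch applies symmetric per-tensor INT8 quantization to a short list of weights. It is a minimal sketch under stated assumptions, not the method of any surveyed work: the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, and practical pipelines typically rely on per-channel scales and calibration data.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer level and clamp to the INT8 range used here.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map integer levels back to approximate float values."""
    return [v * scale for v in q]


# Hypothetical sample weights, for illustration only.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Storing each weight in one byte instead of the two bytes used by FP16 halves the weight footprint, and the rounding error per weight in this scheme stays within about half of `scale`.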

1.4. Comparison with Existing Surveys

2. Background: Metrics and Baselines

2.1. Quality Metrics

2.1.1. Distributional Metrics (FID, IS)

2.1.2. Semantic and Esthetic Metrics (CLIP, Esthetic Score)

2.1.3. Control Accuracy Metrics (mIoU, Edge-F1)

- Depth: Correlation coefficient measurement after depth estimation via MiDaS [39].

- Pose: Keypoint detection via OpenPose [40], followed by PCKh/OKS calculation.

- Semantic: Segmentation mIoU measurement based on ADE20K [43].

- Compositionality: Attribute binding, object relationships, and complex composition evaluation via T2I-CompBench [44].
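
Two of the measurements listed above reduce to short formulas once the detector outputs are available. The sketch below computes mIoU over flattened label maps and a Pearson correlation coefficient over flattened depth maps. It assumes the segmentation and MiDaS outputs have already been extracted; the function names and toy inputs are hypothetical.

```python
from math import sqrt


def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes present in either flattened label map."""
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union > 0:  # skip classes absent from both maps
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:  # correlation is undefined for constant input
        return 0.0
    return cov / sqrt(var_x * var_y)


# Toy inputs: class ids per pixel, and depth values per pixel.
miou = mean_iou([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2)
corr = pearson([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
```

Returning 0.0 for constant inputs is a design choice made here for simplicity; an evaluation script might instead skip such samples.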

| Condition Type | Primary Metric | Measurement Method | Standard Dataset |
|---|---|---|---|
| Segmentation | mIoU | Mask2Former [45] extraction | ADE20K [43] |
| Depth | RMSE, δ thresholds | MiDaS [39] extraction | NYU Depth V2 [46] |
| Pose (OpenPose) | PCK, mAP (OKS) | OpenPose [40] detection | COCO-Pose [47] |
| Edge (Canny) | F1-Score | OpenCV Canny [41] | BSDS500 [48] |
| Edge (HED) | ODS-F, OIS-F | HED [42] detector | BSDS500 [48] |
| Identity | DINO similarity, ArcFace cosine | Feature extraction | Custom |
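
For the depth and Canny-edge rows of the table, the metric arithmetic can be sketched as follows: RMSE, a δ-threshold accuracy (the fraction of pixels whose ratio max(pred/gt, gt/pred) falls below a threshold, assumed here to be 1.25), and a pixel-wise F1 score. Benchmark edge evaluation on BSDS500 usually matches edges within a small distance tolerance, which this simplification omits. All function names and inputs are hypothetical.

```python
from math import sqrt


def depth_rmse(pred, gt):
    """Root-mean-square error between predicted and reference depths."""
    return sqrt(sum((p - g) ** 2 for p, g in zip(pred, gt)) / len(gt))


def delta_accuracy(pred, gt, threshold=1.25):
    """Fraction of pixels with max(pred/gt, gt/pred) below the threshold.

    Assumes strictly positive depth values.
    """
    hits = sum(1 for p, g in zip(pred, gt) if max(p / g, g / p) < threshold)
    return hits / len(gt)


def edge_f1(pred_edges, gt_edges):
    """Strict pixel-wise F1 between two binary edge maps (no tolerance)."""
    tp = sum(1 for p, g in zip(pred_edges, gt_edges) if p and g)
    fp = sum(1 for p, g in zip(pred_edges, gt_edges) if p and not g)
    fn = sum(1 for p, g in zip(pred_edges, gt_edges) if g and not p)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy inputs: flattened depth maps and flattened binary edge maps.
rmse = depth_rmse([1.0, 2.0, 4.0], [1.0, 2.0, 2.0])
delta1 = delta_accuracy([1.0, 2.0, 4.0], [1.0, 2.0, 2.0])
f1 = edge_f1([1, 1, 0, 0], [1, 0, 1, 0])
```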

2.2. Theoretical Efficiency Metrics

2.2.1. Computational Complexity (FLOPs, MACs)

2.2.2. Model Size and Parameters

2.2.3. Data Precision (Bit-Width)

2.3. Baseline Architectures

2.3.1. UNet-Based Models (SD1.5, SDXL)

2.3.2. DiT-Based Models (SD3, FLUX)

3. Layer I: Model Architecture Optimization

3.1. Structural Complexity Analysis

3.1.1. Convolution (UNet) vs. Attention (DiT) Complexity

3.1.2. The Quadratic Cost of Global Attention

3.1.3. Memory Access Patterns and Hardware Friendliness

3.2. Neural Architecture Search (NAS) Approaches

3.3. Efficient Backbone Design

3.3.1. Block Removal and Channel Reduction

3.3.2. Depth-Wise Separable Convolutions (Mobile-Optimized UNet)

4. Layer II: Efficient Controllability

4.1. High-Overhead Mechanisms

4.1.1. ControlNet: The Cost of Model Replication

4.1.2. ControlNet++ and Cycle Consistency

4.2. Low-Overhead Adapters

4.2.1. T2I-Adapter and IP-Adapter

4.2.2. LoRA-Based Control

4.3. Architecture-Integrated Control (Minimalist)

4.3.1. ControlNet-XS: Architectural Bottlenecking

4.3.2. NanoControl and OminiControl: Attention/KV Injection

4.4. Control Accuracy vs. Generalization Trade-Off

5. Layer III: Sampling and Algorithmic Acceleration

5.1. Step Reduction Algorithms

5.1.1. Latent Consistency Models (LCM)

5.1.2. Rectified Flow and Flow Matching

5.2. One-Step Generation Techniques

5.3. Algorithmic Caching Strategies

6. Layer IV: Model Compression Techniques

6.1. Quantization Algorithms

6.1.1. Post-Training Quantization (PTQ) for Diffusion

6.1.2. Impact of Bit-Width Reduction (Information Loss Analysis)

6.2. Structural Pruning and Distillation

6.3. Algorithmic Memory Optimization

7. Quantitative Analysis (Software-Centric)

7.1. Control-Efficiency Analysis

7.1.1. Parameter Efficiency Leaderboard (CES)

7.1.2. Parameter Count vs. Control Quality

7.2. Algorithmic Efficiency Analysis

7.3. Theoretical Computational Reduction

8. Discussion: The Limits of Software Optimization

8.1. Summary of Algorithmic Optimization Gains

8.2. The Gap: Theoretical FLOPs vs. Real-World Performance

8.3. Future Work: Towards System-Level Optimization

8.3.1. Hardware–Software Co-Design for Memory-Bound Architectures

8.3.2. Lightweight Control for Next-Generation Architectures

8.3.3. Cross-Layer Optimization Interactions

8.3.4. Extension to Multimodal and Domain-Specific Generation

9. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative Adversarial Nets. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Montreal, QC, Canada, 8–13 December 2014; pp. 2672–2680.

2. Kingma, D.P.; Welling, M. Auto-Encoding Variational Bayes. In Proceedings of the International Conference on Learning Representations (ICLR), Banff, AB, Canada, 14–16 April 2014.

3. Larsen, A.B.L.; Sønderby, S.K.; Larochelle, H.; Winther, O. Autoencoding beyond pixels using a learned similarity metric. In Proceedings of the International Conference on Machine Learning (ICML), New York, NY, USA, 19–24 June 2016; pp. 1558–1566.

4. Wang, X.; Jiang, H.; Zeng, T.; Dong, Y. An Adaptive Fused Domain-Cycling Variational Generative Adversarial Network for Machine Fault Diagnosis under Data Scarcity. Inf. Fusion 2026, 126, 103616.

5. Yan, J.; Cheng, Y.; Zhang, F.; Li, M.; Zhou, N.; Jin, B.; Wang, H.; Yang, H.; Zhang, W. Research on Multimodal Techniques for Arc Detection in Railway Systems with Limited Data. Struct. Health Monit. 2025.

6. Ho, J.; Jain, A.; Abbeel, P. Denoising Diffusion Probabilistic Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Virtual, 6–12 December 2020; pp. 6840–6851.

7. Song, J.; Meng, C.; Ermon, S. Denoising Diffusion Implicit Models. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 3–7 May 2021.

8. Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-Resolution Image Synthesis with Latent Diffusion Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 10684–10695.

9. Li, Y.; Wang, H.; Jin, Q.; Hu, J.; Chemerys, P.; Fu, Y.; Wang, Y.; Tulyakov, S.; Ren, J. SnapFusion: Text-to-Image Diffusion Model on Mobile Devices within Two Seconds. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

10. Chen, J.; Ran, X. Deep Learning with Edge Computing: A Review. Proc. IEEE 2019, 107, 1655–1674.

11. Xu, D.; Li, T.; Li, Y.; Su, X.; Tarkoma, S.; Jiang, T.; Crowcroft, J.; Hui, P. Edge Intelligence: Empowering Intelligence to the Edge of Network. Proc. IEEE 2021, 109, 1778–1837.

12. Zhang, L.; Rao, A.; Agrawala, M. Adding Conditional Control to Text-to-Image Diffusion Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; pp. 3836–3847.

13. Peebles, W.; Xie, S. Scalable Diffusion Models with Transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; pp. 4195–4205.

14. Esser, P.; Kulal, S.; Blattmann, A.; Entezari, R.; Müller, J.; Saini, H.; Levi, Y.; Lorenz, D.; Sauer, A.; Boesel, F.; et al. Scaling Rectified Flow Transformers for High-Resolution Image Synthesis. In Proceedings of the International Conference on Machine Learning (ICML), Vienna, Austria, 21–27 July 2024.

15. Black Forest Labs. FLUX.1 Technical Report. 2024. Available online: https://blackforestlabs.ai/ (accessed on 14 February 2025).

16. Luo, S.; Tan, Y.; Huang, L.; Li, J.; Zhao, H. Latent Consistency Models: Synthesizing High-Resolution Images with Few-Step Inference. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 7–11 May 2024.

17. Sauer, A.; Lorenz, D.; Blattmann, A.; Rombach, R. Adversarial Diffusion Distillation. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024.

18. Zhao, Y.; Li, Y.; Ge, Z.; Lin, G. MobileDiffusion: Subsecond Text-to-Image Generation on Mobile Devices. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024.

19. Cao, H.; Tan, C.; Gao, Z.; Xu, Y.; Chen, G.; Heng, P.A.; Li, S.Z. A Survey on Generative Diffusion Models. IEEE Trans. Knowl. Data Eng. 2024, 36, 2814–2830.

20. Song, Y.; Dhariwal, P.; Chen, M.; Sutskever, I. Consistency Models. In Proceedings of the International Conference on Machine Learning (ICML), Honolulu, HI, USA, 23–29 July 2023; pp. 32211–32252.

21. Kang, M.; Zhu, J.Y.; Zhang, R.; Park, J.; Shechtman, E.; Paris, S.; Park, T. Scaling up GANs for Text-to-Image Synthesis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; pp. 10124–10134.

22. Ravi, S.; Anand, V.; Kumar, A.; Athikomrattanakul, S. Efficient Memory Management for On-Device AI Inference. In Proceedings of the ACM International Conference on Mobile Computing and Networking (MobiCom), Madrid, Spain, 2–6 October 2023.

23. Mou, C.; Wang, X.; Xie, L.; Wu, Y.; Zhang, J.; Qi, Z.; Shan, Y.; Qie, X. T2I-Adapter: Learning Adapters to Dig out More Controllable Ability for Text-to-Image Diffusion Models. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 20–27 February 2024; pp. 4296–4304.

24. Ye, H.; Zhang, J.; Liu, S.; Han, X.; Yang, W. IP-Adapter: Text Compatible Image Prompt Adapter for Text-to-Image Diffusion Models. arXiv 2023, arXiv:2308.06721.

25. Dao, T.; Fu, D.Y.; Ermon, S.; Rudra, A.; Ré, C. FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 28 November–9 December 2022; pp. 16344–16359.

26. Podell, D.; English, Z.; Lacey, K.; Blattmann, A.; Dockhorn, T.; Müller, J.; Penna, J.; Rombach, R. SDXL: Improving Latent Diffusion Models for High-Resolution Image Synthesis. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 7–11 May 2024.

27. Chen, J.; Yu, J.; Ge, C.; Yao, L.; Xie, E.; Wu, Y.; Wang, Z.; Kwok, J.; Luo, P.; Lu, H.; et al. PixArt-α: Fast Training of Diffusion Transformer for Photorealistic Text-to-Image Synthesis. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 7–11 May 2024.

28. Yang, L.; Zhang, Z.; Song, Y.; Hong, S.; Xu, R.; Zhao, Y.; Zhang, W.; Cui, B.; Yang, M.H. Diffusion Models: A Comprehensive Survey of Methods and Applications. ACM Comput. Surv. 2023, 56, 105.

29. Ma, Z.; Zhang, Y.; Liu, B.; Sun, T.; Ge, Z.; Feng, Y.; Yang, S.; Zhang, K. Efficient Diffusion Models: A Comprehensive Survey from Principles to Practices. arXiv 2024, arXiv:2410.11795.

30. Heusel, M.; Ramsauer, H.; Unterthiner, T.; Nessler, B.; Hochreiter, S. GANs Trained by a Two Time-Scale Update Rule Converge to a Local Nash Equilibrium. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 6626–6637.

31. Salimans, T.; Goodfellow, I.; Zaremba, W.; Cheung, V.; Radford, A.; Chen, X. Improved Techniques for Training GANs. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Barcelona, Spain, 5–10 December 2016; pp. 2234–2242.

32. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models from Natural Language Supervision. In Proceedings of the International Conference on Machine Learning (ICML), Virtual, 18–24 July 2021; pp. 8748–8763.

33. Schuhmann, C.; Beaumont, R.; Vencu, R.; Gordon, C.; Wightman, R.; Cherti, M.; Coombes, T.; Katta, A.; Mullis, C.; Wortsman, M.; et al. LAION-5B: An Open Large-Scale Dataset for Training Next Generation Image-Text Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 28 November–9 December 2022; pp. 25278–25294.

34. Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image Quality Assessment: From Error Visibility to Structural Similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

35. Borji, A. Pros and Cons of GAN Evaluation Measures: New Developments. Comput. Vis. Image Underst. 2022, 215, 103329.

36. Hessel, J.; Holtzman, A.; Forbes, M.; Le Bras, R.; Choi, Y. CLIPScore: A Reference-free Evaluation Metric for Image Captioning. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), Punta Cana, Dominican Republic, 7–11 November 2021; pp. 7514–7528.

37. Xu, J.; Liu, X.; Wu, Y.; Tong, Y.; Li, Q.; Ding, M.; Tang, J.; Dong, Y. ImageReward: Learning and Evaluating Human Preferences for Text-to-Image Generation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

38. Wu, X.; Hao, Y.; Sun, K.; Chen, Y.; Zhu, F.; Zhao, R.; Li, H. Human Preference Score v2: A Solid Benchmark for Evaluating Human Preferences of Text-to-Image Synthesis. arXiv 2023, arXiv:2306.09341.

39. Ranftl, R.; Bochkovskiy, A.; Koltun, V. Vision Transformers for Dense Prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtual, 10–17 October 2021; pp. 12179–12188.

40. Cao, Z.; Hidalgo, G.; Simon, T.; Wei, S.E.; Sheikh, Y. OpenPose: Realtime Multi-Person 2D Pose Estimation Using Part Affinity Fields. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 172–186.

41. Canny, J. A Computational Approach to Edge Detection. IEEE Trans. Pattern Anal. Mach. Intell. 1986, PAMI-8, 679–698.

42. Xie, S.; Tu, Z. Holistically-Nested Edge Detection. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 1395–1403.

43. Zhou, B.; Zhao, H.; Puig, X.; Fidler, S.; Barriuso, A.; Torralba, A. Scene Parsing through ADE20K Dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 633–641.

44. Huang, K.; Sun, K.; Xie, E.; Li, Z.; Liu, X. T2I-CompBench: A Comprehensive Benchmark for Open-world Compositional Text-to-Image Generation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

45. Cheng, B.; Misra, I.; Schwing, A.G.; Kirillov, A.; Girdhar, R. Masked-attention Mask Transformer for Universal Image Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 1290–1299.

46. Silberman, N.; Hoiem, D.; Kohli, P.; Fergus, R. Indoor Segmentation and Support Inference from RGBD Images. In Proceedings of the European Conference on Computer Vision (ECCV), Florence, Italy, 7–13 October 2012; pp. 746–760.

47. Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common Objects in Context. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, 6–12 September 2014; pp. 740–755.

48. Arbelaez, P.; Maire, M.; Fowlkes, C.; Malik, J. Contour Detection and Hierarchical Image Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 898–916.

49. Caron, M.; Touvron, H.; Misra, I.; Jégou, H.; Mairal, J.; Bojanowski, P.; Joulin, A. Emerging Properties in Self-Supervised Vision Transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtual, 10–17 October 2021; pp. 9650–9660.

50. Deng, J.; Guo, J.; Xue, N.; Zafeiriou, S. ArcFace: Additive Angular Margin Loss for Deep Face Recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 4690–4699.

51. Molchanov, P.; Tyree, S.; Karras, T.; Aila, T.; Kautz, J. Pruning Convolutional Neural Networks for Resource Efficient Inference. In Proceedings of the International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017.

52. Sze, V.; Chen, Y.H.; Yang, T.J.; Emer, J.S. Efficient Processing of Deep Neural Networks: A Tutorial and Survey. Proc. IEEE 2017, 105, 2295–2329.

53. Gholami, A.; Kim, S.; Dong, Z.; Yao, Z.; Mahoney, M.W.; Keutzer, K. A Survey of Quantization Methods for Efficient Neural Network Inference. In Low-Power Computer Vision; Chapman and Hall/CRC: Boca Raton, FL, USA, 2022; pp. 291–326.

54. Li, Y.; Shen, Y.; Gao, S.; Liu, Z.; Xu, Y.; Zhang, W.; Lu, H.; Huang, G. Q-Diffusion: Quantizing Diffusion Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; pp. 17535–17545.

55. Micikevicius, P.; Narang, S.; Alben, J.; Diamos, G.; Elsen, E.; Garcia, D.; Ginsburg, B.; Houston, M.; Kuchaiev, O.; Venkatesh, G.; et al. Mixed Precision Training. In Proceedings of the International Conference on Learning Representations (ICLR), Vancouver, BC, Canada, 30 April–3 May 2018.

56. Shang, Y.; Yuan, Z.; Xie, B.; Wu, B.; Yan, Y. Post-Training Quantization on Diffusion Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; pp. 1972–1981.

57. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

58. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

59. Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Proceedings of the Medical Image Computing and Computer-Assisted Intervention (MICCAI), Munich, Germany, 5–9 October 2015; pp. 234–241.

60. Liu, X.; Gong, C.; Liu, Q. Flow Straight and Fast: Learning to Generate and Transfer Data with Rectified Flow. In Proceedings of the International Conference on Learning Representations (ICLR), Kigali, Rwanda, 1–5 May 2023.

61. Cherti, M.; Beaumont, R.; Wightman, R.; Wortsman, M.; Ilharco, G.; Gordon, C.; Schuhmann, C.; Schmidt, L.; Jitsev, J. Reproducible Scaling Laws for Contrastive Language-Image Learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; pp. 2818–2829.

62. Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer. J. Mach. Learn. Res. 2020, 21, 1–67.

63. Shen, H.; Zhang, J.; Xiong, B.; Hu, R.; Chen, S.; Wan, Z.; Wang, X.; Zhang, Y.; Gong, Z.; Bao, G.; et al. Efficient diffusion models: A survey 2024. arXiv 2025, arXiv:2502.06805.

64. Ignatov, A.; Timofte, R.; Kulik, A.; Yang, S.; Wang, K.; Baum, F.; Wu, M.; Xu, L.; Van Gool, L. AI Benchmark: All About Deep Learning on Smartphones in 2019. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops (ICCVW), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 3617–3635.

65. Pope, R.; Douglas, S.; Chowdhery, A.; Devlin, J.; Bradbury, J.; Heek, J.; Xiao, K.; Agrawal, S.; Dean, J. Efficiently Scaling Transformer Inference. In Proceedings of the Machine Learning and Systems (MLSys), Miami, FL, USA, 4–8 June 2023.

66. Elsken, T.; Metzen, J.H.; Hutter, F. Neural Architecture Search: A Survey. J. Mach. Learn. Res. 2019, 20, 1–21.

67. Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications. arXiv 2017, arXiv:1704.04861.

68. Liu, S.; Zhu, J.; Lu, J.; Gong, Y.; Li, L.; Cheng, B.; Ma, Y.; Wu, L.; Wu, X.; Leng, D.; et al. NanoControl: A Lightweight Framework for Precise and Efficient Control in Diffusion Transformer. arXiv 2025, arXiv:2508.10424.

69. Li, M.; Cong, Y.; Zhang, G.; Wu, Y.; Xu, P.; Gu, Q.; Xu, K. ControlNet++: Improving Conditional Controls with Efficient Consistency Feedback. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024.

70. Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 25–29 April 2022.

71. Ruiz, N.; Li, Y.; Jampani, V.; Pritch, Y.; Rubinstein, M.; Aberman, K. DreamBooth: Fine Tuning Text-to-Image Diffusion Models for Subject-Driven Generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; pp. 22500–22510.

72. Liu, S.Y.; Wang, C.Y.; Yin, H.; Molchanov, P.; Wang, Y.C.F.; Cheng, K.T.; Chen, M.H. DoRA: Weight-Decomposed Low-Rank Adaptation. In Proceedings of the International Conference on Machine Learning (ICML), Vienna, Austria, 21–27 July 2024.

73. Zavadski, D.; Ryll, J.P.; Kneip, L. ControlNet-XS: Designing an Efficient and Effective Architecture for Controlling Text-to-Image Diffusion Models. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024.

74. Tan, Z.; Cong, Y.; Li, M.; Wang, Y.; Wu, S.; Zhang, G. OminiControl: Minimal and Universal Control for Diffusion Transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Honolulu, HI, USA, 19–25 October 2025.

75. Qin, C.; Zhang, S.; Zhang, N.; Bai, J.; Zhang, Y.; Shen, H.; Yang, H. UniControl: A Unified Diffusion Model for Controllable Visual Generation in the Wild. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

76. Luo, S.; Tan, Y.; Patil, S.; Gu, D.; von Platen, P.; Passos, A.; Huang, L.; Li, J.; Zhao, H. LCM-LoRA: A Universal Stable-Diffusion Acceleration Module. arXiv 2023, arXiv:2311.05556.

77. Lipman, Y.; Chen, R.T.Q.; Ben-Hamu, H.; Nickel, M.; Le, M. Flow Matching for Generative Modeling. In Proceedings of the International Conference on Learning Representations (ICLR), Kigali, Rwanda, 1–5 May 2023.

78. Liu, X.; Zhang, X.; Ma, J.; Peng, J.; Liu, Q. InstaFlow: One Step is Enough for High-Quality Diffusion-Based Text-to-Image Generation. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 7–11 May 2024.

79. Yan, H.; Yang, L.; Zhang, Z.; Luo, J. PeRFlow: Piecewise Rectified Flow as Universal Plug-and-Play Accelerator. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 9–15 December 2024.

80. Oquab, M.; Darcet, T.; Moutakanni, T.; Vo, H.; Szafraniec, M.; Khalidov, V.; Fernandez, P.; Haziza, D.; Massa, F.; El-Nouby, A.; et al. DINOv2: Learning Robust Visual Features without Supervision. arXiv 2024, arXiv:2304.07193.

81. Sauer, A.; Boesel, F.; Dockhorn, T.; Blattmann, A.; Esser, P.; Rombach, R. Fast High-Resolution Image Synthesis with Latent Adversarial Diffusion Distillation. In Proceedings of the ACM SIGGRAPH Asia Conference, Tokyo, Japan, 3–6 December 2024.

82. Ma, X.; Fang, G.; Wang, X. DeepCache: Accelerating Diffusion Models for Free. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 17–21 June 2024; pp. 15762–15772.

83. He, Y.; Liu, L.; Liu, J.; Wu, W.; Zhou, H.; Zhuang, B. PTQD: Accurate Post-Training Quantization for Diffusion Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

84. Zhao, J.; Zhang, B.; Chen, Z.; Wang, Z.; Zhao, Y.; Tian, Y.; Yuan, C. MixDQ: Memory-Efficient Few-Step Text-to-Image Diffusion Models with Metric-Decoupled Mixed Precision Quantization. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024.

85. Li, Y.; Lin, J.; Tang, H.; Sun, K.; Song, Y.; Han, S. SVDQuant: Absorbing Outliers by Low-Rank Components for 4-Bit Diffusion Models. In Proceedings of the International Conference on Learning Representations (ICLR), Singapore, 24–28 April 2025.

86. LeCun, Y.; Denker, J.S.; Solla, S.A. Optimal Brain Damage. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Denver, CO, USA, 27–30 November 1989; pp. 598–605.

87. Fang, G.; Ma, X.; Wang, X. Structural Pruning for Diffusion Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

88. Castells, T.; Yamamoto, H.; Moro, A.; Kobayashi, T.; Otani, M. LD-Pruner: Efficient Pruning of Latent Diffusion Models using Task-Agnostic Insights. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 17–21 June 2024.

89. Zhang, K.; Li, D.; Li, Y.; Liu, Z. EcoDiff: Economizing Diffusion Models for Better Efficiency. arXiv 2024, arXiv:2403.11111.

90. Bar-Tal, O.; Yariv, L.; Lipman, Y.; Dekel, T. MultiDiffusion: Fusing Diffusion Paths for Controlled Image Generation. In Proceedings of the International Conference on Machine Learning (ICML), Honolulu, HI, USA, 23–29 July 2023; pp. 1737–1752.

91. Dao, T. FlashAttention-2: Faster Attention with Better Parallelism and Work Partitioning. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 7–11 May 2024.

92. Chen, T.; Xu, B.; Zhang, C.; Guestrin, C. Training Deep Nets with Sublinear Memory Cost. arXiv 2016, arXiv:1604.06174.

93. von Platen, P.; Patil, S.; Lozhkov, A.; Cuenca, P.; Lambert, N.; Rasul, K.; Davaadorj, M.; Wolf, T. Diffusers: State-of-the-Art Diffusion Models. GitHub Repository. 2022. Available online: https://github.com/huggingface/diffusers (accessed on 14 February 2025).

94. Rhu, M.; Gimelshein, N.; Clemons, J.; Zulfiqar, A.; Keckler, S.W. vDNN: Virtualized Deep Neural Networks for Scalable, Memory-Efficient Neural Network Design. In Proceedings of the IEEE/ACM International Symposium on Microarchitecture (MICRO), Taipei, Taiwan, 15–19 October 2016; pp. 1–13.

95. Zhao, S.; Chen, D.; Chen, Y.C.; Bao, J.; Hao, S.; Yuan, L.; Wong, K.Y.K. Uni-ControlNet: All-in-One Control to Text-to-Image Diffusion Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), New Orleans, LA, USA, 10–16 December 2023.

96. Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling Laws for Neural Language Models. arXiv 2020, arXiv:2001.08361.

97. Dehghani, M.; Djolonga, J.; Mustafa, B.; Padlewski, P.; Heek, J.; Gilmer, J.; Steiner, A.P.; Caron, M.; Geirhos, R.; Alabdulmohsin, I.; et al. Scaling Vision Transformers to 22 Billion Parameters. In Proceedings of the International Conference on Machine Learning (ICML), Honolulu, HI, USA, 23–29 July 2023; pp. 7480–7512.

98. Lin, S.; Wang, A.; Yang, X. SDXL-Lightning: Progressive Adversarial Diffusion Distillation. arXiv 2024, arXiv:2402.13929.

99. Yang, Z.; Zhang, L.; Chen, Y.; Zhou, Y. EdgeFusion: On-Device Text-to-Image Generation. arXiv 2024, arXiv:2404.11925.

100. Apple Inc. Core ML Framework Documentation. 2023. Available online: https://developer.apple.com/documentation/coreml (accessed on 14 February 2025).

101. Qualcomm Technologies Inc. Qualcomm AI Engine Direct SDK (QNN). 2023. Available online: https://developer.qualcomm.com/software/qualcomm-ai-engine-direct-sdk (accessed on 14 February 2025).

102. Podell, D.; English, Z.; Lacey, K.; Dockhorn, T.; Blattmann, A.; Rombach, R. Efficient VAE Decoding for High-Resolution Image Synthesis. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 7–11 May 2024.

103. Williams, S.; Waterman, A.; Patterson, D. Roofline: An Insightful Visual Performance Model for Multicore Architectures. Commun. ACM 2009, 52, 65–76.

104. Ivanov, A.; Dryden, N.; Ben-Nun, T.; Li, S.; Hoefler, T. Data Movement Is All You Need: A Case Study on Optimizing Transformers. In Proceedings of the Machine Learning and Systems (MLSys), Virtual, 5–9 April 2021.

105. Kim, S.; Hooper, C.; Gholami, A.; Dong, Z.; Li, X.; Shen, S.; Mahoney, M.W.; Keutzer, K. SqueezeLLM: Dense-and-Sparse Quantization. In Proceedings of the International Conference on Machine Learning (ICML), Vienna, Austria, 21–27 July 2024.

106. Kwon, W.; Li, Z.; Zhuang, S.; Sheng, Y.; Zheng, L.; Yu, C.H.; Gonzalez, J.; Zhang, H.; Stoica, I. Efficient Memory Management for Large Language Model Serving with PagedAttention. In Proceedings of the ACM SIGOPS Symposium on Operating Systems Principles (SOSP), Koblenz, Germany, 23–26 October 2023; pp. 611–626.

107. Jouppi, N.P.; Young, C.; Patil, N.; Patterson, D.; Agrawal, G.; Bajwa, R.; Bates, S.; Bhatia, S.; Boden, N.; Borchers, A.; et al. In-Datacenter Performance Analysis of a Tensor Processing Unit. In Proceedings of the ACM/IEEE Annual International Symposium on Computer Architecture (ISCA), Toronto, ON, Canada, 24–28 June 2017; pp. 1–12.

108. Chen, Y.H.; Krishna, T.; Emer, J.S.; Sze, V. Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks. IEEE J. Solid-State Circuits 2017, 52, 127–138.

109. Fang, G.; Li, K.; Ma, X.; Wang, X. TinyFusion: Diffusion Transformers Learned Shallow. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 11–16 June 2025; pp. 18144–18154.

110. You, H.; Barnes, C.; Zhou, Y.; Kang, Y.; Du, Z.; Zhou, W.; Zhang, L.; Nitzan, Y.; Liu, X.; Lin, Z.; et al. Layer- and Timestep-Adaptive Differentiable Token Compression Ratios for Efficient Diffusion Transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 11–16 June 2025; pp. 18072–18082.

111. Wu, J.; Wang, H.; Shang, Y.; Shah, M.; Yan, Y. PTQ4DiT: Post-training Quantization for Diffusion Transformers. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 9–15 December 2024.

112. Lee, Y.; Park, K.; Cho, Y.; Lee, Y.J.; Hwang, S.J. KOALA: Empirical Lessons Toward Memory-Efficient and Fast Diffusion Models for Text-to-Image Synthesis. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 9–15 December 2024.

113. Yin, T.; Gharbi, M.; Park, T.; Zhang, R.; Shechtman, E.; Durand, F.; Freeman, W.T. Improved Distribution Matching Distillation for Fast Image Synthesis. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 9–15 December 2024.

114. Marchisio, A.; Massa, A.; Mrazek, V.; Bussolino, B.; Martina, M.; Shafique, M. A Survey on Deep Learning Hardware Accelerators for Heterogeneous HPC Platforms. ACM Comput. Surv. 2024, 56, 1–38.

115. Sze, V.; Chen, Y.H.; Emer, J.; Suleiman, A.; Zhang, Z. Hardware for Machine Learning: Challenges and Opportunities. In Proceedings of the IEEE Custom Integrated Circuits Conference (CICC), Austin, TX, USA, 30 April–3 May 2017; pp. 1–8.

| Aspect | Cao et al. [19] | Ma et al. [29] | Yang et al. [28] | Ours |
|---|---|---|---|---|
| Focus | General diffusion | Efficient diffusion | Diffusion applications | Edge deployment |
| Controllability | Limited | Partial | Partial | Comprehensive |
| Control-Cost Analysis | ✗ | ✗ | ✗ | ✓ (CES metric) |
| FLOPs-Latency Gap | ✗ | Mentioned | ✗ | ✓ (FER metric) |
| 4-Layer Stack | ✗ | ✗ | ✗ | ✓ |

| Specification | SD1.5 (UNet) | SDXL (UNet) | SD3 Medium (DiT) | FLUX.1-dev (DiT) |
|---|---|---|---|---|
| Release | August 2022 | July 2023 | June 2024 | August 2024 |
| Architecture | U-Net + ResNet + Transformer | U-Net (3× larger) | MMDiT | Rectified Flow Transformer |
| Parameters | ~860 M | ~2.6 B | ~2 B | ~12 B |
| Native Resolution | 512 × 512 | 1024 × 1024 | 1024 × 1024 | 1024 × 1024+ |
| Text Encoder | CLIP-L [32] | CLIP-L + OpenCLIP bigG [61] | CLIP-L + OpenCLIP bigG + T5-XXL [62] | T5-XXL [62] + CLIP [32] |
| Typical Steps | 20–50 | 20–50 | 20–30 | 20–30 |
| FID (COCO-30K) | ~8–12 | ~6–10 | ~5–8 | ~4–7 |
| Est. FLOPs/step | ~50 G | ~150 G | ~100 G | ~250 G |
| Memory (FP16) | ~4 GB | ~8 GB | ~6 GB | ~24 GB |

| Component | Standard UNet (SD1.5) [8] | SnapFusion UNet [9] | MobileDiffusion [18] | Reduction |
|---|---|---|---|---|
| Encoder (Down) | ~18 GMACs | ~12 GMACs | ~8 GMACs | 33–56% |
| Middle Block | ~8 GMACs | ~3 GMACs | ~2 GMACs | 62–75% |
| Decoder (Up) | ~20 GMACs | ~14 GMACs | ~10 GMACs | 30–50% |
| Attention Layers | ~6 GMACs | ~4 GMACs | ~3 GMACs | 33–50% |
| Total | ~52 GMACs | ~33 GMACs | ~23 GMACs | 36–56% |
| Conv Type | Standard | Mixed | Separable | - |
| Channel Width | 320–1280 | 256–1024 | 192–768 | 20–40% |

| Mechanism | Control Accuracy | Generalization | Overhead | Approach |
|---|---|---|---|---|
| ControlNet [12] | High | Low (single) | 361 M/cond | Specialist |
| ControlNet++ [69] | Very High | Low (single) | 361 M/cond | Specialist |
| UniControl [75] | Moderate | High (9 types) | 140 M | Generalist |
| T2I-Adapter [23] | Moderate | Medium | 77 M | Balanced |
| LoRA-based [70] | Variable | High (composable) | 4 M (avg) | Flexible |
| NanoControl [68] | Moderate–High | High (universal) | 0.1 M | Balanced |

| Precision | Weight Bits | Activation Bits | Model Size (SD1.5) | FID Δ | Speed vs. FP16 | NPU Support |
|---|---|---|---|---|---|---|
| FP32 | 32 | 32 | ~3.4 GB | Baseline | 0.5× | ✗ (Not Supported) |
| FP16 | 16 | 16 | ~1.7 GB | 0 | 1.0× | Δ (Partial) |
| BF16 | 16 | 16 | ~1.7 GB | 0 | 1.0× | Limited |
| W8A8 | 8 | 8 | ~0.85 GB | <1.0 | 1.8–2.5× | ✓ (Supported) |
| W4A16 | 4 | 16 | ~0.5 GB | 1.0–2.0 | 1.5–2.0× | Δ (Partial) |
| W4A8 | 4 | 8 | ~0.5 GB | 1.0–3.0 | 2.0–3.0× | ✓ (Supported) |
| W4A4 | 4 | 4 | ~0.5 GB | >5.0 | 2.5–4.0× | Limited |
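
The Model Size column tracks parameter count times weight bit width. The following is a minimal sketch of that arithmetic, assuming roughly 860 M parameters for SD1.5 (the figure listed for it elsewhere in this survey) and decimal gigabytes. It ignores quantization scales, zero-points, and any layers kept at higher precision, which may explain why the tabulated 4-bit sizes (~0.5 GB) sit slightly above the raw estimate. The function name `weight_size_gb` is illustrative only.

```python
def weight_size_gb(num_params: float, weight_bits: int) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * weight_bits / 8 / 1e9


# Assumption: ~860 M parameters for the SD1.5 UNet, as listed in this survey.
SD15_PARAMS = 860e6

# Weight bit widths taken from the "Weight Bits" column of the table.
weight_bits = {"FP32": 32, "FP16": 16, "W8A8": 8, "W4A8": 4}

sizes = {name: weight_size_gb(SD15_PARAMS, bits) for name, bits in weight_bits.items()}

for name, gb in sizes.items():
    print(f"{name}: ~{gb:.2f} GB")
```

Activation bit width does not enter this estimate; it affects runtime memory and compute throughput rather than the stored model size.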

| Technique | Peak VRAM Reduction | Speed Impact | Complexity | Best for |
|---|---|---|---|---|
| VAE Tiling [90] | 50–70% | −10.2 | Low | High-res decode |
| U-Net Tiling [90] | 30–50% | −20.3 | Medium | Panorama |
| FlashAttention [25] | 30–50% | 19.6 | Low | All attention |
| FlashAttention-2 [91] | 40–60% | 39.2 | Low | All attention |
| Attention Slicing [93] | 20–40% | −30.5 | Very Low | Fallback |
| Activation Offload [94] | 50–80% | −102 | Medium | OOM prevention |
| Gradient Checkpoint [92] | 60–80% (train) | −30% (train) | Low | Training only |

| Rank | Method | Year | Venue | Base | Added Params | NOR (%) | Memory Overhead | Control Types | mIoU (Seg) | SSIM (Edge) | RMSE (Depth) | CES |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | NanoControl [68] | 2025 | arXiv | DiT | 0.1 M | 0.02% | 1.01× | Multi | 78.2 | 0.891 | 0.082 | 156.4 |
| 2 | OminiControl [74] | 2025 | ICCV | DiT | 0.5 M | 0.10% | 1.02× | Universal | 79.5 | 0.895 | 0.079 | 92.3 |
| 3 | ControlNet-XS [73] | 2024 | ECCV | UNet | 14 M (SD1.5) | 1.60% | 1.15× | 8+ | 81.3 | 0.902 | 0.075 | 70.8 |
| 4 | ControlNet-XS [73] | 2024 | ECCV | UNet | 48 M (SDXL) | 1.40% | 1.12× | 8+ | 82.1 | 0.908 | 0.072 | 48.9 |
| 5 | IP-Adapter [24] | 2023 | arXiv | UNet | 22 M | 2.60% | 1.08× | Style/ID | - | - | - | 45.2 * |
| 6 | T2I-Adapter [23] | 2024 | AAAI | UNet | 77 M | 9.00% | 1.12× | 8+ | 76.8 | 0.875 | 0.089 | 40.6 |
| 7 | LoRA (Control) [70] | 2024 | - | UNet | 4 M (avg) | 0.50% | 1.01× | Domain | 72.5 | 0.852 | 0.095 | 36.3 |
| 8 | UniControl [75] | 2023 | NeurIPS | UNet | 140 M | 16.30% | 1.35× | 9+ | 80.2 | 0.889 | 0.078 | 35.4 |
| 9 | ControlNet++ [69] | 2024 | ECCV | UNet | 361 M | 42.00% | 2.00× | 8+ | 89.3 | 0.938 | 0.068 | 34.1 |
| 10 | ControlNet [12] | 2023 | ICCV | UNet | 361 M | 42.00% | 2.00× | 8+ | 78.1 | 0.825 | 0.086 | 29.8 |
| 11 | Uni-ControlNet [95] | 2023 | NeurIPS | UNet | 380 M | 44.20% | 2.10× | Multi | 79.5 | 0.842 | 0.083 | 29.2 |

| Method | Canny Edge (SSIM↑) | Depth (RMSE↓) | Pose (mAP↑) | Segmentation (mIoU↑) | Normal (Angular↓) |
|---|---|---|---|---|---|
| ControlNet [12] | 0.825 | 0.086 | 72.3 | 78.1 | 12.5° |
| ControlNet++ [69] | 0.938 (+13.7%) | 0.068 (+20.9%) | 79.8 (+10.4%) | 89.3 (+14.3%) | 9.8° (+21.6%) |
| ControlNet-XS [73] | 0.902 (+9.3%) | 0.075 (+12.8%) | 76.2 (+5.4%) | 81.3 (+4.1%) | 11.2° (+10.4%) |
| T2I-Adapter [23] | 0.875 (+6.1%) | 0.089 (−3.5%) | 69.8 (−3.5%) | 76.8 (−1.7%) | 13.1° (−4.8%) |
| NanoControl [68] | 0.891 (+8.0%) | 0.082 (+4.7%) | 74.5 (+3.0%) | 78.2 (+0.1%) | 11.8° (+5.6%) |
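
The percentages in parentheses appear to be relative changes against the ControlNet baseline row, with the sign flipped for lower-is-better metrics (RMSE, angular error) so that a positive value always means an improvement. The sketch below reproduces two entries under that assumption; `relative_gain` is a hypothetical helper name, not something defined in the survey.

```python
def relative_gain(baseline: float, value: float, higher_is_better: bool) -> float:
    """Percent improvement over the baseline; positive always means 'better'."""
    change = (value - baseline) / baseline * 100.0
    # For lower-is-better metrics a decrease is an improvement, so flip the sign.
    return change if higher_is_better else -change


# ControlNet++ vs. ControlNet, values copied from the table above.
ssim_gain = relative_gain(0.825, 0.938, higher_is_better=True)   # Canny edge SSIM
rmse_gain = relative_gain(0.086, 0.068, higher_is_better=False)  # depth RMSE

# T2I-Adapter depth RMSE is worse than the baseline, so the gain is negative.
t2i_rmse_gain = relative_gain(0.086, 0.089, higher_is_better=False)

print(f"ControlNet++ SSIM: {ssim_gain:+.1f}%")
print(f"ControlNet++ RMSE: {rmse_gain:+.1f}%")
print(f"T2I-Adapter RMSE: {t2i_rmse_gain:+.1f}%")
```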

| Category/Model | Year | Architecture | Params | Steps | FID (COCO) | Latency (A100) | Latency (Mobile) | FLOPs/Img | Precision |
|---|---|---|---|---|---|---|---|---|---|
| Baseline Models | | | | | | | | | |
| SD1.5 [8] | 2022 | UNet | 860 M | 50 | 8.59 | 2850 ms | >60,000 ms | 2600 G | FP16 |
| SD1.5 [8] | 2022 | UNet | 860 M | 20 | 9.12 | 1140 ms | >25,000 ms | 1040 G | FP16 |
| SDXL [26] | 2023 | UNet | 3.5 B | 50 | 6.82 | 8500 ms | N/A | 7800 G | FP16 |
| SD3 Medium [14] | 2024 | DiT | 2.0 B | 28 | 5.95 | 4200 ms | N/A | 2800 G | FP16 |
| FLUX.1-dev [15] | 2024 | DiT | 12 B | 28 | 4.72 | 12,500 ms | N/A | 7000 G | FP16 |
| Step Reduction | | | | | | | | | |
| SD1.5 + LCM [16] | 2023 | UNet | 860 M | 4 | 10.85 | 228 ms | 5200 ms | 208 G | FP16 |
| SD1.5 + LCM-LoRA [76] | 2024 | UNet | 860 M + 67 M | 4 | 11.23 | 232 ms | 5350 ms | 208 G | FP16 |
| SDXL + LCM [16] | 2024 | UNet | 3.5 B | 4 | 8.45 | 680 ms | N/A | 624 G | FP16 |
| SD1.5 + PeRFlow [79] | 2024 | UNet | 860 M | 4 | 9.52 | 228 ms | 5100 ms | 208 G | FP16 |
| SDXL Turbo (ADD) [17] | 2023 | UNet | 3.5 B | 1 | 12.35 | 170 ms | N/A | 156 G | FP16 |
| SDXL Lightning [98] | 2024 | UNet | 3.5 B | 4 | 7.89 | 680 ms | N/A | 624 G | FP16 |
| Mobile-Optimized | | | | | | | | | |
| SnapFusion [9] | 2023 | UNet (Opt) | 380 M | 8 | 9.85 | 185 ms | 1840 ms | 416 G | INT8 |
| MobileDiffusion [18] | 2024 | UNet (Opt) | 320 M | 8 | 10.42 | 165 ms | 520 ms | 184 G | INT8 |
| MobileDiffusion [18] | 2024 | UNet (Opt) | 320 M | 1 | 14.25 | 42 ms | 158 ms | 23 G | INT8 |
| EdgeFusion [99] | 2024 | UNet (Opt) | 295 M | 4 | 11.78 | 112 ms | 890 ms | 92 G | INT8 |
| Quantized Models | | | | | | | | | |
| SD1.5 W8A8 [54] | 2023 | UNet | 860 M | 20 | 9.45 | 685 ms | 8500 ms | 1040 G | INT8 |
| SD1.5 W4A8 (MixDQ) [84] | 2024 | UNet | 860 M | 8 | 10.12 | 380 ms | 3200 ms | 416 G | W4A8 |
| SDXL W8A8 [54] | 2024 | UNet | 3.5 B | 20 | 7.28 | 2550 ms | N/A | 3120 G | INT8 |

| Optimization Stage | Technique | Latency Reduction | FID Impact | Cumulative Latency |
|---|---|---|---|---|
| Baseline | SD1.5 (50 steps, FP16) [8] | - | 8.59 | 60,000+ ms |
| Step Reduction | 50 → 8 steps (LCM) [16] | 6.25× | 2.26 | 9600 ms |
| Architecture | UNet Optimization (NAS) [9,66] | 2.5× | 0.32 | 3840 ms |
| Quantization | FP16 → INT8 [54] | 2.0× | 0.25 | 1920 ms |
| Engine | PyTorch → CoreML [100]/QNN [101] | 2.5× | 0 | 768 ms |
| VAE | VAE Optimization [102] | 1.5× | 0 | 512 ms |
| Total | All Combined | 117× | 2.83 | ~520 ms |
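
The Cumulative Latency column can be reproduced by dividing the baseline latency by each stage's speedup in turn. The sketch below assumes, as the table does, that the per-stage speedups compose multiplicatively, and it treats the "60,000+ ms" baseline as exactly 60,000 ms. On real hardware the stages interact, so the product should be read as an idealized estimate.

```python
from math import prod

# Assumption: the table's "60,000+ ms" baseline is taken as exactly 60,000 ms.
BASELINE_MS = 60_000

# (stage, speedup factor) pairs copied from the Latency Reduction column.
stages = [
    ("Step Reduction", 6.25),
    ("Architecture", 2.5),
    ("Quantization", 2.0),
    ("Engine", 2.5),
    ("VAE", 1.5),
]

latency_ms = BASELINE_MS
cumulative = {}
for name, speedup in stages:
    latency_ms /= speedup  # each stage divides the latency remaining so far
    cumulative[name] = latency_ms
    print(f"{name:<15} {speedup:>5.2f}x -> {latency_ms:7.0f} ms")

total_speedup = prod(speedup for _, speedup in stages)
print(f"Total speedup: {total_speedup:.0f}x")
```

The final value is 512 ms, which the table rounds to ~520 ms, and the product of the speedups is about 117×.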

| Model Comparison | FLOPs Ratio | Latency Ratio (A100) | FER | Interpretation |
|---|---|---|---|---|
| SD1.5 (50 → 20 steps) [8] | 2.50× | 2.50× | 1 | Matches Theory |
| SD1.5 (50 steps) → LCM (4 steps) [16] | 12.50× | 12.50× | 1 | Matches Theory |
| SD1.5 → SnapFusion (8 steps) [9] | 6.25× | 15.41× | 0.41 | Faster than Theory |
| SD1.5 → MobileDiffusion (8 steps) [18] | 14.13× | 17.27× | 0.82 | Faster than Theory |
| SDXL → SD3 Medium [14,26] | 2.79× | 2.02× | 1.38 | Slower than Theory |
| SD1.5 → SD3 Medium [8,14] | 0.92× | 0.67× | 1.37 | Slower than Theory |
| UNet (SD1.5) → DiT (FLUX.1-dev) [8,15] | 0.37× | 0.23× | 1.61 | Slower than Theory |
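
The FER column is consistent with dividing the FLOPs ratio by the measured latency ratio. The sketch below works under that assumption (inferred from the tabulated values, not quoted from a formal definition) and uses the FLOPs/Img and A100 latency figures from the benchmark table above. The function name `fer` and its argument names are illustrative.

```python
def fer(flops_before: float, flops_after: float,
        latency_before: float, latency_after: float) -> float:
    """FLOPs ratio divided by latency ratio (assumed reading of FER).

    A value below 1 means the measured speedup exceeds what the FLOPs
    reduction predicts; a value above 1 means it falls short.
    """
    flops_ratio = flops_before / flops_after
    latency_ratio = latency_before / latency_after
    return flops_ratio / latency_ratio


# SD1.5 (50 steps) -> SnapFusion (8 steps): FLOPs in G, A100 latency in ms.
snapfusion_fer = fer(2600, 416, 2850, 185)

# SDXL (50 steps) -> SD3 Medium (28 steps).
sd3_fer = fer(7800, 2800, 8500, 4200)

print(f"SD1.5 -> SnapFusion FER: {snapfusion_fer:.2f}")
print(f"SDXL -> SD3 Medium FER: {sd3_fer:.2f}")
```

Pure step reduction leaves FER at 1 because FLOPs and latency both scale with the step count, whereas changes to the architecture or runtime move FER away from 1.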

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Ham, S.-J.; Park, C.-S. Efficient and Controllable Image Generation on the Edge: A Survey on Algorithmic and Architectural Optimization. Electronics 2026, 15, 828. https://doi.org/10.3390/electronics15040828
