1. Introduction

The training of deep neural networks fundamentally relies on stochastic optimization, wherein model parameters are iteratively adjusted in directions indicated by minibatch gradients while ensuring stability of step sizes and preservation of generalization performance. The design of optimization algorithms is largely characterized by two dimensions: the mechanisms by which gradient directions are smoothed or accumulated, and the strategies by which step sizes are adapted across parameters. This dichotomy underpins the primary trade-offs observed among existing methods in terms of convergence speed, stability, and generalization quality. Despite the proliferation of algorithms in recent years, four families have emerged as the most widely adopted in practice. Stochastic gradient descent (SGD) with momentum remains a strong baseline, noted for its simplicity and robust generalization properties [1,2].

AdaGrad rescales coordinates using the (cumulative) squared-gradient history, helping on sparse features [3]. RMSProp introduces per-parameter adaptation through an exponential moving average (EMA) of squared gradients. Adam integrates momentum with adaptive scaling and applies bias corrections [4,5], with AMSGrad providing a convergence-motivated variant [6]. Building on this, AdamW decouples weight decay from Adam’s adaptive update, improving regularization control [7]. For broader context, see the survey by Bottou, Curtis, and Nocedal [8].

Why a new optimizer? Despite there being many Adam variants, the following three gaps remain relevant in practice:

- (1)

No principled “momentum-of-momentum.” The standard numerator uses one EMA. Simply stacking EMAs lengthens memory but compounds the cold-start bias, shrinking early steps unless it is corrected.

- (2)

Warmup dependency. Schedules often rely on bespoke warmups to achieve stable early scaling, which complicates pipelines and increases failed runs.

- (3)

Opaque early-time scaling. With one EMA numerator, there is no orthogonal knob to extend memory while keeping the instantaneous pass-through of new gradient information well controlled.

This paper presents a new optimizer called AdamN. AdamN’s main contribution is an EMA-of-EMA numerator with an exact double-EMA debiasing factor that removes both inner and outer cold-start bias. The denominator and weight decay (WD) remain Adam-compatible (EMA of squared gradients; decoupled WD), so the method is a drop-in replacement with the same Big-O cost.

This contribution translates into the following real operational benefits:

- (4)

Faster time-to-quality: Earlier accuracy milestones (e.g., 70–90% train accuracy) are reached sooner, shortening iteration cycles for model/ablation development and accelerating time-to-market.

- (5)

Lower energy and cost per experiment: Reaching target accuracy in fewer epochs reduces GPU-hours and power draw for both research and production retraining.

- (6)

Schedule-friendly starts: Exact debiasing reduces dependence on hand-tuned warmups, which simplifies pipelines and cuts failed runs caused by unstable early steps.

- (7)

Throughput on shared clusters: Shorter “wall-time to useful model” improves cluster utilization; teams can run more variations under quota.

- (8)

Edge and small-budget training: When computation or thermal headroom is limited (on-device or tiny clouds), AdamN’s faster launch and stable scaling help hit acceptable accuracy within tight budgets.

Research Questions (RQs). We organize our study around five questions and their evaluation metrics:

RQ1—Time-to-quality. Does AdamN reach target accuracy faster than AdamW/SGD while keeping final accuracy comparable?

Metric: wall-clock seconds to hit train/val milestones 40%, 50%, 60%, 70%, 80%, and 90%.

RQ2—Final quality at equal budget. At a fixed epoch/time budget, does AdamN match or exceed final test accuracy?

Metric: test accuracy at E = 100 and at a fixed wall-clock budget.

RQ3—Sensitivity and robustness. How sensitive is AdamN to the newly introduced -ramp, LR, and WD vs. AdamW/SGD?

Metric: mean ± SD (95% CI) of final test accuracy over 3–5 seeds per grid point.

RQ4—What “makes” AdamN work? Are the gains primarily from the nested-EMA numerator or from the exact double-EMA debiasing?

Metric: ablation deltas on time-to-milestones and final accuracy when toggling nested on/off and exact vs. simple debiasing.

RQ5—Generalization to NLP under imbalance. Do AdamN’s rare-token update-efficiency gains hold beyond vision?

Metric: validation/test perplexity per frequency bin (head/mid/tail), tail PPL, and effective LR (row-wise RMS) on embeddings; fixed-budget final PPL.

The paper is organized as follows:

Section 2 reviews the literature and the motivation for a new optimizer.

Section 3 introduces the notation and reviews the baseline optimizers (SGD with momentum, Adam, and AdamW).

Section 4 presents AdamN: method and full derivation, including the exact nested-EMA weights and the bias-correction factor.

Section 5 provides an algorithmic reference.

Section 6 reports experiments: a proof of concept and CIFAR-10 pre-benchmarking, followed by the main CIFAR-100 benchmark with ResNet-18 and ViT-B16.

Section 7 presents the ablation.

Section 8 discusses limitations and practical guidance, and

Section 9 concludes.

2. Literature Survey and Motivation for a New Optimizer

2.1. Foundations Before SGD

Before stochastic methods became dominant, optimization in numerical analysis and early machine learning relied on full-batch techniques. The classical method of steepest descent, introduced by Cauchy in the 19th century [9], updates parameters along the negative gradient of the full-dataset loss. While stable, this approach is computationally expensive for large datasets and scales poorly.

Other classical approaches include the following:

- (1)

Newton’s method, introduced in the 17th century [10], and its quasi-Newton variants such as BFGS (developed in the 1970s [11,12,13]). These use curvature information for faster convergence on convex problems, but they require forming and inverting the Hessian (Newton) or storing a dense approximation of it (quasi-Newton).

- (2)

Coordinate descent, dating back to early-20th-century optimization [14,15], which updates one parameter at a time and was practical for low-dimensional problems.

- (3)

Conjugate gradient methods (Hestenes and Stiefel, 1952 [16]), which improved convergence for quadratic objectives by exploiting conjugacy instead of simple steepest directions.

These methods were effective in smaller-scale convex settings but became infeasible as datasets and neural networks grew. The breakthrough of stochastic gradient descent (SGD) by Robbins and Monro (1951) [17] was to replace the full gradient with an unbiased minibatch estimator. This drastically reduced per-step computation and enabled scaling, at the cost of higher variance—a trade-off that ultimately aided exploration and generalization in deep learning.

2.2. First-Order Foundations

SGD and Momentum: SGD with a global learning rate remains the default for large-scale supervised training. Polyak’s heavy-ball momentum [1] and deep-learning practice [2] accelerate progress in curved valleys by accumulating low-frequency components of the gradient. Nesterov’s look-ahead gradient [18] often improves stability on ill-conditioned objectives. Strengths: simplicity, strong generalization in vision, and predictable schedule design. Limitations: a single global step size struggles with anisotropy and poorly scaled features; early progress can be slow.

Adaptive Methods (AdaGrad/RMSProp): AdaGrad rescales coordinates using the (cumulative) squared-gradient history, helping on sparse features [3]. RMSProp trades the cumulative sum for an EMA so step sizes do not vanish [19]. These methods reduce sensitivity to the global LR but can suffer from stale or overly smooth denominators when noise or curvature changes quickly.
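To make the AdaGrad/RMSProp contrast concrete, the minimal sketch below feeds a constant gradient to both accumulators and records the resulting step magnitude. It is an illustration only, not code from the cited works. The learning rate, decay rate `rho`, and step count are arbitrary assumptions.

```python
import math

def adagrad_steps(grad, lr=0.1, eps=1e-8, steps=100):
    """Per-step update magnitudes of AdaGrad for a constant scalar gradient."""
    acc = 0.0                      # cumulative sum of squared gradients
    sizes = []
    for _ in range(steps):
        acc += grad ** 2
        sizes.append(lr * abs(grad) / (math.sqrt(acc) + eps))
    return sizes

def rmsprop_steps(grad, lr=0.1, rho=0.9, eps=1e-8, steps=100):
    """Per-step update magnitudes of RMSProp (EMA of squared gradients)."""
    avg = 0.0                      # exponential moving average of squared gradients
    sizes = []
    for _ in range(steps):
        avg = rho * avg + (1 - rho) * grad ** 2
        sizes.append(lr * abs(grad) / (math.sqrt(avg) + eps))
    return sizes

ada = adagrad_steps(1.0)
rms = rmsprop_steps(1.0)
print(f"AdaGrad step at t=100: {ada[-1]:.4f}")   # decays like lr / sqrt(t)
print(f"RMSProp step at t=100: {rms[-1]:.4f}")   # stays close to lr
```

With a constant gradient, the AdaGrad step falls as 1/√t. The RMSProp step settles near the base learning rate because the EMA forgets old squared gradients.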

2.3. Adam and Decoupled Weight Decay

Adam introduced EMAs for both first and second moments with explicit bias corrections that address cold-start underestimation; this makes early training more stable and typically faster [5]. AMSGrad provides a convergence-favoring variant by enforcing a nondecreasing second-moment estimate [6]. They are stable defaults, but the single numerator EMA forces a trade-off between memory and responsiveness; extending memory usually hurts early-step magnitude unless one introduces warmups.

AdamW: L2 added to the loss inside an adaptive method does not behave like classical weight decay; decoupling the decay step (AdamW) restores clean regularization and often improves validation accuracy at no extra cost [7]. It solves WD coupling but does not address the trade-off between numerator memory and early scaling.

2.4. Recent Developments

A wave of optimizers extends or rethinks Adam along several axes. AdaBelief modifies the second moment to track the “belief” in the gradient (its deviation from the momentum estimate), often improving generalization relative to Adam [20]. Sharpness-aware methods such as SAM and its scale-invariant variant ASAM minimize the worst-case loss within a neighborhood of the parameters, steering training toward flatter minima to improve generalization [21,22]. Shampoo brings practical block-wise second-order preconditioning at scale [23]. AdamP projects out the radial component of updates for scale-invariant weights, curbing excessive growth of the weight norm and boosting vision generalization [24]. Adan uses an adaptive Nesterov-style numerator for faster convergence, particularly in vision [25]. D-Adaptation removes manual learning-rate tuning by adapting the global scale automatically [26]. Sophia uses a lightweight diagonal Hessian estimate as a preconditioner, with element-wise clipping of the update, to realize a scalable stochastic second-order method with strong results for LLM pretraining [27]. Lion, discovered via symbolic program search, updates with a sign-based momentum rule and is competitive in large-scale training [28].

These advances improve specific regimes (e.g., curvature awareness, sign-based steps, and scale-free training), yet none of them provide a principled, bias-corrected momentum-of-momentum that (a) extends numerator memory, (b) exactly fixes compounded cold-start bias, and (c) keeps Adam-like simplicity and cost.

2.5. Large-Batch Regime and Scaling

Large batches can hurt generalization by pushing toward sharper minima [29], although careful schedules and system design can scale training dramatically (e.g., ImageNet in 1 h) [30]. Trust-ratio methods such as LAMB enable stable very-large-batch training by scaling each layer’s step by the ratio of its parameter norm to its update norm [31].

2.6. What Current Methods Still Miss

Despite extensive progress [8], several gaps persist that motivate our approach:

- (1)

No principled “momentum-of-momentum.” Adam uses a single EMA in the numerator. If one naively stacks EMAs to lengthen memory, the inner EMA’s cold-start bias remains uncorrected, shrinking early steps.

- (2)

Warmup dependence. Many pipelines rely on hand-tuned warmups to overcome timid or erratic starts, even with Adam’s bias corrections [5].

- (3)

Under-parameterized trade-off between responsiveness and inertia. With only one numerator EMA, tuning smoothness vs. freshness is constrained; there is no clean way to expose an additional, orthogonal knob that lengthens memory while keeping early steps properly scaled.

- (4)

Opaque early-time scaling. Interactions between η, β1, β2, and the denominator can obscure how much of the current gradient passes through each step.

2.7. Motivation for AdamN

AdamN targets these gaps while remaining drop-in-compatible with Adam/AdamW. AdamN has the following characteristics:

- (1)

Nested numerator (EMA-of-EMA) yields a triangular kernel with an exponential tail, giving longer memory and smoother directions than a single EMA.

- (2)

Exact double-EMA debiasing provides a closed-form factor that removes both inner and outer cold-start shrinkage, reducing the need for ad hoc warmups while preserving stability.

- (3)

Transparent scaling. The decomposition into (i) freshness, (ii) exact bias factor, and (iii) adaptive denominator clarifies instantaneous step size and informs principled LR scaling.

- (4)

Cost and compatibility. It has the same Big-O cost as Adam/AdamW with one extra buffer and retains decoupled weight decay and modern scheduling [7].

- (5)

This directly targets the memory vs. early-scale gap while remaining drop-in- and scheduler-friendly.

2.8. Systematic Comparison with Related Nested/Multi-Momentum Approaches

To substantiate our claim regarding the novelty of AdamN’s bias correction, we systematically analyzed recent optimizers that employ multiple momentum-like terms, as shown in Table 1.

Adan’s ‘momentum difference’ term () is structurally distinct from applying an EMA to the output of another EMA. Mathematically, Adan’s numerator can be written as , which is a weighted combination of current and past gradients but does not exhibit the triangular-kernel weighting pattern (Equation (21)) that characterizes nested EMAs. Consequently, Adan does not require—nor does it provide—a double-EMA bias correction factor.

3. Notation and Setup

Let the parameters (all layers, all tensors: convolution kernels, linear weights, biases, etc.) be concatenated into a single vector θ ∈ ℝ^d. At step t, given a minibatch B_t, the stochastic gradient g_t of the minibatch empirical loss with respect to all parameters can be defined as follows:

Let the learning rate be η > 0, the numerical stabilizer be ε > 0, and g_t the gradient at step t. When present, let the weight-decay coefficient be λ ≥ 0, decoupled unless otherwise noted.
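For reference, one standard way to write the minibatch gradient is shown below. It assumes a per-example loss ℓ(θ; x) and evaluates the gradient at the current iterate θ_{t−1}. This notation is an assumption, since the original display equation is not reproduced here.

```latex
g_t \;=\; \nabla_\theta \mathcal{L}_{\mathcal{B}_t}(\theta_{t-1})
    \;=\; \frac{1}{|\mathcal{B}_t|} \sum_{x \in \mathcal{B}_t} \nabla_\theta\, \ell(\theta_{t-1}; x)
```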

Modern deep networks are trained by stochastic first-order methods that update parameters using minibatch gradients. To reason precisely about later variants (momentum, Adam/AdamW, and our proposed AdamN), we first fix notation for the minibatch loss and its gradient and make clear how learning rate, numerical stabilization, and weight decay (WD) enter the update. This section establishes a common baseline against which the effects of momentum and adaptivity can be interpreted.

3.1. SGD and Momentum (Reference Form)

- (1)

Vanilla SGD: SGD updates parameters by moving against the minibatch gradient with a global learning rate, looking only at the current gradient to pick the next step. It is simple but can be slow, and it can stall or oscillate. Its update rule is as follows:

With a decreasing learning rate η_t, SGD achieves classical stochastic rates in convex settings. With a constant η, it converges to a noise floor whose radius depends on η and the variance of the gradient. Despite its simplicity, SGD remains competitive in large, supervised tasks, especially with data augmentation and carefully designed learning-rate schedules.

Limitations:

A single global step size as seen in (2) can be inadequate for highly anisotropic problems.

Early training can lag adaptive methods on sparse or poorly scaled features.

Large-batch caution: very large batches may reduce test accuracy by converging to sharper minima, as noted by [29]; see also large-batch scheduling successes in [30].

- (2)

Polyak (Heavy-Ball) Momentum: This adds a velocity term. Instead of using only the current gradient, it mixes in part of the last update’s direction. As a result, steps become smoother and faster along consistent directions, and zigzagging in high-curvature regions is reduced by accumulating low-frequency gradient information.

where µ ∈ [0, 1) is the momentum coefficient that decides how much of the past velocity is kept.

- (3)

Nesterov Accelerated Gradient (NAG): NAG computes the gradient at a look-ahead position [18] as follows:

This anticipatory step in (5) typically improves stability and effective conditioning. In practice, set momentum µ ∈ [0.9, 0.99] (e.g., 0.9 for noisier tasks and 0.95–0.99 for smoother regimes). We choose the base learning rate by a brief sweep or LR-range test, then use a cosine decay or step decay schedule. For very deep networks or highly non-stationary early gradients, we include a short warmup (e.g., 3–10 epochs or 1–5% of total steps) to avoid unstable starts.
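The three update rules in this subsection can be written as follows. This is a sketch in assumed notation: θ_t are the parameters, η the learning rate, µ the momentum coefficient, and v_t the velocity. It uses the common heavy-ball and look-ahead forms, so the conventions may differ from the paper's equations (2)–(5).

```latex
\begin{aligned}
&\text{SGD:} && \theta_t = \theta_{t-1} - \eta\, g_t, \\
&\text{Heavy ball:} && v_t = \mu\, v_{t-1} + g_t, \qquad \theta_t = \theta_{t-1} - \eta\, v_t, \\
&\text{NAG:} && v_t = \mu\, v_{t-1} + \nabla \mathcal{L}\big(\theta_{t-1} - \eta\mu\, v_{t-1}\big), \qquad \theta_t = \theta_{t-1} - \eta\, v_t .
\end{aligned}
```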

3.2. Adam (Adaptive Moment Estimation)

Adam combines a momentum-like first moment (EMA of gradients) with an adaptive second-moment denominator (EMA of squared gradients) and applies bias corrections so that early estimates are not underestimated [5].

- (1)

The first moment (momentum of gradients) is computed as follows:

- (2)

The second moment (EMA of squared gradients) is computed as follows:

The result is a fast, directionally smooth, scale-aware update that is straightforward to tune. The bias correction addresses the cold-start bias, stabilizing the critical early iterations where gradients are volatile. This combination explains Adam’s widespread adoption in NLP, vision, and reinforcement learning.

The standard bias-correction derivation is as follows:

We do not need the gradients themselves to be constant; we only assume that the gradient statistics are stationary, i.e., that their mean and second moment stay the same. Concretely:

- (1)

The average gradient is constant: E[g_t] = E[g] for all steps t.

- (2)

The average squared gradient is constant: E[g_t²] = E[g²] for all steps t.

- (3)

Using (6) and (7) and taking expectations of Adam’s first and second moments under this assumption gives the following:

- (4)

For β1 ∈ (0, 1), the factor 1 − β1^t in (8) is well below 1 for small t, so E[m_t] is smaller than E[g].

- (5)

As t → ∞, β1^t → 0 and 1 − β1^t → 1, so the bias goes away.

- (6)

The same holds for v_t: E[v_t] = (1 − β2^t) E[g²], so it also starts too small.

That is why both moments are biased toward zero: because the EMAs start at zero, they underestimate the true first and second moments early on by the factor 1 − β_i^t.
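The expectation step can be written out as follows. It assumes the stationarity conditions above and zero initialization m_0 = v_0 = 0, in standard Adam notation. This is a sketch of the derivation, not the paper's typeset equations.

```latex
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1-\beta_1)\, g_t = (1-\beta_1)\sum_{k=1}^{t} \beta_1^{\,t-k} g_k, \\
\mathbb{E}[m_t] &= (1-\beta_1)\sum_{k=1}^{t} \beta_1^{\,t-k}\,\mathbb{E}[g] = (1-\beta_1^{\,t})\,\mathbb{E}[g], \\
v_t &= \beta_2 v_{t-1} + (1-\beta_2)\, g_t^{2}, \qquad \mathbb{E}[v_t] = (1-\beta_2^{\,t})\,\mathbb{E}[g^{2}].
\end{aligned}
```

The geometric sum (1 − β)·Σ_{k=1}^{t} β^{t−k} = 1 − β^t is the whole source of the cold-start factor.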

Without the correction factors 1/(1 − β_i^t), where i ∈ {1, 2}, the first and second moments are systematically underestimated during initialization [5]. This underestimation is most pronounced when β_i is close to 1 and can cause instability in the first stages of training; AMSGrad [6] analyzes related convergence issues.

Bias-corrected estimates:

Divide out those factors to remove the bias:

The final update, expressed in terms of past gradients, is as follows:

Substituting (10) into (11) and simplifying gives the update as a weighted sum over past gradients:

Current gradient coefficient: for the most recent gradient g_t, the weight is as follows:
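In standard Adam notation, the bias-corrected estimates, the update, its weighted-sum form, and the coefficient on the newest gradient read as follows. This sketch is consistent with the expectation derivation above and places ε as in the original Adam paper.

```latex
\begin{aligned}
\hat m_t &= \frac{m_t}{1-\beta_1^{\,t}}, \qquad \hat v_t = \frac{v_t}{1-\beta_2^{\,t}}, \\
\theta_t &= \theta_{t-1} - \eta\,\frac{\hat m_t}{\sqrt{\hat v_t}+\epsilon}
          = \theta_{t-1} - \frac{\eta}{\sqrt{\hat v_t}+\epsilon}\sum_{k=1}^{t}\frac{(1-\beta_1)\,\beta_1^{\,t-k}}{1-\beta_1^{\,t}}\; g_k, \\
&\text{coefficient on } g_t:\quad \frac{\eta\,(1-\beta_1)}{(1-\beta_1^{\,t})\,(\sqrt{\hat v_t}+\epsilon)} .
\end{aligned}
```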

Defaults: β1 = 0.9, β2 ∈ [0.99, 0.999], ϵ = 10⁻⁸. β2 is sometimes reduced for a faster response, and a warmup is applied if early gradients spike.

Caveats:

- (1)

It can yield slightly weaker generalization than tuned SGD on some vision tasks; schedule design and regularization are critical.

- (2)

Warmup is often required for very deep or highly regularized models.

Notes:

- (1)

If gradients are not stationary, the correction still removes the zero-init bias.

- (2)

If gradients are large, v̂_t grows, which shrinks the effective learning rate; if they are small, the step size stays larger.

- (3)

v_t is an adaptive scale factor, not another momentum. Adam has only one momentum: m_t.

Thus, Adam combines:

- (1)

Momentum (past gradients for direction).

- (2)

Adaptive scaling (past magnitudes for step size).
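The Adam update summarized above can be sketched in a few lines of NumPy. This is a minimal illustration: `adam_step` and its arguments are our own names, not a library API. For a constant gradient, the sketch shows that the bias-corrected first moment equals the gradient exactly while the raw EMA is still far below it.

```python
import numpy as np

def adam_step(theta, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam step; t is the 1-based step count. Returns (theta, m, v, m_hat)."""
    m = beta1 * m + (1 - beta1) * grad          # first moment (momentum)
    v = beta2 * v + (1 - beta2) * grad ** 2     # second moment (adaptive scale)
    m_hat = m / (1 - beta1 ** t)                # remove zero-initialization bias
    v_hat = v / (1 - beta2 ** t)
    theta = theta - lr * m_hat / (np.sqrt(v_hat) + eps)
    return theta, m, v, m_hat

theta = np.zeros(3)
m = np.zeros(3)
v = np.zeros(3)
g = np.array([0.5, -2.0, 1e-3])                 # constant gradient
for t in range(1, 6):
    theta, m, v, m_hat = adam_step(theta, g, m, v, t)
print(m_hat)   # equals g up to rounding
print(m)       # raw EMA: only (1 - 0.9**5) * g
```

With a constant gradient, each step has magnitude close to the learning rate in every coordinate, regardless of the gradient's scale. This is the scale invariance the adaptive denominator provides.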

3.3. AdamW (Decoupled Weight Decay)

Motivation: In standard Adam, adding L2 regularization to the loss does not match classical weight decay, because the adaptive denominator mixes shrinkage with rescaling. AdamW resolves this by decoupling the weight decay step from the adaptive update [7] as follows:

- (1)

Decoupled decay step: the parameters are shrunk multiplicatively, independently of the adaptive denominator.

- (2)

Adaptive step:

This restores weight decay as pure multiplicative shrinkage and frequently improves validation/test performance compared to Adam + L2 at equal computation.

Defaults: same as Adam, with the weight-decay coefficient chosen depending on the model and augmentation.

What to expect:

Cleaner, schedule-independent control of regularization.

Often stronger generalization than Adam + L2, with identical computational cost.

Regularization: L2 vs. decoupled decay:

L2 regularization adds (λ/2)‖θ‖² to the loss, contributing λθ to the gradient. In Adam, this term is normalized by √v̂_t + ε, coupling shrinkage with adaptation.

The decoupled decay in (14) applies the shrinkage outside the adaptive update, yielding uniform shrinkage independent of local rescaling [7]. This separation improves controllability and often leads to better generalization.
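The difference between coupled L2 and decoupled decay is visible after a single step. The following NumPy sketch is simplified: the coefficient `wd` (λ), the zero loss gradient, and the two-element parameter vector are assumptions chosen so that only the regularizer acts. The decoupled form uses the θ ← (1 − ηλ)θ convention, as in PyTorch's `torch.optim.AdamW`.

```python
import numpy as np

lr, wd, eps = 0.01, 0.1, 1e-8
theta0 = np.array([10.0, 0.1])
loss_grad = np.zeros_like(theta0)     # isolate the regularization effect

# Adam + L2: the decay term enters the gradient and is then normalized.
g = loss_grad + wd * theta0
m_hat, v_hat = g, g ** 2              # bias-corrected moments after the first step
theta_l2 = theta0 - lr * m_hat / (np.sqrt(v_hat) + eps)

# AdamW: decay applied outside the adaptive update.
g = loss_grad
m_hat, v_hat = g, g ** 2
theta_w = theta0 * (1 - lr * wd) - lr * m_hat / (np.sqrt(v_hat) + eps)

shrink_l2 = 1 - theta_l2 / theta0
shrink_w = 1 - theta_w / theta0
print("relative shrink, Adam+L2:", shrink_l2)   # depends on parameter magnitude
print("relative shrink, AdamW:  ", shrink_w)    # uniform lr * wd
```

Under Adam + L2, the normalization turns the decay into a roughly constant-size step. Large weights therefore barely shrink while small weights shrink strongly. AdamW shrinks every coordinate by the same relative amount.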

4. AdamN: Contributions and Method

4.1. Contributions

We introduce AdamN, a nested-momentum adaptive optimizer which has the following qualities:

- (1)

It introduces a second EMA on the numerator (a true nested momentum: an EMA of the first-moment EMA), which acts as a triangular kernel with an exponential tail, yielding a smoother, longer-memory update direction than a single EMA.

- (2)

It derives an exact double-EMA bias correction that removes the cold-start shrinkage inherited from nested EMAs. Intuitively, the nested numerator behaves as a data-adaptive controller that tempers early step magnitudes, preventing overshoot without requiring hand-tuned warmup.

- (3)

It retains Adam-style adaptive braking through a second-moment EMA of squared gradients, together with decoupled weight decay.

- (4)

It demonstrates faster early progress at stable scaling on ResNet-18/CIFAR-100 while matching or slightly exceeding AdamW’s final accuracy at similar computation.

4.2. Method and Foundation

With all EMA states initialized at zero, the three EMAs are defined as follows:

where β1 controls the memory of the first moment, β2 controls the memory of the nested numerator, and β3 controls the memory of the second moment (the brakes). From (16) and (17), substituting the first-moment recurrence into the nested-numerator recurrence and collecting the weights on each past gradient gives the following:

where t ≥ 1 is the current time step/iteration. One summation index runs over time when unrolling an EMA (the first-moment terms that contribute to the nested numerator). The other runs over gradient timestamps (the gradients that go first into the first moment and then into the nested numerator).

The weight decay is decoupled. All operations involving powers, division, and square roots are applied elementwise.
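The sketch below gives the three recurrences and the unrolled numerator in assumed notation: m_t is the first moment, n_t the nested numerator, and v_t the second moment. All start at zero, and the standard (1 − β) EMA form is assumed. The symbol n_t and the index letters k, j are ours and may differ from the paper's equations (16)–(19).

```latex
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1-\beta_1)\, g_t, \\
n_t &= \beta_2 n_{t-1} + (1-\beta_2)\, m_t, \\
v_t &= \beta_3 v_{t-1} + (1-\beta_3)\, g_t^{2}, \\
n_t &= (1-\beta_2)\sum_{k=1}^{t}\beta_2^{\,t-k} m_k
     = (1-\beta_1)(1-\beta_2)\sum_{j=1}^{t}\Big(\sum_{k=j}^{t}\beta_2^{\,t-k}\beta_1^{\,k-j}\Big)\, g_j .
\end{aligned}
```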

4.3. Nested Numerator as a Triangular Kernel

Evaluate the inner sum in (19) as follows:

Hence, the exact weights on each past gradient are as follows:

The equal-betas case in (21) illustrates why nested EMAs possess much longer effective memory.

In this setting, the numerator acts as a triangular kernel with an exponential tail. The linear factor grows with the age of each gradient, while the geometric factor β raised to that age damps it exponentially. As a result, when β is large, the numerator becomes more inertial, retaining past information significantly longer.
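The closed-form weights can be checked numerically. The sketch below uses hypothetical helper names and assumes the zero-initialized (1 − β) EMA recurrences. It feeds unit impulses through the two cascaded EMAs and compares the resulting weights with (1 − β1)(1 − β2)(β1^(a+1) − β2^(a+1))/(β1 − β2), where a is the gradient's age. In the equal-betas case it compares against (1 − β)²(a + 1)β^a.

```python
import numpy as np

def nested_ema_weights_numeric(beta1, beta2, t):
    """Weight of each gradient g_1..g_t in the nested numerator n_t, by simulation."""
    weights = np.zeros(t)
    for j in range(t):                     # unit impulse at gradient position j
        m = n = 0.0
        for s in range(t):
            g = 1.0 if s == j else 0.0
            m = beta1 * m + (1 - beta1) * g    # inner EMA
            n = beta2 * n + (1 - beta2) * m    # outer (nested) EMA
        weights[j] = n
    return weights

def nested_ema_weights_closed(beta1, beta2, t):
    """Closed-form weights for positions j = 0..t-1 (age a = t-1-j)."""
    age = np.arange(t - 1, -1, -1, dtype=float)
    if np.isclose(beta1, beta2):
        b = beta1
        return (1 - b) ** 2 * (age + 1) * b ** age     # triangular factor x exponential tail
    return (1 - beta1) * (1 - beta2) * (beta1 ** (age + 1) - beta2 ** (age + 1)) / (beta1 - beta2)

errors = {}
for b1, b2 in [(0.9, 0.1), (0.9, 0.9), (0.9, 0.99)]:
    num = nested_ema_weights_numeric(b1, b2, 50)
    ref = nested_ema_weights_closed(b1, b2, 50)
    errors[(b1, b2)] = float(np.max(np.abs(num - ref)))
print(errors)   # maximum absolute discrepancy per (beta1, beta2)
```

For β close to 1 in the equal-betas case, the weights first rise with age and then decay. That rise-then-decay profile is the "triangular with exponential tail" shape described above.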

4.4. Bias Correction

Simple (insufficient) bias correction (Adam-style warmup): the nested numerator is debiased via

With simple correction, the early steps are still biased low because the nested numerator inherits the bias of the first moment.

Exact bias correction: the nested numerator is debiased via

This is the exact analog of Adam’s 1 − β1^t in (10), but for a double EMA of the gradients. It safely amplifies early steps and often shortens the time to the 50%/60% milestones.

It can also be shown formally that this expression equals the total accumulated weight at time t, i.e., the sum of the weights in (21).

To derive the factor, unroll the recurrences (assuming E[g_t] = E[g] for all t to compute the expectation/gain factor) as follows:

- (2)

Second EMA:

After evaluating the two geometric sums in (28), the exact debiasing factor for nested momentum will be defined as follows:
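The two geometric sums can be evaluated explicitly under zero initialization, the (1 − β) EMA form, and a constant expected gradient E[g]. The following is our own sketch of that computation, with n_t for the nested numerator and c_t as an assumed name for the exact factor. The second term of c_t is the cross-term that a correction by 1 − β2^t alone would miss.

```latex
\begin{aligned}
\mathbb{E}[m_k] &= (1-\beta_1^{\,k})\,\mathbb{E}[g], \\
\mathbb{E}[n_t] &= (1-\beta_2)\sum_{k=1}^{t}\beta_2^{\,t-k}\,(1-\beta_1^{\,k})\,\mathbb{E}[g] \;=\; c_t\,\mathbb{E}[g], \\
c_t &= 1-\beta_2^{\,t} \;-\; (1-\beta_2)\,\beta_1\,\frac{\beta_1^{\,t}-\beta_2^{\,t}}{\beta_1-\beta_2}
      \qquad (\beta_1 \neq \beta_2), \\
c_t &= 1-\beta^{\,t} \;-\; (1-\beta)\,t\,\beta^{\,t}
      \qquad (\beta_1 = \beta_2 = \beta).
\end{aligned}
```

At t = 1 this gives c_1 = (1 − β1)(1 − β2), and c_t → 1 as t → ∞.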

Remark on stationarity: The derivation of the exact factor assumes stationary gradient statistics (E[g_t] = E[g] for all t), following the same analytical framework used to derive Adam’s bias correction [5]. This assumption serves to identify the functional form of the zero-initialization bias, i.e., the systematic underestimation caused by initializing the EMAs at zero, rather than to model the true (non-stationary) gradient distribution during training.

In practice, gradient statistics are highly non-stationary, particularly in early epochs. However, the bias correction remains effective because it addresses the initialization artifact. At small t, the unnormalized estimates are scaled by factors such as 1 − β1^t that are far below 1, regardless of the gradient’s true mean, and the correction factor compensates for this cold-start shrinkage.

For double EMAs, the non-stationarity concern is more pronounced because the outer EMA accumulates bias from the inner EMA’s already-biased estimates. Our exact factor accounts for this compounding effect (the cross-term in Equation (29)), which simple cascaded corrections would miss. Empirically, AdamN’s consistent performance across diverse tasks, where gradient statistics change dramatically during training, supports the practical robustness of our approach.
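A quick numerical check of this remark follows. The sketch uses a hypothetical helper and assumes the (1 − β) EMA form and zero initialization. It passes a constant gradient through the nested EMA and compares the exact correction with a simple correction that divides only by 1 − β2^t. That simple variant is our reading of the Adam-style correction in this subsection, since its formula is not reproduced in this text.

```python
def exact_factor(beta1, beta2, t):
    """Exact double-EMA debiasing factor (total weight of the nested numerator at step t)."""
    if abs(beta1 - beta2) < 1e-12:
        return 1 - beta1 ** t - (1 - beta1) * t * beta1 ** t
    return 1 - beta2 ** t - (1 - beta2) * beta1 * (beta1 ** t - beta2 ** t) / (beta1 - beta2)

beta1, beta2, g = 0.9, 0.8, 1.0          # constant gradient g (stationary case)
m = n = 0.0
exact, simple = [], []
for t in range(1, 21):
    m = beta1 * m + (1 - beta1) * g      # inner EMA
    n = beta2 * n + (1 - beta2) * m      # outer (nested) EMA
    exact.append(n / exact_factor(beta1, beta2, t))   # exact double-EMA correction
    simple.append(n / (1 - beta2 ** t))               # corrects only the outer EMA

print([round(x, 3) for x in exact[:5]])
print([round(x, 3) for x in simple[:5]])
```

The exact correction recovers the gradient from the first step. The outer-only correction starts at (1 − β1)·g and stays below g for many steps, which is the residual inner-EMA bias discussed above.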

4.5. Freshness

Setting the gradient’s age to zero in (21), the instantaneous pass-through of the current gradient g_t into the nested numerator (before bias correction) is given as follows:

The bias correction divides all weights by the exact factor to remove the early-time shrinkage, so the effective normalized coefficient is as follows:

Freshness quantifies how much new gradient information enters the numerator at each step. Unlike cumulative weights, it isolates the instantaneous pass-through for the newest gradient.

For example, recalling (16) and (17), AdamN’s freshness is as follows:

Expanding shows that the coefficient of the current gradient g_t in the nested numerator is (1 − β1)(1 − β2).

That term is what we call freshness: how much new gradient information is injected at this step (before bias correction, normalization, and LR). AdamW’s freshness, by contrast, is 1 − β1, as seen in (6).
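The freshness comparison can be tabulated directly. The sketch below uses hypothetical helper names. It assumes AdamW freshness 1 − β1 and AdamN raw freshness (1 − β1)(1 − β2), which follows from expanding the (1 − β) EMA recurrences. For several β2 values it prints the raw freshness, the exactly debiased coefficient at step 10, and the ratio of AdamW's raw freshness to AdamN's.

```python
def exact_factor(beta1, beta2, t):
    """Exact double-EMA debiasing factor c_t (zero init, (1 - beta) EMA form)."""
    if abs(beta1 - beta2) < 1e-12:
        return 1 - beta1 ** t - (1 - beta1) * t * beta1 ** t
    return 1 - beta2 ** t - (1 - beta2) * beta1 * (beta1 ** t - beta2 ** t) / (beta1 - beta2)

beta1 = 0.9
fresh_adamw = 1 - beta1                       # AdamW: weight of g_t in m_t
rows = []
for beta2 in (0.1, 0.5, 0.8, 0.9):
    raw = (1 - beta1) * (1 - beta2)           # AdamN: raw weight of g_t in the nested numerator
    corrected = raw / exact_factor(beta1, beta2, 10)   # after exact debiasing, at t = 10
    ratio = fresh_adamw / raw                 # ratio of raw freshness terms = 1 / (1 - beta2)
    rows.append((beta2, raw, corrected, ratio))
    print(f"beta2={beta2:.1f}  raw={raw:.3f}  debiased@t=10={corrected:.3f}  AdamW/AdamN={ratio:.2f}")
```

The last column is only a ratio of raw freshness terms. It is offered as intuition for the learning-rate-scaling discussion that follows, not as the paper's scaling rule.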

Learning rate scaling and β2: AdamN’s tolerance to the learning rate depends directly on the freshness term (1 − β1)(1 − β2).

When β2 is small, the freshness is nearly the same as AdamW’s 1 − β1.

In this case, AdamN tolerates only marginally higher learning rates, effectively without an advantage.

By contrast, when β2 is large, the freshness shrinks well below AdamW’s 1 − β1, and the bias correction amplifies the initial steps.

To get comparable aggressiveness, a simple first-order scaling is defined as follows:

Here, AdamN can safely run at a correspondingly higher learning rate than AdamW, achieving faster early progress without destabilization.

Thus, the LR advantage of AdamN manifests itself primarily in the high-β2 regime, where its nested numerator would otherwise be too inertial without correction.

This behavior is illustrated in Table 2.

4.6. Interpretation of β2

Effect of β2 on AdamN dynamics: A large β2 introduces two competing effects that must be understood jointly:

Reduced freshness (raw numerator). The uncorrected weight on the current gradient in the nested numerator is (1 − β1)(1 − β2), which vanishes as β2 → 1. This makes the numerator increasingly inertial, dominated by past gradients rather than new information.

Increased bias-correction amplification. The exact factor grows slowly toward 1 for large β2, so dividing by it amplifies the bias-corrected numerator more aggressively in early epochs.

These effects can appear contradictory: a large β2 reduces sensitivity to new gradients (effect 1) while potentially increasing step magnitude (effect 2). The practical consequence is that high-β2 configurations produce steps that are large but delayed; the optimizer moves confidently in outdated directions. This explains our ablation findings: a high β2 degrades both speed and final accuracy because the numerator cannot adapt quickly to the changing loss landscape, regardless of step magnitude.

We therefore recommend a small β2 for most tasks, where freshness remains high and the bias correction provides stable early scaling without excessive inertia.

4.7. Instantaneous Learning Rate (instLR) and Comparison

Using (31), we define the effective instantaneous scaling coefficient on the current gradient as follows:

which clarifies why AdamN can launch fast yet remain stable.

This multiplier scales the contribution of g_t (and, via the weights above, of all past gradients). It is the actual step size the optimizer effectively takes at time t, not just the scheduler’s base learning rate η.

The numerator is amplified correctly at cold start, while the denominator tempers spikes. At low β2 (e.g., 0.1), exact and simple bias corrections are indistinguishable due to denominator absorption. But at higher β2 (e.g., 0.8), exact bias correction yields consistently higher instLR and faster early convergence, as confirmed in Table 3.

These closed forms assume the usual zero initialization of all EMA states. If we start from nonzero states, extra geometrically decaying terms (proportional to powers such as β1^t and β2^t) appear, but they decay fast and are usually negligible.

Digital Signal Processing (DSP) Analogy: From a DSP perspective, the bias factor plays the role of a phase correction, aligning the nested-EMA numerator with the true gradient “phase” by removing the systematic lag at cold start. The instantaneous learning rate (instLR) acts as an amplitude envelope, setting the step magnitude after normalization. Together they resemble phase and amplitude control in modulation: the bias factor corrects the trajectory (phase alignment), and instLR governs update strength (amplitude). This perspective clarifies why the exact factor matters most at large β2 (long memory), where naive debiasing leaves a persistent phase lag that directly suppresses the effective amplitude.

4.8. Update Rule (Bias-Corrected) and Weighted-Sum View

The effective per-coordinate step is as follows:

where the numerator is the exact debiased, noise-smoothed nested numerator and the denominator uses the usual bias-corrected second moment. This combination is a richer diagonal preconditioner than that of Adam/AdamW, closer to second-order behavior, yet it remains first-order in cost.

With decoupled weight decay and using (18), the bias-corrected denominator, analogous to Adam’s correction, is as follows:

Equivalently, the update can be written as a weighted sum over past gradients:
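Putting the pieces together gives one way to write the bias-corrected AdamN step, its weighted-sum form, and the instantaneous coefficient on g_t. The notation is assumed: n_t is the nested numerator, c_t the exact debiasing factor, v̂_t the bias-corrected second moment, and λ the decoupled weight-decay coefficient, with operations elementwise. The sketch follows AdamW's decoupled-decay convention. When β1 = β2 = β, the weight w_{t,j} takes its limit (1 − β)²(t − j + 1)β^(t−j).

```latex
\begin{aligned}
\hat n_t &= \frac{n_t}{c_t}, \qquad \hat v_t = \frac{v_t}{1-\beta_3^{\,t}}, \\
\theta_t &= \theta_{t-1} - \eta\,\frac{\hat n_t}{\sqrt{\hat v_t}+\epsilon} - \eta\lambda\,\theta_{t-1}
 = (1-\eta\lambda)\,\theta_{t-1} - \frac{\eta}{c_t\,(\sqrt{\hat v_t}+\epsilon)}\sum_{j=1}^{t} w_{t,j}\, g_j, \\
w_{t,j} &= (1-\beta_1)(1-\beta_2)\,\frac{\beta_1^{\,t-j+1}-\beta_2^{\,t-j+1}}{\beta_1-\beta_2},
\qquad \text{coefficient on } g_t:\; \frac{\eta\,(1-\beta_1)(1-\beta_2)}{c_t\,(\sqrt{\hat v_t}+\epsilon)} .
\end{aligned}
```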

5. Algorithm and Complexity

AdamN adds one EMA buffer (the nested numerator) compared to Adam/AdamW. The recurrence relations (lines 6–8) in Figure 1 each require O(d) elementwise operations; the scalar bias factor is computed in O(1). Total per-step complexity is O(d) time and O(3d) = O(d) optimizer-state space, versus 2d for Adam/AdamW, i.e., about 1.5× the optimizer-state memory. The explicit weighted-sum representation (Equation (22)) is provided for theoretical insight and is never computed during runtime.

1: Given: Base LR , , , , , WD .

2: Initialize: , parameter vector , first moment , nested momentum , .

3: Repeat,

4: .

5 (compute gradient).

6: ,

7: .

8:

9: .

10: ,

11: .

12: ,

13: ,

14: .

15: * .

16: .

17: .

18: Until stopping criterion is met.

19: Return optimized parameters .
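For readers who want to experiment, the following is a minimal NumPy sketch of the algorithm above. It is our own illustration, not the authors' reference implementation. The class name `AdamNSketch`, the default hyperparameters (betas taken from the toy experiments), and the PyTorch-style decoupled decay θ ← (1 − ηλ)θ are all assumptions.

```python
import numpy as np

class AdamNSketch:
    """Hypothetical NumPy sketch of AdamN: nested-EMA numerator, exact double-EMA
    debiasing, Adam-style second moment, decoupled weight decay."""

    def __init__(self, lr=1e-3, betas=(0.9, 0.1, 0.999), eps=1e-8, weight_decay=0.0):
        self.lr, self.eps, self.wd = lr, eps, weight_decay
        self.b1, self.b2, self.b3 = betas
        self.t = 0
        self.m = self.n = self.v = None   # first moment, nested numerator, second moment

    def exact_factor(self):
        """Exact debiasing factor for the nested numerator at the current step."""
        b1, b2, t = self.b1, self.b2, self.t
        if abs(b1 - b2) < 1e-12:
            return 1 - b1 ** t - (1 - b1) * t * b1 ** t
        return 1 - b2 ** t - (1 - b2) * b1 * (b1 ** t - b2 ** t) / (b1 - b2)

    def step(self, theta, grad):
        if self.m is None:
            self.m, self.n, self.v = (np.zeros_like(theta) for _ in range(3))
        self.t += 1
        self.m = self.b1 * self.m + (1 - self.b1) * grad        # first EMA
        self.n = self.b2 * self.n + (1 - self.b2) * self.m      # nested EMA (EMA of m)
        self.v = self.b3 * self.v + (1 - self.b3) * grad ** 2   # second moment (brakes)
        n_hat = self.n / self.exact_factor()                    # exact double-EMA debiasing
        v_hat = self.v / (1 - self.b3 ** self.t)
        theta = theta * (1 - self.lr * self.wd)                 # decoupled weight decay
        return theta - self.lr * n_hat / (np.sqrt(v_hat) + self.eps)

# Usage: minimize the quadratic f(x) = 0.5 * ||x - target||^2.
target = np.array([3.0, -1.0])
x = np.zeros(2)
opt = AdamNSketch(lr=0.05)
for _ in range(2000):
    x = opt.step(x, x - target)
print(x)   # close to target
```

Only one extra buffer (`n`) and one scalar factor are needed beyond Adam, which matches the O(d) cost stated in this section.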

6. Experiments

Before moving to standard benchmarks such as CIFAR-100, we first conducted a series of progressively harder toy experiments to probe the dynamics of AdamN. These tests illustrate why a nested numerator plus adaptive braking is necessary.

For each baseline, we selected the hyperparameters yielding the highest validation performance and report that run.

This section presents the following experiments using the hyperparameters shown in Table 3:

- (1)

A proof of concept showing why the denominator is essential.

- (2)

CIFAR-10 as pre-benchmarking.

- (3)

CIFAR-100 as the main benchmark.

- (4)

Additional MNIST/EMNIST runs, which also show marked speed-to-accuracy gains.

- (5)

NLP rare-token evaluation using a small transformer language model on a Wikitext-2-style corpus.

6.1. Hyperparameter Search Protocol

For each optimizer, we performed a grid search over the spaces listed in Table 4:

All other hyperparameters were held constant at standard defaults: β1 = 0.9, ε = 10⁻⁸, and a second-moment decay of 0.999 (β2 for Adam/AdamW, β3 for AdamN) for the Adam variants, and a cosine schedule for all optimizers.

Search strategy: Full grid search (no random or Bayesian optimization).

Runs per configuration: Single run during search; 3 seeds for selected configurations.

Selection criterion: Configuration with highest validation accuracy at epoch 100.

Stopping criterion: Fixed 100 epochs (no early stopping during search).

Budget: AdamN: 18 configurations; AdamW: 12 configurations; Adam: 12 configurations; SGD: 12 configurations. Total: 54 runs on CIFAR-100/ResNet-18.

The selected hyperparameters for each optimizer, shown in Table 5, represent the configuration achieving the best validation performance in this search.

6.2. Proof of Concept: Stabilizing Nested Momentum

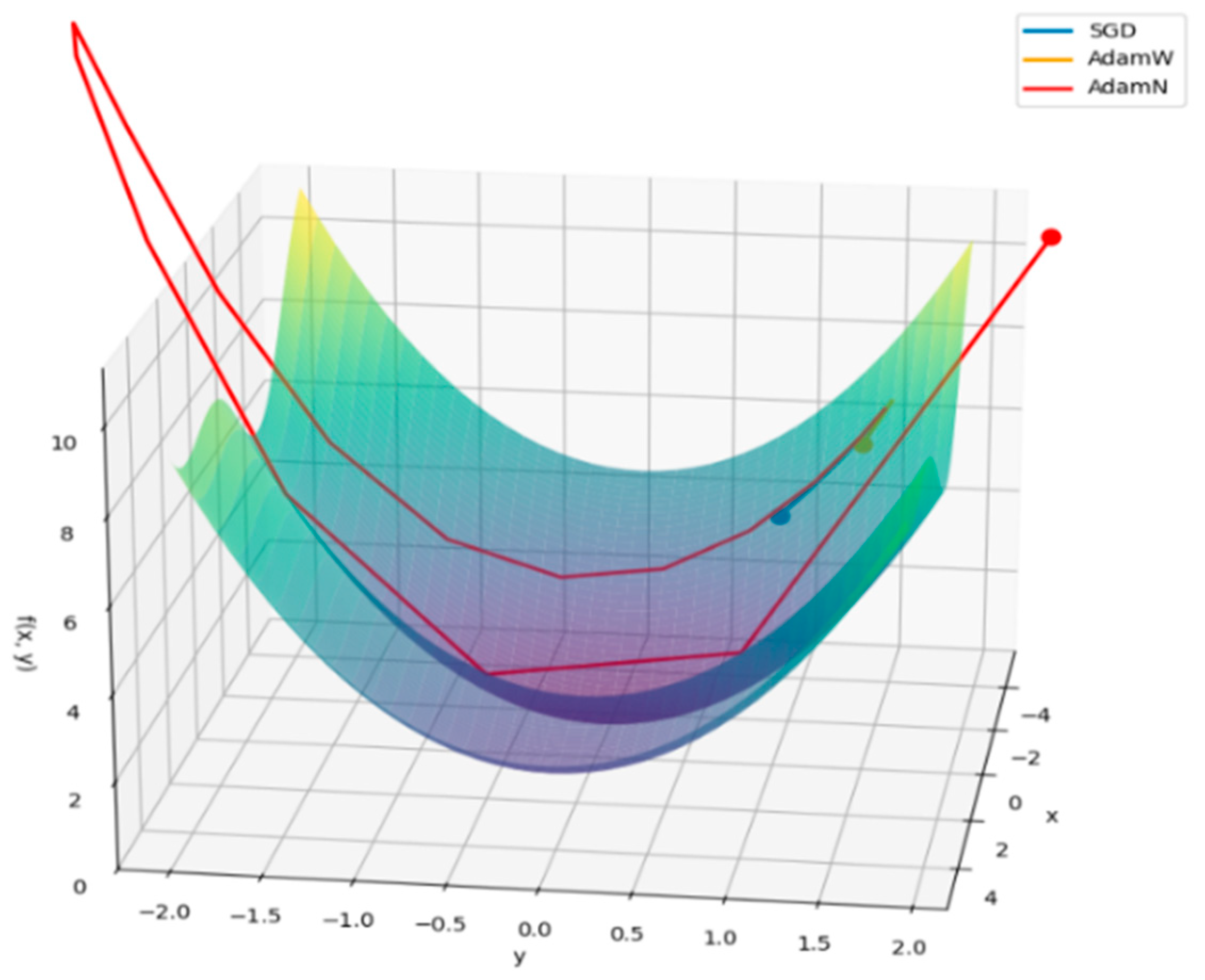

As an initial sanity check, we optimize the following smooth 2D objective:

Without a denominator term, AdamN behaved explosively: the nested numerator amplified updates until trajectories shot off the landscape. This motivated the addition of an adaptive braking mechanism (EMA of squared gradients, as in Adam/AdamW).

Figure 2 illustrates extreme overshooting before the brakes were introduced.

Figure 3 shows how adding the EMA of squared gradients controls this explosive behavior within only 30 epochs.

6.3. Challenging Variant with Cubic Terms

Next, we tested a harder function that includes cubic terms. These terms make the function unbounded below: far enough from the origin along suitable directions, the cubic terms dominate and drive the objective toward −∞. The task therefore shifts from finding a global minimum to settling into a useful local minimum near the origin before diverging.

Setup: Learning rate = . Momentum for SGD = 0.9. Betas for AdamW = (0.9, 0.999). Betas for AdamN = (0.9, 0.1, 0.999).

Results: AdamN remained stable and converged across all 5 seeds, while SGD diverged at the same learning rate (Table 6).

6.4. Rosenbrock Function: High-Dimensional Stress Test

We then moved to a harder, high-dimensional test: the Rosenbrock function (the “banana valley”), whose global minimum is at x* = (1, …, 1) with value 0. The Rosenbrock landscape is notorious for its long, narrow, curved valley, in which plain gradient descent zigzags and progresses slowly. Results across 5 seeds are given in Table 7.
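For readers reproducing this stress test, the sketch below implements the standard n-dimensional Rosenbrock function (a = 1, b = 100) and its analytic gradient. The exact variant, dimension, and starting point behind Table 7 are not stated in the text, so those choices are assumptions.

```python
def rosenbrock(x):
    """Standard n-dimensional Rosenbrock: sum of 100*(x[i+1]-x[i]^2)^2 + (1-x[i])^2."""
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))


def rosenbrock_grad(x):
    """Analytic gradient; each term couples coordinates i and i + 1."""
    n = len(x)
    g = [0.0] * n
    for i in range(n - 1):
        residual = x[i + 1] - x[i] ** 2
        g[i] += -400.0 * x[i] * residual - 2.0 * (1.0 - x[i])
        g[i + 1] += 200.0 * residual
    return g


# Classic 2D starting point, deep inside the curved valley's outer wall.
start = [-1.2, 1.0]
print(rosenbrock(start), rosenbrock_grad(start))
```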

Results: AdamW and AdamN successfully navigated the valley, while SGD plateaued at a much higher loss.

Momentum-based methods like AdamN navigate this landscape more efficiently than SGD.

In higher dimensions (), AdamN consistently reached milestones faster than AdamW, confirming its advantage in noisy, poorly conditioned valleys.

6.5. Additional Vision Datasets (Brief)

MNIST and EMNIST confirm the speed-to-accuracy advantage with competitive final metrics. The times to reach training and validation accuracy milestones are shown in Table 8 and Table 9; times are reported as train/val.

6.6. CIFAR-10 (Pre-Benchmarking)

Experiment Setup: ResNet-18 trained for 100 epochs on a single GPU, with a cosine schedule and decoupled weight decay. AdamN uses exact debiasing and the same scheduler budget as AdamW.

Findings: AdamN matches or slightly exceeds AdamW’s final test accuracy while hitting milestones earlier, as shown in Table 10.

6.7. Summary of Toy Tests

These controlled landscapes show that:

- (1)

Nested momentum without braking leads to instability (overshooting).

- (2)

Adding the Adam-style denominator stabilizes training while preserving fast numerator dynamics.

- (3)

Before tackling the more challenging CIFAR-100 benchmark, we validated AdamN on progressively harder datasets: MNIST, Fashion-MNIST, EMNIST, and CIFAR-10. Across all of them, AdamN consistently reached key accuracy milestones faster than Adam, AdamW, and SGD, while maintaining competitive or superior final accuracy and loss. These results suggest that AdamN’s advantages are not confined to toy problems but extend across diverse datasets and architectures.

6.8. NLP Imbalance Validation: Token-Frequency Bins and Effective Learning Rates

The NLP study is designed to stress class imbalance in language modeling: we include a setting with randomly corrupted tokens injected into the training split (validation/test remain clean) to mimic real-world sparsity, and a low-resource variant with only 10% of the training text.

Experimental setup: We evaluate how AdamN handles the rare-token regime typical in language modeling under class imbalance.

Corpus and preprocessing: Word-level LM on a Wikitext-2-style corpus with a stable regex tokenizer (lowercased words + punctuation). Vocabulary is built only from the training split. Streams are split 80/10/10 (train/val/test). BPTT length = 35. We fix the random seed end-to-end (model init, batch order), so AdamN and baselines see identical minibatches.
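The preprocessing pipeline can be sketched as follows. The regular expression, the `<unk>` handling, and the helper names are hypothetical choices that match the description (lowercased words plus punctuation, a train-only vocabulary, a contiguous 80/10/10 split, BPTT = 35); they are not the authors’ code.

```python
import re

# Hypothetical tokenizer: runs of word characters, or single punctuation marks.
TOKEN_RE = re.compile(r"\w+|[^\w\s]")


def tokenize(text: str) -> list:
    return TOKEN_RE.findall(text.lower())


def split_stream(tokens: list, train: float = 0.8, val: float = 0.1):
    """Contiguous 80/10/10 split of one token stream into train/val/test."""
    n_train = int(len(tokens) * train)
    n_val = int(len(tokens) * val)
    return tokens[:n_train], tokens[n_train:n_train + n_val], tokens[n_train + n_val:]


def build_vocab(train_tokens: list) -> dict:
    """Vocabulary from the training split only; index 0 is reserved for <unk>."""
    vocab = {"<unk>": 0}
    for tok in train_tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab


def encode(tokens: list, vocab: dict) -> list:
    """Map tokens to ids; words unseen in training become <unk>."""
    return [vocab.get(tok, 0) for tok in tokens]


def bptt_chunks(ids: list, bptt: int = 35):
    """Yield (input, target) windows; targets are inputs shifted by one token."""
    for start in range(0, len(ids) - 1, bptt):
        seq_len = min(bptt, len(ids) - 1 - start)
        yield ids[start:start + seq_len], ids[start + 1:start + 1 + seq_len]
```

Building the vocabulary from the training split alone keeps validation and test statistics out of the model, so validation words unseen in training are scored as `<unk>`.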

Model: Small tied-embedding transformer LM with embedding size 256, 2 encoder layers, 4 heads, FFN size 512, dropout 0.2, sinusoidal positional encoding, and tied input/output embeddings, trained for 10 epochs.

Optimizers and schedules:

- -

Adam/AdamW: , decoupled WD = 0 for Adam and for AdamW, linear warmup followed by cosine decay, LR = .

- -

SGD: , decoupled WD = 0, linear warmup followed by cosine decay, LR = .

- -

AdamN: , decoupled WD , cosine decay (no warmup), LR = , exact double-EMA debiasing in the numerator.

All runs use grad-norm clip = 0.5.

Frequency bins (head/mid/tail): Sort tokens by training frequency and slice the ranked vocabulary by cumulative index: head (highest frequency, top ~1%), mid (next ~19%), and tail (lowest frequency, remaining ~80%). Metrics are computed per bin on the validation stream.
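A minimal sketch of the binning and per-bin perplexity, assuming bins are cut on the frequency-ranked training vocabulary (top ~1%, next ~19%, remaining ~80%) and that per-bin PPL is the exponentiated mean negative log-likelihood over validation positions whose target token falls in that bin. The tie-breaking rule, the handling of unseen tokens, and the function names are our own assumptions.

```python
import math
from collections import Counter


def frequency_bins(train_tokens, head_frac=0.01, mid_frac=0.19):
    """Map each training token to 'head', 'mid', or 'tail' by frequency rank."""
    counts = Counter(train_tokens)
    # Most frequent first; ties broken alphabetically so the cut is deterministic.
    ranked = sorted(counts, key=lambda tok: (-counts[tok], tok))
    head_end = max(1, round(len(ranked) * head_frac))
    mid_end = head_end + round(len(ranked) * mid_frac)
    bins = {}
    for rank, tok in enumerate(ranked):
        bins[tok] = "head" if rank < head_end else ("mid" if rank < mid_end else "tail")
    return bins


def per_bin_perplexity(target_tokens, nlls, bins):
    """exp(mean NLL) over validation positions whose target falls in each bin."""
    totals, counts = {}, {}
    for tok, nll in zip(target_tokens, nlls):
        b = bins.get(tok)
        if b is None:  # token never seen in training: skipped in this sketch
            continue
        totals[b] = totals.get(b, 0.0) + nll
        counts[b] = counts.get(b, 0) + 1
    return {b: math.exp(totals[b] / counts[b]) for b in totals}
```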

Metrics (RQ5, NLP imbalance): The primary metric is validation perplexity (PPL), computed both overall and per frequency bin (i.e., on predictions whose target token belongs to that bin). We additionally compute the average effective learning rate (LR) applied to the embedding rows of tokens in each bin.

For AdamW, the effective instLR is computed as follows:

For AdamN (nested numerator + exact debias), the effective instLR is computed as follows:

Here, is the exact double-EMA bias factor from our derivation (29). We take the RMS across the embedding dimension to get one scalar per row, then average over rows in each bin.
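Because the two instLR formulas are not reproduced above, the sketch below assumes one natural reading of “the kick given to a fresh gradient”: the learning rate times the weight that the debiased numerator places on the current gradient, divided by the debiased RMS denominator. For AdamW that weight is (1 − β1)/(1 − β1^t); for AdamN we assume (1 − β1)(1 − β2)/c_t, with c_t the nested bias factor. The per-row RMS and per-bin averaging follow the text. All function names are hypothetical.

```python
import math


def nested_bias(beta1, beta2, t):
    """Closed-form bias of a zero-initialized EMA-of-EMA (stationary-gradient assumption)."""
    if math.isclose(beta1, beta2):
        return 1.0 - beta2**t - (1.0 - beta2) * t * beta2**t
    return 1.0 - beta2**t - (1.0 - beta2) * beta1 * (beta2**t - beta1**t) / (beta2 - beta1)


def inst_lr_row(v_row, t, lr, beta1=0.9, beta3=0.999, eps=1e-8, beta2=None):
    """Per-coordinate instLR for one embedding row; beta2=None means AdamW."""
    if beta2 is None:
        gain = (1.0 - beta1) / (1.0 - beta1**t)  # AdamW: single debiased EMA
    else:
        gain = (1.0 - beta1) * (1.0 - beta2) / nested_bias(beta1, beta2, t)  # AdamN
    return [lr * gain / (math.sqrt(v / (1.0 - beta3**t)) + eps) for v in v_row]


def row_rms(values):
    return math.sqrt(sum(x * x for x in values) / len(values))


def mean_inst_lr_by_bin(v_rows, row_bins, **kwargs):
    """Average, over rows in each bin, of the row-wise RMS of instLR."""
    per_bin = {}
    for row_id, v_row in v_rows.items():
        per_bin.setdefault(row_bins[row_id], []).append(row_rms(inst_lr_row(v_row, **kwargs)))
    return {b: sum(vals) / len(vals) for b, vals in per_bin.items()}


# Toy second-moment estimates: a frequent (head) row and a rare (tail) row.
v_rows = {0: [1.0, 1.0], 1: [0.01, 0.01]}
row_bins = {0: "head", 1: "tail"}
adamw = mean_inst_lr_by_bin(v_rows, row_bins, t=10_000, lr=1.0, eps=0.0)
adamn = mean_inst_lr_by_bin(v_rows, row_bins, t=10_000, lr=1.0, eps=0.0, beta2=0.1)
print(adamw, adamn)
```

The toy rows illustrate the mechanism discussed for rare tokens: a small second-moment estimate shrinks the denominator and inflates the per-coordinate step. In real training each optimizer accumulates its own v, so the measured bins differ by more than the constant gain ratio seen here.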

Validation Protocol A—Full Data with Rare-Token Stress (Training Corruption)

Task framing: This experiment targets rare-token representations in a language modeling task. To mimic real-world imbalance and increase token sparsity, we train on a Wikitext-2-style dataset augmented with randomly corrupted tokens in the training split only (validation/test remain clean).

This amplifies the long-tail difficulty while leaving evaluation unbiased.
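One way to implement training-only corruption is sketched below, assuming that a corrupted position is replaced by a uniformly sampled vocabulary word. The corruption rate and replacement rule are not specified in the text and are hypothetical here; validation and test streams are left untouched.

```python
import random


def corrupt_tokens(tokens, vocab_words, rate, seed=0):
    """Return a copy of `tokens` where each position is replaced with probability `rate`."""
    rng = random.Random(seed)  # dedicated RNG keeps the corruption identical across optimizers
    return [rng.choice(vocab_words) if rng.random() < rate else tok for tok in tokens]


train_tokens = ["the", "cat", "sat", "on", "the", "mat"]
noisy_train = corrupt_tokens(train_tokens, ["dog", "ran", "blue"], rate=0.3, seed=1)
```

Using a dedicated, seeded generator means every optimizer trains on exactly the same corrupted stream, which keeps the comparison fair.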

Validation PPL by bin (↓ better) and the average effective LR on embedding rows (arbitrary units; ↑ means a larger step scale) are shown in Table 11 and Table 12, respectively.

RQ5: Generalization to NLP under imbalance (rare-token efficiency):

Findings: Head/mid PPL are comparable; the tail dominates the imbalance story. Under identical budgets, AdamN roughly halves tail PPL relative to AdamW while using a smaller tail effective LR. This is consistent with AdamN’s longer-memory, exactly debiased numerator producing higher-quality rare-token updates (better likelihood gain per unit step) rather than simply larger steps. Here, low acts as a noise filter, preventing overshooting.

Validation Protocol B—Low-resource challenge (10% training data)

We repeat the pipeline after down-sampling the training split to 10% while keeping val/test at full size. The vocabulary shrinks (to roughly 18k words), and tail sparsity becomes severe.

Validation PPL by bin (↓ better) and the average effective LR on embedding rows are shown in Table 13 and Table 14, respectively.

Findings (low resource): Under extreme data scarcity, the tail becomes much harder for both methods, and AdamN’s low-momentum, high-damping profile appears markedly better for regularization and for learning the sparsest embeddings. AdamN retains a strong advantage (~3.5× lower tail PPL) while again using a much smaller effective LR (~5× lower) than AdamW. This is evidence that AdamN’s nested numerator with exact debiasing improves update efficiency on rare tokens when data are scarce, and it supports the earlier hypothesis: AdamN’s advantage comes not from faster exploration but from more precise and stable steps. It wins by taking smaller, cleaner steps where AdamW takes large, noisy steps that lead to parameter drift.

Practical Interpretation:

- -

Head/mid stability, tail efficiency. Across both settings, AdamN improves tail PPL without simply increasing tail LR, indicating better bias/variance trade-offs in the rare-token regime.

- -

No warmup for AdamN. Exact double-EMA debiasing provides a clean start; AdamW still benefits from a short warmup to avoid poor early scaling.

- -

Fair comparisons. We seed all randomness, reuse the same initial weights, and preserve identical minibatch order to isolate optimizer behavior.

- -

Caveat. Absolute PPL depends on model size and schedules; the robust signal is the relative tail behavior and effective-LR distribution under imbalance.

We also evaluated AdamN in a larger-scale setting (RQ5). In a full fine-tuning experiment on Llama 3.1-8B using a small dataset, AdamN showed strong speed-to-quality behavior: it reached AdamW’s final perplexity in roughly half as many training steps (Table 15), corresponding to an ≈2.25× improvement in time-to-quality.

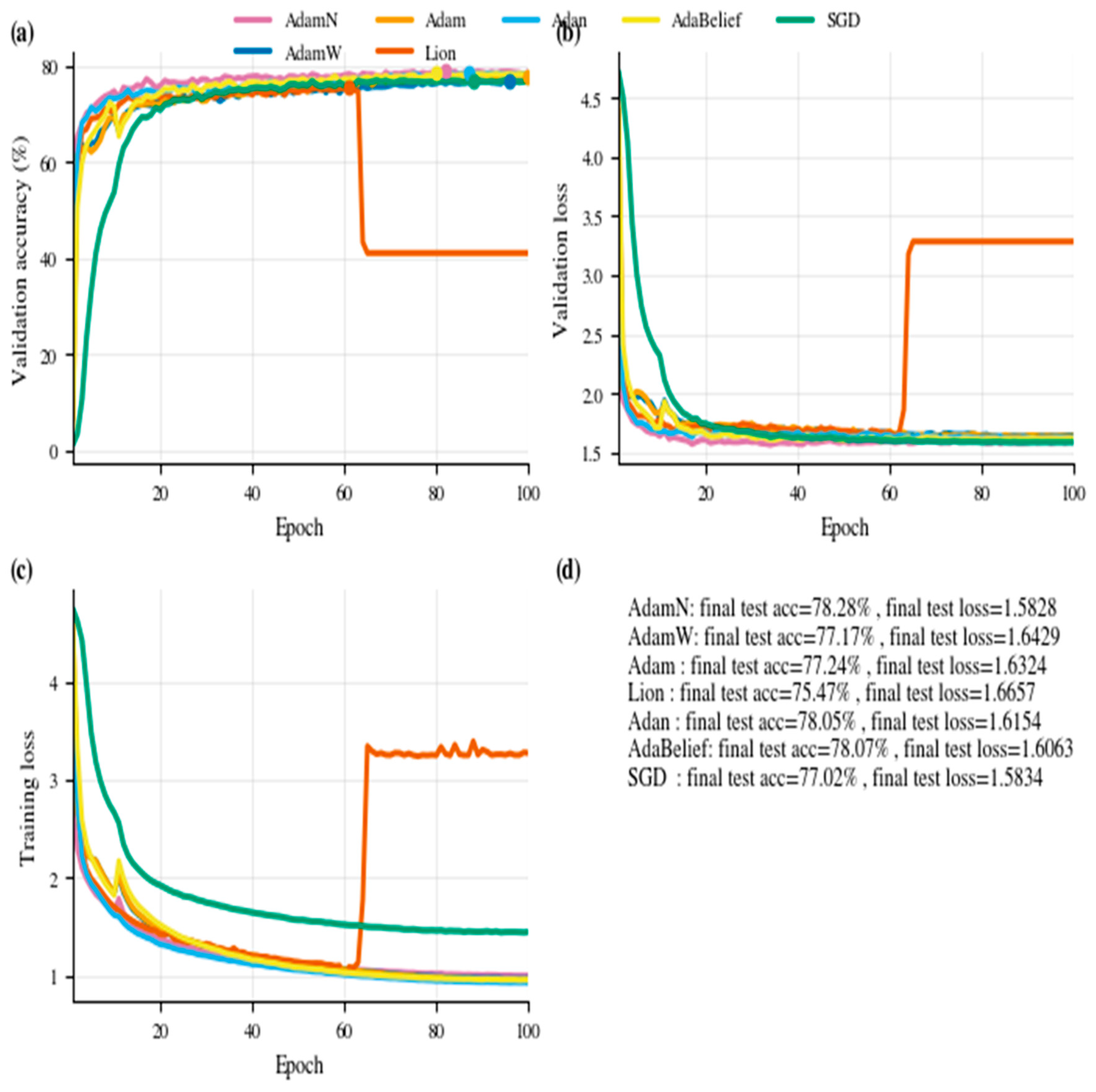

6.9. CIFAR-100 (Main Benchmark)

CIFAR-100 is one of the most challenging small-scale vision benchmarks, with 100 classes and relatively few examples per class. In this section, our primary goal is not to push state-of-the-art test accuracy; exceeding 90% typically requires specialized augmentation pipelines, transfer learning, and extensive tuning. Instead, we deliberately kept the standard setup and focused on what CIFAR-100 reveals about optimizer speed. AdamN is designed as a fast learner, and CIFAR-100 provides a realistic, high-dimensional stress test of how quickly an optimizer can drive training accuracy upward under a fixed epoch budget. Across runs, validation accuracy remained in the expected 75–76% range for plain training without tricks, but the key outcome is that AdamN consistently reached accuracy milestones earlier than AdamW, Adam, or SGD, demonstrating its advantage as a speed-oriented optimizer.

Experiment Setup: We evaluated CIFAR-100 with ResNet-18 and ViT-B/16 for 100 epochs, using a cosine learning-rate schedule and decoupled weight decay. All optimizers receive the same tuning budget; AdamN uses exact nested debiasing and no warmup, while Adam/AdamW and SGD are trained with a warmup to reflect standard practice. For fairness and reproducibility, we (i) fix a global random seed and enable deterministic settings where possible; (ii) seed the DataLoader shuffles and all stochastic augmentations per epoch, so that every optimizer sees the same sample order and transformations at every epoch; (iii) load the same initial weights (identical state_dict) before each optimizer’s run; and (iv) apply AdamW-style decay exclusions (no WD on biases, normalization layers, or ViT positional embeddings and class token) across all methods, enable AMP, and turn on PyTorch (2.9.0) fast paths (fused AdamW when supported; foreach = True for Adam/SGD). This translates into materially lower computation cost and energy per run, compounding across large hyperparameter sweeps.
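Requirement (ii) can be met by deriving each epoch’s shuffle from the global seed and the epoch index alone, so the order never depends on how many random numbers an optimizer consumed earlier. The sketch uses Python’s `random.Random`; in PyTorch the same idea is usually expressed by passing a seeded `torch.Generator` to `DataLoader(generator=...)`. The seed-mixing constant and the helper name are hypothetical.

```python
import random


def epoch_order(num_samples: int, base_seed: int, epoch: int) -> list:
    """Sample order for one epoch, identical for every optimizer given the same seed."""
    rng = random.Random(base_seed * 1_000_003 + epoch)  # seed depends only on (seed, epoch)
    order = list(range(num_samples))
    rng.shuffle(order)
    return order


# Every optimizer run calls this with the same base_seed, so epoch 3 is identical across runs.
order_for_epoch_3 = epoch_order(50_000, base_seed=42, epoch=3)
```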

We report two regimes: from-scratch training (full ResNet-18 and a customized ViT on 32 × 32 CIFAR-100) and transfer learning (an ImageNet-pretrained backbone with a reinitialized classifier, and ViT-B/16). Both regimes exhibit the same trend (AdamN reaches target accuracies sooner at comparable final accuracy), with the transfer-learning runs showing slightly smoother curves and a modestly faster time-to-accuracy owing to the stronger initialization. All other data-pipeline components and hyperparameters are held constant across methods. The only difference is that, in from-scratch training, we adopted a uniform AdamW-style weight-decay policy across all optimizers (AdamN, AdamW, Adam, and SGD): decay only true weights, excluding biases, normalization affine parameters, and ViT positional/class tokens. This ensures fairness and isolates optimizer behavior from regularization confounds.
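The decay-exclusion policy amounts to a partition of parameter names. The sketch works on (name, shape) pairs so it runs without a framework; the keyword list assumes timm-style names such as `pos_embed` and `cls_token`, which may differ in other codebases, and the function name is hypothetical.

```python
def split_decay_groups(named_shapes, no_decay_keywords=("pos_embed", "cls_token")):
    """Partition parameter names into (decay, no_decay) following an AdamW-style policy.

    Biases and normalization affine parameters are 1-D, so the `len(shape) < 2`
    rule excludes them; ViT positional embeddings and class tokens are matched
    by (hypothetical, timm-style) name keywords.
    """
    decay, no_decay = [], []
    for name, shape in named_shapes:
        if len(shape) < 2 or any(key in name for key in no_decay_keywords):
            no_decay.append(name)
        else:
            decay.append(name)
    return decay, no_decay


example = [("conv1.weight", (64, 3, 7, 7)), ("bn1.weight", (64,)), ("bn1.bias", (64,)),
           ("fc.weight", (100, 512)), ("fc.bias", (100,)),
           ("pos_embed", (1, 197, 768)), ("cls_token", (1, 1, 768))]
decay_names, no_decay_names = split_decay_groups(example)
```

With PyTorch, `named_shapes` could come from `[(n, p.shape) for n, p in model.named_parameters()]`, and the two lists would become two optimizer parameter groups: one with the chosen `weight_decay` and one with `weight_decay=0.0`.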

For multi-seed dispersion and sensitivity analysis, we ran N = 3 seeds on AdamN only. Sweep: LR ∈ {1 × 10⁻³, 6 × 10⁻⁴, 3 × 10⁻⁴}, WD ∈ {0.05, 0.1} (decoupled), and β2 ∈ {0.1, 0.2, 0.3}, under a fixed compute budget.
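The sensitivity sweep is a full factorial grid, and enumerating it makes the run count explicit: 3 learning rates × 2 weight decays × 3 values of β2 give 18 configurations, each run with N = 3 seeds. The seed values below are hypothetical.

```python
from itertools import product

LEARNING_RATES = (1e-3, 6e-4, 3e-4)
WEIGHT_DECAYS = (0.05, 0.1)   # decoupled
BETA2_VALUES = (0.1, 0.2, 0.3)
SEEDS = (0, 1, 2)             # hypothetical seed values


def sweep_runs():
    """All (configuration, seed) pairs of the AdamN sensitivity sweep."""
    return [
        {"lr": lr, "weight_decay": wd, "beta2": b2, "seed": seed}
        for lr, wd, b2 in product(LEARNING_RATES, WEIGHT_DECAYS, BETA2_VALUES)
        for seed in SEEDS
    ]
```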

Metrics and Protocols

Time-to-quality (RQ1): We record per-epoch cumulative wall-clock time and compute seconds to reach validation accuracy milestones {50, 60, 70, 80, 90%}.
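Time-to-quality can be read directly off the per-epoch log. A minimal sketch, assuming the log stores cumulative wall-clock seconds and validation accuracy (in percent) at the end of each epoch; milestones that are never reached are reported as `None`. The function name is hypothetical.

```python
def time_to_milestones(cumulative_seconds, val_acc, milestones=(50, 60, 70, 80, 90)):
    """First cumulative time at which validation accuracy reaches each milestone."""
    reached = {}
    for seconds, acc in zip(cumulative_seconds, val_acc):
        for m in milestones:
            if m not in reached and acc >= m:
                reached[m] = seconds
    return {m: reached.get(m) for m in milestones}
```

Because accuracy is only checked at epoch boundaries, a milestone crossed mid-epoch is credited at the end of that epoch; finer logging would tighten the estimate.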

Final quality at equal budget (RQ2): We report test accuracy at E = 100 and at a fixed wall-clock budget.

Sensitivity and robustness (RQ3): For grids, we report the mean ± SD and 95% CI across 3 seeds.
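The seed summary can be computed as below. The text does not say how the 95% CI is formed, so the sketch assumes a two-sided Student-t interval; with only 3 seeds the critical value (4.303 for 2 degrees of freedom) makes the interval wide. The function name is hypothetical.

```python
import math
import statistics

# Two-sided 95% Student-t critical values, indexed by degrees of freedom (n - 1).
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776}


def summarize_seeds(values):
    """Return (mean, sample SD, (ci_low, ci_high)) for a handful of seed results."""
    n = len(values)
    mean = statistics.mean(values)
    sd = statistics.stdev(values)  # sample SD with n - 1 in the denominator
    half_width = T_CRIT_95[n - 1] * sd / math.sqrt(n)
    return mean, sd, (mean - half_width, mean + half_width)
```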

Mechanism ablation (RQ4): We toggle nesting (on/off) and debiasing (exact/simple), measuring milestone times and final accuracy.

Headline results: In all cases, AdamN reaches every training and validation-accuracy milestone sooner at matched final accuracy, reducing wall-clock time by 20–80% under identical hardware and data loaders. Table 16, Table 17, Table 18 and Table 19 present the results.

6.10. Comparison with Lion and Adan

To test AdamN’s speed claims against recent optimizers, we benchmark against Lion [28] and Adan [25] under equivalent conditions (same seeds, data order, and AMP enabled).

Lion employs a sign-based update rule with momentum interpolation, eliminating the need for second-moment estimation. Adan uses an adaptive Nesterov-style numerator with three β parameters. Both are designed for fast convergence.

Results: AdamN consistently reaches the 40–70% validation accuracy milestones faster than both Lion and Adan. At higher milestones (80–90%), Adan becomes competitive, possibly owing to its Nesterov-style lookahead. Lion shows intermediate performance, with slightly slower early convergence than AdamN but faster than AdamW.

Observation: AdamN’s advantage is most pronounced in the early-to-mid training phase, aligning with its design goal of fast, warmup-free starts via exact double-EMA debiasing. For practitioners prioritizing rapid iteration during model development or hyperparameter search, this early-phase speedup translates directly to reduced experimentation time.

6.11. Training Curves

To visualize the dynamics of AdamN compared to other optimizers, we plot train/val accuracy, instantaneous learning rate (instLR), step RMS, and the freshness-weighted gradient RMS across epochs.

Freshness-weighted Gradient RMS: Figure 4 shows that AdamN substantially suppresses the RMS of the freshness-weighted current gradient compared with AdamW. This suggests that AdamN damps raw gradient noise more effectively, leading to smoother and more reliable updates.

Instantaneous LR and Denominator RMS: As shown in Figure 5, AdamN’s instLR under exact and simple bias correction matches almost perfectly, indicating that the difference between the two forms is negligible in practice at lower β2. The RMS of the denominator decays smoothly, stabilizing the magnitude of the update during training.

Instantaneous LR and Step RMS: In Figure 6, the top plot (instantaneous LR) shows that the instLR of Adam/AdamW is consistently and substantially higher than that of AdamN. This metric represents the potential “kick” the optimizer would give to a fresh gradient, so AdamW is, in this sense, far more aggressive. The bottom plot (step_RMS) shows the actual magnitude of the update applied to the model’s weights at each epoch; again, AdamW takes consistently larger steps.

Validation Accuracy and Training Loss: As illustrated in Figure 7, AdamN and AdamW converge to a similar final validation accuracy (about 75–76%), but AdamN reaches high accuracy sooner, consistent with better conditioning of “hard” directions early in training. The training-loss curves likewise show AdamN descending more steeply in the early epochs. AdamN thus matches final accuracy and reaches milestones substantially faster, all while taking smaller, more controlled steps.

This is not a paradox but a sign of an efficient optimizer: what matters is not the size of the step but the quality of its direction.

Overall, these curves illustrate AdamN’s key strength: a fast launch with steep early progress, followed by a stable cruise in which noise is effectively suppressed. This makes AdamN a strong default candidate when both early progress and robust scaling matter.

NLP’s Overall Convergence: As shown in Figure 8a (validation PPL vs. epoch), both AdamN and AdamW quickly reduced the overall validation perplexity. However, AdamN showed greater stability and faster initial convergence, reaching a final PPL of 1.87 compared with AdamW’s 2.0, which suggests a more robust optimization path.

NLP’s Perplexity by Frequency Bin: The advantage of AdamN is starkly revealed on rare tokens, as depicted in Figure 8b (per-token frequency bins).

Head/Mid: Both optimizers performed nearly identically on common tokens (head PPL 1.20; mid PPL 1.5–1.6).

Tail: AdamN achieved a tail PPL of 155.09, roughly half of AdamW’s tail PPL of 308.82. This suggests that AdamN learns effective representations for rare tokens, whose gradient signals are sparse and noisy, with higher update efficiency (better likelihood gain per unit step).

NLP’s Effective Learning Rate Analysis: Figure 9 (effective LR by frequency bin) explains the performance gap as follows:

Head/Mid: AdamW applies a much larger effective LR to these common tokens (mid LR for AdamW vs. for AdamN). This aggressive step size on common tokens may cause overshooting and instability.

Tail: Critically, AdamW applies an excessively large effective LR of 53.12 to the rare-token embeddings. This is likely due to small, noisy second-moment estimates, which shrink the denominator and produce a large, unstable step size. In contrast, AdamN maintains a much lower and more stable effective LR of 12.29 for the tail tokens. The nested momentum in AdamN appears to provide better normalization for sparse gradient updates, preventing the pathological acceleration that affects AdamW on rare features.

Llama 3.1-8B benchmark: time-to-quality (“when does AdamN match AdamW?”)

AdamN reaches AdamW’s final perplexity in roughly half the number of steps (~2.25× faster time-to-quality), as shown in Figure 9 and Figure 10.

Headline results:

RQ1: Time-to-quality. As illustrated in Figure 7, AdamN and AdamW converge to a similar final validation accuracy (about 75–76%), but AdamN reaches high accuracy faster.

RQ2: Final quality at equal budget. In general, AdamN matches or slightly exceeds the other optimizers’ final test accuracy at similar wall-clock time while reaching milestones earlier, as shown in Table 14, Table 15, Table 16 and Table 17 above.

RQ3: Sensitivity and robustness. As seen in Table 20, LR matters most here: 6 × 10⁻⁴ gives a better speed/accuracy trade-off than 3 × 10⁻⁴ (slower to milestones) and 1 × 10⁻³ (slightly worse final accuracy and somewhat unreliable at the 75% milestone).

The effect of WD on final accuracy is mild (differences of ~0.1–0.3%); 5 × 10⁻⁴ is sometimes marginally faster to , but not consistently.

β2 in the 0.1–0.3 band is a second-order knob with small swings. At LR = 6 × 10⁻⁴, β2 = 0.30 edges out the other values on both final accuracy and speed; at LR = 3 × 10⁻⁴, the best final accuracy is at β2 = 0.20 (74.98%), but again within noise of 0.10 and 0.30.

7. Ablations

To isolate which design choices drive AdamN’s behavior, we run targeted ablations under the same CIFAR-100/ResNet-18/ViT-B/16 setup (100 epochs, cosine LR schedule, and decoupled weight decay), with fixed seeds so that all methods see the same sample order and stochastic augmentations. Unless noted, we change one factor at a time and keep the rest constant.

NLP task: We mirror each ablation in the LM setup (same model, tokenizer, BPTT, and frequency-bin protocol), and report head/mid/tail PPL alongside overall PPL and embedding effective-LR per bin.

Exact vs. Simple Debiasing: Replacing the exact nested bias factor with the simple correction leaves the inner-EMA bias uncorrected and prolongs the cold start. At small β2, the denominator absorbs much of the difference; at larger β2, the gaps in instLR and time-to-milestones widen, as seen in Table 19.
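The exact-vs-simple comparison can be illustrated numerically. The text does not spell out the “simple” correction, so the sketch compares the exact nested factor (closed form for a zero-initialized EMA-of-EMA) with two candidate simple forms: the outer-only, Adam-style factor 1 − β2^t and the product (1 − β1^t)(1 − β2^t). Both candidates are assumptions. The printed ratio is simple/exact: a value above 1 means the simple form leaves the debiased numerator too small by that factor, i.e., it shrinks early steps.

```python
import math


def exact_bias(beta1, beta2, t):
    if math.isclose(beta1, beta2):
        return 1.0 - beta2**t - (1.0 - beta2) * t * beta2**t
    return 1.0 - beta2**t - (1.0 - beta2) * beta1 * (beta2**t - beta1**t) / (beta2 - beta1)


def outer_only_bias(beta1, beta2, t):
    return 1.0 - beta2**t  # candidate "simple" form 1: ignores the inner EMA


def product_bias(beta1, beta2, t):
    return (1.0 - beta1**t) * (1.0 - beta2**t)  # candidate "simple" form 2


def shrink_ratio(simple_fn, beta1, beta2, t):
    """> 1 means the simple correction leaves the debiased numerator too small."""
    return simple_fn(beta1, beta2, t) / exact_bias(beta1, beta2, t)


for beta2 in (0.1, 0.95):
    for t in (1, 2, 20, 200):
        print(f"beta2={beta2} t={t:3d} "
              f"outer-only={shrink_ratio(outer_only_bias, 0.9, beta2, t):.3f} "
              f"product={shrink_ratio(product_bias, 0.9, beta2, t):.3f}")
```

Under either candidate, the discrepancy lasts longer at larger β2, which matches the qualitative trend described above.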

NLP: Exact debiasing notably improves tail PPL relative to the simple correction, especially in the low-resource setting; AdamN achieves better tail likelihoods without increasing the tail effective LR.

Effective LR Diagnostics: We compute per-row effective LR on the embedding matrix and average within head/mid/tail bins. AdamN attains lower tail PPL with smaller tail effective LR, implying higher update quality per unit step due to the nested numerator and exact debiasing, rather than aggressive scaling.

Weight Decay: Decoupled WD is cleaner and easier to control than an L2 penalty in the loss. For fast-start runs, consider a slightly lower WD early on to avoid over-regularizing while the optimizer is still finding good directions. In later phases, WD can be raised (e.g., to match AdamW defaults) to improve generalization and stability.

β2 scheduling: Start small (e.g., 0.1 or 0.3) and increase to 0.6–0.8 within the first 20–40% of epochs to preserve the fast launch and stabilize later epochs. β3 ∈ [0.99, 0.999] is robust.
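A β2 ramp can be sketched as a piecewise-linear schedule; the start/end values and ramp fraction below are illustrative picks from the ranges above. A closed-form nested debias factor assumes a constant β2, so the sketch also tracks the bias recursively by running the same two EMAs on a constant input of 1, which remains exact for the stationary-gradient bias when β2 changes over time. The tracker and both names are our own suggestion, not a component described in the paper.

```python
def beta2_schedule(epoch, total_epochs, start=0.1, end=0.7, ramp_frac=0.3):
    """Linear ramp of beta2 from `start` to `end` over the first `ramp_frac` of training."""
    ramp_epochs = max(1, round(ramp_frac * total_epochs))
    if epoch >= ramp_epochs:
        return end
    return start + (end - start) * epoch / ramp_epochs


class NestedBiasTracker:
    """Tracks the nested debias factor by running both EMAs on a constant input of 1."""

    def __init__(self, beta1: float):
        self.beta1 = beta1
        self.inner = 0.0
        self.outer = 0.0

    def update(self, beta2: float) -> float:
        """Advance one step with the current beta2 and return the bias factor."""
        self.inner = self.beta1 * self.inner + (1.0 - self.beta1)
        self.outer = beta2 * self.outer + (1.0 - beta2) * self.inner
        return self.outer
```

At each optimizer step, the outer EMA would be divided by `tracker.update(beta2_t)` instead of a constant-β2 formula.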

Warmup: AdamN uses no warmup; Adam/AdamW and SGD still benefit from a short warmup to avoid poor early scaling.

RQ4: What “makes” AdamN work? To tease apart the two ideas inside AdamN and show which one does the work, we ablated the nested EMA and the debiasing for AdamN across β2-start ∈ {0.1, 0.2, 0.3, 0.8, 0.9, 0.95} at lr = 1 × 10⁻³ and wd = 1 × 10⁻⁴ on CIFAR-100 (ResNet-18), as seen in Table 21. At low β2 (0.1–0.3), nested-on and nested-off deliver comparable test accuracy (74.3–75.2%), and exact vs. simple debiasing is a second-order effect (≤0.4%). However, at large β2 (≥0.8), nested-on collapses (72.8 → 69.2%), while nested-off remains at ~74.4–74.9%. Time-to-milestones mirrors this: high β2 with nested-on substantially slows progress toward the milestones and often fails to reach the higher ones. Overall, the best setting in this regime is nested-off with β2 = 0.3 (75.18%), suggesting that shorter memory is preferable at this LR/WD and that composing EMAs (nesting) is safe only if β2 is kept small.

Analysis of High-β2 Degradation:

Table 21 reveals that nested momentum (‘nested-on’) degrades significantly at β2 ≥ 0.8, with test accuracy dropping from 74.7% (β2 = 0.1) to 69.2% (β2 = 0.95). This degradation does not occur when nesting is disabled (‘nested-off’), which maintains ~74.4% accuracy across all β2 values.

We attribute this to compounded inertia. At β2 = 0.95:

Freshness = (1 − 0.9)(1 − 0.95) = 0.005, meaning only 0.5% of the current gradient enters the numerator directly.

The outer EMA alone adds an effective memory of ~20 steps (1/(1 − β2)) on top of the inner EMA, causing the update direction to lag significantly behind the loss landscape.

The bias correction factor amplifies early steps aggressively, but the amplified direction is outdated, leading to inefficient or destabilizing updates.

Conversely, at β2 = 0.1, freshness = 0.09 (9%) and the outer EMA’s effective memory is ~1.1 steps, so the numerator behaves much like AdamW’s single EMA while retaining the benefits of exact debiasing.
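The freshness and memory figures above follow from two one-line formulas; the helper names are ours. With β1 = 0.9, the recommended band β2 ∈ [0.1, 0.3] keeps freshness between 7% and 9%, while β2 = 0.95 drops it to 0.5%.

```python
def freshness(beta1: float, beta2: float) -> float:
    """Weight of the current gradient in the (undebiased) nested numerator."""
    return (1.0 - beta1) * (1.0 - beta2)


def outer_memory(beta2: float) -> float:
    """Characteristic time scale, in steps, of the outer EMA: 1 / (1 - beta2)."""
    return 1.0 / (1.0 - beta2)


for beta2 in (0.1, 0.2, 0.3, 0.8, 0.95):
    print(f"beta2={beta2:.2f}  freshness={freshness(0.9, beta2):.3f}  "
          f"outer memory={outer_memory(beta2):.1f} steps")
```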

Practitioner Guidance: We strongly recommend β2 ∈ [0.1, 0.3] for most tasks. Values above 0.5 should be avoided unless the task specifically benefits from very long numerator memory (e.g., extremely noisy gradients). If high β2 is desired for stability, consider disabling the nested structure (setting ) or using a β2 ramp that starts low and increases gradually.

8. Discussion, Limitations, and Reproducibility

Where does AdamN shine? In early-phase speed at a stable scale on noisy or ill-conditioned problems, in reduced reliance on bespoke warmups, and in compatibility with standard schedulers.

In NLP with class imbalance, AdamN consistently improved tail (rare-token) perplexity under two regimes: (i) full data with corrupted training tokens to amplify sparsity and (ii) low resource (10% training split). In both cases, AdamN achieved lower tail PPL with a smaller effective LR on rare embeddings, indicating more efficient updates (better likelihood gain per unit step) rather than simply larger steps. Head/mid tokens were comparable to AdamW.

Non-stationarity considerations: While our bias correction is derived under stationary gradient assumptions, real training involves highly non-stationary gradients. The factor is designed to correct zero-initialization bias rather than gradient drift; its effectiveness in practice (demonstrated across vision, NLP, and synthetic tasks) suggests robustness to non-stationarity. Future theoretical work could analyze convergence guarantees under specific non-stationary gradient models.

Energy and cost implications: AdamN’s faster time-to-milestones translates directly into reduced resource consumption. On CIFAR-100/ResNet-18 (Table 17), reaching 80% validation accuracy required 437 s for AdamN vs. 564 s for AdamW, a 22% reduction in wall-clock time. Assuming a typical GPU power draw of 250 W, this corresponds to approximately 8.8 Wh saved per training run. Across hyperparameter sweeps (54 configurations in our search), cumulative savings exceed 475 Wh, which is meaningful for large-scale experimentation. The elimination of warmup schedules also reduces pipeline complexity and the number of runs that fail because of misconfigured warmup periods.
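The energy estimate is simple arithmetic; the sketch reproduces it so that its assumptions are explicit: a constant 250 W draw, and the same per-run saving applied to all 54 sweep configurations. The function name is hypothetical.

```python
def energy_saved_wh(baseline_seconds: float, new_seconds: float, power_watts: float = 250.0) -> float:
    """Energy saved (Wh) by finishing earlier at a constant power draw."""
    return (baseline_seconds - new_seconds) * power_watts / 3600.0


per_run_wh = energy_saved_wh(564.0, 437.0)   # about 8.82 Wh
time_reduction = (564.0 - 437.0) / 564.0     # about 0.225, i.e. ~22%
sweep_wh = 54 * per_run_wh                   # about 476 Wh
print(f"{per_run_wh:.2f} Wh per run, {time_reduction:.1%} less time, {sweep_wh:.0f} Wh per sweep")
```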

Hyperparameter complexity: AdamN introduces β2 (nested momentum coefficient) as a new hyperparameter, while repurposing β3 for the second-moment EMA (equivalent to Adam’s β2). This increases the nominal hyperparameter count from two (Adam: β1 and β2) to three (AdamN: β1, β2, and β3).

We mitigate this complexity through strong defaults:

- -

β1 = 0.9 (unchanged from Adam);

- -

β2 = 0.1 (new; robust across tasks);

- -

β3 = 0.999 (identical to Adam’s β2).

In practice, users can adopt AdamN as a drop-in replacement for AdamW using these defaults, adjusting only the learning rate and weight decay as they would for any optimizer. The β2 parameter becomes relevant only for advanced tuning or when early-phase speed is critical.

Furthermore, AdamN’s warmup-free operation eliminates the need to tune warmup-related hyperparameters (warmup steps and warmup schedule type), which are often required for stable AdamW training. This trade-off may reduce net tuning complexity in practice.

AdamN’s limits: Sensitivity to β2. AdamN’s nested momentum is effective only within a recommended range (β2 ∈ [0.1, 0.3]). At high β2 (≥0.8), compounded smoothing creates excessive inertia, degrading both convergence speed and final accuracy (Table 19). This sensitivity is a meaningful limitation: users must either (a) stay within the safe range, (b) disable nesting for high-β2 configurations, or (c) employ a β2 schedule. We view this as an acceptable trade-off given the strong performance within the recommended range and AdamN’s robustness to other hyperparameters (LR and WD).

We find that a small β2 acts as a bridle: just enough smoothing to stabilize the direction, but not so much that it dulls responsiveness.

This is not a paradox of “smaller step = faster learning”; it is better directionality. In our notation (19), the effective gain on the fresh gradient scales as follows:

Thus, pushing β2 high (especially with the nested EMA) shrinks and delays the update: steps become “polite but late.” With small β2 (≈0.1–0.3), the numerator is still denoised enough to avoid jitter, yet the gain on the new signal remains high, giving crisp, well-aimed moves. This is consistent with the faster progress and matching or slightly higher ceilings, without overshoot, that we observe.

Reproducibility checklist: Report: (i) architecture, (ii) epochs, (iii) batch size, (iv) LR schedule, (v) , (vi) WD and its schedule, (vii) gradient clipping, (viii) AMP settings, (ix) hardware, and (x) seed and data augmentations. Save best-val and last-epoch checkpoints; log milestones (50/70/80/90% train/val) with wall-clock.

NLP-specific: tokenizer and casing rules; vocabulary construction split (train-only), BPTT length, corruption/noise settings (if any), and head/mid/tail binning rule (percentile cuts on training frequencies). Save best-val and last-epoch checkpoints; log milestones (e.g., PPL thresholds or accuracy levels) with wall-clock.

9. Conclusions

AdamN adds a principled momentum-of-momentum to the numerator and corrects its combined cold-start bias exactly. This yields a smooth long-memory direction paired with adaptive braking, enabling a fast, warmup-free launch at an Adam-like cost. On CIFAR-100 (main benchmark) and CIFAR-10 (pre-benchmark), AdamN reaches accuracy milestones sooner while matching final accuracy; MNIST/EMNIST show similar patterns.

AdamN’s advantage is not aggressive inertia or a huge instLR. Its nested numerator and bias correction produce more reliable, noise-robust estimates of the search direction’s scale, so it tends to take calibrated steps that avoid intermediate overshoot while still moving fast. The net effect is calibrated step sizes, not larger ones.

AdamN narrows the gap between standard first-order and costly second-order methods. By combining a nested, exactly debiased numerator (a long-memory, noise-reduced direction) with Adam-style per-coordinate scaling, AdamN achieves a richer diagonal preconditioning effect that improves stability and early progress at first-order cost. Unlike true second-order optimizers, AdamN does not estimate or invert the Hessian (and thus cannot capture cross-parameter curvature), but empirically it recovers a substantial portion of the practical benefits that make second-order methods attractive.

NLP result: In a word-level transformer on a Wikitext-2-style corpus (with a rare-token stress via training-only corruption and a 10% low-resource variant), AdamN reduced tail perplexity compared to AdamW while using smaller effective learning rates for rare tokens, consistent with more efficient rare-token learning.

Given its simplicity and compatibility (cosine schedules and decoupled WD), AdamN is a compelling default when early progress and stable scaling matter.

9.1. Practical Recommendations

Based on our experiments across vision (CIFAR-10/100, MNIST, EMNIST, and ViT-B/16), NLP (Wikitext-2 and Llama 3.1-8B), and synthetic benchmarks (Rosenbrock), we offer the guidance for practitioners summarized in Table 22.

Why β2 ∈ [0.1, 0.3]: With β1 = 0.9, values in this range maintain sufficient freshness (7–9% of the current gradient passes through directly) while providing meaningful smoothing. Higher values create compounded inertia that degrades both speed and final accuracy (see Section 7, Table 19).

When to adjust β2:

- -

Noisy gradients (small batches and high augmentation): Consider β2 = 0.2–0.3 for additional smoothing.

- -

Clean gradients (large batches and simple tasks): β2 = 0.1 is optimal.

- -

Never use β2 ≥ 0.5 with nested momentum enabled.

Drop-in usage: For most users, simply replace AdamW with AdamN using the defaults above and remove any warmup schedule. No other changes are required.

9.2. When to Use AdamN—Decision Guidelines

Our main recommendations for when to use AdamN are summarized in Table 23 and Table 24.

10. Patent Disclosure

The methods and systems described in this work have been disclosed in a provisional patent application filed with the United States Patent and Trademark Office (USPTO). The application, titled “Nested Double-Smoothing Optimizer For Training Neural Networks,” was filed by the authors’ institution under Application No. 63/942,313. The filing covers the core algorithmic framework, bias-correction mechanisms, and system-level implementations described in this paper.