Large-Scale Sparse Multimodal Multiobjective Optimization via Multi-Stage Search and RL-Assisted Environmental Selection

Abstract

1. Introduction

1. Dual-Strategy Genetic Operator Based on Improved Hybrid Encoding: To optimize the binary vector while maintaining the sparsity of the solutions, a dynamic redistribution strategy for binary vectors based on sparse sensing is proposed. For real vectors, a sparse fuzzy decision variables (SFDV) framework is tailored. It adjusts its parameters dynamically according to the sparsity of the solution space, enabling more precise determination of the search step sizes in the fuzzy evolutionary algorithm.

2. Affinity-Based Elite Strategy: This strategy updates the sets of real and binary vectors by calculating affinity using random selection and the Mahalanobis distance. By ensuring that real vectors are paired with the most compatible binary vectors, it increases the likelihood of generating superior offspring solutions that are closer to the Pareto-optimal set.

3. Adaptive Sparse Environment Selection Strategy Based on Multilayer Perceptron (MLP) Reinforcement Learning: This strategy introduces an MLP-based reinforcement learning mechanism into the environmental selection phase to dynamically model and update solution sparsity. By adaptively adjusting the selection pressure according to the learned sparsity distribution and gradient-descent direction information, it balances convergence and diversity, improves the preservation of sparse Pareto-optimal solutions, and accelerates convergence in large-scale multimodal multiobjective optimization problems.
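The hybrid encoding that the first contribution builds on can be illustrated with a minimal sketch. It assumes the representation commonly used in sparse evolutionary algorithms such as SparseEA, where a decision vector is the element-wise product of a binary vector and a real vector. The function names `decode` and `sparsity` are hypothetical and are not taken from MASR-MMEA.

```python
def decode(mask, dec):
    """Combine a binary vector and a real vector into one decision vector.

    Assumption: x[i] = mask[i] * dec[i], so mask[i] == 0 forces x[i] to zero.
    """
    return [m * d for m, d in zip(mask, dec)]


def sparsity(x):
    """Fraction of decision variables that are exactly zero."""
    return sum(1 for v in x if v == 0) / len(x)


# Illustrative values only; they do not come from the paper.
mask = [1, 0, 0, 1, 0]
dec = [0.7, 0.2, 0.9, 0.4, 0.5]
x = decode(mask, dec)
```

Under this assumed encoding, operators that act on `mask` control which variables are nonzero, while operators that act on `dec` only tune the values of the variables that remain active.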

2. Related Works

2.1. Large-Scale MMOPs

1. The problem contains at least one local Pareto-optimal solution.

2. The problem contains at least two equivalent global Pareto-optimal solutions that correspond to the same point on the Pareto front (PF).
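The second condition can be made concrete with a small toy example. The function below is a hypothetical bi-objective problem (it is not one of the SMMOP benchmarks) whose objectives depend only on the absolute value of the first variable, so two distinct decision vectors map to the same point in objective space.

```python
import math


def toy_mmop(x):
    """Hypothetical bi-objective function that depends only on |x[0]|.

    For x[0] in [-1, 1], the vectors (a,) and (-a,) are different points in
    decision space but yield identical objective values.
    """
    a = abs(x[0])
    return (a, 1.0 - math.sqrt(a))


xa = (0.25,)
xb = (-0.25,)
fa = toy_mmop(xa)
fb = toy_mmop(xb)
```

Here `xa` and `xb` are equivalent solutions in the sense of the condition above: an algorithm that keeps only one of them loses an alternative that a decision maker might prefer.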

2.2. Existing Sparse Large-Scale Multiobjective Algorithms

2.3. Existing Multimodal Multiobjective Algorithms

2.4. Motivation

3. The Proposed MASR-MMEA

3.1. Framework of MASR-MMEA

Algorithm 1: Framework of MASR-MMEA

Algorithm 2: Dual-Strategy Genetic Operator

Algorithm 3: Affinity-Based Elite Strategy

Algorithm 4: MLP Training

3.2. Dual-Strategy Genetic Operator

Algorithm 5: Dynamic Redistribution Strategy

Algorithm 6: Sparsity-Based Adaptive Fuzzy Evolutionary Algorithm

3.2.1. Dynamic Redistribution Strategy

3.2.2. Sparse Fuzzy Decision Variables Framework (SFDV)

3.3. Affinity-Based Elite Strategy

3.4. Adaptive Sparse Environment Selection Strategy Based on MLP

4. Experimental Studies on Benchmark Problems

4.1. Experimental Settings

1. All experiments in this paper were conducted on a PC with a 13th Gen Intel(R) Core(TM) i7-13700F CPU and 32 GB RAM, running the Windows 11 Home operating system (Version 25H2) and MATLAB R2023a. The code for all benchmark problems, evaluation metrics, and comparison algorithms was provided by the PlatEMO platform.

2. Benchmark Problems: In recent years, multimodal multiobjective optimization problems (MMOPs) have received increasing attention in algorithm testing. However, most existing test problems have several limitations: they are often not scalable, lack sparse Pareto-optimal solutions, and have low-dimensional decision spaces that fail to reflect the complexity of real-world problems. As a result, few test problems combine large-scale decision variables, multimodality, and sparsity. The SMMOP1-SMMOP8 test suite, introduced together with MP-MMEA, addresses this gap. In this study, we use the SMMOP1-SMMOP8 test suite to assess the effectiveness of the proposed algorithm in solving large-scale MMOPs with sparse solutions.

3. Parameter Settings: The population sizes for the SMMOP1-SMMOP8 test problems were set according to the number of equivalent Pareto sets (PSs), np. Specifically, the population size N was set to 400, 600, and 800 for np = 4, 6, and 8, respectively, to ensure sufficient solutions for each equivalent Pareto-optimal set. To ensure a fair comparison, the maximum number of evaluations for all multiobjective evolutionary algorithms (MOEAs) was set to 250,000, 400,000, and 500,000 for problems with 100, 200, and 500 decision variables, respectively. For SMMOP1-SMMOP8, the merge and split operations of MASR-MMEA were performed every 10 generations. The initial ACC values were set to 0.4, 0.7, and 0.8 for np = 4, 6, and 8, respectively. The compared MOEAs were configured with the parameters and genetic operators suggested in the PlatEMO platform or in the original papers. In MO_Ring_PSO_SCD, particle swarm optimization was used to generate offspring, with both acceleration coefficients set to 0.5 and the inertia weight W set to 0.1. In DN-NSGA-II, the crowding distance factor was set to half of the population size to maintain an even distribution of solutions and prevent clustering. For SparseEA and MSKEA, simulated binary crossover [52] and polynomial mutation [53] were used to generate offspring, with crossover and mutation probabilities set to 1 and , respectively, and distribution indices set to 20. The merge and split operations of MP-MMEA were conducted every 50 generations, while HHC-MMEA applied hybrid hierarchical clustering every 10 generations.
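The settings listed above can be collected into a small lookup, sketched below. The values are the ones stated in the parameter settings; the first Pareto-set count (4) is read from the np column of the Appendix A tables and should be treated as an assumption. The names `RunConfig` and `make_config` are hypothetical, and the generation estimate assumes one function evaluation per individual per generation, which the text does not state.

```python
from dataclasses import dataclass

# Values taken from the parameter settings; np = 4 is inferred from Appendix A.
POP_SIZE_BY_NP = {4: 400, 6: 600, 8: 800}
MAX_EVALS_BY_D = {100: 250_000, 200: 400_000, 500: 500_000}
INITIAL_ACC_BY_NP = {4: 0.4, 6: 0.7, 8: 0.8}
MERGE_SPLIT_INTERVAL = 10  # generations between merge/split operations


@dataclass(frozen=True)
class RunConfig:
    n_p: int            # number of equivalent Pareto sets
    n_vars: int         # number of decision variables D
    pop_size: int       # population size N
    max_evals: int      # maximum number of function evaluations
    initial_acc: float  # initial ACC value

    @property
    def approx_generations(self) -> int:
        # Assumption: each generation evaluates pop_size offspring.
        return self.max_evals // self.pop_size


def make_config(n_p: int, n_vars: int) -> RunConfig:
    """Build the configuration for one (np, D) test instance."""
    return RunConfig(
        n_p=n_p,
        n_vars=n_vars,
        pop_size=POP_SIZE_BY_NP[n_p],
        max_evals=MAX_EVALS_BY_D[n_vars],
        initial_acc=INITIAL_ACC_BY_NP[n_p],
    )


cfg = make_config(4, 100)
```

Under the stated assumption, `cfg.approx_generations` gives a rough generation budget, which helps relate the 10-generation merge and split interval to the total run length.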

4.2. Benchmark Algorithms

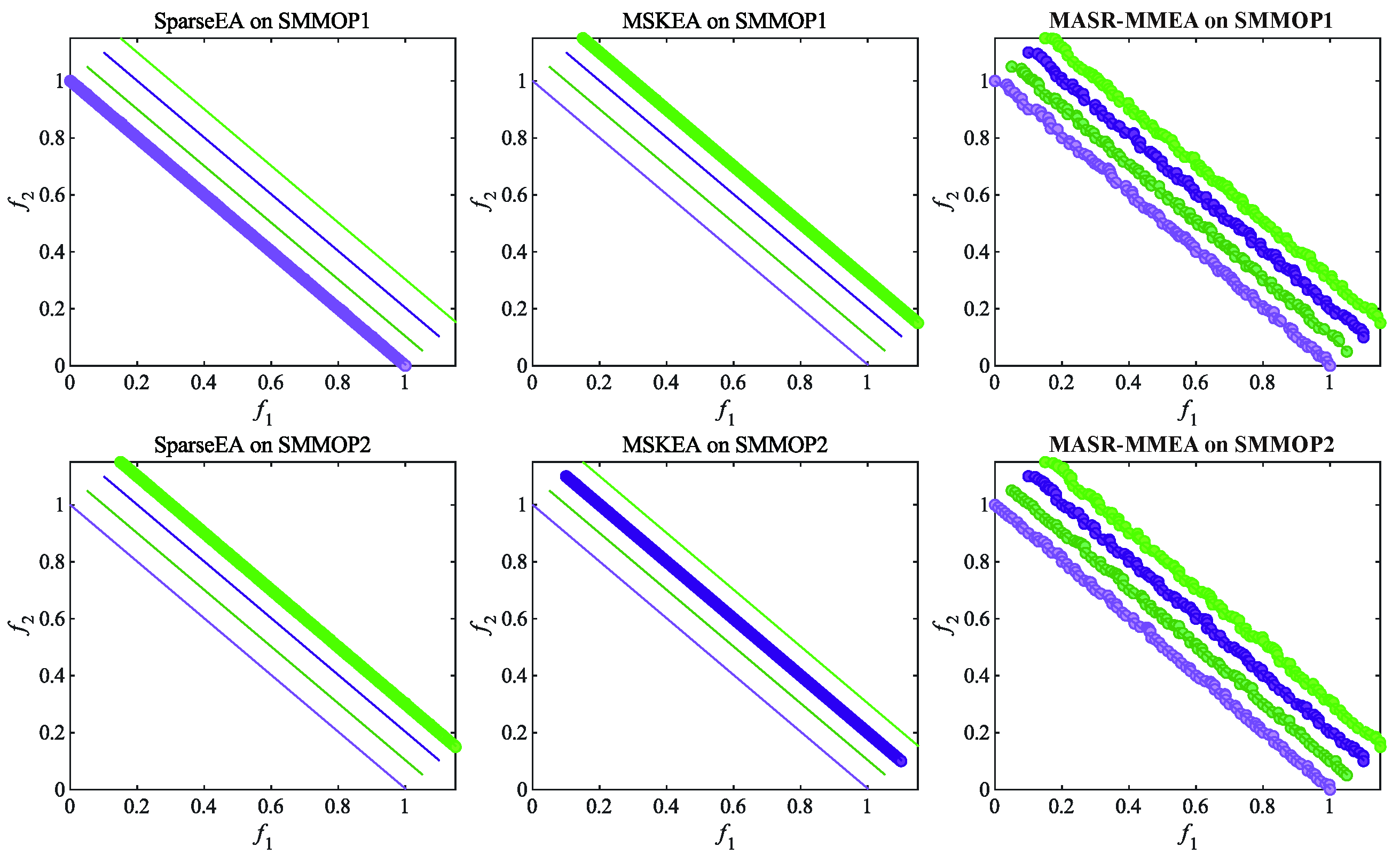

4.3. Comparison Experiments

4.4. Ablation Study

4.4.1. Effectiveness of the Improved Dual-Strategy Genetic Operator Based on Hybrid Encoding

4.4.2. Effectiveness of the Elite Vector Strategy Based on Mahalanobis Distance Metric

4.4.3. Effectiveness of the Adaptive Sparse Environment Selection Strategy Based on MLP

4.5. Experimental Studies on Real-World Applications

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| MOPs | Multiobjective Optimization Problems |

| MMOPs | Multimodal Multiobjective Optimization Problems |

| RL | Reinforcement Learning |

| LSMMOPs | Large-Scale Multimodal Multiobjective Optimization Problems |

| MOEAs | Multiobjective Evolutionary Algorithms |

| MMEAs | Multimodal Multiobjective Evolutionary Algorithms |

| PF | Pareto Front |

| PS | Pareto Set |

| DMs | Decision Makers |

| MLP | Multilayer Perceptron |

| SBX | Simulated Binary Crossover |

| PM | Polynomial Mutation |

| GDV | Gradient-Descent-like Direction Vector |

| SFDV | Sparse Fuzzy Decision Variables |

| IGD | Inverted Generational Distance |

| IGDX | Inverted Generational Distance in Decision Space |

| HV | Hypervolume |

| CN | Critical Node Detection |

| IS | Instance Selection |

| CD | Community Detection |

Appendix A

| Problem | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | DNNSGAII | MASR-MMEA |

|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 3.4262e-1 (2.28e-1) - | 3.0936e-1 (2.88e-1) - | 5.8388e+0 (6.63e-2) - | 3.3931e+0 (1.49e-2) - | 4.2845e-2 (1.16e-2) |

| SMMOP2 | 100 | 3.8698e-1 (6.20e-2) - | 2.1168e-1 (2.15e-1) - | 7.3142e+0 (2.09e-1) - | 6.3899e+0 (6.35e-1) - | 3.6793e-2 (2.83e-2) |

| SMMOP3 | 100 | 6.9891e-1 (4.21e-1) - | 3.0060e-1 (3.09e-1) - | 7.1963e+0 (1.68e-1) - | 6.4346e+0 (7.43e-1) - | 1.3464e-1 (1.41e-1) |

| SMMOP4 | 100 | 3.1727e-1 (1.01e-1) - | 2.8843e-1 (2.27e-1) - | 6.3233e+0 (8.93e-2) - | 3.3693e+0 (1.61e-2) - | 7.5132e-2 (4.85e-2) |

| SMMOP5 | 100 | 3.2076e-1 (8.17e-2) - | 2.3373e-1 (1.36e-1) - | 6.0677e+0 (8.64e-2) - | 3.5518e+0 (4.39e-2) - | 8.0524e-2 (5.11e-2) |

| SMMOP6 | 100 | 5.4760e-1 (4.78e-1) - | 3.5769e-1 (1.71e-1) - | 6.2384e+0 (1.11e-1) - | 3.4437e+0 (1.11e-2) - | 1.5823e-1 (1.46e-1) |

| SMMOP7 | 100 | 6.4131e-1 (5.10e-1) - | 3.8089e-1 (4.02e-1) - | 5.6062e+0 (7.23e-2) - | 3.5394e+0 (3.31e-2) - | 2.1894e-1 (2.58e-1) |

| SMMOP8 | 100 | 1.1029e+0 (6.06e-1) - | 5.5143e-1 (3.97e-1) - | 5.8528e+0 (8.10e-2) - | 3.5313e+0 (2.80e-2) - | 3.7537e-1 (3.41e-1) |

| SMMOP1 | 200 | 7.7396e-1 (1.07e-1) - | 9.2270e-1 (2.52e-1) - | 9.2113e+0 (7.86e-2) - | 4.8336e+0 (2.19e-2) - | 1.2676e-1 (1.62e-1) |

| SMMOP2 | 200 | 8.4577e-1 (1.79e-1) - | 9.2762e-1 (5.19e-1) - | 1.0940e+1 (1.92e-1) - | 1.1671e+1 (7.17e-1) - | 6.7412e-2 (7.19e-2) |

| SMMOP3 | 200 | 1.4738e+0 (4.87e-1) - | 1.1286e+0 (5.13e-1) - | 1.0816e+1 (1.98e-1) - | 1.2181e+1 (7.71e-1) - | 6.5747e-1 (3.40e-1) |

| SMMOP4 | 200 | 9.1303e-1 (1.78e-1) - | 9.5634e-1 (3.72e-1) - | 9.7588e+0 (9.21e-2) - | 4.8240e+0 (2.14e-2) - | 1.7992e-1 (1.20e-1) |

| SMMOP5 | 200 | 8.8605e-1 (1.98e-1) - | 9.4876e-1 (2.38e-1) - | 9.4533e+0 (1.15e-1) - | 5.2524e+0 (5.40e-2) - | 2.2129e-1 (1.73e-1) |

| SMMOP6 | 200 | 1.3005e+0 (4.81e-1) - | 1.3137e+0 (2.95e-1) - | 9.7308e+0 (1.29e-1) - | 4.8713e+0 (6.49e-3) - | 6.4268e-1 (2.39e-1) |

| SMMOP7 | 200 | 2.1195e+0 (7.68e-1) - | 1.0386e+0 (2.47e-1) - | 8.9003e+0 (8.21e-2) - | 5.2633e+0 (3.99e-2) - | 6.9430e-1 (3.60e-1) |

| SMMOP8 | 200 | 2.0776e+0 (6.18e-1) - | 1.7249e+0 (2.32e-1) - | 9.1180e+0 (1.03e-1) - | 5.2816e+0 (3.75e-2) - | 9.8649e-1 (4.67e-1) |

| SMMOP1 | 500 | 2.9461e+0 (4.20e-1) - | 4.5552e+0 (4.20e-1) - | 1.6310e+1 (1.74e-1) - | 7.7364e+0 (5.11e-2) - | 6.9194e-1 (2.22e-1) |

| SMMOP2 | 500 | 2.8773e+0 (5.30e-1) - | 3.8229e+0 (1.17e+0) - | 1.8101e+1 (3.40e-1) - | 2.3144e+1 (5.87e-1) - | 7.6296e-1 (4.71e-1) |

| SMMOP3 | 500 | 3.7201e+0 (9.39e-1) - | 4.6911e+0 (8.10e-1) - | 1.7917e+1 (4.63e-1) - | 2.3862e+1 (6.13e-1) - | 2.0437e+0 (2.94e-1) |

| SMMOP4 | 500 | 3.1952e+0 (2.10e-1) - | 4.5826e+0 (3.72e-1) - | 1.7150e+1 (1.83e-1) - | 7.8032e+0 (7.87e-2) - | 1.1166e+0 (5.99e-1) |

| SMMOP5 | 500 | 3.2697e+0 (1.68e-1) - | 4.7510e+0 (4.57e-1) - | 1.6523e+1 (2.69e-1) - | 9.1196e+0 (1.09e-1) - | 1.1432e+0 (5.25e-1) |

| SMMOP6 | 500 | 3.7158e+0 (8.42e-1) - | 5.1628e+0 (2.23e-1) - | 1.7065e+1 (3.29e-1) - | 7.8978e+0 (7.20e-2) - | 2.2132e+0 (4.33e-1) |

| SMMOP7 | 500 | 4.5286e+0 (1.16e+0) - | 4.3086e+0 (3.64e-1) - | 1.6076e+1 (1.89e-1) - | 8.9965e+0 (6.87e-2) - | 2.1422e+0 (5.08e-1) |

| SMMOP8 | 500 | 4.5375e+0 (6.88e-1) - | 5.5221e+0 (5.57e-1) - | 1.6087e+1 (1.65e-1) - | 9.0192e+0 (7.95e-2) - | 2.7611e+0 (5.83e-1) |

| +/−/= | | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | D | SparseEA | MSKEA | LMMODE | CMMOGA_DLF | MASR-MMEA |

|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 3.3974e+0 (3.17e-2) - | 3.4412e+0 (8.45e-3) - | 3.5751e+0 (1.10e-1) - | 2.7494e+0 (3.59e-1) - | 4.2845e-2 (1.16e-2) |

| SMMOP2 | 100 | 3.3842e+0 (4.30e-2) - | 3.4416e+0 (9.20e-3) - | 1.1859e+1 (6.61e-1) - | 4.4228e+0 (8.37e-1) - | 3.6793e-2 (2.83e-2) |

| SMMOP3 | 100 | 3.4510e+0 (3.89e-2) - | 3.4675e+0 (2.71e-3) - | 1.1849e+1 (9.03e-1) - | 4.8078e+0 (7.94e-1) - | 1.3464e-1 (1.41e-1) |

| SMMOP4 | 100 | 3.3744e+0 (3.44e-2) - | 3.4361e+0 (1.11e-2) - | 3.6438e+0 (1.19e-1) - | 3.0826e+0 (4.19e-1) - | 7.5132e-2 (4.85e-2) |

| SMMOP5 | 100 | 3.3815e+0 (3.99e-2) - | 3.4315e+0 (1.22e-2) - | 3.6675e+0 (1.65e-1) - | 2.9943e+0 (2.75e-1) - | 8.0524e-2 (5.11e-2) |

| SMMOP6 | 100 | 3.4306e+0 (1.25e-2) - | 3.4662e+0 (3.22e-3) - | 3.7680e+0 (1.09e-1) - | 3.1536e+0 (4.08e-1) - | 1.5823e-1 (1.46e-1) |

| SMMOP7 | 100 | 3.4341e+0 (7.99e-3) - | 3.4689e+0 (1.71e-3) - | 3.8143e+0 (1.91e-1) - | 3.0328e+0 (2.50e-1) - | 2.1894e-1 (2.58e-1) |

| SMMOP8 | 100 | 3.4458e+0 (3.74e-2) - | 3.4704e+0 (9.59e-4) - | 3.7400e+0 (1.21e-1) - | 3.2197e+0 (2.17e-1) - | 3.7537e-1 (3.41e-1) |

| SMMOP1 | 200 | 4.8637e+0 (4.34e-2) - | 4.8829e+0 (6.55e-3) - | 5.6313e+0 (1.38e-1) - | 4.9087e+0 (2.94e-1) - | 1.2676e-1 (1.62e-1) |

| SMMOP2 | 200 | 4.8673e+0 (7.17e-2) - | 4.8825e+0 (8.42e-3) - | 1.5126e+1 (1.01e+0) - | 8.2305e+0 (1.27e+0) - | 6.7412e-2 (7.19e-2) |

| SMMOP3 | 200 | 4.9325e+0 (9.30e-2) - | 4.9136e+0 (3.55e-2) - | 1.5801e+1 (1.08e+0) - | 8.6252e+0 (1.10e+0) - | 6.5747e-1 (3.40e-1) |

| SMMOP4 | 200 | 4.8088e+0 (3.96e-2) - | 4.8800e+0 (6.65e-3) - | 5.7135e+0 (1.74e-1) - | 5.8068e+0 (6.04e-1) - | 1.7992e-1 (1.20e-1) |

| SMMOP5 | 200 | 4.8244e+0 (5.15e-2) - | 4.8775e+0 (1.00e-2) - | 5.6258e+0 (1.38e-1) - | 5.0778e+0 (5.05e-1) - | 2.2129e-1 (1.73e-1) |

| SMMOP6 | 200 | 4.8883e+0 (6.08e-2) - | 4.8969e+0 (9.60e-3) - | 6.0442e+0 (1.57e-1) - | 5.2675e+0 (5.92e-1) - | 6.4268e-1 (2.39e-1) |

| SMMOP7 | 200 | 4.8862e+0 (3.22e-2) - | 4.9204e+0 (4.30e-2) - | 5.9194e+0 (1.43e-1) - | 5.4506e+0 (4.62e-1) - | 6.9430e-1 (3.60e-1) |

| SMMOP8 | 200 | 4.9187e+0 (6.39e-2) - | 4.9121e+0 (2.51e-2) - | 5.6880e+0 (1.86e-1) - | 5.5831e+0 (2.68e-1) - | 9.8649e-1 (4.67e-1) |

| SMMOP1 | 500 | 7.8736e+0 (9.11e-2) - | 7.8164e+0 (8.47e-2) - | 9.9065e+0 (1.52e-1) - | 9.6053e+0 (5.47e-1) - | 6.9194e-1 (2.22e-1) |

| SMMOP2 | 500 | 7.8818e+0 (8.43e-2) - | 7.7813e+0 (6.31e-2) - | 2.1269e+1 (1.22e+0) - | 1.5700e+1 (1.24e+0) - | 7.6296e-1 (4.71e-1) |

| SMMOP3 | 500 | 8.0429e+0 (1.49e-1) - | 7.8448e+0 (7.75e-2) - | 2.0781e+1 (1.24e+0) - | 1.6089e+1 (1.45e+0) - | 2.0437e+0 (2.94e-1) |

| SMMOP4 | 500 | 7.8387e+0 (7.12e-2) - | 7.7967e+0 (8.34e-2) - | 1.0370e+1 (2.64e-1) - | 1.0310e+1 (9.73e-1) - | 1.1166e+0 (5.99e-1) |

| SMMOP5 | 500 | 7.8049e+0 (7.36e-2) - | 7.8057e+0 (8.48e-2) - | 1.0089e+1 (1.66e-1) - | 9.6278e+0 (2.31e-1) - | 1.1432e+0 (5.25e-1) |

| SMMOP6 | 500 | 7.9518e+0 (1.16e-1) - | 7.8973e+0 (7.17e-2) - | 1.0958e+1 (2.02e-1) - | 1.0025e+1 (9.75e-1) - | 2.2132e+0 (4.33e-1) |

| SMMOP7 | 500 | 8.0283e+0 (1.19e-1) - | 7.9004e+0 (8.35e-2) - | 1.0341e+1 (2.08e-1) - | 9.9188e+0 (2.11e-1) - | 2.1422e+0 (5.08e-1) |

| SMMOP8 | 500 | 8.0035e+0 (8.74e-2) - | 7.8974e+0 (8.66e-2) - | 1.0228e+1 (1.73e-1) - | 1.0024e+1 (2.20e-1) - | 2.7611e+0 (5.83e-1) |

| +/−/= | | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | D | MPMMEA | HHCMMEA | DNNSGAII | MO_Ring_PSO_SCD | MASR-MMEA |

|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 9.7345e-1 (4.48e-1) - | 2.7030e-1 (1.37e-1) - | 3.7502e+0 (1.32e-2) - | 5.9342e+0 (6.46e-2) - | 1.9128e-1 (1.48e-1) |

| SMMOP2 | 100 | 1.0859e+0 (3.11e-1) - | 2.1617e-1 (1.04e-1) = | 5.2651e+0 (5.75e-1) - | 7.5018e+0 (1.81e-1) - | 2.0909e-1 (1.74e-1) |

| SMMOP3 | 100 | 1.7703e+0 (5.04e-1) - | 3.6931e-1 (1.73e-1) + | 5.5116e+0 (6.12e-1) - | 7.3038e+0 (2.21e-1) - | 6.7422e-1 (4.27e-1) |

| SMMOP4 | 100 | 7.1385e-1 (2.84e-1) - | 2.9138e-1 (1.52e-1) - | 3.7206e+0 (1.77e-2) - | 6.3386e+0 (8.97e-2) - | 2.4262e-1 (2.08e-1) |

| SMMOP5 | 100 | 7.0377e-1 (2.44e-1) - | 3.5367e-1 (1.37e-1) - | 3.8341e+0 (3.44e-2) - | 6.0951e+0 (8.99e-2) - | 2.0381e-1 (1.46e-1) |

| SMMOP6 | 100 | 1.0922e+0 (4.66e-1) - | 4.9778e-1 (1.89e-1) = | 3.8124e+0 (1.07e-2) - | 6.3841e+0 (7.58e-2) - | 7.1485e-1 (4.41e-1) |

| SMMOP7 | 100 | 1.9863e+0 (5.51e-1) - | 4.0807e-1 (1.94e-1) + | 3.9332e+0 (3.10e-2) - | 5.8067e+0 (7.46e-2) - | 1.1490e+0 (4.99e-1) |

| SMMOP8 | 100 | 2.0794e+0 (3.88e-1) - | 8.2172e-1 (2.18e-1) + | 3.9447e+0 (2.48e-2) - | 5.9415e+0 (8.22e-2) - | 1.1518e+0 (3.06e-1) |

| SMMOP1 | 200 | 1.7560e+0 (5.83e-1) - | 1.0882e+0 (1.78e-1) - | 9.2870e+0 (6.90e-2) - | 5.3382e+0 (1.73e-2) - | 4.6280e-1 (2.59e-1) |

| SMMOP2 | 200 | 1.7899e+0 (5.25e-1) - | 1.1839e+0 (4.60e-1) - | 1.0917e+1 (2.10e-1) - | 9.9155e+0 (7.59e-1) - | 5.0743e-1 (2.99e-1) |

| SMMOP3 | 200 | 2.9591e+0 (5.50e-1) - | 1.5321e+0 (3.74e-1) = | 1.0860e+1 (2.69e-1) - | 1.0231e+1 (7.72e-1) - | 1.3753e+0 (5.46e-1) |

| SMMOP4 | 200 | 1.2341e+0 (3.67e-1) - | 1.2724e+0 (2.87e-1) - | 9.8350e+0 (6.84e-2) - | 5.3218e+0 (2.23e-2) - | 4.2234e-1 (2.02e-1) |

| SMMOP5 | 200 | 1.5868e+0 (5.55e-1) - | 1.2653e+0 (2.12e-1) - | 9.5371e+0 (5.90e-2) - | 5.6487e+0 (4.92e-2) - | 4.0819e-1 (2.58e-1) |

| SMMOP6 | 200 | 2.2113e+0 (5.01e-1) - | 1.7450e+0 (1.86e-1) - | 9.8589e+0 (1.04e-1) - | 5.3928e+0 (8.82e-3) - | 1.2986e+0 (4.91e-1) |

| SMMOP7 | 200 | 3.2023e+0 (6.43e-1) - | 1.3385e+0 (3.06e-1) + | 9.1777e+0 (1.04e-1) - | 5.7180e+0 (3.10e-2) - | 1.8395e+0 (5.50e-1) |

| SMMOP8 | 200 | 3.3584e+0 (5.88e-1) - | 2.2428e+0 (2.62e-1) - | 9.2691e+0 (7.96e-2) - | 5.7159e+0 (3.68e-2) - | 1.7540e+0 (3.97e-1) |

| SMMOP1 | 500 | 4.3189e+0 (6.26e-1) - | 5.2167e+0 (4.29e-1) - | 1.6374e+1 (2.38e-1) - | 8.4914e+0 (6.36e-2) - | 2.014e+0 (7.90e-1) |

| SMMOP2 | 500 | 4.5447e+0 (7.82e-1) - | 4.4129e+0 (4.37e-1) - | 1.8031e+1 (4.71e-1) - | 2.0719e+1 (8.66e-1) - | 2.1136e+0 (5.80e-1) |

| SMMOP3 | 500 | 5.5325e+0 (7.37e-1) - | 5.0509e+0 (5.51e-1) - | 1.8150e+1 (3.50e-1) - | 2.1238e+1 (8.05e-1) - | 3.5194e+0 (7.87e-1) |

| SMMOP4 | 500 | 4.1007e+0 (5.47e-1) - | 5.3318e+0 (2.57e-1) - | 1.7139e+1 (2.56e-1) - | 8.5364e+0 (5.60e-2) - | 2.0131e+0 (6.07e-1) |

| SMMOP5 | 500 | 4.2686e+0 (5.88e-1) - | 5.3089e+0 (2.22e-1) - | 1.6693e+1 (2.90e-1) - | 9.4947e+0 (1.06e-1) - | 2.0532e+0 (6.00e-1) |

| SMMOP6 | 500 | 5.2012e+0 (7.38e-1) - | 5.4838e+0 (1.19e-1) - | 1.7121e+1 (2.77e-1) - | 8.5726e+0 (2.40e-2) - | 3.2368e+0 (4.60e-1) |

| SMMOP7 | 500 | 6.0529e+0 (6.76e-1) - | 4.9813e+0 (3.42e-1) - | 1.6272e+1 (1.93e-1) - | 9.5697e+0 (7.23e-2) - | 3.7809e+0 (7.22e-1) |

| SMMOP8 | 500 | 6.0207e+0 (5.43e-1) - | 5.7797e+0 (2.65e-1) - | 1.6232e+1 (2.32e-1) - | 9.5594e+0 (7.10e-2) - | 4.0224e+0 (6.43e-1) |

| +/−/= | | 0/24/0 | 4/17/3 | 0/24/0 | 0/24/0 | |
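The summary row of each table can be reproduced by counting the markers that follow each cell. The sketch below does this for rows written in the same pipe-delimited layout as the tables above. It assumes the usual convention in which "+", "-", and "=" mark a competitor as significantly better than, significantly worse than, or statistically similar to MASR-MMEA; this section does not state the convention or the statistical test. The helper name `tally` is hypothetical.

```python
import re
from collections import Counter

# A marker is the last symbol in a cell, after the parenthesised deviation.
MARKER = re.compile(r"\)\s*([+\-−=])\s*$")

# The first three rows of the table above (D = 100).
ROWS = [
    "| SMMOP1 | 100 | 9.7345e-1 (4.48e-1) - | 2.7030e-1 (1.37e-1) - | 3.7502e+0 (1.32e-2) - | 5.9342e+0 (6.46e-2) - | 1.9128e-1 (1.48e-1) |",
    "| SMMOP2 | 100 | 1.0859e+0 (3.11e-1) - | 2.1617e-1 (1.04e-1) = | 5.2651e+0 (5.75e-1) - | 7.5018e+0 (1.81e-1) - | 2.0909e-1 (1.74e-1) |",
    "| SMMOP3 | 100 | 1.7703e+0 (5.04e-1) - | 3.6931e-1 (1.73e-1) + | 5.5116e+0 (6.12e-1) - | 7.3038e+0 (2.21e-1) - | 6.7422e-1 (4.27e-1) |",
]


def tally(rows, column):
    """Count the +, -, = markers in one column and format them as '+/-/='."""
    counts = Counter()
    for row in rows:
        cells = [c.strip() for c in row.strip().strip("|").split("|")]
        match = MARKER.search(cells[column])
        if match:
            # Normalise the typographic minus sign to an ASCII hyphen.
            counts[match.group(1).replace("−", "-")] += 1
    return f"{counts['+']}/{counts['-']}/{counts['=']}"


hhc_summary = tally(ROWS, 3)    # HHCMMEA column
mp_summary = tally(ROWS, 2)     # MPMMEA column
```

Applied to all 24 rows of a table, the same function gives a quick consistency check between the per-row markers and the reported summary row.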

| Problem | D | SparseEA | MSKEA | LMMODE | CMMOGA_DLF | MASR-MMEA |

|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 3.7372e+0 (4.82e-2) - | 3.8017e+0 (1.18e-2) - | 3.6026e+0 (1.39e-1) - | 2.8100e+0 (3.95e-1) - | 1.9128e-1 (1.48e-1) |

| SMMOP2 | 100 | 3.7022e+0 (5.21e-2) - | 3.8027e+0 (1.36e-2) - | 1.1543e+1 (6.58e-1) - | 3.9713e+0 (5.07e-1) - | 2.0909e-1 (1.74e-1) |

| SMMOP3 | 100 | 3.8009e+0 (2.48e-2) - | 3.8362e+0 (1.91e-3) - | 1.1837e+1 (6.39e-1) - | 4.1925e+0 (4.90e-1) - | 6.7422e-1 (4.27e-1) |

| SMMOP4 | 100 | 3.7223e+0 (4.60e-2) - | 3.7989e+0 (9.73e-3) - | 3.8137e+0 (1.88e-1) - | 3.0370e+0 (3.17e-1) - | 2.4262e-1 (2.08e-1) |

| SMMOP5 | 100 | 3.7066e+0 (4.67e-2) - | 3.7958e+0 (1.25e-2) - | 3.8193e+0 (1.74e-1) - | 3.7933e+0 (1.45e-1) - | 2.0381e-1 (1.46e-1) |

| SMMOP6 | 100 | 3.8035e+0 (1.95e-2) - | 3.8336e+0 (2.91e-3) - | 4.1158e+0 (1.01e-1) - | 3.0806e+0 (3.71e-1) - | 7.1485e-1 (4.41e-1) |

| SMMOP7 | 100 | 3.8045e+0 (7.63e-3) - | 3.8382e+0 (1.42e-3) - | 4.0134e+0 (1.54e-1) - | 3.8569e+0 (1.40e-1) - | 1.1490e+0 (4.99e-1) |

| SMMOP8 | 100 | 3.8020e+0 (1.74e-2) - | 3.8393e+0 (1.28e-3) - | 3.7050e+0 (2.26e-1) - | 3.8124e+0 (1.45e-1) - | 1.1518e+0 (3.06e-1) |

| SMMOP1 | 200 | 5.3079e+0 (4.71e-2) - | 5.3994e+0 (9.04e-3) - | 5.5189e+0 (1.15e-1) - | 5.0945e+0 (1.74e-1) - | 4.6280e-1 (2.59e-1) |

| SMMOP2 | 200 | 5.3513e+0 (7.76e-2) - | 5.4024e+0 (6.75e-3) - | 1.5441e+1 (8.50e-1) - | 7.2784e+0 (8.58e-1) - | 5.0743e-1 (2.99e-1) |

| SMMOP3 | 200 | 5.4056e+0 (6.70e-2) - | 5.4269e+0 (2.08e-3) - | 1.5590e+1 (9.41e-1) - | 7.6269e+0 (8.09e-1) - | 1.3753e+0 (5.46e-1) |

| SMMOP4 | 200 | 5.3234e+0 (6.22e-2) - | 5.3912e+0 (1.27e-2) - | 5.8900e+0 (1.55e-1) - | 5.4175e+0 (4.66e-1) - | 4.2234e-1 (2.02e-1) |

| SMMOP5 | 200 | 5.3343e+0 (5.05e-2) - | 5.3996e+0 (1.99e-2) - | 5.6803e+0 (1.40e-1) - | 5.8077e+0 (1.28e-1) - | 4.0819e-1 (2.58e-1) |

| SMMOP6 | 200 | 5.3797e+0 (2.15e-2) - | 5.4239e+0 (2.67e-3) - | 6.2864e+0 (1.22e-1) - | 5.2485e+0 (5.05e-1) - | 1.2986e+0 (4.91e-1) |

| SMMOP7 | 200 | 5.3907e+0 (1.49e-2) - | 5.4329e+0 (1.64e-2) - | 5.9643e+0 (1.36e-1) - | 5.8498e+0 (1.67e-1) - | 1.8395e+0 (5.50e-1) |

| SMMOP8 | 200 | 5.3978e+0 (4.74e-2) - | 5.4297e+0 (1.10e-3) - | 5.5922e+0 (1.78e-1) - | 5.8187e+0 (1.90e-1) - | 1.7540e+0 (3.97e-1) |

| SMMOP1 | 500 | 8.5727e+0 (7.76e-2) - | 8.5758e+0 (2.68e-2) - | 9.5916e+0 (1.59e-1) - | 8.8795e+0 (4.89e-1) - | 2.014e+0 (7.90e-1) |

| SMMOP2 | 500 | 8.5969e+0 (1.12e-1) - | 8.5727e+0 (3.10e-2) - | 2.1420e+1 (1.16e+0) - | 1.2676e+1 (8.66e-1) - | 2.1136e+0 (5.80e-1) |

| SMMOP3 | 500 | 8.7225e+0 (1.25e-1) - | 8.6100e+0 (3.90e-2) - | 2.1546e+1 (1.11e+0) - | 1.2862e+1 (7.11e-1) - | 3.5194e+0 (7.87e-1) |

| SMMOP4 | 500 | 8.5709e+0 (9.21e-2) - | 8.5853e+0 (3.78e-2) - | 1.0421e+1 (2.33e-1) - | 9.1403e+0 (6.91e-1) - | 2.0131e+0 (6.07e-1) |

| SMMOP5 | 500 | 8.5806e+0 (9.14e-2) - | 8.5807e+0 (3.69e-2) - | 9.8896e+0 (1.13e-1) - | 9.3563e+0 (2.31e-1) - | 2.0532e+0 (6.00e-1) |

| SMMOP6 | 500 | 8.6528e+0 (1.15e-1) - | 8.6243e+0 (4.08e-2) - | 1.0924e+1 (2.31e-1) - | 9.4130e+0 (9.06e-1) - | 3.2368e+0 (4.60e-1) |

| SMMOP7 | 500 | 8.7187e+0 (1.19e-1) - | 8.6404e+0 (4.10e-2) - | 1.0113e+1 (1.64e-1) - | 9.2413e+0 (2.55e-1) - | 3.7809e+0 (7.22e-1) |

| SMMOP8 | 500 | 8.6553e+0 (1.04e-1) - | 8.6224e+0 (4.40e-2) - | 9.8571e+0 (1.57e-1) - | 9.5258e+0 (3.24e-1) - | 4.0224e+0 (6.43e-1) |

| +/−/= | | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | np | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | DNNSGAII | MASR-MMEA |

|---|---|---|---|---|---|---|---|

| SMMOP1 | 4 | 500 | 2.9461e+0 (4.20e-1) - | 4.5552e+0 (4.20e-1) - | 1.6310e+1 (1.74e-1) - | 7.7364e+0 (5.11e-2) - | 6.8094e-1 (2.22e-1) |

| SMMOP2 | 4 | 500 | 2.8773e+0 (5.30e-1) - | 3.8229e+0 (1.17e+0) - | 1.8101e+1 (3.40e-1) - | 2.3144e+1 (5.87e-1) - | 7.5096e-1 (4.71e-1) |

| SMMOP3 | 4 | 500 | 3.7201e+0 (9.39e-1) - | 4.6911e+0 (8.10e-1) - | 1.7917e+1 (4.63e-1) - | 2.3862e+1 (6.13e-1) - | 2.0137e+0 (2.94e-1) |

| SMMOP4 | 4 | 500 | 3.1952e+0 (2.10e-1) - | 4.5826e+0 (3.72e-1) - | 1.7150e+1 (1.83e-1) - | 7.8032e+0 (7.87e-2) - | 1.0266e+0 (5.99e-1) |

| SMMOP5 | 4 | 500 | 3.2697e+0 (1.68e-1) - | 4.7510e+0 (4.57e-1) - | 1.6523e+1 (2.69e-1) - | 9.1196e+0 (1.09e-1) - | 1.1532e+0 (5.25e-1) |

| SMMOP6 | 4 | 500 | 3.7158e+0 (8.42e-1) - | 5.1628e+0 (2.23e-1) - | 1.7065e+1 (3.29e-1) - | 7.8978e+0 (7.20e-2) - | 2.1232e+0 (4.33e-1) |

| SMMOP7 | 4 | 500 | 4.5286e+0 (1.16e+0) - | 4.3086e+0 (3.64e-1) - | 1.6076e+1 (1.89e-1) - | 8.9965e+0 (6.87e-2) - | 2.0522e+0 (5.08e-1) |

| SMMOP8 | 4 | 500 | 4.5375e+0 (6.88e-1) - | 5.5221e+0 (5.57e-1) - | 1.6087e+1 (1.65e-1) - | 9.0192e+0 (7.95e-2) - | 2.6711e+0 (5.83e-1) |

| SMMOP1 | 6 | 500 | 4.3189e+0 (6.26e-1) - | 5.2167e+0 (4.29e-1) - | 1.6374e+1 (2.38e-1) - | 8.4914e+0 (6.36e-2) - | 2.1114e+0 (7.90e-1) |

| SMMOP2 | 6 | 500 | 4.5447e+0 (7.82e-1) - | 4.4129e+0 (4.37e-1) - | 1.8031e+1 (4.71e-1) - | 2.0719e+1 (8.66e-1) - | 2.2036e+0 (5.80e-1) |

| SMMOP3 | 6 | 500 | 5.5325e+0 (7.37e-1) - | 5.0509e+0 (5.51e-1) - | 1.8150e+1 (3.50e-1) - | 2.1238e+1 (8.05e-1) - | 3.5194e+0 (7.87e-1) |

| SMMOP4 | 6 | 500 | 4.1007e+0 (5.47e-1) - | 5.3318e+0 (2.57e-1) - | 1.7139e+1 (2.56e-1) - | 8.5364e+0 (5.60e-2) - | 2.0131e+0 (6.07e-1) |

| SMMOP5 | 6 | 500 | 4.2686e+0 (5.88e-1) - | 5.3089e+0 (2.22e-1) - | 1.6693e+1 (2.90e-1) - | 9.4947e+0 (1.06e-1) - | 2.0532e+0 (6.00e-1) |

| SMMOP6 | 6 | 500 | 5.2012e+0 (7.38e-1) - | 5.4838e+0 (1.19e-1) - | 1.7121e+1 (2.77e-1) - | 8.5726e+0 (2.40e-2) - | 3.2368e+0 (4.60e-1) |

| SMMOP7 | 6 | 500 | 6.0529e+0 (6.76e-1) - | 4.9813e+0 (3.42e-1) - | 1.6272e+1 (1.93e-1) - | 9.5697e+0 (7.23e-2) - | 3.7809e+0 (7.22e-1) |

| SMMOP8 | 6 | 500 | 6.0207e+0 (5.43e-1) - | 5.7797e+0 (2.65e-1) - | 1.6232e+1 (2.32e-1) - | 9.5594e+0 (7.10e-2) - | 4.0224e+0 (6.43e-1) |

| SMMOP1 | 8 | 500 | 5.4735e+0 (7.95e-1) - | 5.5678e+0 (2.17e-1) - | 1.6393e+1 (2.29e-1) - | 8.8323e+0 (7.92e-2) - | 3.3729e+0 (5.64e-1) |

| SMMOP2 | 8 | 500 | 5.5305e+0 (5.84e-1) - | 5.1211e+0 (7.04e-1) - | 1.8036e+1 (4.42e-1) - | 1.8420e+1 (7.10e-1) - | 3.2861e+0 (5.67e-1) |

| SMMOP3 | 8 | 500 | 6.7319e+0 (6.50e-1) - | 5.3189e+0 (3.18e-1) - | 1.7989e+1 (5.32e-1) - | 1.9095e+1 (7.18e-1) - | 4.5030e+0 (6.43e-1) |

| SMMOP4 | 8 | 500 | 4.9510e+0 (4.88e-1) - | 5.6880e+0 (1.79e-1) - | 1.7129e+1 (2.38e-1) - | 8.8994e+0 (6.70e-2) - | 3.3106e+0 (6.69e-1) |

| SMMOP5 | 8 | 500 | 2.5692e+0 (7.54e-1) - | 5.6244e+0 (1.90e-1) - | 1.6625e+1 (2.76e-1) - | 8.8543e+0 (8.34e-2) - | 1.2242e+0 (4.30e-1) |

| SMMOP6 | 8 | 500 | 6.1969e+0 (8.43e-1) - | 5.8609e+0 (1.66e-1) - | 1.7184e+1 (2.63e-1) - | 8.9711e+0 (1.85e-2) - | 4.2785e+0 (5.68e-1) |

| SMMOP7 | 8 | 500 | 7.0664e+0 (6.89e-1) - | 5.2678e+0 (2.51e-1) - | 1.6391e+1 (1.62e-1) - | 9.8715e+0 (7.42e-2) - | 5.0512e+0 (4.30e-1) |

| SMMOP8 | 8 | 500 | 6.6190e+0 (7.00e-1) - | 6.0049e+0 (1.41e-1) - | 1.6305e+1 (1.76e-1) - | 9.8741e+0 (6.47e-2) - | 4.7933e+0 (4.88e-1) |

| +/−/= | | | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | np | D | SparseEA | MSKEA | MASR-MMEA |

|---|---|---|---|---|---|

| SMMOP1 | 4 | 500 | 7.8736e+0 (9.11e-2) - | 7.8164e+0 (8.47e-2) - | 6.8094e-1 (2.22e-1) |

| SMMOP2 | 4 | 500 | 7.8818e+0 (8.43e-2) - | 7.7813e+0 (6.31e-2) - | 7.5096e-1 (4.71e-1) |

| SMMOP3 | 4 | 500 | 8.0429e+0 (1.49e-1) - | 7.8448e+0 (7.75e-2) - | 2.0137e+0 (2.94e-1) |

| SMMOP4 | 4 | 500 | 7.8387e+0 (7.12e-2) - | 7.7967e+0 (8.34e-2) - | 1.0266e+0 (5.99e-1) |

| SMMOP5 | 4 | 500 | 7.8049e+0 (7.36e-2) - | 7.8057e+0 (8.48e-2) - | 1.1532e+0 (5.25e-1) |

| SMMOP6 | 4 | 500 | 7.9518e+0 (1.16e-1) - | 7.8973e+0 (7.17e-2) - | 2.1232e+0 (4.33e-1) |

| SMMOP7 | 4 | 500 | 8.0283e+0 (1.19e-1) - | 7.9004e+0 (8.35e-2) - | 2.0522e+0 (5.08e-1) |

| SMMOP8 | 4 | 500 | 8.0035e+0 (8.74e-2) - | 7.8974e+0 (8.66e-2) - | 2.6711e+0 (5.83e-1) |

| SMMOP1 | 6 | 500 | 8.5727e+0 (7.76e-2) - | 8.5758e+0 (2.68e-2) - | 2.1114e+0 (7.90e-1) |

| SMMOP2 | 6 | 500 | 8.5969e+0 (1.12e-1) - | 8.5727e+0 (3.10e-2) - | 2.2036e+0 (5.80e-1) |

| SMMOP3 | 6 | 500 | 8.7225e+0 (1.25e-1) - | 8.6100e+0 (3.90e-2) - | 3.5194e+0 (7.87e-1) |

| SMMOP4 | 6 | 500 | 8.5709e+0 (9.21e-2) - | 8.5853e+0 (3.78e-2) - | 2.0131e+0 (6.07e-1) |

| SMMOP5 | 6 | 500 | 8.5806e+0 (9.14e-2) - | 8.5807e+0 (3.69e-2) - | 2.0532e+0 (6.00e-1) |

| SMMOP6 | 6 | 500 | 8.6528e+0 (1.15e-1) - | 8.6243e+0 (4.08e-2) - | 3.2368e+0 (4.60e-1) |

| SMMOP7 | 6 | 500 | 8.7187e+0 (1.19e-1) - | 8.6404e+0 (4.10e-2) - | 3.7809e+0 (7.22e-1) |

| SMMOP8 | 6 | 500 | 8.6553e+0 (1.04e-1) - | 8.6224e+0 (4.40e-2) - | 4.0224e+0 (6.43e-1) |

| SMMOP1 | 8 | 500 | 8.9960e+0 (9.01e-2) - | 8.9789e+0 (1.56e-2) - | 3.3729e+0 (5.64e-1) |

| SMMOP2 | 8 | 500 | 8.9588e+0 (1.19e-1) - | 8.9790e+0 (1.25e-2) - | 3.2861e+0 (5.67e-1) |

| SMMOP3 | 8 | 500 | 9.0542e+0 (8.19e-2) - | 9.0075e+0 (1.95e-2) - | 4.5030e+0 (6.43e-1) |

| SMMOP4 | 8 | 500 | 8.9575e+0 (9.63e-2) - | 8.9729e+0 (1.16e-2) - | 3.3106e+0 (6.69e-1) |

| SMMOP5 | 8 | 500 | 7.8160e+0 (7.27e-2) - | 7.7468e+0 (3.68e-2) - | 1.2242e+0 (4.30e-1) |

| SMMOP6 | 8 | 500 | 9.0124e+0 (1.23e-1) - | 8.9974e+0 (1.92e-2) - | 4.2785e+0 (5.68e-1) |

| SMMOP7 | 8 | 500 | 9.0454e+0 (1.05e-1) - | 9.0116e+0 (2.08e-2) - | 5.0512e+0 (4.30e-1) |

| SMMOP8 | 8 | 500 | 8.9144e+0 (1.18e-1) - | 9.0075e+0 (1.92e-2) - | 4.7933e+0 (4.88e-1) |

| +/−/= | | | 0/24/0 | 0/24/0 | |

| Problem | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | MASR-MMEA |

|---|---|---|---|---|---|

| SMMOP1 | 100 | 2.6535e-3 (4.27e-4) + | 1.7463e-3 (3.47e-4) + | 7.4965e-1 (1.08e-2) - | 5.3183e-3 (1.07e-3) |

| SMMOP2 | 100 | 2.9874e-3 (3.67e-4) + | 1.6070e-3 (2.37e-4) + | 2.0301e+0 (2.29e-2) - | 5.1625e-3 (1.29e-3) |

| SMMOP3 | 100 | 4.4374e-3 (1.17e-3) + | 1.7955e-3 (3.54e-4) + | 2.1004e+0 (2.37e-2) - | 1.1246e-2 (5.05e-3) |

| SMMOP4 | 100 | 2.9300e-3 (4.12e-4) + | 2.0064e-3 (7.11e-4) + | 3.6976e-1 (3.89e-3) - | 5.2911e-3 (4.00e-4) |

| SMMOP5 | 100 | 2.8852e-3 (3.97e-4) + | 1.9255e-3 (5.36e-4) + | 3.6174e-1 (5.48e-3) - | 5.2092e-3 (4.08e-4) |

| SMMOP6 | 100 | 3.4504e-3 (6.45e-4) + | 2.8252e-3 (1.43e-3) + | 4.0424e-1 (5.47e-3) - | 8.6154e-3 (1.89e-3) |

| SMMOP7 | 100 | 3.7606e-3 (7.92e-4) + | 2.1633e-3 (4.27e-4) + | 9.3392e-1 (1.51e-2) - | 1.4345e-2 (6.26e-3) |

| SMMOP8 | 100 | 4.6457e-3 (1.95e-3) + | 2.9630e-3 (9.50e-4) + | 8.7713e-1 (1.10e-2) - | 1.5657e-2 (8.50e-3) |

| SMMOP1 | 200 | 3.9339e-3 (4.77e-4) + | 3.3987e-3 (9.29e-4) + | 8.6003e-1 (9.59e-3) - | 6.2139e-3 (8.93e-4) |

| SMMOP2 | 200 | 4.4837e-3 (6.34e-4) + | 2.6846e-3 (7.31e-4) + | 2.0948e+0 (1.34e-2) - | 6.0765e-3 (1.19e-3) |

| SMMOP3 | 200 | 9.0102e-3 (2.42e-3) + | 4.2077e-3 (2.15e-3) + | 2.1756e+0 (1.14e-2) - | 1.1487e-2 (5.33e-3) |

| SMMOP4 | 200 | 3.7343e-3 (4.34e-4) + | 2.9477e-3 (1.07e-3) + | 3.9627e-1 (3.12e-3) - | 6.1050e-3 (2.89e-4) |

| SMMOP5 | 200 | 3.6821e-3 (4.89e-4) + | 3.3440e-3 (1.23e-3) + | 4.2140e-1 (6.19e-3) - | 6.1994e-3 (3.60e-4) |

| SMMOP6 | 200 | 5.2639e-3 (1.36e-3) + | 7.4593e-3 (2.86e-3) = | 4.4210e-1 (5.10e-3) - | 8.5517e-3 (2.89e-3) |

| SMMOP7 | 200 | 6.9965e-3 (3.75e-3) + | 7.2076e-3 (4.09e-3) + | 1.1013e+0 (1.48e-2) - | 1.3756e-2 (6.81e-3) |

| SMMOP8 | 200 | 1.0094e-2 (4.66e-3) + | 1.0388e-2 (3.85e-3) + | 1.0130e+0 (1.24e-2) - | 1.8444e-2 (9.38e-3) |

| SMMOP1 | 500 | 1.0399e-2 (2.40e-3) - | 2.7242e-2 (8.19e-3) - | 1.0258e+0 (1.15e-2) - | 7.4726e-3 (4.14e-4) |

| SMMOP2 | 500 | 9.4700e-3 (2.20e-3) - | 9.6629e-3 (5.28e-3) = | 2.1125e+0 (8.28e-3) - | 7.1695e-3 (7.30e-4) |

| SMMOP3 | 500 | 2.6246e-2 (6.65e-3) - | 4.2189e-2 (1.54e-2) - | 2.2000e+0 (1.08e-2) - | 2.3844e-2 (4.07e-3) |

| SMMOP4 | 500 | 7.8683e-3 (8.12e-4) - | 1.5551e-2 (2.53e-3) - | 4.2728e-1 (2.31e-3) - | 7.2379e-3 (8.79e-4) |

| SMMOP5 | 500 | 8.3333e-3 (1.11e-3) - | 1.6771e-2 (3.62e-3) - | 5.1897e-1 (7.03e-3) - | 7.1165e-3 (7.16e-4) |

| SMMOP6 | 500 | 1.4926e-2 (3.54e-3) = | 3.8779e-2 (7.77e-3) - | 4.7865e-1 (3.06e-3) - | 1.3862e-2 (3.74e-3) |

| SMMOP7 | 500 | 3.3410e-2 (7.67e-3) = | 6.9908e-2 (1.84e-2) - | 1.3719e+0 (1.80e-2) - | 2.9276e-2 (9.06e-3) |

| SMMOP8 | 500 | 3.3146e-2 (5.21e-3) = | 5.7682e-2 (1.53e-2) - | 1.2550e+0 (1.26e-2) - | 3.3033e-2 (9.66e-3) |

| +/−/= | | 16/5/3 | 15/7/2 | 0/24/0 | |

| Problem | D | DNNSGAII | SparseEA | MSKEA | MASR-MMEA |

|---|---|---|---|---|---|

| SMMOP1 | 100 | 2.6018e-3 (8.34e-5) + | 1.1972e-3 (9.51e-5) + | 9.2136e-4 (2.17e-6) + | 5.3183e-3 (1.07e-3) |

| SMMOP2 | 100 | 1.0816e-1 (3.13e-2) - | 1.4263e-3 (2.11e-4) + | 9.2144e-4 (1.87e-6) + | 5.1625e-3 (1.29e-3) |

| SMMOP3 | 100 | 1.1100e-1 (3.72e-2) - | 1.7380e-3 (8.83e-4) + | 9.2150e-4 (2.63e-6) + | 1.1246e-2 (5.05e-3) |

| SMMOP4 | 100 | 3.0921e-3 (1.30e-4) + | 1.2029e-3 (3.63e-5) + | 1.0285e-3 (9.18e-6) + | 5.2911e-3 (4.00e-4) |

| SMMOP5 | 100 | 6.5908e-3 (6.86e-4) - | 1.2203e-3 (3.17e-5) + | 1.0269e-3 (8.44e-6) + | 5.2092e-3 (4.08e-4) |

| SMMOP6 | 100 | 2.9585e-3 (1.33e-4) + | 1.2136e-3 (4.85e-5) + | 1.0264e-3 (9.06e-6) + | 8.6154e-3 (1.89e-3) |

| SMMOP7 | 100 | 8.6335e-3 (1.42e-3) + | 1.6861e-3 (1.90e-4) + | 1.0195e-3 (4.10e-6) + | 1.4345e-2 (6.26e-3) |

| SMMOP8 | 100 | 8.6800e-3 (1.27e-3) + | 2.2438e-3 (1.06e-3) + | 1.0193e-3 (3.85e-6) + | 1.5657e-2 (8.50e-3) |

| SMMOP1 | 200 | 2.7808e-3 (7.43e-5) + | 1.5171e-3 (6.07e-4) + | 9.2137e-4 (2.53e-6) + | 6.2139e-3 (8.93e-4) |

| SMMOP2 | 200 | 2.0950e-1 (3.28e-2) - | 1.7347e-3 (3.43e-4) + | 9.2096e-4 (2.89e-6) + | 6.0765e-3 (1.19e-3) |

| SMMOP3 | 200 | 2.3633e-1 (3.70e-2) - | 5.6382e-3 (2.31e-3) + | 1.4051e-3 (1.84e-3) + | 1.1487e-2 (5.33e-3) |

| SMMOP4 | 200 | 3.2798e-3 (1.21e-4) + | 1.3327e-3 (3.93e-5) + | 1.0257e-3 (9.23e-6) + | 6.1050e-3 (2.89e-4) |

| SMMOP5 | 200 | 8.7662e-3 (6.38e-4) - | 1.3675e-3 (4.87e-5) + | 1.0258e-3 (7.43e-6) + | 6.1994e-3 (3.60e-4) |

| SMMOP6 | 200 | 3.1730e-3 (1.03e-4) + | 1.9956e-3 (1.21e-3) + | 1.0281e-3 (1.02e-5) + | 8.5517e-3 (2.89e-3) |

| SMMOP7 | 200 | 1.7260e-2 (1.14e-3) = | 2.1939e-3 (8.24e-4) + | 2.0686e-3 (3.20e-3) + | 1.3756e-2 (6.81e-3) |

| SMMOP8 | 200 | 1.8020e-2 (1.08e-3) = | 3.6776e-3 (2.01e-3) + | 1.1782e-3 (8.77e-4) + | 1.8444e-2 (9.38e-3) |

| SMMOP1 | 500 | 4.2043e-3 (3.89e-4) + | 4.6775e-3 (1.89e-3) + | 1.6543e-3 (1.09e-3) + | 7.4726e-3 (4.14e-4) |

| SMMOP2 | 500 | 3.6411e-1 (2.08e-2) - | 4.9703e-3 (1.54e-3) + | 1.1748e-3 (4.28e-4) + | 7.1695e-3 (7.30e-4) |

| SMMOP3 | 500 | 3.9087e-1 (2.31e-2) - | 1.8315e-2 (4.15e-3) + | 2.8355e-3 (1.89e-3) + | 2.3844e-2 (4.07e-3) |

| SMMOP4 | 500 | 5.0755e-3 (5.40e-4) + | 2.7394e-3 (5.78e-4) + | 1.2338e-3 (3.42e-4) + | 7.2379e-3 (8.79e-4) |

| SMMOP5 | 500 | 1.7423e-2 (7.96e-4) - | 2.5422e-3 (6.70e-4) + | 1.2605e-3 (3.81e-4) + | 7.1165e-3 (7.16e-4) |

| SMMOP6 | 500 | 5.7934e-3 (7.92e-4) + | 5.7592e-3 (1.74e-3) + | 2.8044e-3 (1.63e-3) + | 1.3862e-2 (3.74e-3) |

| SMMOP7 | 500 | 3.4293e-2 (1.64e-3) - | 1.7044e-2 (6.47e-3) + | 6.9944e-3 (5.85e-3) + | 2.9276e-2 (9.06e-3) |

| SMMOP8 | 500 | 3.5284e-2 (2.23e-3) = | 1.5129e-2 (3.58e-3) + | 3.5752e-3 (2.13e-3) + | 3.3033e-2 (9.66e-3) |

| +/−/= | 12/9/3 | 24/0/0 | 24/0/0 | 0/24/0 | |

| Problem | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | DNNSGAII | SparseEA | MSKEA | LMMODE | CMMOGA_DLF | MASR-MMEA |

|---|---|---|---|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 3.4262e-1 (2.28e-1) - | 3.0936e-1 (2.88e-1) - | 5.8388e+0 (6.63e-2) - | 3.3931e+0 (1.49e-2) - | 3.3974e+0 (3.17e-2) - | 3.4412e+0 (8.45e-3) - | 3.5751e+0 (1.10e-1) - | 2.7494e+0 (3.59e-1) - | 4.2845e-2 (1.16e-2) |

| SMMOP2 | 100 | 3.8698e-1 (6.20e-2) - | 2.1168e-1 (2.15e-1) - | 7.3142e+0 (2.09e-1) - | 6.3899e+0 (6.35e-1) - | 3.3842e+0 (4.30e-2) - | 3.4416e+0 (9.20e-3) - | 1.1859e+1 (6.61e-1) - | 4.4228e+0 (8.37e-1) - | 3.6793e-2 (2.83e-2) |

| SMMOP3 | 100 | 6.9891e-1 (4.21e-1) - | 3.0060e-1 (3.09e-1) - | 7.1963e+0 (1.68e-1) - | 6.4346e+0 (7.43e-1) - | 3.4510e+0 (3.89e-2) - | 3.4675e+0 (2.71e-3) - | 1.1849e+1 (9.03e-1) - | 4.8078e+0 (7.94e-1) - | 1.3464e-1 (1.41e-1) |

| SMMOP4 | 100 | 3.1727e-1 (1.01e-1) - | 2.8843e-1 (2.27e-1) - | 6.3233e+0 (8.93e-2) - | 3.3693e+0 (1.61e-2) - | 3.3744e+0 (3.44e-2) - | 3.4361e+0 (1.11e-2) - | 3.6438e+0 (1.19e-1) - | 3.0826e+0 (4.19e-1) - | 7.5132e-2 (4.85e-2) |

| SMMOP5 | 100 | 3.2076e-1 (8.17e-2) - | 2.3373e-1 (1.36e-1) - | 6.0677e+0 (8.64e-2) - | 3.5518e+0 (4.39e-2) - | 3.3815e+0 (3.99e-2) - | 3.4315e+0 (1.22e-2) - | 3.6675e+0 (1.65e-1) - | 2.9943e+0 (2.75e-1) - | 8.0524e-2 (5.11e-2) |

| SMMOP6 | 100 | 5.4760e-1 (4.78e-1) - | 3.5769e-1 (1.71e-1) - | 6.2384e+0 (1.11e-1) - | 3.4437e+0 (1.11e-2) - | 3.4306e+0 (1.25e-2) - | 3.4662e+0 (3.22e-3) - | 3.7680e+0 (1.09e-1) - | 3.1536e+0 (4.08e-1) - | 1.5823e-1 (1.46e-1) |

| SMMOP7 | 100 | 6.4131e-1 (5.10e-1) - | 3.8089e-1 (4.02e-1) - | 5.6062e+0 (7.23e-2) - | 3.5394e+0 (3.31e-2) - | 3.4341e+0 (7.99e-3) - | 3.4689e+0 (1.71e-3) - | 3.8143e+0 (1.91e-1) - | 3.0328e+0 (2.50e-1) - | 2.1894e-1 (2.58e-1) |

| SMMOP8 | 100 | 1.1029e+0 (6.06e-1) - | 5.5143e-1 (3.97e-1) - | 5.8528e+0 (8.10e-2) - | 3.5313e+0 (2.80e-2) - | 3.4458e+0 (3.74e-2) - | 3.4704e+0 (9.59e-4) - | 3.7400e+0 (1.21e-1) - | 3.2197e+0 (2.17e-1) - | 3.7537e-1 (3.41e-1) |

| SMMOP1 | 200 | 7.7396e-1 (1.07e-1) - | 9.2270e-1 (2.52e-1) - | 9.2113e+0 (7.86e-2) - | 4.8336e+0 (2.19e-2) - | 4.8637e+0 (4.34e-2) - | 4.8829e+0 (6.55e-3) - | 5.6313e+0 (1.38e-1) - | 4.9087e+0 (2.94e-1) - | 1.2676e-1 (1.62e-1) |

| SMMOP2 | 200 | 8.4577e-1 (1.79e-1) - | 9.2762e-1 (5.19e-1) - | 1.0940e+1 (1.92e-1) - | 1.1671e+1 (7.17e-1) - | 4.8673e+0 (7.17e-2) - | 4.8825e+0 (8.42e-3) - | 1.5126e+1 (1.01e+0) - | 8.2305e+0 (1.27e+0) - | 6.7412e-2 (7.19e-2) |

| SMMOP3 | 200 | 1.4738e+0 (4.87e-1) - | 1.1286e+0 (5.13e-1) - | 1.0816e+1 (1.98e-1) - | 1.2181e+1 (7.71e-1) - | 4.9325e+0 (9.30e-2) - | 4.9136e+0 (3.55e-2) - | 1.5801e+1 (1.08e+0) - | 8.6252e+0 (1.10e+0) - | 6.5747e-1 (3.40e-1) |

| SMMOP4 | 200 | 9.1303e-1 (1.78e-1) - | 9.5634e-1 (3.72e-1) - | 9.7588e+0 (9.21e-2) - | 4.8240e+0 (2.14e-2) - | 4.8088e+0 (3.96e-2) - | 4.8800e+0 (6.65e-3) - | 5.7135e+0 (1.74e-1) - | 5.8068e+0 (6.04e-1) - | 1.7992e-1 (1.20e-1) |

| SMMOP5 | 200 | 8.8605e-1 (1.98e-1) - | 9.4876e-1 (2.38e-1) - | 9.4533e+0 (1.15e-1) - | 5.2524e+0 (5.40e-2) - | 4.8244e+0 (5.15e-2) - | 4.8775e+0 (1.00e-2) - | 5.6258e+0 (1.38e-1) - | 5.0778e+0 (5.05e-1) - | 2.2129e-1 (1.73e-1) |

| SMMOP6 | 200 | 1.3005e+0 (4.81e-1) - | 1.3137e+0 (2.95e-1) - | 9.7308e+0 (1.29e-1) - | 4.8713e+0 (6.49e-3) - | 4.8883e+0 (6.08e-2) - | 4.8969e+0 (9.60e-3) - | 6.0442e+0 (1.57e-1) - | 5.2675e+0 (5.92e-1) - | 6.4268e-1 (2.39e-1) |

| SMMOP7 | 200 | 2.1195e+0 (7.68e-1) - | 1.0386e+0 (2.47e-1) - | 8.9003e+0 (8.21e-2) - | 5.2633e+0 (3.99e-2) - | 4.8862e+0 (3.22e-2) - | 4.9204e+0 (4.30e-2) - | 5.9194e+0 (1.43e-1) - | 5.4506e+0 (4.62e-1) - | 6.9430e-1 (3.60e-1) |

| SMMOP8 | 200 | 2.0776e+0 (6.18e-1) - | 1.7249e+0 (2.32e-1) - | 9.1180e+0 (1.03e-1) - | 5.2816e+0 (3.75e-2) - | 4.9187e+0 (6.39e-2) - | 4.9121e+0 (2.51e-2) - | 5.6880e+0 (1.86e-1) - | 5.5831e+0 (2.68e-1) - | 9.8649e-1 (4.67e-1) |

| SMMOP1 | 500 | 2.9461e+0 (4.20e-1) - | 4.5552e+0 (4.20e-1) - | 1.6310e+1 (1.74e-1) - | 7.7364e+0 (5.11e-2) - | 7.8736e+0 (9.11e-2) - | 7.8164e+0 (8.47e-2) - | 9.9065e+0 (1.52e-1) - | 9.6053e+0 (5.47e-1) - | 6.9194e-1 (2.22e-1) |

| SMMOP2 | 500 | 2.8773e+0 (5.30e-1) - | 3.8229e+0 (1.17e+0) - | 1.8101e+1 (3.40e-1) - | 2.3144e+1 (5.87e-1) - | 7.8818e+0 (8.43e-2) - | 7.7813e+0 (6.31e-2) - | 2.1269e+1 (1.22e+0) - | 1.5700e+1 (1.24e+0) - | 7.6296e-1 (4.71e-1) |

| SMMOP3 | 500 | 3.7201e+0 (9.39e-1) - | 4.6911e+0 (8.10e-1) - | 1.7917e+1 (4.63e-1) - | 2.3862e+1 (6.13e-1) - | 8.0429e+0 (1.49e-1) - | 7.8448e+0 (7.75e-2) - | 2.0781e+1 (1.24e+0) - | 1.6089e+1 (1.45e+0) - | 2.0437e+0 (2.94e-1) |

| SMMOP4 | 500 | 3.1952e+0 (2.10e-1) - | 4.5826e+0 (3.72e-1) - | 1.7150e+1 (1.83e-1) - | 7.8032e+0 (7.87e-2) - | 7.8387e+0 (7.12e-2) - | 7.7967e+0 (8.34e-2) - | 1.0370e+1 (2.64e-1) - | 1.0310e+1 (9.73e-1) - | 1.1166e+0 (5.99e-1) |

| SMMOP5 | 500 | 3.2697e+0 (1.68e-1) - | 4.7510e+0 (4.57e-1) - | 1.6523e+1 (2.69e-1) - | 9.1196e+0 (1.09e-1) - | 7.8049e+0 (7.36e-2) - | 7.8057e+0 (8.48e-2) - | 1.0089e+1 (1.66e-1) - | 9.6278e+0 (2.31e-1) - | 1.1432e+0 (5.25e-1) |

| SMMOP6 | 500 | 3.7158e+0 (8.42e-1) - | 5.1628e+0 (2.23e-1) - | 1.7065e+1 (3.29e-1) - | 7.8978e+0 (7.20e-2) - | 7.9518e+0 (1.16e-1) - | 7.8973e+0 (7.17e-2) - | 1.0958e+1 (2.02e-1) - | 1.0025e+1 (9.75e-1) - | 2.2132e+0 (4.33e-1) |

| SMMOP7 | 500 | 4.5286e+0 (1.16e+0) - | 4.3086e+0 (3.64e-1) - | 1.6076e+1 (1.89e-1) - | 8.9965e+0 (6.87e-2) - | 8.0283e+0 (1.19e-1) - | 7.9004e+0 (8.35e-2) - | 1.0341e+1 (2.08e-1) - | 9.9188e+0 (2.11e-1) - | 2.1422e+0 (5.08e-1) |

| SMMOP8 | 500 | 4.5375e+0 (6.88e-1) - | 5.5221e+0 (5.57e-1) - | 1.6087e+1 (1.65e-1) - | 9.0192e+0 (7.95e-2) - | 8.0035e+0 (8.74e-2) - | 7.8974e+0 (8.66e-2) - | 1.0228e+1 (1.73e-1) - | 1.0024e+1 (2.20e-1) - | 2.7611e+0 (5.83e-1) |

| +/−/= | | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | DNNSGAII | SparseEA | MSKEA | LMMODE | CMMOGA_DLF | MASR-MMEA |

|---|---|---|---|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 9.7345e-1 (4.48e-1) - | 2.7030e-1 (1.37e-1) - | 5.9342e+0 (6.46e-2) - | 3.7502e+0 (1.32e-2) - | 3.7372e+0 (4.82e-2) - | 3.8017e+0 (1.18e-2) - | 3.6026e+0 (1.39e-1) - | 2.8100e+0 (3.95e-1) - | 1.9128e-1 (1.48e-1) |

| SMMOP2 | 100 | 1.0859e+0 (3.11e-1) - | 2.1617e-1 (1.04e-1) = | 7.5018e+0 (1.81e-1) - | 5.2651e+0 (5.75e-1) - | 3.7022e+0 (5.21e-2) - | 3.8027e+0 (1.36e-2) - | 1.1543e+1 (6.58e-1) - | 3.9713e+0 (5.07e-1) - | 2.0909e-1 (1.74e-1) |

| SMMOP3 | 100 | 1.7703e+0 (5.04e-1) - | 3.6931e-1 (1.73e-1) + | 7.3038e+0 (2.21e-1) - | 5.5116e+0 (6.12e-1) - | 3.8009e+0 (2.48e-2) - | 3.8362e+0 (1.91e-3) - | 1.1837e+1 (6.39e-1) - | 4.1925e+0 (4.90e-1) - | 6.7422e-1 (4.27e-1) |

| SMMOP4 | 100 | 7.1385e-1 (2.84e-1) - | 2.9138e-1 (1.52e-1) - | 6.3386e+0 (8.97e-2) - | 3.7206e+0 (1.77e-2) - | 3.7223e+0 (4.60e-2) - | 3.7989e+0 (9.73e-3) - | 3.8137e+0 (1.88e-1) - | 3.0370e+0 (3.17e-1) - | 2.4262e-1 (2.08e-1) |

| SMMOP5 | 100 | 7.0377e-1 (2.44e-1) - | 3.5367e-1 (1.37e-1) - | 6.0951e+0 (8.99e-2) - | 3.8341e+0 (3.44e-2) - | 3.7066e+0 (4.67e-2) - | 3.7958e+0 (1.25e-2) - | 3.8193e+0 (1.74e-1) - | 3.7933e+0 (1.45e-1) - | 2.0381e-1 (1.46e-1) |

| SMMOP6 | 100 | 1.0922e+0 (4.66e-1) - | 4.9778e-1 (1.89e-1) = | 6.3841e+0 (7.58e-2) - | 3.8124e+0 (1.07e-2) - | 3.8035e+0 (1.95e-2) - | 3.8336e+0 (2.91e-3) - | 4.1158e+0 (1.01e-1) - | 3.0806e+0 (3.71e-1) - | 7.1485e-1 (4.41e-1) |

| SMMOP7 | 100 | 1.9863e+0 (5.51e-1) - | 4.0807e-1 (1.94e-1) + | 5.8067e+0 (7.46e-2) - | 3.9332e+0 (3.10e-2) - | 3.8045e+0 (7.63e-3) - | 3.8382e+0 (1.42e-3) - | 4.0134e+0 (1.54e-1) - | 3.8569e+0 (1.40e-1) - | 1.1490e+0 (4.99e-1) |

| SMMOP8 | 100 | 2.0794e+0 (3.88e-1) - | 8.2172e-1 (2.18e-1) + | 5.9415e+0 (8.22e-2) - | 3.9447e+0 (2.48e-2) - | 3.8020e+0 (1.74e-2) - | 3.8393e+0 (1.28e-3) - | 3.7050e+0 (2.26e-1) - | 3.8124e+0 (1.45e-1) - | 1.1518e+0 (3.06e-1) |

| SMMOP1 | 200 | 1.7560e+0 (5.83e-1) - | 1.0882e+0 (1.78e-1) - | 9.2870e+0 (6.90e-2) - | 5.3382e+0 (1.73e-2) - | 5.3079e+0 (4.71e-2) - | 5.3994e+0 (9.04e-3) - | 5.5189e+0 (1.15e-1) - | 5.0945e+0 (1.74e-1) - | 4.6280e-1 (2.59e-1) |

| SMMOP2 | 200 | 1.7899e+0 (5.25e-1) - | 1.1839e+0 (4.60e-1) - | 1.0917e+1 (2.10e-1) - | 9.9155e+0 (7.59e-1) - | 5.3513e+0 (7.76e-2) - | 5.4024e+0 (6.75e-3) - | 1.5441e+1 (8.50e-1) - | 7.2784e+0 (8.58e-1) - | 5.0743e-1 (2.99e-1) |

| SMMOP3 | 200 | 2.9591e+0 (5.50e-1) - | 1.5321e+0 (3.74e-1) = | 1.0860e+1 (2.69e-1) - | 1.0231e+1 (7.72e-1) - | 5.4056e+0 (6.70e-2) - | 5.4269e+0 (2.08e-3) - | 1.5590e+1 (9.41e-1) - | 7.6269e+0 (8.09e-1) - | 1.3753e+0 (5.46e-1) |

| SMMOP4 | 200 | 1.2341e+0 (3.67e-1) - | 1.2724e+0 (2.87e-1) - | 9.8350e+0 (6.84e-2) - | 5.3218e+0 (2.23e-2) - | 5.3234e+0 (6.22e-2) - | 5.3912e+0 (1.27e-2) - | 5.8900e+0 (1.55e-1) - | 5.4175e+0 (4.66e-1) - | 4.2234e-1 (2.02e-1) |

| SMMOP5 | 200 | 1.5868e+0 (5.55e-1) - | 1.2653e+0 (2.12e-1) - | 9.5371e+0 (5.90e-2) - | 5.6487e+0 (4.92e-2) - | 5.3343e+0 (5.05e-2) - | 5.3996e+0 (1.99e-2) - | 5.6803e+0 (1.40e-1) - | 5.8077e+0 (1.28e-1) - | 4.0819e-1 (2.58e-1) |

| SMMOP6 | 200 | 2.2113e+0 (5.01e-1) - | 1.7450e+0 (1.86e-1) - | 9.8589e+0 (1.04e-1) - | 5.3928e+0 (8.82e-3) - | 5.3797e+0 (2.15e-2) - | 5.4239e+0 (2.67e-3) - | 6.2864e+0 (1.22e-1) - | 5.2485e+0 (5.05e-1) - | 1.2986e+0 (4.91e-1) |

| SMMOP7 | 200 | 3.2023e+0 (6.43e-1) - | 1.3385e+0 (3.06e-1) + | 9.1777e+0 (1.04e-1) - | 5.7180e+0 (3.10e-2) - | 5.3907e+0 (1.49e-2) - | 5.4329e+0 (1.64e-2) - | 5.9643e+0 (1.36e-1) - | 5.8498e+0 (1.67e-1) - | 1.8395e+0 (5.50e-1) |

| SMMOP8 | 200 | 3.3584e+0 (5.88e-1) - | 2.2428e+0 (2.62e-1) - | 9.2691e+0 (7.96e-2) - | 5.7159e+0 (3.68e-2) - | 5.3978e+0 (4.74e-2) - | 5.4297e+0 (1.10e-3) - | 5.5922e+0 (1.78e-1) - | 5.8187e+0 (1.90e-1) - | 1.7540e+0 (3.97e-1) |

| SMMOP1 | 500 | 4.3189e+0 (6.26e-1) - | 5.2167e+0 (4.29e-1) - | 1.6374e+1 (2.38e-1) - | 8.4914e+0 (6.36e-2) - | 8.5727e+0 (7.76e-2) - | 8.5758e+0 (2.68e-2) - | 9.5916e+0 (1.59e-1) - | 8.8795e+0 (4.89e-1) - | 2.014e+0 (7.90e-1) |

| SMMOP2 | 500 | 4.5447e+0 (7.82e-1) - | 4.4129e+0 (4.37e-1) - | 1.8031e+1 (4.71e-1) - | 2.0719e+1 (8.66e-1) - | 8.5969e+0 (1.12e-1) - | 8.5727e+0 (3.10e-2) - | 2.1420e+1 (1.16e+0) - | 1.2676e+1 (8.66e-1) - | 2.1136e+0 (5.80e-1) |

| SMMOP3 | 500 | 5.5325e+0 (7.37e-1) - | 5.0509e+0 (5.51e-1) - | 1.8150e+1 (3.50e-1) - | 2.1238e+1 (8.05e-1) - | 8.7225e+0 (1.25e-1) - | 8.6100e+0 (3.90e-2) - | 2.1546e+1 (1.11e+0) - | 1.2862e+1 (7.11e-1) - | 3.5194e+0 (7.87e-1) |

| SMMOP4 | 500 | 4.1007e+0 (5.47e-1) - | 5.3318e+0 (2.57e-1) - | 1.7139e+1 (2.56e-1) - | 8.5364e+0 (5.60e-2) - | 8.5709e+0 (9.21e-2) - | 8.5853e+0 (3.78e-2) - | 1.0421e+1 (2.33e-1) - | 9.1403e+0 (6.91e-1) - | 2.0131e+0 (6.07e-1) |

| SMMOP5 | 500 | 4.2686e+0 (5.88e-1) - | 5.3089e+0 (2.22e-1) - | 1.6693e+1 (2.90e-1) - | 9.4947e+0 (1.06e-1) - | 8.5806e+0 (9.14e-2) - | 8.5807e+0 (3.69e-2) - | 9.8896e+0 (1.13e-1) - | 9.3563e+0 (2.31e-1) - | 2.0532e+0 (6.00e-1) |

| SMMOP6 | 500 | 5.2012e+0 (7.38e-1) - | 5.4838e+0 (1.19e-1) - | 1.7121e+1 (2.77e-1) - | 8.5726e+0 (2.40e-2) - | 8.6528e+0 (1.15e-1) - | 8.6243e+0 (4.08e-2) - | 1.0924e+1 (2.31e-1) - | 9.4130e+0 (9.06e-1) - | 3.2368e+0 (4.60e-1) |

| SMMOP7 | 500 | 6.0529e+0 (6.76e-1) - | 4.9813e+0 (3.42e-1) - | 1.6272e+1 (1.93e-1) - | 9.5697e+0 (7.23e-2) - | 8.7187e+0 (1.19e-1) - | 8.6404e+0 (4.10e-2) - | 1.0113e+1 (1.64e-1) - | 9.2413e+0 (2.55e-1) - | 3.7809e+0 (7.22e-1) |

| SMMOP8 | 500 | 6.0207e+0 (5.43e-1) - | 5.7797e+0 (2.65e-1) - | 1.6232e+1 (2.32e-1) - | 9.5594e+0 (7.10e-2) - | 8.6553e+0 (1.04e-1) - | 8.6224e+0 (4.40e-2) - | 9.8571e+0 (1.57e-1) - | 9.5258e+0 (3.24e-1) - | 4.0224e+0 (6.43e-1) |

| +/−/= | | 0/24/0 | 4/17/3 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | np | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | DNNSGAII | SparseEA | MSKEA | MASR-MMEA |

|---|---|---|---|---|---|---|---|---|---|

| SMMOP1 | 4 | 500 | 2.9461e+0 (4.20e-1) - | 4.5552e+0 (4.20e-1) - | 1.6310e+1 (1.74e-1) - | 7.7364e+0 (5.11e-2) - | 7.8736e+0 (9.11e-2) - | 7.8164e+0 (8.47e-2) - | 6.8094e-1 (2.22e-1) |

| SMMOP2 | 4 | 500 | 2.8773e+0 (5.30e-1) - | 3.8229e+0 (1.17e+0) - | 1.8101e+1 (3.40e-1) - | 2.3144e+1 (5.87e-1) - | 7.8818e+0 (8.43e-2) - | 7.7813e+0 (6.31e-2) - | 7.5096e-1 (4.71e-1) |

| SMMOP3 | 4 | 500 | 3.7201e+0 (9.39e-1) - | 4.6911e+0 (8.10e-1) - | 1.7917e+1 (4.63e-1) - | 2.3862e+1 (6.13e-1) - | 8.0429e+0 (1.49e-1) - | 7.8448e+0 (7.75e-2) - | 2.0137e+0 (2.94e-1) |

| SMMOP4 | 4 | 500 | 3.1952e+0 (2.10e-1) - | 4.5826e+0 (3.72e-1) - | 1.7150e+1 (1.83e-1) - | 7.8032e+0 (7.87e-2) - | 7.8387e+0 (7.12e-2) - | 7.7967e+0 (8.34e-2) - | 1.0266e+0 (5.99e-1) |

| SMMOP5 | 4 | 500 | 3.2697e+0 (1.68e-1) - | 4.7510e+0 (4.57e-1) - | 1.6523e+1 (2.69e-1) - | 9.1196e+0 (1.09e-1) - | 7.8049e+0 (7.36e-2) - | 7.8057e+0 (8.48e-2) - | 1.1532e+0 (5.25e-1) |

| SMMOP6 | 4 | 500 | 3.7158e+0 (8.42e-1) - | 5.1628e+0 (2.23e-1) - | 1.7065e+1 (3.29e-1) - | 7.8978e+0 (7.20e-2) - | 7.9518e+0 (1.16e-1) - | 7.8973e+0 (7.17e-2) - | 2.1232e+0 (4.33e-1) |

| SMMOP7 | 4 | 500 | 4.5286e+0 (1.16e+0) - | 4.3086e+0 (3.64e-1) - | 1.6076e+1 (1.89e-1) - | 8.9965e+0 (6.87e-2) - | 8.0283e+0 (1.19e-1) - | 7.9004e+0 (8.35e-2) - | 2.0522e+0 (5.08e-1) |

| SMMOP8 | 4 | 500 | 4.5375e+0 (6.88e-1) - | 5.5221e+0 (5.57e-1) - | 1.6087e+1 (1.65e-1) - | 9.0192e+0 (7.95e-2) - | 8.0035e+0 (8.74e-2) - | 7.8974e+0 (8.66e-2) - | 2.6711e+0 (5.83e-1) |

| SMMOP1 | 6 | 500 | 4.3189e+0 (6.26e-1) - | 5.2167e+0 (4.29e-1) - | 1.6374e+1 (2.38e-1) - | 8.4914e+0 (6.36e-2) - | 8.5727e+0 (7.76e-2) - | 8.5758e+0 (2.68e-2) - | 2.1114e+0 (7.90e-1) |

| SMMOP2 | 6 | 500 | 4.5447e+0 (7.82e-1) - | 4.4129e+0 (4.37e-1) - | 1.8031e+1 (4.71e-1) - | 2.0719e+1 (8.66e-1) - | 8.5969e+0 (1.12e-1) - | 8.5727e+0 (3.10e-2) - | 2.2036e+0 (5.80e-1) |

| SMMOP3 | 6 | 500 | 5.5325e+0 (7.37e-1) - | 5.0509e+0 (5.51e-1) - | 1.8150e+1 (3.50e-1) - | 2.1238e+1 (8.05e-1) - | 8.7225e+0 (1.25e-1) - | 8.6100e+0 (3.90e-2) - | 3.5194e+0 (7.87e-1) |

| SMMOP4 | 6 | 500 | 4.1007e+0 (5.47e-1) - | 5.3318e+0 (2.57e-1) - | 1.7139e+1 (2.56e-1) - | 8.5364e+0 (5.60e-2) - | 8.5709e+0 (9.21e-2) - | 8.5853e+0 (3.78e-2) - | 2.0131e+0 (6.07e-1) |

| SMMOP5 | 6 | 500 | 4.2686e+0 (5.88e-1) - | 5.3089e+0 (2.22e-1) - | 1.6693e+1 (2.90e-1) - | 9.4947e+0 (1.06e-1) - | 8.5806e+0 (9.14e-2) - | 8.5807e+0 (3.69e-2) - | 2.0532e+0 (6.00e-1) |

| SMMOP6 | 6 | 500 | 5.2012e+0 (7.38e-1) - | 5.4838e+0 (1.19e-1) - | 1.7121e+1 (2.77e-1) - | 8.5726e+0 (2.40e-2) - | 8.6528e+0 (1.15e-1) - | 8.6243e+0 (4.08e-2) - | 3.2368e+0 (4.60e-1) |

| SMMOP7 | 6 | 500 | 6.0529e+0 (6.76e-1) - | 4.9813e+0 (3.42e-1) - | 1.6272e+1 (1.93e-1) - | 9.5697e+0 (7.23e-2) - | 8.7187e+0 (1.19e-1) - | 8.6404e+0 (4.10e-2) - | 3.7809e+0 (7.22e-1) |

| SMMOP8 | 6 | 500 | 6.0207e+0 (5.43e-1) - | 5.7797e+0 (2.65e-1) - | 1.6232e+1 (2.32e-1) - | 9.5594e+0 (7.10e-2) - | 8.6553e+0 (1.04e-1) - | 8.6224e+0 (4.40e-2) - | 4.0224e+0 (6.43e-1) |

| SMMOP1 | 8 | 500 | 5.4735e+0 (7.95e-1) - | 5.5678e+0 (2.17e-1) - | 1.6393e+1 (2.29e-1) - | 8.8323e+0 (7.92e-2) - | 8.9960e+0 (9.01e-2) - | 8.9789e+0 (1.56e-2) - | 3.3729e+0 (5.64e-1) |

| SMMOP2 | 8 | 500 | 5.5305e+0 (5.84e-1) - | 5.1211e+0 (7.04e-1) - | 1.8036e+1 (4.42e-1) - | 1.8420e+1 (7.10e-1) - | 8.9588e+0 (1.19e-1) - | 8.9790e+0 (1.25e-2) - | 3.2861e+0 (5.67e-1) |

| SMMOP3 | 8 | 500 | 6.7319e+0 (6.50e-1) - | 5.3189e+0 (3.18e-1) - | 1.7989e+1 (5.32e-1) - | 1.9095e+1 (7.18e-1) - | 9.0542e+0 (8.19e-2) - | 9.0075e+0 (1.95e-2) - | 4.5030e+0 (6.43e-1) |

| SMMOP4 | 8 | 500 | 4.9510e+0 (4.88e-1) - | 5.6880e+0 (1.79e-1) - | 1.7129e+1 (2.38e-1) - | 8.8994e+0 (6.70e-2) - | 8.9575e+0 (9.63e-2) - | 8.9729e+0 (1.16e-2) - | 3.3106e+0 (6.69e-1) |

| SMMOP5 | 8 | 500 | 2.5692e+0 (7.54e-1) - | 5.6244e+0 (1.90e-1) - | 1.6625e+1 (2.76e-1) - | 8.8543e+0 (8.34e-2) - | 7.8160e+0 (7.27e-2) - | 7.7468e+0 (3.68e-2) - | 1.2242e+0 (4.30e-1) |

| SMMOP6 | 8 | 500 | 6.1969e+0 (8.43e-1) - | 5.8609e+0 (1.66e-1) - | 1.7184e+1 (2.63e-1) - | 8.9711e+0 (1.85e-2) - | 9.0124e+0 (1.23e-1) - | 8.9974e+0 (1.92e-2) - | 4.2785e+0 (5.68e-1) |

| SMMOP7 | 8 | 500 | 7.0664e+0 (6.89e-1) - | 5.2678e+0 (2.51e-1) - | 1.6391e+1 (1.62e-1) - | 9.8715e+0 (7.42e-2) - | 9.0454e+0 (1.05e-1) - | 9.0116e+0 (2.08e-2) - | 5.0512e+0 (4.30e-1) |

| SMMOP8 | 8 | 500 | 6.6190e+0 (7.00e-1) - | 6.0049e+0 (1.41e-1) - | 1.6305e+1 (1.76e-1) - | 9.8741e+0 (6.47e-2) - | 8.9144e+0 (1.18e-1) - | 9.0075e+0 (1.92e-2) - | 4.7933e+0 (4.88e-1) |

| +/−/= | | | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | 0/24/0 | |

| Problem | D | MPMMEA | HHCMMEA | MO_Ring_PSO_SCD | DNNSGAII | SparseEA | MSKEA | MASR-MMEA |

|---|---|---|---|---|---|---|---|---|

| SMMOP1 | 100 | 2.6535e-3 (4.27e-4) + | 1.7463e-3 (3.47e-4) + | 7.4965e-1 (1.08e-2) - | 2.6018e-3 (8.34e-5) + | 1.1972e-3 (9.51e-5) + | 9.2136e-4 (2.17e-6) + | 5.3183e-3 (1.07e-3) |

| SMMOP2 | 100 | 2.9874e-3 (3.67e-4) + | 1.6070e-3 (2.37e-4) + | 2.0301e+0 (2.29e-2) - | 1.0816e-1 (3.13e-2) - | 1.4263e-3 (2.11e-4) + | 9.2144e-4 (1.87e-6) + | 5.1625e-3 (1.29e-3) |

| SMMOP3 | 100 | 4.4374e-3 (1.17e-3) + | 1.7955e-3 (3.54e-4) + | 2.1004e+0 (2.37e-2) - | 1.1100e-1 (3.72e-2) - | 1.7380e-3 (8.83e-4) + | 9.2150e-4 (2.63e-6) + | 1.1246e-2 (5.05e-3) |

| SMMOP4 | 100 | 2.9300e-3 (4.12e-4) + | 2.0064e-3 (7.11e-4) + | 3.6976e-1 (3.89e-3) - | 3.0921e-3 (1.30e-4) + | 1.2029e-3 (3.63e-5) + | 1.0285e-3 (9.18e-6) + | 5.2911e-3 (4.00e-4) |

| SMMOP5 | 100 | 2.8852e-3 (3.97e-4) + | 1.9255e-3 (5.36e-4) + | 3.6174e-1 (5.48e-3) - | 6.5908e-3 (6.86e-4) - | 1.2203e-3 (3.17e-5) + | 1.0269e-3 (8.44e-6) + | 5.2092e-3 (4.08e-4) |

| SMMOP6 | 100 | 3.4504e-3 (6.45e-4) + | 2.8252e-3 (1.43e-3) + | 4.0424e-1 (5.47e-3) - | 2.9585e-3 (1.33e-4) + | 1.2136e-3 (4.85e-5) + | 1.0264e-3 (9.06e-6) + | 8.6154e-3 (1.89e-3) |

| SMMOP7 | 100 | 3.7606e-3 (7.92e-4) + | 2.1633e-3 (4.27e-4) + | 9.3392e-1 (1.51e-2) - | 8.6335e-3 (1.42e-3) + | 1.6861e-3 (1.90e-4) + | 1.0195e-3 (4.10e-6) + | 1.4345e-2 (6.26e-3) |

| SMMOP8 | 100 | 4.6457e-3 (1.95e-3) + | 2.9630e-3 (9.50e-4) + | 8.7713e-1 (1.10e-2) - | 8.6800e-3 (1.27e-3) + | 2.2438e-3 (1.06e-3) + | 1.0193e-3 (3.85e-6) + | 1.5657e-2 (8.50e-3) |

| SMMOP1 | 200 | 3.9339e-3 (4.77e-4) + | 3.3987e-3 (9.29e-4) + | 8.6003e-1 (9.59e-3) - | 2.7808e-3 (7.43e-5) + | 1.5171e-3 (6.07e-4) + | 9.2137e-4 (2.53e-6) + | 6.2139e-3 (8.93e-4) |

| SMMOP2 | 200 | 4.4837e-3 (6.34e-4) + | 2.6846e-3 (7.31e-4) + | 2.0948e+0 (1.34e-2) - | 2.0950e-1 (3.28e-2) - | 1.7347e-3 (3.43e-4) + | 9.2096e-4 (2.89e-6) + | 6.0765e-3 (1.19e-3) |

| SMMOP3 | 200 | 9.0102e-3 (2.42e-3) + | 4.2077e-3 (2.15e-3) + | 2.1756e+0 (1.14e-2) - | 2.3633e-1 (3.70e-2) - | 5.6382e-3 (2.31e-3) + | 1.4051e-3 (1.84e-3) + | 1.1487e-2 (5.33e-3) |

| SMMOP4 | 200 | 3.7343e-3 (4.34e-4) + | 2.9477e-3 (1.07e-3) + | 3.9627e-1 (3.12e-3) - | 3.2798e-3 (1.21e-4) + | 1.3327e-3 (3.93e-5) + | 1.0257e-3 (9.23e-6) + | 6.1050e-3 (2.89e-4) |

| SMMOP5 | 200 | 3.6821e-3 (4.89e-4) + | 3.3440e-3 (1.23e-3) + | 4.2140e-1 (6.19e-3) - | 8.7662e-3 (6.38e-4) - | 1.3675e-3 (4.87e-5) + | 1.0258e-3 (7.43e-6) + | 6.1994e-3 (3.60e-4) |

| SMMOP6 | 200 | 5.2639e-3 (1.36e-3) + | 7.4593e-3 (2.86e-3) = | 4.4210e-1 (5.10e-3) - | 3.1730e-3 (1.03e-4) + | 1.9956e-3 (1.21e-3) + | 1.0281e-3 (1.02e-5) + | 8.5517e-3 (2.89e-3) |

| SMMOP7 | 200 | 6.9965e-3 (3.75e-3) + | 7.2076e-3 (4.09e-3) + | 1.1013e+0 (1.48e-2) - | 1.7260e-2 (1.14e-3) = | 2.1939e-3 (8.24e-4) + | 2.0686e-3 (3.20e-3) + | 1.3756e-2 (6.81e-3) |

| SMMOP8 | 200 | 1.0094e-2 (4.66e-3) + | 1.0388e-2 (3.85e-3) + | 1.0130e+0 (1.24e-2) - | 1.8020e-2 (1.08e-3) = | 3.6776e-3 (2.01e-3) + | 1.1782e-3 (8.77e-4) + | 1.8444e-2 (9.38e-3) |

| SMMOP1 | 500 | 1.0399e-2 (2.40e-3) - | 2.7242e-2 (8.19e-3) - | 1.0258e+0 (1.15e-2) - | 4.2043e-3 (3.89e-4) + | 4.6775e-3 (1.89e-3) + | 1.6543e-3 (1.09e-3) + | 7.4726e-3 (4.14e-4) |

| SMMOP2 | 500 | 9.4700e-3 (2.20e-3) - | 9.6629e-3 (5.28e-3) = | 2.1125e+0 (8.28e-3) - | 3.6411e-1 (2.08e-2) - | 4.9703e-3 (1.54e-3) + | 1.1748e-3 (4.28e-4) + | 7.1695e-3 (7.30e-4) |

| SMMOP3 | 500 | 2.6246e-2 (6.65e-3) - | 4.2189e-2 (1.54e-2) - | 2.2000e+0 (1.08e-2) - | 3.9087e-1 (2.31e-2) - | 1.8315e-2 (4.15e-3) + | 2.8355e-3 (1.89e-3) + | 2.3844e-2 (4.07e-3) |

| SMMOP4 | 500 | 7.8683e-3 (8.12e-4) - | 1.5551e-2 (2.53e-3) - | 4.2728e-1 (2.31e-3) - | 5.0755e-3 (5.40e-4) + | 2.7394e-3 (5.78e-4) + | 1.2338e-3 (3.42e-4) + | 7.2379e-3 (8.79e-4) |

| SMMOP5 | 500 | 8.3333e-3 (1.11e-3) - | 1.6771e-2 (3.62e-3) - | 5.1897e-1 (7.03e-3) - | 1.7423e-2 (7.96e-4) - | 2.5422e-3 (6.70e-4) + | 1.2605e-3 (3.81e-4) + | 7.1165e-3 (7.16e-4) |

| SMMOP6 | 500 | 1.4926e-2 (3.54e-3) = | 3.8779e-2 (7.77e-3) - | 4.7865e-1 (3.06e-3) - | 5.7934e-3 (7.92e-4) + | 5.7592e-3 (1.74e-3) + | 2.8044e-3 (1.63e-3) + | 1.3862e-2 (3.74e-3) |

| SMMOP7 | 500 | 3.3410e-2 (7.67e-3) = | 6.9908e-2 (1.84e-2) - | 1.3719e+0 (1.80e-2) - | 3.4293e-2 (1.64e-3) - | 1.7044e-2 (6.47e-3) + | 6.9944e-3 (5.85e-3) + | 2.9276e-2 (9.06e-3) |

| SMMOP8 | 500 | 3.3146e-2 (5.21e-3) = | 5.7682e-2 (1.53e-2) - | 1.2550e+0 (1.26e-2) - | 3.5284e-2 (2.23e-3) = | 1.5129e-2 (3.58e-3) + | 3.5752e-3 (2.13e-3) + | 3.3033e-2 (9.66e-3) |

| +/−/= | | 16/5/3 | 15/7/2 | 0/24/0 | 11/10/3 | 24/0/0 | 24/0/0 | |

| Problem | D | MASR-MMEA-1 | MASR-MMEA-2 | MASR-MMEA-3 | MASR-MMEA |

|---|---|---|---|---|---|

| SMMOP1 | 100 | 2.6484e-1 (2.59e-2) - | 7.0521e-1 (3.76e-1) - | 8.1947e-2 (1.57e-2) - | 2.4986e-2 (1.82e-2) |

| SMMOP2 | 100 | 3.2261e-1 (2.91e-2) - | 6.9251e-1 (4.35e-1) - | 5.8416e-2 (2.06e-2) - | 2.5019e-2 (2.35e-2) |

| SMMOP3 | 100 | 7.8345e-1 (9.74e-2) - | 7.7917e-1 (3.90e-1) - | 1.0581e-1 (1.00e-1) = | 2.0734e-1 (3.30e-1) |

| SMMOP4 | 100 | 3.2745e-1 (2.71e-2) - | 7.2141e-1 (3.34e-1) - | 1.2868e-1 (2.58e-2) - | 7.3115e-2 (5.94e-2) |

| SMMOP5 | 100 | 3.4247e-1 (5.49e-2) - | 6.8853e-1 (2.74e-1) - | 1.3155e-1 (1.92e-2) - | 6.1045e-2 (3.18e-2) |

| SMMOP6 | 100 | 6.7943e-1 (9.72e-2) - | 7.9691e-1 (3.35e-1) - | 1.5392e-1 (9.35e-2) = | 1.3192e-1 (1.30e-1) |

| SMMOP7 | 100 | 6.5162e-1 (1.81e-1) - | 1.0972e+0 (5.86e-1) - | 1.7238e-1 (1.24e-1) = | 1.8609e-1 (2.28e-1) |

| SMMOP8 | 100 | 8.5197e-1 (4.49e-1) - | 9.9827e-1 (4.24e-1) - | 4.4734e-1 (4.17e-1) = | 3.7461e-1 (2.99e-1) |

| SMMOP1 | 200 | 5.4644e-1 (4.85e-2) - | 1.8383e+0 (3.85e-1) - | 2.3445e-1 (5.23e-2) - | 7.6110e-2 (7.70e-2) |

| SMMOP2 | 200 | 5.7056e-1 (8.00e-2) - | 1.8008e+0 (4.61e-1) - | 1.8459e-1 (3.58e-2) - | 5.0990e-2 (5.03e-2) |

| SMMOP3 | 200 | 1.4418e+0 (7.54e-2) - | 1.9236e+0 (5.31e-1) - | 4.8528e-1 (2.17e-1) = | 6.5696e-1 (5.40e-1) |

| SMMOP4 | 200 | 6.8855e-1 (5.23e-2) - | 1.7496e+0 (3.56e-1) - | 2.8703e-1 (7.15e-2) - | 1.9530e-1 (1.53e-1) |

| SMMOP5 | 200 | 7.0301e-1 (5.95e-2) - | 1.6722e+0 (3.28e-1) - | 3.0333e-1 (7.99e-2) - | 2.1807e-1 (1.72e-1) |

| SMMOP6 | 200 | 1.4372e+0 (6.44e-2) - | 2.0762e+0 (4.79e-1) - | 5.1656e-1 (1.85e-1) = | 5.4537e-1 (3.44e-1) |

| SMMOP7 | 200 | 1.3769e+0 (2.00e-1) - | 2.0887e+0 (5.38e-1) - | 4.8382e-1 (3.04e-1) + | 5.9888e-1 (3.27e-1) |

| SMMOP8 | 200 | 1.4868e+0 (2.08e-1) - | 2.2954e+0 (4.27e-1) - | 6.7907e-1 (3.80e-1) + | 1.0496e+0 (4.79e-1) |

| SMMOP1 | 500 | 1.4614e+0 (1.07e-1) - | 4.0057e+0 (5.51e-1) - | 7.9550e-1 (1.89e-1) = | 6.9094e-1 (2.22e-1) |

| SMMOP2 | 500 | 1.3062e+0 (8.22e-2) - | 4.1894e+0 (5.39e-1) - | 8.3999e-1 (3.14e-1) = | 7.6096e-1 (4.71e-1) |

| SMMOP3 | 500 | 2.6822e+0 (6.43e-2) - | 4.3460e+0 (6.41e-1) - | 2.0630e+0 (4.61e-1) = | 2.0137e+0 (2.94e-1) |

| SMMOP4 | 500 | 1.7673e+0 (1.32e-1) - | 4.0802e+0 (4.07e-1) - | 1.1278e+0 (3.86e-1) = | 1.1266e+0 (5.99e-1) |

| SMMOP5 | 500 | 1.9304e+0 (2.03e-1) - | 3.9643e+0 (4.56e-1) - | 1.0523e+0 (3.14e-1) = | 1.1532e+0 (5.25e-1) |

| SMMOP6 | 500 | 3.0225e+0 (2.48e-1) - | 4.2884e+0 (4.92e-1) - | 2.1156e+0 (2.75e-1) = | 2.2232e+0 (4.33e-1) |

| SMMOP7 | 500 | 2.7967e+0 (2.69e-1) - | 4.4019e+0 (5.62e-1) - | 2.5155e+0 (9.58e-1) = | 2.1322e+0 (5.08e-1) |

| SMMOP8 | 500 | 3.0905e+0 (1.42e-1) - | 4.9335e+0 (4.10e-1) - | 2.6766e+0 (8.57e-1) = | 2.7711e+0 (5.83e-1) |

| +/−/= | | 0/24/0 | 0/24/0 | 2/8/14 | |

| Critical node detection problem | Type of variables | No. of variables | Dataset | No. of nodes | No. of edges |
|---|---|---|---|---|---|
| CN1 | Binary | 102 | Hollywood Film Music 4 | 102 | 192 |
| CN2 | Binary | 234 | Graph Drawing Contests Data (A99) 4 | 234 | 154 |
| CN3 | Binary | 311 | Graph Drawing Contests Data (A01) 4 | 311 | 640 |
| CN4 | Binary | 452 | Graph Drawing Contests Data (C97) 4 | 452 | 460 |

| Instance selection problem | Type of variables | No. of variables | Dataset | No. of samples | No. of features | No. of classes |
|---|---|---|---|---|---|---|
| IS1 | Binary | 862 | Fourclass 1 | 862 | 3 | |
| IS2 | Binary | 4177 | Abalone 2 | 4177 | 6 | |
| IS3 | Binary | 11,055 | phishing 1 | 11,055 | 9 | |

| Community detection problem | Type of variables | No. of variables | Dataset | No. of nodes | No. of edges |
|---|---|---|---|---|---|
| CD1 | Binary | 105 | Polbook 3 | 105 | 441 |
| CD2 | Binary | 115 | Football 3 | 115 | 614 |
| CD3 | Binary | 1133 | Email 3 | 1133 | 5451 |

| Problem | D | HHCMMEA | MPMMEA | MASR-MMEA |

|---|---|---|---|---|

| Sparse_CN1 | 102 | 8.9540e-1 (1.28e-2) - | 8.9736e-1 (1.52e-2) - | 9.1859e-1 (2.78e-3) |

| Sparse_CN2 | 234 | 9.5357e-1 (8.03e-3) - | 9.0837e-1 (1.23e-2) - | 9.5215e-1 (4.58e-3) |

| Sparse_CN3 | 311 | 8.5266e-1 (2.02e-1) - | 8.7194e-1 (2.08e-2) - | 8.8248e-1 (1.32e-2) |

| Sparse_CN4 | 452 | 9.9382e-1 (3.69e-3) - | 9.6860e-1 (1.58e-2) - | 9.8596e-1 (1.47e-4) |

| Sparse_CD1 | 105 | 7.7298e-1 (8.80e-3) = | 7.6659e-1 (9.40e-3) - | 7.7362e-1 (1.17e-2) |

| Sparse_CD2 | 115 | 7.6239e-1 (2.29e-3) - | 7.5634e-1 (1.11e-1) - | 7.7053e-1 (2.22e-3) |

| Sparse_CD3 | 1133 | 6.7859e-1 (1.03e-2) = | 6.5817e-1 (1.49e-2) - | 6.8078e-1 (2.07e-2) |

| Sparse_IS1 | 862 | 8.5012e-1 (1.32e-2) - | 7.3625e-1 (2.95e-1) = | 8.6012e-1 (8.63e-3) |

| Sparse_IS2 | 4177 | 7.0843e-1 (8.36e-3) + | 6.9266e-1 (1.14e-1) - | 7.0238e-1 (5.23e-3) |

| Sparse_IS3 | 11,055 | 9.4989e-1 (1.83e-3) - | 7.4097e-1 (1.67e-3) - | 9.5075e-1 (2.74e-3) |

| +/−/= | | 1/7/2 | 0/9/1 | |
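The +/−/= row is a count of the significance marks in each competitor column. As a minimal sketch of that arithmetic, the snippet below tallies the marks copied from the HHCMMEA and MPMMEA columns of the table above; the names `marks` and `tally` are illustrative assumptions and are not taken from the paper.

```python
from collections import Counter

# Significance marks copied from the HHCMMEA and MPMMEA columns of the table
# above, in row order (Sparse_CN1 ... Sparse_CN4, Sparse_CD1 ... Sparse_CD3,
# Sparse_IS1 ... Sparse_IS3). The dictionary layout is an illustrative choice.
marks = {
    "HHCMMEA": ["-", "-", "-", "-", "=", "-", "=", "-", "+", "-"],
    "MPMMEA":  ["-", "-", "-", "-", "-", "-", "-", "=", "-", "-"],
}


def tally(column_marks):
    """Return the '+/-/=' summary string for one column of marks."""
    counts = Counter(column_marks)
    # Counter returns 0 for marks that never occur in the column.
    return f"{counts['+']}/{counts['-']}/{counts['=']}"


summary = {name: tally(col) for name, col in marks.items()}
print(summary)
```

Running the same tally over any other results table is a quick way to check that its summary row agrees with the per-row marks.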

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Chen, B.; Sun, Y.; Hua, B. Large-Scale Sparse Multimodal Multiobjective Optimization via Multi-Stage Search and RL-Assisted Environmental Selection. Electronics 2026, 15, 616. https://doi.org/10.3390/electronics15030616
