Enhancing Adversarial Policy Learning via Value-Based Reward Shaping

Abstract

1. Introduction

- A Value-Based Reward Shaping (VBRS) framework is proposed to mitigate reward misalignment. By incorporating value-function guidance into reward shaping, exploration is driven by long-term strategic value rather than biased short-term heuristics.

- A two-dimensional adversarial simulation environment is constructed. Across 10 training runs, VBRS outperforms the PPO, PBRS, and RND baselines in final win rate; the best dynamic-coefficient variant reaches 88.62%, compared with 71.41% for PPO without shaping.

2. Problem Formulation

2.1. Markov Decision Process

- is the state space, encompassing the status of both agents and relevant environmental information.

- is the action space of agent A.

- is the state transition probability induced by the environment dynamics and the opponent’s policy, calculated as

- is the immediate reward function for agent A.

- is the discount factor.
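As a minimal sketch of how a transition probability can be "induced by the environment dynamics and the opponent's policy", the snippet below marginalizes a joint transition kernel over the opponent's action. It assumes a finite Markov game; the names `joint_T`, `pi_opp`, and `induced_transition`, and all numbers, are illustrative and not taken from the paper, whose exact equation may differ.

```python
from collections import defaultdict

def induced_transition(joint_T, pi_opp, s, a):
    """Return P(s' | s, a) = sum_b pi_opp(b | s) * T(s' | s, a, b).

    joint_T maps (state, own_action, opponent_action) -> {next_state: prob}.
    pi_opp  maps state -> {opponent_action: prob} (a fixed opponent policy).
    """
    probs = defaultdict(float)
    for b, p_b in pi_opp[s].items():
        for s_next, p_next in joint_T[(s, a, b)].items():
            probs[s_next] += p_b * p_next
    return dict(probs)

# Toy example with hypothetical numbers.
joint_T = {
    ("s0", "fire", "dodge"): {"s0": 0.9, "win": 0.1},
    ("s0", "fire", "stay"): {"s0": 0.2, "win": 0.8},
}
pi_opp = {"s0": {"dodge": 0.5, "stay": 0.5}}

P = induced_transition(joint_T, pi_opp, "s0", "fire")
print(P)
```

Once the opponent's policy is fixed, agent A faces an ordinary single-agent MDP whose dynamics already contain the opponent's behavior.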

2.2. Policy Gradient Framework and Objective Analysis

3. Methodology

3.1. Value-Based Reward Shaping Objective

3.2. Dual-Critic Estimation and Adaptive Shaping Coefficient

3.3. Actor Optimization with PPO

Algorithm 1. PPO + VBRS: Proximal Policy Optimization with Value-Based Reward Shaping

4. Experiment

4.1. Environment Setting

4.2. Reward Function Design

4.3. Opponent Policy Training

- Stage 1: Agent C remains stationary, allowing agent B to learn basic aiming.

- Stage 2: Agent C moves in straight lines with reflection, providing moving targets.

- Stage 3 (Self-Play): Agent C adopts a frozen copy of agent B’s current policy. This process is repeated for 15 iterations.
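The self-play stage can be sketched as a loop in which agent C receives a frozen snapshot of agent B before each round of training. This is only an illustration under stated assumptions: `run_self_play`, `train_against`, and the dictionary "policy" are hypothetical stand-ins, and the sketch reads "15 iterations" as applying to Stage 3 only.

```python
import copy

def run_self_play(policy_b, train_against, iterations=15):
    """Stage 3 sketch: agent C plays a frozen snapshot of agent B each iteration."""
    snapshots = []
    for _ in range(iterations):
        frozen_c = copy.deepcopy(policy_b)  # C's policy stays fixed for this iteration
        snapshots.append(frozen_c)
        train_against(policy_b, frozen_c)   # updates policy_b in place
    return policy_b, snapshots

# Toy stand-in for training: the "policy" is a dict holding a single number.
def toy_train(policy_b, frozen_c):
    policy_b["skill"] = frozen_c["skill"] + 1

final_b, snaps = run_self_play({"skill": 0}, toy_train)
print(final_b["skill"], [s["skill"] for s in snaps])
```

Deep-copying before each iteration keeps the opponent stationary while agent B is updated, so each round remains a single-agent learning problem.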

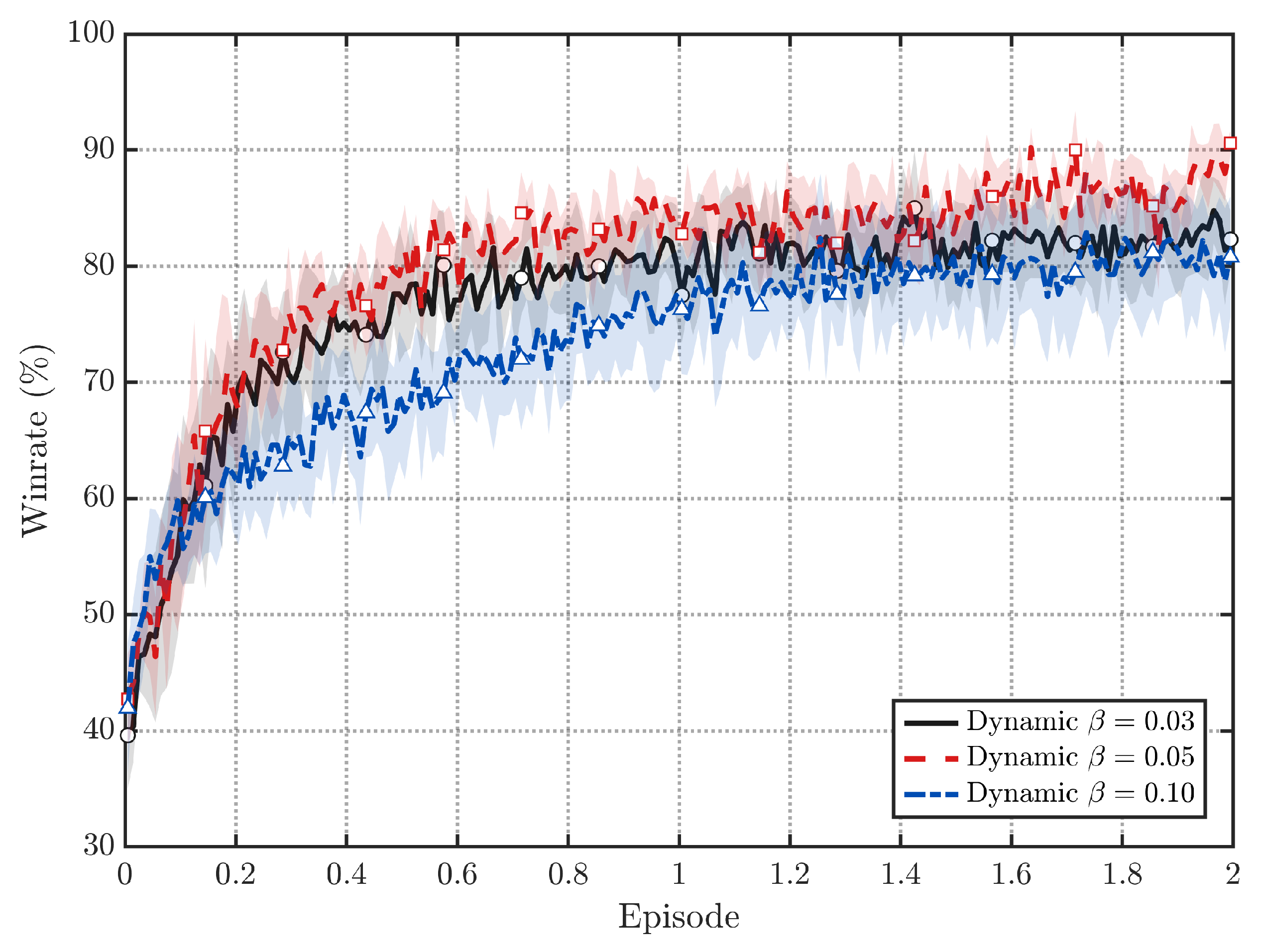

4.4. Experimental Results

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Description | Symbol | Value |
|---|---|---|
| General Training Parameters | | |
| Discount factor | | |
| GAE parameter | | |
| PPO clipping parameter | | |
| Entropy regularization coefficient | | |
| Trajectory buffer size (steps) | N | 2048 |
| Minibatch size | B | 128 |
| PPO epochs per update | K | 6 |
| Optimizer | – | Adam |
| Policy/Value learning rates | | |
| State/action dimensions | | |
| Total training episodes (per run) | – | 20,000 |
| Number of training runs (seeds) | – | 10 |
| Maximum episode length | | 2000 |
| Evaluation Parameters | | |
| Console print interval (episodes) | | 100 |
| Win-rate record interval (episodes) | | 50 |
| Win-rate window size (episodes) | | 100 |
| Avg. discounted reward record interval (episodes) | | 50 |
| Policy Network Parameters | | |
| Policy distribution | – | Gaussian (Equation (38)) |
| Log-std clamp range | – | |
| Hidden layers | – | (MLP) |
| Activation | – | ReLU |
| VBRS-Specific Parameters | | |
| Initial shaping coefficient | | 0 |
| Minimum shaping coefficient | | |
| Maximum shaping coefficient | | |
| EMA update rate for TD loss | | |
| Smoothing rate toward target | | |
| TD-loss normalization scale | | |
| Max per-update change of | | |
| Environment Parameters | | |
| Simulation time step | | s |
| Weapon maximum range | | 5 m |
| Maximum attack angle (ego/opponent) | | rad |
| Reward coefficients | | |
| Terminal rewards (Win/Lose/Draw) | R | |
| Safety radius | | 1 m |
| Distance normalization factor | | m |
| Maximum velocity (per-axis) | | 3 m/s |
| Maximum acceleration (per-axis) | | 1 m/s² |
| Collision velocity damping factor | | |
| Kinetic-energy loss ratio | | |
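To make the training-schedule entries concrete, the following sketch derives a few quantities from the values that survive in the table (N = 2048, B = 128, K = 6, 20,000 episodes per run, win rate recorded every 50 episodes). It assumes the common PPO convention that each epoch sweeps the buffer once in non-overlapping minibatches, which the table itself does not state; the class and field names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PPOSchedule:
    buffer_steps: int = 2048           # N, trajectory buffer size
    minibatch_size: int = 128          # B
    epochs_per_update: int = 6         # K
    episodes_per_run: int = 20_000
    win_rate_record_interval: int = 50

    @property
    def minibatches_per_epoch(self) -> int:
        # Assumption: one full pass over the buffer per epoch, no overlap.
        return self.buffer_steps // self.minibatch_size

    @property
    def grad_steps_per_update(self) -> int:
        return self.minibatches_per_epoch * self.epochs_per_update

    @property
    def win_rate_points_per_run(self) -> int:
        return self.episodes_per_run // self.win_rate_record_interval

cfg = PPOSchedule()
print(cfg.minibatches_per_epoch)    # 16
print(cfg.grad_steps_per_update)    # 96
print(cfg.win_rate_points_per_run)  # 400
```

Under that assumption, every policy update performs 96 gradient steps on 2048 collected transitions, and each learning curve contains 400 recorded win-rate points.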

| Method | Final Win Rate (95% CI) | SD |
|---|---|---|
| Without VBRS (PPO) | 71.41% [67.88, 74.94]% | 4.93 |
| PBRS | 79.08% [76.59, 81.57]% | 3.48 |
| RND | 75.66% [72.46, 78.86]% | 4.47 |
| VBRS (fixed ) | 86.06% [83.29, 88.83]% | 3.43 |
| VBRS (dynamic ) | 83.22% [80.60, 85.84]% | 3.66 |
| VBRS (dynamic ) | 88.62% [86.62, 90.62]% | 3.58 |
| VBRS (dynamic ) | 80.17% [75.75, 84.59]% | 6.18 |
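The construction of the confidence intervals is not stated in this excerpt. As a hedged illustration, a two-sided Student-t interval over the 10 seeds (9 degrees of freedom) reproduces the PPO, PBRS, and RND rows from their means and standard deviations. The function name and the hard-coded critical value are choices made for this sketch.

```python
import math

T_CRIT_DF9 = 2.262  # two-sided 95% Student-t critical value, 9 degrees of freedom

def t_interval(mean, sd, n=10, t_crit=T_CRIT_DF9):
    """Return (lower, upper) of mean +/- t_crit * sd / sqrt(n)."""
    half_width = t_crit * sd / math.sqrt(n)
    return mean - half_width, mean + half_width

rows = {"PPO": (71.41, 4.93), "PBRS": (79.08, 3.48), "RND": (75.66, 4.47)}
intervals = {name: t_interval(m, sd) for name, (m, sd) in rows.items()}

for name, (lo, hi) in intervals.items():
    print(f"{name}: [{lo:.2f}, {hi:.2f}]")
# PPO: [67.88, 74.94]
# PBRS: [76.59, 81.57]
# RND: [72.46, 78.86]
```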

| Method | Test | p (vs. PPO) | Effect | Effect Type |
|---|---|---|---|---|
| VBRS () | Wilcoxon | 0.00195 ** | 1.858 | Cohen’s d |
| VBRS (Fixed ) | Wilcoxon | 0.00195 ** | 2.374 | Cohen’s d |
| VBRS () | Wilcoxon | 0.00195 ** | 3.209 | Cohen’s d |
| PBRS | Wilcoxon | 0.00586 ** | 1.427 | Cohen’s d |
| VBRS () | Wilcoxon | 0.0645 | 0.803 | Cohen’s d |
| RND | Wilcoxon | 0.0840 | 0.663 | Cohen’s d |
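The reported p-values are all multiples of 1/1024, which is what an exact two-sided Wilcoxon signed-rank test yields for 10 paired differences with no ties or zeros. The sketch below enumerates that null distribution. The pairing of runs by seed is an assumption here, since the excerpt does not describe it, and the function names are illustrative.

```python
def signed_rank_null_counts(n):
    """counts[w] = number of sign assignments whose positive-rank sum equals w."""
    max_w = n * (n + 1) // 2
    counts = [0] * (max_w + 1)
    counts[0] = 1
    for rank in range(1, n + 1):
        # Iterate downward so each rank is used at most once (subset-sum counting).
        for w in range(max_w, rank - 1, -1):
            counts[w] += counts[w - rank]
    return counts

def exact_two_sided_p(w_min, n=10):
    """Exact two-sided p-value, where w_min is the smaller of the two rank sums."""
    counts = signed_rank_null_counts(n)
    tail = sum(counts[: w_min + 1])
    return min(1.0, 2 * tail / 2 ** n)

for w in (0, 2, 9, 10):
    print(w, round(exact_two_sided_p(w), 5))
# 0 0.00195
# 2 0.00586
# 9 0.06445
# 10 0.08398
```

With 10 pairs, 0.00195 (2/1024) is the smallest attainable two-sided p-value; it arises when all 10 differences share the same sign.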


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Hou, B.; Pan, G.; Chen, Y. Enhancing Adversarial Policy Learning via Value-Based Reward Shaping. Electronics 2026, 15, 463. https://doi.org/10.3390/electronics15020463
