1. Introduction

Cracks in pavements, bridges and other infrastructure are often the first warning signs of material fatigue and structural weakness. If neglected, small fissures can quickly grow into major defects, reduce service life and drive up repair costs [1]. In real-world environments, however, cracks appear under diverse lighting conditions, on a range of surface textures, and in a variety of shapes, making reliable automated detection challenging [2]. Traditionally, inspection has relied on manual visual assessment, which is slow, costly, and subjective. Moreover, many regions, such as bridge undersides or high concrete facades, are difficult to access, limiting the reliability of human inspection [3].

Early attempts at automating crack detection employed traditional image-processing techniques, including edge detection, thresholding, and morphological filtering. Although effective for simple cases, these methods required manual tuning and were highly sensitive to shadows, stains and background textures [4]. To improve robustness, traditional machine-learning approaches were introduced. Methods based on Support Vector Machines (SVMs) [5], Random Forests [6], and handcrafted feature descriptors such as Gabor filters or Local Binary Patterns provided more stability than pure image processing. However, they still depended on manually engineered features and struggled when confronted with the wide variation present in real infrastructure images [7].

In recent years, deep learning has become the dominant approach for crack analysis, enabling end-to-end feature learning directly from data. Convolutional neural networks (CNNs) have achieved notable progress in pixel-level localization and background separation [4,8,9]. However, CNNs have limited receptive fields, which restrict their ability to capture long, discontinuous cracks or contextual dependencies [10]. Transformer-based architectures address this limitation through self-attention, enabling the modeling of global context, but they typically require large, annotated datasets and high computational resources [11]. Since most crack datasets are small and contain diverse surface patterns, transformers often struggle to generalize across different environments.

To enhance generalization, several hybrid CNN–Transformer approaches have been proposed. For instance, the Parallel Convolutional and Transformer Crack Network (PCTC-Net) fuses convolutional and transformer encoders to balance local and global representations, outperforming DTrC-Net [12] on DeepCrack, Crack500, and CrackSeg9k [11]. Similarly, MSP U-Net [13] integrates multi-scale attention to improve segmentation in low-resolution images, achieving higher precision and recall across multiple datasets. Despite these advances, no single architecture consistently performs best under all conditions. Crack characteristics vary significantly with lighting, material properties, acquisition devices, and crack types, leading different models to exhibit complementary strengths and weaknesses. Cross-dataset evaluations further confirm that models trained on one dataset often suffer substantial performance degradation when tested on another [14].

Motivated by this observation, this work adopts a different perspective: instead of pursuing a single universally optimal architecture, it explicitly exploits model complementarity through an adaptive Mixture-of-Experts (MoE) framework for crack segmentation.

Mixture-of-Experts (MoE) frameworks provide a principled mechanism for combining multiple specialized networks within a single predictive system. An MoE architecture consists of a set of expert models and a gating network that learns to assign each input to the most suitable experts, after which the selected predictions are combined, typically through weighted averaging. Recent studies have demonstrated the effectiveness of MoE designs in visual tasks [15]. In medical image analysis, SAM-Med3D-MoE [16] integrates multiple task-specific models with a foundation segmentation network. The Mixture-of-Shape-Experts (MoSE) framework [17] introduces sparse expert activation to improve generalization. Recent surveys further confirm the growing relevance of MoE systems in computer vision [18].

In the context of crack segmentation, however, the MoE paradigm remains largely unexplored. Most existing approaches rely on a single model trained on all data, implicitly assuming uniform suitability across diverse crack appearances and environments.

This work introduces a Mixture-of-Experts framework for crack segmentation that adaptively combines four complementary expert models using a lightweight ResNet-18 gating network trained with soft supervision. An out-of-fold validation strategy is employed to obtain unbiased performance estimates for each expert at the sample level. These estimates are normalized to form soft supervision targets that reflect the relative reliability of the experts during training. At inference time, the gating network ranks the experts for each input image, and the predictions of the top two models are selectively fused to produce the final segmentation mask.

The proposed framework is evaluated on two public datasets—Crack500 and the CrackForest Dataset (CFD)—as well as an in-house full-scene dataset (RCFD). Experimental results demonstrate that the Top-K MoE consistently outperforms the strongest individual expert, achieving improvements of up to approximately 2.5 percentage points in mean Intersection-over-Union while capturing most of the potential performance gain indicated by the oracle upper bound.

By selecting experts on a per-image basis, the proposed framework adapts to variations in crack appearance and imaging conditions, providing a flexible alternative to single-network models and fixed ensemble averaging.

The main contributions of this study are summarized as follows:

An adaptive Mixture-of-Experts framework for crack segmentation is proposed, in which multiple complementary expert models are selectively combined through a lightweight gating network based on sample-specific reliability estimation.

An out-of-fold soft supervision strategy together with a Top-K aggregation mechanism is introduced to train and deploy the gating network, enabling stable expert routing and robust prediction fusion without requiring additional annotations or modifications to the expert architectures.

Extensive experiments are conducted on three benchmark datasets, demonstrating consistent improvements over individual experts and recent state-of-the-art methods while maintaining a favorable balance between segmentation accuracy and computational cost.

The rest of this paper is organized as follows: Section 2 reviews related work. Section 3 details the proposed framework, including the expert models, gating network, and training strategy. Section 4 describes the datasets and training setup. Section 5 presents experimental results and analysis. Finally, Section 6 concludes the paper and outlines possible directions for future work.

2. Related Work

Crack detection and segmentation have progressed through several distinct stages, evolving from early handcrafted image-processing techniques to today’s deep learning-driven models. This section provides an overview of this progression, covering traditional image processing and classical machine learning (ML) approaches, followed by CNN-based architectures, transformer and hybrid models, and recent efforts toward Mixture-of-Experts (MoE) frameworks. Beyond summarizing prior work, particular emphasis is placed on analyzing the practical limitations of existing approaches in order to motivate the methodology proposed in this study.

2.1. Traditional Image Processing and Classical Machine Learning

Early crack detection systems were built on hand-crafted image processing techniques such as Canny and Sobel edge detectors [19,20], and global thresholding methods like Otsu segmentation [21]. While these approaches could identify simple crack patterns, they were highly sensitive to lighting variations, shadows, and background textures, limiting their robustness in real-world scenarios.

Classical ML pipelines attempted to improve robustness by pairing handcrafted features with SVMs, boosting, or random forests. Examples include CrackTree [22] and the methods of Oliveira and Correia [5], which relied on manually engineered descriptors to classify crack pixels. Despite modest gains, these techniques remained dependent on predefined features and struggled to generalize across diverse crack appearances and imaging conditions, motivating the shift toward deep-learning-based solutions.

2.2. Deep Learning with Convolutional Neural Networks

With the introduction of U-Net [23], CNNs became the dominant paradigm in crack segmentation. U-Net’s encoder–decoder architecture enabled end-to-end learning and inspired numerous variants tailored to infrastructure inspection tasks.

DeepCrack [4] proposed hierarchical feature learning to improve the detection of thin and discontinuous cracks, while lightweight models such as MobileNetV3 [24] were adapted to reduce computational cost. More recent CNN-based architectures introduced refinement-driven or multi-decoder designs, including LMM [25], Efficient CrackMaster [9], and CrackRefineNet [26], achieving strong segmentation performance.

Despite their effectiveness, CNN-based models are inherently limited by local convolutional receptive fields, which restrict their ability to capture long-range contextual dependencies. As a result, their performance may degrade when dealing with large-scale crack continuity, cluttered backgrounds, or complex surface textures that require broader contextual reasoning.

2.3. Transformer-Based and Hybrid Architectures

Vision Transformers introduced self-attention mechanisms capable of modeling global spatial relationships. Swin Transformer [27] demonstrated that hierarchical attention with shifted windows can achieve competitive segmentation performance, while MobileViT [28] explored integrating transformer blocks into lightweight CNN backbones.

Building on these advances, several transformer-based and hybrid architectures have been proposed for crack segmentation. CrackFormer [29] leveraged multi-scale global attention to enhance crack continuity, whereas EfficientCrackNet [30] and MSDCrack [31] employed dual-encoder designs and multi-stage supervision. Hybrid-Segmentor [32] further combined convolutional and transformer representations to improve fine-grained boundary detection.

Although these models demonstrate promising results, they introduce notable practical challenges. Transformer-based and hybrid architectures are typically more computationally demanding and architecturally complex, which can hinder deployment in resource-constrained or real-time inspection settings. Moreover, transformer components often require large and diverse training datasets to generalize effectively, whereas most crack datasets remain relatively small, imbalanced, and domain-specific. Consequently, these models do not consistently outperform well-designed CNN architectures across different datasets and environmental conditions, and their performance can vary substantially depending on scene characteristics.

2.4. Mixture-of-Experts Frameworks

Mixture-of-Experts (MoE) frameworks combine multiple specialized models through a learnable gating mechanism that selects or weights experts based on the input. MoE strategies have demonstrated strong potential in medical image segmentation, where frameworks such as MoSE [17] and SAM-Med3D-MoE [16] show that integrating task-specific models can significantly improve robustness and generalization.

MoE designs typically employ either hard gating, which assigns each input to a single expert, or soft/probabilistic gating, which distributes weights across multiple experts. Hard gating can be brittle when expert competencies overlap or routing decisions are uncertain, whereas soft gating enables smoother aggregation and more effective use of complementary information.

In the context of crack segmentation, however, MoE frameworks remain largely unexplored. Most existing approaches rely on a single architecture trained on all available data, implicitly assuming uniform suitability across diverse crack appearances, surface materials, and imaging conditions. This assumption is frequently violated in practice, as different models tend to specialize in different crack patterns and environments. Furthermore, MoE variants explored in related domains often rely on hard or one-hot routing strategies that discard useful complementary information from non-selected experts.

These observations reveal a clear gap in the literature: while crack segmentation models continue to increase in architectural complexity, limited attention has been paid to adaptive model selection mechanisms that explicitly exploit expert complementarity. This gap motivates the present work, which introduces an adaptive Top-K Mixture-of-Experts framework with soft-label supervision and out-of-fold validation to dynamically combine the strengths of multiple segmentation experts, rather than relying on a single fixed architecture.

3. Methodology

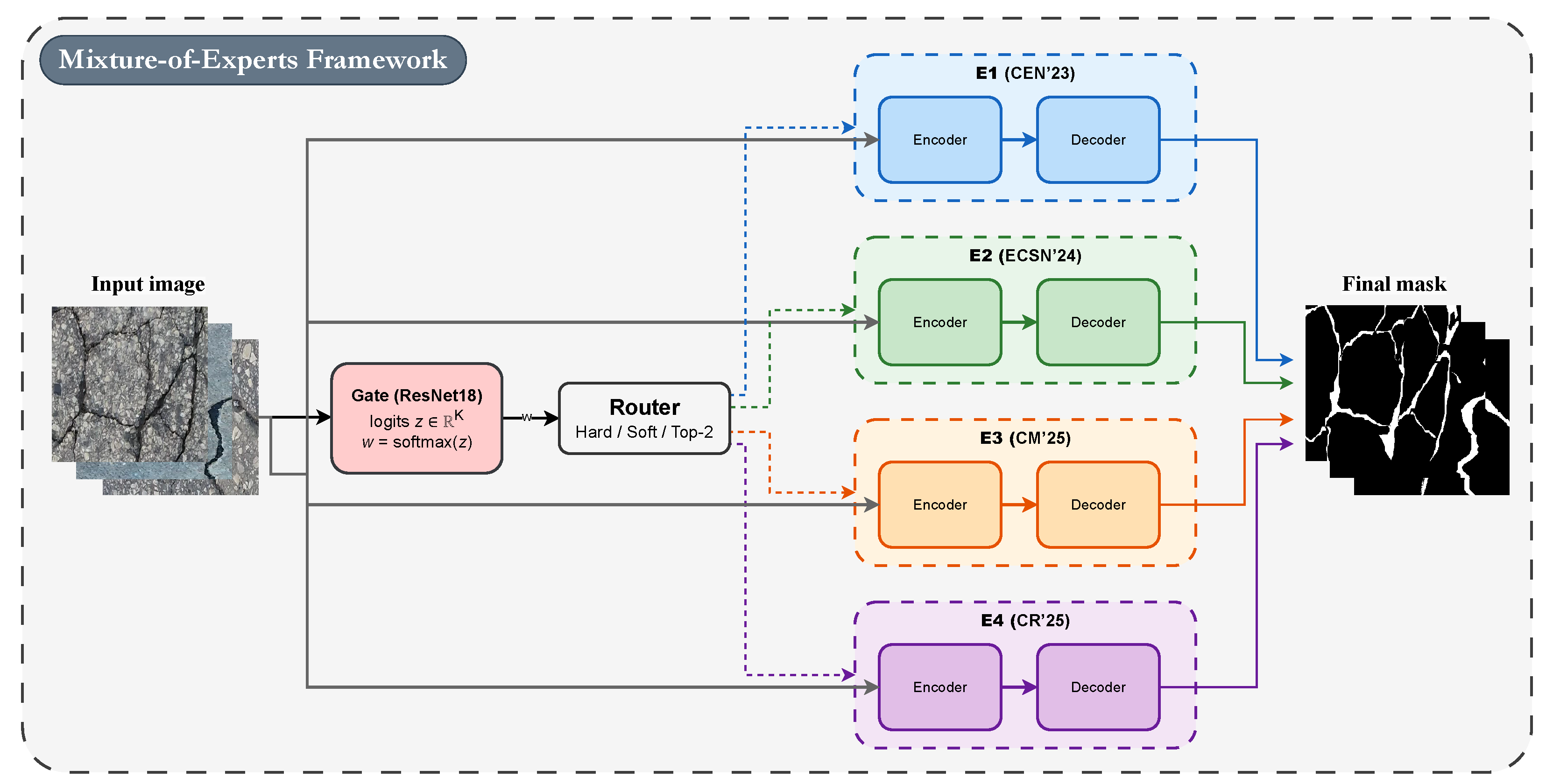

This section presents the proposed Mixture-of-Experts (MoE) crack segmentation framework. A high-level schematic of the overall protocol is illustrated in Figure 1, while a detailed architectural overview is provided in Figure 2. The section is organized as follows. First, the four expert models previously developed are summarized. Second, the construction of a non-leaky out-of-fold (OOF) dataset is described, where each expert is trained in a five-fold manner to generate unbiased predictions for supervision. Third, the consolidation of these predictions into hard and soft routing targets is detailed. Fourth, a lightweight ResNet-18 [33] gating network is introduced to estimate the relative suitability of each expert for a given input image. Fifth, the MoE mixing strategies (Hard routing, Soft weighting, and the proposed Top-2 weighted aggregation) are defined. Finally, the training configurations and loss functions associated with each component are presented.

3.1. Experts (4 Models)

The proposed MoE framework builds on four previously published crack-segmentation models, which serve as fixed experts. These models are used strictly in inference mode; no fine-tuning or architectural modifications are applied. The expert set includes:

E1 (CEN’23): Cascade Enhanced Network [34].

E2 (ECSN’24): Enhanced Crack Segmentation Network [35].

E3 (CM’25): CrackMaster [9].

E4 (CR’25): CrackRefineNet [26].

These four experts were selected to maximize architectural and functional diversity. Each model emphasizes different design principles—such as cascaded feature refinement, enhanced encoder–decoder pathways, or multi-stage contextual aggregation—resulting in complementary strengths and distinct error patterns. This diversity is essential for enabling the gating network to learn meaningful routing decisions and leverage the most suitable experts for each input image.

Although the selected experts originate from our prior work, they were chosen to reflect distinct architectural paradigms and complementary prediction behaviors. Importantly, the proposed Mixture-of-Experts framework is model-agnostic and can seamlessly incorporate external CNN- or Transformer-based crack segmentation models without architectural modification.

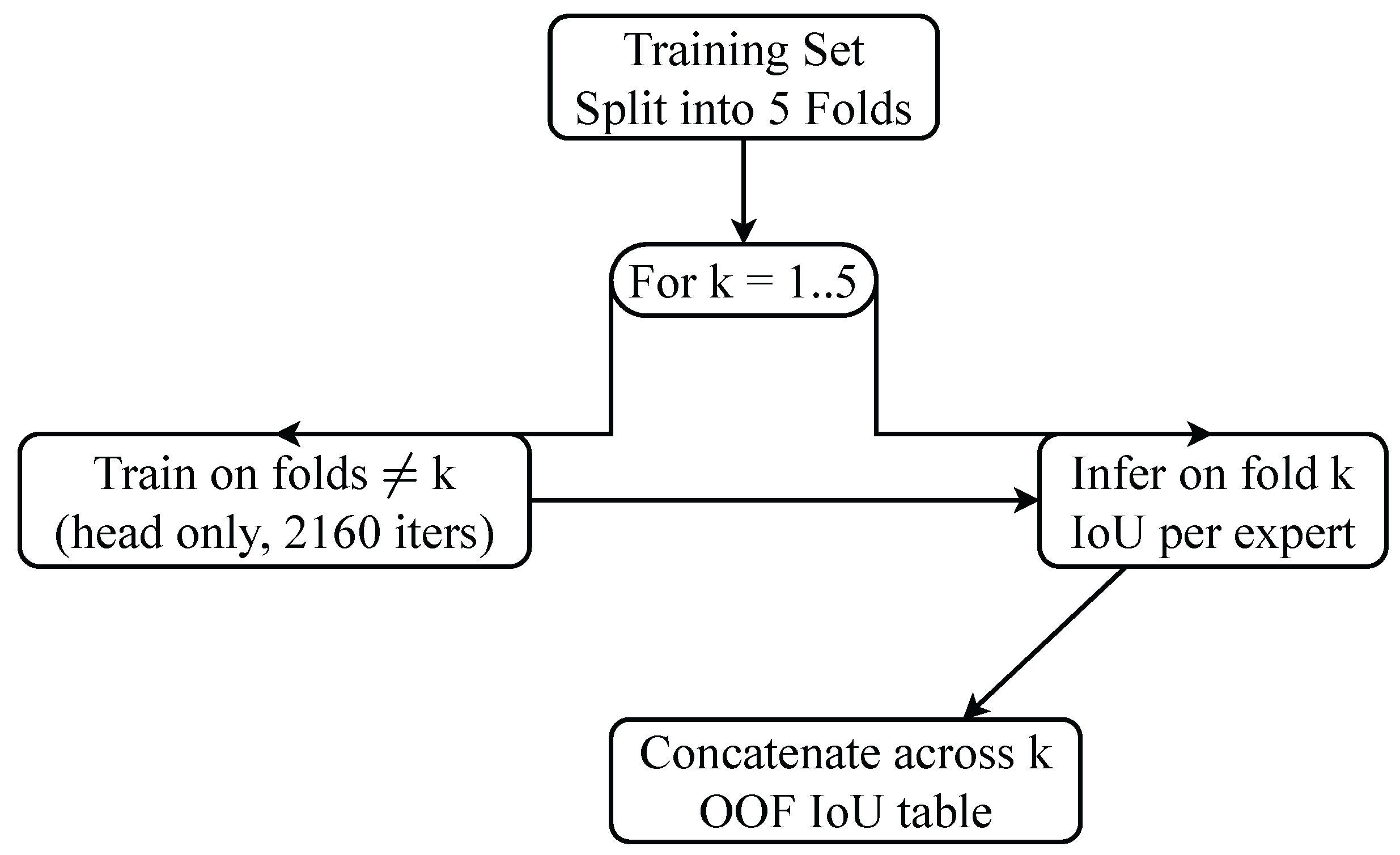

3.2. Dataset Creation via Out-of-Fold Annotation (K = 5)

To supervise the gating network without introducing data leakage, a non-leaky out-of-fold (OOF) annotation strategy is adopted. The training set is divided into five folds. For each fold k, all expert models are trained on the remaining four folds using short training schedules with frozen backbones, and inference is performed on the held-out fold. This yields per-image IoU scores for every expert on data that was never used during that expert’s training. After all five iterations, the fold-wise predictions are concatenated into a complete OOF table that provides an unbiased estimate of each expert’s relative quality on each sample. This OOF supervision serves as the training signal for the gating network. The overall OOF generation procedure is illustrated in Figure 3. Formally, the OOF score of expert j on a sample belonging to fold k is

$$
s_{i,j} = \mathrm{IoU}\big(f_j^{(-k)}(x_i),\; y_i\big), \qquad x_i \in \text{fold } k,
$$

where $x_i$ is the i-th training image, $y_i$ is its corresponding ground-truth mask, and $j \in \{1, \dots, 4\}$ indexes the j-th expert model. The function $f_j^{(-k)}$ denotes the instance of expert j trained on all folds except fold k, i.e., the model used to infer predictions on images belonging to the held-out fold k. The operator $\mathrm{IoU}(\cdot,\cdot)$ computes the intersection-over-union between the predicted mask and the ground truth. Thus, $s_{i,j}$ represents the unbiased quality estimate of expert j on sample $x_i$, obtained using a model that has never been trained on that sample.
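
The OOF procedure described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the authors' implementation: masks and images are flattened lists, the names `build_oof_table`, `make_folds`, and `threshold_trainer` are hypothetical, and each "expert" is represented by a callable that trains on a subset and returns a prediction function.

```python
import random

def iou(pred, target):
    """IoU between two flattened binary masks given as lists of 0/1."""
    inter = sum(1 for p, t in zip(pred, target) if p and t)
    union = sum(1 for p, t in zip(pred, target) if p or t)
    # Assumption: two empty masks count as a perfect match.
    return inter / union if union else 1.0

def make_folds(n_samples, n_folds=5, seed=0):
    """Shuffle sample indices and deal them into n_folds disjoint folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[k::n_folds] for k in range(n_folds)]

def build_oof_table(images, masks, expert_trainers, n_folds=5, seed=0):
    """Return table[i][j]: IoU of expert j on image i, computed with the
    instance of expert j that was trained without the fold containing i."""
    n = len(images)
    table = [[None] * len(expert_trainers) for _ in range(n)]
    for held_out in make_folds(n, n_folds, seed):
        held = set(held_out)
        train_idx = [i for i in range(n) if i not in held]
        for j, train in enumerate(expert_trainers):
            # Train expert j on the remaining folds only.
            predict = train([images[i] for i in train_idx],
                            [masks[i] for i in train_idx])
            # Score it on the held-out fold it has never seen.
            for i in held_out:
                table[i][j] = iou(predict(images[i]), masks[i])
    return table

def threshold_trainer(threshold):
    """Toy stand-in for an expert: ignores its training data and
    thresholds pixel intensities at a fixed value."""
    def train(train_images, train_masks):
        return lambda image: [1 if v > threshold else 0 for v in image]
    return train
```

Each row of the returned table is the per-sample score vector that the gating network is later supervised with. In a real pipeline the toy trainers would be replaced by the short, frozen-backbone training runs of the four experts.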

3.3. Label Consolidation: Hard and Soft

The OOF IoU matrix provides, for every training sample, a vector containing the relative performance of the four experts. These values are transformed into routing targets that supervise the gating network. Two complementary supervision modes are used: hard labels and soft labels.

3.3.1. Hard Labels

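In the hard-label formulation, each image is assigned to the single expert that achieved the highest OOF IoU. This yields a standard one-hot target, instructing the gate to select exactly one expert during training. Formally, with $s_{i,j}$ denoting the OOF IoU of expert $j$ on image $x_i$,

$$
y^{\mathrm{hard}}_{i,j} =
\begin{cases}
1, & j = j_i^{*} \\
0, & \text{otherwise}
\end{cases},
\qquad j_i^{*} = \arg\max_{j} s_{i,j},
$$

where $j_i^{*}$ is the index of the best-performing expert for image $x_i$.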

3.3.2. Soft Labels

Hard labels enforce a single-winner decision and ignore cases where multiple experts perform similarly. To preserve this information, a soft-label formulation is used in which the OOF IoU scores are normalized into a probability distribution. Each expert thus receives a weight proportional to its relative quality, allowing the gate to learn finer distinctions and exploit complementary strengths among experts:

$$
p_{i,j} = \frac{s_{i,j}}{\sum_{m=1}^{4} s_{i,m}},
$$

where $s_{i,j}$ is the OOF IoU of expert $j$ on sample $x_i$ and $p_{i,j}$ denotes the normalized reliability of expert $j$ for sample $x_i$.

This dual-target setup enables a direct comparison between categorical (hard) supervision and probabilistic (soft) supervision. As shown in the experimental section, the soft-label formulation yields more stable routing behavior and consistently improves Top-K aggregation performance.

In this work, IoU is adopted to derive soft routing labels because it provides a balanced, single-valued summary of segmentation quality that jointly reflects false positives and false negatives, making it well suited for expert ranking and normalization. While precision and recall are particularly informative for thin crack structures, IoU implicitly integrates both aspects and avoids introducing metric-specific bias into the supervision signal. Moreover, using a single, consistent metric simplifies optimization and improves training stability of the gating network. Incorporating multi-metric or structure-aware routing targets is a promising extension and is left for future investigation.

An illustrative example of the conversion from OOF IoUs to hard and soft routing targets is provided in Table 1.
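
As a minimal sketch of the label consolidation step, the snippet below converts one row of OOF IoU scores into a one-hot hard target and a sum-normalized soft target. The function names and the example scores are hypothetical (they are not taken from Table 1), and the uniform fallback for an all-zero row is an assumption that the text does not specify.

```python
def hard_label(oof_scores):
    """One-hot target marking the expert with the highest OOF IoU.
    Ties are broken in favor of the lowest expert index."""
    best = max(range(len(oof_scores)), key=lambda j: oof_scores[j])
    return [1.0 if j == best else 0.0 for j in range(len(oof_scores))]

def soft_label(oof_scores):
    """Soft target: each expert's OOF IoU divided by the row sum."""
    total = sum(oof_scores)
    if total == 0:
        # Assumption: if every expert scores zero, fall back to uniform weights.
        return [1.0 / len(oof_scores)] * len(oof_scores)
    return [s / total for s in oof_scores]

# Hypothetical OOF IoUs of experts E1..E4 on a single image.
scores = [0.60, 0.50, 0.70, 0.20]
print(hard_label(scores))                         # [0.0, 0.0, 1.0, 0.0]
print([round(p, 3) for p in soft_label(scores)])  # [0.3, 0.25, 0.35, 0.1]
```

In this example the hard target discards the fact that E1 is nearly as good as E3, whereas the soft target keeps that information in the form of similar weights.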

3.4. Gate Network

The gating network determines how much each expert should contribute to the final segmentation output for a given input. As illustrated in Figure 2, the gate operates independently from the experts: it receives only the RGB image and does not use any internal features or predictions from the expert models. This design ensures that expert selection remains lightweight, modular, and decoupled from the architectures of the experts.

A ResNet-18 backbone [33] is adopted as the gate due to its favorable trade-off between accuracy and computational cost, strong generalization on medium-scale datasets, and stable optimization behavior. The standard classification head is replaced with a compact multi-layer perceptron (MLP) that produces one logit per expert. All expert models are kept frozen at inference and during gate training; only the gate parameters are updated.

The gating network intentionally operates on raw RGB images rather than crack-local or expert-derived features. This choice reflects the role of the gate as a routing mechanism rather than a segmentation module. Global scene characteristics—such as illumination conditions, surface texture complexity, background clutter, and crack density—are often sufficient to estimate which expert is more reliable for a given input. Local crack responses, by contrast, are already modeled within the expert networks themselves and may introduce expert-specific bias if reused by the gate. By remaining feature-agnostic and fully decoupled from the experts, the gating network avoids information leakage, preserves modularity, and maintains robustness across heterogeneous expert architectures.

The unnormalized logits z output by the gate are transformed into routing weights w through a softmax function:

$$
w_j = \frac{\exp(z_j)}{\sum_{m=1}^{4} \exp(z_m)}, \qquad j = 1, \dots, 4,
$$

where w represents the normalized contribution of each expert and forms the basis for the Soft, Hard, and Top-K aggregation strategies described later.

3.5. MoE Mixing Strategies

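Once the gate produces a weight vector $w(x) = (w_1(x), \dots, w_4(x))$ for the input image x, these routing weights are used to combine the predictions of the frozen experts $f_1, \dots, f_4$. Three aggregation strategies are considered in this work.

3.5.1. Hard Mixture

Only the single most likely expert is selected. The final mask equals the prediction of the expert with the highest routing weight:

$$
\hat{Y}_{\mathrm{hard}}(x) = f_{j^{*}}(x), \qquad j^{*} = \arg\max_{j} w_j(x).
$$

3.5.2. Soft Mixture

All experts contribute to the final prediction. Their outputs are combined through a weighted sum using the gate probabilities:

$$
\hat{Y}_{\mathrm{soft}}(x) = \sum_{j=1}^{4} w_j(x)\, f_j(x).
$$

3.5.3. Top-K Mixture (K = 2)

Here, the gate selects the K highest-scoring experts. Their weights are renormalized over this subset and used for selective fusion:

$$
\hat{Y}_{\mathrm{top}\text{-}K}(x) = \sum_{j \in \mathcal{T}_K(x)} \tilde{w}_j(x)\, f_j(x), \qquad \tilde{w}_j(x) = \frac{w_j(x)}{\sum_{m \in \mathcal{T}_K(x)} w_m(x)},
$$

where $\mathcal{T}_K(x)$ denotes the index set of the Top-K experts for sample x.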

The choice of K = 2 reflects a trade-off between exploiting expert complementarity and maintaining computational efficiency. In practice, selecting the top two experts captures most of the potential performance gain, while reducing the risk of fusing predictions from lower-ranked experts that may share similar failure modes. Although the optimal number of experts may vary across input images, the proposed gating network performs sample-dependent ranking, allowing dominant experts to contribute more strongly when appropriate. Extending the framework to support adaptive or input-dependent K selection could be explored in future work.

In summary, the gate predicts the routing weights, the router applies one of the three selection strategies, and the chosen experts are fused to form the final segmentation mask, as illustrated in Figure 2.
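
The three mixing strategies can be illustrated with a small, self-contained sketch. It assumes that each expert returns a per-pixel crack probability map (here a flattened list of floats) and that the fused map is binarized at 0.5. Both points, along with all function names, are assumptions made for illustration and are not confirmed by the text.

```python
import math

def softmax(logits):
    """Numerically stable softmax over the gate logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def hard_mixture(weights, preds):
    """Use only the prediction of the expert with the largest weight."""
    best = max(range(len(weights)), key=lambda j: weights[j])
    return list(preds[best])

def soft_mixture(weights, preds):
    """Weighted sum of all expert predictions, pixel by pixel."""
    n_pixels = len(preds[0])
    return [sum(w * p[i] for w, p in zip(weights, preds)) for i in range(n_pixels)]

def topk_mixture(weights, preds, k=2):
    """Keep the k highest-weighted experts, renormalize their weights
    over that subset, and fuse only their predictions."""
    top = sorted(range(len(weights)), key=lambda j: weights[j], reverse=True)[:k]
    norm = sum(weights[j] for j in top)
    n_pixels = len(preds[0])
    return [sum(weights[j] / norm * preds[j][i] for j in top) for i in range(n_pixels)]

def binarize(prob_map, threshold=0.5):
    """Assumed post-processing: threshold the fused probabilities."""
    return [1 if p >= threshold else 0 for p in prob_map]

# Hypothetical gate logits and 3-pixel probability maps from four experts.
w = softmax([2.0, 1.0, 0.0, -1.0])
expert_maps = [[0.9, 0.1, 0.6], [0.8, 0.2, 0.4], [0.1, 0.9, 0.5], [0.0, 1.0, 0.5]]
fused = topk_mixture(w, expert_maps, k=2)
mask = binarize(fused)
```

With `k=1` the Top-K rule reduces to the hard mixture, and with `k` equal to the number of experts it reduces to the soft mixture, which makes K = 2 an intermediate setting between the two.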

3.6. Training Strategy

This subsection describes the training procedure of the proposed Mixture-of-Experts framework, including the preparation of the expert models, the supervision strategy used for training the gating network, and the loss functions adopted under hard and soft labeling schemes. The overall objective is to learn a reliable gating mechanism that can effectively select and combine complementary experts for robust crack segmentation.

3.6.1. Experts

Each expert model was originally trained in its respective publication. For datasets not covered in prior work, the experts were retrained using their original configurations to ensure a fair and consistent comparison. During the OOF procedure, the expert backbones are frozen and only their decoder heads are briefly fine-tuned for 2160 iterations per fold. These short training cycles are used exclusively to generate fold-wise predictions for constructing the OOF supervision table; they do not modify the experts used at inference.

3.6.2. Gate

Two versions of the gating network are trained: one supervised with hard labels and one with soft labels derived from the OOF table. The gate receives only the RGB image and outputs a four-dimensional logit vector representing the predicted weights for the experts. All expert parameters remain frozen throughout training. At inference, the trained gate is paired with one of the three mixture strategies (Soft, Hard, or Top-K) described in Section 3.5.

3.6.3. Loss Functions

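When the gate is trained with hard labels, standard cross-entropy loss is used:

$$
\mathcal{L}_{\mathrm{CE}} = -\sum_{j=1}^{4} y^{\mathrm{hard}}_{j} \log w_j,
$$

where $y^{\mathrm{hard}}$ is the one-hot routing target and $w$ is the softmax output of the gate.

For soft-label supervision, the gate is trained using the Kullback–Leibler divergence, allowing it to learn graded preferences across experts:

$$
\mathcal{L}_{\mathrm{KL}} = \sum_{j=1}^{4} p_j \log \frac{p_j}{w_j},
$$

where $p$ is the soft routing target obtained by normalizing the OOF IoU scores.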
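
The two gate losses can be checked numerically with the short sketch below, which evaluates them for a single sample. It assumes the usual direction of the divergence, KL(target || gate output), which the text does not state explicitly. The function names are illustrative.

```python
import math

def log_softmax(logits):
    """Log of the softmax routing weights, computed stably."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]

def cross_entropy(logits, hard_target):
    """Cross-entropy between a one-hot target and the gate output."""
    log_w = log_softmax(logits)
    return -sum(t * lw for t, lw in zip(hard_target, log_w))

def kl_divergence(logits, soft_target):
    """KL(soft_target || gate output); zero-probability terms contribute 0."""
    log_w = log_softmax(logits)
    return sum(p * (math.log(p) - lw)
               for p, lw in zip(soft_target, log_w) if p > 0)

# Hypothetical gate logits and targets for one image.
logits = [1.0, 2.0, 0.5, -1.0]
loss_hard = cross_entropy(logits, [0.0, 1.0, 0.0, 0.0])
loss_soft = kl_divergence(logits, [0.3, 0.25, 0.35, 0.1])
```

When the soft target degenerates to a one-hot vector, the KL term coincides with the cross-entropy, so the soft-label loss can be read as a generalization of the hard-label one.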

4. Datasets and Experimental Setup

This section presents the datasets used to evaluate the proposed framework, along with the data augmentation strategies employed during training. It also outlines the implementation details of the training setup and describes the evaluation metrics used to assess segmentation performance across tasks.

4.1. Datasets

The evaluation of the proposed MoE framework is carried out on three crack segmentation datasets: one in-house dataset (RCFD) and two public datasets (Crack500 and CFD). These datasets exhibit considerable variation in imaging conditions, crack morphology, background texture, and scene complexity. This variation provides a comprehensive basis for assessing robustness and generalization. All images are resized to 512 × 512 pixels to ensure a consistent input format during training and inference. Representative samples from the three datasets are shown in Figure 4, and dataset statistics are reported in Table 2.

RCFD (In-house) [26,36]: RCFD is constructed from multi-country road scenes derived from the RDD2022 [37] dataset. The dataset comprises 1428 RGB full-scene images and is split into 973/170/285 images for training, validation, and testing, respectively.

Crack500 [20]: Crack500 consists of 3368 images with manually annotated binary masks. It covers diverse camera viewpoints, surface materials, and illumination conditions. The dataset includes 1896 training images, 348 validation images, and 1124 test images.

CFD [6]: The CrackForest Dataset contains 119 images with pixel-level crack annotations. Although smaller in scale, CFD covers a broad variety of pavement textures. Following the standard split, 101 images are used for training and 18 images are used for testing.

4.2. Data Augmentation

Only the gating network is trained in this work; all expert models remain frozen. During gate optimization, moderate appearance augmentations are applied to the RGB images, while the target supervision (OOF IoU vectors) remains unchanged. The following stochastic transformations are used: colour jittering (brightness/contrast/saturation/hue), random grayscale conversion, horizontal and vertical flips, small random rotations (), and Gaussian blur. Each augmented image is subsequently normalized using ImageNet statistics.

At test time, only resizing and normalization are applied, without any augmentation.

4.3. Implementation Details

The gate network is trained using the AdamW optimizer with a learning rate of and weight decay of . A batch size of 16 is used, and training runs for 50 epochs. A cosine learning rate schedule with linear warmup is applied. The backbone is kept frozen during the first five epochs and is then unfrozen for the remaining epochs. Early stopping is used with a patience of 7 epochs. All experiments are executed on a computer running Windows 10 Pro, equipped with an Intel Core i7-9700K CPU (64-bit, 3.60 GHz), 64 GB of RAM, and a single NVIDIA GeForce RTX 3090 GPU (24 GB).
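
The schedule described above (linear warmup, cosine decay, a backbone frozen for the first five epochs, and early stopping with a patience of 7) can be sketched as follows. The base learning rate and the warmup length are not recoverable from the text, so `base_lr` is a required argument and `warmup_epochs=5` is a placeholder. Per-epoch scheduling and monitoring the validation loss for early stopping are further assumptions.

```python
import math

def lr_at_epoch(epoch, base_lr, total_epochs=50, warmup_epochs=5):
    """Learning rate for a 0-indexed epoch: linear warmup, then cosine decay.
    warmup_epochs is a placeholder; the source does not give its value."""
    if epoch < warmup_epochs:
        return base_lr * (epoch + 1) / warmup_epochs
    progress = (epoch - warmup_epochs) / max(1, total_epochs - warmup_epochs)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))

def backbone_trainable(epoch, freeze_epochs=5):
    """The gate backbone stays frozen for the first freeze_epochs epochs."""
    return epoch >= freeze_epochs

class EarlyStopping:
    """Stop once the monitored value has not improved for `patience` epochs."""

    def __init__(self, patience=7):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Placeholder base learning rate, used only to show the shape of the schedule.
schedule = [lr_at_epoch(e, base_lr=1.0) for e in range(50)]
```

In a training loop, `lr_at_epoch` would set the optimizer's learning rate at the start of each epoch and `backbone_trainable` would decide whether the backbone parameters receive gradients.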

4.4. Evaluation Metrics

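Segmentation performance is assessed using pixel-wise metrics computed between the predicted mask $\hat{Y}$ and the ground truth Y. The metrics used are Intersection over Union (IoU), Precision, Recall, F1-score, and mean IoU (mIoU):

$$
\mathrm{IoU} = \frac{TP}{TP + FP + FN}, \qquad
\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad
\mathrm{Recall} = \frac{TP}{TP + FN},
$$

$$
\mathrm{F1} = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}, \qquad
\mathrm{mIoU} = \frac{1}{C} \sum_{c=1}^{C} \mathrm{IoU}_c,
$$

where TP, FP, and FN denote the numbers of true-positive, false-positive, and false-negative pixels, and C is the number of classes. In this work C = 2 (crack vs. background); therefore, mIoU is the average IoU across both classes.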
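
For concreteness, the sketch below computes these metrics from a pair of flattened binary masks using the standard confusion-count definitions. Returning 0.0 when a denominator is zero is an assumed convention, and the function names are illustrative.

```python
def confusion_counts(pred, target):
    """Count TP, FP, FN, TN pixels for the crack class (label 1)."""
    tp = fp = fn = tn = 0
    for p, t in zip(pred, target):
        if p and t:
            tp += 1
        elif p and not t:
            fp += 1
        elif t:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def _ratio(num, den):
    # Assumed convention: an undefined ratio is reported as 0.0.
    return num / den if den else 0.0

def segmentation_metrics(pred, target):
    """IoU, Precision, Recall and F1 for the crack class, plus two-class mIoU."""
    tp, fp, fn, tn = confusion_counts(pred, target)
    iou_crack = _ratio(tp, tp + fp + fn)
    # For the background class, the roles of TP and TN are swapped.
    iou_background = _ratio(tn, tn + fp + fn)
    precision = _ratio(tp, tp + fp)
    recall = _ratio(tp, tp + fn)
    f1 = _ratio(2 * precision * recall, precision + recall)
    return {
        "iou": iou_crack,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "miou": (iou_crack + iou_background) / 2,
    }

# Toy 8-pixel example: TP = 2, FP = 1, FN = 1, TN = 4.
m = segmentation_metrics([1, 1, 0, 0, 1, 0, 0, 0], [1, 0, 0, 0, 1, 1, 0, 0])
print(m["iou"])  # 0.5
```

Because cracks occupy only a small fraction of the pixels, the background IoU is typically high, so mIoU values sit well above the crack-class IoU for the same prediction.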

5. Experimental Results and Discussion

This section presents the experimental evaluation of the proposed MoE framework on the three datasets introduced in Section 4.1. All experiments follow the same preprocessing and training settings, and segmentation performance is measured using the metrics defined in Section 4.4.

The analysis is organized into four parts. First, the MoE configurations are compared with their constituent expert models to assess the benefit of routing-based aggregation. Second, an ablation study investigates the influence of different supervision and aggregation strategies (Hard, Soft, and Top-K) on performance. Third, the best-performing configuration is benchmarked against recent state-of-the-art crack segmentation methods to evaluate its external competitiveness. Finally, a qualitative analysis illustrates representative visual results, highlighting the improvements achieved in crack continuity, boundary precision, and robustness across datasets.

Together, these experiments provide a comprehensive understanding of both the internal effectiveness and real-world generalization capability of the proposed approach.

5.1. Comparison Against Individual Experts

The first set of experiments examines whether routing-based aggregation provides a measurable improvement over using any single expert model in isolation. For each dataset, the MoE outputs are generated using the trained gate, while the expert baselines correspond to direct inference of each expert without routing. All models are evaluated on the same held-out test sets, under identical preprocessing and input resolution. Performance differences therefore arise exclusively from the routing mechanism rather than differences in training data, annotation, or optimization pipelines.

As shown in Table 3, the proposed Mixture-of-Experts (MoE) consistently surpasses all individual experts across the three datasets. The improvement is especially notable on RCFD, where the scene diversity and illumination changes make segmentation particularly challenging. Among the routing strategies, the Top-K configuration achieves the best overall performance, outperforming the strongest individual expert (E4, CR’25) by approximately 1.5–2.5 percentage points in IoU and F1-score. The Soft variant also delivers strong and stable results, slightly below Top-K, while the Hard routing baseline performs the weakest due to its limited flexibility. These findings confirm that adaptive multi-expert fusion guided by soft-supervised gating provides superior generalization and robustness under diverse real-world conditions.

5.2. Ablation and Strategy Analysis

To better understand the effect of different routing strategies within the proposed Mixture-of-Experts (MoE) framework, an ablation study is conducted comparing three configurations: (i) MoE (Hard), trained using one-hot expert supervision; (ii) MoE (Soft), trained with probabilistic soft-label targets derived from the experts’ out-of-fold (OOF) performance; and (iii) MoE (Top-K), which combines the outputs of the Top-2 ranked experts during inference. All variants use the same four trained experts, identical data augmentation, and optimization settings. Results are reported on the RCFD dataset, which includes diverse full-scene images and provides a rigorous test of the routing mechanism.

As shown in Figure 5, both soft supervision and selective expert aggregation contribute significantly to segmentation performance. The MoE (Hard) configuration achieves the lowest results, indicating that assigning each sample to a single expert restricts the gate’s flexibility when multiple experts could jointly provide complementary information. This rigidity limits the model’s ability to handle images with overlapping visual patterns, such as variations in crack morphology or illumination conditions.

In contrast, the MoE (Soft) variant yields a consistent improvement of approximately +1.6 IoU and +1.9 F1-score over the MoE (Hard) configuration. Probabilistic supervision enables the gate to learn nuanced relationships between experts and inputs, resulting in smoother routing decisions and more effective utilization of shared expertise across samples.

Further gains are observed with the MoE (Top-K) strategy, which aggregates the predictions of the Top-ranked experts during inference. This selective fusion preserves expert specialization while reducing the risk of overconfidence associated with relying on a single model.
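A minimal sketch of such a Top-K fusion step is given below. It assumes that the gate outputs one probability per expert, that each expert produces a crack-probability map (flattened to a list here), and that the weights of the selected experts are renormalized to sum to one. The renormalization and the name `top_k_fuse` are illustrative assumptions rather than details confirmed by the text.

```python
def top_k_fuse(gate_probs, expert_maps, k=2):
    """Fuse the k highest-weighted experts using renormalized gate weights.

    gate_probs  : list of gate probabilities, one per expert
    expert_maps : list of per-expert probability maps (flattened lists)
    """
    # Indices of the k experts with the largest gate probability.
    top = sorted(range(len(gate_probs)),
                 key=lambda i: gate_probs[i], reverse=True)[:k]
    norm = sum(gate_probs[i] for i in top)
    weights = {i: gate_probs[i] / norm for i in top}
    n_pixels = len(expert_maps[0])
    # Weighted average of the selected experts, pixel by pixel.
    fused = [sum(weights[i] * expert_maps[i][p] for i in top)
             for p in range(n_pixels)]
    return top, fused

# Hypothetical gate output and two-pixel expert maps (illustrative values only).
gate = [0.1, 0.3, 0.2, 0.4]
maps = [[0.9, 0.1], [0.8, 0.2], [0.1, 0.9], [0.6, 0.0]]
selected, fused = top_k_fuse(gate, maps, k=2)
```

Only the selected experts need to be evaluated at inference time, so the cost of this step grows with K rather than with the size of the expert pool.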

5.2.1. Effect of the Top-K Selection

To analyze the sensitivity of the framework to the choice of K, additional experiments were conducted on the RCFD dataset. As shown in Table 4, selecting K = 2 yields the best overall performance across all evaluation metrics. Increasing K beyond two leads to marginal performance changes or slight degradation, while also increasing computational cost. These results justify the choice of K = 2 as an effective trade-off between segmentation accuracy and efficiency.

5.2.2. Effect of Expert Count and Selection

A leave-one-out analysis was conducted to evaluate whether the proposed framework depends on any single expert. As shown in Table 5, removing one expert at a time results in a modest but consistent performance drop across all metrics. No individual expert causes a disproportionate degradation when excluded, indicating that performance gains arise from complementary interactions among experts rather than reliance on a single dominant model.

5.2.3. Inclusion of External Expert Models

To assess whether the proposed MoE framework generalizes beyond the authors’ previously developed models, additional experiments were conducted by incorporating external CNN and transformer-based architectures (U-Net and Swin Transformer) into the expert pool. As reported in Table 6, the framework maintains strong and competitive performance under these heterogeneous expert configurations, with results remaining close to those obtained using the full in-house expert pool. This demonstrates that the proposed gating and aggregation strategy is not restricted to a closed set of experts and can effectively integrate external architectures.

It is important to note that the proposed Mixture-of-Experts framework does not assume a fixed or indispensable set of experts. Its performance gains stem from the diversity and complementarity of the available models rather than their number alone. The ablation results consistently confirm that adaptive expert selection, rather than a particular expert configuration, is the primary driver of performance improvement. Exploring expert pruning strategies or adaptive K selection to further balance performance and computational cost remains a promising direction for future work.

5.3. Comparison with State-of-the-Art Methods

To further evaluate the effectiveness and generalization capability of the proposed Mixture-of-Experts (MoE) framework, its performance is compared with recent crack segmentation models, including both convolutional and transformer-based architectures. The CNN-based models include U-Net [23], DeepCrack [4], MobileNetV3 [24], LMM [25], CrackMaster [9], and CrackRefineNet [26]. The transformer-based counterparts comprise SwinT [27], MobileViT [28], CrackFormer II [29], EfficientCrackNet [30], MSDCrack [31], and Hybrid-Segmentor [32]. All models are evaluated under identical preprocessing, input resolution (512 × 512), and dataset splits to ensure a fair and reproducible comparison. The results are reported separately for each dataset to illustrate performance under different imaging conditions.

As shown in Table 7, on the RCFD dataset, which comprises challenging full-scene road images with diverse lighting and complex backgrounds, the proposed MoE (Top-K) achieves the best performance across all metrics. It reaches 67.82% IoU and 81.16% F1-score, outperforming the strongest CNN baseline, CrackRefineNet, by +2.4 IoU and +2.1 F1-score points. Transformer-based methods such as SwinT and MobileViT remain competitive but fall short of the MoE. These results highlight that adaptive expert routing effectively captures diverse surface and illumination patterns, offering stronger generalization than single CNN or transformer models.

Table 8 reports the results on the Crack500 dataset, which contains diverse pavement textures under moderate lighting variation. The MoE (Top-K) again achieves the best overall performance, recording 64.31% IoU and 77.93% F1-score and exceeding CrackRefineNet by +2.3 IoU points and Hybrid-Segmentor by +6.2 IoU points. The balanced precision (75.94%) and recall (80.02%) confirm that the MoE maintains strong crack sensitivity while suppressing false positives. These results demonstrate robust cross-domain generalization on large-scale datasets.
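As a quick consistency check, the standard pixel-level definitions of these metrics can be sketched as follows. The sketch assumes that precision, recall, F1-score, and IoU are computed from true-positive, false-positive, and false-negative pixel counts; the function names and the example counts are illustrative. The harmonic-mean identity holds exactly only when the metrics are aggregated from the same counts, so values averaged per image may deviate slightly from it.

```python
def segmentation_metrics(tp, fp, fn):
    """Precision, recall, F1-score, and IoU from pixel counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    iou = tp / (tp + fp + fn)
    return precision, recall, f1, iou

def f1_from_pr(precision, recall):
    """F1-score as the harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

# Hypothetical pixel counts, used only to exercise the definitions.
p, r, f1, iou = segmentation_metrics(tp=80, fp=20, fn=20)

# Precision and recall reported above for Crack500 (in percent).
crack500_f1 = round(f1_from_pr(75.94, 80.02), 2)
```

For the Crack500 precision and recall quoted above, the harmonic mean evaluates to 77.93, which matches the reported F1-score.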

As summarized in Table 9, the proposed MoE (Top-K) maintains superior performance on the CFD dataset, which contains a smaller number of high-texture pavement images, achieving 56.24% IoU and 71.92% F1-score. It improves upon CrackRefineNet by +1.7 IoU points and delivers well-balanced precision (65.41%) and recall (80.16%). These results indicate that the proposed framework generalizes effectively even under limited data conditions by leveraging complementary expert knowledge to reduce overfitting.

Overall, the proposed Mixture-of-Experts consistently achieves the highest performance across all three datasets, outperforming both CNN- and transformer-based baselines. The consistent improvement in IoU and F1-score confirms that routing-based expert aggregation is an efficient and scalable alternative to building deeper or more complex single networks, particularly when multiple high-quality expert models are already available.

5.4. Qualitative Analysis of Results

Figure 6 provides qualitative comparisons of the proposed MoE (Top-K) framework against a diverse set of crack segmentation models, including CrackRefineNet, CrackMaster, Enh–CrackNet, CascadeNet, U-Net, DeepCrack, SwinT, CrackFormer II, Eff–CrackNet, and LMM. The visualization highlights true positives (blue), false positives (red), and false negatives (green) across three datasets: RCFD, Crack500, and CFD.
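The color coding described above can be reproduced with a short sketch such as the following. It assumes binary prediction and ground-truth masks stored as 2D lists of 0/1 values. The black background for true negatives and the name `error_overlay` are assumptions made for illustration, since the text specifies only the three colors for true positives, false positives, and false negatives.

```python
BLUE, RED, GREEN, BLACK = (0, 0, 255), (255, 0, 0), (0, 255, 0), (0, 0, 0)

def error_overlay(pred, gt):
    """Color each pixel by its outcome; pred and gt are 2D lists of 0/1."""
    colors = {
        (1, 1): BLUE,   # true positive: crack predicted and present
        (1, 0): RED,    # false positive: crack predicted but absent
        (0, 1): GREEN,  # false negative: crack present but missed
        (0, 0): BLACK,  # true negative (background color is an assumption)
    }
    return [[colors[(p, g)] for p, g in zip(pred_row, gt_row)]
            for pred_row, gt_row in zip(pred, gt)]

# Tiny illustrative masks covering all four outcomes.
pred_mask = [[1, 1], [0, 0]]
gt_mask = [[1, 0], [1, 0]]
overlay = error_overlay(pred_mask, gt_mask)
```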

On the RCFD dataset, the MoE (Top-K) model consistently captures thin crack structures that several single-expert models struggle with. For example, DeepCrack and U-Net often produce scattered false positives along textured regions, while transformer-based models such as SwinT and CrackFormer II may oversmooth delicate crack boundaries. The MoE, by contrast, delivers cleaner and more continuous crack traces, particularly in cases where illumination changes or road markings confuse individual models. CrackRefineNet and CrackMaster perform strongly on many samples, but each exhibits occasional failure modes: CrackMaster may miss extremely thin branches, whereas CrackRefineNet may introduce slight over-segmentation in highly textured surfaces. The MoE balances these behaviors by adaptively selecting the most reliable experts per sample.

On the Crack500 dataset, where background texture and crack morphology vary substantially, the complementary strengths of the experts become more evident. Eff–CrackNet, though lightweight, sometimes produces fragmented detections, while Enh–CrackNet and CascadeNet can overreact to rough pavement patterns, leading to false positives. In contrast, the MoE preserves the fine topology of intersecting cracks while suppressing noisy activations. In several cases, the MoE prediction visually resembles the best aspects of both CrackMaster and CrackRefineNet, combining structural sensitivity with noise suppression.

On the CFD dataset, which contains challenging low-contrast cracks, many models show noticeable limitations: LMM and U-Net tend to miss faint crack segments, while DeepCrack and Enh–CrackNet may produce spurious edges under harsh lighting. The MoE (Top-K) produces more coherent and stable predictions, accurately following the crack path without introducing unnecessary artifacts. The adaptive weighting is particularly beneficial in scenes where neither CNN- nor transformer-based models alone are consistently reliable.

Overall, the qualitative visualizations reinforce the quantitative findings: the proposed MoE (Top-K) framework generates cleaner boundaries, preserves fine structural details, and reduces both false positives and missed crack regions. By leveraging sample-dependent expert selection, the MoE achieves visual quality that no single expert consistently reaches on its own.

5.5. Analysis of Failure Cases

Despite the strong overall performance of the proposed MoE framework, some failure cases still occur, particularly in scenes with weak contrast, highly textured backgrounds, or extremely thin crack patterns. Figure 7 summarizes representative examples and illustrates how individual experts and the MoE behave under such challenging conditions.

In several cases, standalone experts disagree substantially in their predictions. For instance, CEN’23 (E1) often produces overly conservative masks, missing fine crack branches, while CM’25 (E3) may generate thicker or fragmented crack segments when background textures resemble crack edges. ECSN’24 (E2) and CR’25 (E4) generally perform better, but even these models occasionally misinterpret shadows or stains as cracks, especially on asphalt surfaces with noisy intensity patterns.

A key observation from the failure analysis is that incorrect MoE predictions are primarily associated with ambiguous expert ranking rather than isolated expert failure. In visually challenging scenes, the gating network does not always assign the highest weights to the experts that achieve the best standalone IoU scores. This behavior arises because the gate operates on global RGB appearance cues and may encounter inputs where background textures or illumination patterns resemble the visual signatures learned by certain experts.

As a consequence, the Top-K selection may include experts whose inductive biases are less suitable for the specific crack morphology present in the image. In such cases, the MoE inherits correlated errors from the selected experts, leading to localized false positives or missed thin crack regions. This highlights an implicit assumption of the Top-K strategy—namely, that the selected experts provide complementary information—which may not hold under strong visual ambiguity.

Nonetheless, even in these difficult scenarios, the MoE output often remains competitive. In cases where experts make complementary errors—such as one model over-segmenting while another under-segmenting—the weighted fusion yields a more stable prediction than any single expert. However, when the selected experts fail in a similar manner, the aggregation mechanism cannot compensate for these shared errors.

Overall, the observed failure cases reflect inherent limitations of scene-level routing under ambiguous visual conditions rather than instability of the proposed architecture. They motivate future improvements, such as uncertainty-aware gating, explicit modeling of expert disagreement, feature-space alignment between experts and the gate, or adaptive K selection, to further enhance robustness in challenging environments.

5.6. Complexity Analysis

To assess the practical computational cost of the proposed approach, a comparative complexity analysis is presented in Table 10, reporting the number of trainable parameters, inference time, floating-point operations (FLOPs), and frames per second (FPS) for the proposed Mixture-of-Experts (MoE) framework and representative state-of-the-art crack segmentation models. All measurements are conducted using an input resolution of 512 × 512 on the same hardware configuration described in Section 4.3.

For the proposed MoE, the reported number of parameters corresponds to the total parameter count of all expert models and the lightweight ResNet-18 gating network, as all experts must be stored even though only a subset is activated at inference time. Inference time, FLOPs, and FPS are measured end-to-end and include the cost of the gating network and the Top-K selected experts only, reflecting the actual execution strategy used during inference. As expert selection is input-dependent, the reported runtime and FLOPs represent the average Top-K execution cost over the test data.
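The accounting described above can be sketched as follows. The function names and all numeric values are hypothetical placeholders and are not taken from Table 10; the sketch only illustrates that storage cost covers every expert plus the gate, while compute cost covers the gate plus the experts actually selected for each image, averaged over the test set.

```python
def moe_param_count(expert_params, gate_params):
    """Stored parameters: every expert plus the gating network."""
    return sum(expert_params) + gate_params

def moe_avg_flops(expert_flops, gate_flops, selections):
    """Average per-image cost: gate plus the experts selected for that image.

    selections : one tuple of selected expert indices per test image
    """
    per_image = [gate_flops + sum(expert_flops[i] for i in sel)
                 for sel in selections]
    return sum(per_image) / len(per_image)

def fps_from_latency(latency_ms):
    """Frames per second from the mean end-to-end latency in milliseconds."""
    return 1000.0 / latency_ms

# Placeholder values (parameters in millions, FLOPs in GFLOPs), not measured.
params_m = moe_param_count([5.0, 10.0, 15.0, 20.0], gate_params=11.0)
avg_gflops = moe_avg_flops([10.0, 20.0, 30.0, 40.0], gate_flops=2.0,
                           selections=[(3, 1), (3, 2), (0, 1)])
fps = fps_from_latency(40.0)
```

Because the selected experts differ from image to image, the average depends on how often the gate picks the heavier experts, which is why the reported runtime and FLOPs are averages over the test data rather than fixed values.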

For cascade-based methods, such as CascadeNet, the reported complexity accounts for sequential inference through multiple stages. In this case, an initial network is applied first, and its output logits are concatenated with the original RGB image and fed into a second encoder–decoder network, resulting in increased computational cost due to multi-stage execution.

As shown in Table 10, lightweight models such as MobileNetV3 and EfficientCrackNet achieve fast inference with minimal parameters but typically exhibit lower segmentation accuracy. In contrast, high-capacity CNN and transformer-based models provide stronger representational power at increased computational cost. The proposed MoE introduces additional overhead compared to single-expert inference due to the evaluation of multiple experts; however, this overhead remains moderate because the gating network is lightweight and only the most relevant experts are activated. Overall, the proposed framework offers a favorable trade-off between segmentation accuracy and computational cost, making adaptive expert routing a practical solution when multiple pre-trained models are available.