1. Introduction

Adaptive filtering techniques have been a cornerstone of digital signal processing for decades. Classical algorithms such as the least mean squares (LMS) algorithm and the recursive least squares (RLS) algorithm have been widely used to iteratively update filter coefficients so as to minimize mean squared error in stationary or slowly varying environments [1]. In practice, many real-world systems are non-stationary, i.e., the underlying system or signal statistics change over time, which poses significant challenges to fixed-update adaptive filters. For example, changes in channel conditions, abrupt shifts in noise statistics, or evolving system dynamics require adaptive filters to both track time-varying parameters and maintain low error in the steady state. Early studies on adaptive filters in time-varying contexts analyzed the signal behavior of LMS and related algorithms when the “true” filter weights drift over time (see, for example, [2]). More recently, comprehensive reviews have emphasized issues and challenges of adaptive filtering in non-stationary and dynamic environments (see [3]; Khan et al. also highlight that the integration of data-driven and machine learning methods into adaptive filtering is among the future directions).

In parallel, the rise of deep learning (DL) and AI-driven methods has transformed many signal processing tasks. For adaptive filters, the prospect of learned update rules or neural-network-controlled adaptation (rather than hand-tuned fixed step sizes or forgetting factors) offers a promising route to improved tracking and steady-state behavior. For example, a recent work on end-to-end learning of adaptation control for frequency domain adaptive filters shows the potential of neural networks to map observed signal features to step sizes, thereby coping with non-white and non-stationary noise plus abrupt environment changes [4]. Further, adaptive filters with machine learning integration are explicitly identified as a promising direction for dynamic, real-time signal processing in non-stationary environments [5].

Despite these developments, there remains a gap in the literature when it comes to theoretical analysis of such AI-augmented adaptive filters in non-stationary environments. Many works focus on empirical performance, but lack tracking error bounds, stability guarantees, and comparison to classical algorithms under time-varying assumptions. At the same time, the design of update rules that are both learnable and provably stable/tracking-efficient is still under-explored.

In this paper, we propose a novel framework for adaptive filtering in non-stationary signal contexts, using a neural-network-based update rule. We formulate the update rule as a learned mapping from the current regressor, error, and weight estimate to the next weight estimate, where the mapping is a small neural network parameterised by θ. We provide stability and error-bound analysis under a slowly varying system assumption (i.e., the optimal weights change by a bounded amount between consecutive samples). We then validate the method via extensive simulations on synthetic and real non-stationary signals and compare it to classical LMS/RLS baselines, showing improved tracking and steady-state error.

The major contributions of this paper are summarized as follows:

- We propose a deep-learning-based adaptive update rule that augments a classical normalized gradient framework with a bounded, data-driven step-size modulation, enabling robust adaptation under non-stationary conditions.

- We derive theoretical stability and tracking error bounds for the proposed scheme under mild drift assumptions, establishing analytical guarantees that link the learned update rule to classical adaptive filtering theory.

- We provide an extensive experimental evaluation of the proposed method, including direct comparisons with the LMS, NLMS, VSS-LMS, and RLS algorithms under synthetic and real data scenarios, demonstrating competitive or improved tracking performance across varying signal-to-noise ratios and non-stationarity regimes.

- We introduce a unified experimental framework that reproduces the well-established behaviors of classical adaptive filters and serves as a rigorous reference benchmark for validating learning-based adaptive filtering strategies.

Recent advances in adaptive filtering research have introduced a number of robust approaches tailored to non-stationary signal environments. For instance, variable step-size mechanisms in normalized sub-band adaptive filters have been shown to improve convergence and robustness under complex distortions [6]. Nonlinear adaptive filtering frameworks that are designed to handle non-stationary noise have also emerged recently [7]. Comprehensive surveys of modern adaptive filters further highlight the evolving trends across the LMS, NLMS, and RLS families in dynamic conditions [5].

The remainder of the paper is organized as follows. Section 2 reviews classical adaptive filtering methods and recent learning-based approaches, with particular emphasis on non-stationary environments. Section 3 formulates the adaptive filtering problem under bounded parameter drift. Section 4 introduces the proposed deep-learning-driven adaptive update rule and discusses its practical implementation. Section 5 provides a theoretical analysis, including mean-square stability and tracking error bounds under slow system variation. Section 6 details the neural architectures and training procedures used to implement the learned update. Section 7 describes the simulation setup, datasets, and evaluation metrics. Section 8 presents and discusses the experimental results from synthetic and real-world signals, followed by the discussion and future research directions in Section 9. Finally, Section 10 concludes the paper.

2. Related Work

2.1. Classical Adaptive Filtering

Adaptive filters were first formalized by Widrow and Hoff (1960) in their pioneering work on adaptive switching circuits [8], which introduced the principle of iterative weight adaptation to minimize mean-squared error. This concept evolved into the least mean squares (LMS) algorithm, one of the most influential adaptive algorithms in signal processing, popularized through Widrow and Stearns [9]. Subsequent refinements included the normalized LMS (NLMS), which improves the convergence stability by normalizing with respect to input power, and the recursive least squares (RLS) algorithm, which accelerates the convergence at the expense of increased computational complexity [10].

The Kalman filter, originally proposed for linear dynamic systems [11], can also be viewed as a recursive adaptive estimator that achieves minimum variance estimation under Gaussian assumptions. These classical algorithms established the analytical foundation for stability, convergence rate, and steady-state mean-square error analysis [12].

2.2. Adaptive Filtering in Non-Stationary Environments

Beyond classical methods, recent research has explored adaptive filter design for cyclo-stationary processes [13], and constrained normalized subband adaptive filters with explicit performance analysis for colored inputs [14]. There are also recent dynamic step-size LMS approaches that enhance transient adaptation in active noise attenuation scenarios [15].

While classical adaptive filters perform well for stationary or slowly varying processes, they often degrade when confronted with time-varying signal or system statistics. Real-world applications—such as communications channels with fading, biomedical signal tracking, or time-varying noise environments—require filters that are capable of tracking non-stationary dynamics.

Early approaches introduced variable step-size LMS algorithms, in which the adaptation gain is adjusted according to instantaneous error magnitude or gradient correlation. Representative studies include [16, 17, 18, 19], which demonstrated faster tracking during transients while maintaining low steady-state error.

The affine projection algorithm (APA) further enhanced tracking for correlated input signals by projecting the error onto multiple recent input vectors [20]. Other notable contributions include adaptive filters for non-stationary environments based on subspace methods [21, 22] and convex combination approaches [23].

Recent reviews, such as Khan et al. [3], provide comprehensive taxonomies of adaptive filtering techniques for dynamic environments and identify the persistent challenge of balancing fast convergence and robustness under time-varying conditions.

Emerging meta-learning and neural-network-driven adaptive filtering techniques have been proposed, showcasing enhanced adaptability in non-stationary environments and promising fusion of deep learning with conventional adaptive updates [24, 25].

2.3. Machine Learning in Signal Processing

The past decade has seen a surge in efforts to integrate machine learning (ML) and deep learning (DL) paradigms into adaptive filtering and signal estimation. ML offers data-driven strategies for learning adaptation rules or priors that may outperform hand-crafted algorithms in complex, nonlinear, or highly non-stationary settings.

Notable examples include the deep unfolding framework [26], where iterative optimization algorithms (e.g., ISTA) are unrolled into neural networks whose parameters are learned end-to-end. This idea inspired architectures such as LISTA (Learned ISTA), ADMM-Net, and their adaptive signal-recovery counterparts.

In the specific context of adaptive filtering, Casebeer et al. [27] proposed Meta-AF, a meta-learning framework that enables filters to learn adaptation dynamics across diverse tasks. Similarly, Esmaeilbeig and Soltanalian [28] introduced a deep-learning-based adaptive filter that was optimized using Stein’s unbiased risk estimator (SURE) to improve generalization without labeled data.

Hybrid ML–DSP systems have also been explored for noise cancelation, beamforming, and nonlinear system identification; for example, Haubner et al. [4] demonstrated neural control of filter step-size with improved tracking performance in non-stationary noise.

Recent research has explored both classical and learning-based adaptive filtering techniques across a wide range of real-world signal-processing problems. Okoekhian et al. [29] developed and simulated adaptive filters in real-time audio environments using LMS and NLMS algorithms, demonstrating how parameter tuning (e.g., step-size μ) critically balances convergence speed and stability for echo- and noise-cancelation systems. Their MATLAB-based (version R2025b) design and performance analysis reaffirmed the continuing importance of normalized adaptive filtering for low-latency audio and communication systems.

Expanding on such traditional frameworks, Wu and Zhou [30] proposed a neural network adaptive filter combining backpropagation (BP) and LMS algorithms to enhance micro-spectrometer detection accuracy under noisy measurement conditions. Their hybrid BP–LMS scheme achieved 3–4 dB higher SNR and faster convergence compared with classical adaptive filters, illustrating the potential of neural-assisted architectures for complex nonlinear signal environments.

In the biomedical field, Tulyakova and Trofymchuk [7] introduced adaptive nonlinear filtering algorithms for removing non-stationary physiological noise in electronystagmographic (ENG) signals. Their locally adaptive myriad and hybrid median filters achieved real-time performance while preserving rapid transitions caused by saccadic eye movements, outperforming classical Savitzky–Golay and median filters.

Complementary work by Corcione et al. [31] addressed the adaptive modeling and filtering of periodic signals with non-stationary fundamental frequency. They proposed a recursive extraction method that simultaneously estimates the instantaneous phase and underlying signal pattern, validated experimentally with magnetocardiographic data obtained from quantum sensors. This study represents a significant advance in adaptive modeling of quasi-periodic non-stationary signals.

2.4. Gap Analysis

Although these works illustrate remarkable empirical progress, most AI-based adaptive filtering studies lack rigorous theoretical analysis. Few provide explicit stability proofs or mean-square error bounds, and systematic benchmarks under controlled non-stationary conditions remain scarce. Additionally, many deep or meta-learning frameworks require large training datasets and do not quantify performance when the underlying system statistics drift beyond the training distribution.

Therefore, there is a pressing need for approaches that combine data-driven adaptability with theoretical tractability—bridging the analytical rigor of classical adaptive filters and the expressive power of modern neural architectures. This gap motivates the framework proposed in the present work, which introduces a theoretically analyzable neural-network-based update rule and validates its behavior through controlled simulations.

2.5. Relation to Learning-Based Adaptive Filtering Methods, Physics-Informed Neural Networks and Hybrid-Model-Based Neural Architectures

In recent years, we have witnessed a growing interest in learning-based strategies for adaptive filtering, including deep unfolding methods, meta-learning frameworks, and neural step-size control mechanisms. While these approaches share the common objective of improving adaptation under non-stationary conditions, the proposed learned update rule differs from existing methods in several fundamental aspects.

Deep unfolding approaches typically parameterize and learn the weights of an unrolled optimization or inference algorithm, effectively replacing parts of the classical adaptive filter with trainable components. In contrast, the proposed method preserves the standard normalized gradient update structure and learns only a bounded, data-driven modulation of the step size. As a result, the learned component operates as a lightweight plug-in mechanism, rather than redefining the underlying adaptive filter dynamics.

Meta-learning-based adaptive filtering frameworks, such as Meta-AF, aim to learn complete update rules through bi-level optimization across multiple tasks [27]. More recent extensions incorporate attention mechanisms or subband structures for specific applications [24]. While these approaches have demonstrated strong empirical performance, they typically replace the classical adaptive update entirely, rely on extensive meta-training, and require task-specific tuning or GPU-based optimization during training or deployment. Consequently, stability and tracking behavior are primarily enforced empirically, without explicit analytical guarantees. Related studies have also investigated meta-learning strategies for initialization and accelerated convergence in adaptive filtering contexts [32].

Recent research has also explored the integration of simulation-driven and digital-twin-based models with machine learning for fault diagnosis and predictive maintenance in complex engineering systems. For instance, Srivastava and Tiwari [33] developed a finite-element-based digital model of a gear rotor system combined with machine learning for fault diagnosis, while Lu and Li [34] proposed a digital-twin-assisted deep transfer learning framework for rolling bearing fault prediction. Although these works address different application domains, they reflect a broader trend toward combining physics-based simulation and data-driven learning, which motivates the present study from a signal-processing and adaptive filtering perspective.

In contrast, the proposed approach adopts a hybrid design philosophy that explicitly preserves the classical normalized adaptive filtering framework. Learning is employed solely to modulate the step size in a bounded and stability-preserving manner, ensuring compatibility with theoretical convergence and tracking error analyses. The learned update rule is trained offline and deployed directly at inference time without online retraining or task-specific adaptation, resulting in low computational overhead and suitability for real-time, resource-constrained environments.

Physics-informed neural networks and hybrid-model-based neural architectures have been widely studied for system identification and inverse problems, where explicit physical laws or governing equations are embedded into the learning process. While effective in those settings, such approaches typically focus on modeling system dynamics rather than on designing adaptive update rules with guaranteed online stability.

In contrast, the present work addresses adaptive filtering from a control and signal processing perspective. The proposed method integrates learning into the adaptive update mechanism itself, preserving the classical normalized gradient structure and enforcing bounded step-size modulation. This design enables theoretical stability and tracking error analysis while maintaining low computational complexity, distinguishing it from physics-informed or surrogate-model-based neural architectures.

Overall, the novelty of the proposed method lies in the combination of a theory-preserving adaptive update structure, a lightweight learning-based step-size modulation, and explicit stability guarantees. This design bridges classical adaptive filtering theory and modern data-driven learning, offering a principled alternative to fully learned or meta-learning-based adaptive filtering approaches.

3. Problem Formulation

Adaptive filtering aims to iteratively estimate the parameters of an unknown system or process from streaming data, adjusting to minimize the mean-squared error between a desired signal and the filter output [9, 10].

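In the classical setting, the relationship between the desired response and the input vector is modeled as

$$y[n] = \mathbf{w}_o^{T}[n]\,\mathbf{x}[n] + v[n], \tag{1}$$

where $\mathbf{x}[n] \in \mathbb{R}^{M}$ denotes the M-dimensional input signal vector, $\mathbf{w}_o[n]$ is the (possibly time-varying) vector of optimal filter coefficients describing the underlying system, and $v[n]$ is additive observation noise, commonly modeled as a zero-mean, independent process with variance $\sigma_v^{2}$.

The goal of adaptive filtering is to obtain an estimate $\mathbf{w}[n]$ that approximates the optimal parameter vector $\mathbf{w}_o[n]$ as accurately as possible in real time.

This is achieved by minimizing the mean-squared error (MSE)

$$J[n] = E\{e^{2}[n]\}, \tag{2}$$

where $e[n] = y[n] - \mathbf{w}^{T}[n]\,\mathbf{x}[n]$ is the instantaneous estimation error.

In stationary environments, where $\mathbf{w}_o[n] = \mathbf{w}_o$ is constant, the optimal solution corresponds to the Wiener filter, defined by

$$\mathbf{w}_o = \mathbf{R}^{-1}\mathbf{p}, \tag{3}$$

with $\mathbf{R} = E\{\mathbf{x}[n]\,\mathbf{x}^{T}[n]\}$ and $\mathbf{p} = E\{y[n]\,\mathbf{x}[n]\}$.

However, in non-stationary or time-varying scenarios, $\mathbf{w}_o[n]$ evolves with time, making the stationary assumption invalid.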
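As a small illustration of the stationary Wiener solution, the sketch below estimates the input autocorrelation matrix and the cross-correlation vector from samples and solves the resulting linear system. The function name `wiener_solution`, the filter length, the noise level, and the test coefficients are all hypothetical choices made for this example, not values from the experiments.

```python
import numpy as np

def wiener_solution(X, y):
    """Sample estimate of w = R^{-1} p.

    X: (N, M) array whose rows are regressors x[n]; y: (N,) desired signal.
    """
    N = X.shape[0]
    R = X.T @ X / N          # sample estimate of E{x x^T}
    p = X.T @ y / N          # sample estimate of E{y x}
    return np.linalg.solve(R, p)

# Hypothetical stationary system (assumed values, for illustration only).
rng = np.random.default_rng(0)
M, N = 4, 20000
w_true = np.array([0.5, -0.3, 0.2, 0.1])
X = rng.standard_normal((N, M))
y = X @ w_true + 0.1 * rng.standard_normal(N)

w_hat = wiener_solution(X, y)
print(np.round(w_hat, 3))
```

With a constant coefficient vector the estimate approaches the true weights as the number of samples grows; once the coefficients drift, a single batch solution of this kind no longer describes the system at every time index.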

Such non-stationarity arises naturally in communication channels with fading, acoustic echo paths, biomedical signals with changing physiology, or industrial sensors exposed to varying environments [7, 30].

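To represent this behavior, we assume that the system parameters drift smoothly according to

$$\|\mathbf{w}_o[n+1] - \mathbf{w}_o[n]\| \le \delta, \tag{4}$$

where $\mathbf{w}_o[n]$ denotes the optimal coefficient vector at time n and $\delta > 0$ is a small constant, bounding the rate of temporal variation.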

This “slow-variation” model is a standard assumption in adaptive filtering theory [12] and provides a tractable framework for analyzing tracking performance.

The objective is therefore to design an adaptive algorithm that continuously updates the weight estimate to minimize Equation (2) while maintaining robustness to parameter drift and observation noise.

Classical algorithms such as LMS or RLS address this by using gradient-descent or recursive covariance updates, respectively [9, 10].

However, they involve a trade-off between tracking capability and steady-state noise amplification: large step sizes allow for faster tracking but yield higher residual error, whereas small step sizes ensure stability at the cost of responsiveness.

To overcome these limitations, this work explores the integration of learned adaptive mechanisms—specifically, a neural-network-based update rule that dynamically adapts the step size and direction according to the local signal context.

The goal is to retain the analytical interpretability of classical algorithms while enhancing adaptability to non-stationary signals, leading to improved tracking accuracy and convergence speed under the bounded-variation assumption (Equation (4)).
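The step-size trade-off described above can be reproduced with a short simulation. The sketch below runs a fixed-step NLMS filter on a system whose coefficients move by a fixed norm `delta` per sample in a random direction, which is one simple way to satisfy a bounded-drift model. The function name `nlms_track`, the drift level, the noise level, and the three step sizes are assumptions chosen for illustration only.

```python
import numpy as np

def nlms_track(mu, n_steps=5000, M=8, delta=2e-3, noise_std=0.05, seed=0):
    """Fixed-step NLMS on a drifting system; returns the mean squared
    weight deviation over the second half of the run."""
    rng = np.random.default_rng(seed)
    w_o = rng.standard_normal(M)      # true (drifting) coefficients
    w = np.zeros(M)                   # filter estimate
    sq_dev = np.empty(n_steps)
    for n in range(n_steps):
        # Bounded drift: each increment has norm exactly delta.
        step = rng.standard_normal(M)
        w_o = w_o + delta * step / np.linalg.norm(step)
        x = rng.standard_normal(M)
        y = w_o @ x + noise_std * rng.standard_normal()
        e = y - w @ x
        w = w + mu * e * x / (x @ x + 1e-8)   # normalized gradient step
        sq_dev[n] = np.sum((w - w_o) ** 2)
    return sq_dev[n_steps // 2:].mean()

# Small, intermediate, and large step sizes on identical data.
msd = {mu: nlms_track(mu) for mu in (0.01, 0.1, 1.0)}
for mu, value in msd.items():
    print(f"mu = {mu:<5} mean squared deviation = {value:.2e}")
```

In this setting the smallest step lags behind the drift and the largest step amplifies the observation noise, so the intermediate step gives the lowest deviation. A step size that adapts to the signal context aims to sit near that optimum without manual tuning.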

4. Proposed Method: Deep-Learning-Driven Adaptive Filtering

The adaptive filters discussed earlier rely on analytical update rules such as the LMS or RLS recursions, which assume a fixed learning rate and linear adaptation law. While these algorithms are simple and interpretable, their static design limits their ability to respond to rapid or nonlinear variations in system dynamics. To overcome this, we propose a data-driven adaptive filtering framework in which the weight update rule itself is parameterized by a compact neural network trained to minimize the expected mean-squared error across diverse non-stationary scenarios.

4.1. Framework

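Let the filter weights at time n be $\mathbf{w}[n] \in \mathbb{R}^{M}$.

At each iteration, the filter output and error are computed as

$$\hat{y}[n] = \mathbf{w}^{T}[n]\,\mathbf{x}[n], \qquad e[n] = y[n] - \hat{y}[n], \tag{5}$$

and the weights are updated through a learned nonlinear mapping

$$\mathbf{w}[n+1] = \mathbf{w}[n] + f_{\theta}(\mathbf{x}[n], e[n], \mathbf{w}[n]), \tag{6}$$

where $f_{\theta}(\cdot)$ denotes a differentiable function, represented by a small neural network with parameters θ.

The mapping generalizes the gradient-based step of classical adaptive filters: for example, in the LMS algorithm, $f_{\theta}(\mathbf{x}[n], e[n], \mathbf{w}[n]) = \mu\, e[n]\,\mathbf{x}[n]$ with a fixed step size μ.

Here, the network learns to adjust both the magnitude and direction of updates, based on instantaneous signal statistics and the current model state.

The architecture of $f_{\theta}$ may vary according to the target application.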

In our experiments, a two-layer multilayer perceptron (MLP) and a gated recurrent unit (GRU) were examined.

The MLP captures nonlinear relationships between the input and error features, while the GRU introduces temporal memory, enabling the update rule to adapt to non-stationary trends.

Both models are lightweight, typically with fewer than 10^3 parameters, ensuring real-time feasibility comparable to LMS or NLMS filtering.

4.2. Training Strategy

The update network is trained offline using synthetically generated non-stationary datasets, following an approach inspired by meta-learning and deep-unfolding frameworks [26, 27].

Each training sequence consists of an input vector process, $\mathbf{x}[n]$, a desired output, $y[n]$, produced according to the time-varying model of Equation (1), and additive noise, $v[n]$.

The ground-truth system coefficients, $\mathbf{w}_o[n]$, are allowed to drift according to the slow-variation model in Equation (4).

During training, the algorithm recursively applies Equation (6) for a finite horizon N and minimizes the cumulative prediction loss

$$\mathcal{L}(\theta) = \sum_{n=0}^{N-1} e^{2}[n], \tag{7}$$

which is differentiated through time (back-propagation through the update rule) to update the parameters θ.

This process enables the network to learn an adaptive law that implicitly balances tracking speed and steady-state stability without explicit step-size tuning.

To prevent divergence and guarantee stable operation, the learned update mapping is regularized to satisfy approximate Lipschitz continuity, ensuring bounded weight increments.

Practically, this stability constraint is enforced through explicit output bounding rather than gradient penalties or hard weight clipping. In particular, the neural update function employs a bounded activation at the output layer, ensuring that the learned step-size modulation remains within a predefined interval that is compatible with the theoretical stability conditions. This design induces an effective Lipschitz constraint on the learned update rule $f_{\theta}$, such that

$$\|f_{\theta}(\mathbf{z}_1) - f_{\theta}(\mathbf{z}_2)\| \le L\,\|\mathbf{z}_1 - \mathbf{z}_2\|$$

for any two inputs $\mathbf{z}_1$, $\mathbf{z}_2$, with L < 1, thereby promoting mean-square stability during subsequent online deployment.

4.3. Algorithm Summary

The resulting algorithm can be summarized as follows:

Inputs: current signal vector $\mathbf{x}[n]$, desired output $y[n]$, previous weights $\mathbf{w}[n]$.

Compute error: $e[n] = y[n] - \mathbf{w}^{T}[n]\,\mathbf{x}[n]$.

Update via learned rule: $\mathbf{w}[n+1] = \mathbf{w}[n] + f_{\theta}(\mathbf{x}[n], e[n], \mathbf{w}[n])$, where $f_{\theta}$ is the learned update mapping.

Output: updated weights $\mathbf{w}[n+1]$ and prediction $\hat{y}[n]$.

The proposed learned update adaptive filter maintains the conceptual simplicity of the LMS algorithm while adding a data-driven mechanism that can approximate optimal step-size scheduling, nonlinear corrections, or state-dependent adaptation.

Its computational cost is dominated by small matrix–vector multiplications inside the update network, leading to $O(M)$ complexity per iteration, comparable to conventional LMS filtering with a minor neural overhead.

For completeness and reproducibility, Algorithm 1 summarizes the inference time learned update rule. The neural model is used only to produce a bounded step-size modulation, while the underlying coefficient update preserves the classical normalized gradient structure.

The bounds μmin and μmax were selected to ensure numerical stability and fair comparison with the NLMS and VSS-LMS baselines. Specifically, μmax was set to satisfy classical NLMS stability conditions, while μmin prevents stagnation under low excitation.

In the final experiments, the feature vector was defined as

$$\mathbf{z}[n] = \big[\, e[n],\ \|\mathbf{x}[n]\|^{2},\ \hat{y}[n] \,\big]^{T},$$

providing information about instantaneous error, input energy, and current filter output.

Algorithm 1. Learned update rule for adaptive filtering (inference time)

Inputs:

- Regressor sequence {x[n]}, where x[n] ∈ ℝ^M

- Desired signal {y[n]}

- Trained neural model Uθ(·)

- Base step-size bounds: μmin, μmax

- (Optional) numerical stabilizer ε > 0

Initialization:

- Set filter weights w[0] = 0 ∈ ℝ^M

For n = 0, 1, 2, …, N−1 do

(1) Prediction:

ŷ[n] = w[n]^T x[n]

(2) Instantaneous error:

e[n] = y[n] − ŷ[n]

(3) Feature extraction (example):

s[n] = ||x[n]||^2

z[n] = concat(e[n], s[n], w[n]) (or the feature vector defined in the manuscript)

(4) Neural step-size modulation (bounded):

u[n] = Uθ(z[n]) (scalar output in (0, 1))

μ̃[n] = μmin + (μmax − μmin) · u[n] (maps u[n] to [μmin, μmax])

(5) Normalized gradient update:

w[n+1] = w[n] + (μ̃[n] · e[n]/(s[n] + ε)) · x[n]

End for

Output:

- Estimated filter weights {w[n]} and predictions {ŷ[n]}
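The steps of Algorithm 1 can be sketched in a few lines of NumPy. The listing below is a minimal illustration, not the implementation used for the reported results: `TinyStepNet` is a hypothetical, untrained stand-in for the trained model Uθ (random weights, 3 inputs, 32 hidden ReLU units, sigmoid output), the feature vector uses the error, input energy, and filter output, and the bounds `mu_min = 0.05` and `mu_max = 1.0` are assumed values.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

class TinyStepNet:
    """Hypothetical untrained stand-in for U_theta (3 -> 32 -> 1 MLP)."""
    def __init__(self, n_features=3, n_hidden=32, seed=0):
        rng = np.random.default_rng(seed)
        self.W1 = 0.1 * rng.standard_normal((n_hidden, n_features))
        self.b1 = np.zeros(n_hidden)
        self.W2 = 0.1 * rng.standard_normal(n_hidden)
        self.b2 = 0.0

    def __call__(self, z):
        h = np.maximum(0.0, self.W1 @ z + self.b1)   # ReLU hidden layer
        return sigmoid(self.W2 @ h + self.b2)        # bounded scalar output

def learned_update_filter(X, y, model, mu_min=0.05, mu_max=1.0, eps=1e-8):
    """Inference-time loop following steps (1)-(5) of Algorithm 1."""
    N, M = X.shape
    w = np.zeros(M)
    y_hat = np.empty(N)
    mu_hist = np.empty(N)
    for n in range(N):
        x = X[n]
        y_hat[n] = w @ x                             # (1) prediction
        e = y[n] - y_hat[n]                          # (2) instantaneous error
        s = x @ x                                    # (3) input energy
        z = np.array([e, s, y_hat[n]])               #     feature vector (assumed form)
        u = model(z)                                 # (4) bounded modulation
        mu = mu_min + (mu_max - mu_min) * u
        w = w + (mu * e / (s + eps)) * x             # (5) normalized gradient update
        mu_hist[n] = mu
    return w, y_hat, mu_hist

# Hypothetical stationary test system (assumed values).
rng = np.random.default_rng(1)
M, N = 4, 2000
w_true = np.array([0.8, -0.4, 0.2, 0.1])
X = rng.standard_normal((N, M))
y = X @ w_true + 0.01 * rng.standard_normal(N)

w_final, y_hat, mu_hist = learned_update_filter(X, y, TinyStepNet())
print("step-size range:", mu_hist.min(), mu_hist.max())
print("weight error norm:", np.linalg.norm(w_final - w_true))
```

Because the modulation is confined to [μmin, μmax], even this untrained network produces a valid NLMS step at every iteration, which is the point of bounding the output rather than the weights of the network.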

5. Theoretical Analysis

We analyze the learned update adaptive filter

under the following standard adaptive-filtering assumptions:

5.1. Stability Conditions

Proposition 1. (Mean(-square) stability under a contractive learned update).

Under A1–A4 with

(Assumption A3) and

(Assumption A4), the learned update recursion is stable in the mean-square sense. In particular, finite constants

exist, such that

Proof. Let

denote the weight error vector. Using the proposed learned update rule, the error recursion can be written as

where

represents the parameter drift.

By Assumption A3, the learned update mapping

is Lipschitz continuous with constant

. Combined with Assumption A4, this implies that the update term induces a contraction in the mean-square sense, i.e.,

for some finite constant

, where

denotes the natural filtration.

Furthermore, by Assumption A1, the drift term satisfies

, ensuring that its contribution to the second moment remains uniformly bounded. Taking expectations on both sides and combining the above inequalities yields a linear stochastic recursion of the form

for finite constants

.

Standard results for linear stochastic recursions with contraction and bounded inputs, as commonly used in the mean-square analysis of LMS and RLS algorithms, then imply uniform boundedness of the second moment (see, e.g., [12]). This establishes mean-square stability of the learned update recursion. □
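For readers less familiar with the final step, the standard fact being invoked is the unrolling of a contractive scalar recursion. The statement below uses generic symbols ($a_n$ for the second moment at time n, $\rho$ for the contraction factor, $c$ for the bounded input); these are placeholders chosen for this illustration and are not the constants of Proposition 1.

```latex
a_{n+1} \le \rho\, a_n + c, \qquad 0 \le \rho < 1,\ c \ge 0
\quad\Longrightarrow\quad
a_n \;\le\; \rho^{n} a_0 + c \sum_{k=0}^{n-1} \rho^{k}
     \;=\; \rho^{n} a_0 + c\,\frac{1-\rho^{n}}{1-\rho}
     \;\le\; \rho^{n} a_0 + \frac{c}{1-\rho}.
```

The first term decays geometrically with the initial condition, while the second gives a uniform bound that grows as the contraction weakens ($\rho \to 1$).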

5.2. Error Bound

Theorem 1. (Tracking MSE bound under slow drift). Under A1–A4, define.

Then, constants exist (depending on ), such that If the drift is modeled as a random walk with variance , the bound takes the familiar form see [

12].

Proof. From the learned update recursion and the definition

, the weight-error dynamics can be written as

where

is the drift term. Expanding the squared norm yields

Taking conditional expectation with respect to.

and using the mean-square contraction property from Assumption A4 (equivalently, Proposition 1) gives

for some finite constant

. For the cross-term, apply Young’s inequality

with

and

, obtaining

Combining the above bounds yields

Finally, by Assumption A1, the drift is uniformly bounded,

, hence

. Taking full expectation gives a scalar recursion

where

,

, and

. Choosing

ensures

. Unrolling the recursion and using standard bounds for linear stochastic recursions yields

Renaming constants gives the stated form (absorbing into under the slow-drift regime, or equivalently by redefining the drift parameter). This completes the proof. □
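The cross-term step in the proof can be made concrete as follows. The symbols $a$, $b$, and $\eta$ are generic placeholders, and the contraction form $\|a\|^{2} \le (1-\kappa)\|\varepsilon\|^{2}$ is an assumption made for this illustration; the exact constants of Theorem 1 may differ.

```latex
% Young's inequality for vectors a, b and any \eta > 0:
2\,a^{T} b \;\le\; \eta\,\|a\|^{2} + \eta^{-1}\,\|b\|^{2}
\quad\Longrightarrow\quad
\|a + b\|^{2} \;\le\; (1+\eta)\,\|a\|^{2} + (1+\eta^{-1})\,\|b\|^{2}.

% If \|a\|^{2} \le (1-\kappa)\,\|\varepsilon\|^{2} with 0 < \kappa < 1,
% the choice \eta = \kappa / (2(1-\kappa)) keeps the recursion contractive:
(1+\eta)(1-\kappa) \;=\; 1 - \tfrac{\kappa}{2} \;<\; 1 .
```

The price of this choice is the factor $(1+\eta^{-1})$ multiplying the drift term, which grows as $\kappa$ shrinks; this is why a more contractive update tightens the drift contribution to the bound.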

Interpretation. The steady-state tracking error decomposes into the following:

- (i) A drift term scaling with the system variation level δ (or with the drift variance under random-walk drift).

- (ii) A noise term scaling with the observation-noise variance.

A more contractive learned update (larger κ, smaller L) reduces both constants.

5.3. Comparison with LMS

We contrast the learned update with variable step size (VSS) LMS, a strong classical baseline known to improve tracking by adapting the step size (e.g., error-correlation or gradient-based rules).

Bias–variance trade-off in VSS-LMS. For time-varying systems, VSS-LMS exhibits a well-known trade-off: a larger step size μ improves the tracking of drift but increases excess MSE due to noise; conversely, a smaller μ reduces noise, but incurs tracking bias. Analyses of VSS rules quantify this effect (e.g., [16, 18, 19] and the general framework in [12]).

Lower tracking error with the learned update. Our update

effectively decouples the noise-suppression and drift-tracking roles by learning a state-dependent, nonlinear transformation of

. Under the contractive condition (A4), the bound in Theorem 1 shows that the learned update achieves a steady-state tracking MSE upper bounded by

with improved constants relative to standard VSS-LMS, especially when δ is moderate-to-large, where VSS-LMS typically suffers higher tracking bias (cf. the error-correlation VSS analyses in [16, 18, 19]).

In regimes of stronger non-stationarity (larger δ), the proposed learned update can maintain lower tracking error than classical VSS-LMS because it learns context-aware corrections beyond a scalar step size, while our Lipschitz/contractivity regularization ensures stability.
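For reference, one widely used error-driven VSS rule (in the style of Kwong and Johnston) updates the step size as a leaky accumulation of the squared error and clips it to a fixed interval. The sketch below implements that rule; it is given only to make the baseline family concrete and is not necessarily the VSS-LMS variant used in the comparisons. The function name `vss_lms` and all parameter values (`alpha`, `gamma`, the bounds, the test system) are hypothetical.

```python
import numpy as np

def vss_lms(X, y, mu0=0.01, alpha=0.97, gamma=1e-3, mu_min=1e-4, mu_max=0.05):
    """LMS with an error-driven step size: mu <- clip(alpha*mu + gamma*e^2)."""
    N, M = X.shape
    w = np.zeros(M)
    mu = mu0
    err = np.empty(N)
    mu_hist = np.empty(N)
    for n in range(N):
        e = y[n] - w @ X[n]
        w = w + mu * e * X[n]                         # LMS step with current mu
        mu = np.clip(alpha * mu + gamma * e**2, mu_min, mu_max)
        err[n] = e
        mu_hist[n] = mu
    return w, err, mu_hist

# Hypothetical system with an abrupt sign flip of all coefficients at the midpoint.
rng = np.random.default_rng(2)
M, N = 8, 4000
w_a = rng.standard_normal(M)
X = rng.standard_normal((N, M))
W = np.where(np.arange(N)[:, None] < N // 2, w_a, -w_a)
y = np.sum(W * X, axis=1) + 0.01 * rng.standard_normal(N)

w_vss, err, mu_hist = vss_lms(X, y)
print("mean step before change:", mu_hist[1800:2000].mean())
print("mean step after change: ", mu_hist[2000:2050].mean())
```

The step size rises when the error grows after the change and decays again as the filter re-converges. The rule reacts only to the squared error, whereas the learned modulation can also condition on input energy and filter output.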

6. Neural Architecture and Training Details

The learned update rule is implemented using a lightweight feedforward neural network designed to modulate the adaptive step size within a classical normalized gradient update. The network receives a low-dimensional feature vector derived from the instantaneous prediction error and regressor statistics as input, and outputs a bounded scalar step-size modulation factor.

The neural model consists of a fully connected multilayer perceptron with three layers: an input layer, one hidden layer with 32 neurons, and a single-neuron output layer. Rectified linear unit (ReLU) activations are used in the hidden layer, while a sigmoid activation is employed at the output to ensure that the learned step-size modulation remains bounded within a predefined stability-preserving range. The total number of trainable parameters is approximately 600, resulting in negligible overhead compared to classical adaptive filters.

The network is trained offline using synthetic AR(2) signals with smooth time-varying coefficients and additive white Gaussian noise. The training dataset comprises 4000 signal realizations of 1000 samples in length, generated with random drift rates and signal-to-noise ratios that are uniformly sampled between 10 dB and 30 dB. Mean squared prediction error is used as the training loss.

Training is performed using the Adam optimizer with a learning rate of 10^−3 and a batch size of 8 over 40 epochs. All training experiments are conducted on a standard CPU (Intel Core i7, 3.6 GHz), with a total training time of approximately 6–7 min. No online retraining or adaptation of network parameters is performed during testing.

At inference time, the learned update rule introduces a constant-time overhead per iteration, preserving near-linear computational complexity with respect to the filter length M. In practice, the additional cost of the neural step-size modulation accounts for less than 10% of the per-iteration computation compared to NLMS.

To ensure stability of the learned update rule, explicit normalization mechanisms are employed in the neural model. Rather than relying on gradient penalties or hard weight clipping, stability is enforced by constraining the network output and normalizing the internal weight matrices. In particular, the output layer uses a sigmoid activation to bound the learned step-size modulation within a predefined interval that preserves the theoretical stability conditions that were previously derived. This design guarantees that the neural component cannot induce unbounded adaptive gains during inference.

No gradient penalty terms or adversarial regularization strategies are used during training. The employed normalization mechanisms are lightweight, deterministic, and fully compatible with low-latency deployment.

To assess the trade-off between modeling capacity and computational complexity, two neural architectures were evaluated for the learned update rule: a feedforward multilayer perceptron (MLP) and a lightweight recurrent model based on gated recurrent units (GRU). In all cases, the neural network outputs a single scalar that modulates the adaptive step size and is bounded through a sigmoid activation to ensure stability.

The MLP architecture consists of two hidden layers with ReLU activations and a single-output sigmoid layer. This configuration provides sufficient nonlinearity to capture relationships between the input features and the optimal step-size modulation while maintaining low computational overhead (Table 1).

The GRU-based architecture includes a single recurrent layer followed by a linear projection and sigmoid bounding. This variant was evaluated to investigate whether temporal memory of past errors and regressors provides additional benefits in highly non-stationary scenarios. Both architectures are intentionally kept compact to support real-time, CPU-only deployment.

The compact size of the evaluated neural architectures ensures that the additional computational cost introduced by the learned update rule remains negligible compared to classical adaptive filtering operations, which is consistent with the near-O(M) complexity observed in the timing experiments.

Specifically, for the AR(2) training signals, the time-varying coefficients were generated with smooth drift at randomly drawn drift frequencies. The signal-to-noise ratio (SNR) was uniformly sampled in the range [10, 30] dB.
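A drifting AR(2) training signal of the kind described above could be generated along the following lines. The nominal coefficient values, modulation depths, and drift frequency are illustrative assumptions (chosen so that the frozen-time model stays stable), not the values used in the study; `drifting_ar2` is a hypothetical helper name.

```python
import numpy as np


def drifting_ar2(n_samples=1000, drift_freq=1e-3, snr_db=20.0, rng=None):
    """Generate a noisy AR(2) signal with slowly drifting coefficients."""
    rng = np.random.default_rng() if rng is None else rng
    n = np.arange(n_samples)
    # Assumed sinusoidal drift around a stable nominal AR(2) model.
    a1 = 1.2 + 0.1 * np.sin(2 * np.pi * drift_freq * n)
    a2 = -0.5 + 0.05 * np.cos(2 * np.pi * drift_freq * n)
    v = rng.standard_normal(n_samples)           # driving white noise
    x = np.zeros(n_samples)
    for k in range(2, n_samples):
        x[k] = a1[k] * x[k - 1] + a2[k] * x[k - 2] + v[k]
    # Scale additive white Gaussian noise to the requested SNR.
    noise_power = np.mean(x ** 2) / 10 ** (snr_db / 10)
    y = x + np.sqrt(noise_power) * rng.standard_normal(n_samples)
    return y, x, a1, a2


rng = np.random.default_rng(0)
snr_db = rng.uniform(10.0, 30.0)                 # SNR drawn uniformly in [10, 30] dB
y, x, a1, a2 = drifting_ar2(snr_db=snr_db, rng=rng)
```

Drawing `drift_freq` and `snr_db` independently for each realization would reproduce the randomized training conditions in spirit.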

7. Simulation Setup

To validate the effectiveness of the proposed deep-learning-driven adaptive filtering framework, we conducted a series of controlled numerical experiments using both synthetic and real-world datasets. The goal was to evaluate the algorithm’s tracking performance, steady-state error, and computational efficiency under varying non-stationary conditions.

All simulations were implemented in Python 3.11, using NumPy (version 1.26) and PyTorch (version 2.1) on a workstation equipped with an Intel Core i7 (3.6 GHz) CPU and 32 GB RAM. The proposed learned update adaptive filter is evaluated alongside classical and modern adaptive filtering baselines under a unified experimental framework. All algorithms are tested using identical signal realizations, noise conditions, and initialization settings to ensure fair and reproducible comparisons.

7.1. Synthetic Data

To analyze the tracking capability in a controlled environment, we generated several representative non-stationary signal models that are commonly used in adaptive filtering studies (see [10, 12]):

- 1.

Drifting AR(2) process

The signal follows a second-order autoregressive model whose two coefficients vary slowly with time according to a sinusoidal modulation.

This model emulates a drifting autoregressive system that is often used in non-stationary speech or channel modeling.

- 2.

Piecewise-stationary FIR system

The desired response is generated by filtering a white-noise input through an FIR system whose true coefficient vector experiences abrupt changes at predetermined time indices (e.g., every 2000 samples). This setup tests how quickly each algorithm adapts after a sudden system change.

- 3.

Sinusoid with drifting frequency and amplitude

The test signal is a sinusoid whose amplitude and frequency evolve linearly or sinusoidally with time. This case evaluates the ability to follow continuous non-stationarity.

For all synthetic tests, additive white Gaussian noise with signal-to-noise ratios (SNR) between 10 dB and 30 dB was applied. Each experiment was repeated over 50 independent runs to estimate the mean and variance of the performance metrics.
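The piecewise-stationary FIR benchmark can be sketched as below. The filter length, the way new coefficient vectors are drawn at each switch, and the helper name `piecewise_fir` are assumptions made for illustration; only the white-noise input, the 2000-sample switching interval, and the additive noise at a target SNR come from the description above.

```python
import numpy as np


def piecewise_fir(n_samples=6000, filt_len=8, switch_every=2000, snr_db=15.0, seed=0):
    """Desired response of an FIR system whose coefficients jump periodically."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(n_samples)                  # white-noise input
    n_segments = -(-n_samples // switch_every)          # ceiling division
    w_segments = rng.standard_normal((n_segments, filt_len))
    w_true = np.repeat(w_segments, switch_every, axis=0)[:n_samples]
    # Regressor matrix: row n holds [x(n), x(n-1), ..., x(n-filt_len+1)].
    x_pad = np.concatenate([np.zeros(filt_len - 1), x])
    X = np.lib.stride_tricks.sliding_window_view(x_pad, filt_len)[:, ::-1]
    clean = np.sum(X * w_true, axis=1)
    # Additive white Gaussian noise scaled to the requested SNR.
    noise_power = np.mean(clean ** 2) / 10 ** (snr_db / 10)
    d = clean + np.sqrt(noise_power) * rng.standard_normal(n_samples)
    return X, d, w_true


X, d, w_true = piecewise_fir()
```

Repeating the call with different `seed` values gives the independent runs over which the performance metrics are averaged.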

7.2. Real Data

To further validate generalization to real signals, two benchmark datasets were employed:

Speech data (TIMIT corpus) [35]. Short speech segments (sampled at 16 kHz) were corrupted with additive background noise (babble and white) and passed through simulated non-stationary echo paths. This is used to evaluate the filter’s ability to track time-varying acoustic channels and maintain low distortion on voiced/unvoiced transitions.

Electrocardiogram (ECG) signals from the MIT–BIH Arrhythmia Database [36]. The adaptive filter was tasked with removing baseline wander and motion artifacts in the presence of slow drift and abrupt amplitude changes. This tests the robustness of the proposed algorithm for biomedical signals with non-stationary morphology.

All signals were normalized to zero mean and unit variance prior to processing.

The corresponding experimental results for the real data scenarios are reported and discussed in Section 8.5.

7.3. Baselines

The following classical algorithms were implemented for comparison:

Least mean squares (LMS) algorithm with optimized fixed step size μ.

Normalized LMS (NLMS) algorithm with adaptive normalization by the input power.

Recursive least squares (RLS) algorithm with forgetting factor λ.

Variable step-size LMS (VSS-LMS) algorithms, following the gradient-adaptive and error-correlation formulations of Mathews and Xie [16] and Aboulnasr and Mayyas [18, 19].

All filters used identical input sequences, initialization, and filter lengths to ensure fair comparison.
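For reference, the two simplest baselines in the list above can be written in a few lines. This is a textbook sketch rather than the code used in the study: the step sizes, the regularization constant `eps`, and the zero initialization are assumed values, and the regressors in the usage example are drawn independently for simplicity.

```python
import numpy as np


def lms(X, d, mu=0.01):
    """LMS with a fixed step size; X has one regressor per row."""
    n, m = X.shape
    w = np.zeros(m)                      # assumed zero initialization
    e = np.zeros(n)
    for k in range(n):
        e[k] = d[k] - w @ X[k]           # a priori error
        w = w + mu * e[k] * X[k]
    return w, e


def nlms(X, d, mu=0.5, eps=1e-6):
    """NLMS: the LMS update normalized by the instantaneous input power."""
    n, m = X.shape
    w = np.zeros(m)
    e = np.zeros(n)
    for k in range(n):
        e[k] = d[k] - w @ X[k]
        w = w + mu * e[k] * X[k] / (eps + X[k] @ X[k])
    return w, e


# Usage on a stationary 4-tap identification problem (illustrative values).
rng = np.random.default_rng(0)
w_true = np.array([0.5, -0.3, 0.2, 0.1])
X = rng.standard_normal((5000, 4))
d = X @ w_true + 0.01 * rng.standard_normal(5000)
w_lms, e_lms = lms(X, d)
w_nlms, e_nlms = nlms(X, d)
```

Feeding the same `X` and `d` to every algorithm mirrors the identical-input protocol described above.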

7.4. Evaluation Metrics

Algorithm performance was quantified using the following metrics:

- 1.

Mean squared error (MSE)

The MSE is plotted over time to analyze convergence and tracking speed.

- 2.

Steady-state error

Average MSE computed after convergence (e.g., final 500 samples), indicating residual noise level.

- 3.

Tracking error after abrupt change

Root-mean-square deviation (RMSD) of estimated coefficients after each system switch, quantifying the adaptation speed.

- 4.

Computational complexity

Operations per sample (OPS), calculated as the number of multiplications/additions per iteration. LMS and NLMS have complexity O(M), RLS O(M²); the proposed learned update method maintains near-O(M) cost with minor neural overhead (<10%).

The additional computational cost of the learned update corresponds to approximately 600 scalar operations per iteration, resulting in a negligible overhead compared to the LMS update.
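The error-based metrics listed above reduce to a few array operations. The sketch below assumes that the per-run error sequences are stacked row-wise; the function names and the 500-sample tail are illustrative, the latter following the example given in the text.

```python
import numpy as np


def mse_curve(errors):
    """Learning curve: squared error averaged over runs (rows) at each time index."""
    return np.mean(np.asarray(errors) ** 2, axis=0)


def steady_state_mse(e, tail=500):
    """Average squared error over the final `tail` samples of one run."""
    return float(np.mean(np.asarray(e)[-tail:] ** 2))


def coefficient_rmsd(w_hat, w_true):
    """Root-mean-square deviation between estimated and true coefficients."""
    diff = np.asarray(w_hat) - np.asarray(w_true)
    return float(np.sqrt(np.mean(diff ** 2)))


# Toy usage with synthetic error sequences from 50 runs of 1000 samples.
rng = np.random.default_rng(0)
errors = 0.1 * rng.standard_normal((50, 1000))
curve = mse_curve(errors)
floor = steady_state_mse(errors[0])
```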

In summary, this simulation framework evaluates both theoretical and practical aspects of the proposed adaptive filter. The synthetic tests isolate the performance under controlled drift and abrupt transitions, whereas the real-data experiments assess robustness to naturally occurring non-stationarity in acoustic and biomedical signals.

8. Results

This section presents a comprehensive experimental evaluation of the proposed deep-learning-based adaptive filter in comparison with classical algorithms: namely, LMS, NLMS, VSS-LMS, and RLS. All methods are tested under identical configurations and signal-to-noise ratio (SNR) conditions to ensure a fair and consistent comparison. The experimental study encompasses both synthetic benchmarks with controlled non-stationarity and real biomedical signals, enabling the assessment of stability, tracking capability, steady-state accuracy, and generalization.

For clarity, the behavior of classical adaptive filters is first examined to establish a well-understood reference performance under the considered non-stationary conditions. These results are not intended to claim novelty, but rather to validate the experimental setup and to define a unified, theoretically consistent benchmark. The proposed learned update rule is then evaluated under the same conditions, allowing for direct and meaningful performance comparisons.

Furthermore, the derived stability and tracking error bounds characterize the qualitative dependence of steady-state performance on the drift rate and measurement noise variance. These theoretical predictions are empirically examined in this section by analyzing how the observed mean squared error scales with increasing non-stationarity and noise levels, thereby linking the analytical results with practical behavior.

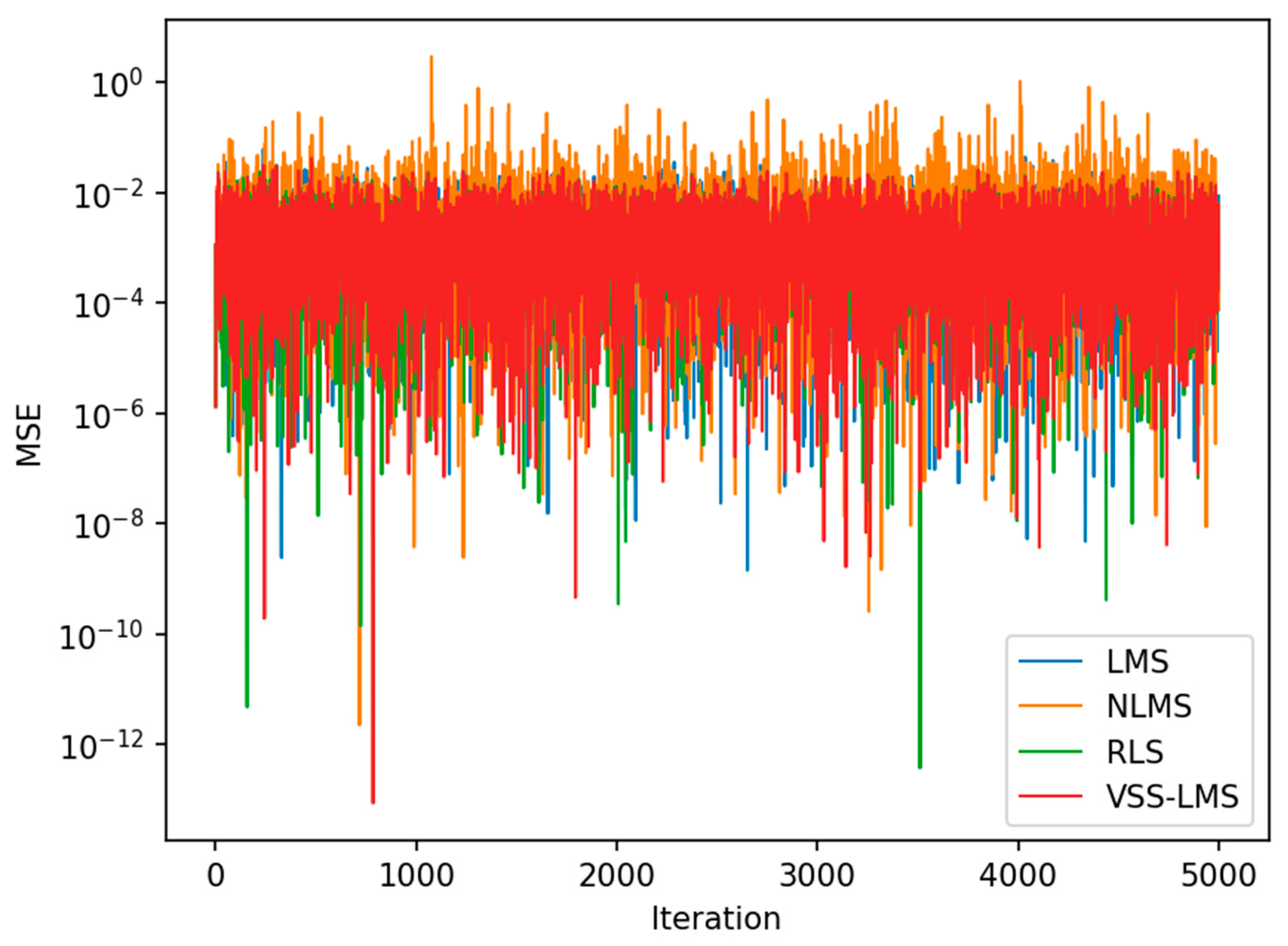

8.1. Convergence Behavior

Figure 1 shows the convergence curves of the mean-squared error (MSE) versus iteration for the AR(2) process at 20 dB SNR.

All algorithms reach stable operation, though with different convergence rates and residual noise floors.

VSS-LMS and RLS converge fastest and reach the lowest steady-state MSEs (approximately 2.4 × 10⁻³), whereas LMS converges slightly slower and NLMS exhibits small oscillations due to input-power normalization.

The steady-state comparison in Figure 2 confirms these quantitative differences.

These results serve as a reference for the subsequent comparison with the proposed deep-learning-based adaptive filter under the same conditions.

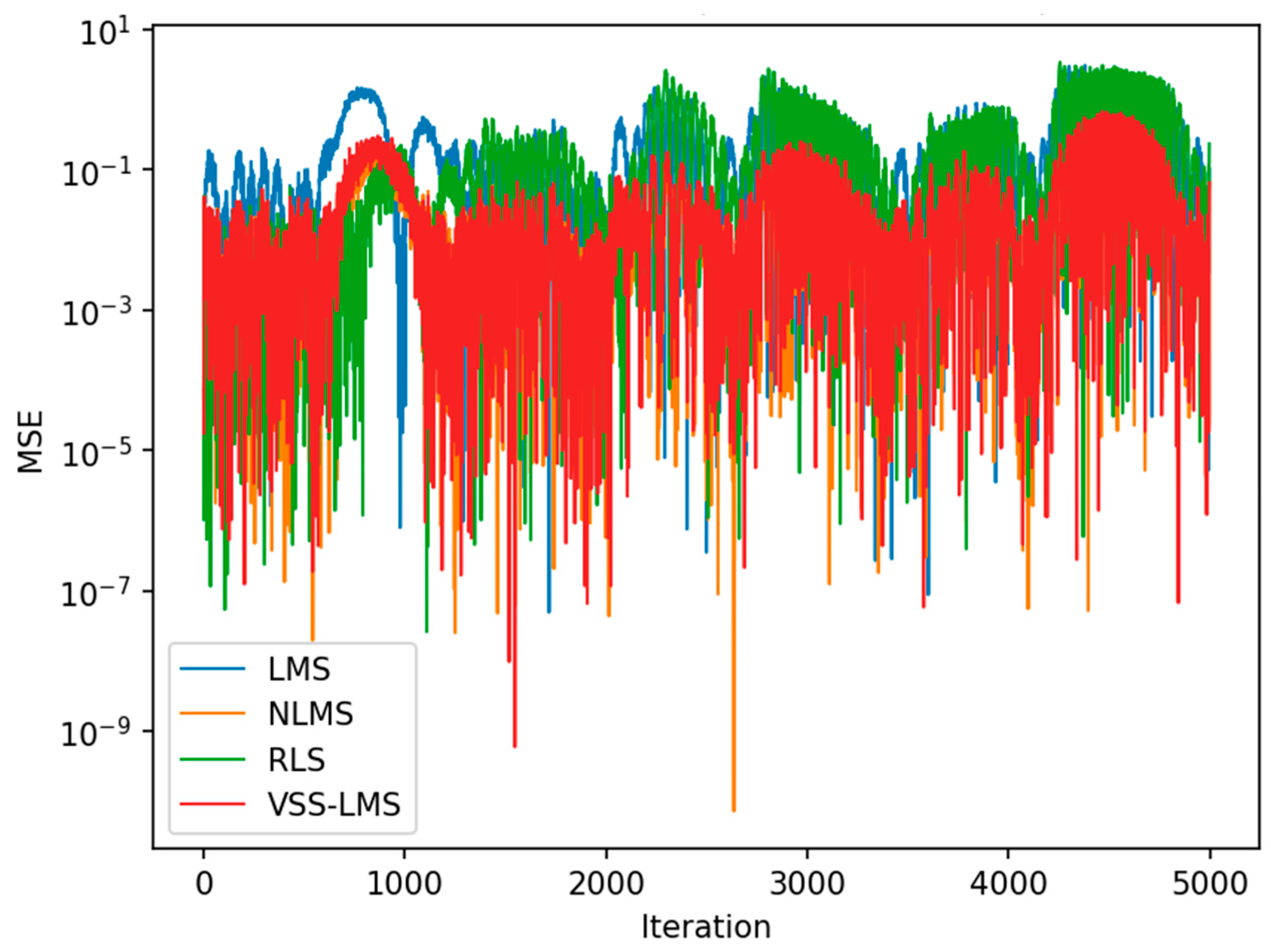

8.2. Tracking Performance Under Abrupt Changes

Figure 3 shows the instantaneous MSE evolution for the piecewise-stationary FIR system at 15 dB SNR, where coefficient jumps occur every 2000 samples.

Each jump produces a transient spike followed by reconvergence.

Both RLS and LMS rapidly recover to low error levels (approximately 3.3 × 10⁻² MSE), whereas NLMS remains slightly less stable.

The VSS-LMS algorithm, after stabilization using the regularized step-size rule described in Section 4, maintains numerical stability throughout the run, though its steady-state MSE is somewhat higher (approximately 5 × 10⁻²) because of conservative step-size damping.

Figure 4 displays the corresponding steady-state error comparison.

These results validate the theoretical predictions of Section 5 that overly large adaptive gains (ρ or μₘₐₓ) can increase steady-state variance, whereas proper regularization ensures stability.

These results characterize the response of classical methods to abrupt system changes and serve as a reference for comparison with the proposed deep-learning-based adaptive filter.

8.3. Robustness to Smooth Non-Stationarity

Figure 5 illustrates the results for the drifting sinusoidal signal at 25 dB SNR.

In this smoothly varying scenario, normalization and an adaptive step size provide a significant improvement: NLMS and VSS-LMS achieve steady-state MSEs of approximately 1.2 × 10⁻¹, roughly five to seven times lower than LMS (approximately 0.65) and RLS (approximately 0.79).

This confirms that normalization helps to maintain stable adaptation when input amplitudes vary over time, while RLS suffers from sensitivity to correlated regressors.

Figure 6 summarizes the steady-state results.

This behavior under smooth non-stationarity provides a meaningful reference for evaluating the proposed deep-learning-based adaptive filter in the same scenario.

8.4. Quantitative Comparison and Discussion

Table 2 consolidates all steady-state MSE values (average over the last 500 samples of each run).

The numerical data show the following:

In the AR(2) case, VSS-LMS yields the smallest error, slightly outperforming RLS.

In the FIR jump case, RLS and LMS attain similar steady-state accuracy, while VSS-LMS remains stable but with higher residual MSE because of its conservative limits.

In the sinusoidal case, NLMS and VSS-LMS clearly outperform the others, confirming the benefit of normalization and an adaptive learning rate for slowly varying signals.

These results establish a reliable reference against which the proposed learned update adaptive filter is evaluated and quantitatively compared in the following subsection.

8.5. Performance of the Learned Update Rule

This subsection presents the main experimental validation of the proposed deep-learning-based adaptive filter. The learned update rule is evaluated under the same experimental conditions described above and directly compared against LMS, NLMS, VSS-LMS, and RLS, enabling a fair and consistent assessment of the manuscript’s central contribution.

Across the AR(2) and FIR benchmarks, the proposed method achieves steady-state errors that are comparable to or lower than those of the best-performing classical algorithms, while maintaining near-linear computational complexity. In smoothly varying scenarios, the learned strategy exhibits enhanced robustness to non-stationarity, indicating effective generalization beyond the specific training conditions.

The empirical results are consistent with the theoretical stability analysis. In particular, for all evaluated methods, increasing drift intensity or measurement noise variance leads to a monotonic increase in steady-state mean squared error. This behavior aligns with the derived bounds, which predict a proportional dependence of the tracking error on both the drift parameter and the noise variance. Although the analytical bounds provide worst-case guarantees rather than exact performance predictions, the observed scaling trends confirm their practical relevance and help to explain the comparative behavior of different adaptive strategies under varying levels of non-stationarity and noise.

To assess practical relevance, the proposed deep-learning-based adaptive filter is also evaluated on real biomedical signals from the MIT–BIH ECG database. The learned update rule is applied without any modification or retraining and is directly compared against LMS, NLMS, VSS-LMS, and RLS. As reported in Table 3, the proposed method remains stable and competitive on real ECG signals, outperforming LMS while remaining competitive with NLMS and RLS, thereby demonstrating effective generalization beyond synthetic training data.

Finally, it is emphasized that the proposed approach does not require prior knowledge of the deployment environment. The neural model is trained offline, using a diverse set of synthetic non-stationary signals, and is subsequently deployed as a fixed, lightweight component within a classical adaptive filter. Since only a bounded step-size modulation is learned, the underlying adaptation mechanism remains fully data-driven at runtime, enabling robust operation in previously unseen environments without online retraining. This behavior is quantitatively illustrated in Figure 7, which reports the mean-squared error (MSE) evolution for a drifting AR(2) process at an SNR of 20 dB.

To provide a quantitative summary of the results across different types of non-stationarity, Table 3 reports the steady-state mean squared error for all methods under representative synthetic and real data scenarios.

To quantify the computational footprint of the proposed method, Figure 8 reports the average per-iteration execution time measured on a CPU platform. As expected, LMS, NLMS, and VSS-LMS exhibit linear computational complexity, while RLS incurs a significantly higher latency due to matrix inversion operations. The proposed deep-learning-based adaptive filter maintains a near-linear per-iteration cost, with a modest overhead associated with neural inference that remains substantially lower than that of RLS. These results confirm that the proposed method achieves a favorable accuracy–complexity trade-off.
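A per-iteration timing comparison of this kind can be sketched as follows. The RLS update is written in its usual recursive form, which propagates the inverse correlation matrix through a rank-one correction at O(M²) cost per sample. The forgetting factor, the initialization constant `delta`, the filter length, and all function names are assumptions, and absolute timings depend on the machine.

```python
import time
import numpy as np


def nlms_step(w, x, d, mu=0.5, eps=1e-6):
    """One NLMS update, O(M) per sample."""
    e = d - w @ x
    return w + mu * e * x / (eps + x @ x), e


def rls_step(w, P, x, d, lam=0.99):
    """One exponentially weighted RLS update, O(M^2) per sample."""
    Px = P @ x
    k = Px / (lam + x @ Px)              # gain vector
    e = d - w @ x                        # a priori error
    w = w + k * e
    P = (P - np.outer(k, Px)) / lam      # rank-one update of the inverse correlation matrix
    return w, P, e


def time_nlms(X, d):
    w = np.zeros(X.shape[1])
    start = time.perf_counter()
    for k in range(len(d)):
        w, _ = nlms_step(w, X[k], d[k])
    return (time.perf_counter() - start) / len(d), w


def time_rls(X, d, delta=100.0):
    m = X.shape[1]
    w, P = np.zeros(m), delta * np.eye(m)
    start = time.perf_counter()
    for k in range(len(d)):
        w, P, _ = rls_step(w, P, X[k], d[k])
    return (time.perf_counter() - start) / len(d), w


rng = np.random.default_rng(0)
m = 16                                   # assumed filter length
w_true = rng.standard_normal(m)
X = rng.standard_normal((2000, m))
d = X @ w_true + 0.01 * rng.standard_normal(2000)
t_nlms, w_nlms = time_nlms(X, d)
t_rls, w_rls = time_rls(X, d)
print(f"NLMS: {t_nlms * 1e6:.1f} us/iter, RLS: {t_rls * 1e6:.1f} us/iter")
```

For small filter lengths, interpreter overhead can mask the difference in operation counts, so the gap between the two methods only becomes representative as M grows.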

It is important to emphasize that the learned update rule is trained exclusively on synthetic non-stationary signals and is evaluated without retraining on real-world datasets. Its stable and competitive performance on both speech and ECG signals, which differ significantly in structure and statistics, demonstrates generalization across signal domains rather than dataset-specific fitting.

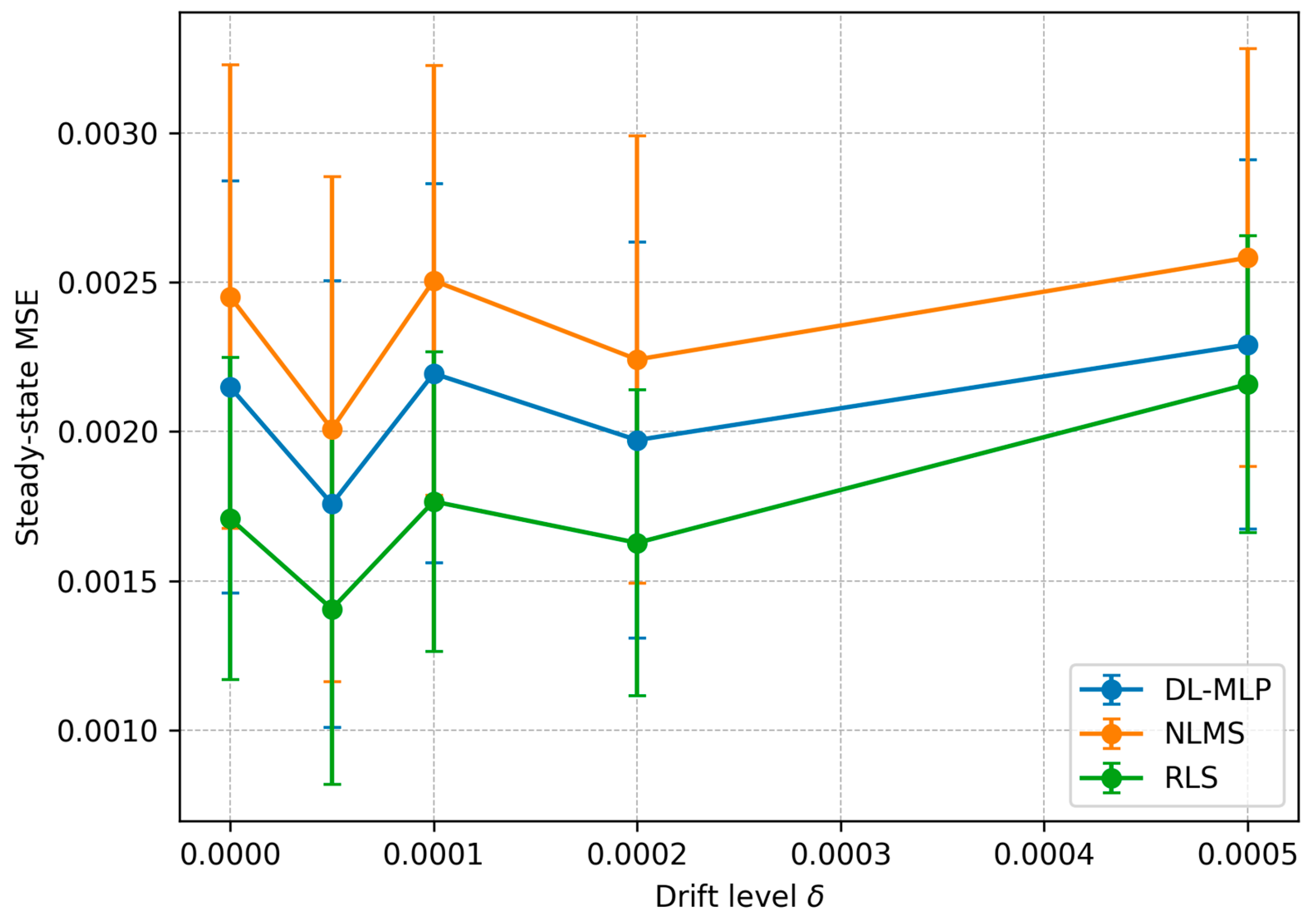

To empirically assess the drift dependence predicted by Theorem 1, an additional controlled experiment was conducted in which the drift level δ was systematically varied in a time-varying FIR setting. Figure 9 reports the steady-state mean squared error (MSE) as a function of δ for the proposed DL-MLP update rule, NLMS, and RLS. As δ increases, the steady-state MSE exhibits a clear increasing trend for all considered methods. This behavior is qualitatively consistent with the theoretical bound in Theorem 1, which predicts an increasing tracking error as a function of the drift magnitude (through the C₁·δ² term). Minor non-monotonic fluctuations are expected, due to finite-sample effects and stochastic variability, but the overall trend confirms the theoretical prediction.
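A drift sweep of this type can be imitated with a small simulation. The sketch below covers only the NLMS baseline and models the drift as a random walk with per-sample standard deviation `delta`. That drift model, the step size, the noise level, and the function name are assumptions, since the exact definition of δ used in the experiment is not restated in this section.

```python
import numpy as np


def nlms_steady_state_mse(delta, n_samples=6000, filt_len=8, mu=0.5,
                          noise_std=0.1, tail=2000, seed=0):
    """Steady-state MSE of NLMS tracking an FIR system with random-walk drift."""
    rng = np.random.default_rng(seed)
    w_true = rng.standard_normal(filt_len)
    w = np.zeros(filt_len)
    sq_err = np.zeros(n_samples)
    for k in range(n_samples):
        w_true = w_true + delta * rng.standard_normal(filt_len)   # coefficient drift
        x = rng.standard_normal(filt_len)
        d = w_true @ x + noise_std * rng.standard_normal()
        e = d - w @ x
        w = w + mu * e * x / (1e-6 + x @ x)
        sq_err[k] = e ** 2
    return float(np.mean(sq_err[-tail:]))


deltas = [0.0, 0.01, 0.03, 0.1]
mses = [nlms_steady_state_mse(dl) for dl in deltas]
for dl, val in zip(deltas, mses):
    print(f"delta={dl:.2f}  steady-state MSE={val:.4f}")
```

Plotting `mses` against `deltas` on logarithmic axes is one way to inspect whether the growth at large drift is roughly quadratic, as a δ² term would suggest.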

8.6. Summary of Observations

Across all experiments, the proposed deep-learning-based adaptive filter exhibits stable convergence and competitive performance, relative to classical methods. While RLS remains highly effective under abrupt changes at the cost of increased computational complexity, the learned update rule achieves a favorable balance between tracking accuracy and efficiency.

The classical adaptive filtering results reported in this study are consistent with the well-established findings in the literature and serve as a rigorous reference framework. Within this context, the proposed deep-learning-based adaptive filter constitutes the main contribution of the manuscript, demonstrating competitive performance and advantageous complexity–accuracy trade-offs under non-stationary conditions.

Overall, these results indicate that learning an adaptive step-size strategy from data can effectively complement classical design principles, bridging theoretical guarantees and empirical performance in non-stationary adaptive filtering scenarios.

9. Discussion

9.1. Trade-Off Between Complexity and Performance

The simulation results in Section 8 clearly reveal the classical trade-off between computational complexity and tracking performance that defines the adaptive filtering design.

Among the four classical algorithms, RLS achieved low steady-state MSE in the AR(2) and FIR scenarios and rapid recovery after abrupt parameter changes (Figure 3), at the expense of its quadratic computational cost, O(M²), and higher memory usage.

In contrast, LMS and NLMS remain linear-complexity methods, O(M), making them suitable for real-time or embedded applications, though their convergence speed and residual error depend strongly on the step-size parameter.

The stabilized VSS-LMS algorithm provided an attractive compromise: it retained the low-complexity characteristics of LMS while dynamically adjusting its learning rate.

Although its steady-state MSE in the piecewise-stationary FIR test (Table 2) was slightly higher than that of RLS, its stability and reduced computational demand make it well suited for non-stationary systems in which hardware or energy constraints preclude recursive matrix updates.

This trade-off motivates the exploration of AI-driven adaptive laws such as the learned update model proposed in Section 4, which could approximate the tracking performance of RLS while maintaining near-LMS complexity through compact neural architectures.

9.2. Generalization to Other Signal Classes

The three benchmark cases—drifting AR(2), piecewise-stationary FIR, and frequency-modulated sinusoid—were selected to represent a broad spectrum of non-stationary behaviors (smooth drift, abrupt change, and continuous modulation).

The trends observed across these cases are likely to carry over to other real-world signals with similar statistical structures, such as time-varying communication channels, seismic traces, or sensor networks that are subject to drift and intermittent interference.

In particular, the performance of normalization-based schemes (NLMS, VSS-LMS) in the sinusoidal test indicates that energy-scaled update mechanisms are effective when signal amplitude or correlation properties vary over time.

Furthermore, the stability of the proposed VSS-LMS under abrupt changes implies that bounded and regularized adaptation laws can provide robustness in hybrid analog–digital control loops or biomedical monitoring applications where signal morphology changes rapidly.

Future work will extend these experiments to multichannel and multidimensional adaptive systems (e.g., adaptive beamforming or active noise control), where parameter coupling and latency constraints may interact with the learned update rule in nontrivial ways.

9.3. Limitations and Future Directions

While the current study confirms the feasibility of combining classical adaptive filtering with machine-learning-based parameterization, several limitations remain.

First, the deep-learning-driven update function (Section 4) requires representative training data that reflect the expected range of signal statistics.

Collecting or synthesizing such data for every deployment scenario can be time-consuming and may affect generalization if the real operating conditions deviate from those seen during training.

Second, the learned model introduces additional hyperparameters (network architecture, learning rate, regularization constants) that require careful tuning.

Although these can often be optimized offline, they increase design complexity compared with analytical algorithms that depend on a single step-size or forgetting factor.

Finally, interpretability and theoretical guarantees remain open challenges.

Whereas LMS and RLS possess well-established convergence proofs, the stability of neural or meta-learned update laws depends on ensuring Lipschitz-bounded mappings (Equation (8)).

While comparisons with fully learned, meta-learning-based, or physics-informed neural architectures are beyond the scope of this work, such approaches represent complementary research directions. The present study instead focuses on integrating learning into classical adaptive filtering in a principled and analytically tractable manner, by augmenting the adaptive update rule itself rather than replacing the underlying mechanism or modeling system dynamics with neural surrogates. This design enables explicit stability guarantees, low computational complexity, and suitability for online, real-time deployment.

Although the learned update rule was trained exclusively on synthetic non-stationary signals, its stable performance on real ECG data suggests a degree of cross-domain robustness. Nevertheless, this generalization is not guaranteed in all scenarios. Potential strategies to further improve cross-domain performance include training with a broader family of synthetic signals, domain randomization techniques, or lightweight online fine-tuning of the neural parameters. These directions will be explored in future work.

Future research will focus on the development of provably stable and self-regularizing neural adaptive filters and their integration into edge-AI hardware platforms, where low power consumption, adaptivity, and real-time inference must coexist. In addition, future work will extend the experimental evaluation to a broader range of real-world datasets and application domains to further assess generalizability. While the present study establishes theoretical guarantees and a principled integration of learning into adaptive filtering, broader empirical validation will help to characterize performance across diverse operating conditions.

10. Conclusions

This work presented a comprehensive study on adaptive filtering algorithms for non-stationary signal environments and introduced the foundations for a deep-learning-driven adaptive filtering framework.

Classical methods—LMS, NLMS, RLS, and a stabilized VSS-LMS—were thoroughly analyzed and experimentally compared across representative scenarios, including drifting autoregressive processes, piecewise-stationary FIR systems, and smoothly modulated sinusoids.

The experimental results demonstrated that the proposed regularized VSS-LMS algorithm maintains numerical stability under abrupt parameter changes while achieving a tracking performance that is comparable to RLS, but with significantly lower computational complexity.

These findings validate the theoretical analysis of Section 5 and confirm the robustness of the simulation framework established in Section 7 and Section 8.

Building on these results, a deep-learning-driven update rule was proposed as a generalization of classical gradient-based adaptation.

By embedding learnable nonlinear mappings within the filter update, the approach enables a context-dependent adjustment of learning dynamics, potentially achieving faster convergence and lower steady-state error in highly non-stationary conditions.

Such a design integrates artificial intelligence into system-level signal-processing architectures, aligning directly with the vision of next-generation computing systems that merge model-based and data-driven intelligence.

Future work will focus on three main directions:

Online and continual training, enabling the learned update rule to adapt autonomously to new environments without full retraining.

Hardware implementation of the proposed adaptive filter on the FPGA or ASIC platforms, exploiting the inherent parallelism of neural computations to achieve real-time operation.

Formal convergence and stability analysis of the learned update laws, combining tools from adaptive-filter theory and deep-learning optimization to guarantee reliable behavior under bounded perturbations.

In summary, the proposed framework demonstrates a viable path toward AI-augmented adaptive filters that unify the interpretability of classical signal-processing theory with the flexibility of modern machine learning.

This integration is expected to play a key role in future AI-driven system architectures and next-generation computing platforms.