Multimodal Intelligent Perception at an Intersection: Pedestrian and Vehicle Flow Dynamics Using a Pipeline-Based Traffic Analysis System

Abstract

1. Introduction

2. Related Work

2.1. Literature Review

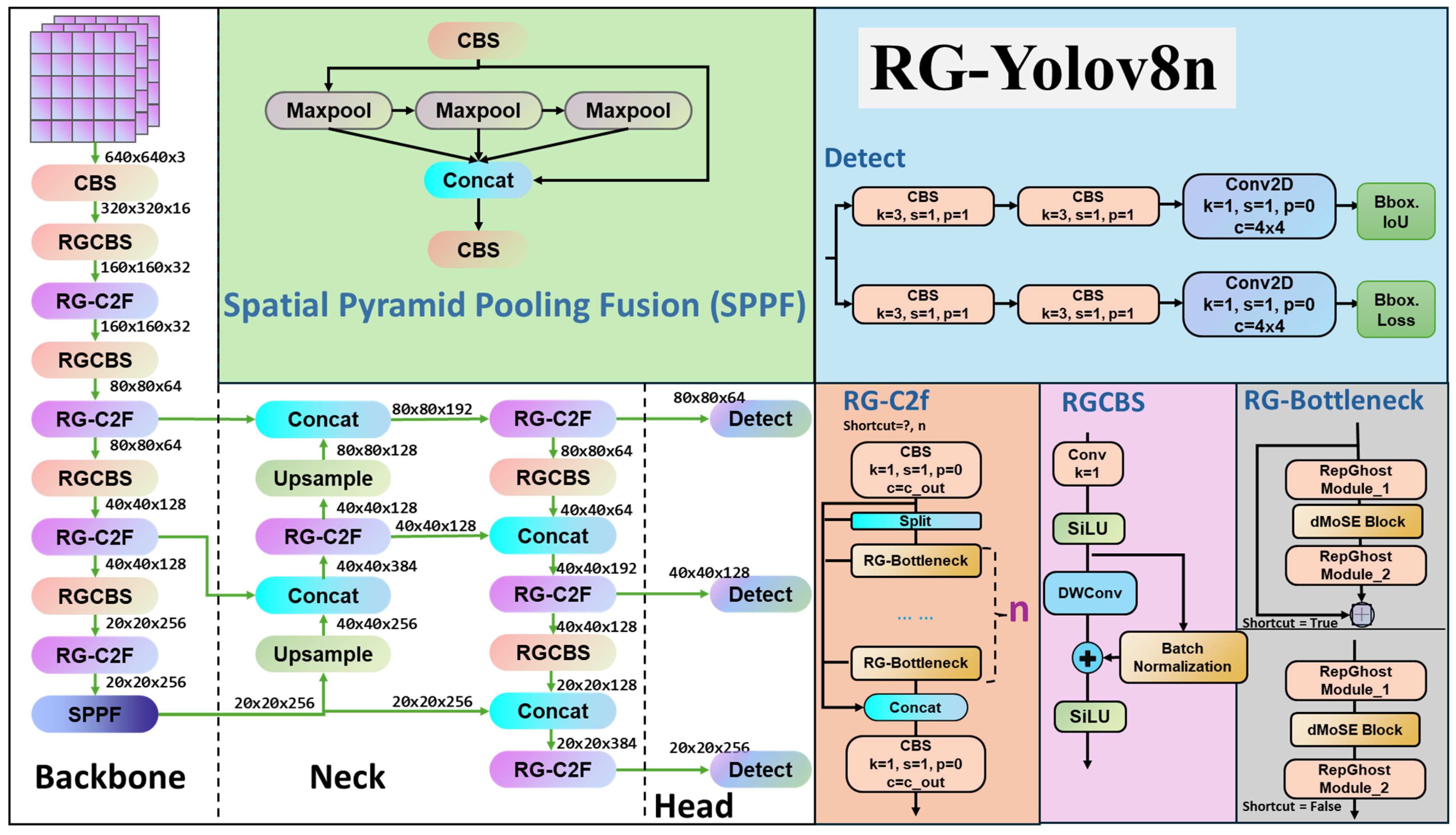

2.2. Object Detection Models

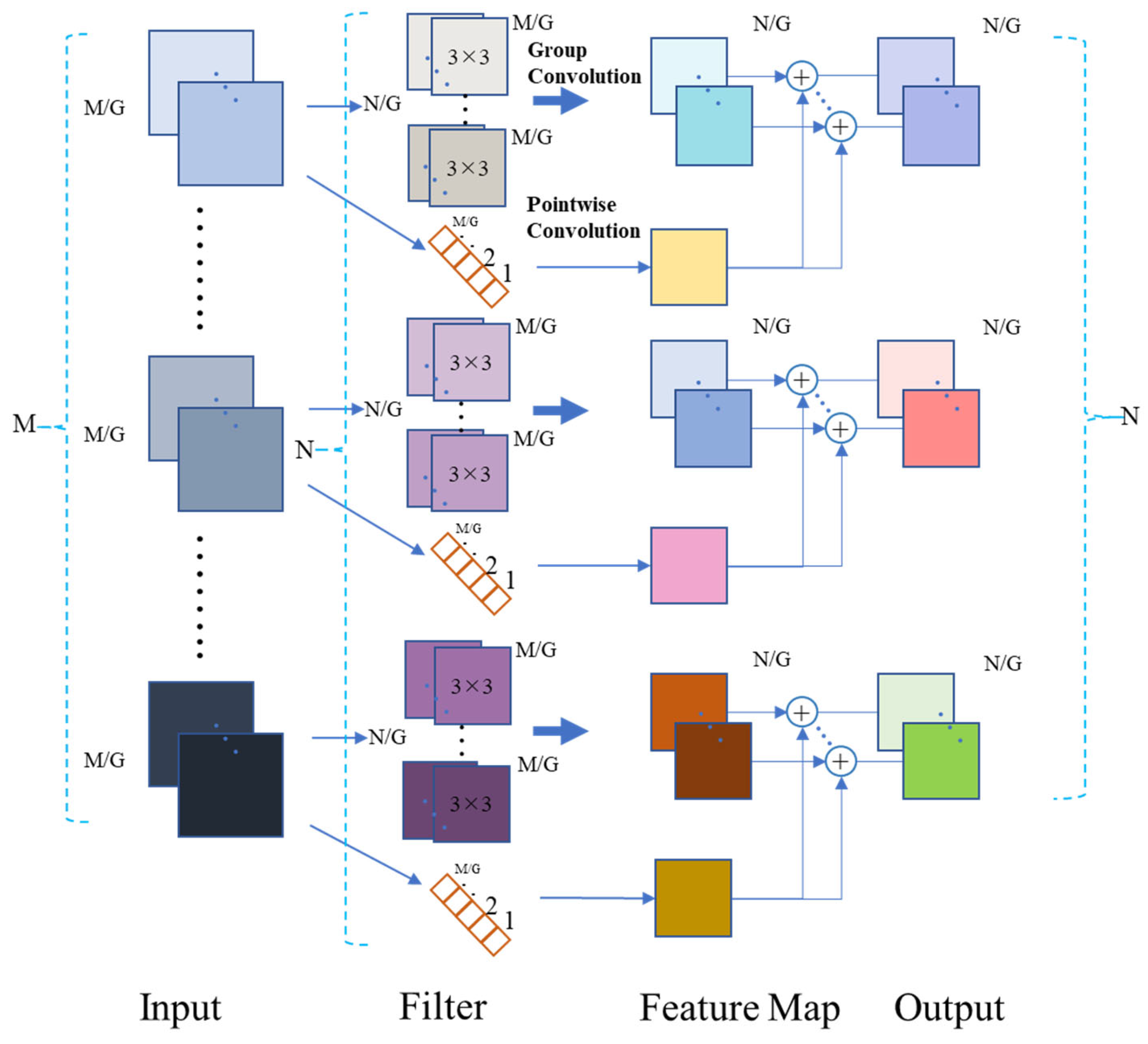

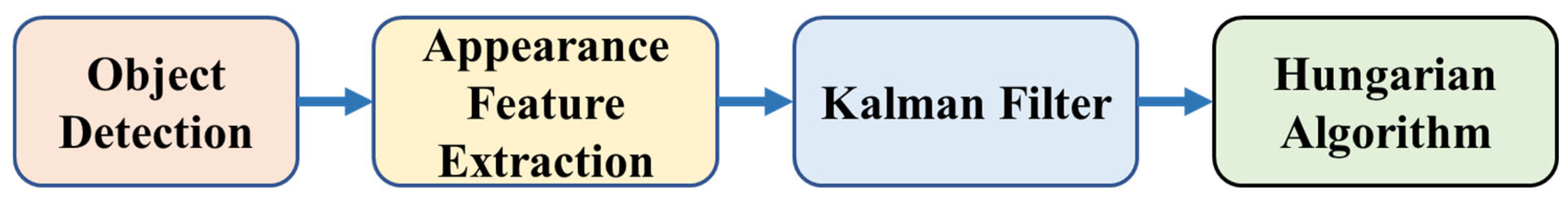

2.3. Reparameterization Ghost Bottleneck and Dual Convolution

2.4. Large Language Models

2.5. Retrieval-Augmented Generation

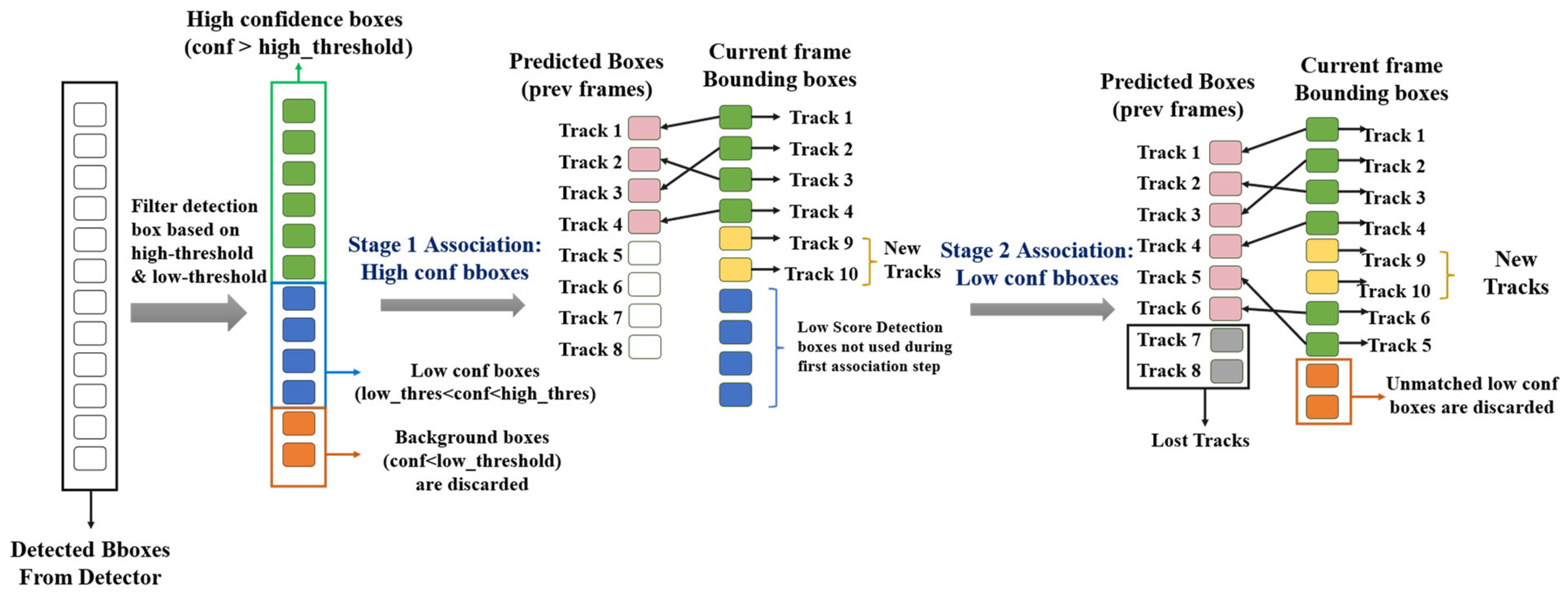

2.6. Multiple Object Tracking (MOT)

3. Method

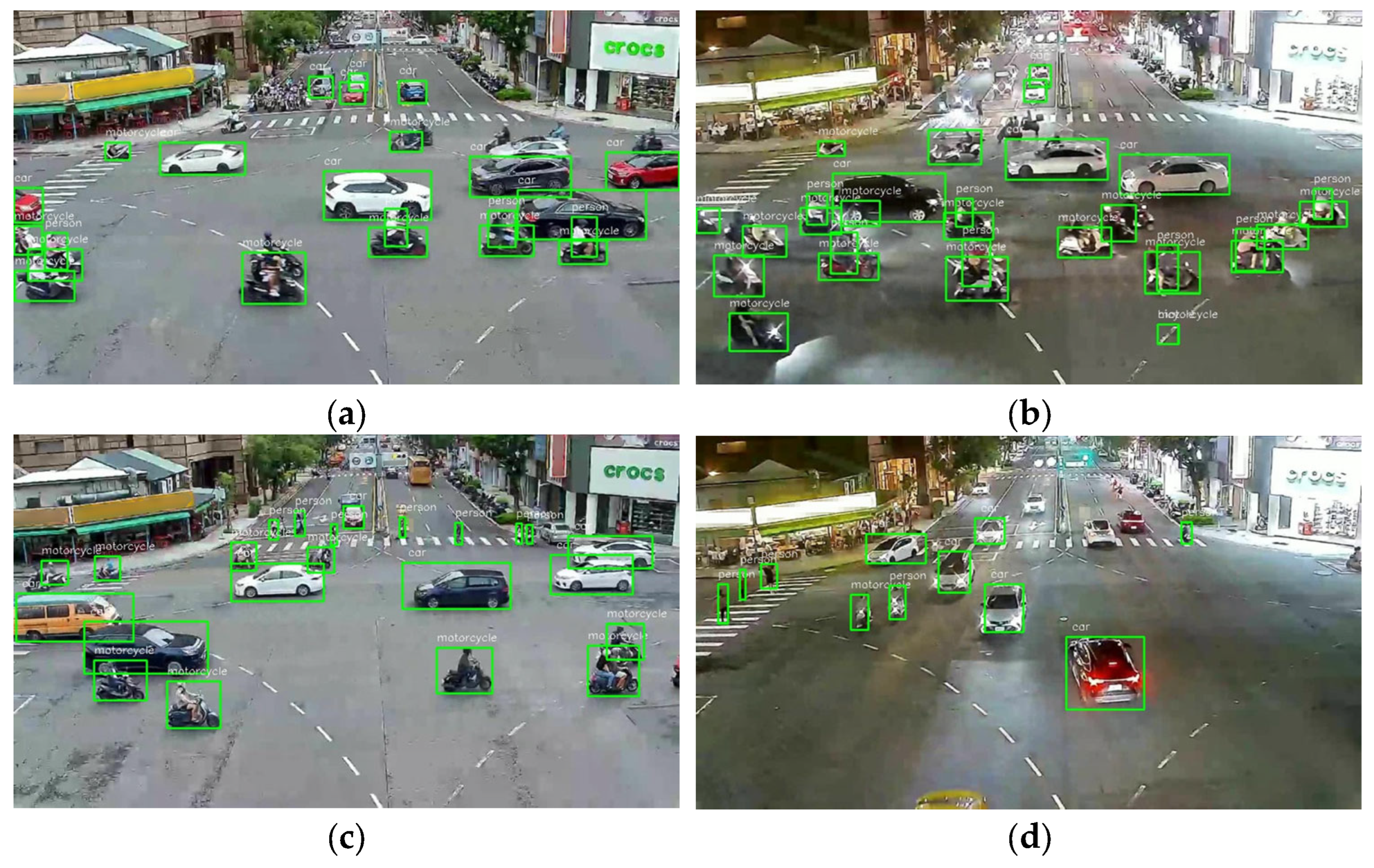

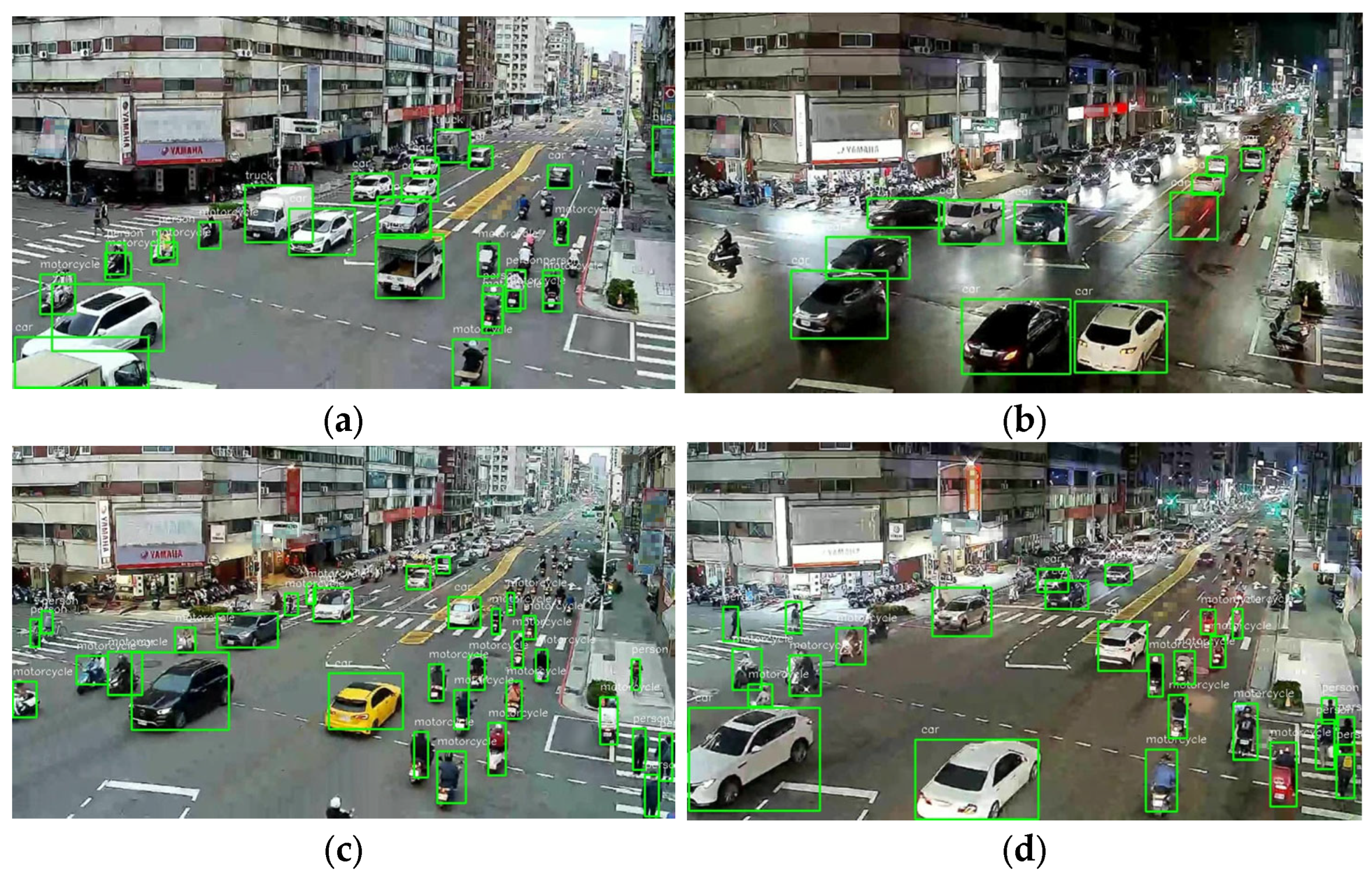

3.1. Data Collection, Processing, and Model Training & Testing

3.2. Improved Object Detection Models

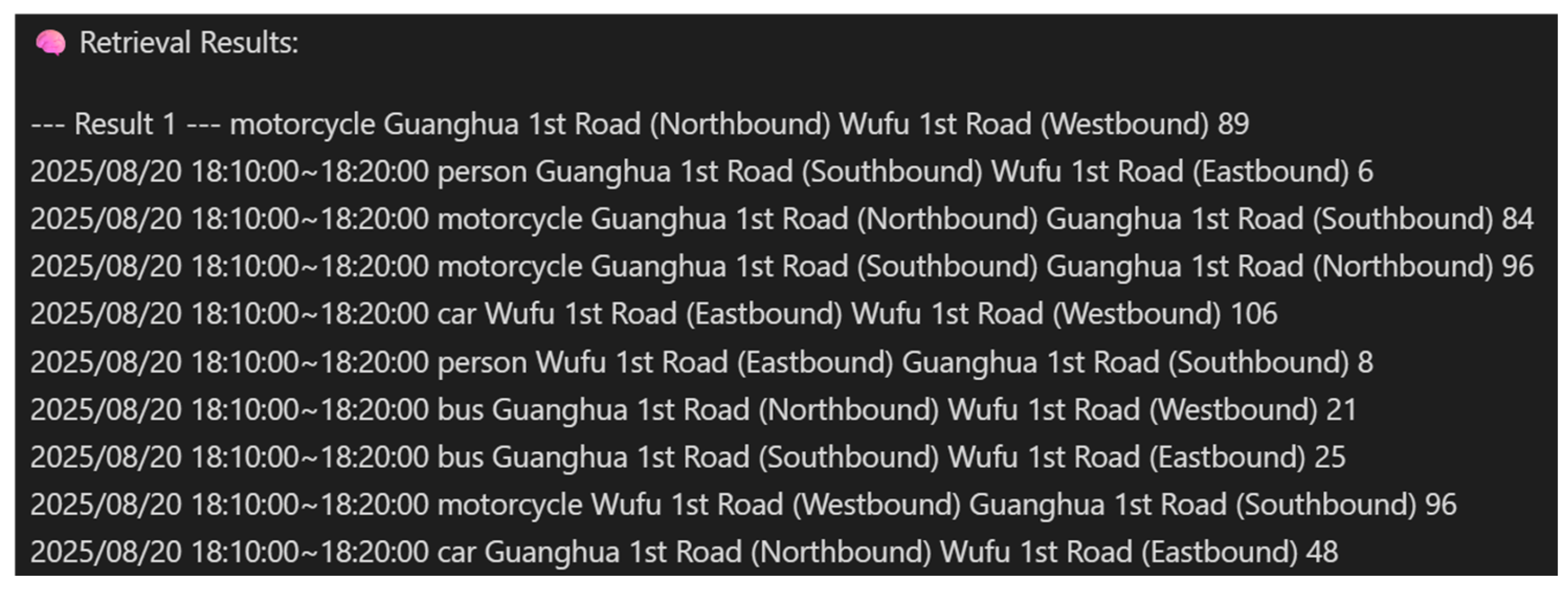

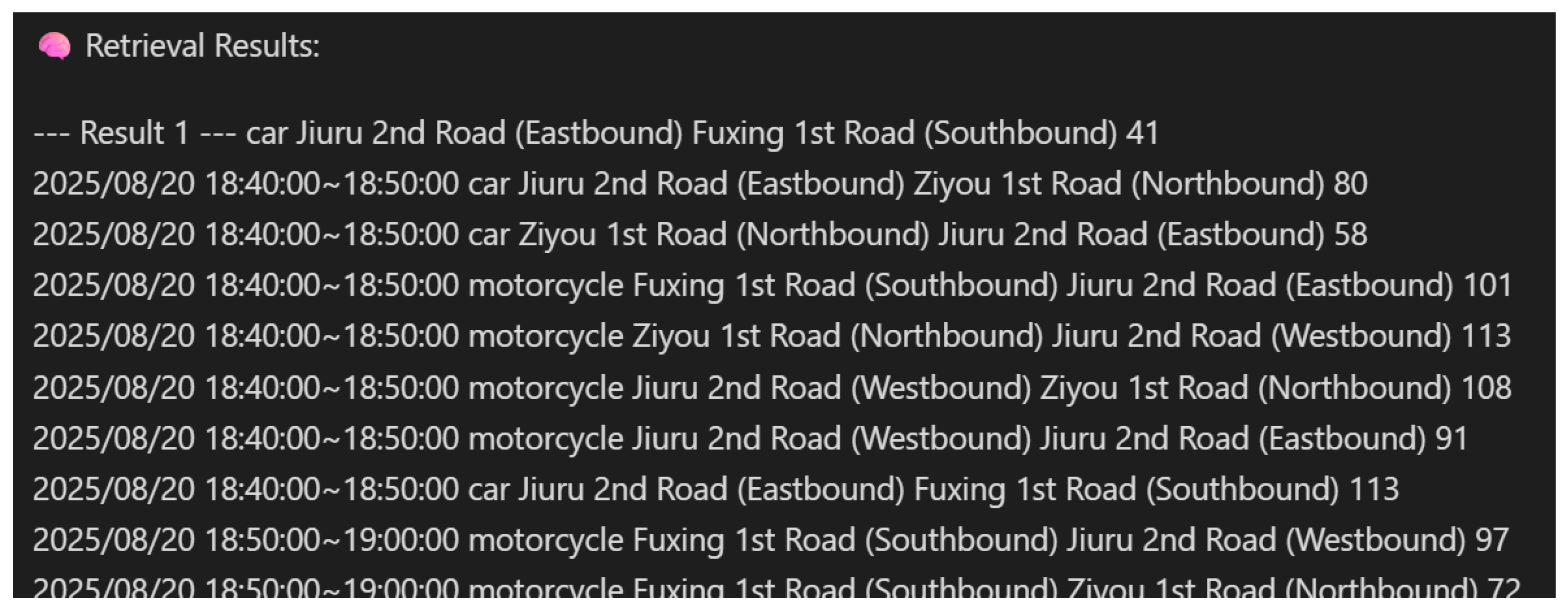

3.3. Traffic Flow Calculation and Edge-Based Real-Time Image Acquisition

- (1) Vehicle Type: e.g., car, truck, bus;

- (2) Entry: the direction from which the vehicle enters the intersection;

- (3) Exit: the direction through which the vehicle leaves the intersection;

- (4) Count: the number of vehicles passing through the specified entry and exit directions during the time interval.
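The four fields above can be read as one row of a per-interval flow table. The following is a minimal sketch of that record and of how completed crossings might be grouped into counts. The class name `FlowRecord`, the function `aggregate_flows`, the tuple input format, and the direction labels are all illustrative assumptions, not the system's actual implementation.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowRecord:
    """One row of the traffic flow table for a single time interval."""
    vehicle_type: str  # e.g., "car", "truck", "bus"
    entry: str         # direction from which the vehicle enters
    exit: str          # direction through which the vehicle leaves
    count: int         # vehicles observed for this (type, entry, exit)


def aggregate_flows(crossings):
    """Group completed crossings into FlowRecord rows.

    `crossings` is an iterable of (vehicle_type, entry, exit) tuples, one per
    tracked vehicle that finished crossing during the interval. This input
    format is a hypothetical stand-in for the tracker output.
    """
    counts = Counter(crossings)
    return [
        FlowRecord(vtype, entry, exit_, n)
        for (vtype, entry, exit_), n in sorted(counts.items())
    ]


# Made-up crossings for one interval (illustrative values only).
crossings = [
    ("car", "north", "south"),
    ("car", "north", "south"),
    ("bus", "east", "west"),
    ("car", "north", "east"),
]
records = aggregate_flows(crossings)
for row in records:
    print(row)
```

Keying the counter on the full (type, entry, exit) tuple keeps turning movements separate, so the same structure can serve both per-direction and per-vehicle-type summaries.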

3.4. Acquisition of Urban Environment and Traffic-Related Monitoring Data

- (1) Site Name (sitename): Name of the monitoring station.

- (2) AQI (aqi): Air Quality Index.

- (3) Primary Pollutant (pollutant): The pollutant that contributes most to the AQI.

- (4) PM2.5/PM10: Concentrations of fine particulate matter (PM2.5) and suspended particles (PM10).

- (5) Status (status): Air quality status (e.g., Good, Moderate, Unhealthy for Sensitive Groups).

- (6) Publish Time (publishtime): Data update time.
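As a rough illustration of how such a monitoring record might be normalized before storage, the sketch below maps a raw dictionary onto a typed record. The field keys `sitename`, `aqi`, `pollutant`, `status`, and `publishtime` come from the list above. The keys `pm2.5` and `pm10`, the class and function names, and every sample value are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AirQualityRecord:
    sitename: str
    aqi: Optional[int]
    pollutant: str
    pm25: Optional[float]
    pm10: Optional[float]
    status: str
    publishtime: str  # kept as text; the timestamp format is not assumed


def _to_number(value, cast):
    """Cast a raw field to a number; return None for blank or invalid values."""
    try:
        return cast(value)
    except (TypeError, ValueError):
        return None


def parse_record(raw: dict) -> AirQualityRecord:
    """Build a typed record from one raw station entry."""
    return AirQualityRecord(
        sitename=raw.get("sitename", ""),
        aqi=_to_number(raw.get("aqi"), int),
        pollutant=raw.get("pollutant", ""),
        pm25=_to_number(raw.get("pm2.5"), float),  # key name is an assumption
        pm10=_to_number(raw.get("pm10"), float),   # key name is an assumption
        status=raw.get("status", ""),
        publishtime=raw.get("publishtime", ""),
    )


# Illustrative raw entry; the values are invented for the example.
raw = {
    "sitename": "Qianjin",
    "aqi": "25",
    "pollutant": "",
    "pm2.5": "8",
    "pm10": "",
    "status": "Good",
    "publishtime": "2025/01/01 17:00:00",
}
record = parse_record(raw)
print(record)
```

Blank or missing numeric fields become `None` rather than zero, so a station that did not report a value is not mistaken for one that measured clean air.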

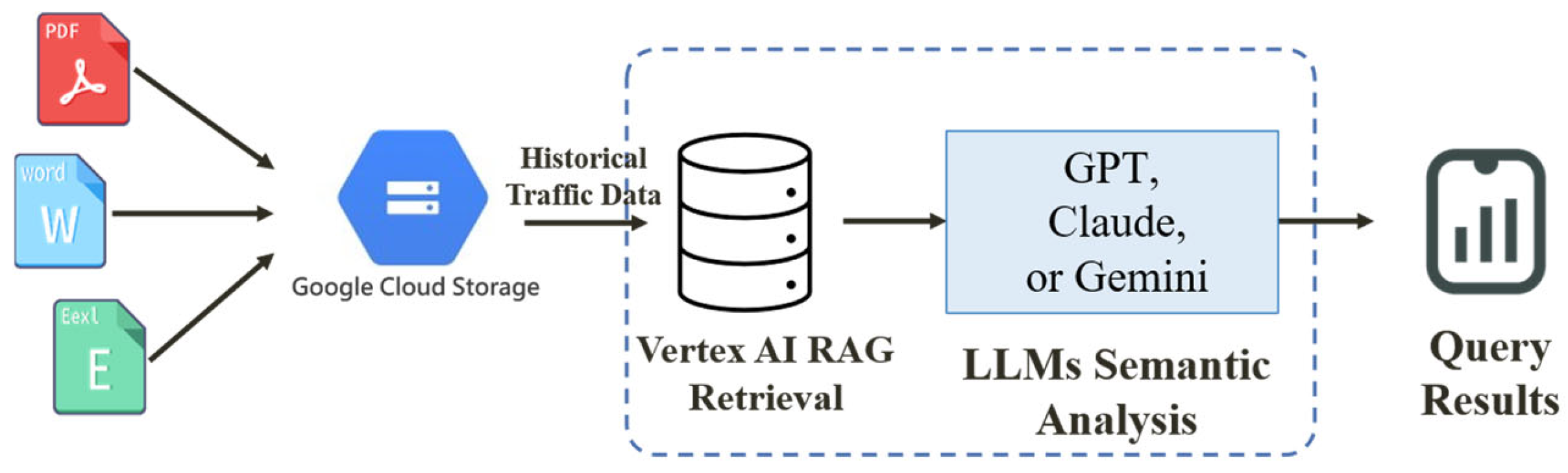

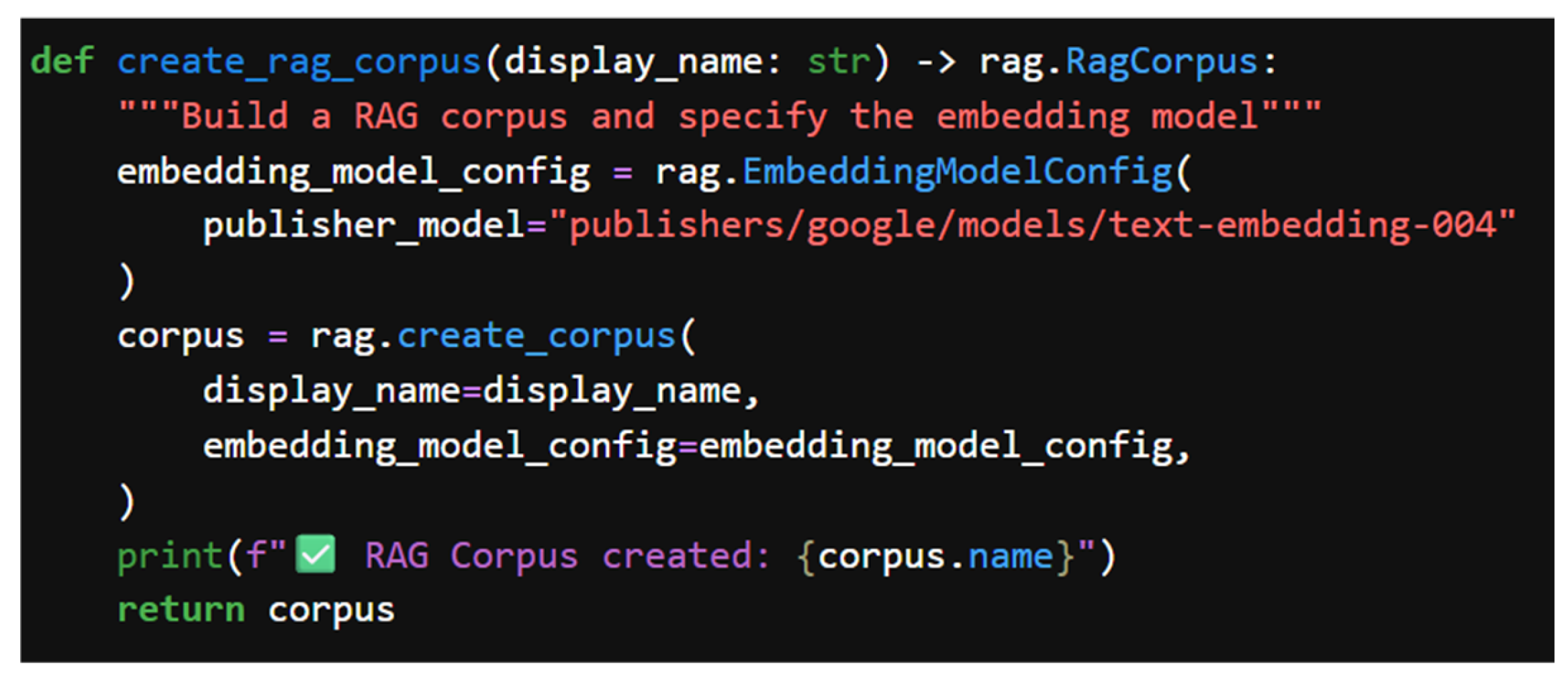

3.5. Data Management and Retrieval Integration

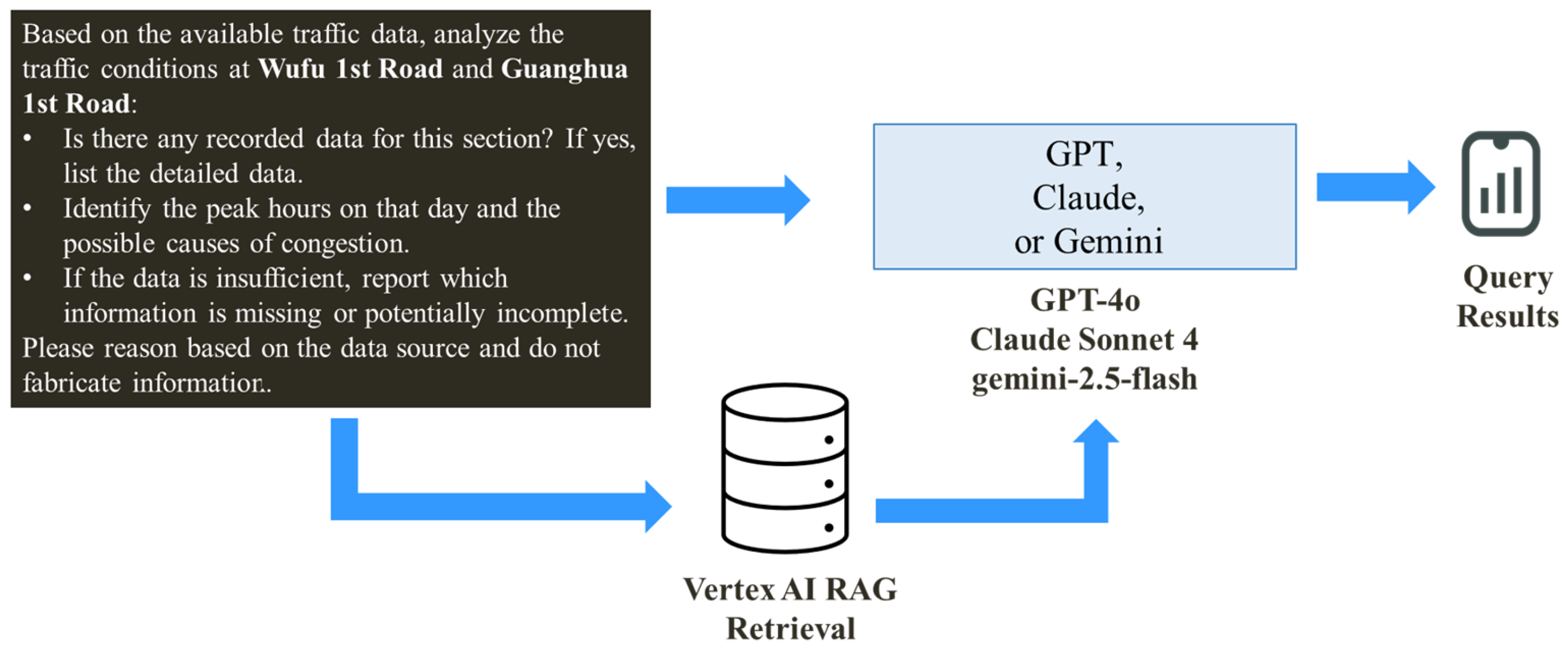

3.6. AI Analysis and Decision Support

3.7. User Interface Webpage in the PTAS

4. Experimental Results and Discussion

4.1. Experiment Setting

4.2. Object Detection and Tracking Data Collection and Model Training

4.3. Object Detection and Tracking Model Parameter Settings

4.4. Knowledge Base Construction, RAG Retrieval, and LLMs Query Process

4.5. PTAS User Interface Webpage and Analysis Reports

4.6. PTAS Traffic Analysis Results Assessment

4.7. Performance Evaluation

4.8. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Resource | Workstation |
|---|---|
| GPU | NVIDIA GeForce RTX 4070 Ti |
| CPU | Intel(R) Xeon(R) W-2223 CPU @ 3.60 GHz |
| Memory | DDR5-5600 RAM 16 GB × 2 |
| Storage | KXG60ZNV512G KIOXIA 1 TB |

| Software | Version |
|---|---|
| Python | 3.9.18 |
| Anaconda | 23.7.4 |
| Flask | 3.1.0 |
| Spyder | 5.5.1 |
| Jupyter Notebook | 1.0.0 |
| OpenCV | 4.11.0.86 |
| DarkLabel | 2.4 |

| Number of Images | Object Classification | Data Division Ratio |
|---|---|---|
| 6633 images | 5 types (pedestrians, vehicles, trucks, buses, motorcycles) | Training set 87.5% (for detection only); validation set 8.3% (for detection only); test set 4.2% (for both detection and tracking) |

| Number of Images | Object Classification | Data Division Ratio |
|---|---|---|
| 1000 images | 5 types (pedestrians, vehicles, trucks, buses, motorcycles, etc.) | Training set 80% (for detection only); test set 20% (for both detection and tracking) |

| Factor | GPT-4o | Claude Sonnet 4 | Gemini-2.5-Flash |
|---|---|---|---|
| Data Description | Simplified summary focusing on covered time, tool types, and directions | Very clear, listing data sources and analysis details | Provides actual numerical examples (e.g., 17:00–17:10, vehicle and pedestrian flow data) |
| Peak Hour Analysis | Simplified as mainly 18:10–19:00 | Pinpoints 18:10–19:00, highlighting peak for vehicles and motorcycles | Identifies dual peaks at 07:00–09:00 and 17:00–19:00 |
| Congestion Cause Analysis | Attributed to commuting concentration and arterial road pressure | Due to concentrated evening demand and arterial road intersection pressure | High traffic volume, narrow road width, and multiple intersections |
| Traffic Accident Analysis & Suggestions | Mentions accident in Qianzhen District (not at the intersection), but notes diversion effect | Mentions accident in Qianzhen District (not at the intersection), but notes diversion effect | Mentions accidents in Qianzhen and Sanmin, emphasizing “no accidents at the intersection.” |
| Construction Impact & Suggestions | Briefly states “no construction.” | Clearly states “no construction.” | States “no construction.” |
| Air Quality Analysis | Summarized as “Good, suitable for outdoor activities.” | Provides data from Qianjin and Fuxing stations (AQI 25–43), concluding “suitable for outdoor activities.” | Mentions the same stations, with a consistent conclusion |
| Noise Analysis | Summarized as “Lingya station meets standards, minor impact.” | Provides Lingya station data (day/evening/night), confirms standards met, minor impact | Provides Lingya + Fude station data, notes higher values at Fude, suggests soundproofing |
| Data Gaps Noted | Mentions some limitations (e.g., lack of location details) | Lists comprehensively (time, speed, signals, capacity, weather, etc.) | Points out missing data such as “vehicle speed, flow rate.” |
| Structure, Logic & Readability | Highly condensed, concise, and easy to read | Clear and well-structured, complete point by point | Bullet-point style with many examples, slightly lengthy and cluttered |
| Improvement Suggestions | Divert peak-hour traffic, continue monitoring | Provides specific suggestions to supplement data | Suggests soundproofing measures |
| Mode of Expression | Provides a concise view | Provides a comprehensive view | Provides a comprehensive view |

| Metrics | Yolov8n | RG-Yolov8n | DuCRG-Yolov8n | Yolov11n | RG-Yolov11n | DuCRG-Yolov11n |
|---|---|---|---|---|---|---|
| Speed (fps) | 46.08 | 46.72 | 47.25 | 63.52 | 65.18 | 68.25 |
| Precision (%) | 88.7 | 88.8 | 89.0 | 89.4 | 89.6 | 91.4 |
| Recall (%) | 86.8 | 86.2 | 82.7 | 86.1 | 86.3 | 87.1 |
| F1_score | 0.862 | 0.875 | 0.857 | 0.877 | 0.878 | 0.4435 |
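For reference, the F1 score is conventionally the harmonic mean of precision and recall, F1 = 2PR / (P + R). The sketch below applies that standard definition to the precision and recall values tabulated above. It assumes the table uses this definition, and the function and variable names are illustrative. Under this formula most entries agree with the table to within rounding, but not all of them: for DuCRG-Yolov11n the formula gives about 0.892 rather than the tabulated 0.4435.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both given as fractions)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall) taken from the table above, converted from percent.
reported = {
    "Yolov8n": (0.887, 0.868),
    "RG-Yolov8n": (0.888, 0.862),
    "DuCRG-Yolov8n": (0.890, 0.827),
    "Yolov11n": (0.894, 0.861),
    "RG-Yolov11n": (0.896, 0.863),
    "DuCRG-Yolov11n": (0.914, 0.871),
}

f1_by_model = {name: f1_score(p, r) for name, (p, r) in reported.items()}
for name, value in f1_by_model.items():
    print(f"{name}: {value:.3f}")
```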

| Metrics | ByteTrack: Yolov8n | ByteTrack: RG-Yolov8n | ByteTrack: DuCRG-Yolov8n | ByteTrack: Yolov11n | ByteTrack: RG-Yolov11n | ByteTrack: DuCRG-Yolov11n | Deep SORT: Yolov8n | Deep SORT: RG-Yolov8n | Deep SORT: DuCRG-Yolov8n | Deep SORT: Yolov11n | Deep SORT: RG-Yolov11n | Deep SORT: DuCRG-Yolov11n |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| MOTA | 0.5225 | 0.406 | 0.5445 | 0.5155 | 0.6205 | 0.7195 | 0.203 | 0.291 | 0.326 | 0.291 | 0.345 | 0.4165 |
| IDF1 | 0.6765 | 0.701 | 0.7325 | 0.6775 | 0.741 | 0.851 | 0.623 | 0.6145 | 0.6345 | 0.599 | 0.656 | 0.679 |
| MOTP | 0.1153 | 0.1075 | 0.1012 | 0.1022 | 0.1076 | 0.08735 | 0.1158 | 0.1269 | 0.11005 | 0.10965 | 0.12815 | 0.1012 |
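The MOTA and IDF1 rows above are usually computed from error counts accumulated over a test sequence. The sketch below follows the commonly used definitions: MOTA = 1 − (FN + FP + IDSW) / GT, and IDF1 = 2·IDTP / (2·IDTP + IDFP + IDFN). Whether the reported values were computed exactly this way is an assumption. The function names and the counts in the example are invented for illustration and are not taken from the experiments.

```python
def mota(false_negatives: int, false_positives: int,
         id_switches: int, ground_truth: int) -> float:
    """Multiple Object Tracking Accuracy from summed error counts."""
    if ground_truth <= 0:
        raise ValueError("ground_truth must be positive")
    errors = false_negatives + false_positives + id_switches
    return 1.0 - errors / ground_truth


def idf1(idtp: int, idfp: int, idfn: int) -> float:
    """Identity F1 from identity-level true/false positives and negatives."""
    denominator = 2 * idtp + idfp + idfn
    return 2 * idtp / denominator if denominator else 0.0


# Hypothetical counts for one sequence (not from the paper).
example_mota = mota(false_negatives=150, false_positives=100,
                    id_switches=30, ground_truth=1000)
example_idf1 = idf1(idtp=800, idfp=150, idfn=130)
print(f"MOTA = {example_mota:.3f}, IDF1 = {example_idf1:.3f}")
```

Because MOTA subtracts all three error types from 1, it can fall below zero when a tracker makes more errors than there are ground-truth objects, whereas IDF1 always stays between 0 and 1.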


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Chang, B.R.; Tsai, H.-F.; Chen, C.-C. Multimodal Intelligent Perception at an Intersection: Pedestrian and Vehicle Flow Dynamics Using a Pipeline-Based Traffic Analysis System. Electronics 2026, 15, 353. https://doi.org/10.3390/electronics15020353
