A Frequency Identification Method for Differential Frequency-Hopping Signals Based on the Super-Resolution Reconstruction of Time–Frequency Images

Abstract

1. Introduction

- We propose CU-Net, a network that reconstructs super-resolution time–frequency images while suppressing noise components.

- We design a ResNet structure for recognizing frequency-hopping sequences.

- Using various datasets, we demonstrate that frequency-hopping sequences can be effectively identified under low-SNR conditions.

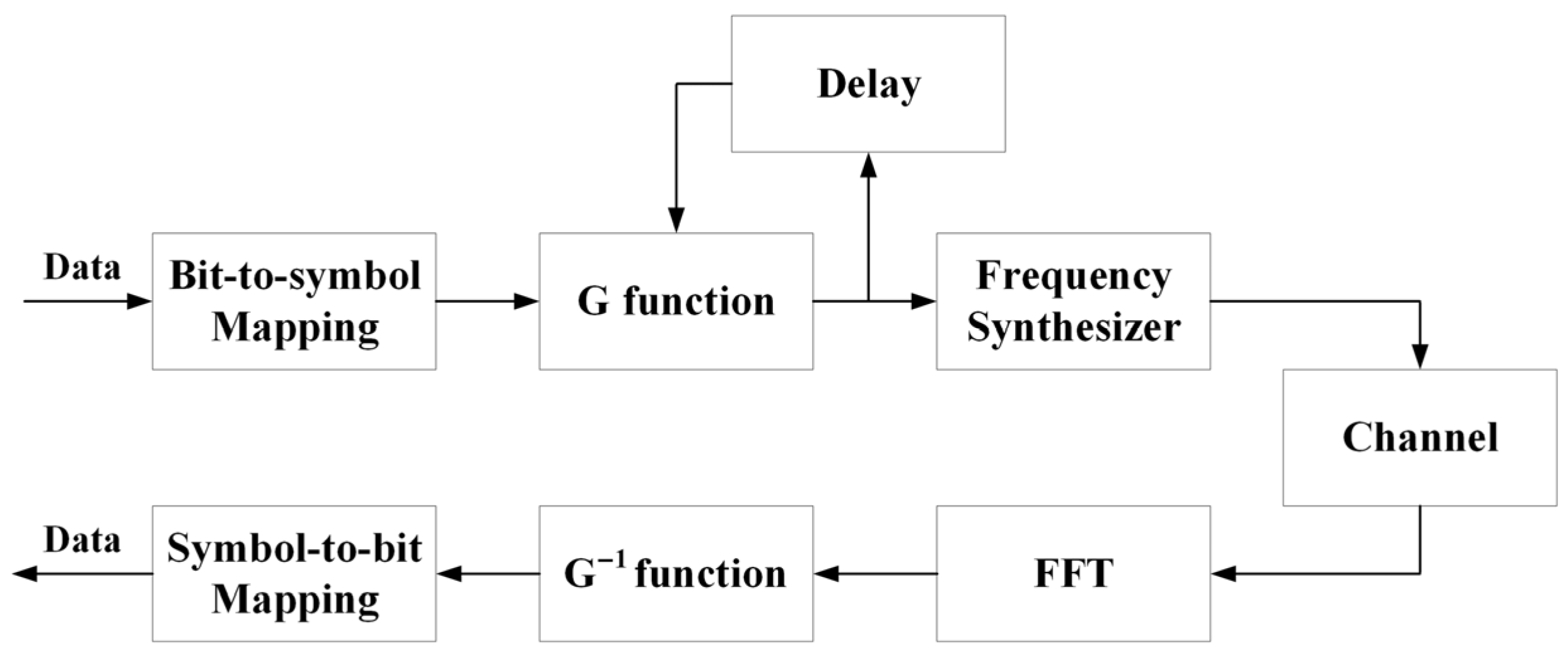

2. Models of the DFH Communication System

2.1. Theory Models of the DFH Communication System

2.2. The Principles of G Function

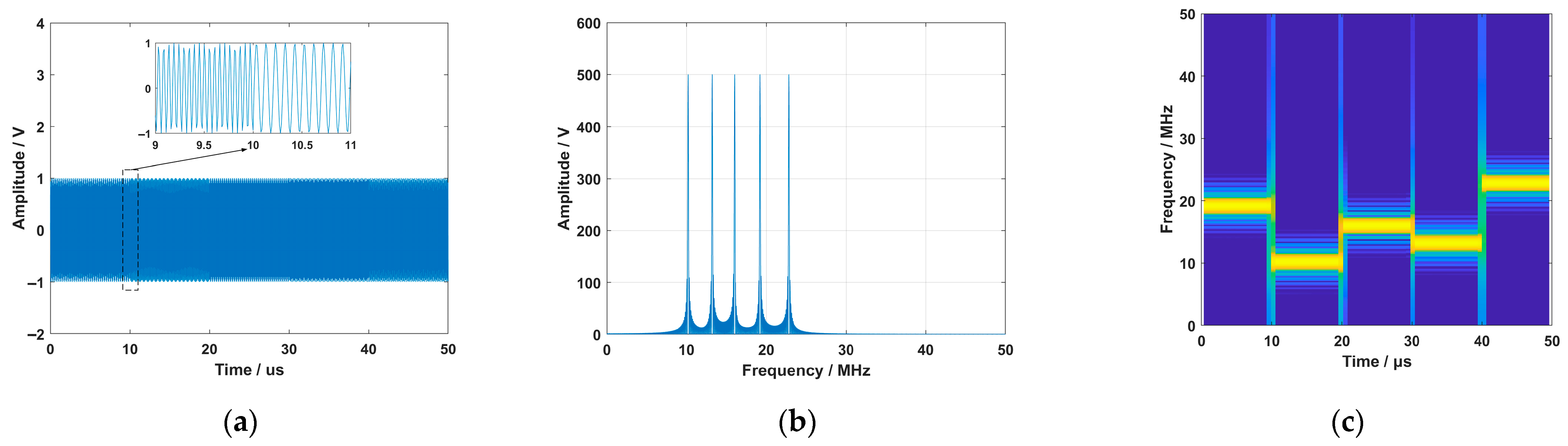

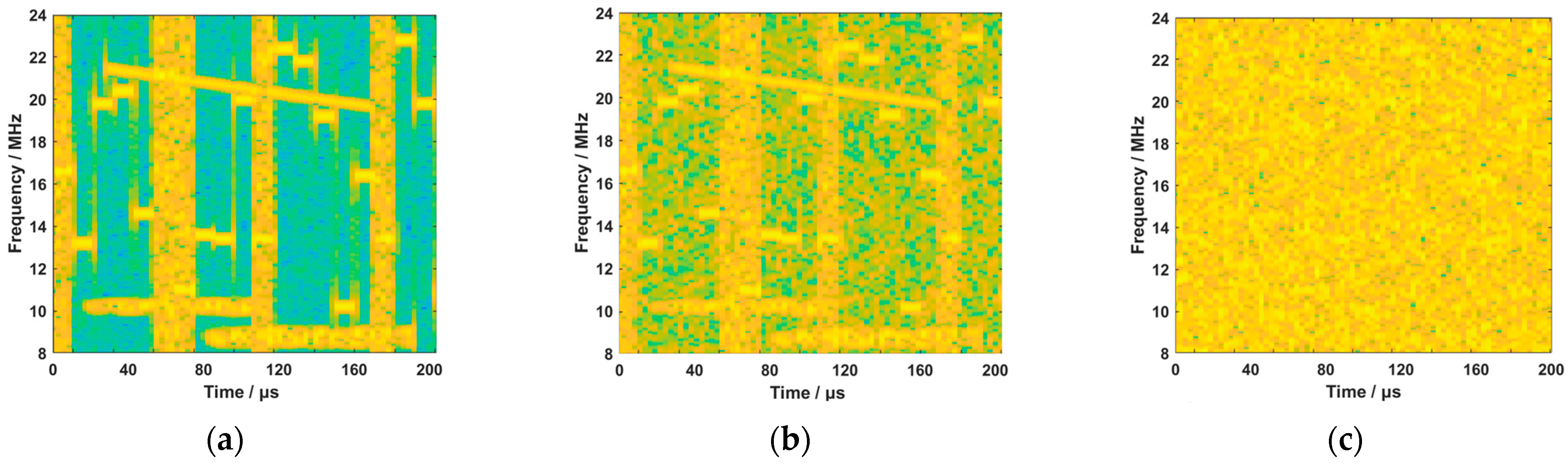
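As a rough illustration of the G-function idea, the sketch below assumes the usual DFH relation in which the next frequency depends on the previous frequency and the current data symbol, f_n = G(f_{n-1}, x_n). The particular mapping, the 2 bits per hop, and the names `g_function`, `encode`, and `decode` are hypothetical choices made only for this example. They are not the G function used in this article. Only the 64-point frequency set is taken from the text.

```python
from typing import List

NUM_FREQS = 64               # size of the frequency set (64 points per hop, as in Section 4.2)
BITS_PER_HOP = 2             # assumed number of data bits per hop (hypothetical)
FAN_OUT = 1 << BITS_PER_HOP  # number of frequencies reachable from any frequency


def g_function(prev_idx: int, symbol: int) -> int:
    """Toy G function: map (previous frequency index, data symbol) to the next index."""
    if not 0 <= symbol < FAN_OUT:
        raise ValueError("symbol out of range")
    # Each symbol selects a distinct successor, so the mapping is invertible.
    return (prev_idx + 1 + symbol) % NUM_FREQS


def encode(symbols: List[int], start_idx: int = 0) -> List[int]:
    """Turn a symbol sequence into a frequency-index sequence."""
    freqs = []
    prev = start_idx
    for s in symbols:
        prev = g_function(prev, s)
        freqs.append(prev)
    return freqs


def decode(freqs: List[int], start_idx: int = 0) -> List[int]:
    """Recover symbols from consecutive frequency pairs by inverting the toy G function."""
    symbols = []
    prev = start_idx
    for f in freqs:
        symbol = (f - prev - 1) % NUM_FREQS
        if symbol >= FAN_OUT:
            raise ValueError("transition not allowed by the G function")
        symbols.append(symbol)
        prev = f
    return symbols


hops = encode([0, 3, 1, 2])
print(hops)          # [1, 5, 7, 10]
print(decode(hops))  # [0, 3, 1, 2]
```

Because decoding relies on pairs of consecutive frequencies, a single misidentified hop corrupts two transitions in this toy model, which is one way to see why per-hop frequency recognition accuracy matters at low SNR.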

2.3. Analysis of the Limitation of Frequency Recognition of DFH Signals in Low-SNR Conditions

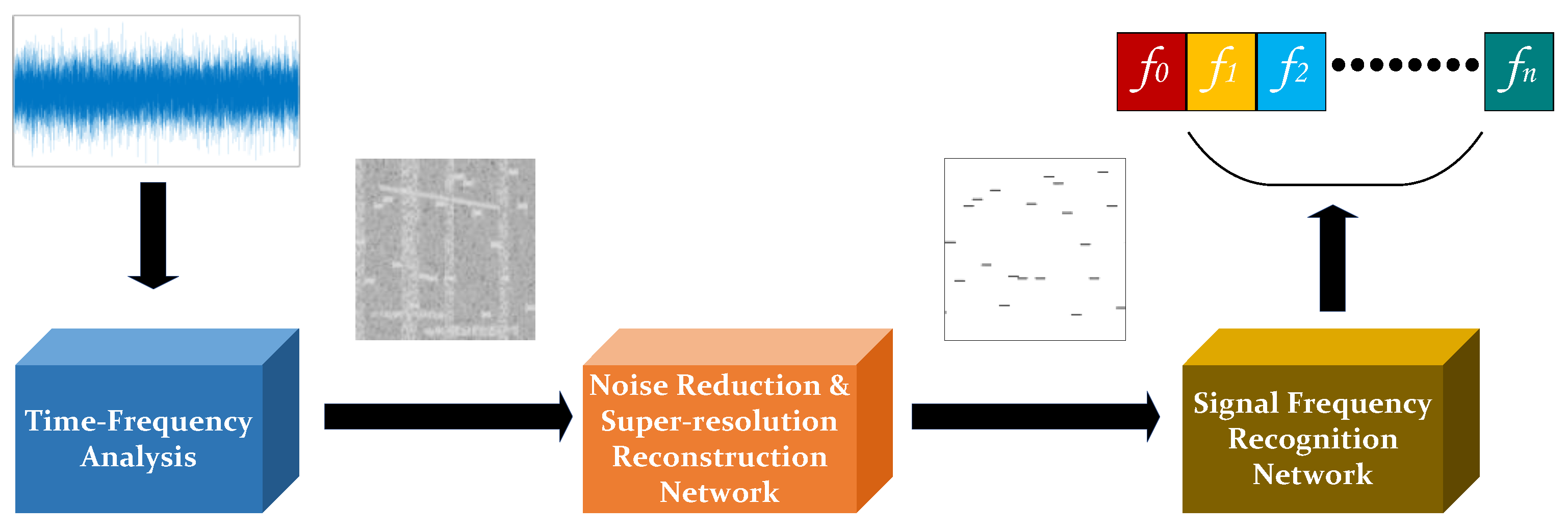

3. Frequency Recognition Method for DFH Signals Based on Super-Resolution Reconstruction of Time–Frequency Images (TFISrR)

3.1. Method Motivation

3.2. Method Structure

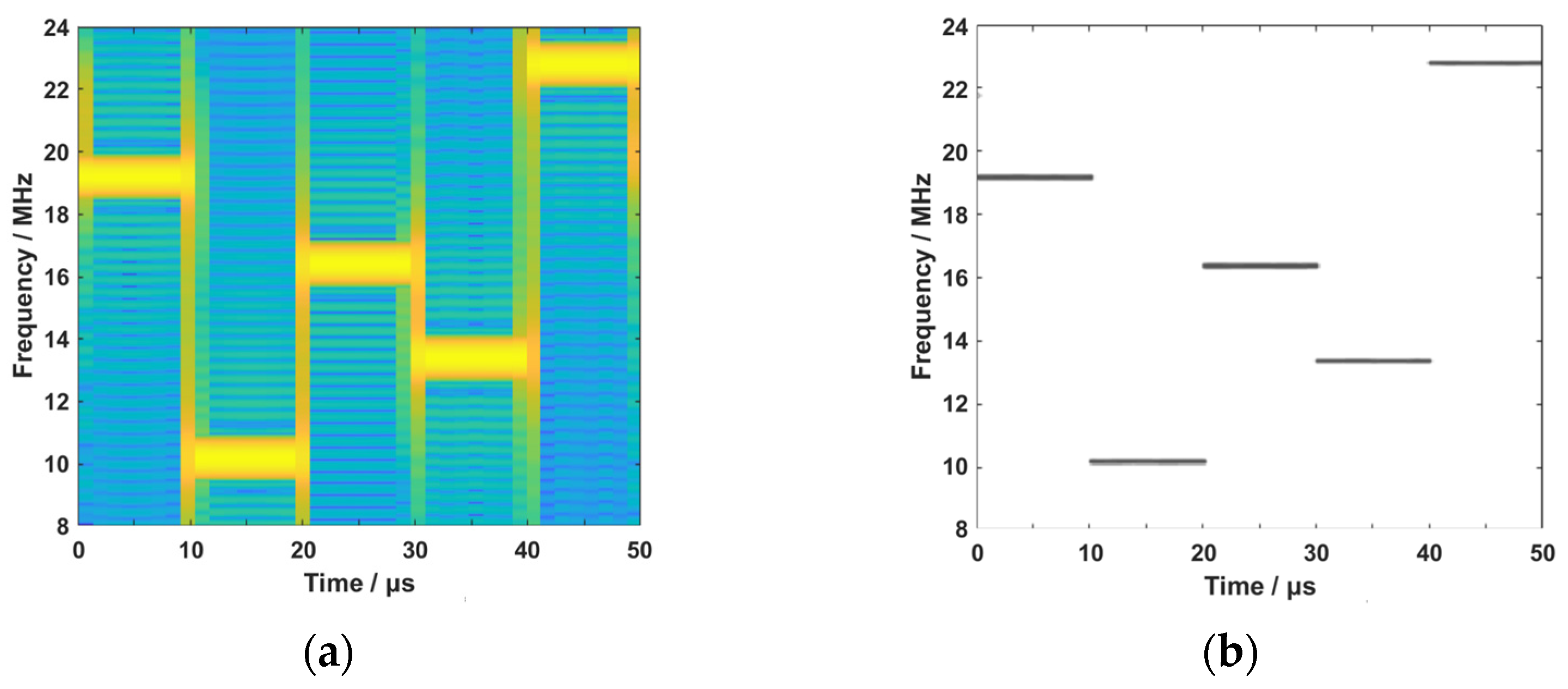

3.2.1. Time–Frequency Analysis

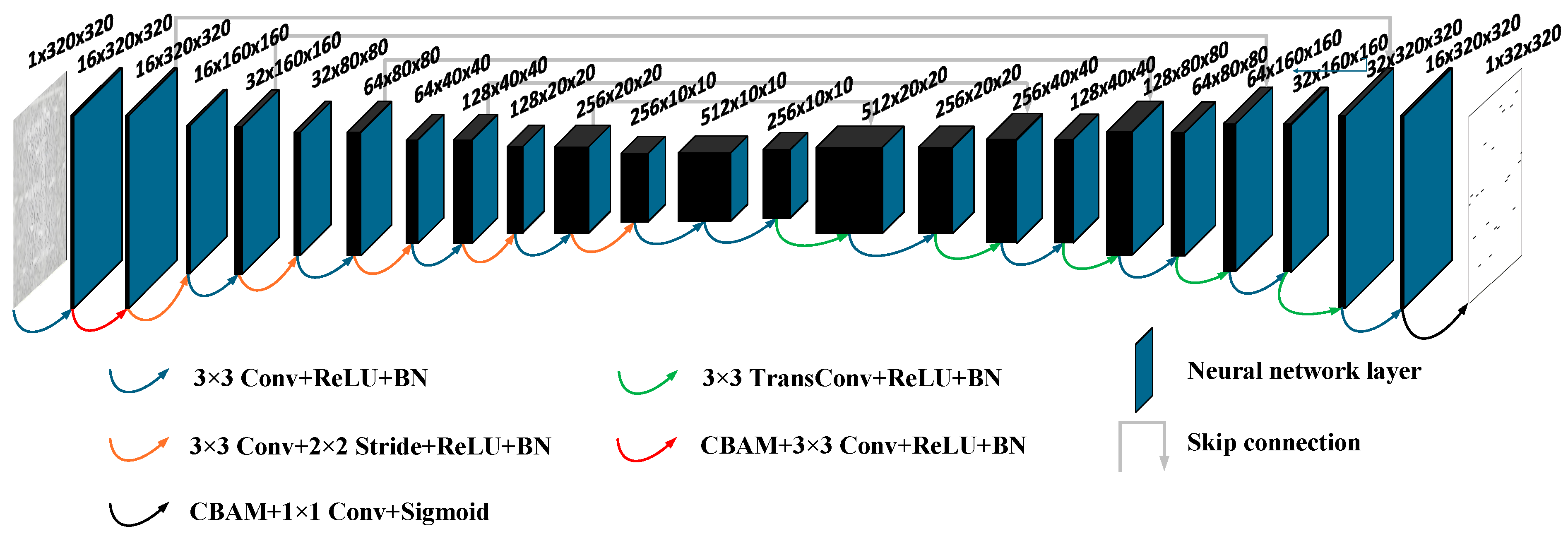

3.2.2. Noise Reduction and Super-Resolution Reconstruction Network

- Network structure.

- Encoder and decoder.

- CBAM.

- Network parameter configuration.
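For reference, the standard CBAM formulation (Woo et al., 2018) applies channel attention followed by spatial attention to a feature map $\mathbf{F}$. Whether the CBAM module in CU-Net uses exactly these settings (for example, the $7 \times 7$ kernel) is an assumption here.

$$
\begin{aligned}
\mathbf{M}_c(\mathbf{F}) &= \sigma\big(\mathrm{MLP}(\mathrm{AvgPool}(\mathbf{F})) + \mathrm{MLP}(\mathrm{MaxPool}(\mathbf{F}))\big), & \mathbf{F}' &= \mathbf{M}_c(\mathbf{F}) \otimes \mathbf{F}, \\
\mathbf{M}_s(\mathbf{F}') &= \sigma\big(f^{7 \times 7}([\mathrm{AvgPool}(\mathbf{F}');\ \mathrm{MaxPool}(\mathbf{F}')])\big), & \mathbf{F}'' &= \mathbf{M}_s(\mathbf{F}') \otimes \mathbf{F}'.
\end{aligned}
$$

Here $\sigma$ is the sigmoid function, $\otimes$ is element-wise multiplication with broadcasting, the MLP is shared between the two pooled descriptors, and $f^{7 \times 7}$ is a convolution applied to the channel-wise pooled maps.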

3.2.3. Signal Frequency Recognition Network

- Network architecture.

- Residual block.

- Network parameter configuration.
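For reference, the standard residual block (He et al., 2016) adds the block input to the output of its stacked layers. The exact layer composition inside each block of this network is not restated here, so the form below is the generic one.

$$
\mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x}
$$

When a block changes the channel count or spatial size, the shortcut is usually replaced by a linear projection $W_s$ (typically a strided $1 \times 1$ convolution):

$$
\mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + W_s \mathbf{x}
$$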

4. Experimental Section

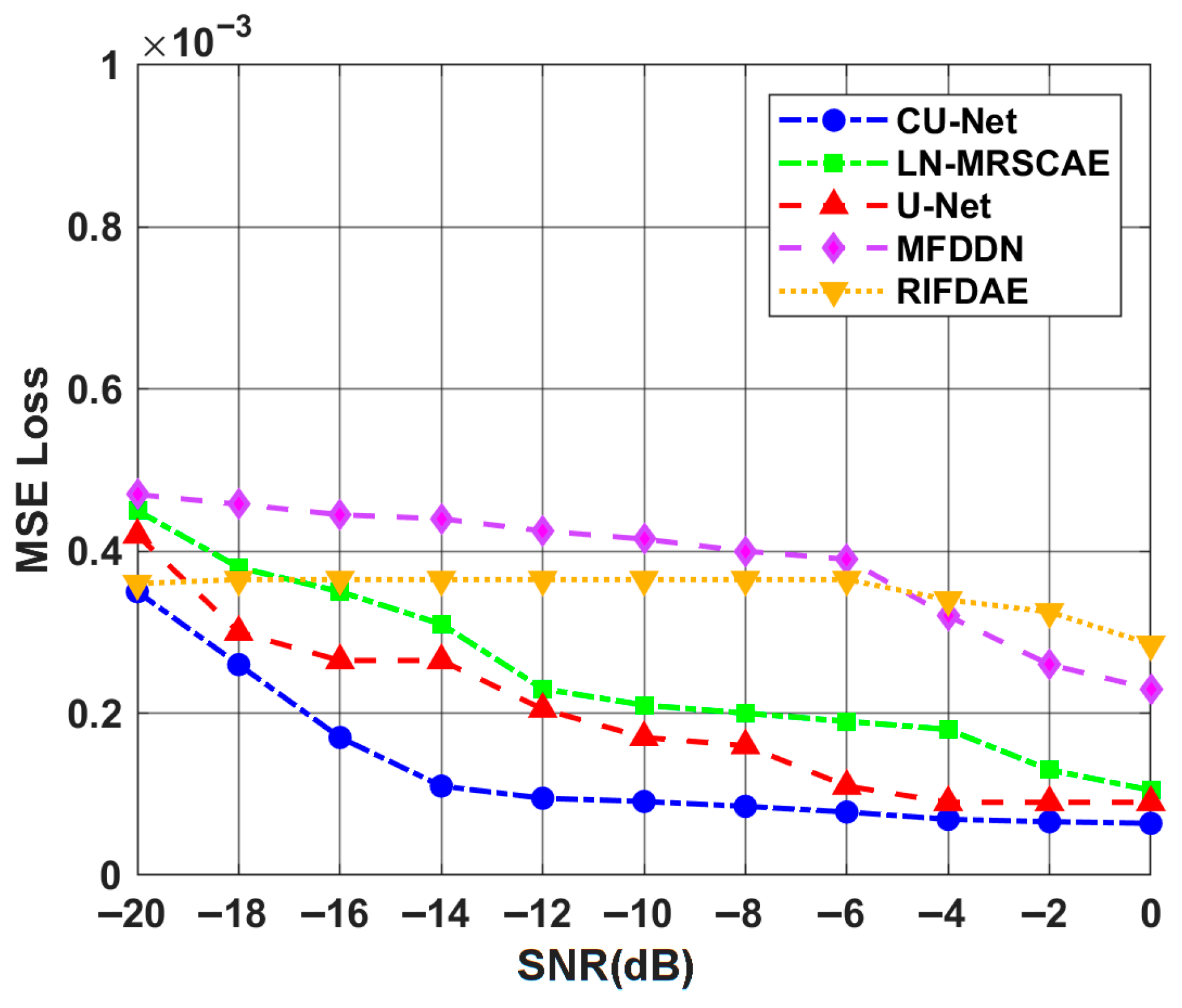

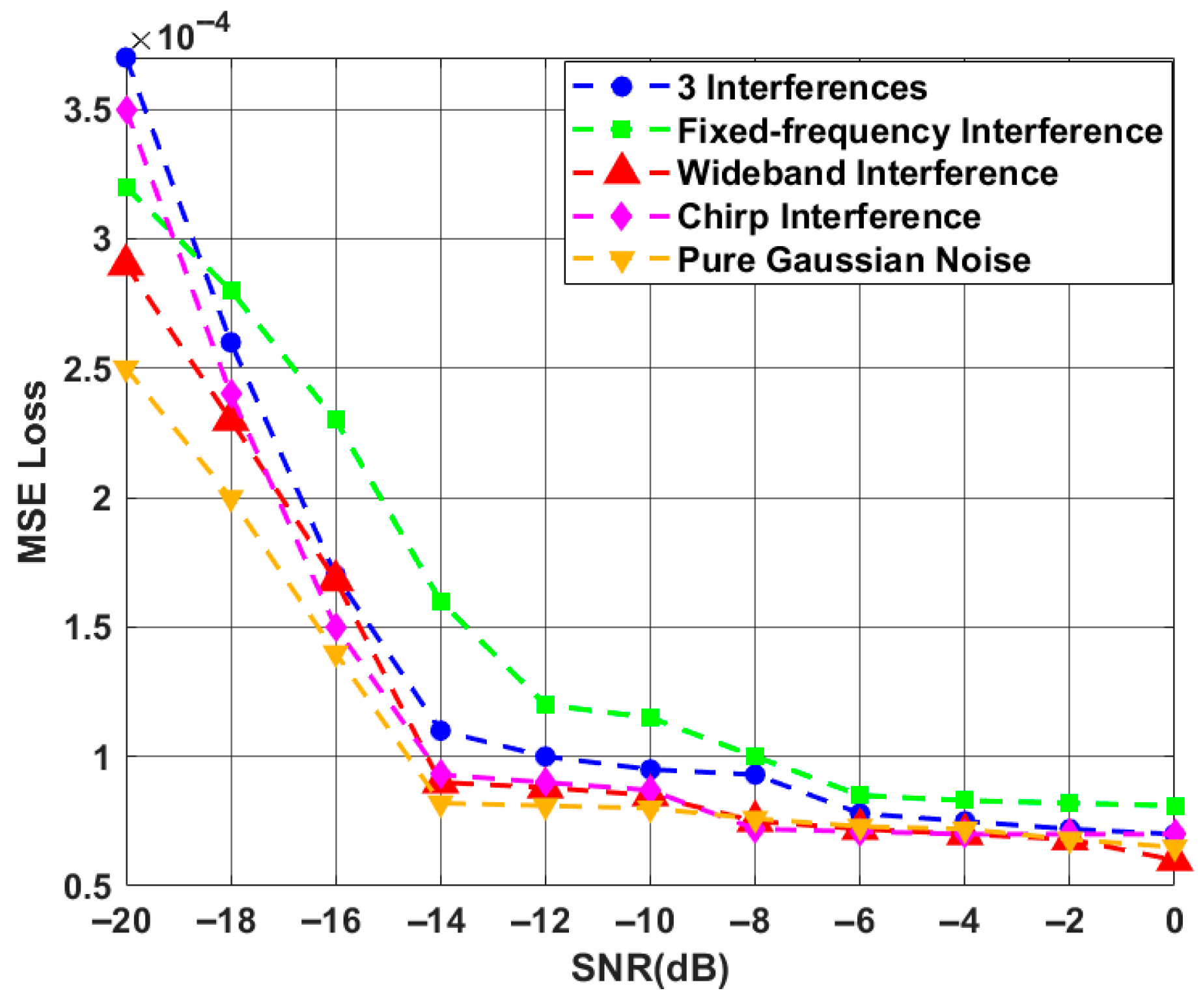

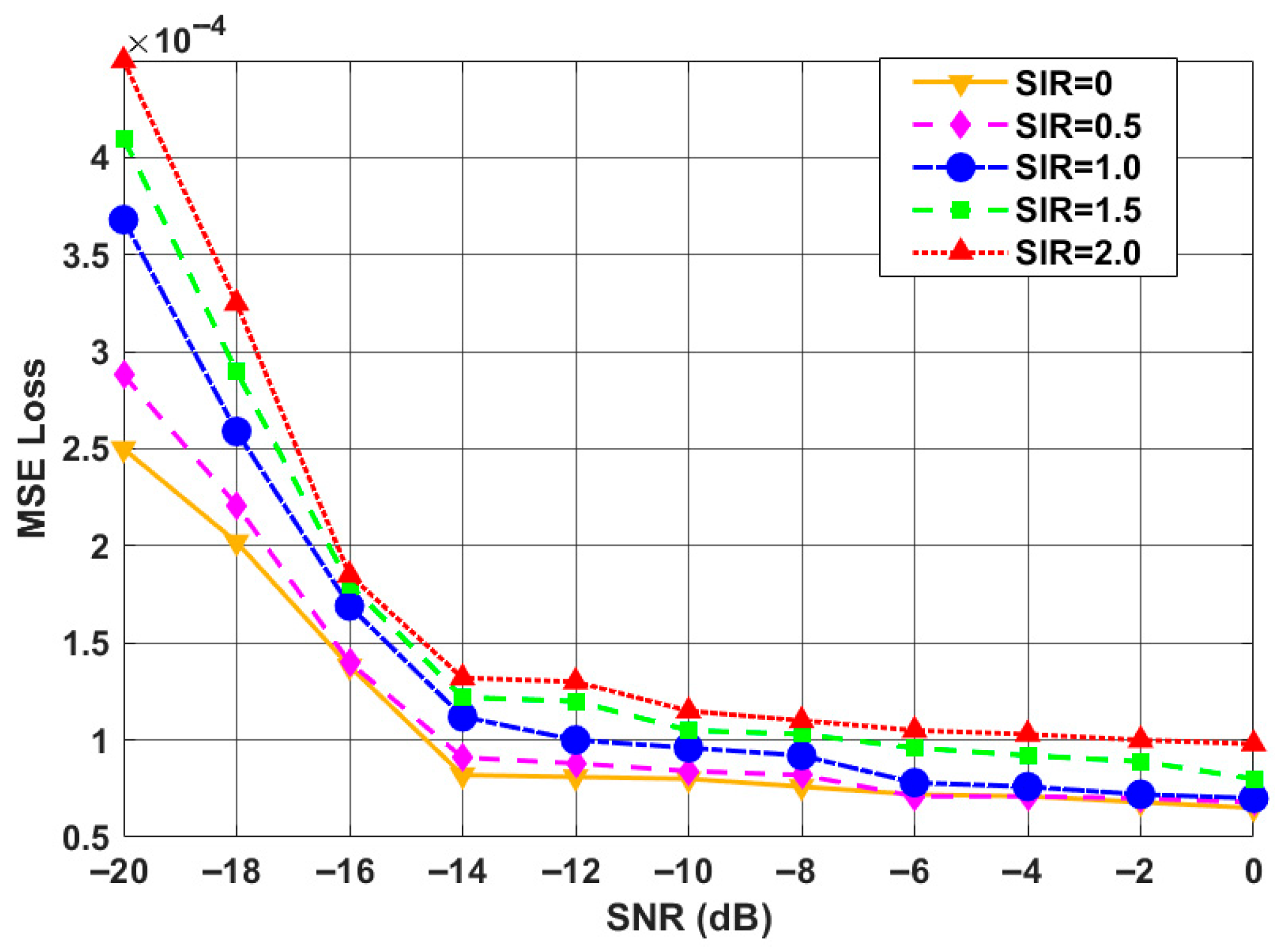

4.1. Performance Evaluation of CU-Net

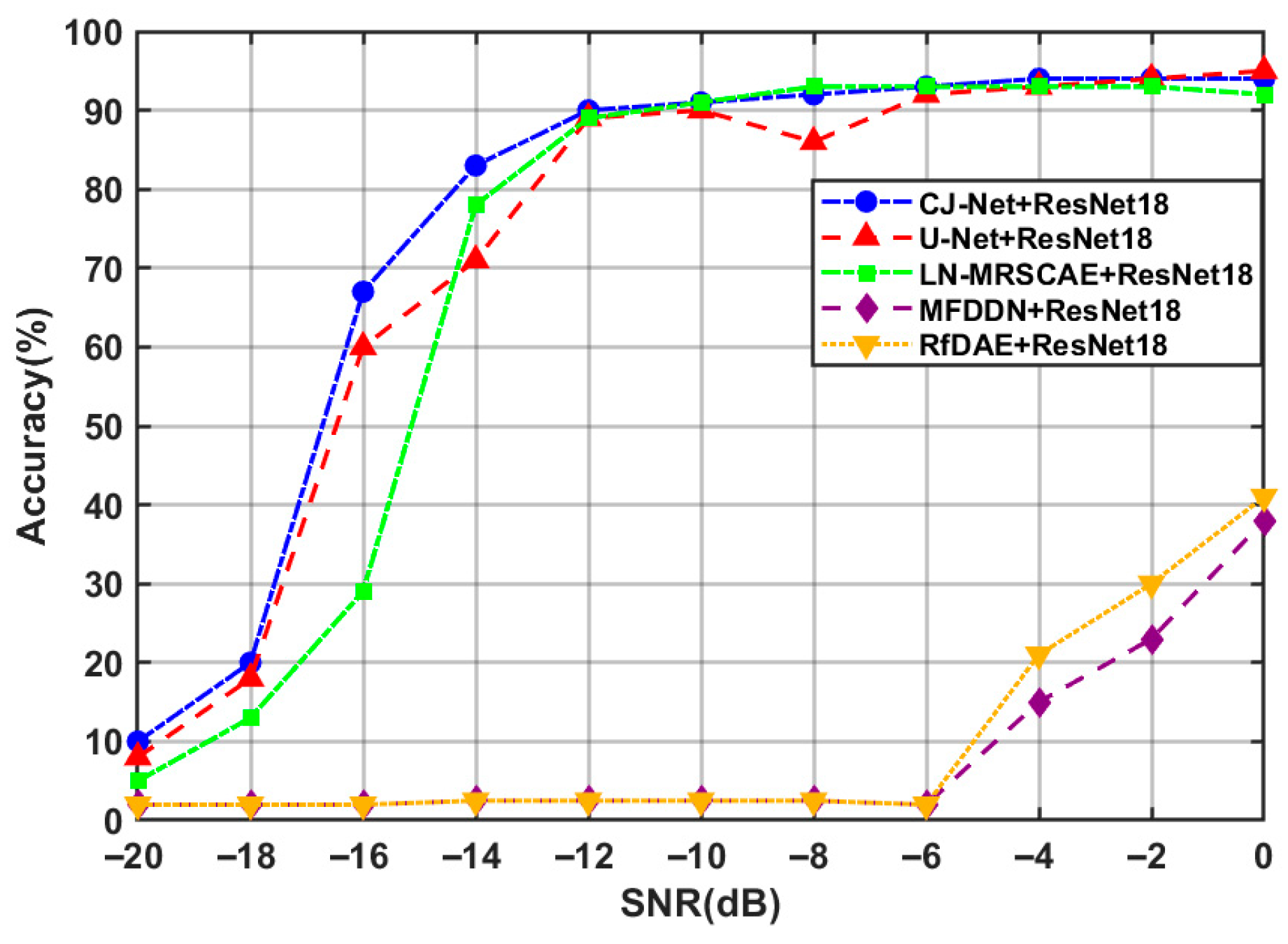

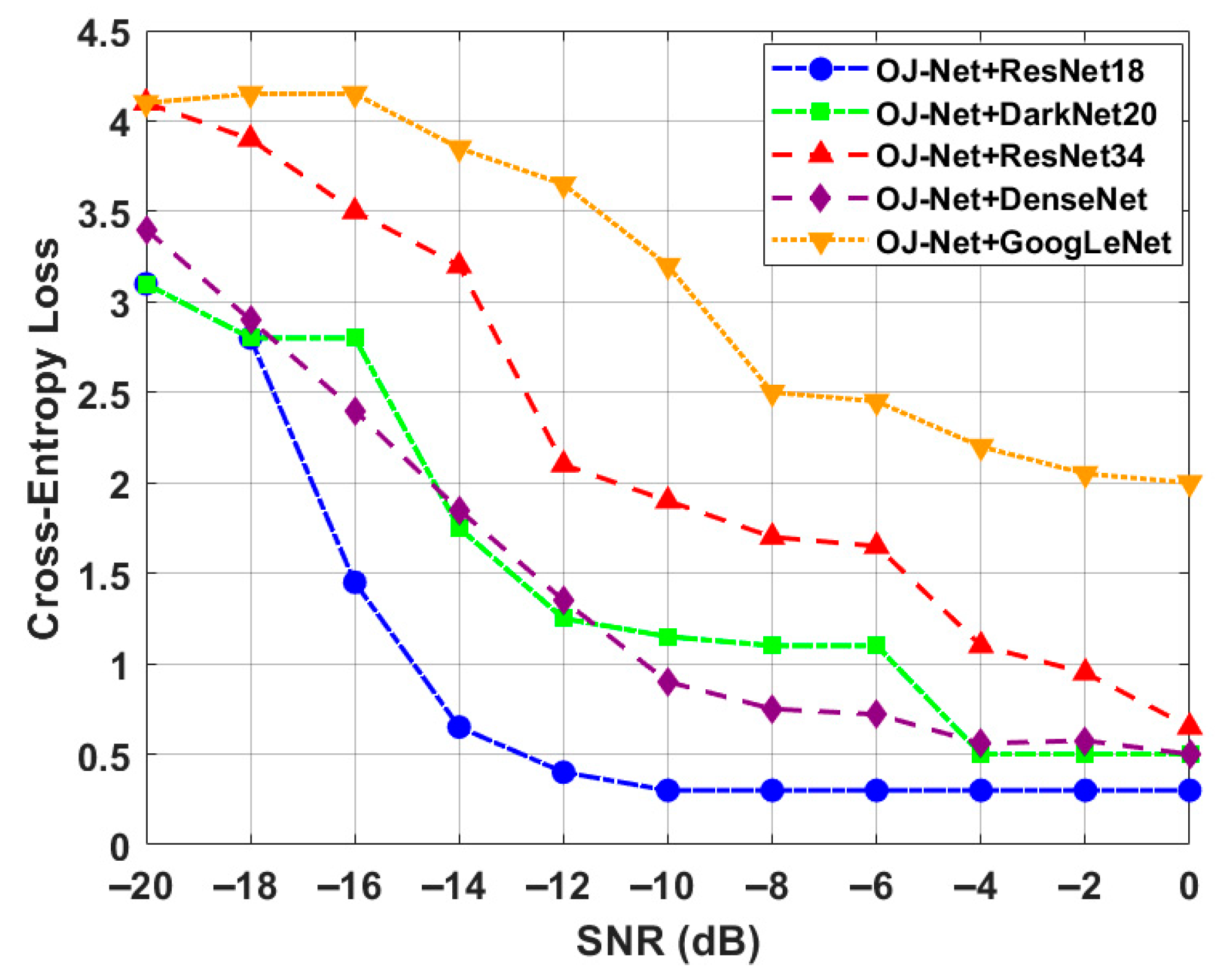

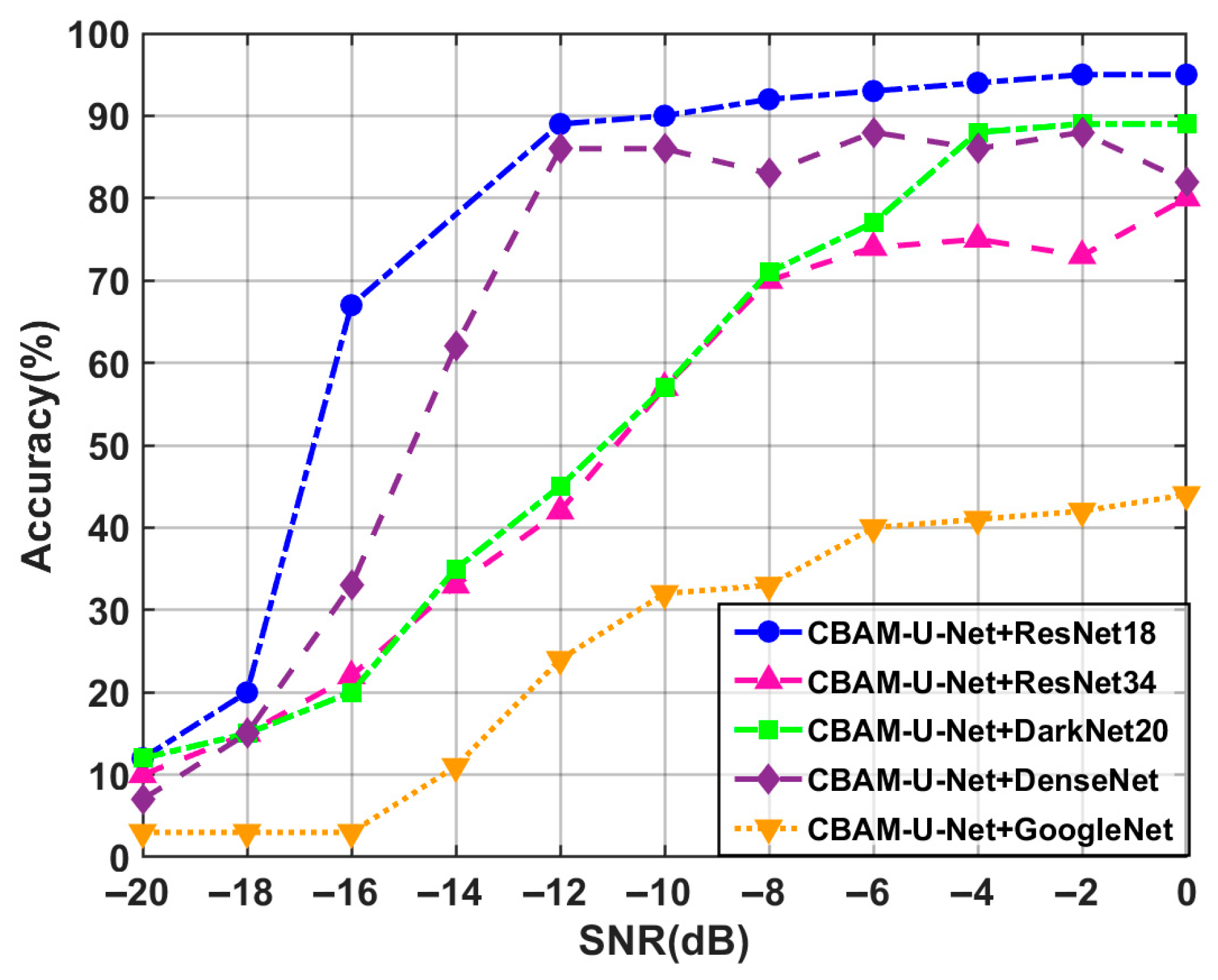

4.2. Performance Evaluation of ResNet

- A complete frame containing 19 valid hops and 2 incomplete hops, resulting in 21 frequency labels;

- A full 20-hop sequence with 64 candidate frequency points per hop.

- The super-resolved outputs from LN-MRSCAE, MFDDN, RIFDAE, U-Net, and CU-Net were fed into the same ResNet for recognition comparison.

5. Conclusions and Future Work

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Network parameter configuration of CU-Net.

| Index | Layer | Structure | Shape |
|---|---|---|---|
| 0 | Xinput | - | 1 × 320 × 320 |
| 1 | X0 | Conv-BN-ReLU | 16 × 320 × 320 |
| 2 | X1 | CBAM-Conv-BN-ReLU | 16 × 320 × 320 |
| 3 | X2 | Downsampling | 16 × 160 × 160 → 32 × 160 × 160 |
| 4 | X3 | Downsampling | 32 × 80 × 80 → 64 × 80 × 80 |
| 5 | X4 | Downsampling | 64 × 40 × 40 → 128 × 40 × 40 |
| 6 | X5 | Downsampling | 128 × 20 × 20 → 256 × 20 × 20 |
| 7 | X6 | Downsampling | 256 × 10 × 10 → 512 × 10 × 10 |
| 8 | X′5 | Upsampling | 512 × 20 × 20 → 256 × 20 × 20 |
| 9 | X′4 | Upsampling | 256 × 40 × 40 → 128 × 40 × 40 |
| 10 | X′3 | Upsampling | 128 × 80 × 80 → 64 × 80 × 80 |
| 11 | X′2 | Upsampling | 64 × 160 × 160 → 32 × 160 × 160 |
| 12 | X′1 | Upsampling | 32 × 320 × 320 → 16 × 320 × 320 |
| 13 | Xoutput | CBAM-Conv-BN-ReLU + Sigmoid | 1 × 320 × 320 |
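The shape progression in the table above (read here as the CU-Net encoder and decoder) can be reproduced with a few lines of arithmetic. This is a minimal sketch that assumes each downsampling stage halves the spatial size and doubles the channel count, and each upsampling stage does the reverse. The function name `cu_net_shapes` and its parameters are hypothetical and introduced only for illustration.

```python
from typing import List, Tuple

Shape = Tuple[int, int, int]  # (channels, height, width)


def cu_net_shapes(in_size: int = 320, base_channels: int = 16,
                  depth: int = 5) -> List[Tuple[str, Shape]]:
    """Trace the final output shape of every layer listed in the table."""
    c, s = base_channels, in_size
    shapes = [("Xinput", (1, s, s)),
              ("X0", (c, s, s)),    # Conv-BN-ReLU lifts 1 channel to 16
              ("X1", (c, s, s))]    # CBAM-Conv-BN-ReLU keeps the shape
    # Encoder: halve the spatial size, then double the channels.
    for i in range(depth):
        c, s = c * 2, s // 2
        shapes.append((f"X{i + 2}", (c, s, s)))
    # Decoder: double the spatial size, then halve the channels.
    for i in range(depth, 0, -1):
        c, s = c // 2, s * 2
        shapes.append((f"X'{i}", (c, s, s)))
    shapes.append(("Xoutput", (1, s, s)))  # single-channel image after the sigmoid
    return shapes


for name, shape in cu_net_shapes():
    print(name, shape)
```

With five halvings, the 320 × 320 input reaches a 10 × 10 bottleneck with 512 channels, and the decoder returns to 320 × 320, so the reconstructed image has the same size as the input.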

Network parameter configuration of ResNet.

| Index | Layer | Structure | Shape |
|---|---|---|---|
| 0 | Xinput | - | 1 × 320 × 320 |
| 1 | X1 | Conv-BN-ReLU | 64 × 160 × 160 |
| 2 | X2 | MaxPool | 64 × 80 × 80 |
| 3 | X3 | 2 × Res Block | 64 × 80 × 80 → 128 × 40 × 40 |
| 4 | X4 | 2 × Res Block | 128 × 40 × 40 → 256 × 20 × 20 |
| 5 | X5 | 2 × Res Block | 256 × 20 × 20 → 512 × 10 × 10 |
| 6 | X6 | AvgPool | 512 × 1 × 1 |
| 7 | Result | FC + SoftMax | 21 |
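The spatial sizes in the table above (read here as the ResNet recognition network) follow from the usual convolution output-size formula, out = floor((in + 2p - k) / s) + 1. The sketch below checks them under assumed kernel settings borrowed from the common ResNet stem: a 7 × 7 stride-2 convolution with padding 3, a 3 × 3 stride-2 max pool with padding 1, and a 3 × 3 stride-2 convolution with padding 1 at each residual stage. These kernel sizes are not stated in the table and are assumptions. `conv_out` and `resnet_shapes` are hypothetical helper names.

```python
from typing import List, Tuple


def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length of a convolution or pooling layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def resnet_shapes(in_size: int = 320,
                  num_classes: int = 21) -> List[Tuple[str, Tuple[int, ...]]]:
    """Trace the final output shape of every layer listed in the table."""
    s = conv_out(in_size, kernel=7, stride=2, padding=3)  # assumed 7x7 stem conv
    shapes = [("X1", (64, s, s))]
    s = conv_out(s, kernel=3, stride=2, padding=1)         # assumed 3x3 max pool
    shapes.append(("X2", (64, s, s)))
    c = 64
    for name in ("X3", "X4", "X5"):
        c *= 2                                             # channels double per stage
        s = conv_out(s, kernel=3, stride=2, padding=1)     # assumed strided 3x3 conv
        shapes.append((name, (c, s, s)))
    shapes.append(("X6", (c, 1, 1)))                       # global average pooling
    shapes.append(("Result", (num_classes,)))              # FC + SoftMax output size
    return shapes


for name, shape in resnet_shapes():
    print(name, shape)
```

Under these assumptions the trace ends at 512 × 10 × 10 before pooling, matching the table, and the final output size of 21 equals the number of frequency labels per frame described in Section 4.2.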

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Yang, P.; Qian, B.; Mu, B.; Qi, M.; Wang, H. A Frequency Identification Method for Differential Frequency-Hopping Signals Based on the Super-Resolution Reconstruction of Time–Frequency Images. Electronics 2026, 15, 2070. https://doi.org/10.3390/electronics15102070
