1. Introduction

Simultaneous Localization and Mapping (SLAM) is a crucial technology in fields such as autonomous robot navigation and augmented reality. Single-sensor SLAM systems are mainly categorized into LiDAR-based SLAM and vision-based SLAM. LiDAR SLAM performs stably in structured environments but is limited by the LiDAR detection range during operation [1]. Visual SLAM relies on stable texture features in the environment; under severe illumination changes or dynamic object occlusions, camera observations become limited, so its localization and mapping performance tends to degrade [2]. An inertial measurement unit (IMU) measures acceleration and angular velocity at a high frequency; it offers high measurement accuracy and is insensitive to the surrounding environment, but it accumulates error over time [3]. Visual-inertial odometry (VIO) achieves stable and accurate state estimation in complex scenes by fusing visual and inertial measurements: it uses visual geometric constraints to suppress IMU integration drift, and leverages the IMU’s high-frequency measurements to compensate for the uncertainty of inter-frame visual tracking and to provide scale information [4]. A classic method in this domain is VINS-Mono, a complete and efficient tightly coupled optimization framework that achieves high-precision, real-time monocular visual-inertial state estimation and has had a profound impact on subsequent research [5].

Based on the sensor data fusion strategy, VIO methods can be categorized as loosely coupled [6] or tightly coupled [7]. In the former, the camera and IMU modules independently perform pose estimation, and their results are fused subsequently. In the latter, raw measurements from both sensors are directly merged, and state estimation is performed via joint optimization, thus better utilizing the complementary properties of different sensors. Bescos et al. use stereo vision to achieve multi-object tracking; based on a tightly coupled approach, they jointly optimize the trajectories of objects in both dynamic and static scenes within a sliding window [8]. Li et al. combine data such as GNSS carrier phase with visual-inertial navigation using tight coupling, designing the high-precision P3-VINS method [9]. Although the tightly coupled method has higher computational complexity, it makes full use of the raw sensor measurements, resulting in higher estimation accuracy and robustness than the loosely coupled method.

From the perspective of visual information processing, VIO methods are primarily categorized into feature-based methods [10,11] and direct methods [12,13]. In direct methods, the camera pose is estimated by minimizing the photometric error between images; this approach requires neither feature point extraction nor descriptor matching, making it suitable for texture-less scenes. However, direct methods rely on the photometric consistency assumption and are easily disturbed in practice by factors such as illumination changes, camera exposure adjustments, and motion blur. In feature-based methods, representative pixels in the image are selected for feature matching, and the relative camera motion is then estimated using algorithms such as epipolar geometry [14] or Perspective-n-Point (PnP) [15]. Most feature-based methods rely on point feature tracking and matching. However, point features degrade readily in low-texture, repetitive-structure, or drastically changing illumination environments, leading to feature tracking loss and pose estimation drift, which limits the system’s robustness. Notably, man-made environments are rich in line features. These features can be stably extracted and tracked even in low-texture regions, and they provide stronger geometric constraints, thus offering crucial support for enhancing the robustness and accuracy of VIO.

In the early stages of VIO research, line features were mainly applied in offline 3D reconstruction (structure from motion, SfM) and small-scale visual odometry (VO), which preliminarily validated their potential in geometric recovery. However, limited by computational efficiency and matching robustness, line features struggled to meet real-time requirements. Pumarola et al. were the first to propose an initialization mechanism based on line features and to construct a point-line tightly coupled SLAM system, enabling cold start in low-texture scenes completely lacking point features [16]. However, full-process line feature processing and the corresponding Bundle Adjustment (BA) computational overhead reduce the system’s real-time performance. Gomez-Ojeda et al. propose a stereo vision-based tightly coupled SLAM system that integrates points and lines throughout the whole pipeline and uses a joint point-line Bag-of-Words model for loop closure detection to improve robustness in low-texture environments [17]. He et al. propose a method that fuses point and line features in a tightly coupled VIO framework [18]. This work represents spatial lines using Plücker coordinates and an orthonormal parameterization, and jointly optimizes the constructed line feature residuals along with point features and IMU data in a sliding window. Fu et al. propose PL-VINS [19], which improves the LSD detector by tuning hidden parameters and applying a length rejection strategy, thereby increasing line extraction speed. They also establish a more precise point-to-line distance residual model based on Plücker coordinates and achieve efficient point-line-inertial tight coupling within a sliding-window optimization framework, leading to noticeable improvements in accuracy and real-time performance. However, PL-VINS still suffers from line fragmentation and structural discontinuity because it lacks geometric merging and verification mechanisms, which limits the stability and quality of line feature constraints. Xu et al. propose the EPLF-VINS system [20], which adopts a modified EDLines detector for line segment extraction and replaces descriptor-based matching with line optical flow tracking, thereby significantly improving the efficiency of line feature processing. In addition, it presents an endpoint-independent residual model that eliminates the dependence on unstable line segment endpoints. However, this method mainly focuses on improving the speed of detection and tracking and does not directly optimize the geometric structural quality of line features, so the geometric integrity of its line features still leaves considerable room for improvement.

These studies demonstrate that line features can provide stable geometric constraints and suppress drift in low-texture regions. Current line segment detection methods tend to generate a large number of redundant segments with similar orientations, spatial proximity, or local overlaps. Direct use of these segments increases the computational burden on the system and adversely affects the accuracy of back-end optimization. Therefore, how to effectively leverage single-frame geometric information at the front end to remove redundant segments, construct stable representations, and provide high-quality constraints for the back end becomes a key issue in improving the performance of point-line fused VIO.

Table 1 compares the differences in line feature processing pipelines among representative point-line VIO methods, comprehensively illustrating the line feature processing strategy of each approach.

To address the aforementioned issues, this work presents a VIO system built upon geometrically optimized line features, aiming to alleviate line segment fragmentation and enhance structural representation in complex scenes. The key contributions of this work are summarized as follows:

First, a complete front-end geometric optimization pipeline for line features is proposed. This pipeline adopts a line segment filtering strategy based on length threshold, and integrates the proposed merging mechanism based on geometric consistency constraints, verification mechanism based on endpoint distances, and triangulation method for optimized line features based on epipolar constraints. It removes redundant and unstable short segments, improves the structural integrity of line features, and thereby provides reliable geometric constraints for back-end optimization.

Second, the optimized line features, point features, IMU pre-integration, and marginalization prior information are integrated into a tightly coupled sliding window optimization framework. A point-line fused state estimation model is constructed to achieve high-precision and robust pose estimation.

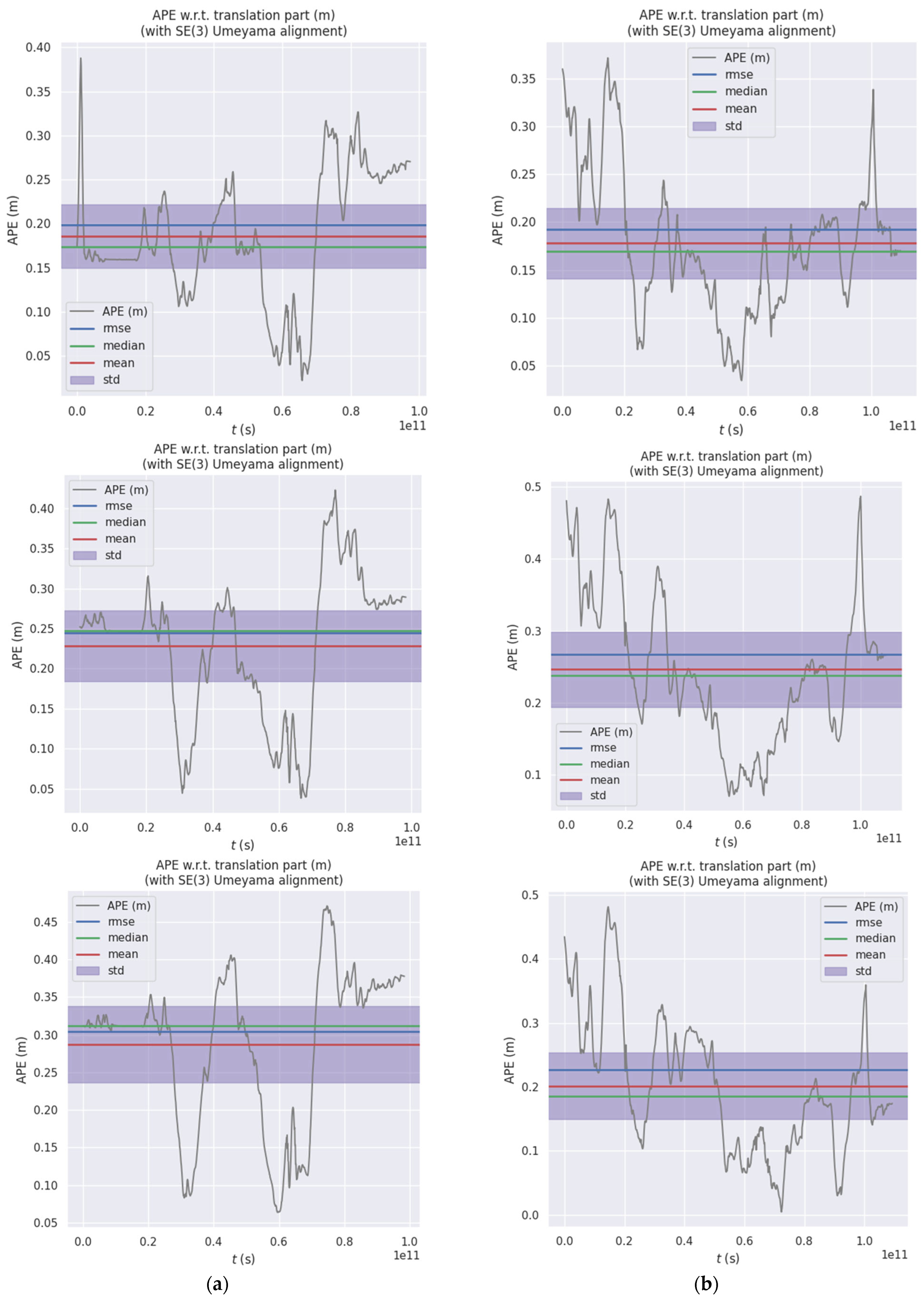

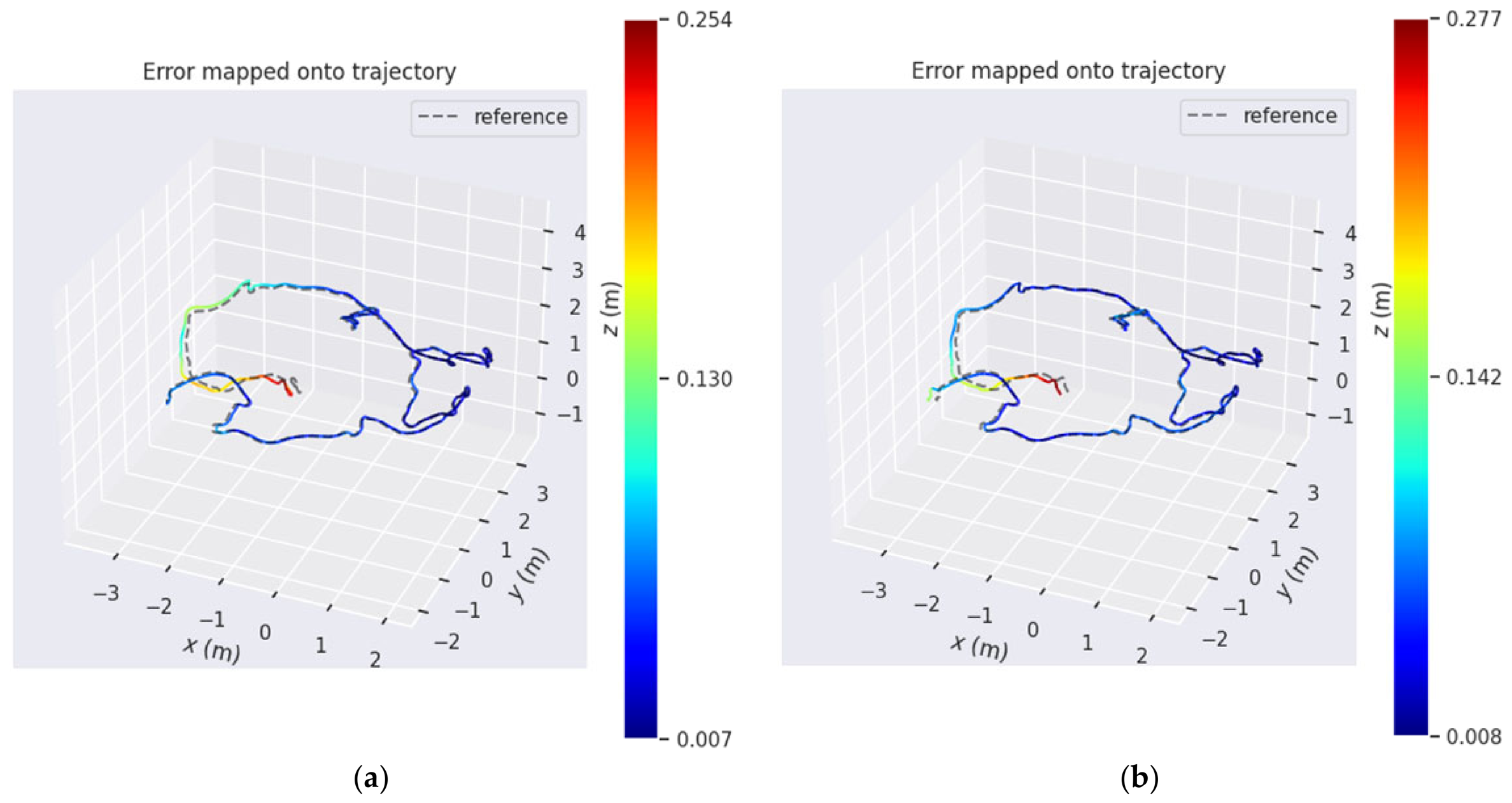

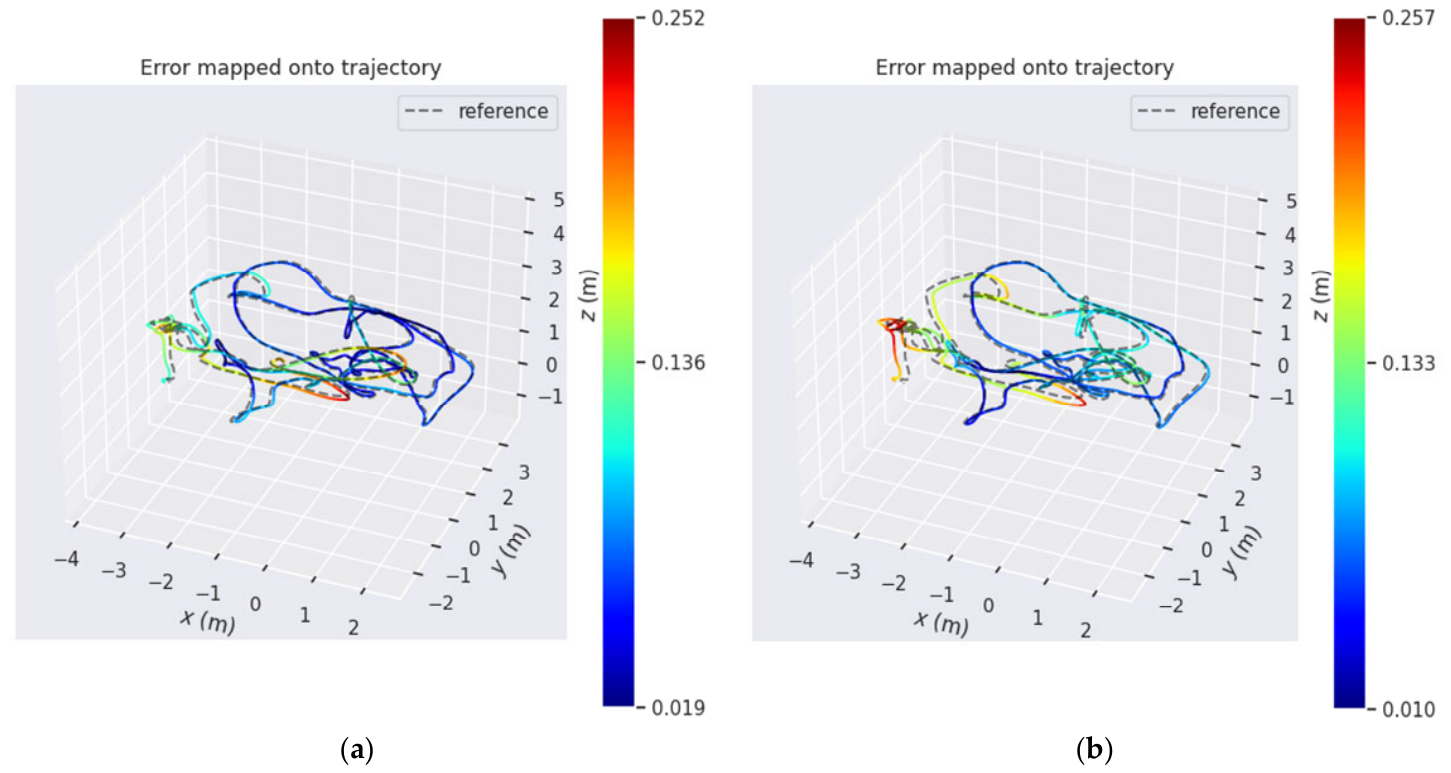

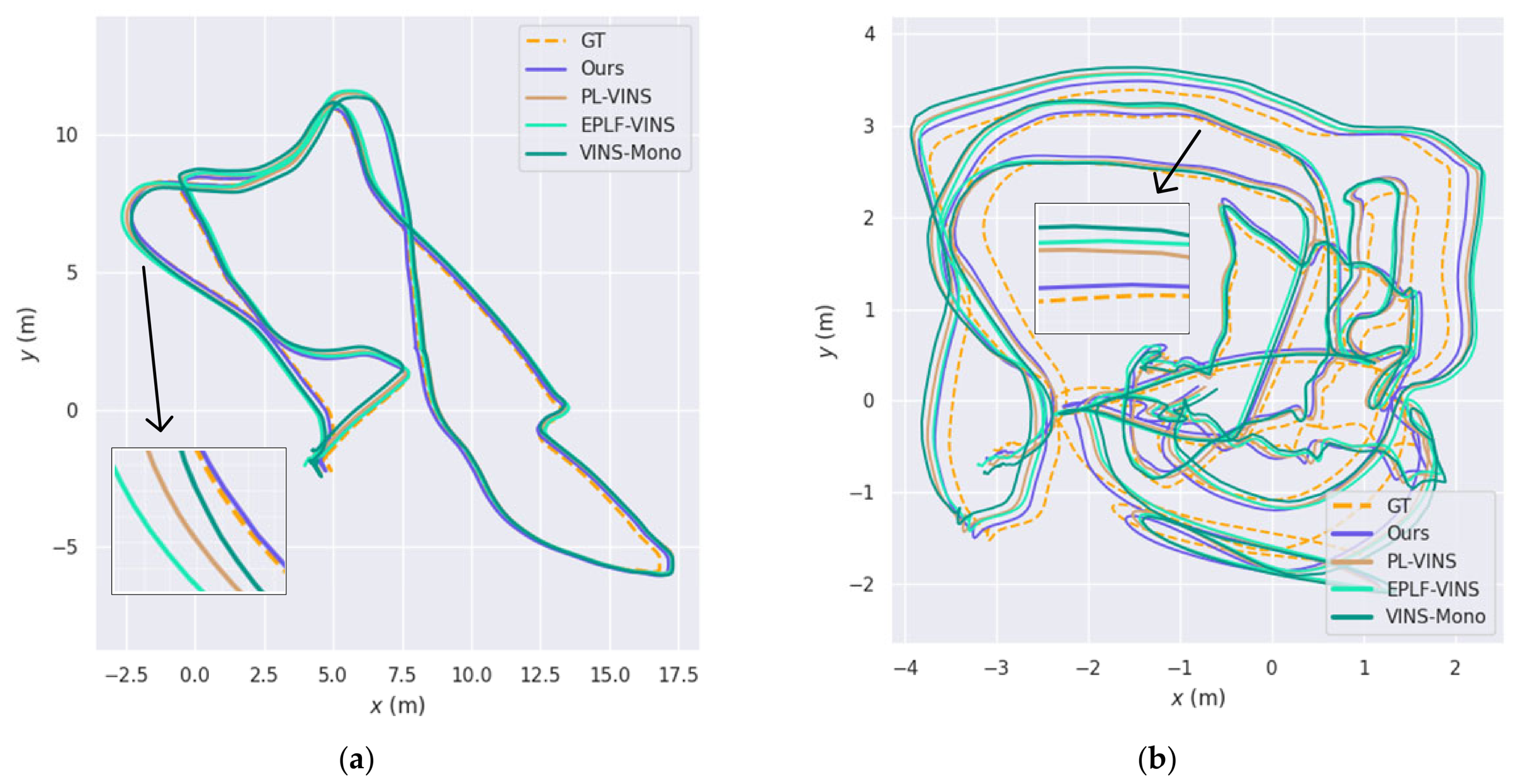

Finally, comprehensive experimental validation is conducted on the public EuRoC dataset. The results show that the proposed method improves pose estimation accuracy, system robustness, and overall efficiency in complex environments.

3. Preliminaries and System Overview

3.1. Notations

For clarity, this work presents the notations and coordinate frame conventions employed throughout the paper. The world frame, IMU frame, and camera frame are denoted by $w$, $b$, and $c$, respectively. Gravity is assumed to be aligned with the $z$ axis of the world frame. Rotations are represented using either matrices $\mathbf{R}$ or quaternions $\mathbf{q}$, while translations are represented using 3D vectors $\mathbf{p}$. Specifically, the coordinate transformation from the IMU frame to the camera frame is described by the extrinsic parameters, comprising a translation vector $\mathbf{p}_{cb}$ and a rotation quaternion $\mathbf{q}_{cb}$. A double-subscript notation is adopted here, where $(\cdot)_{wc}$ denotes a quantity associated with the transformation from the camera frame to the world frame.

3.2. System Overview

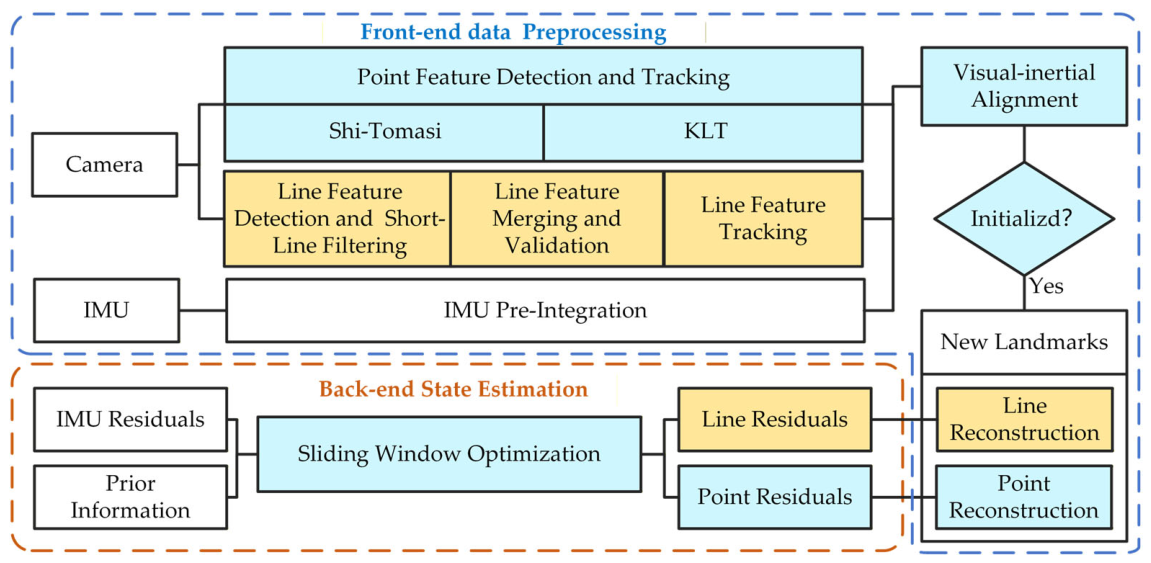

This work proposes a tightly coupled point-line VIO system designed to enhance pose estimation accuracy and system robustness in challenging scenarios. As depicted in Figure 1, the system consists of two modules: the front end and the back end. The front end is primarily responsible for preprocessing visual-inertial data, while the back end achieves high-precision state estimation based on a sliding window optimization framework.

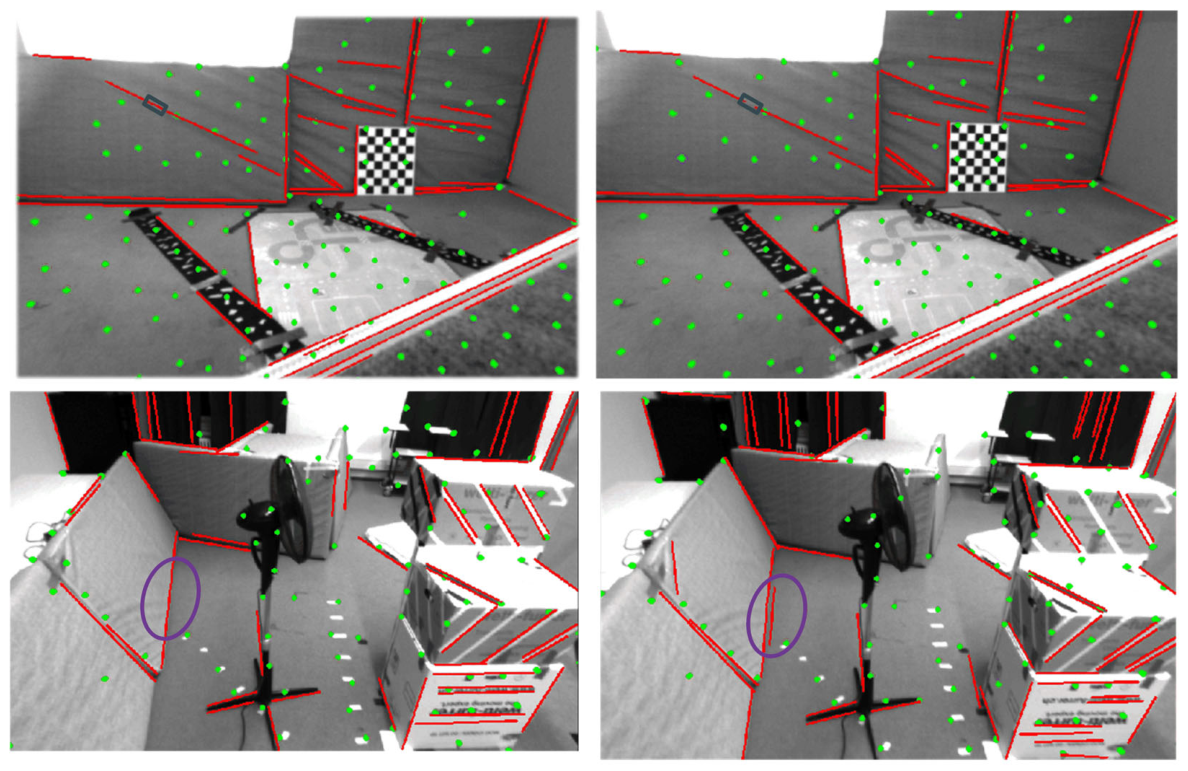

During the data preprocessing stage, the system processes point features, line features, and IMU data in parallel. For point features, salient corners in the image are first detected using the Shi–Tomasi algorithm, and subsequently tracked across adjacent frames via the KLT optical flow method; afterwards, the essential matrix between two frames is estimated based on the RANSAC algorithm, and outliers are rejected by leveraging epipolar constraints, ultimately yielding reliable point track pairs. For line features, the system first uses an improved LSD algorithm to extract line segment structures from the image and merges them based on geometric constraints. It verifies the validity of merged line segments through endpoint distance constraints, eliminating segments that do not satisfy these constraints. Next, the processed line segments are characterized using LBD descriptors and matched via the K-Nearest Neighbors (KNN) algorithm. A Hamming distance threshold filters out candidate matches with low similarity to enhance matching quality. Subsequently, the successfully matched line segments are triangulated using epipolar geometry constraints to recover their 3D spatial line features. Simultaneously, the system pre-integrates IMU data between keyframes to provide necessary motion constraints for back-end state estimation.
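The descriptor matching step described above can be illustrated with a minimal sketch. It assumes binary descriptors stored as `bytes` objects, uses a nearest-neighbour search with k = 1 for brevity, and gates matches with a Hamming distance threshold. The function names and the default `max_dist` value are hypothetical and are not taken from the paper.

```python
from typing import List, Tuple


def hamming(a: bytes, b: bytes) -> int:
    """Number of differing bits between two equal-length binary descriptors."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


def match_lines(desc_prev: List[bytes], desc_curr: List[bytes],
                max_dist: int = 30) -> List[Tuple[int, int, int]]:
    """Nearest-neighbour matching with a Hamming distance gate.

    Returns (index_prev, index_curr, distance) for every accepted match.
    max_dist is an assumed value for illustration only.
    """
    matches = []
    if not desc_curr:
        return matches
    for i, d_prev in enumerate(desc_prev):
        dists = [hamming(d_prev, d) for d in desc_curr]
        j = min(range(len(dists)), key=dists.__getitem__)  # closest candidate
        if dists[j] <= max_dist:  # reject low-similarity candidates
            matches.append((i, j, dists[j]))
    return matches
```

A practical front end would typically add a cross-check or ratio test on top of the distance gate; the sketch keeps only the thresholding step named in the text.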

During the state estimation stage, the back end constructs a nonlinear least-squares problem within a sliding window using visual feature observations and IMU pre-integration measurements from the front end. Its cost function incorporates visual reprojection errors, IMU pre-integration errors, and a prior residual from marginalization. The primary goal is to accurately estimate keyframe poses, while simultaneously optimizing state vectors including velocity, sensor biases, and the 3D coordinates of map features. To ensure real-time performance and computational efficiency, a marginalization strategy removes old frames during optimization, transforming their constraint information into a prior term acting on the remaining state variables. Ultimately, the system outputs a high-precision pose trajectory and the corresponding sparse point-line map.

4. Methodology

4.1. LSD-Based Line Segment Filtering Strategy

The stability and geometric quality of line features are crucial for the accuracy and robustness of VIO. Although the LSD algorithm can effectively detect line segments in images, its local gradient-based nature lacks the capacity for global geometric continuity judgment, often producing fragmented and redundant short segments. These short segments not only carry limited geometric information but also increase the computational burden of subsequent processing and can lead to mismatches under viewpoint changes, ultimately compromising system accuracy and robustness.

To enhance line feature quality, this paper configures key parameters of the LSD algorithm. First, the number of image pyramid levels is set to 2 and the scale factor to 0.5, maintaining multi-scale detection capability while controlling computational cost. Second, the minimum density threshold is set to 0.6 to filter out line segments with insufficient geometric significance. Building upon this, a length threshold is used to remove overly short line segments from the image. Specifically, this threshold $L_{\min}$ is defined as a fraction of the image size:

$$L_{\min} = \lceil \eta \cdot \min(W_I, H_I) \rceil$$

where $W_I$ and $H_I$ denote the width and height of the image, respectively, and $\eta$ is the length scaling factor, empirically set to 0.125. Increasing its value retains fewer but longer and more stable line segments. The operator $\lceil \cdot \rceil$ represents the ceiling operation. This filtering method removes redundant short segments, providing a cleaner set of line features for subsequent processing and state estimation.
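As a minimal sketch of this filtering step, the snippet below assumes that the threshold is the ceiling of the scaling factor times the smaller image dimension, and that segments are given as `(x1, y1, x2, y2)` tuples in pixels. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Segment = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def min_length(width: int, height: int, eta: float = 0.125) -> int:
    """Length threshold: ceil(eta * min(width, height))."""
    return math.ceil(eta * min(width, height))


def filter_short(segments: List[Segment], width: int, height: int,
                 eta: float = 0.125) -> List[Segment]:
    """Keep only segments at least as long as the length threshold."""
    l_min = min_length(width, height, eta)
    return [s for s in segments
            if math.hypot(s[2] - s[0], s[3] - s[1]) >= l_min]
```

For a 752 × 480 image, which is the resolution of the EuRoC sequences, this gives a threshold of 60 pixels with the scaling factor of 0.125.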

4.2. Geometric-Constrained Line Segment Merging

In VIO, long and continuous line features provide stronger and more stable geometric structural constraints than short segments. However, edge detection errors often cause originally continuous long lines to be incorrectly segmented into multiple adjacent short segments. This mis-segmentation not only increases the computational burden of the system but also disrupts the continuity of the original structure, severely weakening the constraining power that line features exert in back-end optimization. To address this issue, this paper proposes a line segment merging strategy grounded in geometric constraints.

To determine whether two line segments can be merged, this method adopts the following geometric criteria. Given two detected line segments $l_1$ and $l_2$ in the current frame, defined by their start points $s_1$ and $s_2$ and end points $e_1$ and $e_2$, respectively, their lengths are first calculated. Because longer segments provide more reliable direction estimates, the longer segment is used as the reference to mitigate noise introduced by the shorter one. After the reference segment is identified, the direction vectors of both segments are computed. Direction consistency is then evaluated using the dot product and the resulting angle difference. A negative dot product indicates opposing directions; in this case, the endpoints of the shorter segment are swapped to align its direction. Subsequently, the angle $\theta$ between the two vectors is calculated. If this angle is less than a set threshold $\theta_{th}$, the segments are deemed to satisfy the direction consistency condition. This threshold was determined by trial and error. Next, the spatial proximity between the segments is assessed using two geometric distance metrics: the perpendicular distance $d_{\perp}$, which is the distance from an endpoint to the infinite line of the other segment; and the projection distance $d_{p}$, which is the shortest distance from an endpoint to the other segment itself. The thresholds for these two distances, expressed in pixels, are determined empirically. If any pair of endpoints satisfies both distance thresholds and the angle difference condition, the two segments are considered mergeable into a complete segment $l_m$.

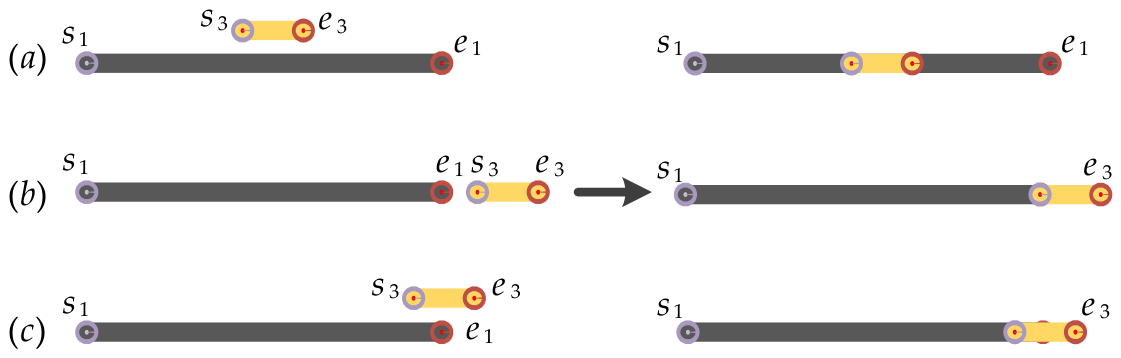

The merged segments can be categorized into three distinct extension types, as illustrated in Figure 2. During the merging process, the start and end points of the new segment are adjusted according to the specific merge type. The complete workflow of the merging process is detailed in Algorithm 1.

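| Algorithm 1: Line Segments Merging | |
| --- | --- |
| Input | Line segments $l_1 = (s_1, e_1)$ and $l_2 = (s_2, e_2)$; angle threshold $\theta_{th}$; distance thresholds for $d_{\perp}$ and $d_{p}$ |
| Output | Merged segment $l_m$ |
| 1 | if $l_1$ is shorter than $l_2$ then |
| 2 | swap $l_1$ and $l_2$ so that the longer segment is the reference |
| 3 | end if |
| 4 | if the dot product of the two direction vectors is negative then |
| 5 | swap $s_2$ and $e_2$ |
| 6 | end if |
| 7 | if $\theta < \theta_{th}$ and the distances $d_{\perp}$, $d_{p}$ are below their thresholds then |
| 8–14 | set the start and end points of $l_m$ according to the extension type of the merge (Figure 2) |
| 15 | end if |
| 16 | Return $l_m$ |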
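The merging criteria described in this section can be sketched as follows. This is an illustrative simplification, not the authors' implementation: the threshold defaults are placeholders, the proximity test is applied to the endpoints of the shorter segment against the reference, and the three extension types are collapsed into one rule that takes the two extreme endpoints along the reference direction. Segments are assumed to have non-zero length.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]
Segment = Tuple[Point, Point]  # (start, end)


def _sub(a: Point, b: Point) -> Point:
    return (a[0] - b[0], a[1] - b[1])


def _dot(a: Point, b: Point) -> float:
    return a[0] * b[0] + a[1] * b[1]


def _length(seg: Segment) -> float:
    return math.hypot(*_sub(seg[1], seg[0]))


def dist_to_line(p: Point, seg: Segment) -> float:
    """Perpendicular distance from p to the infinite line through seg."""
    d, v = _sub(seg[1], seg[0]), _sub(p, seg[0])
    return abs(d[0] * v[1] - d[1] * v[0]) / math.hypot(*d)


def dist_to_segment(p: Point, seg: Segment) -> float:
    """Shortest distance from p to the finite segment seg."""
    d, v = _sub(seg[1], seg[0]), _sub(p, seg[0])
    t = max(0.0, min(1.0, _dot(v, d) / _dot(d, d)))
    closest = (seg[0][0] + t * d[0], seg[0][1] + t * d[1])
    return math.hypot(*_sub(p, closest))


def try_merge(a: Segment, b: Segment, max_angle_deg: float = 5.0,
              max_perp: float = 2.0, max_gap: float = 10.0) -> Optional[Segment]:
    """Return the merged segment, or None if the criteria are not met.

    All three thresholds are assumed values for illustration only.
    """
    ref, other = (a, b) if _length(a) >= _length(b) else (b, a)
    d_ref, d_oth = _sub(ref[1], ref[0]), _sub(other[1], other[0])
    if _dot(d_ref, d_oth) < 0:  # opposing directions: flip the shorter segment
        other = (other[1], other[0])
        d_oth = (-d_oth[0], -d_oth[1])
    cos_a = _dot(d_ref, d_oth) / (math.hypot(*d_ref) * math.hypot(*d_oth))
    angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_a))))
    if angle >= max_angle_deg:
        return None
    near = any(dist_to_line(p, ref) < max_perp and
               dist_to_segment(p, ref) < max_gap for p in other)
    if not near:
        return None
    # Merged endpoints: extreme points along the reference direction.
    pts = [ref[0], ref[1], other[0], other[1]]
    key = lambda p: _dot(_sub(p, ref[0]), d_ref)
    return (min(pts, key=key), max(pts, key=key))
```

Taking the extreme projections along the reference direction covers extension at either end of the reference as well as containment, which is one plausible reading of the three merge types but is an assumption here.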

4.3. Structural Consistency Verification of Merged Lines

To ensure the geometric accuracy of the merged segments from Section 4.2 and prevent incorrect mergers, this paper proposes a verification method based on endpoint distance constraints. This verification performs a geometric consistency assessment on the merged line results, ensuring the reliability of line features in the VIO system. Its core idea is to construct the line equation from the endpoints of the merged segment and use it as a benchmark to verify whether the geometric structure of the original segments is reasonably preserved after merging.

Specifically, the line model is first determined from the two endpoints of the merged segment. Subsequently, the shortest distances from the two unused endpoints of the original segments to this line are calculated. If both distances fall within the set distance threshold $d_{th}$, the merge is considered geometrically consistent, and the segment is retained for subsequent feature tracking and matching; otherwise, it is deemed an invalid merge and discarded.

This verification mechanism filters out geometrically inconsistent merging results, avoiding spurious observations from erroneous mergers. By providing more reliable geometric constraints, this mechanism enhances the pose estimation performance of the VIO system, as validated by the experimental results in Section 5. The complete verification procedure is shown in Algorithm 2.

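| Algorithm 2: Line Segments Verification and Refinement | |
| --- | --- |
| Input | Original segments $l_1$, $l_2$; merged segment $l_m$; distance threshold $d_{th}$ |
| Output | Verified segment |
| 1 | if $l_1$ and $l_2$ have been merged into $l_m$ then |
| 2–21 | for the extension type of the merge (Figure 2): compute the distances from the two unused endpoints of $l_1$ and $l_2$ to the line through the endpoints of $l_m$; if both distances are within $d_{th}$, retain $l_m$; otherwise, discard the merge |
| 22 | Return the verified segment |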
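The endpoint-distance check described in this section can be sketched as below. The sketch assumes that the merged segment reuses two of the four original endpoints, so that the remaining two are the "unused" ones; the function names and the default threshold are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]
Segment = Tuple[Point, Point]  # (start, end)


def point_line_distance(p: Point, line: Segment) -> float:
    """Distance from p to the infinite line through the endpoints of `line`."""
    (x1, y1), (x2, y2) = line
    dx, dy = x2 - x1, y2 - y1
    return abs(dx * (p[1] - y1) - dy * (p[0] - x1)) / math.hypot(dx, dy)


def verify_merge(merged: Segment, seg_a: Segment, seg_b: Segment,
                 max_dist: float = 2.0) -> bool:
    """Accept the merge only if every unused original endpoint lies close
    to the line through the merged segment (max_dist is an assumed value)."""
    unused = [p for p in (*seg_a, *seg_b) if p not in merged]
    return all(point_line_distance(p, merged) <= max_dist for p in unused)
```

A merge that fails this check would be discarded, and the original segments handled according to the system's policy, which the sketch leaves open.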

4.4. 3D Reconstruction of Line Features

The triangulation of line features aims to recover their 3D spatial parameters from matched 2D line segments across adjacent frames, utilizing epipolar constraints. However, directly using raw line segments generated by the LSD detector for triangulation poses fundamental challenges, as these segments are often fragmented and redundant. This not only increases the probability of mismatches but also makes the endpoints of short segments susceptible to noise, significantly undermining the reliability of geometric constraints. These factors collectively lead to increased errors in depth calculation during epipolar geometry-based triangulation, thereby compromising the accuracy of spatial structure reconstruction.

To address this, this section performs triangulation on the stable, geometrically enhanced line segments output by Sections 4.1, 4.2, and 4.3. The triangulation schematic for merged segments is illustrated in Figure 3. Consider two adjacent keyframes with camera centers $C_1$ and $C_2$ and corresponding relative camera pose $\mathbf{T}_{12}$. The image planes of the two cameras are $\pi_1$ and $\pi_2$. Two short segments detected by LSD, denoted as $L_a$ and $L_b$, are projected onto the image planes. Their projections on $\pi_1$ are $l_a^1$ and $l_b^1$, and on $\pi_2$ are $l_a^2$ and $l_b^2$. Direct triangulation would recover two separate 3D line segments, as illustrated by the red and green spatial lines in Figure 3. In contrast, our method merges them according to the geometric constraints described in Section 4.2. For example, $l_a^1$ and $l_b^1$ on $\pi_1$ are merged into a single segment $l_m^1$; similarly, the merged segment $l_m^2$ is obtained on $\pi_2$. Subsequently, triangulation is performed on the matched merged segments $l_m^1$ and $l_m^2$ using the camera geometry $\mathbf{T}_{12}$, recovering a more complete 3D line feature $L_m$.

This strategy enhances computational efficiency by merging the two independent triangulations traditionally performed on fractured short line segments into a single triangulation of the complete long segment. Furthermore, the complete long segment resulting from geometric merging provides stronger geometric constraints for triangulation, contributing to coherent and structurally complete 3D maps. These geometrically optimized 3D line features form the foundation for the line reprojection residual model in the subsequent tightly coupled optimization framework.
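One common way to triangulate a matched pair of line segments, used here purely as an illustration, is to intersect the two planes that each camera center spans with its observed 2D segment. The sketch below assumes endpoints in normalized image coordinates and a relative pose `(R_12, p_12)` that maps camera-2 coordinates into the camera-1 frame; these names and conventions are assumptions, not the paper's notation. It returns the Plücker coordinates (moment and direction) of the 3D line in the camera-1 frame.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def mat_vec(R: Sequence[Sequence[float]], v: Vec3) -> Vec3:
    return tuple(sum(R[i][j] * v[j] for j in range(3)) for i in range(3))


def triangulate_line(s1, e1, s2, e2, R_12, p_12) -> Tuple[Vec3, Vec3]:
    """Intersect the two back-projected planes of a matched line segment.

    s1, e1 / s2, e2: (x, y) endpoints in normalized coordinates of cameras 1 / 2.
    R_12, p_12: assumed pose convention X1 = R_12 @ X2 + p_12.
    Returns (moment, direction) of the 3D line in the camera-1 frame.
    """
    # Plane 1 passes through the camera-1 center (the origin): n1 . X = 0.
    n1 = cross((s1[0], s1[1], 1.0), (e1[0], e1[1], 1.0))
    d1 = 0.0
    # Plane 2 passes through the camera-2 center p_12: n2 . X + d2 = 0.
    n2 = cross(mat_vec(R_12, (s2[0], s2[1], 1.0)),
               mat_vec(R_12, (e2[0], e2[1], 1.0)))
    d2 = -dot(n2, p_12)
    direction = cross(n2, n1)
    moment = tuple(d2 * a - d1 * b for a, b in zip(n1, n2))
    return moment, direction
```

The construction degenerates when the 3D line lies in a plane containing both camera centers, since the two planes then coincide; a practical system would need to detect and reject that case.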

4.5. Modeling of Line Reprojection Residuals

The construction of the line feature reprojection residual model is a critical step for incorporating geometric constraints into the nonlinear optimization framework, entailing three key components: spatial line parameterization, projection mapping modeling, and residual function design.

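First, to achieve an efficient and stable representation of 3D lines, this paper employs Plücker coordinates for parameterization. Specifically, given a 3D line $\mathcal{L}_w = [\mathbf{n}_w^\top, \mathbf{d}_w^\top]^\top$ in the world frame, it can be converted to the camera frame as follows:

$$\mathcal{L}_c = \begin{bmatrix} \mathbf{n}_c \\ \mathbf{d}_c \end{bmatrix} = \begin{bmatrix} \mathbf{R}_{cw} & [\mathbf{p}_{cw}]_\times \mathbf{R}_{cw} \\ \mathbf{0} & \mathbf{R}_{cw} \end{bmatrix} \begin{bmatrix} \mathbf{n}_w \\ \mathbf{d}_w \end{bmatrix}$$

Here $\mathbf{R}_{cw}$ and $\mathbf{p}_{cw}$ denote the rotation and translation from the world frame to the camera frame, and $[\cdot]_\times$ is the skew-symmetric matrix of a vector.

Next, the line feature $\mathcal{L}_c$ in the camera coordinate frame is projected onto the image plane using the following equation, yielding its corresponding 2D line expression:

$$\mathbf{l} = \begin{bmatrix} l_1 \\ l_2 \\ l_3 \end{bmatrix} = \mathcal{K}\,\mathbf{n}_c, \qquad \mathcal{K} = \begin{bmatrix} f_y & 0 & 0 \\ 0 & f_x & 0 \\ -f_y c_x & -f_x c_y & f_x f_y \end{bmatrix}$$

where $\mathbf{n}_c$ denotes the normal vector of the plane containing the spatial line, and $\mathcal{K}$ represents the projection matrix of the line feature, incorporating the focal lengths $f_x$, $f_y$, and the principal point coordinates $c_x$, $c_y$. In the normalized image plane, the projection matrix $\mathcal{K}$ reduces to the identity matrix.

Finally, based on the aforementioned projection relationship, the reprojection residual for the 3D spatial line $\mathcal{L}_l$ observed in the $i$th frame is formulated as the perpendicular distances from the endpoints to the projected line $\mathbf{l}_l^{c_i}$, which corresponds to the projection of $\mathcal{L}_l$ onto that image plane. As shown in Figure 4, the specific calculation formula is as follows:

$$\mathbf{r}_l\left(\mathbf{z}_{\mathcal{L}_l}^{c_i}, \mathcal{X}\right) = \begin{bmatrix} d\left(\mathbf{s}_l^{c_i}, \mathbf{l}_l^{c_i}\right) \\ d\left(\mathbf{e}_l^{c_i}, \mathbf{l}_l^{c_i}\right) \end{bmatrix}, \qquad d(\mathbf{s}, \mathbf{l}) = \frac{\mathbf{s}^\top \mathbf{l}}{\sqrt{l_1^2 + l_2^2}}$$

where $\mathcal{X}$ denotes the full set of state variables within the sliding window, and $\mathbf{z}_{\mathcal{L}_l}^{c_i}$ represents the $l$th line feature observed in the $i$th camera frame. $\mathbf{s}_l^{c_i}$ and $\mathbf{e}_l^{c_i}$ are the homogeneous endpoint coordinates of the observed line segment $\mathbf{z}_{\mathcal{L}_l}^{c_i}$.

To minimize this residual within the sliding-window optimization framework, it is necessary to compute its Jacobian matrix $\mathbf{J}_l$ with respect to the relevant state variables. By the chain rule, it takes the following form:

$$\mathbf{J}_l = \frac{\partial \mathbf{r}_l}{\partial \mathbf{l}} \, \frac{\partial \mathbf{l}}{\partial \mathcal{L}_c} \begin{bmatrix} \dfrac{\partial \mathcal{L}_c}{\partial \delta \mathbf{x}_i} & \dfrac{\partial \mathcal{L}_c}{\partial \mathcal{L}_w} \dfrac{\partial \mathcal{L}_w}{\partial \delta \mathcal{O}} \end{bmatrix}$$

with

$$\frac{\partial \mathbf{r}_l}{\partial \mathbf{l}} = \begin{bmatrix} \dfrac{u_s}{\sqrt{l_1^2 + l_2^2}} - \dfrac{l_1\, \mathbf{s}^\top \mathbf{l}}{(l_1^2 + l_2^2)^{3/2}} & \dfrac{v_s}{\sqrt{l_1^2 + l_2^2}} - \dfrac{l_2\, \mathbf{s}^\top \mathbf{l}}{(l_1^2 + l_2^2)^{3/2}} & \dfrac{1}{\sqrt{l_1^2 + l_2^2}} \\ \dfrac{u_e}{\sqrt{l_1^2 + l_2^2}} - \dfrac{l_1\, \mathbf{e}^\top \mathbf{l}}{(l_1^2 + l_2^2)^{3/2}} & \dfrac{v_e}{\sqrt{l_1^2 + l_2^2}} - \dfrac{l_2\, \mathbf{e}^\top \mathbf{l}}{(l_1^2 + l_2^2)^{3/2}} & \dfrac{1}{\sqrt{l_1^2 + l_2^2}} \end{bmatrix}, \qquad \frac{\partial \mathbf{l}}{\partial \mathcal{L}_c} = \begin{bmatrix} \mathcal{K} & \mathbf{0}_{3 \times 3} \end{bmatrix}$$

where $\mathbf{s} = [u_s, v_s, 1]^\top$ and $\mathbf{e} = [u_e, v_e, 1]^\top$, $\delta \mathbf{x}_i$ is the error state of the $i$th keyframe, and $\delta \mathcal{O}$ is the increment of the orthonormal representation of the line. The residual is evaluated for each line feature in $\mathcal{L}$, which stands for the set of line features captured by at least two camera frames within the sliding window.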
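The residual model of this section can be sketched numerically as follows. The sketch assumes the standard Plücker transformation and works on the normalized image plane, where the line projection matrix is the identity so the projected line equals the moment vector in the camera frame. The function names and the pose convention `X_c = R_cw @ X_w + p_cw` are assumptions made for illustration.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def mat_vec(R: Sequence[Sequence[float]], v: Vec3) -> Vec3:
    return tuple(sum(R[i][j] * v[j] for j in range(3)) for i in range(3))


def to_camera_frame(n_w: Vec3, d_w: Vec3, R_cw, p_cw: Vec3) -> Tuple[Vec3, Vec3]:
    """Plücker transform: n_c = R n_w + [p]x R d_w, d_c = R d_w."""
    r_n, r_d = mat_vec(R_cw, n_w), mat_vec(R_cw, d_w)
    n_c = tuple(a + b for a, b in zip(r_n, cross(p_cw, r_d)))
    return n_c, r_d


def line_residual(n_c: Vec3, s: Tuple[float, float],
                  e: Tuple[float, float]) -> Tuple[float, float]:
    """Signed distances from the observed endpoints to the projected line.

    On the normalized image plane the projected line is l = n_c.
    """
    l1, l2, l3 = n_c
    norm = math.hypot(l1, l2)
    return ((s[0] * l1 + s[1] * l2 + l3) / norm,
            (e[0] * l1 + e[1] * l2 + l3) / norm)
```

For a world line through the point (1, 0, 5) with direction (0, 1, 0), the moment is (-5, 0, 1); with an identity pose the projected line is x = 0.2, so endpoints observed on that line give a zero residual.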

4.6. Sliding Window-Based Tightly Coupled Optimization

Based on the established line feature reprojection residual model, this paper constructs a tightly coupled nonlinear optimization pipeline for VIO to achieve deep fusion of multi-sensor data and high-precision estimation of the system state. To address the state estimation problem, the BA strategy is adopted, constructing the objective function by minimizing the reprojection residuals of both point and line features. To circumvent the cubic computational complexity inherent to global BA, a fixed-length sliding window mechanism is employed to constrain the optimization scale. This approach ensures estimation accuracy while effectively controlling computational complexity and guaranteeing numerical convergence stability.

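The state variables to be optimized within the sliding window are given as follows:

$$\mathcal{X} = \left[\mathbf{x}_1, \ldots, \mathbf{x}_{n_k}, \lambda_1, \ldots, \lambda_{n_p}, \mathcal{O}_1, \ldots, \mathcal{O}_{n_l}\right], \qquad \mathbf{x}_i = \left[\mathbf{p}_{wb_i}, \mathbf{v}_{wb_i}, \mathbf{q}_{wb_i}, \mathbf{b}_a, \mathbf{b}_g\right]$$

where $\mathbf{x}_i$ represents the IMU state of the $i$th keyframe, comprising its position, velocity, and orientation in the world frame, along with the accelerometer and gyroscope biases in the body frame. $n_k$, $n_p$, and $n_l$ represent the numbers of keyframes, point features, and line features within the sliding window, respectively; $\lambda_m$ is the inverse depth parameter for the $m$th point feature; and $\mathcal{O}_l$ denotes the orthonormal representation parameters of the $l$th line feature in the world coordinate frame.

Based on the above definitions, a tightly coupled nonlinear least-squares problem is formulated. The overall objective function $E(\mathcal{X})$ incorporates the reprojection residuals of point and line features, the IMU pre-integration residuals, and prior information. It is minimized to derive the maximum a posteriori estimate of the system state and is defined as follows:

$$E(\mathcal{X}) = \left\| \mathbf{r}_p - \mathbf{J}_p \mathcal{X} \right\|^2 + \sum_{i \in \mathcal{B}} \rho\left( \left\| \mathbf{r}_b\left(\mathbf{z}_{b_i b_{i+1}}, \mathcal{X}\right) \right\|^2_{\boldsymbol{\Sigma}_{b_i b_{i+1}}} \right) + \sum_{(i,m) \in \mathcal{F}} \rho\left( \left\| \mathbf{r}_f\left(\mathbf{z}_{f_m}^{c_i}, \mathcal{X}\right) \right\|^2_{\boldsymbol{\Sigma}_{f_m}^{c_i}} \right) + \sum_{(i,l) \in \mathcal{L}} \rho\left( \left\| \mathbf{r}_l\left(\mathbf{z}_{\mathcal{L}_l}^{c_i}, \mathcal{X}\right) \right\|^2_{\boldsymbol{\Sigma}_{\mathcal{L}_l}^{c_i}} \right)$$

where $\mathbf{r}_b$ denotes the IMU pre-integration residual between consecutive frames $b_i$ and $b_{i+1}$; $\mathbf{r}_f$ and $\mathbf{r}_l$ represent the reprojection residuals of point and line features, respectively; $\mathcal{B}$, $\mathcal{F}$, and $\mathcal{L}$ are their corresponding sets of observations; and $\boldsymbol{\Sigma}_{b_i b_{i+1}}$, $\boldsymbol{\Sigma}_{f_m}^{c_i}$, and $\boldsymbol{\Sigma}_{\mathcal{L}_l}^{c_i}$ denote the corresponding measurement covariance matrices. $\{\mathbf{r}_p, \mathbf{J}_p\}$ denotes the prior information from marginalization within the sliding window.

To enhance the system’s robustness against mismatches and measurement noise, the Huber robust kernel function $\rho(\cdot)$ is applied to weigh all residual terms. Finally, the Ceres Solver [35] library is adopted to iteratively solve the aforementioned nonlinear least-squares problem, outputting the optimized system state.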
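For reference, the effect of the Huber kernel can be sketched as below. The sketch follows the convention of Ceres Solver's `HuberLoss`, in which the kernel acts on the squared residual norm `s`; the scale `delta` and the helper `robust_cost` are assumptions for illustration, since the paper's kernel parameter is not stated here.

```python
import math
from typing import Iterable


def huber(s: float, delta: float = 1.0) -> float:
    """Huber kernel on a squared residual norm s.

    Quadratic region (s <= delta^2): the cost is left unchanged.
    Linear region (s > delta^2): growth is reduced to limit outlier influence.
    """
    if s <= delta * delta:
        return s
    return 2.0 * delta * math.sqrt(s) - delta * delta


def robust_cost(squared_norms: Iterable[float], delta: float = 1.0) -> float:
    """Sum of Huber-weighted squared residual norms (hypothetical helper)."""
    return sum(huber(s, delta) for s in squared_norms)
```

With `delta = 1`, a residual of squared norm 0.25 contributes 0.25, while one of squared norm 4 contributes only 3, so large residuals from mismatched features are down-weighted rather than dominating the cost.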

6. Conclusions

To address the insufficient utilization of geometric information from line features in VIO, this paper proposes a tightly coupled VIO system based on geometrically optimized line features. The main contributions include a complete front-end geometric optimization pipeline for line features. This pipeline first adopts a length-threshold-based filtering strategy to remove redundant and unstable short segments from images, and further integrates the proposed geometric-consistency-based merging mechanism, endpoint-distance-based verification mechanism, and epipolar-constraint-based triangulation method for optimized line features, thereby improving the structural integrity of line features. On this basis, the optimized line features are integrated with point features, IMU pre-integration, and marginalization priors into a sliding-window optimization framework, constructing a robust and accurate point-line fused state estimation system. Experimental results show that the proposed method reduces the APE by 17.57%, 9.88%, and 6.65% compared to VINS-Mono, PL-VINS, and EPLF-VINS, respectively. Additionally, compared to PL-VINS, it reduces the line feature processing time by 4.16% and the average per-frame processing time by 2.36%, validating its effectiveness in trajectory estimation accuracy, processing efficiency, and 3D scene reconstruction. Future work will systematically analyze matching errors and outlier sensitivity of line features, and concentrate on integrating the method with deep learning-based front ends to improve pose estimation performance in complex environments.