Robust Low-Carbon Multimodal Transport Route Optimization for Containers Under Dual Uncertainty: A Proximal Policy Optimization Approach

Abstract

1. Introduction

1.1. Research Background and Motivation

1.2. Literature Review

1.3. Research Objectives

1.4. Structure of the Paper

2. Problem Description and Model Establishment

2.1. Problem Description

2.2. Model Assumptions

- Cargo remains an integral whole during transportation, with no splitting permitted.

- Each transfer node supports only one transshipment operation.

- The transportation route follows a one-way flow; each node city is traversed at most once.

- All nodes are equipped with sufficient transshipment facilities and processing capacity.

- Railway and waterway timetables are not considered.

- The capacity on each voyage segment is sufficient to meet the current TEU demand.
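The structural assumptions above can be sketched as a simple route-validity check. This is only an illustration: the function name `is_valid_route` and the route encoding (a list of node codes plus one mode letter per leg) are assumptions made here, not the paper's formulation.

```python
from typing import Sequence


def is_valid_route(nodes: Sequence[int], modes: Sequence[str]) -> bool:
    """Check a candidate route against the structural assumptions.

    nodes: visited node codes in order, e.g. [1, 19, 22]
    modes: one transport mode per leg, e.g. ["R", "W"] (hypothetical encoding)
    """
    # A route needs at least one leg, and exactly one mode per leg.
    # With one mode per leg, each intermediate node can host at most
    # one mode change, i.e. at most one transshipment operation.
    if len(nodes) < 2 or len(modes) != len(nodes) - 1:
        return False
    # One-way flow: each node city is traversed at most once.
    if len(set(nodes)) != len(nodes):
        return False
    return True
```

A check like this could also serve as an action mask in the MDP, ruling out moves to nodes that have already been visited.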

2.3. Mathematical Formulation

2.4. Construction of Markov Decision Process (MDP)

2.4.1. State Space

2.4.2. Action Space

2.4.3. State Transition Probability

2.4.4. Reward Function

2.4.5. Discount Factor

3. Proposed Methodology

3.1. Reinforcement Learning (RL) and Algorithm Selection

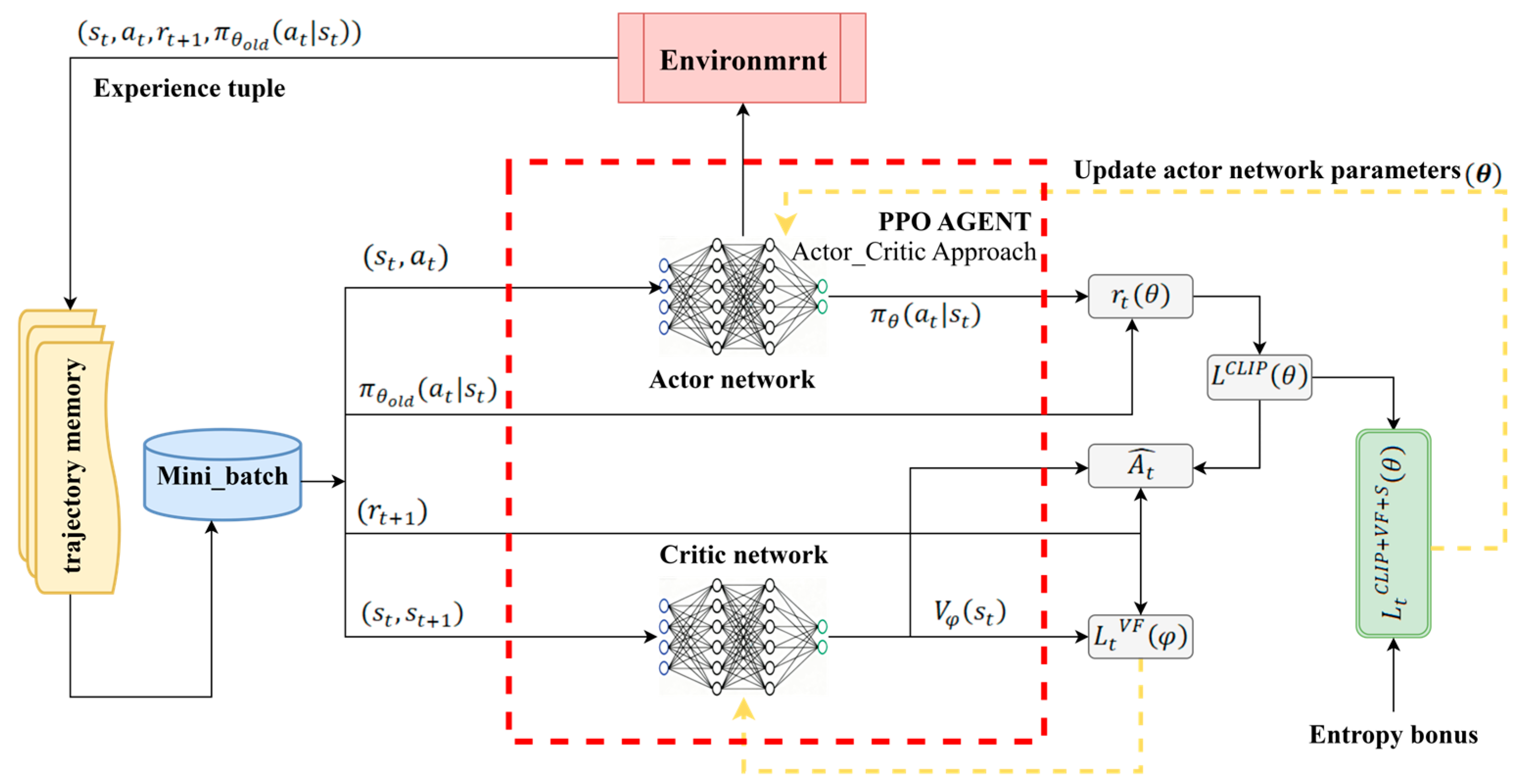

3.2. Proximal Policy Optimization (PPO) Algorithm

1. Actor–Critic Architecture.

2. PPO-Clip Objective Function.

3. Value Loss Function.

4. Entropy Regularization Term.

5. Final Objective Function.

6. Algorithm Flow.
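The way the clipped objective, value loss, and entropy term combine into one loss can be sketched as follows. This is a minimal sketch of the standard PPO-Clip loss from Schulman et al., not the authors' implementation. The function name `ppo_loss`, its argument names, and the plain mean-squared-error value loss are assumptions; the default coefficients mirror the values listed in the hyperparameter table (clipping ratio 0.2, value loss coefficient 0.5, entropy coefficient 0.01).

```python
import math


def ppo_loss(new_logp, old_logp, adv, values, returns, entropy,
             clip_eps=0.2, vf_coef=0.5, ent_coef=0.01):
    """Return the scalar PPO loss (to be minimized) for one batch.

    All arguments are equal-length sequences of floats:
    log-probabilities under the new and old policy, advantage estimates,
    critic predictions, return targets, and per-sample policy entropy.
    """
    n = len(adv)
    surrogate = []
    for lp_new, lp_old, a in zip(new_logp, old_logp, adv):
        ratio = math.exp(lp_new - lp_old)  # probability ratio r_t
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Pessimistic bound: take the smaller of the two surrogate terms.
        surrogate.append(min(ratio * a, clipped * a))
    policy_loss = -sum(surrogate) / n
    # Assumed form: mean squared error between returns and critic values.
    value_loss = sum((r - v) ** 2 for r, v in zip(returns, values)) / n
    mean_entropy = sum(entropy) / n
    # Entropy enters with a minus sign so that higher entropy lowers the loss.
    return policy_loss + vf_coef * value_loss - ent_coef * mean_entropy
```

When the new and old policies coincide, the ratio is 1 and the policy term reduces to the negative mean advantage; clipping only takes effect once the ratio leaves the interval set by `clip_eps`.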

3.3. Implementation Details and Hyperparameters

4. Case Study and Experimental Analysis

4.1. Experimental Setup and Data

4.1.1. Case Background: Chongqing–Singapore Route

4.1.2. Parameter Settings

4.2. Algorithm Validation and Training Stability Analysis

4.2.1. Algorithm Validation

4.2.2. Training Internal Stability Analysis

4.3. Robustness in Practical Scenarios: PPO vs. Traditional Methods

- (1) 45 TEU scenario

- (2) 90 TEU scenario

- (3) 180 TEU scenario

4.4. Sensitivity Analysis

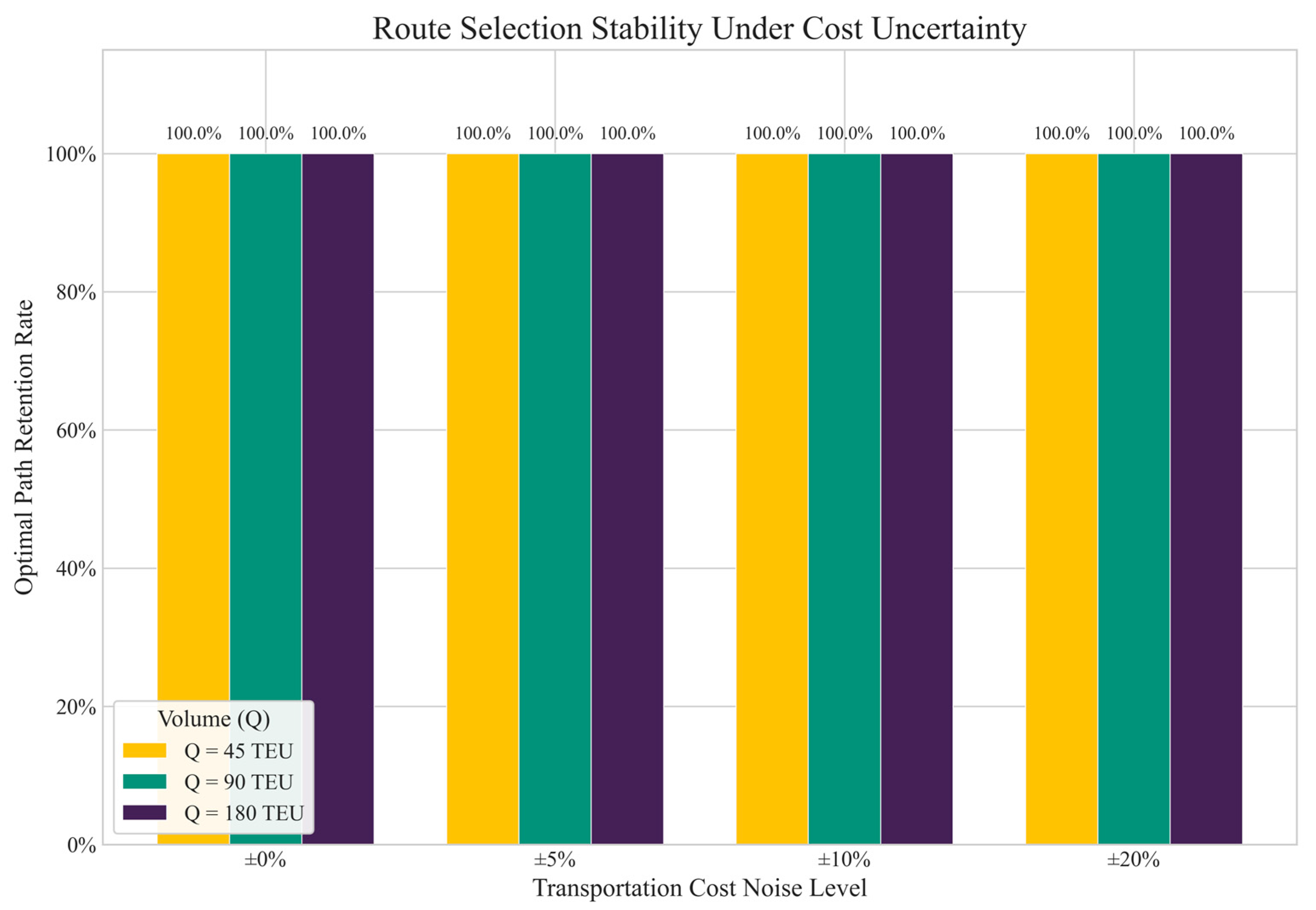

4.4.1. Sensitivity to Transportation Cost Fluctuations

4.4.2. Sensitivity to Carbon Tax Rate

5. Discussion

6. Conclusions

- (1) Comparative experiments between PPO and the classic reinforcement learning algorithms DQN and A2C show that PPO offers clear advantages in multimodal transport route optimization, because its clipped surrogate objective limits the size of each policy update and thereby stabilizes training. This study provides empirical evidence that policy-gradient methods can outperform value-based methods in stochastic routing problems with discrete action spaces, offering a reference for algorithm selection in intelligent logistics.

- (2) Statistical analysis of training metrics (policy entropy and critic value loss) across five random seeds shows that PPO trains stably and has low sensitivity to initial conditions.

- (3) Comparison with MILP and GA shows that, although MILP and GA achieve lower deterministic costs in the low-cargo-volume cases, PPO performs better under realistic travel-time fluctuations. In the 45 and 90 TEU cases it keeps the stochastic late rate low (4% and 17%, against 58–95% for MILP and GA) and the robustness gap narrow (+0.5% and +0.4%). In the 180 TEU case all three methods select the same route and perform comparably. PPO's carbon emissions match the lowest value at 90 and 180 TEU and are slightly higher at 45 TEU (48,465.90 kg against 48,112.20 kg).

- (4) Sensitivity analysis shows that the model is robust to transportation cost changes but sensitive to carbon tax changes. Under cost disturbances of up to 20%, the model keeps the same route and the average cost stays within 0.2% of the noise-free cost. As carbon tax levels rise, the route is adjusted (from 1-4-9-16-19-22 to 1-19-22 in the 90 TEU case) to balance transportation costs and carbon emissions. This provides decision support for low-carbon transport policy design.

- (5) In conclusion, this paper establishes a novel MDP framework that couples a progressive carbon tax mechanism with dual uncertainties. We find that reinforcement learning is applicable to the multi-objective route optimization problem of container multimodal transport and can adapt its routing strategy to the environment. In addition, compared with traditional static methods, the PPO algorithm adapts better to random changes in the environment, and its results show a degree of robustness.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| PPO | Proximal Policy Optimization |
| RL | Reinforcement Learning |
| MDP | Markov Decision Process |
| DQN | Deep Q-Network |
| A2C | Advantage Actor-Critic |
| SAC | Soft Actor-Critic |
| TRPO | Trust Region Policy Optimization |
| MILP | Mixed-Integer Linear Programming |
| GA | Genetic Algorithm |
| TROA | Tyrannosaurus Optimization Algorithm |
| FAGSO | Fireworks Algorithm hybridized with a Gravitational Search Operator |
| NSGA-II | Non-dominated Sorting Genetic Algorithm II |

References

1. Ahmady, M.; Eftekhari Yeghaneh, Y. Optimizing the Cargo Flows in Multi-Modal Freight Transportation Network Under Disruptions. Iran. J. Sci. Technol. Trans. Civ. Eng. 2022, 46, 453–472.

2. Miechowicz, W.; Kiciński, M.; Miechowicz, I.; Merkisz-Guranowska, A. The Attractiveness of Regional Transport as a Direction for Improving Transport Energy Efficiency. Energies 2024, 17, 4844.

3. Pezzella, G.; Baaqel, H.; Messa, G.M.; Sarathy, S.M. Towards Decarbonized Heavy-Duty Road Transportation: Design and Carbon Footprint of Adsorption-Based Carbon Capture Technologies Using Life Cycle Thinking. Chem. Eng. J. 2025, 508, 161168.

4. Dini, N.; Yaghoubi, S.; Bahrami, H. Logistics Performance Index-Driven in Operational Planning for Logistics Companies: A Smart Transportation Approach. Transp. Policy 2025, 160, 42–62.

5. Shramenko, N.; Muzylyov, D.; Shramenko, V. Methodology of Costs Assessment for Customer Transportation Service of Small Perishable Cargoes. Int. J. Bus. Perform. Manag. 2020, 21, 132–148.

6. Solano-Charris, E.L.; Prins, C.; Santos, A.C. Solving the Bi-Objective Robust Vehicle Routing Problem with Uncertain Costs and Demands. RAIRO Oper. Res. Rech. Opér. 2016, 50, 689–714.

7. Feng, X.; Song, R.; Yin, W.; Yin, X.; Zhang, R. Multimodal Transportation Network with Cargo Containerization Technology: Advantages and Challenges. Transp. Policy 2023, 132, 128–143.

8. Amine Masmoudi, M.; Baldacci, R.; Mancini, S.; Kuo, Y.H. Multi-Compartment Waste Collection Vehicle Routing Problem with Bin Washer. Transp. Res. Part E Logist. Transp. Rev. 2024, 189, 103681.

9. Chang, T.S. Best Routes Selection in International Intermodal Networks. Comput. Oper. Res. 2008, 35, 2877–2891.

10. Tagiltseva, J.; Vasilenko, M.; Kuzina, E.; Drozdov, N.; Parkhomenko, R.; Prokopchuk, V.; Skichko, E.; Bagiryan, V. The Economic Efficiency Justification of Multimodal Container Transportation. Transp. Res. Procedia 2022, 63, 264–270.

11. Ding, L. Multimodal Transport Information Sharing Platform with Mixed Time Window Constraints Based on Big Data. J. Cloud Comput. 2020, 9, 11.

12. Raicu, S.; Popa, M.; Costescu, D. Uncertainties Influencing Transportation System Performances. Sustainability 2022, 14, 7660.

13. Feng, F.; Zheng, F.; Zhang, Z.; Wang, L. A Framework for Low-Carbon Container Multimodal Transport Route Optimization Under Hybrid Uncertainty: Model and Case Study. Appl. Sci. 2025, 15, 6894.

14. Li, M.; Sun, X. Path Optimization of Low-Carbon Container Multimodal Transport under Uncertain Conditions. Sustainability 2022, 14, 14098.

15. Xu, Q.; Huang, X.; Zhang, W.; Zhao, H.; Zhang, H.; Jin, Z. Eurasian Container Intermodal Transportation Network: A Robust Optimization with Uncertainty and Carbon Emission Constraints. Front. Mar. Sci. 2025, 12, 1576006.

16. Ge, D. Optimal Path Selection of Multimodal Transport Based on Ant Colony Algorithm. J. Phys. Conf. Ser. 2021, 2083, 032011.

17. Shoukat, R.; Xiaoqiang, Z. Is Multimodal Transportation Greener and Faster than Intermodal in Full Container Load? A Case from Pakistan–China. Energy Syst. 2025, 16, 1117–1142.

18. Cheng, J. Research on Optimizing Multimodal Transport Path under the Schedule Limitation Based on Genetic Algorithm. J. Phys. Conf. Ser. 2022, 2258, 012014.

19. Liu, S. Multimodal Transportation Route Optimization of Cold Chain Container in Time-Varying Network Considering Carbon Emissions. Sustainability 2023, 15, 4435.

20. Zheng, C.; Sun, K.; Gu, Y.; Shen, J.; Du, M. Multimodal Transport Path Selection of Cold Chain Logistics Based on Improved Particle Swarm Optimization Algorithm. J. Adv. Transp. 2022, 2022, 5458760.

21. Wan, S.P.; Gao, S.Z.; Dong, J.Y. Trapezoidal Cloud Based Heterogeneous Multi-Criterion Group Decision-Making for Container Multimodal Transport Path Selection. Appl. Soft Comput. 2024, 154, 111374.

22. Rezki, N.; Mansouri, M. Deep Learning Hybrid Models for Effective Supply Chain Risk Management: Mitigating Uncertainty While Enhancing Demand Prediction. Acta Logist. 2024, 11, 589–604.

23. Zhu, W.; Wang, H.; Zhang, X. Synergy Evaluation Model of Container Multimodal Transport Based on BP Neural Network. Neural Comput. Appl. 2021, 33, 4087–4095.

24. Yan, Y.; Chow, A.H.F.; Ho, C.P.; Kuo, Y.-H.; Wu, Q.; Ying, C. Reinforcement Learning for Logistics and Supply Chain Management: Methodologies, State of the Art, and Future Opportunities. Transp. Res. Part E Logist. Transp. Rev. 2022, 162, 102712.

25. Nazari, M.; Oroojlooy, A.; Snyder, L.; Takac, M. Reinforcement Learning for Solving the Vehicle Routing Problem. In Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2018; Volume 31.

26. Chen, X.; Tian, Y. Learning to Perform Local Rewriting for Combinatorial Optimization. In Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2019; Volume 32.

27. Kool, W.; van Hoof, H.; Welling, M. Attention, Learn to Solve Routing Problems! arXiv 2019, arXiv:1803.08475.

28. Lu, H.; Zhang, X.; Yang, S. A Learning-Based Iterative Method for Solving Vehicle Routing Problems. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

29. Joe, W.; Lau, H.C. Deep Reinforcement Learning Approach to Solve Dynamic Vehicle Routing Problem with Stochastic Customers. Proc. Int. Conf. Autom. Plan. Sched. ICAPS 2020, 30, 394–402.

30. Zhao, J.; Mao, M.; Zhao, X.; Zou, J. A Hybrid of Deep Reinforcement Learning and Local Search for the Vehicle Routing Problems. IEEE Trans. Intell. Transp. Syst. 2021, 22, 7208–7218.

31. Zhang, Z.; Liu, H.; Zhou, M.; Wang, J. Solving Dynamic Traveling Salesman Problems With Deep Reinforcement Learning. IEEE Trans. Neural Netw. Learn. Syst. 2023, 34, 2119–2132.

32. Irannezhad, E.; Prato, C.G.; Hickman, M. An Intelligent Decision Support System Prototype for Hinterland Port Logistics. Decis. Support Syst. 2020, 130, 113227.

33. Kim, B.; Jeong, Y.; Shin, J.G. Spatial Arrangement Using Deep Reinforcement Learning to Minimise Rearrangement in Ship Block Stockyards. Int. J. Prod. Res. 2020, 58, 5062–5076.

34. Guo, C.; Thompson, R.G.; Foliente, G.; Peng, X. Reinforcement Learning Enabled Dynamic Bidding Strategy for Instant Delivery Trading. Comput. Ind. Eng. 2021, 160, 107596.

35. Farahani, A.; Genga, L.; Schrotenboer, A.H.; Dijkman, R. Capacity Planning in Logistics Corridors: Deep Reinforcement Learning for the Dynamic Stochastic Temporal Bin Packing Problem. Transp. Res. Part E Logist. Transp. Rev. 2024, 191, 103742.

36. Sun, Y.; Mao, X.; Yin, X.; Liu, G.; Zhang, J.; Zhao, Y. Optimizing Carbon Tax Rates and Revenue Recycling Schemes: Model Development, and a Case Study for the Bohai Bay Area, China. J. Clean. Prod. 2021, 296, 126519.

37. Mnih, V.; Kavukcuoglu, K.; Silver, D.; Rusu, A.A.; Veness, J.; Bellemare, M.G.; Graves, A.; Riedmiller, M.; Fidjeland, A.K.; Ostrovski, G.; et al. Human-Level Control Through Deep Reinforcement Learning. Nature 2015, 518, 529–533.

38. Hu, K.; Li, M.; Song, Z.; Xu, K.; Xia, Q.; Sun, N.; Zhou, P.; Xia, M. A Review of Research on Reinforcement Learning Algorithms for Multi-Agents. Neurocomputing 2024, 599, 128068.

39. Wang, H.; Ye, Y.; Zhang, J.; Xu, B. A Comparative Study of 13 Deep Reinforcement Learning Based Energy Management Methods for a Hybrid Electric Vehicle. Energy 2023, 266, 126497.

40. del Rio, A.; Jimenez, D.; Serrano, J. Comparative Analysis of A3C and PPO Algorithms in Reinforcement Learning: A Survey on General Environments. IEEE Access 2024, 12, 146795–146806.

41. Sharma, R.; Garg, P. Reinforcement Learning Advances in Autonomous Driving: A Detailed Examination of DQN and PPO. In Proceedings of the 2024 Global Conference on Communications and Information Technologies (GCCIT), Bengaluru, India, 25–26 October 2024; pp. 1–5.

42. Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal Policy Optimization Algorithms. arXiv 2017, arXiv:1707.06347.

| Problem | Method | Study |
|---|---|---|
| travelling salesman problem | Policy Gradient | [25] |
| capacitated vehicle routing problem | Actor-Critic | [26] |
| travelling salesman problem, vehicle routing problem | Policy Gradient | [27] |
| vehicle routing problem | Policy Gradient | [28] |
| dynamic vehicle routing problem | Deep Q-Network | [29] |
| vehicle routing problem | Policy Gradient | [30] |
| dynamic travelling salesman problem | Policy Gradient | [31] |
| routing | Adaptive Dynamic Programming | [32] |
| allocation | Asynchronous Advantage Actor-Critic | [33] |
| carriers | Deep Q-Network, Tabular Q-learning | [34] |
| carriers | Proximal Policy Optimization | [35] |

| Hyperparameter | Symbol | Value | Description |
|---|---|---|---|
| Learning Rate | | 1 × 10⁻⁴ | Step size for network weight updates |
| Discount Factor | | 0.99 | Weighting for future rewards |
| GAE Parameter | | 0.95 | For Generalized Advantage Estimation |
| Clipping Ratio | | 0.2 | Constrains the size of each policy update |
| Value Loss Coeff. | | 0.5 | Weight of the critic loss term |
| Entropy Coeff. | | 0.01 | Encourages exploration to avoid local optima |
| Update Epochs | | 10 | Number of iterations per policy update |
| Update Timesteps | | 2048 | Data collection batch size before update |
| Max Training Steps | | 150,000 | Total steps for the training process |
| Random Seeds | - | 5 seeds | 42, 101, 202, 303, 404 (for stability verification) |
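The discount factor (0.99) and GAE parameter (0.95) in the table feed into Generalized Advantage Estimation. The following is a minimal sketch of the standard backward GAE recursion, not the authors' code; the function name `compute_gae` and its argument layout are assumptions.

```python
def compute_gae(rewards, values, last_value, dones, gamma=0.99, lam=0.95):
    """Compute GAE advantages for one rollout.

    rewards, values, dones: equal-length sequences for steps 0..T-1
    last_value: critic estimate for the state after the final step
    """
    advantages = [0.0] * len(rewards)
    gae = 0.0
    next_value = last_value
    # Walk the rollout backwards so each step can reuse the next step's GAE.
    for t in reversed(range(len(rewards))):
        nonterminal = 0.0 if dones[t] else 1.0
        # One-step temporal-difference error.
        delta = rewards[t] + gamma * next_value * nonterminal - values[t]
        gae = delta + gamma * lam * nonterminal * gae
        advantages[t] = gae
        next_value = values[t]
    return advantages
```

Return targets for the critic can then be formed as `advantages[t] + values[t]`, a common convention in PPO implementations.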

| Code | City | Code | City | Code | City | Code | City |
|---|---|---|---|---|---|---|---|
| 1 | Chongqing | 7 | Vientiane | 13 | Bangkok | 19 | Qinzhou Port |
| 2 | Pingxiang | 8 | Huangtong | 14 | Shanghai Port | 20 | Hong Kong Tsing Yi District |
| 3 | Mohan | 9 | Liuzhou | 15 | Nanning | 21 | Nansha Port |
| 4 | Guiyang | 10 | Yangon | 16 | Litang | 22 | Singapore |
| 5 | Huaihua | 11 | Baise | 17 | Yantian Port | | |
| 6 | Hanoi | 12 | Sihanoukville | 18 | Kuala Lumpur | | |

| Transport Mode | Transport Price | Average Speed | Unit Carbon Emission |
|---|---|---|---|
| Railway | 2.8 CNY/km | 85 km/h | 1.27 kg/(TEU·km) |
| Highway | 6 CNY/km | 65 km/h | 3.73 kg/(TEU·km) |
| Waterway | 1 CNY/km (<500 km); 0.6 CNY/km (>500 km) | 35 km/h | 0.42 kg/(TEU·km) |
| Seaway | 3 CNY/km | 35 km/h | 0.42 kg/(TEU·km) |

| Transport Segment | Highway (km) | Railway (km) | Waterway (km) | Transport Segment | Highway (km) | Railway (km) | Waterway (km) |
|---|---|---|---|---|---|---|---|
| (1, 2) | 1060 | - | - | (9, 16) | 150 | 135 | 198 |
| (1, 3) | 1514 | - | - | (10, 12) | - | - | 1200 |
| (1, 4) | 392 | 424 | - | (11, 15) | 230 | 223 | - |
| (1, 5) | 563 | 602 | - | (12, 13) | 940 | - | - |
| (1, 19) | 1065 | 1217 | 2478 | (13, 18) | 1480 | 665 | 2047 |
| (1, 14) | 1667 | - | 2400 | (13, 22) | - | - | 1574 |
| (2, 6) | 172 | 167 | - | (14, 22) | - | - | 4700 |
| (3, 7) | 680 | 422 | - | (15, 19) | 139 | 117 | - |
| (4, 8) | 127 | 315 | - | (16, 19) | 204 | 215 | 300 |
| (4, 9) | 495 | 489 | 564 | (17, 20) | 50 | 80 | 84 |
| (5, 9) | 448 | 453 | - | (17, 22) | - | - | 2408 |
| (5, 17) | 920 | 974 | - | (18, 22) | 325 | - | 426 |
| (5, 21) | 870 | 976 | - | (19, 22) | 4270 | - | 2450 |
| (6, 10) | 1100 | - | - | (20, 22) | - | - | 2670 |
| (7, 13) | 600 | - | - | (21, 22) | - | - | 4000 |
| (8, 11) | 400 | - | - | | | | |

| Transshipment | Cost: CNY/TEU | Time: h/TEU | Carbon Emission: kg/TEU |
|---|---|---|---|
| Highway–Railway | 82.6 | 0.05 | 2.24 |
| Highway–Waterway | 178.64 | 0.06 | 2.07 |
| Railway–Waterway | 242.06 | 0.08 | 1.90 |
| Waterway–Seaway | 200.00 | 0.07 | 1.50 |
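As a worked example of how the speed, distance, and transshipment tables combine, the sketch below computes the deterministic travel time of route 1-19-22 (railway, then waterway) for 90 TEU. It assumes that time equals distance divided by average speed on each leg, plus the per-TEU transshipment time multiplied by the number of TEU at each mode change. That formula is an assumption, since the formulation section is not reproduced here, but it gives the 91.52 h listed for this route in the carbon tax sensitivity table. The names `route_time`, `SPEED_KMH`, and `TRANSSHIP_H_PER_TEU` are illustrative.

```python
# Average speeds (km/h) from the transport mode table: R = railway,
# H = highway, W = waterway.
SPEED_KMH = {"R": 85.0, "H": 65.0, "W": 35.0}

# Transshipment time (h/TEU) from the transshipment table, keyed by mode pair.
TRANSSHIP_H_PER_TEU = {
    frozenset("RH"): 0.05,
    frozenset("HW"): 0.06,
    frozenset("RW"): 0.08,
}


def route_time(legs, teu):
    """Return total hours for a route given as [(mode, distance_km), ...]."""
    total = 0.0
    prev_mode = None
    for mode, distance_km in legs:
        total += distance_km / SPEED_KMH[mode]
        # A mode change between consecutive legs triggers one transshipment.
        if prev_mode is not None and prev_mode != mode:
            total += TRANSSHIP_H_PER_TEU[frozenset((prev_mode, mode))] * teu
        prev_mode = mode
    return total


# Segment (1, 19) by railway is 1217 km; segment (19, 22) by waterway is 2450 km.
hours = route_time([("R", 1217), ("W", 2450)], teu=90)
print(round(hours, 2))  # 91.52
```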

| Scenario | Index | MILP | GA | PPO |
|---|---|---|---|---|
| 45 TEU | Route | 1-4-9-16-19-22 | 1-4-9-16-19-22 | 1-19-22 |
| | Mode | R-W-W-W-W | R-W-W-W-W | R-W |
| | Deterministic Cost (CNY) | 455,381 | 455,381 | 499,331 |
| | Stochastic Average Cost (CNY) | 515,766 | 511,971 | 499,574 |
| | Standard Deviation (CNY) | 24,976 | 22,311 | 1,228 |
| | Stochastic Late Rate | 58% | 59% | 4% |
| | Robustness Gap | +13.3% | +12.4% | +0.5% |
| | Carbon Emissions (kg) | 48,112.20 | 48,112.20 | 48,465.90 |
| 90 TEU | Route | 1-4-9-16-19-22 | 1-19-22 | 1-19-22 |
| | Mode | R-R-W-W-W | R-W | R-W |
| | Deterministic Cost (CNY) | 892,287 | 904,691 | 904,691 |
| | Stochastic Average Cost (CNY) | 992,408 | 987,034 | 908,864 |
| | Standard Deviation (CNY) | 64,167 | 66,527 | 10,626 |
| | Stochastic Late Rate | 95% | 92% | 17% |
| | Robustness Gap | +11.2% | +9.1% | +0.4% |
| | Carbon Emissions (kg) | 98,578.00 | 96,931.80 | 96,931.80 |
| 180 TEU | Route | 1-19-22 | 1-19-22 | 1-19-22 |
| | Mode | R-W | R-W | R-W |
| | Deterministic Cost (CNY) | 1,720,256 | 1,720,256 | 1,720,256 |
| | Stochastic Average Cost (CNY) | 1,770,229 | 1,761,626 | 1,780,535 |
| | Standard Deviation (CNY) | 62,099 | 63,728 | 73,172 |
| | Stochastic Late Rate | 53% | 50% | 52% |
| | Robustness Gap | +2.9% | +2.7% | +3.5% |
| | Carbon Emissions (kg) | 193,863.60 | 193,863.60 | 193,863.60 |
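The gap metric in the table is not defined in this excerpt. One plausible reading, assumed here, is the relative excess of the stochastic average cost over the deterministic cost. The sketch below applies that definition; the function name `robustness_gap` is illustrative, and the definition is only checked against the MILP entries for 45 and 90 TEU.

```python
def robustness_gap(deterministic_cost, stochastic_avg_cost):
    """Relative cost increase when moving from the deterministic plan
    to its average cost under stochastic conditions (assumed definition)."""
    return (stochastic_avg_cost - deterministic_cost) / deterministic_cost


# MILP, 45 TEU: deterministic 455,381 CNY, stochastic average 515,766 CNY.
print(f"{robustness_gap(455_381, 515_766):+.1%}")  # +13.3%
# MILP, 90 TEU: deterministic 892,287 CNY, stochastic average 992,408 CNY.
print(f"{robustness_gap(892_287, 992_408):+.1%}")  # +11.2%
```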

| Noise Levels | Route Change vs. 0% Noise | Success Rate | Average Cost Change (%) | Total Cost Variation Range (%) |

|---|---|---|---|---|

| 5% | No | 100% | −0.02 | (−2.03, 2.03) |

| 10% | No | 100% | −0.16 | (−4.32, 4.03) |

| 20% | No | 100% | +0.08 | (−8.08, 8.18) |

| Level | Carbon Tax Rate | Route | Mode | Time (Hours) | Carbon Emissions (kg) | Total Cost (CNY) |
|---|---|---|---|---|---|---|
| 90 TEU | (0, 0, 0, 0) | 1-4-9-16-19-22 | R-R-W-W-W | 108.36 | 98,578.00 | 880,500.60 |
| 90 TEU | (0.025, 0.05, 0.075, 0.1) | 1-4-9-16-19-22 | R-R-W-W-W | 108.36 | 98,578.00 | 886,394.01 |
| 90 TEU | (0.05, 0.1, 0.15, 0.2) | 1-19-22 | R-W | 91.52 | 96,777.90 | 904,690.77 |
| 90 TEU | (0.075, 0.15, 0.225, 0.3) | 1-19-22 | R-W | 91.52 | 96,777.90 | 910,426.03 |
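Each carbon tax setting in the table is a tuple of four rates, which suggests a four-bracket progressive scheme. The bracket thresholds are not given in this excerpt, so the sketch below takes them as a parameter. The function name `progressive_carbon_tax`, the threshold values in the usage line, and the CNY/kg interpretation of the rates are all hypothetical and only illustrate how a progressive tax accumulates bracket by bracket.

```python
def progressive_carbon_tax(emissions_kg, rates, thresholds_kg):
    """Tax emissions bracket by bracket.

    rates: one rate per bracket, lowest bracket first (assumed CNY/kg)
    thresholds_kg: ascending upper bounds of all brackets except the last,
                   so len(rates) must equal len(thresholds_kg) + 1
    """
    if len(rates) != len(thresholds_kg) + 1:
        raise ValueError("need exactly one more rate than thresholds")
    tax = 0.0
    lower = 0.0
    upper_bounds = list(thresholds_kg) + [float("inf")]
    for rate, upper in zip(rates, upper_bounds):
        if emissions_kg <= lower:
            break
        # Only the slice of emissions inside this bracket pays this rate.
        tax += (min(emissions_kg, upper) - lower) * rate
        lower = upper
    return tax


# Hypothetical thresholds of 1000, 2000, and 3000 kg, for illustration only.
tax = progressive_carbon_tax(3500, (0.05, 0.1, 0.15, 0.2), (1000, 2000, 3000))
```

Under a scheme like this, higher emissions are taxed at increasingly steep marginal rates, which is consistent with the route switch observed in the table as the rate tuple grows.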

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhang, R.; Dai, C.; Li, Y. Robust Low-Carbon Multimodal Transport Route Optimization for Containers Under Dual Uncertainty: A Proximal Policy Optimization Approach. Electronics 2026, 15, 5. https://doi.org/10.3390/electronics15010005
