A Binary Convolution Accelerator Based on Compute-in-Memory

Abstract

1. Introduction

1.1. Background

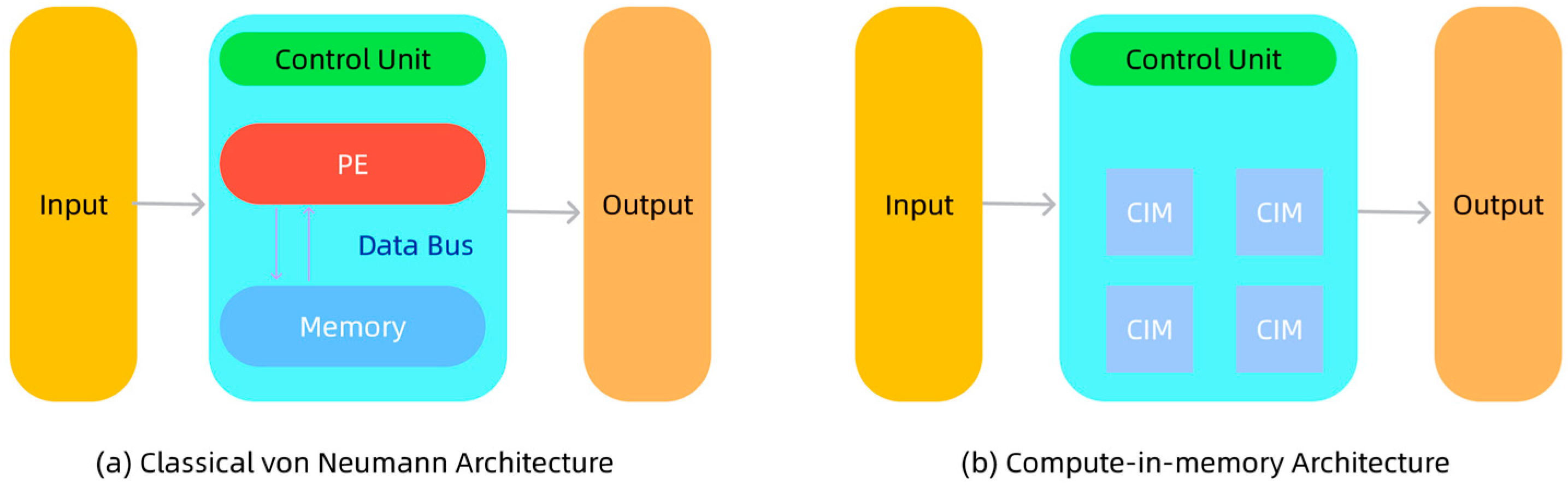

1.2. Computing-in-Memory

2. Purely Binary Convolution CIM Design

2.1. Overview

2.2. Computing Framework

2.3. Functions of the Accelerator Modules

Algorithm 1 SIMD Computing

    RESET_HW_STATE()
    (OPCODE, K_SIZE, IN_HW_LOG, IN_C_LOG, OUT_C_LOG, S1, S2, W_BASE, BN_BASE) = DECODE(iInstruction)
    OUT_H = (1 << IN_HW_LOG) / S1
    OUT_W = (1 << IN_HW_LOG) / S1
    for out_pixel_idx = 0 to (OUT_H * OUT_W) - 1 do
        for out_ch_group_idx = 0 to ((1 << OUT_C_LOG) / 64) - 1 do
            ACCUMULATORS = ZERO_ACCUMULATORS()
            ACCUMULATORS = ACCUMULATORS + CALCULATE_CONV_SUM(
                out_pixel_idx, out_ch_group_idx, K_SIZE, (1 << IN_C_LOG), W_BASE, S1, S2)
            if OPCODE == 2'b01 then
                ACCUMULATORS = ACCUMULATORS + CALCULATE_RES_SUM(
                    out_pixel_idx, out_ch_group_idx, W_BASE, (1 << IN_C_LOG), (1 << OUT_C_LOG), S2)
            end if
            BN_PARAMS = FETCH_BN_PARAMS(BN_BASE, out_ch_group_idx)
            BINARIZED_OUTPUT = SIMD_BINARIZE(ACCUMULATORS, BN_PARAMS)
            STORE_OUTPUT_FEATURES(out_pixel_idx, out_ch_group_idx, BINARIZED_OUTPUT)
        end for
    end for
    SWAP_IO_BUFFERS()
    SIGNAL_CALCULATION_COMPLETE()
    RETURN PROCESSED_DATA_REFERENCE()
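The loop structure of Algorithm 1 can be illustrated with a small software model. The sketch below is not the accelerator's implementation: the bodies of CALCULATE_CONV_SUM, CALCULATE_RES_SUM and SIMD_BINARIZE are not given in Algorithm 1, so it assumes a conventional XNOR-popcount binary convolution, batch normalization folded into one integer threshold per output channel, an identity shortcut for the residual term, and padding pixels whose bits are all 0 (encoding -1). All Python names (`simd_layer`, `xnor_popcount_sum`, `thresholds`, `residual`) are hypothetical.

```python
GROUP = 64  # output channels handled per SIMD step, as in Algorithm 1


def popcount(value):
    """Number of set bits in a non-negative integer."""
    return bin(value).count("1")


def xnor_popcount_sum(a, b, nbits):
    """Dot product of two +/-1 vectors packed as bits (1 -> +1, 0 -> -1)."""
    mask = (1 << nbits) - 1
    matches = popcount(~(a ^ b) & mask)  # XNOR, then count agreeing positions
    return 2 * matches - nbits


def simd_layer(x, w, thresholds, c_in, k=3, stride=1, residual=None):
    """Software model of the two nested loops in Algorithm 1.

    x[y][x]       : int whose bit c is input channel c of that pixel
    w[oc][ky][kx] : int holding the c_in weight bits of one kernel tap
    thresholds[oc]: assumed BN-folded threshold; output bit is acc >= threshold
    residual      : optional map shaped like the output (assumed identity shortcut)
    """
    height, width = len(x), len(x[0])
    c_out = len(w)
    pad = k // 2
    out_h, out_w = height // stride, width // stride
    out = [[0] * out_w for _ in range(out_h)]

    for pix in range(out_h * out_w):          # out_pixel_idx loop
        oy, ox = divmod(pix, out_w)
        for group in range(c_out // GROUP):   # out_ch_group_idx loop
            word = 0
            for lane in range(GROUP):         # parallel lanes in hardware
                oc = group * GROUP + lane
                acc = 0                       # ZERO_ACCUMULATORS
                for ky in range(k):
                    for kx in range(k):
                        iy = oy * stride + ky - pad
                        ix = ox * stride + kx - pad
                        inside = 0 <= iy < height and 0 <= ix < width
                        pixel = x[iy][ix] if inside else 0  # assumed padding
                        acc += xnor_popcount_sum(pixel, w[oc][ky][kx], c_in)
                if residual is not None:      # corresponds to OPCODE == 2'b01
                    acc += 1 if (residual[oy][ox] >> oc) & 1 else -1
                if acc >= thresholds[oc]:     # assumed form of SIMD_BINARIZE
                    word |= 1 << lane
            out[oy][ox] |= word << (group * GROUP)
    return out
```

In this model the innermost `lane` loop stands in for the 64 accumulators that the hardware updates at once; a call such as `simd_layer(x, w, [0] * 64, c_in=8)` returns one 64-bit output word per pixel.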

3. FPGA-Based Accelerator Validation

3.1. Simulation of the Purely Binary Accelerator

3.2. FPGA Prototyping and On-Board Verification

4. Experimental Results and Conclusions

4.1. Performance

4.2. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Chang, Z.; Liu, S.; Xiong, X.; Cai, Z.; Tu, G. A survey of recent advances in edge-computing-powered artificial intelligence of things. IEEE Internet Things J. 2021, 8, 13849–13875.

2. Garg, T.; Khullar, S. Big data analytics: Applications, challenges & future directions. In Proceedings of the 2020 8th International Conference on Reliability, Infocom Technologies and Optimization (Trends and Future Directions) (ICRITO), Noida, India, 4–5 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 923–928.

3. Ali, M.; Roy, S.; Saxena, U.; Sharma, T.; Raghunathan, A.; Roy, K. Compute-in-memory technologies and architectures for deep learning workloads. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 2022, 30, 1615–1630.

4. Liu, S.; Radway, R.M.; Wang, X.; Kwon, J.; Trippel, C.; Levis, P.; Mitra, S.; Wong, H.-S.P. Future of Memory: Massive, Diverse, Tightly Integrated with Compute - from Device to Software. In Proceedings of the 2024 IEEE International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 7–12 November 2024; IEEE: New York, NY, USA, 2024; pp. 1–4.

5. Zou, X.; Xu, S.; Chen, X.; Yan, L.; Han, Y. Breaking the von Neumann bottleneck: Architecture-level processing-in-memory technology. Sci. China Inf. Sci. 2021, 64, 160404.

6. Sun, W.; Yue, J.; He, Y.; Huang, Z.; Wang, J.; Jia, W.; Li, Y.; Lei, L.; Jia, H.; Liu, Y. A survey of computing-in-memory processor: From circuit to application. IEEE Open J. Solid-State Circuits Soc. 2023, 4, 25–42.

7. Jhang, C.J.; Xue, C.X.; Hung, J.M.; Chang, F.C.; Chang, M.F. Challenges and trends of SRAM-based computing-in-memory for AI edge devices. IEEE Trans. Circuits Syst. I Regul. Pap. 2021, 68, 1773–1786.

8. Liang, T.; Glossner, J.; Wang, L.; Shi, S.; Zhang, X. Pruning and quantization for deep neural network acceleration: A survey. Neurocomputing 2021, 461, 370–403.

9. Qin, H.; Gong, R.; Liu, X.; Bai, X.; Song, J.; Sebe, N. Binary neural networks: A survey. Pattern Recognit. 2020, 105, 107281.

10. Zou, Q.; Wang, Y.; Wang, Q.; Zhao, Y.; Li, Q. Deep learning-based gait recognition using smartphones in the wild. IEEE Trans. Inf. Forensics Secur. 2020, 15, 3197–3212.

11. Tanigawa, T.; Noda, M.; Ishiura, N. Efficient FPGA implementation of binarized neural networks based on generalized parallel counter tree. In Proceedings of the Workshop on Synthesis and System Integration of Mixed Information Technologies (SASIMI), Taipei, China, 11–12 March 2024; pp. 32–37.

12. Vatsavai, S.S.; Karempudi, V.S.P.; Thakkar, I. An optical XNOR-bitcount based accelerator for efficient inference of binary neural networks. In Proceedings of the 2023 24th International Symposium on Quality Electronic Design (ISQED), San Francisco, CA, USA, 5–7 April 2023; IEEE: New York, NY, USA, 2023; pp. 1–8.

13. Sun, J.; Houshmand, P.; Verhelst, M. Analog or digital in-memory computing? Benchmarking through quantitative modeling. In Proceedings of the 2023 IEEE/ACM International Conference on Computer Aided Design (ICCAD), San Francisco, CA, USA, 29 October–2 November 2023; IEEE: New York, NY, USA, 2023; pp. 1–9.

14. Perri, S.; Zambelli, C.; Ielmini, D.; Silvano, C. Digital In-Memory Computing to Accelerate Deep Learning Inference on the Edge. In Proceedings of the 2024 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW), San Francisco, CA, USA, 27–31 May 2024; IEEE: New York, NY, USA, 2024; pp. 130–133.

15. Houshmand, P.; Cosemans, S.; Mei, L.; Papistas, I.; Bhattacharjee, D.; Debacker, P.; Mallik, A.; Verkest, D.; Verhelst, M. Opportunities and limitations of emerging analog in-memory compute DNN architectures. In Proceedings of the 2020 IEEE International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 12–18 December 2020; IEEE: New York, NY, USA, 2020; pp. 29.1.1–29.1.4.

16. Channamadhavuni, S.; Thijssen, S.; Jha, S.K.; Ewetz, R. Accelerating AI applications using analog in-memory computing: Challenges and opportunities. In Proceedings of the 2021 Great Lakes Symposium on VLSI, Virtual, 22–25 June 2021; pp. 379–384.

17. Qin, R.; Yan, Z.; Zeng, D.; Jia, Z.; Liu, D.; Liu, J.; Abbasi, A.; Zheng, Z.; Cao, N.; Ni, K.; et al. Robust implementation of retrieval-augmented generation on edge-based computing-in-memory architectures. In Proceedings of the 43rd IEEE/ACM International Conference on Computer-Aided Design, New York, NY, USA, 27–31 October 2024; pp. 1–9.

18. Lin, Z.; Tong, Z.; Zhang, J.; Wang, F.; Xu, T.; Zhao, Y.; Wu, X.; Peng, C.; Lu, W.; Zhao, Q.; et al. A review on SRAM-based computing in-memory: Circuits, functions, and applications. J. Semicond. 2022, 43, 031401.

19. Sun, X.; Liu, R.; Peng, X.; Yu, S. Computing-in-memory with SRAM and RRAM for binary neural networks. In Proceedings of the 2018 14th IEEE International Conference on Solid-State and Integrated Circuit Technology (ICSICT), Qingdao, China, 31 October–3 November 2018; IEEE: New York, NY, USA, 2018; pp. 1–4.

20. Mourya, M.V.; Bansal, H.; Verma, D.; Suri, M. RRAM IMC based Efficient Analog Carry Propagation and Multi-bit MVM. In Proceedings of the 2024 8th IEEE Electron Devices Technology & Manufacturing Conference (EDTM), Bangalore, India, 3–6 March 2024; IEEE: New York, NY, USA, 2024; pp. 1–3.

21. Antolini, A.; Lico, A.; Zavalloni, F.; Scarselli, E.F.; Gnudi, A.; Torres, M.L.; Canegallo, R.; Pasotti, M. A readout scheme for PCM-based analog in-memory computing with drift compensation through reference conductance tracking. IEEE Open J. Solid-State Circuits Soc. 2024, 4, 69–82.

22. Huang, L.; Qin, J.; Zhou, Y.; Zhu, F.; Liu, L.; Shao, L. Normalization techniques in training DNNs: Methodology, analysis and application. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 10173–10196.

23. Jooq, M.K.Q.; Behbahani, F.; Moaiyeri, M.H. Ultra-efficient fully programmable membership function generator based on independent double-gate FinFET technology. Int. J. Circuit Theory Appl. 2023, 51, 4485–4502.

24. Qin, H.; Gong, R.; Liu, X.; Shen, M.; Wei, Z.; Yu, F.; Song, J. Forward and backward information retention for accurate binary neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 2250–2259.

25. Liu, Y.; Zhang, H.; Sun, Z.; Duan, F.; Ma, Y.; Lu, W.; Caiafa, C.F.; Solé-Casals, J. HSBNN: A High-Scalable Bayesian Neural Networks Accelerator Based on Field Programmable Gate Arrays (FPGA). Cogn. Comput. 2025, 17, 100.

26. Cen, R.; Zhang, D.; Kang, Y.; Wang, D. HA-BNN: Hardware-Aware Binary Neural Networks for Efficient Inference. In Proceedings of the 2024 IEEE 17th International Conference on Signal Processing (ICSP), Suzhou, China, 28–31 October 2024; IEEE: New York, NY, USA, 2024; pp. 176–180.

27. Li, Z.; Bilavarn, S. Partial Reconfiguration for Energy-Efficient Inference on FPGA: A Case Study with ResNet-18. In Proceedings of the 2024 27th Euromicro Conference on Digital System Design (DSD), Paris, France, 28–30 August 2024; IEEE: New York, NY, USA, 2024; pp. 291–297.

28. Yang, A. Research of FPGA-based neural network accelerators. In Proceedings of the IET Conference Proceedings CP895, Stevenage, UK, 11 October 2024; The Institution of Engineering and Technology: Stevenage, UK, 2024; Volume 2024, pp. 117–122.

| Paper | [25] | [26] | [27] | [28] | This Paper |
|---|---|---|---|---|---|
| Model | BNN | BNN | ResNet-18 | Zynq Net | ResNet-14 |
| FPGA | ZYBO Z7-20 | PYNQ-Z1 | PYNQ-Z2 | Virtex-7 VC709 | PYNQ-Z2 |
| LUTs | 49,387 | 49,600 | 74,482 | 338,922 | 25,186 |
| BRAM (Mb) | 2.46 | 5.04 | 4.92 | 49.6 | 68 |
| Power (W) | 1.8 | 2.15 | 2.85 | 11.5 | 1.65 |
| Frequency (MHz) | 100 | 100 | 100 | 125 | 50 |
| FPS/MHz | 118.3 | 42.0 | 0.04 | 0.125 | 6.83 |
| GOPS/W | 166 | 70 | 7 | N/A | 66.9 |
| Bit width | 32 | 1 | 8 | 16 | 1 |
| Residual/BN | No/No | Partial/Yes | Yes/Yes | N/A | Yes/Yes |
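The normalized FPS/MHz figures in the comparison table can be turned back into absolute throughput and energy per frame with simple arithmetic. The sketch below assumes that FPS/MHz means frames per second divided by the clock frequency in MHz, and that the reported power is the average power during inference; neither definition is stated in the table. The function name `derived_metrics` is hypothetical. Because the columns use different models, the derived numbers are not a like-for-like comparison.

```python
# Values copied from the comparison table: (FPS/MHz, frequency in MHz, power in W).
TABLE = {
    "[25]": (118.3, 100, 1.8),
    "[26]": (42.0, 100, 2.15),
    "[27]": (0.04, 100, 2.85),
    "[28]": (0.125, 125, 11.5),
    "This Paper": (6.83, 50, 1.65),
}


def derived_metrics(fps_per_mhz, freq_mhz, power_w):
    """Return (frames per second, energy per frame in millijoules).

    Assumes FPS/MHz = FPS / frequency and that power_w is average power.
    """
    fps = fps_per_mhz * freq_mhz
    energy_mj = 1000.0 * power_w / fps
    return fps, energy_mj


if __name__ == "__main__":
    for name, row in TABLE.items():
        fps, energy_mj = derived_metrics(*row)
        print(f"{name:>10}: {fps:10.2f} FPS, {energy_mj:10.3f} mJ/frame")
```

Under these assumptions the "This Paper" column corresponds to 6.83 × 50 ≈ 341.5 frames per second at 50 MHz.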

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Cui, W.; Zheng, Z.; Li, P.; Li, M.; Liu, Y.; Chi, Y. A Binary Convolution Accelerator Based on Compute-in-Memory. Electronics 2026, 15, 117. https://doi.org/10.3390/electronics15010117
