A Source-to-Source Compiler to Enable Hybrid Scheduling for High-Level Synthesis

Abstract

1. Introduction

1. We propose the Pseudo-Cycle-Accurate (PCA) model, which achieves source-level dynamism to facilitate hybrid scheduling for HLS.

2. We propose a novel intermediate representation (IR), termed the Execution Graph (EG), which extends the Gated SSA (GSSA) [3] representation and serves as the foundation for transforming a plain loop kernel into its equivalent PCA model.

3. We propose an automated framework, with its key algorithms, that transforms a plain design into a PCA model and is fully compatible with existing HLS tools that adopt a static scheduling strategy.

4. We evaluated the framework on a set of benchmarks. The results demonstrate significant performance improvements over a commercial static-scheduling-based HLS tool (Vitis HLS), as well as better resource–performance tradeoffs than other state-of-the-art, open-source, dynamic- and hybrid-scheduling-based tools.

2. Preliminaries

2.1. HLS and Scheduling in HLS

2.2. Static Scheduling

2.3. Dynamic Scheduling

2.4. Hybrid Scheduling

3. Related Work

3.1. Dynamic-Scheduling-Based HLS

3.2. Hybrid-Scheduling-Based HLS

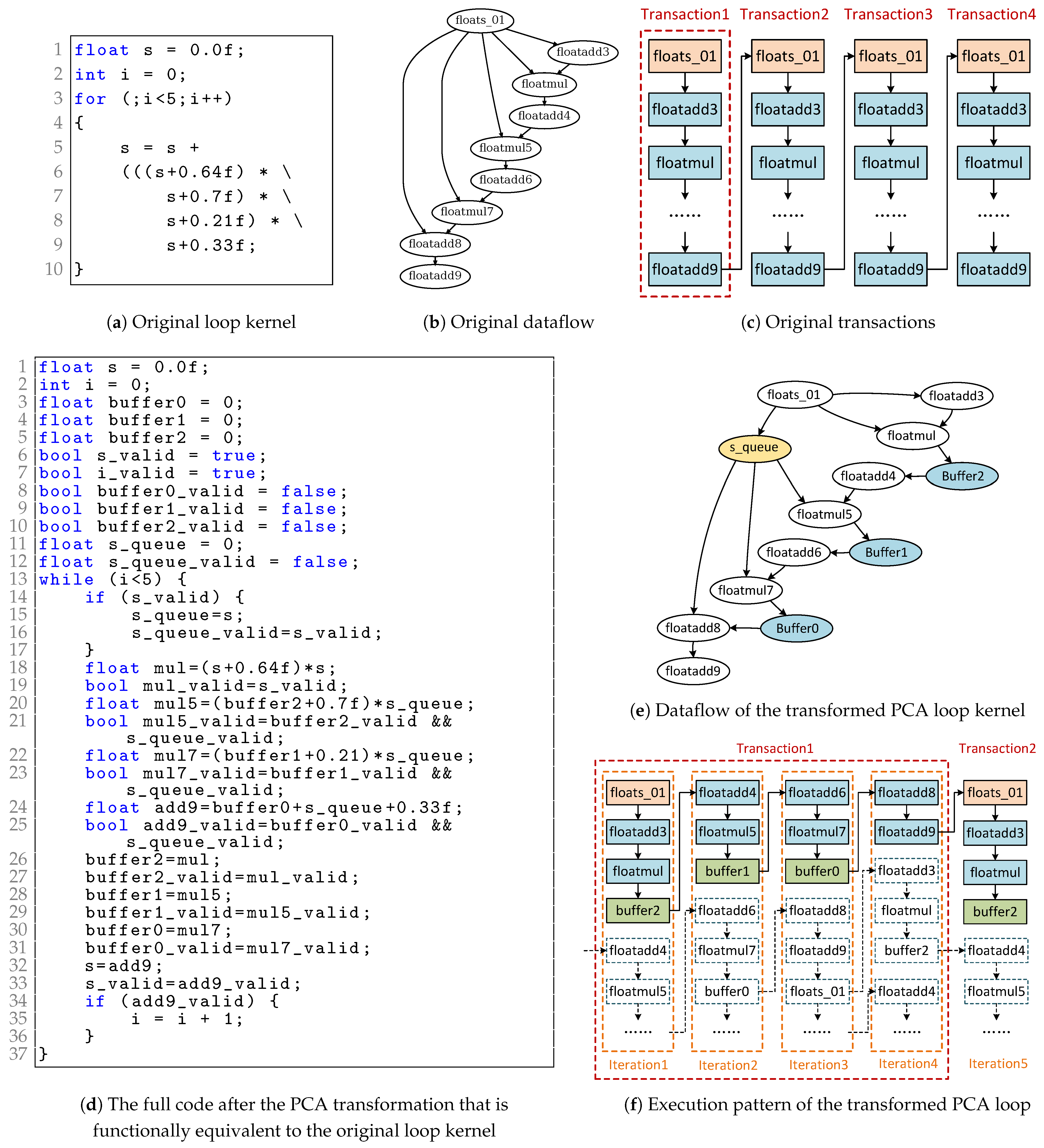

4. PCA Model

4.1. Concept of Virtual Cycle and PCA Model

4.2. PCA Model for Regular Loop

4.2.1. Behavior

4.2.2. Scheduling

4.3. PCA Model for Loops with Control Flows

4.3.1. Behavior

4.3.2. Scheduling

4.4. PCA Transformation

4.4.1. Buffer Insertion

4.4.2. Queue Insertion

| Algorithm 1 Queue node insertion algorithm. The input is the dataflow graph E, with back edges omitted, after buffer insertion. The output is a list of edges along which a queue node is to be inserted. Here D(n) is the accumulated delay of node n, and R is the result edge set. |

| Require: R ▹ The edge set of the given E into which queue nodes should be inserted |

| Initialize R ← ∅ ▹ The result edge set |

| Initialize D ← {} ▹ The accumulated delay for each node |

| for each node n in E do ▹ Traverse the nodes in the EG following the topological order |

| if n is a recurrence variable then |

| D(n) ← 0 ▹ A recurrence variable has zero accumulated delay |

| else if n is a buffer node then |

| D(n) ← D(p) + 1, where p is the input of n ▹ A buffer node increases the accumulated delay by one cycle |

| else |

| D(n) ← max of D(p) over all inputs p of n ▹ Regular nodes take the max delay of their inputs |

| for each input p of n do |

| if D(p) < D(n) then |

| R ← R ∪ {(p, n)} ▹ Insert a queue node on the edge with the shorter-delay input |

| end if |

| end for |

| end if |

| end for |
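The traversal in Algorithm 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the dictionary encoding of the graph, the names `queue_insertion_edges`, `inputs`, and `kind`, and the toy graph are all hypothetical, and a buffer node is assumed to have exactly one input.

```python
from graphlib import TopologicalSorter


def queue_insertion_edges(inputs, kind):
    """Return (edges needing a queue node, accumulated delay per node).

    inputs: dict mapping each node to the list of its input nodes, with
            loop back edges already removed (hypothetical encoding).
    kind:   dict mapping each node to "recurrence", "buffer" or "regular".
    """
    result = []   # edges (input, node) that should receive a queue node
    delay = {}    # accumulated delay, in cycles, for each node

    # TopologicalSorter takes node -> predecessors, so inputs are visited first.
    for node in TopologicalSorter(inputs).static_order():
        preds = inputs.get(node, [])
        if kind[node] == "recurrence":
            # Recurrence variables start with zero accumulated delay.
            delay[node] = 0
        elif kind[node] == "buffer":
            # Assumption: a buffer node has exactly one input.
            delay[node] = delay[preds[0]] + 1
        else:
            # Regular nodes take the maximum delay of their inputs.
            delay[node] = max((delay[p] for p in preds), default=0)
            for p in preds:
                if delay[p] < delay[node]:
                    # The shorter-delay input needs a queue node on its edge.
                    result.append((p, node))
    return result, delay


# Toy graph: t = add(buffer(load(i)), s), with i and s as recurrence variables.
inputs = {"i": [], "s": [], "x": ["i"], "b": ["x"], "t": ["b", "s"]}
kind = {"i": "recurrence", "s": "recurrence", "x": "regular",
        "b": "buffer", "t": "regular"}
edges, delay = queue_insertion_edges(inputs, kind)
print(edges)  # [('s', 't')]
```

In the toy graph, the path through the buffer reaches `t` one cycle later than the direct edge from `s`, so only the edge `(s, t)` is reported.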

4.4.3. Handling Complex Control Flow

4.4.4. Recurrence Synchronization

4.4.5. Communications Among SCCs

4.4.6. Control Logic Generation

5. Proposed Framework

5.1. Overall Flow

5.2. Execution Graph Representation

5.2.1. GSSA

- The γ node acts as a multiplexer that selects the value from one of its data inputs according to the value of its control inputs. It is a unified representation of the `br`-`phi` pairs and the `select` node in LLVM IR, which introduce dynamic execution through control flow (normally from if-else and switch structures) and dataflow (normally from the ternary operation), respectively.

- The μ node acts as the recurrence point that propagates values across loop iterations. Each μ node represents one `phi` node in the loop header of an LLVM loop. It is initialized with a value from outside the loop and then updated at each iteration with a value computed within the loop.

- The α node represents the memory access operation in the LLVM IR, denoted as α(a, i, v), which means that the array a's element a[i] is replaced with v. This node allows arrays to be considered as atomic objects, so memory aliasing is directly revealed in the graph.
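The three node types above can be illustrated with a small executable model. This is a sketch under assumptions: the function names `gamma` and `alpha`, the kernel `run_kernel`, and its threshold logic are hypothetical and chosen only to show where a multiplexer, a loop-carried recurrence, and an atomic array update appear in a loop.

```python
def gamma(cond, if_true, if_false):
    """Multiplexer node: pick one data input according to the control input."""
    return if_true if cond else if_false


def alpha(array, index, value):
    """Array-update node: return a new array with array[index] replaced by value.

    Returning a fresh list models the array as one atomic value in the graph.
    """
    updated = list(array)
    updated[index] = value
    return updated


def run_kernel(a, threshold):
    """Conditional running sum written in a gated, single-assignment style."""
    # Recurrence points: initialized outside the loop, updated every iteration.
    s = 0
    out = [0] * len(a)
    for i in range(len(a)):
        x = a[i]
        # Control flow (if x > threshold) becomes a data selection.
        s_next = gamma(x > threshold, s + x, s)
        # The store out[i] = s_next becomes a value-producing array update.
        out_next = alpha(out, i, s_next)
        # Loop-carried update of the recurrence variables.
        s, out = s_next, out_next
    return s, out


total, trace = run_kernel([1, 5, 2, 7], threshold=3)
print(total, trace)  # 12 [0, 5, 5, 12]
```

Only the elements 5 and 7 exceed the threshold, so the running sum changes in the second and fourth iterations.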

5.2.2. EG

- The buffer node holds a valid input value and withholds it from propagation until the next iteration, which increases the recurrence distance of a node.

- The queue node retains a valid input value in order to synchronize the execution of multi-input nodes whose inputs have different latencies. Once its input is valid, it propagates that value and keeps it valid until its input node presents the next valid value, at which point its output is updated with the new value.

- The synchronizer node synchronizes different nodes in an SCC: it commits all of them once all of their input nodes are valid. Its validity also indicates whether a transaction of the original loop is completed.

- The channel node acts as a handshaking channel between two communicating SCCs. A channel node should be inserted between any def–use pair whose def node and use node belong to two different SCCs. It not only propagates the validity from the def node but also monitors the readiness of the use node. If the channel node indicates invalid, the destination SCC is stalled. If it indicates not-ready, backpressure stalls the source SCC.
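The queue and handshaking behaviors described above can be modeled in software as follows. This is a behavioral sketch under assumptions, not the generated hardware: the class names `QueueNode` and `Channel`, their methods, and the choice of a bounded FIFO for the channel are hypothetical.

```python
from collections import deque


class QueueNode:
    """Keeps the last valid input visible until the next valid input arrives."""

    def __init__(self):
        self.valid = False
        self.value = None

    def step(self, in_valid, in_value):
        # A new valid input replaces the held value; otherwise hold it.
        if in_valid:
            self.valid, self.value = True, in_value
        return self.valid, self.value


class Channel:
    """Valid/ready channel between a source SCC and a destination SCC.

    Modeled as a bounded FIFO; the depth parameter is an assumption.
    """

    def __init__(self, depth):
        self.depth = depth
        self.fifo = deque()

    @property
    def valid(self):
        # Seen by the destination SCC: data is available.
        return len(self.fifo) > 0

    @property
    def ready(self):
        # Seen by the source SCC: there is room for another value.
        return len(self.fifo) < self.depth

    def push(self, value):
        """Source side: returns False when backpressure stalls the source."""
        if not self.ready:
            return False
        self.fifo.append(value)
        return True

    def pop(self):
        """Destination side: returns (False, None) when the destination stalls."""
        if not self.valid:
            return False, None
        return True, self.fifo.popleft()


q = QueueNode()
held = [q.step(False, None), q.step(True, 7), q.step(False, None), q.step(True, 9)]

ch = Channel(depth=2)
pushed = [ch.push(1), ch.push(2), ch.push(3)]   # third push is backpressured
popped = [ch.pop(), ch.pop(), ch.pop()]         # third pop finds no valid data
```

With a depth of 2, the third `push` returns `False` (the source would stall), and the third `pop` returns `(False, None)` (the destination would stall).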

5.3. Handling Inter-SCC Handshakings

5.3.1. The Node

5.3.2. Handshaking Between SCCs

5.3.3. The FIFO Sizing and Deadlock Avoidance

5.4. Handling Memory Access

5.5. Frequency Degradation and Mitigation Strategies

6. Evaluation

6.1. Experiment Setup

6.2. Benchmarks

6.3. Comparison with Baseline Tools

6.3.1. Comparison with SS

6.3.2. Comparison with DS and DASS

7. Conclusions and Discussion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| HLS | High-Level Synthesis |
| PCA | Pseudo-Cycle-Accurate |
| II | Initiation Interval |
| IR | Intermediate Representation |
| EG | Execution Graph |
| GSSA | Gated SSA |
| HDL | Hardware Description Language |
| CDFG | Control Data Flow Graph |
| CFG | Control Flow Graph |
| DFG | Data Flow Graph |
| LLVM | Low-Level Virtual Machine |
| MLIR | Multi-Level Intermediate Representation |
| BB | Basic Block |
| FSM | Finite State Machine |
| DAG | Directed Acyclic Graph |
| SCC | Strongly Connected Component |

References

- AMD Xilinx. Vitis High-Level Synthesis User Guide (UG1399). 2025. Available online: https://docs.xilinx.com/r/en-US/ug1399-vitis-hls (accessed on 19 November 2025).

- Rokicki, S.; Pala, D.; Paturel, J.; Sentieys, O. What you simulate is what you synthesize: Designing a processor core from C++ specifications. In Proceedings of the 2019 IEEE/ACM International Conference on Computer-Aided Design (ICCAD), Westminster, CO, USA, 4–7 November 2019; pp. 1–8.

- Tu, P.; Padua, D. Gated SSA-based demand-driven symbolic analysis for parallelizing compilers. In Proceedings of the 9th International Conference on Supercomputing, Barcelona, Spain, 3–7 July 1995; pp. 414–423.

- Cong, J.; Liu, B.; Neuendorffer, S.; Noguera, J.; Vissers, K.; Zhang, Z. High-level synthesis for FPGAs: From prototyping to deployment. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2011, 30, 473–491.

- LLVM. The LLVM Compiler Infrastructure. 2025. Available online: https://www.llvm.org/ (accessed on 19 November 2025).

- Micheli, G.D. Hardware/Software Co-Design of Run-Time Schedulers for Real-Time Systems. Des. Autom. Embed. Syst. 2001, 6, 89.

- Zhang, Z.; Liu, B. SDC-based modulo scheduling for pipeline synthesis. In Proceedings of the 2013 IEEE/ACM International Conference on Computer-Aided Design (ICCAD), San Jose, CA, USA, 4–7 November 2013; pp. 211–218.

- Canis, A.; Brown, S.D.; Anderson, J.H. Modulo SDC scheduling with recurrence minimization in high-level synthesis. In Proceedings of the 2014 24th International Conference on Field Programmable Logic and Applications (FPL), Munich, Germany, 2–4 September 2014; pp. 1–8.

- Rau, B.R. Iterative modulo scheduling. Int. J. Parallel Program. 1996, 24, 3–64.

- Carloni, L.P.; McMillan, K.L.; Sangiovanni-Vincentelli, A.L. Theory of latency-insensitive design. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2001, 20, 1059–1076.

- Josipović, L.; Marmet, A.; Guerrieri, A.; Ienne, P. Resource sharing in dataflow circuits. ACM Trans. Reconfigurable Technol. Syst. 2023, 16, 1–27.

- Xu, J.; Murphy, E.; Cortadella, J.; Josipović, L. Eliminating Excessive Dynamism of Dataflow Circuits Using Model Checking. In Proceedings of the 2023 ACM/SIGDA International Symposium on Field Programmable Gate Arrays, Monterey, CA, USA, 12–14 February 2023; pp. 27–37.

- Cheng, J.; Josipović, L.; Constantinides, G.A.; Ienne, P.; Wickerson, J. Combining dynamic & static scheduling in high-level synthesis. In Proceedings of the 2020 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, Seaside, CA, USA, 23–25 February 2020; pp. 288–298.

- Cheng, J.; Josipović, L.; Constantinides, G.A.; Ienne, P.; Wickerson, J. DASS: Combining Dynamic & Static Scheduling in High-Level Synthesis. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2021, 41, 628–641.

- Cheng, J.; Wickerson, J.; Constantinides, G.A. Finding and finessing static islands in dynamically scheduled circuits. In Proceedings of the 2022 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, Virtual, 27 February–1 March 2022; pp. 89–100.

- Szafarczyk, R.; Nabi, S.W.; Vanderbauwhede, W. Compiler Discovered Dynamic Scheduling of Irregular Code in High-Level Synthesis. In Proceedings of the 2023 33rd International Conference on Field-Programmable Logic and Applications (FPL), Hamburg, Germany, 30 August–1 September 2023; pp. 1–9.

- Cortadella, J.; Kishinevsky, M. Synchronous elastic circuits with early evaluation and token counterflow. In Proceedings of the 44th Annual Design Automation Conference, San Diego, CA, USA, 4–8 June 2007; pp. 416–419.

- Edwards, S.A.; Townsend, R.; Barker, M.; Kim, M.A. Compositional dataflow circuits. ACM Trans. Embed. Comput. Syst. (TECS) 2019, 18, 1–27.

- Cortadella, J.; Kishinevsky, M.; Grundmann, B. Synthesis of synchronous elastic architectures. In Proceedings of the 43rd Annual Design Automation Conference, San Francisco, CA, USA, 24–28 July 2006; pp. 657–662.

- Venkataramani, G.; Budiu, M.; Chelcea, T.; Goldstein, S.C. C to Asynchronous Dataflow Circuits: An End-to-End Toolflow. 2004. Available online: https://kilthub.cmu.edu/articles/C_to_Asynchronous_Dataflow_Circuits_An_End-to-End_Toolflow/6603986/files/12094370.pdf (accessed on 19 November 2025).

- Hoover, G.; Brewer, F. Synthesizing synchronous elastic flow networks. In Proceedings of the Conference on Design, Automation and Test in Europe, Grenoble, France, 10–14 March 2008; pp. 306–311.

- Chatterjee, S.; Kishinevsky, M.; Ogras, U.Y. xMAS: Quick formal modeling of communication fabrics to enable verification. IEEE Des. Test Comput. 2012, 29, 80–88.

- Putnam, A.; Bennett, D.; Dellinger, E.; Mason, J.; Sundararajan, P.; Eggers, S. CHiMPS: A C-level compilation flow for hybrid CPU-FPGA architectures. In Proceedings of the 2008 International Conference on Field Programmable Logic and Applications, Heidelberg, Germany, 8–10 September 2008; pp. 173–178.

- Townsend, R.; Kim, M.A.; Edwards, S.A. From functional programs to pipelined dataflow circuits. In Proceedings of the 26th International Conference on Compiler Construction, Saarbrücken, Germany, 26 March–3 April 2017; pp. 76–86.

- Josipović, L.; Brisk, P.; Ienne, P. From C to elastic circuits. In Proceedings of the 2017 51st Asilomar Conference on Signals, Systems, and Computers, Pacific Grove, CA, USA, 29 October–1 November 2017; pp. 121–125.

- Josipović, L.; Guerrieri, A.; Ienne, P. From C/C++ code to high-performance dataflow circuits. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2021, 41, 2142–2155.

- Liu, J.; Rizzi, C.; Josipović, L. Load-store queue sizing for efficient dataflow circuits. In Proceedings of the 2022 International Conference on Field-Programmable Technology (ICFPT), Hong Kong, China, 5–9 December 2022; pp. 1–9.

- Wang, H.; Rizzi, C.; Josipović, L. MapBuf: Simultaneous technology mapping and buffer insertion for HLS performance optimization. In Proceedings of the 2023 IEEE/ACM International Conference on Computer Aided Design (ICCAD), San Francisco, CA, USA, 29 October–2 November 2023; pp. 1–9.

- Alle, M.; Morvan, A.; Derrien, S. Runtime dependency analysis for loop pipelining in high-level synthesis. In Proceedings of the 50th Annual Design Automation Conference, Austin, TX, USA, 2–6 June 2013; pp. 1–10.

- Liu, J.; Bayliss, S.; Constantinides, G.A. Offline synthesis of online dependence testing: Parametric loop pipelining for HLS. In Proceedings of the 2015 IEEE 23rd Annual International Symposium on Field-Programmable Custom Computing Machines, Boston, MA, USA, 1–4 May 2015; pp. 159–162.

- Tan, M.; Liu, G.; Zhao, R.; Dai, S.; Zhang, Z. ElasticFlow: A complexity-effective approach for pipelining irregular loop nests. In Proceedings of the 2015 IEEE/ACM International Conference on Computer-Aided Design (ICCAD), Austin, TX, USA, 2–6 November 2015; pp. 78–85.

- Dai, S.; Zhao, R.; Liu, G.; Srinath, S.; Gupta, U.; Batten, C.; Zhang, Z. Dynamic hazard resolution for pipelining irregular loops in high-level synthesis. In Proceedings of the 2017 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, Monterey, CA, USA, 22–24 February 2017; pp. 189–194.

- Derrien, S.; Marty, T.; Rokicki, S.; Yuki, T. Toward speculative loop pipelining for high-level synthesis. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2020, 39, 4229–4239.

- Gorius, J.M.; Rokicki, S.; Derrien, S. SpecHLS: Speculative accelerator design using high-level synthesis. IEEE Micro 2022, 42, 99–107.

- Gorius, J.M.; Rokicki, S.; Derrien, S. A Unified Memory Dependency Framework for Speculative High-Level Synthesis. In Proceedings of the 33rd ACM SIGPLAN International Conference on Compiler Construction, Edinburgh, UK, 2–3 March 2024; pp. 13–25.

- She, Y.; Liu, J.; Huang, Y.; Cheung, R.C.; Yan, H. A Speculative Loop Pipeline Framework with Accurate Path Modeling for High-Level Synthesis. ACM Trans. Reconfigurable Technol. Syst. 2025, 18, 1–33.

- Carloni, L.P. From latency-insensitive design to communication-based system-level design. Proc. IEEE 2015, 103, 2133–2151.

- Josipović, L.; Sheikhha, S.; Guerrieri, A.; Ienne, P.; Cortadella, J. Buffer placement and sizing for high-performance dataflow circuits. ACM Trans. Reconfigurable Technol. Syst. (TRETS) 2021, 15, 1–32.

- Rangan, R.; Vachharajani, N.; Vachharajani, M.; August, D.I. Decoupled software pipelining with the synchronization array. In Proceedings of the 13th International Conference on Parallel Architecture and Compilation Techniques—PACT 2004, Antibes Juan-les-Pins, France, 29 September–3 October 2004; pp. 177–188.

- CLANG. Clang: A C Language Family Frontend for LLVM. 2025. Available online: https://clang.llvm.org/ (accessed on 19 November 2025).

- LLVM. LLVM Loop Terminology (and Canonical Forms). Available online: https://llvm.org/docs/LoopTerminology.html (accessed on 19 November 2025).

- Ferrante, J.; Ottenstein, K.J.; Warren, J.D. The program dependence graph and its use in optimization. ACM Trans. Program. Lang. Syst. (TOPLAS) 1987, 9, 319–349.

- Li, P.; Agrawal, K.; Buhler, J.; Chamberlain, R.D. Deadlock avoidance for streaming computations with filtering. In Proceedings of the Twenty-Second Annual ACM Symposium on Parallelism in Algorithms and Architectures, New York, NY, USA, 13–15 June 2010; pp. 243–252.

- Josipović, L.; Brisk, P.; Ienne, P. An out-of-order load-store queue for spatial computing. ACM Trans. Embed. Comput. Syst. (TECS) 2017, 16, 1–19.

- Xilinx. Xilinx Vitis 2022.2, 2022. Available online: https://www.xilinx.com/support/download/index.html/content/xilinx/en/downloadNav/vitis/2022-2.html (accessed on 19 November 2025).

- Cheng, J. JianyiCheng: HLS Benchmarks First Release; 2019. Available online: https://zenodo.org/records/3561115 (accessed on 19 November 2025).

- Morvan, A.; Derrien, S.; Quinton, P. Polyhedral bubble insertion: A method to improve nested loop pipelining for high-level synthesis. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2013, 32, 339–352.

- PollyLLVM. Polly LLVM Framework for High-Level Loop and Data-Locality Optimizations. 2025. Available online: https://polly.llvm.org/ (accessed on 19 November 2025).

- Chethan, K.H.; Kapre, N. Hoplite-DSP: Harnessing the Xilinx DSP48 multiplexers to efficiently support NoCs on FPGAs. In Proceedings of the 2016 26th International Conference on Field Programmable Logic and Applications (FPL), Lausanne, Switzerland, 29 August–2 September 2016; pp. 1–10.

- Abdelfattah, M.S.; Betz, V. Networks-on-chip for FPGAs: Hard, soft or mixed? ACM Trans. Reconfigurable Technol. Syst. (TRETS) 2014, 7, 1–22.

- Lattner, C.; Amini, M.; Bondhugula, U.; Cohen, A.; Davis, A.; Pienaar, J.; Riddle, R.; Shpeisman, T.; Vasilache, N.; Zinenko, O. MLIR: Scaling compiler infrastructure for domain specific computation. In Proceedings of the 2021 IEEE/ACM International Symposium on Code Generation and Optimization (CGO), Virtual, 27 February–3 March 2021; pp. 2–14.

- CIRCT. Circuit IR Compilers and Tools. 2025. Available online: https://circt.llvm.org/ (accessed on 19 November 2025).

| Benchmarks | Description |
|---|---|
| sparseMatrix | conditional memory access |
| gSum, vecNormTrans, getTanh | imbalanced control flow with 2 recurrence paths |
| gSumIf | imbalanced control flow with 3 recurrence paths |
| histogram | RAW dependence with irregular memory access |
| BNNKernel | regular but complex memory data hazard |
| gesummv, covariance | regular kernels not amenable to dynamic scheduling |

| Benchmark | Work | F (MHz) | F/F_SS | Cycles | C/C_SS | WCT (µs) | T/T_SS | LUTs | FFs | DSPs | Area | A/A_SS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| sparseMatrix | SS | 257.5 | 1.00 | 21,501 | 1.00 | 83.5 | 1.00 | 138 | 320 | 3 | 818 | 1.00 |
| sparseMatrix | DS | 123.7 | 0.48 | 9426 | 0.44 | 76.2 | 0.91 | 1356 | 1544 | 6 | 3620 | 4.43 |
| sparseMatrix | DASS | 80.4 | 0.31 | 6778 | 0.32 | 84.3 | 1.01 | 985 | 1123 | 6 | 2828 | 3.46 |
| sparseMatrix | Ours | 227.6 | 0.88 | 15,113 | 0.70 | 66.4 | 0.80 | 329 | 774 | 8 | 2063 | 2.52 |
| gSum | SS | 230.7 | 1.00 | 24,115 | 1.00 | 104.5 | 1.00 | 931 | 1567 | 5 | 3098 | 1.00 |
| gSum | DS | 125.5 | 0.54 | 11,358 | 0.47 | 90.5 | 0.87 | 4238 | 3956 | 31 | 11,914 | 3.85 |
| gSum | DASS | 128.2 | 0.56 | 19,974 | 0.83 | 155.8 | 1.49 | 2315 | 2176 | 7 | 5331 | 1.72 |
| gSum | Ours | 195.8 | 0.85 | 13,804 | 0.57 | 70.5 | 0.67 | 981 | 1933 | 11 | 4234 | 1.37 |
| getTanh | SS | 279.4 | 1.00 | 52,004 | 1.00 | 186.1 | 1.00 | 516 | 827 | 5 | 1943 | 1.00 |
| getTanh | DS | 112.5 | 0.40 | 14,704 | 0.28 | 130.7 | 0.70 | 3256 | 2569 | 8 | 6785 | 3.49 |
| getTanh | DASS | 109.7 | 0.39 | 15,161 | 0.29 | 138.2 | 0.74 | 1578 | 2056 | 8 | 4594 | 2.36 |
| getTanh | Ours | 254.8 | 0.91 | 28,716 | 0.55 | 112.7 | 0.61 | 1098 | 1762 | 6 | 3580 | 1.84 |
| vecNormTrans | SS | 254.8 | 1.00 | 23,070 | 1.00 | 90.5 | 1.00 | 1798 | 3104 | 5 | 5502 | 1.00 |
| vecNormTrans | DS | 133.6 | 0.52 | 13,066 | 0.57 | 97.8 | 1.08 | 5114 | 7099 | 7 | 13,053 | 2.37 |
| vecNormTrans | DASS | 128.5 | 0.50 | 15,112 | 0.66 | 117.6 | 1.30 | 3956 | 5077 | 6 | 9753 | 1.77 |
| vecNormTrans | Ours | 237.1 | 0.93 | 17,451 | 0.76 | 73.6 | 0.81 | 3229 | 4166 | 5 | 7995 | 1.45 |
| histogram | SS | 285.1 | 1.00 | 15,006 | 1.00 | 52.6 | 1.00 | 282 | 539 | 2 | 1061 | 1.00 |
| histogram | DS | 76.4 | 0.27 | 3789 | 0.25 | 49.6 | 0.94 | 1833 | 4430 | 2 | 6503 | 6.13 |
| histogram | DASS | 72.8 | 0.26 | 3691 | 0.25 | 50.7 | 0.96 | 1814 | 4430 | 2 | 6484 | 6.11 |
| histogram | Ours | 266.4 | 0.93 | 12,095 | 0.81 | 45.4 | 0.86 | 677 | 1095 | 2 | 2012 | 1.90 |
| BNNKernel | SS | 257.5 | 1.00 | 11,407 | 1.00 | 44.3 | 1.00 | 299 | 385 | 3 | 1044 | 1.00 |
| BNNKernel | DS | 89.5 | 0.35 | 5003 | 0.44 | 55.9 | 1.26 | 1697 | 2006 | 9 | 4783 | 4.58 |
| BNNKernel | DASS | 89.5 | 0.35 | 7706 | 0.68 | 86.1 | 1.94 | 907 | 997 | 9 | 2984 | 2.86 |
| BNNKernel | Ours | 202.0 | 0.78 | 7716 | 0.68 | 38.2 | 0.86 | 531 | 708 | 7 | 2079 | 1.99 |
| gSumIf | SS | 238.3 | 1.00 | 24,116 | 1.00 | 101.2 | 1.00 | 1213 | 1972 | 7 | 4025 | 1.00 |
| gSumIf | DS | 139.5 | 0.59 | 23,283 | 0.97 | 166.9 | 1.65 | 6886 | 6412 | 60 | 20,498 | 5.09 |
| gSumIf | DASS | 122.7 | 0.51 | 17,497 | 0.73 | 142.6 | 1.41 | 3897 | 5019 | 12 | 10,356 | 2.57 |
| gSumIf | Ours | 207.6 | 0.87 | 14,553 | 0.60 | 70.1 | 0.69 | 2119 | 4103 | 13 | 7782 | 1.93 |
| gesummv | SS | 264.8 | 1.00 | 786,466 | 1.00 | 2970.5 | 1.00 | 898 | 1764 | 5 | 3262 | 1.00 |
| gesummv | DS | 112.5 | 0.42 | 1,211,445 | 1.54 | 10,768.4 | 3.63 | 1448 | 4976 | 7 | 7264 | 2.23 |
| gesummv | DASS | 96.3 | 0.36 | 1,116,050 | 1.42 | 11,589.3 | 3.90 | 1496 | 1586 | 5 | 3682 | 1.13 |
| gesummv | Ours | 264.8 | 1.00 | 786,466 | 1.00 | 2970.5 | 1.00 | 898 | 1764 | 5 | 3262 | 1.00 |
| covariance | SS | 265.3 | 1.00 | 241,651 | 1.00 | 911.0 | 1.00 | 1670 | 2688 | 5 | 4958 | 1.00 |
| covariance | DS | 129.6 | 0.49 | 354,910 | 1.47 | 2738.5 | 3.01 | 4219 | 4396 | 5 | 9215 | 1.86 |
| covariance | DASS | 124.3 | 0.47 | 409,618 | 1.70 | 3295.4 | 3.62 | 2178 | 3864 | 5 | 6642 | 1.34 |
| covariance | Ours | 265.3 | 1.00 | 241,651 | 1.00 | 911.0 | 1.00 | 1670 | 2688 | 5 | 4958 | 1.00 |
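The derived columns of the results table can be checked with a few lines of arithmetic. The sketch below assumes that the wall-clock time (WCT) is the cycle count divided by the clock frequency, which gives microseconds when the frequency is in MHz. It also assumes that the aggregate area value preceding the A/A_SS ratio is LUTs + FFs + 120 × DSPs. That weighting is inferred from the table values rather than stated here, and the function name `derived_metrics` and its parameter names are hypothetical.

```python
def derived_metrics(freq_mhz, cycles, luts, ffs, dsps, dsp_weight=120):
    """Return (wall-clock time in microseconds, aggregate area).

    dsp_weight=120 is an inferred assumption that matches the table values.
    """
    wct_us = cycles / freq_mhz               # cycles / MHz = microseconds
    area = luts + ffs + dsp_weight * dsps    # weighted resource sum
    return wct_us, area


# sparseMatrix rows from the table: static scheduling (SS) and "Ours".
ss_wct, ss_area = derived_metrics(257.5, 21501, 138, 320, 3)
ours_wct, ours_area = derived_metrics(227.6, 15113, 329, 774, 8)

print(round(ss_wct, 1), ss_area)              # 83.5 818
print(round(ours_wct, 1), ours_area)          # 66.4 2063
print(round(ours_wct / ss_wct, 2))            # 0.8  (T/T_SS)
print(round(ours_area / ss_area, 2))          # 2.52 (A/A_SS)
```

Read this way, the sparseMatrix row for "Ours" trades about 2.5 times the area of SS for roughly 20% less wall-clock time.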

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

She, Y.; Huang, Y.; Liu, J.; Cheung, R.C.C.; Yan, H. A Source-to-Source Compiler to Enable Hybrid Scheduling for High-Level Synthesis. Electronics 2025, 14, 4578. https://doi.org/10.3390/electronics14234578