The hardware components of the ecosystem have to address the following fundamental requirements:

A group of lightweight solutions exists for embedded devices, enabling edge processing using units with limited computational capabilities. The hardware platform must be accompanied by optimized algorithms that ensure a balance between image quality and performance while maintaining the assumed energy efficiency and meeting strict cost optimization requirements.

Following hardware, which is essentially a one-time purchase (unless lost, which is not uncommon in forest environments), the next cost factor is associated with maintaining data transmission in telecommunication networks. Modern solutions such as Starlink are excluded due to their cost, with preference given to GPRS transmission in GSM networks and integration with the Internet. Due to the abundance of available offers, no specific provider is analyzed; it is assumed that a cost-effective solution can be found for the defined ecosystem task. In contrast to free-of-charge networks, such as LoRa and nRF24L01, which operate in license-free bands, GSM-based transmission may involve operational costs; however, the wide range of available offers allows for selecting an optimal plan tailored to the system’s requirements. Nevertheless, this non-zero cost is typically related to the volume of transmitted data. Therefore, the algorithms are designed to optimize the amount of transmitted data, ensuring that only the data necessary for situational assessment is sent, i.e., required to decide whether an image should be retained or deleted, without the need for physical site visits.

2.1. Base Components

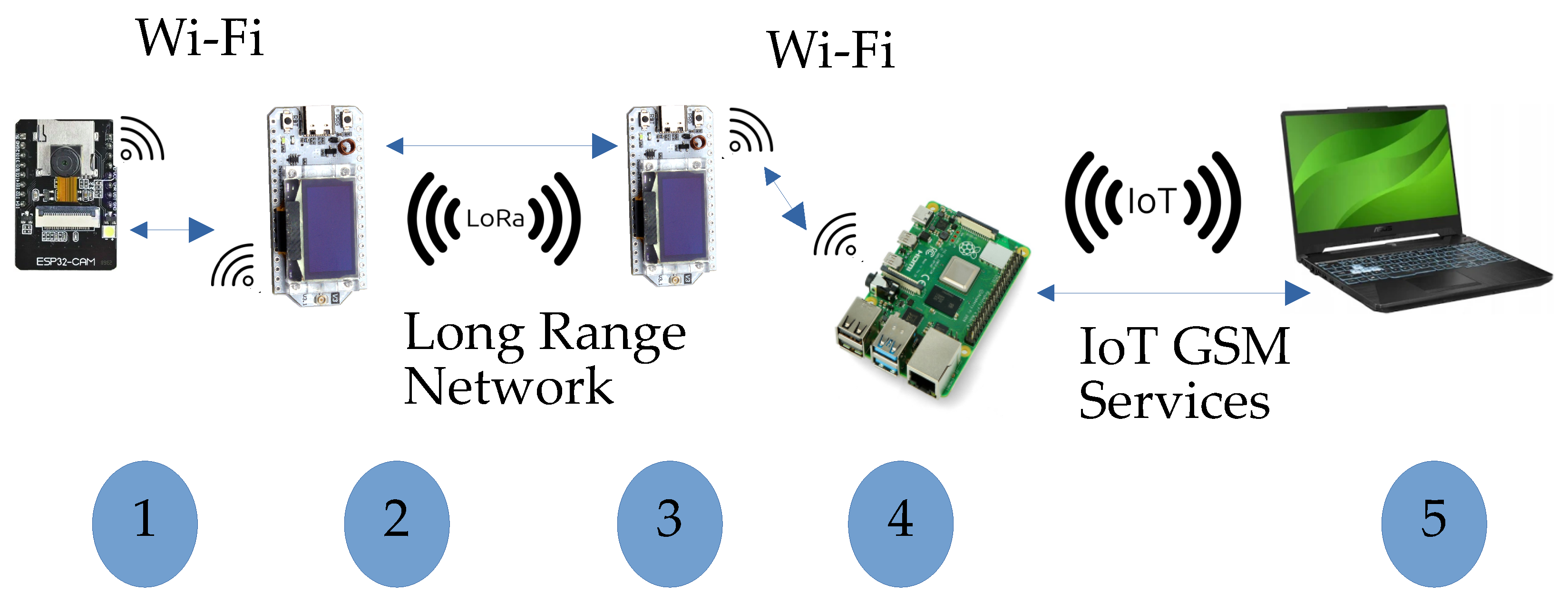

The base components of the photo trap ecosystem (Figure 1) include the following:

Camera—provides access to any pixel of the recorded image and allows for the deletion of unnecessary images;

Microcontrollers with long-range RF network modems—enable the transmission of collected data;

IoT GSM gateway—ensures integration with the GSM network;

Operator workstation—equipped with software for evaluating and selecting transmitted images.

Some components may be functionally combined into a single module to reduce purchase costs by eliminating one microcontroller. However, such a solution may complicate development due to the diversity of system architectures.

Table 1 presents some popular general-purpose platforms with Linux-based operating systems available on the market in the embedded device category, comparing their computational performance, energy efficiency, and costs. These platforms most commonly perform key Industrial IoT (IIoT) [15,16] tasks within edge or fog computing models. The presence of embedded operating systems enhances their flexibility in engineering practice. Performance is compared using the results of the 7-Zip benchmark, which provides standardized ratings in a specific operating environment. In terms of performance, the Jetson Orin Nano [17] is the best choice; however, its high purchase cost and significant energy consumption make it unsuitable from an economic point of view. The Jetson Nano family also offers CUDA acceleration for computational processes with low response time requirements, but this feature is not decisive for the selection of the platform.

The most balanced choice is the Raspberry Pi 4, which offers very good performance [18] in relation to its purchase cost and low energy consumption. Nevertheless, in our tests, we use the Raspberry Pi 3 to verify and optimize the algorithms under worst-case conditions. Additionally, it represents the most cost-effective solution.

However, certain system tasks exhibit lower requirements in terms of responsiveness and computational power. Within the proposed ecosystem, this includes a camera module and transmission modules integrated with a microcontroller. Our focus is placed on the widely adopted Arduino software platform (ver. 1.8.19 for Linux), which facilitates the rapid deployment of technical projects, thereby reducing the cost of application development and testing. This process is further supported by the use of sketches—predefined code snippets readily adaptable for integration into custom applications.

Table 2 presents a comparison of parameters for popular microcontrollers compatible with the Arduino environment. Although energy consumption across both platforms is comparable, performance metrics clearly indicate ESP32 as the most advantageous solution.

Since both the ESP32 and Raspberry Pi 3 platforms have integrated interfaces for the Wi-Fi standard, the next step involves selecting appropriate transmission modules. We consider two standards operating in license-free radio bands, both of which support documented long-range communication and maintain compatibility with the Arduino platform. The first is the LoRa transmission standard, represented by the SX1278 module; the second is the nRF24L01 module, which operates in the 2.4 GHz ISM band. The costs associated with acquiring these modules are presented in Table 3.

System transmission to the operator’s station is provided via a GSM modem and the Internet. When used as an IoT gateway, the Raspberry Pi 3 enables integration with a wide range of GSM modems through its USB ports, supporting various transmission standards. The market offers a broad selection of modems priced from a few to several USD, allowing for flexible adaptation to the financial model of the communication service provided by the operator.

2.2. Image Transfer and Reconstruction

The use of long-range RF networks is inherently constrained by limitations in signal power and transmission distance, which consequently lead to a significant reduction in bitrate. One approach to mitigating this issue involves reducing the volume of transmitted data. To achieve this, we employ Monte Carlo sampling to limit the number of transmitted data points and reconstruct image thumbnails from the sampled data. This method has previously been proposed as an alternative to conventional image downscaling algorithms; its full theoretical background is presented in [19]. The image thumbnail shown in Figure 2 is generated by randomly selecting N pixels without replacement and grouping them into blocks of a predefined size (e.g., 6 × 6, 8 × 8, or 16 × 16 pixels) based on their original spatial locations in the image. For each block, a new pixel value is computed using a predefined function f(x), for example, the arithmetic mean, which is used in the remainder of this study as a convenient practical choice.

The resulting image serves as an approximation of the original, with its quality depending on the number of samples used in the reconstruction. The greater the number of samples, the higher the fidelity of the reconstructed image.
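The sampling and block-averaging step described above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `mc_thumbnail`, the grayscale input, the fixed random seed, and the convention that blocks without any sample stay at zero are all choices made for the example.

```python
import numpy as np

def mc_thumbnail(image, n_samples, block=6, seed=0):
    """Rebuild a thumbnail from n_samples randomly chosen pixels.

    image: 2-D (grayscale) array whose height and width are multiples of `block`.
    Returns (thumbnail, counts); blocks that received no sample stay at 0.
    """
    h, w = image.shape
    rng = np.random.default_rng(seed)
    # Draw flat pixel indices without replacement.
    idx = rng.choice(h * w, size=n_samples, replace=False)
    rows, cols = np.divmod(idx, w)
    # Map each sampled pixel to the block it falls in.
    brow, bcol = rows // block, cols // block
    sums = np.zeros((h // block, w // block), dtype=np.float64)
    counts = np.zeros_like(sums, dtype=np.int64)
    # Unbuffered accumulation so repeated block indices are all counted.
    np.add.at(sums, (brow, bcol), image[rows, cols])
    np.add.at(counts, (brow, bcol), 1)
    # f(x) is taken as the arithmetic mean of the samples in each block.
    thumb = np.divide(sums, counts, out=np.zeros_like(sums), where=counts > 0)
    return thumb, counts
```

For a 1080 × 1920 Full HD frame and `block=6`, the returned thumbnail has 180 × 320 entries; the `counts` array shows which blocks are still empty and would appear as artifacts.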

Depending on the chosen block size, the reconstruction yields thumbnails of varying resolutions, as illustrated in Figure 3. For a block size of 6 × 6 pixels, a Full HD source yields a 320 × 180-pixel thumbnail, which approximates the Common Intermediate Format (CIF) and provides sufficient visual clarity for an operator’s analysis of transmitted image thumbnails. It is worth noting that CIF defines a video sequence with a resolution of 352 × 288 pixels, as used in the Video CD format, which is significantly smaller than the typical 720 × 576 pixels of the PAL standard. Some other low-resolution reconstruction variants are less suitable for human perception but may still be valuable for scene analysis by artificial intelligence systems.

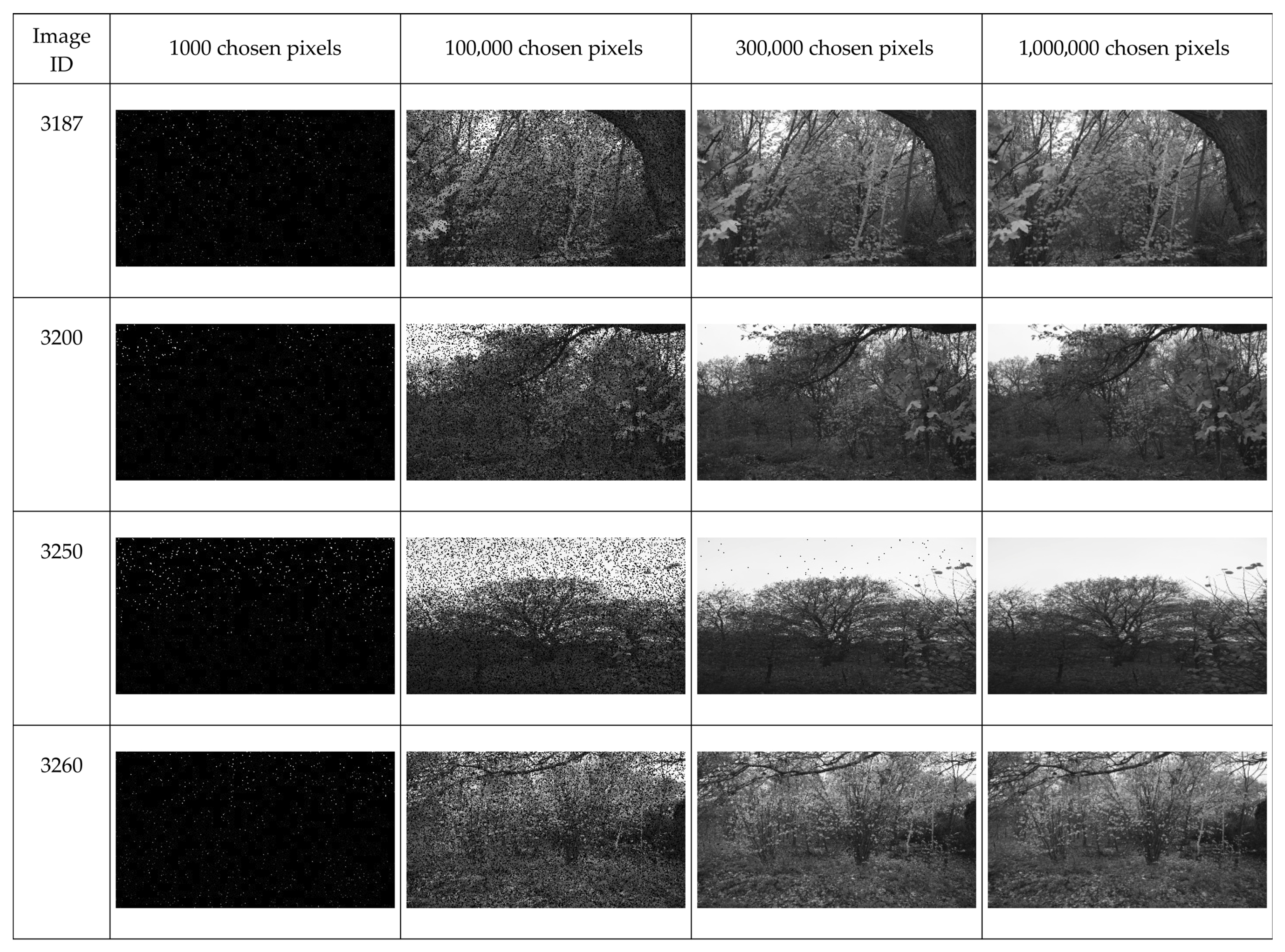

Figure 4 presents four example image reconstructions (rows) obtained with varying numbers of analyzed samples (columns). For 1000 samples, individual reconstructed pixels are visible against the original background, which appears as an artifact resulting from the initialization (zeroing) of the reconstruction array. In subsequent iterations, the images exhibit progressively improved quality, with a decreasing number of artifacts caused by blocks lacking pixel information. These artifacts can either be filtered out or allowed to disappear naturally as more samples are acquired, assuming the sampling process is continuous and the number of samples increases over time.

With 300,000 samples (nearly seven times fewer than the 2,073,600 pixels in an original Full HD image), a clear and detailed reconstruction is achieved, with only minor distortions remaining.

2.3. Data Flow Optimization Processes

Figure 5 illustrates exemplary information flows within the proposed ecosystem. On one hand, control commands are transmitted, allowing the operator to delete irrelevant images (Delete) or retain them (Save). On the other hand, the primary data stream consists of image data transmitted using Monte Carlo sampling.

The system faces two major constraints:

Long-range radio networks are characterized by a low bitrate, necessitating data reduction to ensure realistic image transmission times;

In GSM networks, operators may charge fees based on the volume of transmitted data.

An optimization scenario aimed at reducing network traffic is as follows. Image sampling is performed continuously until all data are transmitted, whereas reconstruction (at the IoT gateway) is executed cyclically. Only a thumbnail of predefined quality is sent to the operator. The transmission process is paused until the operator decides whether to retain or discard the image, based on the evaluation of its contents. If the image should be retained, transmission resumes during periods of system idle time. Once the full image is received, it is made available to the operator for archiving and subsequently deleted from both the camera and the IoT gateway.
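The three phases of this scenario (stream until a reviewable thumbnail exists, pause for the operator, resume only if the image is kept) can be illustrated with a small simulation. The function `run_transfer`, its parameters, and the `thumbnail_ready` callback are hypothetical names introduced for this sketch; the real system would replace the callback with the quality criterion evaluated on the IoT gateway.

```python
def run_transfer(total_samples, batch, thumbnail_ready, operator_keeps):
    """Simulate one image transfer and report how many samples were sent.

    thumbnail_ready(n) -> bool is a hypothetical callback telling whether the
    thumbnail rebuilt from n samples is good enough for operator review.
    """
    sent = 0
    # Phase 1: stream samples; the gateway re-checks the thumbnail every batch.
    while sent < total_samples:
        sent = min(sent + batch, total_samples)
        if thumbnail_ready(sent):
            break
    preview_samples = sent
    # Phase 2: transmission is paused while the operator evaluates the thumbnail.
    if not operator_keeps:
        return {"preview": preview_samples, "total": sent, "action": "Delete"}
    # Phase 3: the remaining samples are sent during system idle time.
    sent = total_samples
    return {"preview": preview_samples, "total": sent, "action": "Save"}
```

With a Full HD image (2,073,600 pixels) and a thumbnail that becomes reviewable at 200,000 samples, a rejected image costs only the 200,000 preview samples, which is the saving the scenario aims for.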

A critical aspect of this approach is the automatic determination of the moment when the image reaches sufficient clarity to be submitted for evaluation, taking into account the limited computational resources of the IoT gateway.

To assess the quality of the reconstructed image, blind image quality assessment (IQA) metrics can be used, along with a decision criterion that determines when the image is acceptable for visual content evaluation.

From the variety of blind IQA metrics available in the PyIQA library [20], only those capable of completing computations without software failure or excessive runtime were selected. For comparative analysis, the initial limits were under 10 min for Full HD images and under 30 s for thumbnails; for operational deployment, however, only metrics with sub-second thumbnail evaluation were considered feasible. Although some metrics, such as HyperIQA, met the initial thresholds, their cumulative time–energy cost in an online pipeline on the Raspberry Pi 3 was unacceptable within the system’s operational budget; therefore, they were retained as offline benchmarks only. The most common cause of failure was insufficient memory, particularly for metrics based on deep learning models.

Table 4 summarizes the computation times on a Raspberry Pi 3 for Full HD and 320 × 180-pixel thumbnail images.

“Software error” refers to any condition that leads to uncontrolled program termination, most often due to memory allocation errors in image processing libraries. “Excessive execution time” means exceeding the empirically determined threshold of 30 s for computing an IQA metric for a single image.

The evaluation was conducted on a test set of 140 Full HD images captured in forest environments under various conditions and perspectives, along with their corresponding thumbnails generated during reconstruction using different numbers of randomly sampled pixels. All blind IQA indicators available in PyIQA were considered, but only five of them, meeting the above-mentioned time constraints, were included in further analysis.

A brief overview of the blind image quality assessment metrics selected for further analysis is presented below. Each metric represents a distinct methodological approach to evaluating image quality without reference images and has been chosen based on its computational feasibility on resource-constrained platforms such as the Raspberry Pi 3.

The five methods considered in the analysis include the following:

ARNIQA (leArning distoRtion maNifold for Image Quality Assessment) [21]: a modern deep learning-based metric that utilizes neural networks to learn distortion manifolds in a self-supervised manner; it is based on the assumption that different types of distortions form continuous spaces in the feature domain, enabling more precise quality assessment.

BRISQUE (Blind/Referenceless Image Spatial Quality Evaluator) [22]: a classical metric based on natural scene statistics (NSS), which analyzes local distortions in the spatial domain; it employs a support vector regression model to predict image quality from statistical features extracted from the image; it is fast and reliable for traditional types of distortions.

HyperIQA [9]: an advanced deep learning metric that incorporates attention mechanisms and a hyper-network architecture to adaptively weight different image regions during quality assessment; it demonstrates a high correlation with human perception across a wide range of distortions.

NIMA (Neural Image Assessment) [23]: a convolutional neural network-based metric that predicts a distribution of quality scores rather than a single value; it is trained on large datasets with human ratings and effectively models the subjectivity of image quality perception.

PIQE (Perception-based Image Quality Evaluator) [24]: a metric focused on perceptual aspects of image quality, primarily analyzing blur and blocky distortions; it is computationally efficient and performs well on compressed and blurred images.

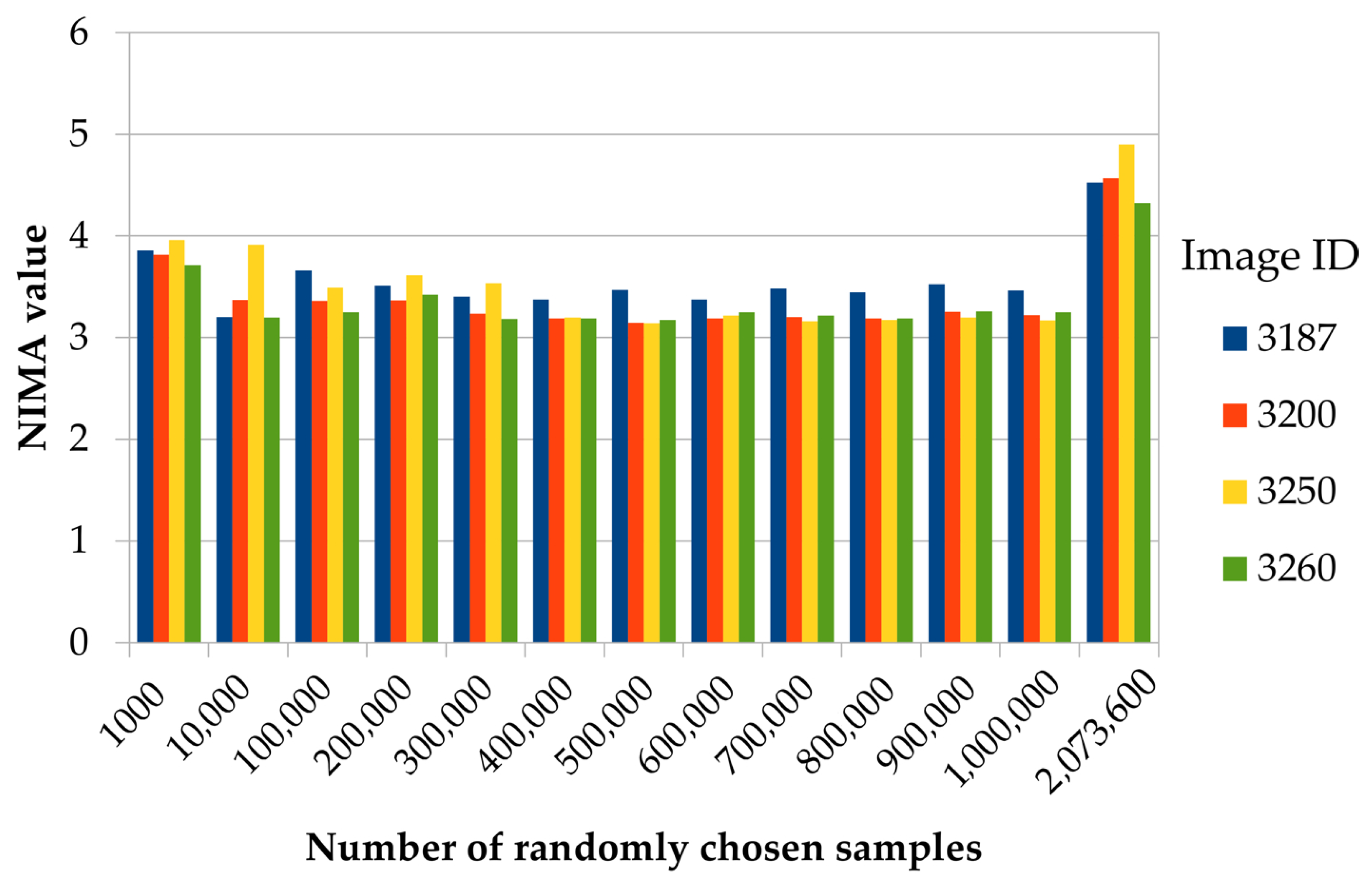

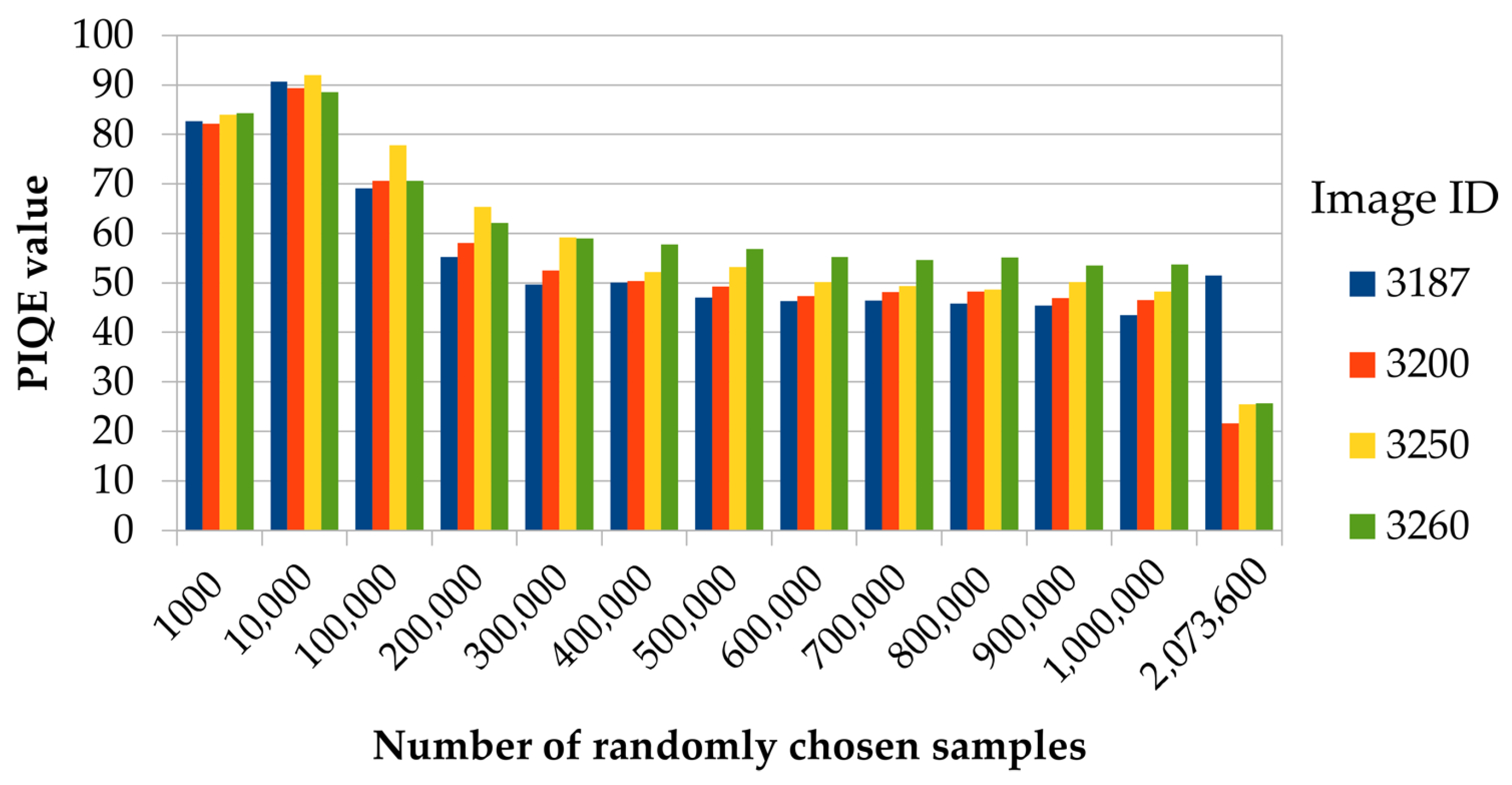

The analysis of the plots presented in Figures 6–10 enables an assessment of the stabilization potential of each quality evaluation method, using the bars on the right (obtained for the maximum number of samples, i.e., for the image reconstructed from all pixels rather than from a Monte Carlo subset) as a reference point. It is important to note that early stabilization and sensitivity to incremental improvements represent different aspects: HyperIQA reaches stable values early, although they are quite far from the reference ones, whereas the BRISQUE metric reacts more strongly to quality changes during reconstruction. It is also worth noting that the penultimate set of bars represents 1,000,000 samples, i.e., less than half of the pixels of the full image, which explains the visible difference between the values of some of the metrics for the last two sets of bars in Figures 6–10.

As mentioned, HyperIQA reaches stable values relatively early and then remains stable, whereas the BRISQUE metric exhibits the steepest convergence, making it the most sensitive to incremental improvements during reconstruction. Nevertheless, despite these observations, the PIQE indicator was initially selected for the considered application due to its balance of accuracy and computational efficiency. The PIQE results remain within a narrow range from 0.32 to 0.40, indicating high consistency in quality assessment. The ARNIQA values also stabilize relatively quickly, although this metric exhibits a greater variability during the initial stages of reconstruction. After surpassing 400,000 samples, the values stabilize within the range from 0.54 to 0.60. The NIMA indicator shows moderate stabilization, with a downward trend as the number of samples increases. The PIQE and BRISQUE metrics exhibit the slowest stabilization, with significant fluctuations throughout all reconstruction stages, making them less suitable for an early-stage quality evaluation.

Although HyperIQA demonstrates the fastest stabilization and yields results consistent with expectations even for relatively few samples, it is operationally infeasible on resource-constrained platforms such as the Raspberry Pi 3. This further supports the choice of the PIQE as the practical metric for real-time implementation.

In practice, a more insightful approach involves analyzing the stabilization dynamics of no-reference image quality metrics during the reconstruction process. To quantify this behavior, the convergence rate coefficient $b$ is introduced, defined as the slope in the linear regression model applied to the deviation sequence:

$$d_{n_i} = a + b\,n_i + \varepsilon_i, \qquad i = 1, \dots, m,$$

where the following notation is used: $n_i$ is the number of samples at the $i$-th measurement point, $d_{n_i}$ is the corresponding deviation from the reference value, $a$ is the intercept, $\varepsilon_i$ is the residual, and $m$ is the number of measurement points.

This formulation is adapted from classical linear regression theory [25] to address the specific challenge of evaluating the temporal behavior of no-reference quality metrics in low-data image reconstruction scenarios. The coefficient $b$ quantifies how rapidly metric values converge toward their reference value, with negative values indicating convergence and the magnitude representing the rate of stabilization. The formal definition is given as follows:

$$b = \frac{\sum_{i=1}^{m} (n_i - \bar{n})\,(d_{n_i} - \bar{d})}{\sum_{i=1}^{m} (n_i - \bar{n})^2},$$

where $\bar{n}$ and $\bar{d}$ are the mean values of $n_i$ and $d_{n_i}$ over the $m$ measurement points.

Let $Q_n$ denote the quality metric value computed from $n$ randomly sampled pixels and $Q_{\mathrm{ref}}$ the reference value obtained from the full reconstruction using all 2,073,600 pixels. The deviation $d_n = |Q_n - Q_{\mathrm{ref}}|$ quantifies the distance from the reference quality. The linear regression model assumes a constant convergence rate and a monotonic approach to $Q_{\mathrm{ref}}$. This assumption holds for $n \geq 100{,}000$ samples, where visual inspection and residual analysis confirm strong linear behavior across 140 forest images. The empirical threshold was validated by observing consistent convergence patterns with low residual variance in this sampling regime.
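A regression slope of this kind can be estimated with an ordinary least-squares fit, for instance via `numpy.polyfit`. The sketch below is only illustrative: it assumes that the deviation is the absolute difference between each metric value and the reference value, and the function name `convergence_rate` and its arguments are hypothetical.

```python
import numpy as np

def convergence_rate(n_samples, q_values, q_ref):
    """Return (b, a): slope and intercept of the deviation vs. sample count.

    n_samples: sample counts at which the metric was evaluated.
    q_values:  metric values at those counts.
    q_ref:     metric value for the full reconstruction.
    """
    n = np.asarray(n_samples, dtype=float)
    # Assumed deviation: absolute distance from the reference value.
    d = np.abs(np.asarray(q_values, dtype=float) - q_ref)
    # Degree-1 least-squares fit; polyfit returns the slope first.
    b, a = np.polyfit(n, d, deg=1)
    return b, a
```

A negative `b` means the deviation shrinks as more samples arrive; note that the numerical value of the slope depends on the unit chosen for the sample count (e.g., single samples versus batches of 10,000).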

During analysis (the results are presented in Table 5), the following factors were taken into account:

Data aggregation—all measurements across different images were treated as a single observation sequence;

Common trend—the computed convergence rate coefficient (b) represents the overall tendency of approaching the reference value across the entire dataset;

Averaging effects—differences between individual images were averaged;

Assumed linearity—the convergence process was assumed to follow a linear trend, which may not hold in the early stages of reconstruction when the sample size is small.

Observations and practical implications:

BRISQUE shows the fastest convergence toward the reference value, with a steep decline (coefficient = −2.674) as the number of reconstruction samples increases;

PIQE also converges rapidly, with a coefficient of −0.916, indicating a systematic improvement in quality assessment during reconstruction;

NIMA demonstrates moderate convergence speed (−0.058) but maintains stability throughout the process;

ARNIQA and HyperIQA exhibit very small convergence coefficients (−0.001), suggesting that their values remain relatively stable during reconstruction, without a clear trend of improvement.

The opinion-unaware design and low computational cost of the PIQE (Table 6) make it well suited to real-time quality monitoring on resource-constrained IoT devices, despite its slightly lower correlation with subjective scores compared to the BRISQUE metric.

This interpretation aligns with the convergence analysis: the BRISQUE indicator is the best for early detection due to its steep convergence trend, whereas HyperIQA stabilizes quickly and changes only slightly afterwards. Summarizing the analysis of the data presented in Table 4 and Table 5, as well as Figures 6–10, the optimal final choice, balancing computation time, stability, and convergence rate, is the PIQE metric. Moreover, the observed usage characteristics align well with the practical application guidelines for this metric.

Thumbnail Quality Selection Criteria and Simulation Results

Table 7 summarizes the typical value ranges of the IQA metrics that are most commonly associated with specific subjective image quality ratings. The ranges were compiled from publicly available example programs in MATLAB (version R2025a) and Python (version 3.9.2), obtained from sources such as GitHub (https://github.com, accessed on 11 September 2025) and StackOverflow (https://stackoverflow.com, accessed on 11 September 2025). It is important to note that these thresholds are derived from practical recommendations and may require adjustment depending on the specific application domain.

These data will be useful for verifying the correctness of the automation process, which is critical for optimizing the amount of data transmitted within the system.

A key aspect of pausing or terminating the data stream is the definition of a quality criterion for the reconstructed thumbnail—its quality must be sufficiently high to allow for a meaningful content evaluation by the operator. This criterion is defined as the presence of at least three stabilization points, where each point corresponds to a quality metric value computed for a specific number of samples, and all three values fall within a predefined range. This range is determined by the mean absolute deviation (MAD) for each metric, and the stabilization points must occur within a defined sampling interval.

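The iteration process is terminated if, for at least three consecutive thumbnails, the quality metric value $Q$ remains within a range no wider than the MAD value corresponding to the given metric:

$$\max(Q_i, Q_{i+1}, Q_{i+2}) - \min(Q_i, Q_{i+1}, Q_{i+2}) \leq \mathrm{MAD}_Q,$$

where

$Q_i, Q_{i+1}, Q_{i+2}$: quality metric values for three consecutive thumbnails;

$\mathrm{MAD}_Q$: mean absolute deviation for the given quality metric:

$\mathrm{MAD}_{\mathrm{BRISQUE}} = 17.117$;

$\mathrm{MAD}_{\mathrm{PIQE}} = 12.996$;

$\mathrm{MAD}_{\mathrm{NIMA}} = 0.467$;

$\mathrm{MAD}_{\mathrm{ARNIQA}} = 0.144$;

$\mathrm{MAD}_{\mathrm{HyperIQA}} = 0.02$.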
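The stopping rule can be expressed as a short scan over the sequence of metric values. The following sketch reads "within a range no wider than the MAD" as max minus min of three consecutive values being at most the MAD; the function name `find_stabilization` and the example values are hypothetical.

```python
def find_stabilization(q_values, mad, window=3):
    """Return the index of the last value of the first run of `window`
    consecutive metric values whose spread (max - min) is <= mad,
    or None if no such run exists."""
    for end in range(window, len(q_values) + 1):
        chunk = q_values[end - window:end]
        if max(chunk) - min(chunk) <= mad:
            return end - 1
    return None


# Hypothetical PIQE readings taken at successive sampling steps.
piqe_scores = [80.0, 60.0, 45.0, 40.0, 38.0, 37.0]
stop_index = find_stabilization(piqe_scores, mad=12.996)
```

In this illustrative sequence, the first three-value window whose spread fits within the PIQE MAD is (45, 40, 38), so the thumbnail at index 4 would be the one passed on for review.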

The thumbnail corresponding to the last stabilization point is selected as the final input image for further processing. Pearson’s correlations r between the MAD and convergence rate were analyzed:

For the full dataset, r = 0.924, p = 0.025, and N = 5 metrics.

For the trimmed dataset (excluding extreme cases), r = 0.833, and p = 0.080.

The strong positive correlation (r = 0.924, p < 0.05) confirms that metrics with higher mean absolute deviation exhibit proportionally faster convergence rates, analogous to Hooke’s law in mechanics: greater initial displacement from equilibrium generates a stronger restorative force.
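The full-dataset coefficient can be checked from the values quoted in this section. The sketch assumes that the convergence rate enters the correlation as the magnitude |b| of the coefficients reported earlier (−2.674, −0.916, −0.058, −0.001, −0.001), paired with the MAD values in the same metric order; this pairing is an assumption of the example and is not stated explicitly in the text.

```python
import numpy as np

# Order: BRISQUE, PIQE, NIMA, ARNIQA, HyperIQA.
mad = np.array([17.117, 12.996, 0.467, 0.144, 0.02])
# Assumed: the rate is the magnitude of the regression slope b.
rate = np.abs(np.array([-2.674, -0.916, -0.058, -0.001, -0.001]))

# Pearson correlation coefficient between MAD and convergence rate.
r = np.corrcoef(mad, rate)[0, 1]
print(round(float(r), 3))
```

Under these assumptions the computed value agrees with the reported r = 0.924; using the signed coefficients instead would only flip the sign of r. With N = 5 points, two of which dominate the fit, the estimate is sensitive to any single metric.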

The linear scale in Figure 11 reveals that the correlation r = 0.924 is largely dominated by the two high-MAD metrics (BRISQUE and PIQE), while the three low-MAD metrics (ARNIQA, HyperIQA, and NIMA) are crowded to the left. This suggests that the relationship between MAD and the convergence rate $b$ is bimodal, i.e., there are two groups of metrics with different “restorative forces”. The most useful class of metrics in practice is the one that ensures faster convergence (represented by BRISQUE and PIQE).

To verify the correctness of the stabilization detection mechanism, some simulations were conducted using a test dataset, with quality metrics computed every 10,000 samples. Since the early stages of reconstruction often introduce noise-like artifacts, an additional metric—the Peak Signal-to-Noise Ratio (PSNR)—was calculated. For this purpose, the reference image was defined as the thumbnail reconstructed using all available samples.

The selected results from the simulation are presented in Table 8. The evaluation was performed on a dataset of 140 forest images, with sampling intervals of 10,000 pixels.

To evaluate the robustness of the three-point stabilization criterion, a confusion matrix analysis was performed on the complete test dataset of 140 forest images. The ground truth was defined as follows:

Reference image: Full reconstruction using all available samples (2,073,600 pixels).

Acceptability threshold: A thumbnail is considered “ready for operator review” if it achieves the following: a PSNR ≥ 28 dB (relative to full reconstruction) AND SSIM ≥ 0.85 (relative to full reconstruction).

These thresholds were established based on pilot studies with human operators, where thumbnails meeting both criteria were consistently rated as “sufficient for wildlife identification and scene analysis.”
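The ground-truth check can be sketched as follows. PSNR is computed here with its standard definition for 8-bit images; the SSIM value is taken as an input because computing it requires an additional image processing library. The function names `psnr` and `ready_for_review` are hypothetical, and the default thresholds are the ones quoted above.

```python
import numpy as np

def psnr(reference, test, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB between two same-sized images."""
    ref = np.asarray(reference, dtype=np.float64)
    tst = np.asarray(test, dtype=np.float64)
    mse = np.mean((ref - tst) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

def ready_for_review(psnr_db, ssim, psnr_min=28.0, ssim_min=0.85):
    """Both conditions must hold (logical AND), as in the acceptability rule."""
    return psnr_db >= psnr_min and ssim >= ssim_min
```

Here `reference` would be the thumbnail reconstructed from all 2,073,600 pixels and `test` the thumbnail rebuilt from the current subset of samples.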

The classification outcomes were interpreted as follows:

True Positive (TP): Stabilization detected AND thumbnail meets the quality threshold;

False Positive (FP): Stabilization detected BUT thumbnail quality insufficient;

True Negative (TN): No stabilization detected AND thumbnail quality insufficient;

False Negative (FN): No stabilization detected BUT thumbnail quality already sufficient.

Table 9 presents the confusion matrix results for all five evaluated IQA metrics, with performance measured across sensitivity (recall), specificity, overall accuracy, and F1-score.

The classification metrics used during the analysis are as follows:

Sensitivity (Recall) = $\frac{TP}{TP + FN}$, reflecting the ability to detect when a thumbnail is ready;

Specificity = $\frac{TN}{TN + FP}$, reflecting the ability to avoid premature stopping;

Accuracy = $\frac{TP + TN}{TP + TN + FP + FN}$, reflecting the overall correctness;

F1-score = $\frac{2\,TP}{2\,TP + FP + FN}$, used as an alternative overall index.
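These four indices follow the standard confusion-matrix definitions and can be computed directly from the TP, FP, TN, and FN counts. In the sketch below, the function name and the example counts are hypothetical and are not taken from Table 9.

```python
def classification_metrics(tp, fp, tn, fn):
    """Standard confusion-matrix indices from raw counts."""
    return {
        "sensitivity": tp / (tp + fn),            # recall: ready thumbnails detected
        "specificity": tn / (tn + fp),            # premature stops avoided
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "f1": 2 * tp / (2 * tp + fp + fn),
    }


# Hypothetical counts for illustration only.
example = classification_metrics(tp=40, fp=10, tn=45, fn=5)
```

Note that sensitivity and the false negative rate computed over the positive class always sum to one, so the two should be reported against the same denominator.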

The confusion matrix shows that the PIQE achieves a sensitivity of 94% with only a 4.3% false negative rate, confirming the robustness of the three-point MAD-based stopping rule for operational implementation on low-computing systems.

In summary, the proposed photo trap ecosystem integrates cost-effective hardware and optimized algorithms to ensure efficient image transmission and processing in remote forest environments. By leveraging modular components and scalable communication protocols, the system achieves a balance between performance, energy consumption, and operational cost, laying the foundation for sustainable and autonomous wildlife monitoring.

Furthermore, the PIQE metric—identified through simulation and analytical comparison as the most suitable for early-stage image quality evaluation—stands out due to its unique balance of computational efficiency, perceptual relevance, and rapid stabilization. Unlike deep learning-based indicators, the PIQE performs reliably on resource-constrained platforms such as the Raspberry Pi 3, with computation times under one second for thumbnail images. Its sensitivity to blur and blocky distortions aligns well with the artifacts introduced by Monte Carlo sampling, making it particularly effective for assessing low-resolution reconstructions.

The PIQE metric also demonstrates a favorable convergence rate and low mean absolute deviation, enabling the robust detection of stabilization points during iterative image reconstruction. These properties ensure that the PIQE can serve as a practical and responsive criterion for terminating data transmission, minimizing bandwidth usage while preserving sufficient image clarity for the operator’s decisions.

In addition to the stabilization point-based criterion, the PIQE metric was further verified during real-world field tests. This step is essential to confirm the practical applicability and robustness of the proposed optimization strategy.

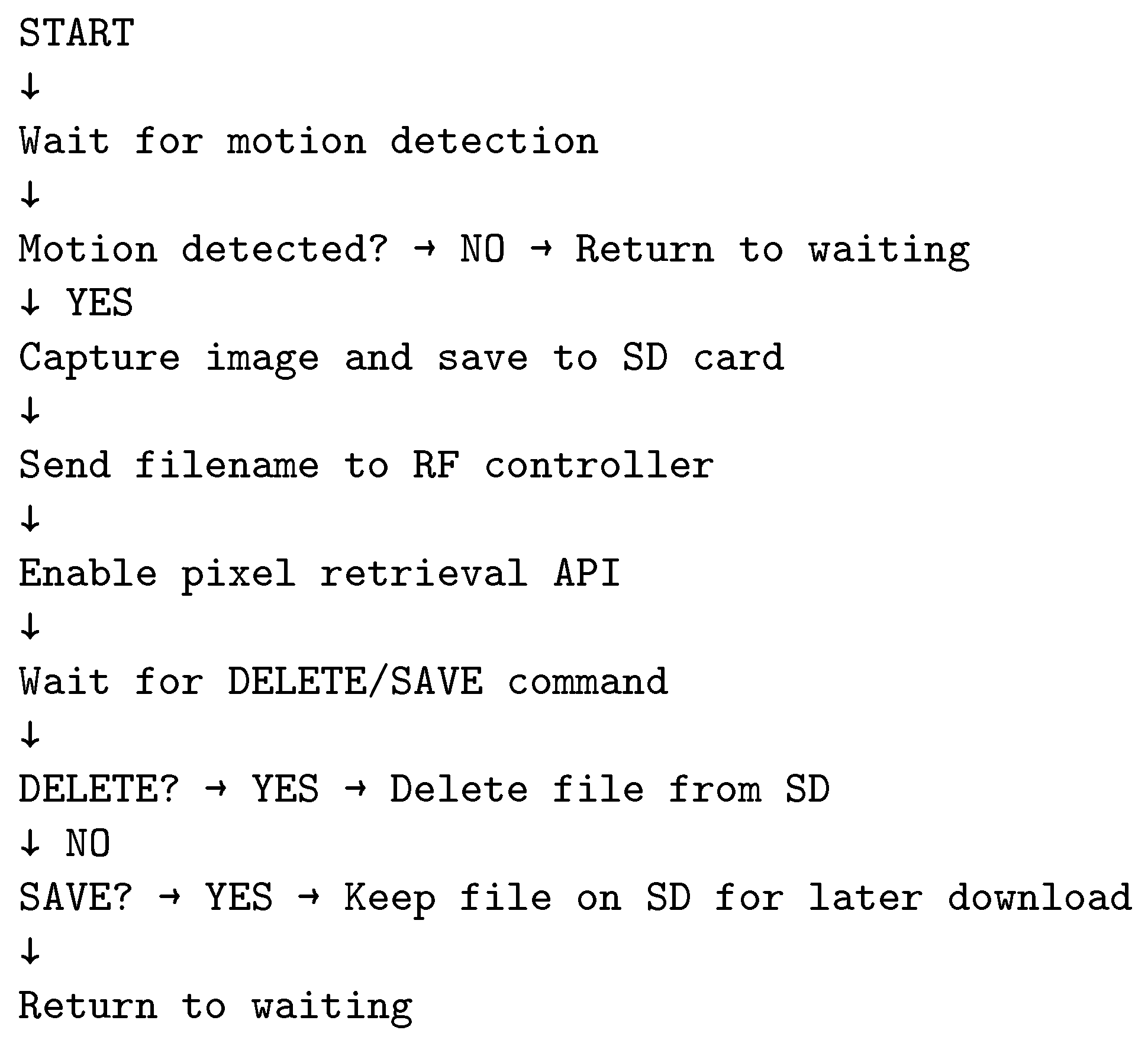

The described algorithms and transmission interruption criteria allow for the definition of a pseudo-code for the entire IoT ecosystem (Figures 12–15).

Monte Carlo sampling exhibits inherent error resilience through statistical averaging:

Statistical Error Correction: Each 6 × 6-pixel block accumulates multiple random samples during transmission; the block value, computed as the arithmetic mean $\bar{p} = \frac{1}{K}\sum_{k=1}^{K} p_k$ of the $K$ samples received for the block, naturally averages out erroneous samples, so a single transmission error impacts only 1/K of the final value.

Graceful Degradation: Lost packets reduce the sample count but do not corrupt existing data. At stabilization (180,000–230,000 samples), an average of 3–4 samples per block means that a single erroneous sample contributes only about 25–33% of the block value, without ECC overhead.

Synchronization Resilience: Periodic sync frames (every 1000 samples) enable recovery from desynchronization; the loss of a single sync frame affects a maximum of 1000 samples before the next resynchronization point.
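The samples-per-block figures quoted above follow from simple arithmetic on the Full HD frame size and the 6 × 6 block size. The short check below uses a hypothetical helper `samples_per_block`; the 1/K weight it prints is the share of a block value that one erroneous sample can affect.

```python
def samples_per_block(n_samples, width=1920, height=1080, block=6):
    """Average number of samples landing in each block of the thumbnail."""
    blocks = (width // block) * (height // block)  # 320 * 180 = 57,600 blocks
    return n_samples / blocks


for n in (180_000, 230_000):
    k = samples_per_block(n)
    # Weight of a single sample in the block mean, as a percentage.
    print(n, round(k, 2), f"{100 / k:.0f}%")
```

The averages come out at roughly 3.1 and 4.0 samples per block, consistent with the 3–4 range stated in the text.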