Driving for More Moore on Computing Devices with Advanced Non-Volatile Memory Technology

Abstract

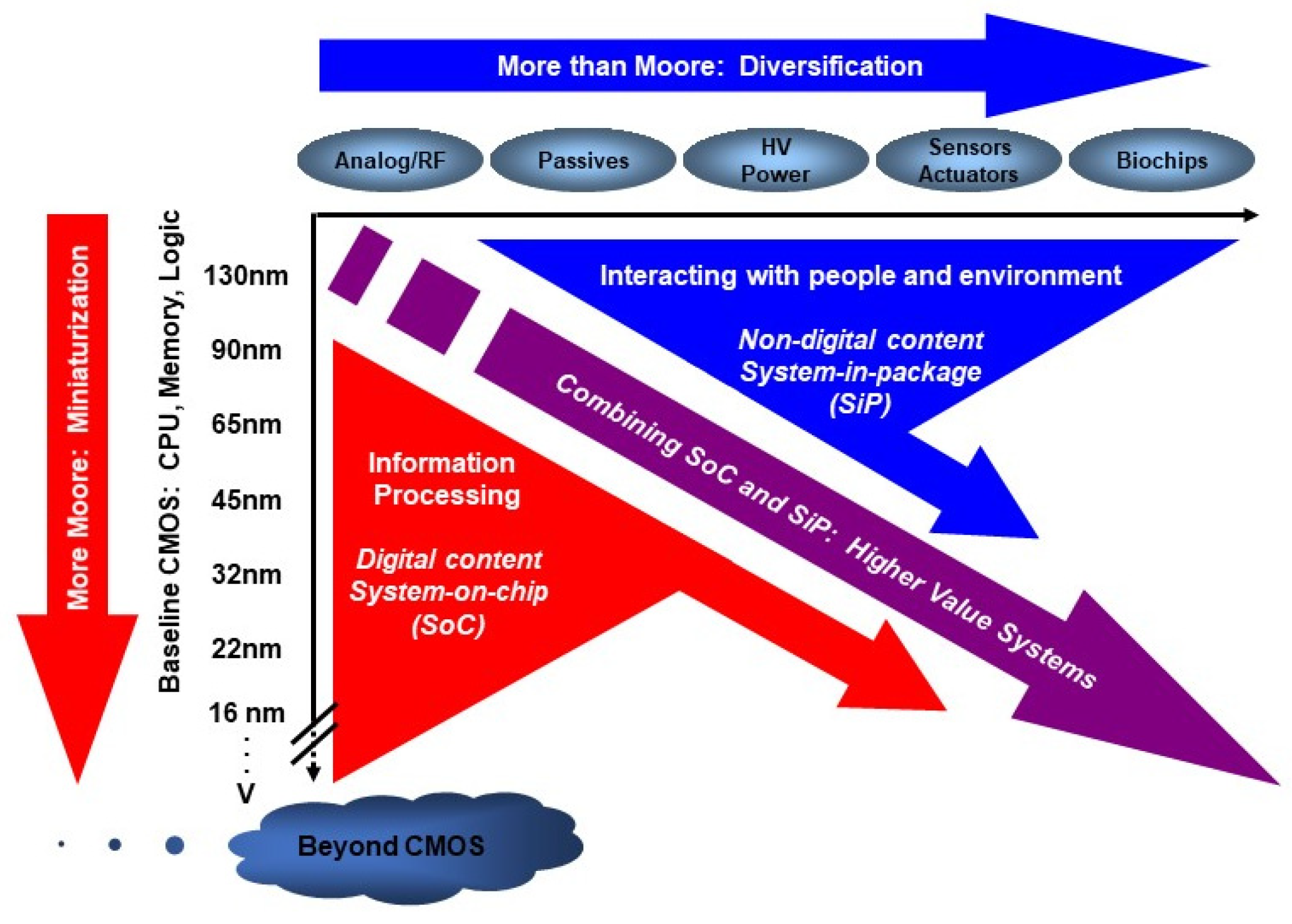

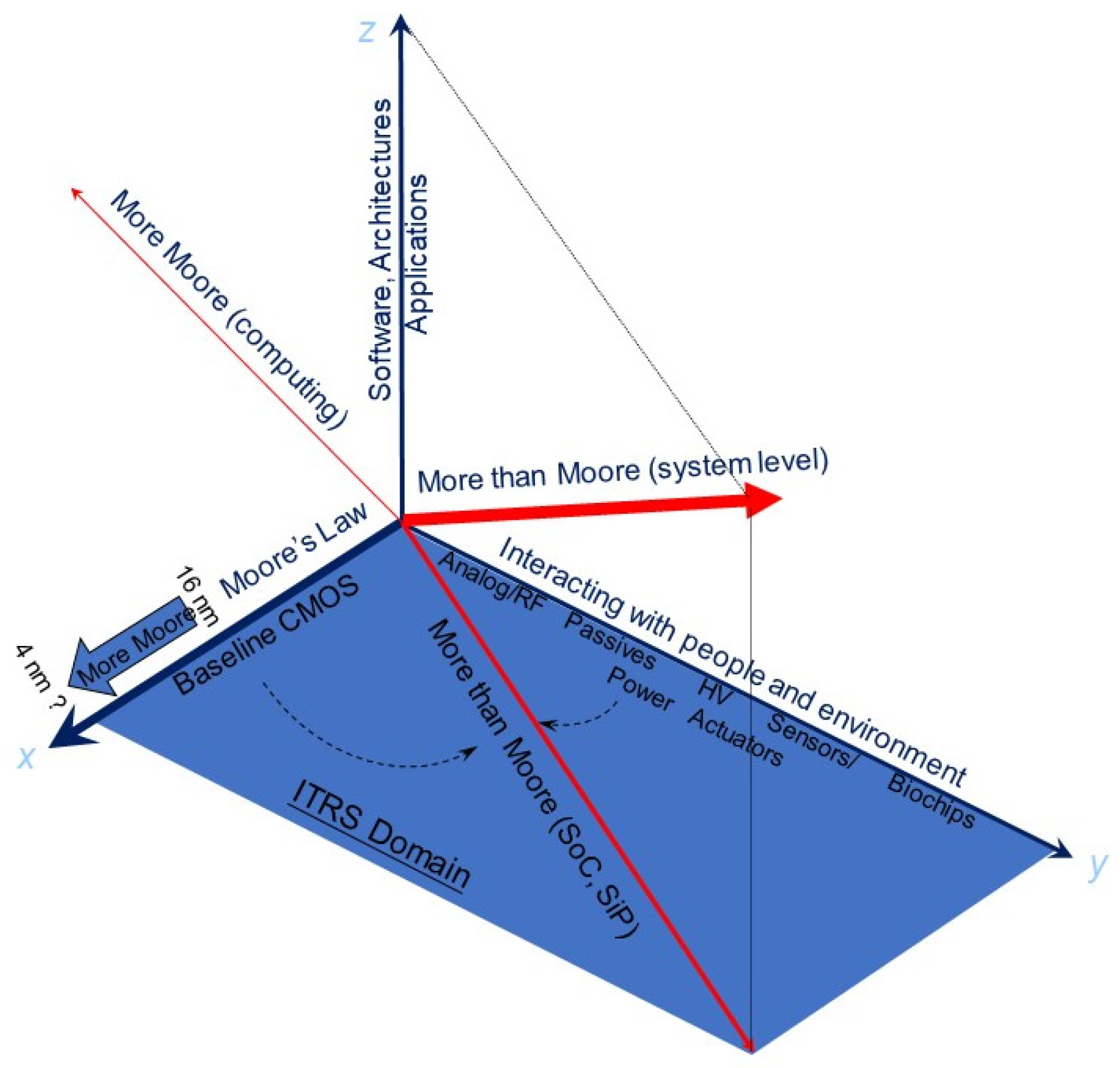

1. A Different Perspective of More-than-Moore and More Moore

- (a) RF and analog CMOS circuits for communications and signal processing;

- (b) On-chip integration of passive components such as capacitors and inductors;

- (c) High-voltage and power-management devices for energy control;

- (d) Transducers and sensors capable of detecting and processing physical, chemical, and biological signals;

- (e) Biochips designed for biomedical diagnostics and interfacing with living systems.

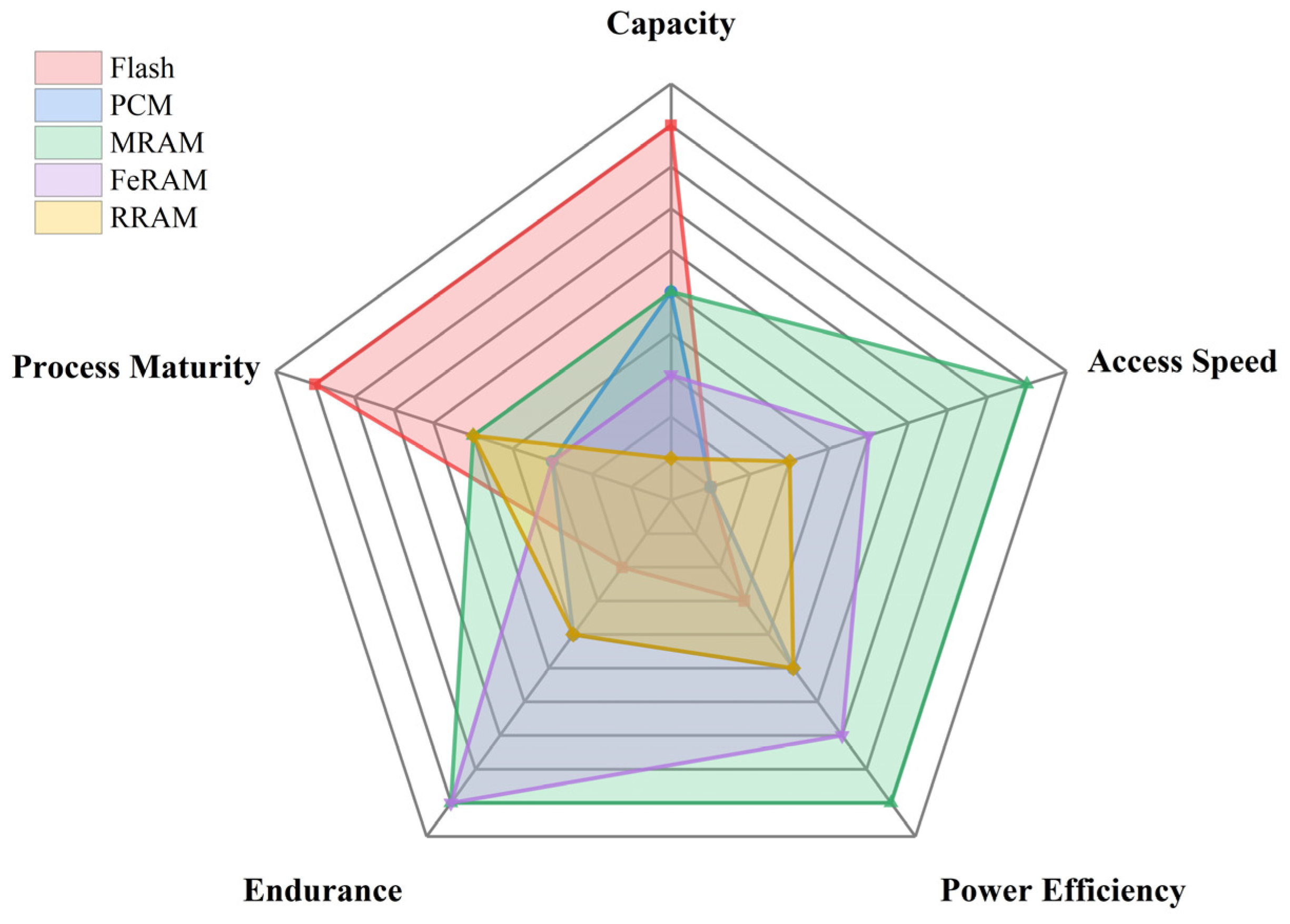

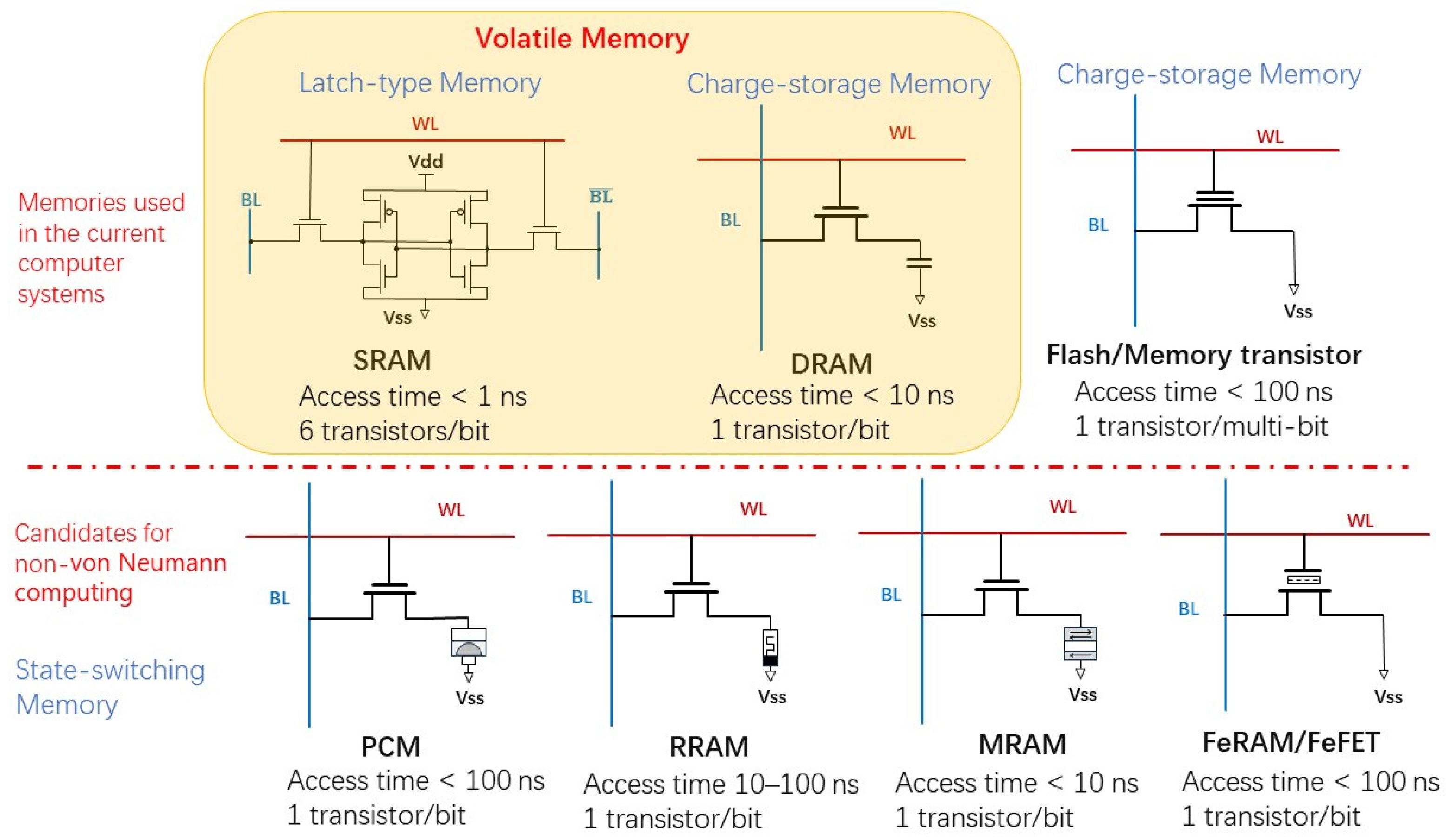

2. Overview of Non-Volatile Memory Technology

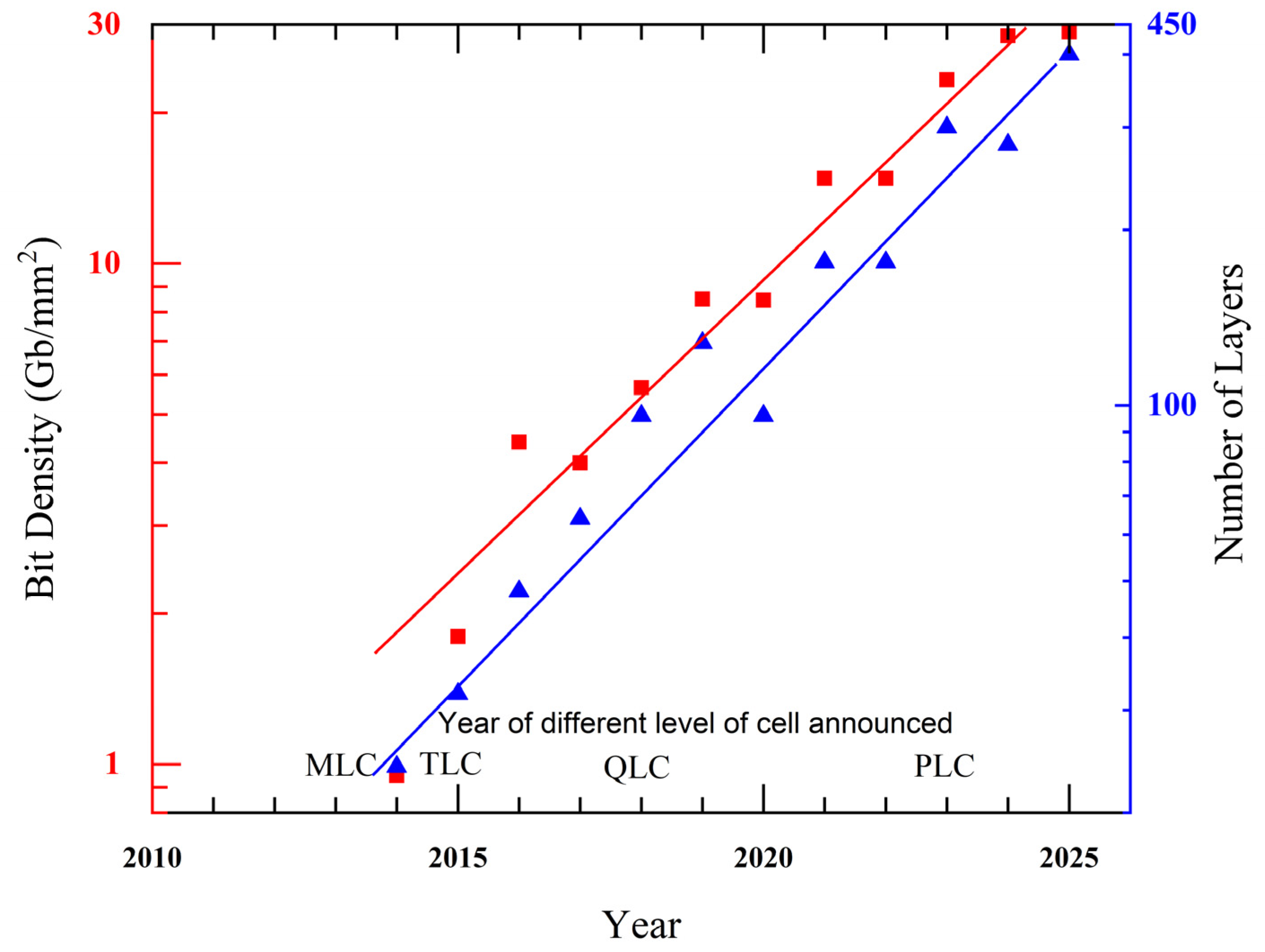

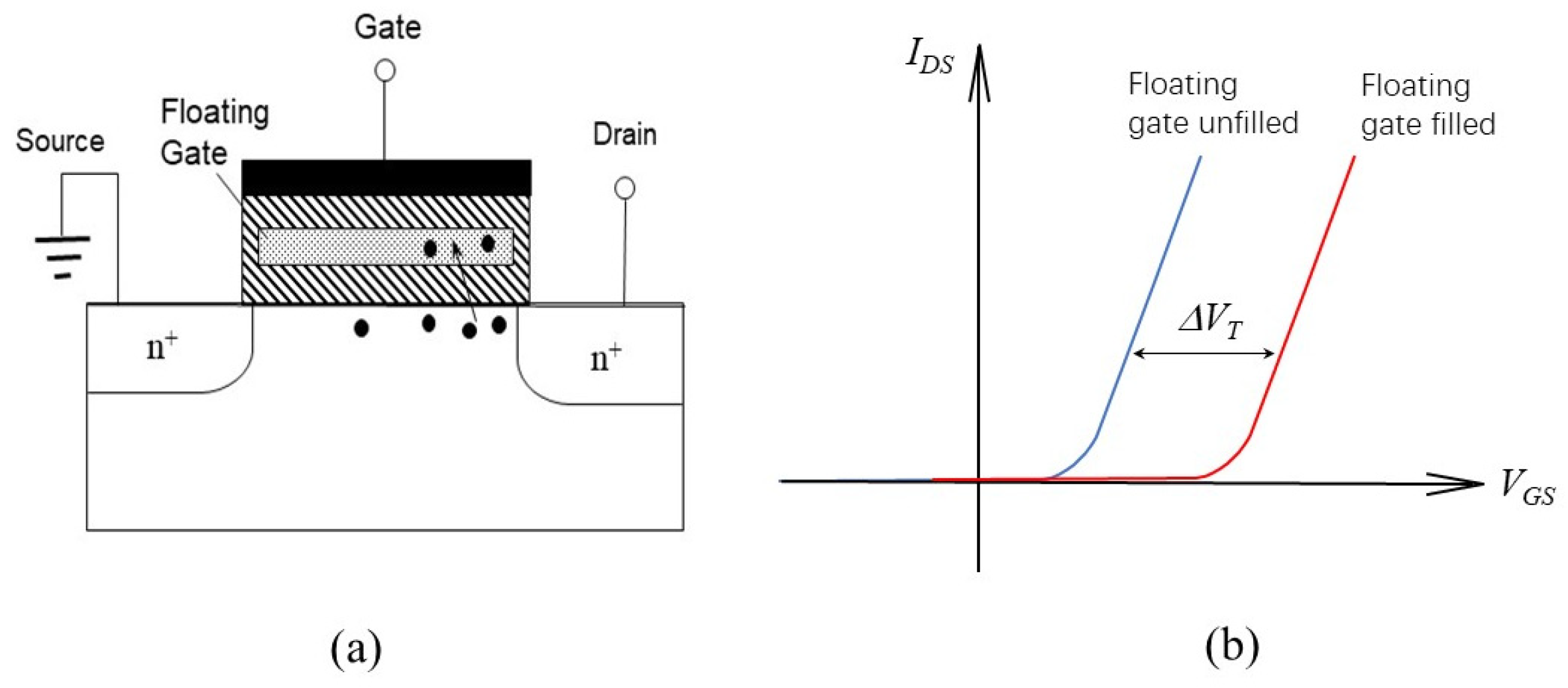

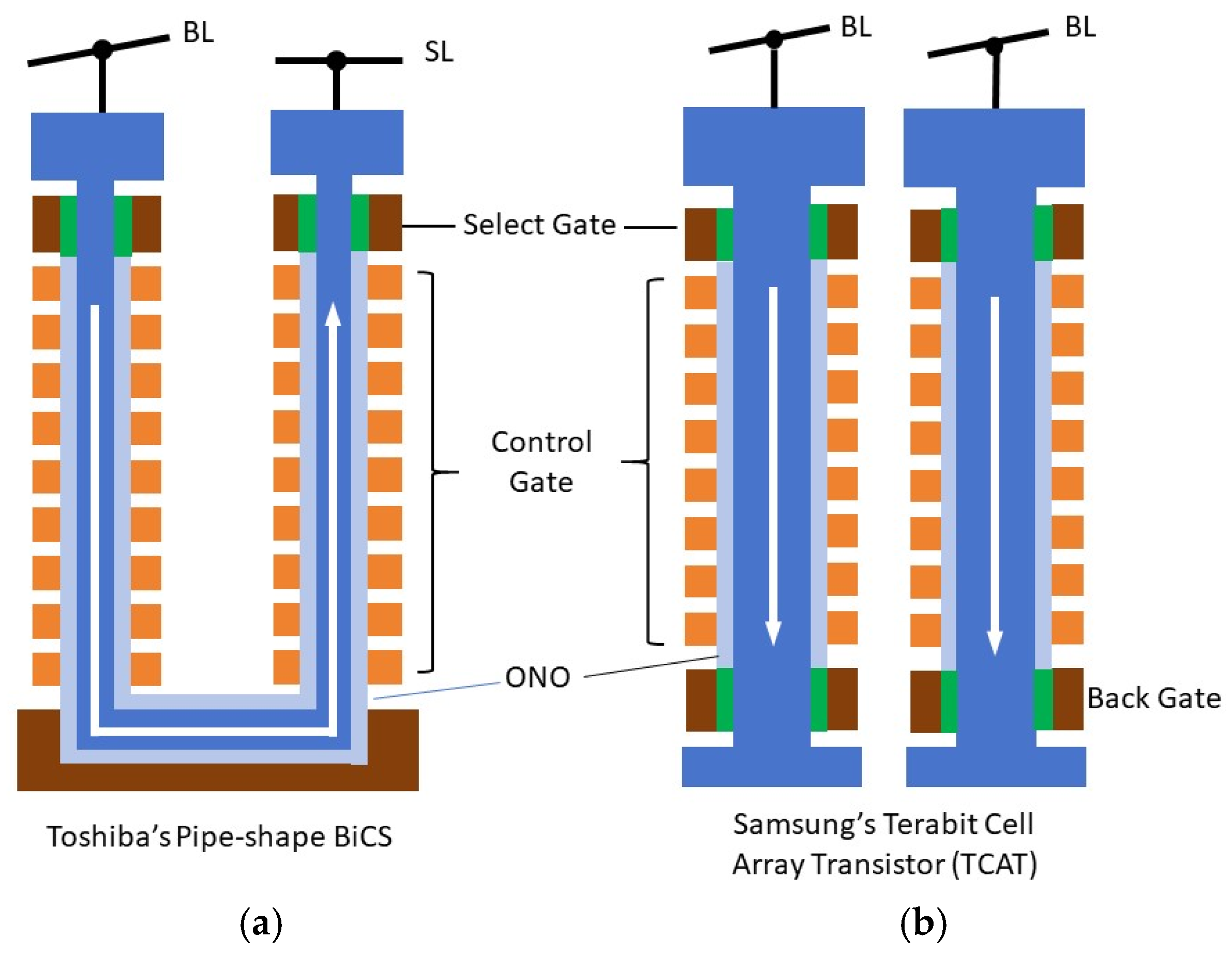

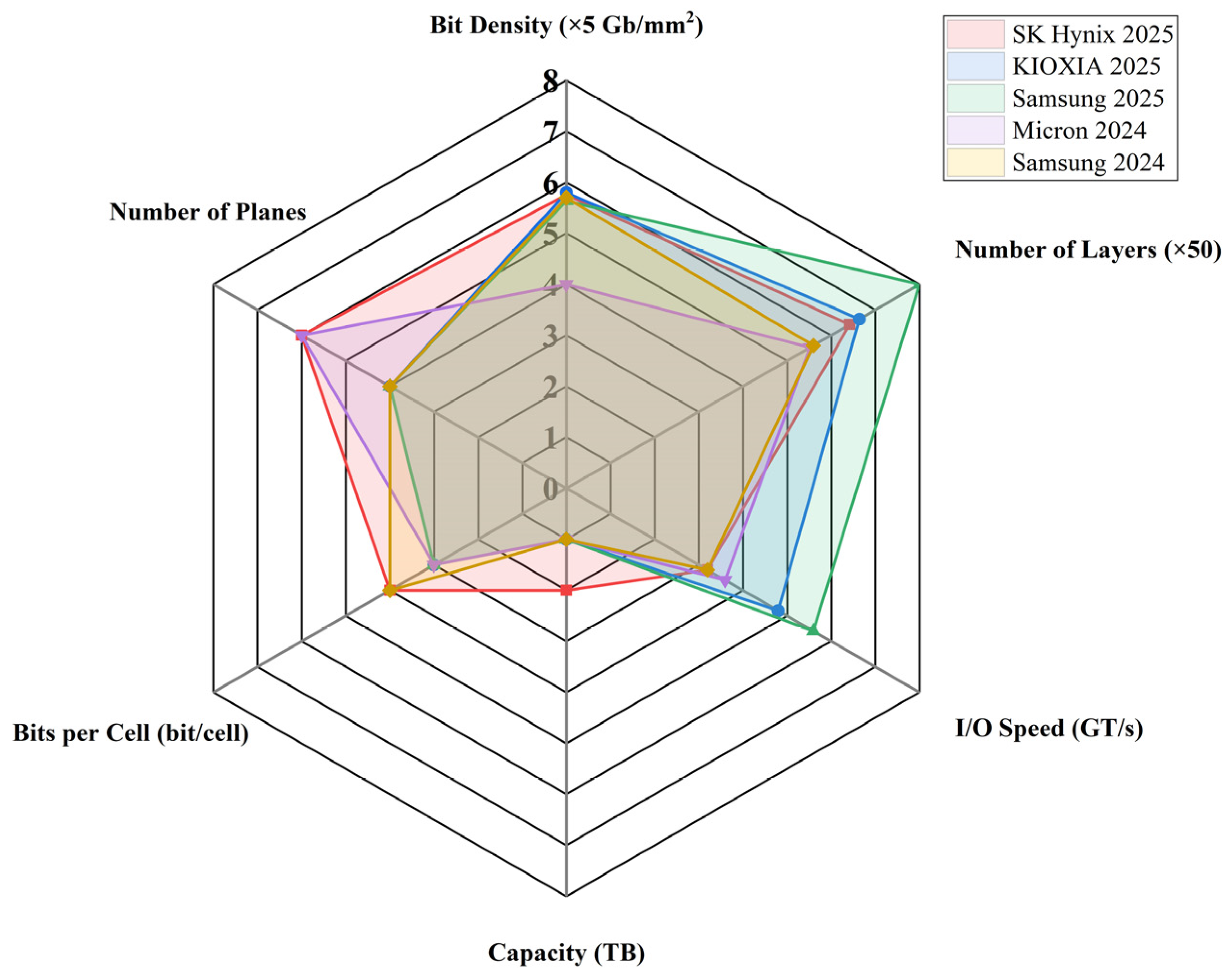

2.1. Flash NAND: Another Benchmark of CMOS Technology

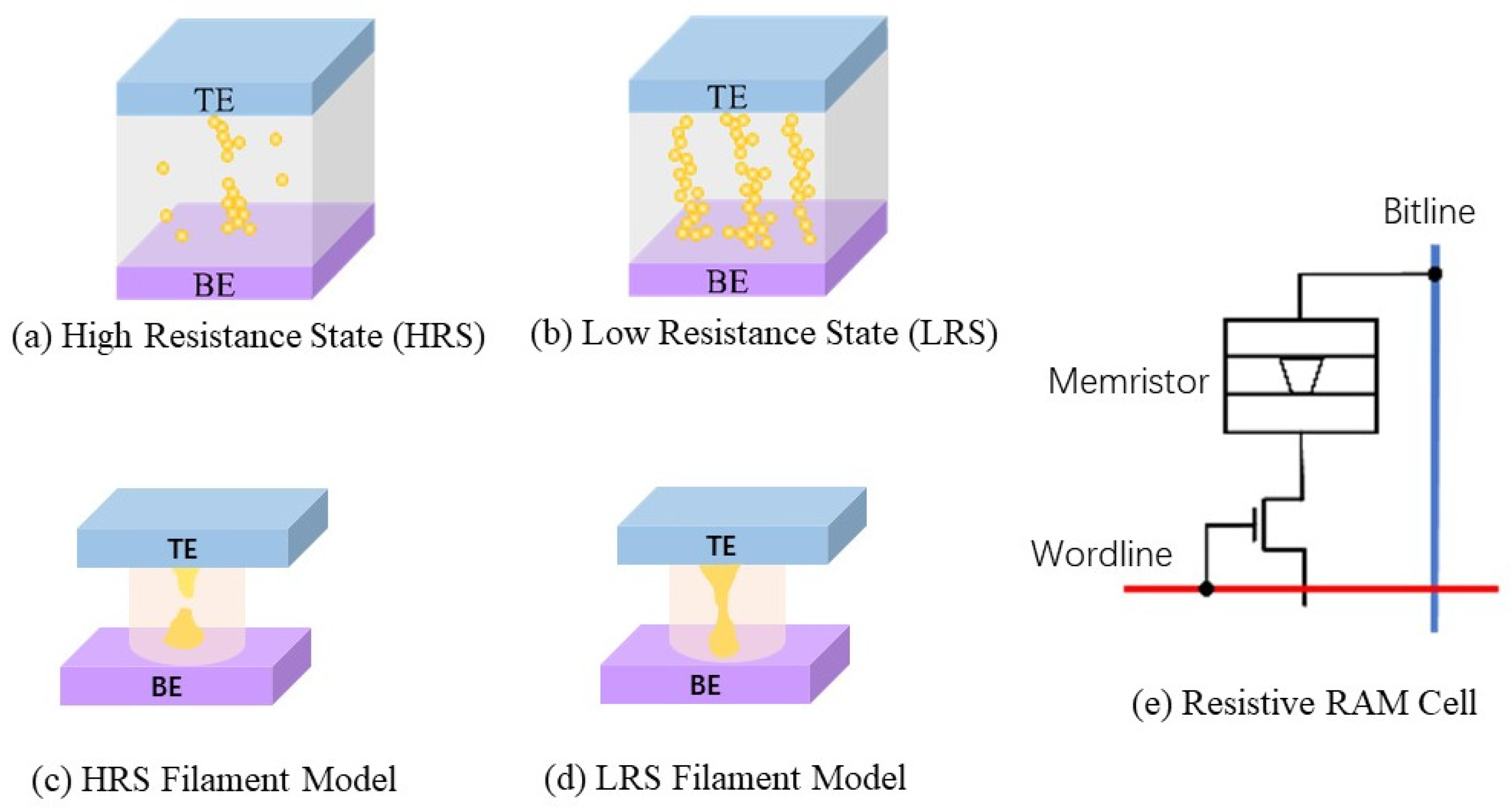

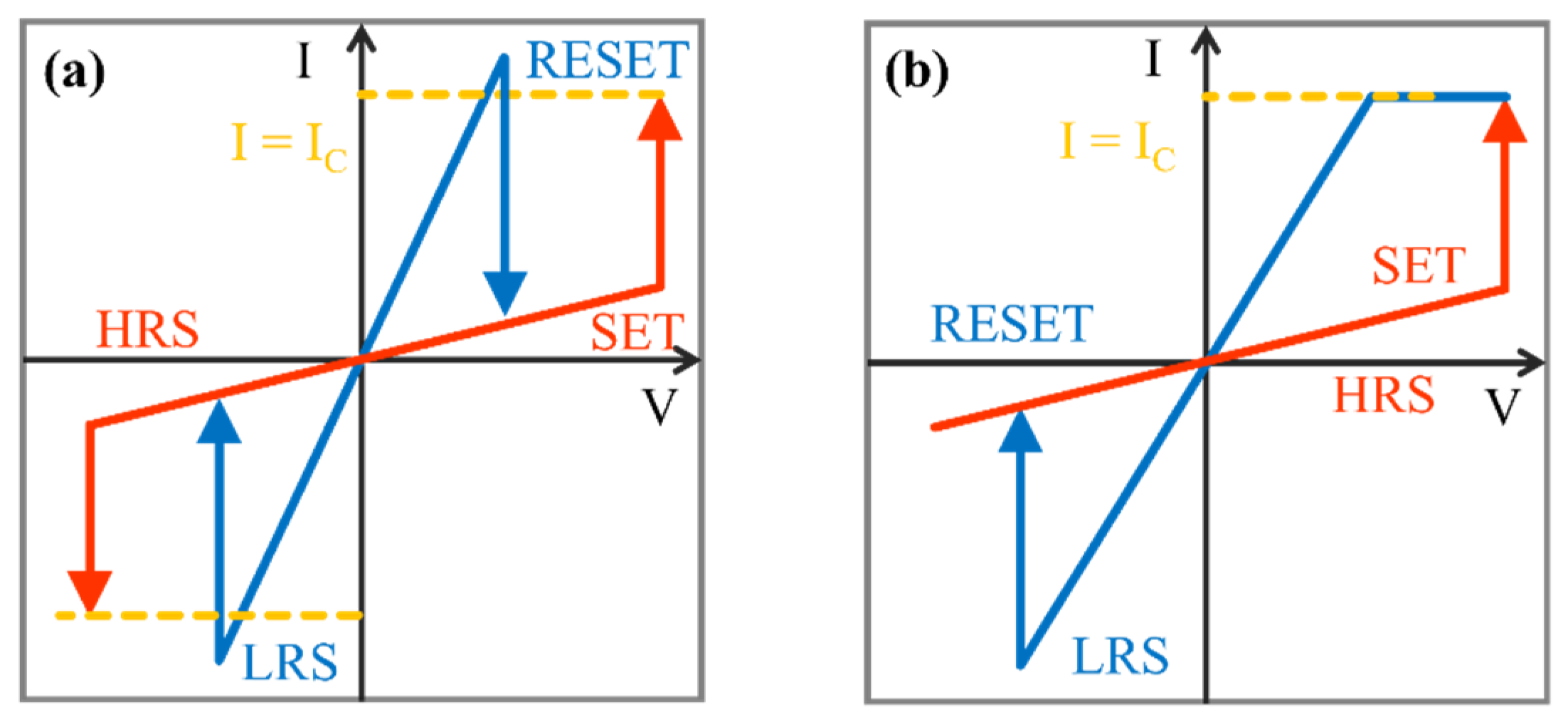

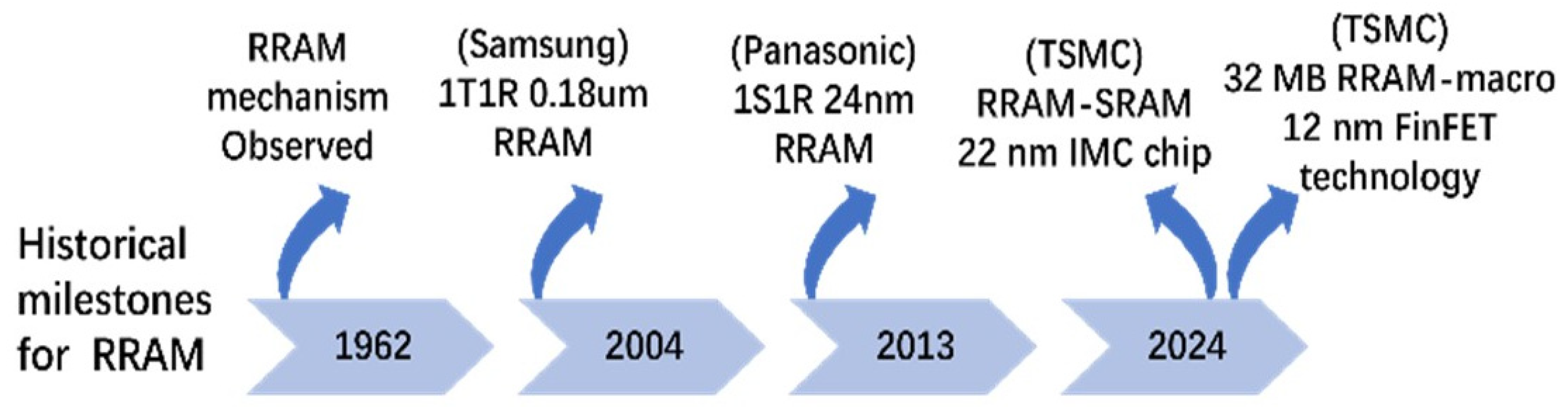

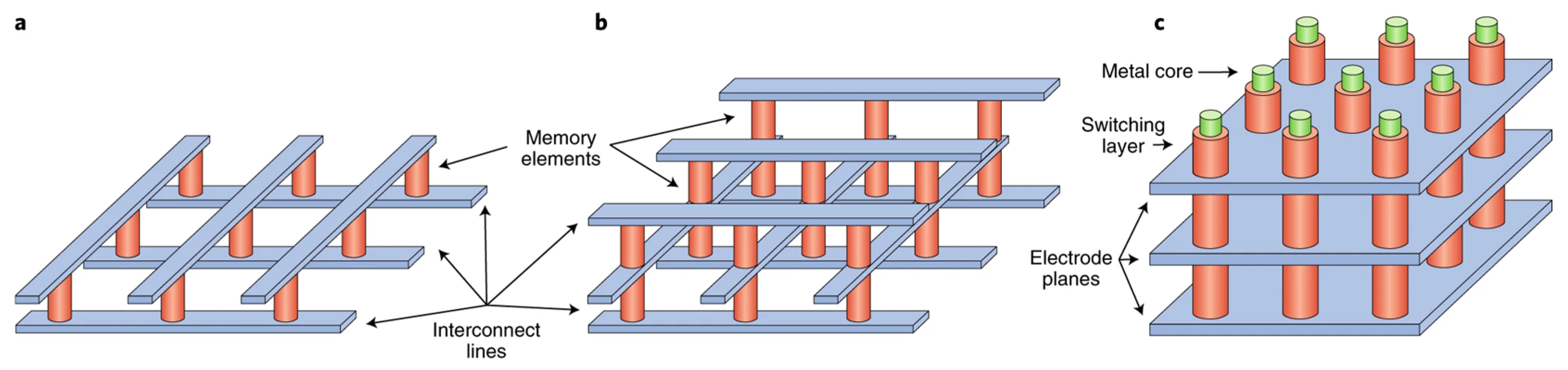

2.2. Resistive Random-Access Memory (RRAM)

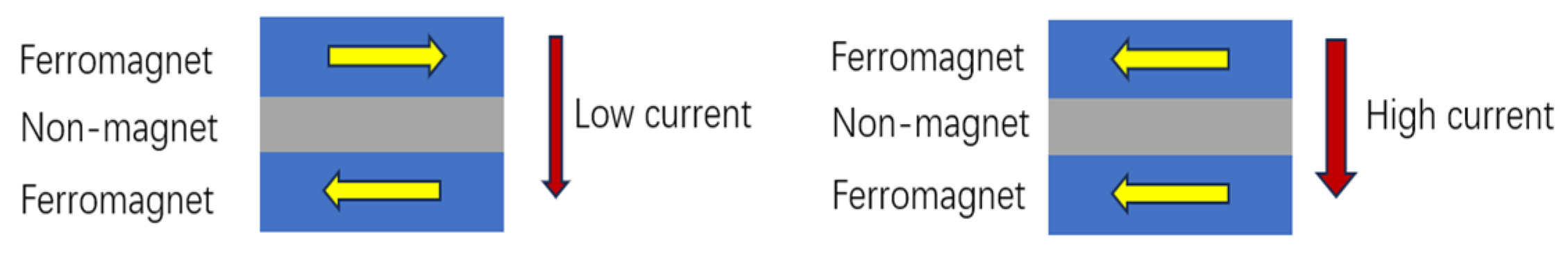

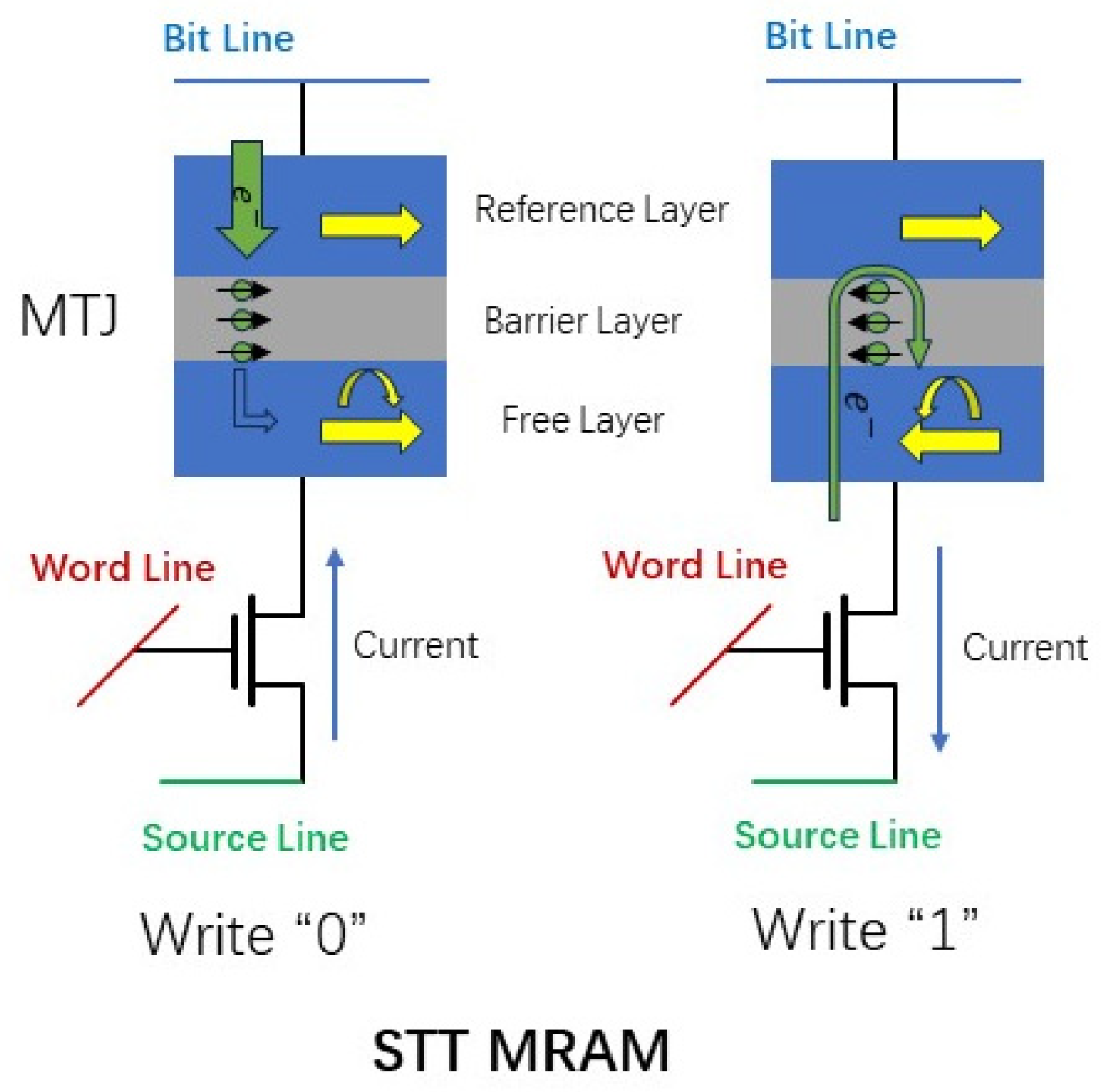

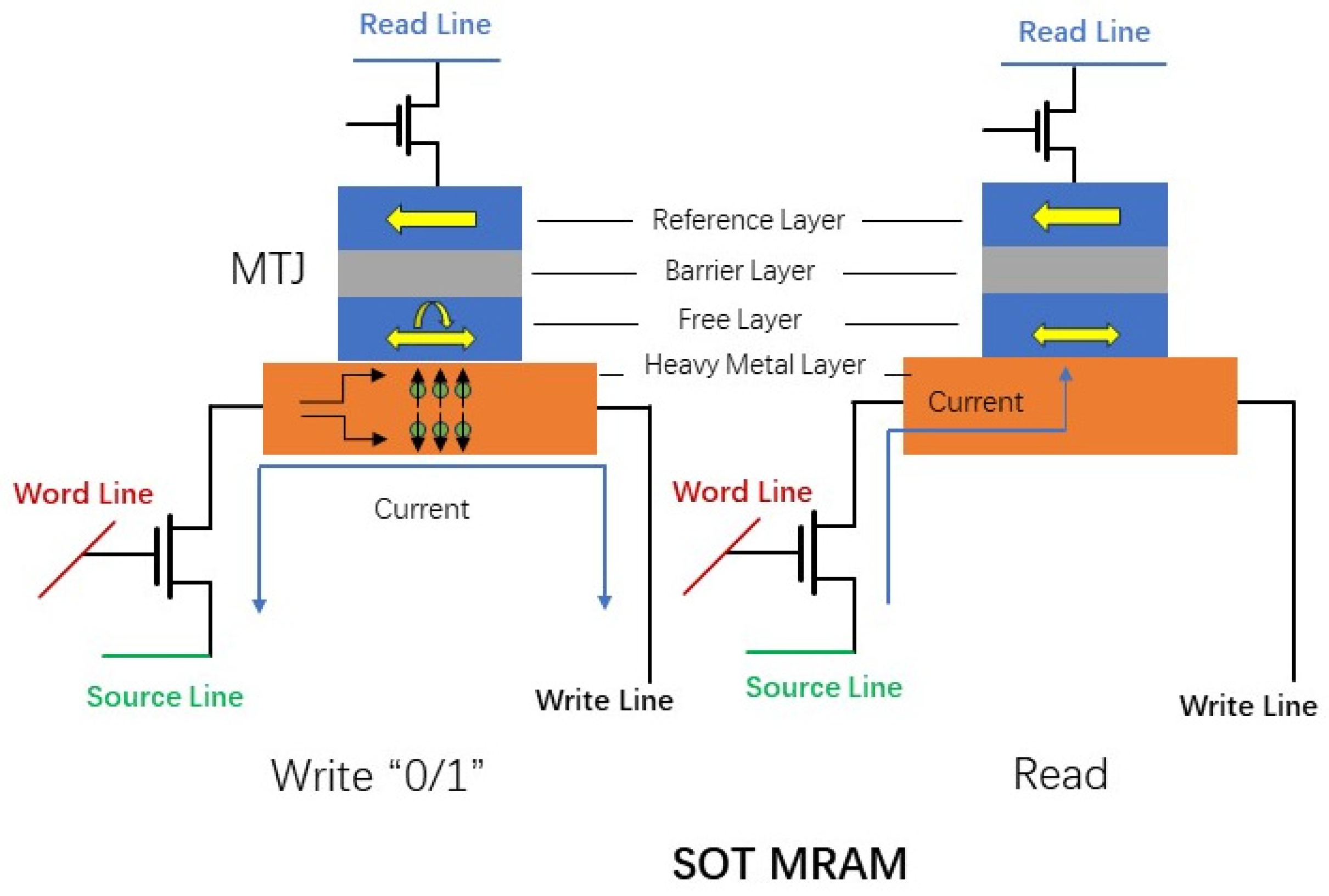

2.3. Magnetic Random-Access Memory (MRAM)

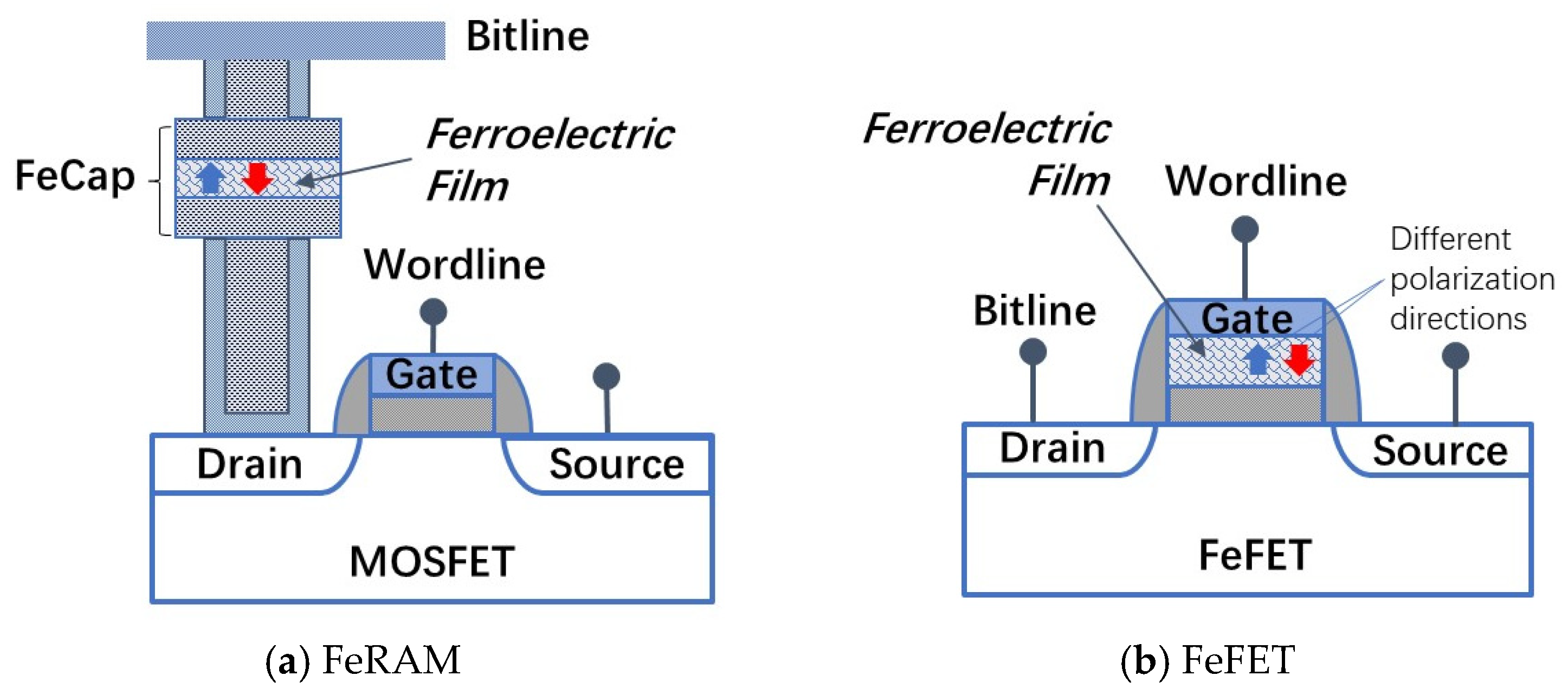

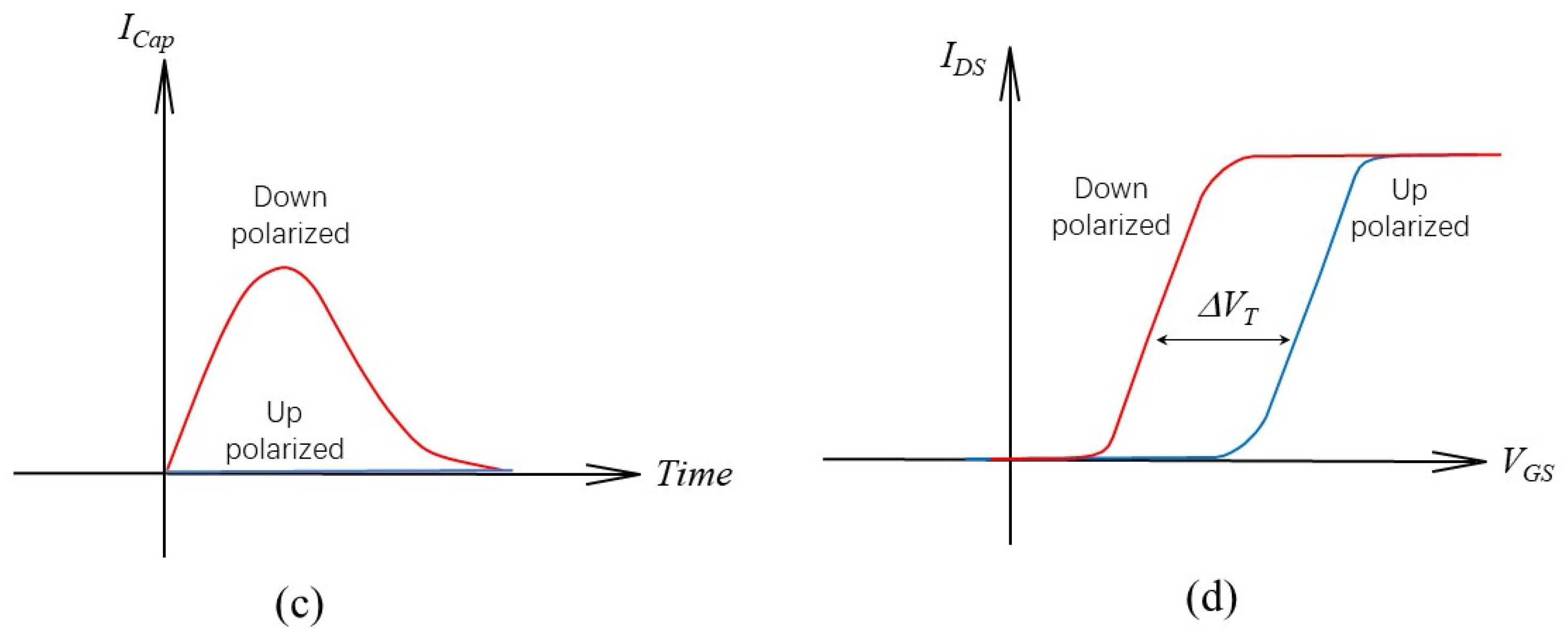

2.4. Ferroelectric RAM (FeRAM) and FeFET

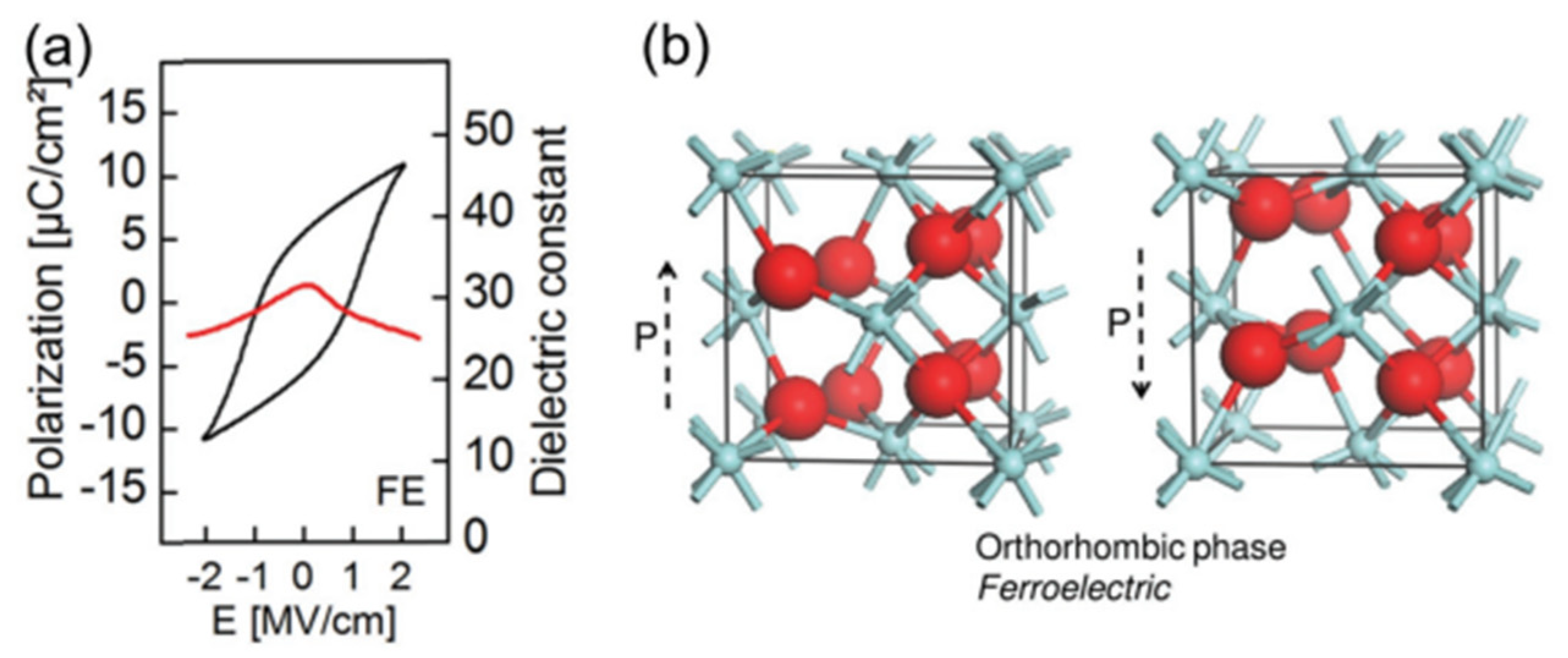

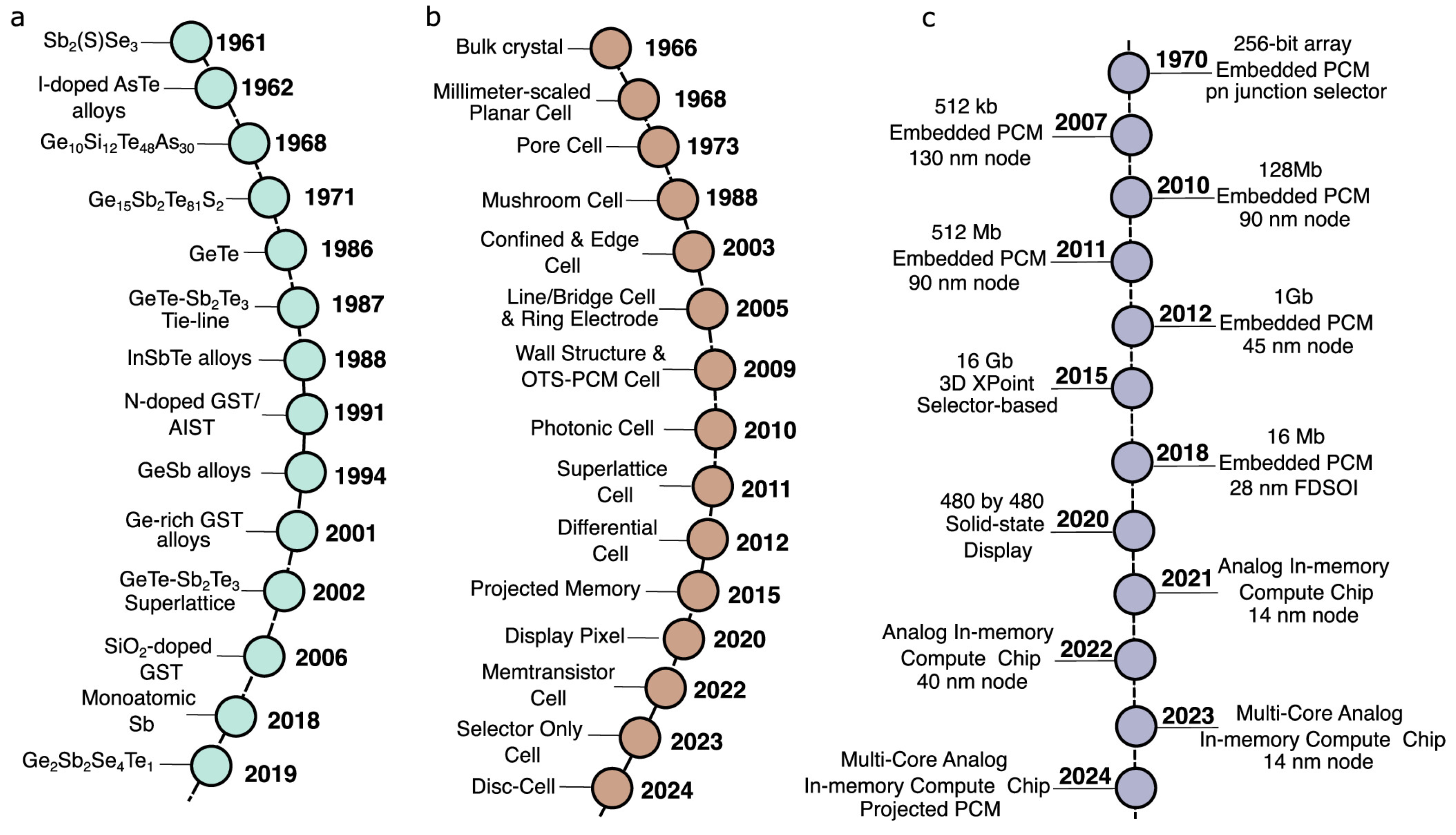

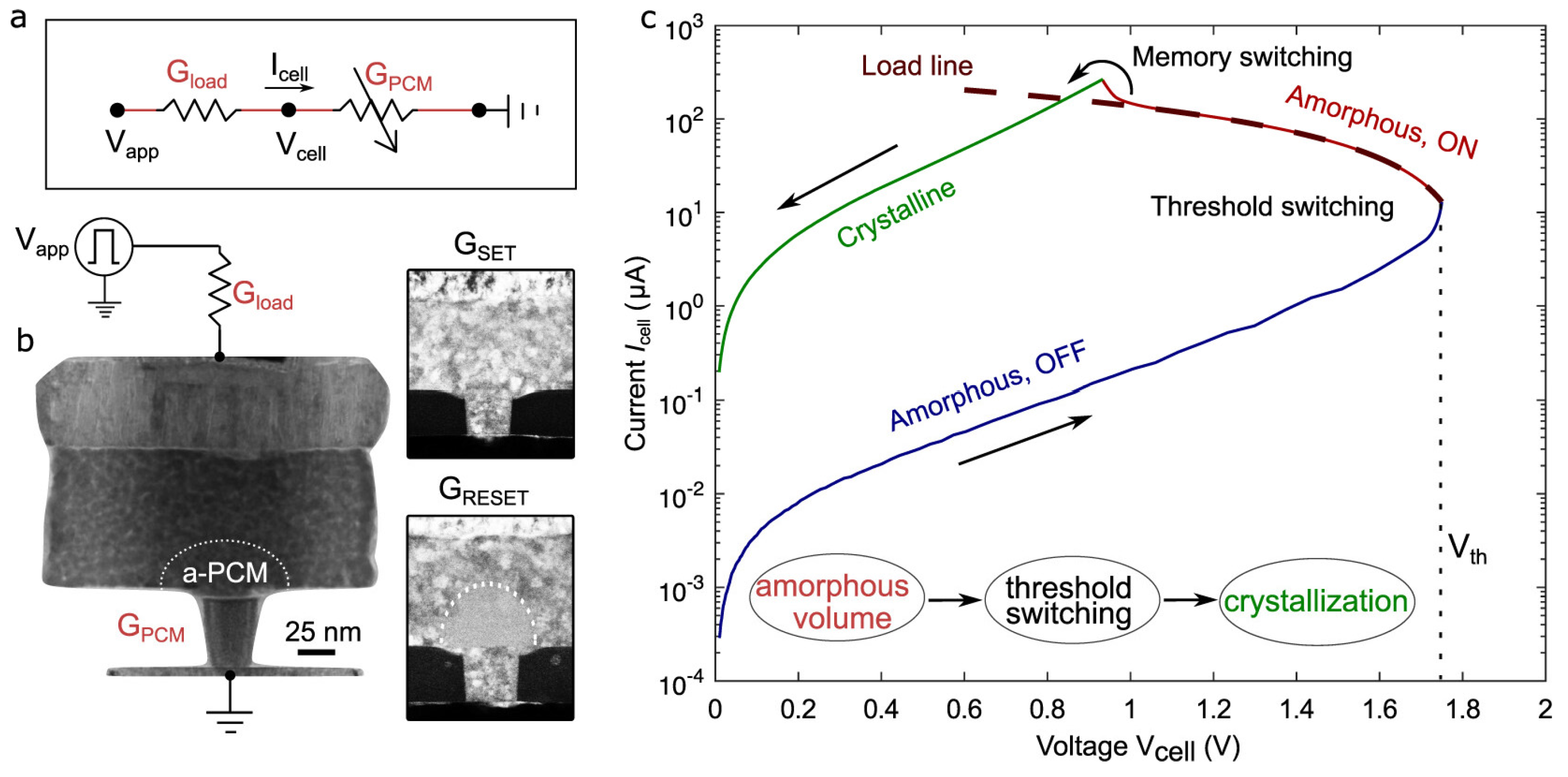

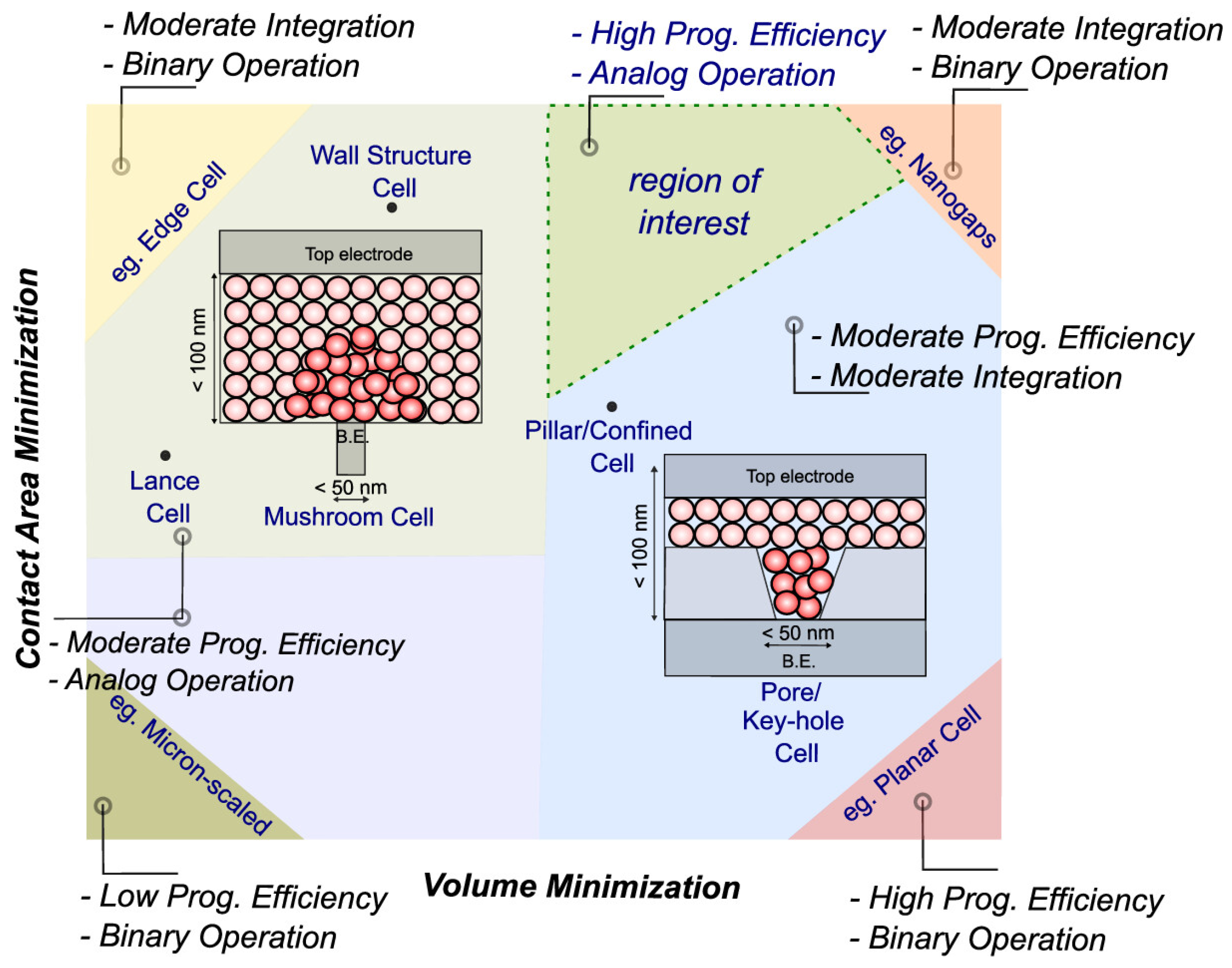

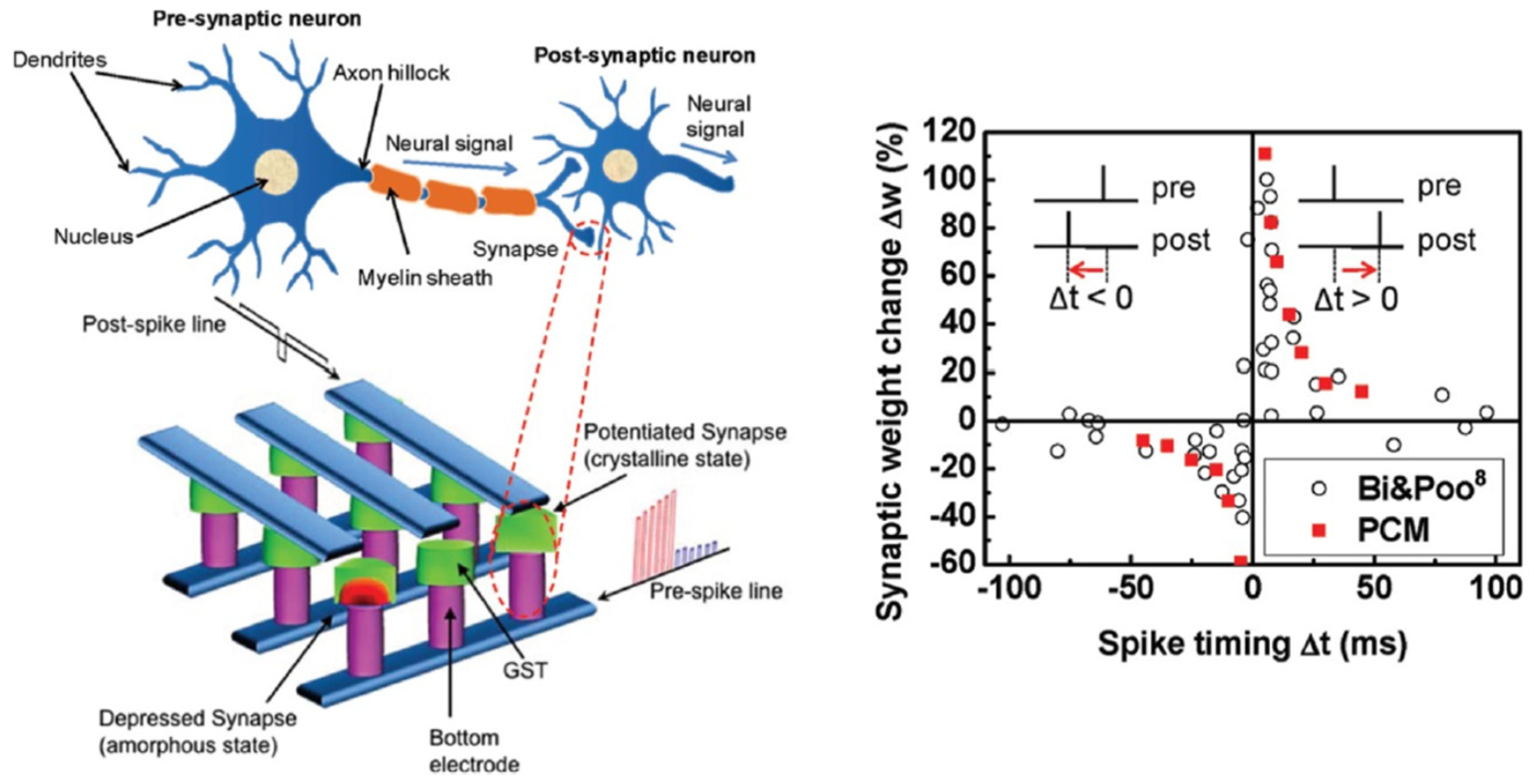

2.5. Phase-Change Memory (PCM)

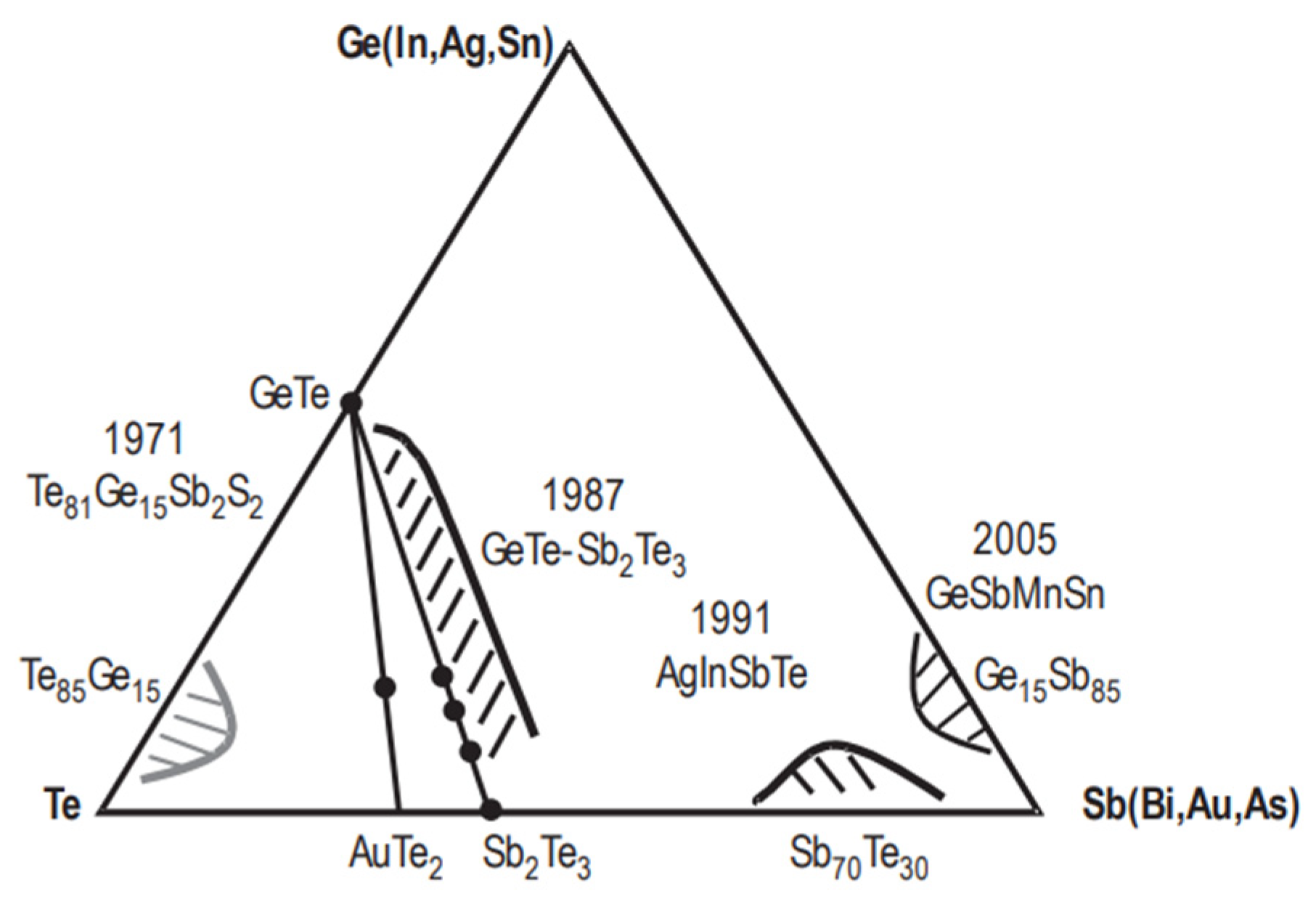

2.6. Summary

3. Memory Technology for More Moore in Computing

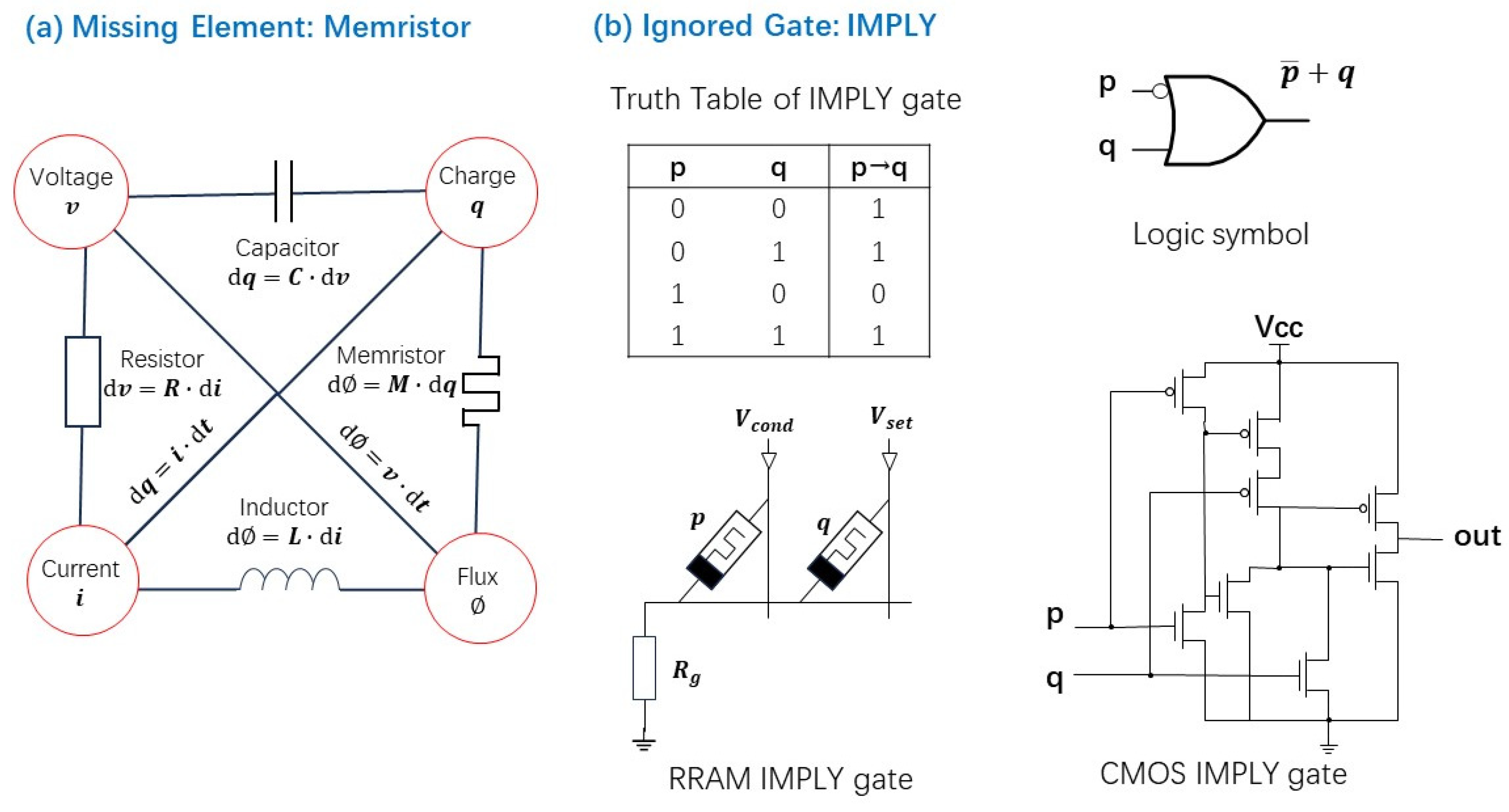

3.1. Missing Element and Ignored Logic Gate

- If p is in HRS (logic 0), no current flows, and q’s state remains unchanged. The output is thus equal to q.

- If p is in LRS (logic 1) and q is in HRS (logic 0), current flows through the circuit, switching q to LRS. The output becomes logic 1.

- If both p and q are in LRS, current flows, but q is already in LRS and therefore remains at logic 1.
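
The three cases above can be checked with a small behavioral model. The following is a minimal sketch that assumes ideal two-state devices and ignores all circuit details (voltages, thresholds, series resistance). The names `State`, `apply_gate`, and `truth_table` are hypothetical and chosen only for illustration; they do not come from the article.

```python
from enum import Enum


class State(Enum):
    """Idealized two-state memristor model (an assumption of this sketch)."""
    HRS = 0  # high-resistance state, read as logic 0
    LRS = 1  # low-resistance state, read as logic 1


def apply_gate(p: State, q: State) -> State:
    """Return the state of q after the operation, following the three listed cases."""
    if p is State.HRS:
        # Case 1: no current flows, so q keeps its state.
        return q
    if q is State.HRS:
        # Case 2: p is in LRS and q is in HRS, so current flows and q switches to LRS.
        return State.LRS
    # Case 3: both devices are in LRS, so q stays at logic 1.
    return q


def truth_table() -> list[tuple[int, int, int]]:
    """Enumerate (p, q, output) for all four input combinations."""
    return [(p.value, q.value, apply_gate(p, q).value) for p in State for q in State]


if __name__ == "__main__":
    print("p q | out")
    for p, q, out in truth_table():
        print(f"{p} {q} |  {out}")
```

Under these idealized assumptions, the output is logic 0 only when both p and q start in HRS. Taken literally, the three cases therefore give the same truth table as a logical OR of p and q, with the result stored in q itself.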

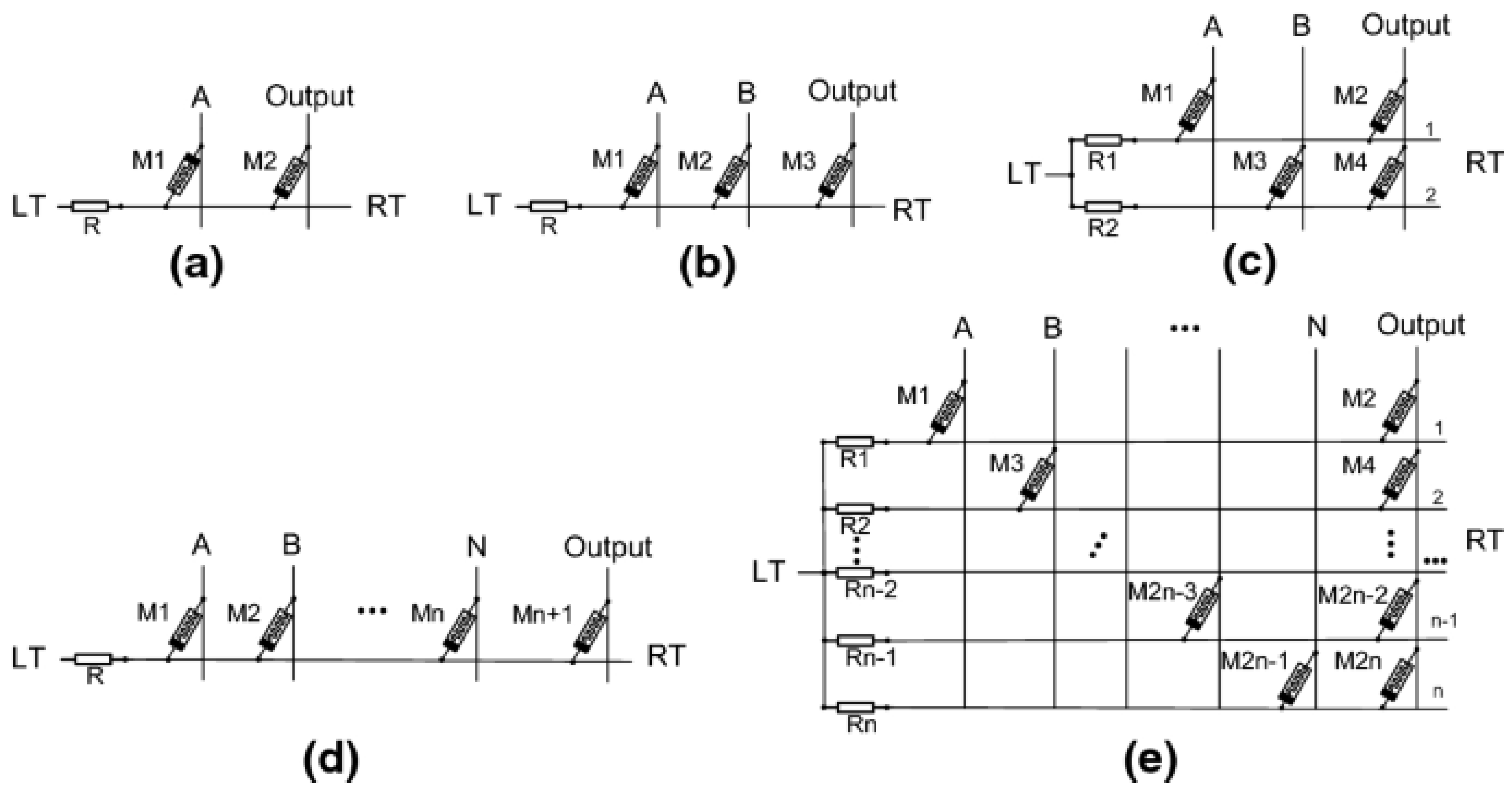

3.2. Conventional Logic Block Built with Memristor

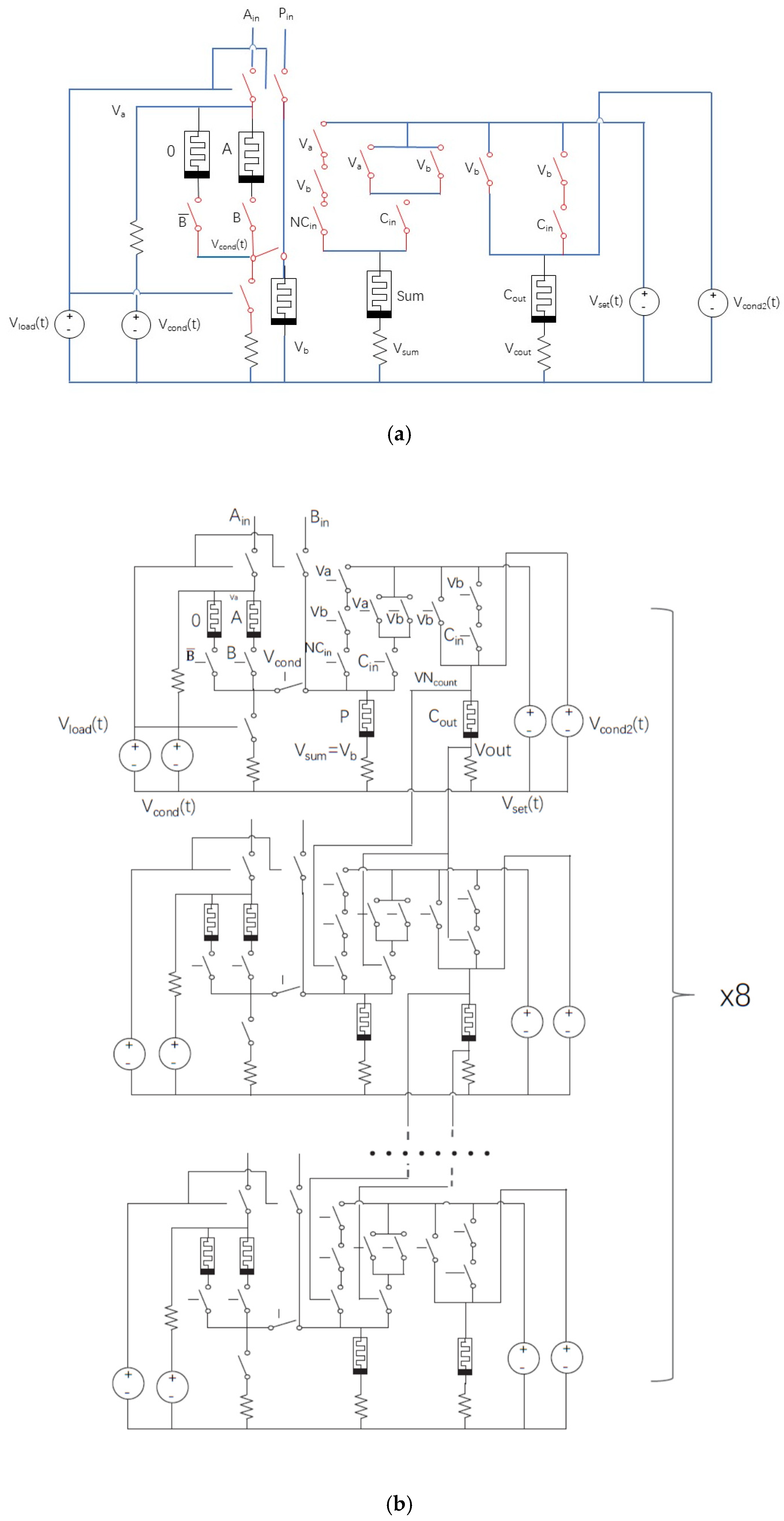

3.2.1. Full-Adder Design Using Memristors

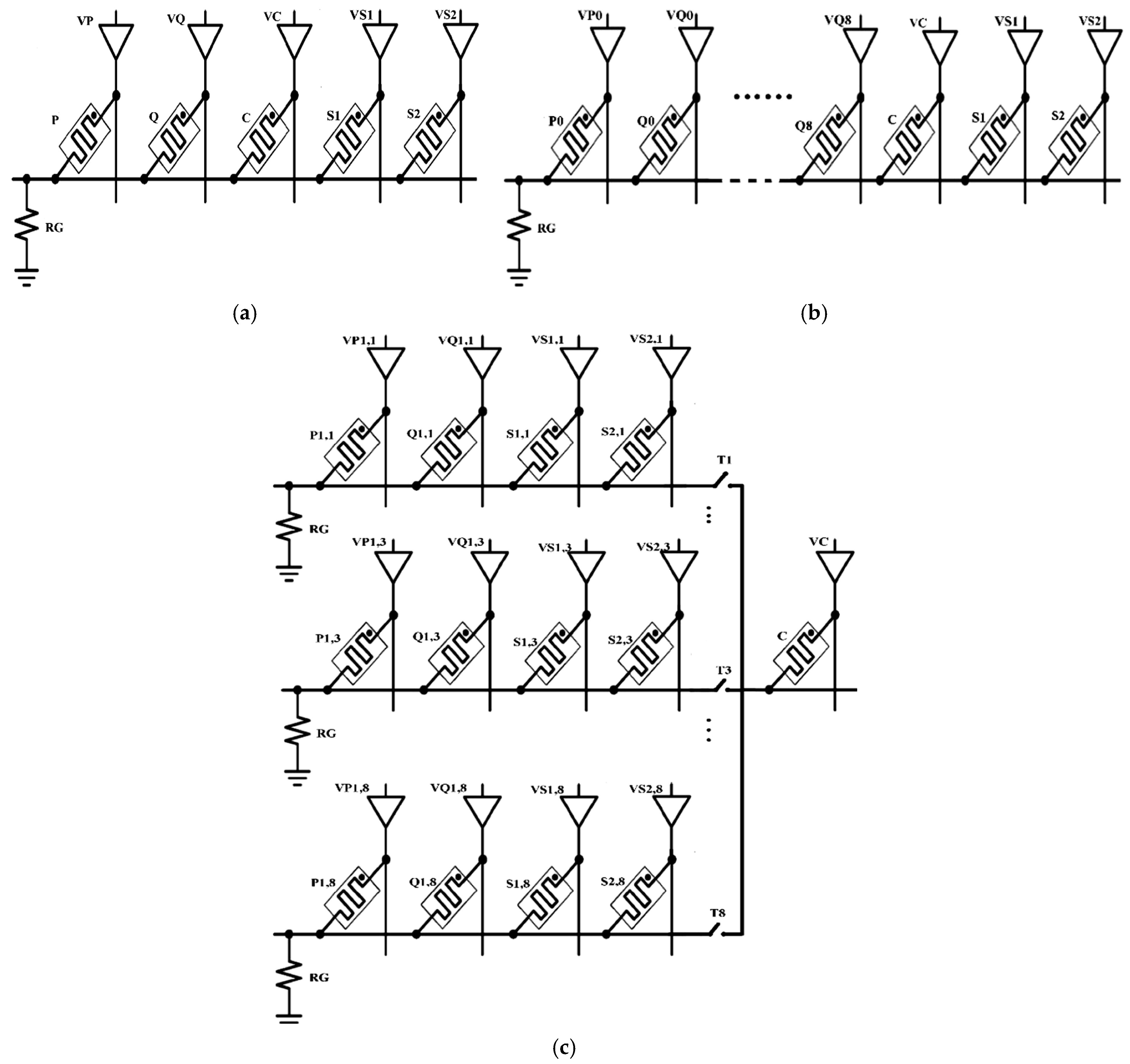

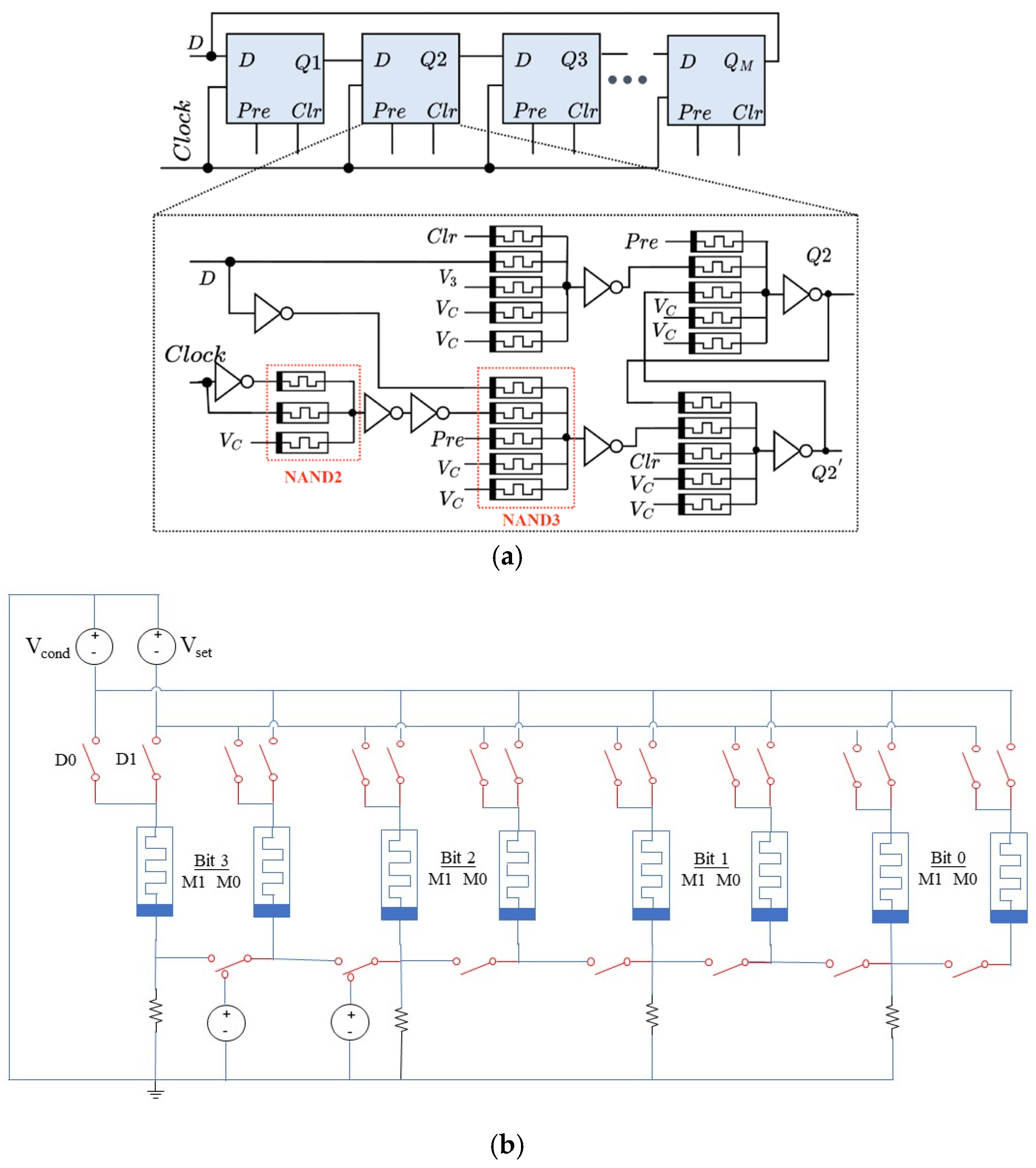

3.2.2. Shift Register Design Using Memristors

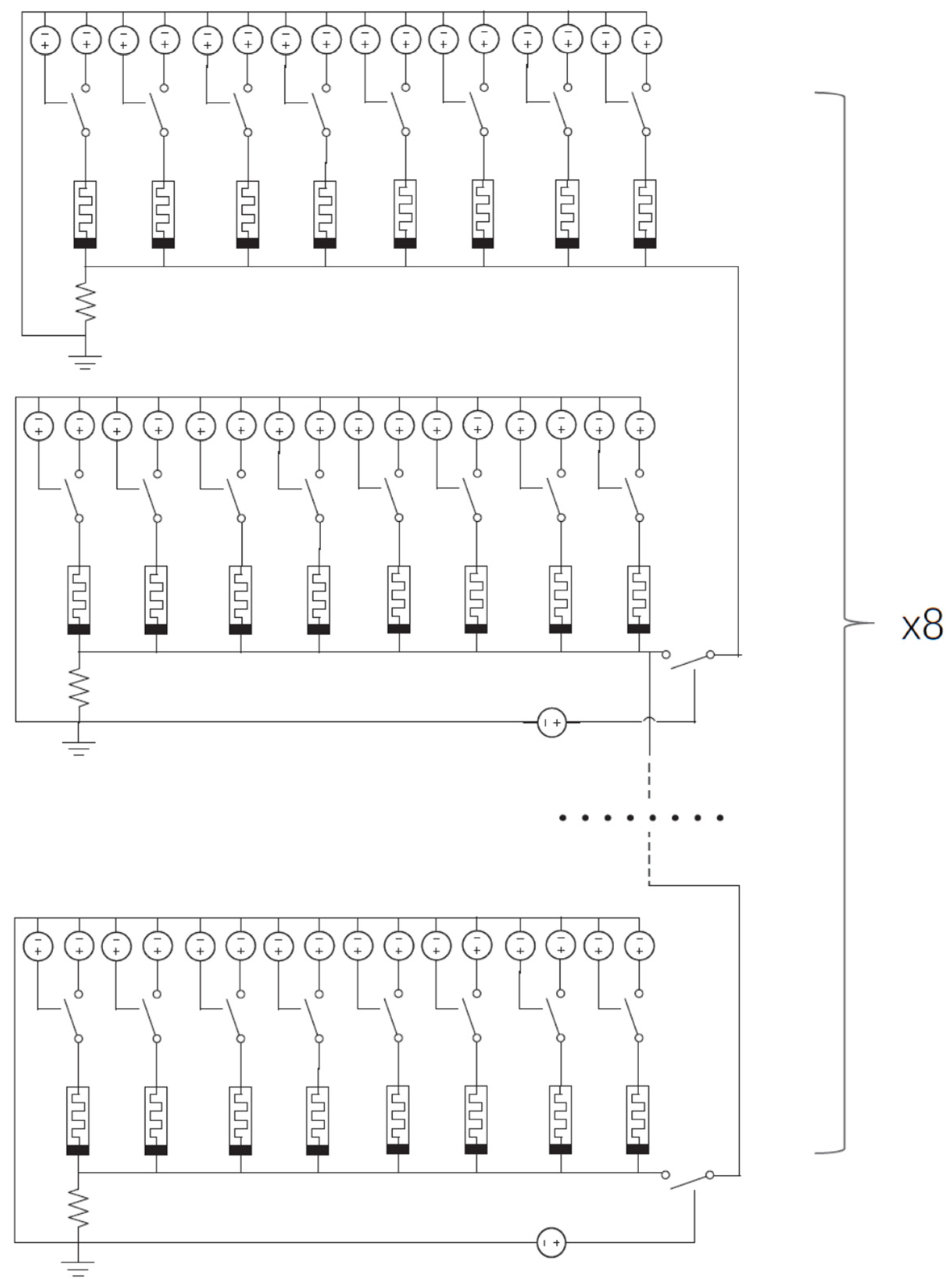

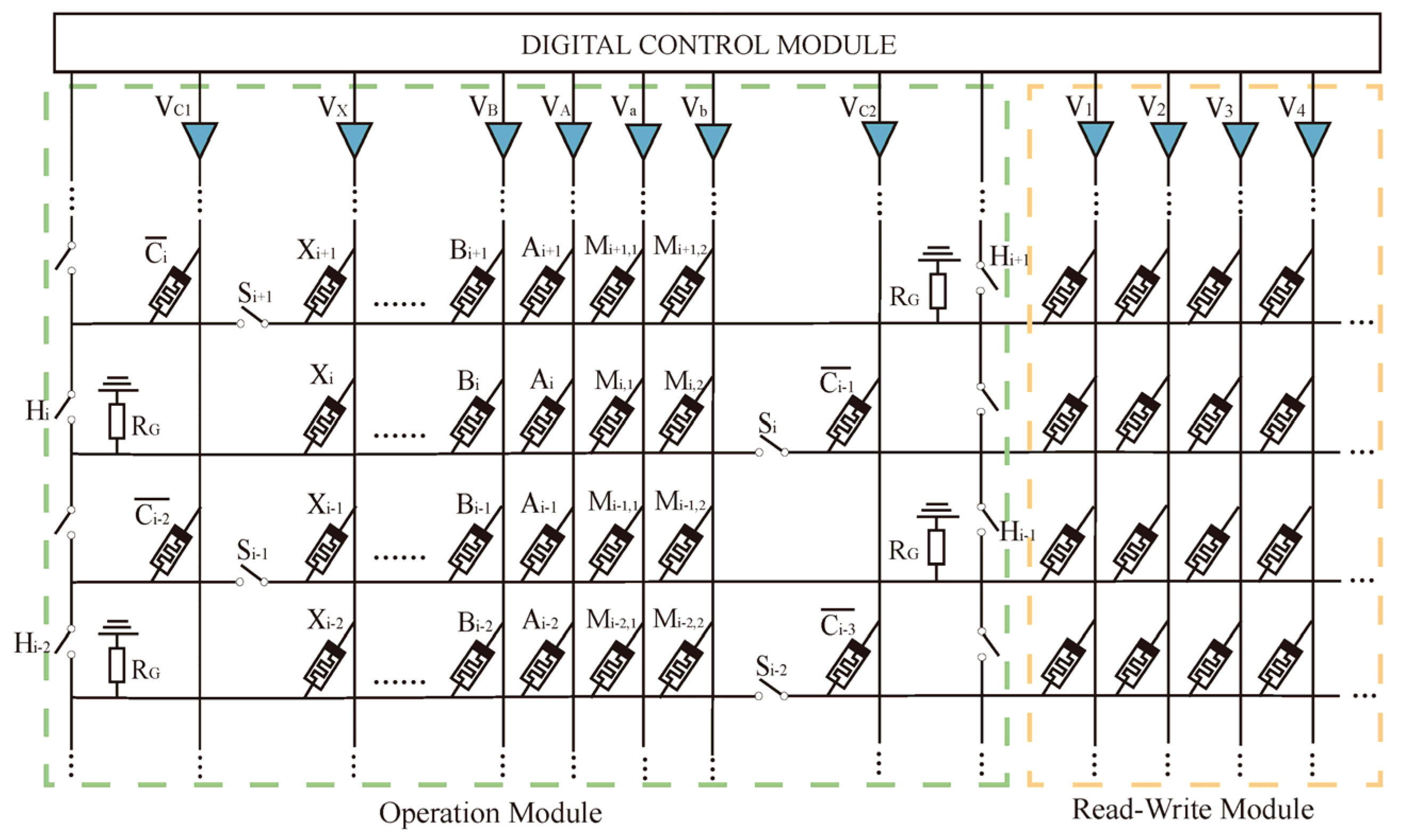

3.2.3. Multiplier Designs Using Memristors

3.3. Impact of Non-Volatile Memory Technology in Computing

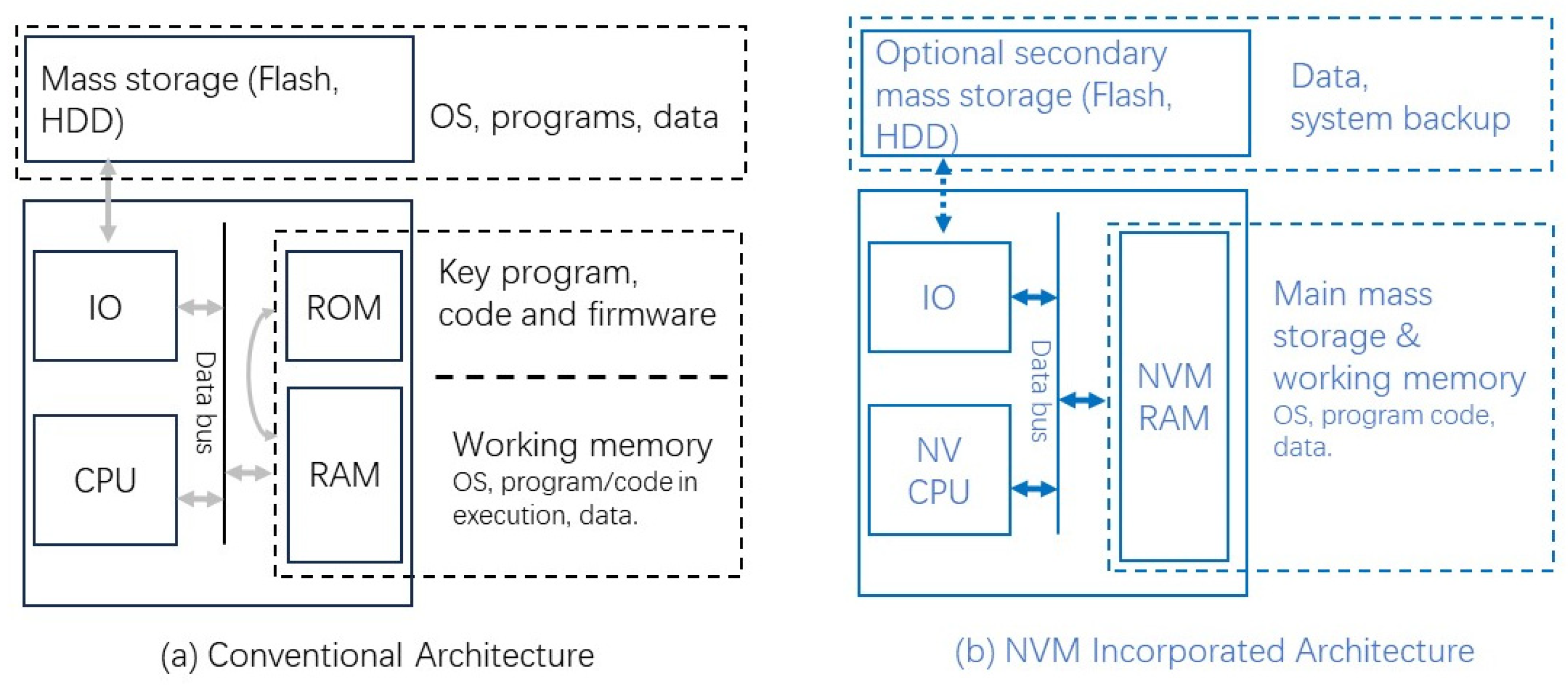

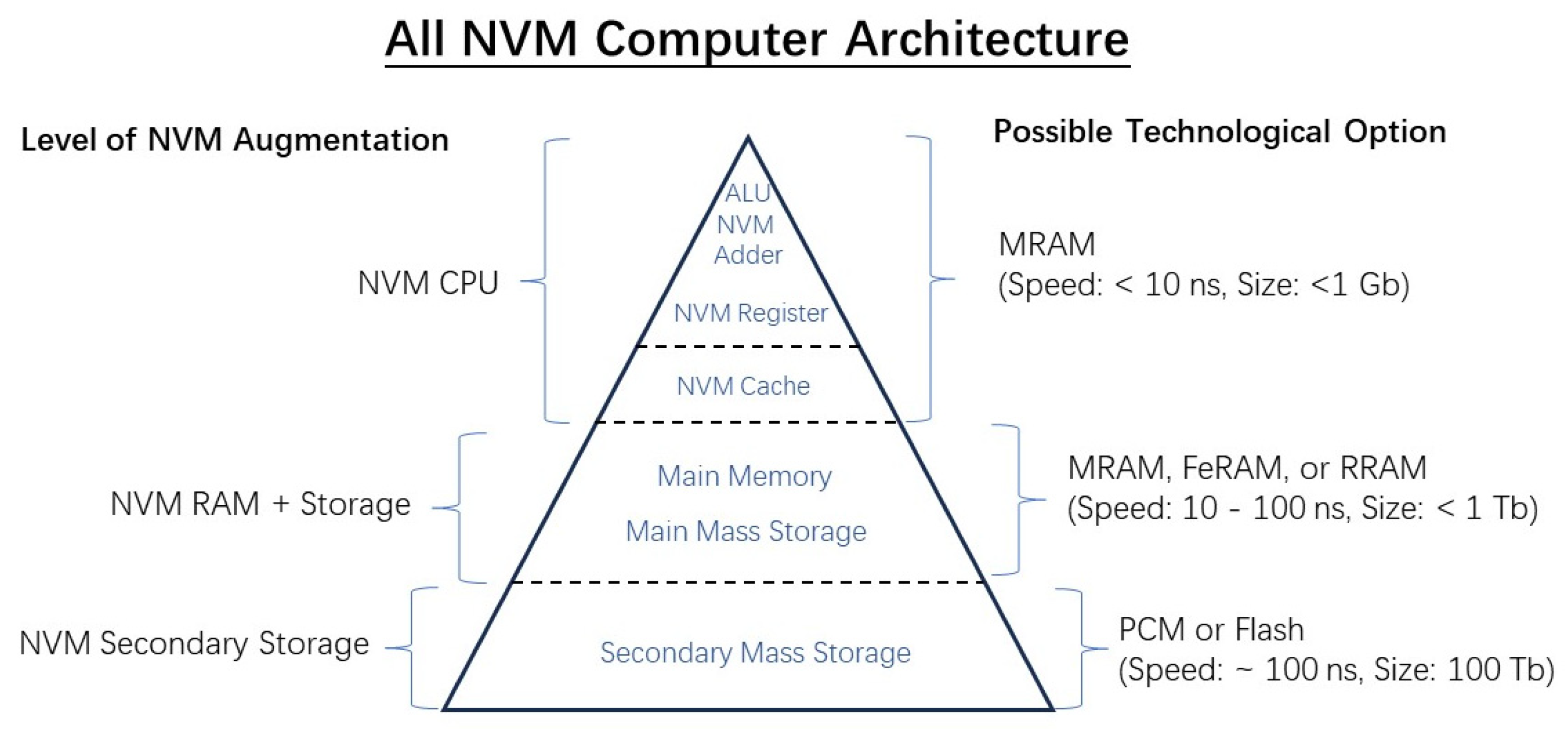

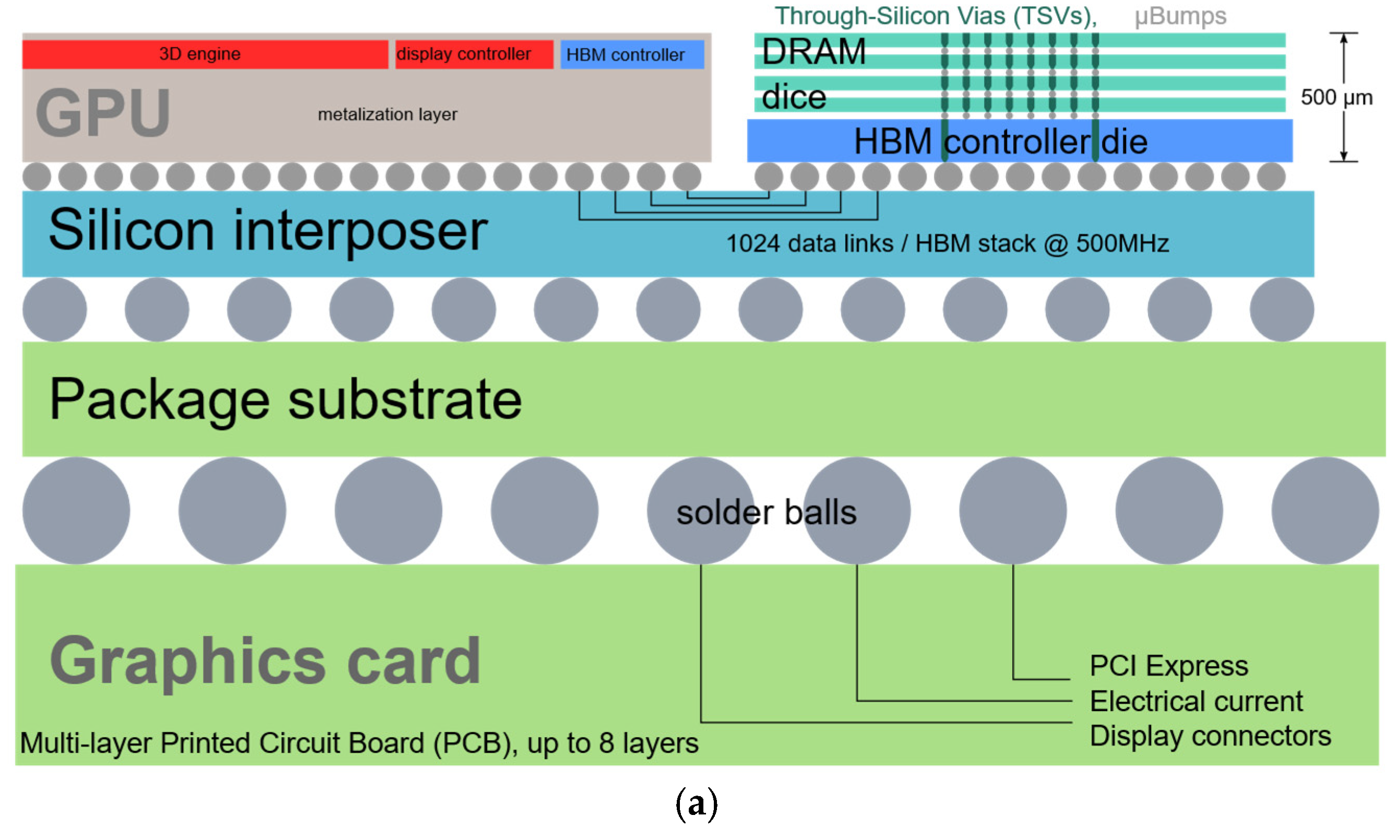

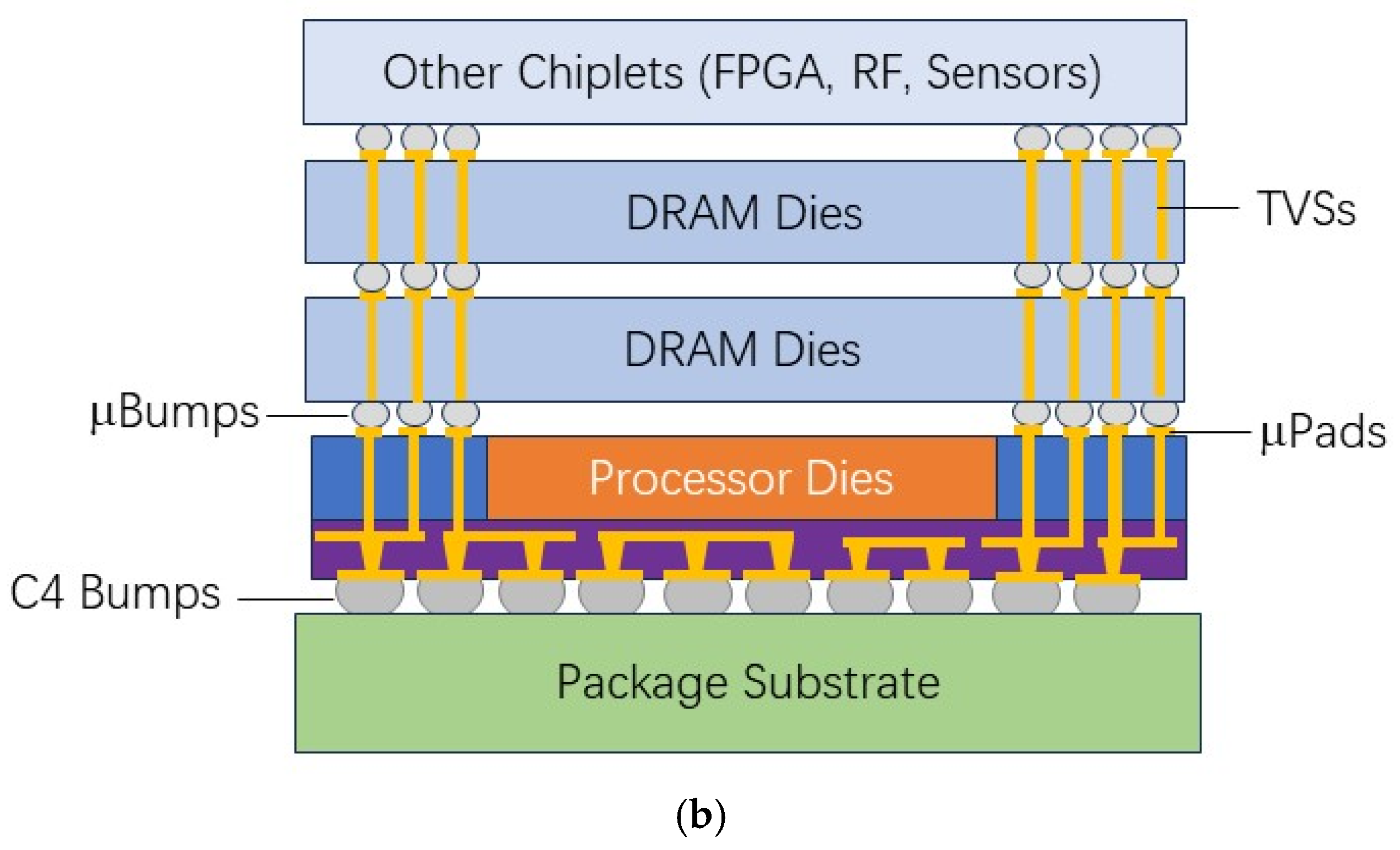

3.3.1. Memory Replacement and NVM Augmentation in the Von Neumann Computer

3.3.2. Near Memory Computing

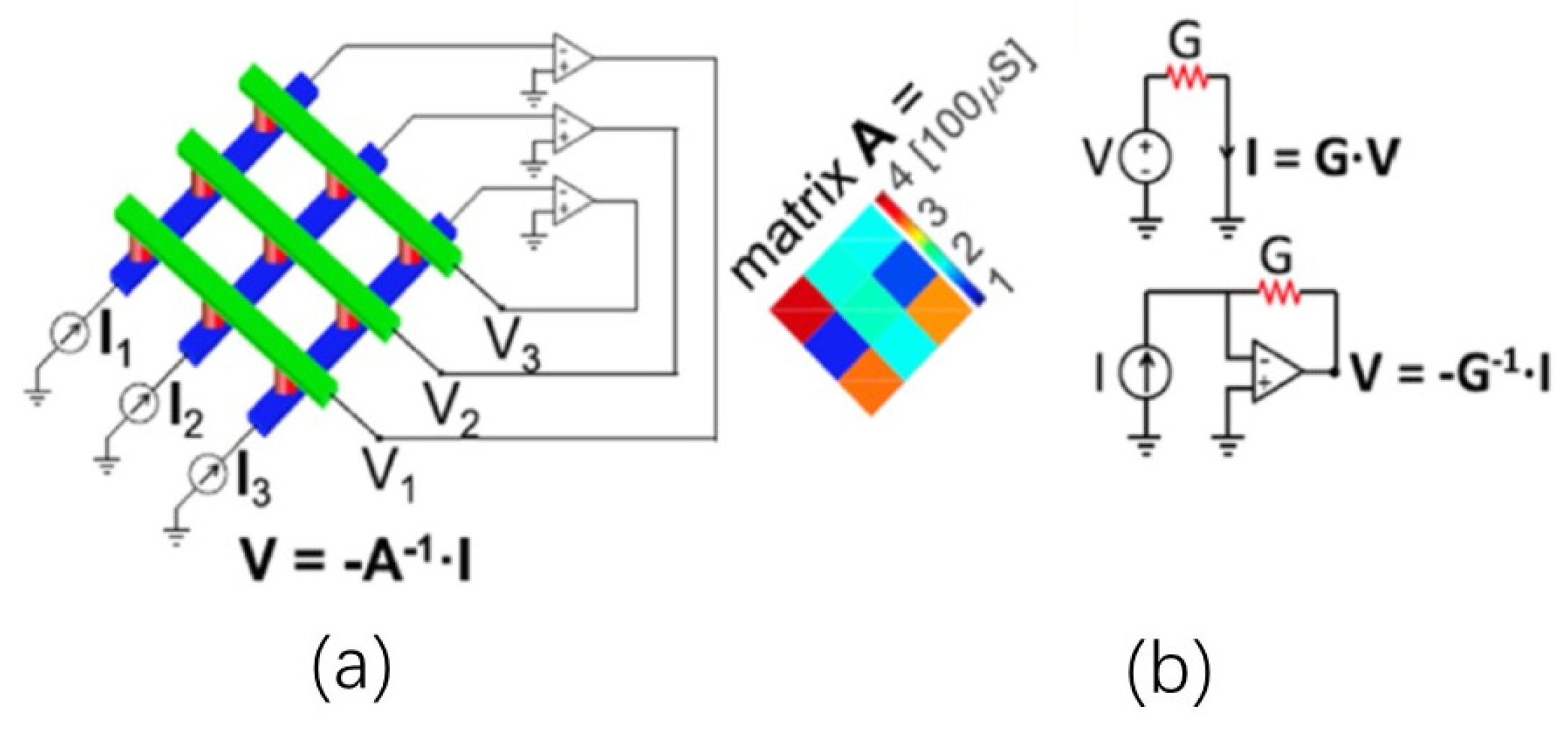

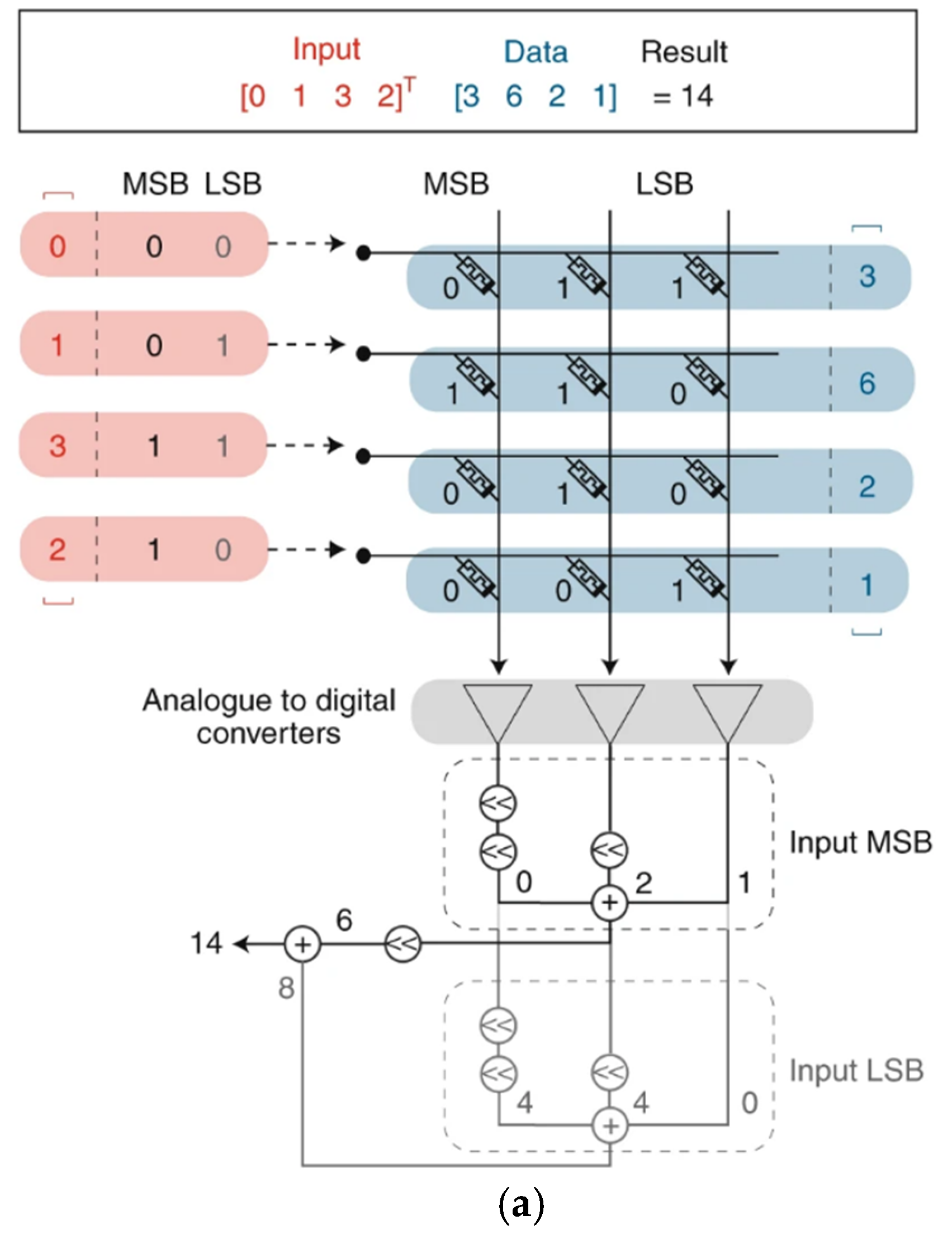

3.3.3. In-Memory Computing (IMC)

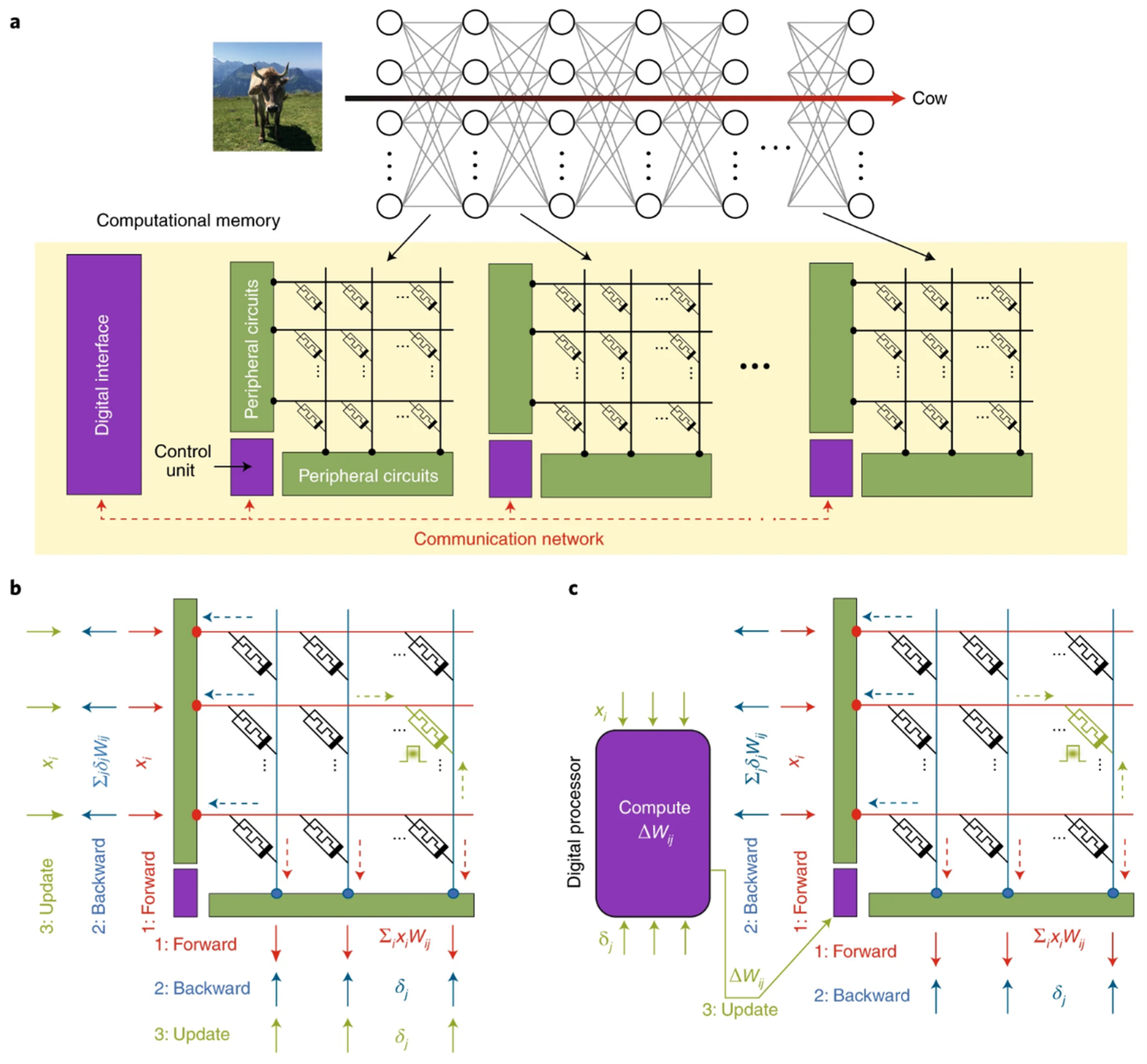

3.3.4. Neuromorphic Computing

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Moore, G.E. Cramming More Components onto Integrated Circuits. Electronics 1965, 38, 114–117. [Google Scholar] [CrossRef]

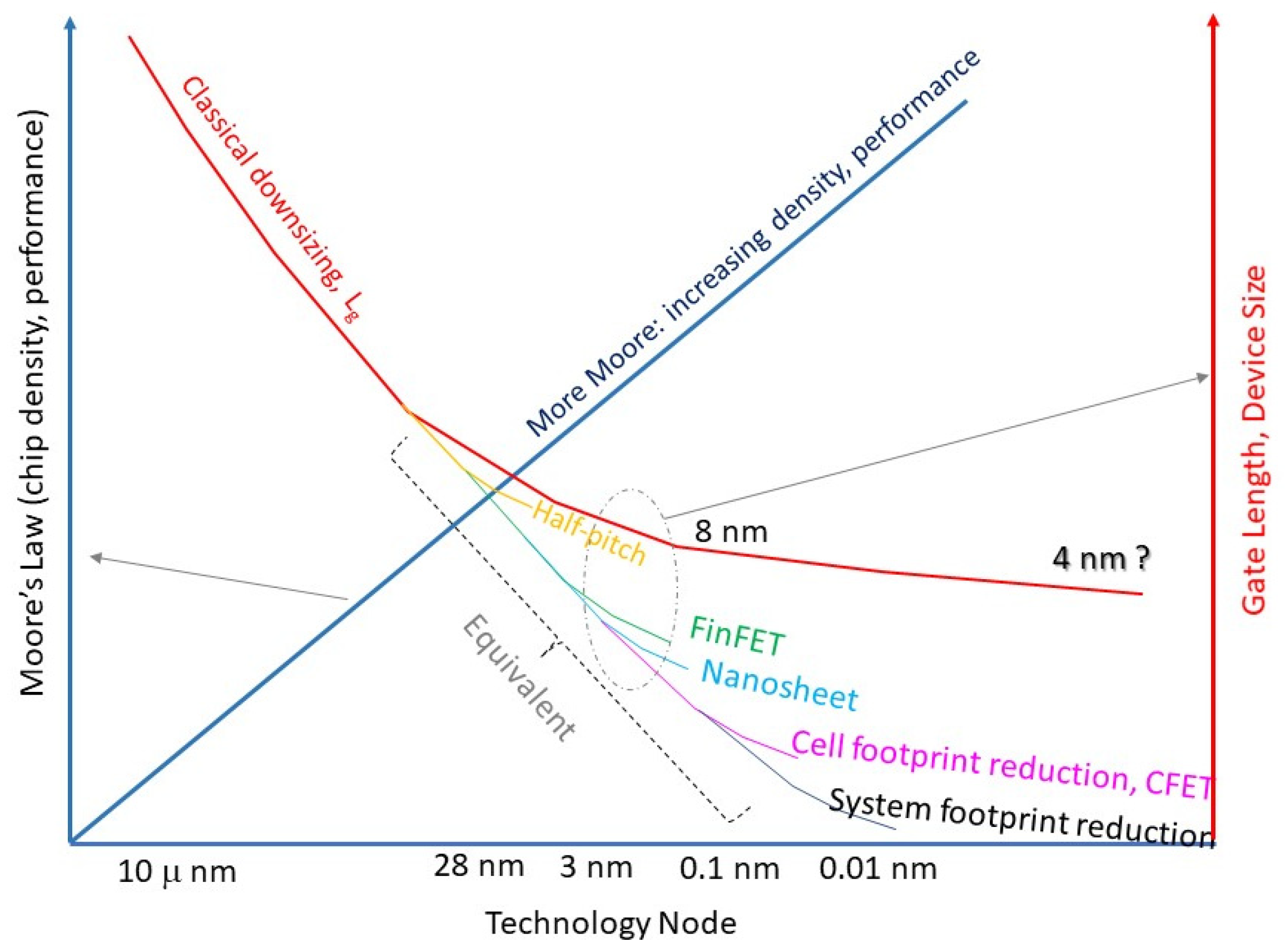

- Wong, H.; Zhang, J.; Liu, J. Quest for more Moore at the end of Device Downsizing. J. Circuits Syst. Comput. 2025, 34, 2441003. [Google Scholar] [CrossRef]

- Wong, H.; Iwai, H. On the Scaling of Subnanometer EOT Gate Dielectrics for Ultimate nano CMOS Technology. Microelectron. Eng. 2015, 138, 57–76. [Google Scholar] [CrossRef]

- Wong, H. On the CMOS Device Downsizing, More Moore, More than Moore, and More-than-Moore for More Moore. In Proceedings of the 2021 IEEE 32nd International Conference on Microelectronics (MIEL), Nis, Serbia, 12–14 September 2021; pp. 9–15. [Google Scholar]

- Wong, H.; Zhang, J.; Liu, J. Contacts at the Nanoscale and for Nanomaterials. Nanomaterials 2024, 14, 386. [Google Scholar] [CrossRef] [PubMed]

- Ye, P.D.; Ernst, T.; Khare, M.V. The Nanosheet Transistor Is the Next and Maybe Last Step in Moores-Law. IEEE Spectr. 2019, 30. Available online: https://spectrum.ieee.org/the-nanosheet-transistor-is-the-next-and-maybe-last-step-in-moores-law (accessed on 22 January 2024).

- Wong, H.; Kakushima, K. On the Vertically Stacked Gate-All-Around Nanosheet and Nanowire Transistor Scaling beyond the 5 nm Technology Node. Nanomaterials 2022, 12, 1739. [Google Scholar] [CrossRef]

- Dennard, R.H.; Gaensslen, F.; Yu, H.-N.; Rideout, L.; Bassous, E.; LeBlanc, A. Design of ion-implanted MOSFET’s with very small physical dimensions. IEEE J. Solid-State Circuits 1974, 9, 256–268. [Google Scholar] [CrossRef]

- Arden, W.; Brillouët, M.; Cogez, P.; Graef, M.; Huizing, B.; Mahnkopf, R. More-than-Moore White Paper; International Technology Roadmap for Semiconductors (ITRS). 2010. Available online: https://www.seas.upenn.edu/~ese5700/spring2015/IRC-ITRS-MtM-v2%203.pdf (accessed on 26 June 2025).

- Cheng, Y.; Deen, M.J.; Chen, C.H. MOSFET Modeling for RF IC Design. IEEE Trans. Electron Devices 2005, 52, 1286–1303. [Google Scholar] [CrossRef]

- Liou, J.J.; Schwierz, F. RF MOSFET: Recent Advances, Current Status and Future Trends. Solid-State Electron. 2003, 47, 1881–1895. [Google Scholar] [CrossRef]

- Siu, S.-L.; Wong, H.; Tam, W.-S.; Kakusima, K.; Iwai, H. Subthreshold Parameters of Radio-Frequency Multi-Finger Nanometer MOS Transistors. Microelectron. Reliab. 2009, 49, 387–391. [Google Scholar] [CrossRef]

- Li, B.; Gao, M.; Cai, X.; Gao, Y.; Xia, R. A 3.7-to-10 GHz Low Phase Noise Wideband LC-VCO Array in 55-nm CMOS Technology. Electronics 2022, 11, 1897. [Google Scholar] [CrossRef]

- Jeong, J.; Kim, S.K.; Kim, J.M.; Geum, D.-M.; Kim, D.H.; Kim, J.; Kim, S.H.; Park, J.; Lee, J.; Lee, S.; et al. Heterogeneous and Monolithic 3D Integration of III–V-Based Radio Frequency Devices on Si CMOS Circuits. ACS Nano 2022, 16, 9031–9040. [Google Scholar] [CrossRef]

- Minixhofer, R.; Feilchenfeld, N.; Knaipp, M.; Röhrer, G.; Park, J.M.; Zierak, M.; Eichenmair, H.; Levy, M.; Loeffler, B.; Hershberger, D.; et al. A 120V 180nm High Voltage CMOS Smart Power Technology for System-on-Chip Integration. In Proceedings of the 2010 IEEE International Symposium on Power Semiconductor Devices & ICs (ISPSD), Hiroshima, Japan, 6–10 June 2010; pp. 217–220. [Google Scholar]

- Bustillo, J.; Fife, K.; Merriman, B.; Rothberg, J. Development of the ion torrent CMOS chip for DNA sequencing. In Proceedings of the 2013 IEEE International Electron Devices Meeting, Washington, DC, USA, 9–11 December 2013; p. 6724584. [Google Scholar] [CrossRef]

- Zhao, X.; Yan, J. CMOS Integrated Circuits for Low-Power and High-Efficiency Applications. Electronics 2024, 13, 3600. [Google Scholar]

- STMicroelectronics Whitepaer: Benefits of Using ST’s Wide Bandgap Technology. Available online: https://www.st.com/content/st_com/en/premium-content/premium-content-white-paper-unique-properties-of-wide-bandgap-materials.html (accessed on 26 June 2025).

- Sheikhan, A.; Narayanan, E.M.S. Characteristics of a 1200 V Hybrid Power Switch Comprising a Si IGBT and a SiC MOSFET. Micromachines 2024, 15, 1337. [Google Scholar] [CrossRef] [PubMed]

- Bao, W.; Zhang, J.; Wong, H.; Liu, J.; Li, W. Emerging Copper-to-Copper Bonding Techniques: Enabling High-Density Interconnects for Heterogeneous Integration. Nanomaterials 2025, 15, 729. [Google Scholar] [CrossRef]

- Ren, C.; Zhang, Y.; Nadappuram, B.P.; Akpinar, B.; Klenerman, D.; Ivanov, A.P.; Edel, J.B. Integration of Graphene Field-Effect Transistors with CMOS Readout Circuits for Ultra-Sensitive Biosensing. ACS Appl. Electron. Mater. 2021, 3, 4418–4423. [Google Scholar] [CrossRef]

- Filipovic, L.; Selberherr, S. Application of Two-Dimensional Materials towards CMOS-Integrated Gas Sensors. Nanomaterials 2022, 12, 3651. [Google Scholar] [CrossRef] [PubMed]

- Wong, H.; Filip, V.; Wong, C.K.; Chung, P.S. Silicon Integrated Photonics Begins to Revolutionize. Microelectron. Reliab. 2007, 47, 1–10. [Google Scholar] [CrossRef]

- Wong, C.K.; Wong, H.; Chan, M.; Chow, Y.T.; Chan, H.P. Silicon Oxy-Nitride Integrated Waveguide for On-Chip Optical Interconnects Applications. Microelectron. Reliab. 2008, 48, 212–218. [Google Scholar] [CrossRef]

- Wong, C.K.; Wong, H.; Kok, C.W.; Chan, M. Silicon Oxynitride Prepared by Chemical Vapor Deposition as Optical Waveguide Materials. J. Cryst. Growth 2006, 288, 171–175. [Google Scholar] [CrossRef]

- Wong, H.; Filip, V.; Nicolaescu, D.; Chu, P.L. A Novel High-Efficiency Light Emitting Device Based on Silicon Nanostructures and Tunneling Carrier Injection. J. Vac. Sci. Technol. B 2005, 23, 2449–2456. [Google Scholar] [CrossRef]

- Xing, P.; Ma, D.H.; Ooi, K.J.A.; Choi, J.W.; Agarwal, A.M.; Tan, D.T.H. CMOS-Compatible PECVD Silicon Carbide Platform for Linear and Nonlinear Optics. ACS Photonics 2019, 6, 1162–1167. [Google Scholar] [CrossRef]

- Chen, Y.; Lin, H.; Hu, J.J.; Li, M. Heterogeneously Integrated Silicon Photonics for the Mid-Infrared and Spectroscopic Sensing. ACS Nano 2014, 8, 6955–6961. [Google Scholar] [CrossRef]

- Karnik, T. Recent Advances and Future Challenges in 2.5D/3D Heterogeneous Integration. In Proceedings of the 2022 International Symposium on Physical Design (ISPD ’22), Virtual, 27–30 March 2022; Association for Computing Machinery: New York, NY, USA, 2022; p. 95. [Google Scholar] [CrossRef]

- Lau, J.H. Heterogeneous Integrations; Springer: Berlin/Heidelberg, Germany, 2019. [Google Scholar] [CrossRef]

- Lau, J.H. Chiplet Design and Heterogeneous Integration Packaging; Springer: New York, NY, USA, 2023. [Google Scholar] [CrossRef]

- Jeon, H.J.; Hong, S.J. Ammonia Plasma Surface Treatment for Enhanced Cu–Cu Bonding Reliability for Advanced Packaging Interconnection. Coatings 2024, 14, 1449. [Google Scholar] [CrossRef]

- Wong, H. Abridging CMOS Technology. Nanomaterials 2022, 12, 4245. [Google Scholar] [CrossRef]

- Liu, Y.; Duan, X.; Shin, H.J.; Park, S.; Huang, Y.; Duan, X. Promises and Prospects of Two-Dimensional Transistors. Nature 2021, 591, 43–53. [Google Scholar] [CrossRef]

- Knobloch, T.; Selberherr, S.; Grasser, T. Challenges for Nanoscale CMOS Logic based on Two-Dimensional Materials. Nanomaterials 2022, 12, 3548. [Google Scholar] [CrossRef] [PubMed]

- Wong, H. Nano CMOS Gate Dielectric Engineering; CRC Press: Boca Raton, FL, USA, 2012. [Google Scholar]

- Ishimaru, K. Future of Non-Volatile Memory—From Storage to Computing. In Proceedings of the 2019 IEEE International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 7–11 December 2019; pp. 1.3.1–1.3.6. [Google Scholar]

- Mannocci, P.; Farronato, M.; Lepri, N.; Cattaneo, L.; Glukhov, A.; Sun, Z.; Ielmini, D. In-Memory Computing with Emerging Memory Devices: Status and Outlook. APL Mach. Learn. 2023, 1, 010902. [Google Scholar] [CrossRef]

- Mutlu, O.; Ghose, S.; Gómez-Luna, J.; Ausavarungnirun, R. A Modern Primer on Processing in Memory. In Emerging Computing: From Devices to Systems: Looking Beyond Moore and Von Neumann; Sabry Aly, M.M., Chattopadhyay, A., Eds.; Springer: Singapore, 2023; pp. 171–243. [Google Scholar] [CrossRef]

- Fantini, P. Memory Technology Enabling Future Computing Systems. APL Mach. Learn. 2025, 3, 020901. [Google Scholar] [CrossRef]

- Sun, Z.; Kvatinsky, S.; Si, X.; Mehonic, A.; Cai, Y.; Huang, R. A Full Spectrum of Computing-in-Memory Technologies. Nat. Electron. 2023, 6, 823–835. [Google Scholar] [CrossRef]

- Molas, G.; Nowak, E. Advances in Emerging Memory Technologies: From Data Storage to Artificial Intelligence. Appl. Sci. 2021, 11, 11254. [Google Scholar] [CrossRef]

- Park, S.-S.; Lyu, J.-D.; Kim, M.; Lee, J.; Song, Y.; Yu, C.-H.; Makoto, H.; Kwon, Y.; Park, J.-H.; Kim, H.-J.; et al. 30.1 A 28Gb/Mm24XX-Layer 1Tb 3b/Cell WF-Bonding 3D-NAND Flash with 5.6Gb/S/Pin IOs. In Proceedings of the 2025 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 16–20 February 2025; pp. 1–3. [Google Scholar] [CrossRef]

- Masuoka, F.; Asano, M.; Iwahashi, H.; Komuro, T.; Tanaka, S. A new flash E2PROM cell using triple polysilicon technology. In Proceedings of the 1984 International Electron Devices Meeting, San Francisco, CA, USA, 9–12 December 1984. [Google Scholar] [CrossRef]

- Endoh, T.; Kinoshita, K.; Tanigami, T.; Wada, Y.; Sato, K.; Yamada, K.; Yokoyama, T.; Takeuchi, N.; Tanaka, K.; Awaya, N.; et al. Novel Ultra High Density Flash Memory with a Stacked-Surrounding Gate Transistor (S-SGT) Structured Cell. In Proceedings of the International Electron Devices Meeting. Technical Digest (Cat. No.01CH37224), Washington, DC, USA, 2–5 December 2001. [Google Scholar] [CrossRef]

- Tanaka, H.; Kido, M.; Yahashi, K.; Oomura, M.; Katsumata, R.; Kito, M.; Fukuzumi, Y.; Sato, M.; Nagata, Y.; Matsuoka, Y.; et al. Bit Cost Scalable Technology with Punch and Plug Process for Ultra High Density Flash Memory. In Proceedings of the 2007 IEEE Symposium on VLSI Technology, Kyoto, Japan, 12–14 June 2007. [Google Scholar] [CrossRef]

- Ishiduki, M.; Fukuzumi, Y.; Katsumata, R.; Kido, M.; Tanaka, H.; Komori, Y.; Nagata, Y.; Fujiwara, T.; Maeda, T.; Mikajiri, Y.; et al. Optimal Device Structure for Pipe-Shaped BiCS Flash Memory for Ultra High Density Storage Device with Excellent Performance and Reliability. In Proceedings of the 2009 IEEE International Electron Devices Meeting (IEDM), Baltimore, MD, USA, 7–9 December 2009; pp. 1–4. [Google Scholar] [CrossRef]

- Jang, J.; Kim, H.S.; Cho, W.; Cho, H.; Kim, J.; Shim, S.I.; Younggoan; Jeong, J.-H.; Son, B.-K.; Kim, D.W.; et al. Vertical cell array using TCAT(Terabit Cell Array Transistor) technology for ultra-high density NAND flash memory. In Proceedings of the 2009 Symposium on VLSI Technology, Kyoto, Japan, 15–17 June 2009; Available online: https://ieeexplore.ieee.org/document/5200595 (accessed on 26 June 2025).

- Seo, M.-S.; Park, S.-K.; Endoh, T. 3-D Vertical FG Nand Flash Memory with a Novel Electrical S/D Technique Using the Extended Sidewall Control Gate. IEEE Trans. Electron Devices 2011, 58, 2966–2973. [Google Scholar] [CrossRef]

- Whang, N.S.; Lee, N.K.; Shin, N.D.; Kim, N.B.; Kim, N.M.; Bin, N.J.; Han, N.J.; Kim, N.S.; Lee, N.B.; Jung, N.Y.; et al. Novel 3-Dimensional Dual Control-Gate with Surrounding Floating-Gate (DC-SF) NAND Flash Cell for 1Tb File Storage Application. In Proceedings of the 2010 International Electron Devices Meeting, San Francisco, CA, USA, 6–8 December 2010; pp. 29.7.1–29.7.4. [Google Scholar] [CrossRef]

- Noh, Y.; Ahn, Y.; Yoo, H.; Han, B.; Chung, S.; Shim, K.; Lee, K.; Kwak, S.; Shin, S.; Choi, I.; et al. A New Metal Control Gate Last Process (MCGL Process) for High Performance DC-SF (Dual Control Gate with Surrounding Floating Gate) 3D NAND Flash Memory. In Proceedings of the 2012 Symposium on VLSI Technology (VLSIT), Honolulu, HI, USA, 12–14 June 2012; pp. 19–20. [Google Scholar] [CrossRef]

- Seo, M.-S.; Lee, B.-H.; Park, S.; Tetsuo, E. A Novel 3-D Vertical FG NAND Flash Memory Cell Arrays Using the Separated Sidewall Control Gate (S-SCG) for Highly Reliable MLC Operation. In Proceedings of the 2011 3rd IEEE International Memory Workshop (IMW), Monterey, CA, USA, 22–25 May 2011; pp. 1–4. [Google Scholar] [CrossRef]

- Khakifirooz, A.; Anaya, E.; Balasubrahmanyam, S.; Bennett, G.; Castro, D.; Egler, J.; Fan, K.; Ferdous, R.; Ganapathi, K.; Guzman, O.; et al. A 1.67Tb, 5b/Cell Flash Memory Fabricated in 192-Layer Floating Gate 3D-Nand Technology and Featuring a 23.3 Gb/Mm2 Bit Density. IEEE Solid-State Circuits Lett. 2023, 6, 161–164. [Google Scholar] [CrossRef]

- Kim, M.; Yun, S.W.; Park, J.; Park, H.K.; Lee, J.; Kim, Y.S.; Na, D.; Choi, S.; Song, Y.; Lee, J.; et al. A High-Performance 1Tb 3b/cell 3D-NAND Flash with a 194MB/s Write Throughput on over 300 Layers. In Proceedings of the 2023 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 19–23 February 2023; pp. 27–29. [Google Scholar] [CrossRef]

- Yanagidaira, K.; Sako, M.; Hirashima, Y.; Matsuno, J.; Higashi, Y.; Shimizu, Y.; Imamoto, A.; Kawaguchi, K.; Tabata, K.; Nakano, T.; et al. A 1Tb 3b/Cell 3D-Flash Memory with a 29%-Improved-Energy-Efficiency Read Operation and 4.8Gb/S Power-Isolated Low-Tapped-Termination I/Os. In Proceedings of the 2025 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 16–20 February 2025; pp. 1–3. [Google Scholar] [CrossRef]

- Cho, W.; Jeong, C.; Kim, J.; Jung, J.; Ahn, K.; Goo, J.; Lee, S.; Cho, K.; Cho, T.; Kim, D.; et al. A 321-Layer 2Tb 4b/Cell 3D-NAND-Flash Memory with a 75MB/S Program Throughput. In Proceedings of the 2025 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 16–20 February 2025; pp. 512–514. [Google Scholar] [CrossRef]

- Kawai, K.; Einaga, Y.; Oikawa, Y.; He, Y.; Iorio, B.; Yamada, S.; Kamata, Y.; Iwasaki, T.; D’Alessandro, A.; Yu, E.; et al. 13.7 A 1Tb Density 3b/Cell 3D-NAND Flash on a 2YY-Tier Technology with a 300MB/S Write Throughput. In Proceedings of the 2024 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 18–22 February 2024; pp. 244–246. [Google Scholar] [CrossRef]

- Jung, W.; Kim, H.; Kim, D.-B.; Kim, T.-H.; Lee, N.; Shin, D.; Kim, M.; Rho, Y.; Lee, H.-J.; Hyun, Y.; et al. 13.3 A 280-Layer 1Tb 4b/Cell 3D-NAND Flash Memory with a 28.5Gb/Mm2 Areal Density and a 3.2GB/S High-Speed IO Rate. In Proceedings of the 2024 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 18–22 February 2024; pp. 236–237. [Google Scholar] [CrossRef]

- Waser, R.; Aono, M. Nanoionics-Based Resistive Switching Memories. Nat. Mater. 2007, 6, 833–840. [Google Scholar] [CrossRef] [PubMed]

- Hickmott, T.W. Low-Frequency Negative Resistance in Thin Anodic Oxide Films. J. Appl. Phys. 1962, 33, 2669–2682. [Google Scholar] [CrossRef]

- Seo, S.; Baek, I.G.; Kim, D.H.; Lee, M.J.; Lee, B.H.; Park, Y.; Yoo, I.K.; Yoon, J.S.; Hwang, H. Reproducible Resistance Switching in Polycrystalline NiO Films. Appl. Phys. Lett. 2004, 85, 5655–5657. [Google Scholar] [CrossRef]

- Lee, H.-Y.; Chen, Y.-S.; Wu, S.-S.; Chen, P.-S.; Wang, C.-C.; Tzeng, P.-J.; Lin, C.-H.; Lin, F.; Lien, C.-H.; Tsai, M.-J. Low Power and High Speed Bipolar Switching with a Thin Reactive Ti Buffer Layer in Robust HfO2-Based RRAM. In Proceedings of the IEEE International Electron Devices Meet, San Francisco, CA, USA, 15–17 December 2008; pp. 1–4. [Google Scholar]

- Ielmini, D.; Wong, H.-S.P. In-Memory Computing with Resistive Switching Devices. Nat. Electron. 2018, 1, 333–343. [Google Scholar] [CrossRef]

- Baek, I.G.; Lee, D.C.; Lee, M.J.; Park, Y.; Lee, J.H.; Kim, S.; Kim, I.; Hwang, H. Highly Scalable Nonvolatile Resistive Memory Using Simple Binary Oxide Driven by Asymmetric Unipolar Voltage Pulses. In Proceedings of the IEDM Technical Digest IEEE International Electron Devices Meeting, San Francisco, CA, USA, 13–15 December 2004; pp. 587–590. [Google Scholar]

- Lee, C.-F.; Chen, Y.-C.; Liao, S.-M.; Chen, C.-H.; Liu, S.-C.; Lin, M.-J.; Hsieh, T.-H.; Chien, S.-H.; Lin, Y.-M.; Wang, T.-W.; et al. A 1.4 Mb 40-nm Embedded ReRAM Macro with 0.07 µm2 Bit Cell, 2.7 mA/100 MHz Low-Power Read and Hybrid Write Verify for High Endurance Application. In Proceedings of the 2017 IEEE Asian Solid-State Circuits Conference (A-SSCC), Seoul, Republic of Korea, 6–8 November 2017; pp. 141–144. [Google Scholar]

- Xue, C.-X.; Hung, J.-M.; Kao, H.-Y.; Huang, Y.-H.; Huang, S.-P.; Chang, F.-C.; Chen, P.; Liu, T.-W.; Jhang, C.-J.; Su, C.-I.; et al. 16.1 A 22 nm 4 Mb 8b-Precision ReRAM Computing-in-Memory Macro with 11.91 to 195.7 TOPS/W for Tiny AI Edge Devices. In Proceedings of the 2021 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 13–22 February 2021; Volume 64, pp. 250–252. [Google Scholar]

- Huang, Y.-C.; Chang, C.-F.; Lin, M.-J.; Chien, S.-H.; Lee, C.-F.; Wang, T.-W.; Xue, C.-X.; Chen, Y.-C.; Liao, S.-M.; Lee, H.-Y.; et al. 15.7 A 32 Mb RRAM in a 12 nm FinFET Technology with a 0.0249 µm2 Bit-Cell, a 3.2 GB/s Read Throughput, a 10 KCycle Write Endurance and a 10-Year Retention at 105 °C. In Proceedings of the 2024 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 18–22 February 2024; Volume 67, pp. 254–256. [Google Scholar]

- Valentian, A.; Rummens, F.; Vianello, E.; Pace, S.; Jaffré, R.; Molas, G.; Catthoor, F.; De Salvo, B. Fully Integrated Spiking Neural Network with Analog Neurons and RRAM Synapses. In Proceedings of the 2019 IEEE International Electron Devices Meeting, San Francisco, CA, USA, 7–11 December 2019; pp. 14.3.1–14.3.4. [Google Scholar]

- Yao, P.; Wu, H.; Gao, B.; Tang, J.; Zhang, Q.; Zhang, W.; Yang, J.J.; Qian, H. Fully Hardware-Implemented Memristor Convolutional Neural Network. Nature 2020, 577, 641–646. [Google Scholar] [CrossRef]

- Kent, A.D.; Worledge, D.C. A New Spin on Magnetic Memories. Nat. Nanotechnol. 2015, 10, 187–191. [Google Scholar] [CrossRef] [PubMed]

- Kan, J.J.; Park, C.; Ching, C.; Hsia, A.H.; Kalitsov, A.; Lyle, A.; Houssameddine, D.; Zhu, J.-G.; Leng, T.; Tehrani, S. A Study on Practically Unlimited Endurance of STT-MRAM. IEEE Trans. Electron. Devices 2017, 64, 3639–3646. [Google Scholar] [CrossRef]

- Hanyu, T.; Endoh, T.; Suzuki, D.; Ikeda, S.; Kudo, M.; Fujita, T.; Yoda, Y.; Ishikawa, K.; Ohno, H.; Tanaka, H. Standby-Power-Free Integrated Circuits Using MTJ-Based VLSI Computing. Proc. IEEE 2016, 104, 1844–1863. [Google Scholar] [CrossRef]

- Julliere, M. Tunneling Between Ferromagnetic Films. Phys. Lett. A 1975, 54, 225–226. [Google Scholar] [CrossRef]

- Miyazaki, T.; Tezuka, N. Giant Magnetic Tunneling Effect in Fe/Al2O3/Fe Junction. J. Magn. Magn. Mater. 1995, 139, L231–L234. [Google Scholar] [CrossRef]

- Parkin, S.S.P.; Kaiser, C.; Panchula, A.; Rice, P.M.; Hughes, B.; Samant, M.; Yang, S.-H. Giant Tunnelling Magnetoresistance at Room Temperature with MgO (100) Tunnel Barriers. Nat. Mater. 2004, 3, 862–867. [Google Scholar] [CrossRef] [PubMed]

- Yuasa, S.; Nagahama, T.; Fukushima, A.; Suzuki, Y.; Ando, K. Giant Room-Temperature Magnetoresistance in Single-Crystal Fe/MgO/Fe Magnetic Tunnel Junctions. Nat. Mater. 2004, 3, 868–871. [Google Scholar] [CrossRef] [PubMed]

- Ikeda, S.; Hayakawa, J.; Ashizawa, Y.; Lee, Y.M.; Miura, K.; Hasegawa, H.; Tsunoda, M.; Matsukura, F.; Ohno, H. Tunnel Magnetoresistance of 604% at 300K by Suppression of Ta Diffusion in CoFeB/MgO/CoFeB Pseudo-Spin-Valves Annealed at High Temperature. Appl. Phys. Lett. 2008, 93, 082508. [Google Scholar] [CrossRef]

- Tehrani, S.; Slaughter, J.M.; DeHerrera, M.; Keshavarzi, A.; Engel, B.; Janesky, J.; Calder, K.; Whig, R.; Dave, R.; Naji, K. Magnetoresistive Random Access Memory Using Magnetic Tunnel Junctions. Proc. IEEE 2003, 91, 703–714. [Google Scholar] [CrossRef]

- Slonczewski, J.C. Current-Driven Excitation of Magnetic Multilayers. J. Magn. Magn. Mater. 1996, 159, L1–L7. [Google Scholar] [CrossRef]

- Berger, L. Emission of Spin Waves by a Magnetic Multilayer Traversed by a Current. Phys. Rev. B 1996, 54, 9353–9358. [Google Scholar] [CrossRef]

- Hatsuda, K.; Aikawa, H.; Seo, S.M.; Rho, K.; Cha, S.Y.; Zeissler, K. A 64Gb DDR4 STT-MRAM Using a Time-Controlled Discharge-Reading Scheme for a 0.001681 μm2 1T-1MTJ Cross-Point Cell. In Proceedings of the 2025 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 16–20 February 2025; pp. 30.6.1–30.6.4. [Google Scholar]

- Valasek, J. Piezoelectric and Allied Phenomena in Rochelle Salt. Phys. Rev. 1921, 17, 475–481. [Google Scholar] [CrossRef]

- Mikolajick, T.; Park, M.H.; Begon-Lours, L.; Slesazeck, S. From ferroelectric material optimization to neuromorphic devices. Adv. Mater. 2023, 35, 2206042. [Google Scholar] [CrossRef]

- Böscke, T.; Müller, J.; Bräuhaus, D.; Schröder, U.; Böttger, U. Ferroelectricity in hafnium oxide thin films. Appl. Phys. Lett. 2011, 99, 102903. [Google Scholar] [CrossRef]

- Fantini, P. Phase Change Memory Applications: The History, the Present and the Future. J. Phys. D Appl. Phys. 2020, 53, 283002. [Google Scholar] [CrossRef]

- Wong, H.S.P.; Salahuddin, S. Memory Leads the Way to Better Computing. Nat. Nanotechnol. 2015, 10, 191–194. [Google Scholar] [CrossRef]

- Matsui, C.; Sun, C.; Takeuchi, K. Design of Hybrid SSDs with Storage Class Memory and NAND Flash Memory. Proc. IEEE 2017, 105, 1812–1821. [Google Scholar] [CrossRef]

- Kim, T.; Lee, S. Evolution of Phase-Change Memory for the Storage-Class Memory and Beyond. IEEE Trans. Electron. Devices 2020, 67, 1394–1406. [Google Scholar] [CrossRef]

- Ovshinsky, S.R. Reversible Electrical Switching Phenomena in Disordered Structures. Phys. Rev. Lett. 1968, 21, 1450–1453. [Google Scholar] [CrossRef]

- Wuttig, M.; Yamada, N. Phase-Change Materials for Rewriteable Data Storage. Nat. Mater. 2007, 6, 824–832. [Google Scholar] [CrossRef] [PubMed]

- Burr, G.W.; Brightsky, M.J.; Sebastian, A.; Salinga, M.; Krebs, D.; Weidenhaupt, M.; Lam, C.; Happ, T.D.; Friedrich, I.; Happ, T. Recent Progress in Phase-Change Memory Technology. IEEE J. Emerg. Sel. Top. Circuits Syst. 2016, 6, 146–162. [Google Scholar] [CrossRef]

- Burr, G.W.; Breitwisch, M.; Franceschini, M.; Kurdi, B.; Millar, S.; Padilla, A.; Rajendran, B.; Raoux, S.; Rice, P.M.; Shenoy, R.; et al. Phase Change Memory Technology. J. Vac. Sci. Technol. B 2010, 28, 223. [Google Scholar] [CrossRef]

- Le Gallo, M.; Sebastian, A. An Overview of Phase-Change Memory Device Physics. J. Phys. D Appl. Phys. 2020, 53, 213002. [Google Scholar] [CrossRef]

- Syed, G.S.; Le Gallo, M.; Sebastian, A. Phase-Change Memory for In-Memory Computing. Chem. Rev. 2025, 125, 5163–5194. [Google Scholar] [CrossRef]

- Lee, J.I.; Park, H.; Cho, S.L.; Park, Y.L.; Bae, B.J.; Park, J.H.; Choi, J.H.; Kim, T.S.; Cho, Y.S.; An, H.G.; et al. Highly Scalable Phase Change Memory with CVD GeSbTe for Sub-50 nm Generation. In Proceedings of the 2007 IEEE Symposium on VLSI Technology, Kyoto, Japan, 12–14 June 2007; pp. 102–103. [Google Scholar]

- Ha, Y.H.; Yi, J.H.; Horii, H.; Park, J.H.; Joo, S.H.; Park, S.O.; Bae, B.J.; Cho, S.L.; Chung, U.; Moon, J.T. An Edge Contact Type Cell for Phase Change RAM Featuring Very Low Power Consumption. In Proceedings of the 2003 Symposium on VLSI Technology, Kyoto, Japan, 10–12 June 2003; pp. 175–176. [Google Scholar]

- Jeong, C.-W.; Ahn, S.-J.; Hwang, Y.-N.; Song, Y.-J.; Oh, J.-H.; Lee, S.-Y.; Kim, K.-H.; Sohn, S.-K.; Hwang, C.S. Highly Reliable Ring-Type Contact for High-Density Phase Change Memory. Jpn. J. Appl. Phys. 2006, 45 (Suppl. S4), 3233. [Google Scholar] [CrossRef]

- Im, D.H.; Lee, J.I.; Cho, S.L.; An, H.G.; Kim, D.H.; Kim, I.S.; Park, H.; Ahn, D.H.; Horii, H.; Park, S.O.; et al. A Unified 7.5 nm Dash-Type Confined Cell for High Performance PRAM Device. In Proceedings of the 2008 IEEE International Electron Devices Meeting, San Francisco, CA, USA, 15–17 December 2008; pp. 1–4. [Google Scholar]

- Wong, H.S.P.; Raoux, S.; Kim, S.B.; Liang, J.; Reifenberg, J.P.; Rajendran, B.; Asheghi, M.; Goodson, K.E. Phase Change Memory. Proc. IEEE 2010, 98, 2201–2227. [Google Scholar] [CrossRef]

- Noé, P.; Vallée, C.; Hippert, F.; Fillot, F.; Raty, J.-Y. Phase-Change Materials for Non-Volatile Memory Devices: From Technological Challenges to Materials Science Issues. Semicond. Sci. Technol. 2017, 33, 013002. [Google Scholar] [CrossRef]

- Zhou, X.; Xia, M.; Rao, F.; Wang, Y.; Cai, Y.; Xu, L.; Zhu, M.; Song, Z.; Zhang, L.; Liu, Y.; et al. Understanding Phase-Change Behaviors of Carbon-Doped Ge2Sb2Te5 for Phase-Change Memory Application. ACS Appl. Mater. Interfaces 2014, 6, 14207–14214. [Google Scholar] [CrossRef]

- Yin, Y.; Sone, H.; Hosaka, S. Characterization of Nitrogen-Doped Sb2Te3 Films and Their Application to Phase-Change Memory. J. Appl. Phys. 2007, 102, 064506. [Google Scholar] [CrossRef]

- Zhou, X.; Wu, L.; Song, Z.; Cai, Y.; Rao, F.; Liu, B.; Wang, W.; Xu, L.; Zhu, M.; Zhang, L.; et al. Nitrogen-Doped Sb-Rich Si–Sb–Te Phase-Change Material for High-Performance Phase-Change Memory. Acta Mater. 2013, 61, 7324–7333. [Google Scholar] [CrossRef]

- Cheng, H.Y.; Wu, J.Y.; Cheek, R.; Zhang, Y.; Li, J.; Lam, C.; Burr, G.W.; Raoux, S. A Thermally Robust Phase Change Memory by Engineering the Ge/N Concentration in (Ge,N)xSbyTez Phase Change Material. In Proceedings of the 2012 International Electron Devices Meeting, San Francisco, CA, USA, 10–13 December 2012; pp. 31.1.1–31.1.4. [Google Scholar]

- Yin, Y.; Morioka, S.; Kozaki, S.; Hosaka, S. Oxygen-Doped Sb2Te3 for High-Performance Phase-Change Memory. Appl. Surf. Sci. 2015, 349, 230–234. [Google Scholar] [CrossRef]

- Wong, H.S.P. Stanford Memory Trends. Available online: https://nano.stanford.edu/stanford-memory-trends (accessed on 23 November 2017).

- Navarro, G.; Bourgeois, G.; Kluge, J.; Krebs, D. Phase-Change Memory: Performance, Roles and Challenges. In Proceedings of the 2018 IEEE International Memory Workshop (IMW), Kyoto, Japan, 13–16 May 2018; pp. 1–4. [Google Scholar]

- Ren, K.; Xia, M.; Zhu, S.; Wu, L.; Rao, F.; Song, Z.; Xu, L.; Zhu, M.; Wang, Y.; Zhou, X. Crystal-Like Glassy Structure in Sc-Doped BiSbTe Ensuring Excellent Speed and Power Efficiency in Phase Change Memory. ACS Appl. Mater. Interfaces 2020, 12, 16601–16608. [Google Scholar] [CrossRef]

- Chen, B.; Chen, Y.; Chen, Y.; Song, Z.; Zhou, X. Anomalous Crystallization Kinetics of Ultrafast ScSbTe Phase-Change Memory Materials Induced by Nitrogen Doping. Acta Mater. 2022, 238, 118211. [Google Scholar] [CrossRef]

- Kim, O.; Kim, Y.; Kim, H.L.; Lee, D.; Song, J.; Yun, D. Growth Mechanism of Ge2Sb2Te5 Thin Films by Atomic Layer Deposition Supercycles of GeTe and SbTe. Surf. Interfaces 2024, 53, 105101. [Google Scholar] [CrossRef]

- Yin, Q.; Chen, L. Crystallization Behavior and Electrical Characteristics of Ga–Sb Thin Films for Phase Change Memory. Nanotechnology 2020, 31, 215709. [Google Scholar] [CrossRef]

- Sousa, V.; Navarro, G. Material Engineering for PCM Device Optimization. In Phase Change Memory: Device Physics, Reliability and Applications; Springer International Publishing: Cham, Switzerland, 2017; pp. 181–222. [Google Scholar]

- Simpson, R.E.; Fons, P.; Kolobov, A.V.; Fukaya, T.; Krbal, M.; Yagi, T.; Tominaga, J. Interfacial Phase-Change Memory. Nat. Nanotechnol. 2011, 6, 501–505. [Google Scholar] [CrossRef] [PubMed]

- Wu, X.; Khan, A.I.; Lee, H.; Park, J.H.; Cho, S.L.; Lee, J.I. Novel Nanocomposite-Superlattices for Low Energy and High Stability Nanoscale Phase-Change Memory. Nat. Commun. 2024, 15, 13. [Google Scholar] [CrossRef] [PubMed]

- Chen, S.; Yang, K.; Wu, W.; Lin, C.; Li, H.; Wang, W. Superlattice-Like Sb–Ge Thin Films for High Thermal Stability and Low Power Phase Change Memory. J. Alloys Compd. 2018, 738, 145–150. [Google Scholar] [CrossRef]

- Bozorg-Grayeli, E.; Reifenberg, J.P.; Panzer, M.A.; Asheghi, M.; Goodson, K.E. Temperature-Dependent Thermal Properties of Phase-Change Memory Electrode Materials. IEEE Electron. Device Lett. 2011, 32, 1281–1283. [Google Scholar] [CrossRef]

- Wang, L.; Gong, S.; Yang, C.; Zhang, L.; Zhu, M.; Rao, F. Towards Low Energy Consumption Data Storage Era Using Phase-Change Probe Memory with TiN Bottom Electrode. Nanotechnol. Rev. 2016, 5, 455–460. [Google Scholar] [CrossRef]

- Liang, J.; Jeyasingh, R.G.D.; Chen, H.Y.; Wong, H.S.P. An Ultra-Low Reset Current Cross-Point Phase Change Memory with Carbon Nanotube Electrodes. IEEE Trans. Electron. Devices 2012, 59, 1155–1163. [Google Scholar] [CrossRef]

- Kim, T.H.; Park, S.W.; Lee, H.J.; Choi, J.Y.; Oh, H.W.; Kim, S.J.; Lee, K.S. Effect of Transition Metal Dichalcogenide Based Confinement Layers on the Performance of Phase-Change Heterostructure Memory. Small 2023, 19, 2303659. [Google Scholar] [CrossRef]

- Zheng, C.; Simpson, R.E.; Tang, K.; Chen, Y.; Xu, H.; Tominaga, J.; Raty, J.-Y.; Wuttig, M. Enabling Active Nanotechnologies by Phase Transition: From Electronics, Photonics to Thermotics. Chem. Rev. 2022, 122, 15450–15500. [Google Scholar] [CrossRef]

- Shen, J.; Lv, S.; Chen, X.; Zhao, Y.; Zhou, X.; Wu, L.; Song, Z. Thermal Barrier Phase Change Memory. ACS Appl. Mater. Interfaces 2019, 11, 5336–5343. [Google Scholar] [CrossRef]

- Zhu, M.; Ren, K.; Song, Z. Ovonic Threshold Switching Selectors for Three-Dimensional Stackable Phase-Change Memory. MRS Bull. 2019, 44, 715–720. [Google Scholar] [CrossRef]

- Lee, P.-H.; Lee, C.-F.; Shih, Y.-C.; Lin, H.-J.; Chang, Y.-A.; Lu, C.-H.; Chen, Y.-L.; Lo, C.-P.; Chen, C.-C.; Kuo, C.-H.; et al. A 16nm 32Mb Embedded STT-MRAM with a 6ns Read-Access Time, a 1M-Cycle Write Endurance, 20-Year Retention at 150 °C and MTJ-OTP Solutions for Magnetic Immunity. In Proceedings of the 2023 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 19–23 February 2023; pp. 494–496. [Google Scholar] [CrossRef]

- Arnaud, F.; Zuliani, P.; Reynard, J.-P.; Gandolfo, A.; Disegni, F.; Mattavelli, P.; Gomiero, E.; Samanni, G.; Jahan, C.; Berthelon, R.; et al. High Density Embedded PCM Cell in 28nm FDSOI Technology for Automotive Micro-Controller Applications. In Proceedings of the 2020 IEEE International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 12–18 December 2020; pp. 24.2.1–24.2.4. [Google Scholar] [CrossRef]

- Ramaswamy, N.; Calderoni, A.; Zahurak, J.; Servalli, G.; Chavan, A.; Chhajed, S.; Balakrishnan, M.; Fischer, M.; Hollander, M.; Ettisserry, D.P.; et al. NVDRAM: A 32Gbit Dual Layer 3D Stacked Non-Volatile Ferroelectric Memory with Near-DRAM Performance for Demanding AI Workloads. In Proceedings of the 2023 International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 9–13 December 2023; pp. 1–4. [Google Scholar]

- Kim, W.; Jung, C.; Yoo, S.; Hong, D.; Hwang, J.; Yoon, J.; Jung, O.; Choi, J.; Hyun, S.; Kang, M.; et al. A 1.1 V 16 Gb DDR5 DRAM with Probabilistic-Aggressor Tracking, Refresh-Management Functionality, Per-Row Hammer Tracking, a Multi-Step Pre-charge, and Core Bias Modulation for Security and Reliability Enhancement. In Proceedings of the 2023 IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 19–23 February 2023; pp. 1–3. [Google Scholar] [CrossRef]

- Yu, S.; Kim, T.-H. Semiconductor Memory Technologies: State-of-the-Art and Future Trends. IEEE Comput. 2024, 57, 83–89. [Google Scholar] [CrossRef]

- Chen, W.-H.; Dou, C.; Li, K.-X.; Lin, W.-Y.; Li, P.-Y.; Huang, J.-H.; Wang, J.-H.; Wei, W.-C.; Xue, C.-X.; Chiu, Y.-C.; et al. CMOS-Integrated Memristive Non-Volatile Computing-in-Memory for AI Edge Processors. Nat. Electron. 2019, 2, 420–428. [Google Scholar] [CrossRef]

- Udaya Mohanan, K. Resistive Switching Devices for Neuromorphic Computing: From Foundations to Chip Level Innovations. Nanomaterials 2024, 14, 527. [Google Scholar] [CrossRef]

- Chua, L.O. Memristor—The Missing Circuit Element. IEEE Trans. Circ. Theory 1971, 18, 507–519. [Google Scholar] [CrossRef]

- Strukov, D.B.; Snider, G.S.; Stewart, D.R.; Williams, R.S. The Missing Memristor Found. Nature 2008, 453, 80–83. [Google Scholar] [CrossRef]

- Barraj, I.; Mestiri, H.; Masmoudi, M. Overview of Memristor-Based Design for Analog Applications. Micromachines 2024, 15, 505. [Google Scholar] [CrossRef]

- Boole, G. An Investigation of the Laws of Thought on Which Are Founded the Mathematical Theories of Logic and Probabilities; original work published 1854; CreateSpace Independent Publishing Platform: Charleston, SC, USA, 2015. [Google Scholar]

- Shannon, C.E. A Symbolic Analysis of Relay and Switching Circuits. Trans. Am. Inst. Electr. Eng. 1938, 57, 713–723. [Google Scholar] [CrossRef]

- Kvatinsky, S.; Satat, G. Memristor-Based Material Implication (IMPLY) Logic: Design Principles and Methodologies. IEEE Trans. Very Large Scale Integr. Syst. 2014, 22, 2054–2066. [Google Scholar] [CrossRef]

- Rose, G.S.; Rajendran, J. Leveraging Memristive Systems in the Construction of Digital Logic Circuits. Proc. IEEE 2012, 100, 2033–2049. [Google Scholar] [CrossRef]

- Huang, Y.; Li, S.; Yang, Y.; Chen, C. Progress on Memristor-Based Analog Logic Operation. Electronics 2023, 12, 2486. [Google Scholar] [CrossRef]

- Ahmad, K.; Abdalhossein, R. Novel Design for A Memristor-based Full Adder Using A New IMPLY Logic Approach. J. Comput. Electron. 2018, 17, 1303–1314. [Google Scholar] [CrossRef]

- Teimoory, M.; Amirsoleimani, A.; Shamsi, J.; Ahmadi, A.; Alirezaee, S.; Ahmadi, M. Optimized Implementation of Memristor-Based Full Adder by Material Implication Logic. In Proceedings of the 2014 21st IEEE International Conference on Electronics, Circuits and Systems (ICECS), Marseille, France, 7–10 December 2014; pp. 562–565. [Google Scholar] [CrossRef]

- Cui, X.; Ma, X.; Lin, Q.; Zhang, H.; Zhang, Y.; Li, Z. Design of High-Speed Logic Circuits with Four-Step RRAM-Based Logic Gates. Circ. Syst. Signal Process. 2020, 39, 2822–2840. [Google Scholar] [CrossRef]

- Kang, S.M.; Leblebici, Y. CMOS Digital Integrated Circuits: Analysis and Design, 4th ed.; McGraw-Hill: New York, NY, USA, 2014. [Google Scholar]

- Srinivsarao, B.N.; Mahalakshmi, B. Design and Implementation of 17 Transistors Full Adder Cell. Int. J. Res. 2018, 5, 16846–16851. [Google Scholar]

- Li, X.; Liu, Y.; Wang, Z.; Wang, F.; Zeng, H.; Xu, H.; Chen, Y.; Wang, Y.; Zhu, H.; Zhang, Y.; et al. Non-Volatile Shift Register Based on Memristive Devices. Sci. Rep. 2016, 6, 25034. [Google Scholar] [CrossRef]

- Nair, V.V.; Reghuvaran, C.; John, D.; Choubey, B.; James, A. ESSM: Extended Synaptic Sampling Machine with Stochastic Echo State Neuro-Memristive Circuits. IEEE J. Emerg. Sel. Top. Circuits Syst. 2023, 13, 965–974. [Google Scholar] [CrossRef]

- Teimoory, M.; Amirsoleimani, A.; Ahmadi, A.; Alirezaee, S.; Salimpour, S.; Ahmadi, M. Memristor-Based Linear Feedback Shift Register Based on Material Implication Logic. In Proceedings of the 2015 European Conference on Circuit Theory and Design (ECCTD), Trondheim, Norway, 24–26 August 2015; pp. 1–4. [Google Scholar] [CrossRef]

- Guckert, L.; Swartzlander, E.E. Optimized Memristor-Based Multipliers. IEEE Trans. Circ. Syst. I 2017, 64, 373–38570. [Google Scholar] [CrossRef]

- Sun, J.; Li, Z.; Jiang, M.; Sun, Y. Efficient Data Transfer and Multi-Bit Multiplier Design in Processing in Memory. Micromachines 2024, 15, 770. [Google Scholar] [CrossRef]

- Chang, C.-H.; Chang, V.S.; Pan, K.H.; Lai, K.T.; Ng, J.-A.; Chen, C.Y.; Wu, B.; Lin, C.; Liang, C.-S.; Tsao, C.P.; et al. Critical Process Features Enabling Aggressive Contacted Gate Pitch Scaling for 3nm CMOS Technology and Beyond. In Proceedings of the 2022 International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 3–7 December 2022; pp. 27.1.1–27.1.4. [Google Scholar] [CrossRef]

- Chandrasekaran, N.; Ramaswamy, N.; Mouli, C. Memory Technology: Innovations Needed for Continued Technology Scaling and Enabling Advanced Computing Systems. In Proceedings of the 2020 IEEE International Electron Devices Meeting (IEDM), San Francisco, CA, USA, 12–18 December 2020; pp. 10.1.1–10.1.8. [Google Scholar] [CrossRef]

- Mutlu, O. Processing Data Where It Makes Sense in Modern Computing Systems: Enabling In-Memory Computation. In Proceedings of the 2019 Great Lakes Symp. VLSI (GLSVLSI), Tysons Corner, VA, USA, 9–11 May 2019; pp. 5–6. Available online: https://dblp.org/db/conf/glvlsi/glvlsi2019 (accessed on 26 June 2025).

- Kawahara, T.; Ito, K.; Takemura, R.; Ohno, H. Spin-Transfer Torque RAM Technology: Review and Prospect. Microelectron. Reliab. 2012, 52, 613–627. [Google Scholar] [CrossRef]

- Singh, G.; Chelini, L.; Corda, S.; Awan, A.J.; Stuijk, S.; Jordans, R.; Corporaal, H.; Boonstra, A.-J. Near-Memory Computing: Past, Present, and Future. Microprocess. Microsyst. 2019, 71, 102868. [Google Scholar] [CrossRef]

- Kim, J.; Lee, H.; Park, S.; Choi, D.; Jeong, Y.; Kwon, J.; Moon, H.; Seo, M.; Han, J.; Cho, K.; et al. A 1ynm 16Gb 4.8TFLOPS/W HBM-PIM with Bank-Level Programmable AI Engines. In Proceedings of the IEEE International Solid-State Circuits Conference (ISSCC), San Francisco, CA, USA, 19–23 February 2023. [Google Scholar]

- AMD Zen Core Architecture. Available online: https://www.amd.com/en/technologies/zen-core.html (accessed on 1 July 2025).

- Jung, S.; Lee, H.; Myung, S.; Kim, H.; Yoon, S.K.; Kwon, S.-W.; Ju, Y.; Kim, M.; Yi, W.; Han, S.; et al. A Crossbar Array of Magnetoresistive Memory Devices for In-Memory Computing. Nature 2022, 601, 211–216. [Google Scholar] [CrossRef]

- Wan, W.; Kubendran, R.; Schaefer, C.; Eryilmaz, S.B.; Zhang, W.; Wu, D.; Deiss, S.; Raina, P.; Qian, H.; Gao, B.; et al. A Compute-In-Memory Chip Based on Resistive Random-Access Memory. Nature 2022, 608, 504–512. [Google Scholar] [CrossRef] [PubMed]

- Sebastian, A.; Le Gallo, M.; Khaddam-Aljameh, R.; Eleftheriou, E. Memory Devices and Applications for In-Memory Computing. Nat. Nanotechnol. 2020, 15, 529–544. [Google Scholar] [CrossRef]

- Sun, Z.; Pedretti, G.; Ambrosi, E.; Bricalli, A.; Wang, W.; Ielmini, D. Solving matrix equations in one step with cross-point resistive array. Proc. Natl. Acad. Sci. USA 2019, 116, 4123–4128. [Google Scholar] [CrossRef]

- Kudithipudi, D.; Schuman, C.; Vineyard, C.M.; Pandit, T.; Merkel, C.; Kubendran, R.; Aimone, J.B.; Orchard, G.; Mayr, C.; Benosman, R.; et al. Neuromorphic Computing at Scale. Nature 2025, 637, 801–812. [Google Scholar] [CrossRef]

- Prezioso, M.; Merrikh-Bayat, F.; Hoskins, B.D.; Adam, G.C.; Likharev, K.K.; Strukov, D.B. Training and Operation of an Integrated Neuromorphic Network Based on Metal-Oxide Memristors. Nature 2015, 521, 61–64. [Google Scholar] [CrossRef] [PubMed]

- Kuzum, D.; Jeyasingh, R.G.D.; Lee, B.; Wong, H.-S.P. Nanoelectronic programmable synapses based on phase change materials for brain-inspired computing. Nano Lett. 2011, 12, 2179. [Google Scholar] [CrossRef] [PubMed]

- Huo, Q.; Yang, Y.; Wang, Y.; Lei, D.; Fu, X.; Ren, Q.; Xu, X.; Luo, Q.; Xing, G.; Chen, C.; et al. A Computing-in-Memory Macro Based on Three-Dimensional Resistive Random-Access Memory. Nat. Electron. 2022, 5, 469–477. [Google Scholar] [CrossRef]

- Le Gallo, M.; Khaddam-Aljameh, R.; Stanisavljevic, M.; Vasilopoulos, A.; Kersting, B.; Dazzi, M.; Karunaratne, G.; Brändli, M.; Singh, A.; Müller, S.M.; et al. A 64-Core Mixed-Signal in-Memory Compute Chip Based on Phase-Change Memory for Deep Neural Network Inference. Nat. Electron. 2023, 6, 680–693. [Google Scholar] [CrossRef]

| | STT-MRAM (SCM/DRAM) | MRAM Embedded | SOT (Cache) | PCM Stand-Alone | PCM Embedded | RRAM Stand-Alone | RRAM Embedded | FeRAM | FeFET |
|---|---|---|---|---|---|---|---|---|---|
| Capacity | >1 Gb | 10–100 Mb | >1 Mb | Gb | 10–100 Mb | ~Gb targeted | 1–10 Mb | Poor | Small |
| Scalability | Medium | Medium | Poor | Good | Good | Medium | Good | Medium | Poor |
| MLC | No | No | No | Possible | Possible | Possible | Possible | Possible | Possible |
| 3D Integration | No | No | No | Yes | Yes | Yes | Yes | No | No |
| Architecture | Xbar | Xbar | 3 terminals | Xbar | 1T1R | Xbar | 1T1R | 1T1R | 3 terminals |
| Retention | >1 yr at 100 °C | Automotive (150 °C, 10 yrs) | 85–100 °C | 85–100 °C | Automotive | 10 yrs at 85 °C | 10 yrs at >85 °C | 85–100 °C | SMT compliant |
| Latency | 10 ns | 10 ns | <1 ns | 100 ns | 100 ns | 100 ns | 100 ns | <20 ns | 5 ns |
| Power | pJ/bit | pJ/bit | fJ/bit | 10 pJ/bit | 10 pJ/bit, >200 µA | 1–10 pJ/bit | 1–10 pJ/bit, ~100 µA | 10 fJ/bit | 10 fJ/bit |
| Endurance (cycles) | 10¹⁰ | >10⁶ | >10¹⁰ | 10⁷ | 10⁶ | 10⁷ | 10⁶ | >10¹¹ (destructive read) | 10⁴–10⁵ |
| Variability | NA | NA | NA | Issue (drift) | Issue (drift) | Issue (variability, noise) | Issue (variability, noise) | Variability at small size | Variability at small size |
| Application space | DRAM | NVM | Cache | SCM (storage, memory) | MPU, MCU | SCM (storage, memory) | MPU, MCU | DRAM | Flash |
| Maturity (example products) | Products: Everspin, Avalanche (persistent SRAM) | Products: Avalanche, TSMC (offers STT-MRAM) | No product | Products: Intel/Micron, Intel | Products (sampling): STMicroelectronics | No product | Products: Panasonic, Dialog, TSMC | Products (PZT): Texas Instruments, Fujitsu, Cypress | Good |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Wong, H.; Li, W.; Zhang, J.; Bao, W.; Wu, L.; Liu, J. Driving for More Moore on Computing Devices with Advanced Non-Volatile Memory Technology. Electronics 2025, 14, 3456. https://doi.org/10.3390/electronics14173456