Abstract

Demand for OIS (Optical Image Stabilization) actuator modules, developed for shake correction technologies in industries such as smartphones, drones, IoT, and AR/VR, is increasing. To enable real-time and precise inspection of these modules, an AI algorithm that maximizes defect detection accuracy is required. This study proposes an unsupervised learning-based algorithm that is robust to noise and capable of real-time processing for accurate defect classification of OIS actuators in a smart factory environment. The proposed algorithm performs noise-reduction preprocessing, considering the sensitivity of small components and lighting imbalances, and defines three dynamic Regions of Interest (ROIs) to address positional deviations. A customized AutoEncoder (AE) is trained for each ROI, and defect classification is conducted based on reconstruction errors, followed by a final comprehensive decision. Experimental results show that the algorithm achieves an accuracy of 0.9944 and an F1 score of 0.9971 using only a camera without the need for expensive sensors. Furthermore, it demonstrates an average processing time of 2.79 ms per module, ensuring real-time capability. This study contributes to precise quality inspection in smart factories by proposing a robust and scalable unsupervised inspection algorithm.

1. Introduction

Most modern smartphones can automatically detect and compensate for user-induced shaking, enabling users to easily capture high-resolution images. The technology developed for this automatic detection and compensation is the Optical Image Stabilization (OIS) actuator. The OIS actuator is a key component that corrects minute movements of the camera lens to reduce image blur caused by motion. It is an essential part of high-performance multi-camera systems in flagship smartphones from major manufacturers such as Apple (iPhone) [1], Samsung (Galaxy) [2], Google (Pixel) [3], and Xiaomi (Mi) [4]. Recently, the demand for stabilization technology has been rapidly expanding beyond smartphones to various industrial sectors, including IoT-based imaging systems, drones, and AR/VR devices. With the growing demand in these sectors, three recent market research reports project that the OIS module market will continue its upward trend, showing a compound annual growth rate (CAGR) of approximately 10% [5,6,7].

With increasing demand, manufacturing processes are being automated and advanced based on smart factory systems; however, the defect classification stage still often depends on manual inspection. In the process of transitioning the defect inspection stage to smart factory-based automation and advancement, the following challenges arise. First, the system must effectively detect all types of rare defects that occur sporadically during production. Second, the OIS module is a highly sensitive and compact component that reacts to minor vibrations and lighting variations, making it difficult to remove noise and accurately classify defects without the use of expensive sensors. Third, real-time processing and limited computational resources on manufacturing lines restrict the deployment of large-scale AI models, necessitating the design of lightweight algorithms. Finally, in actual manufacturing environments, environmental variability (such as deviations in component positioning, changes in lighting conditions, and light reflections) leads to inconsistencies in the data. These issues lead to increased labeling costs, degraded model generalization performance, as well as challenges in the scalability and maintenance of AI systems. Therefore, to overcome these challenges and ensure consistent defect inspection, a robust and lightweight AI-based defect classification automation technology that can adapt to environmental variations is required.

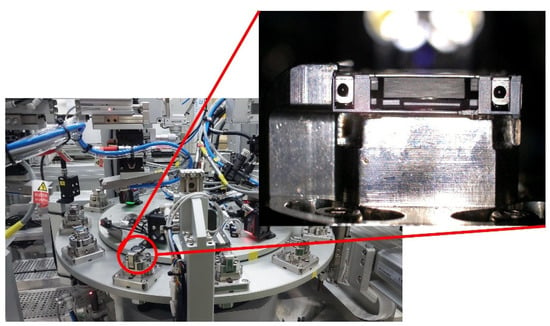

This paper aims to develop a robust, real-time defect classification algorithm for OIS actuator modules in smart factory environments, as illustrated in Figure 1. To achieve this, it proposes an unsupervised learning-based defect classification approach that combines image preprocessing with an AutoEncoder (AE) [8] for accurate defect detection. Vision-based inspection in real manufacturing settings remains challenging due to process noise, lighting imbalance, and positional variation of components. To address the environmental sensitivity of small components, a step-by-step preprocessing procedure is first applied to handle noise, lighting inconsistencies, and component placement variation. Then, to address positional uncertainty and to reduce the computational load for improved processing speed, an algorithm dynamically assigns three detection Regions of Interest (ROIs). Additional preprocessing is performed for each detection ROI based on its characteristics to finalize brightness correction and noise removal. The final preprocessed images are used as input for three customized AEs. Each AE, based on unsupervised learning [9], is trained only on normal data without labels and classifies its ROI based on the difference in reconstruction error between normal and defective samples. The individual classification results are then aggregated to determine the final defect status, completing the inspection of the OIS actuator module. The proposed algorithm demonstrates strong noise robustness using only a standard camera and achieves real-time performance through a lightweight design.
Moreover, its unsupervised learning foundation ensures adaptability to varying factory conditions, enhancing scalability and deployment potential. Experimental results show an accuracy of 98.03% for normal data, 100% for defective data, and an average processing time of 2.97 ms per module. In particular, the algorithm achieves an F1 score of 0.9971, successfully detecting all defective cases while maintaining high performance in classifying normal data. This research presents a reliable AI-based automated inspection approach capable of replacing manual visual inspection and contributes to the implementation of intelligent quality control in smart factories.

Figure 1.

Smart factory for OIS (Optical Image Stabilization) actuators.

The structure of the paper is as follows. Section 2 reviews previous studies on supervised and unsupervised defect detection methods in smart factory and manufacturing processes, as well as noise reduction preprocessing techniques. Section 3 presents the overall structure of the proposed algorithm. It separately describes the camera-based noise reduction preprocessing, the unsupervised defect classification process, and the data collection procedure. Section 4 outlines the experimental methods and results related to the preprocessing techniques and defect classification. Finally, Section 5 discusses the conclusions of the study, potential applications, and future research directions.

2. Related Work

Defect detection in industrial manufacturing processes is critical for ensuring product quality and reliability. Among the essential components used in various machines, screws are particularly important, making defect detection for these parts crucial. Accordingly, a number of studies have focused on developing convolutional neural network (CNN) models to classify screws with and without various types of defects [10]. Beyond simple components like screws, there are also studies targeting defect detection in specialized parts such as rotating machinery. One such study proposed a novel CNN architecture that incorporates Bayesian optimization and channel fusion techniques, achieving high defect detection performance for rotors [11]. Other research has focused on enhancing production efficiency by proactively identifying defects during the manufacturing process. To achieve early defect identification, a real-time processing architecture was designed [12]. In addition to real-time capability, another study introduced an incremental learning approach to improve automation in defect detection, surpassing the performance of existing models. This approach begins with unsupervised learning in the early stages, transitions to semi-supervised learning, and eventually adopts mixed supervision once sufficient data are available [13]. Moreover, defect detection has also been extended beyond component manufacturing to printed circuit boards (PCBs), where contextual information is leveraged for improved detection [14]. As research increasingly incorporates contextual data, some recent studies address the limitations of traditional large language models (LLMs) in visual defect recognition by adapting them for defect detection tasks [15].

Acquiring data for specialized components and equipment in various manufacturing processes remains a significant challenge. There have been studies focused on defect detection for specialized components or semiconductors, rather than general parts such as screws or bolts [16,17,18,19,20]. One study focuses on defect detection in gear wheels by selectively classifying normal and defective gear wheels using an AE, followed by clustering with the DBSCAN algorithm [21] to accurately identify defective regions. Beyond visible defect detection in simple PCBs, other studies address hidden or non-visible defects, using infrared sensors and deviation matrix clustering to classify anomalies. Another line of research targets the shearer, a key piece of equipment in coal mining production. Due to the complexity of such equipment, this study overcomes the limitation of relying solely on localized defect detection to assess overall functionality. In automotive manufacturing, research on anomaly detection in sheet metal glue lines aims to improve production and resource efficiency. For unsupervised anomaly detection, AEs are commonly employed, and patch-based AEs have been proposed to enhance detection performance [22,23]. These models reconstruct images in patch units or integrate the Vision Transformer (ViT) architecture [24] with AEs. Building upon this trend, a study proposed a simple yet efficient ViT-based architecture called ViTAD to address the multi-class unsupervised anomaly detection (MUAD) problem [25]. ViTAD demonstrates high training efficiency on datasets such as MVTec AD without relying on complex reconstruction processes. To overcome the limitations of AEs (which struggle with capturing global information) and CNNs, which may suffer from generalization errors, recent research has focused on leveraging ViT structures for unsupervised anomaly detection [26]. 
For example, a ViT-based model combining a memory module and a coordinate attention (CA) mechanism has shown strong performance on the MVTec AD and BeanTech AD datasets, enabling more precise global context understanding and reducing generalization errors. Another approach introduces a ViT-based model that learns normal patterns as vector-quantized (VQ) prototypes, effectively addressing the reconstruction ambiguity between normal and anomalous data in multi-class settings [27]. Furthermore, the Inductive Transformer (ITran) has been proposed to enhance anomaly detection accuracy in industrial applications [28]. ITran utilizes a multi-layer pyramid architecture and skip connections to extract multi-scale features and determine anomalies. By incorporating convolutional operations and inductive bias into the ViT backbone, ITran demonstrates efficient performance even on small-scale datasets. Some studies also focus on simultaneous defect localization, not just detection [29,30,31]. Techniques such as texture-based synthetic defect augmentation and hierarchical encoder-decoder structures are used to improve localization. Others propose decoder architectures for AEs that can identify defect locations without parameter learning. Additional research includes improvements to the traditional SSIM [32] reconstruction loss through AW-SSIM and the use of artificially generated defect data to boost anomaly detection performance [33]. A recent study has demonstrated effective anomaly detection without using embedding techniques, in contrast to the current trend showing that embedding methods can achieve high accuracy. This study improves memory efficiency and inference speed by leveraging paired low-light and high-light images to achieve high anomaly detection performance [34]. Finally, there is a study that overcomes the limitation of conventional defect detection models, which perform inference based on a fixed structure once trained. 
This study proposes a real-time dynamic model that continuously integrates the structure of normal data during manufacturing processes, in contrast to traditional static defect detection models [35].

Table 1 presents a performance comparison of major anomaly detection algorithms in the manufacturing domain. Most of these algorithms report their results using the MVTec AD dataset [36], where a higher area under the ROC curve (AUROC) value (closer to 1) indicates better anomaly detection performance. In manufacturing processes, the accuracy of anomaly detection depends not only on improvements in model architecture but also significantly on noise preprocessing. One study analyzed the causes of image quality degradation in PCB manufacturing environments and experimented with defect detection methods tailored to each scenario [37]. To address noise in the image reconstruction process, some research has enhanced anomaly detection performance using a noise-to-norm approach [38,39]. Another study investigates the frequency bias between the reconstruction of normal and defective data and, based on this bias, proposes a novel learning method that utilizes the high-frequency components of the data [40]. To eliminate noise, patch-level preprocessing methods have been proposed, using noise classifiers to remove noise in each image patch [41]. Additionally, a study introduced the fused directional distance (FDD) loss function, which considers distance and angular differences between training data to effectively suppress noise [42]. Lastly, a generative diffusion model was utilized to improve both defect localization and reconstruction performance. This study injects random noise into the data through a generative model and is designed to enable fine-grained pixel-level learning [43].

Table 1.

Summary of key algorithm performance for anomaly detection in manufacturing.

3. Algorithm

3.1. Background Knowledge for OIS Actuator

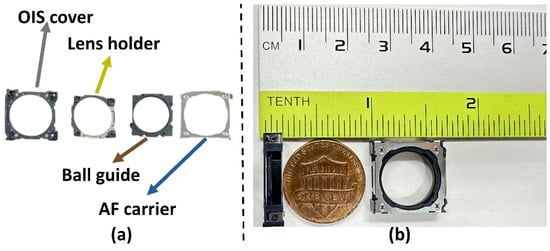

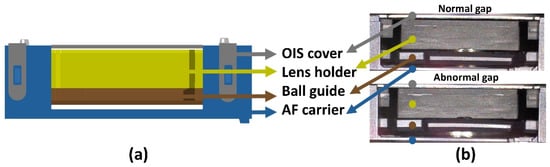

This study develops a real-time, unsupervised learning-based algorithm for classifying defects in OIS actuators, the modules that correct camera shake in smartphones. The OIS actuator is an essential module in high-performance camera systems and is composed of four miniature components. Figure 2a shows these components: the OIS cover, lens holder, ball guide, and autofocus (AF) carrier. A photo of the fully assembled module is presented in Figure 2b. The OIS actuator is a compact component, measuring approximately 0.67 inches in length and 0.1 inches in height. This study focuses on defect classification related to the structural gap observed on one of the four sides of the actuator, specifically the side known as the “hook2” surface. Figure 3a illustrates a simplified schematic of the hook2 surface, where the gray, yellow, brown, and blue regions represent the OIS cover, lens holder, ball guide, and AF carrier, respectively. In this study, three specific gaps on the hook2 surface are defined as defect classification targets: (1) the structural gap between the OIS cover and the lens holder, (2) the gap between the lens holder and the ball guide, and (3) the gap between the ball guide and the AF carrier. Figure 3b presents an actual image of the hook2 surface, visually highlighting the differences between normal and defective gaps, particularly in gap (3) between the ball guide and the AF carrier. Compared to a normal module, a defective module shows a widened gap between the ball guide and AF carrier and a narrowed gap between the lens holder and ball guide; both serve as key indicators of structural anomalies.

Figure 2.

Comparison image of OIS actuator components and dimensions: (a) Details of each component; (b) Size reference of OIS actuator.

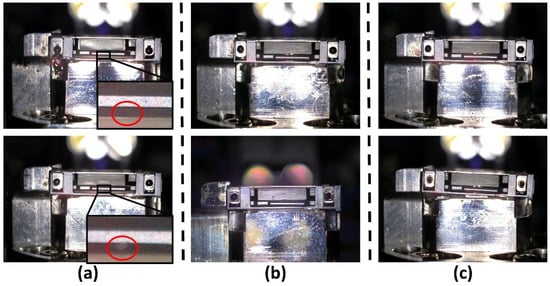

Figure 3.

Diagram and collected comparison images of normal and defective hook2 surface of OIS actuator: (a) Structural layout of the OIS actuator; (b) Comparison of gap conditions in the OIS actuator.

Analysis of the OIS actuator reveals that the three gaps exhibit distinct visual characteristics depending on their position, and each gap has a different allowable tolerance based on design specifications. According to the module design drawings provided by a partner company, the tolerance ranges for each gap are as follows: (1) the gap between the OIS cover and the lens holder is 0.10 mm (+0.05/−0.07), (2) the gap between the lens holder and the ball guide is 0.08 mm (±0.05), and (3) the gap between the ball guide and the AF carrier is 0.09 mm (+0.05/−0.06). As intended in the design, the actual module image shown in Figure 3b confirms that gap (1) between the OIS cover and lens holder appears relatively narrow, while gaps (2) and (3) are comparatively wider. However, due to structural characteristics and lighting conditions, each gap exhibits visual differences, making it difficult to effectively detect boundaries using a uniform preprocessing or detection method. Specifically, gap (1) is very narrow and uniform, making it sensitive to lighting variations and noise, thus requiring precise brightness correction. Gap (2), while structurally and visually uniform, is partially occluded by the jig used to fix the module, which blurs its boundary and affects detection accuracy. Finally, the gap (3) between the ball guide and the AF carrier is adjacent to the lower edge of the jig, resulting in light reflections and structurally irregular brightness. Therefore, it is essential to design differentiated preprocessing strategies and detection algorithms that consider the specific characteristics of each gap. By applying tailored preprocessing techniques (such as noise suppression and brightness normalization) optimized for each gap, the overall accuracy and reliability of the defect classification system can be significantly improved.
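The tolerance figures quoted above translate into acceptable ranges by simple arithmetic. The sketch below only works through that arithmetic; the dictionary keys and function names are hypothetical, and the paper's algorithm classifies gaps from image reconstruction errors rather than from measured millimetre values.

```python
# Nominal gap and asymmetric tolerance in millimetres, as quoted from the
# design drawings: (nominal, plus, minus). Key names are illustrative only.
GAP_SPECS = {
    "cover_to_holder": (0.10, 0.05, 0.07),
    "holder_to_ball_guide": (0.08, 0.05, 0.05),
    "ball_guide_to_af_carrier": (0.09, 0.05, 0.06),
}


def tolerance_band(nominal, plus, minus):
    """Return (lower, upper) limits in mm, rounded to suppress float noise."""
    return round(nominal - minus, 3), round(nominal + plus, 3)


def within_tolerance(gap_name, measured_mm):
    """True if a (hypothetical) measured gap lies inside its design band."""
    lower, upper = tolerance_band(*GAP_SPECS[gap_name])
    return lower <= measured_mm <= upper


for name, spec in GAP_SPECS.items():
    print(name, tolerance_band(*spec))
```

Under these figures all three gaps share the same lower limit of 0.03 mm, while the upper limits are 0.15 mm, 0.13 mm, and 0.14 mm, respectively.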

3.2. Proposed Algorithm

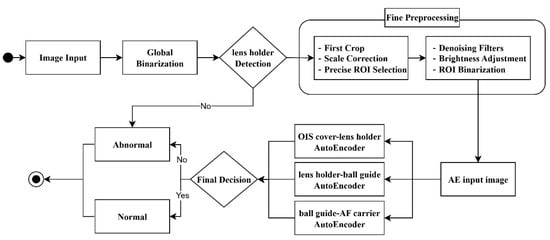

This study proposes a real-time unsupervised defect classification algorithm that combines a customized image preprocessing algorithm and an AE, designed to meet key constraints such as noise robustness, real-time performance, and scalability. The overall workflow of the proposed algorithm is illustrated in Figure 4 and Figure 5. First, the input image is binarized to detect the lens holder, and three ROIs corresponding to the target gaps are defined. For each ROI, noise reduction preprocessing (such as filtering and brightness normalization) is applied to generate the final preprocessed images, which are used as inputs to the AE. If lens holder detection fails, the image is immediately classified as defective. If detection succeeds, the three preprocessed ROI images are individually fed into their respective AEs, and a final defect classification is made based on thresholded reconstruction errors. Accordingly, the first stage (image preprocessing) and the second stage (unsupervised defect detection) together enable improvements in processing speed and classification accuracy, as well as enhanced model scalability.

Figure 4.

Proposed algorithm for the developed defect classification.

Figure 5.

Flowchart of the proposed algorithm.

3.3. High-Noise Reduction Technique for Small Components

In a smart factory environment, image preprocessing is essential for eliminating various irregular factors that reduce the accuracy of defect classification in OIS actuator images. The target gaps for classification are defined as the spaces between internal components, which appear on the image as boundaries between white-colored components and dark-colored gaps. However, due to various noise sources and lighting imbalances, it is difficult to accurately detect these gaps. Images collected in real-world settings often contain noise such as smudges, dust, and adhesive residue, all of which significantly hinder accurate gap boundary detection. Furthermore, the inspection system is enclosed in a glass case, making it prone to light reflections and uneven illumination, which can result in images that are either overly dark or bright. Additionally, OIS actuators are sometimes not properly seated in the jig, causing variations in the module’s position within the image. If ROIs are statically defined, the target components may fall outside the designated areas. These subtle positional deviations are particularly problematic given the small size of the module, making defect classification highly sensitive. To address these challenges, this study applies the following preprocessing steps to ensure consistent data quality: thresholding to enhance the gap visibility, noise removal using median blur and guided filter, brightness adjustment to correct lighting imbalance, and ROI realignment (cropping and resizing) to mitigate positional instability. Figure 6 illustrates examples of noise, lighting imbalance, and positional variability found in real images, highlighting the necessity of the proposed preprocessing algorithm.

Figure 6.

Necessity of preprocessing for collected data: (a) Comparison of smudge-induced differences; (b) Comparison of illumination-induced lighting differences; (c) Comparison of OIS actuator positional misalignment.

In the defect classification of OIS actuators, noise, lighting imbalance, and component position deviations significantly reduce the accuracy of gap-based classification, so a dedicated preprocessing algorithm is essential. The following examples illustrate why preprocessing is needed for each issue type. Figure 6a demonstrates how noise distorts the gap boundary by comparing detection of the gap between the ball guide and the AF carrier with and without noise. In the top image, the enlarged area beneath the ball guide clearly shows a clean, dark gap, while in the bottom image, the same area is partially obscured by a white smudge, distorting the gap appearance. Even such minor noise can be critical, as the gap appears only about 2 to 9 pixels wide in the original image (1280 × 960). Figure 6b compares brightness levels caused by installation environment differences. The top and bottom images were captured using two identically manufactured defect inspection devices, but due to differences in their installation environments, the brightness of the collected images varies. Therefore, brightness adjustment preprocessing is necessary to address lighting imbalance. Figure 6c compares well-seated and misaligned OIS actuators. Most of the time, the device is properly seated in the jig during operation, as shown in the top image; however, it is occasionally not properly seated, as illustrated in the bottom image. Even a slight misalignment that is difficult to detect with the naked eye can significantly affect the placement of the ROI for the gap, which is only about 2 to 9 pixels wide. To address this, dynamic ROI adjustment is necessary. 
Figure 6 visually demonstrates the effects of noise, lighting imbalance, and positional instability in actual images, supporting the necessity of the proposed step-by-step image preprocessing algorithm.

This study designs a step-by-step preprocessing algorithm to simultaneously achieve noise removal, rapid and accurate defect classification, and high defect detection performance in OIS actuator images. Targeting small and noise-sensitive OIS actuator data, the proposed method consistently extracts three ROIs regardless of the OIS actuator’s position within the image by using the lens holder as a reference point. For each of the three gap regions, precise preprocessing is applied. The detailed procedure is as follows.

- Global Binarization: The input image (1280 × 960) is binarized to emphasize the lens holder region.

- Lens Holder Detection: Starting from the estimated initial coordinates of the lens holder (defined through experiments as (637, 350)), the search expands pixel by pixel upward, downward, leftward, and rightward. The first run of consecutive black pixels encountered in each direction is taken as the boundary of the lens holder, enabling automatic detection of its region. The expected width and height of the lens holder are approximately 356 and 89 pixels, respectively; these serve as reference values, within a tolerance, for validating the detected region. The validated region is then used for subsequent preprocessing steps.

- Initial Cropping and Scaling: To exclude unnecessary surrounding areas from the input image, the image is cropped based on the center of the detected lens holder region. A 4× magnification is then applied to obtain an enlarged image.

- Gap-Specific ROI Assignment: From the enlarged image, three ROIs corresponding to the three target gaps are defined and extracted. The final dimensions of each preprocessed ROI are as follows:

  - (1) ROI for OIS cover–lens holder: 1410 × 90

  - (2) ROI for lens holder–ball guide: 1410 × 130

  - (3) ROI for ball guide–AF carrier: 1410 × 140

- Noise Removal: To effectively reduce noise while preserving edge structures, a combination of a median filter and a guided filter (both determined through experimental validation) is applied to the image. Specifically, a median filter with a kernel size of 5 is used to suppress salt-and-pepper noise, followed by a guided filter with a radius of 8 and a regularization parameter (ε) of 500 to further smooth the image while maintaining structural details.

- ROI Brightness Adjustment: To account for the unique visual characteristics of each ROI, brightness correction is performed individually per region. Specifically, only the pixels whose intensity values fall within a predefined brightness range (low_thresh to high_thresh) are selectively enhanced by a fixed increase_value. This targeted adjustment improves contrast and feature visibility while avoiding overexposure of already bright regions.

- ROI Binarization: Each ROI is binarized to compress the data and produce the final preprocessed images.
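Two of the steps above, lens holder detection and range-limited brightness adjustment, can be sketched with NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the black-run length `run=3`, and the relative size tolerance `rel_tol=0.15` are hypothetical, while the seed (637, 350), the expected size of 356 × 89 pixels, and the parameter names `low_thresh`, `high_thresh`, and `increase_value` come from the text.

```python
import numpy as np


def detect_lens_holder(binary, seed=(637, 350), run=3):
    """Find the white lens-holder region around seed=(x, y) in a binarized image.

    binary: 2-D uint8 array with white = 255 and black = 0.
    run: consecutive black pixels treated as a boundary (assumed value).
    Returns (left, top, right, bottom), or None when detection fails.
    """
    height, width = binary.shape
    x0, y0 = seed
    if binary[y0, x0] == 0:  # the seed must lie on the holder itself
        return None

    def scan(dx, dy):
        x, y, black = x0, y0, 0
        while 0 <= x < width and 0 <= y < height:
            black = black + 1 if binary[y, x] == 0 else 0
            if black == run:
                # Step back to the last white pixel before the black run.
                return x - dx * run, y - dy * run
            x, y = x + dx, y + dy
        return None  # reached the image border without finding a boundary

    hits = [scan(-1, 0), scan(1, 0), scan(0, -1), scan(0, 1)]
    if any(hit is None for hit in hits):
        return None
    return hits[0][0], hits[2][1], hits[1][0], hits[3][1]


def size_is_plausible(box, expected=(356, 89), rel_tol=0.15):
    """Validate the detected box against the expected holder size (assumed tolerance)."""
    left, top, right, bottom = box
    box_w, box_h = right - left + 1, bottom - top + 1
    return (abs(box_w - expected[0]) <= rel_tol * expected[0]
            and abs(box_h - expected[1]) <= rel_tol * expected[1])


def brighten_range(roi, low_thresh, high_thresh, increase_value):
    """Raise only pixels inside [low_thresh, high_thresh], clipping at 255."""
    out = roi.astype(np.int16)  # widen first so the addition cannot wrap around
    mask = (roi >= low_thresh) & (roi <= high_thresh)
    out[mask] += increase_value
    return np.clip(out, 0, 255).astype(np.uint8)


# Synthetic check: a 356 x 89 white block that contains the seed pixel.
image = np.zeros((960, 1280), dtype=np.uint8)
image[306:395, 459:815] = 255
box = detect_lens_holder(image)
print(box, size_is_plausible(box))
```

Returning `None` on a failed scan mirrors the rule stated in Section 3.2 that an image is classified as defective when lens holder detection fails.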

3.4. Real-Time Precision Defect Classification Based on Unsupervised Learning

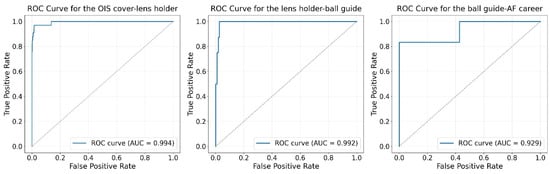

The final images produced through the proposed preprocessing algorithm effectively address key constraints in the manufacturing process, such as noise, lighting imbalance, and positional deviation. Precise defect classification under such environmental variability should also keep labeling costs to a minimum. An unsupervised learning-based AE is suitable on both counts: it is trained without labels, and it responds sensitively to structural anomalies in the gap regions. To quantitatively validate this, Figure 7 presents the Receiver Operating Characteristic (ROC) curves and the Area Under the Curve (AUC) values obtained through exploratory analysis using AEs on the three final preprocessed images. In the ROC curves, the vertical axis represents the True Positive Rate (TPR), and the horizontal axis represents the False Positive Rate (FPR). TPR indicates the proportion of actual defective samples correctly identified as defective, while FPR denotes the proportion of normal samples incorrectly classified as defective. As shown in Figure 7, the ROC curves bow toward the upper-left corner, well above the baseline of a random classifier (y = x), indicating that high TPR and low FPR were achieved simultaneously. All AEs achieved AUC values above 0.9, demonstrating that reconstruction errors alone, computed on the proposed preprocessed images, can reliably distinguish between normal and defective samples. These results experimentally validate that the unsupervised AE-based approach is well-suited for detecting structural anomalies in gap regions within this manufacturing process.

Figure 7.

ROC (Receiver Operating Characteristic) curves and AUC (Area Under the Curve) scores for defect detection using AE based on preprocessed image types.
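To make the TPR, FPR, and AUC definitions above concrete, the following sketch builds an ROC curve by sweeping a threshold over reconstruction errors and integrates it with the trapezoidal rule. The function names and the sample values are hypothetical and are not taken from the paper's data; defective samples are treated as the positive class, as in the definitions above.

```python
def roc_points(errors, labels):
    """Return (FPR, TPR) points for a threshold swept from high to low.

    errors: reconstruction error per sample (higher = more anomalous).
    labels: 1 for defective (positive class), 0 for normal.
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    ranked = sorted(zip(errors, labels), key=lambda pair: -pair[0])
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        j = i
        # Samples with identical error cross the threshold together.
        while j < len(ranked) and ranked[j][0] == ranked[i][0]:
            tp += ranked[j][1]
            fp += 1 - ranked[j][1]
            j += 1
        points.append((fp / negatives, tp / positives))
        i = j
    return points


def auc(points):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Illustrative values only: three defective and three normal samples.
sample_errors = [0.9, 0.8, 0.35, 0.3, 0.2, 0.1]
sample_labels = [1, 1, 0, 1, 0, 0]
print(auc(roc_points(sample_errors, sample_labels)))
```

In this toy example one normal sample has a higher error than one defective sample, so eight of the nine defective-normal pairs are ranked correctly and the AUC is 8/9.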

In this study, a defect classification algorithm centered on an unsupervised learning-based AE was designed to satisfy both real-time performance and model scalability requirements in a smart factory environment. AEs are well-suited for this task due to their ability to operate without labeled data, low computational cost, and adaptability to environmental changes. These characteristics make AEs ideal for real-time inference on large-scale datasets while maintaining high scalability. For practical defect classification processes, the classification and result transmission must be completed within 0.5 s per image, as required by the partner company. Considering image transfer time, inference time, and result transmission, the entire process must be completed within approximately 0.2 to 3 s per image. Therefore, the fast inference capability of AE-based architectures makes them advantageous for deployment in real-world applications. The proposed algorithm follows a structured procedure. First, a step-by-step preprocessing algorithm is applied to generate images optimized for AE input. The AE then reconstructs these input images, and defect classification is performed based on the reconstruction error between the input and the output images. Only normal data are used for training the AE, while a mixed dataset containing both normal and defective samples is used during the testing phase. Defect classification is performed by setting an optimal threshold based on the AUC, allowing for flexible decision-making without relying on a fixed criterion. This architecture contributes effectively to the development of advanced quality inspection systems in smart factories, offering high-speed inference, label-free training, and strong adaptability to environmental variability. The detailed procedure for AE-based reconstruction-driven defect classification is presented as follows.

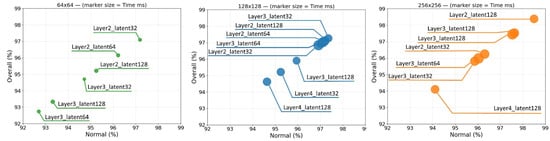

- Each of the three preprocessed ROI images obtained from the step-by-step preprocessing procedure is fed into its own customized AE, tailored to the corresponding gap region. The architectures of these AEs were optimized based on extensive experimentation: the AE for the OIS cover–lens holder gap uses a 256 × 256 input size with two layers and a latent dimension of 128; the AE for the lens holder–ball guide gap employs a 128 × 128 input with four layers and a latent dimension of 32; and the AE for the ball guide–AF carrier gap adopts a 128 × 128 input with four layers and a latent dimension of 64. These model configurations were selected to balance reconstruction accuracy and computational efficiency for each specific ROI.

- The reconstruction error between the reconstructed image and the original AE input image is calculated.

- Defect classification is performed based on a threshold value defined in advance through AUC-based experiments.

- Finally, an AND operation is applied to the three classification results from the AE models. The final output is classified as normal only if all three results are normal; otherwise, the final result is classified as defective.
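The decision logic in the steps above can be sketched as follows. This is a minimal illustration that assumes mean squared error as the reconstruction error (the text does not name the metric) and uses hypothetical function names and threshold values; the AEs themselves are replaced by precomputed reconstructions.

```python
import numpy as np


def reconstruction_error(original, reconstructed):
    """Mean squared error between AE input and output (MSE is an assumption)."""
    diff = original.astype(np.float64) - reconstructed.astype(np.float64)
    return float(np.mean(diff ** 2))


def classify_module(roi_pairs, thresholds):
    """Combine the three per-ROI decisions with a logical AND.

    roi_pairs: three (input, reconstruction) array pairs, or None when
        lens holder detection failed during preprocessing.
    thresholds: one reconstruction-error threshold per ROI.
    """
    if roi_pairs is None:
        return "defective"  # detection failure is treated as a defect
    roi_is_normal = [
        reconstruction_error(x, x_hat) <= threshold
        for (x, x_hat), threshold in zip(roi_pairs, thresholds)
    ]
    # Normal only if every ROI is normal; any defective ROI fails the module.
    return "normal" if all(roi_is_normal) else "defective"


# Illustrative data: small arrays stand in for the preprocessed ROI images.
clean = np.zeros((4, 4))
good_pair = (clean, clean + 0.1)  # error 0.01
bad_pair = (clean, clean + 1.0)   # error 1.0
example_thresholds = [0.05, 0.05, 0.05]  # hypothetical values
print(classify_module([good_pair, good_pair, good_pair], example_thresholds))
print(classify_module([good_pair, bad_pair, good_pair], example_thresholds))
```

Requiring all three ROIs to pass favors catching defects over avoiding false alarms, since a single ROI above its threshold is enough to reject the module.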

4. Experiments and Results

This chapter describes the experimental environment and the comparative evaluations conducted to assess the performance of the developed image preprocessing algorithm and the unsupervised learning-based defect classification algorithm. The experiments were carried out on a high-performance computing system equipped with an Intel i9-13900K processor (24 cores @ 3.00 GHz, 32 threads), 32 GB of DDR5 RAM, and an NVIDIA GeForce RTX 4090 GPU with 24 GB of GDDR6X memory. The software environment was based on Windows 11, with CUDA 12.4 for GPU acceleration. The experiments were implemented in Python 3.10 and PyTorch 2.4.0, using both VS Code and JupyterLab. As detailed in Section 4.1, approximately 10,000 images were collected using an automated defect classification device provided by a partner company. For AE training, the same hyperparameters were applied throughout: a batch size of 16, a learning rate of 1 × 10⁻⁴, and 50 training epochs. The baseline models used for comparison (UniNet [44], ACR-DSVDD [45], SLAD [46], and RD4AD [47]) were implemented using their official source code with default hyperparameter settings. To evaluate the performance of the proposed algorithm in defect classification, five quantitative metrics were used: accuracy, precision, recall, F1 score, and average processing time. The experiments are presented in the following order. First, the data collection process using the automated defect classification device is described. Second, a comparative study of the step-by-step preprocessing methods is presented. Third, experiments on unsupervised learning-based defect detection are conducted. Finally, the defect classification performance of the proposed algorithm is evaluated.

4.1. Data Collection

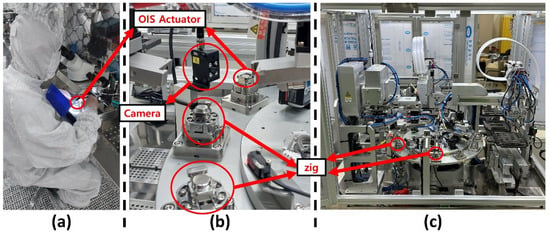

For the training and testing of the defect classification algorithm in this study, approximately 10,000 images of the hook2 surface of OIS actuators were collected from an actual smart factory environment. Among the available formats (RAW, PNG, and JPEG), JPEG was selected to balance storage efficiency and image quality. The normal and defective data were collected directly using an automated defect classification device developed by a partner company, which was designed to replace the traditional human-centered inspection process.

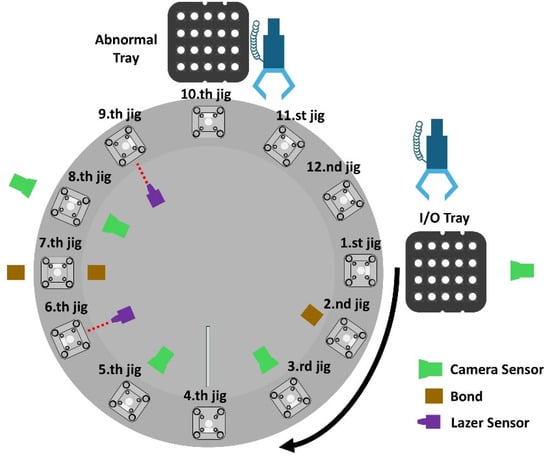

Figure 8a illustrates the conventional inspection method, in which a worker visually inspects each module through a microscope. Figure 8b shows a close-up photograph of a camera equipped with an integrated flash capturing an OIS actuator mounted in the jig. Figure 8c presents the automated defect classification device, which consists of 12 jigs, five fixed cameras, two laser sensors, input/output trays, and a separate reject tray. The device operates as follows: a robotic arm picks one of the 20 OIS actuator modules placed in a 5 × 4 tray, captures an image with the first camera, and then places the module into the first jig. The jigs are mounted on a rotating platform and pass through 12 stages, including adhesive dispensing, filter attachment, imaging, orientation adjustment, and gap inspection. At the eighth jig position, images of both the hook1 and hook2 surfaces are captured simultaneously and are directly used for gap-based defect classification. At the ninth jig, a laser sensor checks the orientation of the module, and at the tenth jig, modules are sorted based on the final defect classification result: a module classified as normal is rotated back to the starting point and placed onto the input/output tray, whereas a defective one is transferred to the reject tray at the tenth jig. The entire cycle takes approximately one minute. Figure 9 presents the full schematic of the automated defect classification device. This precise and fully automated setup enables the consistent collection of high-quality data, supporting the development of a reliable and practical defect classification algorithm under real manufacturing conditions.

Figure 8.

Comparison of manual and automated defect classification systems for OIS actuators in a smart factory environment: (a) Cleanroom inspection; (b) Camera–jig close-up; (c) Inspection overview.

Figure 9.

Overview of the rotary jig-based process for automated defect classification of OIS actuators.

4.2. Results of High-Noise Reduction for Small Components

This study proposes a method to enhance defect classification accuracy and reduce unnecessary computational load by dynamically assigning ROIs for gap analysis relative to the lens holder. Instead of complex deep learning or machine learning techniques, the method uses a pixel color-based edge detection approach via binarization, which keeps the processing structure simple while enabling fast and reliable detection of the lens holder. To maximize lens holder detection performance, experiments were conducted with the binarization threshold ranges summarized in Table 2, which are defined by the threshold values 51, 102, 153, 204, and 255. Detection performance was assessed using standard metrics derived from the confusion matrix: precision, recall, accuracy, and F1 score. The threshold range of 102–255 produced the highest number of true positives (TP), with precision = 1.000, recall = 0.9995, accuracy = 0.9995, and F1 score = 0.9997, the best overall performance. In contrast, with the threshold range of 204–255, detection failures led to zero true positives, leaving precision and F1 score undefined. These results show that selecting an appropriate binarization threshold is critical for accurate lens holder detection. In conclusion, with the optimal binarization threshold range, the lens holder can be accurately detected and used as a reference to dynamically assign ROIs, thereby improving both the computational efficiency and the classification accuracy of the entire algorithm.
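The idea of detecting a reference region by binarization and then placing ROIs relative to it can be sketched as below. This is an illustrative sketch under stated assumptions, not the paper's detector: the function names, the rule that pixels inside the range form the reference region, the bounding-box localization, and the fixed offsets are all hypothetical.

```python
def binarize(gray, low, high):
    """Return a 0/1 mask that is 1 where low <= pixel <= high."""
    return [[1 if low <= px <= high else 0 for px in row] for row in gray]


def bounding_box(mask):
    """Return (top, left, bottom, right) of the 1-pixels, or None if there are none."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    cols = [x for row in mask for x, v in enumerate(row) if v]
    if not rows:
        return None  # detection failure: nothing fell inside the range
    return min(rows), min(cols), max(rows), max(cols)


def roi_from_reference(box, dy, dx, height, width):
    """Place an ROI at a fixed (hypothetical) offset from the box's top-left corner."""
    top, left, _, _ = box
    return top + dy, left + dx, top + dy + height, left + dx + width


# Toy 4 x 4 grayscale image with a bright 2 x 2 block standing in for the reference part.
gray = [
    [10, 10, 10, 10],
    [10, 200, 220, 10],
    [10, 210, 230, 10],
    [10, 10, 10, 10],
]
box = bounding_box(binarize(gray, 102, 255))
print(box)                                      # (1, 1, 2, 2)
print(bounding_box(binarize(gray, 240, 255)))   # None
print(roi_from_reference(box, 2, 0, 3, 4))      # (3, 1, 6, 5)
```

The second call shows how an overly narrow range yields no detected pixels at all, which is the kind of failure that leaves precision and F1 score undefined.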

Table 2.

Comparison of lens holder detection performance according to binarization threshold range.

In this study, a preprocessing algorithm combining a median filter and a guided filter was adopted to maximize the accuracy of the AE-based defect classification model. This combination demonstrated the most effective noise reduction among the tested techniques while maintaining real-time processing capability. Specifically, after defining the ROIs and applying 4× magnification to the cropped gap areas, the combined filtering approach removed both magnification-induced noise and noise originating from the imaging environment. Complex deep learning-based denoising methods were excluded because of real-time processing constraints, and the focus was placed on lightweight, filter-based algorithms. A total of 30 preprocessing methods were compared, including thresholding, basic filtering, morphological operations, interpolation techniques, average filters, weighted average filters, and rank-order filters. These methods were tested both individually and in various combinations. The combined filtering methods selected for evaluation were those that outperformed single methods without compromising real-time performance. Figure 10 compares the results obtained by applying all 30 algorithms to the same cropped image. The evaluation was conducted visually by both component experts and computer vision specialists, who confirmed that the median + guided filter combination yielded the best results. While this comparison was based on images cropped to include the entire component, in actual deployment the chosen preprocessing combination is applied individually to each of the three gap ROIs before the result is used as input to the AE model. In conclusion, adopting this preprocessing algorithm, which balances real-time performance and denoising precision, provides a strong foundation for maximizing the effectiveness of the unsupervised AE-based model.
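To illustrate the first stage of the selected combination, the sketch below implements a plain median filter in pure Python. It is only a didactic version: the function name, the window radius, and the border handling are assumptions, and the guided-filter stage and the 4× magnification are deliberately omitted. A production pipeline would more likely rely on an optimized image-processing library.

```python
from statistics import median


def median_filter(img, radius=1):
    """Apply a (2*radius+1) x (2*radius+1) median filter to a 2D image.

    At the borders the window is clipped to the pixels that exist.
    """
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [
                img[j][i]
                for j in range(max(0, y - radius), min(h, y + radius + 1))
                for i in range(max(0, x - radius), min(w, x + radius + 1))
            ]
            row.append(median(window))
        out.append(row)
    return out


# A single bright outlier (impulse noise) in a flat region is removed.
noisy = [
    [10, 10, 10],
    [10, 255, 10],
    [10, 10, 10],
]
print(median_filter(noisy)[1][1])  # 10
```

A median filter suppresses isolated outliers like this one without averaging them into their neighbors, which is why it is a common first step before an edge-preserving smoother.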

Figure 10.

Comparison results of 30 image preprocessing techniques applied to ROI-based OIS actuator.

4.3. Experimental Results of Unsupervised Learning-Based Defect Classification

For each of the three gap positions, an optimized AE model architecture was designed, and the individual predictions were integrated using an AND operation to complete the final defect classification algorithm. The final evaluation showed an accuracy of 98.03% (5566/5678) for normal samples and 100% (50/50) for defective samples, resulting in an overall accuracy of 98.04% (5616/5728). To determine the optimal AE structure for defect classification using the preprocessed images (processed through noise reduction and dynamic ROI assignment), a series of experiments was conducted. The number of AE layers was varied (2, 3, and 4), the latent space dimension was set to 32, 64, or 128, and input image sizes of 64 × 64, 128 × 128, and 256 × 256 were tested. Approximately 5000 images were split into training and test datasets at an 8:2 ratio. Model performance was evaluated using accuracy on normal images, accuracy on defective images, and overall accuracy. The test dataset included 1137 normal and 33 defective images. Table 3 presents the defect classification performance of the AE model for the OIS cover–lens holder gap, including layer depth, latent space size, input image dimensions, AUC-derived threshold values, and average inference time per image. Among all configurations, the structure with an input size of 128 × 128, four layers, and a latent space of 64 yielded the highest overall accuracy of 98.46%. However, a key requirement from the partner company is to prioritize the accuracy of defective sample detection. Therefore, a configuration with an input image size of 256 × 256, two layers, and a latent space dimension of 128 was adopted. Although another configuration with a 256 × 256 input, two layers, and a latent space of 64 detected all but one defective image, its lower normal accuracy made it unsuitable.
The overall results in Table 3 show that larger input sizes, deeper layers, or larger latent spaces do not necessarily guarantee better performance. Table 3 also reports the Wilson 95% confidence intervals for the normal accuracy of each configuration (e.g., 1120/1137 = 98.50%, 95% CI [97.62, 99.06]). The Wilson interval is computed from the sample size n, the number of successes x, and the critical value z corresponding to the desired confidence level; the formulas are provided in Equations (1) and (2). Because the normal class has a sufficiently large sample size (n = 1137), the intervals are narrow, indicating stable estimates and allowing differences to be interpreted at the first decimal place. Since the best configuration cannot be predicted from model size alone, similar experiments were conducted for the other two gaps (lens holder–ball guide and ball guide–AF carrier) to identify their respective optimal AE architectures. For the lens holder–ball guide gap, the selected model (input size 128 × 128, four layers, and a latent space of 32) achieved 98.42% accuracy for normal samples (1123/1141), 83.33% for defective samples (5/6), and 98.34% overall accuracy. For the ball guide–AF carrier gap, the selected model (input size 128 × 128, four layers, and a latent space of 64) achieved 99.91% accuracy for normal samples (1141/1142), 75.00% for defective samples (3/4), and 99.83% overall accuracy. In conclusion, optimal AE-based defect classification models were designed for all three gaps (OIS cover–lens holder, lens holder–ball guide, and ball guide–AF carrier), and their predictions were integrated using an AND operation for the final classification. These results quantitatively demonstrate the robustness of the approach against environmental noise and positional uncertainty, with high reliability in defect detection under real manufacturing conditions.
In Table 3, the AE configuration with the highest defective detection performance (32 of 33 defective images detected) exhibited significantly lower accuracy on normal images, making it unsuitable as the optimal AE structure. A total of 21 configurations achieved the next-best result of 31 of 33 defective images detected. Their performance, grouped by image size, is visualized as a scatter plot in Figure 11. The x-axis represents normal detection accuracy, the y-axis denotes overall accuracy, and the marker size reflects inference time, with smaller markers indicating faster inference. Among these 21 configurations, the model with an image size of 256 × 256, two encoder layers, and a latent dimension of 128 shows the best overall performance.
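The confidence interval quoted for Table 3 can be reproduced with the standard Wilson score interval. The sketch below assumes that the paper's Equations (1) and (2) correspond to this standard form and that z = 1.96 is used for the 95% level; the function name is hypothetical.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 for about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


# Example from the text: 1120 of 1137 normal images classified correctly.
low, high = wilson_interval(1120, 1137)
print(f"{100 * low:.2f}, {100 * high:.2f}")  # 97.62, 99.06
```

Applying the same function to the defective class (n = 33) gives a much wider interval, which is why differences in defective accuracy between configurations should be read more cautiously than differences in normal accuracy.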

Table 3.

Performance and inference speed comparison of AE architectures for defect classification of the OIS cover–lens holder gap.

Figure 11.

Scatter plot of normal/overall accuracy for configurations with 31 correct detections of defective samples (grouped by image size, marker size = time (ms)).

To validate the proposed unsupervised learning-based defect classification algorithm, a comparative experiment was conducted against a machine learning-based gap classification algorithm that used the same preprocessing procedure. The test dataset for this comparison consisted of 5728 images (5678 normal and 50 defective samples). The proposed algorithm achieved an overall accuracy of 98.04%, an improvement of 3.47 percentage points over the 94.57% accuracy of the machine learning-based method. Notably, the proposed algorithm achieved 100% accuracy on defective samples, 14 percentage points higher than the 86% of the machine learning approach. For normal samples, the proposed method also outperformed the baseline, achieving 98.03% accuracy compared to 94.65%, an improvement of 3.38 percentage points. The baseline machine learning method followed the same step-by-step preprocessing (including noise reduction and ROI assignment), but instead of using an AE, it classified defects based on a length estimate derived from the gap boundary positions. Specifically, the algorithm detects black-to-white pixel transitions to estimate the start and end coordinates of the gap at the pixel level. From these coordinates, the average length of the gap is calculated and converted into millimeters. Defect classification is then performed by determining whether the measured length falls within the acceptable range for a normal sample. The results from all three gaps were combined using an AND operation to determine the final defect classification. The comparison indicates that the unsupervised AE-based approach is more sensitive to structural anomalies than the simple length-based machine learning method and is more robust to noise and positional variation.
This experiment quantitatively confirms the effectiveness of the proposed unsupervised learning-based classification system, even under unstructured environmental conditions, and supports its practical applicability in real-world manufacturing settings.
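The length-based baseline described above can be sketched as follows. This is a simplified reading of the description rather than the baseline's actual code: it assumes that the gap appears as a white run in a binarized ROI, that one run per row is measured, and that the scale factor and the acceptance range (all hypothetical values here) are known in advance.

```python
from statistics import fmean


def gap_length_px(row):
    """Length of the first white run in a binary row.

    The run starts at a black-to-white transition and ends at the next
    white-to-black transition; None is returned if no complete run exists.
    """
    start = None
    for x in range(1, len(row)):
        if start is None and row[x - 1] == 0 and row[x] == 1:
            start = x
        elif start is not None and row[x - 1] == 1 and row[x] == 0:
            return x - start
    return None


def mean_gap_mm(binary_roi, mm_per_px):
    """Average gap length over all rows where a gap was found, in millimeters."""
    lengths = [n for n in map(gap_length_px, binary_roi) if n is not None]
    if not lengths:
        return None
    return fmean(lengths) * mm_per_px


def classify_gap(length_mm, lower_mm, upper_mm):
    """Normal only when the measured length lies inside the accepted range."""
    ok = length_mm is not None and lower_mm <= length_mm <= upper_mm
    return "normal" if ok else "defective"


roi = [
    [0, 0, 1, 1, 1, 0],  # gap of 3 pixels
    [0, 1, 1, 1, 1, 0],  # gap of 4 pixels
    [0, 0, 0, 0, 0, 0],  # no gap found in this row
]
length = mean_gap_mm(roi, mm_per_px=0.01)  # hypothetical scale
print(classify_gap(length, lower_mm=0.03, upper_mm=0.04))  # normal
```

A rule of this kind depends entirely on clean transitions, so a few noisy pixels can shift the measured boundaries; this is consistent with the lower robustness reported for the baseline.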

This study compares the proposed unsupervised learning-based defect classification algorithm with existing deep learning-based unsupervised anomaly detection and defect classification algorithms used in industrial manufacturing processes. Table 4 summarizes the performance of each algorithm in terms of accuracy, precision, recall (sensitivity), F1 score, and average inference time. All baseline algorithms used in the comparison are unsupervised deep learning models trained solely on normal data, without requiring labeled samples. Compared with the six baseline algorithms, the proposed algorithm achieved the highest performance across all key metrics: accuracy (0.9944), precision (0.9943), recall (1.0000), and F1 score (0.9971). ACR-DSVDD [45] and SLAD [46] (unsupervised deep learning-based methods that use distributional shifts and scale-guided representation learning, respectively) also showed relatively strong accuracy, at 0.9918 and 0.9923, but still fell short of the proposed method. UniNet [44], a versatile student–teacher-based framework applicable to both supervised and unsupervised settings, and RD4AD [47], which uses reverse knowledge distillation to amplify representational discrepancies on anomalous inputs, were also included in the evaluation. In addition, classical reconstruction-based models, namely AE [8] and Variational Autoencoder (VAE) [48], both trained on normal data and detecting anomalies through reconstruction error, were assessed for comparison. To further investigate each algorithm's ability to identify actual defective samples during inspection, accuracy was calculated separately for defective cases. Among the six baseline algorithms, UniNet achieved 24% (12/50), ACR-DSVDD 6% (3/50), SLAD 46% (23/50), RD4AD 76% (38/50), AE 64% (32/50), and VAE 50% (25/50), with none exceeding 90%.
Furthermore, all baseline methods reported recall values below 0.9. In contrast, the proposed algorithm achieved 100% accuracy (50/50) on defective samples and a recall of 1.0000, demonstrating strong defect detection capability, which is attributed in particular to its noise reduction preprocessing. These results confirm that the proposed method is highly specialized for defect detection. Moreover, its F1 score was the highest among all compared methods, indicating superior overall performance in distinguishing between normal and defective cases.
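The relationship between the reported precision, recall, and F1 score, and the defective-sample detection rates listed above, can be checked with a few lines of arithmetic. The helper names below are hypothetical; the input values are the ones quoted in the text.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def detection_rate(detected, total):
    """Fraction of defective samples that were flagged as defective."""
    return detected / total


# Reported precision and recall of the proposed algorithm.
print(round(f1_score(0.9943, 1.0000), 4))  # 0.9971

# Defective samples detected by each baseline, out of 50.
detected = {"UniNet": 12, "ACR-DSVDD": 3, "SLAD": 23, "RD4AD": 38, "AE": 32, "VAE": 25}
rates = {name: detection_rate(k, 50) for name, k in detected.items()}
best = max(rates, key=rates.get)
print(best, rates[best])  # RD4AD 0.76
```

Even the strongest baseline on this measure misses roughly a quarter of the defective modules, which is the gap the separate defective-sample accuracy is meant to expose.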

Table 4.

Performance comparison of unsupervised learning-based algorithms for anomaly detection and defect classification.

5. Conclusions and Future Work

This study proposed an automated defect classification algorithm that combines a specialized image preprocessing algorithm and an unsupervised learning-based classification model tailored for small components such as OIS actuators. Since defect detection during the inspection process plays a critical role in ensuring manufacturing yield and reliability, the developed algorithm is designed to achieve strong overall performance while exhibiting high sensitivity to defective cases. Experimental results demonstrated classification accuracies of 98.03% for normal samples and 100% for defective samples, with an F1 score of 0.9971, successfully detecting all defective instances. In addition, the average processing time per module satisfied real-time requirements. By integrating effective noise reduction techniques with unsupervised defect classification, the proposed method addresses key constraints in smart factory environments, including noise, environmental variability, and real-time processing demands. As a result, manual inspection of OIS actuators can be replaced with a faster, more precise, and fully automated quality control process. Future research will aim to enhance the generalizability of the proposed algorithm by extending gap-based defect classification beyond the currently analyzed hook2 surface to the other three sides of the OIS actuator. In addition, to reflect a key requirement from the partner company that the algorithm be applicable to other compact OIS modules, the proposed method was designed using an unsupervised learning framework. This approach enables adaptability without the need for labeled data. This study focuses on structural anomaly detection of gap morphology in the target component. Building on this foundation, we plan to extend the algorithm to structural gap-morphology anomaly detection across a broader range of compact OIS modules, thereby further strengthening its generalizability and practical applicability. 
Furthermore, we will continue to conduct studies that consolidate its generalizability and empirically validate its applicability in real manufacturing environments.

Author Contributions

Conceptualization, S.L., T.K., S.K., J.A. and N.K.; methodology, S.L. and T.K.; software, S.L. and T.K.; validation, S.L. and T.K.; formal analysis, S.L. and T.K.; investigation, S.L. and T.K.; resources, S.K. and N.K.; data curation, S.L. and T.K.; writing—original draft preparation, S.L. and T.K.; writing—review and editing, S.L., T.K., S.K. and J.A.; visualization, S.L. and T.K.; supervision, J.A. and N.K.; project administration, S.L.; funding acquisition, J.A. and N.K. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education (RS-2020-NR049579). This work was also supported by the IITP (Institute of Information & Communications Technology Planning & Evaluation)-ICAN (ICT Challenge and Advanced Network of HRD) grant funded by the Korean government (Ministry of Science and ICT) (IITP-2025-RS-2024-00436954).

Data Availability Statement

The datasets presented in this study are available in this article. Requests to access the datasets should be directed to the authors of the study.

Acknowledgments

The authors sincerely thank one another for their collaboration throughout this research. In particular, they extend their heartfelt appreciation to their advisors for their invaluable insights, continuous support, and guidance, all of which played a crucial role in the successful completion of this study.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| OIS | Optical Image Stabilization |
| AF | AutoFocus |
| ROI | Region of Interest |
| ROC | Receiver Operating Characteristic |
| AUC | Area Under the Curve |
| TPR | True Positive Rate |
| FPR | False Positive Rate |
| AE | AutoEncoder |

References

1. Apple iPhone Website. Available online: https://www.apple.com/iphone/ (accessed on 25 July 2025).

2. Samsung Galaxy Phone Website. Available online: https://www.samsung.com/sec/smartphones/all-smartphones/ (accessed on 25 July 2025).

3. Google Pixel Phone Website. Available online: https://store.google.com/us/?hl=en-US&regionRedirect=true (accessed on 25 July 2025).

4. Xiaomi Mi Phone Website. Available online: https://www.mi.com/global/mobile/ (accessed on 25 July 2025).

5. OIS Market Insight. Available online: https://www.verifiedmarketreports.com/product/optical-image-stabilizer-ois-market-size-and-forecast/ (accessed on 25 July 2025).

6. OIS Market Outlook. Available online: https://dataintelo.com/report/global-optical-image-stabilizer-ois-market (accessed on 25 July 2025).

7. Camera Optical Image Stabilizer Market Opportunities 2025. Available online: https://www.linkedin.com/pulse/camera-optical-image-stabilizer-market-xzbif (accessed on 25 July 2025).

8. Kramer, M.A. Nonlinear principal component analysis using autoassociative neural networks. AIChE J. 1991, 37, 233–243.

9. Sohl-Dickstein, J.; Weiss, E.; Maheswaranathan, N.; Ganguli, S. Deep Unsupervised Learning using Nonequilibrium Thermodynamics. PMLR 2015, 37, 2256–2265.

10. Breitenbach, J.; Eckert, I.; Mahal, V.; Baumgartl, H.; Buettner, R. Automated Defect Detection of Screws in the Manufacturing Industry Using Convolutional Neural Networks. In Proceedings of the 55th Hawaii International Conference on System Sciences, HICSS 2022, Virtual Event/Maui, HI, USA, 4–7 January 2022.

11. Zou, L.; Zhuang, K.J.; Zhou, A.; Hu, J. Bayesian optimization and channel-fusion-based convolutional autoencoder network for fault diagnosis of rotating machinery. Eng. Struct. 2023, 280, 115708.

12. Weiss, E.; Caplan, S.; Horn, K.; Sharabi, M. Real-Time Defect Detection in Electronic Components during Assembly through Deep Learning. Electronics 2024, 13, 1551.

13. Kozamernik, N.; Bračun, D. A novel FuseDecode Autoencoder for industrial visual inspection: Incremental anomaly detection improvement with gradual transition from unsupervised to mixed-supervision learning with reduced human effort. Comput. Ind. 2025, 164, 104198.

14. Lim, J.Y.; Lim, J.Y.; Baskaran, V.M.; Wang, X. A deep context learning based PCB defect detection model with anomalous trend alarming system. Results Eng. 2023, 17, 100968.

15. Li, Y.; Yuan, S.; Wang, H.; Li, Q.; Liu, M.; Xu, C.; Shi, G.; Zuo, W. Triad: Empowering LMM-based Anomaly Detection with Vision Expert-guided Visual Tokenizer and Manufacturing Process. arXiv 2025, arXiv:2503.13184.

16. Klarák, K.; Andok, R.; Malík, P.; Kuric, I.; Ritomský, M.; Ritomský, I.; Tsai, H.Y. From Anomaly Detection to Defect Classification. Sensors 2024, 24, 429.

17. Wang, Z.; Yuan, H.; Lv, J.; Liu, C.; Xu, H.; Li, J. Anomaly Detection and Fault Classification of Printed Circuit Boards Based on Multimodal Features of the Infrared Thermal Imaging. IEEE Trans. Instrum. Meas. 2024, 73, 1–13.

18. Song, Y.; Wang, W.; Wu, Y.; Fan, Y.; Zhao, X. Unsupervised anomaly detection in shearers via autoencoder networks and multi-scale correlation matrix reconstruction. Int. J. Coal Sci. Technol. 2024, 11, 79.

19. Chen, S.; Bandaru, S.; Marti, S.; Bekar, E.T.; Skoogh, A. Comparison of unsupervised image anomaly detection models for sheet metal glue lines. Eng. Appl. Artif. Intell. 2025, 153, 110740.

20. Song, S.; Baek, J.G. New Anomaly Detection in Semiconductor Manufacturing Process using Oversampling Method. ICAART 2020, 2, 926–932.

21. Ester, M.; Kriegel, H.P.; Sander, J.; Xu, X. Density based spatial clustering of applications with noise. KDD AAAI Press 1996, 240, 226–231.

22. Cui, Y.; Liu, Z.; Lian, S. Patch-Wise Auto-Encoder for Visual Anomaly Detection. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Kuala Lumpur, Malaysia, 8–11 October 2023; pp. 870–874.

23. Lee, Y.; Kang, P. AnoViT: Unsupervised Anomaly Detection and Localization with Vision Transformer-Based Encoder-Decoder. IEEE Access 2022, 10, 46717–46724.

24. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. arXiv 2020, arXiv:2010.11929.

25. Zhang, J.; Chen, X.; Wang, Y.; Wang, C.; Liu, Y.; Li, X.; Yang, M.-H.; Tao, D. Exploring Plain ViT Reconstruction for Multi-class Unsupervised Anomaly Detection. Comput. Vis. Image Underst. 2025, 253, 104308.

26. Yang, Q.; Guo, R. An Unsupervised Method for Industrial Image Anomaly Detection with Vision Transformer-Based Autoencoder. Sensors 2024, 24, 2440.

27. Lu, R.; Wu, Y.J.; Tian, L.; Wang, D.; Chen, B.; Liu, X. Hierarchical vector quantized transformer for multi-class unsupervised anomaly detection. Adv. Neural Inf. Process. Syst. 2023, 36, 8487–8500.

28. Cai, X.; Xiao, R.; Zeng, Z.; Gong, P.; Ni, Y. ITran: A novel transformer-based approach for industrial anomaly detection and localization. Eng. Appl. Artif. Intell. 2023, 125, 106677.

29. Mehta, D.; Klarmann, N. Autoencoder-Based Visual Anomaly Localization for Manufacturing Quality Control. Mach. Learn. Knowl. Extr. 2023, 6, 1–17.

30. Chen, H.; Chen, P.; Mao, H.; Jiang, M. A Hierarchically Feature Reconstructed Autoencoder for Unsupervised Anomaly Detection. arXiv 2024, arXiv:2405.09148.

31. Shen, H.; Wei, B.; Ma, Y. Unsupervised anomaly detection for manufacturing product images by significant feature space distance measurement. Mech. Syst. Signal Process. 2024, 212, 111328.

32. Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

33. Getachew Shiferaw, T.; Yao, L. Autoencoder-Based Unsupervised Surface Defect Detection Using Two-Stage Training. J. Imaging 2024, 10, 111.

34. Hong, D.C.; Tan, P.X.; Nguyen, A.N.; Pham, M.K.; Duong, T.H.A.; Huynh, T.-M.; Bui, S.-A.; Nguyen, D.-M.; Ha, Q.-H.; Trinh, V.-A.; et al. Unsupervised industrial anomaly detection using paired well-lit and low-light images. J. Comput. Des. Eng. 2025, 12, 41–61.

35. McIntosh, D.; Albu, A.B. Unsupervised, Online and On-The-Fly Anomaly Detection for Non-stationary Image Distributions. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024; pp. 428–445.

36. Bergmann, P.; Fauser, M.; Sattlegger, D.; Steger, C. MVTec AD—A Comprehensive Real-World Dataset for Unsupervised Anomaly Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 9584–9592.

37. Park, J.H.; Kim, Y.S.; Seo, H.; Cho, Y.J. Analysis of Training Deep Learning Models for PCB Defect Detection. Sensors 2023, 23, 2766.

38. Zhang, H.; Wang, Z.; Zeng, D.; Wu, Z.; Jiang, Y.G. DiffusionAD: Norm-Guided One-Step Denoising Diffusion for Anomaly Detection. IEEE Trans. Pattern Anal. Mach. Intell. 2025, 47, 7140–7152.

39. Deng, S.; Sun, Z.; Zhuang, R.; Gong, J. Noise-to-Norm Reconstruction for Industrial Anomaly Detection and Localization. Appl. Sci. 2023, 13, 12436.

40. Liu, T.; Li, B.; Du, X.; Jiang, B.; Geng, L.; Wang, F.; Zhao, Z. FAIR: Frequency-aware Image Restoration for Industrial Visual Anomaly Detection. arXiv 2023, arXiv:2309.07068.

41. Jiang, X.; Liu, J.; Wang, J.; Nie, Q.; Wu, K.; Liu, Y.; Wang, C.; Zheng, F. SoftPatch: Unsupervised Anomaly Detection with Noisy Data. In Proceedings of the 36th Conference on Neural Information Processing Systems (NeurIPS 2022), New Orleans, LA, USA, 28 November–9 December 2022; Volume 1123, pp. 15433–15445.

42. Yan, S.; Shao, H.; Xiao, Y.; Liu, B.; Wan, J. Hybrid robust convolutional autoencoder for unsupervised anomaly detection of machine tools under noises. Robot. Comput.-Integr. Manuf. 2023, 79, 102441.

43. Lu, F.; Yao, X.; Fu, C.W.; Jia, J. Removing Anomalies as Noises for Industrial Defect Localization. In Proceedings of the 2023 IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 1–6 October 2023; pp. 16120–16129.

44. Wei, S.; Jiang, J.; Xu, X. UniNet: A Contrastive Learning-Guided Unified Framework with Feature Selection for Anomaly Detection. In Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR), Nashville, TN, USA, 11–15 June 2025; pp. 9994–10003. Available online: https://openaccess.thecvf.com/content/CVPR2025/html/Wei_UniNet_A_Contrastive_Learning-guided_Unified_Framework_with_Feature_Selection_for_CVPR_2025_paper.html (accessed on 25 July 2025).

45. Li, A.; Qiu, C.; Kloft, M.; Smyth, P.; Rudolph, M.; Mandt, S. Zero-Shot Anomaly Detection via Batch Normalization. Adv. Neural Inf. Process. Syst. 2023, 36, 40963–40993.

46. Xu, H.; Wang, Y.; Wei, J.; Jian, S.; Li, Y.; Liu, N. Fascinating supervisory signals and where to find them: Deep anomaly detection with scale learning. In Proceedings of the 40th International Conference on Machine Learning (ICML), Honolulu, HI, USA, 23–29 July 2023; Volume 1611, pp. 38655–38673. Available online: https://dl.acm.org/doi/10.5555/3618408.3620019 (accessed on 25 July 2025).

47. Deng, H.; Li, X. Anomaly Detection via Reverse Distillation from One-Class Embedding. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 9727–9736.

48. Kingma, D.P.; Welling, M. Auto-Encoding Variational Bayes. arXiv 2013, arXiv:1312.6114.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).