1. Introduction

The widespread adoption of 5G and the continuing development of 6G technologies are driving the rapid growth of the Internet of Vehicles (IoV) [1]. As a representative scenario of the Internet of Things (IoT) [1] in transportation systems, IoV is showing unprecedented growth potential. Diverse vehicular applications have become deeply integrated into everyday life, ranging from driving safety and in-vehicle entertainment to mobility services [2]. However, vehicles with constrained computational resources may face significant challenges in processing these tasks efficiently. Cloud servers are typically located far from end users [3]. Transmitting the exponentially growing vehicular application data to centralized cloud servers imposes a substantial burden [4] on the network and leads to unpredictable latency, making it unsuitable for IoV scenarios with strict real-time requirements. Mobile edge computing (MEC) [5] addresses this issue by relocating data processing from remote cloud centers to edge nodes closer to the data source, significantly lowering communication delay and improving response times [6]. Vehicular edge computing (VEC), an emerging paradigm integrating MEC with IoV, enables the offloading of computationally intensive and latency-sensitive tasks to VEC servers or roadside units, thereby reducing latency and improving service quality for users [7]. However, the increasing number of service-requesting vehicles intensifies competition for limited edge resources, resulting in excessive load pressure on edge servers (ES). With advancements in vehicle intelligence, smart vehicles now possess certain levels of computational and caching capabilities. Aggregating and utilizing the idle resources [4] of these vehicles can both expand the computational capacity of the VEC system and alleviate the burden on edge servers. Nonetheless, the high mobility of vehicles and the dynamic environment often cause existing task offloading approaches to perform poorly in such unpredictable and rapidly changing systems.

Edge intelligence, which integrates edge computing with artificial intelligence, is a promising emerging technology. Deep reinforcement learning (DRL), a subfield of artificial intelligence, fuses the powerful perceptual abilities of deep learning with the decision-making capabilities of reinforcement learning. It is increasingly used to tackle decision-making problems in dynamic and complex environments, offering an effective solution for tasks such as offloading and resource allocation. Compared with heuristic algorithms, DRL offers greater robustness and more stable convergence, enabling instant and dynamic decision-making. Digital twin (DT) is an emerging technology that creates digital replicas of physical entities based on real-time status and historical data. As of 2025, DT technology has been widely adopted in a large variety of domains [8], such as smart communities, healthcare, and intelligent transportation systems. By ensuring reliable communication between the physical and digital domains, DT bridges the gap [2] between physical space and its digital representation, enhancing real-time interaction and enabling close monitoring [9]. The mapping between virtual models and physical entities provides comprehensive insights into the VEC network, enabling feature extraction and predictive analysis of physical vehicles and dynamic environments [10,11].

In recent years, some studies have started to explore the integration of DT technology with DRL to solve complex problems in practical applications. The role of DT varies across different use cases. For instance, one study [12] utilizes DT to predict the arrival capabilities of future tasks and then applies DRL algorithms to optimize offloading strategies, determining whether tasks should be offloaded to cloud centers, edge servers, or local computing resources. Another study [13] leverages DT’s ability to sense global and historical information to assist in vehicle clustering, and then employs DRL algorithms to identify the optimal offloading location for tasks. Additionally, a further study [14] uses DRL to make resource allocation [15] and task offloading decisions, while DT simulates the real-world environment of autonomous vehicles to gather global information and optimize resource distribution. The combination of these technologies provides theoretical support for efficient vehicle management and demonstrates high quality and feasibility in practical applications.

Research on DT-based solutions for VEC networks is still in its early stages. First, most existing DT-assisted vehicular task offloading approaches rely on full offloading, whereas partial offloading strategies have shown greater potential in reducing latency. Second, the majority of current studies focus on single-objective optimization, typically minimizing latency. However, user requirements can vary, and single-objective approaches may not adequately address the diverse needs of all users. Furthermore, few studies have investigated the integration of DT technology with DRL approaches in the context of VEC networks. To deeply explore the performance of the combination of these two technologies in VEC, this paper proposes a novel digital twin-assisted intelligent task offloading (DTAITO) approach for VEC networks to support efficient offloading decision-making. The primary contributions of this paper can be outlined as follows:

(1) The partial task offloading optimization model is proposed to jointly minimize latency and energy consumption. This model balances computational performance with energy efficiency while accommodating the diverse requirements of IoV users.

(2) The digital twin network is used to capture real-time, system-wide information on vehicles and network conditions. A Gravity-Inspired Vehicle Clustering (GIVC) algorithm is applied to dynamically form highly resource-compatible clusters, ensuring reliable and stable communication links among vehicles within each cluster. This approach significantly lowers the complexity of task offloading, increases task scheduling success rates, and improves overall system performance under highly dynamic IoV environments.

(3) The TD3 algorithm is introduced to make real-time task offloading decisions in complex and dynamic vehicular networks, improving both the efficiency and stability of decision-making.

(4) A feedback mechanism is incorporated to dynamically tune the parameters of the GIVC algorithm according to task offloading outcomes. This enhances the adaptability and robustness of the clustering process across varying scenarios, further increasing offloading success rates and improving network performance.

The remainder of this paper is organized as follows: Section 2 reviews related work, Section 3 describes the system model and optimization problems, Section 4 describes the DTAITO approach in detail, Section 5 analyzes the experimental evaluation, and Section 6 concludes the study.

3. System Model

3.1. Network Model

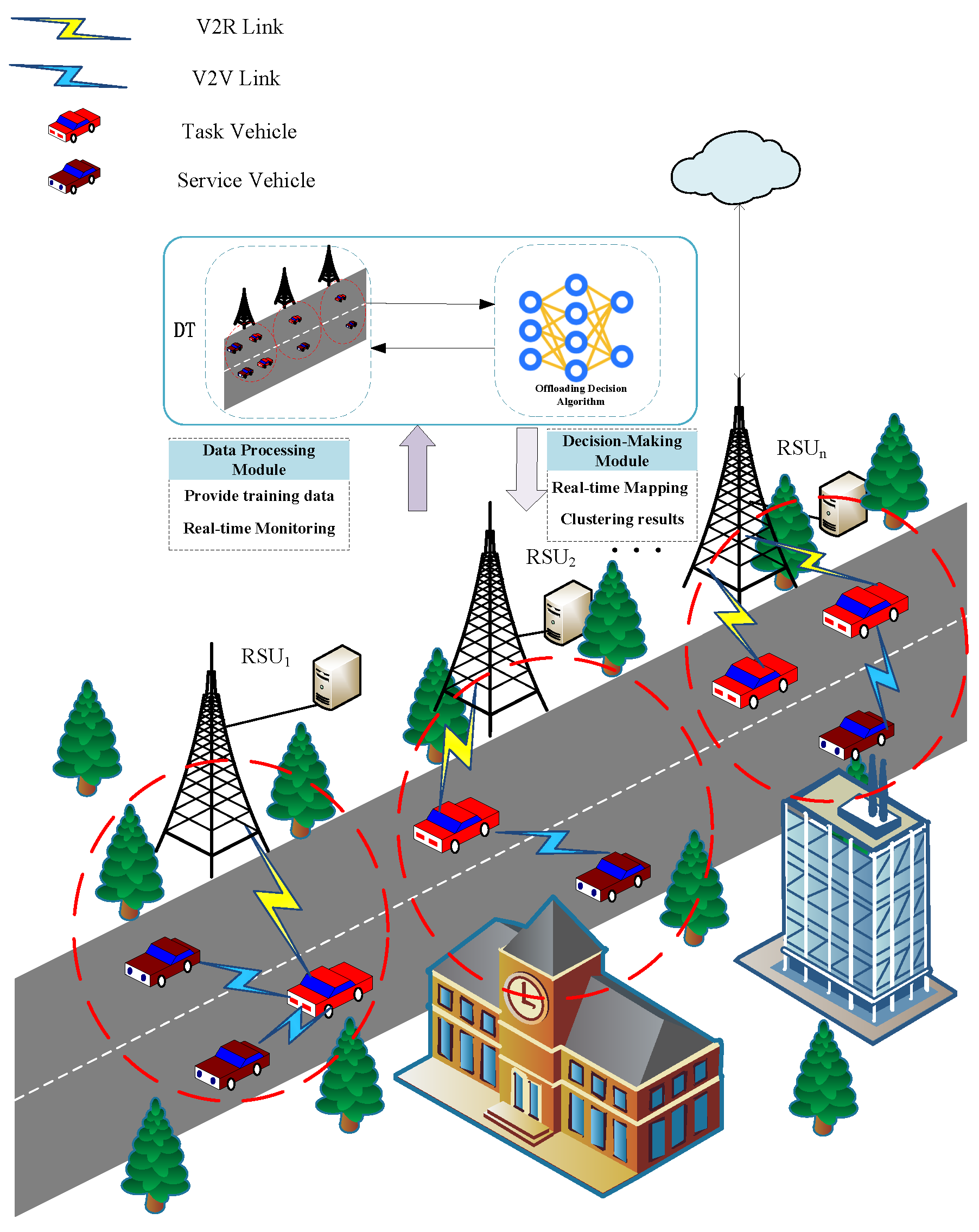

The digital twin-assisted VEC architecture is illustrated in Figure 1. The digital twin network (DTN) is structured into two parts: a digital twin layer and a physical entity layer. Meanwhile, the vehicular edge computing system is organized into three tiers, namely the cloud, edge, and user layers. Vehicles in the user layer are responsible for collecting and analyzing data, and they offload tasks to other vehicles when computational resources are insufficient, thereby reducing the burden on roadside units (RSUs). RSUs in the edge layer communicate wirelessly with surrounding vehicles, acquiring real-time vehicle information and generating digital twin models to reflect and predict the physical state of vehicles in real time. The cloud layer consists of high-performance servers responsible for efficiently managing the edge servers. The architecture of the DTN comprises three fundamental modules: model mapping, data storage, and digital twin management. These modules are intended to facilitate real-time updates and sustain synchronization across the physical and digital layers.

By utilizing a clustering algorithm assisted by the DTN deployed on RSUs, the comprehensive information of all vehicles on the road can be acquired, enabling the dynamic grouping of vehicles into distinct aggregation clusters. Each aggregation group consists of an RSU connected to an edge server and $K$ vehicles. All vehicles in the cluster have access to the RSU. When a vehicle lacks sufficient resources to execute a task locally, it initiates constrained offloading within the group, thereby narrowing the candidate offloading targets in advance. Vehicles within the group that lack the computational capacity to handle intensive tasks [32] send task requests to the RSU. The RSU then makes optimal offloading decisions based on factors such as the available resources and load of the connected ES, as well as the idle resources of other vehicles, thereby reducing both task execution energy consumption and delay.

Based on their roles in requesting or providing computational resources, vehicles within an aggregation group are categorized as service vehicles (SVs) and task vehicles (TVs). Assume there are $K$ vehicles on the road. Vehicles that request task offloading during a given period are denoted $TV_i$, while vehicles that provide computational resources are denoted $SV_j$. We let $Q_i = (c_i, d_i, t_i^{\max})$ express the task of $TV_i$, where $c_i$ represents the number of CPU cycles required to complete task $Q_i$, $d_i$ represents the size of the input data, and $t_i^{\max}$ represents the maximum tolerable latency of task $Q_i$. This work adopts a partial offloading approach, whereby the task of $TV_i$ can be offloaded to either the RSU or an SV according to an optimal offloading ratio. We let $\lambda_i$ represent the proportion of the task offloaded and $1 - \lambda_i$ represent the proportion executed locally, with $\lambda_i \in [0, 1]$. $\lambda_i = 1$ means that the task will be fully offloaded, while $\lambda_i = 0$ denotes that the task will be executed entirely locally. $0 < \lambda_i < 1$ represents partial task offloading, in which the task is partially executed locally and partially at the ES, enabling collaborative processing between the local device and the ES.

3.2. Social Relationship Model

Considering the highly dynamic properties of VEC networks, unstable communication links can significantly increase both energy consumption and latency. Therefore, ensuring the stability of inter-vehicle communication links is critical. To address this, the DTAITO approach introduces a social relationship model that integrates multi-scale factors within the VEC network. It constructs social trust values from raw data and represents them as a social trust matrix, which serves as a quantitative indicator of inter-vehicle social relationships. This matrix is used to describe the stability of communication links between vehicles, thereby mitigating the challenges posed by vehicular mobility. The core idea of the social trust value is to assess the level of trust between two vehicles based on their proximity, evaluated using two key parameters: directional similarity and velocity similarity.

(1) Directional Similarity: This indicator shows whether the directions of vehicles $i$ and $j$ are aligned. It is defined by the following expression:

(2) Velocity Similarity: Given the speeds of vehicles $i$ and $j$, the velocity similarity between the two vehicles is defined by the following expression:

Based on directional similarity and velocity similarity, the social trust value between vehicles $i$ and $j$ is expressed as:

where two weighting coefficients are applied to directional and velocity similarity, respectively. A higher social trust value indicates a stronger trust level between vehicles $i$ and $j$. For ease of representation, the social trust matrix is introduced to describe the trust relationships among vehicles within a given region.

By clustering vehicles according to the social relationship model, communication links within each aggregation group are stabilized, thereby mitigating the latency and energy overhead caused by link instability or disconnection. When applying the DRL algorithm for offloading decisions, this approach significantly reduces the decision space and improves the efficiency of V2V task offloading.
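To make the idea of a social trust matrix concrete, the following is a minimal Python sketch. The exact similarity expressions are not reproduced here, so the forms used below are assumptions chosen only for illustration: direction is treated as aligned when two headings differ by less than 90 degrees, velocity similarity is one minus the relative speed gap, and the trust value is a weighted sum with hypothetical weights `w_dir` and `w_vel`.

```python
def directional_similarity(heading_i_deg, heading_j_deg):
    """Assumed form: 1.0 if the headings differ by less than 90 degrees, else 0.0."""
    diff = abs(heading_i_deg - heading_j_deg) % 360.0
    diff = min(diff, 360.0 - diff)  # smallest angle between the two headings
    return 1.0 if diff < 90.0 else 0.0


def velocity_similarity(speed_i, speed_j):
    """Assumed form: 1 minus the speed gap relative to the faster vehicle."""
    fastest = max(speed_i, speed_j)
    if fastest == 0:
        return 1.0  # both vehicles are stationary
    return 1.0 - abs(speed_i - speed_j) / fastest


def social_trust(vehicle_i, vehicle_j, w_dir=0.5, w_vel=0.5):
    """Weighted combination of the two similarities (weights are hypothetical)."""
    return (
        w_dir * directional_similarity(vehicle_i["heading"], vehicle_j["heading"])
        + w_vel * velocity_similarity(vehicle_i["speed"], vehicle_j["speed"])
    )


def trust_matrix(vehicles, w_dir=0.5, w_vel=0.5):
    """Pairwise trust values; a vehicle fully trusts itself by convention here."""
    n = len(vehicles)
    return [
        [
            1.0 if a == b else social_trust(vehicles[a], vehicles[b], w_dir, w_vel)
            for b in range(n)
        ]
        for a in range(n)
    ]


vehicles = [
    {"heading": 0.0, "speed": 20.0},
    {"heading": 10.0, "speed": 10.0},
    {"heading": 180.0, "speed": 20.0},
]
matrix = trust_matrix(vehicles)
```

In this toy example, vehicles travelling in the same direction at similar speeds receive higher trust, which is the property the clustering step relies on to keep links stable.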

3.3. Communication Model

When the task of $TV_i$ is offloaded to the ES, data transmission is required. The communication model we adopt is similar to that presented in [33]. The data transmission rate from $TV_i$ to the RSU or $SV_j$ is calculated as follows:

where the terms are the bandwidth of the wireless channel, the transmission power used by the vehicle to upload data, the path-loss exponent, the distance between $TV_i$ and the ES or another vehicle $SV_j$, the wireless channel gain, the inter-cell interference of the uplink [34], and the Gaussian noise power in the channel. Given that the delay in returning the results is minimal and can be disregarded, the transmission rate for result delivery is not considered in this study.
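As a rough illustration of how these quantities combine, the sketch below assumes a Shannon-capacity form in which the received power is the transmit power scaled by the channel gain and a distance-based path loss, divided by interference plus noise. This specific form and all parameter names are assumptions for illustration and may differ from the model in [33].

```python
import math


def transmission_rate(
    bandwidth_hz,
    tx_power_w,
    distance_m,
    path_loss_exp,
    channel_gain,
    interference_w,
    noise_w,
):
    """Assumed uplink rate: B * log2(1 + P * h * d^(-alpha) / (I + N))."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    received = tx_power_w * channel_gain * distance_m ** (-path_loss_exp)
    sinr = received / (interference_w + noise_w)
    return bandwidth_hz * math.log2(1.0 + sinr)


near = transmission_rate(1e6, 1.0, 1.0, 2.0, 3.0, 0.5, 0.5)
far = transmission_rate(1e6, 1.0, 10.0, 2.0, 3.0, 0.5, 0.5)
```

Under this assumed form, the rate falls as the distance or the interference grows, which is why stable, short links inside an aggregation group matter for offloading delay.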

Offloading an excessive number of tasks to the SVs can cause significant interference, impacting communication efficiency. Additionally, interference in the communication link may result in fluctuations in the V2V communication range. The instantaneous V2V communication range is given by the following expression:

where $\ell$ is the adjustment coefficient applied to the initial communication distance.

3.4. Task Offloading Model

3.4.1. Local Computing Model

When a vehicle has sufficient computational resources to process its own tasks, it does not need to rely on the ES or other vehicles. Under this circumstance, the local computation of the task involves both latency and energy consumption. The corresponding formulas are given as follows:

where the parameters are the computational capability of $TV_i$ and the power consumption coefficient of the vehicle.

3.4.2. Edge Computing Model

When the task of $TV_i$ is offloaded and the RSU has sufficient computational resources with low load, the DTAITO approach allows the requesting vehicle to offload its task to the edge server associated with the RSU for processing. In this case, the total delay is primarily composed of the transmission delay from the vehicle to the RSU and the computation delay at the ES. The energy consumption includes both the transmission energy to the RSU and the execution energy at the ES. The transmission and computation delays for tasks offloaded to the ES are expressed as follows:

The total delay for task processing at the ES can be expressed as:

where the computational resources allocated by the ES to the task must be less than the computational capacity of the ES, measured in CPU cycles per second. As the output data size after task execution is much smaller than the input data size, the result return delay is ignored in the DTAITO approach. The transmission energy to the RSU and the execution energy at the ES are expressed as follows:

The total energy consumption for task execution at the ES is given by:

When the RSU lacks sufficient computational resources or is heavily loaded, the task is offloaded to a service vehicle with available computing capacity for execution. Under this circumstance, the total delay for completing the task comprises the transmission delay for sending the task to $SV_j$ and the computation delay for executing it on $SV_j$. The corresponding expressions are given as follows:

where the computational resources allocated by $SV_j$ to the task must be less than the computational capability of $SV_j$, measured in CPU cycles per second. Therefore, the total delay for executing the task offloaded to $SV_j$ is given by:

With the assistance of $SV_j$, the total energy consumption for completing the task includes the transmission energy from $TV_i$ to $SV_j$ and the computation energy consumed by $SV_j$ when processing the task. The respective expressions are given as follows:

Therefore, the total energy consumption for the task offloaded to $SV_j$ for processing is expressed as:

Therefore, when the task of $TV_i$ is offloaded, the delay and energy consumption for the offloaded portion are given by the following expressions:

Since partial offloading allows local and offloaded computations to be executed in parallel, the execution delay and energy consumption for the task can be formulated based on the above equations as follows:
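The composition of delay and energy described in this subsection can be sketched in Python as follows. This is an illustrative sketch under stated assumptions, not the paper's exact formulation: computation energy is assumed to follow the common `kappa * f^2 * cycles` model on both the local and the remote side, transmission energy is transmit power times transmission time, and the names `ratio`, `kappa`, and `f_alloc` are hypothetical. The one property taken directly from the text is that local and offloaded parts run in parallel, so the task delay is the larger of the two delays while the energies add up.

```python
def local_cost(cycles, ratio, f_local, kappa):
    """Delay and energy of the locally executed share (1 - ratio) of the task."""
    local_cycles = (1.0 - ratio) * cycles
    delay = local_cycles / f_local
    energy = kappa * f_local ** 2 * local_cycles  # assumed CMOS-style energy model
    return delay, energy


def offload_cost(cycles, data_bits, ratio, rate, tx_power, f_alloc, kappa_remote):
    """Delay and energy of the offloaded share (ratio) on an ES or service vehicle."""
    tx_delay = ratio * data_bits / rate        # upload of the offloaded input data
    comp_delay = ratio * cycles / f_alloc      # execution with the allocated resources
    energy = tx_power * tx_delay + kappa_remote * f_alloc ** 2 * ratio * cycles
    return tx_delay + comp_delay, energy


def task_cost(cycles, data_bits, ratio, f_local, kappa, rate, tx_power, f_alloc, kappa_remote):
    """Parallel execution: delay is the maximum of both parts, energy is their sum."""
    t_loc, e_loc = local_cost(cycles, ratio, f_local, kappa)
    t_off, e_off = offload_cost(cycles, data_bits, ratio, rate, tx_power, f_alloc, kappa_remote)
    return max(t_loc, t_off), e_loc + e_off


params = dict(
    cycles=1e9, data_bits=1e6, f_local=1e9, kappa=1e-27,
    rate=1e6, tx_power=1.0, f_alloc=5e9, kappa_remote=1e-27,
)
half_delay, half_energy = task_cost(ratio=0.5, **params)
local_delay, local_energy = task_cost(ratio=0.0, **params)
```

With these illustrative numbers, offloading half of the task shortens the delay compared with fully local execution but costs more energy, which is the trade-off the weighted objective in the next subsection is meant to balance.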

3.5. Problem Formulation

In line with most existing studies, this paper adopts energy consumption and delay as the key performance metrics for evaluating system efficiency. Two weighting parameters are introduced to transform the multi-objective optimization problem of energy consumption and delay into a single-objective one. By adjusting these weights, the model can accommodate varying user [35] preferences. The total cost incurred by all vehicles within a cluster can be formulated as Equation (24):

where constraint (25) requires that $TV_i$ must select an $SV_j$ within the aggregation group to provide computational services. Constraint (26) ensures that the execution time of each task does not surpass its maximum tolerable latency. Constraint (27) stipulates that the offloading ratio of each task must not exceed 1, allowing any proportion of the task to be processed locally or offloaded. Constraints (28) and (29) ensure that the computing resources allocated to $TV_i$ by $SV_j$ and the ES do not exceed their respective maximum computational capacities. Constraint (30) requires that the total computing resources allocated by the RSU to the tasks do not exceed its available computational capacity. Constraint (31) defines the relative weights of delay and energy consumption, which can be adjusted according to different users’ service requirements.
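The weighted-sum scalarization and the main constraints can be illustrated with a short Python sketch. It assumes that the two weights are non-negative and sum to one, which is a common convention but is not confirmed by the text above; the function and parameter names are hypothetical.

```python
def weighted_cost(delay, energy, w_delay, w_energy):
    """Single-objective cost as a weighted sum of delay and energy."""
    if w_delay < 0 or w_energy < 0 or abs(w_delay + w_energy - 1.0) > 1e-9:
        raise ValueError("weights are assumed to be non-negative and sum to 1")
    return w_delay * delay + w_energy * energy


def is_feasible(delay, max_delay, ratio, f_alloc, f_capacity):
    """Checks the deadline, offloading-ratio, and resource-capacity constraints."""
    return delay <= max_delay and 0.0 <= ratio <= 1.0 and 0.0 <= f_alloc <= f_capacity


def cluster_cost(task_results, w_delay, w_energy):
    """Total cost over all tasks in a cluster, given (delay, energy) pairs."""
    return sum(weighted_cost(d, e, w_delay, w_energy) for d, e in task_results)


latency_focused = cluster_cost([(0.6, 13.5), (1.0, 1.0)], 0.9, 0.1)
energy_focused = cluster_cost([(0.6, 13.5), (1.0, 1.0)], 0.1, 0.9)
```

Shifting weight toward delay or toward energy changes which offloading decisions look cheapest, which is how the model accommodates different user preferences.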

When offloading is restricted to binary decisions, in which each task is either executed entirely locally or fully offloaded, the time complexity of obtaining the optimal solution increases exponentially with the number of vehicles. This problem has been proven to be NP-hard [36]. With the introduction of partial offloading, where the offloading ratio is continuous, the problem becomes more complex. Therefore, this study employs a DRL algorithm to solve it.

4. Proposed Approach

The DTAITO approach proposed in this study addresses the aforementioned problems through the GIVC algorithm and TD3 algorithm. First, the approach uses the GIVC algorithm assisted by DT for vehicle clustering, ensuring the stability of the links between vehicles within the aggregation group. Then, the TD3 algorithm is employed to obtain the optimal offloading decision, with the design of the action space, state space, and reward function for the partial offloading problem. By training a neural network, the optimal solution for the task offloading problem is obtained. In the end, a feedback mechanism is introduced to adjust the parameters of clustering according to the offloading results, enhancing the robustness of the GIVC algorithm in various environments.

4.1. Gravity-Inspired Vehicle Clustering (GIVC) Algorithm

Given the high vehicle density, direct large-scale scheduling is impractical. Aggregation groups are formed based on the availability and demand for computational resources, using the DT and the GIVC algorithm to determine the optimal offloading space, thereby effectively reducing the complexity of task scheduling. A DT node for the VEC is established in each RSU, where each RSU collects the topology of vehicles and computational capabilities in the surrounding environment. Data is transmitted through wired communication, constructing the overall DT network of the VEC. For clarity, the DTAITO approach in this paper defines the DT network by three elements. The first is the digital model of each vehicle, consisting of the task set, the social trust value set, the computational resource set, and the transmission rate set. The second is the correlation coefficient between computational resources, social trust values, and transmission rates in the DT network. The third is the cyclic sequence number, which assists in parameter updates.

The GIVC algorithm used for clustering in the DTAITO approach is an improvement based on the K-Means algorithm, inspired by Newton’s law of gravitation. This paper uses the algorithm to generate optimal aggregation groups, each consisting of one RSU and $V$ vehicles. In this study, the “mass” of a vehicle $i$ is defined as:

where the two quantities are the number of CPU cycles required by vehicle $i$ to complete its task and the computational capability of each vehicle. This variable is used to estimate the computational demand of vehicle $i$, which is supplemented by offloading tasks to address resource deficiencies. When vehicle $i$ acts as the service vehicle, its “mass” is redefined accordingly. Next, according to Equation (32), the gravitational force between vehicle $i$ and vehicle $j$ can be expressed as:

where the numerator of Equation (33) primarily represents the supply–demand relationship strength between vehicle $i$ and vehicle $j$, reflecting their relative strength in terms of computational resource requirements. The proposed method modifies the K-Means algorithm by replacing its distance metric in the denominator with social trust values and communication rates. Through the incorporation of the gravitational formula (33) in place of the distance element, enhanced clustering performance for vehicles is achieved.

The algorithm operates on three sets: the vehicles in the region excluding the cluster centers, the vehicles serving as the cluster centers, and the aggregation groups that it ultimately produces. The specific procedure of Algorithm 1 is as follows.

| Algorithm 1 GIVC Algorithm |

- Input:

Desired number of aggregation groups; number of vehicles - Output:

A set of m aggregation groups - 1:

Initialize model parameters , , , and the value of the number of aggregation groups m; - 2:

Initialize the aggregation group set ; - 3:

Randomly select m vehicles as cluster centers ; - 4:

for

do - 5:

Update to ; - 6:

end for - 7:

for

do - 8:

Calculate the gravitational set using Equation ( 33); - 9:

Select the vehicles that make ; - 10:

Update to ; - 11:

end for - 12:

return .

|
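The following Python sketch illustrates the general idea of gravity-based assignment. It is not the GIVC algorithm itself: the attraction formula, the single assignment pass, and the use of fixed rather than random cluster centers are assumptions made so that the example is short and deterministic. In particular, the "effective distance" is assumed to be the reciprocal of trust times link rate, so that trusted, fast links attract more strongly.

```python
def gravity(mass_i, mass_j, trust, rate):
    """Hypothetical attraction: product of masses over a squared effective distance."""
    if trust <= 0.0 or rate <= 0.0:
        return 0.0  # no usable link, so no attraction
    effective_distance = 1.0 / (trust * rate)  # assumed stand-in for physical distance
    return mass_i * mass_j / effective_distance ** 2


def form_groups(masses, trust, rate, centers):
    """Assign every non-center vehicle to the center that attracts it most."""
    groups = {center: [center] for center in centers}
    for vehicle in range(len(masses)):
        if vehicle in groups:
            continue  # cluster centers already belong to their own group
        best = max(
            centers,
            key=lambda c: gravity(masses[vehicle], masses[c], trust[vehicle][c], rate[vehicle][c]),
        )
        groups[best].append(vehicle)
    return groups


masses = [2.0, 2.0, 1.0, 1.0]
trust = [
    [1.0, 0.5, 0.9, 0.2],
    [0.5, 1.0, 0.1, 0.8],
    [0.9, 0.1, 1.0, 0.5],
    [0.2, 0.8, 0.5, 1.0],
]
rate = [[1.0] * 4 for _ in range(4)]
groups = form_groups(masses, trust, rate, centers=[0, 1])
```

Here vehicle 2 joins the group of center 0 and vehicle 3 joins the group of center 1, because those are the centers with which they share the highest trust.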

4.2. Task Offloading Decision Approach

The Markov Decision Process (MDP) is a mathematical model for sequential decision-making in stochastic environments. The DTAITO approach first models the above optimization problem as an MDP and then uses the TD3 algorithm to make task offloading decisions.

4.2.1. Markov Decision Process

The MDP model is typically represented by a four-tuple (S, A, T, R), where S denotes the state space, A represents the action space, T is the state transition probability function (which is unknown in this paper), and R is the immediate reward obtained by performing action A in state S. Based on the above definitions, the problem under study is modeled as the following MDP model.

(1) State Space: The state space of the entire system is obtained through the DT-assisted clustering process, which includes the number of vehicles in the aggregation group, the task information of the TVs, the related information between SVs and TVs, and the information of the ES. Therefore, the system state is expressed as:

where the task information includes the task size, the required computational resources, and the maximum tolerable delay. The information of task vehicle $TV_i$ includes its transmission power, computation power, and remaining computational resources. The information of service vehicle $SV_j$ includes its computation power and remaining computational resources. The information of the ES connected to the RSU includes its computation capacity and remaining computational resources.

(2) Action Space: The action determines the offloading ratio and offloading target for each vehicle. The offloading ratio can represent three scenarios: full local execution, partial task offloading, and complete offloading. The offloading location for $TV_i$ indicates offloading to the ES connected to the RSU or to an eligible $SV_j$. Therefore, the action space is expressed as:

(3) Reward: After executing action $A$ in state $S$, the agent receives the corresponding reward. The goal of the DRL algorithm is to maximize cumulative rewards. To align the objective function with the goal of the algorithm, this study designs the following reward function:

In this case, when the agent successfully completes the task as required, it receives a reward. If the agent fails to complete the task as required, it incurs a corresponding penalty. Under this setup, the larger the reward value, the smaller the offloading cost $Z$, thus achieving effective optimization of the system’s performance.
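A reward with this structure can be sketched in Python as follows. The sketch assumes that a successful task earns the negative of the weighted cost $Z$, so that a lower cost means a higher reward, and that a missed deadline earns a fixed negative penalty. The penalty value and the weights are hypothetical placeholders.

```python
def offloading_reward(delay, energy, max_delay, w_delay=0.5, w_energy=0.5, penalty=-10.0):
    """Negative weighted cost on success, fixed penalty when the deadline is missed."""
    if delay > max_delay:
        return penalty  # task failed to finish within its tolerable latency
    cost = w_delay * delay + w_energy * energy
    return -cost  # maximizing reward is equivalent to minimizing cost


success = offloading_reward(delay=0.6, energy=1.0, max_delay=1.0)
failure = offloading_reward(delay=2.0, energy=1.0, max_delay=1.0)
```

In practice the penalty would need to be lower than any reward reachable by a successful task, otherwise the agent could prefer to miss deadlines.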

4.2.2. Vehicle Partial Task Offloading with TD3

Given that the DQN [37] method is not well-suited for continuous action control problems and that the Deep Deterministic Policy Gradient (DDPG [38]) algorithm exhibits poor robustness in hyperparameter selection and tuning, the DTAITO approach chooses the Twin Delayed Deep Deterministic Policy Gradient (TD3) algorithm to make partial offloading decisions for vehicles. TD3 addresses issues such as value function overestimation bias, training instability, and slow convergence speed that exist in DDPG during policy optimization. It introduces three improvements to enhance the performance and stability of the algorithm. First, a dual Q-network architecture is used, with two independent critic networks learning two Q-functions in parallel. When calculating target values, the smaller of the two Q-function estimates is chosen to effectively suppress the overestimation problem caused by value function approximation errors. Second, a target policy smoothing mechanism is introduced during the target policy calculation process. By adding truncated Gaussian noise to the target action, the tendency of the policy function to overfit in the steep regions of the Q-value function is reduced, thus improving the stability and robustness of the learning process. Third, a delayed policy update mechanism is introduced, where the actor network is updated once for every several updates to the critic network. Consequently, the policy network benefits from more reliable value estimates, leading to reduced variance and improved training efficiency.

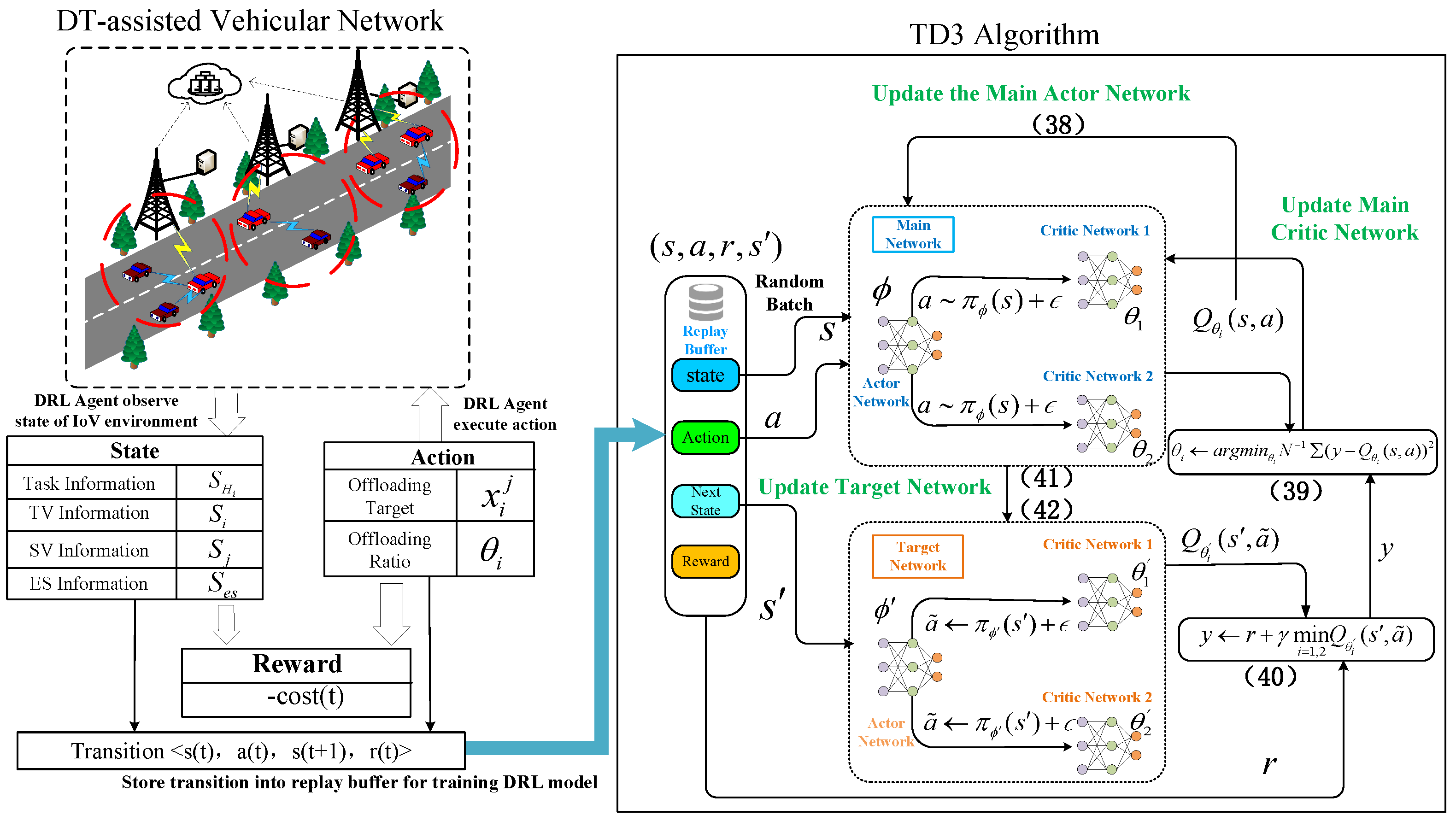

The flowchart of the TD3 algorithm is shown in Figure 2. First, the TD3 algorithm employs a DRL model composed of a main network and a target network. Each is composed of an actor network and two critic networks, which are used to approximate the policy function and the value function, respectively. The parameters of the actor and critic networks in the main network are denoted as $\phi$, $\theta_1$ and $\theta_2$, and the parameters of the critic and actor networks in the target network are denoted as $\theta'_1$, $\theta'_2$ and $\phi'$. The role of the actor network $\pi_\phi$ is to generate action $a_t$, that is:

$$a_t = \pi_\phi(s_t) + \epsilon, \quad \epsilon \sim \mathcal{N}(0, \sigma^2) \tag{37}$$

where $\epsilon$ represents the noise, which plays a crucial role in promoting exploration within the DRL model. It follows a normal distribution, characterized by a mean of 0 and a variance of $\sigma^2$.

The actor network receives its input from the state space, which is derived from the data provided by the DT. The actor network selects action $a_t$ based on the present state $s_t$, then engages with the environment, leading to the next state $s_{t+1}$ and producing the reward $r_t$ associated with performing that action in the given state. The resulting four-tuple $(s_t, a_t, r_t, s_{t+1})$ is then stored in the experience replay buffer for updating the TD3 network parameters. To update the parameters of the main actor network, the TD3 algorithm utilizes the deterministic policy gradient. The expression for the deterministic policy gradient is as follows:

$$\nabla_\phi J(\phi) = \frac{1}{N} \sum \nabla_a Q_{\theta_1}(s, a)\big|_{a = \pi_\phi(s)} \, \nabla_\phi \pi_\phi(s) \tag{38}$$

where $Q_{\theta_1}$ represents the first critic network of the main network, and $\pi_\phi$ represents the actor network of the main network, which is used to approximate the optimal policy.

The parameter update formula for the critic networks of the main network is as follows:

$$\theta_i \leftarrow \arg\min_{\theta_i} \frac{1}{N} \sum \left( y - Q_{\theta_i}(s, a) \right)^2, \quad i = 1, 2 \tag{39}$$

where $Q_{\theta_i}(s, a)$ represents the actual value of the Q-function, and $y$ represents the target Q-value of the target critic network. The calculation formula for $y$ is as follows:

$$y = r + \gamma \min_{i = 1, 2} Q_{\theta'_i}(s', \tilde{a}) \tag{40}$$

where $\gamma$ represents the discount factor, which lies within the range $[0, 1]$, $r$ represents the immediate reward, and $\tilde{a}$ is the action generated by $\pi_{\phi'}$ based on state $s'$.

The parameters of the target network are updated using soft updates, ensuring smoother and more stable parameter updates. The update formulas are as follows:

$$\theta'_i \leftarrow \tau \theta_i + (1 - \tau) \theta'_i, \quad i = 1, 2 \tag{41}$$

$$\phi' \leftarrow \tau \phi + (1 - \tau) \phi' \tag{42}$$

where $\tau$ represents the soft update rate of the target network, which is typically set to a small value, such as 0.01 in this study. The specific details are shown in Algorithm 2.
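The three TD3 ingredients discussed in this subsection (clipped double-Q targets, target policy smoothing, and soft target updates) can be sketched with plain Python scalars and lists. This is a minimal illustration of the standard TD3 rules rather than the training code used in this study; real implementations operate on neural network tensors, and the `done` flag and the action bounds are additions for completeness.

```python
def td3_target(reward, gamma, q1_next, q2_next, done=False):
    """Clipped double-Q target: use the smaller of the two target critic estimates."""
    if done:
        return reward  # no bootstrapping from a terminal state
    return reward + gamma * min(q1_next, q2_next)


def smoothed_target_action(action, noise, noise_clip, low, high):
    """Target policy smoothing: add truncated noise, then keep the action in bounds."""
    clipped_noise = max(-noise_clip, min(noise_clip, noise))
    return max(low, min(high, action + clipped_noise))


def soft_update(target_params, main_params, tau=0.01):
    """Move each target parameter a fraction tau toward its main-network value."""
    return [tau * m + (1.0 - tau) * t for t, m in zip(target_params, main_params)]


y = td3_target(reward=1.0, gamma=0.9, q1_next=5.0, q2_next=3.0)
a_tilde = smoothed_target_action(action=0.9, noise=0.7, noise_clip=0.5, low=0.0, high=1.0)
new_target = soft_update(target_params=[0.0, 2.0], main_params=[1.0, 2.0], tau=0.01)
```

Taking the minimum of the two critic estimates is what counters overestimation, while the small `tau` keeps the target networks changing slowly between updates.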

The computational complexity of the TD3 algorithm for partial offloading based on VEC can be analyzed by considering the operations performed at each step. The initialization of the networks and the replay buffer has a complexity of $O(1)$. For each episode, initializing the exploration process and the environment is a constant-time operation, giving a complexity of $O(1)$. Within each episode, there are $T$ timesteps. Each timestep involves action selection, execution, observation, storing transitions, sampling mini-batches, target action calculation, target Q-value computation, and critic network update, each contributing $O(1)$ or $O(N)$, where $N$ is the mini-batch size. Every $d$ timesteps, the policy is updated, involving the actor network update and target network soft update, contributing complexities of $O(N)$ and $O(1)$. Summing up the complexities over $M$ episodes, the overall complexity is $O(1) + O(M) + O(M \cdot T \cdot N)$. Upon simplification, the dominant term is $O(M \cdot T \cdot N)$, indicating that the computational complexity of the TD3 algorithm scales linearly with the number of episodes $M$, the number of timesteps per episode $T$, and the mini-batch size $N$.

| Algorithm 2 TD3 Algorithm for Partial Offloading Based on VEC |

- Input: Task information and resource information
- Output: Optimal task offloading strategy
- 1: Initialize the actor network $\pi_\phi$ and critic networks $Q_{\theta_1}$, $Q_{\theta_2}$, using random parameters $\phi$, $\theta_1$, $\theta_2$;
- 2: Initialize the target network parameters $\theta'_1 \leftarrow \theta_1$, $\theta'_2 \leftarrow \theta_2$, $\phi' \leftarrow \phi$;
- 3: Initialize the mini-batch $S$ and the experience replay buffer $B$;
- 4: Initialize the soft update factor $\tau$ and the discount factor $\gamma$;
- 5: for episode $= 1$ to $M$ do
- 6: Reset simulation environment of VEC and obtain clustering results;
- 7: Get the initial state;
- 8: for $t = 1$ to $T$ do
- 9: Obtain action $a_t$ using Equation (37);
- 10: Execute action $a_t$, obtaining reward $r_t$ and the next state $s_{t+1}$;
- 11: if $B$ is not full then
- 12: Store $(s_t, a_t, r_t, s_{t+1})$ into $B$ for training the network;
- 13: else
- 14: Randomly replace a stored transition in $B$ with $(s_t, a_t, r_t, s_{t+1})$;
- 15: end if
- 16: Randomly sample a mini-batch of $N$ transitions from replay memory $B$;
- 17: Update $\phi$ with Equation (38);
- 18: Update $\theta_1$ and $\theta_2$ with Equation (39);
- 19: if $t \bmod d = 0$ then
- 20: Update the target network parameters using soft updates based on Equations (41) and (42);
- 21: end if
- 22: end for
- 23: end for

4.2.3. Feedback Mechanism

To further improve the robustness of the GIVC algorithm in dynamic and complex scenarios, a feedback mechanism is introduced, dynamically adjusting the parameters of the next cycle based on the offloading results from the previous cycle. Let denote the set of gravitational parameters within cycle T, which is updated according to the comparison results of the adjacent cycles.

First, the update formula for

is as follows:

where Equation (

43) demonstrates the role of resource factors in the gravitational model. In the formula, the coefficient denotes the ratio of computational resources of the current DT network to those of the final DT network within cycle

T. If this ratio is above 1, it implies that the average resource requirement of the aggregation group has increased during the new mapping cycle. Therefore,

must be adjusted upward to strengthen the sensitivity of resource alignment in clustering.

The second gravitational parameter measures the role of social trust values in the gravitational model. Its update expression contains a coefficient defined in terms of the social trust values between the two vehicles during the adjacent cycle and cycle T. When this coefficient is less than 1, it indicates that the social trust level within the aggregation group has increased in the new cycle. Therefore, the parameter can be reduced to enhance the role of social trust values in the clustering process.

The third gravitational parameter denotes the impact of data transmission capability in the gravitational model. In its update formula, the coefficient represents the ratio of data transmission capability between the current cycle and the previous cycle, expressed through the transmission time between vehicles m and n in the corresponding cycle. If this value is below 1, reducing the parameter can increase the relative importance of data transmission capability during the clustering process.

Dynamically adjusting the parameters of the gravitational model can better reflect computational resource demands, social trust values, and data transmission capabilities, thereby effectively improving the accuracy of clustering and further optimizing the performance of the VEC network.
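As a rough illustration of this feedback step, the Python sketch below scales each gravitational parameter by its cycle-to-cycle coefficient. It assumes a purely multiplicative update, which is consistent with the description above (a coefficient above 1 raises the parameter, a coefficient below 1 lowers it) but is not necessarily the exact form of the paper's update formulas. The function name, the dictionary keys, and the clamping bounds are hypothetical.

```python
def update_gravity_params(params, coefficients, lower=1e-3, upper=1e3):
    """Scale each gravitational parameter by its coefficient for the next cycle.

    params:       current parameter values, keyed by (hypothetical) factor name
    coefficients: per-factor ratios comparing adjacent cycles
    lower/upper:  assumed safety bounds, not taken from the paper
    """
    updated = {}
    for name, value in params.items():
        scaled = value * coefficients[name]  # assumed multiplicative update
        updated[name] = min(max(scaled, lower), upper)  # keep within bounds
    return updated


# Example: resource demand rose, while trust and transmission coefficients fell.
current = {"resource": 1.0, "trust": 2.0, "transmission": 4.0}
ratios = {"resource": 1.5, "trust": 0.5, "transmission": 0.25}
next_cycle = update_gravity_params(current, ratios)
```

The clamping is a defensive choice for this sketch only: it prevents a run of extreme ratios from driving a parameter to zero or to an unbounded value over many cycles.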

6. Conclusions

This paper proposes DTAITO, a task offloading approach for VEC systems that jointly considers delay and energy consumption and formulates task offloading as a combinatorial optimization problem. The approach aims to provide an efficient and intelligent task offloading solution for dynamic and heterogeneous vehicular network environments, ensuring the smooth execution of user tasks. First, DT technology and the GIVC algorithm are used to cluster the vehicles on the road, obtaining the optimal decision space and ensuring the stability of vehicle links within the same aggregation group. Next, a partial task offloading method based on the TD3 algorithm is proposed, which trains the offloading policy to obtain the best offloading decision. Finally, to ensure the stability of the clustering algorithm in different scenarios, a feedback mechanism adaptively tunes the parameters of the GIVC algorithm according to offloading outcomes, thereby improving clustering performance in the subsequent round. Simulation results show that the proposed DTAITO approach outperforms other offloading approaches in terms of total delay, total cost, total energy consumption, and success rate.

Although this study has made notable progress in both methodology and experimentation, several limitations remain to be addressed. First, in terms of digital twin technology, only a simplified version of the core functionalities was implemented, without constructing a comprehensive digital twin system that encompasses modeling, real-time interaction, and feedback mechanisms. This limitation may restrict the generalizability and applicability of this work. Second, due to space constraints, privacy preservation and secure communication during data transmission among vehicles were not sufficiently considered, which could pose potential risks in real vehicular networks.

Future work can be carried out in several directions: (1) integrating resource allocation with task offloading to achieve more efficient system scheduling in multi-user competitive environments, thereby reducing resource wastage and improving overall performance; (2) developing advanced privacy-preserving mechanisms, such as differential privacy, to ensure data security while maintaining offloading efficiency; (3) exploring the feasibility of coordinating multiple edge servers within aggregation groups, aiming to enhance system adaptability and robustness in dynamic and complex scenarios; and (4) deploying a full-scale digital twin system and validating it in representative smart transportation scenarios to assess its feasibility and practical value. By pursuing these directions, future studies are expected to better align with real-world requirements and provide both theoretical insights and practical support for the deep integration of edge computing and digital twin technologies.