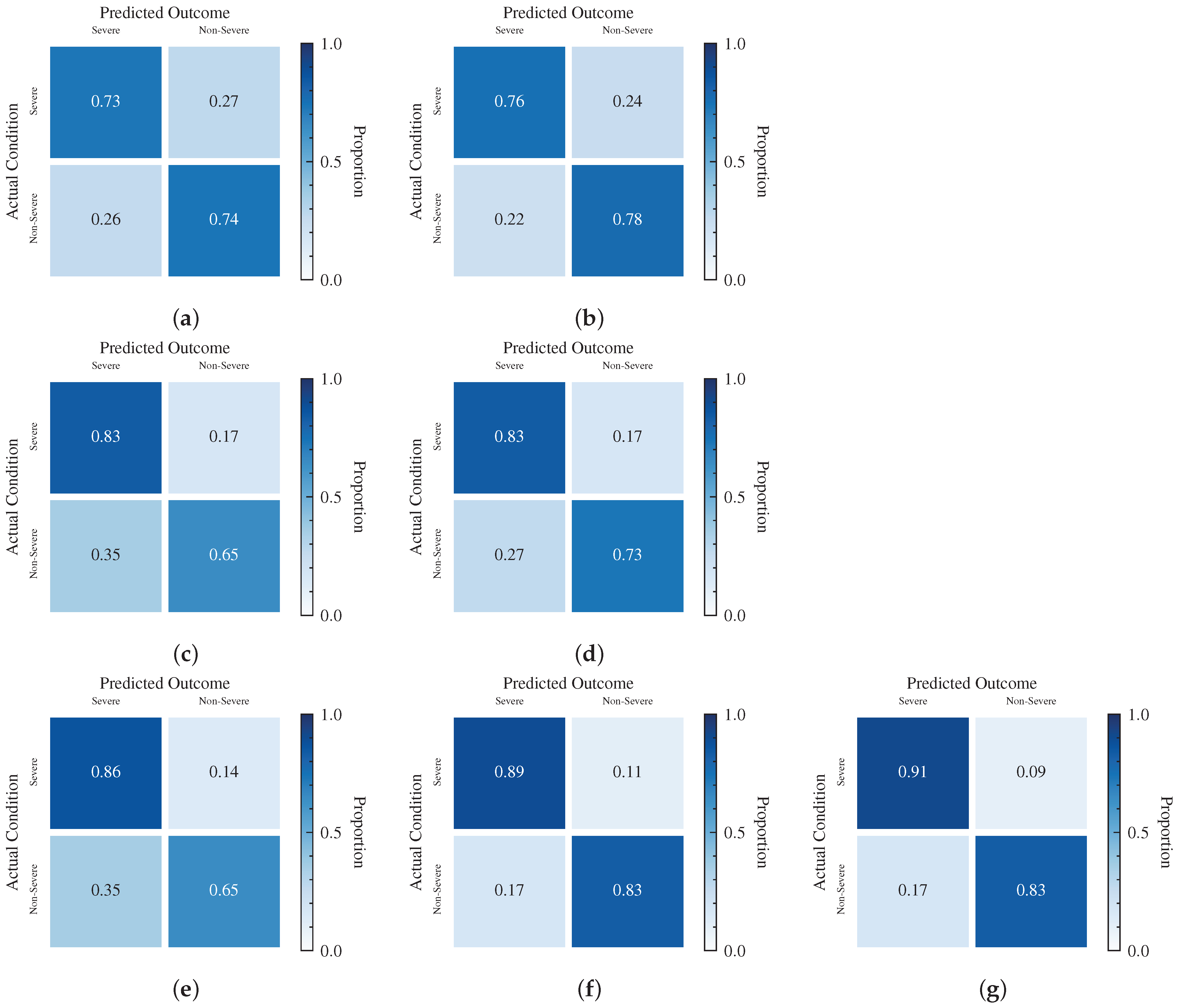

4.1. System Initialization

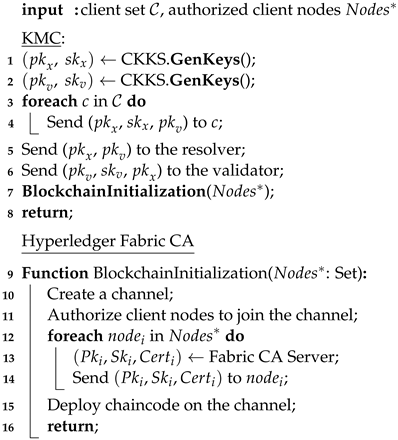

System initialization is divided into the following two parts (see Algorithm 1).

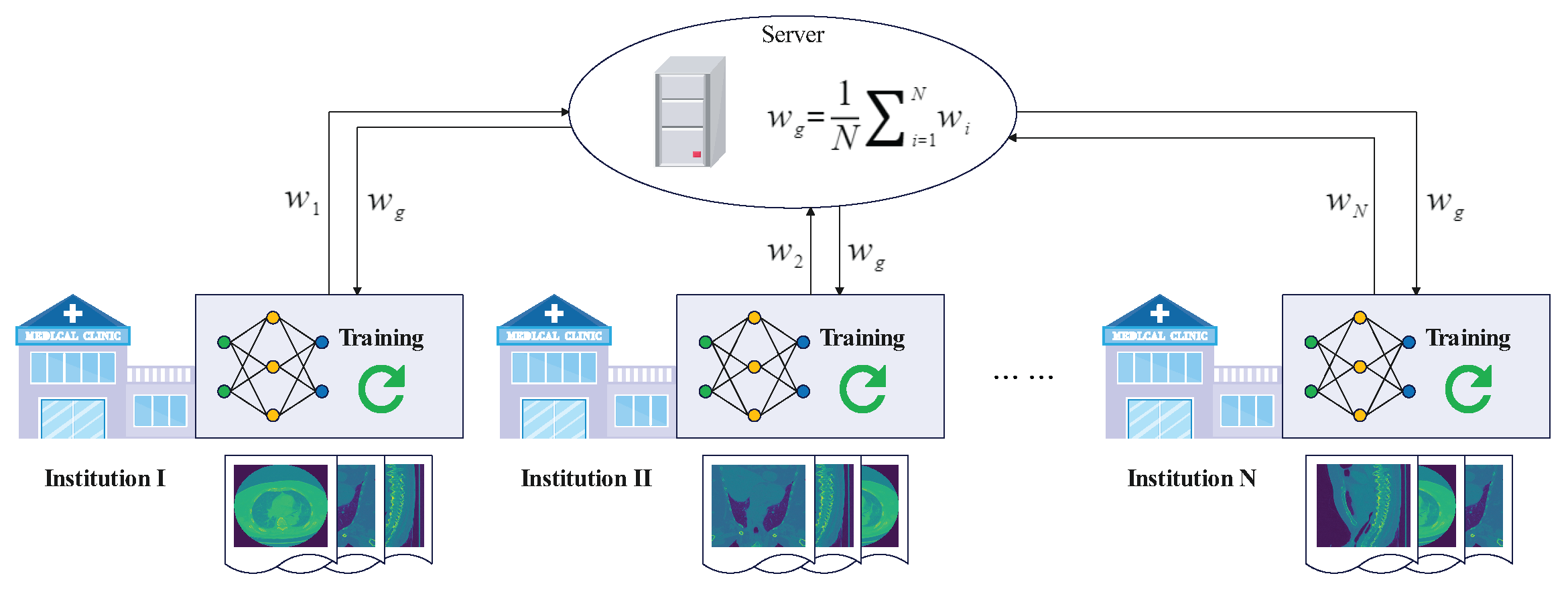

In the initialization phase of the federated learning service, we configure a public/private key pair for each client node in the system through the KMC, and configure another public/private key pair for the validator. These keys are used for the subsequent homomorphic encryption computation. Then, we release two federated learning tasks, one for predicting the probability of COVID-19 disease and the other for predicting the probability of COVID-19 disease severity. Meanwhile, we submit the original global model to the blockchain.

When the blockchain service is initialized, we create a channel for each federated learning task through the blockchain network. Next, we add orderer nodes, peer nodes, and organization members to the channel, and deploy the chaincode program. At the same time, the Hyperledger Fabric CA issues keys and certificates for each node authorized to join the channel. Finally, we generate a genesis block for this channel and store the original global model block on the blockchain. In this way, each federated learning task can be run through these nodes on the corresponding channel of the blockchain.

Algorithm 1: SystemInitialization

4.2. Local Model Training

When a client participating in a federated learning task starts a round of local training, it first downloads the global model of the previous round from the blockchain through peer nodes and decrypts it using its private key; the decrypted model then serves as the local model for the new round of training. Then, the client sends the local model and the local dataset to the trainer, which is responsible for performing the local model training task (see Algorithm 2).

The trainer performs the local training process through the mini-batch stochastic gradient descent (SGD) algorithm. We define $L_b(w)$ as the loss function on a batch $B$ of $b$ samples; then:

$$L_b(w) = \frac{1}{b} \sum_{(x, y) \in B} \ell(w; x, y),$$

where $(x, y)$ represent the feature and label of a sample and $\ell(w; x, y)$ is the loss function on a single sample. Therefore, we obtain the local gradient $g = \nabla L_b(w)$ and update the local model as $w \leftarrow w - \eta g$ through the SGD optimization algorithm, where $\nabla$ is the derivative operation and $\eta$ is the local training learning rate.
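The mini-batch update described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the model is a single scalar weight, the per-sample loss is a squared error, and the names `minibatch_sgd_step` and `train` are hypothetical helpers, not part of the system described here.

```python
import random

def minibatch_sgd_step(w, batch, lr):
    """One mini-batch SGD step for the toy model y ~ w * x.

    The per-sample loss is assumed to be 0.5 * (w * x - y) ** 2, so the
    batch gradient is the mean of (w * x - y) * x over the batch.
    """
    grad = sum((w * x - y) * x for x, y in batch) / len(batch)
    return w - lr * grad

def train(data, w=0.0, lr=0.1, batch_size=4, epochs=50, seed=0):
    """Run several epochs of mini-batch SGD over a shuffled dataset."""
    rng = random.Random(seed)
    data = list(data)
    for _ in range(epochs):
        rng.shuffle(data)
        for i in range(0, len(data), batch_size):
            w = minibatch_sgd_step(w, data[i:i + batch_size], lr)
    return w

# Noise-free toy data generated from y = 3 * x.
samples = [(k / 10, 3.0 * k / 10) for k in range(1, 21)]
w_final = train(samples)
```

On this toy data the weight approaches 3, the slope used to generate the samples.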

Algorithm 2: LocalModelTraining

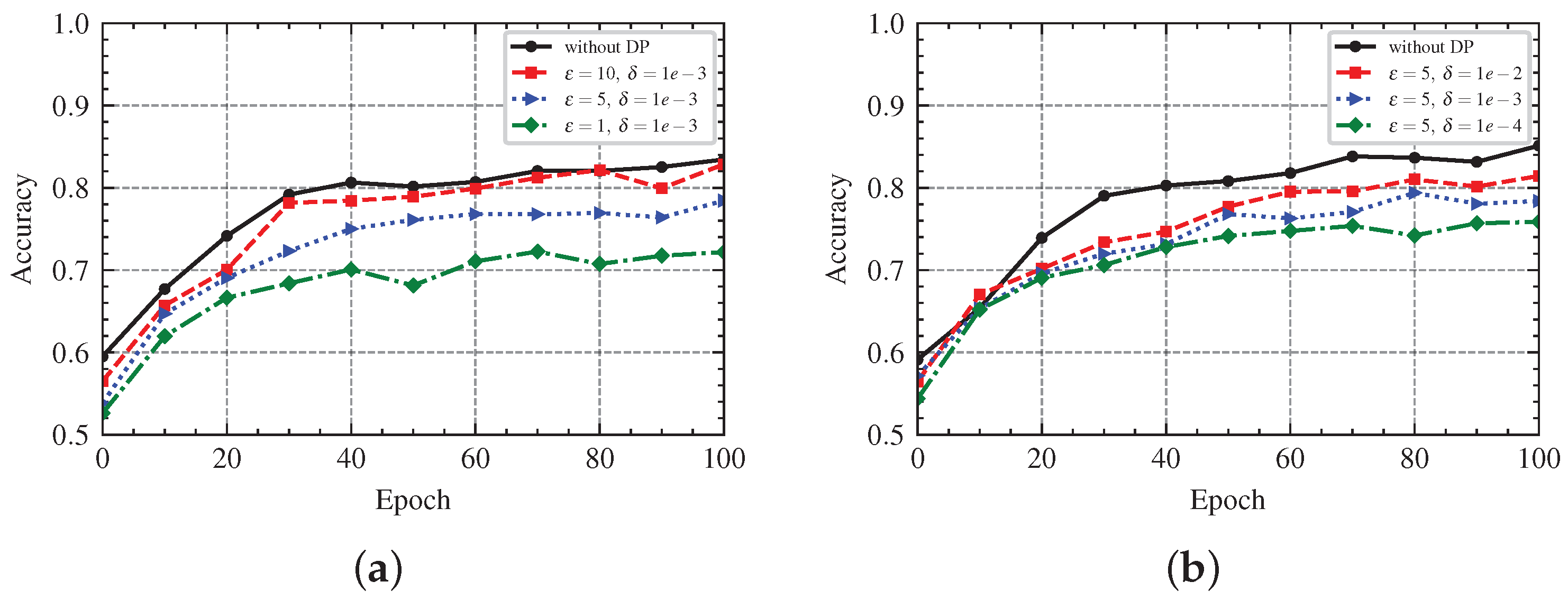

To enhance the privacy of local training, we integrate an $(\epsilon, \delta)$-differential privacy scheme into the trainer. Specifically, we implement a DP-SGD gradient perturbation algorithm based on the Gaussian mechanism, in which Gaussian noise is added to the gradient during local training. To apply the Gaussian mechanism, we need to determine the Gaussian distribution from which the noise is drawn.

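We set appropriate hyperparameters $(z, C)$ satisfying

$$z = \frac{\sqrt{2 \ln(1.25/\delta)}}{\epsilon}, \qquad \Delta f = C,$$

where $z$ is the noise multiplier, $C$ is the gradient clipping parameter, $\Delta f$ is the sensitivity of the gradient, and $(\epsilon, \delta)$ represent the $(\epsilon, \delta)$-differential privacy. Then, we have

$$\sigma = z \cdot \Delta f = zC.$$

Thus, we only need to set the values of the parameters $(z, C)$ to obtain a Gaussian distribution $\mathcal{N}(0, z^2 C^2)$, which corresponds to $(\epsilon, \delta)$-differential privacy. In short, we can add Gaussian noise to the gradient in three steps: ① First, we compute the gradient at each sample and clip it to a fixed range bounded by $C$. ② Then, we add Gaussian noise $\mathcal{N}(0, z^2 C^2)$ to the clipped gradient. ③ Finally, we update the model using the gradient with Gaussian noise as follows:

$$w \leftarrow w - \eta \left( \bar{g} + \xi \right),$$

where $w$ is the local model, $\eta$ is the learning rate, $\bar{g}$ is the clipped gradient, and $\xi \sim \mathcal{N}(0, z^2 C^2)$ is the Gaussian noise.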
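The three steps (clip, add noise, update) can be sketched as follows. This is a minimal illustration, not the trainer's actual code: it assumes clipping by the L2 norm and adds the noise to the sum of the clipped per-sample gradients before averaging, which is one common DP-SGD formulation. The helper names `clip_l2` and `dp_sgd_step` are hypothetical.

```python
import math
import random

def clip_l2(grad, C):
    """Scale grad so that its L2 norm is at most C (assumed clipping rule)."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = 1.0 / max(1.0, norm / C)
    return [g * scale for g in grad]

def dp_sgd_step(w, per_sample_grads, lr, z, C, rng):
    """One noisy update: clip each gradient, sum, add N(0, (z*C)^2), average."""
    dim = len(w)
    b = len(per_sample_grads)
    clipped = [clip_l2(g, C) for g in per_sample_grads]        # step 1
    summed = [sum(g[k] for g in clipped) for k in range(dim)]
    noisy = [s + rng.gauss(0.0, z * C) for s in summed]        # step 2
    return [w[k] - lr * noisy[k] / b for k in range(dim)]      # step 3

rng = random.Random(0)
grads = [[3.0, 4.0], [0.3, 0.4]]
# With z = 0 the noise vanishes, which makes the clipping effect easy to inspect.
w_no_noise = dp_sgd_step([0.0, 0.0], grads, lr=1.0, z=0.0, C=1.0, rng=rng)
w_private = dp_sgd_step([0.0, 0.0], grads, lr=1.0, z=1.1, C=1.0, rng=rng)
```

Setting `z = 0` recovers plain clipped SGD, so the privacy noise can be switched off when debugging the clipping logic.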

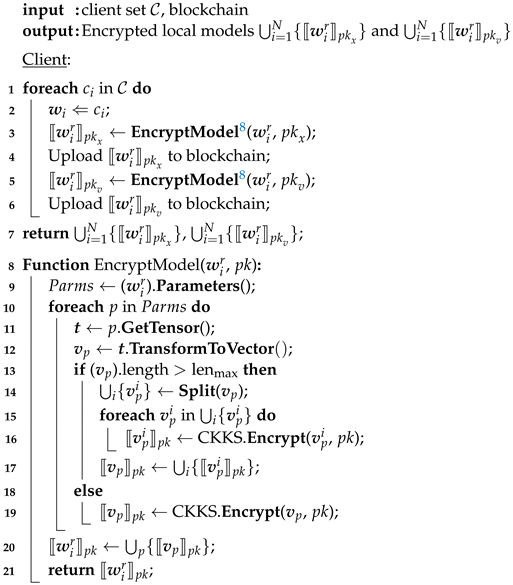

4.3. Upload Local Model

When the trainer completes a round of local training, the client

gets the new local model

. Before uploading the local model to the resolver and blockchain, we need to encrypt it using homomorphic encryption. Homomorphic encryption is a technique that supports operations over ciphertext without compromising the security of the user data [

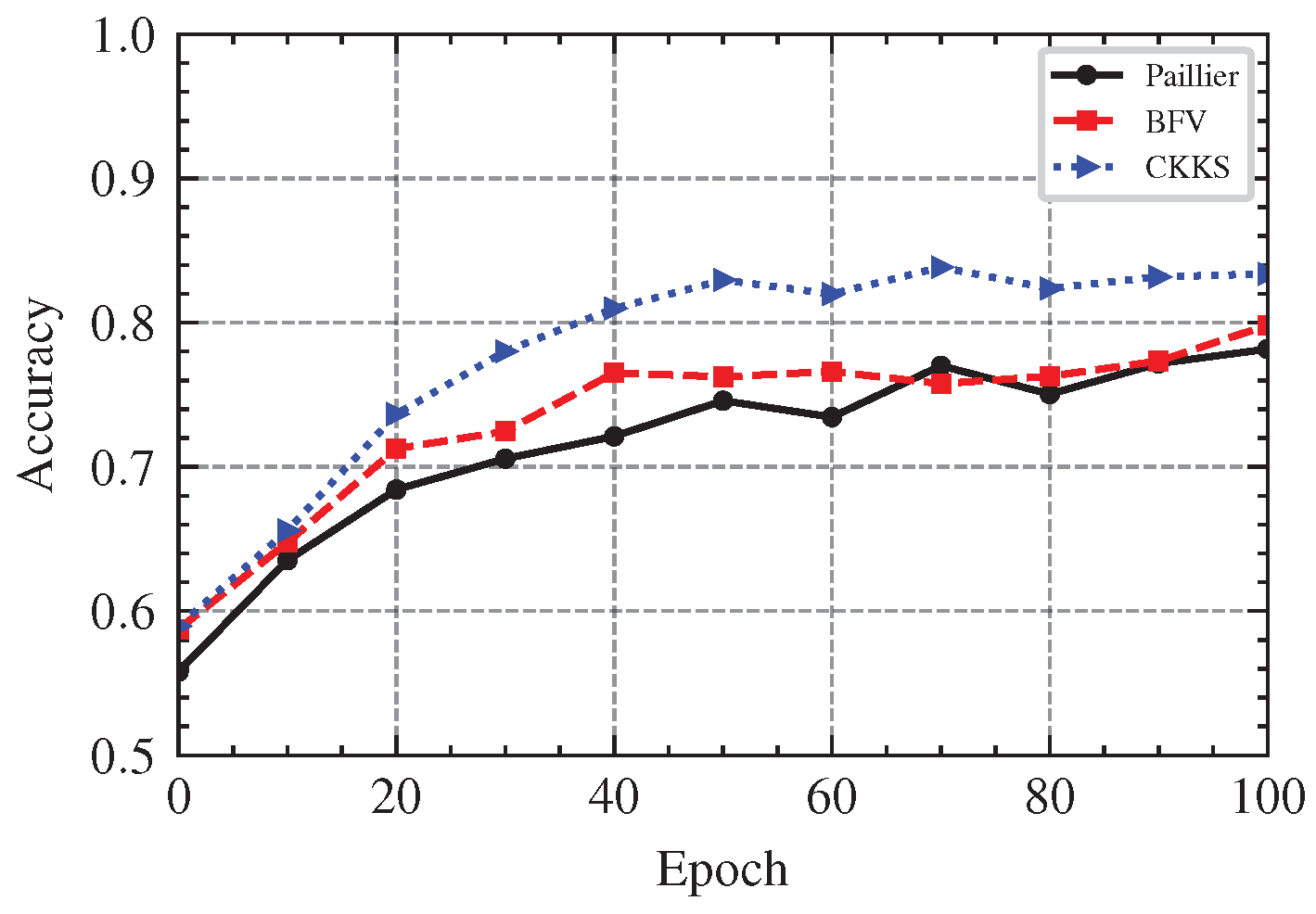

41]. The classical Paillier [

42] semi-homomorphic encryption scheme only supports homomorphic addition and homomorphic scalar multiplication operations in the range of integers, while the second-generation fully homomorphic encryption schemes represented by the BGV scheme [

43] and BFV scheme [

44] also only support integer operations. Considering that the model parameters are the signed floating-point numbers, it is necessary to quantize and trim the model parameters if these schemes are adopted, which will undoubtedly bring additional computational costs. In contrast, the CKKS [

45] fully homomorphic encryption scheme proposed in 2017 supports floating-point addition and multiplication homomorphic operations for real or complex numbers. The calculation results are approximate, which is suitable for machine learning model training and other scenarios that do not require exact results.

Therefore, we choose to encrypt the local model using the CKKS homomorphic encryption scheme. Specifically, we transform the model parameters of each layer of the local model into a vector and encrypt the vectors layer by layer. Furthermore, if a vector exceeds the specified maximum length, we split it into multiple vectors and encrypt each of them separately. We use the client’s public key $pk_i$ and the validator’s public key $pk_v$ to encrypt the local model $w_i$ to obtain $\mathrm{Enc}_{pk_i}(w_i)$ and $\mathrm{Enc}_{pk_v}(w_i)$, respectively. Then, the client $c_i$ uploads $\mathrm{Enc}_{pk_i}(w_i)$ and $\mathrm{Enc}_{pk_v}(w_i)$ to the blockchain as a transaction, and sends $\mathrm{Enc}_{pk_v}(w_i)$ to the resolver at the same time (see Algorithm 3).
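The layer-by-layer packing and splitting step can be illustrated without any cryptographic library. In the sketch below, `encrypt` is a placeholder callable standing in for CKKS encryption under one public key, and `fake_encrypt`, `split_vector`, and `encrypt_model` are hypothetical names used for illustration only. No real encryption takes place.

```python
def split_vector(values, max_len):
    """Split a flat parameter list into chunks of at most max_len entries."""
    return [values[i:i + max_len] for i in range(0, len(values), max_len)]

def encrypt_model(layers, max_len, encrypt):
    """Encrypt a model layer by layer.

    layers  : dict mapping a layer name to its flattened parameters
    encrypt : callable standing in for encryption under one public key
    """
    return {name: [encrypt(chunk) for chunk in split_vector(params, max_len)]
            for name, params in layers.items()}

def fake_encrypt(key_label):
    """Placeholder 'encryption' that only tags a chunk with a key label."""
    return lambda chunk: (key_label, tuple(chunk))

model = {"fc1": [0.1, -0.2, 0.3, 0.4, -0.5], "fc2": [1.0, 2.0]}
# One copy per key, mirroring the two ciphertexts the client produces.
for_client = encrypt_model(model, max_len=2, encrypt=fake_encrypt("client_pk"))
for_validator = encrypt_model(model, max_len=2, encrypt=fake_encrypt("validator_pk"))
```

With a real CKKS library, `max_len` would be bounded by the number of slots available in one ciphertext.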

Algorithm 3: UploadLocalModel

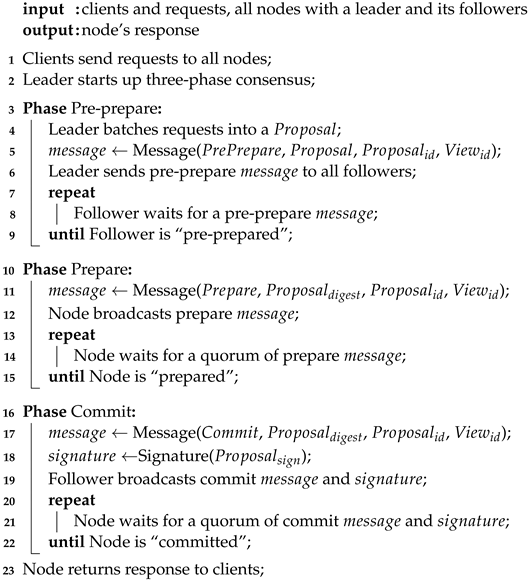

4.4. Global Model Aggregation

After receiving all clients’ local models, the resolver adopts the model averaging approach to aggregate all $n$ local models $w_i$ to obtain the global model $w$:

$$w = \frac{1}{n} \sum_{i=1}^{n} w_i.$$

However, some malicious clients may deliberately send wrong local models to interfere with the aggregation process, thereby invalidating the aggregation result and causing the whole training process to fail. In addition, an honest-but-curious server (resolver or validator) may infer sensitive information about the client data from the model parameters, which raises concerns about user data privacy. Therefore, we need to counteract the adverse effects of poisoning attacks and protect the data privacy of the clients. In this way, the secure aggregation process may be expressed as:

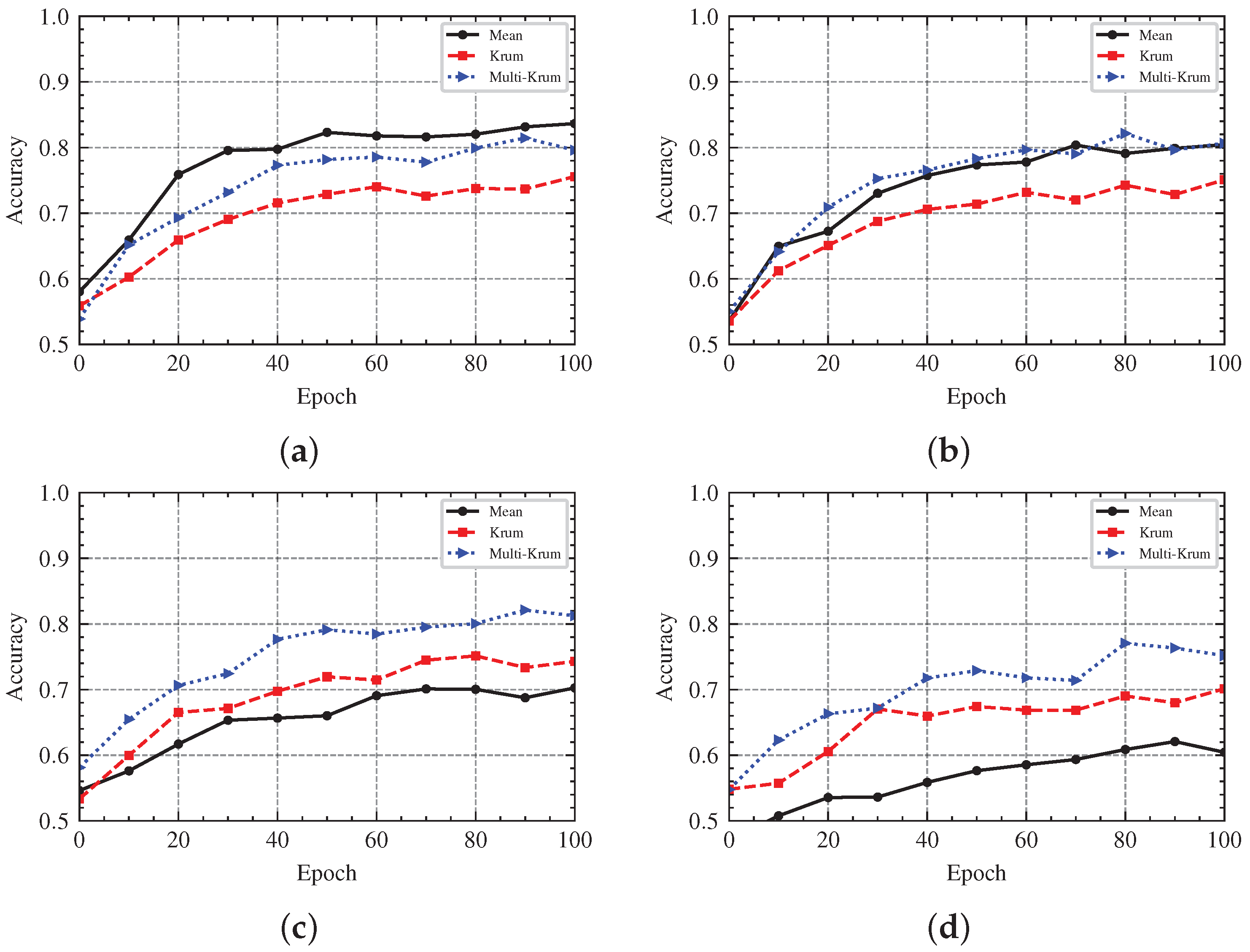

Next, we present some details about the secure aggregation process. We mainly divide global model aggregation into two processes: model filtering and model aggregation (See Algorithm 4).

In the process of model filtering, we adopt the Byzantine-robust Multi-Krum aggregation algorithm, which is a popular aggregation rule in distributed machine learning. The Multi-Krum algorithm is a variant of the Krum algorithm and is resistant to Byzantine attacks. We assume that there is a distributed system consisting of a server and $n$ nodes, in which $f$ nodes may be Byzantine nodes. Each node submits a vector to the server, and the server is responsible for aggregating these vectors. The strategy of the Krum algorithm is to select the one of the $n$ vectors that is most similar to the others as the global representative. For any vector $v$, the Krum algorithm calculates the Euclidean distance between $v$ and every other vector, and sums the $n - f - 2$ nearest distances as the score of $v$. Then, the vector with the minimum score is the representative of all vectors. The Euclidean distance is calculated as:

$$d(x, y) = \sqrt{\sum_{i=1}^{n} (x_i - y_i)^2},$$

where $d$ is the Euclidean distance between two $n$-dimensional vectors $x = (x_1, \ldots, x_n)$ and $y = (y_1, \ldots, y_n)$. The Multi-Krum algorithm uses the same strategy as the Krum algorithm, except that it selects $m$ vectors to average as the global representative, which is equivalent to performing $m$ rounds of the Krum algorithm. The two cases $m = 1$ and $m = n$ correspond to the Krum algorithm and the averaging algorithm, respectively.
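As a plaintext illustration of the selection rule, the sketch below scores each vector by the sum of its nearest squared distances and repeats the selection $m$ times. It makes several assumptions: it works on unencrypted lists, it uses squared distances for the scores, it recomputes the neighbor count on the shrinking candidate set, and it clamps that count to at least 1. The function names are hypothetical.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def krum_scores(vectors, f):
    """Score each vector by the sum of its nearest squared distances."""
    n = len(vectors)
    k = max(n - f - 2, 1)  # number of neighbors counted (clamped, an assumption)
    scores = []
    for i, v in enumerate(vectors):
        dists = sorted(sq_dist(v, u) for j, u in enumerate(vectors) if j != i)
        scores.append(sum(dists[:k]))
    return scores

def multi_krum(vectors, f, m):
    """Select m vectors by repeated Krum rounds and return their average."""
    candidates = list(range(len(vectors)))
    selected = []
    for _ in range(m):
        scores = krum_scores([vectors[i] for i in candidates], f)
        best_pos = min(range(len(candidates)), key=scores.__getitem__)
        selected.append(candidates.pop(best_pos))
    dim = len(vectors[0])
    avg = [sum(vectors[i][d] for i in selected) / m for d in range(dim)]
    return selected, avg

# Four similar "honest" vectors and one outlier at index 4.
updates = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [0.1, 0.1], [10.0, 10.0]]
chosen, aggregate = multi_krum(updates, f=1, m=3)
```

In this toy run the outlier is never selected, so the aggregate stays close to the honest vectors.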

First, the resolver calculates the Euclidean distance between any two models, $w_i$ and $w_j$. We assume that $w_i$ and $w_j$ are two $n$-dimensional vectors, $x = (x_1, \ldots, x_n)$ and $y = (y_1, \ldots, y_n)$, respectively. Thus, we can calculate the Euclidean distance between $x$ and $y$ as follows:

$$d(x, y) = \sqrt{\sum_{i=1}^{n} (x_i - y_i)^2}.$$

Let $s = \sum_{i=1}^{n} v_i$ with $v_i = (x_i - y_i)^2$; then $s$ denotes the sum over all elements of the vector $v = (v_1, \ldots, v_n)$. The vector can be calculated from the following:

$$v = (x - y) \circ (x - y),$$

where the operator $\circ$ represents the Hadamard product. Hence, we calculate that:

$$d(x, y) = \sqrt{s}.$$

The CKKS homomorphic encryption scheme not only provides homomorphic addition, subtraction, and multiplication, but also supports ciphertext vector rotation, which makes it possible to sum all the elements of a vector. Let $\mathrm{Rot}(v, t)$ represent rotating the vector $v$ by $t$ positions; then we can obtain:

$$\mathrm{Rot}(v, t) = (v_{t+1}, \ldots, v_n, v_1, \ldots, v_t).$$

Algorithm 4: GlobalModelAggregate

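Furthermore, when rotating the vector $v = (x - y) \circ (x - y) = (v_1, \ldots, v_n)$ for $t = 1, \ldots, n - 1$ times, where $\mathrm{Rot}(v, t)$ denotes the cyclic rotation of $v$ by $t$ positions, we obtain:

$$\mathrm{Rot}(v, 1) = (v_2, \ldots, v_n, v_1), \quad \ldots, \quad \mathrm{Rot}(v, n - 1) = (v_n, v_1, \ldots, v_{n-1}).$$

Then, we sum $v$ and all of its rotations and obtain:

$$v + \sum_{t=1}^{n-1} \mathrm{Rot}(v, t) = (s, s, \ldots, s), \qquad s = \sum_{i=1}^{n} v_i.$$

Following the above process, we can obtain:

$$s = d(x, y)^2 = \sum_{i=1}^{n} (x_i - y_i)^2.$$

Therefore, we are able to compute $s$ by homomorphic addition, multiplication, and rotation. Since $d(x, y) = \sqrt{s}$ and the square root is not one of these homomorphic operations, we are not able to obtain $d(x, y)$ directly. In fact, there is no need to calculate the Euclidean distance between the two models, as we can merely use the square of the distance.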
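The rotate-and-sum computation can be checked on plain Python lists, where a cyclic list rotation plays the role of a ciphertext slot rotation. This is only a plaintext simulation under that assumption. The helper names are hypothetical and no CKKS library is involved.

```python
def rotate(v, t):
    """Cyclic left rotation by t slots, mimicking a ciphertext rotation."""
    t %= len(v)
    return v[t:] + v[:t]

def squared_distance_slots(x, y):
    """Return a vector whose every slot holds the squared distance of x and y."""
    diff = [a - b for a, b in zip(x, y)]   # element-wise subtraction
    v = [d * d for d in diff]              # Hadamard product of diff with itself
    acc = list(v)
    for t in range(1, len(v)):             # add the n - 1 rotations of v
        acc = [a + b for a, b in zip(acc, rotate(v, t))]
    return acc

slots = squared_distance_slots([1.0, 2.0, 3.0], [0.0, 0.0, 1.0])
```

Only subtraction, element-wise multiplication, addition, and rotation are used, which matches the set of operations the text attributes to CKKS.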

After completing the calculation of the distances between any two local models, the resolver sends the results, which are the squares of the distances, to the validator, and the validator decrypts them with its secret key. Then, the validator sorts the set of distances corresponding to each model in ascending order and takes the sum of the first $k$ distances to obtain a score ($k = n - f - 2 + 1$, where “+1” accounts for the distance between the model and itself). Next, the validator adds the client corresponding to the model with the minimum score to the selected clients set and removes it from the candidate clients set. Finally, the validator repeats the above process $m$ times to add $m$ items to the selected set. When finished, the validator sends the final selected clients set to the resolver.

In the process of model aggregation, the resolver chooses the local models corresponding to the selected clients set to participate in the aggregation. Specifically, the resolver uses the model averaging algorithm for global model aggregation on all $m$ selected models. The model averaging algorithm is as follows:

$$w = \frac{1}{m} \sum_{i \in S} w_i,$$

where $S$ is the selected clients set. Since the models are encrypted, the resolver needs to calculate the global model through homomorphic operations:

$$\mathrm{Enc}_{pk_v}(w) = \frac{1}{m} \cdot \sum_{i \in S} \mathrm{Enc}_{pk_v}(w_i).$$

In this way, the resolver obtains the encrypted global model $\mathrm{Enc}_{pk_v}(w)$. However, this global model is encrypted with the validator’s public key $pk_v$ and cannot be used by the clients directly. Therefore, the resolver needs to send the global model to the validator to be decrypted and then re-encrypted with the client’s public key $pk_i$. In order to avoid the privacy leakage caused by directly exposing the global model $w$ to the validator, the resolver generates a random vector $r$, multiplies it with the global model, and sends the product $\mathrm{Enc}_{pk_v}(w \circ r)$ to the validator. Then, the validator sends $\mathrm{Enc}_{pk_i}(w \circ r)$, re-encrypted with the client’s public key $pk_i$, to the resolver. Finally, the resolver can obtain the global model $\mathrm{Enc}_{pk_i}(w)$ by calculating $\mathrm{Enc}_{pk_i}(w \circ r) \circ r^{-1}$, where $r^{-1}$ is the element-wise inverse of $r$.
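The masking round trip can be sketched on plaintext vectors: the resolver multiplies the global model element-wise by a random vector before the validator sees it, and removes the mask afterwards. The mask distribution below (uniform on [1, 2]) is chosen only so that the mask is invertible in this illustration. It is an assumption, makes no security claim, and the helper names are hypothetical.

```python
import random

def make_mask(dim, rng):
    """Random mask with entries bounded away from zero so it can be inverted."""
    return [rng.uniform(1.0, 2.0) for _ in range(dim)]

def hadamard(a, b):
    """Element-wise product of two equal-length vectors."""
    return [x * y for x, y in zip(a, b)]

def inverse(r):
    """Element-wise inverse of the mask."""
    return [1.0 / x for x in r]

rng = random.Random(42)
w = [0.5, -1.25, 3.0]                      # stand-in for the global model
r = make_mask(len(w), rng)                 # known only to the resolver
masked = hadamard(w, r)                    # what the validator would decrypt
recovered = hadamard(masked, inverse(r))   # resolver removes the mask
```

In the protocol described above both multiplications would be applied to ciphertexts, so the resolver never handles the plaintext model either.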

In the global model aggregation stage, not only must the final global model be uploaded to the blockchain, but the resolver and validator must also submit their intermediate results to the blockchain to record their behavior. This helps to detect dishonest behavior of the central servers and ensures the robustness of the system.

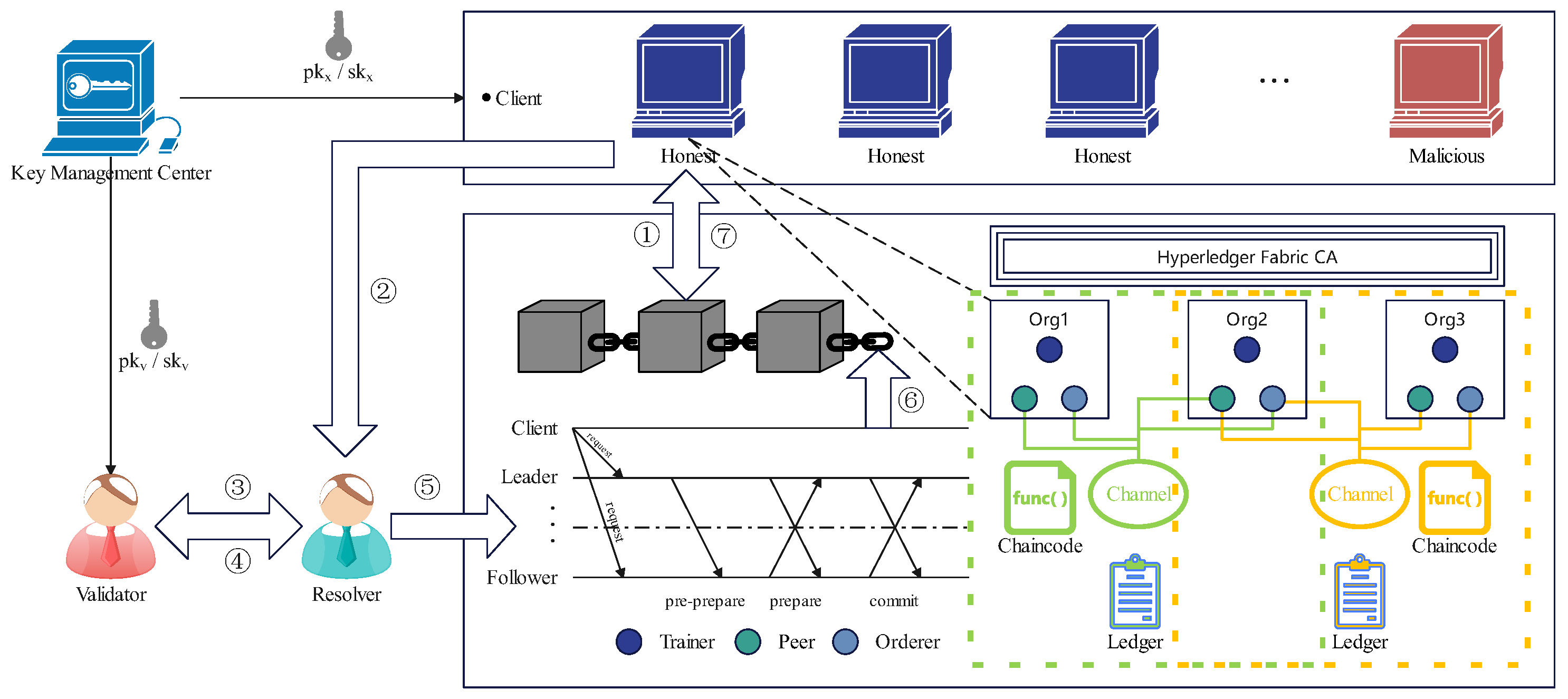

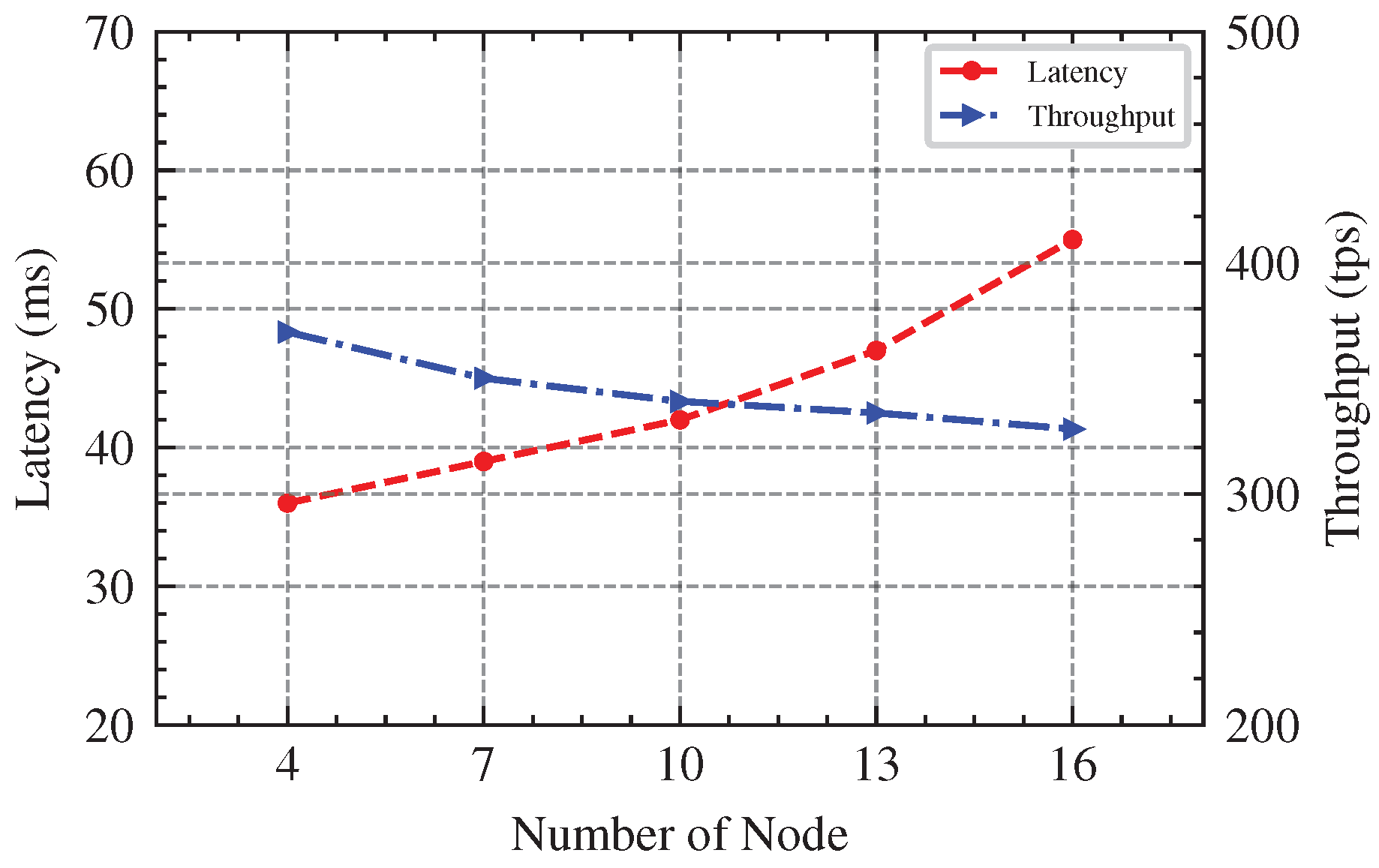

4.5. SmartBFT Consensus

In our decentralized storage design, we adopt Hyperledger Fabric as the underlying blockchain technology. It is an open-source distributed ledger technology that is modular, configurable, and supports smart contracts; one of its most attractive features is its pluggable consensus protocol design. In v2.0, it provides the Raft consensus protocol and deprecates the Kafka and Solo ordering services. However, a decentralized scenario requires a Byzantine fault tolerance (BFT) consensus protocol, which Hyperledger Fabric lacks. Therefore, we adopt the SmartBFT consensus as the solution for the Hyperledger Fabric ordering service.

We assume that the number of nodes participating in the consensus is $n$ and that there are at most $f$ Byzantine nodes; the SmartBFT algorithm ensures that the system runs safely when at most $f$ nodes fail. The consensus process begins with a client sending requests to all nodes. The leader of the current view batches requests into a proposal and starts executing the three-phase consensus. At the end, at least a quorum of nodes commit the proposal, and if a view change occurs, the proposal is forwarded to the leader of the next view. The three phases of consensus are pre-prepare, prepare, and commit, as follows (see Algorithm 5).

Pre-prepare: In the pre-prepare phase, the leader assembles the requests into a proposal in batches and assigns it a sequence number. Next, the leader sends a pre-prepare message to all of its followers, which contains the proposal, sequence number, and current view number. A follower accepts the pre-prepare message if the proposal is valid and the follower has not accepted a different pre-prepare message with the same sequence number and view number. Once a follower accepts a pre-prepare message, it becomes “pre-prepared” and enters the prepare phase.

Prepare: The “pre-prepared” follower broadcasts a prepare message, which includes the digest of the accepted proposal, sequence number, and current view number. Next, each node waits to receive a quorum of prepare messages with the same digest, sequence number, and view number. When the node receives a quorum of prepare messages, it becomes “prepared” and enters the commit phase.

Commit: The “prepared” node broadcasts a commit message and a signature on the proposal, where the content of the commit message is the same as that of the prepare message. Then, every node waits to receive a quorum of commit messages and signatures. After receiving a quorum of validated commit messages, the node becomes “committed” and delivers its response to the client.

The pre-prepare and prepare phases are used to totally order requests sent in the same view, even if the leader that proposes the ordering is faulty. The prepare and commit phases are used to ensure that committed requests are totally ordered across views. After the three-phase consensus, SmartBFT has completed the process of accepting transactions, ordering transactions, and returning transaction blocks through the orderer nodes.
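The phase transitions of a single node can be sketched as a small state machine. This toy model tracks one proposal only and ignores signatures, view changes, and networking. The quorum rule used here, $f = \lfloor (n-1)/3 \rfloor$ and a quorum of $\lceil (n+f+1)/2 \rceil$, is a common BFT choice and is an assumption of this sketch, as are the class and method names.

```python
import math

def quorum_size(n):
    """Assumed quorum rule: f = floor((n-1)/3), q = ceil((n+f+1)/2)."""
    f = (n - 1) // 3
    return f, math.ceil((n + f + 1) / 2)

class Node:
    """Toy follower that tracks the phases of a single proposal."""

    def __init__(self, quorum):
        self.quorum = quorum
        self.phase = "idle"
        self.accepted = {}      # (view, seq) -> digest of the accepted proposal
        self.prepares = set()   # senders of matching prepare messages
        self.commits = set()    # senders of matching commit messages

    def on_pre_prepare(self, view, seq, digest):
        # Reject a different proposal for an already used (view, seq).
        if self.accepted.get((view, seq), digest) != digest:
            return False
        self.accepted[(view, seq)] = digest
        if self.phase == "idle":
            self.phase = "pre-prepared"
        return True

    def on_prepare(self, sender, view, seq, digest):
        if self.accepted.get((view, seq)) != digest:
            return
        self.prepares.add(sender)   # a set, so duplicate senders count once
        if self.phase == "pre-prepared" and len(self.prepares) >= self.quorum:
            self.phase = "prepared"

    def on_commit(self, sender, view, seq, digest):
        if self.accepted.get((view, seq)) != digest:
            return
        self.commits.add(sender)
        if self.phase == "prepared" and len(self.commits) >= self.quorum:
            self.phase = "committed"

f, q = quorum_size(4)
node = Node(q)
node.on_pre_prepare(view=0, seq=1, digest="d1")
```

A node built this way moves from "pre-prepared" to "prepared" to "committed" only after collecting a quorum of matching messages from distinct senders in each phase.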

Algorithm 5: SmartBFTConsensus