Short-Term Demand Prediction of Shared Bikes Based on LSTM Network

Abstract

1. Introduction

2. Theory and Methods

2.1. Predictive Models Based on Machine Learning

2.1.1. XGBoost

2.1.2. Bagging

2.1.3. Random Forest

2.1.4. Light Gradient Boosting (LightGBM)

2.1.5. Stacking Model

2.2. Predictive Models Based on LSTM

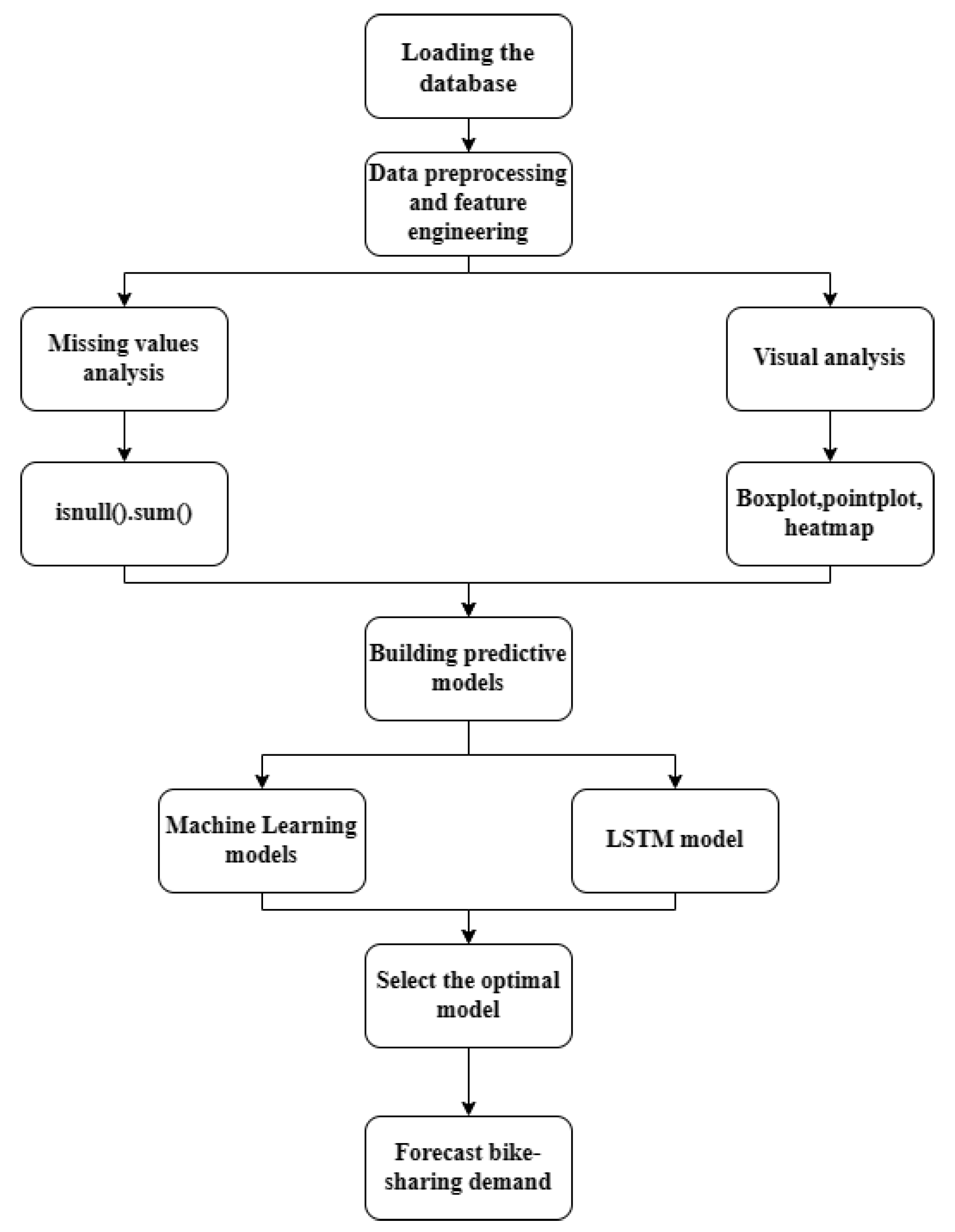

2.3. Experiment Process

2.3.1. Experimental Environment

2.3.2. Acquisition and Introduction of Experimental Data Sets

2.3.3. Experimental Data Preprocessing

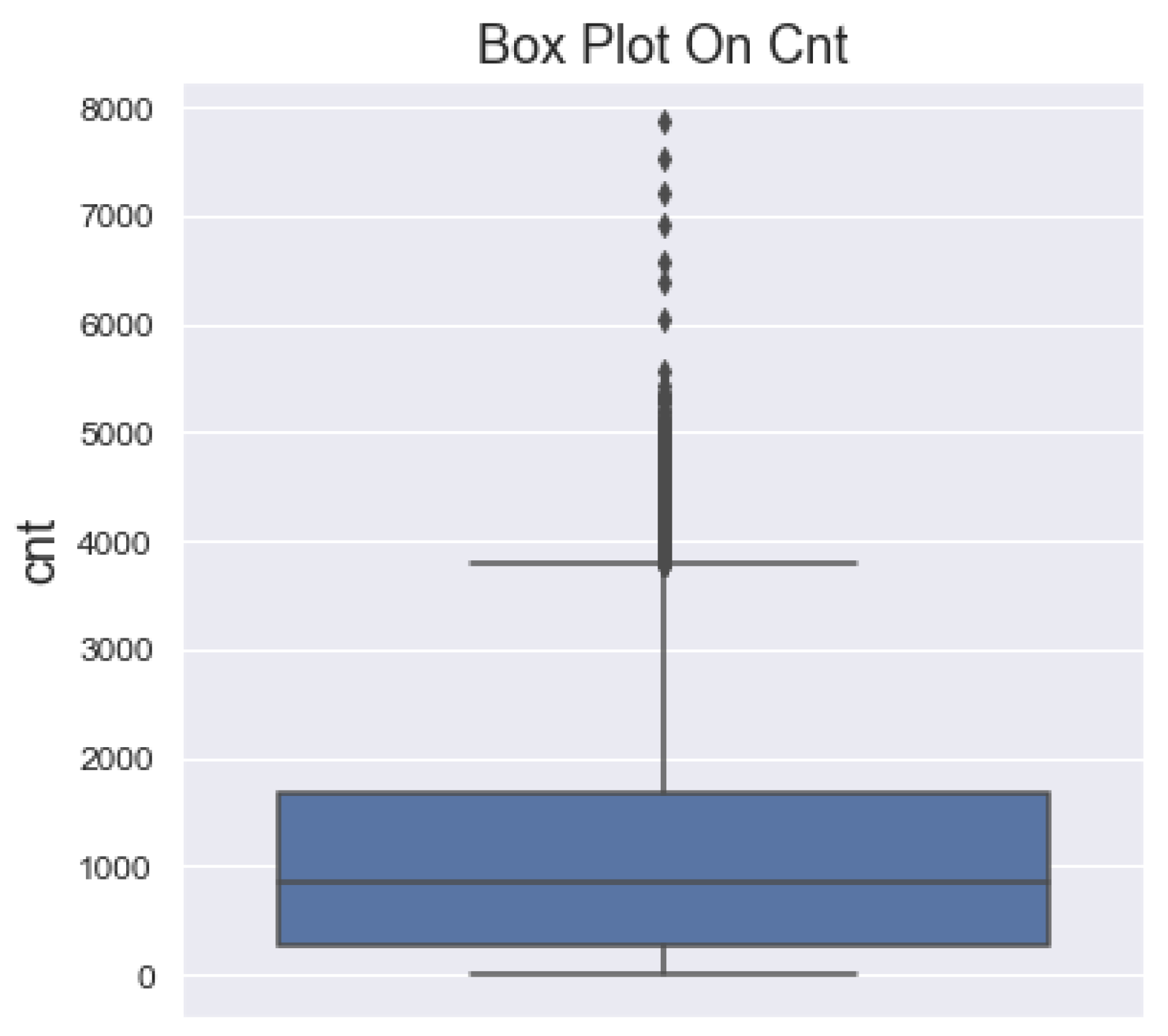

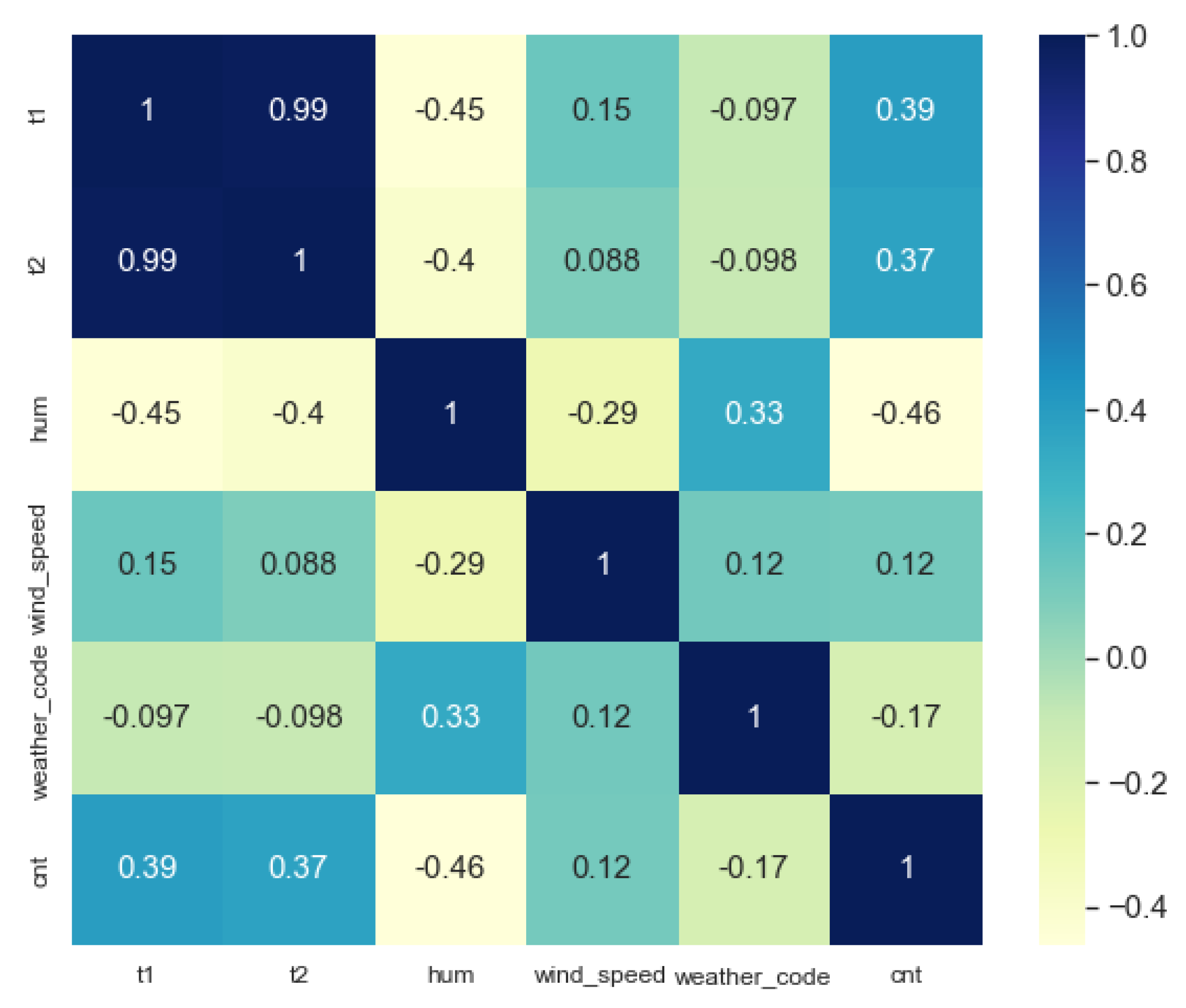

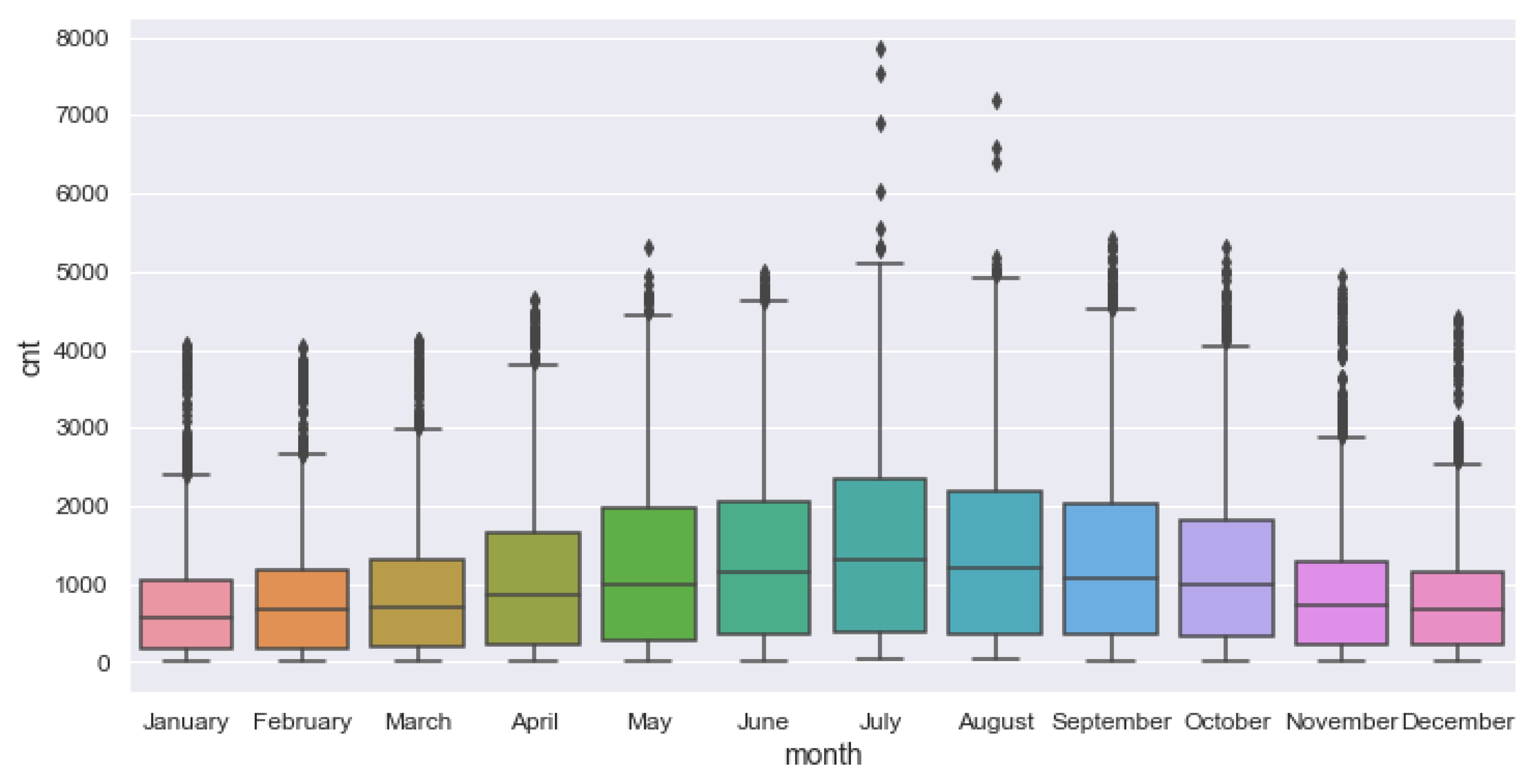

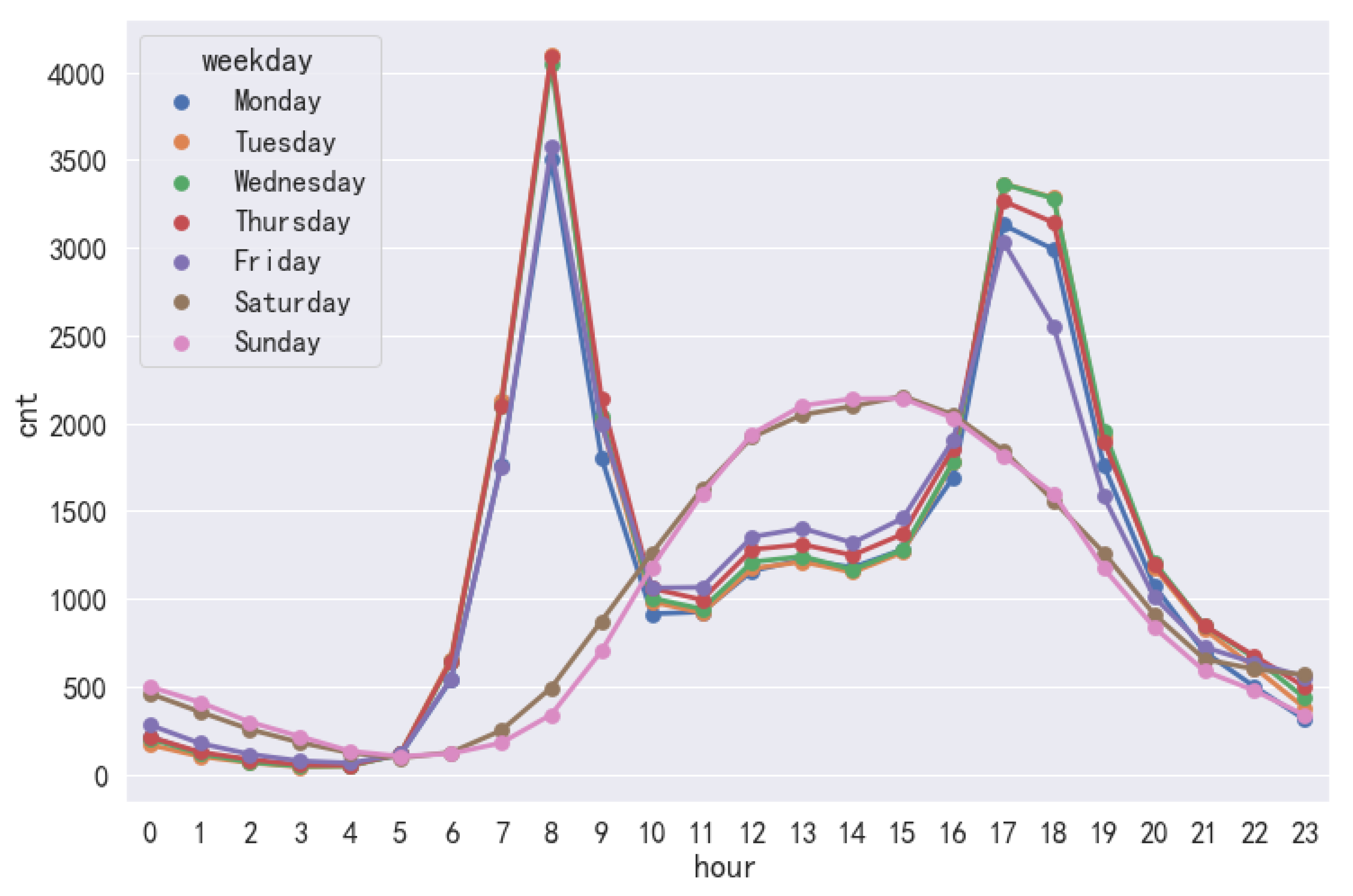

2.3.4. Analysis of the Influencing Factors

2.3.5. Predictive Model Evaluation Metrics

3. Predictive Model Analysis

3.1. Model Structure

- Layer settings: the LSTM model is built with 4 LSTM layers and a feature size of 12. Each input sample has shape [time_steps, feature], and the output layer has a single unit.

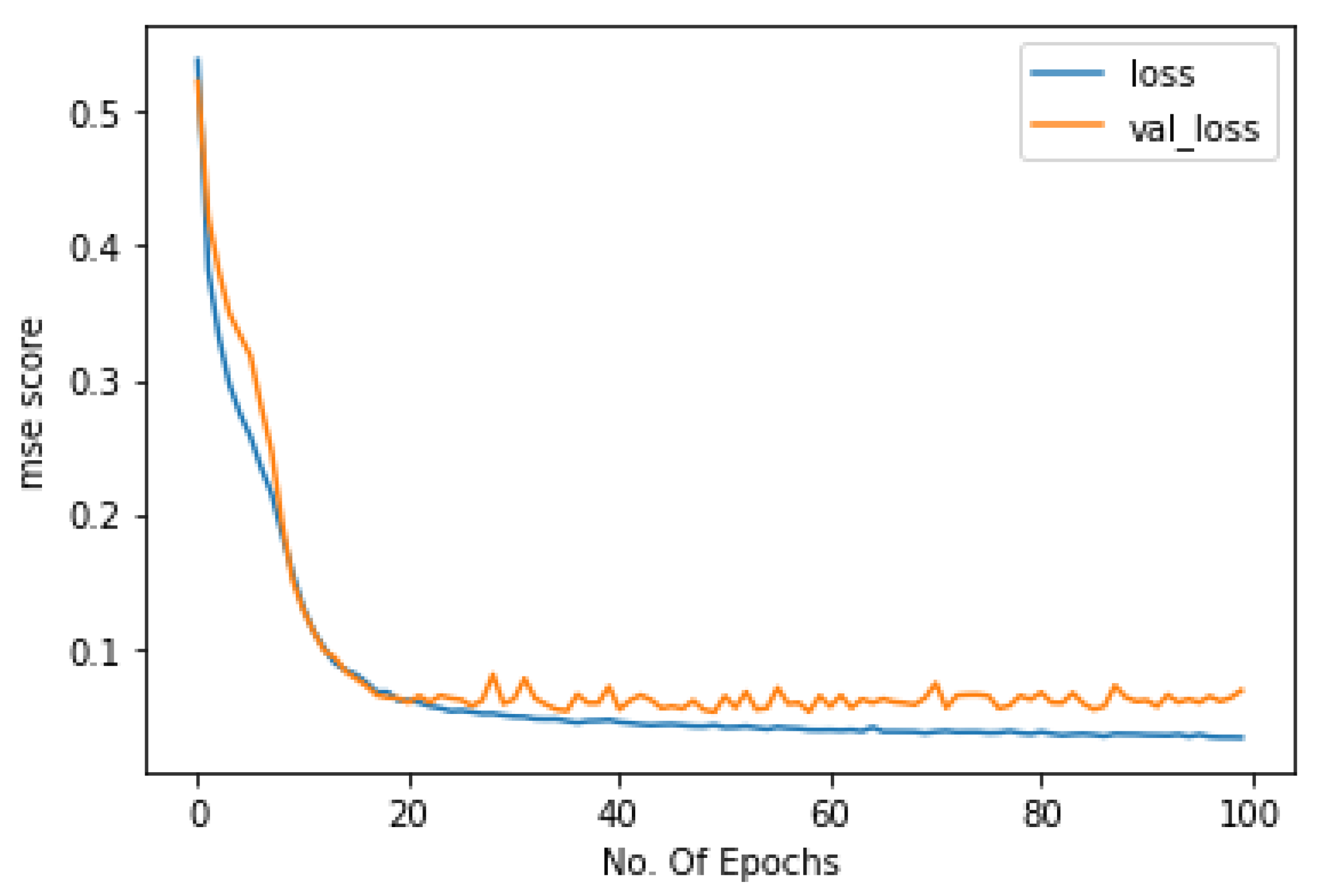

- Model parameter settings: when debugging the LSTM neural network, we varied the batch size of the training samples, the number of neurons, and the number of time steps. The batch sizes were set to 32, 64, and 128; the time_steps were set to 10, 12, and 24; and the numbers of neurons were set to 32, 64, and 128. The results for these parameter combinations are given in the following tables. The learning_rate is set to 0.0005, the optimizer is Adam, the loss function is the mean squared error (mse), and the LSTM model is trained for 100 epochs. To prevent overfitting during training, the dropout of each layer is set to 0.2. ReLU is chosen as the activation function, mainly to reduce the interdependence of the parameters and alleviate overfitting.

- Dimension transformation: before the features are fed into the prediction model, the input tensor is reshaped into a two-dimensional matrix so that its computed results can serve as inputs to the hidden layer. The tensor is then reshaped back into three dimensions as the input to the LSTM class. In addition, the data are split into batches by the get_batches function.
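The dimension handling described in the list above can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the authors' code: `make_windows` is a hypothetical helper, the body of `get_batches` is a guess at what a function of that name might do, and the data are synthetic. Only the feature size (12), the time_steps value (24), and the batch size (64) come from the text.

```python
import numpy as np


def make_windows(features, target, time_steps):
    """Slice a [n_samples, n_features] matrix into overlapping windows.

    Returns X with shape [n_windows, time_steps, n_features] and y with
    shape [n_windows], where y[i] is the target one step after window i.
    (Hypothetical helper; the paper does not show its windowing code.)
    """
    n_windows = len(features) - time_steps
    X = np.stack([features[i:i + time_steps] for i in range(n_windows)])
    y = target[time_steps:time_steps + n_windows]
    return X, y


def get_batches(X, y, batch_size):
    """Yield consecutive (X, y) mini-batches; the last one may be smaller.

    (Assumed behaviour; only the function name appears in the paper.)
    """
    for start in range(0, len(X), batch_size):
        yield X[start:start + batch_size], y[start:start + batch_size]


# Synthetic stand-in data: 200 hourly rows with 12 features each.
rng = np.random.default_rng(0)
features = rng.normal(size=(200, 12))
target = rng.normal(size=200)

X, y = make_windows(features, target, time_steps=24)
print(X.shape, y.shape)  # (176, 24, 12) (176,)

# 3-D -> 2-D so a dense layer can act on every time step, then back to 3-D
# for the recurrent layers.
flat = X.reshape(-1, X.shape[-1])      # shape [176 * 24, 12]
restored = flat.reshape(-1, 24, 12)    # shape [176, 24, 12]

batches = list(get_batches(X, y, batch_size=64))
print([len(bx) for bx, _ in batches])  # [64, 64, 48]
```

Reshaping to two dimensions and back does not reorder any values, so `restored` is element-for-element identical to `X`.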

3.2. Model Prediction Results

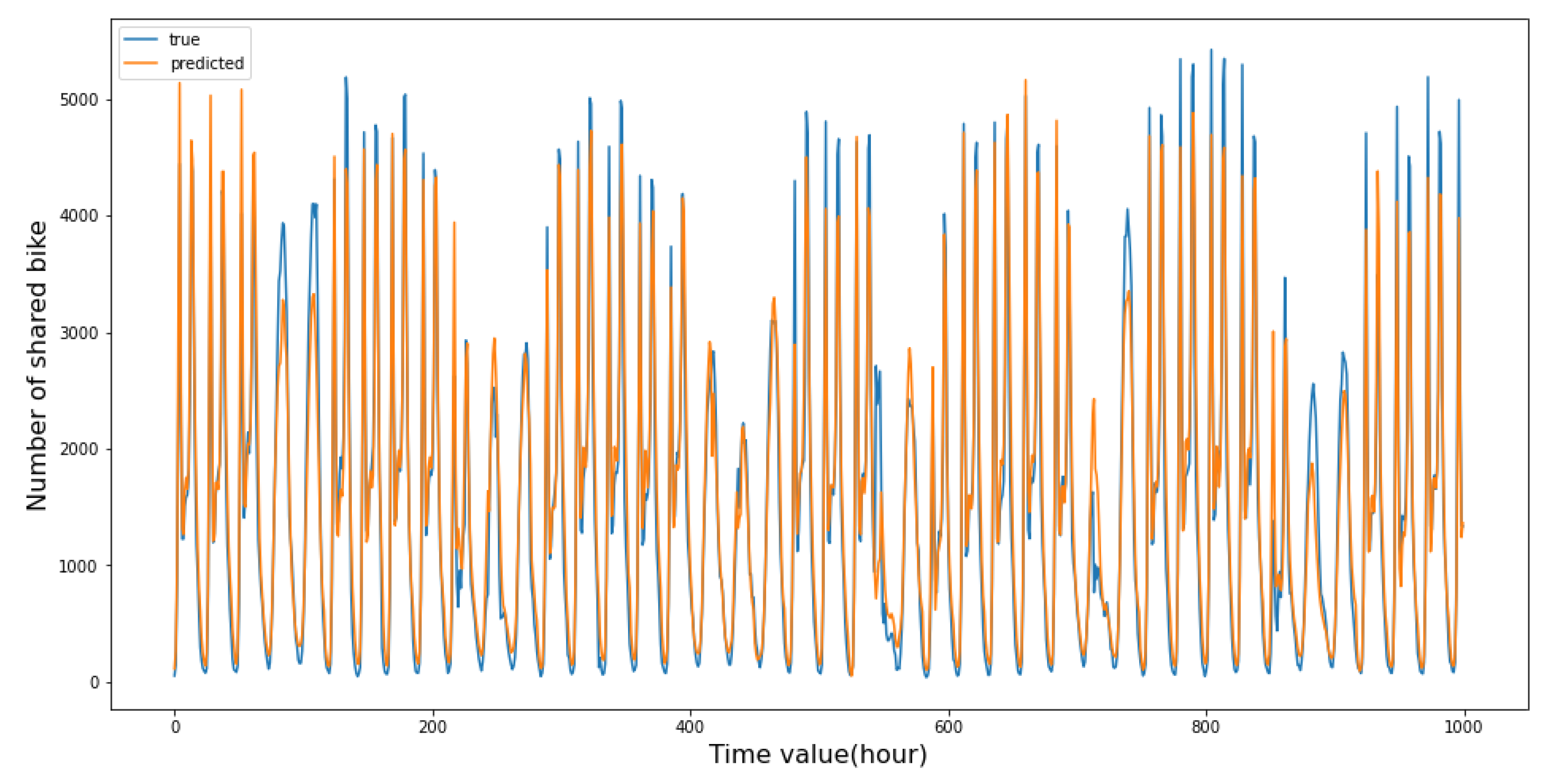

3.2.1. Prediction Results of LSTM Neural Network Model

3.2.2. Predictive Model Comparison

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Properties | Description and Value Range |
|---|---|
| timestamp | timestamp field for grouping the data [4/1/2015/00:00:00, 3/1/2017/23:00:00] |
| cnt | the count of bike shares [0, 7860] |
| t1 | real temperature, unit: °C [−1.5, 34.0] |
| t2 | apparent air temperature, unit: °C [−6.0, 34.0] |
| hum | humidity in percentage [20.5, 100.0] |
| windspeed | wind speed, unit: km/h [0.0, 56.5] |
| isholiday | 0 = non-holiday; 1 = holiday |
| isweekend | 0 = working day; 1 = weekend |
| season | Seasonal category: 0 = spring; 1 = summer; 2 = fall; 3 = winter |
| weathercode | Weather category: 1 = clear/mostly clear but have some values with haze/fog/patches of fog/fog in vicinity; 2 = scattered clouds/few clouds; 3 = broken clouds; 4 = cloudy; 7 = rain/light rain shower/light rain; 10 = rain with thunderstorm; 26 = snowfall; 94 = freezing fog |

| Batch_Size | Number of Neurons | RMSE | Score |
|---|---|---|---|
| 32 | 32 | 335.68 | 0.912 |
| 32 | 64 | 345.73 | 0.906 |
| 32 | 128 | 357.58 | 0.899 |
| 64 | 32 | 373.94 | 0.890 |
| 64 | 64 | 329.49 | 0.915 |
| 64 | 128 | 354.90 | 0.901 |
| 128 | 32 | 332.15 | 0.914 |
| 128 | 64 | 342.07 | 0.908 |
| 128 | 128 | 342.03 | 0.907 |

| Batch_Size | Number of Neurons | RMSE | Score |
|---|---|---|---|
| 32 | 32 | 352.02 | 0.903 |
| 32 | 64 | 355.72 | 0.901 |
| 32 | 128 | 350.33 | 0.904 |
| 64 | 32 | 344.29 | 0.907 |
| 64 | 64 | 336.37 | 0.911 |
| 64 | 128 | 361.55 | 0.897 |
| 128 | 32 | 353.83 | 0.902 |
| 128 | 64 | 368.25 | 0.892 |
| 128 | 128 | 373.38 | 0.891 |

| Batch_Size | Number of Neurons | RMSE | Score |
|---|---|---|---|
| 32 | 32 | 353.75 | 0.902 |
| 32 | 64 | 314.17 | 0.922 |
| 32 | 128 | 333.82 | 0.912 |
| 64 | 32 | 337.36 | 0.911 |
| 64 | 64 | 348.93 | 0.904 |
| 64 | 128 | 367.07 | 0.894 |
| 128 | 32 | 328.38 | 0.915 |
| 128 | 64 | 334.48 | 0.912 |
| 128 | 128 | 359.23 | 0.899 |
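Each of the three tuning tables above covers the nine combinations of batch size and number of neurons; combined with the three time_steps values from Section 3.1, the full search covers 27 configurations. The sketch below enumerates that grid. The dictionary keys and the `build_grid` helper are illustrative names rather than anything from the paper, and no training is performed here.

```python
from itertools import product

# Values varied during tuning (Section 3.1).
TIME_STEPS = (10, 12, 24)
BATCH_SIZES = (32, 64, 128)
NEURONS = (32, 64, 128)

# Settings held fixed across all runs (Section 3.1). Key names are assumptions.
FIXED = {
    "lstm_layers": 4,
    "feature_size": 12,
    "learning_rate": 0.0005,
    "optimizer": "adam",
    "loss": "mse",
    "epochs": 100,
    "dropout": 0.2,
    "activation": "relu",
}


def build_grid():
    """Return one configuration dict per (time_steps, batch_size, neurons)."""
    return [
        dict(FIXED, time_steps=t, batch_size=b, neurons=n)
        for t, b, n in product(TIME_STEPS, BATCH_SIZES, NEURONS)
    ]


grid = build_grid()
print(len(grid))  # 27
print(grid[0]["time_steps"], grid[0]["batch_size"], grid[0]["neurons"])  # 10 32 32
```

In a real run, each configuration would be passed to a training routine and scored on held-out data; that routine is not shown because the paper's training code is not available in this section.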

| Predictive Model | LSTM | Stacking Model | LightGBM | Random Forest | Bagging | XGBoost | Extra Tree Regressor | OLS Model |
|---|---|---|---|---|---|---|---|---|
| RMSE | 314.17 | 351.47 | 356.57 | 358.13 | 366.13 | 367.19 | 487.95 | 881.62 |
| Score | 0.922 | 0.857 | 0.853 | 0.805 | 0.805 | 0.843 | 0.724 | 0.099 |
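The two metrics reported in the tables can be computed as in the sketch below. RMSE is standard; for "score", this sketch assumes the coefficient of determination (R²), which is what regressor `score` methods commonly return, but this section does not define it, so treat that reading as an assumption. The sample values are made up for illustration and are not from the paper's data.

```python
import numpy as np


def rmse(y_true, y_pred):
    """Root mean squared error between observed and predicted values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot.

    Assumed here to correspond to the 'score' column in the tables.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


# Made-up hourly counts and predictions, for illustration only.
y_true = [100.0, 200.0, 300.0, 400.0]
y_pred = [110.0, 190.0, 310.0, 390.0]

print(round(rmse(y_true, y_pred), 3))      # 10.0
print(round(r2_score(y_true, y_pred), 3))  # 0.992
```

A lower RMSE and a score closer to 1 both indicate a better fit, which matches the ordering of the models in the comparison table.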


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Shi, Y.; Zhang, L.; Lu, S.; Liu, Q. Short-Term Demand Prediction of Shared Bikes Based on LSTM Network. Electronics 2023, 12, 1381. https://doi.org/10.3390/electronics12061381
