Lexicon-Based vs. Bert-Based Sentiment Analysis: A Comparative Study in Italian

Abstract

1. Introduction

- Verify the performance of one of the best language models available for the Italian language, i.e., BERTBase Italian XXL, by providing a dataset of reviews created ad hoc;

- Test the performance of one of the best NooJ-based lexical analysis systems available for the Italian language, starting with the Sentix and SentIta lexicons, on the same dataset;

- Understand and compare the performance of the two systems using tools such as SHAP for qualitative analysis and explainability of AI models.

2. Background and Related Works

2.1. Machine and Deep Learning Based Approaches

2.2. Lexicon-Based Approaches

3. Materials and Methods

3.1. Tested Architectures

3.1.1. Deep Learning-Based Architecture: BERT

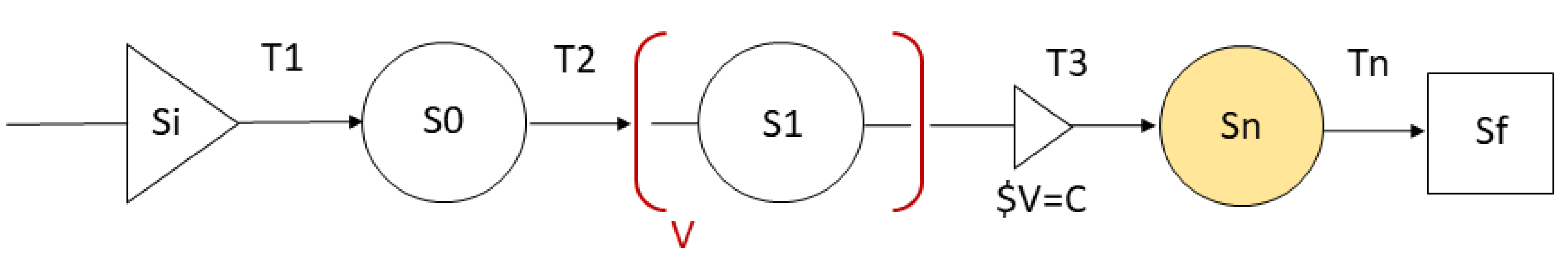

Transformer

BERTBase Italian XXL

SHAP Explanation Approach

3.1.2. Lexicon-Based Method

- Negation, e.g., niente affatto (English: not at all) and per nulla al mondo (English: not for anything in the world);

- Intensification, e.g., davvero[+] eccezionale[+3] [+3] (English: truly exceptional) and Parzialmente[−] deludente[−2] anche il reparto degli attori [−1] (English: The cast, too, is partially disappointing);

- Comparison, e.g., Il suo motore era anche il più brioso[+2] [+3] (English: Its engine was also the liveliest) and Un film peggiore di qualsiasi telefilm [−3] (English: A movie worse than any TV series).

3.2. Dataset and Evaluation Metrics

- The identification of the proper polarity of the reviews that are close to neutrality;

- The treatment of the reviews that contain both positive and negative claims;

- The correct analysis of reviews in which positive and negative comments are not related to the described product, e.g., delivery issues or plot descriptions in movie and book reviews [74].

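- Accuracy, i.e., the proportion of labels correctly identified;

- Micro-$F_1$ score, defined as $F_1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$, where $\text{Precision} = \frac{TP}{TP + FP}$ and $\text{Recall} = \frac{TP}{TP + FN}$, with $TP$, $FP$, and $FN$ denoting true positives, false positives, and false negatives.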
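The two metrics above can be illustrated with a minimal sketch. The label strings and the toy predictions are hypothetical, and the scores are computed for a single positive class; how counts are pooled across classes in the actual evaluation is not specified here, so this is an assumption rather than the evaluation code used in the study.

```python
from typing import Dict, Sequence, Tuple


def confusion_counts(y_true: Sequence[str], y_pred: Sequence[str],
                     positive: str = "positive") -> Tuple[int, int, int, int]:
    """Return (TP, FP, FN, TN) with respect to the chosen positive class."""
    tp = fp = fn = tn = 0
    for truth, guess in zip(y_true, y_pred):
        if guess == positive and truth == positive:
            tp += 1
        elif guess == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def scores(y_true: Sequence[str], y_pred: Sequence[str],
           positive: str = "positive") -> Dict[str, float]:
    """Compute accuracy, precision, recall, and F1 from the confusion counts."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical gold labels and predictions for five reviews.
y_true = ["positive", "positive", "negative", "negative", "positive"]
y_pred = ["positive", "negative", "negative", "positive", "positive"]
demo = scores(y_true, y_pred)
print(demo)
```

With these toy labels there are two true positives, one false positive, one false negative, and one true negative, so accuracy is 3/5 while precision, recall, and $F_1$ all equal 2/3.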

4. Results and Discussion

4.1. Quantitative Analysis

4.2. Qualitative Analysis

- La colazione e molto buona e suggestiva servita un piccolo salone con caminetto (English: The breakfast is very good and evocative served in a small lounge with a fireplace);

- Una piccola bomboniera (English: A small party favor).

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Araque, O.; Corcuera-Platas, I.; Sánchez-Rada, J.F.; Iglesias, C.A. Enhancing deep learning sentiment analysis with ensemble techniques in social applications. Expert Syst. Appl. 2017, 77, 236–246.

- Thet, T.T.; Na, J.; Khoo, C.S.G. Aspect-based sentiment analysis of movie reviews on discussion boards. J. Inf. Sci. 2010, 36, 823–848.

- Liu, B. Sentiment Analysis and Subjectivity. In Handbook of Natural Language Processing, 2nd ed.; Indurkhya, N., Damerau, F.J., Eds.; Chapman and Hall/CRC: London, UK, 2010; pp. 627–666.

- Hutto, C.J.; Gilbert, E. VADER: A Parsimonious Rule-Based Model for Sentiment Analysis of Social Media Text. In Proceedings of the Eighth International Conference on Weblogs and Social Media, ICWSM 2014, Ann Arbor, MI, USA, 1–4 June 2014; Adar, E., Resnick, P., Choudhury, M.D., Hogan, B., Oh, A.H., Eds.; The AAAI Press: Menlo Park, CA, USA, 2014.

- Cristianini, N.; Shawe-Taylor, J. An Introduction to Support Vector Machines and Other Kernel-Based Learning Methods; Cambridge University Press: Cambridge, UK, 2010.

- Chen, T.; Xu, R.; He, Y.; Wang, X. Improving sentiment analysis via sentence type classification using BiLSTM-CRF and CNN. Expert Syst. Appl. 2017, 72, 221–230.

- Pota, M.; Esposito, M.; Palomino, M.A.; Masala, G.L. A Subword-Based Deep Learning Approach for Sentiment Analysis of Political Tweets. In Proceedings of the 32nd International Conference on Advanced Information Networking and Applications Workshops, AINA 2018 workshops, Krakow, Poland, 16–18 May 2018; Barolli, L., Takizawa, M., Enokido, T., Ogiela, M.R., Ogiela, L., Javaid, N., Eds.; IEEE Computer Society: Piscataway, NJ, USA, 2018; pp. 651–656.

- Pota, M.; Ventura, M.; Catelli, R.; Esposito, M. An Effective BERT-Based Pipeline for Twitter Sentiment Analysis: A Case Study in Italian. Sensors 2021, 21, 133.

- Pota, M.; Ventura, M.; Fujita, H.; Esposito, M. Multilingual evaluation of pre-processing for BERT-based sentiment analysis of tweets. Expert Syst. Appl. 2021, 181, 115119.

- Pang, B.; Lee, L.; Vaithyanathan, S. Thumbs up? Sentiment Classification using Machine Learning Techniques. In Proceedings of the 2002 Conference on Empirical Methods in Natural Language Processing, EMNLP 2002, Philadelphia, PA, USA, 6–7 July 2002; pp. 79–86.

- Mukherjee, S.; Joshi, S. Author-Specific Sentiment Aggregation for Polarity Prediction of Reviews. In Proceedings of the Ninth International Conference on Language Resources and Evaluation, LREC 2014, Reykjavik, Iceland, 26–31 May 2014; Calzolari, N., Choukri, K., Declerck, T., Loftsson, H., Maegaard, B., Mariani, J., Moreno, A., Odijk, J., Piperidis, S., Eds.; European Language Resources Association (ELRA): Reykjavik, Iceland, 2014; pp. 3092–3099.

- Perikos, I.; Hatzilygeroudis, I. Aspect based sentiment analysis in social media with classifier ensembles. In Proceedings of the 16th IEEE/ACIS International Conference on Computer and Information Science, ICIS 2017, Wuhan, China, 24–26 May 2017; Zhu, G., Yao, S., Cui, X., Xu, S., Eds.; IEEE Computer Society: Piscataway, NJ, USA, 2017; pp. 273–278.

- Diamantini, C.; Mircoli, A.; Potena, D. A Negation Handling Technique for Sentiment Analysis. In Proceedings of the 2016 International Conference on Collaboration Technologies and Systems, CTS 2016, Orlando, FL, USA, 31 October–4 November 2016; Smari, W.W., Natarian, J., Eds.; IEEE Computer Society: Piscataway, NJ, USA, 2016; pp. 188–195.

- Mikolov, T.; Sutskever, I.; Chen, K.; Corrado, G.S.; Dean, J. Distributed Representations of Words and Phrases and their Compositionality. In Proceedings of the Advances in Neural Information Processing Systems 26: 27th Annual Conference on Neural Information Processing Systems 2013, Lake Tahoe, NV, USA, 5–8 December 2013; pp. 3111–3119.

- Pennington, J.; Socher, R.; Manning, C.D. Glove: Global Vectors for Word Representation. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing, EMNLP 2014, Doha, Qatar, 25–29 October 2014; A Meeting of SIGDAT, a Special Interest Group of the ACL. Moschitti, A., Pang, B., Daelemans, W., Eds.; The Association for Computational Linguistics: Stroudsburg, PA, USA, 2014; pp. 1532–1543.

- Cao, K.; Rei, M. A Joint Model for Word Embedding and Word Morphology. In Proceedings of the 1st Workshop on Representation Learning for NLP, Rep4NLP@ACL 2016, Berlin, Germany, 11 August 2016; Blunsom, P., Cho, K., Cohen, S.B., Grefenstette, E., Hermann, K.M., Rimell, L., Weston, J., Yih, S.W., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2016; pp. 18–26.

- Li, Y.; Pan, Q.; Yang, T.; Wang, S.; Tang, J.; Cambria, E. Learning Word Representations for Sentiment Analysis. Cogn. Comput. 2017, 9, 843–851.

- Yu, L.; Wang, J.; Lai, K.R.; Zhang, X. Refining Word Embeddings Using Intensity Scores for Sentiment Analysis. IEEE ACM Trans. Audio Speech Lang. Process. 2018, 26, 671–681.

- Hao, Y.; Mu, T.; Hong, R.; Wang, M.; Liu, X.; Goulermas, J.Y. Cross-Domain Sentiment Encoding through Stochastic Word Embedding. IEEE Trans. Knowl. Data Eng. 2020, 32, 1909–1922.

- Sukthanker, R.; Poria, S.; Cambria, E.; Thirunavukarasu, R. Anaphora and coreference resolution: A review. Inf. Fusion 2020, 59, 139–162.

- Zhang, L.; Wang, S.; Liu, B. Deep learning for sentiment analysis: A survey. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2018, 8, e1253.

- Yadav, A.; Vishwakarma, D.K. Sentiment analysis using deep learning architectures: A review. Artif. Intell. Rev. 2020, 53, 4335–4385.

- Kim, Y. Convolutional Neural Networks for Sentence Classification. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing, EMNLP 2014, Doha, Qatar, 25–29 October 2014; A Meeting of SIGDAT, a Special Interest Group of the ACL. Moschitti, A., Pang, B., Daelemans, W., Eds.; The Association for Computational Linguistics: Stroudsburg, PA, USA, 2014; pp. 1746–1751.

- Kalchbrenner, N.; Grefenstette, E.; Blunsom, P. A Convolutional Neural Network for Modelling Sentences. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics, ACL 2014, Baltimore, MD, USA, 22–27 June 2014; The Association for Computer Linguistics: Stroudsburg, PA, USA, 2014; pp. 655–665.

- Socher, R.; Perelygin, A.; Wu, J.; Chuang, J.; Manning, C.D.; Ng, A.Y.; Potts, C. Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank. In Proceedings of the 2013 Conference on Empirical Methods in Natural Language Processing, EMNLP 2013, Grand Hyatt, Seattle, Seattle, WA, USA, 18–21 October 2013; A Meeting of SIGDAT, a Special Interest Group of the ACL. The Association for Computational Linguistics: Stroudsburg, PA, USA, 2013; pp. 1631–1642.

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780.

- Li, D.; Qian, J. Text sentiment analysis based on long short-term memory. In Proceedings of the 2016 First IEEE International Conference on Computer Communication and the Internet (ICCCI), Wuhan, China, 13–15 October 2016.

- Baziotis, C.; Pelekis, N.; Doulkeridis, C. DataStories at SemEval-2017 Task 4: Deep LSTM with Attention for Message-level and Topic-based Sentiment Analysis. In Proceedings of the 10th International Workshop on Semantic Evaluation, SemEval@NAACL-HLT 2016, San Diego, CA, USA, 16–17 June 2016; Bethard, S., Cer, D.M., Carpuat, M., Jurgens, D., Nakov, P., Zesch, T., Eds.; The Association for Computer Linguistics: Stroudsburg, PA, USA, 2017; pp. 747–754.

- Peters, M.E.; Neumann, M.; Iyyer, M.; Gardner, M.; Clark, C.; Lee, K.; Zettlemoyer, L. Deep Contextualized Word Representations. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2018, New Orleans, LA, USA, 1–6 June 2018; Walker, M.A., Ji, H., Stent, A., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; pp. 2227–2237.

- Howard, J.; Ruder, S. Universal Language Model Fine-tuning for Text Classification. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics, ACL 2018, Melbourne, Australia, 15–20 July 2018; Gurevych, I., Miyao, Y., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; Volume 1, pp. 328–339.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is All you Need. In Proceedings of the Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, Long Beach, CA, USA, 4–9 December 2017; Guyon, I., von Luxburg, U., Bengio, S., Wallach, H.M., Fergus, R., Vishwanathan, S.V.N., Garnett, R., Eds.; The Association for Computational Linguistics: San Diego, CA, USA, 2017; pp. 5998–6008.

- Radford, A.; Narasimhan, K. Improving Language Understanding by Generative Pre-Training. Available online: https://cdn.openai.com/research-covers/language-unsupervised/language_understanding_paper.pdf (accessed on 1 October 2021).

- Devlin, J.; Chang, M.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2019, Minneapolis, MN, USA, 2–7 June 2019; Burstein, J., Doran, C., Solorio, T., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 4171–4186.

- Augustyniak, L.; Kajdanowicz, T.; Kazienko, P. Comprehensive analysis of aspect term extraction methods using various text embeddings. Comput. Speech Lang. 2021, 69, 101217.

- Ray, B.; Garain, A.; Sarkar, R. An ensemble-based hotel recommender system using sentiment analysis and aspect categorization of hotel reviews. Appl. Soft Comput. 2021, 98, 106935.

- Hatzivassiloglou, V.; McKeown, K.R. Predicting the Semantic Orientation of Adjectives. In Proceedings of the 35th Annual Meeting of the Association for Computational Linguistics and 8th Conference of the European Chapter of the Association for Computational Linguistics, Proceedings of the Conference, Universidad Nacional de Educación a Distancia (UNED), Madrid, Spain, 7–12 July 1997; Cohen, P.R., Wahlster, W., Eds.; Morgan Kaufmann Publishers/ACL: Burlington, MA, USA, 1997; pp. 174–181.

- Taboada, M.; Gillies, M.A.; McFetridge, P. Sentiment classification techniques for tracking literary reputation. In LREC Workshop: Towards Computational Models of Literary Analysis; 2006; pp. 36–43. Available online: https://citeseerx.ist.psu.edu/viewdoc/downloaddoi=10.1.1.412.9475&rep=rep1&type=pdf#page=42 (accessed on 1 October 2021).

- Benamara, F.; Cesarano, C.; Picariello, A.; Recupero, D.R.; Subrahmanian, V.S. Sentiment Analysis: Adjectives and Adverbs are Better than Adjectives Alone. In Proceedings of the First International Conference on Weblogs and Social Media, ICWSM 2007, Boulder, CO, USA, 26–28 March 2007.

- Vermeij, M. The Orientation of User Opinions through Adverbs, Verbs and Nouns. Available online: https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.74.4909&rep1&pdf (accessed on 1 October 2021).

- Neviarouskaya, A.; Prendinger, H.; Ishizuka, M. Compositionality Principle in Recognition of Fine-Grained Emotions from Text. In Proceedings of the Third International Conference on Weblogs and Social Media, ICWSM 2009, San Jose, CA, USA, 17–20 May 2009; Adar, E., Hurst, M., Finin, T., Glance, N.S., Nicolov, N., Tseng, B.L., Eds.; The AAAI Press: Palo Alto, CA, USA, 2009.

- Taboada, M.; Brooke, J.; Tofiloski, M.; Voll, K.D.; Stede, M. Lexicon-Based Methods for Sentiment Analysis. Comput. Linguist. 2011, 37, 267–307.

- Bloom, K. Sentiment Analysis Based on Appraisal Theory and Functional Local Grammars. Ph.D. Thesis, Illinois Institute of Technology, Chicago, IL, USA, 2011.

- Moilanen, K.; Pulman, S.G. The Good, the Bad, and the Unknown: Morphosyllabic Sentiment Tagging of Unseen Words. In Proceedings of the ACL 2008, Proceedings of the 46th Annual Meeting of the Association for Computational Linguistics, Columbus, OH, USA, 15–20 June 2008; The Association for Computer Linguistics: Stroudsburg, PA, USA, 2008; pp. 109–112.

- Neviarouskaya, A. Compositional Approach for Automatic Recognition of Fine-Grained Affect, Judgment, and Appreciation in Text (Soft Computing, < Special Issue> Doctorial Theses on Aritificial Intelligence). J. Jpn. Soc. Artif. Intell. 2012, 27, 88.

- Esuli, A.; Sebastiani, F. Determining the semantic orientation of terms through gloss classification. In Proceedings of the 2005 ACM CIKM International Conference on Information and Knowledge Management, Bremen, Germany, 31 October–5 November 2005; Herzog, O., Schek, H., Fuhr, N., Chowdhury, A., Teiken, W., Eds.; ACM: New York, NY, USA, 2005; pp. 617–624.

- Esuli, A.; Sebastiani, F. Determining Term Subjectivity and Term Orientation for Opinion Mining. In Proceedings of the EACL 2006, 11st Conference of the European Chapter of the Association for Computational Linguistics, Trento, Italy, 3–7 April 2006; McCarthy, D., Wintner, S., Eds.; The Association for Computer Linguistics: Stroudsburg, PA, USA, 2006.

- Paulo-Santos, A.; Ramos, C.; Marques, N.C. Determining the Polarity of Words through a Common Online Dictionary. In Progress in Artificial Intelligence, Proceedings of the 15th Portuguese Conference on Artificial Intelligence, EPIA 2011, Lisbon, Portugal, 10–13 October 2011; Antunes, L., Pinto, H.S., Eds.; Lecture Notes in Computer Science; Springer: Berlin/Heidelberg, Germany, 2011; Volume 7026, pp. 649–663.

- Awadallah, A.H.; Radev, D.R. Identifying Text Polarity Using Random Walks. In Proceedings of the 48th Annual Meeting of the Association for Computational Linguistics, Uppsala, Sweden, 11–16 July 2010; Hajic, J., Carberry, S., Clark, S., Eds.; The Association for Computer Linguistics: Stroudsburg, PA, USA, 2010; pp. 395–403.

- Qiu, G.; Liu, B.; Bu, J.; Chen, C. Expanding Domain Sentiment Lexicon through Double Propagation. In Proceedings of the 21st International Joint Conference on Artificial Intelligence, Pasadena, CA, USA, 11–17 July 2009; pp. 1199–1204.

- Kanayama, H.; Nasukawa, T. Fully Automatic Lexicon Expansion for Domain-oriented Sentiment Analysis. In Proceedings of the 2006 Conference on Empirical Methods in Natural Language Processing, Sydney, Australia, 22–23 July 2006; pp. 355–363.

- Baroni, M.; Vegnaduzzo, S. Identifying subjective adjectives through web-based mutual information. In Proceedings of the 15th Conference on Natural Language Processing (KONVENS 2019), Erlangen, Germany, 7 May 2019.

- Miller, G.A. WordNet: A Lexical Database for English. Commun. ACM 1995, 38, 39–41.

- Baccianella, S.; Esuli, A.; Sebastiani, F. SentiWordNet 3.0: An Enhanced Lexical Resource for Sentiment Analysis and Opinion Mining. In Proceedings of the International Conference on Language Resources and Evaluation, LREC 2010, Valletta, Malta, 17–23 May 2010; Calzolari, N., Choukri, K., Maegaard, B., Mariani, J., Odijk, J., Piperidis, S., Rosner, M., Tapias, D., Eds.; European Language Resources Luxembourg: Luxembourg, 2010.

- Pang, B.; Lee, L. A Sentimental Education: Sentiment Analysis Using Subjectivity Summarization Based on Minimum Cuts. In Proceedings of the 42nd Annual Meeting of the Association for Computational Linguistics, Barcelona, Spain, 21–26 July 2004; pp. 271–278.

- Stone, P.J.; Hunt, E.B. A computer approach to content analysis: Studies using the General Inquirer system. In Proceedings of the 1963 Spring Joint Computer Conference, AFIPS 1963 (Spring), Detroit, MI, USA, 21–23 May 1963; Johnson, E.C., Ed.; ACM: New York, NY, USA, 1963; pp. 241–256.

- Basile, V.; Nissim, M. Sentiment analysis on Italian tweets. In Proceedings of the 4th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, WASSA@NAACL-HLT 2013, Atlanta, GA, USA, 14 June 2013; Balahur, A., der Goot, E.V., Montoyo, A., Eds.; The Association for Computer Linguistics: Stroudsburg, PA, USA, 2013; pp. 100–107.

- Pelosi, S. SentIta and Doxa: Italian Databases and Tools for Sentiment Analysis Purposes. In Proceedings of the Second Italian Conference on Computational Linguistics CLiC-it 2015; Accademia University Press: Turin, Italy, 2015; pp. 226–231.

- Di Gennaro, P.; Rossi, A. The FICLIT+CS@UniBO System at the EVALITA 2014 Sentiment Polarity Classification Task. In Proceedings of the First Italian Conference on Computational Linguistics CLiC-it 2014 and of the Fourth International Workshop EVALITA 2014, Pisa, Italy, 9–11 December 2014.

- Bolioli, A.; Salamino, F.; Porzionato, V. Social Media Monitoring in Real Life with Blogmeter Platform. In Proceedings of the First International Workshop on Emotion and Sentiment in Social and Expressive Media: Approaches and Perspectives from AI (ESSEM 2013) A workshop of the XIII International Conference of the Italian Association for Artificial Intelligence (AI*IA 2013), CEUR-WS.org, CEUR Workshop Proceedings, Turin, Italy, 3 December 2013; Volume 1096, pp. 156–163.

- Castellucci, G.; Croce, D.; Basili, R. A Language Independent Method for Generating Large Scale Polarity Lexicons. In Proceedings of the Tenth International Conference on Language Resources and Evaluation LREC 2016, Portorož, Slovenia, 23–28 May 2016; Calzolari, N., Choukri, K., Declerck, T., Goggi, S., Grobelnik, M., Maegaard, B., Mariani, J., Mazo, H., Moreno, A., Odijk, J., et al., Eds.; European Language Resources Association (ELRA): Paris, France, 2016.

- Cambria, E.; Li, Y.; Xing, F.Z.; Poria, S.; Kwok, K. SenticNet 6: Ensemble Application of Symbolic and Subsymbolic AI for Sentiment Analysis. In Proceedings of the CIKM ’20: The 29th ACM International Conference on Information and Knowledge Management, Virtual Event, Ireland, 19–23 October 2020; d’Aquin, M., Dietze, S., Hauff, C., Curry, E., Cudré-Mauroux, P., Eds.; ACM: New York, NY, USA, 2020; pp. 105–114.

- Pianta, E.; Bentivogli, L.; Girardi, C. MultiWordNet: Developing an aligned multilingual database. In First International Conference on Global WordNet; Global WordNet Association: Weesp, The Netherlands, 2002; pp. 293–302.

- Miller, G.A.; Fellbaum, C. WordNet then and now. Lang. Resour. Eval. 2007, 41, 209–214.

- Navigli, R.; Ponzetto, S.P. BabelNet: The automatic construction, evaluation and application of a wide-coverage multilingual semantic network. Artif. Intell. 2012, 193, 217–250.

- Pelosi, S. Morphological Relations for the Automatic Expansion of Italian Sentiment Lexicons. In Proceedings of the Automatic Processing of Natural-Language Electronic Texts with NooJ: 9th International Conference, NooJ 2015, Minsk, Belarus, 11–13 June 2015; Revised Selected Papers; Communications in Computer and Information Science. Okrut, T., Hetsevich, Y., Silberztein, M., Stanislavenka, H., Eds.; Springer: Berlin/Heidelberg, Germany, 2015; Volume 607, pp. 41–51.

- Pelosi, S.; Maisto, A.; Vitale, P.; Vietri, S. Mining Offensive Language on Social Media. In Proceedings of the Fourth Italian Conference on Computational Linguistics (CLiC-it 2017), Rome, Italy, 11–13 December 2017; CEUR-WS.org, CEUR Workshop Proceedings. Volume 2006.

- Pelosi, S. Semantically Oriented Idioms for Sentiment Analysis. A Linguistic Resource for the Italian Language. In Proceedings of the Advanced Information Networking and Applications: Proceedings of the 34th International Conference on Advanced Information Networking and Applications, AINA-2020, Caserta, Italy, 15–17 April 2020; Advances in Intelligent Systems and Computing. Barolli, L., Amato, F., Moscato, F., Enokido, T., Takizawa, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2020; Volume 1151, pp. 1069–1077.

- Vitale, P.; Pelosi, S.; Falco, M. #andràtuttobene: Images, Texts, Emojis and Geodata in a Sentiment Analysis Pipeline. In Proceedings of the Seventh Italian Conference on Computational Linguistics, CLiC-it 2020, Bologna, Italy, 1–3 March 2021; CEUR-WS.org, CEUR Workshop Proceedings. Volume 2769.

- Schuster, M.; Nakajima, K. Japanese and Korean voice search. In Proceedings of the 2012 IEEE International Conference on Acoustics, Speech and Signal Processing, ICASSP 2012, Kyoto, Japan, 25–30 March 2012; pp. 5149–5152.

- Wu, Y.; Schuster, M.; Chen, Z.; Le, Q.V.; Norouzi, M.; Macherey, W.; Krikun, M.; Cao, Y.; Gao, Q.; Macherey, K.; et al. Google’s Neural Machine Translation System: Bridging the Gap between Human and Machine Translation. arXiv 2016, arXiv:1609.08144.

- Hendrycks, D.; Gimpel, K. Bridging Nonlinearities and Stochastic Regularizers with Gaussian Error Linear Units. arXiv 2016, arXiv:1606.08415.

- Kokalj, E.; Skrlj, B.; Lavrac, N.; Pollak, S.; Robnik-Sikonja, M. BERT meets Shapley: Extending SHAP Explanations to Transformer-based Classifiers. In Proceedings of the EACL Hackashop on News Media Content Analysis and Automated Report Generation, EACL 2021, Online, 19 April 2021; Toivonen, H., Boggia, M., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 16–21.

- Bekavac, B.; Kocijan, K.; Silberztein, M.; Sojat, K. (Eds.) Formalising Natural Languages: Applications to Natural Language Processing and Digital Humanities. In Proceedings of the 14th International Conference, NooJ 2020, Zagreb, Croatia, 5–7 June 2020; Revised Selected Papers, Communications in Computer and Information Science. Springer: Berlin/Heidelberg, Germany, 2021; Volume 1389.

- Maisto, A.; Pelosi, S.; Stingo, M.; Guarasci, R. A hybrid Method for the Extraction and Classification of Product Features from User-Generated Contents. 2017. Available online: http://siba-ese.unisalento.it/index.php/linguelinguaggi/article/download/18352/15749 (accessed on 1 October 2021).

| Hyperparameter | Value |
|---|---|
| Attention heads | 12 |
| Batch size | 8 |
| Epochs | 5 |
| Hidden size | 768 |
| Hidden layers | 12 |
| Learning rate | 0.00001 |
| Maximum sequence length | 512 |
| Parameters | 110 M |
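As a minimal sketch, the hyperparameters listed in the table can be collected in a small configuration object. The class name `FineTuneConfig`, its field names, and the example training-set size are hypothetical and chosen only for illustration; they are not taken from the authors' code.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneConfig:
    """Hyperparameter values copied from the table; names are illustrative."""
    attention_heads: int = 12
    batch_size: int = 8
    epochs: int = 5
    hidden_size: int = 768
    hidden_layers: int = 12
    learning_rate: float = 1e-5
    max_sequence_length: int = 512

    @property
    def head_size(self) -> int:
        # Each attention head works on an equal slice of the hidden vector.
        return self.hidden_size // self.attention_heads

    def total_steps(self, n_examples: int) -> int:
        # Number of optimizer steps over all epochs for a given training-set size.
        return math.ceil(n_examples / self.batch_size) * self.epochs


config = FineTuneConfig()
print(config.head_size)         # size of each attention head
print(config.total_steps(100))  # steps for a hypothetical 100-example training set
```

With 12 heads over a hidden size of 768, each head operates on 64 dimensions; with a batch size of 8 and 5 epochs, a hypothetical set of 100 examples would need 13 steps per epoch, i.e., 65 steps in total.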

| Negation Operator | Sentiment Word | Word Polarity | Shifted Polarity |
|---|---|---|---|
| non (not) | fantastico (fantastic) | +3 | −1 |
| | bello (beautiful) | +2 | −2 |
| | carino (nice) | +1 | −2 |
| | scialbo (dull) | −1 | +1 |
| | brutto (ugly) | −2 | +1 |
| | orribile (horrible) | −3 | −1 |

| Strong Negation Operator | Sentiment Word | Word Polarity | Shifted Polarity |
|---|---|---|---|
| per niente (in no way) | fantastico (fantastic) | +3 | −2 |
| | bello (beautiful) | +2 | −3 |
| | carino (nice) | +1 | −3 |
| | scialbo (dull) | −1 | +2 |
| | brutto (ugly) | −2 | +2 |
| | orribile (horrible) | −3 | +1 |

| Weak Negation Operator | Sentiment Word | Word Polarity | Shifted Polarity |
|---|---|---|---|
| poco (little) | fantastico (fantastic) | +3 | −1 |
| | bello (beautiful) | +2 | −1 |
| | carino (nice) | +1 | −1 |
| | nuovo (new) | 0 | −1 |
| | scialbo (dull) | −1 | +1 |
| | brutto (ugly) | −2 | +1 |
| | orribile (horrible) | −3 | −1 |
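The three tables above (for the operators non, per niente, and poco) can be read as lookup tables from a word's prior polarity to its shifted polarity. The following is a minimal sketch of that reading, not the NooJ grammar itself: the dictionary names and the function `shifted_polarity` are hypothetical, and leaving a polarity unchanged when a table has no row for it is an assumption made only for this example.

```python
# Prior polarities of the example words listed in the tables.
WORD_POLARITY = {
    "fantastico": 3, "bello": 2, "carino": 1, "nuovo": 0,
    "scialbo": -1, "brutto": -2, "orribile": -3,
}

# Shifted polarity per operator, indexed by prior polarity (values from the tables).
SHIFT_RULES = {
    "non":        {3: -1, 2: -2, 1: -2, -1: 1, -2: 1, -3: -1},
    "per niente": {3: -2, 2: -3, 1: -3, -1: 2, -2: 2, -3: 1},
    "poco":       {3: -1, 2: -1, 1: -1, 0: -1, -1: 1, -2: 1, -3: -1},
}


def shifted_polarity(operator: str, word: str) -> int:
    """Return the polarity of `word` after applying a negation operator."""
    prior = WORD_POLARITY[word]
    # Assumption: a polarity with no row in the table is left unchanged.
    return SHIFT_RULES[operator].get(prior, prior)


for op in SHIFT_RULES:
    print(op, [shifted_polarity(op, w) for w in WORD_POLARITY])
```

Encoding the rules as data rather than as arithmetic reflects the fact that the shifts are not a simple sign flip: for instance, non orribile stays negative (−1), while per niente orribile becomes mildly positive (+1).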

| Metric | NooJ (SentIta) | NooJ (SentIta + Sentix) | BERTBase Italian XXL |
|---|---|---|---|
| Accuracy | 0.7583 | 0.7667 | 0.9283 |
| Micro-$F_1$ | 0.8517 | 0.8846 | 0.9332 |

| Metric | NooJ (SentIta) | NooJ (SentIta + Sentix) | BERT |
|---|---|---|---|
| Precision | 0.84 | 0.77 | 0.73 |
| Recall | 0.70 | 0.85 | 1 |
| Micro-$F_1$ | 0.76 | 0.81 | 0.84 |
| Accuracy | 0.67 | 0.70 | 0.73 |
| Wrongly detected reviews | | | |
| Precision | 0.83 | 0.79 | 0.63 |
| Recall | 0.67 | 0.85 | 1 |
| Micro-$F_1$ | 0.74 | 0.81 | 0.77 |
| Accuracy | 0.63 | 0.71 | 0.63 |
| Correctly detected reviews | | | |
| Precision | 0.86 | 0.67 | 0.70 |
| Recall | 0.75 | 0.86 | 1 |
| Micro-$F_1$ | 0.80 | 0.75 | 0.82 |
| Accuracy | 0.67 | 0.60 | 0.70 |

| Review Parts (Italian) | Review Parts (English) | Score: BERT (through SHAP) | Score: NooJ (SentIta) | Score: NooJ (SentIta + Sentix) |
|---|---|---|---|---|
| “Posizione unica cosa positiva” 2 (su 5 stelle Recensito il 6 settembre 2013 Io e le mie amiche volevamo trascorrere il capodanno a Londra e cercando qualcosa di economico abbiamo trovato il Lonsdale. | “Location only good thing” 2 (out of 5 stars Reviewed 6 September 2013 My friends and I wanted to spend New Year’s Eve in London and looking for something cheap we found the Lonsdale. | +0.490 | +2 (“positiva”, “good”) +2 (“economico”, “cheap”) | +2 (“positiva”, “good”) +1 (“amiche”, “friends”) +2 (“economico”, “cheap”) |
| Dopo aver prenotato abbiamo scoperto che solo le “suite” hanno un bagno privato, mentre per le altre stanze si deve usare il bagno in comune del piano. Il bagno in questione era pulito il primo giorno, ma alla fine del viaggio la vasca era intasata dai capelli degli altri ospiti! | After booking we found out that only the “suites” have a private bathroom, while for the other rooms you have to use the shared bathroom on the floor. The bathroom in question was clean on the first day, but by the end of the trip the tub was clogged with hair from other guests! | +0.323 | +2 (“pulito”, “clean”) −2 (“intasata”, “clogged”) | −2 (“scoperto”, “discovered”) −1 (“privato”, “private”) +1 (“privato”, “private”) −1 (“altre”, “other”) −2 (“altre”, “other”) +1 (“comune”, “in common”) −2 (“comune”, “in common”) −1 (“piano”, “floor”) −2 (“piano”, “slow”) +2 (“pulito”, “clean”) −1 (“primo”, “first”) +1 (“primo”, “first”) −1 (“fine”, “end”) −2 (“fine”, “end”) −2 (“intasata”, “clogged”) −3 (“altri ospiti!”, “other guests”) |
| Appena entrati nell’hotel si sentiva una forte puzza, che era presente anche nelle nostre stanze. La mia stanza aveva l’armadio completamente rotto. | As soon as we entered the hotel there was a strong smell, which was also there in our rooms. My room had a completely broken wardrobe. | −0.037 | −3 (“completamente rotto”, “completely broken”) | −1 (“presente”, “there”) −3 (“completamente rotto”, “completely broken”) +1 (“completamente rotto”, “completely broken”) +2 (“completamente rotto”, “completely broken”) |
| A causa dell’odore dovevamo dormire con la finestra aperta. | Because of the smell we had to sleep with the window open. | +0.042 | 0 | +1 (“aperta”, “opened”) −2 (“aperta”, “opened”) |
| Le lenzuola erano pulite. Ottima la posizione. | The sheets were clean. Good location. | +3.086 | +2 (“pulite”, “clean”) +3 (“ottima”) | +2 (“pulite”, “clean”) +3 (“ottima”, “good location”) |
| Overall score (Predicted label) | | >0 (Positive) | >0 (Positive) | <0 (Negative) |
| Ground Truth | | Negative | | |
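The overall scores in the table can be related to the per-part scores with a small sketch. It assumes that the overall score is the plain sum of the listed part scores and that the predicted label is its sign; the text here does not state the aggregation rule, so this is only an assumption that happens to reproduce the signs reported for this review. The function name and the "Neutral" label for a zero total are hypothetical.

```python
from typing import Sequence


def overall_label(part_scores: Sequence[float]) -> str:
    """Sum the part scores and map the sign of the total to a label."""
    total = sum(part_scores)
    if total > 0:
        return "Positive"
    if total < 0:
        return "Negative"
    return "Neutral"  # hypothetical label; a zero total does not occur in the table


# Scores copied from the hotel review table, in row order.
bert_shap = [0.490, 0.323, -0.037, 0.042, 3.086]
nooj_sentita = [2, 2, 2, -2, -3, 0, 2, 3]
nooj_sentita_sentix = [
    2, 1, 2,                                                         # part 1
    -2, -1, 1, -1, -2, 1, -2, -1, -2, 2, -1, 1, -1, -2, -2, -3,      # part 2
    -1, -3, 1, 2,                                                    # part 3
    1, -2,                                                           # part 4
    2, 3,                                                            # part 5
]

for name, values in [("BERT (through SHAP)", bert_shap),
                     ("NooJ (SentIta)", nooj_sentita),
                     ("NooJ (SentIta + Sentix)", nooj_sentita_sentix)]:
    print(name, round(sum(values), 3), overall_label(values))
```

Under this assumption the totals are about +3.904, +6, and −7, matching the Positive, Positive, and Negative predictions in the table; only the combined lexicon agrees with the negative ground truth, largely because of the many negative entries matched in the second review part.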

| Scores | ||||

|---|---|---|---|---|

| Review Parts (Italian) | Review Parts (English) | BERT (through SHAP) | NooJ (SentIta) | Nooj (SentIta + Sentix) |

| Ne sono ancora innamorato anche se la tratto male, (ho delle bruciature di sigarette sui sedili, mi è scappata!). | I’m still in love with it even though I treat it badly (I’ve got cigarette burns on the seats, it’s escaped!). | −0.948 | +3 (innamorato, “in love”) −2 (male, “badly”) −2 (ho delle bruciature di sigarette, “I’ve got cigarette burns”) | −1 (“Ne sono ancora innamorato”, “I’m still in love with it”) 1 (“Ne sono ancora innamorato”, “I’m still in love with it”) 1 (“tratto”, “treat”) 3 (“tratto”, “treat2”) −2 (“male”, “bad”) −2 (“ho delle bruciature di sigarette”, “(I’ve got cigarette burns”) |

| difetti: ho il tettuccio apribile: si è rotto dopo i primi caldi e i primi freddi, xkè ci sono dei pezzi di plastica che si seccano dopo un po’. | faults: I have a sunroof: it broke after the first warm and cold spells, because there are pieces of plastic that dry out after a while. | −0.594 | −2 (“rotto”, “broke”) | −2 (“rotto”, “broken”) −3 (“primi caldi”, “first warm”) 3 (“primi caldi”, “first warm”) −3 (“primi freddi”, “first cold”) 3 (“primi freddi”, ”first cold”) |

| per fortuna me licambiano adesso in garanzia. Si è rotto anche la serpentina del gasolio, x fortuna in garanzia. | fortunately, they are now replacing it under warranty. The diesel coil also broke, luckily under warranty. | −1.530 | −2 (“rotto”, “broke”) | 1 (“adesso”, “now”) −2 (“rotto”, “broken”) |

| Il vetro fischia. il clacson funziona male. lo sterzo è largo, troppo largo. però è bella. motore silenzioso, ma se vai sopra i 100 col tettuccio chiuso, fa rumore. Dietro si tromba bene. | The glass whistles. the horn works badly. the steering is wide, too wide. but it’s beautiful. quiet engine, but if you go over 100 with the roof closed, it makes noise. Behind it you fuck well. | −1.520 | −2 (“male”, “badly”) −2 (“troppo largo”, “too wide”) +2 (“bella”, “beautiful”) +2 (“bene”, “well”) | −2 (“male”, “bad”) −2 (“troppo largo”, “too wide”) 2 (“bella”, “beautiful”) −1 (“silenzioso”, “silent”) 1 (“silenzioso”, “silent”) −2 (“silenzioso”, “silent”) −2 (“chiuso”, “closed”) 2 (“bene”, “well”) |

| Overall score (Predicted label) | | <0 (Negative) | <0 (Negative) | <0 (Negative) |

| Ground Truth | | Positive | | |

| Review Parts (Italian) | Review Parts (English) | BERT score (through SHAP) | NooJ score (SentIta) | NooJ score (SentIta + Sentix) |
|---|---|---|---|---|

| Non mi è mai piaciuta.. colpa di quel muso troppo serio. Ha un baule niente male, motori molto tranquilli. E’ la classica familiare che strizza l’occhio alle donne... | I’ve never liked it...it’s that too serious nose. It’s got a nice trunk, very quiet engines. It’s the classic family car that winks at women... | −0.261 | −2 (“Non mi è mai piaciuta”, “I’ve never liked it”) −2 (“troppo serio”, “too serious”) +2 (“niente male”, “nice”) +3 (“molto tranquilli”, “very quiet”) | −2 (“Non mi è mai piaciuta”, “I’ve never liked it”) 3 (“troppo serio”, “too serious”) −2 (“troppo serio”, “too serious”) −2 (“niente male”, “nice”) 2 (“niente male”, “nice”) 3 (“molto tranquilli”, “very quiet”) 3 (“classica familiare”, “classic family car”) |

| linea tondeggiante, versioni speciali | rounded line, special versions | −0.054 | 0 | 0 |

| (come la Pinko, che si distingue per il loro dorato, e la D&G, molto chic che si distingue per la diversa colorazione delle luci posteriori). | (such as Pinko, which is distinguished by its golden colour, and the D&G, very chic which is distinguished by the different colouring of the rear lights). | +0.384 | 0 | 3 (“molto chic”, “very chic”) 1 (“diversa”, “different”) −2 (“diversa”, “different”) 2 (“diversa”, “different”) |

| Con l’arrivo della nuova C3 la gamma si è ridotta all’essenziale e il modello ha assunto un nuovo nome “C3 Classic”. Un usato del 2004/2005 si aggira attorno ai 4800/5000 euro max (esemplari con pochi km e in ottimo stato). | With the arrival of the new C3, the range has been reduced to its essentials and the model given a new name ’C3 Classic’. A used 2004/2005 model is around 4800/5000 euros max (models with few km and in excellent condition). | −0.313 | +2 (“essenziale”, “essentials”) +2 (“esemplari”, “exemplary”) +3 (“ottimo”, “excellent”) | −2 (“nuova”, “new”) 2 (“nuova”, “new”) 1 (“gamma”, “range”) 2 (“essenziale”, “essential”) −2 (“nuovo”, “new”) 2 (“nuovo”, “new”) 2 (“attorno”, “around”) 2 (“esemplari”, “exemplary”) 3 (“ottimo”, “excellent”) |

| Purtroppo per i possessori che intendono venderla, l’arrivo del nuovo modello ha fatto scendere parecchio il valore del “vecchio”. I consumi? Siamo attorno ai 16 km al litro! | Unfortunately for owners who intend to sell it, the arrival of the new model has caused the value of the ’old’ one to drop considerably. Fuel consumption? It’s around 16km per litre! | −0.303 | 0 | −3 (“Purtroppo”, “Unfortunately”) −2 (“nuovo”, “new”) 2 (“nuovo”, “new”) −2 (“parecchio”, “a lot”) −1 (“vecchio”, “old”) 1 (“vecchio”, “old”) −3 (“vecchio”, “old”) −2 (“vecchio”, “old”) 2 (“vecchio”, “old”) 2 (“Siamo attorno”, “It’s around”) |

| Overall score (Predicted label) | | <0 (Negative) | >0 (Positive) | >0 (Positive) |

| Ground Truth | | Negative | | |

| Review Parts (Italian) | Review Parts (English) | BERT score (through SHAP) | NooJ score (SentIta) | NooJ score (SentIta + Sentix) |
|---|---|---|---|---|

| La scorsa settimana sono andata da un concessionario Citroen per vedere e provare la nuova C3, praticamente bella. i commenti si sprecano, forse è tato un amore a prima vista, gli interni sono molto belli, e l’esterno ricorda il vecchio maggiolone o comunque macchine di quell’epoca. | Last week I went to a Citroen dealer to see and try out the new C3, which is practically beautiful. The comments are endless, perhaps it was love at first sight, the interior is very nice, and the exterior is reminiscent of the old Beetle or at least cars from that era. | +0.932 | +2 (“bella”, “beautiful”) +3 (“molto belli”, “very nice”) | −2 (“nuova”, “new”) 2 (“nuova”, “new”) −1 (“praticamente bella”, “practically beautiful”) 1 (“praticamente bella”, “practically beautiful”) 3 (“molto belli”, “very nice”) 2 (“esterno”, “exterior”) −3 (“vecchio”, “old”) −2 (“vecchio”, “old”) −1 (“vecchio”, “old”) 1 (“vecchio”, “old”) 2 (“vecchio”, “old”) |

| Sicuramente sarà la macchina che acquisterò subito dopo le vacanze estive, visto che ormai la mia piccola utilitaria ha deciso di abbandonarmi. | It will definitely be the car I buy right after the summer holidays, as my little hatchback has now decided to abandon me. | +0.190 | 0 | 1 (“Sicuramente”, “definitely”) 2 (“Sicuramente”, “definitely”) −2 (“piccola”, “small”) −1 (“piccola”, “small”) 2 (“piccola”, “small”) 2 (“deciso”, “decided”) |

| La C3 non è troppo grande, ma nemmeno troppo piccola, l’ideale per una donna, | The C3 is not too big, but not too small either, ideal for a woman, | +0.940 | −3 (“troppo grande”, “too big”) −3 (“troppo piccola”, “too small”) +2 (“ideale”, “ideal”) | −1 (“non è troppo grande”, “is not too big”) −3 (“troppo piccola”, “too small”) −2 (“troppo piccola”, “too small”) 3 (“troppo piccola”, “too small”) 3 (“ideale”, “ideal”) |

| una come me che deve scarrozzare due figli, la spesa da fare, e perchè no, anche per andare a fare shopping con le amiche. | someone like me who has to drive two children, shopping to do, and why not, also to go shopping with friends. | +1.031 | 0 | 1 (“amiche”) 2 (“amiche”) |

| Consiglio a tutti di andare a vederla e a provarla, | I recommend everyone to go and see it and try it out, | +0.872 | 0 | 0 |

| non resterete delusi. | you will not be disappointed. | +0.433 | −3 (“delusi”, “disappointed”) | 3 (“delusi”, “disappointed”) |

| Overall score (Predicted label) | | >0 (Positive) | <0 (Negative) | <0 (Negative) |

| Ground Truth | | Positive | | |

| Review Parts (Italian) | Review Parts (English) | BERT score (through SHAP) | NooJ score (SentIta) | NooJ score (SentIta + Sentix) |
|---|---|---|---|---|

| Devo ancora capire se mi convince del tutto! Sono molto contenta della maneggevolezza e del motore di questa macchina. E’ scattante e ha il cambio preciso preciso… | I still have to see if I’m completely convinced! I am very happy with the handling and the engine of this car. It’s quick and has a precise precise gearbox… | +0.933 | +2 (“scattante”, “quick”) +3 (“preciso preciso”, “precise precise”) | −1 (“ancora”, “still”) 1 (“ancora”, “still”) −3 (“molto contenta”, “very happy”) 3 (“molto contenta”, “very happy”) 2 (“scattante”, “quick”) 3 (“preciso preciso”, “precise precise”) |

| Però ha alcuni particolari che mi lasciano un po’ a desiderare. La visibilità è un po’ scarsa sopratutto quando giro a destra la forma rotondetta del montante mi impedisce di vedere bene e devo sempre sporgermi. E’ un po’ fastidioso! | But it does have a few details that leave me wanting. The visibility is a bit poor, especially when turning right, the rounded shape of the pillar prevents me from seeing well and I always have to lean out. It’s a bit annoying! | −1.081 | −3 (“po’ scarsa”, “bit poor”) +2 (“bene”, “well”) −3 (“po’ fastidioso”, “bit annoying”) | −3 (“alcuni particolari”, “few details”) −3 (“po’ scarsa”, “a bit poor”) 1 (“sopratutto”, “above all”) 1 (“destra”, “right”) 2 (“destra”, “right”) 2 (“bene”, “good”) −1 (“sempre”, “always”) −3 (“po’ fastidioso!”, “a bit annoying”) |

| E poi la grandezza dell’abitacolo per me che sono piccola va benissimo ma quando ci sale qualcuno appena un po’ più grosso di me fa fatica a starci comodo con le gambe e le braccia nonostante si sia sistemato il seggiolino. | And then the size of the passenger compartment is just fine for me as a small person, but when someone a little bigger than me gets in, they struggle to fit their legs and arms in comfortably, even after adjusting the seat. | −0.063 | −1 (“fatica”, “hard”) +2 (“comodo”, “comfortably”) | 2 (“poi”) 1 (“benissimo”) 3 (“benissimo”) 2 (“benissimo”) −1 (“fatica”) 1 (“fatica”) 2 (“comodo”) |

| Nell’insieme sono piccoli particolari. Ma se guidi tutti i giorni contano. | All in all, these are small details. But if you drive every day they count. | +0.105 | 0 | −1 (“insieme”) 1 (“insieme”) 1 (“particolari”) −2 (“particolari”) |

| Overall score (Predicted label) | | <0 (Negative) | >0 (Positive) | >0 (Positive) |

| Ground Truth | | Positive | | |

| Review Parts (Italian) | Review Parts (English) | BERT score (through SHAP) | NooJ score (SentIta) | NooJ score (SentIta + Sentix) |
|---|---|---|---|---|

| “Ottima posizione” 4 su 5 stelle Recensito il 13 giugno 2013 Albergo veramente carino e moderno nel cuore di piccadilly circus, personale attento e cordiale. | "Great location" 4 out of 5 stars Reviewed 13 June 2013 Really nice and modern hotel in the heart of piccadilly circus, attentive and friendly staff. | +0.265 | +3 (“Ottima”, “Great”) +2 (“carino”, “nice”) +2 (“cordiale”, “friendly”) | 3 (“Ottima”, “Great”) 2 (“veramente carino”, “really nice”) −1 (“moderno”, “modern”) 2 (“moderno”, “modern”) 2 (“attento”, “attentive”) 2 (“cordiale”, “friendly”) |

| Se soggiornate in questo Hotel vi consiglio di prendere il tè nella libreria, un luogo veramente moderno elegante e rilassante, vi serviranno il tè con una selezione di dolci e sandwich buonissimi!! | If you stay at this Hotel I would recommend you to have tea in the library, a really modern elegant and relaxing place, they will serve you tea with a selection of delicious cakes and sandwiches!! | +0.408 | +2 (“elegante”, “elegant”) +2 (“rilassante”, “relaxing”) +2 (“dolci”, “cakes”) +3 (“buonissimi!!”, “delicious”) | 2 (“veramente moderno”, “really modern”) 2 (“elegante”, “elegant”) 2 (“rilassante”, “relaxing”) 2 (“dolci”, “cakes”) 3 (“buonissimi!!”, “delicious”) |

| Devo anche dire che questo Hotel ha due difetti: (1) è leggermente rumoroso (specie se soggiornate durante il weekend si sentono molto i rumori provenienti dalla strada). | I also have to say that this hotel has two flaws: (1) it is a bit noisy (especially if you stay during the weekend you can hear a lot of noise coming from the street). | −0.254 | −2 (“rumoroso”, “noisy”) | 1 (“è leggermente rumoroso”, “a bit noisy”) 2 (“è leggermente rumoroso”, “a bit noisy”) 1 (“specie”, “especially”) |

| (2) Durante il nostro soggiorno siamo andati in piscina ma l’acqua non era riscaldata ed era ghiacciata e ci è stato detto che se volevamo avrebbero potuto riscaldare l’acqua per il giorno dopo… | (2) During our stay we went to the swimming pool but the water was not heated and it was freezing cold and we were told that if we wanted they could heat the water for the next day… | −0.652 | −2 (“non era riscaldata”, “was not heated”) | −2 (“non era riscaldata”, “was not heated”) −2 (“ghiacciata”, “freezing”) −1 (“detto”, “told”) 2 (“detto”, “told”) −2 (“non”, “not”) 1 (“non”, “not”) |

| un albergo di questa categoria non può permettersi uno scivolone del genere! | a hotel of this category can not afford such a slip! | −0.673 | 0 | 0 |

| Overall score (Predicted label) | | <0 (Negative) | >0 (Positive) | >0 (Positive) |

| Ground Truth | | Positive | | |
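
The "Overall score (Predicted label)" rows in the tables above are consistent with a simple aggregation: the segment-level scores of a review are summed, and the sign of the total gives the predicted label. The sketch below reproduces this arithmetic for the last table (the hotel review), using the BERT (SHAP) and NooJ (SentIta) columns. The summation rule is inferred from the tabulated values rather than stated in this section, and the function name `predicted_label` and the handling of a zero total are illustrative assumptions.

```python
# Segment scores copied from the last table above (hotel review).
# Assumption: the overall score is the plain sum of the segment scores.
bert_shap_scores = [0.265, 0.408, -0.254, -0.652, -0.673]

# NooJ (SentIta): one inner list per review part, one entry per matched expression.
sentita_scores = [
    [3, 2, 2],      # Ottima, carino, cordiale
    [2, 2, 2, 3],   # elegante, rilassante, dolci, buonissimi
    [-2],           # rumoroso
    [-2],           # non era riscaldata
    [],             # no match in the last part
]


def predicted_label(total):
    """Map an overall score to a label by its sign (hypothetical helper)."""
    if total > 0:
        return "Positive"
    if total < 0:
        return "Negative"
    # The tables only show >0 and <0; a zero total is left undetermined here.
    return "Undetermined"


bert_total = sum(bert_shap_scores)
sentita_total = sum(score for part in sentita_scores for score in part)

print("BERT (SHAP):", round(bert_total, 3), predicted_label(bert_total))
print("NooJ (SentIta):", sentita_total, predicted_label(sentita_total))
```

Under this assumption the two systems disagree on this review: the SHAP contributions sum to a negative value, while the SentIta matches sum to a positive one, which agrees with the predicted labels reported in the table.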

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Catelli, R.; Pelosi, S.; Esposito, M. Lexicon-Based vs. Bert-Based Sentiment Analysis: A Comparative Study in Italian. Electronics 2022, 11, 374. https://doi.org/10.3390/electronics11030374
