Exploring the Measurement Lab Open Dataset for Internet Performance Evaluation: The German Internet Landscape

Abstract

1. Introduction

- A procedure that confines the very large number of measurements to a representative subset. We do this by selecting the time of day, the providers, and the congestion control protocols.

- An analysis of the impact of the servers, sites, and locations against which clients perform their measurements. In detail, we show that, in MLab, both the location of the server and the autonomous system in which it is located affect the results. Comparative studies have to take such effects into account.

- Using these findings and the confined dataset:

  - We show, by evaluating the open real-world dataset, that the congestion controls deployed in recent years achieve their goal.

  - We identify impacts on Internet performance at the beginning of the COVID-19 pandemic. By splitting the dataset and analyzing the individual autonomous systems (AS), we demonstrate that throughput and latency degraded only in parts of the Internet and that this degradation disappeared quickly.

  - We compare ISPs and their evolution to give insights into their performance during busy hours.
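The subset selection named in the first contribution (time of day, providers, and congestion control protocols) can be illustrated with a minimal sketch. The record layout, the field names, and the busy-hour window below are assumptions made for illustration; they are not taken from the dataset schema or from the evaluation itself.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Collection, Iterable, List


@dataclass(frozen=True)
class Measurement:
    """Hypothetical record layout; field names are not taken from the dataset."""
    timestamp: datetime        # local start time of the test
    client_asn: int            # AS number of the client's provider
    congestion_control: str    # e.g. "cubic" or "bbr"
    throughput_mbps: float


def select_subset(
    measurements: Iterable[Measurement],
    busy_hours: Collection[int],
    asns: Collection[int],
    congestion_controls: Collection[str],
) -> List[Measurement]:
    """Keep only measurements that match all three selection criteria."""
    return [
        m for m in measurements
        if m.timestamp.hour in busy_hours
        and m.client_asn in asns
        and m.congestion_control in congestion_controls
    ]


# Illustrative values only: the busy-hour window 18:00-22:59 is an assumption.
sample = [
    Measurement(datetime(2021, 3, 1, 20, 15), 3320, "bbr", 48.2),
    Measurement(datetime(2021, 3, 1, 4, 30), 3320, "bbr", 95.0),     # off-peak
    Measurement(datetime(2021, 3, 1, 19, 5), 64500, "bbr", 12.1),    # other AS
    Measurement(datetime(2021, 3, 1, 21, 40), 3209, "cubic", 30.7),  # other protocol
]
subset = select_subset(
    sample,
    busy_hours=range(18, 23),
    asns={3320, 3209},
    congestion_controls={"bbr"},
)
```

Only the first sample record passes all three criteria. Keeping the criteria as parameters makes it easy to repeat the same evaluation for a different provider set or protocol.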

2. Related Work

3. Material and Methods

3.1. Materials—What Is Measured by NDT?

- At least 8 KB of data was transferred;

- Test duration was between 9 and 60 s;

- Congestion was detected (by loss or bufferbloat, see [11]);

- Tests with NULL results are excluded;

- Tests from MLab Operations and Management infrastructure are excluded.
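The criteria listed above can be read as a single validity predicate over a test record. The following is a minimal sketch under stated assumptions: the field names are hypothetical, 1 KB is taken to be 1024 bytes, and the duration bounds are treated as inclusive.

```python
from dataclasses import dataclass
from typing import Optional

MIN_BYTES = 8 * 1024      # "at least 8 KB"; assumes 1 KB = 1024 bytes
MIN_DURATION_S = 9.0      # bounds assumed to be inclusive
MAX_DURATION_S = 60.0


@dataclass(frozen=True)
class NdtTest:
    """Hypothetical test record; field names are illustrative only."""
    bytes_transferred: int
    duration_s: float
    congestion_detected: bool         # by loss or bufferbloat
    throughput_mbps: Optional[float]  # None models a NULL result
    from_oam: bool                    # test issued by operations and management


def is_valid(test: NdtTest) -> bool:
    """Return True if the test satisfies all five criteria."""
    return (
        test.throughput_mbps is not None
        and not test.from_oam
        and test.bytes_transferred >= MIN_BYTES
        and MIN_DURATION_S <= test.duration_s <= MAX_DURATION_S
        and test.congestion_detected
    )


ok = NdtTest(50_000_000, 10.0, True, 42.0, False)
too_short = NdtTest(50_000_000, 3.0, True, 42.0, False)
null_result = NdtTest(50_000_000, 10.0, True, None, False)
```

Here `is_valid(ok)` holds, while `too_short` fails the duration bound and `null_result` fails the NULL check.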

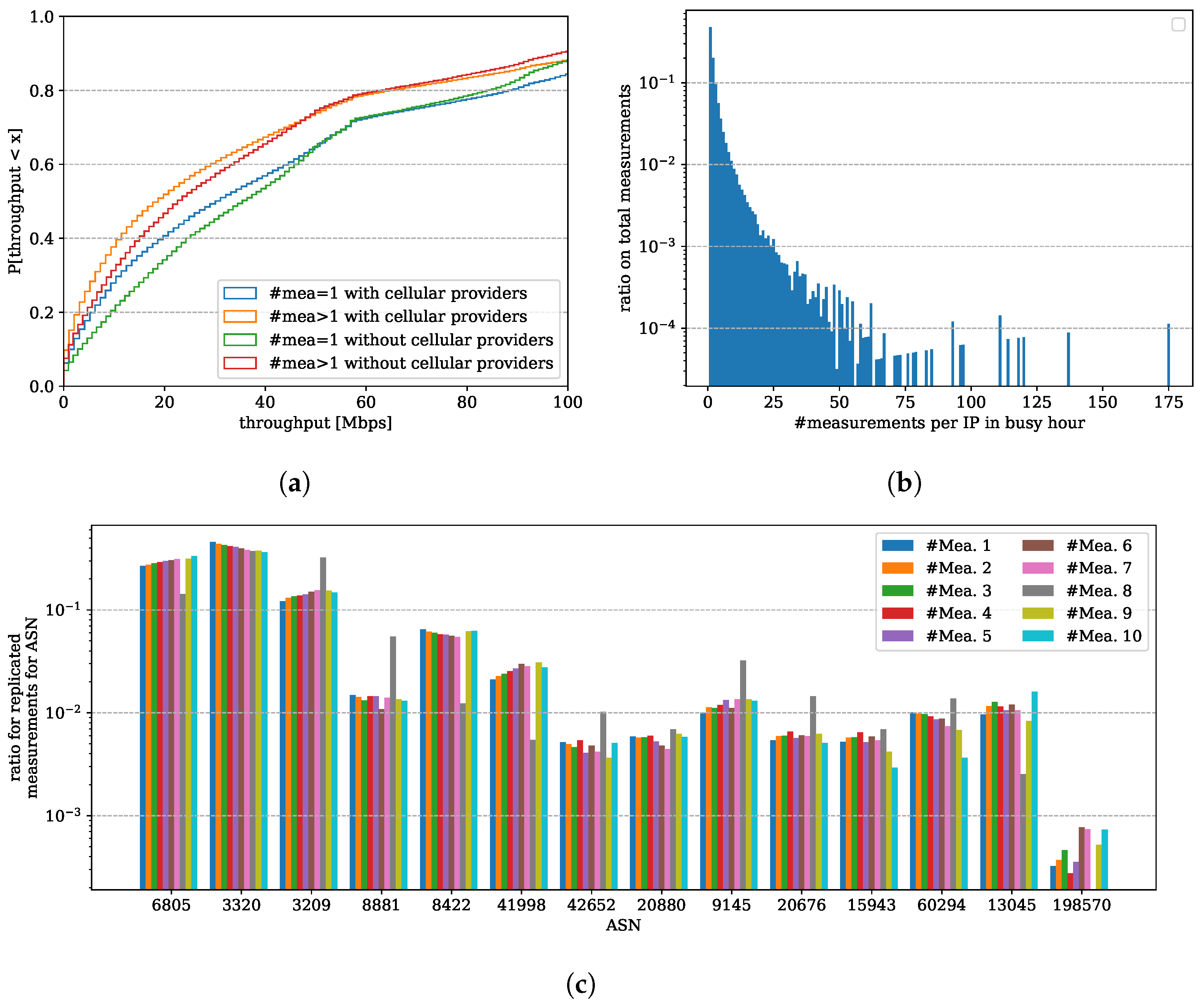

3.2. Methods

3.3. Threats to Validity

4. Results

4.1. Popular ASNs in Germany

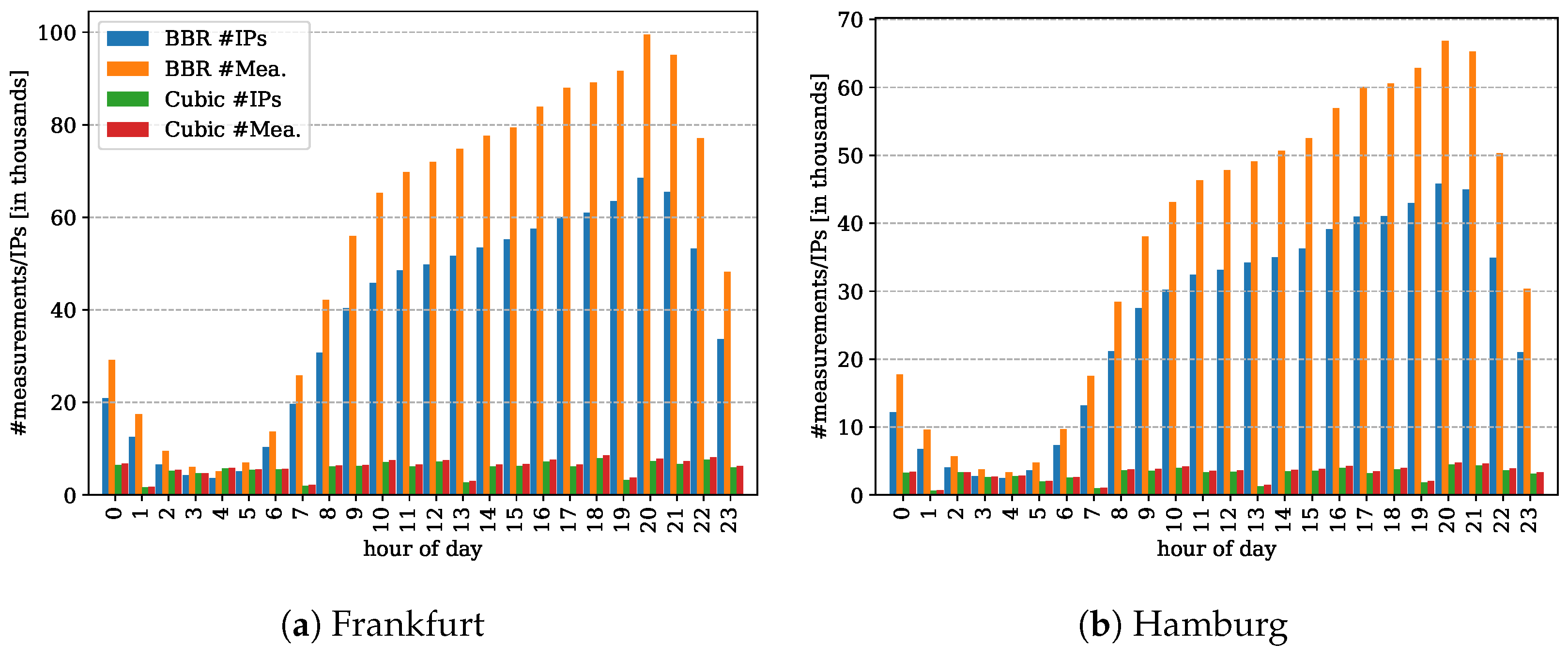

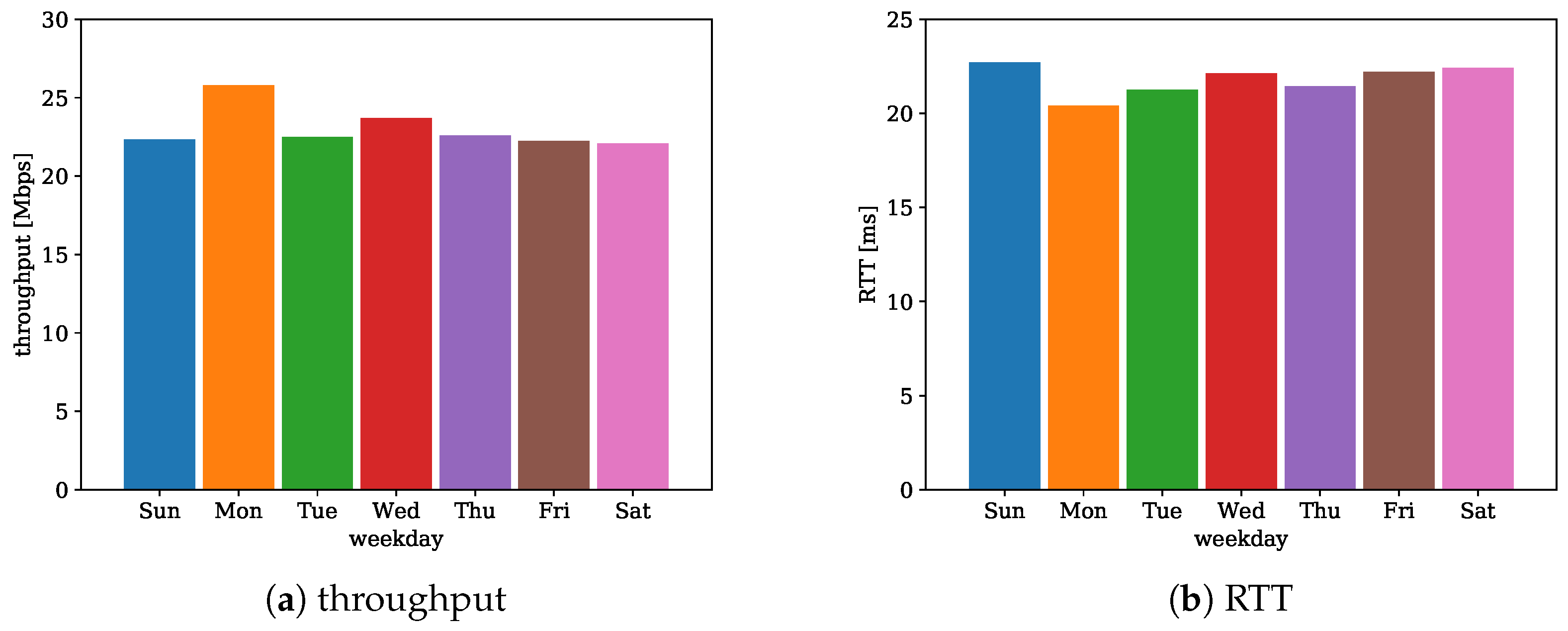

4.2. Busy Hours and Days

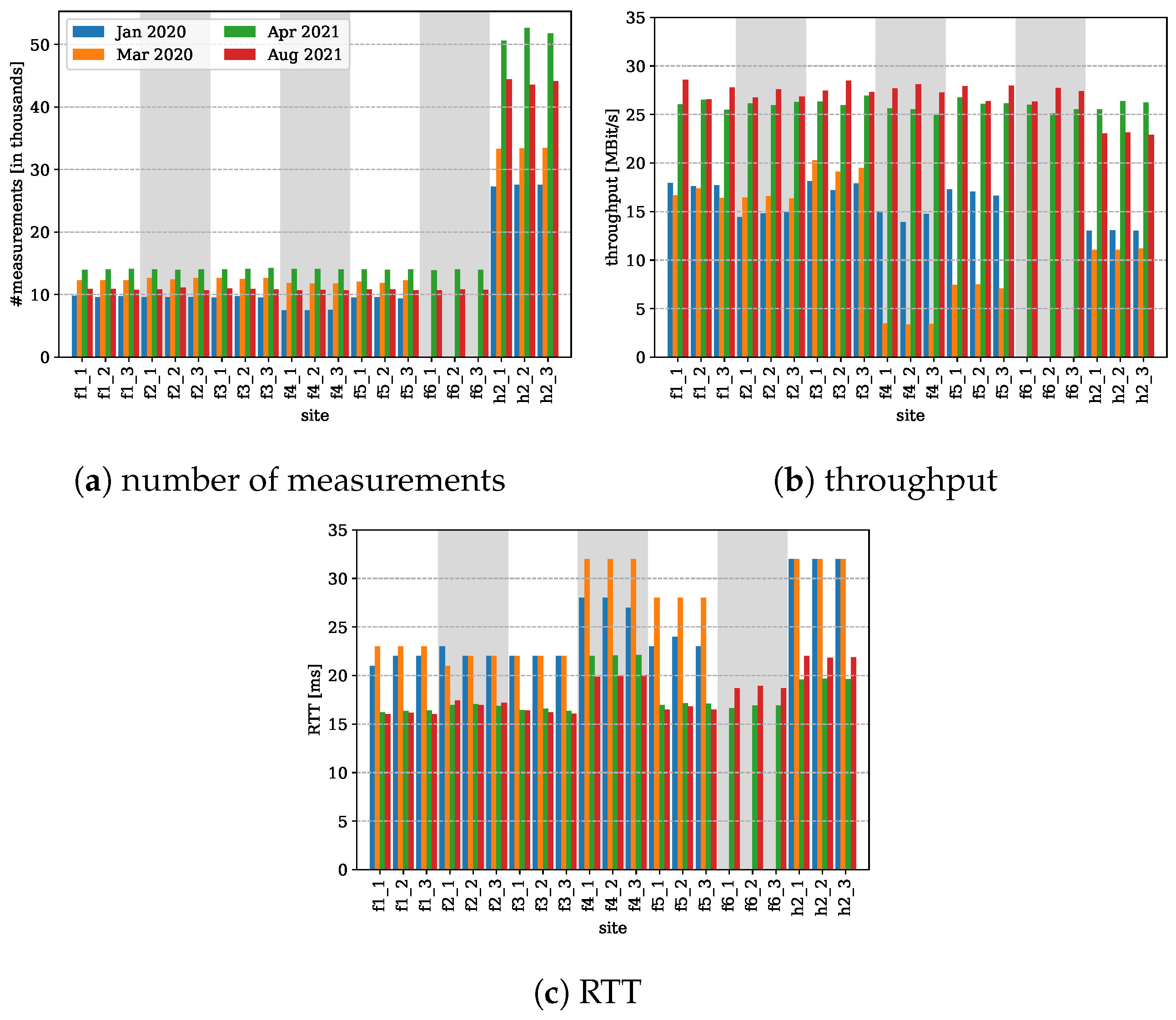

4.3. MLab Locations in Germany

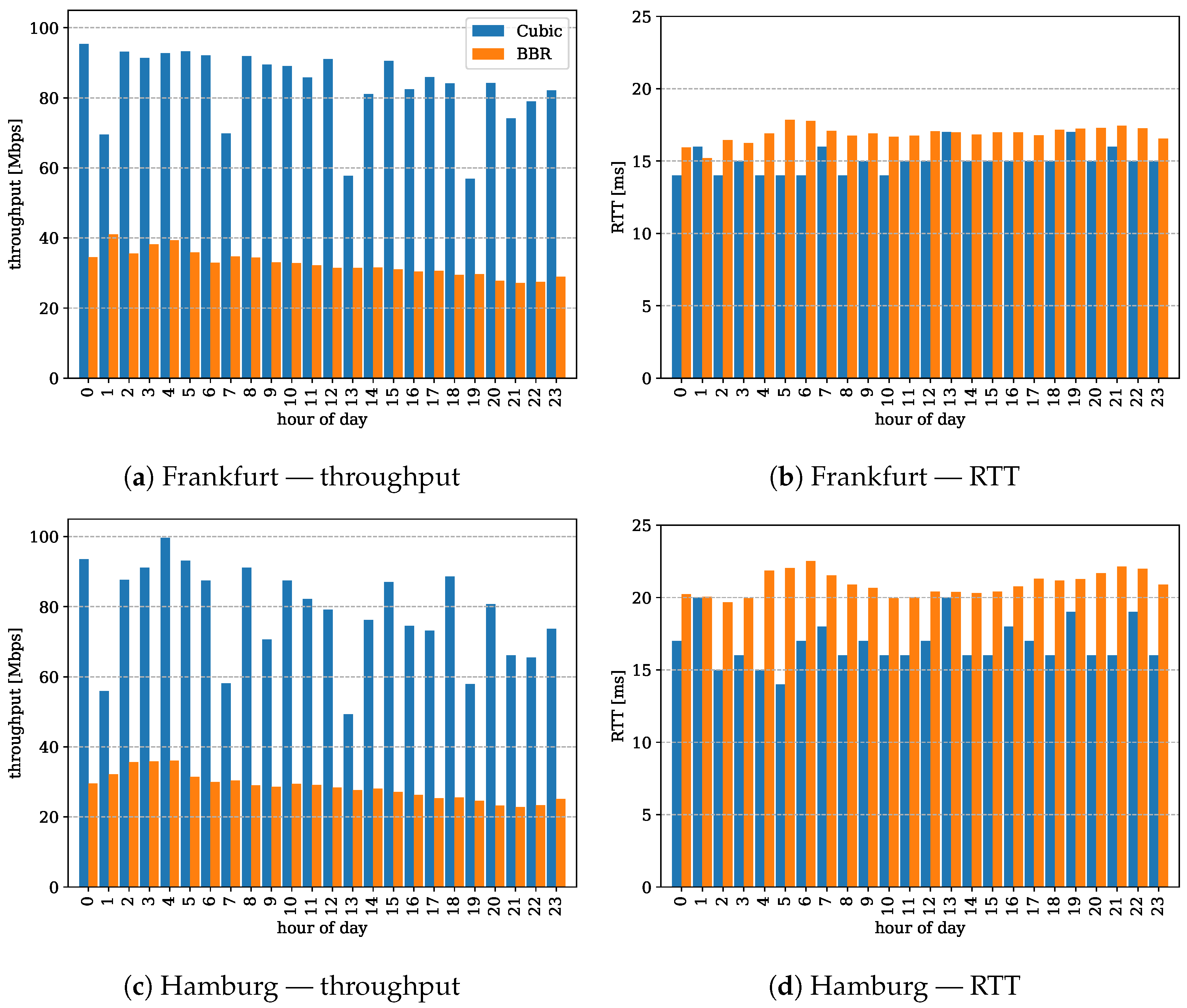

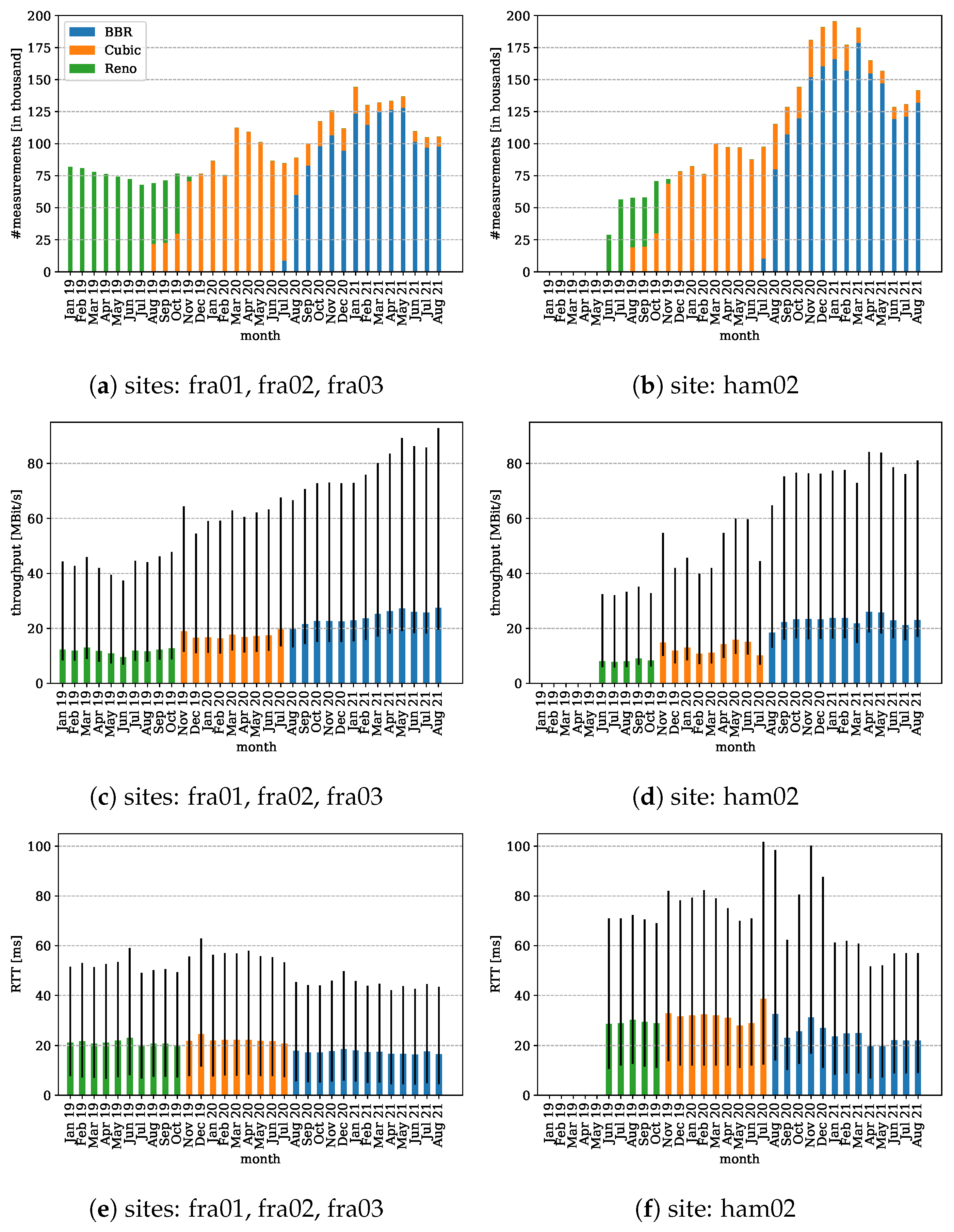

4.4. Performance Evolution of Congestion Control Protocols

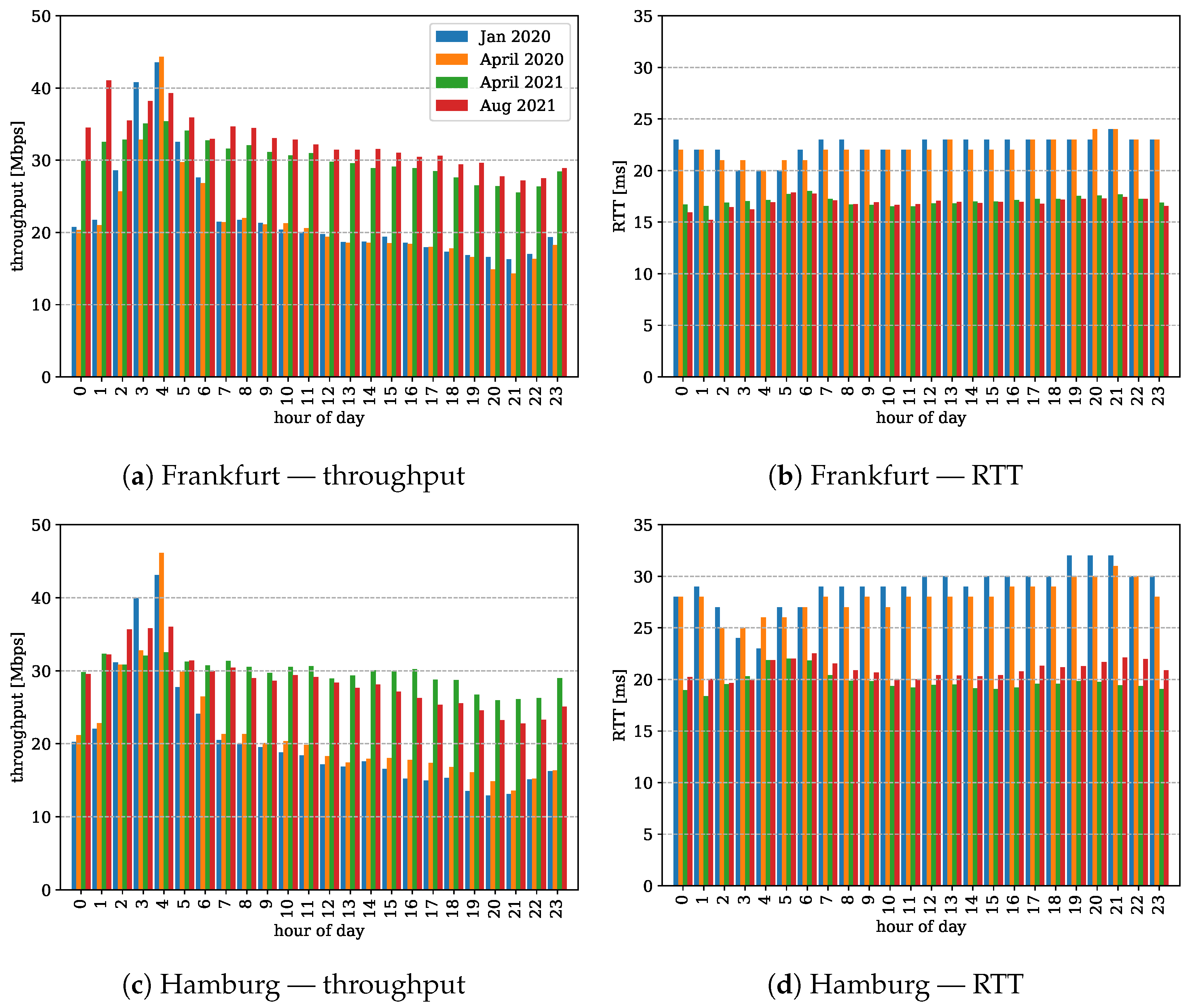

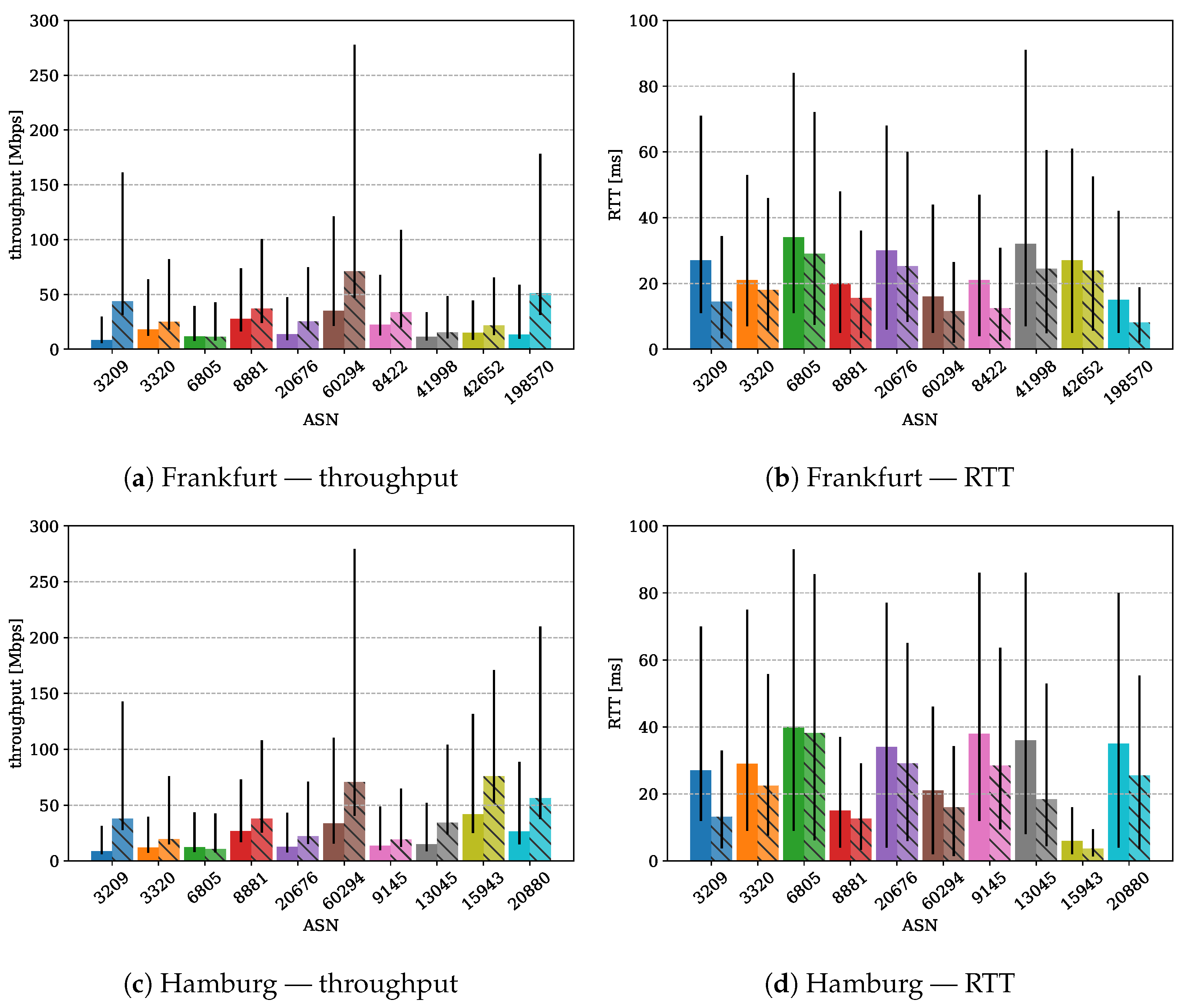

4.5. Comparison and Evolution of Popular Internet Service Providers

5. Discussion

5.1. Internet Performance Evaluation Methodology

5.2. Performance Evaluation

Performance Impairments during the Lockdown—Does the Internet Bear Up against the Rush?

5.3. Evolution of Congestion Control Protocols—Do Protocols Keep Their Promises?

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest

References

- ITU. G.114: One-Way Transmission Time. Available online: https://www.itu.int/rec/T-REC-G.114-200305-I/en (accessed on 1 January 2022).

- Di Domenico, A.; Perna, G.; Trevisan, M.; Vassio, L.; Giordano, D. A Network Analysis on Cloud Gaming: Stadia, GeForce Now and PSNow. Network 2021, 1, 247–260.

- Flinck Lindström, S.; Wetterberg, M.; Carlsson, N. Cloud Gaming: A QoE Study of Fast-paced Single-player and Multiplayer Gaming. In Proceedings of the 2020 IEEE/ACM 13th International Conference on Utility and Cloud Computing (UCC), Leicester, UK, 7–10 December 2020; pp. 34–45.

- European Commission. A Digital Agenda for Europe; European Commission: Brussels, Belgium, 2010.

- European Court of Auditors. Broadband in the EU Member States: Despite Progress, Not All the Europe 2020 Targets Will Be Met; Publications Office of the European Union: Luxembourg, 2018.

- European Union. Directive (EU) 2018/1972 of the European Parliament and of the Council: European Electronic Communications Code; European Union: Brussels, Belgium, 2018.

- Bundesrepublik Deutschland. Gesetz zur Umsetzung der Richtlinie (EU) 2018/1972 des Europäischen Parlaments und des Rates vom 11. Dezember 2018 über den Europäischen Kodex für die Elektronische Kommunikation (Neufassung) und zur Modernisierung des Telekommunikationsrechts (Telekommunikationsmodernisierungsgesetz); Bundesministerium der Justiz und für Verbraucherschutz: Bonn, Germany, 2021.

- European Union. Regulation (EU) 2015/2120 of the European Parliament and of the Council: European Electronic Communications Code; European Union: Brussels, Belgium, 2015.

- Bundesnetzagentur für Elektrizität, Gas, Telekommunikation, Post und Eisenbahnen. Verfügung Nr. 99/2021; Bundesnetzagentur für Elektrizität, Gas, Telekommunikation, Post und Eisenbahnen: Bonn, Germany, 2021.

- Federal Communications Commission. Measuring Broadband America. Available online: https://www.fcc.gov/general/measuring-broadband-america (accessed on 1 January 2022).

- Clark, D.D.; Wedeman, S. Measurement, Meaning and Purpose: Exploring the M-Lab NDT Dataset; SSRN Scholarly Paper ID 3898339; Social Science Research Network: Rochester, NY, USA, 2021.

- Høiland-Jørgensen, T.; Ahlgren, B.; Hurtig, P.; Brunstrom, A. Measuring Latency Variation in the Internet. In Proceedings of the 12th International Conference on Emerging Networking Experiments and Technologies, Irvine, CA, USA, 12–15 December 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 473–480.

- Rajabiun, R.; McKelvey, F. Complementary realities: Public domain Internet measurements in the development of Canada’s universal access policies. Inf. Soc. 2019, 35, 81–94.

- Deng, X.; Feng, Y.; Gharakheili, H.H.; Sivaraman, V. Estimating Residential Broadband Capacity using Big Data from M-Lab. arXiv 2019, arXiv:1901.07059.

- de Donato, W.; Botta, A.; Pescapè, A. HoBBIT: A Platform for Monitoring Broadband Performance from the User Network. In Proceedings of the 6th International Workshop on Traffic Monitoring and Analysis (TMA), London, UK, 14 April 2014; Lecture Notes in Computer Science. Dainotti, A., Mahanti, A., Uhlig, S., Eds.; Springer: Berlin/Heidelberg, Germany, 2014; Volume 8406, pp. 65–77.

- Sundaresan, S.; de Donato, W.; Feamster, N.; Teixeira, R.; Crawford, S.; Pescapè, A. Measuring Home Broadband Performance. Commun. ACM 2012, 55, 100–109.

- Feamster, N.; Livingood, J. Measuring Internet Speed: Current Challenges and Future Recommendations. Commun. ACM 2020, 63, 72–80.

- Bauer, S.; Clark, D.; Lehr, W. Gigabit Broadband Measurement Workshop Report. SIGCOMM Comput. Commun. Rev. 2020, 50, 60–65.

- Liu, S.; Schmitt, P.; Bronzino, F.; Feamster, N. Characterizing Service Provider Response to the COVID-19 Pandemic in the United States. In Passive and Active Measurement; Springer International Publishing: Cham, Switzerland, 2021; pp. 20–38.

- Feldmann, A.; Gasser, O.; Lichtblau, F.; Pujol, E.; Poese, I.; Dietzel, C.; Wagner, D.; Wichtlhuber, M.; Tapiador, J.; Vallina-Rodriguez, N.; et al. Implications of the COVID-19 Pandemic on the Internet Traffic. In Proceedings of the Broadband Coverage in Germany, 15th ITG-Symposium, Online, 2–3 March 2021.

- Measurement Lab. The M-Lab NDT Data Set. Available online: https://measurementlab.net/tests/ndt (accessed on 1 January 2022).

- Mathis, M.; Heffner, J.; Reddy, R. Web100: Extended TCP Instrumentation for Research, Education and Diagnosis. SIGCOMM Comput. Commun. Rev. 2003, 33, 69–79.

- Linux Programmer’s Manual—SOCK_DIAG. Available online: https://man7.org/linux/man-pages/man7/sock_diag.7.html (accessed on 1 January 2022).

- Ha, S.; Rhee, I.; Xu, L. CUBIC: A New TCP-Friendly High-Speed TCP Variant. SIGOPS Oper. Syst. Rev. 2008, 42, 64–74.

- Cardwell, N.; Cheng, Y.; Gunn, C.S.; Yeganeh, S.H.; Jacobson, V. BBR: Congestion-Based Congestion Control: Measuring Bottleneck Bandwidth and Round-Trip Propagation Time. Queue 2016, 14, 20–53.

- Gettys, J.; Nichols, K. Bufferbloat: Dark Buffers in the Internet. Commun. ACM 2012, 55, 57–65.

- Measurement Lab. M-Lab—Conceptual & Technical Scope & Policies. Available online: https://www.measurementlab.net/mlab-scope (accessed on 1 July 2019).

- Measurement Lab. Locate API Usage. Available online: https://github.com/m-lab/locate/blob/master/USAGE.md (accessed on 28 July 2020).

- Chris Ritzo. NDT Unified Views Now Published. Available online: https://www.measurementlab.net/blog/new-ndt-unified-views (accessed on 7 May 2020).

- Riegel, B.J.; Bauer, S.; Zirngibl, J. An overview on Measurement Lab. In Proceedings of the Seminar Innovative Internet Technologies and Mobile Communication, Munich, Germany, 28 February 2020.

- De Cicco, L.; Carlucci, G.; Mascolo, S. Congestion Control for WebRTC: Standardization Status and Open Issues. IEEE Commun. Stand. Mag. 2017, 1, 22–27.

| Ref. | Scope | Findings | Our Contribution |
|---|---|---|---|
| [11] | Evaluation of the MLab NDT testing procedure for ISPs in the US. | Shows that justifiable conclusions can be drawn from the dataset and showcases pitfalls in the usage of the data. | In particular, we confirm for German ISPs the observation that single IPs perform multiple measurements; we further analyze the performance impact and the occurrence of multiple measurements at different ISPs. |
| [12] | Measures latency variations in the MLab dataset and in passive packet traces between 2010 and 2015. | Large latency variations above 100 ms are common during a connection. | We do not evaluate the latency variation during the connection, but we observe small round trip times (RTTs) in 2021: even the 75th percentile is below 100 ms. |
| [13] | Evaluation of public domain Internet measurements for Canada’s access networks by comparing speed tests from MLab, Akamai, and Ookla. | The MLab and Akamai datasets return similar results, whereas the Ookla speed test typically returns higher results. | We evaluate German ISPs with a procedure to extract representative data that confines the evaluation to busy hours, ISPs, and sites as well as locations of the measurement servers. |
| [14] | Proposes a method to evaluate broadband capacity, using Australia and the US as examples. The focus is on throughput and congestion count, as well as on the effect that different households may share the same IP address through network address translation. | Shows that sharing IP addresses is common in Australia and that individual households can be identified. Further results indicate the achievable throughput of these households. | See row above. |
| [15] | Design and deployment of a large-scale platform for measuring broadband performance that can schedule and control experiments centrally. | A controllable platform avoids pitfalls of user-initiated tests. The evaluation shows that users do not achieve contracted rates. | We provide insights into the evaluation of user-initiated tests. The trade-off is less control over the experiments in exchange for the convenience of collecting samples. |
| [16] | Measuring broadband performance by the use of home routers. | The use of home routers avoids effects of home networks on the measurement and enables scheduling of experiments as well as comparison of different modems. Still, traffic shaping at ISPs hinders their comparison. | See row above. |
| [17] | Evaluation of Internet speed tests from the viewpoint of governmental organizations interested in evaluating the digital infrastructure. | Shows flaws of current testing procedures and provides considerations for future testing. | The authors criticize current testing procedures but still highlight their importance. We show that, by curating a dataset obtained from imperfect measurement procedures, representative results can be extracted. |
| [18] | Report of a research workshop on broadband measurements. | Presents ten takeaways on the current state of broadband measurements and gives future directions. | The report elaborates generally on the difficulties, importance, and challenges of broadband measurements. We hope to contribute to this field with our evaluation procedure. |
| [19] | Evaluation of the impact of the COVID-19 pandemic on the Internet using datasets from ISP interconnects, the Measuring Broadband America (MBA) database, and information from the Internet-wide border gateway protocol tables. | A significant increase in the delay and peak traffic rate is detected at the beginning of the lockdown. The increase is limited to specific ISPs. ISPs mitigate the increase in the delay by increasing transport capacities. | We present the impact of the lockdown in Germany. The results show a partial performance degradation in a few transit networks in which measurement servers are located. |
| [20] | Implications of the COVID-19 pandemic on the Internet traffic using datasets from ISPs, exchange points, mobile operators, and educational networks. | Increase in traffic at ISPs and Internet exchange points but decrease in educational networks. Moreover, the usual daily patterns change during the lockdown. | We provide results on an open dataset, show that performance degradation occurred only partially, and reveal a similar usage pattern. |

| Location | Site | Provider | ASN |
|---|---|---|---|
| Frankfurt | fra01 | Telia Company AB | AS1299 |
| Frankfurt | fra02 | GTT Communications Inc. | AS3257 |
| Frankfurt | fra03 | Vodafone Group PLC | AS1273 |
| Frankfurt | fra04 | Level 3 Parent, LLC | AS3356 |
| Frankfurt | fra05 | TATA COMMUNICATIONS (AMERICA) INC | AS6453 |
| Frankfurt | fra06 | TELECOM ITALIA SPARKLE S.p.A. | AS6762 |
| Hamburg | ham02 | Telia Company AB | AS1299 |

| AS Name | AS Num. | # Measurements | Ratio | ISP |
|---|---|---|---|---|
| Deutsche Telekom AG | 3320 | 1,639,053 | 0.32 | BCN |
| Vodafone GmbH | 3209 | 1,447,654 | 0.29 | BCN |
| Telefonica Germany GmbH & Co. OHG | 6805 | 441,232 | 0.09 | BCN |
| Stadtnetz Bamberg mbH | 198570 | 439,031 | 0.09 | BR |
| 1&1 Versatel Deutschland GmbH | 8881 | 225,464 | 0.04 | BN |
| Universitaet Stuttgart | 553 | 91,861 | 0.02 | - |
| NetCologne GmbH | 8422 | 90,210 | 0.02 | BR |
| inexio Gmbh | 42652 | 53,142 | 0.01 | BN |
| Plusnet GmbH | 20676 | 44,684 | 0.01 | BN |
| Deutsche Glasfaser Wholesale GmbH | 60294 | 39,772 | 0.01 | BN |
| NetCom BW GmbH | 41998 | 37,178 | 0.01 | BR |
| ∑ | | | 0.89 | |

| AS Name | AS Num. | # Measurements | Ratio | ISP |
|---|---|---|---|---|
| Deutsche Telekom AG | 3320 | 902,032 | 0.32 | BCN |
| Vodafone GmbH | 3209 | 848,908 | 0.30 | BCN |
| Telefonica Germany GmbH & Co. OHG | 6805 | 424,176 | 0.15 | BCN |
| EWE TEL GmbH | 9145 | 127,245 | 0.04 | BR |
| 1&1 Versatel Deutschland GmbH | 8881 | 108,331 | 0.04 | BN |
| wilhelm.tel GmbH | 15943 | 45,427 | 0.02 | BR |
| htp GmbH | 13045 | 32,626 | 0.01 | BR |
| Plusnet GmbH | 20676 | 28,314 | 0.01 | BN |
| Tele Columbus AG | 20880 | 25,597 | 0.01 | BN |
| Deutsche Glasfaser Wholesale GmbH | 60294 | 23,813 | 0.01 | BN |
| ∑ | | | 0.91 | |

| Application | Data Rate | Latency |
|---|---|---|
| Voice | 64 kbps | 200 ms |
| Video streaming (HD) | 5 Mbps | few seconds |
| Video streaming (UHD) | 25 Mbps | few seconds |
| Cloud gaming | 44 Mbps | 25 ms |
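The requirements in the table above can be checked against a single measurement. The sketch below is only an illustration: it assumes that the measured latency is directly comparable to the latency column, and it treats "few seconds" as no effective bound, since measured latencies are typically in the millisecond range. The function and variable names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

# (minimum data rate in Mbps, maximum latency in ms or None for no strict bound)
# Values are copied from the table; "few seconds" is modeled as None (assumption).
REQUIREMENTS: Dict[str, Tuple[float, Optional[float]]] = {
    "Voice": (0.064, 200.0),              # 64 kbps
    "Video streaming (HD)": (5.0, None),
    "Video streaming (UHD)": (25.0, None),
    "Cloud gaming": (44.0, 25.0),
}


def supported_applications(throughput_mbps: float, latency_ms: float) -> List[str]:
    """List the applications whose data rate and latency requirements are met."""
    supported = []
    for name, (min_rate, max_latency) in REQUIREMENTS.items():
        rate_ok = throughput_mbps >= min_rate
        latency_ok = max_latency is None or latency_ms <= max_latency
        if rate_ok and latency_ok:
            supported.append(name)
    return supported


fast_line = supported_applications(throughput_mbps=50.0, latency_ms=20.0)
slow_line = supported_applications(throughput_mbps=10.0, latency_ms=150.0)
```

With these inputs, `fast_line` contains all four applications, while `slow_line` contains only voice and HD video streaming.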

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Lübben, R.; Misfeld, N. Exploring the Measurement Lab Open Dataset for Internet Performance Evaluation: The German Internet Landscape. Electronics 2022, 11, 162. https://doi.org/10.3390/electronics11010162