Detecting Nuisance Calls over Internet Telephony Using Caller Reputation

Abstract

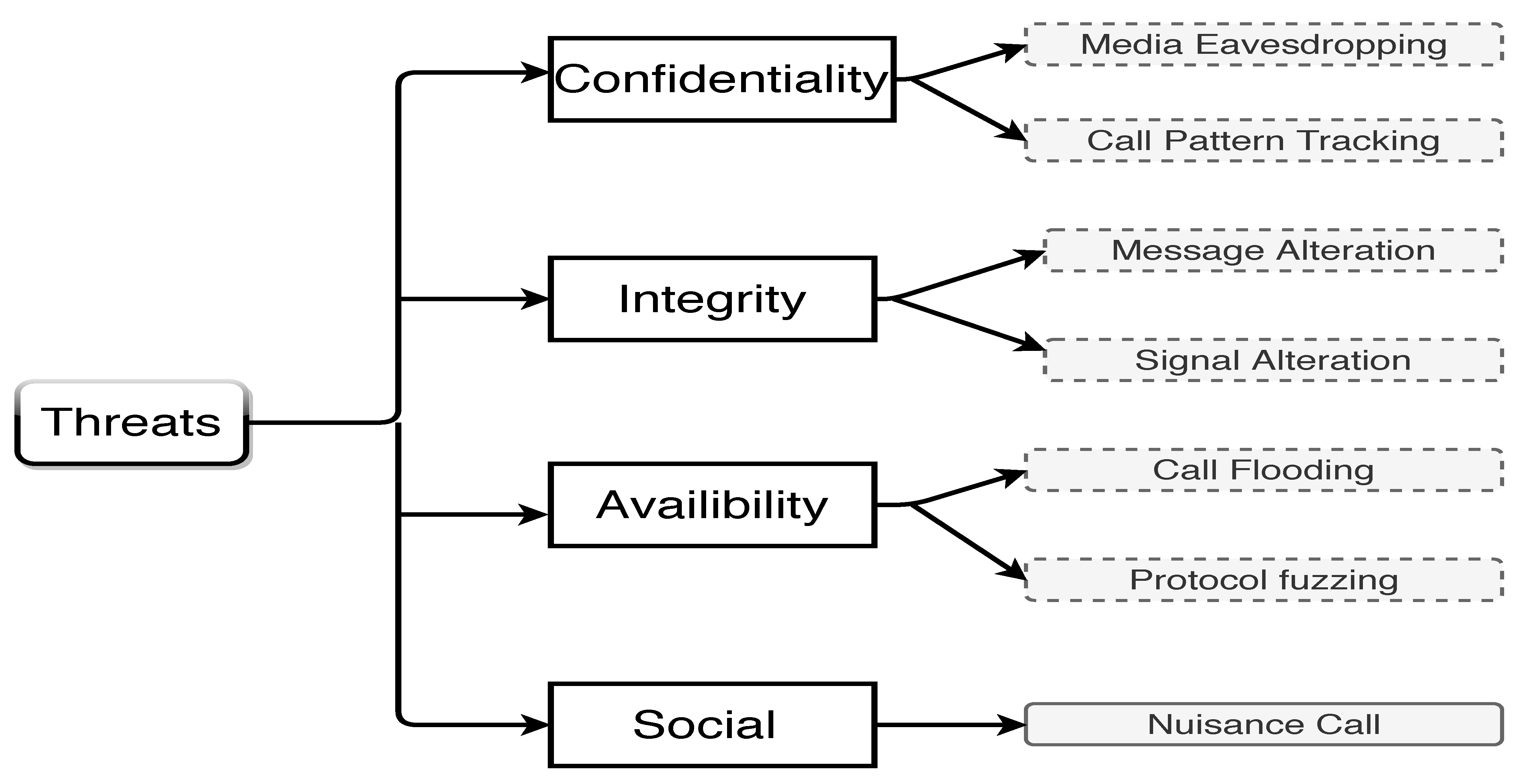

1. Introduction

2. Related Work

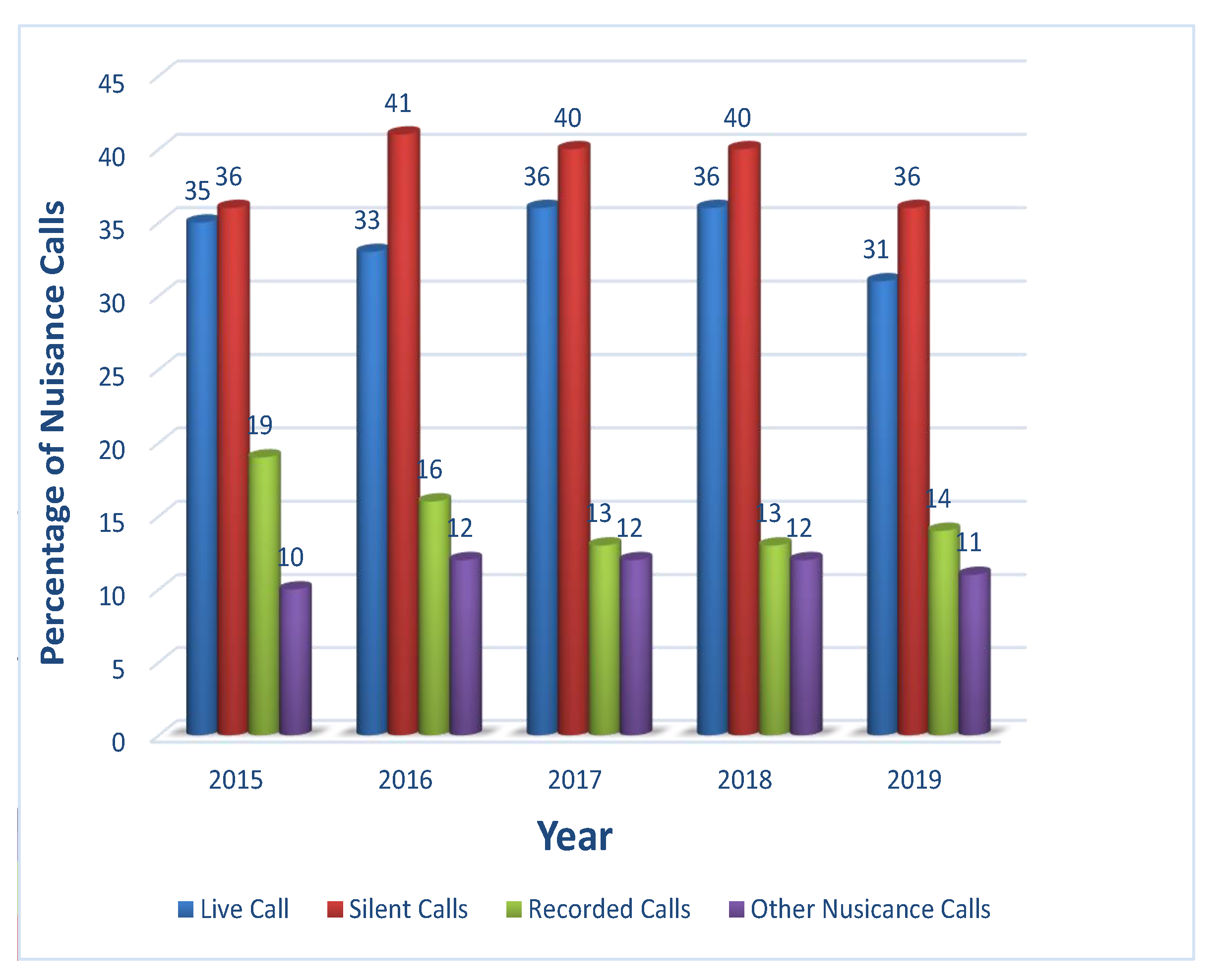

- Existing techniques apply only to automatically dialed, prerecorded voice spam and do not consider other types of nuisance calls. However, the nuisance call statistics reported in [31] show that live and silent spam calls occur far more often than recorded spam calls. Figure 2 shows that in 2019 alone live and silent calls accounted for 31% and 36%, respectively, well above the 14% for recorded calls. Live calls are generally made for telemarketing and scam purposes, whereas silent calls occur in Denial-of-Service (DoS) attacks. Accordingly, the existing spam detection mechanisms do not address the majority of nuisance calls and need to be revised to detect these more prevalent types of spam calls. Moreover, live and silent spam calls are considered more harmful to users as well as to the network.

- Several existing mechanisms are very effective at detecting spam in communication networks, usually identifying spammers correctly from their behavioral statistics. However, their very high detection rate tends to come with a high false-positive rate, meaning that many legitimate calls are incorrectly identified as spam. For instance, calls made by legitimate call center representatives have short call durations, a high outgoing call rate, and a low incoming call rate. These features closely resemble those of spammers, so such calls are prone to being mistakenly identified as spam. The reputational damage to a service provider that blocks a legitimate call by misidentifying it as spam is very high. Therefore, we need a new approach that can differentiate with certainty between spammers and legitimate users that have similar call patterns.

- A user identity in Internet telephony is easily generated by filling in self-asserted profile information without any identity proofing. Attackers can easily create fake identities and generate calls without fear of being penalized. Upon detection, they can perform a whitewashing attack by simply re-entering the network under a new identity. Thus, whitewashing remains an effective way for spammers to avoid detection and continue spamming in the network. To the best of our knowledge, none of the existing methods provides a solution to combat whitewashing attacks. To effectively detect nuisance calls in Internet telephony, it is essential to provide a defense against whitewashing attacks.

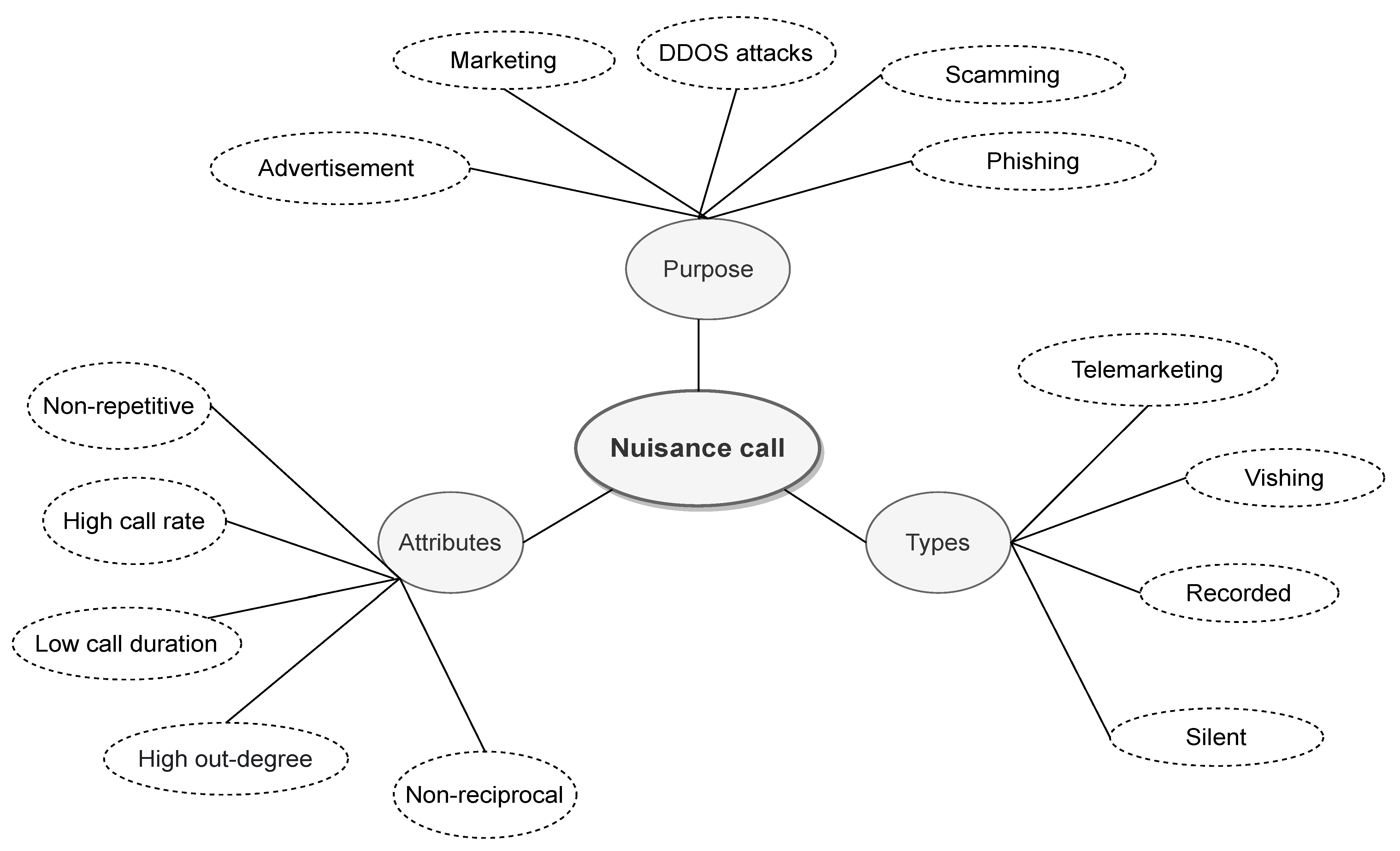

3. Nuisance Call

3.1. Classification

- Telemarketing: Telemarketing calls are made by salespersons to convince customers to buy their products or services. It is a method of direct marketing that involves direct human interaction. Consumers view telemarketing negatively because they find such calls annoying and disruptive. Telemarketers often use high-pressure sales techniques that are considered unethical. Furthermore, telemarketing may involve scams and frauds in which fraudulent telemarketers try to deceive and cheat their victims, often by tricking them into making some sort of payment. Telemarketing is usually beneficial for mobile cellular networks, as they earn more revenue when telemarketers generate calls over the network. However, Internet telephony operates on a different business model, in which providers focus on retaining customers by offering enhanced services. Therefore, Internet telephony services make every effort to reduce telemarketing over their networks.

- Voice phishing: Vishing, or voice phishing, is conducted over a phone call in which the attacker tricks a callee into providing confidential information that is later misused. The attacker uses social engineering to trick the victim into sharing personal or financial details such as account numbers, card numbers, and passwords. The attacker usually claims to be from a trusted organization such as a bank or telephone company. By claiming to be from a legitimate organization, attackers deceive the victim into thinking that providing the information is for their own benefit. Attackers may also trick the victim into installing malware on the phone that tracks and extracts information about the victim.

- Recorded: Recorded calls are automatically dialed calls that are broadcast over the communication network for marketing and advertisement purposes. Such calls are sent in bulk and have a fixed duration. Instead of dialing each number separately, the recorded calls are sent repeatedly using autodialers. An autodialer is software that automatically dials telephone numbers and plays a recorded message when the call is answered. Internet telephony remains an attractive medium for playing recorded messages due to its cost-effectiveness. Telemarketers usually use recorded advertisements and messages to promote their products and services to a large audience quickly.

- Silent: Silent calls are abandoned calls in which the callee hears nothing. Silent calls are also generated using autodialers, but instead of playing a recorded message they leave the callee hearing nothing and with no means of determining who the caller is. Silent calls are usually generated deliberately to conduct DDoS attacks over the network. The purpose of a DDoS attack is to prevent callers from using the network. An attacker or a group of attackers uses many autodialers to generate an immense number of silent calls over the network at the same time. A flood of silent calls can halt or significantly disrupt the services of the network. When generated in bulk, these nuisance calls are highly disruptive for Internet telephony providers. They harm the reputation of the service provider, as consumers are either unable to access the network or unable to receive the expected quality of service.

3.2. Characteristics

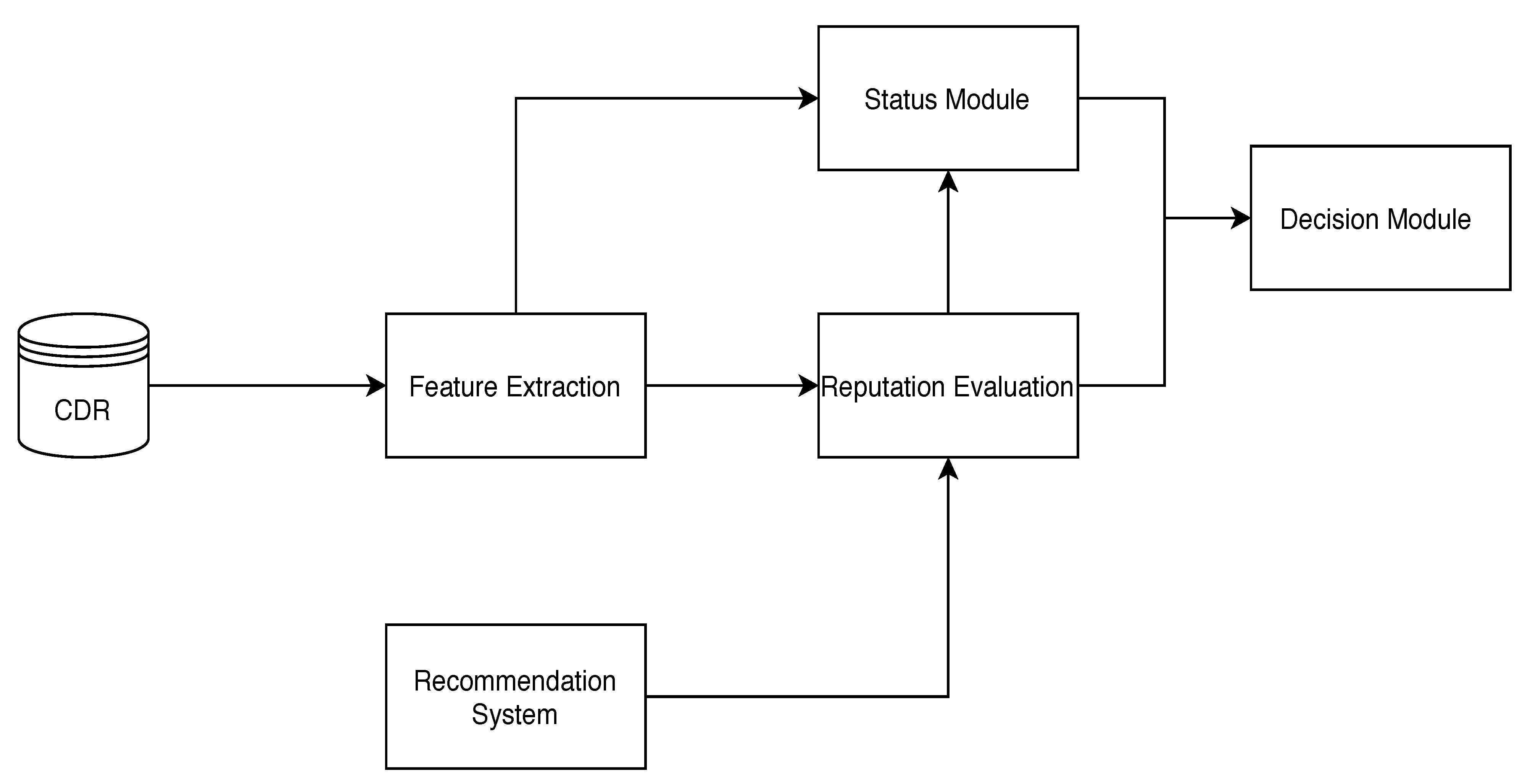

4. Caller Reputation Model

- Requirement 1: The nuisance detection mechanism should be implemented in such a way that minimum changes are required to the infrastructure of the web service provider.

- Requirement 2: The nuisance detection mechanism should work in parallel with the signaling process, to cause minimum observable delay to the caller.

- Requirement 3: The nuisance detection mechanism should be able to detect nuisance calls while eradicating the possibility of falsely detecting a legitimate call as a nuisance call (false positive).

- Requirement 4: The nuisance detection mechanism should be able to detect different types of nuisance calls generated over Internet telephony, covering the cases discussed in Section 3.1.

- Requirement 5: The nuisance detection mechanism should be robust against whitewashing attacks which are used by malicious callers to discard their bad reputation in the network.

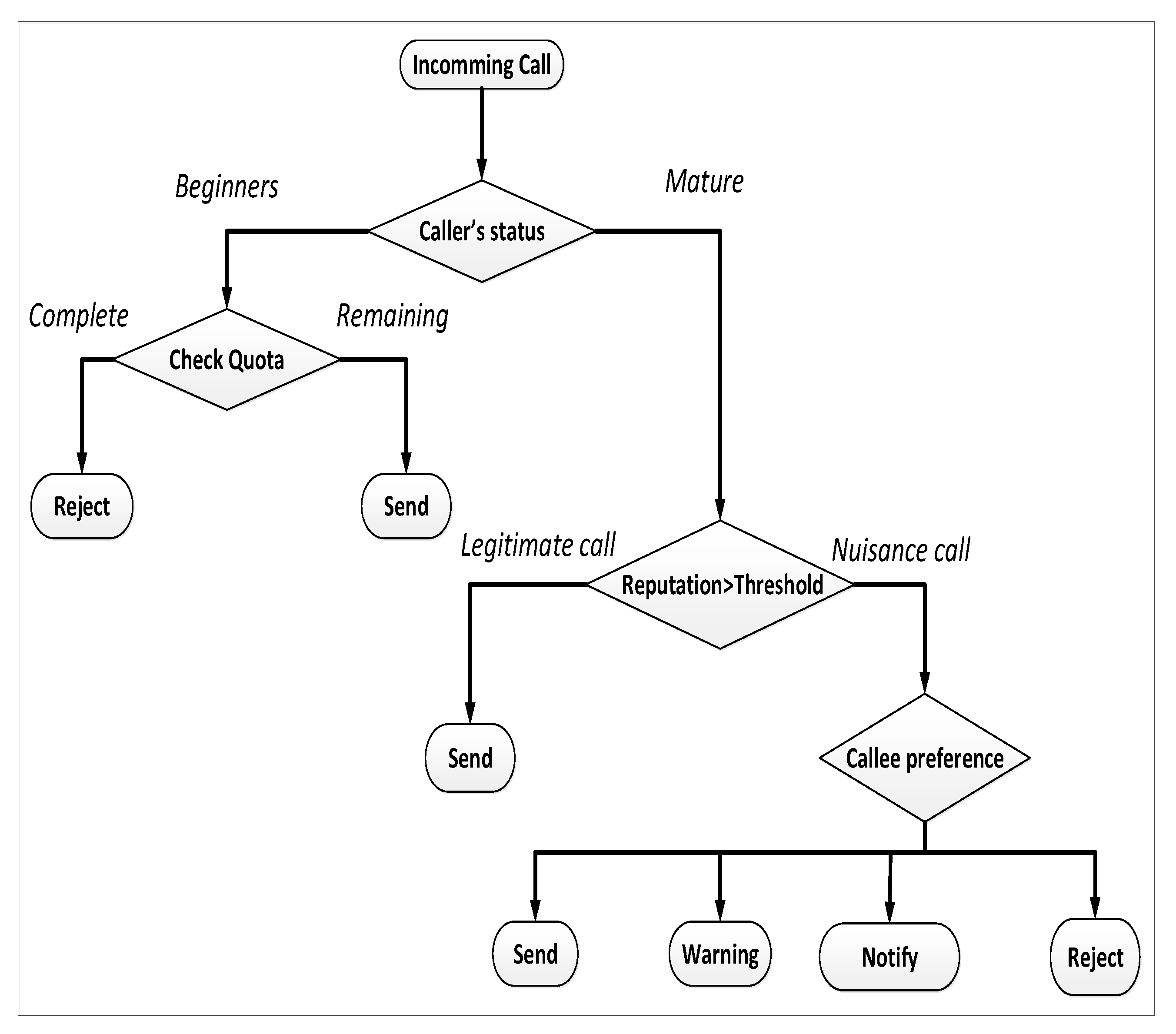

- Requirement 6: The nuisance detection mechanism should allow the user to choose what action the service provider should take in case a malicious caller tries to send a call request.

4.1. Feature Module

4.2. Recommendation System

4.3. Reputation Evaluation

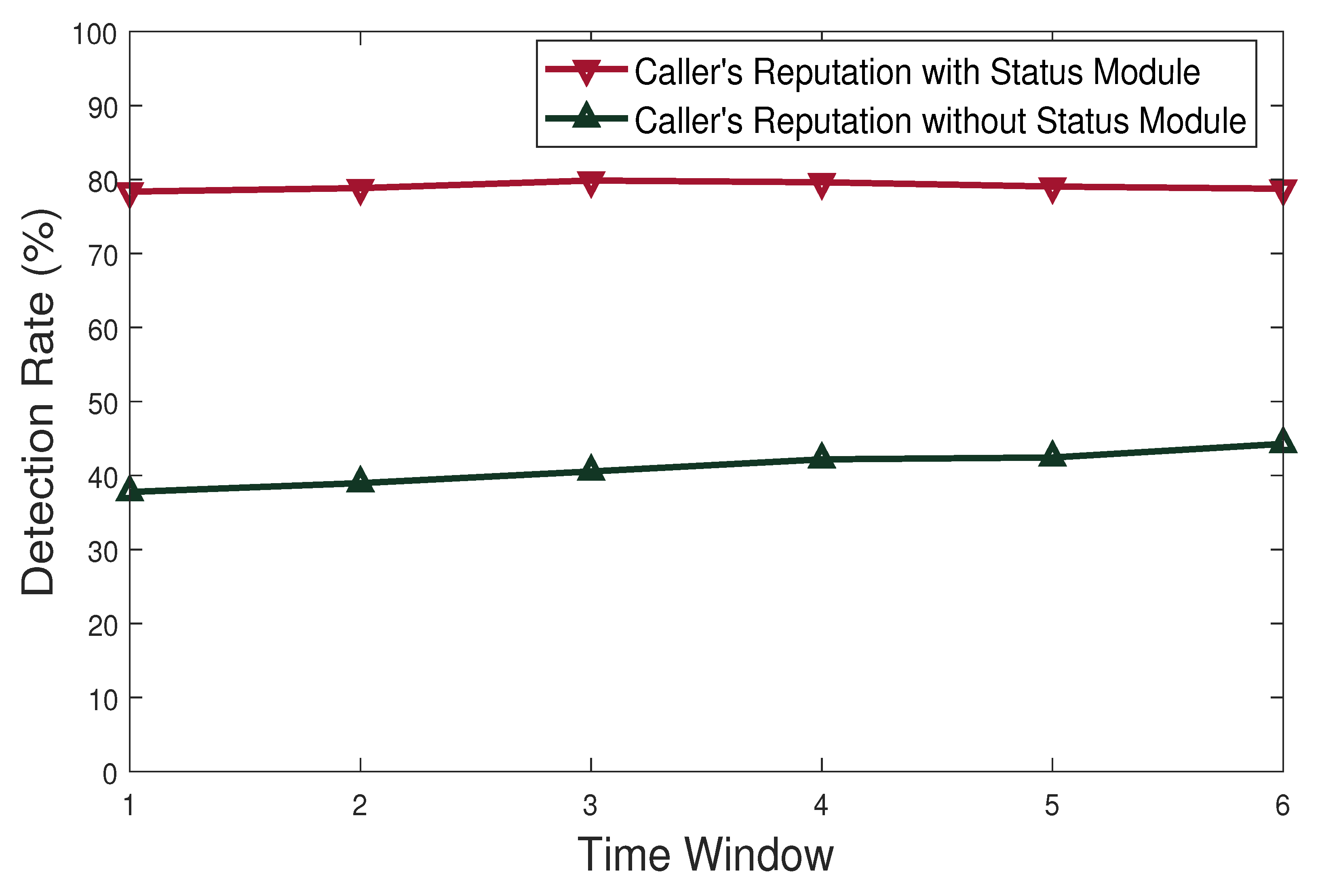

4.4. Status Module

4.5. Decision Module

5. Experimentation and Results

5.1. Simulation Setup

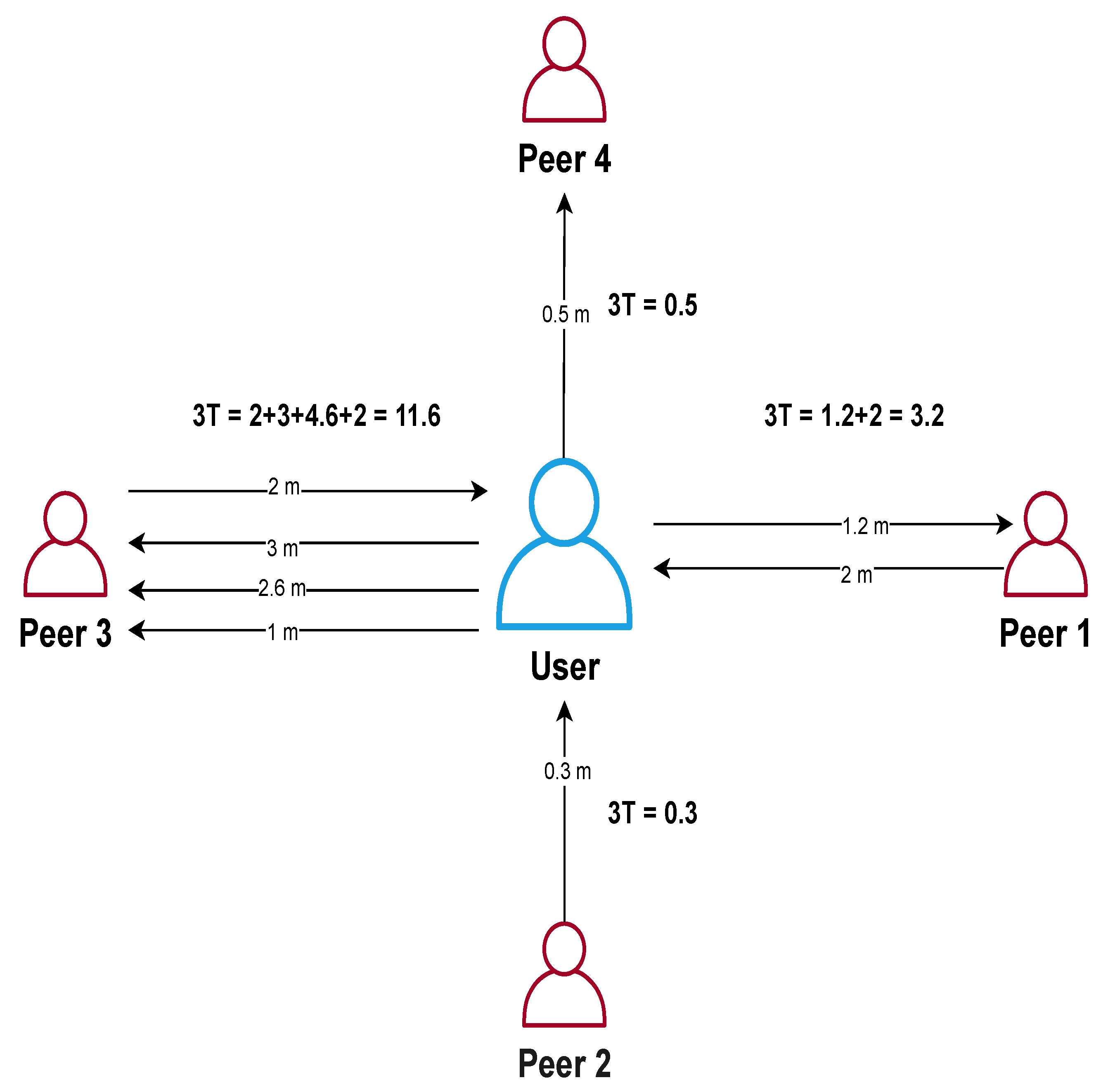

- Genuine: Genuine callers are legitimate users of the network, characterized by long-duration repetitive and reciprocal calling behavior with their social group. We use the statistics presented in [15] to model genuine callers in our network. The call rate of genuine callers follows a Poisson distribution with mean 5 calls. 80% of their calls are distributed within the social group which consists of 4–5 peers. The call duration of genuine callers is modeled using a normal distribution with mean 5 and variance 3.

- Distinct: Distinct callers are legitimate users of the network that have a high out-degree and short-duration calls, with very few repetitive and reciprocal calls. Examples include an employer that delivers short messages to its employees or a job seeker who calls different organizations to apply. It is therefore difficult to differentiate them from malicious callers. For the distinct caller, we use a Poisson distribution for the call rate and an exponential distribution for the call duration.

- Telemarketers: Telemarketers are malicious callers that follow a non-repetitive and non-reciprocal call pattern with a high out-degree compared to genuine legitimate callers in the network. A telemarketer tries to connect with a large number of peers while receiving only a small number of calls. We choose a constant value for the call rate because telemarketers generate calls repeatedly in a fixed time unit. The calls made by telemarketers are usually short due to their nature. Therefore, the call duration is generated in the same way as for a distinct caller, using the same distribution and mean value.

- Autodialers: Autodialers are software that automatically generates pre-recorded advertisement calls. Autodialers usually collect identities by crawling the web or using telephone directories and generate a fixed number of calls per time period. Therefore, we choose a constant value for the call rate. Autodialers play short pre-recorded voice messages; however, callees usually try to end the call as soon as they detect that it is prerecorded. Therefore, the lognormal distribution is a good representation of their call duration.

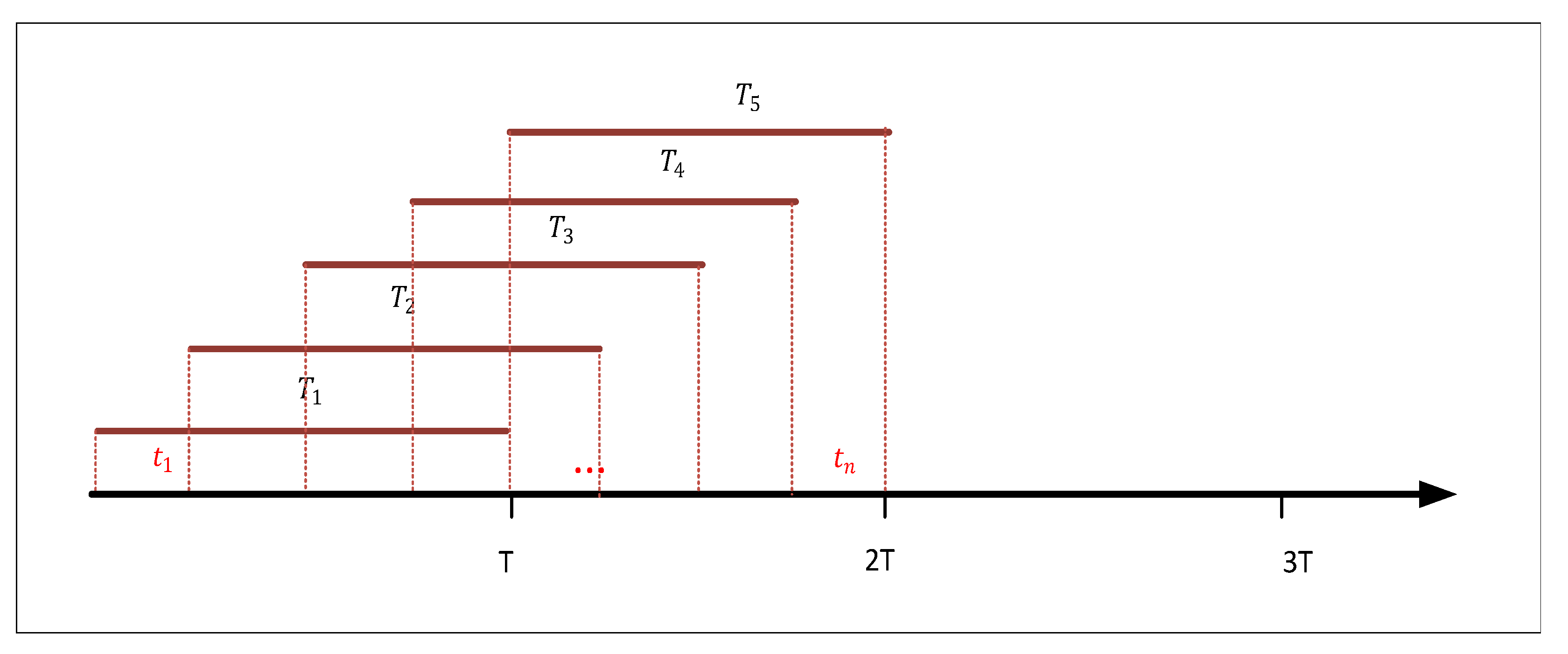

- Attackers: Attackers are malicious callers that generate silent calls in bulk to conduct a DDoS attack on the network. They usually flood silent calls in the network to consume network resources and overwhelm the service, so that legitimate call requests cannot be processed. As attackers flood silent calls in the network, we chose a constant value for call rate and call duration as shown in Figure 1.
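The caller models above can be illustrated with a small sampler. This is a minimal sketch, not the authors' simulator: it covers only the three caller types whose call rates are stated numerically in this text (genuine: Poisson with mean 5 and normal duration with mean 5 and variance 3; telemarketer: constant 10; attacker: constant 50). The function names, the two `ASSUMED_*` constants, and the clamping of negative durations to zero are assumptions introduced for illustration.

```python
import math
import random

# HYPOTHETICAL placeholders: the text does not state these values.
ASSUMED_TELEMARKETER_MEAN_DURATION = 1.0  # mean of the exponential duration
ASSUMED_ATTACKER_DURATION = 0.1           # constant silent-call duration


def sample_poisson(rng, mean):
    """Draw a Poisson variate with Knuth's multiplication method."""
    limit = math.exp(-mean)
    count, product = 0, rng.random()
    while product > limit:
        count += 1
        product *= rng.random()
    return count


def sample_time_unit(rng, caller_type):
    """Return the call durations one caller generates in one time unit."""
    if caller_type == "genuine":
        n_calls = sample_poisson(rng, 5)
        # Clamp at zero (an assumption): a normal draw can be negative.
        return [max(0.0, rng.gauss(5, math.sqrt(3))) for _ in range(n_calls)]
    if caller_type == "telemarketer":
        rate = 1.0 / ASSUMED_TELEMARKETER_MEAN_DURATION
        return [rng.expovariate(rate) for _ in range(10)]
    if caller_type == "attacker":
        return [ASSUMED_ATTACKER_DURATION] * 50
    raise ValueError(f"no parameters available for caller type: {caller_type}")


if __name__ == "__main__":
    rng = random.Random(42)
    for kind in ("genuine", "telemarketer", "attacker"):
        durations = sample_time_unit(rng, kind)
        print(kind, len(durations), "calls")
```

Distinct callers and autodialers are left out because their distribution parameters are not given here; they could be added in the same pattern once those values are known.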

5.2. Performance Evaluation

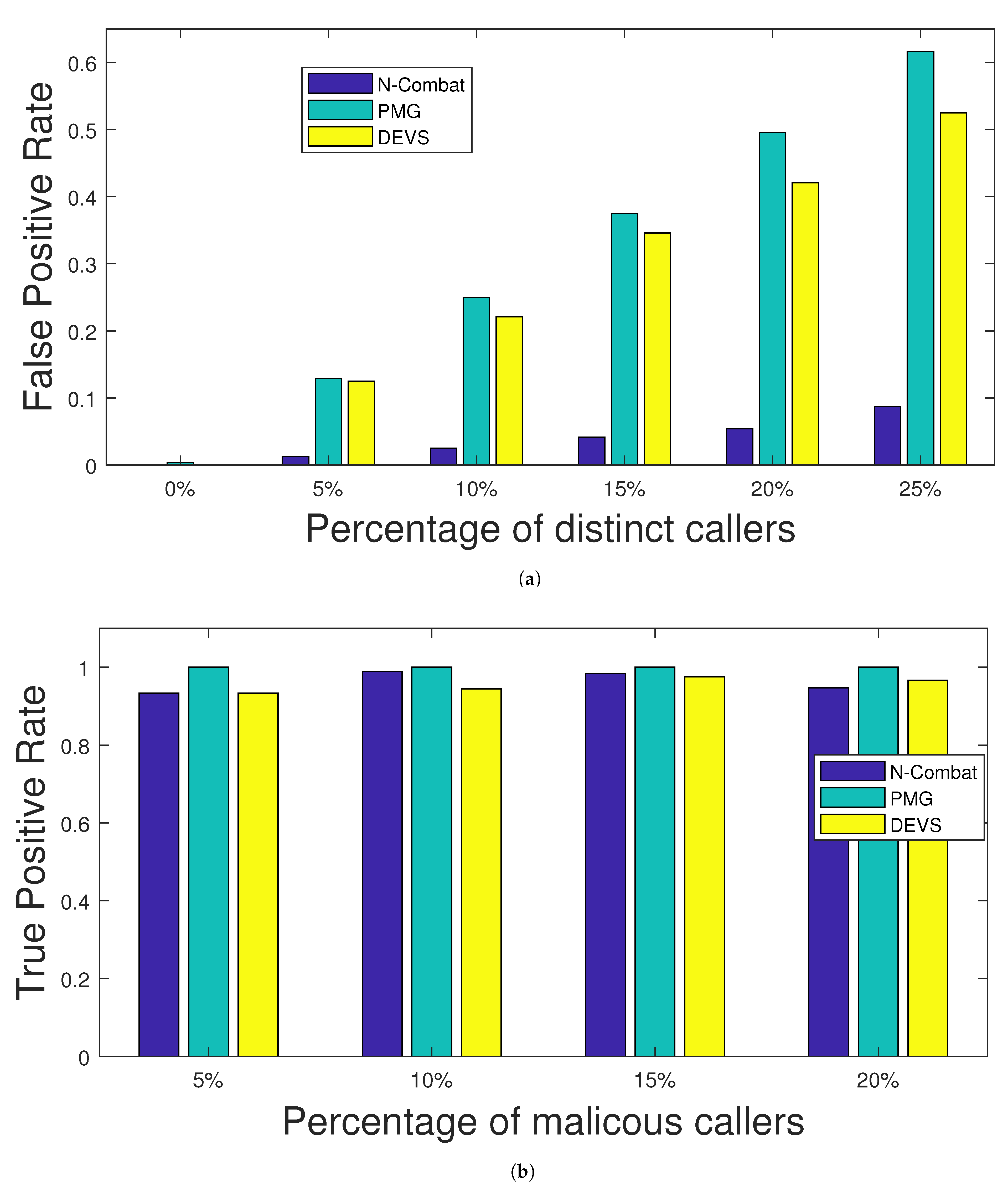

- False Positive Rate: It is the number of legitimate callers wrongly identified as malicious callers over the total number of legitimate callers in the network.

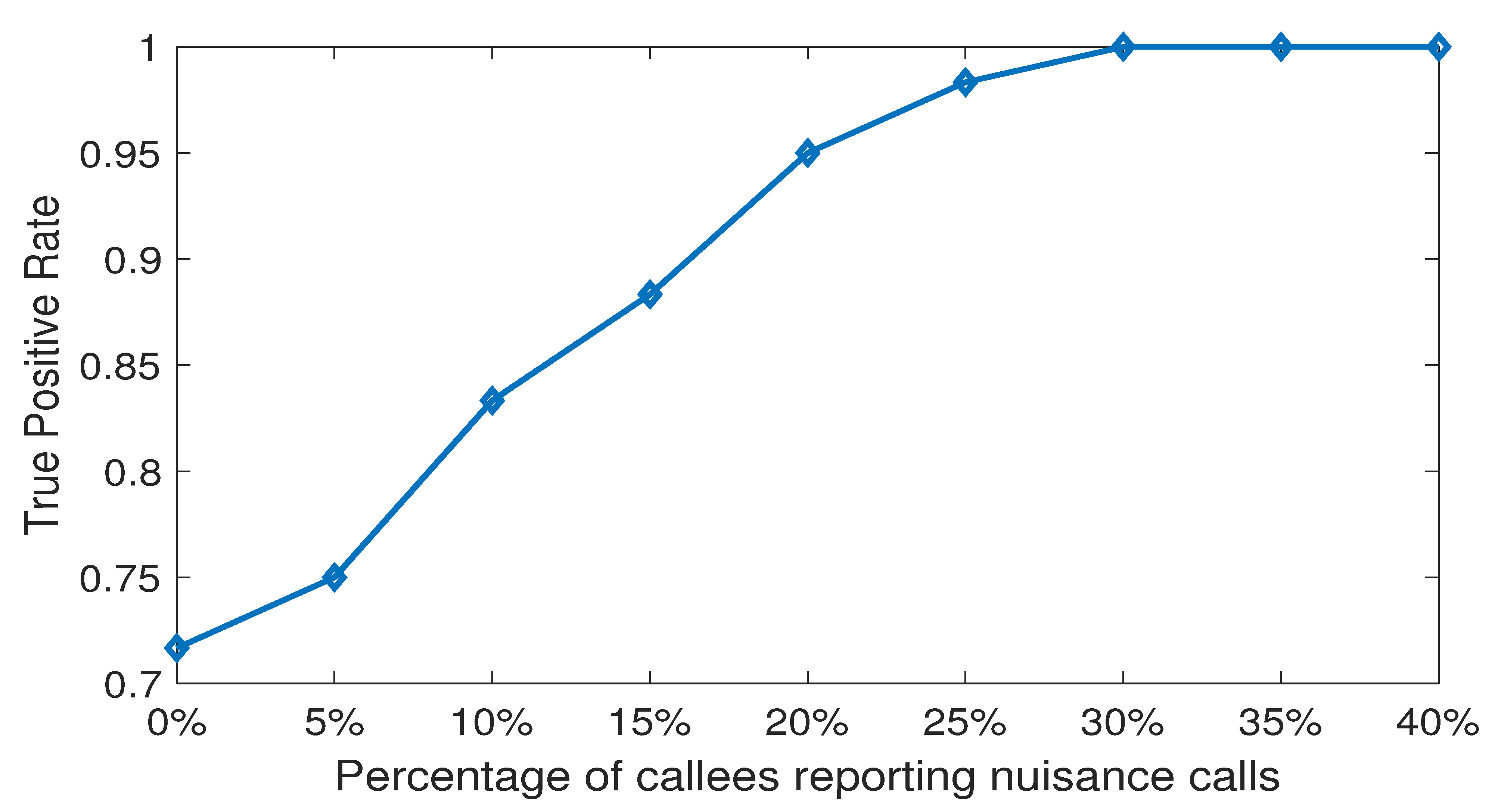

- True Positive Rate: It is the number of malicious callers correctly identified over the total number of malicious callers present in the network.

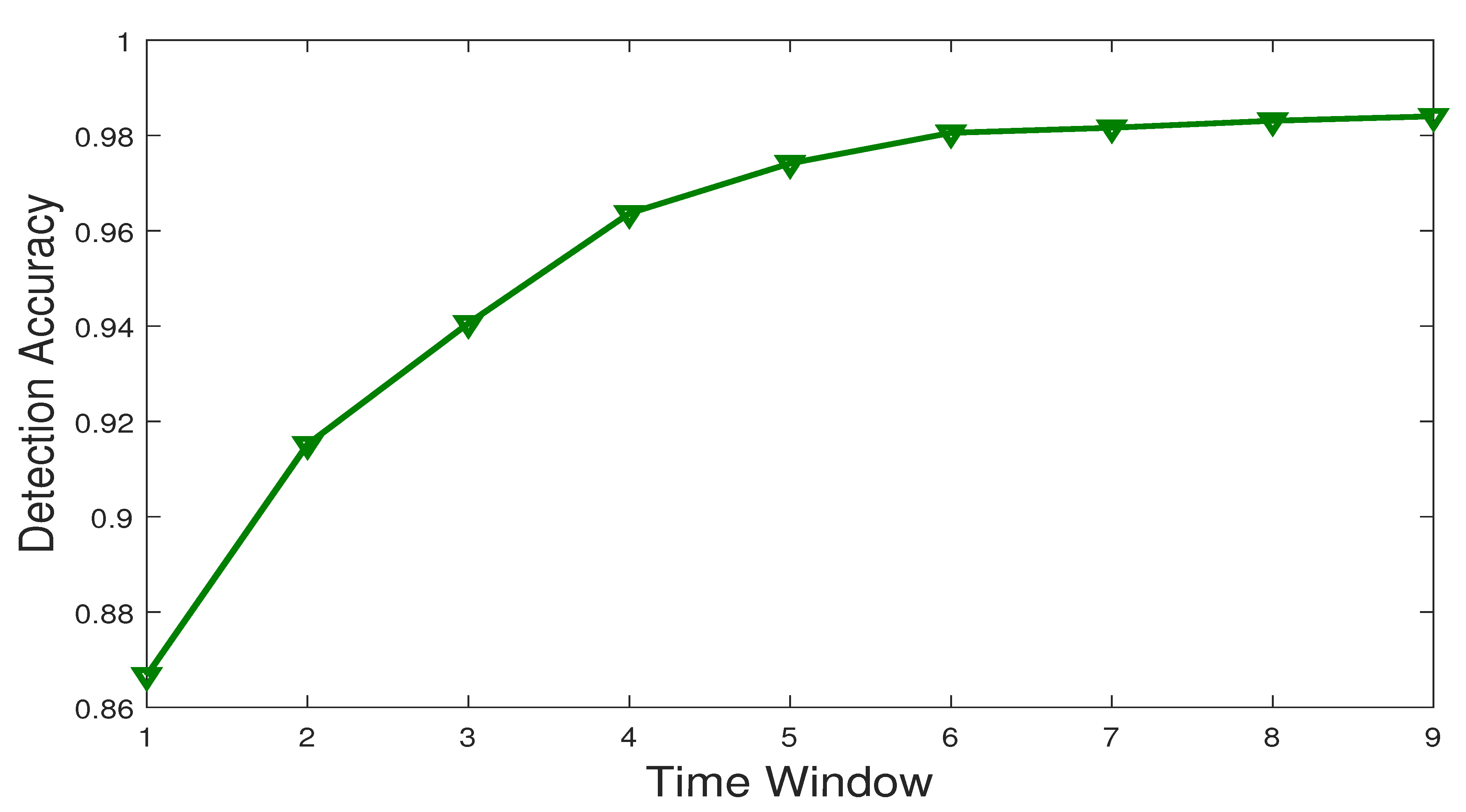

- Detection Accuracy: It is the number of correct identifications of a caller’s nature over the total number of callers present in the network. A correct identification occurs when genuine and distinct callers are detected as legitimate, while telemarketers, autodialers, and attackers are detected as malicious.

- Detection Rate: It is the percentage of detected nuisance calls over the total number of nuisance calls generated in the network.
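The four metrics defined above can be computed as in the following sketch. It assumes each caller has a known ground-truth type and that the detector outputs a set of caller identifiers flagged as malicious; the function names, the data layout, and the choice to return 0.0 for an empty denominator are illustrative assumptions, not part of the paper.

```python
LEGITIMATE = {"genuine", "distinct"}
MALICIOUS = {"telemarketer", "autodialer", "attacker"}


def ratio(numerator, denominator):
    """Safe division; returns 0.0 for an empty denominator (an assumption)."""
    return numerator / denominator if denominator else 0.0


def caller_metrics(true_types, flagged):
    """Compute caller-level metrics.

    true_types: dict mapping caller id -> ground-truth caller type.
    flagged: set of caller ids the detector identified as malicious.
    """
    legit = [c for c, t in true_types.items() if t in LEGITIMATE]
    malicious = [c for c, t in true_types.items() if t in MALICIOUS]
    false_pos = sum(1 for c in legit if c in flagged)
    true_pos = sum(1 for c in malicious if c in flagged)
    true_neg = len(legit) - false_pos
    return {
        "false_positive_rate": ratio(false_pos, len(legit)),
        "true_positive_rate": ratio(true_pos, len(malicious)),
        "detection_accuracy": ratio(true_pos + true_neg, len(true_types)),
    }


def detection_rate(detected_nuisance_calls, total_nuisance_calls):
    """Call-level metric: percentage of nuisance calls that were detected."""
    return 100.0 * ratio(detected_nuisance_calls, total_nuisance_calls)


if __name__ == "__main__":
    truth = {"a": "genuine", "b": "distinct", "c": "telemarketer",
             "d": "autodialer", "e": "attacker"}
    print(caller_metrics(truth, flagged={"b", "c", "d"}))
    print(detection_rate(45, 60))
```

Note that the first three metrics count callers, whereas the detection rate counts individual calls, so the two need separate inputs.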

5.2.1. Exp 1: Impact of Recommendation

5.2.2. Exp 2: Computing Detection Accuracy

5.2.3. Exp 3: Efficiency Against Whitewashing Attacks

5.2.4. Exp 4: Performance Comparison with PMG and DEVS

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Jennings, C.; Boström, H.; Bruaroey, J. WebRTC 1.0: Real-Time Communication between Browsers; W3C Recommendation. 26 January 2021. Available online: https://www.w3.org/TR/2021/REC-webrtc-20210126/ (accessed on 26 January 2021).

2. Javed, I.T.; Copeland, R.; Crespi, N.; Emmelmann, M.; Corici, A.; Bouabdallah, A.; Zhang, T.; El Jaouhari, S.; Beierle, F.; Göndör, S.; et al. Cross-domain identity and discovery framework for web calling services. Ann. Telecommun. 2017, 72, 459–468.

3. Persistence Market Research. Global Market Study on VOIP Services; Technical Report; Persistence Market Research: New York, NY, USA, 2018.

4. Keromytis, A.D. A Comprehensive Survey of Voice over IP Security Research. IEEE Commun. Surv. Tutor. 2012, 14, 514–537.

5. Zhang, Y.; Wu, H.; Zhang, J.; Wang, J.; Zou, X. TW-FCM: An Improved Fuzzy-C-Means Algorithm for SPIT Detection. In Proceedings of the 2018 27th International Conference on Computer Communication and Networks (ICCCN), Hangzhou, China, 30 July–2 August 2018; pp. 1–9.

6. Gad, A.F. Comparison of signaling and media approaches to detect VoIP SPIT attack. In Proceedings of the 2018 International Conference on Innovative Trends in Computer Engineering (ITCE), Aswan, Egypt, 19–21 February 2018; pp. 56–62.

7. Xie, T.; Li, C.; Tang, J.; Tu, G. How Voice Service Threatens Cellular-Connected IoT Devices in the Operational 4G LTE Networks. In Proceedings of the 2018 IEEE International Conference on Communications (ICC), Kansas City, MO, USA, 20–24 May 2018; pp. 1–6.

8. Muttavarapu, A.S.; Dantu, R.; Thompson, M. Distributed Ledger for Spammers’ Resume. In Proceedings of the 2019 IEEE Conference on Communications and Network Security (CNS), Washington, DC, USA, 10–12 June 2019; pp. 1–9.

9. Koilada, D.V.S.R.K. Strategic Spam Call Control and Fraud Management: Transforming Global Communications. IEEE Eng. Manag. Rev. 2019, 47, 65–71.

10. Toyoda, K.; Park, M.; Okazaki, N.; Ohtsuki, T. Novel Unsupervised SPITters Detection Scheme by Automatically Solving Unbalanced Situation. IEEE Access 2017, 5, 6746–6756.

11. Sengar, H.; Wang, X.; Nichols, A. Call Behavioral Analysis to Thwart SPIT Attacks on VoIP Networks. In Security and Privacy in Communication Networks: Proceedings of the 7th International ICST Conference, SecureComm 2011, London, UK, 7–9 September 2011; Revised Selected Papers; Rajarajan, M., Piper, F., Wang, H., Kesidis, G., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 501–510.

12. Azad, M.; Morla, R.; Arshad, J.; Salah, K. Clustering VoIP caller for SPIT identification. Secur. Commun. Netw. 2016, 9, 4827–4838.

13. Azad, M.A.; Morla, R. Early identification of spammers through identity linking, social network and call features. J. Comput. Sci. 2017, 23, 157–172.

14. Shin, D.; Ahn, J.; Shim, C. Progressive multi gray-leveling: A voice spam protection algorithm. IEEE Netw. 2006, 20, 18–24.

15. Bokharaei, H.K.; Sahraei, A.; Ganjali, Y.; Keralapura, R.; Nucci, A. You can SPIT, but you can’t hide: Spammer identification in telephony networks. In Proceedings of the 2011 Proceedings IEEE INFOCOM, Shanghai, China, 10–15 April 2011; pp. 41–45.

16. Iranmanesh, S.A.; Sengar, H.; Wang, H. A Voice Spam Filter to Clean Subscribers’ Mailbox. In Security and Privacy in Communication Networks; Keromytis, A.D., Di Pietro, R., Eds.; Springer: Berlin/Heidelberg, Germany, 2013; pp. 349–367.

17. Strobl, J.; Mainka, B.; Grutzek, G.; Knospe, H. An efficient search method for the content-based identification of telephone-SPAM. In Proceedings of the 2012 IEEE International Conference on Communications (ICC), Ottawa, ON, Canada, 10–15 June 2012; pp. 2623–2627.

18. Shah, M.; Harras, K. Hitting Three Birds with One System: A Voice-Based CAPTCHA for the Modern User. In Proceedings of the 2018 IEEE International Conference on Web Services (ICWS), San Francisco, CA, USA, 2–7 July 2018; pp. 257–264.

19. Quittek, J.; Niccolini, S.; Tartarelli, S.; Stiemerling, M.; Brunner, M.; Ewald, T. Detecting SPIT Calls by Checking Human Communication Patterns. In Proceedings of the 2007 IEEE International Conference on Communications, Glasgow, Scotland, 24–28 June 2007; pp. 1979–1984.

20. Soupionis, Y.; Koutsiamanis, R.A.; Efraimidis, P.; Gritzalis, D. A Game-Theoretic Analysis of Preventing Spam over Internet Telephony via Audio CAPTCHA-Based Authentication. J. Comput. Secur. 2014, 22, 383–413.

21. Reaves, B.; Blue, L.; Abdullah, H.; Vargas, L.; Traynor, P.; Shrimpton, T. AuthentiCall: Efficient Identity and Content Authentication for Phone Calls. In Proceedings of the 26th USENIX Security Symposium (USENIX Security 17), Vancouver, BC, Canada, 16 August 2017; USENIX Association: Vancouver, BC, Canada, 2017; pp. 575–592.

22. Su, M.; Tsai, C. Using data mining approaches to identify voice over IP spam. Int. J. Commun. Syst. 2015, 28, 187–200.

23. Hansen, M.; Hansen, M.; Moller, J.; Rohwer, T.; Tolkmit, C.; Waack, H. Developing a legally compliant reachability management system as a countermeasure against spit. In Proceedings of the Third Annual VoIP Security Workshop, Berlin, Germany, 1–2 June 2006.

24. Balasubramaniyan, V.A.; Ahamad, M.; Park, H. CallRank: Combating SPIT using call duration, social networks and global reputation. In Proceedings of the Fourth Conference on Email and Anti-Spam (CEAS 2007), Mountain View, CA, USA, 2–3 August 2007.

25. Azad, M.; Morla, R. Caller-REP: Detecting Unwanted Calls with Caller Social Strength. Comput. Secur. 2013, 39, 219–236.

26. Kolan, P.; Dantu, R. Socio-technical Defense Against Voice Spamming. ACM Trans. Auton. Adapt. Syst. 2007, 2.

27. Wang, F.; Wang, F.R.; Huang, B.; Yang, L.T. ADVS: A reputation-based model on filtering SPIT over P2P-VoIP networks. J. Supercomput. 2013, 64, 744–761.

28. Kim, H.J.; Kim, M.J.; Kim, Y.; Jeong, H.C. DEVS-Based modeling of VoIP spam callers’ behavior for SPIT level calculation. Simul. Model. Pract. Theory 2009, 17, 569–584.

29. Wu, Y.; Bagchi, S.; Singh, N.; Wita, R. Spam detection in voice-over-IP calls through semi-supervised clustering. In Proceedings of the 2009 IEEE/IFIP International Conference on Dependable Systems Networks, Lisbon, Portugal, 29 June–2 July 2009; pp. 307–316.

30. Chikha, R.; Abbes, T.; Chikha, W. Behavior-based approach to detect spam over IP telephony attacks. Int. J. Inf. Secur. 2016, 15, 131–143.

31. Ofcom. Statistical Release Calendar; Technical Report; Ofcom: London, UK, 2019.

32. Javed, I.T.; Toumi, K.; Crespi, N. TrustCall: A Trust Computation Model for Web Conversational Services. IEEE Access 2017, 5, 24376–24388.

33. Nanavati, A.; Gurumurthy, S.; Das, G.; Chakraborty, D.; Dasgupta, K.; Mukherjea, S.; Joshi, A. On the Structural Properties of Massive Telecom Call Graphs: Findings and Implications. In Proceedings of the 15th ACM International Conference on Information and Knowledge Management, Arlington, VA, USA, 6–11 November 2006; pp. 435–444.

34. Melo, P.; Akoglu, L.; Faloutsos, C.; Loureiro, A. Surprising Patterns for the Call Duration Distribution of Mobile Phone Users. In Machine Learning and Knowledge Discovery in Databases, Proceedings of the European Conference, ECML PKDD 2010, Barcelona, Spain, 20–24 September 2010; Part III; Springer: Berlin/Heidelberg, Germany, 2010; pp. 354–369.

35. Guo, J.; Liu, F.; Zhu, Z. Estimate the Call Duration Distribution Parameters in GSM System Based on K-L Divergence Method. In Proceedings of the 2007 International Conference on Wireless Communications, Networking and Mobile Computing, Shanghai, China, 21–25 September 2007; pp. 2988–2991.

| Caller Type | Call Rate | Call Duration |
|---|---|---|
| Genuine | Poisson (mean 5) | Normal (mean 5, variance 3) |
| Distinct | Poisson | Exponential |
| Telemarketer | Constant 10 | Exponential |
| Autodialer | Normal | Exponential |
| Attacker | Constant 50 | Constant |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Javed, I.T.; Toumi, K.; Alharbi, F.; Margaria, T.; Crespi, N. Detecting Nuisance Calls over Internet Telephony Using Caller Reputation. Electronics 2021, 10, 353. https://doi.org/10.3390/electronics10030353