1. Introduction

Face recognition (FR) systems are conventionally presented with primary facial features such as eyes, nose, and mouth, i.e., non-occluded faces. However, a wide range of situations require people to wear masks that partially hide or occlude the face. Such situations include pandemics, laboratories, medical operations, and severe pollution. For instance, according to the World Health Organization (WHO) [1] and the Centers for Disease Control and Prevention (CDC) [2], the best way to protect people from the COVID-19 virus and avoid spreading or being infected with the disease is wearing face masks and practicing social distancing. Accordingly, many countries have required people to wear a protective face mask in public places, which has driven a need to investigate and understand how face recognition systems perform with masked faces.

However, implementing such safety guidelines seriously challenges the existing security and authentication systems that rely on FR. Most of the recent algorithms have been proposed for determining whether a face is occluded or not, i.e., masked-face detection. Although saving people’s lives is the priority, there is an urgent demand to authenticate persons wearing masks without requiring them to remove the masks. For instance, premises access control and immigration points are among many locations where subjects make cooperative presentations to a camera, which poses a problem for face recognition because the occluded parts are necessary for face detection and recognition.

Moreover, many organizations have already developed and deployed in-house datasets for facial recognition as a means of person authentication or identification. Facial authentication, known as 1:1 matching, is an identity proofing procedure that verifies whether someone is who they declare to be. To perform a secure authentication, a facial image is taken, from which a biometric template is created and compared against an existing facial signature. In contrast, facial identification, known as 1:N matching, is a form of biometric recognition in which a person is identified by comparing the individual pattern against a large database of known faces. Unfortunately, occluded faces make it difficult to recognize subjects accurately, thus threatening the validity of current datasets and making such in-house FR systems inoperable.
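The distinction between 1:1 and 1:N matching can be illustrated with a minimal sketch. It assumes that faces have already been converted to fixed-length embedding vectors that are compared by cosine similarity; the vectors, the function names `verify` and `identify`, and the threshold of 0.8 are hypothetical choices for illustration and are not taken from any system discussed here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verify(probe, enrolled, threshold=0.8):
    """1:1 matching: is the probe the same person as ONE enrolled template?"""
    return cosine_similarity(probe, enrolled) >= threshold


def identify(probe, gallery, threshold=0.8):
    """1:N matching: search the whole gallery and return the best identity,
    or None when no template is similar enough."""
    best_id, best_score = None, -1.0
    for identity, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_id, best_score = identity, score
    return best_id if best_score >= threshold else None


# Hypothetical 3-dimensional embeddings (real systems use hundreds of dimensions).
gallery = {"alice": [0.9, 0.1, 0.0], "bob": [0.0, 1.0, 0.2]}
probe = [0.8, 0.2, 0.1]

print(verify(probe, gallery["alice"]))  # claimed identity: alice
print(identify(probe, gallery))         # open search over all identities
```

In such a setup, a mask mainly affects the quality of the embedding itself, so both matching modes degrade together when the embedding model has not been trained on occluded faces.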

Recently, the National Institute of Standards and Technology (NIST) [3] presented the performance of a set of face recognition algorithms developed and tuned after the COVID-19 pandemic (post-COVID-19), which follows their first study on pre-COVID-19 algorithms [4]. They concluded that the majority of recognition algorithms evaluated after the pandemic still show a performance degradation when faces are masked. Additionally, the recognition performance deteriorates when both the enrolment and verification images are masked. This creates a demand to tackle such authentication concerns using more robust and reliable facial recognition systems under different settings. For example, important applications of facial technologies, e.g., people screening at immigration points, are left vulnerable. Consequently, many leading vendors of such biometric technologies, including NEC [5] and Thales [6], have been forced to adapt their existing algorithms after the coronavirus pandemic in order to improve the accuracy of FR systems applied to persons wearing masks.

In recent years, deep learning technologies have made great breakthroughs in both theoretical progress and practical applications. The majority of FR systems have shifted to deep learning models, and masked face recognition (MFR) has become a frontier research direction in the field of computer vision [7,8]. However, research efforts had been under way, even before the COVID-19 pandemic, on how deep learning could improve the performance of existing recognition systems in the presence of masks or occlusions. For instance, the task of occluded face recognition (OFR) has attracted extensive attention, and many deep learning methods have been proposed, including sparse representations [9,10], autoencoders [11], video-based object tracking [12], bidirectional deep networks [13], and dictionary learning [14].

Even though it is a crucial part of recognition systems, the problem of occluded face images, including masks, has not been completely addressed. Many challenges are still under investigation, such as the large computation cost, robustness against image variations and occlusions, and learning discriminating representations of occluded faces. This makes the effective utilization of deep learning architectures and algorithms one of the most decisive tasks toward feasible face detection and recognition technologies. Therefore, facial recognition with occluded images will remain an open problem for a prolonged period, and more research works will be presented for MFR and OFR. More implementations will also be continuously enhanced to track the movement of people wearing masks in real time [15,16,17].

Over the last few years, a rapid growth in the amount of research work has been witnessed in the field of MFR. The task of MFR or OFR has been employed in many applications such as secure authentication at checkpoints [18] and monitoring people with face masks [19]. However, the algorithms, architectures, models, datasets, and technologies proposed in the literature to deal with occluded or masked faces lack a common framework for development and evaluation. Additionally, although the diversity of deep learning approaches devoted to detecting and recognizing people wearing masks is beneficial, there is a demand to review and evaluate the impact of such technologies in this field.

Such important advances and the associated challenges motivated us to conduct this review study with the aim of providing a comprehensive resource for those interested in the task of MFR. This study focuses on the most recent face recognition methods that are designed and developed on the basis of deep learning. The main contribution of this timely study is threefold:

To shape and present a generic development pipeline, which is broadly adopted by the majority of proposed MFR systems. A thorough discussion of the main phases of this framework is introduced, in which deep learning is the baseline.

To comprehensively review the recent state-of-the-art approaches in the domain of MFR or OFR. The major deep learning techniques utilized in the literature are presented. In addition, the benchmarking datasets and evaluation metrics that are commonly used to assess the performance of MFR systems are discussed.

To highlight many advances, challenges, and gaps in this emerging task of facial recognition, thereby providing important insights into how to utilize the current progressing technologies in different research directions. This review study is devoted to serving the community of FR and inspiring more research works.

The rest of this paper is organized as follows:

Section 2 prefaces the study scope and some statistics on the existing works,

Section 3 introduces the generic pipeline of MFR that is widely adopted in literature,

Section 4 summarizes the benchmarking datasets used for MFR,

Section 5 presents and discusses the recent state-of-the-art methods devoted for MFR,

Section 6 presents the common metrics used in literature to evaluate the performance of MFR algorithms,

Section 7 highlights the main challenges and directions in this field with some insights provided to inspire the future research works, and

Section 8 concludes this comprehensive study. A taxonomy of the main issues covered in this study is provided in Appendix A and all the abbreviations are listed in Appendix B.

2. Related Studies

Face recognition is one of the most important tasks that has been extensively studied in the field of computer vision. Human faces provide considerably better characteristics for recognizing a person’s identity than other common biometric-based approaches such as iris and fingerprints [20]. Therefore, many recognition systems have employed facial recognition features for forensics and security check purposes. However, the performance of FR algorithms is negatively affected by the presence of face disturbances such as occlusions and variation in illumination and facial expressions. For the task of MFR, the traditional methods of FR are confounded by complicated and occluded faces, which heightens the demand for adapting them to learn effective masked-face representations.

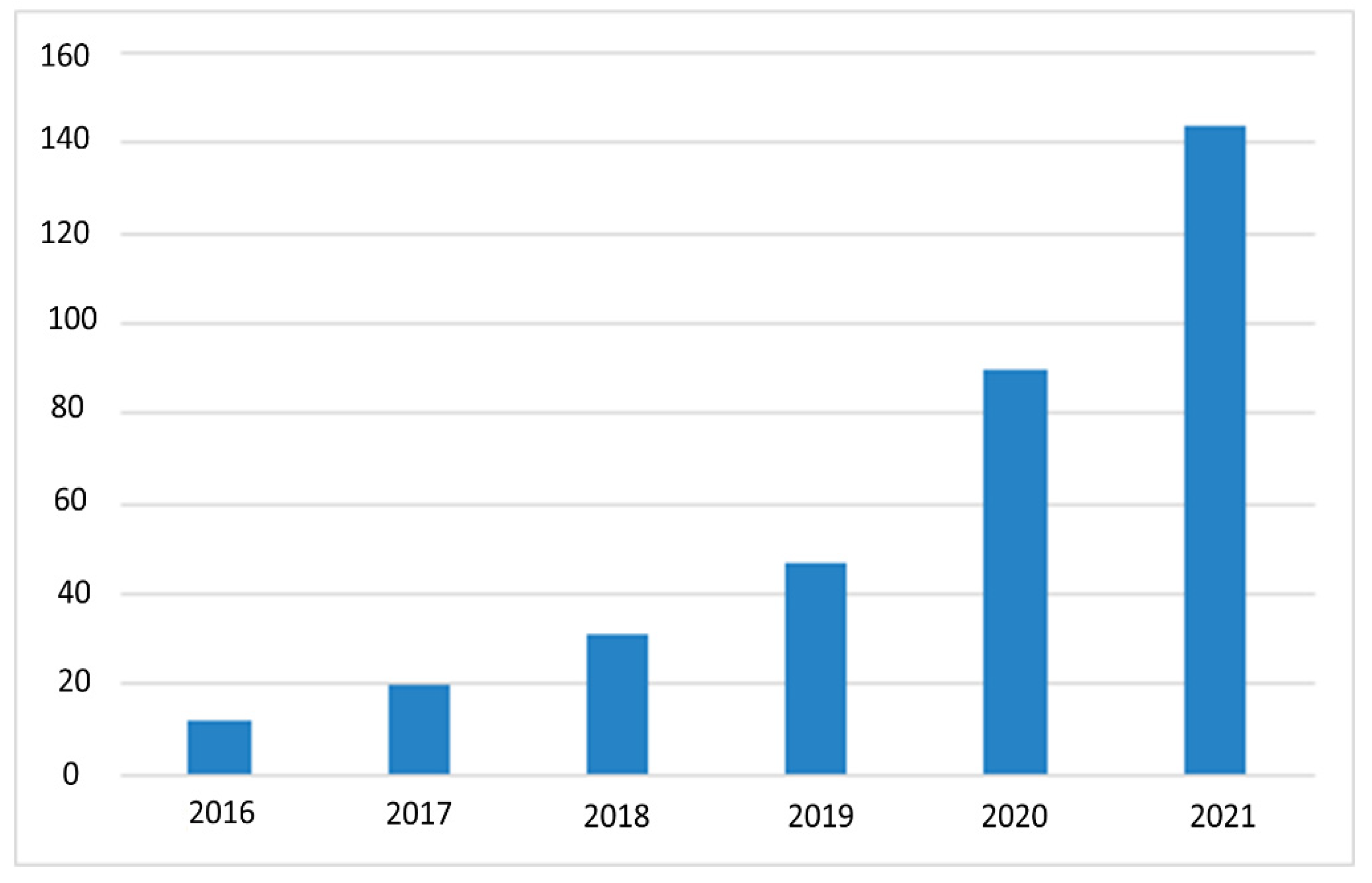

Since the COVID-19 pandemic, the research efforts in the domain of MFR have increased dramatically, extending the existing FR or OFR methods and improving accuracy by a large margin. Most importantly, deep learning approaches have increasingly been developed to tackle the challenges of MFR. A search query was performed on the major digital libraries to track the growth of research interest in the tasks of MFR and OFR. A set of search strings was formulated on the leading repositories to find papers that use deep learning techniques in a face recognition context. The search results of MFR articles were retrieved from IEEE Xplore, Scopus, ACM digital library, Web of Science, Wiley, Ei Compendex, and EBSCOhost. These repositories include popular symposiums, journals, workshops, and conference articles over the last five years.

The search queries were tuned on the basis of the goal of this study. The manuscripts and references retrieved from these repositories have been filtered further to generate a list of the articles most related to the task of MFR or OFR. Although our main aim is to review and discuss the deep learning techniques used in the domain of MFR, the rapid evolution of the research works dealing with the task of OFR is also highlighted, as demonstrated in Figure 1. It is important to note that the previous works dedicated to OFR consider general objects that hide the key facial features, such as scarves, hair styles, eyeglasses, as well as face masks. This work focuses on face masks as a challenging factor for OFR in the wild using deep learning techniques.

Exhaustive surveys on FR [

20,

21,

22,

23,

24,

25] and OFR [

3,

26,

27,

28] have been published in recent years. These studies have set standard algorithmic pipelines and highlighted many important challenges and research directions. However, they focused on traditional methods and deep learning developed for recognizing the face with or without occlusions. To the best of our knowledge, there are no studies that have recently reviewed the domain of MFR with deep learning methods. Moreover, the surveys on OFR have focused on some issues, challenges, and technologies.

The NIST [

3] recently reviewed the performance of FR algorithms before and after the COVID-19 pandemic. They evaluated the existing algorithms (pre-pandemic) after tuning them to deal with masked or occluded faces. They showed that these algorithms still tend to perform below a satisfactory level. However, this report is a quantitative study limited to reporting the accuracy of recognition algorithms on faces occluded by synthetic masks using two photography datasets collected in U.S. governmental applications, e.g., border crossing photographs. These algorithms were also submitted to NIST with no prior information on whether or not they were designed with the expectation of occluded faces.

Zeng et al. [

26] reviewed the existing face recognition methods that only consider the occluded faces. They categorized the evaluated approaches into three main phases, which are the occlusion feature extraction, occlusion detection, and occlusion recovery and recognition. However, this study considered face masks as one of many occlusion objects and restricted the task of MFR to one dataset. It also generally assessed many algorithms and implementations, including deep learning approaches. Zhang et al. [

27] presented a thorough review of facial expression analysis algorithms with partially occluded faces. Face masks were only used in this survey as examples of objects challenging the recognition system of facial expressions. Moreover, deep learning approaches were among six techniques evaluated in the presence of partial occlusion. Lahasan et al. [

28] discussed three challenges that affect the FR systems, which are face occlusion, face expressions, and dataset variations. They classified the state-of-the-art approaches into holistic and part-based approaches. The role of several datasets and competitions in tackling these challenges has been also discussed.

Our study is distinguished from the existing surveys by providing a comprehensive review of recent advances and algorithms developed in the scope of MFR. It focuses on deep learning techniques, architectures, and models utilized in the pipeline of MFR, including face detection, unmasking, restoration, and matching. Additionally, the benchmarking datasets and evaluation metrics are presented. Many challenges and insightful research directions are highlighted and discussed. This timely study would inspire more research works toward providing further improvements in the task of MFR.

3. The MFR Pipeline

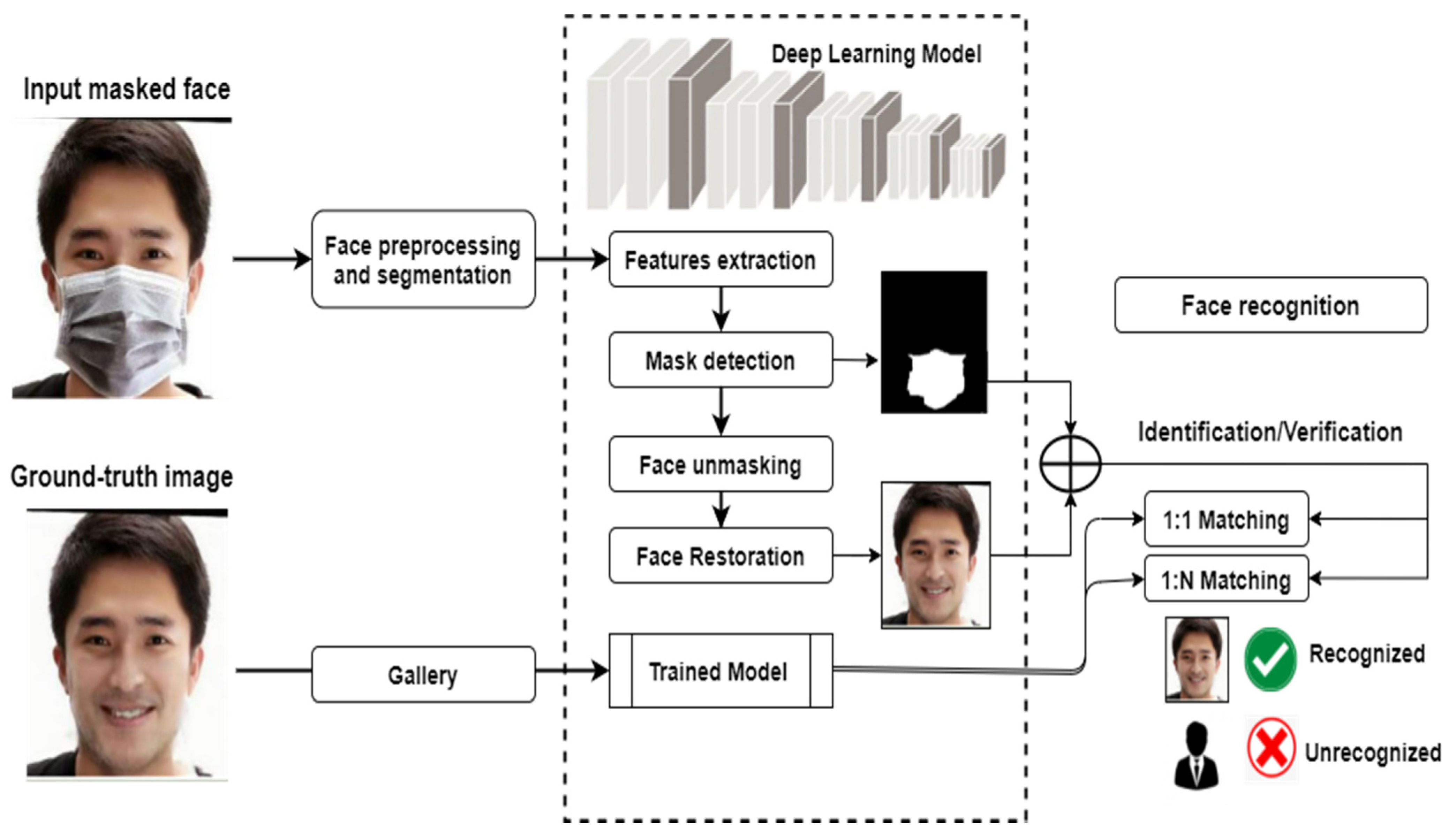

This section presents how MFR systems are typically developed through a set of sophisticated phases, as depicted in Figure 2. The generic methodology is mainly based on deep learning models that are widely adopted to learn discriminating features of masked faces. As can be observed from this pipeline, several crucial steps are typically put in place toward developing the final recognition system, as discussed in the following subsections.

Firstly, a collection of original masked images with corresponding ground-truth images is prepared. This usually includes splitting them into categorical directories for the purpose of model training, validation, and testing. This is followed by some preprocessing operations such as data augmentation and image segmentation. Then, a set of key facial features is extracted using one or more deep learning models usually pretrained on general-purpose images and fine-tuned on a new collection, i.e., masked faces. Such features should be discriminative enough to detect the face masks accurately. A procedure of face unmasking is then applied in order to restore the masked face and return an estimation of the original face. Finally, the predicted face is matched against the original ground-truth faces to decide whether or not a particular person is identified or verified.

3.1. Image Preprocessing

The performance of FR systems, with or without masks, is largely influenced by the nature of face images used in the training, validation, and testing stages. There are few publicly available datasets that include facial image pairs with and without mask objects to sufficiently train the MFR system. This strengthens the requirement of enriching the testbed with additional synthetic images with various types of face masks [

29,

30], as well as improving the generalization capability of deep learning models. Among the most popular methods used to synthesize the face masks are MaskTheFace [

31], MaskedFace-Net [

32], deep convolutional neural network (DCNN) [

33], CYCLE-GAN [

34], Identity Aware Mask GAN (IAMGAN) [

35], and starGAN [

36].

Images have also been widely pre-processed using data augmentation, by which many operations, such as image cropping, flipping, rotation, and alignment, can be applied to enrich the amount and variation of images. Other preprocessing operations are also applied to improve the quality of image representation, such as image re-scaling, segmentation, noise removal, or smoothing. Moreover, image adjustment can be performed to improve sharpness, and the variance of Laplacian [37] is one of the commonly adopted approaches for assessing it.
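The variance of the Laplacian can be sketched in a few lines. The example below is a minimal illustration in plain Python, assuming a grayscale image stored as a 2D list and a 4-neighbour Laplacian kernel evaluated on interior pixels only; the function name `laplacian_variance` and the toy images are hypothetical. A low variance indicates few edges, which is commonly read as a blurry image.

```python
def laplacian_variance(image):
    """Variance of the 4-neighbour Laplacian response over interior pixels.

    `image` is a 2D list of grayscale intensities (assumed at least 3x3).
    """
    height, width = len(image), len(image[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Discrete Laplacian: sum of the four neighbours minus 4x the centre.
            lap = (image[y - 1][x] + image[y + 1][x]
                   + image[y][x - 1] + image[y][x + 1]
                   - 4 * image[y][x])
            responses.append(lap)
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


# Toy inputs: a checkerboard has strong edges, a flat patch has none.
sharp = [[255 if (x + y) % 2 == 0 else 0 for x in range(6)] for y in range(6)]
flat = [[128] * 6 for _ in range(6)]

print(laplacian_variance(sharp) > laplacian_variance(flat))
```

In practice, a threshold on this score (chosen empirically per dataset) could be used to discard or re-process blurry face crops before training.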

To obtain better image representations, several methods segment the image into local parts instantly or semantically, then represent them by the ordered property of facial parts [

38] or a set of discriminative components [

39,

40]. However, some techniques feed the image to an existing tool to detect the facial landmarks [

41], while others represent the input still-image by a generic descriptor such as low-rank regularization [

42] and sparse representation [

43].

3.2. Deep Learning Models

Many well-known methods have been proposed and attempted to recognize human faces by hand-crafted local or global features, such as LBP [

44], SIFT [

45], and Gabor [

46]. However, these holistic approaches struggle to handle uncontrolled facial changes that deviate from their initial assumptions [

20]. Later, shallow image representations were introduced, e.g., learning-based dictionary descriptors [

47], to address the distinctiveness and compactness problems of previous methods. Although accuracy improvements were achieved, these shallow representations still tend to show low robustness in real-world applications and instability under facial appearance variations.

After 2010, deep learning methods were rapidly developed and utilized in the form of multiple deep layers for feature extraction and image transformation. Over time, they proved superior in learning multiple levels of facial representations that correspond to different levels of abstraction [

48], showing solid invariance to the face changes, including lighting, expression, pose, or disguise. Deep learning models are able to combine low-level and high-level abstraction to represent and recognize stable facial identity with strong distinctiveness. In the remaining part of this section, common deep learning models used for masked face recognition are introduced.

3.2.1. Convolutional Neural Networks

Convolutional neural network (CNN) is one of the most effective neural networks that has shown its superiority in a wide range of applications, including image classification, recognition, retrieval, and object detection. CNNs typically consist of cascaded layers to control the degree of shift, scale, and distortion [

49], which are input, convolutional, subsampling, fully connected, and output layers. They can efficiently learn various kinds of intra-class differences from training data, such as illumination, pose, facial expression, and age [

50]. CNN-based models have been widely utilized and trained on numerous large-scale face datasets [

48,

51,

52,

53,

54,

55].

One of the most popular pretrained architectures that has been successfully used in FR tasks is AlexNet [

56]. With the availability of integrated graphics processing units (GPUs), AlexNet decreased the training time and minimized the errors, even with large-scale datasets [

57]. VGG16 and VGG19 [

58] are also very common CNN-based architectures that have been utilized in various computer vision applications, including face recognition. The VGG-based models typically provide convolution-based features or representations. Despite the remarkable accuracy achieved, they suffer from long training time and high complexity [

59].

Over time, the task of image recognition became more complex and therefore called for deeper neural networks. However, as more layers are added, a network becomes more difficult to train, and a degradation in accuracy is usually encountered. To overcome this challenge, the residual network (ResNet) [60] was introduced, which uses shortcut (skip) connections so that extra layers can be stacked while achieving higher performance and accuracy. The added layers can learn complex features; however, the number of layers must be empirically determined to control any degradation in the model performance. MobileNet [61] is one of the most important lightweight deep neural networks; it depends on a streamlined architecture and is commonly used for FR tasks. Its architecture showed high performance across its hyperparameter settings, and its computations are fast [62].
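To make the idea behind residual networks concrete, the following minimal sketch shows a single residual block in plain Python. It relies on the well-known ResNet design in which the input is added back to the output of the stacked layers through a shortcut connection; fully connected layers replace convolutions here for brevity, and the function names and toy weights are hypothetical.

```python
def relu(vector):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in vector]


def linear(vector, weights, bias):
    """Dense layer: one output per weight row."""
    return [sum(w * v for w, v in zip(row, vector)) + b
            for row, b in zip(weights, bias)]


def residual_block(x, w1, b1, w2, b2):
    """Compute relu(F(x) + x), where F is two stacked layers.

    The `+ x` term is the shortcut connection: if the stacked layers
    learn nothing useful (F(x) = 0), the block still passes x through,
    which is what makes very deep stacks easier to train.
    """
    out = relu(linear(x, w1, b1))
    out = linear(out, w2, b2)
    return relu([o + xi for o, xi in zip(out, x)])


# With all-zero weights the block reduces to the identity for non-negative inputs.
zeros_w = [[0.0, 0.0], [0.0, 0.0]]
zeros_b = [0.0, 0.0]
print(residual_block([1.0, 2.0], zeros_w, zeros_b, zeros_w, zeros_b))
```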

Inception and its variations [63,64,65] are also popular CNN-based architectures; their novelty lies in building networks from modules or blocks that contain convolutional layers instead of simply stacking the layers. Xception [66] is an extreme version of Inception that replaces the Inception modules with depth-wise separable convolutions.
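The saving offered by depth-wise separable convolutions can be checked with simple arithmetic. The sketch below counts weights only (biases are ignored) and uses hypothetical layer sizes; a standard convolution needs k·k·C_in·C_out weights, whereas the separable version needs k·k·C_in for the depth-wise step plus C_in·C_out for the 1 × 1 point-wise step.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in a standard k x k convolution (bias ignored)."""
    return kernel * kernel * c_in * c_out


def separable_conv_params(kernel, c_in, c_out):
    """Weights in a depth-wise separable convolution (bias ignored):
    one k x k filter per input channel, then a 1 x 1 point-wise mixing step."""
    depthwise = kernel * kernel * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


# Hypothetical layer: 3 x 3 kernel, 64 input channels, 128 output channels.
standard = standard_conv_params(3, 64, 128)
separable = separable_conv_params(3, 64, 128)
print(standard, separable, round(standard / separable, 1))
```

For this hypothetical layer the separable form uses roughly one eighth of the weights, which is the main reason such layers are favoured in lightweight models such as MobileNet.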

Table 1 summarizes the main characteristics of the popular CNN-based models used in the domain of MFR.

3.2.2. Autoencoders

An autoencoder is a popular deep neural network that provides an unsupervised feature learning paradigm to efficiently encode and decode the data [67]. Due to its ability to learn robust features automatically from large amounts of unlabeled data [68], noticeable research efforts have been devoted to encoding the input data into a low-dimensional space with significant and discriminative representations, which is accomplished by an encoder. Then, a decoder reverses the process to regenerate the key features from the encoded representation, with backpropagation used at training time [69]. Autoencoders have been effectively utilized for the task of OFR, such as LSTM-autoencoders [70], double channel SSDA (DC-SSDA) [71], de-corrupt autoencoders [72], and 3D landmark-based variational autoencoder [73].

3.2.3. Generative Adversarial Networks

Generative adversarial networks (GANs) [

74] are used to automatically explore and learn the regular patterns from the input data without extensively annotated training data. A GAN consists of a pair of neural networks: a generator and a discriminator. The generator takes random values from a given distribution as input noise and produces new samples. The discriminator is a binary classifier that decides whether a given sample is real or generated (fake). GANs are called adversarial because of their training setting, in which the generator and the discriminator optimize opposing loss functions in a minimax game (i.e., a zero-sum game). It should also be stressed that common problems of FR such as face synthesis [

75], cross-age face recognition [

76], pose invariant face recognition [

77], and makeup-invariant face recognition [

78] have been addressed using GANs.
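The minimax game can be made concrete by evaluating the standard GAN value function, V(D, G) = E[log D(x)] + E[log(1 − D(G(z)))], on a few discriminator outputs. The sketch below is only an illustration: the probabilities are made-up numbers standing in for discriminator outputs on real and generated samples, and no networks are trained.

```python
import math


def gan_value(d_real, d_fake):
    """Empirical GAN value function.

    d_real: discriminator probabilities D(x) on real samples.
    d_fake: discriminator probabilities D(G(z)) on generated samples.
    The discriminator tries to maximise this value; the generator
    tries to minimise it.
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


# Hypothetical discriminator outputs.
confident = gan_value(d_real=[0.9, 0.95], d_fake=[0.1, 0.05])  # D wins
fooled = gan_value(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])       # D cannot tell

print(confident, fooled)
```

When the discriminator outputs 0.5 everywhere, the value equals −log 4, which is the theoretical equilibrium of the game where generated samples are indistinguishable from real ones.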

3.2.4. Deep Belief Network

A deep belief network (DBN) is a stack of multiple layers of hidden units, with connections between layers but not between units in the same layer. It typically includes a series of restricted Boltzmann machines (RBMs) or autoencoders, where each hidden sub-layer acts as a visible layer for the next hidden sub-layer and the last layer is a softmax layer used in the classification process. DBNs have also been utilized in the domain of FR [

79] and OFR [

80].

3.2.5. Deep Reinforcement Learning

Reinforcement learning learns from interaction with the environment; it emulates the procedure of human decision making by allowing the agent to choose actions based on its experience through trial and error [

81]. An agent is an entity that can perceive its environment through sensors and act upon that environment through actuators. The union of deep learning and reinforcement learning is effectively applied in deep FR such as attention-aware [

82] and margin-aware [

15] methods.

3.2.6. Specific MFR Deep Networks

Many deep learning architectures have been specifically developed or tuned for the task of FR or OFR, and they noticeably contributed to the performance improvement. FaceNet [

83] maps images to Euclidean space via deep neural networks, which builds face embeddings according to the triplet loss. When the images belong to the same person, the distance between them will be small in the Euclidean space while the distance will be large if those images belong to different people. This feature enables FaceNet to work on different tasks such as face detection, recognition, and clustering [

84]. SphereFace [

8] is another popular FR system that offers a geometric interpretation and enables CNNs to learn angularly discriminative features, which makes it efficient in face representation learning. ArcFace [7] is also an effective FR network based on similarity learning that replaces softmax loss with an angular margin loss. It compares face embeddings using cosine similarity to find the closest match.
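The triplet loss used to build FaceNet embeddings can be sketched as follows. This is a minimal illustration for a single triplet, assuming squared Euclidean distance and a margin of 0.2; the toy 2D embeddings are hypothetical, and a real system would average the loss over many mined triplets.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two embeddings."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Penalise the triplet unless the anchor is closer to the positive
    (same person) than to the negative (different person) by `margin`."""
    return max(0.0,
               squared_distance(anchor, positive)
               - squared_distance(anchor, negative)
               + margin)


anchor = [0.0, 0.0]
same_person = [0.1, 0.0]
far_impostor = [1.0, 0.0]    # already well separated: no penalty
near_impostor = [0.2, 0.0]   # too close to the anchor: positive loss

print(triplet_loss(anchor, same_person, far_impostor))
print(triplet_loss(anchor, same_person, near_impostor))
```

Minimising this loss pulls images of the same person together and pushes different people apart, which is the property described above.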

Deng et al. [

85] have also proposed MFCosface as an MFR algorithm on the basis of the large margin cosine loss. It overcomes the problem of low recognition rates with mask occlusions by detecting the key facial features of masked faces, and it optimizes the representations of facial features by adding an attention mechanism to the model. VGGFace [

48] is a face recognition system that includes a deep convolution neural network for recognition based on VGG-Very-Deep-16 CNN architecture. It also includes a face detector and localizer based on a cascade deformable parts model. DeepID [

86] was introduced to learn discriminative deep face representation through classifying large-scale face images into a large number of identities, i.e., face identification. However, the learned face representations are challenged by the significant intrapersonal variations that have been reduced by many DeepID variants, such as joint face identification-verification presented in DeepID + 2 [

87].
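The margin-based losses mentioned in this subsection differ mainly in how they modify the logit of the true class before the softmax. The sketch below contrasts the large margin cosine loss (as in CosFace, on which MFCosface builds) with the additive angular margin of ArcFace. The scale s = 64 and the margins are illustrative values, the function names are hypothetical, and the special handling that practical ArcFace implementations apply when θ + m exceeds π is omitted.

```python
import math


def plain_logit(cos_theta, s=64.0):
    """Normalised softmax logit for the true class: s * cos(theta)."""
    return s * cos_theta


def cosine_margin_logit(cos_theta, s=64.0, m=0.35):
    """Large margin cosine loss: subtract the margin in cosine space."""
    return s * (cos_theta - m)


def angular_margin_logit(cos_theta, s=64.0, m=0.5):
    """Additive angular margin: add the margin to the angle itself."""
    theta = math.acos(max(-1.0, min(1.0, cos_theta)))
    return s * math.cos(theta + m)


# cos_theta is the cosine between an embedding and its class centre.
for cos_theta in (0.9, 0.5):
    print(cos_theta,
          plain_logit(cos_theta),
          cosine_margin_logit(cos_theta),
          angular_margin_logit(cos_theta))
```

Both variants lower the true-class logit, so the network must produce embeddings that sit closer to their class centre to reach the same loss, which tightens each identity cluster.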

3.3. Feature Extraction

Feature extraction is a crucial step in the face recognition pipeline that aims at extracting a set of features discriminative enough to represent and learn the key facial attributes such as eyes, mouth, nose, and texture. With the existence of face occlusions and masks, this process becomes more complicated, and the existing face recognition systems need to be adapted to extract representative yet robust facial features. In the context of masked face recognition, the feature extraction approaches can be divided into shallow and deep representation methods.

Shallow feature extraction is a traditional method that explicitly formulates a set of handcrafted features with low learning or optimization mechanisms. Some methods use the handcrafted low-level features to find the occluded local parts and dismiss them from the recognition [

88]. LBPs [

44], SIFT [

45], HOG [

89], and codebooks [

90] are among the popular descriptors that represent holistic learning, local features, and shallow learning approaches. In the non-occluded face recognition tasks, they have achieved a noticeable accuracy and robustness against many face changes such as illumination, affine, rotation, scale, and translation. However, the performance of shallow features has shown a degradation while dealing with occluded faces, including face masks, which have been largely outperformed by the deep representations obtained by deep learning models.

Many methods were created and evaluated to extract features from faces using deep learning. Li et al. [

91] assumed that the features of masked faces often include mask region-related information that should be modeled individually and learned two centers for each class instead of only one, i.e., one center for the full-face images and one for the masked face images. Song et al. [

92] introduced a multi-stage mask learning strategy that is mainly based on CNN, by which they aimed at finding and dismissing the corrupted features from the recognition. Many other attention-aware and context-aware methods have extracted the image features using an additional subnet to acquire the important facial regions [

93,

94,

95].

Graph image representations with deep graph convolutional networks (GCN) have also been utilized in the domain of masked face detection, reconstruction, and recognition [

96,

97,

98]. GCNs have shown high capabilities in learning and handling face images using spatial or spectral filters that are built for a shared or fixed graph structure. However, learning the graph representations is commonly restricted with the number of GCN layers and the unfavorable computational complexity. The 3D space features have been also investigated for the task of occluded or masked 3D face recognition [

34,

99,

100]. The 3D face recognition methods mimic the real vision and understanding of the human face features, and therefore they can help to improve the performance of the existing 2D recognition systems. The 3D facial features are robust against many face changes such as illumination variations, facial expressions, and face directions.

3.4. Mask Detection

Recently, face masks have become one of the common objects that occlude the facial parts, coming in different styles, sizes, textures, and colors. This strengthens the requirement of training the deep learning models to accurately detect the masks. Most of the existing detection methods, usually introduced for object detection, are tuned and investigated in the task of mask detection. Regions with CNN features (R-CNN) [

101] has been widely adopted in the domain of object detection, in which a deep ConvNet is utilized to classify object proposals. In the context of occluded faces, R-CNN applies a selective search algorithm to extract thousands of candidate facial regions and feeds them to a CNN, which generates a feature vector for each region. Subsequently, a support vector machine (SVM) classifies the presence of an object within each candidate region from the extracted features. Fast R-CNN [102] and Faster R-CNN [103] were also introduced to enhance the performance by transforming the R-CNN architecture. However, these methods have notable drawbacks; for example, the training process is a multi-stage pipeline and therefore expensive in terms of space and time. Moreover, R-CNN is slow because it performs a ConvNet forward pass for each object proposal without sharing computation. Zhang et al. [104] proposed a context-attention R-CNN as a framework for detecting the wearing of face masks. This framework is used to reduce the intra-class distance and expand the inter-class distance by extracting distinguishing features.

Consequently, more research efforts have been concentrated on using the segmentation-based deep networks for mask detection. Fully convolutional neural network (FCN) [

105] is a semantic segmentation architecture that is mainly built as a CNN-based autoencoder without any dense layers. It is derived from a popular classification network by replacing the fully connected layers with 1 × 1 convolutions. U-Net [106] was first introduced for biomedical image segmentation but has been widely applied in many computer vision applications, including face detection [107,108]. It includes an encoder that captures the image context using a series of convolutional and max-pooling layers, while a decoder up-samples the encoded information using transposed convolutions. Then, feature maps from the encoder are concatenated to the feature maps of the decoder. This helps in better learning of contextual information (the relationships between pixels of the image).
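The replacement of fully connected layers by 1 × 1 convolutions can be illustrated with a small sketch. A 1 × 1 convolution applies the same dense layer to the channel vector of every pixel, so the output keeps the spatial layout of the input and the network accepts images of any size. The code is plain Python with hypothetical weights and a toy feature map stored as `feature_map[y][x] = [channels]`.

```python
def dense(features, weights, bias):
    """Fully connected layer applied to one feature vector."""
    return [sum(w * f for w, f in zip(row, features)) + b
            for row, b in zip(weights, bias)]


def conv1x1(feature_map, weights, bias):
    """1 x 1 convolution: the same dense layer applied at every pixel,
    so the height and width of the map are preserved."""
    return [[dense(pixel, weights, bias) for pixel in row]
            for row in feature_map]


# Toy 2 x 3 feature map with 2 channels per pixel.
feature_map = [[[2.0, 3.0], [0.0, 1.0], [1.0, 1.0]],
               [[4.0, 0.0], [1.0, 2.0], [0.0, 0.0]]]

# Hypothetical weights mapping 2 input channels to 3 output channels.
weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
bias = [0.0, 0.0, 0.0]

scores = conv1x1(feature_map, weights, bias)
print(len(scores), len(scores[0]), scores[0][0])
```

In a segmentation setting, the output channels would be per-pixel class scores, for example mask versus non-mask, rather than a single label for the whole image.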

Other effective methods for MFR or OFR have also been proposed in the literature. Wang et al. [

109] introduced a one-shot-based face detector called face attention network (FAN), which utilizes the feature pyramid network to address the occlusion and false positive issue for the faces with different scales. Ge et al. [

110] proposed an LLE-CNN to detect masked faces through combining pre-trained CNNs to extract candidate facial regions and represent them with high dimensional descriptors. Then, a locally linear embedding module forms the facial descriptors into vectors of weights to recover any missing facial cues in the masked regions. Finally, the classification and regression tasks employ the weighted vectors as input to identify the real facial regions. Lin et al. [

111] introduced the modified LeNet (MLeNet) by increasing the number of units in the output layer and the number of feature maps while using a smaller filter size, which in turn reduces overfitting and improves masked face detection performance with a small number of training images. Alguzo et al. [

98] presented multi-graph GCN-based features to detect face masks using multiple filters. They used the embedded geometric information calculated on the basis of distance and correlation graphs to extract and learn the key facial features. Negi et al. [

112] detected face masks on the Simulated Masked Face Dataset (SMFD) by proposing CNN- and VGG16-based deep learning models and combining AI-based precautionary measures.
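The locally linear embedding step mentioned above for LLE-CNN can be illustrated by the generic LLE weight computation, in which a descriptor is expressed as a weighted combination of its nearest neighbors with weights that sum to one. The sketch below is not the LLE-CNN implementation of Ge et al.; the function name `lle_weights`, the regularization value, and the toy two-dimensional descriptors are assumptions made for illustration only.

```python
import numpy as np


def lle_weights(x, neighbors, reg=1e-3):
    """Weights that best reconstruct x from its neighbors, constrained to sum to 1."""
    x = np.asarray(x, dtype=float)
    neighbors = np.asarray(neighbors, dtype=float)
    k = neighbors.shape[0]
    z = neighbors - x                       # neighbors centered on x, shape (k, d)
    gram = z @ z.T                          # local Gram matrix, shape (k, k)
    # Regularize so the linear system stays solvable when gram is singular.
    gram = gram + reg * max(np.trace(gram), 1e-12) * np.eye(k)
    w = np.linalg.solve(gram, np.ones(k))
    return w / w.sum()


# Toy example: the query descriptor lies midway between two reference descriptors.
neighbors = np.array([[0.0, 0.0], [2.0, 0.0]])
masked_descriptor = np.array([1.0, 0.0])

w = lle_weights(masked_descriptor, neighbors)
recovered = w @ neighbors                   # descriptor rebuilt from its neighbors
```

In the masked-face setting, the neighbors would come from a pool of reference descriptors, and the weight vector rather than the raw descriptor would be passed to the later stages.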

Local features fusion-based deep networks have also been applied to a nonlinear space for masked face detection, as introduced by Peng et al. [

113]. Many other detection-based works [

19,

114,

115] have utilized the conventional local and global facial features based on the key face parts, e.g., nose and mouth.

The concept of face mask assistant (FMA) has recently been introduced by Chen et al. [

116] as a face detection method based on a mobile microscope. They obtained micro-photos of the face mask, then used the gray-level co-occurrence matrix (GLCM) to extract texture features, of which contrast, correlation, energy, and homogeneity were chosen as features. Fan et al. [

117] proposed the single-shot light-weight face mask detector (SL-FMDet), a deep learning-based detector designed to meet the lower computational requirements of embedded systems. It worked effectively thanks to its low hardware requirements, but its lightweight backbone reduced the feature extraction capability, which was a major obstacle. To solve this problem, the authors extracted rich context information and focused on the crucial face mask-related areas to learn more discriminating features for faces with and without masks. Ieamsaard et al. [

118] studied and developed a deep learning model for face mask detection based on YOLOv5, trained with five different numbers of epochs. YOLOv5 was used with a CNN to verify whether a face mask is present and whether it is placed correctly on the face.
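The four texture statistics named above for the face mask assistant (contrast, correlation, energy, and homogeneity) are the standard descriptors derived from a GLCM, read here as the gray-level co-occurrence matrix. The following NumPy sketch shows how they can be computed for a single pixel offset. It is an illustration under assumptions, not the pipeline of Chen et al.: the 4 × 4 toy image, the horizontal offset, and the particular definitions of energy (sum of squared entries) and homogeneity (weights 1/(1 + (i − j)²)) are choices made here, and other conventions exist.

```python
import numpy as np


def glcm(image, levels, offset=(0, 1)):
    """Normalized gray-level co-occurrence matrix for one pixel offset."""
    dr, dc = offset
    rows, cols = image.shape
    counts = np.zeros((levels, levels), dtype=float)
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < rows and 0 <= c2 < cols:
                counts[image[r, c], image[r2, c2]] += 1
    return counts / counts.sum()


def texture_features(p):
    """Contrast, correlation, energy, and homogeneity of a normalized GLCM."""
    i, j = np.indices(p.shape)
    mu_i, mu_j = (i * p).sum(), (j * p).sum()
    sd_i = np.sqrt((((i - mu_i) ** 2) * p).sum())
    sd_j = np.sqrt((((j - mu_j) ** 2) * p).sum())
    return {
        "contrast": float((((i - j) ** 2) * p).sum()),
        "correlation": float((((i - mu_i) * (j - mu_j)) * p).sum() / (sd_i * sd_j)),
        "energy": float((p ** 2).sum()),              # sum of squared entries
        "homogeneity": float((p / (1.0 + (i - j) ** 2)).sum()),
    }


# Toy 4-level image standing in for a quantized micro-photo patch.
patch = np.array([[0, 0, 1, 1],
                  [0, 0, 1, 1],
                  [0, 2, 2, 2],
                  [2, 2, 3, 3]])

features = texture_features(glcm(patch, levels=4))
```

In practice, the statistics are usually averaged over several offsets and directions before being passed to a classifier.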

3.5. Face Unmasking

Various approaches have been adopted in the literature for object removal, where the object in the context of this study is the mask. Several common methods are grouped here into learning-based and non-learning-based object removal algorithms.

For learning-based approaches, Shetty et al. [

119] proposed a GAN-based model that receives an input image, then removes the target object automatically. Li et al. [

120] and Iizuka et al. [

121] introduced two different models to learn a global coherency and complete the corrupted region by removing the target object and reconstructing the damaged part using a GAN setup. Khan et al. [

122] used a coarse-to-fine GAN-based approach to remove the objects from facial images.

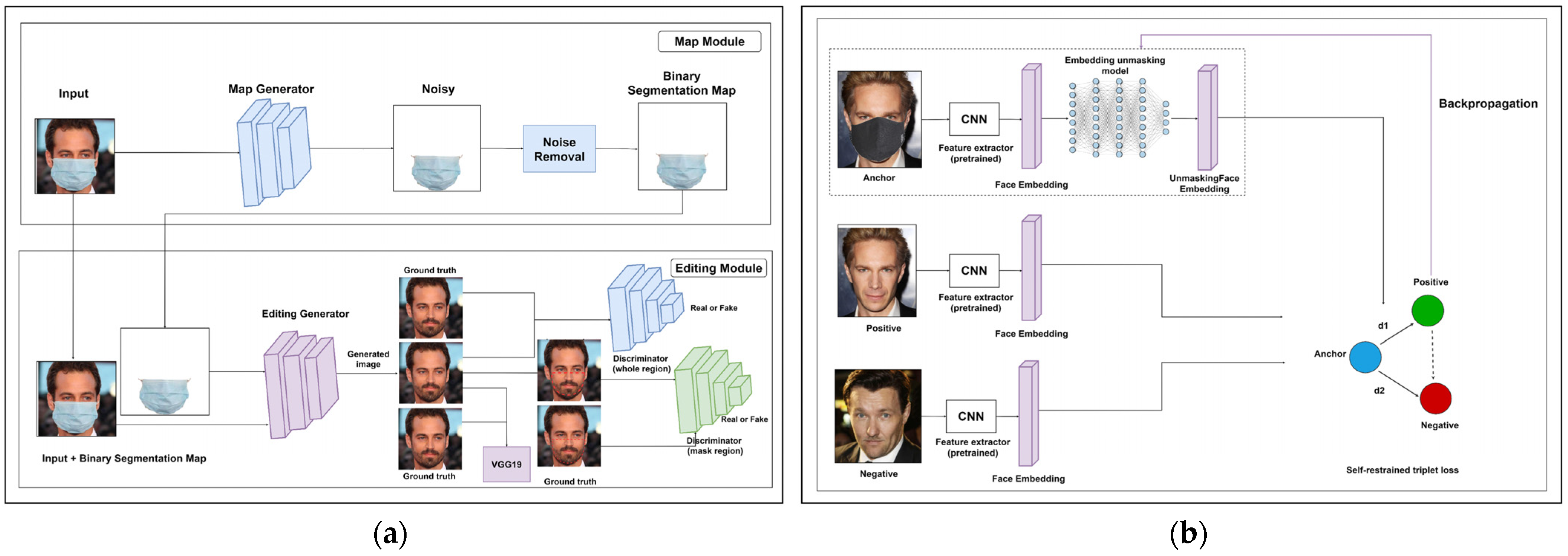

For mask removal, Boutros et al. [

123] presented an embedding unmasking model (EUM) that takes a feature embedding extracted from the masked face as input. It generates a new feature embedding similar to an embedding of an unmasked face of the same identity with unique properties. Din et al. [

29,

30] used a GAN setup with two discriminators to automatically remove the face mask.

For non-learning approaches, Criminisi et al. [

124] introduced a model that removes the undesired part of an image and synthesizes a new region to fill the gap so that it matches the remainder of the image. Wang [

125] proposed a regularized factor that adjusts the curve of the patch priority function in order to compute the filling order. Park et al. [

126] used principal component analysis (PCA) reconstruction and recursive error compensation to remove eyeglasses from facial images. Hays et al. [

127] presented an image completion algorithm that searches a large database of images for similar content and embeds it into the corrupted pixels of the input sample.

3.6. Face Restoration

After unmasking the face, any missing parts should be estimated and restored in order to conduct the identity matching process to make the recognition decision, i.e., recognized or unrecognized identity.

One of the pioneering works in image reconstruction is sparse representation-based classification (SRC) [

128] for robust OFR. Several variants of SRC were introduced for specific problems in FR, such as the extended SRC (ESRC) for under-sampled FR [

129] and the group sparse coding (GSC) [

130] for increasing the discriminative ability of face reconstruction. Many other methods have been proposed to reconstruct the missing parts of occluded faces. Yuan et al. [

131] used support vector discrimination dictionary and Gabor occlusion dictionary-based SRC (SVGSRC) for OFR. Sparse representation and particle filtering were combined and investigated by Li et al. [

132]. Cen et al. [

133] also presented a classification scheme based on a depth dictionary representation for robust OFR. A 2D image matrix-based error model named nuclear norm-based matrix regression (NMR) for OFR was also discussed in [

134]. A sparse regularized NMR method, which imposes an L1-norm constraint instead of an L2-norm constraint on the representation in the NMR framework, was introduced in [

135]. However, the image reconstruction methods showed several well-known drawbacks, such as the need for an overcomplete dictionary, the complexity caused by a large increase in gallery images, and limited generalization capability.
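The idea behind SRC can be sketched as follows: a probe is coded as a sparse combination of all gallery samples, and it is assigned to the class whose samples alone reconstruct it with the smallest residual. The code below is a minimal illustration rather than the method of any cited work. The L1 solver (plain ISTA), the regularization weight, and the synthetic 20-dimensional "faces" are assumptions, and the occlusion dictionary used in robust variants is omitted.

```python
import numpy as np


def soft_threshold(v, t):
    """Proximal operator of the L1 norm."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)


def sparse_code(D, y, lam=0.01, n_iter=500):
    """ISTA for min_x 0.5 * ||y - D x||^2 + lam * ||x||_1."""
    step = 1.0 / (np.linalg.norm(D, 2) ** 2)   # 1 / Lipschitz constant of the gradient
    x = np.zeros(D.shape[1])
    for _ in range(n_iter):
        grad = D.T @ (D @ x - y)
        x = soft_threshold(x - step * grad, lam * step)
    return x


def src_classify(D, labels, y, lam=0.01):
    """Return the class with the smallest class-wise reconstruction residual."""
    x = sparse_code(D, y, lam)
    residuals = {}
    for c in np.unique(labels):
        x_c = np.where(labels == c, x, 0.0)    # keep only the coefficients of class c
        residuals[int(c)] = float(np.linalg.norm(y - D @ x_c))
    return min(residuals, key=residuals.get), residuals


# Synthetic gallery: 3 identities, 5 unit-norm samples each (columns of D).
rng = np.random.default_rng(0)
dim, n_classes, per_class = 20, 3, 5
atoms, labels = [], []
for c in range(n_classes):
    for _ in range(per_class):
        v = np.eye(dim)[c] + 0.05 * rng.standard_normal(dim)
        atoms.append(v / np.linalg.norm(v))
        labels.append(c)
D = np.stack(atoms, axis=1)
labels = np.array(labels)

# Probe drawn from identity 1.
probe = np.eye(dim)[1] + 0.05 * rng.standard_normal(dim)
probe /= np.linalg.norm(probe)

predicted, residuals = src_classify(D, labels, probe)
```

The sketch also makes the drawback noted above visible: the dictionary `D` grows with every gallery image, so the cost of coding each probe increases with the gallery size.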

Deep learning methods have addressed such challenges in order to recover the missing part in the facial image. In the last few years, GAN-based methods [

120,

121,

136] have been utilized with global and local discriminators to handle the task of face reconstruction. Yeh et al. [

137] used semantic image inpainting to compute the missing pixels and regions. Yet, such methods cannot preserve facial identity. Consequently, Zhao et al. [

138] introduced a model to retrieve the missing pixel parts under various head poses while trying to preserve the identity on the basis of an identity loss and a pose discriminator in network training. Duan et al. [

139] proposed an end-to-end BoostGAN network that consists of three parts: multi-occlusion frontal-view generator, multi-input boosting network, and multi-input discriminator. This approach is equipped with a coarse-to-fine face de-occlusion and frontalization network ensemble. Yu et al. [

140] proposed a coarse-to-fine GAN-based approach with a novel contextual attention module for image inpainting. Din et al. [

29,

30] used GAN-based image inpainting for image completion through an image-to-image translation approach. Duan et al. [

141] used GANs to handle the face frontalization and face completion tasks simultaneously. They introduced a two-stage generative adversarial network (TSGAN) and proposed an attention model that is based on occluded masks. Moreover, Luo et al. [

142] introduced the GAN-based EyesGAN framework, which is mainly used to reconstruct the face from the eyes.

Ma et al. [

143] presented a face completion method, called learning and preserving face completion network (LP-FCN), to parse face images and extract face identity-preserving (FIP) features concurrently. This method is mainly based on a CNN, which is trained to transform the FIP features. These features are then fused and fed into a decoder that generates the complete image.

Figure 3 shows two approaches that have been recently proposed to unmask faces and restore the missing facial parts.

3.7. Face Matching and Recognition

Face matching by deep features for FR and MFR can be considered as a problem of face verification or identification. To accomplish this task, a set of images of identified subjects is initially fed to the system during the training and validation phase. In the testing phase, a new unseen subject is presented to the system to make a recognition decision. For a set of deep features or descriptors to be learnt effectively, an adequate loss function should be applied. Two matching approaches are commonly adopted by the MFR community: 1-to-1 and 1-to-N (1-to-many). In both approaches, common measures such as the Euclidean (L2) distance and cosine similarity are usually used. 1-to-1 similarity matching is typically used in face verification, which compares the test image with the ground-truth image collection to determine whether the two images refer to the same person, whereas 1-to-N similarity matching is employed in face identification, which determines the identity of a specific masked face.
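The two matching modes can be sketched with a few lines of NumPy. The sketch assumes that face embeddings have already been extracted by some network; the three-dimensional toy embeddings, the identity labels, and the similarity threshold of 0.5 are hypothetical and would have to be chosen on validation data in a real system.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale vectors (along the last axis) to unit length."""
    norm = np.linalg.norm(x, axis=-1, keepdims=True)
    return x / np.maximum(norm, eps)


def cosine_similarity(a, b):
    return float(np.dot(l2_normalize(a), l2_normalize(b)))


def verify(probe, reference, threshold=0.5):
    """1-to-1 matching: do the two embeddings belong to the same person?"""
    return cosine_similarity(probe, reference) >= threshold


def identify(probe, gallery, labels, threshold=0.5):
    """1-to-N matching: best gallery identity, or None if nothing is close enough."""
    sims = l2_normalize(gallery) @ l2_normalize(probe)
    best = int(np.argmax(sims))
    return labels[best] if sims[best] >= threshold else None


# Toy gallery of three enrolled identities (hypothetical embeddings).
gallery = np.array([[1.0, 0.0, 0.0],
                    [0.0, 1.0, 0.0],
                    [0.0, 0.0, 1.0]])
labels = ["id_a", "id_b", "id_c"]
probe = np.array([0.1, 0.9, 0.1])

same_person = verify(probe, gallery[1])
different_person = verify(probe, gallery[0])
identity = identify(probe, gallery, labels)
unknown = identify(np.array([-1.0, -1.0, -1.0]), gallery, labels)

# For unit-length embeddings the two measures are equivalent up to a monotone map:
# ||a - b||^2 = 2 - 2 * cos(a, b).
a, b = l2_normalize(probe), l2_normalize(gallery[1])
l2_squared = float(np.sum((a - b) ** 2))
```

Because of the last identity, thresholding the L2 distance between normalized embeddings and thresholding their cosine similarity lead to the same decisions.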

Many methods have been introduced to enhance the discrimination level of deep features with the aim of making the process of face matching more accurate and effective, e.g., metric learning [

144] and sparse representations [

145]. Deep learning models for matching face identities have widely used softmax loss-based and triplet loss-based models. Softmax loss-based models rely on training a multi-class classifier, with one class for each identity in the training dataset, using a softmax function [

92,

93]. On the other hand, triplet loss-based models [

83] learn the embedding directly by comparing the outputs of different inputs so as to minimize the intra-class distance and maximize the inter-class distance. However, the performance of softmax loss-based and triplet loss-based models suffers from facemask occlusions [

146,

147].
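The triplet loss described above can be written compactly: for an anchor, a positive sample of the same identity, and a negative sample of a different identity, the loss is the hinge of the squared anchor-positive distance minus the squared anchor-negative distance plus a margin. The NumPy sketch below evaluates this loss on hand-made two-dimensional embeddings; the margin value and the data are illustrative assumptions, and triplet mining and the embedding network itself are omitted.

```python
import numpy as np


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Mean hinge loss over a batch of (anchor, positive, negative) embeddings."""
    d_ap = np.sum((anchor - positive) ** 2, axis=-1)   # intra-class distance
    d_an = np.sum((anchor - negative) ** 2, axis=-1)   # inter-class distance
    return float(np.maximum(d_ap - d_an + margin, 0.0).mean())


anchor = np.array([[0.0, 0.0], [0.0, 0.0]])
positive = np.array([[0.1, 0.0], [1.0, 0.0]])
negative = np.array([[1.0, 0.0], [0.1, 0.0]])

# First triplet: the positive is already much closer than the negative, loss 0.
# Second triplet: the positive is farther than the negative, loss 1 - 0.01 + 0.2.
loss = triplet_loss(anchor, positive, negative)
```

Only triplets that violate the margin contribute to the loss, which is why training pushes same-identity embeddings together and different-identity embeddings apart.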

Recently, numerous research works have also been presented in the literature to solve MFR tasks. For instance, effective approaches have shown high FR performance either by using GAN-based methods to unmask faces before feeding them to the face recognition model [

29,

91], by extracting features only from the upper part of the face [

147], or by training the face recognition network with a combination of masked and unmasked faces [

31,

35]. Anwar et al. [

31] combined the VGGFace2 dataset [

55] with augmented masked faces and trained the model using the original pipeline defined in FaceNet [

83], which in turn enabled the model to distinguish whether a face is wearing a mask on the basis of the features of the upper half of the face. Montero et al. [

148] introduced a full-training pipeline of ArcFace-based face recognition models for MFR. Geng et al. [

35] identified two centers for each identity that correspond to the full-face images and the masked face images, respectively, using the domain constrained ranking (DCR).

4. Standard Datasets

This section introduces the common benchmarking datasets used in the literature to evaluate MFR methods. The Synthetic CelebFaces Attributes (Synthetic CelebA) [

29] dataset consists of 10,000 synthetic images available publicly. CelebA [

149] is a large-scale face attributes dataset with more than 200,000 celebrity images. The synthetic dataset was built using 50 types of synthetic masks of various sizes, shapes, colors, and structures. To build the synthetic samples, the faces in all images were aligned using eye coordinates, and then the mask was placed randomly on the face using Adobe Photoshop.

The Synthetic Face-Occluded Dataset [

30] was created using the publicly available CelebA and CelebA-HQ [

150] datasets. CelebA-HQ is a large-scale face attribute dataset with more than 30,000 celebrity images. Each face image is cropped and roughly aligned by eye position. The occlusions were synthesized with five popular non-face objects: hands, mask, sunglasses, eyeglasses, and microphone. More than 40 different kinds of each object were used, varying in size, shape, color, and structure. Moreover, the non-face objects were placed randomly on the faces.

The Masked Face Detection Dataset (MFDD), Real-World Masked Face Recognition Dataset (RMFRD), and Simulated Masked Face Recognition Dataset (SMFRD) were also introduced in [

17]. MFDD includes 24,771 images of masked faces to enable the MFR model to detect the masked faces accurately. RMFRD includes 5000 images of 525 people with masks, and 90,000 images of the same people without masks. This dataset is the largest dataset available for MFR. To make the dataset more diverse, researchers introduced SMFRD, which consists of 500,000 images of synthetically masked faces of 10,000 people on the Internet. The RMFRD dataset was used in [

151], where unreasonable face images resulting from incorrect correspondence were manually removed. Furthermore, the correct face regions were cropped with the help of semi-automatic annotation tools, such as LabelImg and LabelMe.

The Masked Face Segmentation and Recognition (MFSR) dataset [

35] consists of two parts. The first part includes 9742 images of masked faces collected from the Internet, with manually labeled segmentation annotations of the masked regions. The second part includes 11,615 images of 1004 identities, of which 704 were collected in the real world and the rest were collected from the Internet; each identity has at least one masked and one unmasked face image. Celebrities in Frontal-Profile in the Wild (CFP) [

152] includes faces of 500 celebrities in frontal and profile views. Two verification protocols, each with 7000 comparisons, are presented: one compares only frontal faces (FF), and the other compares frontal and profile faces (FP).

AgeDB dataset [

153] is the first manually gathered dataset in the wild. It includes 16,488 images of 568 celebrities of various ages. It also contains four verification protocols in which the compared faces have an age difference of 5, 10, 20, and 30 years. The authors of [

154] created a new dataset by aligning their data with a 3D Morphable Model. It consists of 3D scans of 100 females and 100 males. The authors of [

155] prepared 200 images, 100 of masked faces and 100 of unmasked faces, classified them, and then evaluated their model on two masked face recognition datasets.

The MS1MV2 [

7] dataset is a refined version of the MS-Celeb1M dataset [

52]. MS1MV2 includes 5.8 million images of 85,000 identities. Boutros et al. [

123] produced a masked version of MS1MV2, denoted MS1MV2-Masked. The mask type and color were randomly chosen for each image to cover more variations in the training dataset. A subset of 5000 images was randomly chosen from MS1MV2-Masked to validate the model during the training phase. For the evaluation phase, the authors used two real masked face datasets: Masked Faces in Real World for Face Recognition (MFR2) [

31] and the Extended Masked Face Recognition (EMFR) datasets [

146]. MFR2 includes 269 images of 53 identities taken from the Internet. Hence, the images in the MFR2 dataset can be considered to be captured under in-the-wild conditions. The database includes images of masked and unmasked faces with an average of five images per identity.

The EMFR dataset was gathered from 48 participants using their webcams in three different sessions: session 1 (reference), session 2, and session 3 (probes). The sessions were captured on three distinct days. The baseline reference (BLR) includes 480 images from the first video of the first session (day). The mask reference (MR) holds 960 images from the second and third videos of the first session. The baseline probe (BLP) includes 960 images from the first video of the second and third sessions and holds face images with no mask. The mask probe (MP) includes 1920 images from the second and third videos of the second and third sessions.

The Labeled Faces in the Wild (LFW) dataset [

156] includes 13,233 images of 5749 people. For training, Golwalkar et al. [

157] used masked faces of 13 people and 204 images. For testing, they used the same face images but with 25 images of each person. Moreover, the LFW-SM variant dataset was introduced in [

31], which extends the LFW dataset with simulated masks, and it contains 13,233 images of 5749 people. Many MFR methods also used the VGGFace2 [

55] dataset for training, which consists of 3 million images of 9131 people with nearly 362 images per person. The Masked Faces in the Wild (MFW) mini dataset [

37] was created by gathering 3000 images of 300 people from the Internet, containing five images of masked faces and five of unmasked faces for every person. The Masked Face Database (MFD) [

158] includes 45 subjects with 990 images of females and males.

In [

159], two datasets for MFR were introduced: Masked Face Verification (MFV) that consists of 400 pairs for 200 identities, and Masked Face Identification (MFI) that consists of 4916 images of 669 identities. The Oulu-CASIA NIR-VIS dataset [

160] includes 80 identities with six expressions per identity and consists of 48 NIR and 48 VIS images per identity. CASIA NIR-VIS 2.0 [

161] contains 17,580 face images with 725 identities, and the BUAA-VisNir dataset [

162] consists of images of 150 identities, including nine NIR and nine VIS images for each identity.

VGG-Face2_m [

85] is a new version of the VGG-Face dataset. It contains over 3.3 million images of 9131 identities. CASIA-FaceV5_m [

85] is a refined version of CASIA-FaceV5, which contains 2500 images of 500 Asian people, with five images for each person.

The Webface dataset [

51] is collected from IMDb and consists of 500,000 images of 10,000 identities. The AR dataset [

163] contains 4000 images of 126 identities. It is widely used in various OFR tasks. The Extended Yale B dataset [

164] contains 16,128 images of 28 identities under nine poses and 64 illumination conditions. It is widely used in face recognition tasks.

Table 2 shows the main characteristics of the datasets used in the masked face recognition task.

Figure 4 also shows some sample images taken from common benchmarking MFR datasets.