Data-Driven Modelling of Human-Human Co-Manipulation Using Force and Muscle Surface Electromyogram Activities

Abstract

1. Introduction

2. Literature Review

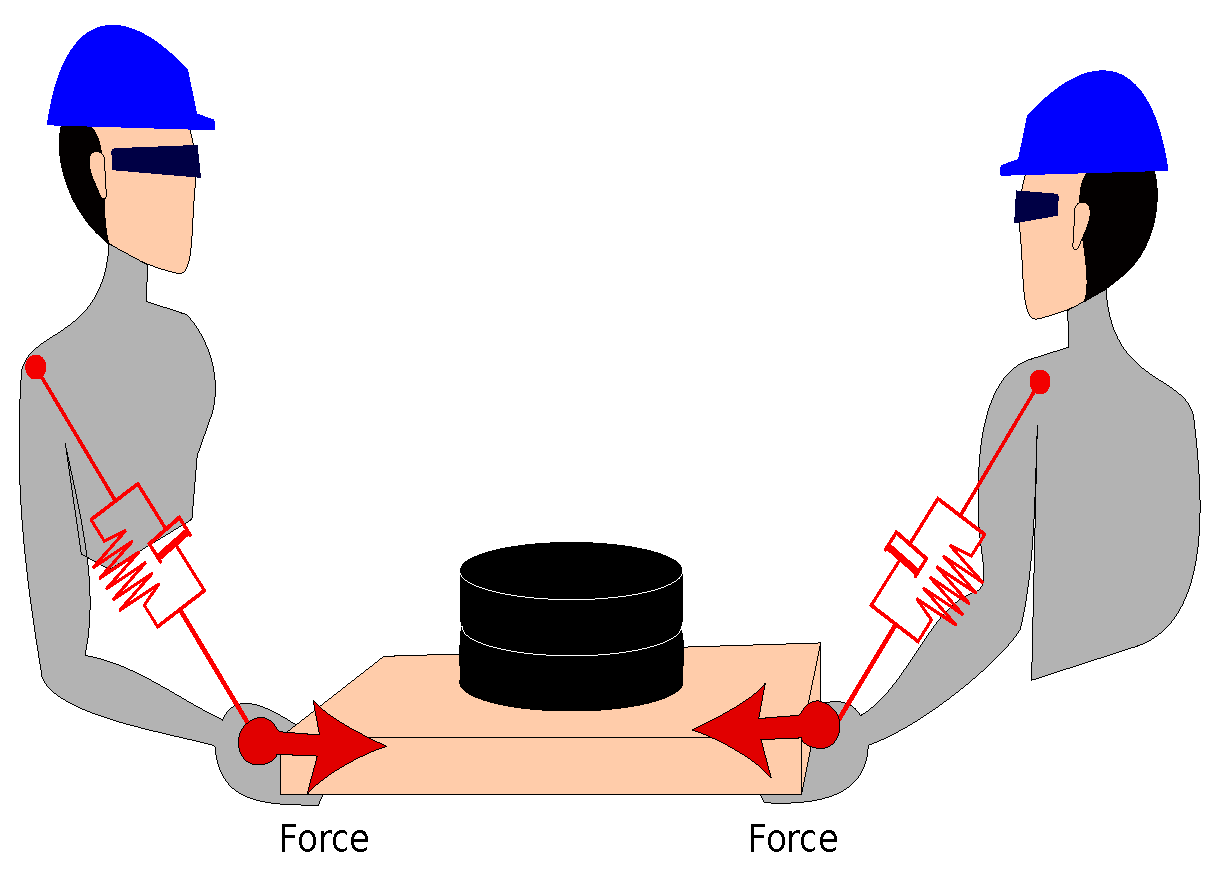

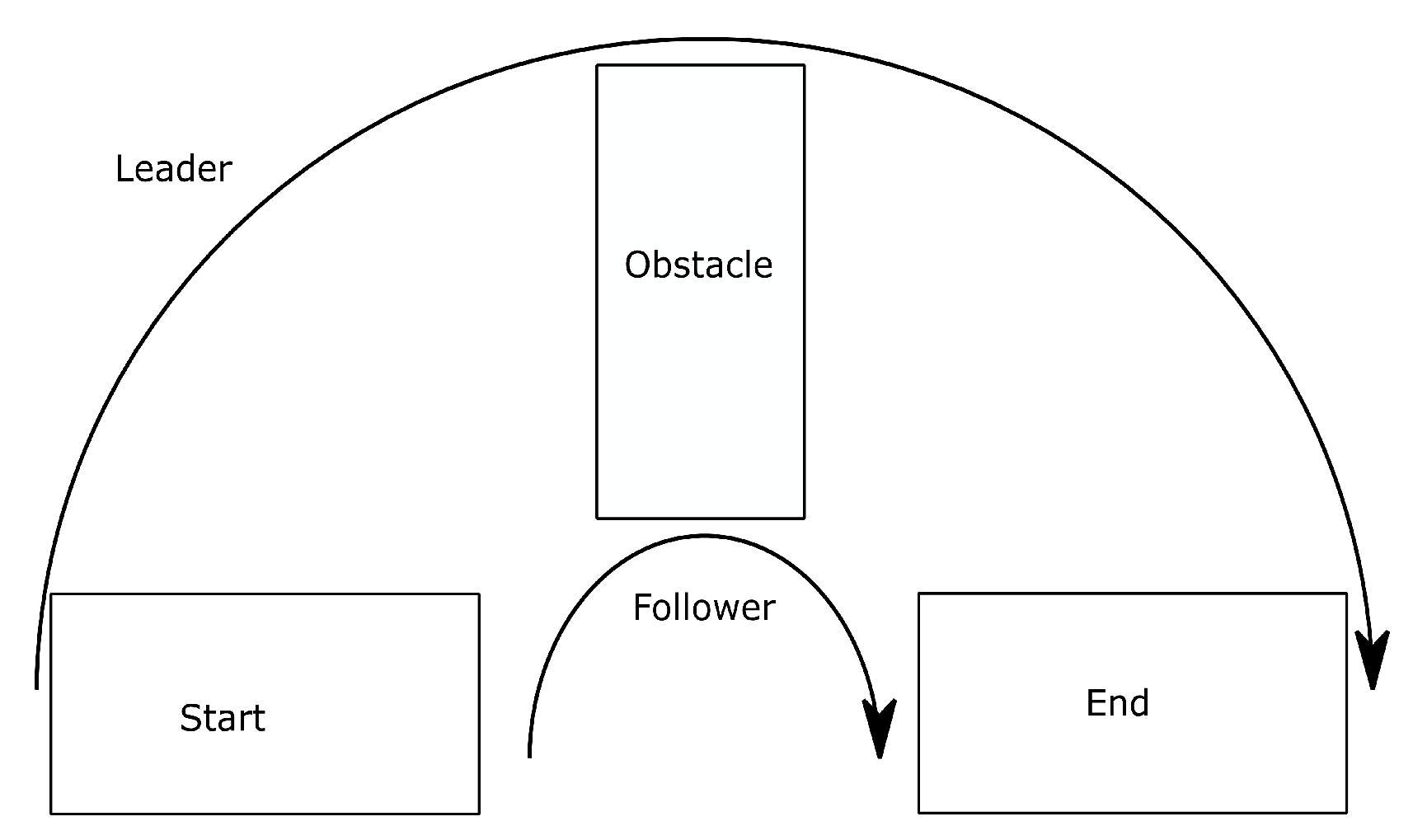

3. Problem Definition

4. Experimental Setup and Data Collection

- A six-axis force/torque (F/T) sensor [41].

- Surface electromyography (sEMG) sensors [42], worn by the human follower.

- Motion tracker markers, tracked by eight VICON Vantage 5 cameras (https://www.vicon.com/hardware/cameras/vantage/).

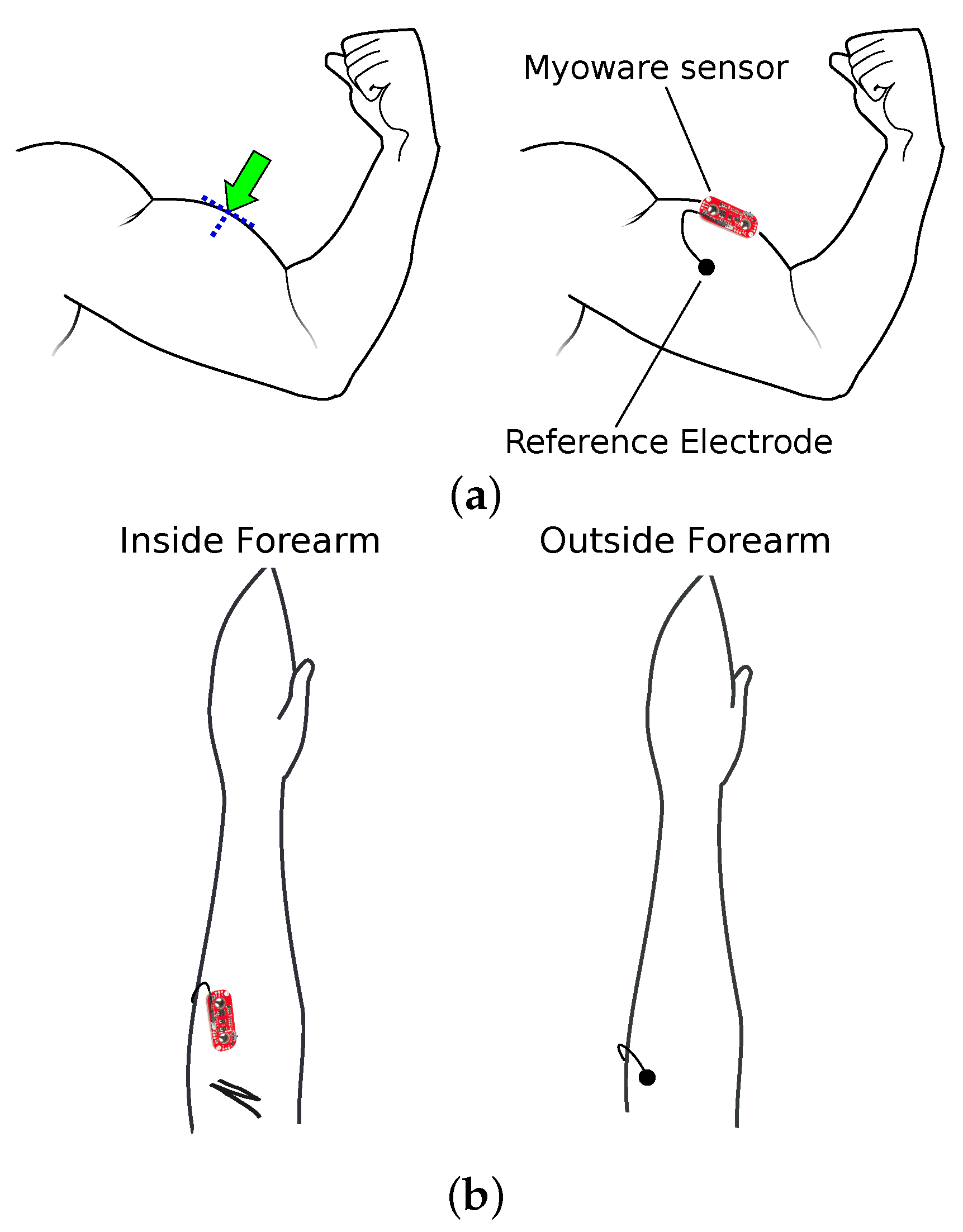

Sensor Placement

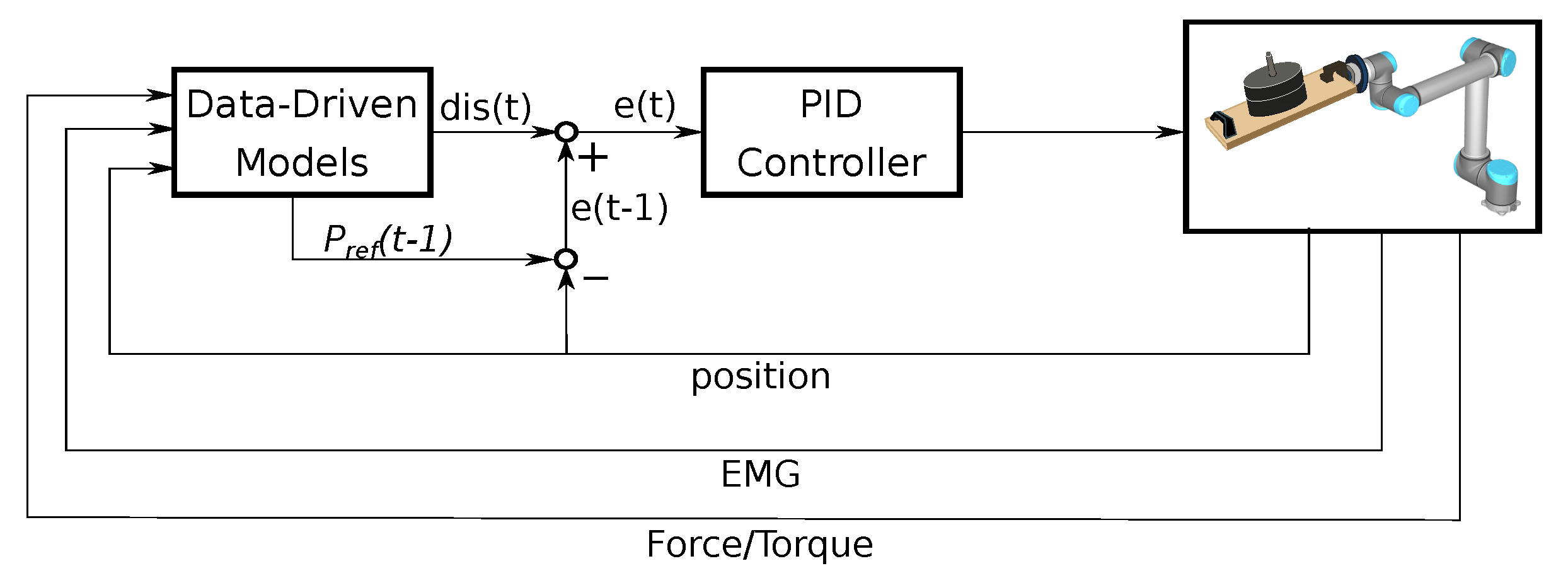

5. Methodology

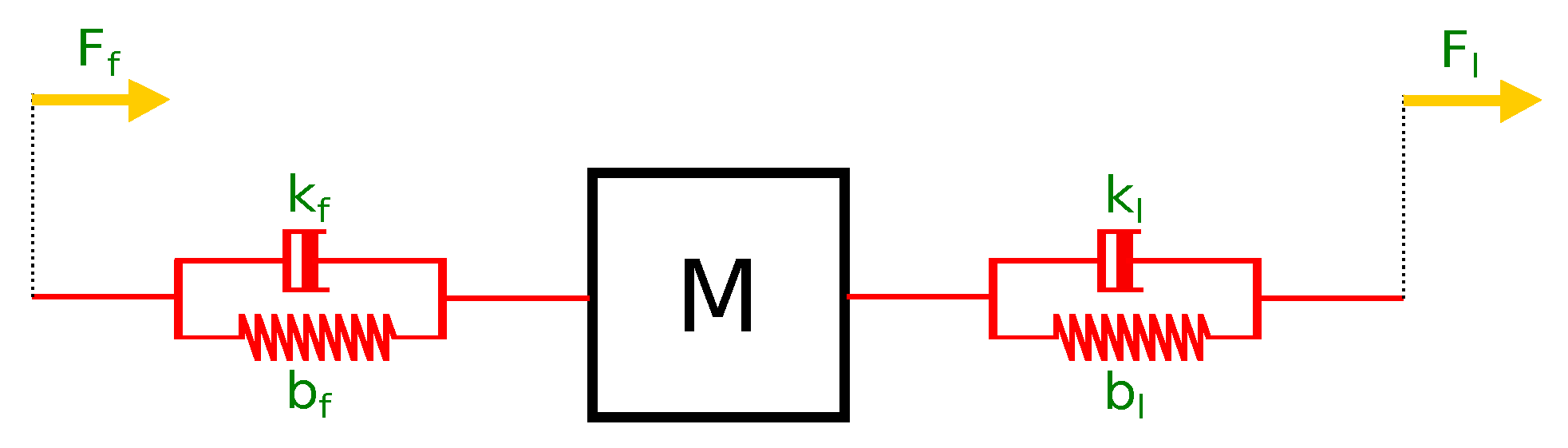

5.1. Mathematical Modelling

5.2. Model-Free Approaches: Data-Driven Models

5.3. Hybrid Modelling Approach (HM)

6. Simulation Setup

7. Results and Discussion

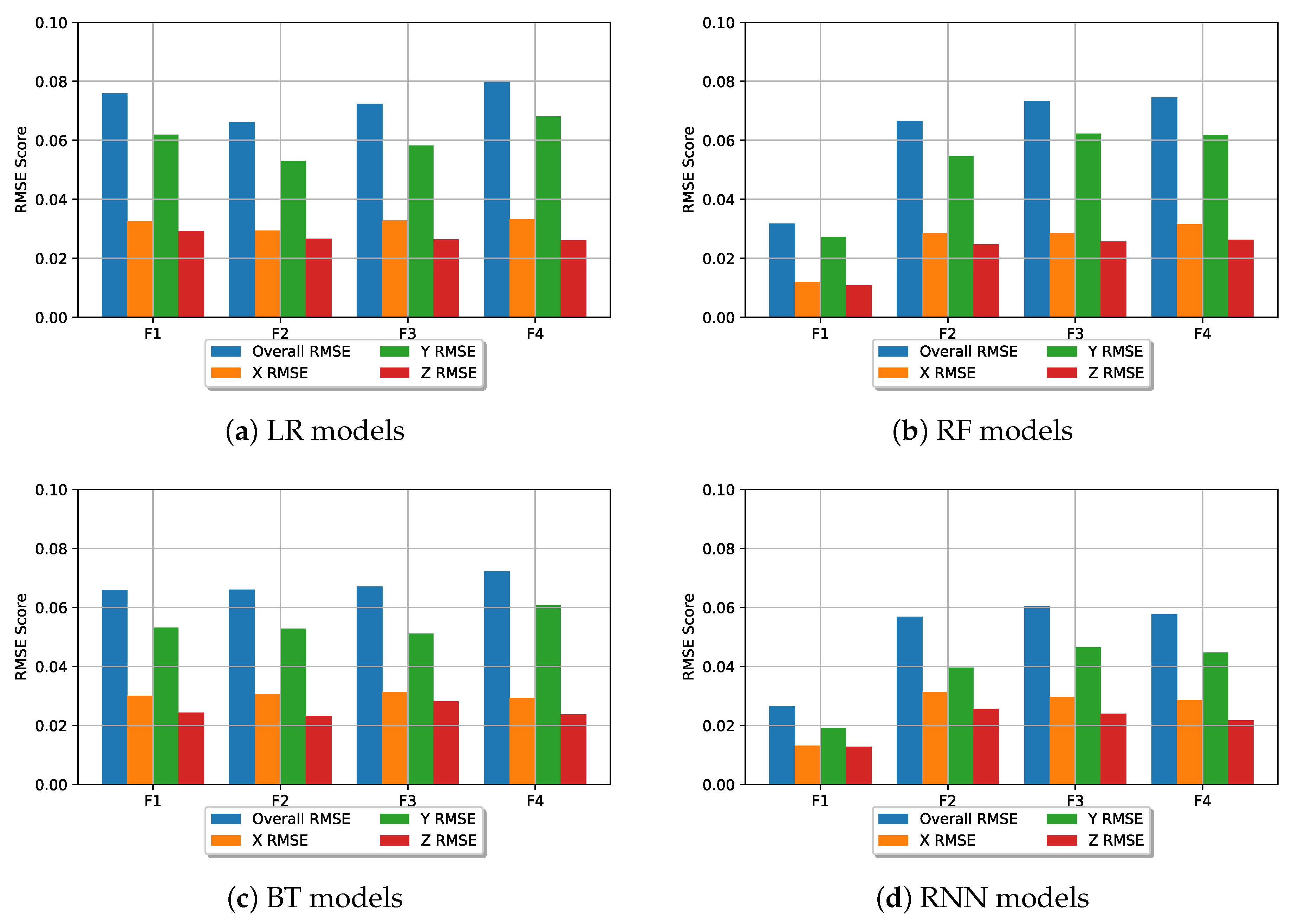

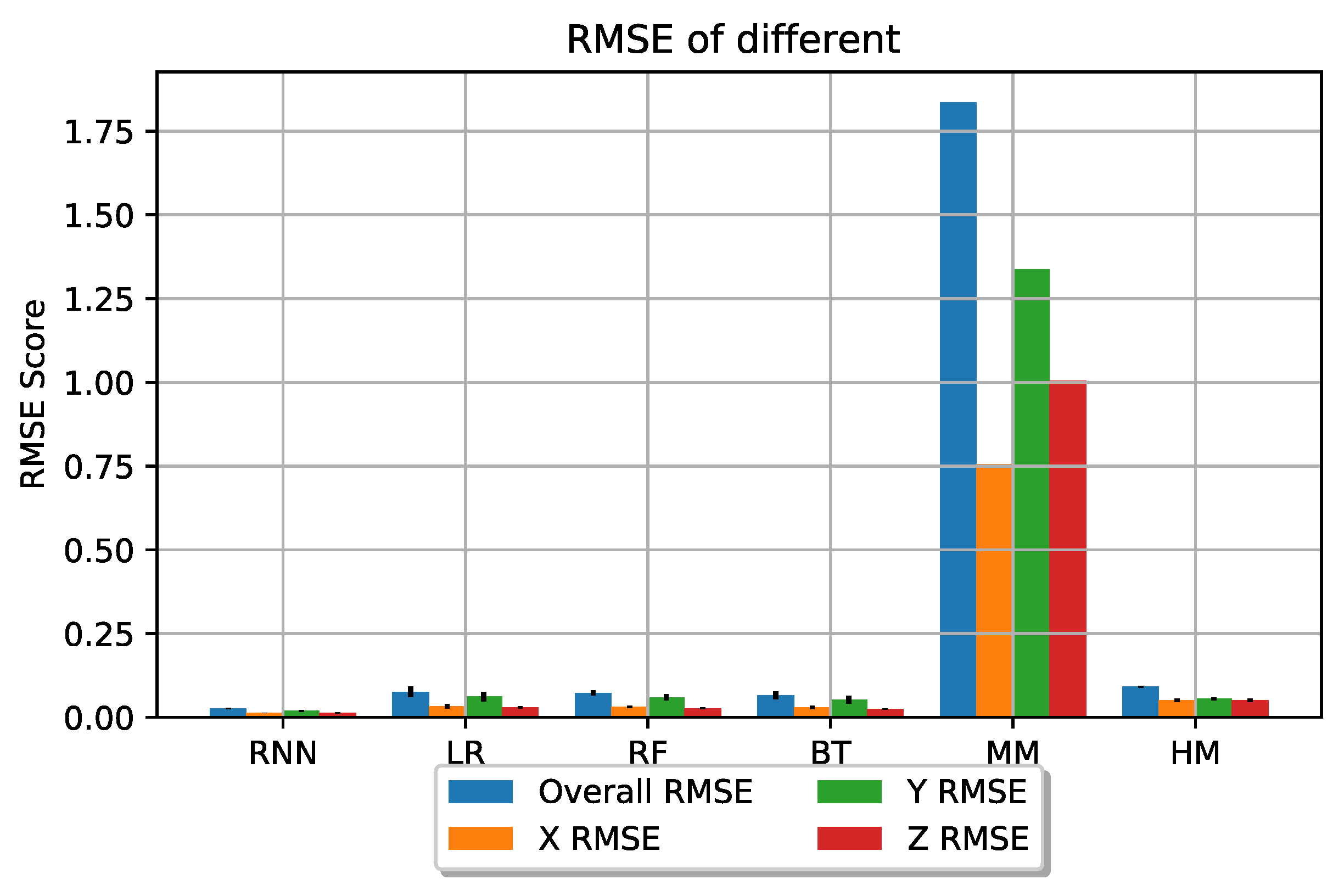

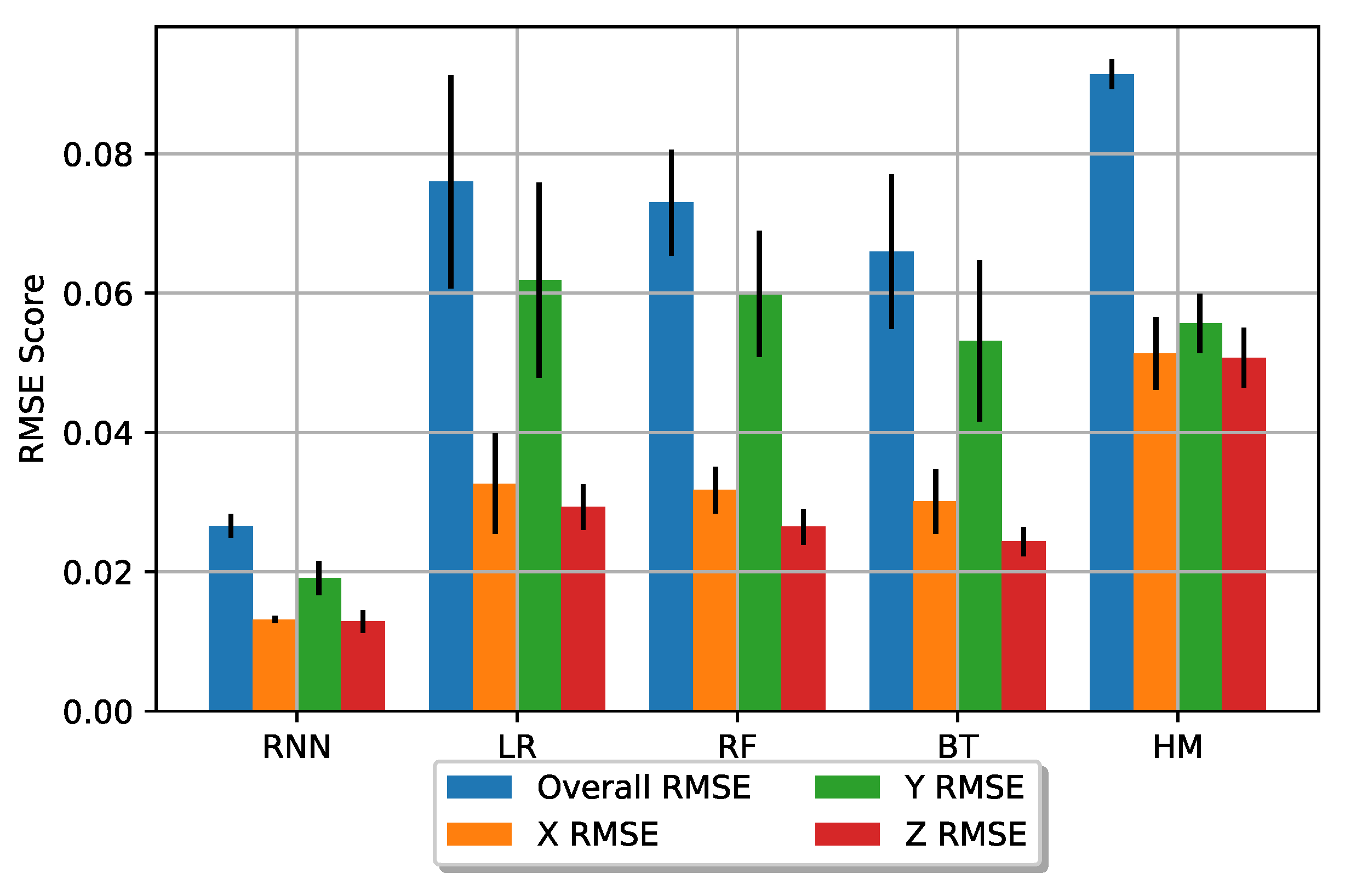

7.1. Results

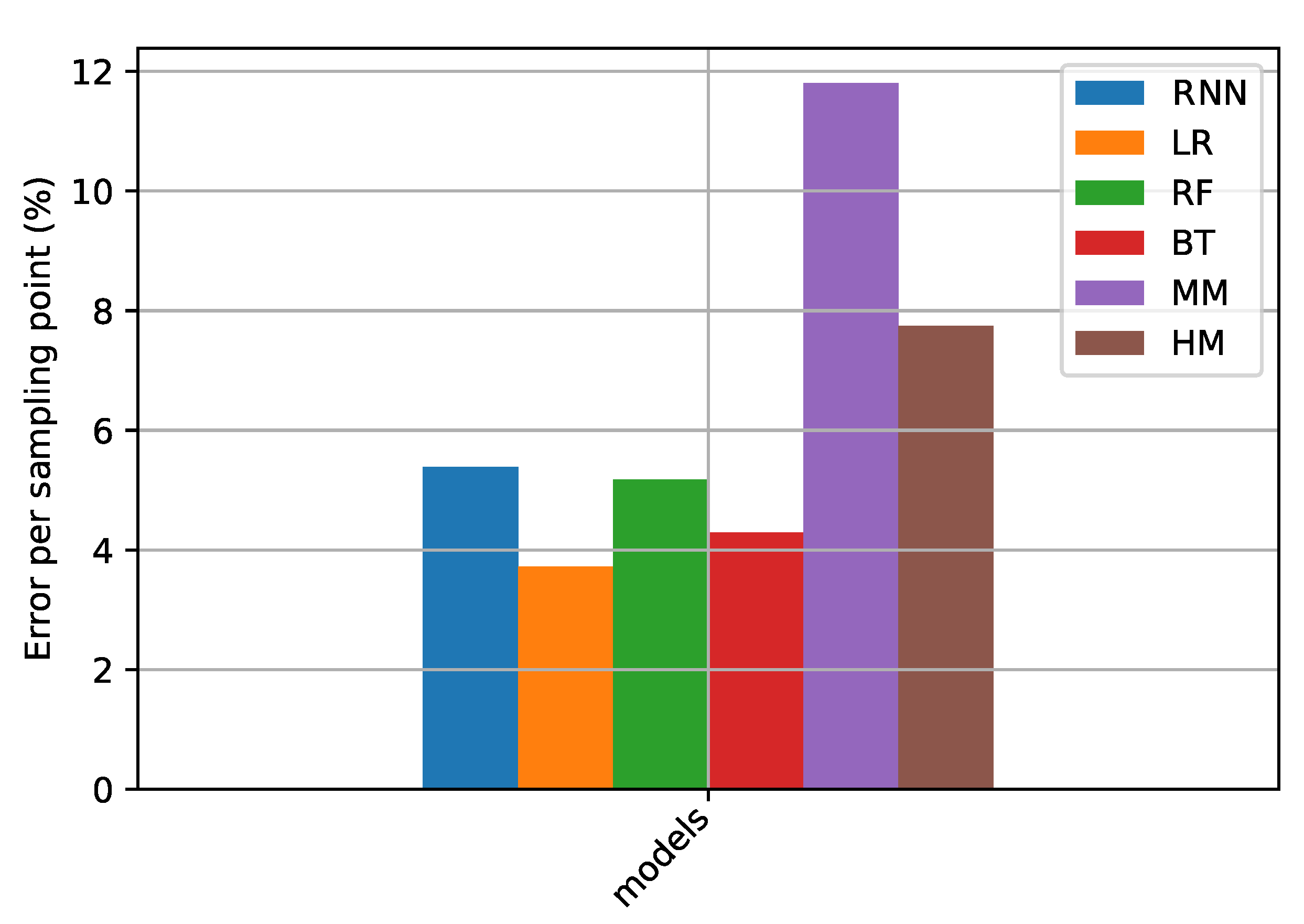

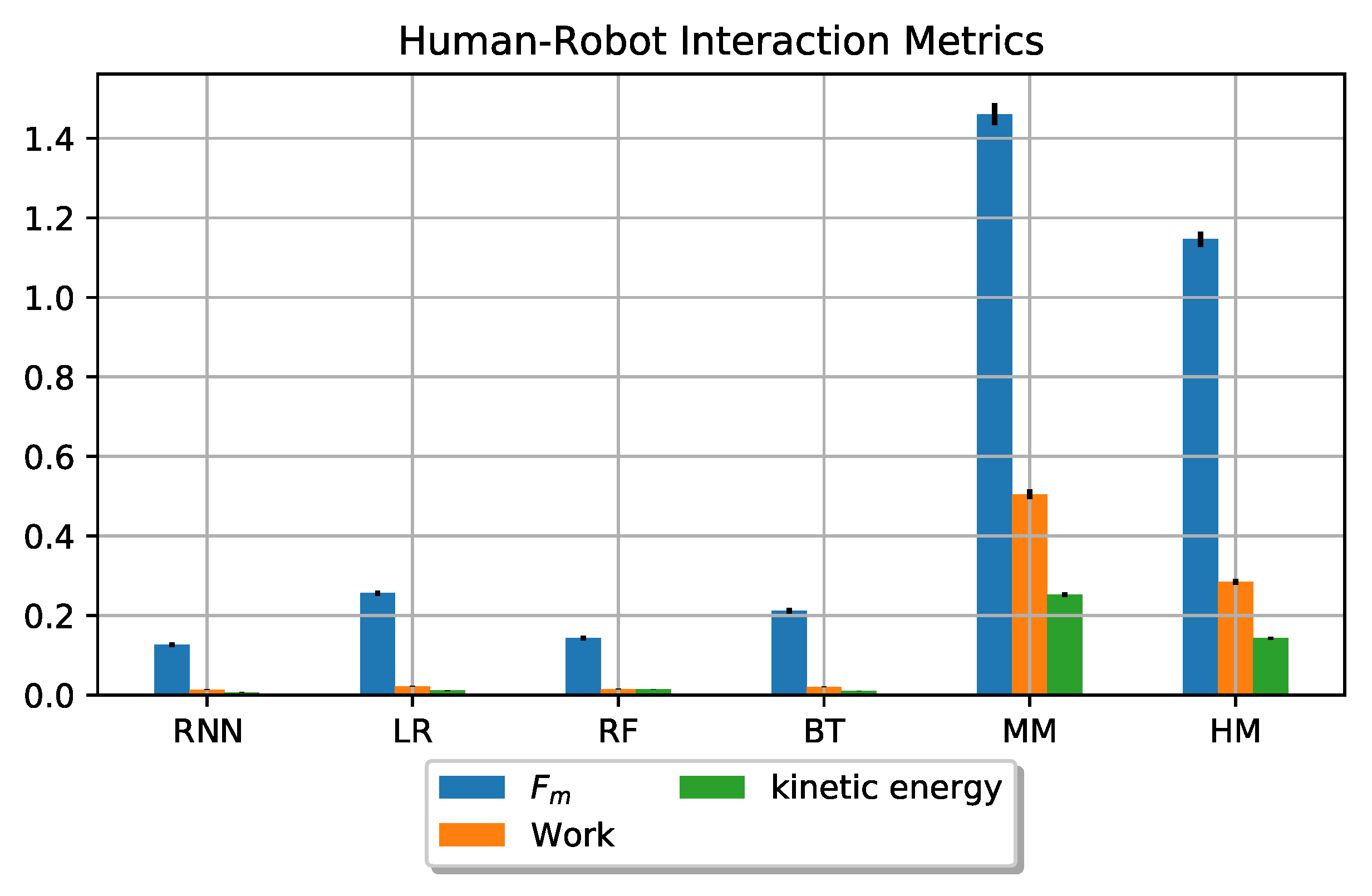

Simulation Results

7.2. Discussion

8. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Tsarouchi, P.; Makris, S.; Chryssolouris, G. Human-robot interaction review and challenges on task planning and programming. Int. J. Comput. Integr. Manuf. 2016, 29, 916–931.

- Kroemer, O.; Niekum, S.; Konidaris, G. A review of robot learning for manipulation: Challenges, representations, and algorithms. arXiv 2019, arXiv:1907.03146.

- Zou, F. Standard for human-robot Collaboration and its Application Trend. Aeronaut. Manuf. Technol. 2016, 58–63, 76.

- Hentout, A.; Aouache, M.; Maoudj, A.; Akli, I. Human–robot interaction in industrial collaborative robotics: A literature review of the decade 2008–2017. Adv. Robot. 2019, 33, 764–799.

- ISO/TS 15066. Robots and Robotic Devices Collaborative Robots; ISO: Geneva, Switzerland, 2016.

- Haddadin, S.; Haddadin, S.; Khoury, A.; Rokahr, T.; Parusel, S.; Burgkart, R.; Bicchi, A.; Albu-Schäffer, A. On making robots understand safety: Embedding injury knowledge into control. Int. J. Robot. Res. 2012, 31, 1578–1602.

- Kaneko, K.; Harada, K.; Kanehiro, F.; Miyamori, G.; Akachi, K. Humanoid robot HRP-3. In Proceedings of the 2008 IEEE/RSJ International Conference on Intelligent Robots and Systems, Nice, France, 22–26 September 2008; pp. 2471–2478.

- Robla-Gomez, S.; Becerra, V.M.; Llata, J.R.; Gonzalez-Sarabia, E.; Torre-Ferrero, C.; Perez-Oria, J. Working Together: A Review on Safe human-robot Collaboration in Industrial Environments. IEEE Access 2017, 5, 26754–26773.

- Pratt, J.E.; Krupp, B.T.; Morse, C.J.; Collins, S.H. The RoboKnee: An exoskeleton for enhancing strength and endurance during walking. In Proceedings of the Robotics and Automation (ICRA), 2004 IEEE International Conference on, New Orleans, LA, USA, 26 April–1 May 2004; Volume 3, pp. 2430–2435.

- Peternel, L.; Noda, T.; Petrič, T.; Ude, A.; Morimoto, J.; Babič, J. Adaptive control of exoskeleton robots for periodic assistive behaviours based on EMG feedback minimisation. PLoS ONE 2016, 11, e0148942.

- Dalle Mura, M.; Dini, G. Designing assembly lines with humans and collaborative robots: A genetic approach. CIRP Ann. 2019, 68, 1–4.

- Hayes, B.; Scassellati, B. Challenges in shared-environment human-robot collaboration. Learning 2013, 8, 1–6.

- Oberc, H.; Prinz, C.; Glogowski, P.; Lemmerz, K.; Kuhlenkötter, B. Human Robot Interaction-learning how to integrate collaborative robots into manual assembly lines. Procedia Manuf. 2019, 31, 26–31.

- Schmidtler, J.; Knott, V.; Hölzel, C.; Bengler, K. Human Centered Assistance Applications for the working environment of the future. Occup. Ergon. 2015, 12, 83–95.

- Weichhart, G.; Åkerman, M.; Akkaladevi, S.C.; Plasch, M.; Fast-Berglund, Å.; Pichler, A. Models for Interoperable Human Robot Collaboration. IFAC-PapersOnLine 2018, 51, 36–41.

- Yanco, H.A.; Drury, J. Classifying human-robot interaction: An updated taxonomy. In Proceedings of the 2004 IEEE International Conference on Systems, Man and Cybernetics (IEEE Cat. No. 04CH37583), The Hague, The Netherlands, 10–13 October 2004; Volume 3, pp. 2841–2846.

- Sieber, D.; Deroo, F.; Hirche, S. Formation-based approach for multi-robot cooperative manipulation based on optimal control design. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013; pp. 5227–5233.

- Ikeura, R.; Inooka, H. Variable impedance control of a robot for cooperation with a human. Proc. IEEE Int. Conf. Robot. Autom. 1995, 3, 3097–3102.

- Ikeura, R.; Morita, A.; Mizutani, K. Variable damping characteristics in carrying an object by two humans. In Proceedings of the 6th IEEE International Workshop on Robot and Human Communication. RO-MAN’97 SENDAI, Sendai, Japan, 29 September–1 October 1997.

- Rahman, M.M.; Ikeura, R.; Mizutani, K. Control characteristics of two humans in cooperative task and its application to robot Control. IECON Proc. Ind. Electron. Conf. 2000, 1, 1773–1778.

- Bussy, A.; Gergondet, P.; Kheddar, A.; Keith, F.; Crosnier, A. Proactive behavior of a humanoid robot in a haptic transportation task with a human partner. In Proceedings of the 2012 IEEE RO-MAN: The 21st IEEE International Symposium on Robot and Human Interactive Communication, Paris, France, 9–13 September 2012.

- Galin, R.; Meshcheryakov, R. Review on human-robot Interaction During Collaboration in a Shared Workspace. In International Conference on Interactive Collaborative Robotics; Springer: Cham, Switzerland, 2019; pp. 63–74.

- Huang, J.; Pham, D.T.; Wang, Y.; Qu, M.; Ji, C.; Su, S.; Xu, W.; Liu, Q.; Zhou, Z. A case study in human–robot collaboration in the disassembly of press-fitted components. Proc. Inst. Mech. Eng. Part B J. Eng. Manuf. 2020, 234, 654–664.

- Hakonen, M.; Piitulainen, H.; Visala, A. Current state of digital signal processing in myoelectric interfaces and related applications. Biomed. Signal Process. Control 2015, 18, 334–359.

- Mielke, E.; Townsend, E.; Wingate, D.; Killpack, M.D. Human-robot co-manipulation of extended objects: Data-driven models and control from analysis of human-human dyads. arXiv 2020, arXiv:2001.00991.

- Townsend, E.C.; Mielke, E.A.; Wingate, D.; Killpack, M.D. Estimating Human Intent for Physical human-robot Co-Manipulation. arXiv 2017, arXiv:1705.10851.

- Buerkle, A.; Lohse, N.; Ferreira, P. Towards Symbiotic human-robot Collaboration: Human Movement Intention Recognition with an EEG. In Proceedings of the UK-RAS19 Conference: “Embedded Intelligence: Enabling & Supporting RAS Technologies” Proceedings, Loughborough, UK, 27 January 2019; pp. 52–55.

- Wang, P.; Liu, H.; Wang, L.; Gao, R.X. Deep learning-based human motion recognition for predictive context-aware human-robot collaboration. CIRP Ann. 2018, 67, 17–20.

- Mukhopadhyay, S.C. Wearable sensors for human activity monitoring: A review. IEEE Sens. J. 2014, 15, 1321–1330.

- Peternel, L.; Fang, C.; Tsagarakis, N.; Ajoudani, A. A selective muscle fatigue management approach to ergonomic human-robot co-manipulation. Robot. Comput. Integr. Manuf. 2019, 58, 69–79.

- Chowdhury, R.H.; Reaz, M.B.; Ali, M.A.B.M.; Bakar, A.A.; Chellappan, K.; Chang, T.G. Surface electromyography signal processing and classification techniques. Sensors 2013, 13, 12431–12466.

- Lazar, J.; Feng, J.H.; Hochheiser, H. Measuring the human. In Research Methods in Human-Computer Interaction, 2nd ed.; Hochheiser, E., Ed.; Morgan Kaufmann: Boston, MA, USA, 2017; pp. 369–409.

- Géron, A. Hands-on Machine Learning with Scikit-Learn, Keras, and TensorFlow: Concepts, Tools, and Techniques to Build Intelligent Systems; O’Reilly Media: Sebastopol, CA, USA, 2019.

- Naufel, S.; Glaser, J.I.; Kording, K.P.; Perreault, E.J.; Miller, L.E. A muscle-activity-dependent gain between motor cortex and EMG. J. Neurophysiol. 2019, 121, 61–73.

- Peternel, L.; Tsagarakis, N.; Ajoudani, A. A human–robot co-manipulation approach based on human sensorimotor information. IEEE Trans. Neural Syst. Rehabil. Eng. 2017, 25, 811–822.

- Buerkle, A.; Al-Yacoub, A.; Ferreira, P. An Incremental Learning Approach for Physical human-robot Collaboration. In Proceedings of the 3rd UK Robotics and Autonomous Systems Conference (UKRAS 2020), Lincoln, UK, 17 April 2020.

- Buerkle, A.; Eaton, W.; Lohse, N.; Bamber, T.; Ferreira, P. EEG based arm movement intention recognition towards enhanced safety in symbiotic Human-Robot Collaboration. Robot. Comput. Integr. Manuf. 2021, 70, 102137.

- Li, Y.; Ge, S.S. Human–robot collaboration based on motion intention estimation. IEEE/ASME Trans. Mech. 2013, 19, 1007–1014.

- Herbrich, R.; Graepel, T.; Obermayer, K. Support vector learning for ordinal regression. In IET Conference Proceedings; Institution of Engineering and Technology: London, UK, 1999.

- Lieber, R.L.; Jacobson, M.D.; Fazeli, B.M.; Abrams, R.A.; Botte, M.J. Architecture of selected muscles of the arm and forearm: Anatomy and implications for tendon transfer. J. Hand Surg. 1992, 17, 787–798.

- ATI Industrial Automation. Force/Torque Sensor Delta Datasheet. Available online: https://www.ati-ia.com/products/ft/ft_models.aspx?id=Delta (accessed on 18 February 2020).

- Myoware. EMG Sensor Datasheet. Available online: https://cdn.sparkfun.com/datasheets/Sensors/Biometric/MyowareUserManualAT-04-001.pdf (accessed on 11 February 2020).

- Al-Yacoub, A.; Buerkle, A.; Flanagan, M.; Ferreira, P.; Hubbard, E.M.; Lohse, N. Effective human-robot collaboration through wearable sensors. In Proceedings of the 2020 25th IEEE International Conference on Emerging Technologies and Factory Automation (ETFA), Cranfield, UK, 3–4 November 2020; Volume 1, pp. 651–658.

- Brunton, S.L.; Kutz, J.N. Data-Driven Science and Engineering: Machine Learning, Dynamical Systems, and Control; Cambridge University Press: Cambridge, UK, 2019.

- He, T.; Xie, C.; Liu, Q.; Guan, S.; Liu, G. Evaluation and comparison of random forest and A-LSTM networks for large-scale winter wheat identification. Remote Sens. 2019, 11, 1665.

- Reinhart, R.F.; Shareef, Z.; Steil, J.J. Hybrid analytical and data-driven modeling for feed-forward robot control. Sensors 2017, 17, 311.

- Naik, G.R. Computational Intelligence in Electromyography Analysis: A Perspective on Current Applications and Future Challenges; InTech: Rijeka, Croatia, 2012.

- Wyeld, T.; Hobbs, D. Visualising Human Motion: A First Principles Approach using Vicon data in Maya. In Proceedings of the 2016 20th International Conference Information Visualisation (IV), Lisbon, Portugal, 19–22 July 2016; pp. 216–222.

- Al-Yacoub, A.; Zhao, Y.C.; Eaton, W.; Goh, Y.M.; Lohse, N. Improving human robot collaboration through Force/Torque based learning for object manipulation. Robot. Comput. Integr. Manuf. 2021, 69, 102111.

| Feature Set | Features | Set Dimension |
|---|---|---|
| Features 1 (F1) | normalised F/T, normalised EMG (arm/forearm), previous 3D displacements | |
| Features 2 (F2) | F/T, previous 3D displacements | |
| Features 3 (F3) | F/T, EMG (arm/forearm), previous 3D displacements | |
| Features 4 (F4) | normalised F/T, EMG (arm/forearm), previous 3D displacements | |
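
As a rough illustration of how the four feature sets listed above could be assembled from time-aligned recordings, the following Python sketch stacks the sensor channels column-wise. Several details are assumptions rather than values taken from the article: the function names `min_max_normalise` and `build_feature_sets` are hypothetical, normalisation is taken to be per-channel min-max scaling, sEMG is taken to have two channels (arm and forearm), and "previous 3D displacements" is read as the displacement at the preceding time step. The resulting column counts follow from those assumptions and are not the article's set dimensions.

```python
import numpy as np


def min_max_normalise(x):
    """Scale each column to [0, 1] (assumed normalisation scheme)."""
    x = np.asarray(x, dtype=float)
    lo = x.min(axis=0)
    span = x.max(axis=0) - lo
    span[span == 0] = 1.0  # constant columns map to 0 instead of dividing by zero
    return (x - lo) / span


def build_feature_sets(ft, emg, disp):
    """Assemble F1-F4 and the prediction target from time-aligned samples.

    ft:   (T, 6) six-axis force/torque readings
    emg:  (T, 2) arm and forearm sEMG (channel count is an assumption)
    disp: (T, 3) 3D displacement of the co-manipulated object
    """
    ft = np.asarray(ft, dtype=float)
    emg = np.asarray(emg, dtype=float)
    disp = np.asarray(disp, dtype=float)

    prev = disp[:-1]                    # displacement at step t-1
    ft_t, emg_t = ft[1:], emg[1:]       # sensor readings at step t
    ft_n = min_max_normalise(ft)[1:]    # normalised F/T at step t
    emg_n = min_max_normalise(emg)[1:]  # normalised sEMG at step t

    features = {
        "F1": np.hstack([ft_n, emg_n, prev]),
        "F2": np.hstack([ft_t, prev]),
        "F3": np.hstack([ft_t, emg_t, prev]),
        "F4": np.hstack([ft_n, emg_t, prev]),
    }
    target = disp[1:]                   # displacement at step t
    return features, target


if __name__ == "__main__":
    rng = np.random.default_rng(0)      # synthetic data, for shape checking only
    feats, y = build_feature_sets(
        rng.normal(size=(5, 6)), rng.random((5, 2)), rng.normal(size=(5, 3))
    )
    for name, matrix in feats.items():
        print(name, matrix.shape)
```

Normalising over the whole recording, as done here for brevity, would leak test-set statistics in a real evaluation; the scaling bounds would normally be fitted on the training split only.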

| Model | X | Y | Z |
|---|---|---|---|
| LR | 0.032 | 0.060 | 0.027 |
| RF | 0.030 | 0.060 | 0.026 |
| BT | 0.030 | 0.054 | 0.025 |
| RNN | 0.026 | 0.037 | 0.021 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Al-Yacoub, A.; Flanagan, M.; Buerkle, A.; Bamber, T.; Ferreira, P.; Hubbard, E.-M.; Lohse, N. Data-Driven Modelling of Human-Human Co-Manipulation Using Force and Muscle Surface Electromyogram Activities. Electronics 2021, 10, 1509. https://doi.org/10.3390/electronics10131509
