Change (what it is, why it happens and how to achieve it, especially in the human psyche) has been conceived in many forms throughout recorded history: for the ancient Greek philosopher Heraclitus, change came from cosmic fire [1]; for the great Chinese teacher Confucius, change was perpetually created by the eternal struggle between opposing forces [2]; for the medieval theologian Thomas Aquinas, change originated from another world [3]; for the systems theory and family therapy pioneer Paul Watzlawick, change emerged from paradoxes [4]; and for the inhabitant of the information age, change is driven by technology. Strangely enough, all of them would be correct if applied to the human psyche: Heraclitus’ cosmic fire is love (commonly mythologized as one of the sources of change, e.g., by the pre-Socratic philosopher Empedocles [5]), presumed to be the seed that produces and sustains change [6]; Confucius’ opposing forces represent cognitive dissonance, i.e., behavior opposing attitudes and beliefs, resulting “in a psychologically uncomfortable state that motivates people to reduce the dissonance [...] by changing their attitudes to be more consonant” [7] (p. 1469); Aquinas’ other world is the world of the human mind; Watzlawick’s paradoxes demystify Confucius’ divine opposing forces through a pragmatic psychotherapeutic framework; and, most contemporary of all, the information-age notion of how technology influences us is currently one of the most broadly discussed topics and closely matches the reality of living in the information society [8].

Such pervasive technology can produce attitude and behavior change in many domains of human life where change is sought. We generally want ourselves and others to be healthy, which means exercising, sleeping and eating well; to feel well, which means successfully navigating situations and thoughts that lead us down the path of mental health issues, such as stress, anxiety and depression (SAD); and to adopt other beneficial behaviors, most of which can be found in the UN’s Sustainable Development Goals [9]. The issues that such behaviors address have risen catastrophically in recent decades. One issue particularly affected by the recent COVID-19 pandemic is mental health. The lack of resources and effective systemic frameworks in the field of mental health is not a recent development, but it was the pandemic that exposed how disastrous decades of neglecting people’s well-being can be [10]. Existing systems were further incapacitated by imposed social distancing, which severely disrupted the bond between people and mental health experts. Decision-makers are consequently turning towards technology to help in what is not only a pandemic of the body but also a pandemic of the mind. This work contributes a piece to the mosaic of systematized efforts needed to identify how technology can help tackle this mental health crisis. This section continues with a statement of our motivation for this work and why we believe it is needed. Next, it presents three interconnected research areas that meet in such interdisciplinary efforts: attitude and behavior change (ABC) support systems, intelligent cognitive assistant (ICA) technology, and digital mental health. It then presents related review papers, highlighting why they are insufficient for our purposes and for computer science researchers in general, and ends with an outline of the paper.

1.1. Motivation for This Work

The need for this work (a review paper on intelligent cognitive assistants for attitude and behavior change in mental health, specifically for stress, anxiety and depression, from the perspective of researchers in technology-related fields) arose while we were investigating the technological trends and underpinnings of dialogue-driven technology used for psychological help. The search turned up three kinds of papers: (1) review papers written by clinical experts and psychologists for clinical experts and psychologists [11,12,13,14,15,16]; (2) experiments on symptom relief in people with mental health issues in which an ICA was used; and (3) scattered papers by researchers who designed their own ICAs for mental health.

Papers of type (1) (see the first paragraph of this section), described in more detail in Section 1.5, although insightful and helpful in several ways, were not meant for experts in our field of research, as some of them explicitly state: “This review aimed to inform health professionals” [11] (p. 11) and “[a]lthough [embodied conversational agent (ECA)] research is almost inherently interdisciplinary, we refrained from going too deep into the technological aspects. This was because our target audience consisted of health professionals with a generally less technical background and we wanted to focus on opening up the ECA domain for them as well as providing them with an overview of the available evidence for application in routine clinical practice” [11] (p. 13). They covered few, if any, technical aspects of the ICAs used in experiments to offer psychological help, and mostly focused on the effects these ICAs had on participants. Introducing technical aspects into such work was the first incentive for our present work.

The existing review papers on this topic, although not technical, cover a number of studies that might be expected to offer some information on the ICAs for mental health used in their experiments. However, such papers, i.e., type (2) (see the first paragraph of this section), offer little or no technical description of the systems used, or the systems used were proprietary and their technical details were not disclosed [17,18,19]. This made it clear that the works this review strives to examine were not covered by previous reviews.

Papers of type (3) (see the first paragraph of this section) are the kind of papers this work focuses on. To be rigorous in our systematization, we applied various criteria that papers had to meet to be included in this review (for more, see Section 2.5). We mostly focused on work of a technical nature, in which the system design made clear which technologies and methods were used to provide effective psychological help and cause attitude or behavior change.

The need for the proposed review has been identified before: “[I]t has to be noted that, depending on how one would like to use ECAs in future work, many more detailed questions could be investigated surrounding ECA design aspects, such as the required capabilities for, and their impact on, specific disorders or types of ECA interventions” [11] (p. 13). However, to the best of our knowledge, all existing review papers belong to type (1). The specific details we are interested in, which set our work apart from those reviews and warrant it, are listed in our Research Questions subsection.

This paper is the first systematized overview of intelligent cognitive assistants for attitude and behavior change in mental health, with a focus on stress, anxiety and depression, from a technical perspective. Due to the technical focus, we opted for a state-of-the-art review (for more, see Section 2.1). This novel research is necessarily interdisciplinary, combining knowledge from computer science, psychology, behavioral sciences, cognitive science, psychotherapy, and related fields. We offer multiple perspectives on the topic, drawing on the authors’ backgrounds, mainly in computer and cognitive science.

1.2. Attitude and Behavior Change Support Systems

ABC support systems are computer systems that attempt to “change attitudes or behaviors or both (without using coercion or deception)” [20] (p. 20) and to “aid and motivate people to adopt behaviors that are beneficial to them and their community while avoiding harmful ones” [21] (p. 66). Attitude and behavior change denotes a temporary or lasting effect on an individual’s attitudes or behavior, compared with their attitudes or behavior in the past [21] (p. 66). ABC support systems belong to the larger family of persuasive technology (PT). PT results from vast advances in the behavioral sciences regarding psychological change [22], human decision-making [23] and related phenomena [24], together with the arrival of digital technologies, artificial intelligence (AI) and big data. Much societal effort has gone into creating technologies that help, motivate, guide and persuade people to better themselves and the world around them, although such technology can be, and has been, abused as well [25]. ABC support systems have also been at the forefront of research (e.g., at IJCAI, one of the world’s largest AI conferences) aimed at helping achieve the United Nations Sustainable Development Goals, which include the goals to ensure “healthy lives and promote well-being for all at all ages”, to ensure “inclusive and quality education for all and promote lifelong learning”, and to take “urgent action to combat climate change and its impacts”, among others [26]. PT is already used in health and wellness, where it tracks people’s behavior as well as their physiological and psychological processes and responds either by trying to affect their mental states with psychotherapeutic advice or by motivating them to make different decisions, e.g., regarding healthy eating [21]. There are also applications in areas such as education and environmental sustainability, where people are nudged towards greener behavior [27].

Major persuasive and ABC frameworks [28] employed by such technologies include Cialdini’s Principles of Persuasion (CPP) [22], the Fogg Behavior Model (FBM) [20] and the Persuasive System Design Model (PSDM) [29], whose effectiveness has been firmly verified [30].

CPP is based on the idea that general persuasive strategies are not equally effective for everyone. It identifies various strategies that affect different groups of people differently. Interactive, adaptive technology can therefore personalize itself by applying the specific strategies that work for specific groups of people.
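To make the personalization idea concrete, the following minimal Python sketch picks, for a given user group, the persuasion principle with the highest effectiveness score. It assumes Cialdini’s six classic principles (reciprocity, commitment, social proof, authority, liking and scarcity) as the strategy set; the function name `select_principle` and the group-by-principle score table are hypothetical, and the example scores are placeholders, not empirical values. In practice, such scores would have to be estimated from data.

```python
from typing import Mapping

# Cialdini's six classic principles of persuasion, used here as the strategy set.
PRINCIPLES = (
    "reciprocity",
    "commitment",
    "social_proof",
    "authority",
    "liking",
    "scarcity",
)


def select_principle(group: str, effectiveness: Mapping[str, Mapping[str, float]]) -> str:
    """Return the principle with the highest score for the given user group.

    `effectiveness[group][principle]` is a caller-supplied score (hypothetical
    here; a real system would estimate it from empirical data). Principles with
    no score for the group count as 0.0; ties go to the earlier principle.
    """
    scores = effectiveness.get(group)
    if not scores:
        raise KeyError(f"no effectiveness data for group {group!r}")
    return max(PRINCIPLES, key=lambda p: scores.get(p, 0.0))


# Usage with made-up placeholder scores (not empirical values).
example_scores = {
    "students": {"social_proof": 0.7, "authority": 0.4},
    "retirees": {"authority": 0.8, "liking": 0.5},
}
print(select_principle("students", example_scores))  # social_proof
```

Keeping the score table outside the function reflects the point of the paragraph above: the strategy logic stays fixed, while the group-specific knowledge can be replaced as evidence accumulates.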

FBM is based on the idea that a behavior results from motivation, ability and a trigger occurring at the same time. Therefore, a person changing their behavior has to be sufficiently motivated, has to be able to perform the behavior, and has to be triggered to perform it. These factors are then combined in personalized ways to find the most effective strategies for an individual.
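A toy reading of FBM can be written as a single predicate. This Python sketch is only illustrative: it assumes that motivation and ability are scored on a 0 to 1 scale and approximates Fogg’s action line with a hypothetical threshold on their product, so that high ability can compensate for low motivation and vice versa. The names `Moment`, `behavior_occurs` and `ACTION_THRESHOLD` are illustrative and not part of FBM itself.

```python
from dataclasses import dataclass

# Hypothetical "action line": motivation * ability must reach this value.
ACTION_THRESHOLD = 0.25


@dataclass
class Moment:
    motivation: float  # assumed scale: 0.0 (none) to 1.0 (very high)
    ability: float     # assumed scale: 0.0 (very hard) to 1.0 (very easy)
    triggered: bool    # whether a trigger (prompt) occurs at this moment


def behavior_occurs(m: Moment, threshold: float = ACTION_THRESHOLD) -> bool:
    """Toy FBM check: the behavior happens only if a trigger coincides with
    enough combined motivation and ability (the product approximates the
    action line, so high ability can offset low motivation and vice versa)."""
    above_action_line = m.motivation * m.ability >= threshold
    return m.triggered and above_action_line


print(behavior_occurs(Moment(motivation=0.9, ability=0.5, triggered=True)))   # True
print(behavior_occurs(Moment(motivation=0.9, ability=0.5, triggered=False)))  # False
print(behavior_occurs(Moment(motivation=0.2, ability=0.5, triggered=True)))   # False
```

In this reading, a trigger has no effect while the person is below the action line, which is why an ABC system would first raise motivation or make the behavior easier before prompting.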

PSDM is based on the need for effective design and evaluation of persuasive systems and mostly offers a framework for the kind of content and functionality PT should consider. PSDM includes four principles upon which to design PT: (1) primary task support, which supports users in carrying out their primary task; (2) dialogue support, which helps users move towards their goals; (3) system credibility, which raises users’ belief in the system’s quality; and (4) social support, which motivates users by leveraging social influence.

Another powerful and effective behavior change concept is nudge theory, popularized by Richard Thaler and Cass Sunstein; Thaler received the 2017 Nobel Memorial Prize in Economic Sciences for his contributions to behavioral economics. A nudge is “any aspect of the choice architecture that alters people’s behavior in a predictable way without forbidding any options or significantly changing their economic incentive”, where “the intervention must be easy and cheap to avoid” [24] (p. 6). Nudges are being incorporated into PT and ABC support systems as well [31].

For persuasive strategies to be as effective as possible, they have to be tailored to a number of specifics. In the framework of the Communication-Persuasion Paradigm [32], four factors determine persuasive influence: (1) characteristics of the source (i.e., the message sender); (2) the message; (3) characteristics of the destination (i.e., the receiver of the message); and (4) the context.

To determine effective strategies, PT can model a person using personality models, such as the Big Five personality traits (B5) [33] or HEXACO [34], as well as domain-specific questionnaires. Personality is measured on different dimensions (e.g., in B5: openness, conscientiousness, extraversion, agreeableness and neuroticism), which try to describe the psychological and cognitive functioning of individuals, e.g., their mental states and decision-making abilities. Domain-specific knowledge about a person is gathered through questionnaires. For mental health, SAD questionnaires [35] can be used to categorize people with SAD symptoms, which leads to better strategy selection. Such questionnaires give insight into which factors influence which individuals the most. Empirical phenomenology can also be employed to obtain more detailed first-person accounts [36], which can be used for extracting linguistic features [37,38] or for otherwise fine-tuning ABC techniques in PT. Furthermore, combining subjective data with physiological data is also proving useful for adaptive technologies [39].
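As an illustration of questionnaire-based categorization, the sketch below maps a depression questionnaire to a severity category that a PT could use when selecting or escalating strategies. It assumes the PHQ-9 (nine items, each scored 0 to 3) and its commonly used severity cut-offs, chosen here only as an example; the SAD questionnaires referred to above may use different items and thresholds, and the function name `phq9_severity` is hypothetical.

```python
from typing import Sequence

# Commonly used PHQ-9 severity bands: (highest total score in band, label).
PHQ9_BANDS = (
    (4, "minimal"),
    (9, "mild"),
    (14, "moderate"),
    (19, "moderately severe"),
    (27, "severe"),
)


def phq9_severity(item_scores: Sequence[int]) -> str:
    """Sum the nine PHQ-9 item scores (each 0-3) and return the severity band."""
    if len(item_scores) != 9:
        raise ValueError("the PHQ-9 has exactly nine items")
    if any(score not in (0, 1, 2, 3) for score in item_scores):
        raise ValueError("each item is scored from 0 to 3")
    total = sum(item_scores)  # ranges from 0 to 27
    for upper_bound, label in PHQ9_BANDS:
        if total <= upper_bound:
            return label
    raise AssertionError("unreachable: the total cannot exceed 27")


print(phq9_severity([1, 2, 1, 0, 1, 2, 1, 1, 0]))  # mild (total 9)
```

How each band maps to a persuasive strategy, or to a referral to a professional, is a design decision of the system rather than something the questionnaire itself prescribes.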

These frameworks and models appear in several technological platforms. The most frequently used platforms are mobile and handheld devices (28%), followed by games (17%), web and social networks (14%), other specialized devices (13%), desktop applications (12%), sensors and wearable devices (9%), and ambient and public displays (5%) [21].

ABC can be delivered through various software systems. Intelligent cognitive assistants (also called chatbots, chatterbots, interactive agents, conversational AI, smartbots or bots) seem to be the most advanced of these [11,12,13,14,15,16,40]. The next subsection introduces such systems and describes why they seem to be the best vessel for delivering ABC.

1.3. Intelligent Cognitive Assistant Technology

There is no consensus on the term for the technologies this review describes: conversational agents, dialogue systems, smart conversational interfaces, relational agents, chatbots, and so on. In the end, we decided to follow the SRC workshop on ICA technology [41] and label them intelligent cognitive assistant (ICA) technology, as this term seems to better describe the capabilities such systems are designed to have. They are not only intelligent in being able to converse and having a language model; they also have many other human-like abilities relating to human cognition and intelligence. ICA technology has therefore been touted as the next revolution in human–computer coexistence. The technology dates back to the early days of AI, when ELIZA, Weizenbaum’s simulation of a Rogerian psychotherapist and one of the first chatbots available outside a research laboratory, was developed [42]. However, technological progress has only recently laid the foundations for broad adoption in the form of ICAs such as Alexa and Siri as well as more domain-specific agents such as Woebot [17]. Alexa, Siri and Google Home, however close to certain human capabilities they may seem, still often fail outside of very basic, secretary-like tasks. When used in more expert domains, such as mental health, they quickly start repeating themselves, as their very generic models fall back on common phrases and trivial platitudes. Their remarks can even be dangerous for the user: they may be perceived as flippant and negative, or they may give wrong medical advice. When tested with accounts of stressful situations, they either do not understand or fail to show empathy beyond empty words [43]. Expert domains therefore need domain-specific ICAs.

ICAs, which can be deployed on many devices, e.g., as virtual agents or robots, strive to: understand context; be adaptive and flexible; learn and develop; be autonomous; be communicative, collaborative and social; be interactive and personalized; be anticipatory and predictive; perceive; act; have internal goals and motivation; interpret; and reason. To come close to such capabilities, ICAs are embedded with a cognitive architecture (CogA), a “hypothesis about the fixed structures that provide a mind, whether in natural or artificial systems, and how they work together—in conjunction with knowledge and skills embodied within the architecture—to yield intelligent behavior in a diversity of complex environments” [44] (para. 2). Most importantly, ICAs possess the ability to converse in natural language. This seems to be the most immediate way in which humans communicate [45], and the effects of dialogue on human mental states cannot be overestimated. ICAs coupled with ABC capabilities are establishing themselves as a very promising form of PT.

Despite ELIZA being one of the first chatbots, using ICAs for ABC is still a new field of research, as chatbots have mostly been explored for education, customer support or other simple question-answer contexts [46]. What makes ICAs for ABC unique is that users reveal personal information more freely, which makes these systems more successful in their goals [47]. ABC ICAs and their users also form a more longitudinal relationship: the interactions are not one-off exchanges in which it is difficult to understand users and act immediately with efficient strategies. Such ICAs can therefore learn from historical interactions and become better at achieving ABC. However, the evaluation of PT and ABC support systems considerably lacks standardization, which makes the research field prone to researcher bias.
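Learning from historical interactions can be sketched with a standard epsilon-greedy bandit, swapped in here purely for illustration, since no specific learning method is prescribed above. The Python class below assumes a binary success signal per interaction (e.g., whether a suggested exercise was completed) and hypothetical strategy names. For one user, it keeps per-strategy success rates, tries each strategy once, and afterwards mostly exploits the best-performing strategy while exploring at random with probability `epsilon`.

```python
import random
from collections import defaultdict
from typing import Optional, Sequence


class StrategyLearner:
    """Epsilon-greedy choice among persuasive strategies for a single user,
    based on success rates observed in that user's past interactions."""

    def __init__(self, strategies: Sequence[str], epsilon: float = 0.1,
                 rng: Optional[random.Random] = None) -> None:
        if not strategies:
            raise ValueError("at least one strategy is required")
        self.strategies = list(strategies)
        self.epsilon = epsilon
        self.rng = rng if rng is not None else random.Random()
        self.tries = defaultdict(int)      # strategy -> times used
        self.successes = defaultdict(int)  # strategy -> times it worked

    def success_rate(self, strategy: str) -> float:
        n = self.tries[strategy]
        return self.successes[strategy] / n if n else 0.0

    def choose(self) -> str:
        # Try every strategy once before relying on the observed rates.
        for s in self.strategies:
            if self.tries[s] == 0:
                return s
        # Explore at random with probability epsilon, otherwise exploit.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.strategies)
        return max(self.strategies, key=self.success_rate)

    def record(self, strategy: str, succeeded: bool) -> None:
        """Log the outcome of one interaction (the success signal is assumed)."""
        self.tries[strategy] += 1
        if succeeded:
            self.successes[strategy] += 1


# Usage: epsilon=0.0 makes the run deterministic for illustration.
learner = StrategyLearner(["reminder", "social_proof"], epsilon=0.0)
learner.record(learner.choose(), succeeded=False)  # tries "reminder"
learner.record(learner.choose(), succeeded=True)   # tries "social_proof"
print(learner.choose())  # social_proof
```

A real ABC ICA would need a far richer notion of success and context than a single binary outcome, but the sketch shows why longitudinal interaction matters: the estimates only become informative as the history grows.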

Besides serving as a vessel for understanding users, by modeling their psychological and physiological aspects and using that knowledge to enact ABC, ICAs present an ideal platform for offering help in mental health because of their ability to converse. This opens up new solutions in the field of digital mental health.

1.4. Digital Mental Health

Although the COVID-19 pandemic has fully revealed the problems of mental healthcare [10] and severely worsened people’s well-being [48], the mental health pandemic has been raging for far longer [49,50]. Various decision-makers, especially world organizations, national governments and other leaders, are starting to recognize this, which is why mental well-being appears in Goal 3 of the 17 UN Sustainable Development Goals [26]. The most common mental health issues include stress, anxiety and depression (SAD), which have seen the biggest rise in recent decades [51].

Before the COVID-19 pandemic, the prevalence of SAD symptoms in some groups reached over 70% for overwhelming stress symptoms that leave people unable to cope [52,53] and about 8% for stress-related disorders such as post-traumatic stress disorder [54]; up to almost 34% for anxiety disorders [55]; and up to 27% for depressive symptoms [56] and 6% for depressive disorders [57]. With the COVID-19 pandemic, these numbers are rising [48,58,59]: in some cases, the number of people with SAD symptoms and disorders more than tripled [60]. Even more worrying, mental health issues are severely underreported, especially in developing countries [61]. Countries’ reporting on the state of their population’s mental health is also rough at best, and the data mostly concern the adult population [55]. The picture is further skewed by the fact that up to 85% of people in low- and middle-income countries receive no mental health treatment [62], treatment coverage for certain disorders reaches only 33% in high-income countries [63], and up to 96% of people with SAD do not seek treatment at all [64].

Mental health issues have substantial, multi-faceted consequences, affecting not only the patient but also their immediate surroundings (family, caretakers) and the wider society [65]. Patients face a decreased quality of life, poorer educational outcomes, lowered productivity and potential subsequent poverty, social problems, vulnerability to abuse, and additional non-mental health problems. Patients’ immediate family and caretakers face increased emotional and physical challenges, decreased household income, and increased financial costs. Society as a whole faces worsening public health issues, corrosion of social cohesion, and the loss of several percentage points of GDP and billions of dollars in expenditure per nation annually. As a result, SAD increasingly perpetuates itself. Too often, the outcome is the worst possible one: loss of human life. Many countries still struggle with a high suicide rate [51]. Reasons for the increase in SAD include a critical lack of mental health professionals and regulations [66] as well as inequality in access to care [67,68].

The conditions in which mental healthcare finds itself, especially in a post-COVID-19 world, seem to present an opportunity for developing technological and other science-based therapeutic interventions, especially as individuals with mental health issues prefer therapy to medication [69]. Digital mental health, a still insufficiently explored area of research and practice, offers a way to explore how technology can complement existing mental healthcare systems and make them more effective in reaching people who need help.

Many technologies increase the operability and effectiveness of healthcare [70,71,72,73], but we concentrate on the implications of using ICAs as PT in mental health. By focusing not only on what ICAs offer but also on the problems they may bring, we try to provide a fair account of the potential of digital mental health as a whole.

We identified the following areas where using PT can offer positive possibilities in mental healthcare: cost, availability, stigma, and prevention. Identified negative possibilities include group exclusion, researcher bias, privacy problems, lack of longitudinal research, the ethics of using personal information for persuasion, potential risks of digital dependence, potential problems of automation and job loss among mental healthcare professionals, and possible cost increases in certain aspects.

Positive possibilities:

Cost of the services provided by mental healthcare professionals varies not only with national standards but also with national regulations and subsidies, and it depends strongly on the number of practicing professionals. Regardless, it presents a barrier for people from lower socio-economic backgrounds [74]. PT for mental health can realistically be made free of charge (and often is [17]) thanks to its much lower costs [68]. PT also makes it possible to collect data on often overlooked (and disadvantaged) populations, thus lowering systemic bias in analysis, and to target patients with low-priority conditions.

Availability refers to location, time, and cost. Location-based availability concerns people with no direct access to mental healthcare (e.g., in remote areas) [75]; PT may also minimize problems related to transportation [76]. Time-based availability concerns people needing help when their chosen professional is unavailable (e.g., during a panic attack at night). Cost-based availability concerns people needing more than the minimum recommended number of hours of psychological help per week [77]. Research [77,78] shows that more frequent therapy results in better outcomes, and complementary use of PT for mental health can bridge that gap for people who cannot afford more therapy by still giving them access to help.

Stigma refers to self-stigma, the prejudice that people with mental health issues turn against themselves, and public stigma, the reaction of the general population to people with mental health issues. Both are prevalent problems [79] and result in up to 96% of people deciding not to seek treatment [64]. Research shows that people are more comfortable disclosing personal information to a computerized system than to a person [47]. Introducing PT for this group may offer help to people who would otherwise never receive it and may help others improve to the point of visiting a professional.

Prevention refers to a blind spot in mental healthcare: people come in (if at all) only to seek treatment, whereas many issues could be prevented beforehand. PT can work indirectly by providing “support for better decision making, emotional regulation or interpersonal interactions,” which are “necessary to ensure that psychological, emotional and social deficits do not spiral into clinical disorder,” or directly by improving “both the screening and early delivery of interventions to reduce risk factors and build psychological resources” [76] (p. 336). Therefore, treatment should not be the only target for PT, as prevention also lowers the number of mental health issues and thus relieves pressure on healthcare.

Negative possibilities:

Group exclusion refers to groups of people who may not only be excluded from technology-oriented mental healthcare but may also find themselves further distanced, or even removed, from society. These groups include the elderly, who have difficulties integrating technology into their lives [80]; the lowest socio-economic class, who may not benefit from PT due to their lack of access to technology [81]; and culturally specific groups, whose cultural or sociopolitical specifics prevent them from adopting technology [82]. Fortunately, PT research is emerging in certain low-income parts of the world [83].

Researcher bias refers to the lack of evaluation standards for PT for mental health in this interdisciplinary endeavor. This is due to two factors: the field’s youth and the various disciplines each tackling the field separately. The possible problems are many: (1) PT is not always studied in true experiments but in quasi-experiments [84] or not experimentally at all, and where true experiments exist, they mostly involve proprietary PT, which is harder to change; (2) the metric by which to evaluate such systems is unclear (it usually comes indirectly from their effectiveness in an experiment whose goal is SAD symptom relief [12]); and (3) there is no consensus on what data are needed. This results in many unfounded presuppositions by researchers, leading to problematic practice.

Cost increase refers to the possibility that using PT in mental health may increase costs by delaying “the provision of traditional treatments with greater evidence of efficacy or by increasing the numbers of patients receiving services” [76] (p. 336). More research is needed to understand the costs alleviated and the costs incurred by implementing such systems.

Other potential problems are less related to our work but are worth mentioning nevertheless: (1) the problem of personal information privacy [85]; (2) the lack of longitudinal research on behavior change with PT [86]; (3) the ethics of using personal information for persuasion [85]; (4) the potential risks of digital dependence [87]; and (5) the potential problem of automation and job loss among mental healthcare professionals.

Reviews on this topic are favorable [11,12,13,14,15,16,40], agreeing that “early evidence shows that with the proper approach and research, the mental health field could use conversational agents in psychiatric treatment” [12] (p. 456). Related review papers are presented next.

1.5. Related Work

Due to the novel viewpoint of our review, deciding what constitutes related work was non-trivial. None of the review papers we found covered ICAs that try to induce ABC for SAD from a technical point of view, i.e., analyzing their software structures, the algorithms used, the datasets they utilize, etc. The review papers we present in this section therefore consist of work that analyzed such systems in a way that “aimed to inform health professionals” [11] (p. 11) rather than researchers in computer science.

Related work is divided into three groups: (1) papers that review the use of ICAs (under different synonyms—conversational agents, chatbots, etc.) for delivering help in mental health; (2) papers that review the use of applications in general for delivering help in mental health; and (3) papers that review the use of ICAs for delivering help in health in general.

We identified six related works belonging to the first group of papers. Provoost et al. [11] focused on embodied conversational agents (ECAs), which, besides language, also simulate some properties of human face-to-face conversation, including non-verbal behavior. They aimed to provide an overview of the possibilities such systems present and to investigate the evidence base for their effectiveness. They found 54 studies with ECAs for treating mood disorders, anxiety, psychoses, autism, and substance use disorders, which used different techniques to reduce symptoms, including reinforcing social behaviors through expressions and multimodal conversations. They concluded that this avenue represented an emerging and important research endeavor, with the limited results so far showing positive outcomes. They also called for more research and the development of more such systems. Vaidyam et al. [12] explored chatbots in psychiatry for both assessment and intervention purposes. They focused on chatbots for depression, anxiety, schizophrenia, bipolar disorder and substance abuse disorders. In the 10 studies that met their criteria, the reported outcomes showed benefits of chatbots in psychoeducation and self-adherence, and patients found them enjoyable to use. They concluded that early evidence was promising but called for more research from all actors in this interdisciplinary field. Abd-Alrazaq et al. [13] identified chatbots as a possible remedy for the shortage of mental health workers, which prompted them to pool effectiveness and safety results from 12 studies on using chatbots for depression, distress, stress, and acrophobia. They found insufficient evidence on whether the chatbots’ effect was clinically important but concluded that chatbots are safe. They warned that the lack of standardized evaluation metrics results in a high risk of bias. Gaffney et al. [14] investigated ECAs and their usability for general psychological distress. In 13 identified studies, they found that efficacy and acceptability were promising, with most studies showing significant reductions in symptoms. They called for more work on the mechanisms of change, technical or otherwise, that such systems can employ to increase efficacy. Bendig et al. [15] focused on chatbots used in clinical psychology and psychotherapy. They found that most experiments were pilot studies, from which it is hard to produce high-quality evidence. They reported that the practicability, feasibility, and acceptance of chatbots were very promising, although such technologies were still highly experimental, especially because applying technology in such a complex domain is difficult. They ended the review by calling for funding to evaluate chatbots on effectiveness, sustainability, and safety. Abd-alrazaq et al. [16] reviewed chatbots for mental health without excluding any mental disorders or purposes of chatbots in mental health. They found 41 chatbots, some used only for screening (n = 10) or training (n = 12), while others were used for therapy (n = 17) or had no specific purpose. Most targeted depression (n = 16) or autism (n = 10). Like the authors above, Abd-alrazaq et al. called for more evidence but recognized the possible utility of integrating such systems into mental healthcare early on.

We identified four related works belonging to the second group of papers. Bakker et al. [88] focused on mental health apps in general. They found that such apps lacked functionality and features. They also noted a lack of research on the efficacy of apps, expressing concern about a complete lack of trials of any kind. They presented their own recommendations for developers of such apps. Orji and Moffatt [21] reviewed 16 years of persuasive technology research, comprehensively detailing the designs, research methods, persuasive strategies and theories, and targeted behaviors. They concluded that persuasive technology was a promising avenue for wellness but that the field lacked longitudinal research and was constrained by current technological limitations. Chan et al. [89] surveyed the use of mobile apps in psychiatric treatment. They called on mental health practitioners to gain a better understanding of how to use such apps, what their features are, what should be studied further to advance their capabilities, and what issues may arise in integrating them into clinical workflows. They concluded that patients with various mental illnesses and severities may benefit from them regardless of their social and technological backgrounds; however, better practices for evaluating apps, understanding user needs, and educating users about the apps were needed to increase the apps’ efficacy, in addition to ensuring ethical and risk-free protocols. Torous et al. [90] researched smartphone apps, focusing on their adoption by clinics and consumers, as uptake was still low despite the potential of apps to improve the quality of and access to mental healthcare. They reported high heterogeneity in the metrics reported by studies and found that, even when apps were successful in their goals, they lacked user testing, privacy protection, and mechanisms that establish trustworthiness. The apps also did not address emergencies. The authors called for further research in all fields connected to this technology.

Finally, we identified five related works belonging to the third group of papers. Laranjo et al. [40] focused on conversational agents with unconstrained natural language input capabilities for any health-related purpose, targeting customers as well as health professionals. They analyzed 14 conversational agents, mostly built on finite-state or frame-based dialogue systems and focused on patient self-care. Very few of the studies were non-quasi-experimental. Most reported satisfactory efficacy, but they rarely evaluated patient safety. The authors ended the paper by calling for better experimental designs and standardization in such work. Montenegro et al. [91] developed a taxonomy based on 40 papers on conversational agents applied to healthcare, and used it to identify existing challenges and research gaps. They found many systems supporting patients as well as physicians, with a minority focusing on student training. Most of the surveyed agents focused on health literacy, which the authors considered a future trend in changing health behaviors. They found that the most neglected areas were bringing such technology to the elderly and advancing user involvement, which included better interactions, interfaces, and models of learning. Safi et al. [92] investigated chatbots in the medical field from a technical perspective, focusing on their development. They identified 45 studies on using chatbots for health purposes. The most common method was pattern matching, typically used in question-answer conversations to provide the information users ask for. Generating original output, rather than selecting a pre-existing response, was rare. Very few studies collected any user data. The authors found such systems useful for providing information to interested users. Abd-alrazaq et al. [93] conducted an overview of the technical (non-clinical) metrics used to evaluate dialogue agents in healthcare. By reviewing 65 studies, they found 27 technical metrics, pertaining to chatbots in general, to their response generation and understanding, and to their aesthetics. Their work aims to systematize these metrics and push toward a standardized way of evaluating chatbots non-clinically. Pereira and Díaz [94] surveyed chatbots for health behavior change. They identified 30 papers that used health chatbots, and found that nutritional and neurological disorders were the most targeted health issues, that the chatbots tried to change human competence in tackling these issues, and that users most appreciated the personalization and consumability of these chatbots. Again, the authors noted that case studies were lacking and that technological implications were almost never discussed.

The rest of the paper is organized as follows:

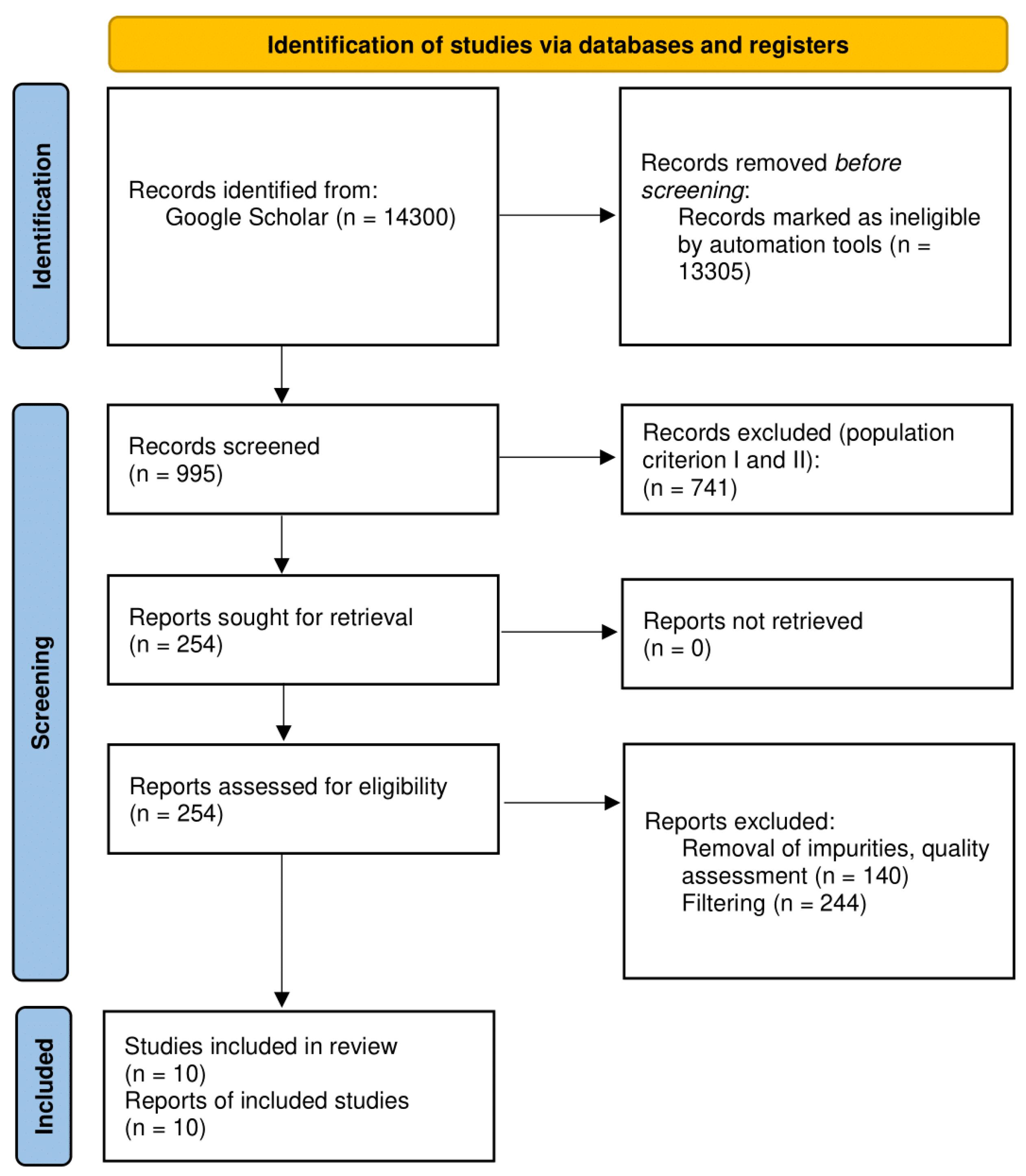

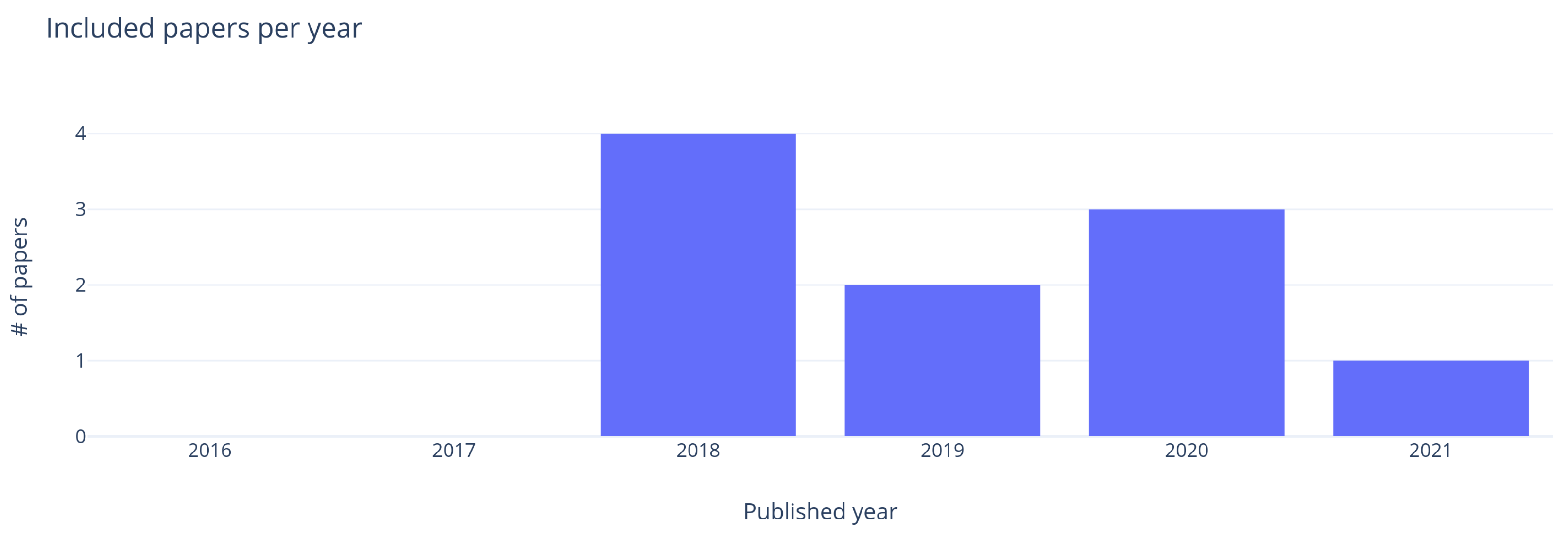

Section 2 presents the materials and methods used for this review, focusing on research methodology, study design, research questions, search strategy, paper selection criteria, and data extraction.

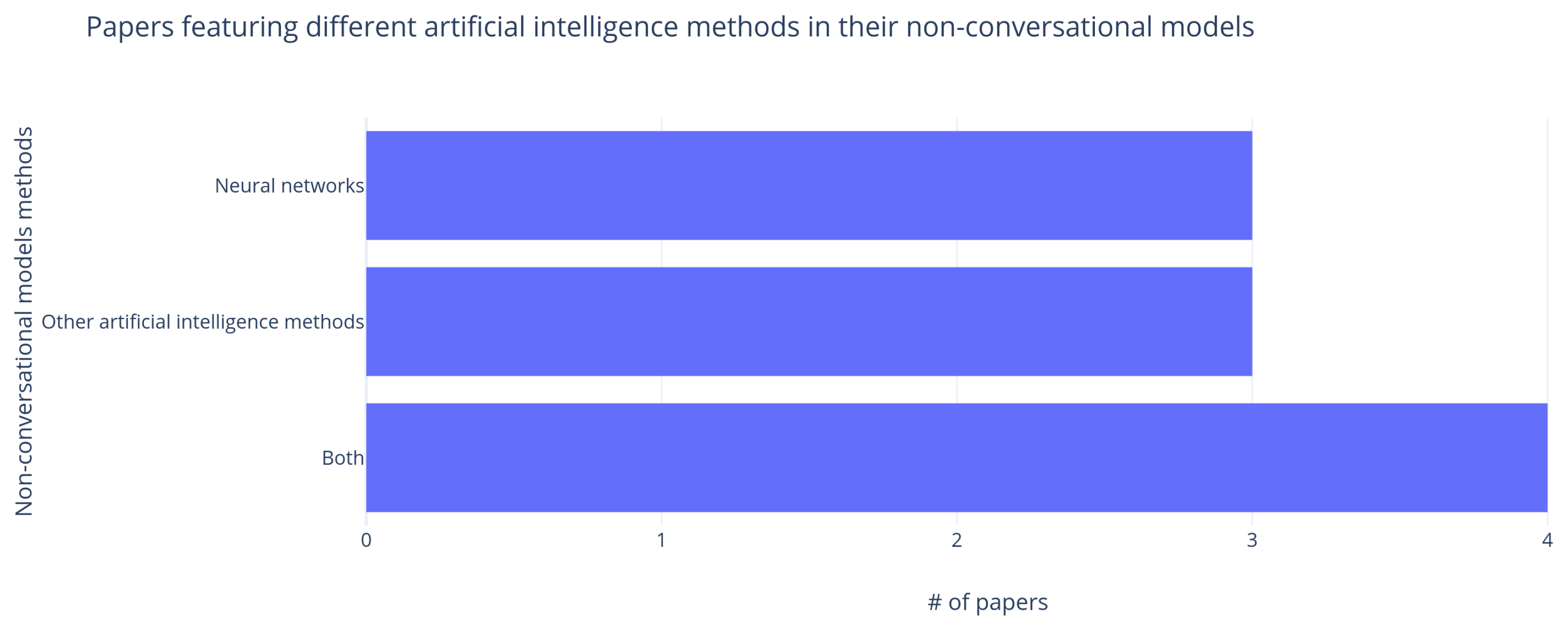

Section 3 presents the results, focusing on search results and paper selection, description of selected papers, main findings, and answers to the research question. In

Section 4, the work is discussed and compared to existing review, the technology is evaluated and advantages and disadvantages are listed. The paper finishes with

Section 5.