The Role of Digital Maturity Assessment in Technology Interventions with Industrial Internet Playground

Abstract

1. Introduction

2. Materials and Methods

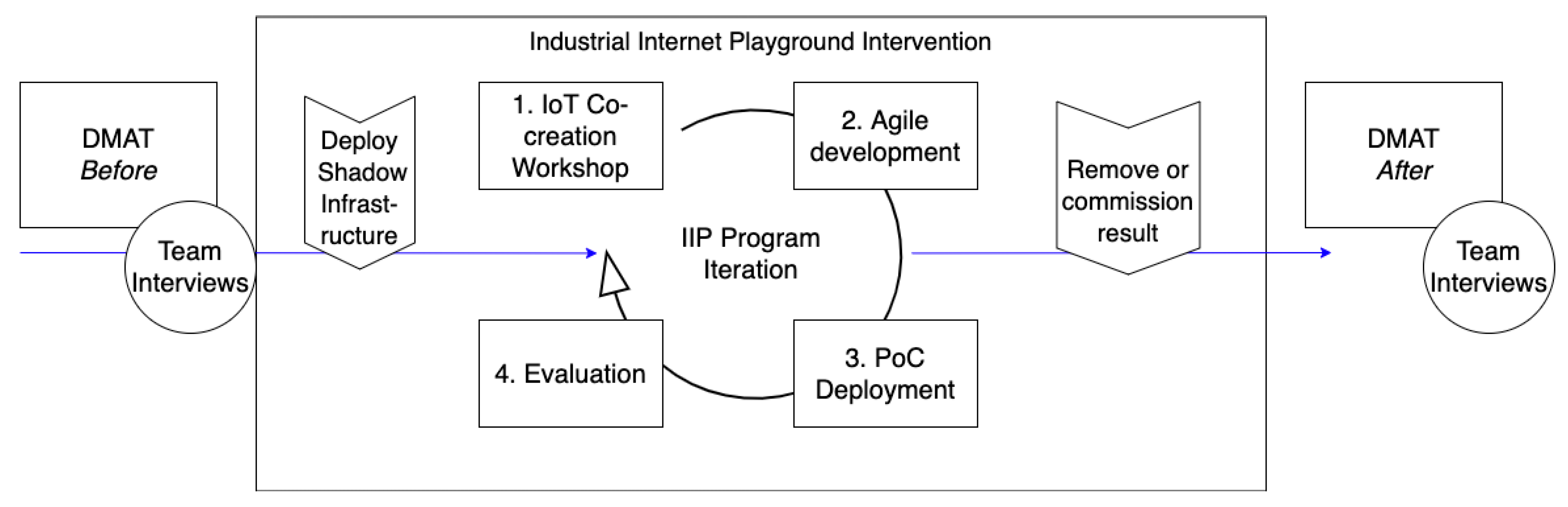

2.1. The Industrial Internet Playground (IIP)

2.2. Shadow Infrastructure (SI)

2.3. The Digital Maturity Assessment Tool

- Leadership (difficulty in creating urgency, vision and direction for the digital transformation)

- Institutional (resistance to change in the form of attitudes of old employees, legacy technology, innovation fatigue and politics) [45].

- Digital capabilities (e.g., strategy, technological expertise, business models, customer experience)

- Leadership capabilities (e.g., governance, change management, culture) [46].

1. Strategy

2. Culture

3. Organisation

4. Process

5. Technology

6. Customers and Partners

3. Results

4. Analysis and Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Lasi, H.; Fettke, P.; Kemper, H.G.; Feld, T.; Hoffmann, M. Industry 4.0. Bus. Inf. Syst. Eng. 2014, 6, 239–242.

- Vermesan, O.; Friess, P.; Guillemin, P.; Gusmeroli, S.; Sundmaeker, H.; Bassi, A.; Jubert, I.; Mazura, M.; Harrison, M.; Eisenhauer, M.; et al. Internet of Things Strategic Research Roadmap. In Internet of Things—Global Technological and Societal Trends; River Publishers: Nordjylland, Denmark, 2009.

- Morrar, R.; Arman, H. The Fourth Industrial Revolution (Industry 4.0): A Social Innovation Perspective. Technol. Innov. Manag. Rev. 2017, 7, 12–20.

- MPI Group. The Internet of Things Has Finally Arrived. Available online: http://mpi-group.com/wp-content/uploads/2016/01/IoT-Summary2016.pdf (accessed on 27 March 2021).

- Boyes, H.; Hallaq, B.; Cunningham, J.; Watson, T. The industrial internet of things (IIoT): An analysis framework. Comput. Ind. 2018, 101, 1–12.

- Dickson, B. What Is the Difference between Greenfield and Brownfield IoT Development? TechTalks. 2016. Available online: https://bdtechtalks.com/2016/09/22/what-is-the-difference-between-greenfield-and-brownfield-iot-development/ (accessed on 6 June 2020).

- Hernández, E.; Senna, P.; Silva, D.; Rebelo, R.; Barros, A.C.; Toscano, C. Implementing RAMI4.0 in Production—A Multi-case Study. In Proceedings of the 10th World Congress on Engineering Asset Management (WCEAM 2015), Tampere, Finland, 28–30 September 2015; Springer: Berlin/Heidelberg, Germany, 2020; pp. 49–56.

- Pessot, E.; Zangiacomi, A.; Battistella, C.; Rocchi, V.; Sala, A.; Sacco, M. What matters in implementing the factory of the future: Insights from a survey in European manufacturing regions. J. Manuf. Technol. Manag. 2020.

- Frysak, J.; Kaar, C.; Stary, C. Benefits and pitfalls applying RAMI4.0. In Proceedings of the IEEE Industrial Cyber-Physical Systems, ICPS 2018, St. Petersburg, Russia, 15–18 May 2018; Institute of Electrical and Electronics Engineers Inc.: New York, NY, USA, 2018; pp. 32–37.

- Ferreira, F.; Faria, J.; Azevedo, A.; Marques, A.L. Industry 4.0 as enabler for effective manufacturing virtual enterprises. In Proceedings of the IFIP Advances in Information and Communication Technology, London, UK, 15–17 June 2016; Volume 480, pp. 274–285.

- Mu, E.; Kirsch, L.J.; Butler, B.S. The assimilation of enterprise information system: An interpretation systems perspective. Inf. Manag. 2015, 52, 359–370.

- Carr, N.G. IT Doesn’t Matter. Harv. Bus. Rev. 2003, 81, 24–38.

- Yi, M.Y.; Jackson, J.D.; Park, J.S.; Probst, J.C. Understanding information technology acceptance by individual professionals: Toward an integrative view. Inf. Manag. 2006, 43, 350–363.

- Rogers, E.M. Diffusion of Innovations, 4th ed.; Simon & Schuster: New York, NY, USA, 2003.

- Fishbein, M.; Ajzen, I. Belief, Attitude, Intention and Behaviour: An Introduction to Theory and Research; Addison-Wesley: Boston, MA, USA, 1975; Volume 27.

- Ajzen, I. The theory of planned behavior. Organ. Behav. Hum. Decis. Process. 1991, 50, 179–211.

- Taylor, S.; Todd, P.A. Understanding information technology usage: A test of competing models. Inf. Syst. Res. 1995, 6, 144–176.

- Bagozzi, R.P. The legacy of the technology acceptance model and a proposal for a paradigm shift. J. Assoc. Inf. Syst. 2007, 8, 244–254.

- Davis, F.; Bagozzi, R.; Warshaw, P. User Acceptance of Computer Technology: A Comparison of Two Theoretical Models. Manag. Sci. 1989, 35, 982–1003.

- Venkatesh, V.; Davis, F.D. Theoretical extension of the Technology Acceptance Model: Four longitudinal field studies. Manag. Sci. 2000, 46, 186–204.

- Venkatesh, V.; Bala, H. Technology acceptance model 3 and a research agenda on interventions. Decis. Sci. 2008, 39, 273–315.

- Lai, P. The Literature Review of Technology Adoption Models and Theories for the Novelty Technology. J. Inf. Syst. Technol. Manag. 2017, 14, 21–38.

- Aagaard, A. Digital Business Models—Driving Transforming and Innovation; Palgrave MacMillan: London, UK, 2019.

- Morrish, J.; Figueredo, K.; Haldeman, S.; Brandt, V. The Industrial Internet of Things, Volume B01: Business Strategy and Innovation Framework; Industrial Internet Consortium: Needham, MA, USA, 2016; Volume 48.

- DIN SPEC 91345—2016-04—Beuth.De. Available online: https://www.beuth.de/de/technische-regel/din-spec-91345/250940128 (accessed on 30 March 2021).

- Collins, T. IoT Methodology: A Methodology for Building the Internet of Things. 2017. Available online: http://www.iotmethodology.com/ (accessed on 30 March 2021).

- Jones, T.S.; Richey, R.C. Rapid prototyping methodology in action: A developmental study. Educ. Technol. Res. Dev. 2000, 48, 63–80.

- Venable, J.; Pries-Heje, J.; Baskerville, R. FEDS: A Framework for Evaluation in Design Science Research. Eur. J. Inf. Syst. 2016, 25, 77–89.

- Ståhlbröst, A.; Holst, M. The Living Lab Methodology Handbook. 2012. Available online: http://www.ltu.se/cms_fs/1.101555!/file/LivingLabsMethodologyBook_web.pdf (accessed on 9 September 2019).

- Chesbrough, H. Open Innovation Results: Going beyond the Hype and Getting down to Business; Oxford University Press: Oxford, UK, 2019; ISBN 9780198841906.

- Ståhlbröst, A.; Bergvall-Kåreborn, B. FormIT: An Approach to User Involvement. In European Living Labs: A New Approach for Human Centric Regional Innovation; Wissenschaftlicher Verlag: New York, NY, USA, 2008; pp. 63–75. ISBN 978-3-86573-343-6.

- Corallo, A.; Latino, M.E.; Neglia, G. Methodology for User-Centered Innovation in Industrial Living Lab. ISRN Ind. Eng. 2013, 2013, 1–8.

- Siemens Technology at the LivingLab. Siemens Österreich. Available online: https://new.siemens.com/at/en/company/topic-areas/living-lab-vienna/siemens-technology-at-the-living-lab.html (accessed on 23 September 2020).

- Rentrop, C.; Zimmermann, S. Shadow IT: Management and Control of unofficial IT Standardization of Applications. In Proceedings of the 6th International Conference on Digital Society, Valencia, Spain, 30 January–4 February 2012; pp. 98–102.

- De Carolis, A.; Macchi, M.; Negri, E.; Terzi, S. A maturity model for assessing the digital readiness of manufacturing companies. In IFIP Advances in Information and Communication Technology; Springer: New York, NY, USA, 2017; Volume 513, pp. 13–20.

- Wendler, R. The maturity of maturity model research: A systematic mapping study. In Information and Software Technology; Elsevier: Amsterdam, The Netherlands, 2012; Volume 54, pp. 1317–1339.

- Becker, J.; Knackstedt, R.; Pöppelbuß, J. Developing Maturity Models for IT Management. Bus. Inf. Syst. Eng. 2009, 1, 213–222.

- Schumacher, A.; Erol, S.; Sihn, W. A Maturity Model for Assessing Industry 4.0 Readiness and Maturity of Manufacturing Enterprises. In Procedia CIRP; Elsevier BV: Amsterdam, The Netherlands, 2016; Volume 52, pp. 161–166.

- Zare, M.S.; Tahmasebi, R.; Yazdani, H. Maturity assessment of HRM processes based on HR process survey tool: A case study. Bus. Process Manag. J. 2018, 24, 610–634.

- Bates, S.; White, B. Everything Changed. Or Did It? Compare Your Sector; Harvey Nash/KPMG CIO Survey. 2020. Available online: https://assets.kpmg/content/dam/kpmg/xx/pdf/2020/10/harvey-nash-kpmg-cio-survey-2020.pdf (accessed on 16 February 2021).

- Bughin, J.; Hazan, E.; Lund, S.; Dahlström, P.; Wiesinger, A.; Subramaniam, A. Skill Shift: Automation and the Workforce of the Future. Available online: https://www.mckinsey.com/featured-insights/future-of-organizations-and-work/skill-shift-automation-and-the-future-of-the-workforce (accessed on 16 February 2021).

- Capgemini Consulting. Digitalizing HR: Connecting the Workforce—Global HR Barometer Research Report. Available online: https://www.capgemini.com/consulting-nl/wp-content/uploads/sites/33/2017/08/hr_barometer_rapport_0.pdf (accessed on 27 March 2021).

- Maier, A.M.; Moultrie, J.; Clarkson, P.J. Assessing organizational capabilities: Reviewing and guiding the development of maturity grids. IEEE Trans. Eng. Manag. 2012, 59, 138–159.

- Westerman, G.; Bonnet, D.; McAfee, A. Leading Digital: Turning Technology into Business Transformation. Harv. Bus. Rev. 2015, 52, 10.

- Fitzgerald, M.K.; Didier Bonnet, N.; Welch, M. Embracing Digital Technology. MIT Sloan Manag. Rev. 2013, 55, 1–26.

- Rossmann, A. Digital Maturity: Conceptualization and Measurement Model. In Proceedings of the 39th International Conference on Information Systems, San Francisco, CA, USA, 13–16 December 2018; Volume 2.

- Fink, A. Conducting Research Literature Reviews: From the Internet to Paper, 2nd ed.; Sage Publications: Newbury Park, CA, USA, 2005; ISBN 9781544318479.

- Mithas, S.; Tafti, A.; Mitchell, W. How a Firm’s Competitive Environment and Digital Strategic Posture Influence Digital Business Strategy. MIS Q. 2013, 37, 511–536.

- Kane, G.C.; Palmer, D.; Phillips, A.N.; Kiron, D.; Buckley, N. Achieving Digital Maturity. Adapting Your Company to a Changing World. Available online: https://sloanreview.mit.edu/projects/achieving-digital-maturity/ (accessed on 27 March 2021).

- MITSloan. Interactive Charts: Reaching beyond Digital Transformation. Available online: https://sloanreview.mit.edu/2017-digital-business-interactive-tool/ (accessed on 27 March 2021).

- Deuze, M. Participation, remediation, bricolage: Considering principal components of a digital culture. Inf. Soc. 2006, 22, 63–75.

- Kane, G.C.; Palmer, D.; Philips, A.N.; Kiron, D.; Buckley, N. Strategy, Not Technology, Drives Digital Transformation. MIT Sloan Manag. Rev. 2015, 14, 1–25.

- Vey, K.; Fandel-Meyer, T.; Zipp, J.S.; Schneider, C. Learning & Development in Times of Digital Transformation: Facilitating a Culture of Change and Innovation. Int. J. Adv. Corp. Learn. 2017, 10, 22.

- Johansson, J.; Abrahamsson, L.; Kåreborn, B.B.; Fältholm, Y.; Grane, C.; Wykowska, A. Work and Organization in a Digital Industrial Context. Manag. Rev. 2017, 28, 281–297.

- Eden, R.; Burton-Jones, A.; Casey, V.; Draheim, M. Digital Transformation Requires Workforce Transformation. MIS Q. Exec. 2019, 18, 4.

- Gölzer, P.; Fritzsche, A. Data-driven operations management: Organisational implications of the digital transformation in industrial practice. Prod. Plan. Control 2017, 28, 1332–1343.

- Scuotto, V.; Santoro, G.; Bresciani, S.; Del Giudice, M. Shifting intra- and inter-organizational innovation processes towards digital business: An empirical analysis of SMEs. Creat. Innov. Manag. 2017, 26, 247–255.

- Dremel, C.; Herterich, M.M.; Wulf, J.; Waizmann, J.C.; Brenner, W. How AUDI AG established big data analytics in its digital transformation. MIS Q. Exec. 2017, 16, 81–100.

- Pousttchi, K.; Gleiss, A.; Buzzi, B.; Kohlhagen, M. Technology impact types for digital transformation. In Proceedings of the 21st IEEE Conference on Business Informatics, CBI 2019, Moscow, Russia, 15–17 July 2019; Institute of Electrical and Electronics Engineers Inc.: New York, NY, USA, 2019; Volume 1, pp. 487–494.

- Aagaard, A.; Presser, M.; Beliatis, M.; Mansour, H.; Nagy, S. A Tool for Internet of Things Digital Business Model Innovation. In Proceedings of the 2018 IEEE Globecom Workshops, GC Wkshps 2018, Abu Dhabi, United Arab Emirates, 9–13 December 2018; Institute of Electrical and Electronics Engineers Inc.: New York, NY, USA, 2019.

- Seddon, P.B.; Constantinidis, D.; Tamm, T.; Dod, H. How does business analytics contribute to business value? Inf. Syst. J. 2017, 27, 237–269.

- Setia, P.; Venkatesh, V.; Joglekar, S. Leveraging digital technologies: How information quality leads to localized capabilities and customer service performance. MIS Q. Manag. Inf. Syst. 2013, 37, 565–590.

- Accenture. Technology Vision for Insurance 2016: People First: The Primacy of People in the Age of Digital Insurance. Available online: https://www.accenture.com/t20160314T114937w/us-en/_acnmedia/Accenture/Omobono/TechnologyVision/pdf/Technology-Trends-Technology-Vision-2016.PDF (accessed on 30 March 2021).

- Sahu, N.; Deng, H.; Mollah, A. Investigating The Critical Success Factors Of Digital Transformation For Improving Customer Experience. Int. Conf. Inf. Resour. Manag. 2018, 18, 13.

- Beckers, S.F.M.; Van Doorn, J.; Verhoef, P.C. Good, better, engaged? The effect of company-initiated customer engagement behavior on shareholder value. J. Acad. Mark. Sci. 2017, 46, 366–383.

- Verhoef, P.C.; Broekhuizen, T.; Bart, Y.; Bhattacharya, A.; Qi, D.J.; Fabian, N.; Haenlein, M. Digital transformation: A multidisciplinary reflection and research agenda. J. Bus. Res. 2021, 122, 889–901.

| Access method | Address pattern |
|---|---|
| UI view | `https://{{shadow-infra-1-host}}/app/<domain>/<environment>/<agent>/<construct>` |
| REST endpoint | `https://{{shadow-infra-1-host}}/api/<domain>/<environment>/<agent>/<construct>` |
| Websocket URL | `wss://{{shadow-infra-1-host}}/<domain>/<environment>/<agent>/<construct>` |
| MQTT Subscription Topic | `subscribe <domain>/<environment>/<agent>/<construct>` |
| Database collection | `mongodb://<domain>/<environment>/<agent>/<construct>` |
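Every access method in the table above wraps the same four-segment path `<domain>/<environment>/<agent>/<construct>`, so one SI node can be addressed consistently across the UI, REST, Websocket, MQTT and database layers. The sketch below illustrates that idea under stated assumptions: `ConstructAddress`, `endpoints`, `DEFAULT_HOST` and the example segment values are hypothetical names chosen for illustration, the host stands in for the `{{shadow-infra-1-host}}` template variable, and the database entry copies the table's notation literally rather than forming a real MongoDB connection string.

```python
from dataclasses import dataclass

# Hypothetical stand-in for the {{shadow-infra-1-host}} template variable.
DEFAULT_HOST = "shadow-infra-1.example.com"


@dataclass(frozen=True)
class ConstructAddress:
    """A node in the SI namespace: <domain>/<environment>/<agent>/<construct>."""

    domain: str
    environment: str
    agent: str
    construct: str

    def path(self) -> str:
        # The same four-segment path is shared by every access method.
        return "/".join((self.domain, self.environment, self.agent, self.construct))

    def endpoints(self, host: str = DEFAULT_HOST) -> dict[str, str]:
        """Expand the path into one address per access method in the table."""
        p = self.path()
        return {
            "ui": f"https://{host}/app/{p}",
            "rest": f"https://{host}/api/{p}",
            "websocket": f"wss://{host}/{p}",
            "mqtt_topic": p,  # topic string an MQTT client would subscribe to
            "db_collection": f"mongodb://{p}",  # copies the table's notation literally
        }


if __name__ == "__main__":
    # Hypothetical example values for the four path segments.
    node = ConstructAddress("factory", "line-1", "plc-translator", "moisture")
    for method, address in node.endpoints().items():
        print(f"{method:14} {address}")
```

Keeping the hierarchy in a single value object means that adding another access method only requires deciding how to wrap the shared path.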

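| Strategy | Culture | Organisation |
|---|---|---|
| 3.25 | 3.20 | 3.40 |

| Process | Technology | Customers and Partners |
|---|---|---|
| 3.25 | 2.75 | 3.25 |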

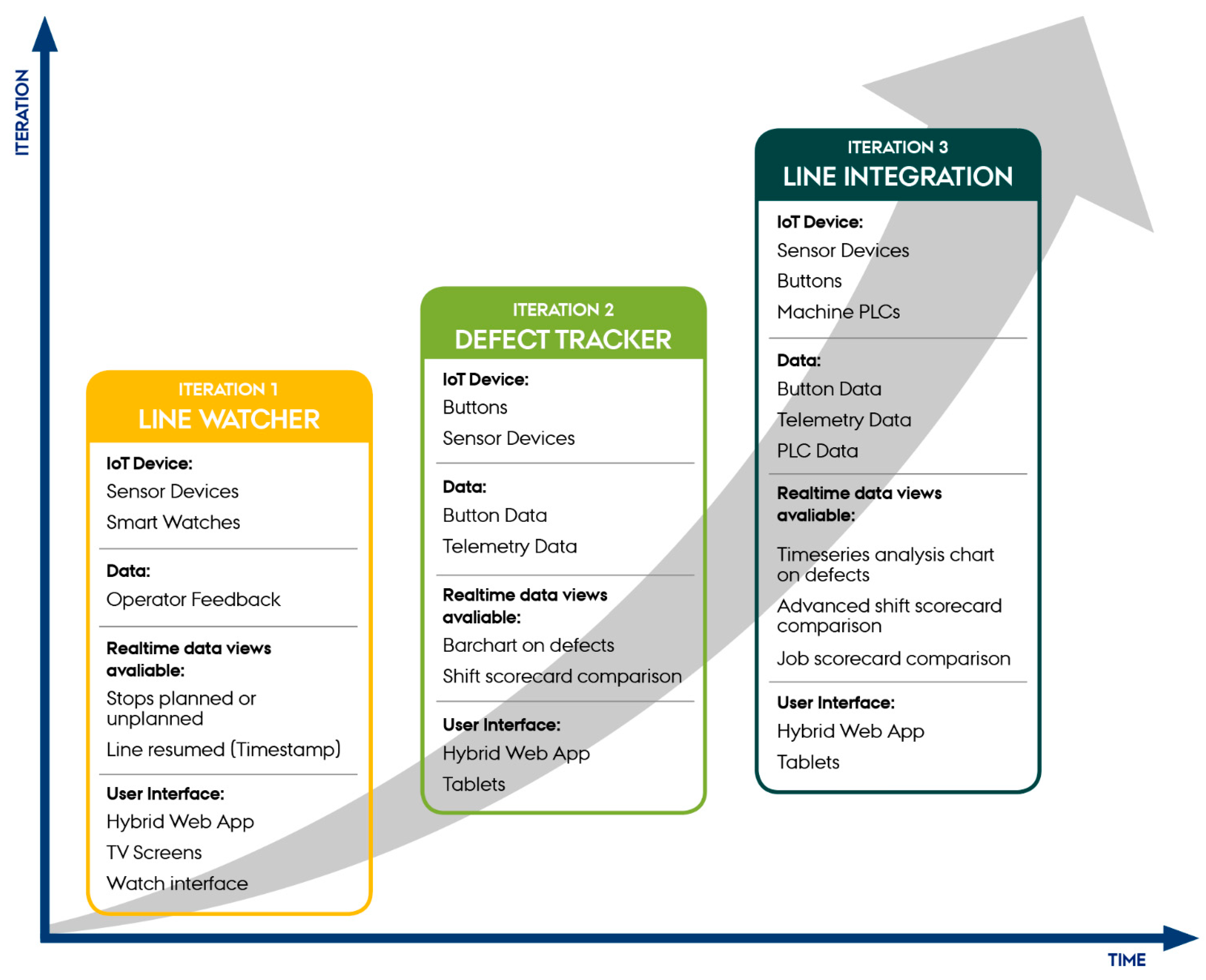

| Iteration | 1 | 2 | 3 |
|---|---|---|---|
| Topic | Proactive maintenance | Quality control | Operations |
| Problem | Maintenance call-outs are slow, reactive and not trackable. Past operational data is not easy for maintenance staff to access and understand | The QC station is manual, and no data is recorded on the scale or frequency of defects encountered | Acquiring defect data and correlating it with mixing-process data from the ERP system takes too long to react in a timely way |
| Solution | Linewatcher: a wearable device that operators can use as an ‘andon’ notifier of production start, stop and support requests | Defect tracker buttons: programmable buttons that log defect type and timestamp | Line integration PLC microservice: integrates PLC data on moisture, line speed and stoppage reasons to indicate defect causes |
| Unique technologies | Android wearable app, Live Dashboard | Particle Argon microcontroller, Live Dashboard, Historic Dashboard | PLC telemetry translator, Live Dashboard, Job and Shift Scorecard |
| SI components | Hybrid web app, REST API, Smart TV | Microcontroller library, REST API, Tablet, Scorecard analysis | Microservice, MQTT API, Tablet, Scorecard analysis |
| Ability to reach users’ needs | No: the wearable was too unnatural to wear and did not provide enough detailed input options | Yes: accepted and used beyond the trial period to understand current issues | Yes: accepted and used in the complete correlation dashboard monitor |
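Iterations 2 and 3 combine timestamped defect-button presses with PLC telemetry such as moisture to indicate defect causes. One common way to line up two such event streams is an "as-of" join, which pairs each defect with the most recent telemetry reading taken at or before it. The sketch below shows that technique; it is an illustration, not the authors' implementation, and `DefectEvent`, `Reading`, `attach_latest_reading` and the 60-second `max_age` cut-off are all hypothetical.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Optional


@dataclass
class DefectEvent:
    """A defect-tracker button press: timestamp (seconds) and defect type."""
    timestamp: float
    defect_type: str


@dataclass
class Reading:
    """One PLC telemetry sample, e.g. mixer moisture (hypothetical units)."""
    timestamp: float
    value: float


def attach_latest_reading(
    defects: list[DefectEvent],
    readings: list[Reading],
    max_age: float = 60.0,  # hypothetical staleness limit in seconds
) -> list[tuple[DefectEvent, Optional[float]]]:
    """As-of join: pair each defect with the newest reading at or before it.

    A defect gets None if no reading precedes it or the newest one is
    older than max_age seconds.
    """
    readings = sorted(readings, key=lambda r: r.timestamp)
    times = [r.timestamp for r in readings]
    result: list[tuple[DefectEvent, Optional[float]]] = []
    for event in defects:
        # Index of the last reading whose timestamp <= event.timestamp.
        i = bisect_right(times, event.timestamp) - 1
        if i >= 0 and event.timestamp - times[i] <= max_age:
            result.append((event, readings[i].value))
        else:
            result.append((event, None))
    return result
```

Binary search over the sorted reading timestamps keeps each lookup logarithmic, which matters when telemetry is sampled far more often than defects are logged.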

Key Effects and Value Created in Staff Sections

| Production Operator Teams | Production Manager Team | Technical Team | C-Level |
|---|---|---|---|
| A more comprehensive understanding of the overall impact of the specific quality processes for which each operator is responsible. Easier monitoring of product up-time and defects, thanks to the automated button registration of manual visual defect/error detection. Team leaders’ understanding of the production line settings and their effects is supported by the live visualisation of the production line in the hybrid web app. | Ability to analyse abstract machine data to identify trends in operational processes and procedures, e.g., for forecasting. Overview of production efficiency with a benchmark graph that continuously tracks product up-time and defects and compares them against the current recipe. An eye-opener that revealed more production line errors than previously assumed. Ability to compare the defects that operators register with the buttons on the production line with the overall number of rejects in the final quality control of the produced batch. Cause-and-effect insights through the automated visualisation of the correlation between environmental factors (telemetry data from sensors) and manual action factors (e.g., error detection buttons). Team meetings and planning are now supported by the live visualisation of the production line in the hybrid web app. | Knowledge of basic ways to collect data, to connect machines and users with IoT smart devices, and of the types of data that can be collected. Objective insights into process changes and machine configurations that enable data-based maintenance. Data to begin analysing how raw materials affect the processing and mixing parameters and, in turn, end quality. Enables operators to adjust configurations in an informed way. Closer collaboration with machine suppliers based on a joint interest in making equipment data an asset. | Data insights that are valuable when approaching new international partners and customers. Data-driven strategic decision-making becomes a reality, as no subjective/approximate estimates affect the calculated figures. |
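One effect reported for the production manager team is the ability to compare button-registered defects on the line with the number of rejects found at final quality control for each batch. A minimal sketch of that comparison follows, assuming each button press has already been tagged with a batch ID; the function name `capture_ratio` and both input shapes are hypothetical and not taken from the case company's system.

```python
from collections import Counter


def capture_ratio(button_batches: list[str], qc_rejects: dict[str, int]) -> dict[str, float]:
    """Per batch: button-registered defects divided by final-QC rejects.

    button_batches: one batch ID per defect-button press (hypothetical shape).
    qc_rejects: batch ID -> number of rejects counted at final quality control.
    Batches with zero final-QC rejects are skipped because the ratio is undefined.
    """
    presses = Counter(button_batches)  # missing batches count as 0 presses
    ratios: dict[str, float] = {}
    for batch, rejects in qc_rejects.items():
        if rejects == 0:
            continue
        ratios[batch] = presses[batch] / rejects
    return ratios


if __name__ == "__main__":
    # Hypothetical batch data for illustration only.
    print(capture_ratio(["A", "A", "B", "B", "B"], {"A": 4, "B": 2, "C": 0}))
```

A ratio below 1 would suggest that some rejects were not flagged on the line, while a ratio above 1 would mean operators registered more defects than final QC rejected; interpreting either case depends on how the case company counts presses and rejects.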

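| Score | Strategy | Culture | Organisation |
|---|---|---|---|
| Before | 3.25 | 3.20 | 3.40 |
| After | 4.00 | 3.60 | 3.80 |

| Score | Process | Technology | Customers and Partners |
|---|---|---|---|
| Before | 3.25 | 2.75 | 3.25 |
| After | 3.50 | 4.00 | 3.50 |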
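The change value attached to each dimension in the next table (e.g., Technology +1.25) is simply the after score minus the before score. The short check below recomputes those differences from the scores listed above; the dictionary names and `score_changes` are illustrative, and `Decimal` is used only so that results such as 3.60 − 3.20 come out exactly rather than with floating-point noise.

```python
from decimal import Decimal

# Before/after digital maturity scores per dimension, as listed above.
BEFORE = {"Strategy": "3.25", "Culture": "3.20", "Organisation": "3.40",
          "Process": "3.25", "Technology": "2.75", "Customers and Partners": "3.25"}
AFTER = {"Strategy": "4.00", "Culture": "3.60", "Organisation": "3.80",
         "Process": "3.50", "Technology": "4.00", "Customers and Partners": "3.50"}


def score_changes(before: dict[str, str], after: dict[str, str]) -> dict[str, Decimal]:
    """Return after minus before for every dimension, computed exactly."""
    return {dim: Decimal(after[dim]) - Decimal(before[dim]) for dim in before}


if __name__ == "__main__":
    for dim, delta in score_changes(BEFORE, AFTER).items():
        print(f"{dim}: {delta:+.2f}")
```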

| Dimension/Change | Company’s Influence on POC | Direct Technology Change | Impact of Intervention |
|---|---|---|---|
| Strategy +0.75 | Low digital capabilities resulted in weak influence over the direction of the POC. No aligned vision across stakeholders | No immediate change measured | Raised awareness of the complexity of the systems (production, support, IT) and of the scale at which change must be considered |
| Culture +0.4 | Digital culture was minimal, so user experience became a priority to reduce “data overload” for users | No immediate change measured | Operators’ increasing willingness to contribute during the intervention, and their acceptance of the POC, indicate change, but whether it is sustainable is unclear |
| Organisation +0.4 | Stakeholders understood weak points and problem areas, which resulted in a common requirement for each POC | Potential new way for the production team to carry out weekly meetings and root-cause analysis | Multiple departments worked together to carry out the intervention; however, the final artefact benefited only one team |
| Process +0.25 | Critical importance, owing to the realisation of bottleneck issues and clearly wasteful manual methods documented through co-creation | Introduction of data logger buttons, user interface screens, an IoT Platform and new integrations with the PLC | A complete digital solution with data-driven reporting was devised to replace subjective process configurations |
| Technology +1.25 | Good level of digitisation knowledge (automation, sensors), low digitalisation (IT/IS). Designs were limited to spreadsheet and web browser formats for accessibility | New addition of sensing, user interfaces and data-driven dashboards to operations | The new technologies encouraged digitalisation of existing processes and demonstrated the potential of new methods (e.g., proactive maintenance) |
| Customers and Partners +0.25 | Multi-stage production relied on a range of international suppliers, yet very little was fully digitalised and ready to be integrated with SI | New integration between PLCs and IoT suppliers required clearer roles and responsibilities than the typical way of working offered | Allowed one supplier to develop a new feature in its product. The case company found that this sort of innovation benefited the supplier more than itself |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Aagaard, A.; Presser, M.; Collins, T.; Beliatis, M.; Skou, A.K.; Jakobsen, E.M. The Role of Digital Maturity Assessment in Technology Interventions with Industrial Internet Playground. Electronics 2021, 10, 1134. https://doi.org/10.3390/electronics10101134
