1. Introduction

Currently, the world is facing the challenges posed by the COVID-19 pandemic [1]. In the last few months, almost the entire population of the world has been affected in its day-to-day activities. Nowadays, people are working, studying, shopping and socializing from a distance. The need for physical distancing has also affected people's emotions and their expression. Social media platforms have become one of the main outlets on which people express their thoughts, sentiments and emotions regarding the pandemic situation. Recent studies also show that social media has been one of the main channels for misinformation [2], especially during the ongoing pandemic crisis. Besides this, social media channels have been used by relevant Public Health Authorities to distribute information to the wider public [3]. Kosova, as a young country, has been following these trends. The National Institute of Public Health of Kosova has been using its Facebook page to disseminate information and recommendations daily regarding the pandemic situation. These posts have generated much engagement with the local population in terms of impressions and comments, in which the general public shared their thoughts, emotions and sentiments regarding the ongoing pandemic. The social engagement on Facebook around the Public Health Institute posts created a rich and diverse set of data that captures the overall public discourse and sentiment around the COVID-19 pandemic in the Albanian language.

Sentiment analysis is defined within academia as the computational examination of end-user opinions, attitudes and emotions expressed towards a particular topic or event [4]. Sentiment analysis systems use various learning approaches to detect sentiment from textual data, including lexicon-based [5], machine/deep learning [6,7], a combination of lexicon and machine learning [8], and concept-based learning approaches [9,10]. Sentiment analysis has become an important field of research for machine learning applications. In particular, social media sentiment analysis has been one of the main fields of research during the current COVID-19 pandemic [1]. In these studies, the prime focus has been on assessing public sentiment in order to gain insights for making appropriate public health responses. Other areas where sentiment analysis is applied include election predictions [11], financial markets [12], and students' reviews [13,14], to name a few. A common denominator across these diverse application areas is that sentiment analysis is a valuable tool for providing accurate insights into general public opinion about particular topics of interest. The application of sentiment analysis is closely tied to the availability of data sets, which usually exist for high-resource languages. In our case, we were dealing with a relatively low-resource language, the Albanian language. Motivated by the possibility that sentiment analysis could provide insights into people's opinions during the pandemic and about the measures taken for its prevention, we designed this study, in which we created a data set and evaluated different deep learning models and conventional machine learning algorithms in a low-resource language such as the Albanian language. The main contributions of this article are as follows:

The collection of a large-scale dataset composed of 10,742 manually classified Facebook comments related to the COVID-19 pandemic. To the best of our knowledge, this is the first study that performed sentiment analysis of Facebook comments in a low-resource language such as the Albanian language.

A deep learning based sentiment analyser called AlbAna is proposed and validated on the collected and curated COVID-19 dataset.

An attention mechanism is used to characterize the word level interactions within a local and global context to capture the semantic meaning of words.

The rest of the paper is structured as follows: Section 2 presents related work, especially in the context of sentiment analysis in the Albanian language. In Section 3, we describe the methodology used to conduct the research. A description of the dataset and classifier models used to conduct the experiments is provided in Section 4. Section 5 presents the experimental results, followed by their discussion in Section 6. The paper concludes with some future directions presented in Section 7.

2. Related Work

Albanian is spoken by over 10 million speakers. It is the official language of Kosovo and Albania and one of the two official languages of North Macedonia, and it is spoken by Albanian communities in the Balkans and the wider region, as well as by the large Albanian migrant community residing mainly in European countries, America and Oceania. Albanian is an Indo-European language that forms an independent branch of its own, featuring lexical peculiarities that distinguish it from other languages [15].

Considering the advancement of research in Natural Language Processing (NLP), and in sentiment analysis in particular, for many widely spoken languages, NLP and sentiment analysis research on the Albanian language lags behind even some other low-resource languages.

Sentiment analysis research for the English language has already achieved significant results, and has advanced not only in adopting the latest theories in lexical analysis and machine learning (ML), but also at the application level [6,7,16,17,18,19].

In [16], the literature on sentiment analysis using different ML techniques over social media data, whether to predict epidemics and outbreaks or for other application domains, is surveyed. ML, linguistic-based and hybrid approaches for sentiment analysis are compared. ML approaches take precedence over linguistic-based ones except for short sentences. Classical ML techniques such as SVM, Naive Bayes, Logistic Regression, Random Forest and Decision Trees are each shown to be the most accurate for certain datasets and domains.

In [17], an analysis of 85.04M tweets about COVID-19 from 182 countries during March to June 2020 found that the distribution of sentiments varied over time and by country, thus revealing the public perception of emerging policies such as social distancing and remote work. The authors conclude that social media analysis for other platforms and languages is critical for identifying misinformation and understanding online discourse.

In [18], Facebook pages of Public Health Authorities (PHAs) and the public response in Singapore, the United States, and England from January 2019 to March 2020 are analyzed in terms of their outreach effects. Among the metrics measured are mean posts per day, ranging from 1.4 to 5; mean comments per post, ranging from 12.5 to 255.3; mean sentiment polarity; the ratio of positive to negative sentiments, ranging from 0.55 to 0.94; and toxicity in comments, which turned out to be rare across all PHAs.

In [6], the authors seek to understand the usefulness or harm of tweets by identifying sentiments and opinions on themes of serious concern such as pandemics. The proposed model for sentiment analysis uses deep learning classifiers with an accuracy of up to 81%. Another proposed model is based on fuzzy logic and is implemented with SVM, reaching an accuracy of 79%.

In [19], sentiment analysis of Tweets about Coronavirus using Naive Bayes and Logistic Regression is presented. Tweets of varying lengths, that is, less than 77 characters (small to medium) and less than 120 characters (longer), are analyzed separately. Naive Bayes performed better at classifying the sentiment of small to medium size Coronavirus Tweets, with an accuracy of 91%. For longer Tweets, both methods showed weak performance, with an accuracy not exceeding 57%.

The reaction of people from different cultures to the Coronavirus, as expressed on social media, and their attitudes towards the actions taken by different countries are analyzed in [7]. Tweets related to COVID-19 were collected for six neighboring countries with similar cultures and circumstances, including Pakistan, India, Norway, Sweden, USA and Canada. Three different deep learning models (DNN, LSTM, and CNN), along with three general-purpose word embedding techniques, namely fastText, GloVe and GloVe for Twitter, were employed for sentiment analysis. The best performance, an F1-score of 82.4%, was achieved by LSTM with fastText.

Work on sentiment analysis in other languages is also growing, for example on German [20,21], Swedish [22,23], and multilingual social media posts [24]. A detailed description of past and recent advancements in multilingual sentiment analysis, covering both formal and informal language used on online social platforms, is given in the survey by Lo et al. [25].

There are only a few works on sentiment analysis (opinion mining) in the Albanian language [26,27,28], as well as a few related to emotion detection in the Albanian language [29,30].

In [26], an ML model is developed to classify documents as having positive or negative sentiment. The corpus built to develop the model consists of 400 documents covering five different topics, each topic represented by 80 documents tagged evenly with positive and negative sentiment. Six different ML algorithms, namely Bayesian Logistic Regression, Logistic Regression, SVM, Voted Perceptron, Naive Bayes and Hyper Pipes, are used for classification, performing with 86% to 92% accuracy depending on the topic. The whole corpus, which consists of political news articles, is characterized by complex language and a very rich technical vocabulary. The paper concludes that a larger dataset in the Albanian language is needed to achieve a high-performance sentiment classifier.

In [27], a comprehensive selection of ML algorithms is evaluated for opinion mining in the Albanian language, resulting in five best-performing algorithms (Logistic and Multi Class Classifier, Hyper Pipes, RBF Classifier, and RBF Network) with 79% to 94% of correctly classified instances. The opinions are classified as positive or negative. The classification model is developed over a corpus of 500 newspaper articles in Albanian covering 5 different subjects, each with a balanced set of articles with positive and negative opinions. The results also varied from subject to subject. This research is later extended from in-domain corpora to multi-domain corpora combining opinions from 5 different topics [28]. All the corpora are used to train and test 50 classification algorithms implemented in Weka for opinion mining. The algorithms perform better on the in-domain corpora than on the multi-domain corpora. As the authors state, a bigger corpus in the Albanian language could provide a clearer picture of the performance of classification algorithms for opinion mining.

In [29], a CNN sentence-based classifier is developed to classify a given text fragment into one of six pre-defined emotion classes based on Ekman's model: joy, fear, disgust, anger, shame and sadness. Experimental evaluation shows that a deep learning model (CNN), with classification accuracy of emotions ranging from 67% to 92.4%, overall outperforms three classical classification algorithms: Naive Bayes (NB), Instance-based learner (IBK), and Support Vector Machines (SMO). Findings related to the impact of text length on classification are also presented. Stemming the text prior to classification improves the accuracy. Another contribution is the corpus built to develop the model, comprising some 6000 posts by politicians on Facebook in the Albanian language. Further, in [30], the authors extend their framework with clustering to extract representative sets of generic words for a given emotion class. The authors list deep neural network architectures that take the sequential nature of text data into account, such as LSTM, as worth considering for an emotion detection model in the future.

Our approach follows the rationale that a larger dataset is a prerequisite for developing a model that is not prone to overfitting. Recalling the classification results in the related work mentioned above, there is a large variation in accuracy between distinct sub-datasets that each contain only a few hundred tagged items. Moreover, the sequential ordering of text should be learned from, and hence the use of deep neural networks for the model, together with NLP-based representation techniques, that is, static and contextualized word embeddings, is unavoidable. Multi-class classification into positive, neutral, and negative sentiments is also of interest to validate the applicability of our approach not only to sentiment analysis, but also to other domains such as emotion detection or other multi-class text mining tasks (e.g., reviews of items on a scale of 1 to 10) in the Albanian language.

3. Methodology

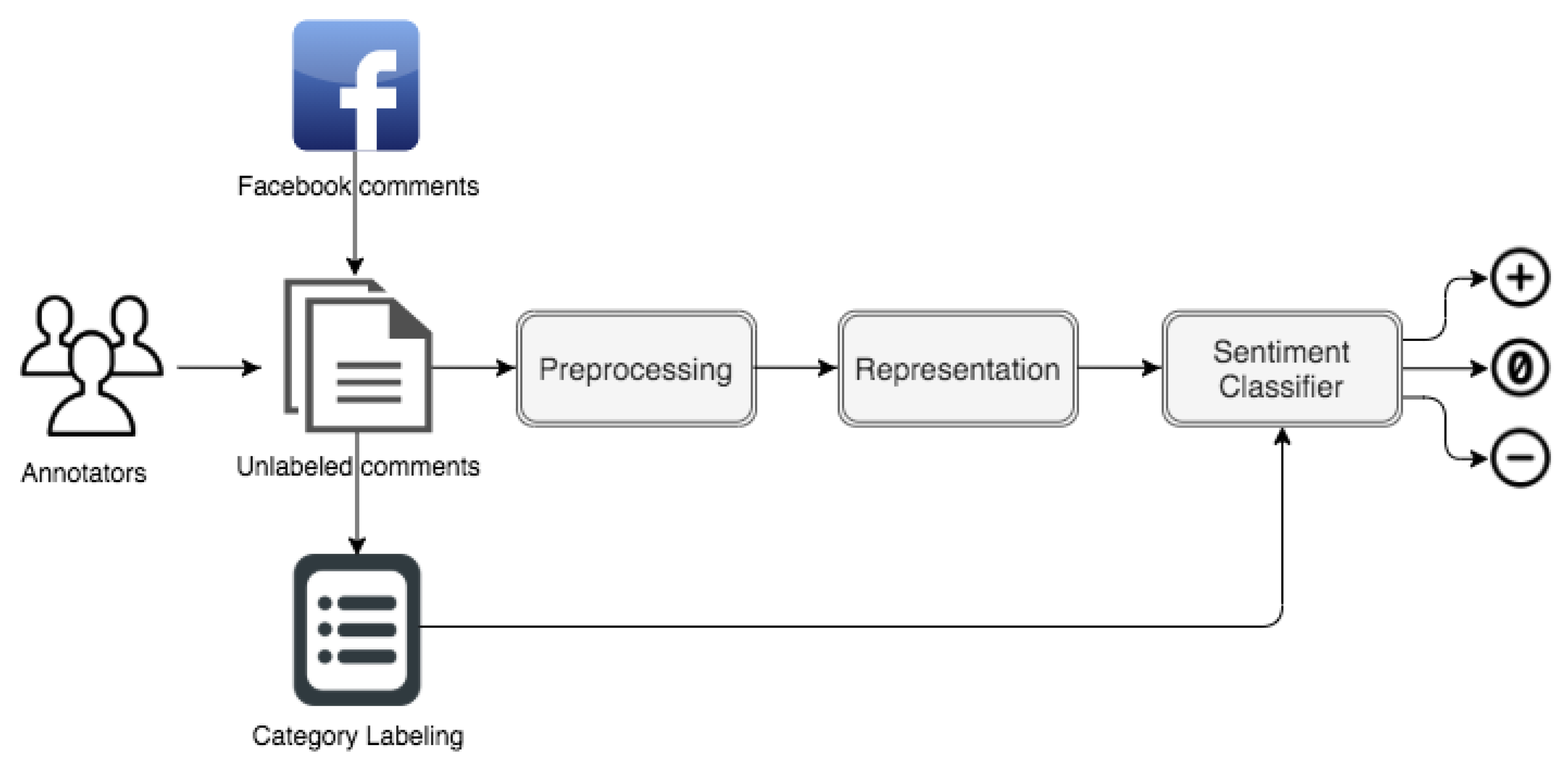

The research was carried out using a quantitative research method and comprised five phases: the first two constitute human-related tasks, and the remaining three involve a machine. More specifically, the first phase entailed collecting users' posts on Facebook from the day when the first few cases were reported (13 March until 15 August). The second phase consisted of labeling the collected posts. A manual labeling process took place in which three human annotators assessed the attitudes and opinions of users expressed in Facebook posts and classified them into positive, neutral or negative categories.

In the third phase, text pre-processing was performed on users' comments to remove punctuation, words with a length of two characters or fewer, and words not composed purely of alphabetic characters. Additionally, all text comments were converted to lowercase.
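The pre-processing rules above can be illustrated with a minimal Python sketch. The function name `preprocess` is hypothetical, and two details are assumptions not stated in the text: punctuation is replaced by a space rather than deleted, and only ASCII punctuation (`string.punctuation`) is handled.

```python
import string

# Assumption: each ASCII punctuation character is replaced by a space
# (rather than deleted), so that "mirë,faleminderit" splits into two words.
_PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def preprocess(comment: str) -> str:
    """Lowercase a comment, strip punctuation, and drop short or non-alphabetic words."""
    text = comment.lower().translate(_PUNCT_TO_SPACE)
    kept = [
        word
        for word in text.split()
        # Keep words longer than two characters that consist only of letters.
        # str.isalpha() is Unicode-aware, so Albanian letters such as "ë" and "ç" pass.
        if len(word) > 2 and word.isalpha()
    ]
    return " ".join(kept)


cleaned = preprocess("Shumë mirë, faleminderit! Covid-19 është këtu :)")
```

Because the filter requires purely alphabetic words, tokens that mix letters and digits are dropped entirely, which may or may not match the behaviour of the original pipeline.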

The fourth phase involved a representation model to transform the posts into a numerical format suitable for the sentiment classifiers. A bag-of-words representation model, implemented as term frequency-inverse document frequency (TF-IDF), was employed. Furthermore, we used a representation model that generates dense vector representations, known as word embeddings, for the words occurring in comments. A static pre-trained word embedding method, fastText, and a contextualized word embedding model, BERT, were used to learn and generate word vectors.
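As an illustration of the bag-of-words representation, the following sketch computes TF-IDF vectors with the Python standard library. It assumes one common weighting variant (raw term count multiplied by log(N/df)); the exact variant and tooling used in the study are not specified here, and the function name `tfidf_vectors` is hypothetical.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Return (vocabulary, vectors) using raw term count times log(N / df)."""
    tokenized = [doc.split() for doc in docs]
    vocab = sorted({token for doc in tokenized for token in doc})
    n_docs = len(tokenized)
    # Document frequency: in how many documents each token appears.
    df = Counter(token for doc in tokenized for token in set(doc))
    idf = {token: math.log(n_docs / df[token]) for token in vocab}
    vectors = []
    for doc in tokenized:
        counts = Counter(doc)
        # Tokens absent from a document get a count of 0 and hence weight 0.
        vectors.append([counts[token] * idf[token] for token in vocab])
    return vocab, vectors


# Toy comments, invented for illustration only.
vocab, vectors = tfidf_vectors(["mirë shumë mirë", "keq shumë"])
```

In this toy example the word shared by both comments receives weight zero, since it does not help to tell the two comments apart, while words unique to one comment are weighted by how often they occur in it.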

The final phase consists of the sentiment analyser, which aims to classify the sentiment of each user's comment into one of three categories, namely positive, neutral or negative. The analyser involves several classifiers, including deep neural networks as well as conventional machine learning algorithms, for sentiment orientation detection.

A high-level architecture of the proposed AlbAna analyser, involving all the phases elaborated above, is illustrated in Figure 1.

6. Discussion

Based on the experimental results provided in Section 5, deep learning models (1D-CNN, BiLSTM, Hybrid, and BERT) generally perform better than conventional machine learning models (SVM, NB, DT, RF). This can be attributed to the capabilities of deep neural networks in modeling textual comments. 1D-CNN is a classifier model that is good at identifying local features in the comments regardless of their position. The model also applies pooling to reduce the output dimensionality and extract the most salient features. BiLSTM, on the other hand, is a classification model that can capture contextual information in both forward and backward directions and learn long-range dependencies from the comments. The Hybrid classifier model takes advantage of both the complementary 1D-CNN and BiLSTM architectures, whereas the BERT classifier is capable of understanding the meaning of each word using a bidirectional strategy and an attention mechanism.

It is also interesting to note that the performance of all the deep learning models is improved by the attention mechanism. This mechanism is used to explicitly make the classifiers more robust in understanding the semantic meaning of each word within a local or global context. The empirical data (Figure 8) showed that the local context works better than the global one in our case.
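To make the distinction between global and local context concrete, the sketch below shows a generic dot-product attention pooling over word vectors. It is not the AlbAna formulation, which is not given in this section: it assumes one common reading in which global attention scores every word in a comment, while local attention scores only the words inside a window around a chosen position. The names `attention_pool`, `center` and `window` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(states, query, center=None, window=None):
    """Attention-weighted sum of word vectors.

    Global context: all positions are scored (center/window left as None).
    Local context (assumed reading): only positions within `window`
    words of `center` are scored.
    """
    if center is None or window is None:
        positions = list(range(len(states)))
    else:
        start = max(0, center - window)
        stop = min(len(states), center + window + 1)
        positions = list(range(start, stop))
    # Dot-product score between each selected word vector and the query.
    scores = [sum(h * q for h, q in zip(states[i], query)) for i in positions]
    weights = softmax(scores)
    context = [
        sum(w * states[i][d] for w, i in zip(weights, positions))
        for d in range(len(query))
    ]
    return context, dict(zip(positions, weights))


# Toy word vectors, invented for illustration only.
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
global_ctx, global_w = attention_pool(states, query=[0.0, 0.0])
local_ctx, local_w = attention_pool(states, query=[0.0, 0.0], center=0, window=1)
```

In a trained model the query and the word vectors would be learned, and the weights would concentrate on sentiment-bearing words; the zero query here simply yields uniform weights over whichever positions are in scope.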

Another interesting observation from the experimental results is the better class-wise performance achieved by deep learning classifiers compared to conventional machine learning models. A significant improvement is evident in classes with small numbers of comments. More specifically, the neutral class registered an average F1 score of 62.38% when deep learning classifiers with attention mechanism and domain embeddings were applied for sentiment classification, compared to an average F1 score of 55.91% obtained from conventional classifiers with distribution embeddings.
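For reference, class-wise F1 scores of the kind discussed above can be computed as in the following sketch. The labels and predictions are toy values invented for illustration and are not taken from the experiments; the function name `f1_per_class` is hypothetical.

```python
def f1_per_class(y_true, y_pred, labels):
    """Return a dict mapping each label to its F1 score."""
    scores = {}
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        # F1 is the harmonic mean of precision and recall.
        if precision + recall:
            scores[label] = 2 * precision * recall / (precision + recall)
        else:
            scores[label] = 0.0
    return scores


# Toy labels and predictions, invented for illustration only.
y_true = ["pos", "neu", "neg", "neu", "pos"]
y_pred = ["pos", "neu", "neu", "neg", "pos"]
per_class = f1_per_class(y_true, y_pred, ["pos", "neu", "neg"])
macro_f1 = sum(per_class.values()) / len(per_class)
```

Reporting the per-class values alongside an average makes it visible when a model performs well overall but poorly on one particular class.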

Despite the better performance of deep learning classifiers on our sentiment classification task, there are still a few advantages to using conventional machine learning models. One advantage is that these models are financially and computationally cheap, as they can run on a decent CPU and do not require expensive hardware such as GPUs and TPUs. Another advantage of conventional classifier models is interpretability. These models are easy to interpret and understand because they involve direct feature engineering, in contrast to deep learning models, which extract features automatically.

In general, the results are encouraging given that the Albanian language is considered a resource-constrained language and faces many challenges in natural language processing tasks in general, and in sentiment analysis in particular. These challenges involve both technical and linguistic aspects. From a technical point of view, systems for sentiment analysis of Albanian text face a scarcity of NLP tools and resources, such as tools for text stemming and lemmatization, lists of stop words, etc. From a linguistic perspective, various aspects affect the performance of sentiment analysis systems for the Albanian language, including negations (explicit and implicit), slang words/acronyms, figurative language (sarcasm, irony), etc.

7. Conclusions and Future Work

This article presented a sentiment analyser for extracting opinions, thoughts and attitudes of people expressed on social media related to the COVID-19 pandemic. Three deep neural networks utilizing an attention mechanism and a pre-trained embedding model (fastText) were trained and validated on a real-life large-scale dataset collected for this purpose. The dataset consisted of users' comments in the Albanian language posted on the Facebook page of the National Institute of Public Health of Kosova (NIPHK) during the period of March to August 2020. Our findings showed that the proposed sentiment analyser performed well, outperforming the baseline classifier on the collected dataset. Specifically, an F1 score of 72.09% was achieved by integrating a local attention mechanism with BiLSTM. These results are very promising considering that the dataset is composed of social media user-generated comments, which are typically written in an informal manner, without regard to the standards of the Albanian language, and which contain informal words and phrases such as slang words, emoticons, acronyms, etc. The findings validated the usefulness of our proposed approach as an effective solution for handling users' sentiment expressed on social media in low-resource languages.

In future work, we will focus on studying more colloquial textual data on social platforms such as Twitter and Instagram, and propose deep learning models that can be enriched with semantically rich representations [32] for effectively extracting people's opinions and attitudes. Another interesting aspect that will be investigated in the future is using emojis (emoticons) as input data, because they are also an effective way to express people's emotions and attitudes towards a certain event. Furthermore, the collected dataset is highly imbalanced, with the neutral class containing more than half of the comments; thus, future work will concentrate on applying data balancing strategies, including synthetic data generation and oversampling techniques such as SMOTE, as well as text generation models such as GPT-2.
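As a point of reference for the balancing strategies mentioned above, the sketch below implements plain random oversampling, the simplest such technique. It is not SMOTE, which synthesizes new points by interpolating between neighbours in feature space, and it is not part of the present study. The function name `random_oversample` and the toy data are hypothetical.

```python
import random
from collections import Counter


def random_oversample(texts, labels, seed=0):
    """Duplicate randomly chosen minority-class items until all classes match the largest."""
    rng = random.Random(seed)
    by_class = {}
    for text, label in zip(texts, labels):
        by_class.setdefault(label, []).append(text)
    target = max(len(items) for items in by_class.values())
    out_texts, out_labels = [], []
    for label, items in by_class.items():
        # Sample with replacement to fill the gap up to the majority-class size.
        extra = [rng.choice(items) for _ in range(target - len(items))]
        for text in items + extra:
            out_texts.append(text)
            out_labels.append(label)
    return out_texts, out_labels


# Toy data, invented for illustration only.
texts = ["a", "b", "c", "d", "e", "f"]
labels = ["neu", "neu", "neu", "neu", "pos", "neg"]
bal_texts, bal_labels = random_oversample(texts, labels)
balanced_counts = Counter(bal_labels)
```

Oversampling of this kind would normally be applied to the training split only, so that duplicated comments do not leak into the evaluation data.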