Multi-Agent Deep Deterministic Policy Gradient-Based Coordinated Control for Urban Expressway Entrance–Arterial Interfaces

Abstract

1. Introduction

- Control-unit scope remains narrow: Most studies use a single control strategy or pairwise RM–signal or RM–VSL schemes. They overlook the dual upstream influences and lack a unified, dynamic scheme that jointly optimizes VSL, RM, and intersection signals for the interface.

- Coupling mechanisms are suboptimal: Coordination often relies on fixed rules or one-way feedback. MPC/GA approaches require accurate models, heavy calibration, and nontrivial computation, which hinders real-time, bidirectional coupling under stochastic demand, mixed traffic, and partial observability.

- Robustness to phase transitions is insufficient: Single-point or weakly coordinated control struggles with capacity drops and instability; multi-agent decisions conflict without explicit safety/stability mediation, degrading throughput and elevating risk.

- A multi-agent architecture is formulated to jointly optimize variable speed limits, ramp metering, and intersection signals via MADDPG while representing dual upstream influences. The design resolves local conflicts, strengthens density regulation, and enhances control effectiveness under time-varying mixed conditions.

- A bidirectional coupling mechanism is proposed that consolidates local observations into a joint state and coordinates asynchronous control cycles through time-slice alignment and action holding. Unlike prior rule-based or MPC/GA approaches that rely on heavy calibration, this mechanism enables threshold-free real-time coordination while preserving feasibility.

- A multi-region coordination mechanism is proposed to achieve cooperative optimization across different spatial and temporal domains. It targets system-level rather than locally optimal traffic performance and mitigates the local optima, uncoordinated timings, and cascading breakdowns inherent in isolated control.

2. Literature Review

2.1. Applications of Reinforcement Learning at Intersections

2.2. Coordinated Control at Expressway–Urban Road Interfaces

3. Problem Description

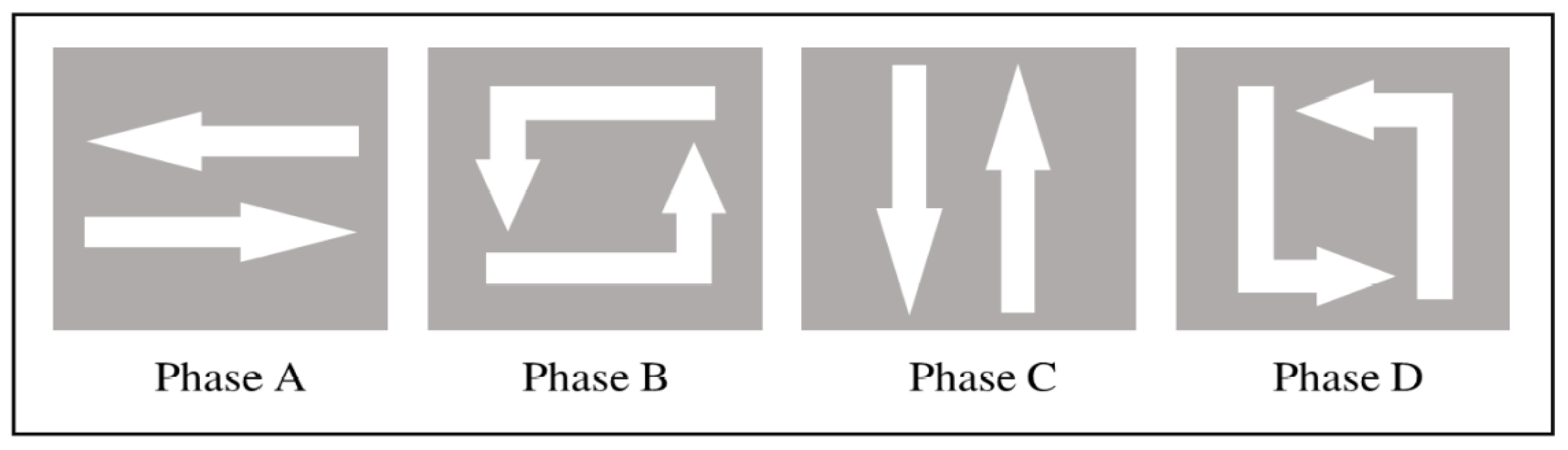

3.1. Interface Types and Study Area

3.2. Control Problem Characterization

3.3. Generalization of the Localized Modeling Approach

4. MADDPG-Based Coordinated Control Strategy

4.1. Agent Design

4.2. State Space

- (1) VSL state:

- (2) RM state:

- (3) ISC state:

4.3. Action Space

- (1) VSL action space:

- (2) RM action space:

- (3) ISC action space:

4.4. Asynchronous Control Cycle Coordination Mechanism

4.5. Reward Design

- Critical-density tracking: Penalizing the deviation of mainline density from the critical density keeps the mainline near the efficient operating point of the fundamental diagram. When density approaches or exceeds the critical value, the policy lowers the VSL and throttles ramp inflow to avoid breakdown.

- Speed homogenization: Penalizing speed heterogeneity across the VSL zone promotes smooth speed profiles, reducing disturbance gain and preventing stop-and-go amplification in the VSL zone.

- No spillback: Penalizing queues that exceed the storage limits at ramps and junctions enforces those limits. When queues rise, ISC prioritizes discharge (longer greens) and/or RM tightens inflow (shorter greens) to avert spillback.

- Smooth actuation: Penalizing large changes between consecutive actions discourages myopic high-frequency toggling, thereby stabilizing both speed fields and queue dynamics.
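The four reward terms above can be combined into one scalar per decision step. The following is a minimal sketch, not the authors' reward: the quadratic penalty forms, the weights in `RewardWeights`, and the function `composite_reward` are hypothetical, and actions are assumed to be normalized to comparable scales before differencing.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class RewardWeights:
    # Hypothetical weights: the source does not report the coefficients.
    density: float = 1.0
    speed: float = 0.5
    spillback: float = 2.0
    smooth: float = 0.1


def composite_reward(
    density: float,                   # mainline density in the control zone
    critical_density: float,          # critical density of the fundamental diagram
    segment_speeds: Sequence[float],  # mean speeds of the VSL-zone segments (m/s)
    queues: Sequence[float],          # queue lengths at ramps/junctions (m)
    storage: Sequence[float],         # storage lengths, same order as queues (m)
    action: Sequence[float],          # current joint action (assumed normalized)
    prev_action: Sequence[float],     # joint action at the previous decision
    w: Optional[RewardWeights] = None,
) -> float:
    w = w or RewardWeights()
    # (1) Critical-density tracking: squared relative deviation from critical density.
    p_density = ((density - critical_density) / critical_density) ** 2
    # (2) Speed homogenization: variance of segment speeds across the VSL zone.
    mean_v = sum(segment_speeds) / len(segment_speeds)
    p_speed = sum((v - mean_v) ** 2 for v in segment_speeds) / len(segment_speeds)
    # (3) No spillback: relative queue excess over storage, zero while within storage.
    p_spill = sum(max(0.0, q - s) / s for q, s in zip(queues, storage))
    # (4) Smooth actuation: squared change between consecutive actions.
    p_smooth = sum((a - b) ** 2 for a, b in zip(action, prev_action))
    # Rewards are negated penalties, so the best attainable value is 0.
    return -(w.density * p_density + w.speed * p_speed
             + w.spillback * p_spill + w.smooth * p_smooth)
```

Keeping every term non-negative and returning their negated sum means a state at the critical density, with uniform speeds, no storage overflow, and unchanged actions scores exactly zero, which makes the relative weights easy to reason about.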

4.6. Collaborative Control Algorithm

| Algorithm 1 Cooperative Traffic Control Strategy |
|---|
| Input: The locations of the agents, the traffic simulation environment, and the action exploration parameters |
| Output: Updated agent actions and network parameters |
| 1: Initialize the actor and critic networks of each agent, and assign corresponding target networks |
| 2: Clear the experience replay pool, set training hyperparameters |
| 3: for each episode do |
| 5: for each time interval do |
| 6: Select actions according to the cooperative control strategy |
| 8: Store the transition for each time step in the experience replay pool, regardless of whether all agents update actions |
| 9: if the experience pool reaches capacity then |
| 10: for agent i = 1, 2, 3 do |
| 11: Sample a mini-batch of experience data from the experience pool |
| 12–14: Update the critic and actor networks of agent i with the mini-batch, and soft-update the corresponding target networks |
| 15: end for |
| 16: end if |
| 17: end for |
| 18: end for |
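The control flow of Algorithm 1 (store every tick, train only once the pool is full, then update each of the three agents from its own mini-batch) can be sketched without any neural-network library. In this sketch the environment, the action selection, and the network update are passed in as callbacks; `train`, `ReplayBuffer`, and all callback names are hypothetical stand-ins rather than the authors' code, and the episode, capacity, and batch values are toy numbers, not the trained configuration.

```python
import random
from collections import deque
from typing import Any, Callable, List, Tuple

Transition = Tuple[Any, List[float], List[float], Any]


class ReplayBuffer:
    """Fixed-capacity experience pool shared by all agents."""

    def __init__(self, capacity: int) -> None:
        self._data: deque = deque(maxlen=capacity)

    def push(self, transition: Transition) -> None:
        self._data.append(transition)

    def is_full(self) -> bool:
        return len(self._data) == self._data.maxlen

    def sample(self, batch_size: int) -> List[Transition]:
        return random.sample(list(self._data), batch_size)


def train(reset: Callable[[], Any],
          step: Callable[[Any, List[float]], Tuple[Any, List[float]]],
          act: Callable[[Any, int], List[float]],
          update_agent: Callable[[int, List[Transition]], None],
          n_agents: int = 3, episodes: int = 2, ticks: int = 50,
          capacity: int = 64, batch_size: int = 16) -> int:
    """Skeleton of Algorithm 1; returns the number of per-agent updates."""
    buffer = ReplayBuffer(capacity)                      # line 2: clear the pool
    updates = 0
    for _ in range(episodes):                            # line 3
        state = reset()
        for t in range(ticks):                           # line 5: each time interval
            actions = act(state, t)                      # line 6: new or held actions
            next_state, rewards = step(state, actions)
            # Line 8: store every tick, even if some agents only held their action.
            buffer.push((state, actions, rewards, next_state))
            if buffer.is_full():                         # line 9
                for i in range(n_agents):                # line 10
                    update_agent(i, buffer.sample(batch_size))  # lines 11-14
                    updates += 1
            state = next_state
    return updates


# Usage with a trivial stand-in environment and no-op updates.
n_updates = train(reset=lambda: 0,
                  step=lambda s, a: (s + 1, [0.0, 0.0, 0.0]),
                  act=lambda s, t: [0.0, 0.0, 0.0],
                  update_agent=lambda i, batch: None)
```

With 2 episodes of 50 ticks and a capacity of 64, the pool fills on the 64th stored transition, so updates run on the remaining 37 ticks for each of the 3 agents (111 updates in total).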

4.7. Real-Time Feasibility and Computational Complexity

- (1) Asynchronous control cycle coordination. VSL, RM, and ISC agents operate on heterogeneous cycles (30 s, 5 s, and 10–50 s, respectively), aligned on a unified 5 s grid with an action-holding mechanism. Here, the 5 s grid refers to the global scheduling and communication tick used for state aggregation and due checks, not to the signal cycle length: the ISC cycle remains 10–50 s through phase holding, while its decisions are synchronized to the 5 s grid by action holding and time quantization. This avoids unnecessary updates while preserving feasibility constraints such as minimum green times and legal speed limits.

- (2) Lightweight execution. Only actor networks are invoked online, while critic networks are used solely during training. Each actor is a compact fully connected model, ensuring constant-time inference.

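- (3) Complexity analysis. Let $C_{\mathrm{a}}$ denote the cost of one actor forward pass and let $\delta_i(t) \in \{0, 1\}$ indicate whether agent $i$ is due at tick $t$. The per-step cost is

  $$C(t) = \sum_{i \in \{\mathrm{VSL},\, \mathrm{RM},\, \mathrm{ISC}\}} \delta_i(t)\, C_{\mathrm{a}} \le 3\, C_{\mathrm{a}}, \qquad (20)$$

  so the online computation per tick is bounded by three actor forward passes regardless of traffic conditions.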

- (4) Online execution pipeline (software mechanism). The deployment strictly follows centralized training with decentralized execution (CTDE): critics are used only for offline training and are never executed on the real-time side, where only the actor policies run. The real-time system is driven by a 5 s scheduler and executes the following steps: (i) collect local detector summaries and the broadcast compact shared state; (ii) normalize the state; (iii) check the due flag of each controller; (iv) perform one actor forward inference only for controllers that are due, and otherwise hold the previous action; (v) apply feasibility constraints (legal speed limits, minimum green time, queue safety thresholds, etc.); and (vi) dispatch commands to RM/VSL/ISC. This pipeline uses constant memory, has bounded computation per tick, and is consistent with the complexity analysis in Equation (20).
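Steps (ii) to (vi) of the online pipeline, together with the per-tick bound of at most three actor calls, can be illustrated with a small scheduler. This is a sketch under stated assumptions: the constant lambdas stand in for trained actor networks, and the feasibility bounds (VSL snapped to 40–80 km/h in 10 km/h steps, RM rate clipped to [0.1, 1.0], ISC green clipped to 10–50 s and rounded up to the 5 s grid) are hypothetical. `Controller`, `run_tick`, and `make_controllers` are illustrative names, not the authors' implementation.

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

TICK_S = 5  # global scheduling/communication tick stated in the text


def quantize_up(seconds: float, tick: int = TICK_S) -> int:
    """Round a duration up to the next multiple of the scheduling tick."""
    return int(math.ceil(seconds / tick)) * tick


@dataclass
class Controller:
    name: str
    actor: Callable[[List[float]], float]  # stand-in for a trained actor network
    project: Callable[[float], float]      # feasibility projection, step (v)
    hold_for: Callable[[float], int]       # seconds a decision is held
    last_action: float = 0.0
    next_due_s: int = 0


def run_tick(t_s: int, controllers: List[Controller], raw_state: List[float],
             scales: List[float]) -> Tuple[Dict[str, float], int]:
    """One 5 s tick; returns the dispatched commands and the number of actor calls."""
    state = [x / s for x, s in zip(raw_state, scales)]  # (ii) normalize
    commands: Dict[str, float] = {}
    calls = 0
    for c in controllers:
        if t_s >= c.next_due_s:                          # (iii) due check
            c.last_action = c.project(c.actor(state))    # (iv) infer, (v) project
            c.next_due_s = t_s + c.hold_for(c.last_action)
            calls += 1
        commands[c.name] = c.last_action                 # not due: hold last action
    return commands, calls                               # (vi) dispatch


def make_controllers() -> List[Controller]:
    # Hypothetical bounds and constant "actors", for illustration only.
    return [
        Controller("VSL", lambda s: 63.0,
                   lambda v: float(min(80, max(40, round(v / 10) * 10))),
                   lambda v: 30),                                    # 30 s cycle
        Controller("RM", lambda s: 0.7,
                   lambda r: min(1.0, max(0.1, r)),
                   lambda r: 5),                                     # 5 s cycle
        Controller("ISC", lambda s: 23.0,
                   lambda g: float(quantize_up(min(50.0, max(10.0, g)))),
                   lambda g: int(g)),                                # 10-50 s green
    ]


# Usage: simulate one minute of 5 s ticks and count actor forward passes.
ctrls = make_controllers()
total, peak = 0, 0
for t in range(0, 60, TICK_S):
    cmds, n = run_tick(t, ctrls, [1.0, 2.0], [1.0, 1.0])
    total, peak = total + n, max(peak, n)
```

Over this minute the VSL actor runs twice (0 s and 30 s), RM on all 12 ticks, and ISC three times (0, 25, and 50 s, since 23 s is held as 25 s), so 17 forward passes occur in total and never more than three in a single tick, matching the bound in Equation (20).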

5. Experimental Setup and Results

5.1. Scenario and Parameter Settings

5.1.1. Scenario Design

5.1.2. Parameter Settings

5.2. Baseline Controllers

5.3. Training Procedure and Results

5.4. Comparative Analysis of Control Performance

5.4.1. Comparison of Vehicle Spatiotemporal Trajectories

5.4.2. Comparative Analysis of Control Actions

5.4.3. Comparative Analysis of Traffic Efficiency

5.4.4. Sensitivity Analysis

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Yuan, T.; Ioannou, P.A. Integrated freeway traffic control using Q-learning with adjacent arterial traffic considerations. IEEE Trans. Intell. Transp. Syst. 2025, 26, 7655–7666.

2. Chen, S.; Mao, B.; Liu, S.; Sun, Q.; Wei, W.; Zhan, L. Computer-aided analysis and evaluation on ramp spacing along urban expressways. Transp. Res. Part C Emerg. Technol. 2013, 36, 381–393.

3. Wang, L.; Abdel-Aty, M.; Lee, J.; Shi, Q. Analysis of real-time crash risk for expressway ramps using traffic, geometric, trip generation, and socio-demographic predictors. Accid. Anal. Prev. 2019, 122, 378–384.

4. Peng, T.; Xu, X.; Li, Y.; Wu, J.; Li, T.; Dong, X.; Cai, Y.; Wu, P.; Ullah, S. Enhancing expressway ramp merge safety and efficiency via spatiotemporal cooperative control. IEEE Access 2025, 13, 25664–25682.

5. Xu, Z.; Zheng, Y.; Li, Y. Coordinated control of urban expressways and connecting intersection based on genetic algorithm. In Proceedings of the 2024 9th International Conference on Computer and Communication Systems (ICCCS), Xi’an, China, 19–22 April 2024; IEEE: Piscataway, NJ, USA, 2024; pp. 1132–1138.

6. Ma, J.; Zeng, Y.; Chen, D. Ramp spacing evaluation of expressway based on entropy-weighted TOPSIS estimation method. Systems 2023, 11, 139.

7. Papageorgiou, M. A new approach to time-of-day control based on a dynamic freeway traffic model. Transp. Res. Part B Methodol. 1980, 14, 181–196.

8. Papageorgiou, M.; Habib, H.S.; Blosseville, J.M. ALINEA: A local feedback control law for on-ramp metering. Transp. Res. Rec. 1991, 1320, 58–64.

9. Yue, W.; Yang, H.; Li, M.; Wang, Y.; Zhou, Y.; Zheng, P. Hierarchical control based on ramp metering and variable speed limit for port motorway. Systems 2025, 13, 446.

10. Cheng, R.; Lou, H.; Wei, Q. Analysis of the impact for mixed traffic flow based on the time-varying model predictive control. Systems 2025, 13, 481.

11. Smulders, S. Control of freeway traffic flow by variable speed signs. Transp. Res. Part B Methodol. 1990, 24, 111–132.

12. Ma, C.; Guo, J.; Zhao, Y. Variable speed limit control strategy at freeway tunnel entrance based on cooperative lane changing. Phys. A Stat. Mech. Its Appl. 2023, 620, 128768.

13. Guo, H.; Jia, H.; Wu, R.; Huang, Q.; Tian, J.; Liu, C.; Wang, X. Variable speed limits for mixed traffic flow with connected autonomous vehicles: A reinforcement learning approach. J. Transp. Eng. Part A Syst. 2024, 150, 04024024.

14. Jin, Z.; Ma, M.; Liang, S.; Yao, H. Differential variable speed limit control strategy consider lane assignment at the freeway lane drop bottleneck. Phys. A Stat. Mech. Its Appl. 2024, 633, 129366.

15. Sun, R.; Hu, J.; Xie, X.; Zhang, Z. Variable speed limit design to relieve traffic congestion based on cooperative vehicle infrastructure system. Procedia Soc. Behav. Sci. 2014, 138, 427–438.

16. Yu, R.; Abdel-Aty, M. An optimal variable speed limits system to ameliorate traffic safety risk. Transp. Res. Part C Emerg. Technol. 2014, 46, 235–246.

17. Khondaker, B.; Kattan, L. Variable speed limit: A microscopic analysis in a connected vehicle environment. Transp. Res. Part C Emerg. Technol. 2015, 58, 146–159.

18. Han, Y.; Wang, M.; He, Z.; Li, Z.; Wang, H.; Liu, P. A linear Lagrangian model predictive controller of macro- and micro- variable speed limits to eliminate freeway jam waves. Transp. Res. Part C Emerg. Technol. 2021, 128, 103121.

19. Ding, H.; Zhang, L.; Chen, J.; Zheng, X.; Pan, H.; Zhang, W. MPC-based dynamic speed control of CAVs in multiple sections upstream of the bottleneck area within a mixed vehicular environment. Phys. A Stat. Mech. Its Appl. 2023, 613, 128542.

20. Zhang, L.; Ding, H.; Feng, Z.; Wang, L.; Di, Y.; Zheng, X.; Wang, S. Variable speed limit control strategy considering traffic flow lane assignment in mixed-vehicle driving environment. Phys. A Stat. Mech. Its Appl. 2024, 656, 130216.

21. Iordanidou, G.-R.; Roncoli, C.; Papamichail, I.; Papageorgiou, M. Feedback-based mainstream traffic flow control for multiple bottlenecks on motorways. IEEE Trans. Intell. Transp. Syst. 2015, 16, 610–621.

22. Qiu, S.; Li, Z.; Pang, Z.; Li, Z.; Tao, Y. Multi-agent optimal control for central chiller plants using reinforcement learning and game theory. Systems 2023, 11, 136.

23. Karalakou, A.; Troullinos, D.; Chalkiadakis, G.; Papageorgiou, M. Deep reinforcement learning reward function design for autonomous driving in lane-free traffic. Systems 2023, 11, 134.

24. Jin, J.; Huang, H.; Li, Y.; Dong, Y.; Zhang, G.; Chen, J. Variable speed limit control strategy for freeway tunnels based on a multi-objective deep reinforcement learning framework with safety perception. Expert Syst. Appl. 2025, 267, 126277.

25. Chow, A.H.F.; Su, Z.C.; Liang, E.M.; Zhong, R.X. Adaptive signal control for bus service reliability with connected vehicle technology via reinforcement learning. Transp. Res. Part C Emerg. Technol. 2021, 129, 103264.

26. Pang, M.; Yang, M. Coordinated control of urban expressway integrating adjacent signalized intersections based on pinning synchronization of complex networks. Transp. Res. Part C Emerg. Technol. 2020, 116, 102645.

27. Deng, M.; Chen, F.; Gong, Y.; Li, X.; Li, S. Optimization of signal timing for urban expressway exit ramp connecting intersection. Sensors 2023, 23, 6884.

28. Cheng, M.; Zhang, C.; Jin, H.; Wang, Z.; Yang, X. Adaptive coordinated variable speed limit between highway mainline and on-ramp with deep reinforcement learning. J. Adv. Transp. 2022, 2022, 2435643.

29. He, Z.; Han, Y.; Yu, H.; Bai, L.; Guo, W.; Liu, P. Integrated feedback perimeter control-based ramp metering and variable speed limits for multibottleneck freeways. J. Transp. Eng. Part A Syst. 2024, 150, 04024054.

30. Hegyi, A.; De Schutter, B.; Hellendoorn, H. Model predictive control for optimal coordination of ramp metering and variable speed limits. Transp. Res. Part C Emerg. Technol. 2005, 13, 185–209.

31. Deng, F.; Jin, J.; Shen, Y.; Du, Y. A dynamic self-improving ramp metering algorithm based on multi-agent deep reinforcement learning. Transp. Lett. 2024, 16, 649–657.

32. Jin, J.; Li, Y.; Huang, H.; Dong, Y.; Liu, P. A variable speed limit control approach for freeway tunnels based on the model-based reinforcement learning framework with safety perception. Accid. Anal. Prev. 2024, 201, 107570.

33. Han, Y.; Hegyi, A.; Zhang, L.; He, Z.; Chung, E.; Liu, P. A new reinforcement learning-based variable speed limit control approach to improve traffic efficiency against freeway jam waves. Transp. Res. Part C Emerg. Technol. 2022, 144, 103900.

34. Lu, W.; Yi, Z.; Gu, Y.; Rui, Y.; Ran, B. TD3LVSL: A lane-level variable speed limit approach based on twin delayed deep deterministic policy gradient in a connected automated vehicle environment. Transp. Res. Part C Emerg. Technol. 2023, 153, 104221.

35. Pooladsanj, M.; Savla, K.; Ioannou, P.A. Ramp metering to maximize freeway throughput under vehicle safety constraints. Transp. Res. Part C Emerg. Technol. 2023, 154, 104267.

36. Wang, T.; Zhu, Z.; Zhang, J.; Tian, J.; Zhang, W. A large-scale traffic signal control algorithm based on multi-layer graph deep reinforcement learning. Transp. Res. Part C Emerg. Technol. 2024, 162, 104582.

37. Bie, Y.; Ji, Y.; Ma, D. Multi-agent deep reinforcement learning collaborative traffic signal control method considering intersection heterogeneity. Transp. Res. Part C Emerg. Technol. 2024, 164, 104663.

38. Song, X.B.; Zhou, B.; Ma, D. Cooperative traffic signal control through a counterfactual multi-agent deep actor critic approach. Transp. Res. Part C Emerg. Technol. 2024, 160, 104528.

39. Lin, Q.; Huang, W.; Wu, Z.; Zhang, M.; He, Z. Multi-Agent Game Theory-Based Coordinated Ramp Metering Method for Urban Expressways With Multi-Bottleneck. IEEE Trans. Intell. Transp. Syst. 2025, 26, 3643–3658.

| Ref. | Scope | Paradigm | Heterogeneous Actuators | Asynchronous Cycles | Key Takeaway vs. Ours |
|---|---|---|---|---|---|
| [32] | VSL (tunnels) | Model-based RL + safety | No | No | Safety-aware VSL; not cross-actuator or asynchronous. |
| [33] | VSL | Distributed RL (single actuator) | No | No | Strong VSL; single actuator, no RM/ISC coupling. |
| [34] | VSL (lane-level) | DRL (TD3) | No | No | Lane-level refinement; still no multi-actuator coordination. |
| [35] | RM | Optimization + safety constraints | No | No | SOTA RM with safety; single actuator, no VSL/ISC. |
| [36] | Signals (network) | GraphRL/distributed | No | No | Scales signals via graphs; no VSL/RM; not heterogeneous. |
| [37] | Signals | Spatiotemporal graph attention MARL | No | No | Strong ATSC with ST-GAT; no VSL/RM integration. |
| [38] | Signals | Counterfactual MARL (credit assignment) | No | No | Coordination via credit assignment; not heterogeneous, no async cycles. |
| [39] | RM (multi-ramp) | Game/MARL hybrid | No | No | Multi-ramp coordination; no VSL/ISC or explicit async cycles. |
| Ours | VSL + RM + ISC | MADDPG | Yes | Yes | Conflict-aware reward + asynchronous scheduling at the expressway–arterial interface; system-level performance beyond isolated controllers. |

| Parameter | Normal Road | Ramp | Expressway |
|---|---|---|---|
| Desired Speed | 45 km/h | 39 km/h | 71 km/h |
| Maximum Acceleration | 2.4 m/s² | 1.6 m/s² | 3.5 m/s² |
| Comfortable Deceleration | 1.9 m/s² | 2.0 m/s² | 1.8 m/s² |
| Minimum Car Distance | 1.1 m | 1.4 m | 2.1 m |
| Desired Headway Time | 1.6 s | 1.7 s | 2.2 s |
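The parameter names in the table above (desired speed, maximum acceleration, comfortable deceleration, minimum gap, desired time headway) coincide with the parameters of the Intelligent Driver Model (IDM). The text does not state which car-following model the simulator uses, so the following is only an illustration that assumes IDM with the common acceleration exponent δ = 4. The function name `idm_acceleration` and the `EXPRESSWAY` dictionary are hypothetical.

```python
import math


def idm_acceleration(v: float, dv: float, gap: float, v0: float, a_max: float,
                     b: float, s0: float, T: float, delta: float = 4.0) -> float:
    """Intelligent Driver Model acceleration in m/s^2.

    v: own speed (m/s); dv: approach rate v - v_leader (m/s);
    gap: bumper-to-bumper distance to the leader (m).
    """
    # Desired dynamic gap: minimum gap + headway term + braking-interaction term.
    s_star = s0 + max(0.0, v * T + v * dv / (2.0 * math.sqrt(a_max * b)))
    # Free-road term minus interaction term.
    return a_max * (1.0 - (v / v0) ** delta - (s_star / gap) ** 2)


# Expressway column of the table, with 71 km/h converted to m/s.
EXPRESSWAY = dict(v0=71 / 3.6, a_max=3.5, b=1.8, s0=2.1, T=2.2)
```

Under this assumption, a stopped vehicle on an empty expressway accelerates at about 3.5 m/s², while a vehicle already at its desired speed and exactly at the desired gap of 2.1 m + 2.2 s × v0 decelerates at 3.5 m/s².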

| Parameter | Normal Road | Ramp | Expressway |
|---|---|---|---|
| Strategy Traffic Control | 2.0 | 5.0 | 0.6 |
| Cooperative Traffic Inclination | 1.0 | 1.5 | 0.5 |
| Speed Increase Benefit | 1 | 0.1 | 4 |
| Right-Turn Inclination | 0.1 | 0 | 1.0 |

| Time Interval (min) | Expressway Flow (veh/h/lane) | Ramp Flow (veh/h/lane) | Arterial Road Flow (veh/h/lane) |
|---|---|---|---|
| 0–20 | 1400 | 400 | 700 |
| 20–40 | 1500 | 500 | 800 |
| 40–60 | 1600 | 600 | 900 |
| 60–80 | 1700 | 700 | 1000 |
| 80–100 | 1800 | 800 | 1100 |
| 100–120 | 1900 | 900 | 1200 |
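The demand table is a piecewise-constant schedule that raises every approach by 100 veh/h/lane per 20 min interval. A minimal lookup, plus the implied total vehicles per lane over the 120 min horizon, might look as follows. The names `DEMAND`, `demand_at`, and `vehicles_per_lane` are hypothetical, and how the schedule was actually fed to the simulator is not described in the text.

```python
from typing import Dict, Tuple

# Per-lane demand from the table: (expressway, ramp, arterial) in veh/h/lane,
# one entry per 20 min interval starting at minute 0.
DEMAND: Tuple[Tuple[int, int, int], ...] = (
    (1400, 400, 700), (1500, 500, 800), (1600, 600, 900),
    (1700, 700, 1000), (1800, 800, 1100), (1900, 900, 1200),
)
INTERVAL_MIN = 20


def demand_at(minute: float) -> Tuple[int, int, int]:
    """Piecewise-constant demand for a simulation minute in [0, 120)."""
    if not 0 <= minute < INTERVAL_MIN * len(DEMAND):
        raise ValueError("minute outside the 0-120 min horizon")
    return DEMAND[int(minute // INTERVAL_MIN)]


def vehicles_per_lane() -> Dict[str, float]:
    """Total vehicles per lane: each rate (veh/h) applies for 20 min = 1/3 h."""
    names = ("expressway", "ramp", "arterial")
    return {n: sum(row[k] for row in DEMAND) * INTERVAL_MIN / 60
            for k, n in enumerate(names)}
```

Over the full horizon this schedule implies 3300 vehicles per expressway lane, 1300 per ramp lane, and 1900 per arterial lane.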

| Hyperparameter | Value |
|---|---|
| Training episodes | 1500 |
| Learning rate (Actor) | 0.0001 |
| Learning rate (Critic) | 0.003 |
| Discount factor | 0.99 |
| Replay buffer size | 100,000 |
| Batch size | 256 |
| Random noise | 0.2 |
| Target network update parameter | 0.005 |
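Two of these hyperparameters act directly on the DDPG machinery that MADDPG inherits: the target network update parameter τ in soft (Polyak) target updates, and the noise level in exploration around the deterministic actor output. The table does not state the noise process. This sketch assumes zero-mean Gaussian noise with standard deviation 0.2 on an action normalized to [−1, 1]; the original DDPG paper used an Ornstein–Uhlenbeck process instead. Parameters are represented as plain lists, and `soft_update` and `explore` are hypothetical helper names.

```python
import random
from typing import List, Optional

TAU = 0.005  # "Target network update parameter" from the table
NOISE = 0.2  # "Random noise" from the table, assumed here to be a Gaussian std


def soft_update(target: List[float], online: List[float], tau: float = TAU) -> List[float]:
    """Polyak averaging: theta_target <- tau * theta + (1 - tau) * theta_target."""
    return [tau * w + (1.0 - tau) * w_t for w_t, w in zip(target, online)]


def explore(action: float, std: float = NOISE, low: float = -1.0, high: float = 1.0,
            rng: Optional[random.Random] = None) -> float:
    """Add zero-mean Gaussian noise to a deterministic action and clip to bounds."""
    rng = rng or random.Random()
    return min(high, max(low, action + rng.gauss(0.0, std)))
```

With τ = 0.005, a target parameter moves only 0.5% of the way toward the online value per update, so its memory has a half-life of ln 0.5 / ln 0.995 ≈ 138 updates. This slow tracking is what stabilizes the critic targets during training.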

| Time (min) | Flow (veh/h/lane) | Method 1: Travel Time (s)/Speed (m/s)/Occupancy (%) | Method 2: Travel Time (s)/Speed (m/s)/Occupancy (%) | Method 3: Travel Time (s)/Speed (m/s)/Occupancy (%) |
|---|---|---|---|---|
| 0–20 | 1300 | 11.82/13.57/7.08 | 12.06/13.31/8.17 | 11.52/13.92/6.18 |
| 20–40 | 1400 | 12.27/13.08/8.58 | 12.76/12.58/9.98 | 11.88/13.51/7.30 |
| 40–60 | 1500 | 13.02/12.34/10.23 | 15.22/10.58/13.17 | 12.37/12.98/8.91 |
| 60–80 | 1700 | 16.02/10.06/13.95 | 21.74/7.47/19.78 | 13.56/11.85/10.96 |
| 80–100 | 1800 | 23.39/6.95/21.17 | 25.01/6.51/22.63 | 20.54/7.90/17.90 |
| 100–120 | 1900 | 29.30/5.53/22.91 | 34.62/4.68/21.87 | 24.88/6.51/23.35 |

| Time (min) | Flow (veh/h/lane) | Method 1: Travel Time (s)/Speed (m/s)/Occupancy (%) | Method 2: Travel Time (s)/Speed (m/s)/Occupancy (%) | Method 3: Travel Time (s)/Speed (m/s)/Occupancy (%) |
|---|---|---|---|---|
| 0–20 | 400 | 23.32/10.72/3.32 | 23.29/10.73/3.47 | 23.27/10.74/2.84 |
| 20–40 | 500 | 23.48/10.64/4.02 | 23.53/10.62/4.18 | 23.37/10.69/3.5 |
| 40–60 | 600 | 23.65/10.57/4.68 | 23.66/10.56/4.84 | 23.35/10.67/4.17 |
| 60–80 | 700 | 26.21/9.15/5.92 | 23.86/10.47/6.15 | 23.70/10.55/4.85 |
| 80–100 | 800 | 62.72/3.99/15.76 | 40.85/6.12/10.5 | 27.55/9.05/6.4 |
| 100–120 | 900 | 135.13/1.85/38.92 | 91.42/2.69/28.85 | 63.65/3.93/16.9 |

| Time (min) | Flow (veh/h/lane) | Method 1: Travel Time (s)/Speed (m/s)/Occupancy (%) | Method 2: Travel Time (s)/Speed (m/s)/Occupancy (%) | Method 3: Travel Time (s)/Speed (m/s)/Occupancy (%) |
|---|---|---|---|---|
| 0–20 | 700 | 33.38/3.07/1.15 | 26.28/3.84/1.57 | 31.92/3.18/1.86 |
| 20–40 | 800 | 35.22/2.71/1.5 | 26.02/3.87/1.56 | 31.83/3.2/2.02 |
| 40–60 | 900 | 40.32/2.57/1.84 | 29.01/3.67/2.01 | 32.33/3.09/2.2 |
| 60–80 | 1000 | 189.47/0.54/8.35 | 37.56/2.83/2.55 | 34.16/3.08/2.16 |
| 80–100 | 1100 | 225.83/0.45/30.85 | 44.92/2.36/15.4 | 38.26/2.74/10.75 |
| 100–120 | 1200 | 334.96/0.31/39.81 | 136.72/0.75/35.49 | 85.71/1.21/25.49 |
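The three tables report raw values only. To compare methods at a glance, one can compute relative changes in the most congested interval. This part of the text does not state which method is the proposed controller, so the sketch simply measures Method 3 against Methods 1 and 2, using the 100–120 min travel times copied from the tables. The tables are keyed by their flow ranges because their captions are not preserved. `pct_change` and `PEAK_TRAVEL_TIME` are illustrative names.

```python
from typing import Dict, Tuple


def pct_change(baseline: float, value: float) -> float:
    """Relative change of value with respect to baseline, in percent."""
    return 100.0 * (value - baseline) / baseline


# Travel time (s) in the 100-120 min interval: (Method 1, Method 2, Method 3),
# keyed by the flow range of each table.
PEAK_TRAVEL_TIME: Dict[str, Tuple[float, float, float]] = {
    "1300-1900 veh/h/lane": (29.30, 34.62, 24.88),
    "400-900 veh/h/lane": (135.13, 91.42, 63.65),
    "700-1200 veh/h/lane": (334.96, 136.72, 85.71),
}

for table, (m1, m2, m3) in PEAK_TRAVEL_TIME.items():
    print(f"{table}: Method 3 vs Method 1 {pct_change(m1, m3):+.1f}%, "
          f"vs Method 2 {pct_change(m2, m3):+.1f}%")
```

For example, in the table with 400–900 veh/h/lane flows, Method 3's peak-interval travel time is about 52.9% lower than Method 1's and 30.4% lower than Method 2's. The same function applies to the speed and occupancy values.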

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Wang, S.; Wu, Z.; Yu, W. Multi-Agent Deep Deterministic Policy Gradient-Based Coordinated Control for Urban Expressway Entrance–Arterial Interfaces. Systems 2026, 14, 231. https://doi.org/10.3390/systems14030231

Wang S, Wu Z, Yu W. Multi-Agent Deep Deterministic Policy Gradient-Based Coordinated Control for Urban Expressway Entrance–Arterial Interfaces. Systems. 2026; 14(3):231. https://doi.org/10.3390/systems14030231

Chicago/Turabian Style: Wang, Shunchao, Zhigang Wu, and Wangzi Yu. 2026. "Multi-Agent Deep Deterministic Policy Gradient-Based Coordinated Control for Urban Expressway Entrance–Arterial Interfaces" Systems 14, no. 3: 231. https://doi.org/10.3390/systems14030231

APA Style: Wang, S., Wu, Z., & Yu, W. (2026). Multi-Agent Deep Deterministic Policy Gradient-Based Coordinated Control for Urban Expressway Entrance–Arterial Interfaces. Systems, 14(3), 231. https://doi.org/10.3390/systems14030231