1. Introduction

Recent advances in graphics and display technology have enabled visually immersive virtual reality (VR) experiences, while advances in spatial sound allow users to perceive a sense of place through audio. With sight and hearing, the senses that contribute most to spatial presence, now served by visual and audio technology, a hand-based control method has become necessary for interacting with virtual objects. The VIVE controller and Oculus Touch controller are two examples of commercial hand controllers. To control objects, the user holds a controller in each hand and uses its buttons, joystick, and touch pad, while the finger motions are shown as an animation. Even if the shape of the virtual object controlled by the user changes, the posture of the user’s hand remains the same, since the fingers do not move. Furthermore, tactile feedback from contacting an object is delivered as vibration to the whole hand, not to the individual fingers. This lack of physical interaction in hand controllers limits the design and development of VR applications that can provide advanced control.

Haptic devices that can physically interact with a virtual object can be divided into grounded and ungrounded types [1]. When a force is applied, a haptic device stimulates the sense of touch in the hands by delivering resistance feedback, either while moving or while holding its position. Although grounded types such as PHANToM [2] and SPIDAR [3] are effective at delivering kinesthetic feedback to the hands, they limit the user’s workspace. In this regard, the ungrounded type is appropriate for a VR environment where an object may move freely within the workspace. To generate haptic feedback, an exoskeleton device, which is a typical ungrounded type, uses sensors and actuators installed on the fingers to accurately determine the positions of both the finger and the fingertip [4,5]. In the VR environment, however, the user must use their hands to switch the head-mounted display (HMD) on and off, as well as to operate a controller in each hand while wearing the HMD, which makes a handheld-type controller more desirable because of its simplicity.

A handheld controller must contain all mechanical and electronic mechanisms within a limited volume that can be held in the hand. For this reason, simplified mechanisms have been designed and developed so that a device provides feedback suited to a particular way of controlling an object [6,7,8,9]. In several studies, the device provided a sensation of form by changing the shape of the objects that came into contact with the skin [10]. Each of these handheld haptic devices uses a special mechanism for a specific purpose. To overcome this limitation, we propose installing linear actuators that deliver haptic sensation to all five fingers, resulting in the development of a handheld-type device. To our knowledge, Bstick is the first handheld-type VR controller that allows the user to control a virtual object with five fingers. Five linear actuators, one for each finger, are independently controlled and can produce various 3D shapes. Because the actuators mounted in the controller can support the pressing force of the fingers, they can render a rigid object. Moreover, the controller can render a soft object by monitoring the user’s pressing force and moving the actuator accordingly. A functional game for hand rehabilitation, in which the user applies force with all five fingers, was developed as an application.

The two major contributions of this study are as follows:

Bstick, a handheld-type VR controller with five linear actuators whose lengths can be controlled separately for each finger, was designed and developed to give the user the feeling of grabbing and controlling a virtual object in a VR space.

An application was proposed that uses the haptic controller to control the position of each finger individually, after sensing the pressing force of each of the user’s fingers and calculating the resistance force from the rehabilitation level.

2. Related Work

A hand haptic device generates frictional and shear forces on the skin, as well as the sensation of grabbing an object with the hands. When a user holds a virtual object in a virtual environment, the device detects the fingers’ location in real time and limits the fingers’ movement, giving the sensation that the user is grabbing the object with their hands. A variety of mechanisms are being examined in devices designed to deliver several sensations to the hand [11,12]. Early studies focused on examining an object by installing a haptic device that can reproduce several sensations on the index finger, which is frequently used to inspect objects. More recently, studies have been conducted on haptic devices mounted on several fingers, which can generate haptic feedback while grabbing an object. Various stimuli were delivered to the user by placing and controlling multiple vibration modules at different positions on the hand [13,14]. By installing an actuating device at the point where the finger makes contact, or at its angle, a physical resistance force was generated at the tip of the user’s finger, allowing the user to recognize the shape of the virtual object [15,16]. By placing a device that could adjust the shear force on the tip of the user’s finger, features such as the roughness or softness of a virtual object could be delivered [17,18].

A wearable haptic device with an exoskeleton structure is placed on several fingers and controls the fingers’ movement via a mechanism, giving the user the sensation of grabbing a virtual object. Grabity was designed as a grabber-type device that moves linearly along an axis between two moving points: the thumb and the rest of the fingers. The sensation of grabbing an object is simulated by limiting this movement when the hand is in contact with a virtual object [6]. Wolverine delivers the sense of grabbing an object using a linear brake method, combining a ball joint with linear movement so that the thumb and three fingers can move freely while grabbing. Although the brake method lets this lightweight device sustain sufficient force, it cannot render stiffness [19]. CyberGrasp uses an exoskeleton structure that resembles the shape of five fingers and controls the movement of all five fingers by adjusting the stiffness with a motor [4].

A wearable haptic device with an exoskeleton structure delivers accurate physical interaction because it is worn on the fingers and can therefore detect their location. In the VR environment, however, a handheld-type haptic device is used as the VR controller, because the user must put the device on both hands and take it off while also using an HMD. A handheld-type haptic device is an interface that receives user input and delivers feedback, and it has the benefit of being convenient to pick up and operate with both hands. Commercial VR devices, such as the HTC VIVE and Oculus Touch, provide a location tracking function and include user input interfaces such as a joystick, touch pad, or buttons; however, since these interfaces use only vibration as feedback, they can deliver only a limited number of sensations to the user in a virtual environment. Hands are crucial tools for controlling or interacting with virtual objects. To produce more diverse and complex VR content, the current hand-level interaction must be improved to finger-level interaction. In this regard, studies are being conducted on handheld haptic controllers that can receive finger-level input from the user, provide feedback to the fingers, and deliver various sensations.

NormalTouch can express slope and deliver force feedback, as it uses a disk connected to three servo motors around the index finger. A force sensor measures the user’s pressing force on the disk to control its location and direction, allowing the user to recognize the shape of a virtual object [20]. TextureTouch was designed to generate surface sensation with a 4 × 4 pin array on the index finger. This enabled the study of a technology that can deliver both surface sensation and a sense of shape to a finger. Haptic Revolver was designed to generate touch, shear, and texture using a controllable rotating wheel below the index finger. The rotating wheel can be designed and exchanged according to function, enabling the user to interact with various sensations as required [21]. TORC is a mechanism that can generate grabbing forces using the thumb and two fingers, and a trackpad under the thumb enhances the user interface by letting the thumb rotate and control the object [8]. Haptic devices such as Haptic Revolver and TORC allow users to feel the texture of objects controlled in VR and to harmonize the objects’ visual texture with the texture sensed by the fingertips. As a result, they contribute to a greater sensation of realism. PaCaPa was designed with two wings, on the thumb and palm, that can open and close. As a result, it can express object control on the basis of the size or angle of the virtual object [22]. Haptic Links, various types of links that connect two VIVE controllers, allow changes based on the shape of the virtual object grabbed and manipulated with both hands [23]. The shape of the controller is changed in accordance with the tools grabbed and used by the hands in a virtual space, so that the 3D shape of the virtual object and the physical shape are expressed similarly. CLAW was designed to enable movement of the index finger. This allows the user to grab a small object with the index finger, or to simulate the touch sensation of controlling an object with the index finger, as in the case of a gun [7]. Shifty provides users with haptic feedback by shifting the weight distribution inside the handheld haptic device, changing the perceived length or thickness of an object the user is grabbing in a virtual environment [24]. Using sliding plate contactors installed on the grip of the handheld controller, Reactive Grab simulates the frictional sensation or twist of the object the user is grabbing [25]. In Table 1, the characteristics of representative haptic controllers are classified based on usability in virtual reality.

This study focused on a handheld-type haptic controller that could generate haptic feedback to enhance the sensation of reality and immersion when using virtual tools. This could be achieved by changing the methods for identifying, grabbing, and controlling a virtual object in a VR environment, and the shape of the controller.

3. VR Controller Hardware

The design objective of the VR haptic controller developed in this study was to express the effect of grabbing an object with the fingers in a virtual environment. The five fingers were held at different positions to enable grabbing and controlling a virtual object with the fingers in a VR space. Additionally, to generate the sensation of grabbing rigid and soft objects, different types of resistance forces were applied while the user pressed the objects with the fingers. To achieve this objective, the following design directions were derived:

Shape rendering: To create a sensation of grabbing objects of various shapes with fingers, it was necessary for the shape of the controller to be changed physically. As a result, an independent actuating part was installed that could separately control the fingers with which a user grabbed or controlled an object, and the actuating part was designed such that the shape could be maintained despite forces being applied using the fingers.

Manipulative attribute: To be used as a VR controller, the device had to be comfortable to hold while the user wore the HMD, and it had to let the user move about freely. Furthermore, the device was designed as a handheld type to prevent collisions between the controllers and the external environment. The handheld-type device contained all actuating, controlling, and power parts within its confined volume.

Mobility: In VR content, tasks are performed by moving around or rotating within the space. A wired connection would benefit a handheld-type device, since it removes the need for onboard parts such as the microprocessor and battery and thereby minimizes the device’s size. However, because a cable prevents the user from moving freely, the device was designed to communicate wirelessly.

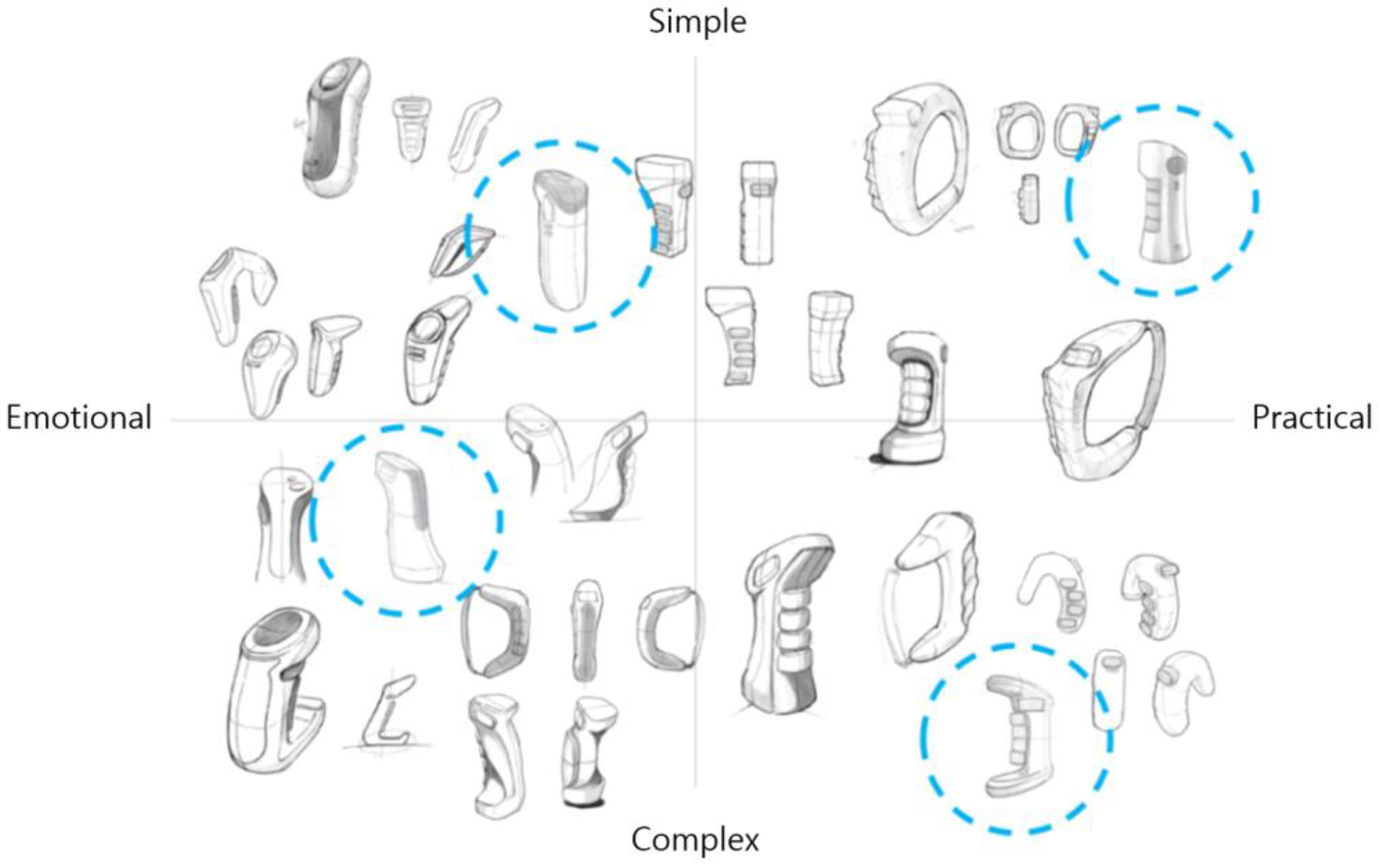

The above-mentioned design directions were used to create and develop Bstick. Based on the concept that the controller had a handheld shape and included a button-type actuating part that could make contact with five fingers, various controllers were conceptually designed as shown in Figure 1. Taking into account the feedback from experts on the design, the most appropriate design (blue circle) was selected in each quadrant. Hardware, design, and HCI experts decided on the final exterior design as shown in Figure 2. A mock-up model was created using a 3D printer. The printed model design was checked, and a grab test was conducted.

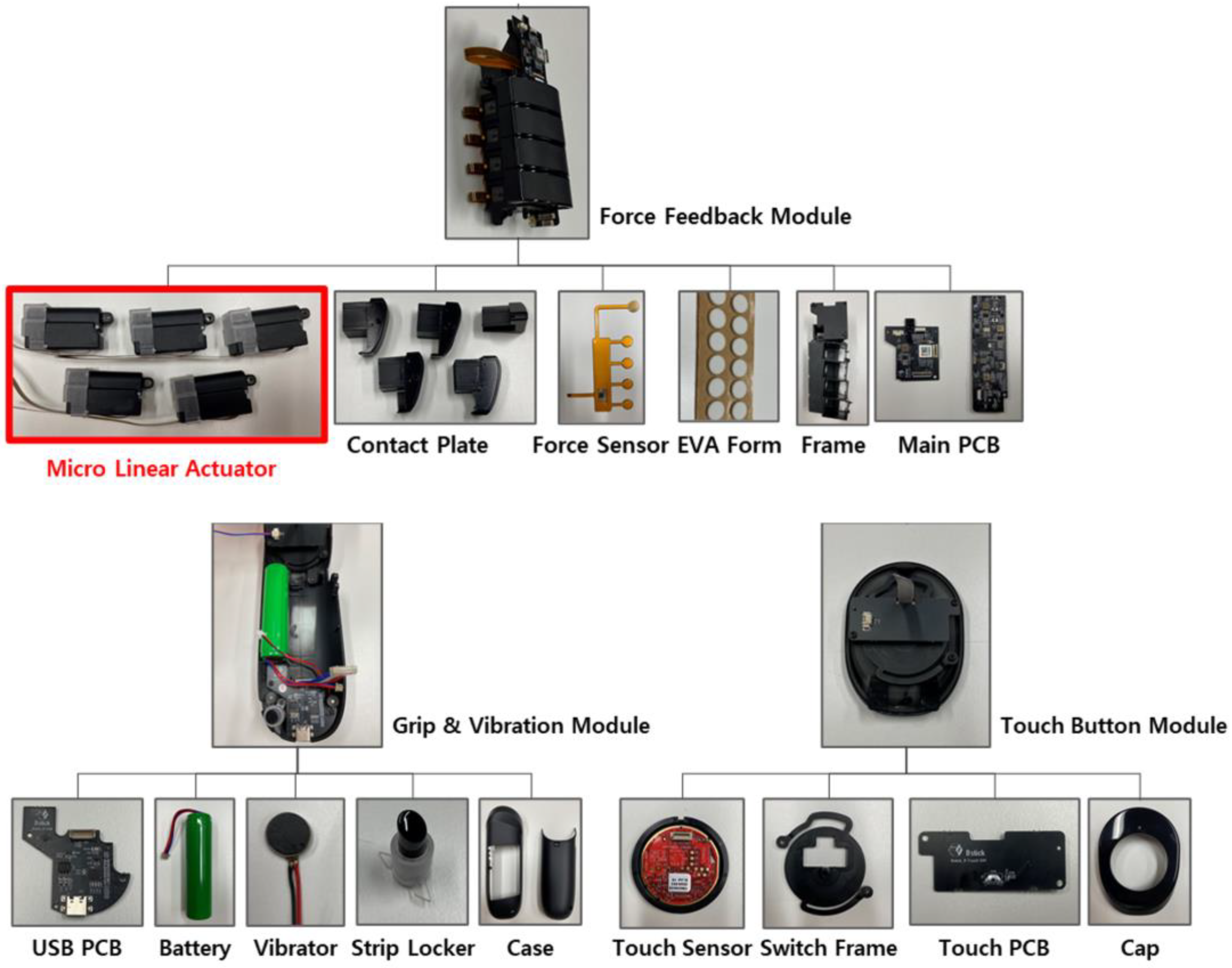

Figure 3 shows the three components that made up Bstick. It was composed of a force feedback module, a grip & vibration module, and a touch button module. The force feedback module collected data from a sensor within the device, and actuated the micro linear actuator. It included five micro linear actuators in the frame. Contact plates were on the position where the user’s five fingers were located when grabbing the controller: they could move forward and backward in conjunction with linear actuators. A force sensor was installed on each finger module, which detected the pressure when the contact plates were pressed. The main PCB module controlled the sensors and actuators in the device, and exchanged data with the PC through a wireless network. As a result, when the user pressed the contact plates, the force feedback module could detect pressure values and control linear actuators to move forward and backward, giving the sensation of grabbing an object in a virtual reality.

The grip and vibration module included the controller’s external case; a battery was placed on the rear of the case. The vibration motor was turned on, and generated vibrations. The USB PCB enabled USB communication for updating and debugging software and battery charging. The touch button module was placed on the upper part of the controller. As the thumb could move more freely compared to other fingers, a trackpad was installed on the thumb to detect the point of contact with the thumb. When the touch sensor was pressed, it could be used as a button.

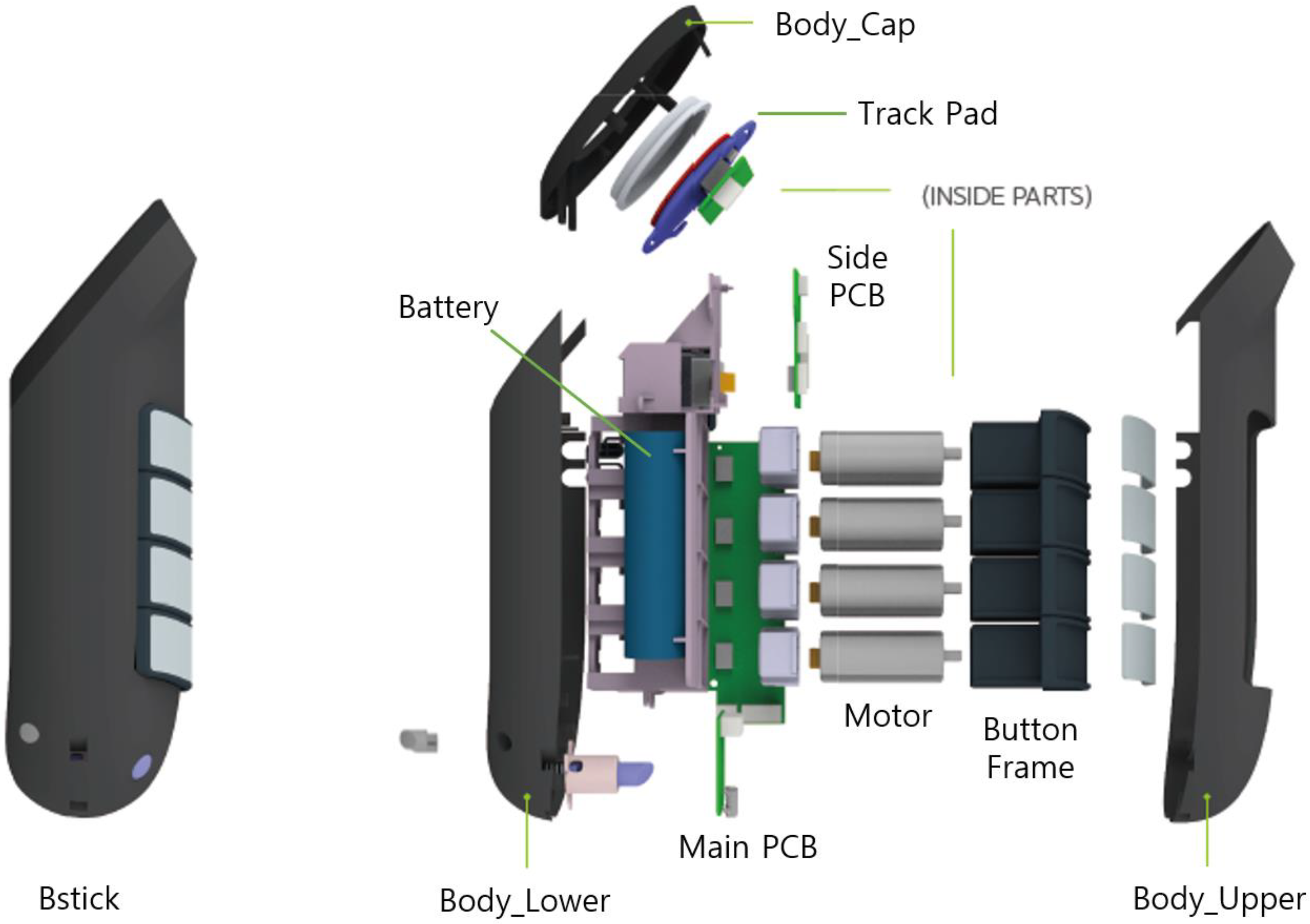

Figure 4 shows the internal structure in which various modules are combined.

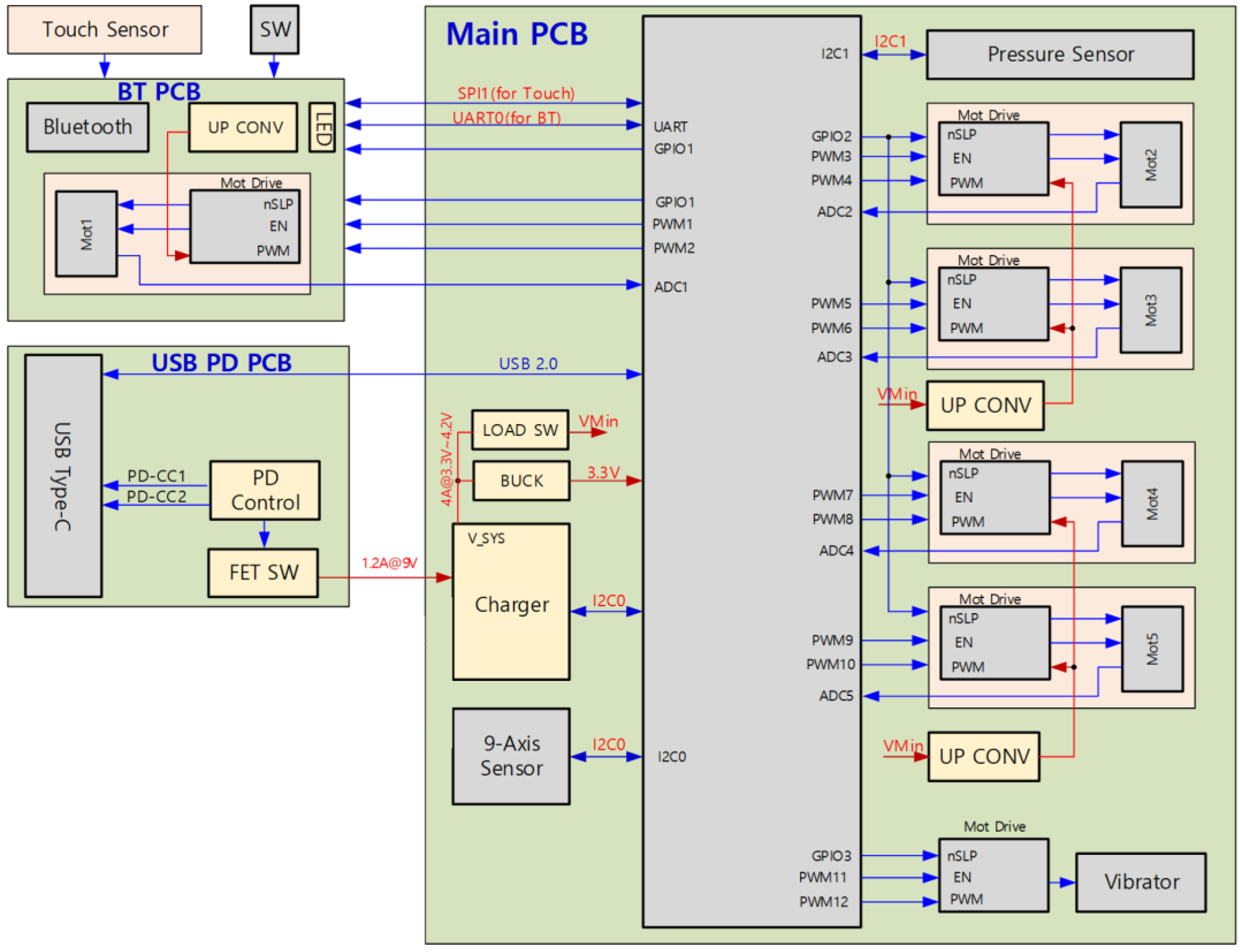

Figure 5 is a schematic circuit drawing of Bstick. As a VR controller, Bstick was built as a pair of devices, one for the left hand and one for the right, whose PCBs were based on the same circuit design. The main module was composed of the main MCU, a motor controller, a pressure sensor, a nine-axis sensor, and a battery charger. The MK22FN512VDC12, which has an ARM Cortex-M4 core (120 MHz), 512 KB of program memory, and 128 KB of data RAM, was used as the main MCU. As the linear actuators in the actuating part ran at 6.5 V, a single DC-to-DC step-up converter was assigned to every two linear actuators to generate sufficient voltage and current for each motor. As a result, the voltage loss during usage and movement was reduced. The signal from the pin that provided position information was digitized by the ADC within the MCU, and the position was controlled through an assigned PWM signal channel. The fingers’ pressing force was measured via a pressure sensor, and the value processed at the sensor was read through the I2C interface. A 15 W-level charger was supported for charging the battery. Bstick uses a 3500 mAh 18650 Li-ion battery. In the USB power delivery module, a semiconductor that supported USB Type-C and USB PD 3.0 was used to support 9 V charging. The Bluetooth module supported Bluetooth 5.0 for wireless data communication, and it processed values from the touch sensors and buttons located on the thumb part.
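The position feedback path described above (an analog position pin read through the ADC, and a PWM channel that drives the actuator) can be pictured as a simple closed loop. The following is a minimal sketch and not the Bstick firmware: the 12-bit ADC range, the 20 mm stroke, the proportional gain, and all function names are assumptions introduced for illustration.

```python
ADC_MAX = 4095      # assumption: 12-bit ADC; the resolution is not stated in the text
STROKE_MM = 20.0    # assumption: hypothetical actuator stroke in millimetres


def adc_to_position_mm(adc_counts: int) -> float:
    """Convert a raw ADC reading of the position pin into millimetres."""
    clamped = max(0, min(ADC_MAX, adc_counts))
    return clamped / ADC_MAX * STROKE_MM


def pwm_duty_for_target(current_mm: float, target_mm: float, gain: float = 0.2) -> float:
    """Proportional control: return a signed PWM duty in [-1, 1].

    The sign selects the drive direction and the magnitude the drive strength.
    """
    error_mm = target_mm - current_mm
    return max(-1.0, min(1.0, gain * error_mm))


if __name__ == "__main__":
    position = adc_to_position_mm(2048)          # roughly mid-stroke
    print(pwm_duty_for_target(position, 15.0))   # positive duty: extend toward 15 mm
```

A proportional law is only one possible choice; a real controller may add integral or derivative terms, or rely on the actuator's own position driver.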

As shown in Figure 6, the electronic circuit was designed by dividing it into four PCBs (Main PCB, Bluetooth PCB, USB power delivery PCB, Switch PCB) to minimize the size of the controller. The PCB was fabricated, and the controller was assembled as shown in Figure 7.

4. VR Controller Software

The VR controller software was divided into controller firmware and PC software. The software architecture within the VR controller was configured as shown in Figure 8. The touch pad module collected the 2D location of the point where the finger touched the touch pad, along with button data when the touch pad was pressed. The button control module collected data from the power and function buttons on the controller’s side and transmitted the data to the MCU. The pressure sensor module transmitted the readings of the five pressure sensors, one per finger, to the MCU. Using accelerometer, gyroscope, and geomagnetic sensor values, the IMU module transmitted Euler angle and quaternion values to the MCU. Once the MCU transmitted the motor positions, calculated on the basis of the pressure sensor values, to the motor control module, the motor control module moved the five motors to the designated positions. The motor control module also measured the position of each motor and transmitted the measurement to the MCU. The MCU controlled the USB, BLE, and battery, and forwarded the data received from the touch pad, button control, pressure sensor, IMU, and motor control modules to the BLE module for transmission to the PC. The BLE module passed the data received from the PC to the MCU, which used it to control the controller and interact with the VR application.
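The data that the MCU gathers from these modules and forwards over BLE can be thought of as one fixed-size state record. The sketch below shows one way such a record could be serialized. The field order, field widths, and the names `ControllerState`, `pack_state`, and `unpack_state` are hypothetical; the actual Bstick packet format is not given in the text.

```python
import struct
from dataclasses import dataclass
from typing import Tuple

# Hypothetical little-endian layout (not the real Bstick format):
# touch x, touch y (uint16 each), button bits (uint8), five pressures (uint16),
# orientation quaternion w, x, y, z (float32), five motor positions (uint16).
_FORMAT = "<HHB5H4f5H"
PACKET_SIZE = struct.calcsize(_FORMAT)


@dataclass
class ControllerState:
    touch_xy: Tuple[int, ...]
    buttons: int
    pressures: Tuple[int, ...]
    quaternion: Tuple[float, ...]
    motor_positions: Tuple[int, ...]


def pack_state(state: ControllerState) -> bytes:
    """Serialize one controller state record into a byte string."""
    return struct.pack(
        _FORMAT,
        *state.touch_xy,
        state.buttons,
        *state.pressures,
        *state.quaternion,
        *state.motor_positions,
    )


def unpack_state(payload: bytes) -> ControllerState:
    """Rebuild a controller state record from a received byte string."""
    values = struct.unpack(_FORMAT, payload)
    return ControllerState(
        touch_xy=values[0:2],
        buttons=values[2],
        pressures=values[3:8],
        quaternion=values[8:12],
        motor_positions=values[12:17],
    )
```

A fixed layout keeps parsing on the PC side trivial; a real protocol would typically also carry a sequence number or checksum.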

The PC software was designed using the Unity game engine. In order to record the position and orientation of the controller, a VIVE tracker was attached to the controller. The software received the tracking data of the controller directly from the VIVE tracker. When a user grabbed a haptic controller with the fingers, the MCU read the pressure values of the five fingers from the pressure sensor, and transmitted the pressure values to the PC software through the Bluetooth module. The PC software used the pressure values to calculate the movement position of the five linear actuators. When a finger touched a virtual object, the position data of the actuator was fixed, and a finger that did not touch the virtual object changed the position data. When the PC software separately calculated the position data of the five fingers and transmitted them to the MCU, the MCU moved the position of each actuator individually using the motor control module as shown in Figure 9.
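The per-finger rule described here (hold the actuator position for a finger that touches the virtual object, and update it from the pressure value otherwise) can be sketched as follows. The linear pressure-to-depth mapping, its scale factor, the normalized depth range, and the function name are assumptions made for illustration; the text does not specify how pressure is converted into position.

```python
from typing import List, Sequence


def actuator_targets(
    pressures: Sequence[float],
    previous_targets: Sequence[float],
    in_contact: Sequence[bool],
    depth_per_unit: float = 0.001,   # assumption: hypothetical pressure-to-depth scale
    max_depth: float = 1.0,          # assumption: depth normalized to the range 0..1
) -> List[float]:
    """Compute one target depth per finger.

    A finger touching the virtual object keeps its previous target (position fixed).
    A free finger gets a target proportional to its pressure, clamped to the travel.
    """
    targets = []
    for pressure, previous, touching in zip(pressures, previous_targets, in_contact):
        if touching:
            targets.append(previous)
        else:
            depth = pressure * depth_per_unit
            targets.append(max(0.0, min(max_depth, depth)))
    return targets


if __name__ == "__main__":
    print(actuator_targets(
        pressures=[100, 200, 0, 2000, 50],
        previous_targets=[0.3, 0.3, 0.3, 0.3, 0.3],
        in_contact=[True, False, False, False, True],
    ))
```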

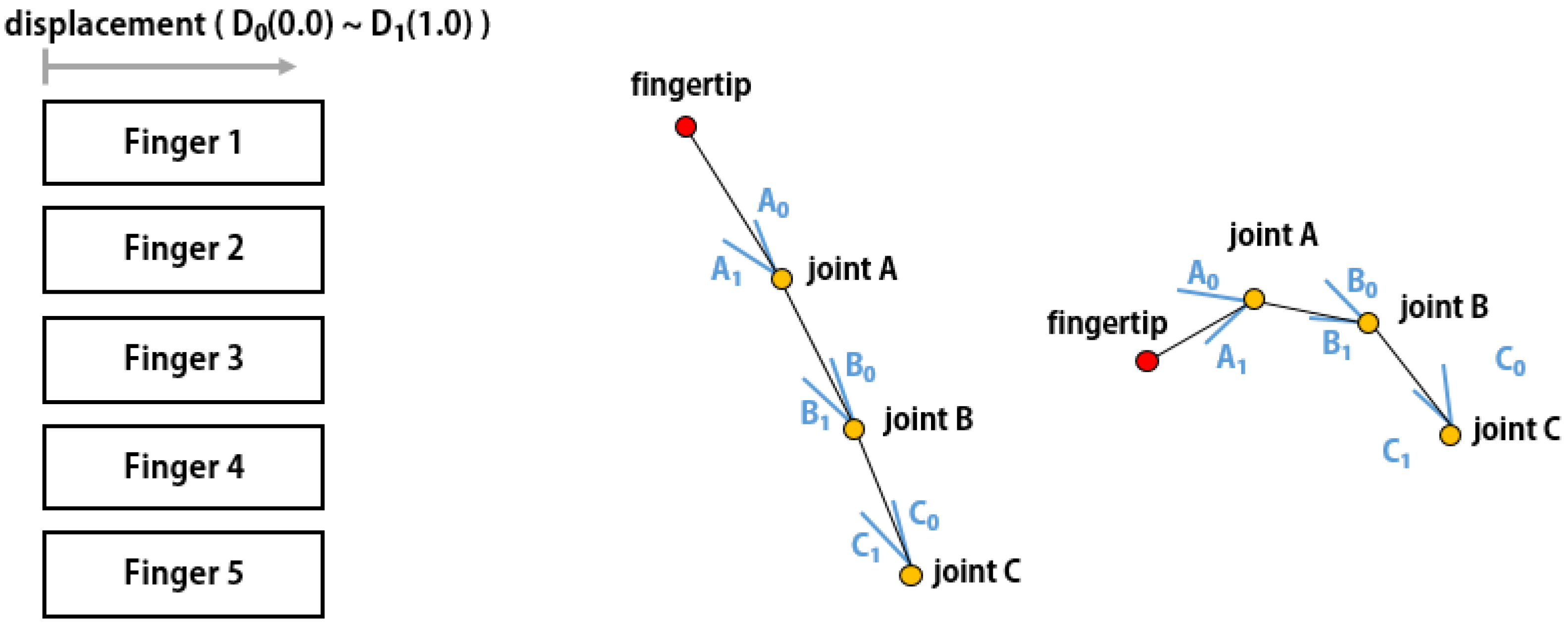

The actuators of the haptic controller move linearly, whereas the hand in VR was designed to move at the finger joints. The mapping from the motor position transmitted by the haptic controller to the skeleton-based finger joint angles is shown in Figure 10. In this way, the linear movement of the haptic controller and the finger movement of the virtual hand were synchronized.
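One simple way to realize such a mapping is to normalize the motor position and interpolate each joint angle linearly. The sketch below illustrates that idea only: the three-joint model, the maximum flexion angles, and the function name are hypothetical values chosen for the example and are not taken from Figure 10.

```python
from typing import Dict

# Assumption: hypothetical maximum flexion per joint, in degrees.
MAX_FLEXION_DEG: Dict[str, float] = {"mcp": 80.0, "pip": 100.0, "dip": 70.0}


def joint_angles(motor_pos: float, motor_min: float, motor_max: float) -> Dict[str, float]:
    """Map a linear motor position to finger joint angles by linear interpolation.

    motor_min corresponds to a fully extended finger and motor_max to a fully
    flexed one. Positions outside that range are clamped.
    """
    if motor_max <= motor_min:
        raise ValueError("motor_max must be greater than motor_min")
    ratio = (motor_pos - motor_min) / (motor_max - motor_min)
    ratio = max(0.0, min(1.0, ratio))
    return {joint: ratio * limit for joint, limit in MAX_FLEXION_DEG.items()}


if __name__ == "__main__":
    print(joint_angles(50.0, 0.0, 100.0))
```

Applying the same function to each of the five motor positions yields a full hand pose for the virtual hand model.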

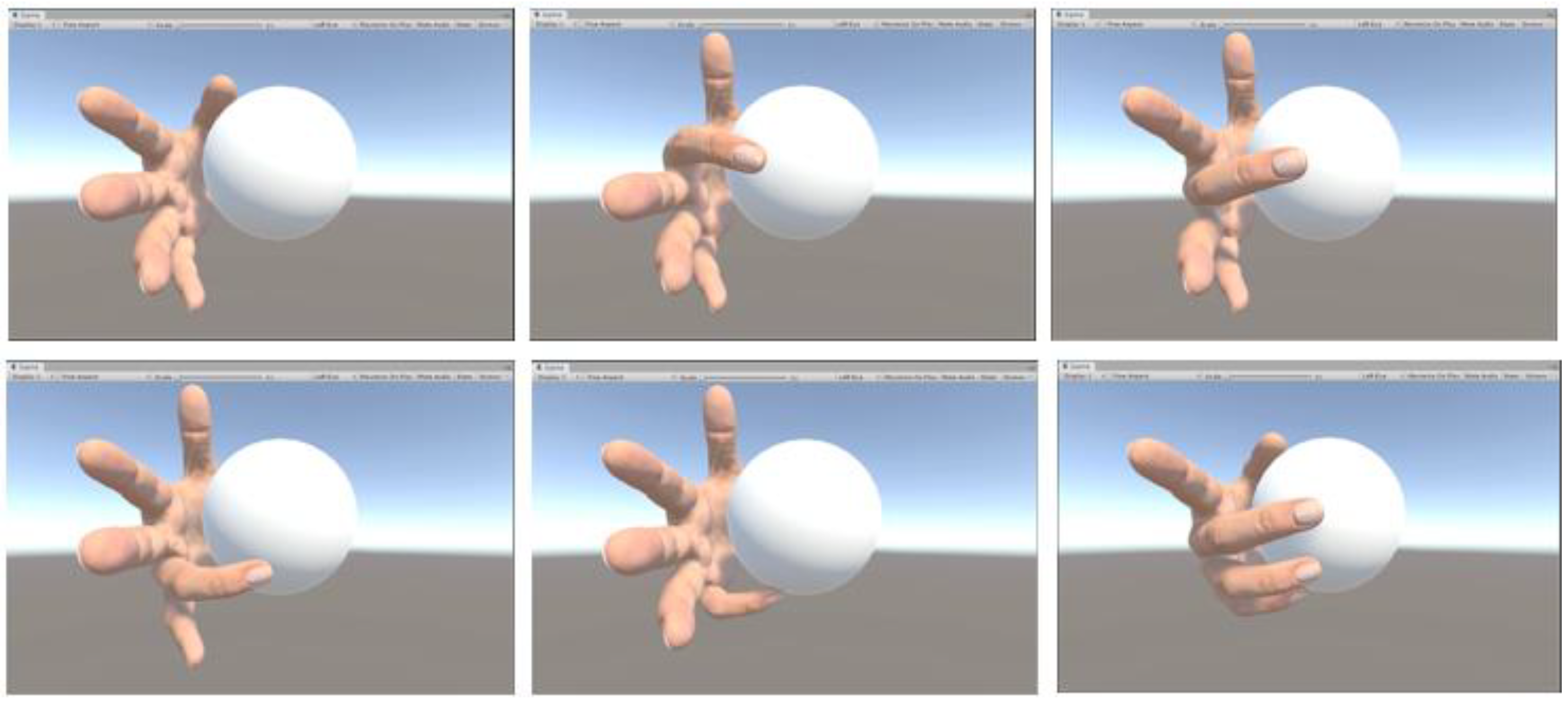

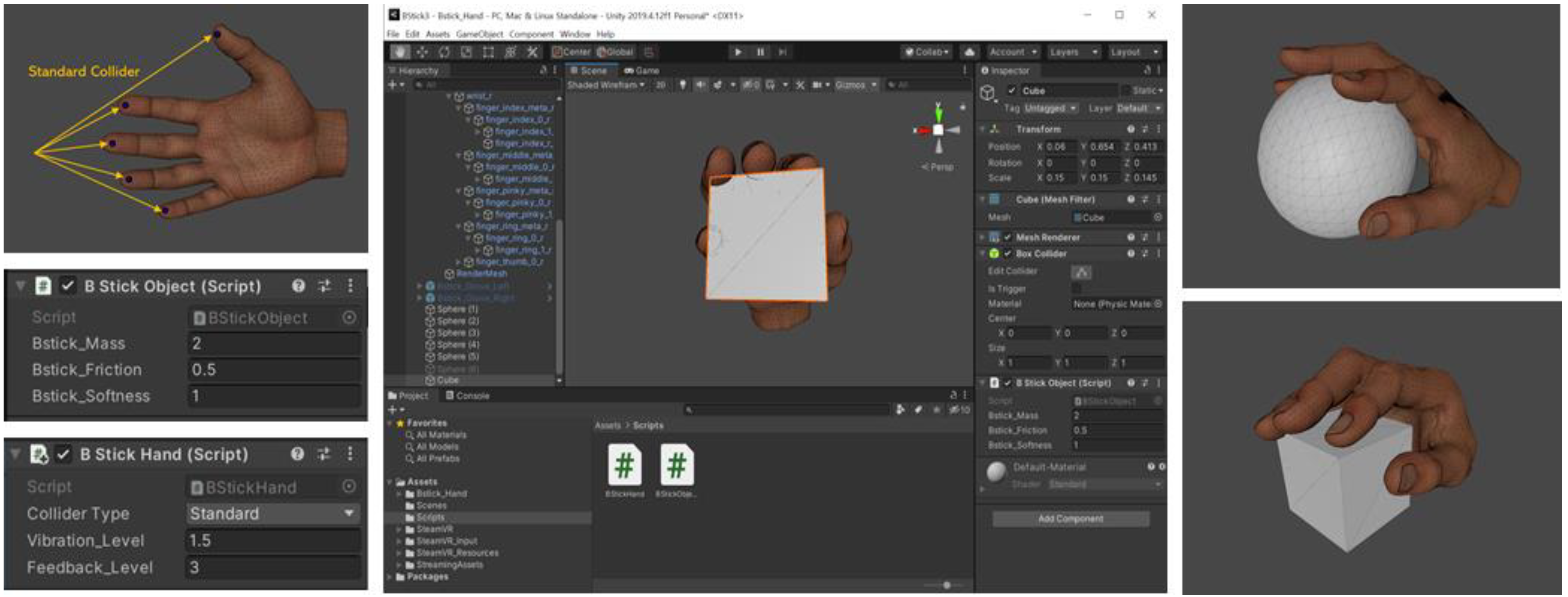

To control an object with a virtual hand in the Unity game engine, the software must detect contact between the hand and the object in real time. To use the Unity physics engine, a physics collider was configured for the object and the hand. In this study, the collider was placed at the fingertip. When the virtual hand touched a virtual object, the software could obtain information about the touched collider from the Unity physics engine. In addition, as shown in Figure 11, the finger was configured to stop moving visually when it collided with a virtual object. The location of the stopped finger was converted into a linear motor position and transmitted to the haptic controller. As the haptic controller held the linear actuator at the position transmitted from the PC, the position of the finger did not change, even when the user increased the pressure value by applying force with the fingers. As a result, the user could feel the rigidity of the virtual object. The vibration control module supported four types of vibration patterns: sawtooth, sine, square, and triangle. When the user touched a virtual object, a different vibration pattern could be generated according to the characteristics of the virtual object.
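The four vibration patterns named above are standard periodic waveforms. As a minimal sketch, they can be generated from the phase within one period as shown below. The function name, the frequency and amplitude parameters, and the choice of starting phase for each waveform are assumptions for illustration, not details of the Bstick vibration module.

```python
import math


def vibration_sample(pattern: str, t: float, freq_hz: float, amplitude: float = 1.0) -> float:
    """Return the waveform value at time t (seconds) for the given pattern.

    All patterns span the range [-amplitude, +amplitude].
    """
    phase = (t * freq_hz) % 1.0          # position within one period, 0 <= phase < 1
    if pattern == "sine":
        value = math.sin(2.0 * math.pi * phase)
    elif pattern == "square":
        value = 1.0 if phase < 0.5 else -1.0
    elif pattern == "sawtooth":
        value = 2.0 * phase - 1.0        # ramps from -1 up to +1, then resets
    elif pattern == "triangle":
        value = 1.0 - 4.0 * abs(phase - 0.5)   # -1 at phase 0, +1 at phase 0.5
    else:
        raise ValueError(f"unknown vibration pattern: {pattern}")
    return amplitude * value


if __name__ == "__main__":
    for name in ("sawtooth", "sine", "square", "triangle"):
        print(name, [round(vibration_sample(name, i / 8.0, 1.0), 3) for i in range(8)])
```

An application could then pick the pattern and frequency per object material, for example a square wave for a hard surface and a sine wave for a smooth one; that pairing is an illustration, not a result from the study.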

5. Result and Discussion

In this study, the Bstick VR haptic controller was developed to demonstrate the effect of grabbing a virtual object with five fingers in VR. To generate the sensation of grabbing a rigid object, the button must not move when it is pressed with the fingers. Therefore, the most significant feature of the controller was how well it could withstand the forces of the fingers. However, because the size of a controller increases when actuators that can withstand an adult’s finger press are installed, existing VR controllers were developed to control only 2–3 fingers or to support a weak push force [6,7,8,19]. Bstick included five linear motors that could sustain the push force of five fingers, and the hardware and circuitry were compact enough to be held in the user’s hand.

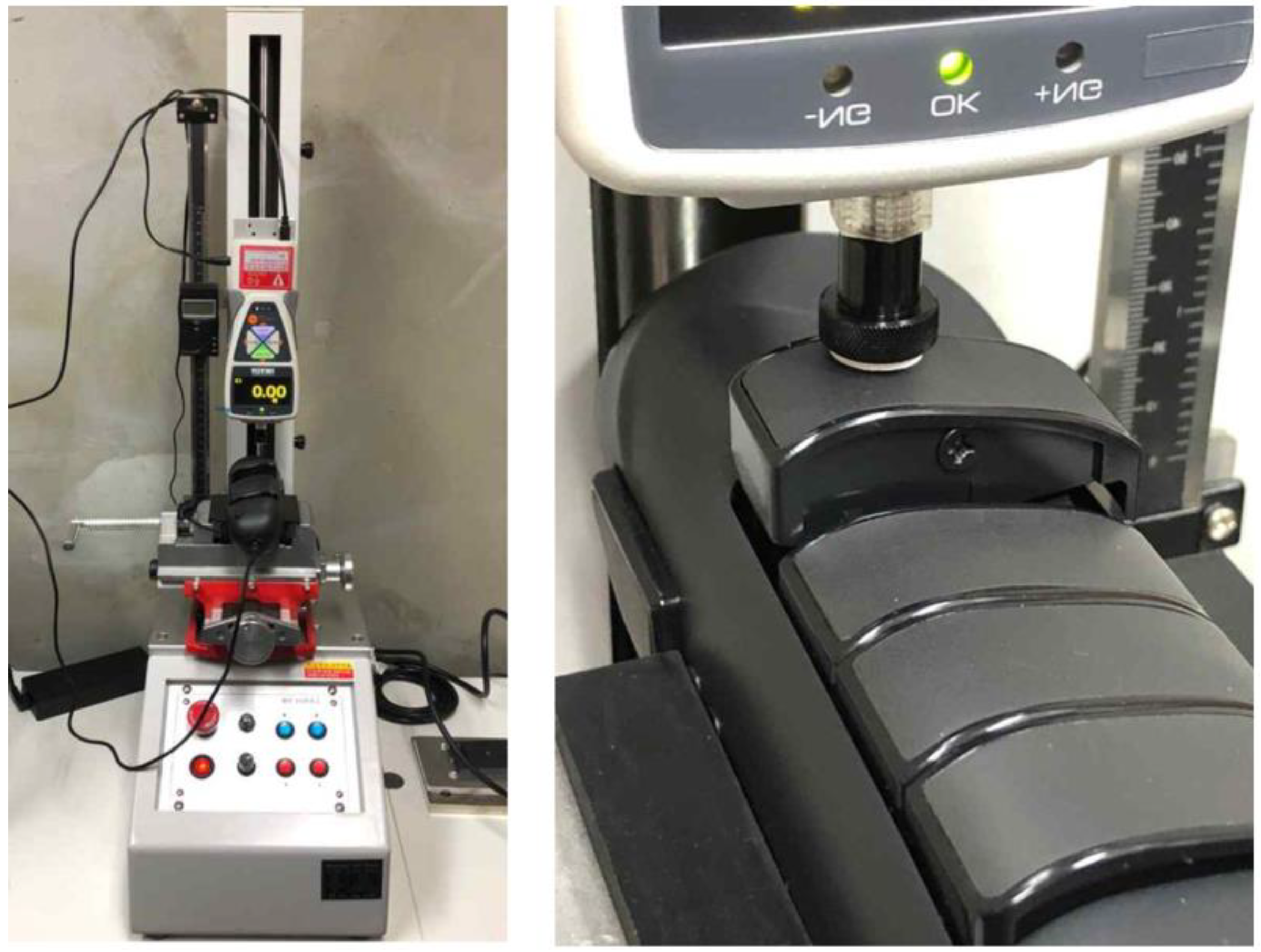

A digital push-pull gauge was used to measure the maximum force of the haptic controller motor, as shown in Figure 12. First, the haptic controller and the digital push-pull gauge were fixed to the stand. Second, the height of the gauge was adjusted so that its measuring part was located on the movement path of the finger button of the haptic controller. Third, the finger button was driven to press against the gauge, and the force was measured for 30 s. The force of the haptic controller was measured ten times at the Korea Electronic Technology Institute. As presented in Table 2, the average value of the maximum force was 22.01 N, a result that suggests the controller can sufficiently withstand the force applied by an adult man’s finger.

As shown in Figure 13, the Unity game engine was used to produce VR rehabilitation content that uses the haptic controller. The content was developed to enable the user to follow the procedure of grabbing and moving an object with their fingers. When a controller button is pushed, the corresponding finger of the virtual hand model moves. When the finger touches the object, the button does not move even when a force is applied; this way, the user can feel a sensation of resistance. The five fingers can apply different forces and can move to several positions, thereby enabling various finger movements. Since the fingers and hand must maintain a certain force to keep holding a virtual tool, the content is expected to be effective for finger rehabilitation.

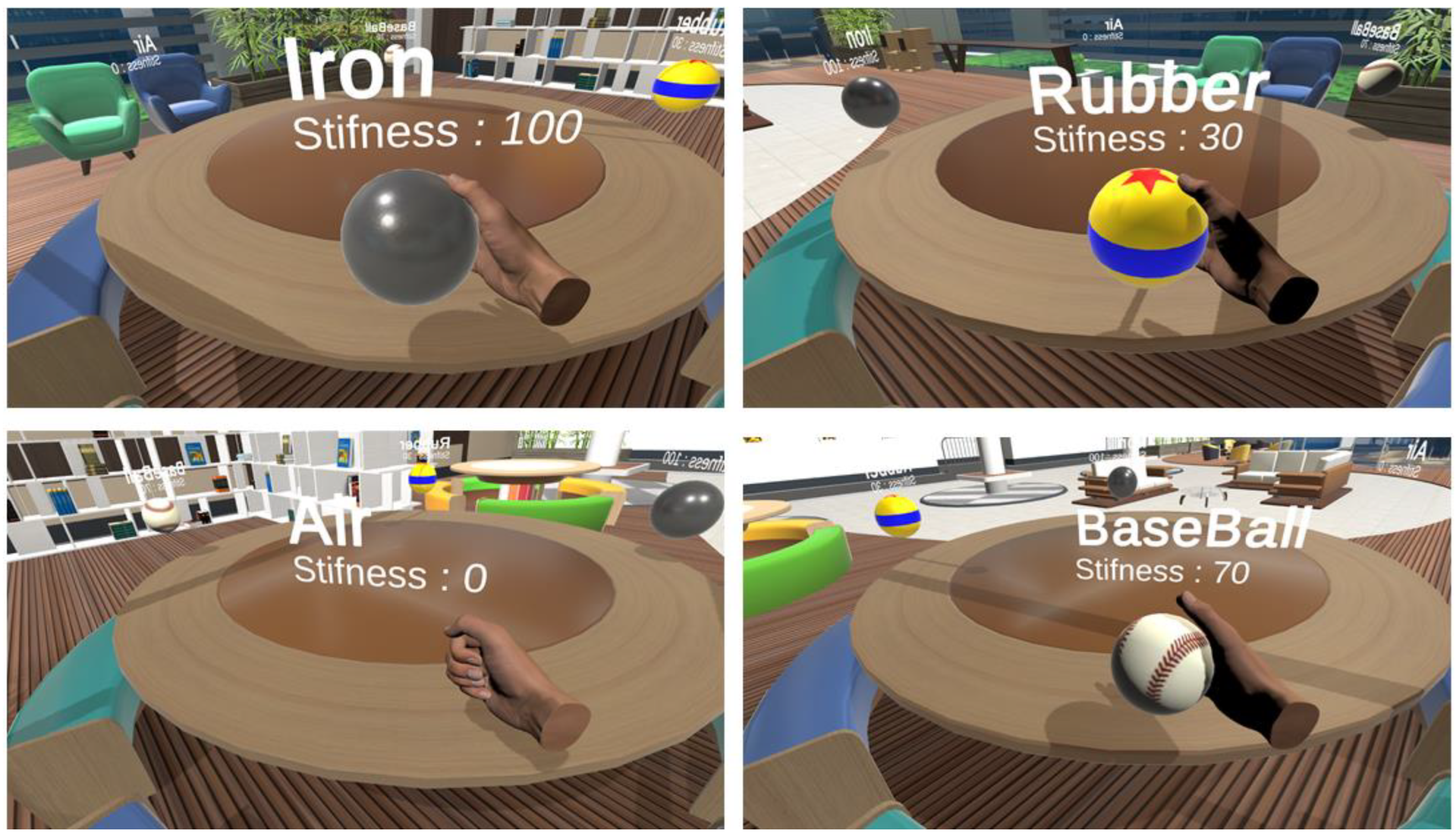

Figure 14 shows a hand rehabilitation content with a different haptic feeling depending on an object’s material.
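The difference between a rigid and a soft object can be pictured with a virtual spring: the button yields in proportion to the pressing force for a soft material and does not yield at all for a rigid one. The sketch below illustrates this idea only. The spring model, the stiffness values, the travel limit, and the function name are assumptions, since the text does not state how Bstick converts force into button movement for each material.

```python
import math


def button_displacement_mm(force_n: float, stiffness_n_per_mm: float,
                           max_travel_mm: float = 10.0) -> float:
    """Return how far the button yields under a pressing force (virtual spring model).

    An infinite stiffness models a rigid object: the button does not move.
    A finite stiffness models a soft object: displacement = force / stiffness,
    limited to the available travel.
    """
    if stiffness_n_per_mm <= 0:
        raise ValueError("stiffness must be positive")
    if force_n <= 0:
        return 0.0
    if math.isinf(stiffness_n_per_mm):
        return 0.0
    return min(max_travel_mm, force_n / stiffness_n_per_mm)


if __name__ == "__main__":
    # Hypothetical materials: a rigid block and a soft sponge.
    print(button_displacement_mm(5.0, math.inf))   # rigid: no movement
    print(button_displacement_mm(5.0, 1.0))        # soft: button yields under the same force
```

A rehabilitation level could be expressed in the same model by scaling the stiffness, so that a higher level demands more force for the same displacement; this is an illustrative reading, not the method reported in the study.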

6. Conclusions

This study proposed Bstick, a haptic controller that can control five fingers independently. Bstick controls the position of the button under each of the five fingers using linear actuators, conveying the shape of objects in VR. Furthermore, by adjusting stiffness, it lets the user grab and manipulate objects that feel rigid or soft. So that it can be used properly with VR equipment, it was designed as a handheld-type haptic controller with wireless communication. However, a handheld haptic controller must contain all mechanical and electronic modules within its limited form factor, so the design and components must be sufficiently optimized. If a small actuator is used and a button fails to withstand the force applied by the finger and moves, the sense of reality in grabbing and controlling the object is reduced. As Bstick is designed to hold a force of approximately 22 N for each finger, it can be applied to various VR content that uses the fingers. Since five actuators need to be driven, power consumption is high compared to other virtual reality controllers. To extend the operating time, the controller uses a low-power MCU and Bluetooth module. It supports quick charging and can be used for about 4–5 h. Future research will aim to increase the battery capacity and reduce power consumption.
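The stated operating time gives a rough idea of the average power draw. The following back-of-the-envelope sketch assumes that the battery capacity is 3500 mAh and that the cell has a nominal voltage of 3.6 V, which is typical for an 18650 Li-ion cell but is not stated in the text; conversion losses are ignored.

```python
def average_power_w(capacity_mah: float, nominal_voltage_v: float, runtime_h: float) -> float:
    """Estimate the average power draw from battery capacity and runtime."""
    energy_wh = capacity_mah / 1000.0 * nominal_voltage_v   # stored energy in watt-hours
    return energy_wh / runtime_h


if __name__ == "__main__":
    # Assumed values: 3500 mAh capacity, 3.6 V nominal cell voltage.
    for hours in (4.0, 5.0):
        print(f"{hours:.0f} h runtime -> about {average_power_w(3500.0, 3.6, hours):.2f} W average")
```

Under these assumptions, the 4–5 h operating time corresponds to an average draw of roughly 2.5 to 3.2 W.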

To use Bstick in the Unity game engine for VR content production, a Unity asset for Bstick was developed. A test was conducted by producing VR rehabilitation content that uses force feedback on the fingers. Although this study focused on designing and developing a haptic controller for finger rehabilitation, the controller can also be used for other interactive content that requires haptic feedback on the fingers. For future research, we plan to reduce the size of Bstick through the development of a new micro linear actuator, to redesign the device structure, and to conduct research on user experience in VR using haptic controllers.