A Two-Step Method Based on

Abstract

1. Introduction

1.1. Traditional Approaches and Challenges

1.2. Data Mining Methods as Alternatives

2. Methods

2.1. The Statistic

2.2. K-Means Clustering

1. Choose K initial centers (i.e., cluster means), which can be randomly selected from the data or defined by the researchers. The number of clusters, K, can be determined from the researchers’ hypotheses about response patterns or through statistical techniques. Several statistical methods are available for determining the optimal K, such as the “elbow method” (Thorndike, 1953), silhouette width (Rousseeuw, 1987), and the Dunn index (Dunn, 1974).

2. Calculate the distance between each point and each center in turn, and then assign each point to its closest center. The distance measure can be user-specified, for example the Euclidean distance or the Manhattan distance.

3. Recalculate the centers from the points now assigned to them.

4. Repeat steps 2 and 3 until the clusters no longer change, that is, no point switches between clusters.

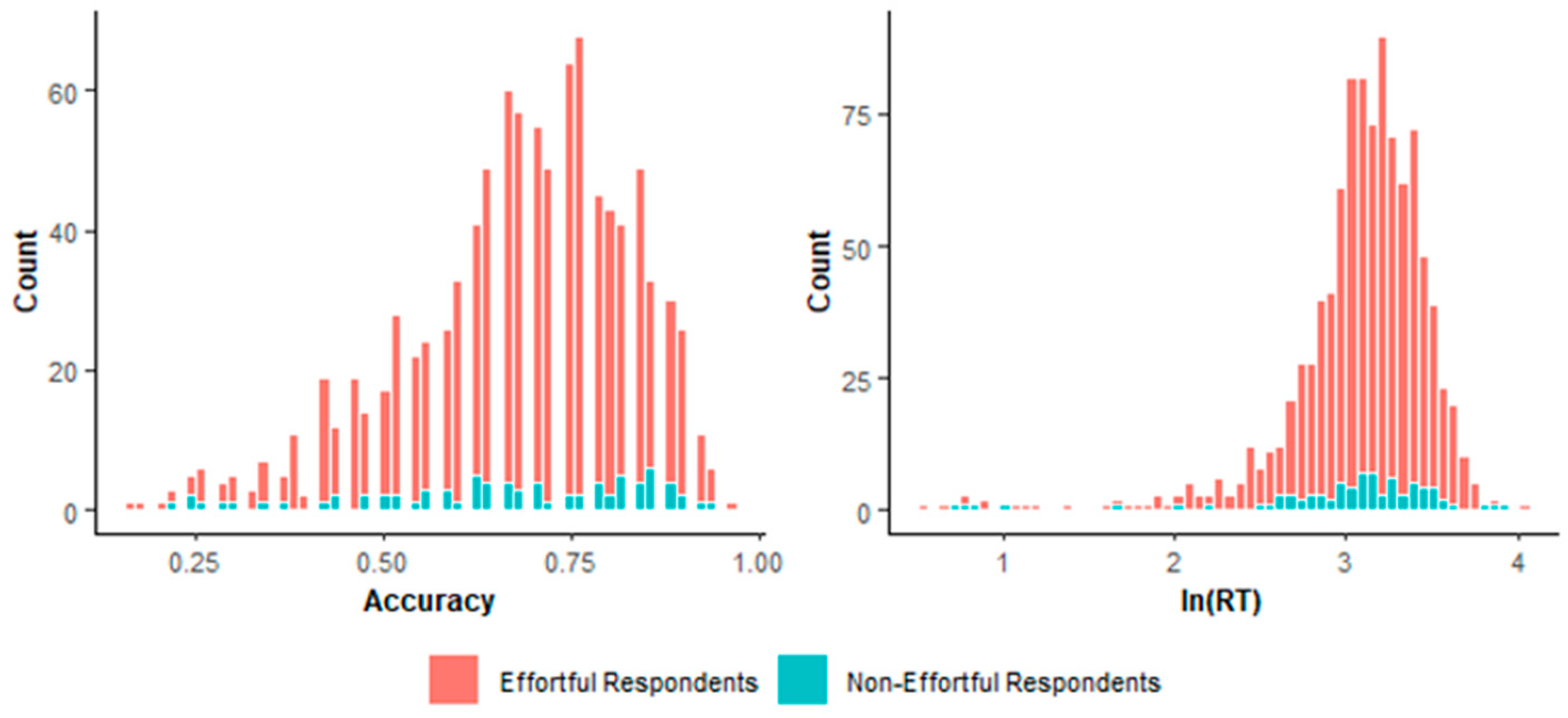

- The definition and interpretation of clusters are mainly based on response accuracy, response time, or both. For instance, with two clusters, the cluster with the higher average response time is more likely to be defined as the effortful group, and the other as the non-effortful group. This is consistent with rapid guessing, which is typical of the non-effortful group and is characterized by short response times (Wise, 2015).
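The K-means steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the two features (proportion correct and mean log response time per respondent), the simulated values, and the function name `kmeans` are all assumptions made for the example, and the Euclidean distance is used.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Plain K-means with Euclidean distance; returns (labels, centers)."""
    rng = np.random.default_rng(seed)
    # Choose k distinct data points as the initial centers.
    centers = X[rng.choice(len(X), size=k, replace=False)].astype(float)
    labels = np.full(len(X), -1)
    for _ in range(n_iter):
        # Distance from every point to every center, then nearest center.
        dist = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        new_labels = dist.argmin(axis=1)
        # Stop once no point switches between clusters.
        if np.array_equal(new_labels, labels):
            break
        labels = new_labels
        # Recalculate each center as the mean of its members.
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:  # keep the old center if a cluster empties
                centers[j] = members.mean(axis=0)
    return labels, centers

# Hypothetical toy data: columns are (proportion correct, mean log response time).
rng = np.random.default_rng(1)
slow_accurate = np.column_stack([rng.normal(0.75, 0.05, 80), rng.normal(4.0, 0.2, 80)])
fast_guessing = np.column_stack([rng.normal(0.25, 0.05, 20), rng.normal(1.5, 0.2, 20)])
X = np.vstack([slow_accurate, fast_guessing])

labels, centers = kmeans(X, k=2)

# Interpretation: the cluster whose center has the higher response time
# is treated as the effortful group.
effortful_cluster = int(centers[:, 1].argmax())
is_effortful = labels == effortful_cluster
print(is_effortful.sum(), "respondents labeled effortful")
```

In practice the features would usually be put on a common scale before clustering, since the distance is otherwise dominated by the variable with the largest range.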

2.3. Self-Organizing Map (SOM)

1. Initialize and normalize the weights that connect the input layer and the output layer. The initialization assigns small random numbers to the weights.

2. Calculate the distance between a randomly selected input neuron and all output neurons. Input neurons represent data points, while output neurons are the corresponding nodes to which the observed points map. There are more than two nodes in the output layer, whose lattice type is usually hexagonal or rectangular.

3. Choose the closest output neuron as the best matching unit (BMU).

4. Update the weights of the BMU and its neighboring neurons to make them more sensitive to similar input signals.

5. Repeat steps 2 to 4 until the radius of the neighborhood decreases to zero.

6. The group types are determined in the same manner as in K-means.
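The SOM training loop described above can be illustrated with a small NumPy sketch. It is written under several assumptions that are not taken from the article: a 2 × 2 rectangular lattice, a learning rate and neighborhood radius that shrink linearly, standardized inputs, and the hypothetical names `train_som` and `map_to_nodes`.

```python
import numpy as np

def train_som(X, n_rows=2, n_cols=2, n_iter=2000, lr0=0.5, seed=0):
    """Train a small rectangular SOM; returns one weight vector per output node."""
    rng = np.random.default_rng(seed)
    # Initialize the weights with small random numbers.
    W = rng.uniform(0.0, 0.1, size=(n_rows * n_cols, X.shape[1]))
    # Lattice coordinates of the output nodes (rectangular grid).
    grid = np.array([(r, c) for r in range(n_rows) for c in range(n_cols)], dtype=float)
    radius0 = max(n_rows, n_cols) / 2.0
    for t in range(n_iter):
        frac = t / n_iter
        lr = lr0 * (1.0 - frac)          # assumed linear decay of the learning rate
        radius = radius0 * (1.0 - frac)  # neighborhood radius shrinks towards zero
        # Pick a random input and compute its distance to all output nodes.
        x = X[rng.integers(len(X))]
        bmu = np.linalg.norm(W - x, axis=1).argmin()  # best matching unit
        # Update the BMU and every node within the current radius on the lattice.
        neighbors = np.linalg.norm(grid - grid[bmu], axis=1) <= radius
        W[neighbors] += lr * (x - W[neighbors])
    return W

def map_to_nodes(X, W):
    """Index of the closest output node for every row of X."""
    return np.linalg.norm(X[:, None, :] - W[None, :, :], axis=2).argmin(axis=1)

# Hypothetical toy data: columns are (proportion correct, mean log response time).
rng = np.random.default_rng(1)
X = np.vstack([
    np.column_stack([rng.normal(0.75, 0.05, 80), rng.normal(4.0, 0.2, 80)]),
    np.column_stack([rng.normal(0.25, 0.05, 20), rng.normal(1.5, 0.2, 20)]),
])
Z = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize the inputs (an assumption)

W = train_som(Z)
nodes = map_to_nodes(Z, W)

# Summarize each occupied node by the mean response time of its respondents;
# nodes with higher mean response time would be read as effortful.
for j in range(len(W)):
    members = X[nodes == j]
    if len(members) > 0:
        print(f"node {j}: n={len(members)}, mean log RT={members[:, 1].mean():.2f}")
```

Dedicated packages (for example the R `kohonen` package cited in the references) offer hexagonal lattices and other decay schedules; the loop here is only meant to make the five training steps concrete.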

2.4. The Two-Step Method

- Use K-means or SOM to perform clustering (i.e., the first identification).

- Estimate item parameters based on the effortful cluster.

- Using the above estimates, compute the statistic for all the respondents. Alternatively, apply the second identification only to respondents labeled non-effortful in the first identification. The reason is that non-effortful respondents missed by K-means and SOM in the first identification may also go undetected by the statistic in the second identification. The non-effortful respondents distinguished by these three methods are similar because the methods share a similar identification strategy (i.e., one based on the similarity between respondents). Man et al. (2019) found that, compared with traditional person-fit statistics, unsupervised learning algorithms tend to correctly flag a larger percentage of non-effortful respondents, at the price of a lower identification rate for normally behaving respondents. The non-effortful respondents identified by the statistic are therefore likely to be included in the non-effortful group identified by the other methods.

- Respondents whose statistic is greater than −1.645 are assigned to the effortful group. Alternatively, merge the respondents in the initial effortful cluster with the re-examined respondents whose statistic is greater than −1.645 to form the final effortful group.
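The final assignment rule can be written out as a short sketch. It assumes the first-step cluster labels and the per-respondent statistic values are already available; item parameter estimation and the computation of the statistic itself are not shown. The function name `final_effortful` and the variant labels are hypothetical. The cutoff −1.645 is the lower 5% quantile of the standard normal distribution, so the rule corresponds to a one-sided test at the 5% level if the statistic is approximately standard normal for effortful respondents.

```python
import numpy as np

CUTOFF = -1.645  # cutoff from the text; lower 5% quantile of N(0, 1)

def final_effortful(first_step_effortful, stat, variant="all"):
    """Combine first-step cluster labels with second-step statistic values.

    variant="all":    every respondent is tested; effortful iff stat > CUTOFF.
    variant="retest": only respondents flagged non-effortful in step one are
                      re-examined; the initial effortful cluster is kept and
                      re-examined respondents with stat > CUTOFF are merged in.
    """
    first = np.asarray(first_step_effortful, dtype=bool)
    passes = np.asarray(stat, dtype=float) > CUTOFF
    if variant == "all":
        return passes
    if variant == "retest":
        return first | (~first & passes)
    raise ValueError(f"unknown variant: {variant!r}")

# Hypothetical values for four respondents.
first_step = [True, True, False, False]
stat = [0.3, -2.1, -0.5, -3.0]

print(final_effortful(first_step, stat, variant="all"))     # [ True False  True False]
print(final_effortful(first_step, stat, variant="retest"))  # [ True  True  True False]
```

The second respondent shows where the two variants differ: it sits in the initial effortful cluster but falls below the cutoff, so it is dropped only when everyone is tested.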

3. Simulation Studies

3.1. Design

3.2. Data Generation

3.3. Analysis

3.4. Evaluation Criteria

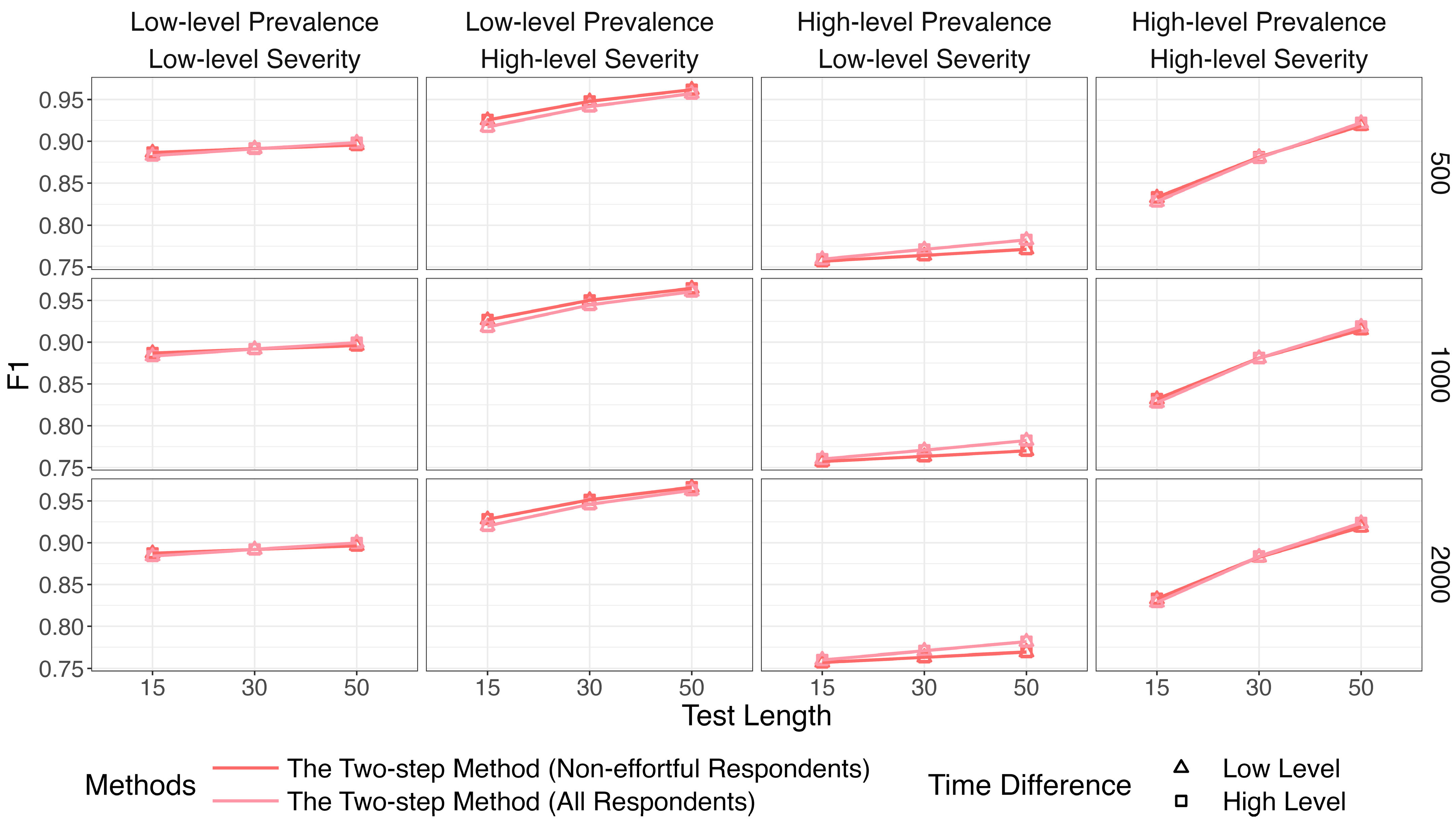

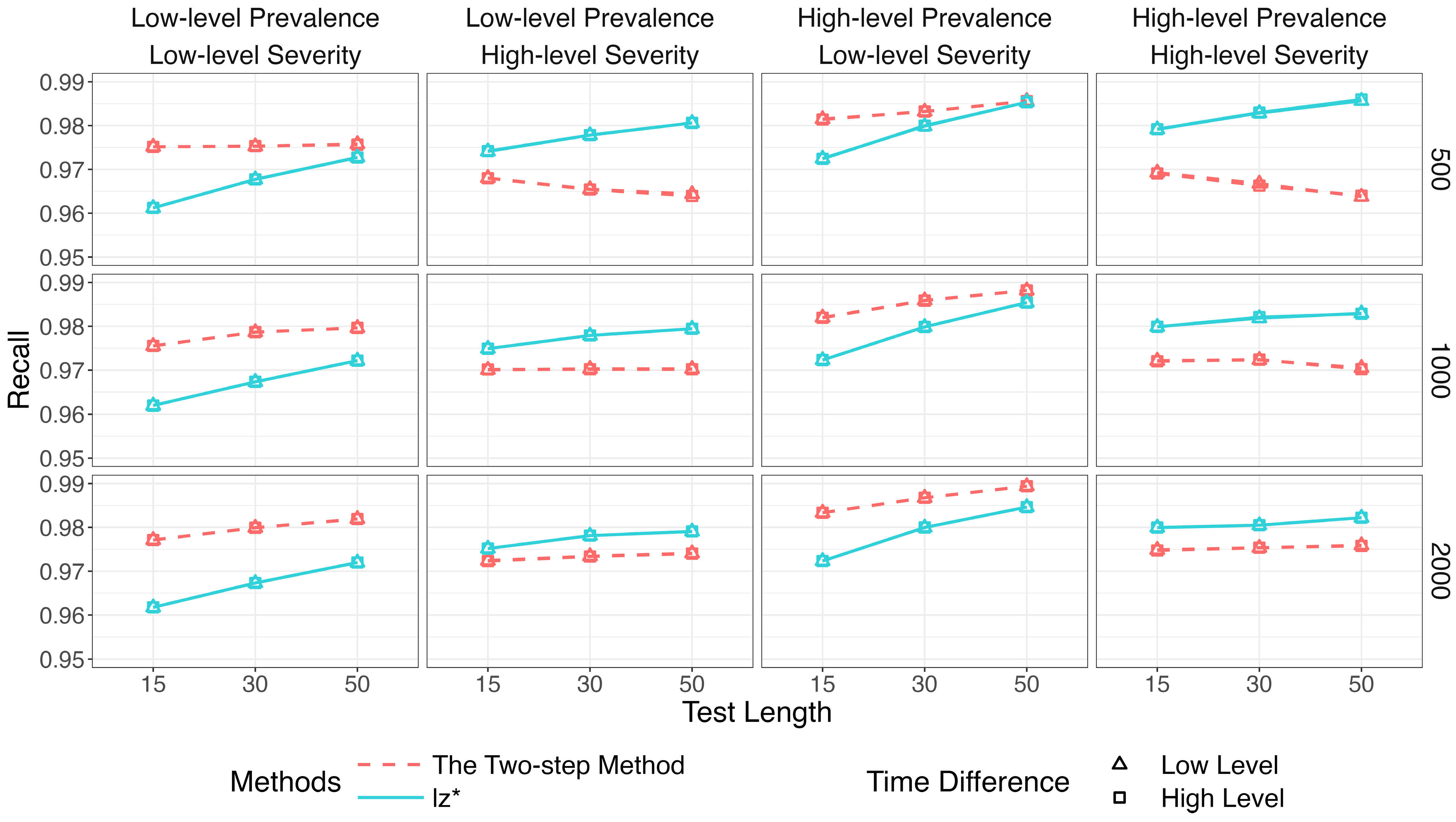

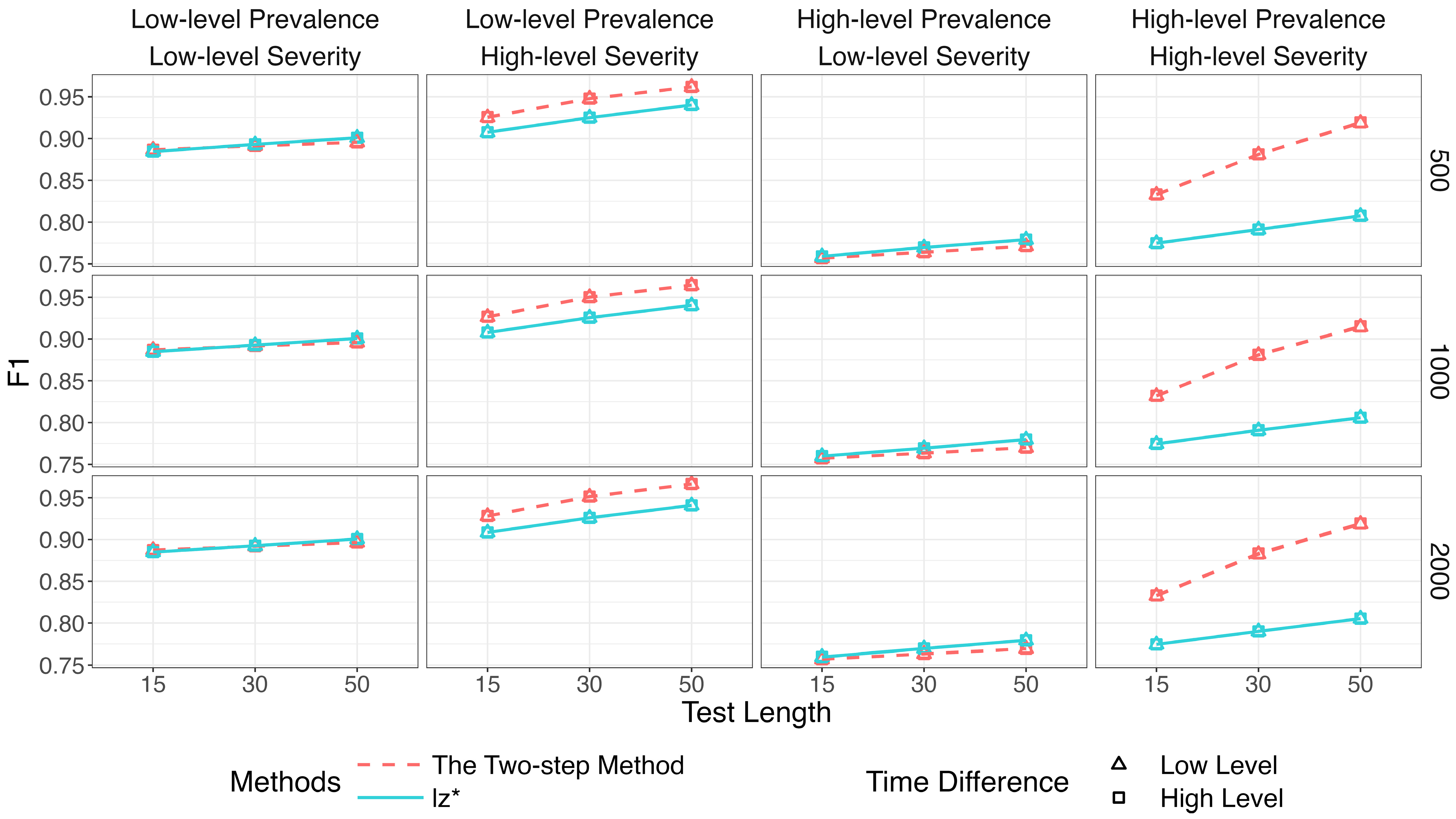

3.5. Results

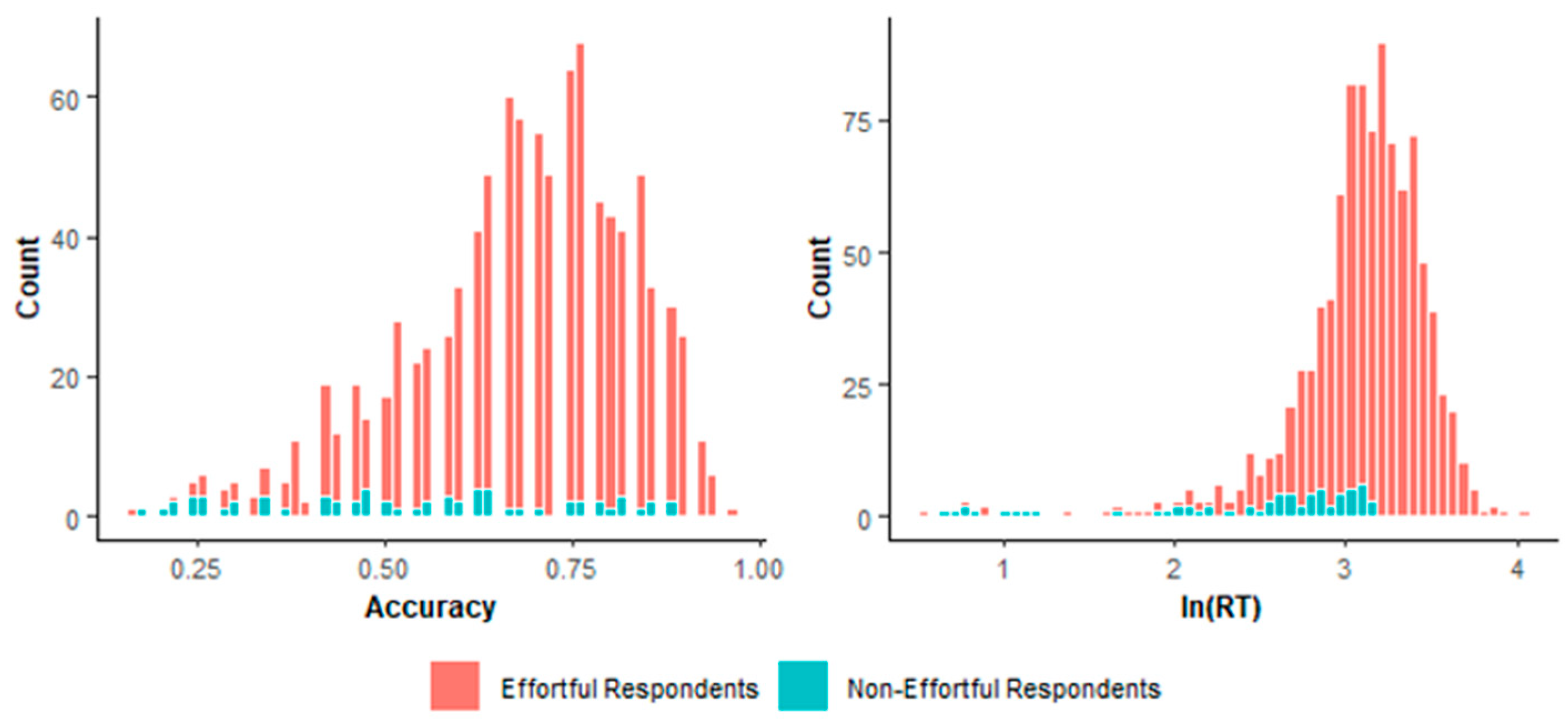

4. Empirical Example

5. Discussion

- Use K-means to perform clustering (i.e., the first identification).

- Estimate item parameters based on the effortful cluster.

- Using the above estimates, compute the test statistic (treating the item parameters as known) for the respondents.

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| 1 | The results of the number of effortful respondents identified by both methods are provided in our Supplementary Materials. The results of item parameter recovery based on the identified effortful groups are provided in our Supplementary Materials. |

References

- Atkinson, A. C., Riani, M., & Cerioli, A. (2004). Exploring multivariate data with the forward search. Springer.

- Beck, M. F., Albano, A. D., & Smith, W. M. (2018). Person-fit as an index of inattentive responding: A comparison of methods using polytomous survey data. Applied Psychological Measurement, 43(5), 374–387.

- Bedrick, E. J. (1997). Approximating the conditional distribution of person fit indexes for checking the Rasch model. Psychometrika, 62(2), 191–199.

- Birnbaum, A. (1968). Some latent trait models and their use in inferring an examinee’s ability. In F. M. Lord, & M. R. Novick (Eds.), Statistical theories of mental test scores (pp. 397–479). Addison-Wesley.

- Boughton, K. A., & Yamamoto, K. (2007). A HYBRID model for test speededness. In M. Davier, & C. H. Carstensen (Eds.), Multivariate and mixture distribution Rasch models: Extensions and applications (pp. 147–156). Springer.

- Chalmers, R. P. (2012). mirt: A multidimensional item response theory package for the R environment. Journal of Statistical Software, 48(6), 1–29.

- Curran, P. G. (2016). Methods for the detection of carelessly invalid responses in survey data. Journal of Experimental Social Psychology, 66, 4–19.

- de la Torre, J., & Deng, W. (2008). Improving person-fit assessment by correcting the ability estimate and its reference distribution. Journal of Educational Measurement, 45(2), 159–177.

- Doval, E., & Delicado, P. (2020). Identifying and classifying aberrant response patterns through functional data analysis. Journal of Educational and Behavioral Statistics, 45(6), 719–749.

- Drasgow, F., Levine, M. V., & Williams, E. A. (1985). Appropriateness measurement with polychotomous item response models and standardized indices. British Journal of Mathematical and Statistical Psychology, 38(1), 67–86.

- Dunn, J. C. (1974). Well-separated clusters and optimal fuzzy partitions. Journal of Cybernetics, 4(1), 95–104.

- Fossey, W. A. (2017). An evaluation of clustering algorithms for modeling game-based assessment work processes. University of Maryland.

- Glas, C. A. W., & Dagohoy, A. V. T. (2007). A person fit test for IRT models for polytomous items. Psychometrika, 72(2), 159–180.

- Goldhammer, F., Naumann, J., Rölke, H., Stelter, A., & Tóth, K. (2017). Relating product data to process data from computer-based competency assessment. In D. Leutner, J. Fleischer, J. Grünkorn, & E. Klieme (Eds.), Competence assessment in education (pp. 407–425). Springer.

- Gorney, K., Sinharay, S., & Eckerly, C. (2024). Efficient corrections for standardized person-fit statistics. Psychometrika, 89(2), 569–591.

- Gorney, K., & Wollack, J. A. (2022a). Generating models for item preknowledge. Journal of Educational Measurement, 59(1), 22–42.

- Gorney, K., & Wollack, J. A. (2022b). Using item scores and distractors in person-fit assessment. Journal of Educational Measurement, 60(1), 3–27.

- Hastie, T., Tibshirani, R., & Friedman, J. (2009). The elements of statistical learning: Data mining, inference, and prediction (2nd ed.). Springer.

- Hong, M. R., & Cheng, Y. (2019). Robust maximum marginal likelihood (RMML) estimation for item response theory models. Behavior Research Methods, 51(2), 573–588.

- Jain, A. K. (2010). Data clustering: 50 years beyond k-means. Pattern Recognition Letters, 31(8), 651–666.

- Jin, K.-Y., Chen, H.-F., & Wang, W.-C. (2017). Mixture item response models for inattentive responding behavior. Organizational Research Methods, 21(1), 197–225.

- Khan, S. S., & Ahmad, A. (2004). Cluster center initialization algorithm for K-means clustering. Pattern Recognition Letters, 25, 1293–1302.

- Kohonen, T. (1982). Self-organized formation of topologically correct feature maps. Biological Cybernetics, 43(1), 59–69.

- Kohonen, T. (1997). Self-organizing maps. Springer.

- Lee, P., Joo, S., & Son, M. (2024). Detecting careless respondents in multidimensional forced choice data: An application of lz person-fit statistic to the TIRT model. Journal of Business and Psychology, 39(3), 541–564.

- Liao, M., Patton, J., Yan, R., & Jiao, H. (2021). Mining process data to detect aberrant test takers. Measurement: Interdisciplinary Research and Perspectives, 19(2), 93–105.

- Liu, H. Y. (Ed.). (2019). Advanced statistics for psychology. China Renmin University Press.

- Liu, Y., Cheng, Y., & Liu, H. (2020). Identifying effortful individuals with mixture modeling response accuracy and response time simultaneously to improve item parameter estimation. Educational and Psychological Measurement, 80(4), 775–807.

- Lu, J., Wang, C., & Shi, N. (2021). A mixture response time process model for aberrant behaviors and item nonresponses. Multivariate Behavioral Research, 58(1), 71–89.

- Lundgren, E., & Eklöf, H. (2021). Within-item response processes as indicators of test-taking effort and motivation. Educational Research and Evaluation, 26(5–6), 275–301.

- Magis, D., Raîche, G., & Béland, S. (2012). A didactic presentation of Snijders’s lz* index of person fit with emphasis on response model selection and ability estimation. Journal of Educational and Behavioral Statistics, 37(1), 57–81.

- Man, K., & Harring, J. R. (2022). Detecting preknowledge cheating via innovative measures: A mixture hierarchical model for jointly modeling item responses, response times, and visual fixation counts. Educational and Psychological Measurement, 83(5), 1059–1080.

- Man, K., Harring, J. R., & Liu, Y. (2020). Methods of integrating multi-modal data for assessing aberrant test-taking behaviors. Multivariate Behavioral Research, 55(1), 155–156.

- Man, K., Harring, J. R., & Sinharay, S. (2019). Use of data mining methods to detect test fraud. Journal of Educational Measurement, 56(2), 251–279.

- Meade, A. W., & Craig, S. B. (2012). Identifying careless responses in survey data. Psychological Methods, 17(3), 437–455.

- Meyer, J. P. (2010). A mixture Rasch model with item response time components. Applied Psychological Measurement, 34(7), 521–538.

- Miller, P. J., Lubke, G. H., McArtor, D. B., & Bergeman, C. S. (2016). Finding structure in data using multivariate tree boosting. Psychological Methods, 21(4), 583–602.

- Min, S., & Aryadoust, V. (2021). A systematic review of item response theory in language assessment: Implications for the dimensionality of language ability. Studies in Educational Evaluation, 68, 100963.

- Molenaar, D., Bolsinova, M., & Vermunt, J. K. (2018). A semi-parametric within-subject mixture approach to the analyses of responses and response times. British Journal of Mathematical and Statistical Psychology, 71(2), 205–228.

- Molenaar, I. W., & Hoijtink, H. (1990). The many null distributions of person fit indices. Psychometrika, 55(1), 75–106.

- Nagy, G., & Ulitzsch, E. (2021). A multilevel mixture IRT framework for modeling response times as predictors or indicators of response engagement in IRT models. Educational and Psychological Measurement, 82(5), 845–879.

- Pastor, D. A., Ong, T. Q., & Strickman, S. N. (2019). Patterns of solution behavior across items in low-stakes assessments. Educational Assessment, 24(3), 189–212.

- Patton, J. M., Cheng, Y., Hong, M., & Diao, Q. (2019). Detection and treatment of careless responses to improve item parameter estimation. Journal of Educational and Behavioral Statistics, 44(3), 309–341.

- Patton, J. M., Cheng, Y., Yuan, K.-H., & Diao, Q. (2013). The influence of item calibration error on variable-length computerized adaptive testing. Applied Psychological Measurement, 37, 24–40.

- Pokropek, A. (2016). Grade of membership response time model for detecting guessing behaviors. Journal of Educational and Behavioral Statistics, 41(3), 300–325.

- Qiao, X., & Jiao, H. (2018). Data mining techniques in analyzing process data: A didactic. Frontiers in Psychology, 9, 2231.

- Ranger, J., & Kuhn, J. T. (2017). Detecting unmotivated individuals with a new model-selection approach for Rasch models. Psychological Test and Assessment Modeling, 59(3), 269–295. Available online: https://psycnet.apa.org/record/2018-59001-001 (accessed on 4 January 2026).

- Rousseeuw, P. J. (1987). Silhouettes: A graphical aid to the interpretation and validation of cluster analysis. Journal of Computational and Applied Mathematics, 20, 53–65.

- Sijtsma, K. (1986). A coefficient of deviance of response patterns. Kwantitatieve Methoden: Nieuwsbrief voor Toegepaste Statistiek en Operationele Research, 7(22), 131–145.

- Sinharay, S. (2016). An NCME instructional module on data mining methods for classification and regression. Educational Measurement: Issues and Practice, 35(3), 38–54.

- Sinharay, S. (2017). Are the nonparametric person-fit statistics more powerful than their parametric counterparts? Revisiting the simulations in Karabatsos (2003). Applied Measurement in Education, 30(4), 314–328.

- Snijders, T. A. B. (2001). Asymptotic null distribution of person fit statistics with estimated person parameter. Psychometrika, 66(3), 331–342.

- Soller, A., & Stevens, R. (2007). Applications of stochastic analyses for collaborative learning and cognitive assessment. In G. R. Hancock, & K. M. Samuelsen (Eds.), Advances in latent variable mixture models (pp. 217–253). Information Age Publishing.

- Tendeiro, J. N., Meijer, R. R., & Niessen, A. S. M. (2016). PerFit: An R package for person-fit analysis in IRT. Journal of Statistical Software, 74(5), 1–27.

- Thorndike, R. L. (1953). Who belongs in the family? Psychometrika, 18(4), 267–276.

- Tong, H., Yu, X., Qin, C., Peng, Y., & Zhong, X. (2022). Detection of aberrant response patterns using a residual-based statistic in testing with polytomous items. Acta Psychologica Sinica, 54(9), 1122–1136.

- Ulitzsch, E., von Davier, M., & Pohl, S. (2020). A hierarchical latent response model for inferences about examinee engagement in terms of guessing and item-level non-response. British Journal of Mathematical and Statistical Psychology, 73, 83–112.

- van der Flier, H. (1982). Deviant response patterns and comparability of test scores. Journal of Cross-Cultural Psychology, 13(3), 267–298.

- van der Linden, W. J. (2007). A hierarchical framework for modeling speed and accuracy on test items. Psychometrika, 72(3), 287–308.

- van der Linden, W. J., & Barrett, M. D. (2016). Linking item response model parameters. Psychometrika, 81, 650–673.

- van der Linden, W. J., & van Krimpen-Stoop, E. M. (2003). Using response times to detect aberrant responses in computerized adaptive testing. Psychometrika, 68(2), 251–265.

- von Davier, M., & Molenaar, I. W. (2003). A person-fit index for polytomous Rasch models, latent class models, and their mixture generalizations. Psychometrika, 68(2), 213–228.

- Wang, C., & Xu, G. (2015). A mixture hierarchical model for response times and response accuracy. British Journal of Mathematical and Statistical Psychology, 68(3), 456–477.

- Wang, C., Xu, G., & Shang, Z. (2018a). A two-stage approach to differentiating normal and aberrant behavior in computer based testing. Psychometrika, 83, 223–254.

- Wang, C., Xu, G., Shang, Z., & Kuncel, N. (2018b). Detecting aberrant behavior and item preknowledge: A comparison of mixture modeling method and residual method. Journal of Educational and Behavioral Statistics, 43(4), 469–501.

- Ward, M. K., & Meade, A. W. (2023). Dealing with careless responding in survey data: Prevention, identification, and recommended best practices. Annual Review of Psychology, 74, 577–596.

- Warm, T. A. (1989). Weighted likelihood estimation of ability in item response theory. Psychometrika, 54(3), 427–450.

- Wehrens, R., & Buydens, L. M. (2007). Self- and super-organizing maps in R: The kohonen package. Journal of Statistical Software, 21(5), 1–19.

- Wehrens, R., & Kruisselbrink, J. (2018). Flexible self-organizing maps in kohonen 3.0. Journal of Statistical Software, 87(7), 1–18.

- Wise, S. L. (2015). Effort analysis: Individual score validation of achievement test data. Applied Measurement in Education, 28(3), 237–252.

- Wise, S. L. (2017). Rapid-guessing behavior: Its identification, interpretation, and implications. Educational Measurement: Issues and Practice, 36, 52–61.

- Wise, S. L., & DeMars, C. E. (2005). Low examinee effort in low-stakes assessment: Problems and potential solutions. Educational Assessment, 10(1), 1–17.

- Yavuz Temel, G., Machunsky, M., Rietz, C., & Okropiridze, D. (2022). Investigating subscores of VERA 3 German test based on item response theory/multidimensional item response theory models. Frontiers in Education, 7, 801372.

| Factor | Level | Setting |
|---|---|---|
| I | Low | 500 |
| | Medium | 1000 |
| | High | 2000 |
| J | Low | 15 |
| | Medium | 30 |
| | High | 50 |
| | Low | 20% |
| | High | 40% |
| | Low | |
| | High | |
| | Low | * |
| | High | * |

| Parameter | Distribution Setting | |
|---|---|---|
| | U(1, 2.5) | |
| | N(0, 1) | |
| | U(1.5, 2.5) | |
| | U(−0.2, 0.2) | |
| | High-speed | , where , |
| | Low-speed | , where , |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Chen, Yilan, Yue Liu, and Hongyun Liu.

2026. "A Two-Step Method Based on