Progressing the Development of a Collaborative Metareasoning Framework: Prospects and Challenges

Abstract

1. Introduction

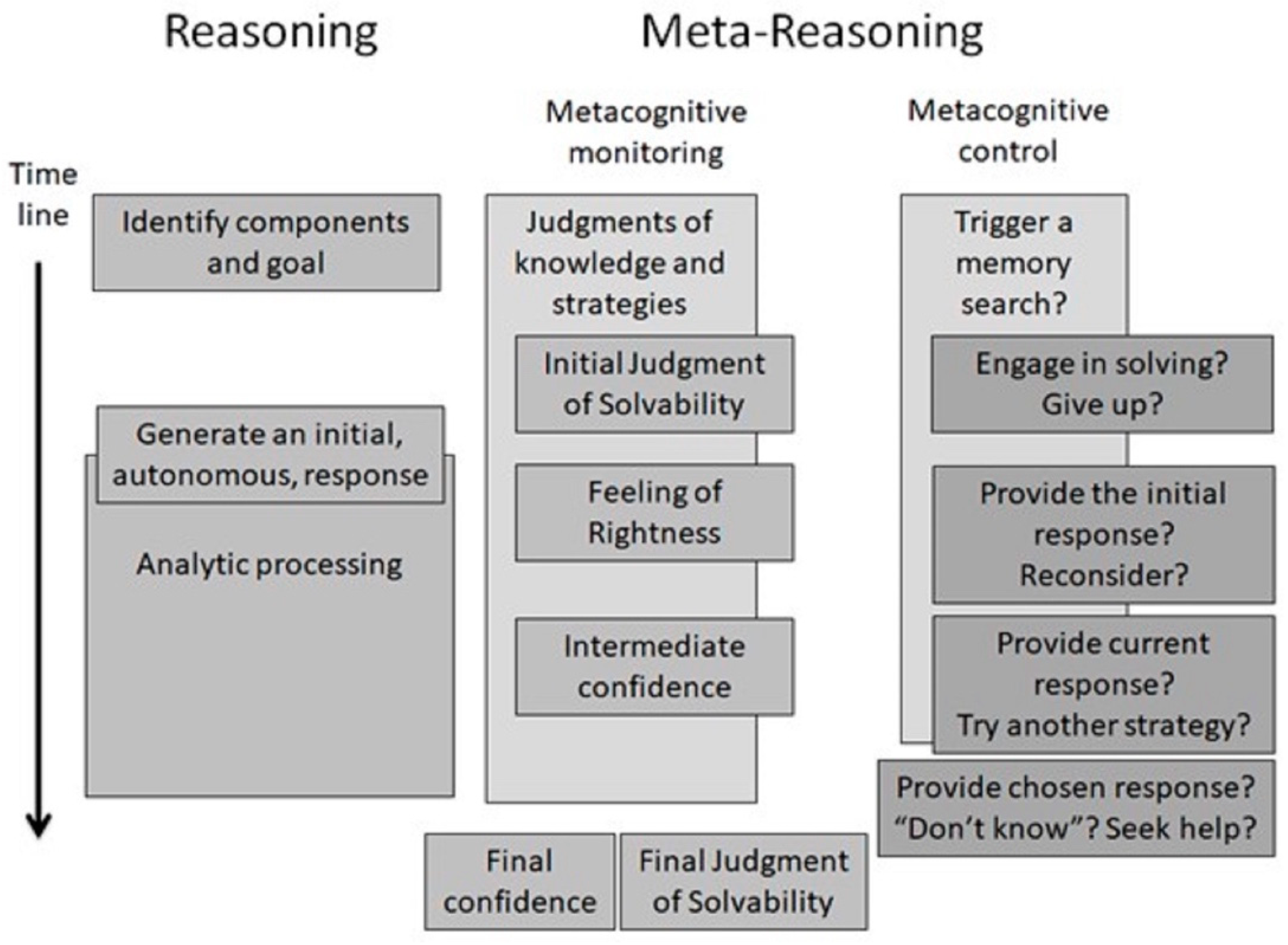

2. The Importance of Understanding Collaborative Metareasoning

3. The Metareasoning Framework

4. Research Questions in Metareasoning Research

4.1. The Underpinning Basis of Metacognitive Certainty and Uncertainty

4.2. Methods for Eliciting Judgments of Metacognitive Certainty and Uncertainty

5. Metareasoning in Teams

6. Toward a Framework of Collaborative Metareasoning

7. The Role of Language in Collaborative Metareasoning

8. Conclusions and Future Directions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Levels of Monitoring | Indicative Monitoring Processes | Example Cues Detected by Monitoring Processes |
|---|---|---|
| Self-Monitoring: An individual’s perception of their own performance. | An individual’s generation of: initial judgment of solvability; feeling of rightness; feeling of error; feeling of warmth; intermediate confidence or uncertainty; final confidence; final judgment of solvability. | An individual’s sensitivity to: processing fluency (ease of processing); perceived features of the presented task; perceived task complexity; study time; response time. |
| Other Monitoring: An individual’s perception of the performance of others. | An individual’s perception of someone else’s: initial judgment of solvability; feeling of rightness; feeling of error; feeling of warmth; intermediate confidence or uncertainty; final confidence; final judgment of solvability. An individual’s perception of: alignment/misalignment. | An individual’s sensitivity to someone else’s: processing fluency (ease of processing); perceived features of the presented task; perceived task complexity; study time; response time; degree of agreement; level of understanding (potentially made manifest by language markers such as hedge words and pronoun use). |
| Joint Monitoring: The unified perception of collective performance. | A group’s unified perception of: initial judgment of solvability; feeling of rightness; feeling of error; feeling of warmth; intermediate confidence or uncertainty; final confidence; final judgment of solvability. A group’s perception of: alignment/misalignment. | A group’s unified perception of: processing fluency (ease of processing); perceived features of the presented task; perceived task complexity; study time; response time; degree of agreement; level of understanding (potentially made manifest by language markers such as hedge words and pronoun use). |

| Levels of Control | Indicative Control Processes | Example Outcomes of Control Processes |
|---|---|---|
| Self-Focused Control: An individual’s decisions about how to progress or terminate their own reasoning. | An individual’s procedural decision to engage in: memory search; reasoning, problem solving or decision making; response generation; response evaluation; strategy change; giving up; help-seeking. | An individual’s generation of: recalled information; an intermediate or final response (e.g., a solution, option or decision, including the decision to give up); an evaluation of an intermediate or final response; a new process or strategy (e.g., analogising, mental simulation); a request for help. |
| Other-Focused Control: An individual’s decisions about how to control the performance of others. | An individual’s procedural decision to engage in: affirmation; encouragement; persuasion; argumentation; negotiation; manipulation; deception. | An individual’s generation of: alignment (e.g., situation model alignment and linguistic alignment); intersubjectivity; shared understanding; common ground; conflict resolution; misalignment; disalignment. |
| Joint Control: The unified control of decisions regarding how to advance or terminate collective performance. | A group’s unified procedural decision to engage in: memory search; reasoning, problem solving or decision making; response generation; response evaluation; strategy change; giving up; help-seeking. | A group’s unified generation of: recalled information; an intermediate or final response (e.g., a solution, option or decision, including the decision to give up); an evaluation of an intermediate or final response; a new process or strategy (e.g., analogising, mental simulation); a request for help. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Richardson, B.H.; Ball, L.J. Progressing the Development of a Collaborative Metareasoning Framework: Prospects and Challenges. J. Intell. 2024, 12, 28. https://doi.org/10.3390/jintelligence12030028