Metacognitive Effort Regulation across Cultures

Abstract

1. Introduction

2. Culture-Dependent Metacognitive Monitoring Accuracy

3. Control: Goal Setting and Effort Regulation

4. The Present Study

5. Method

5.1. Participants

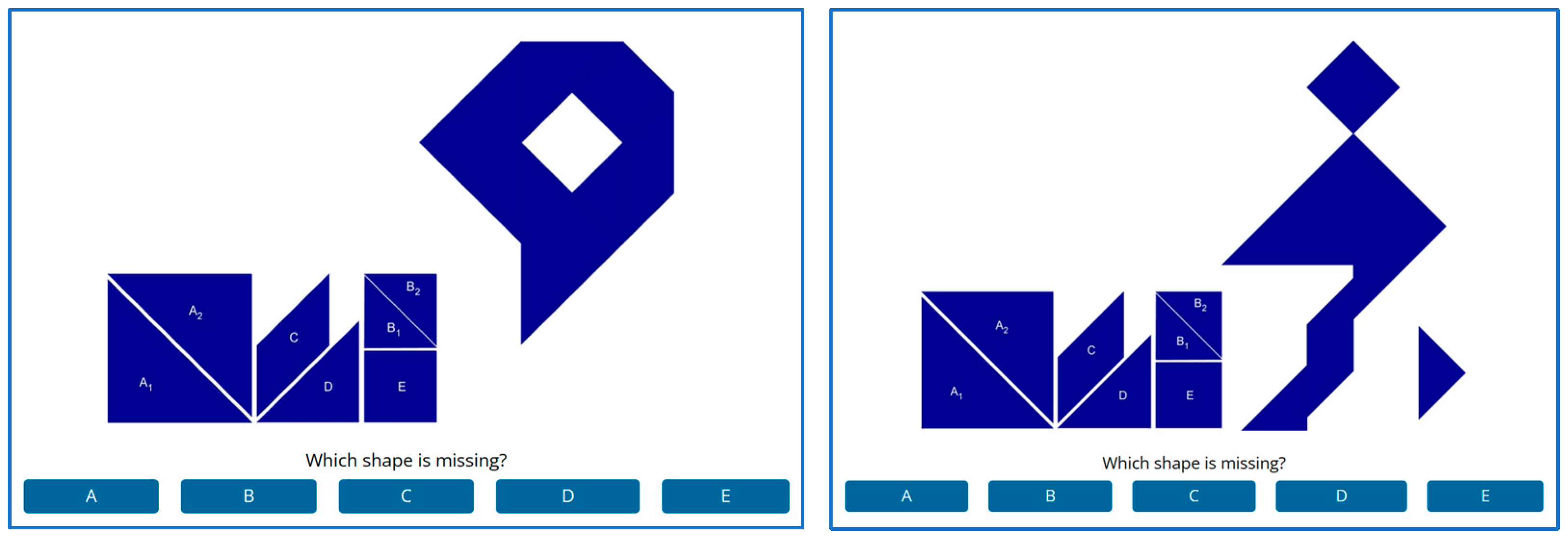

5.2. Materials and Measures

5.3. Procedure

5.4. Analysis Plan

6. Results and Discussion

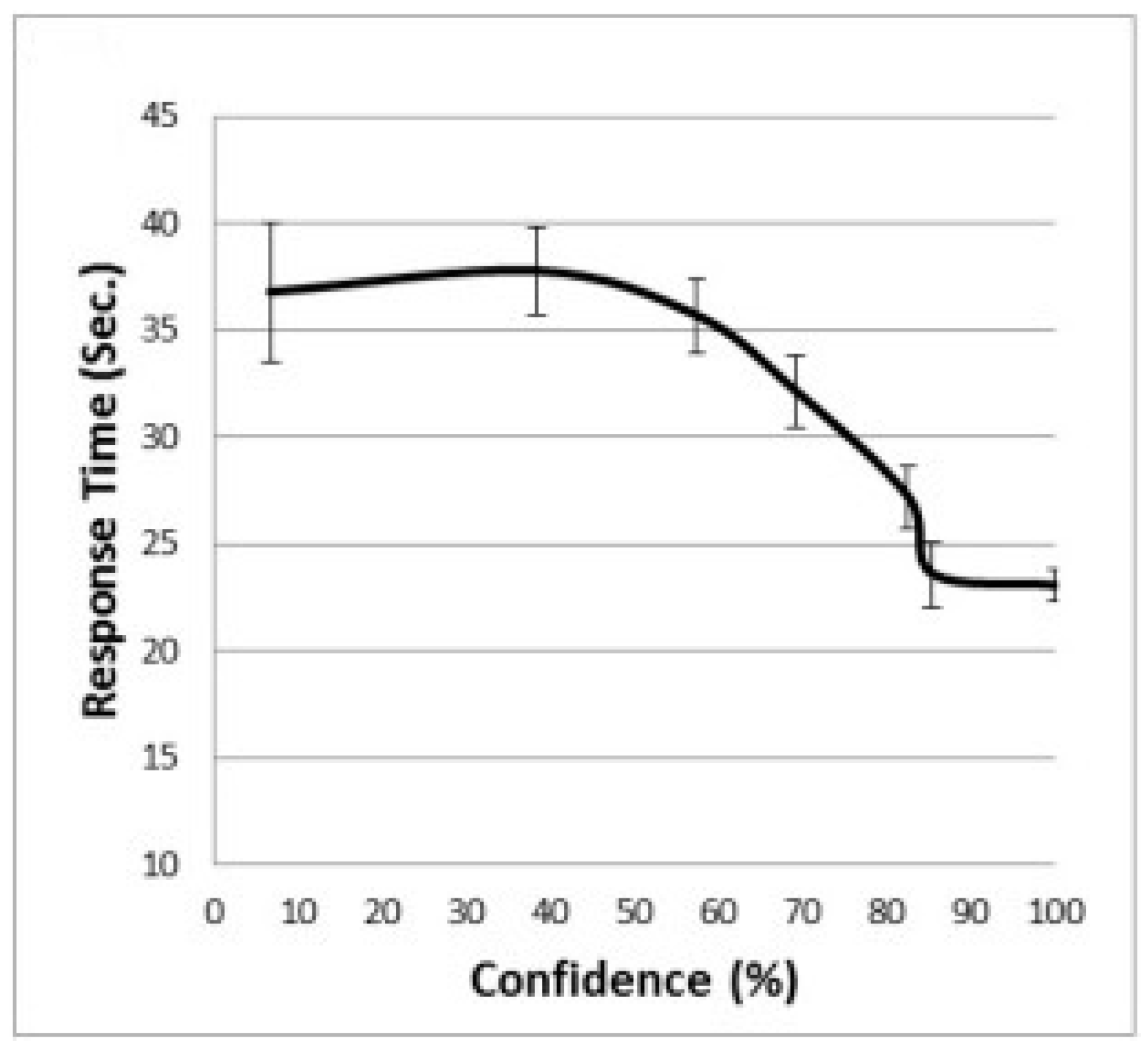

6.1. Metacognitive Monitoring

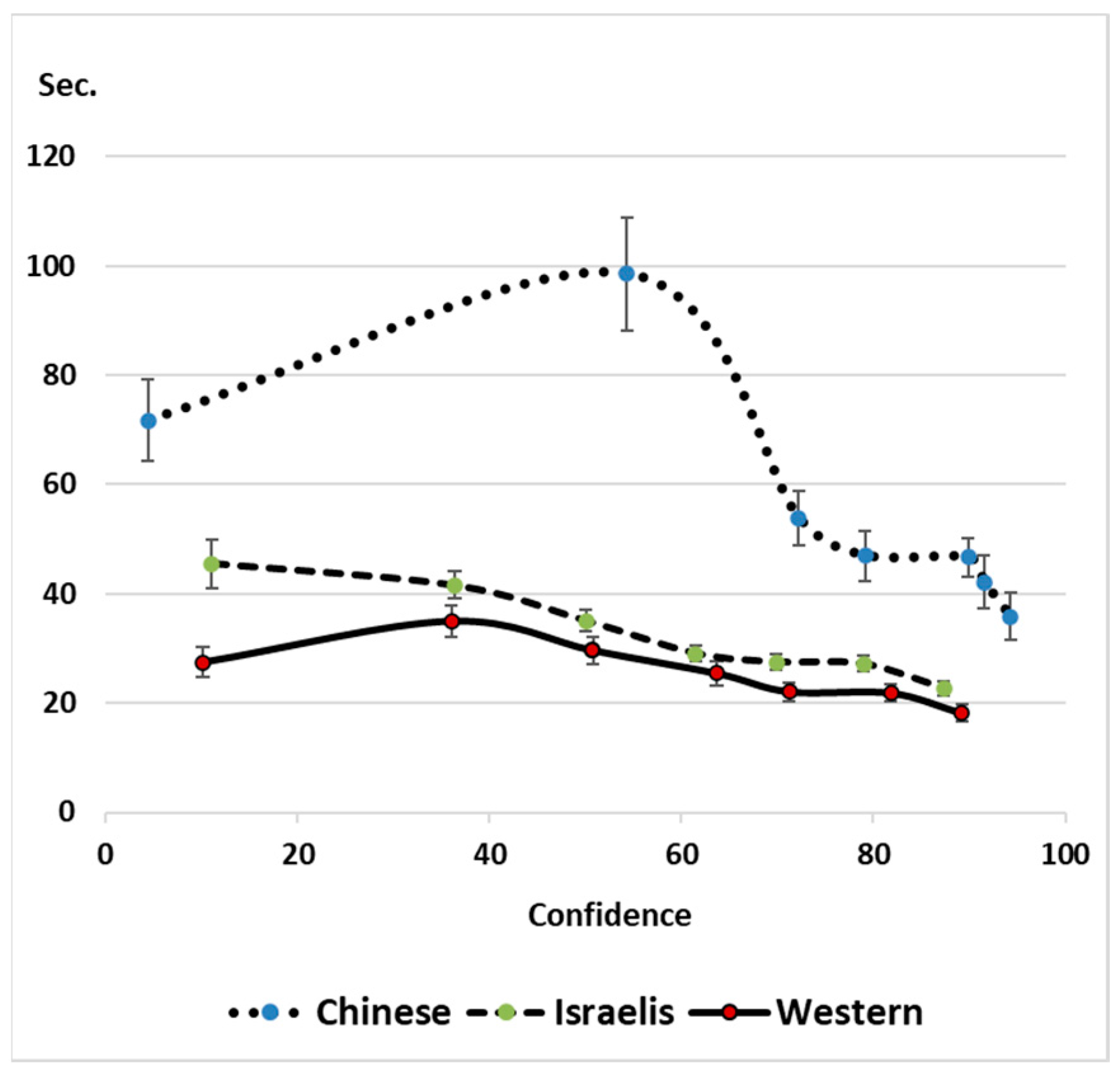

6.2. Metacognitive Control: Goal Setting and Effort Regulation

6.3. Interference of Holistic Processing

7. General Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Measure | Western General Public (N = 77) | Chinese Undergraduates (N = 74) | Israeli Undergraduates (N = 143) | One-Way ANOVA F | One-Way ANOVA p | One-Way ANOVA ηp² |
|---|---|---|---|---|---|---|
| Self-report responses | | | | | | |
| Challenge posed by the task | 5.74 b (0.97) | 3.96 a (1.41) | 5.63 b (1.03) | 64.69 | <.001 | 0.31 |
| Experience with thought-provoking games | 3.65 b (1.60) | 2.62 a (1.57) | 2.97 a (1.38) | 9.36 | <.001 | 0.06 |
| Actively open-minded thinking | 5.13 b (0.94) | 4.75 a (0.99) | 5.36 b (0.80) | 11.59 | <.001 | 0.07 |
| Time management | 4.71 b (1.27) | 4.29 a (0.91) | 5.21 c (1.27) | 15.33 | <.001 | 0.10 |
| Need for cognition | 4.15 a (1.11) | 4.96 c (1.11) | 4.50 b (0.89) | 12.28 | <.001 | 0.08 |
| Mindset (high represents fixed) | 3.44 a (1.15) | 4.19 b (1.39) | 3.69 a (1.35) | 6.42 | =.002 | 0.04 |
| Objective and metacognitive measures | | | | | | |
| Response time | 25.6 a (14.79) | 54.2 b (27.95) | 31.4 a (15.32) | 48.87 | <.001 | 0.25 |
| Success rate | 43.4 a (16.13) | 66.5 b (20.54) | 49.2 a (17.59) | 34.42 | <.001 | 0.19 |
| Efficiency | 1.24 b (0.62) | 0.87 a (0.44) | 1.09 b (0.51) | 9.52 | <.001 | 0.06 |
| Confidence | 62.5 a (12.35) | 81.6 b (12.81) | 61.1 a (12.91) | 68.82 | <.001 | 0.32 |
| Calibration | 19.1 b (16.53) | 15.2 ab (18.25) | 11.9 a (14.66) | 4.3 | =.008 | 0.03 |
| Resolution | 0.31 a (0.28) | 0.57 c (0.34) | 0.43 b (0.33) | 13.68 | <.001 | 0.09 |
| Interference of holistic processing | | | | | | |
| Surrounding area—Success | 0.19 ab (0.26) | 0.28 b (0.26) | 0.18 a (0.27) | 3.71 | =.026 | 0.03 |
| Surrounding area—Response time | 0.03 a (0.22) | −0.14 b (0.22) | −0.01 a (0.23) | 12.16 | <.001 | 0.08 |
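The Calibration row appears to follow from the Confidence and Success rate rows. As a minimal sketch, assuming calibration is the signed difference between mean confidence and success rate (a common overconfidence measure; this excerpt does not state the definition), the snippet below recomputes it from the group means in the table. The names `GROUP_MEANS`, `calibration_bias`, and `max_discrepancy` are illustrative and not taken from the article.

```python
# Group means copied from the table (percent scale).
# "reported" is the Calibration value printed in the table.
GROUP_MEANS = {
    "Western General Public": {"confidence": 62.5, "success": 43.4, "reported": 19.1},
    "Chinese Undergraduates": {"confidence": 81.6, "success": 66.5, "reported": 15.2},
    "Israeli Undergraduates": {"confidence": 61.1, "success": 49.2, "reported": 11.9},
}


def calibration_bias(mean_confidence: float, success_rate: float) -> float:
    """Assumed definition: positive values indicate overconfidence."""
    return mean_confidence - success_rate


def max_discrepancy() -> float:
    """Largest absolute gap between recomputed and reported calibration."""
    return max(
        abs(calibration_bias(g["confidence"], g["success"]) - g["reported"])
        for g in GROUP_MEANS.values()
    )


if __name__ == "__main__":
    for name, g in GROUP_MEANS.items():
        recomputed = calibration_bias(g["confidence"], g["success"])
        print(f"{name}: recomputed {recomputed:.1f}, reported {g['reported']:.1f}")
```

Under this assumption the recomputed values match the reported ones to within rounding of the published means. All three groups show positive calibration, that is, overconfidence, with the Western sample highest and the Israeli sample lowest.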

| Effect | Western General Public | Chinese Undergraduates | Israeli Undergraduates |
|---|---|---|---|
| Confidence | −0.80 *** (0.07) | −1.05 *** (0.11) | −0.70 *** (0.06) |
| Confidence² | −0.60 *** (0.20) | −1.57 *** (0.32) | −0.70 *** (0.17) |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Ackerman, R.; Binah-Pollak, A.; Lauterman, T. Metacognitive Effort Regulation across Cultures. J. Intell. 2023, 11, 171. https://doi.org/10.3390/jintelligence11090171
