Creativity, Critical Thinking, Communication, and Collaboration: Assessment, Certification, and Promotion of 21st Century Skills for the Future of Work and Education

Abstract

1. Introduction

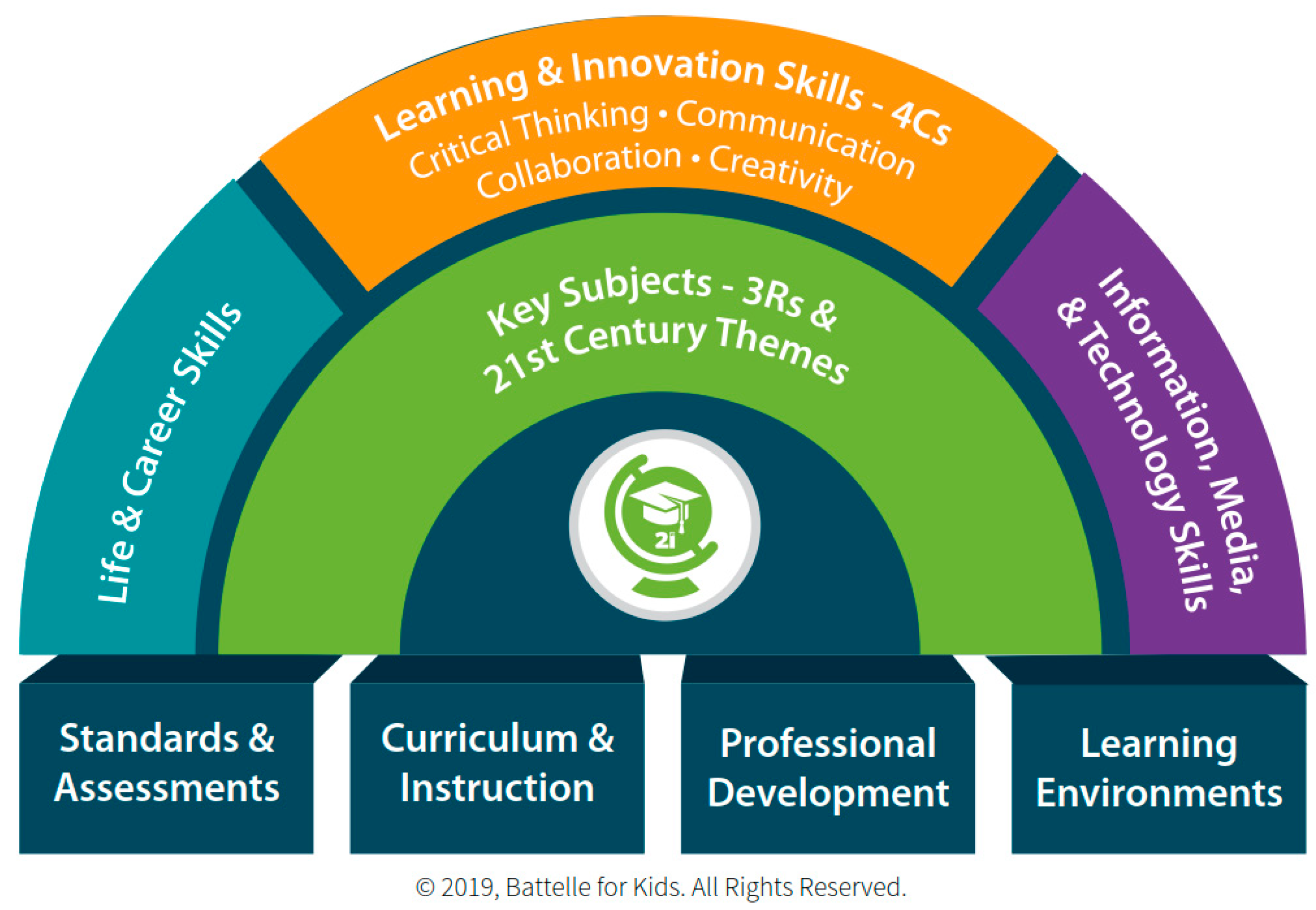

“21st Century Skills”, “Soft Skills”, and the “4Cs”

2. The 4Cs, Assessment, and Support for Development

2.1. Creativity

2.1.1. Individual Assessment of Creativity

2.1.2. Institutional and Environmental Support for Development of Creativity

2.2. Critical Thinking

2.2.1. Individual Assessment of Critical Thinking

2.2.2. Institutional and Environmental Support for Development of Critical Thinking Skills

2.3. Communication

2.3.1. Individual Assessment of Communication

2.3.2. Institutional and Environmental Support for Development of Communication Skills

2.4. Collaboration

2.4.1. Individual Assessment of Collaboration

2.4.2. Institutional and Environmental Support for Development of Collaboration and Collaborative Skills

3. Labelization: Valorization of the 4Cs and Assessing Support for Their Development

3.1. Labeling as a Means of Trust and Differentiation

3.2. Influence on Choice and Adoption of Goods and Services

3.3. Process of Labelizing Products and Services

3.4. Labelization of 21st Century Skills

4. The International Institute for Competency Development’s 21st Century Competencies 4Cs Assessment Framework for Institutions and Programs

4.1. Evaluation Grid for Creativity

4.2. Evaluation Grid for Critical Thinking

4.3. Evaluation Grid for Collaboration

4.4. Evaluation Grid for Communication

5. Assessing the 4Cs in Informal Educational Contexts: The Example of Games

5.1. The 4Cs in Informal Educational Contexts

5.2. 4Cs Evaluation Framework for Games

6. Discussion and Conclusions

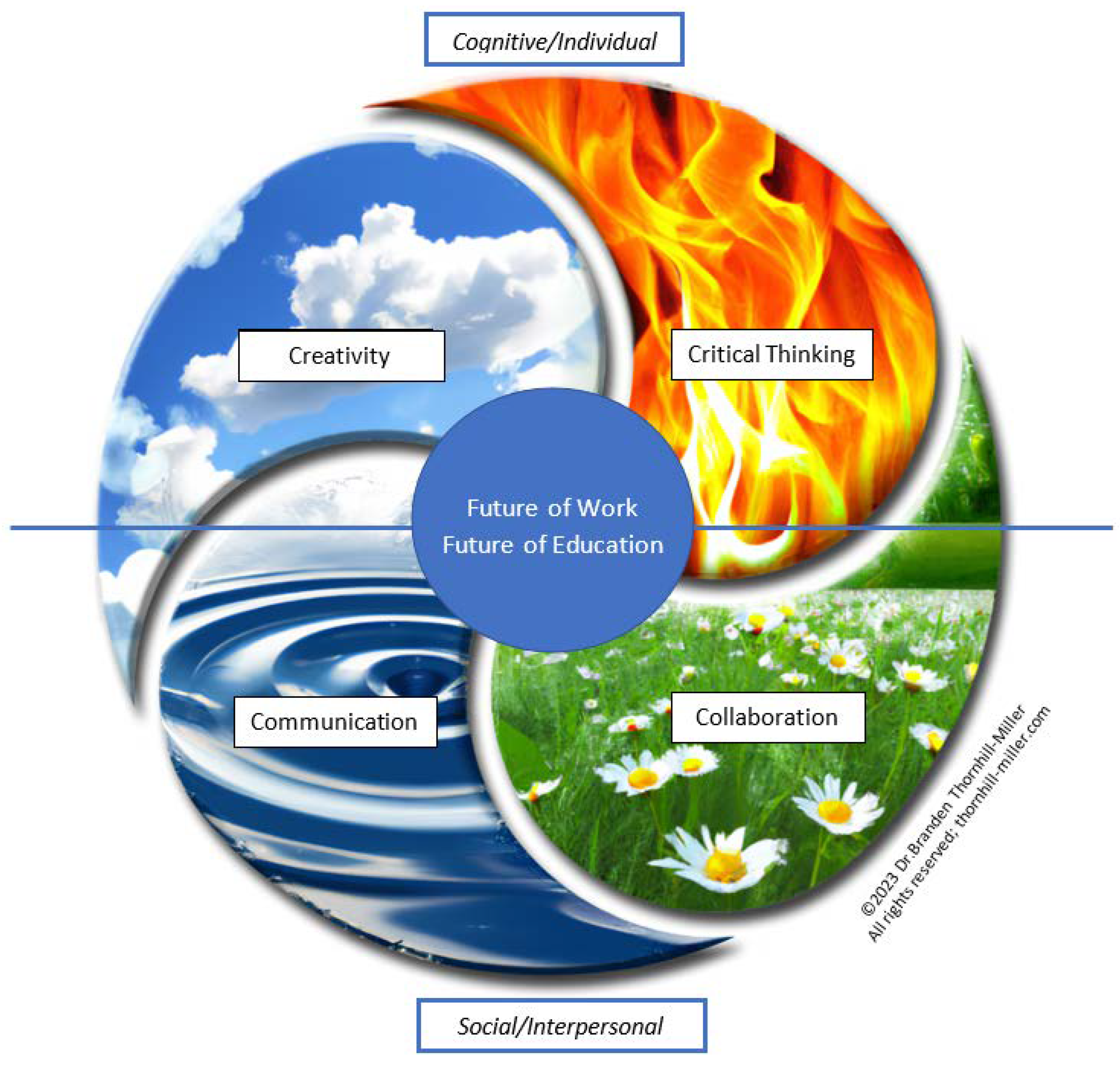

6.1. Interrelationships between the 4Cs and a New Model for Use in Pedagogy and Policy Promotion

6.2. Limitations and Future Work

6.3. Conclusion: Labelization of the 4Cs and the Future of Education and Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Abrami, Philip C., Robert M. Bernard, Eugene Borokhovski, David I. Waddington, C. Anne Wade, and Tonje Persson. 2015. Strategies for Teaching Students to Think Critically: A Meta-Analysis. Review of Educational Research 85: 275–314. [Google Scholar] [CrossRef]

- AbuSeileek, Ali Farhan. 2012. The Effect of Computer-Assisted Cooperative Learning Methods and Group Size on the EFL Learners’ Achievement in Communication Skills. Computers & Education 58: 231–39. [Google Scholar] [CrossRef]

- Ahern, Aoife, Caroline Dominguez, Ciaran McNally, John J. O’Sullivan, and Daniela Pedrosa. 2019. A Literature Review of Critical Thinking in Engineering Education. Studies in Higher Education 44: 816–28. [Google Scholar] [CrossRef]

- Ainsworth, Shaaron E., and Irene-Angelica Chounta. 2021. The roles of representation in computer-supported collaborative learning. In International Handbook of Computer-Supported Collaborative Learning. Edited by Ulrike Cress, Carolyn Rosé, Alyssa Friend Wise and Jun Oshima. Cham: Springer, pp. 353–69. [Google Scholar] [CrossRef]

- Alsaleh, Nada J. 2020. Teaching Critical Thinking Skills: Literature Review. The Turkish Online Journal of Educational Technology 19: 21–39. Available online: http://files.eric.ed.gov/fulltext/EJ1239945.pdf (accessed on 1 November 2022).

- Al-Samarraie, Hosam, and Shuhaila Hurmuzan. 2018. A Review of Brainstorming Techniques in Higher Education. Thinking Skills and Creativity 27: 78–91. [Google Scholar] [CrossRef]

- Amabile, Teresa M. 1982. Social Psychology of Creativity: A Consensual Assessment Technique. Journal of Personality and Social Psychology 43: 997–1013. [Google Scholar] [CrossRef]

- Amron, Manajemen Pemasaran. 2018. The influence of brand image, brand trust, product quality, and price on the consumer’s buying decision of MPV cars. European Scientific Journal 14: 228–39. [Google Scholar] [CrossRef]

- Ananiadoui, Katerina, and Magdalean Claro. 2009. 21st Century Skills and Competences for New Millennium Learners in OECD Countries. OECD Education Working Papers, No. 41. Paris: OECD Publishing. [Google Scholar] [CrossRef]

- Bailin, Sharon. 1988. Achieving Extraordinary Ends: An Essay on Creativity. Dordrecht: Springer. [Google Scholar] [CrossRef]

- Bandyopadhyay, Subir, and Jana Szostek. 2019. Thinking Critically about Critical Thinking: Assessing Critical Thinking of Business Students Using Multiple Measures. Journal of Education for Business 94: 259–70. [Google Scholar] [CrossRef]

- Barber, Herbert F. 1992. Developing Strategic Leadership: The US Army War College Experience. Journal of Management Development 11: 4–12. [Google Scholar] [CrossRef]

- Barnett, Ronald. 2015. A Curriculum for Critical Being. In The Palgrave Handbook of Critical Thinking in Higher Education. New York: Palgrave Macmillan US, pp. 63–76. [Google Scholar] [CrossRef]

- Bateson, Patrick, and Paul Martin. 2013. Play, Playfulness, Creativity and Innovation. Cambridge: Cambridge University Press. [Google Scholar] [CrossRef]

- Batey, Mark. 2012. The Measurement of Creativity: From Definitional Consensus to the Introduction of a New Heuristic Framework. Creativity Research Journal 24: 55–65. [Google Scholar] [CrossRef]

- Battelle for Kids. 2022. Framework for 21st Century Learning Definitions. Available online: http://static.battelleforkids.org/documents/p21/P21_Framework_DefinitionsBFK.pdf (accessed on 1 November 2022).

- Bellaera, Lauren, Yana Weinstein-Jones, Sonia Ilie, and Sara T. Baker. 2021. Critical Thinking in Practice: The Priorities and Practices of Instructors Teaching in Higher Education. Thinking Skills and Creativity 41: 100856. [Google Scholar] [CrossRef]

- Blessinger, Patrick, and John P. Anchan. 2015. Democratizing Higher Education: International Comparative Perspectives, 1st ed. Edited by Patrick Blessinger and John P. Anchan. London: Routledge. Available online: https://www.routledge.com/Democratizing-Higher-Education-International-Comparative-Perspectives/Blessinger-Anchan/p/book/9781138020955 (accessed on 1 November 2022).

- Bloom, Benjamin Samuel, ed. 1956. Taxonomy of Educational Objectives: The Classification of Educational Goals: Handbook I, Cognitive Domain. New York: Longmans. [Google Scholar]

- Bourgeois-Bougrine, Samira. 2022. Design Thinking. In The Palgrave Encyclopedia of the Possible. Cham: Springer International Publishing. [Google Scholar] [CrossRef]

- Bourgeois-Bougrine, Samira, Nathalie Bonnardel, Jean-Marie Burkhardt, Branden Thornhill-Miller, Farzaneh Pahlavan, Stéphanie Buisine, Jérôme Guegan, Nicolas Pichot, and Todd Lubart. 2022. Immersive Virtual Environments’ Impact on Individual and Collective Creativity: A Review of Recent Research. European Psychologist 27: 237–53. [Google Scholar] [CrossRef]

- Bourke, Sharon L., Simon Cooper, Louisa Lam, and Lisa McKenna. 2021. Undergraduate Health Professional Students’ Team Communication in Simulated Emergency Settings: A Scoping Review. Clinical Simulation in Nursing 60: 42–63. [Google Scholar] [CrossRef]

- Brookfield, Stephen D. 1997. Assessing Critical Thinking. New Directions for Adult and Continuing Education 75: 17–29. [Google Scholar] [CrossRef]

- Burkhardt, Jean-Marie, Françoise Détienne, Anne-Marie Hébert, and Laurence Perron. 2009. Assessing the ‘Quality of Collaboration’ in Technology-Mediated Design Situations with Several Dimensions. In Human-Computer Interaction—INTERACT 2009. Berlin/Heidelberg: Springer, pp. 157–60. [Google Scholar] [CrossRef]

- Camarda, Anaëlle, Lison Bouhours, Anaïs Osmont, Pascal Le Masson, Benoît Weil, Grégoire Borst, and Mathieu Cassotti. 2021. Opposite Effect of Social Evaluation on Creative Idea Generation in Early and Middle Adolescents. Creativity Research Journal 33: 399–410. [Google Scholar] [CrossRef]

- Cannon-Bowers, Janis, Scott I. Tannenbaum, Eduardo Salas, and Catherine E. Volpe. 1995. Defining team competencies and establishing team training requirements. In Team Effectiveness and Decision Making in Organizations. Edited by Richard A. Guzzo and Eduardo Salas. San Francisco: Jossey-Bass, pp. 333–80. [Google Scholar]

- Care, Esther, Claire Scoular, and Patrick Griffin. 2016. Assessment of Collaborative Problem Solving in Education Environments. Applied Measurement in Education 29: 250–64. [Google Scholar] [CrossRef]

- Care, Esther, Helyn Kim, Alvin Vista, and Kate Anderson. 2018. Education System Alignment for 21st Century Skills: Focus on Assessment. Washington, DC: Brookings Institution. [Google Scholar]

- Carmichael, Erst, and Helen Farrell. 2012. Evaluation of the Effectiveness of Online Resources in Developing Student Critical Thinking: Review of Literature and Case Study of a Critical Thinking Online Site. Journal of University Teaching and Learning Practice 9: 38–55. [Google Scholar] [CrossRef]

- Carson, Shelley H., Jordan B. Peterson, and Daniel M. Higgins. 2005. Reliability, Validity, and Factor Structure of the Creative Achievement Questionnaire. Creativity Research Journal 17: 37–50. [Google Scholar] [CrossRef]

- Casey, Betty J., Sarah Getz, and Adriana Galvan. 2008. The Adolescent Brain. Developmental Review: DR 28: 62–77. [Google Scholar] [CrossRef] [PubMed]

- Cassotti, Mathieu, Anaëlle Camarda, Nicolas Poirel, Olivier Houdé, and Marine Agogué. 2016. Fixation Effect in Creative Ideas Generation: Opposite Impacts of Example in Children and Adults. Thinking Skills and Creativity 19: 146–52. [Google Scholar] [CrossRef]

- Chameroy, Fabienne, and Lucien Veran. 2014. Immatérialité de La Qualité et Effet Des Labels Sur Le Consentement à Payer. Management International 18: 32–44. [Google Scholar] [CrossRef]

- Chiu, Fa-Chung. 2015. Improving Your Creative Potential without Awareness: Overinclusive Thinking Training. Thinking Skills and Creativity 15: 1–12. [Google Scholar] [CrossRef]

- Chulvi, Vicente, Elena Mulet, Amaresh Chakrabarti, Belinda López-Mesa, and Carmen González-Cruz. 2012. Comparison of the Degree of Creativity in the Design Outcomes Using Different Design Methods. Journal of Engineering Design 23: 241–69. [Google Scholar] [CrossRef]

- Cinque, Maria. 2016. ‘Lost in Translation’. Soft Skills Development in European Countries. Tuning Journal for Higher Education 3: 389–427. [Google Scholar] [CrossRef]

- Cömert, Musa, Jördis Maria Zill, Eva Christalle, Jörg Dirmaier, Martin Härter, and Isabelle Scholl. 2016. Assessing Communication Skills of Medical Students in Objective Structured Clinical Examinations (OSCE) - A Systematic Review of Rating Scales. PLoS ONE 11: e0152717. [Google Scholar] [CrossRef]

- Corazza, Giovanni Emanuele. 2016. Potential Originality and Effectiveness: The Dynamic Definition of Creativity. Creativity Research Journal 28: 258–67. [Google Scholar] [CrossRef]

- Corazza, Giovanni Emanuele, Frédéric Darbellay, Todd Lubart, and Chiara Panciroli. 2021. Developing Intelligence and Creativity in Education: Insights from the Space–Time Continuum. In Creativity and Learning. Edited by Soila Lemmetty, Kaija Collin, Vlad Glăveanu and Panu Forsman. Cham: Springer International Publishing, pp. 69–87. [Google Scholar] [CrossRef]

- Cotter, Katherine N., Ronald A. Beghetto, and James C. Kaufman. 2022. Creativity in the Classroom: Advice for Best Practices. In Homo Creativus. Edited by Todd Lubart, Marion Botella, Samira Bourgeois-Bougrine, Xavier Caroff, Jérôme Guégan, Christohe Mouchiroud, Julien Nelson and Franck Zenasni. Cham: Springer International Publishing, pp. 249–64. [Google Scholar] [CrossRef]

- Curtis, J. Randall, Anthony L. Back, Dee W. Ford, Lois Downey, Sarah E. Shannon, Ardith Z. Doorenbos, Erin K. Kross, Lynn F. Reinke, Laura C. Feemster, Barbara Edlund, and et al. 2013. Effect of Communication Skills Training for Residents and Nurse Practitioners on Quality of Communication with Patients with Serious Illness: A Randomized Trial. JAMA: The Journal of the American Medical Association 310: 2271. [Google Scholar] [CrossRef]

- D’Alimonte, Laura, Elizabeth McLaney, and Lisa Di Prospero. 2019. Best Practices on Team Communication: Interprofessional Practice in Oncology. Current Opinion in Supportive and Palliative Care 13: 69–74. [Google Scholar] [CrossRef]

- de Freitas, Sara. 2006. Learning in Immersive Worlds: A Review of Game-Based Learning. Bristol: JISC. Available online: http://www.jisc.ac.uk/media/documents/programmes/elearninginnovation/gamingreport_v3.pdf (accessed on 1 November 2022).

- Détienne, Françoise, Michael Baker, and Jean-Marie Burkhardt. 2012. Perspectives on Quality of Collaboration in Design. CoDesign 8: 197–99. [Google Scholar] [CrossRef]

- Diedrich, Jennifer, Emanuel Jauk, Paul J. Silvia, Jeffrey M. Gredlein, Aljoscha C. Neubauer, and Mathias Benedek. 2018. Assessment of Real-Life Creativity: The Inventory of Creative Activities and Achievements (ICAA). Psychology of Aesthetics, Creativity, and the Arts 12: 304–16. [Google Scholar] [CrossRef]

- Doyle, Denise. 2021. Creative and Collaborative Practices in Virtual Immersive Environments. In Creativity in the Twenty First Century. Edited by Anna Hui and Christian Wagner. Cham: Springer International Publishing, pp. 3–19. [Google Scholar] [CrossRef]

- Drisko, James W. 2014. Competencies and Their Assessment. Journal of Social Work Education 50: 414–26. [Google Scholar] [CrossRef]

- Dul, Jan, and Canan Ceylan. 2011. Work Environments for Employee Creativity. Ergonomics 54: 12–20. [Google Scholar] [CrossRef] [PubMed]

- Dumitru, Daniela, Dragos Bigu, Jan Elen, Aoife Ahern, Ciaran McNally, and John O’Sullivan. 2018. A European Review on Critical Thinking Educational Practices in Higher Education Institutions. Vila Real: UTAD. Available online: http://repositorio.utad.pt/handle/10348/8320 (accessed on 2 November 2022).

- Edelman, Jonathan, Babajide Owoyele, and Joaquin Santuber. 2022. Beyond Brainstorming: Introducing Medgi, an Effective, Research-Based Method for Structured Concept Development. In Design Thinking in Education. Cham: Springer International Publishing, pp. 209–32. [Google Scholar] [CrossRef]

- Etilé, Fabrice, and Sabrina Teyssier. 2016. Signaling Corporate Social Responsibility: Third-Party Certification versus Brands: Signaling CSR: Third-Party Certification versus Brands. The Scandinavian Journal of Economics 118: 397–432. [Google Scholar] [CrossRef]

- Evans, Carla. 2020. Measuring Student Success Skills: A Review of the Literature on Collaboration. Dover: National Center for the Improvement of Educational Assessment. [Google Scholar]

- Facione, Peter Arthur. 1990a. The California Critical Thinking Skills Test–College Level. Technical Report# 1. Experimental Validation and Content Validity. Available online: https://files.eric.ed.gov/fulltext/ED327549.pdf (accessed on 2 November 2022).

- Facione, Peter Arthur. 1990b. Critical Thinking: A Statement of Expert Consensus for Purposes of Educational Assessment and Instruction. Research Findings and Recommendations; Washington, DC: ERIC, Institute of Education Sciences, pp. 1–112. Available online: https://eric.ed.gov/?id=ED315423 (accessed on 2 November 2022).

- Facione, Peter Arthur. 2011. Critical thinking: What it is and why it counts. Insight Assessment 2007: 1–23. [Google Scholar]

- Faidley, Joel. 2018. Comparison of Learning Outcomes from Online and Face-to-Face Accounting Courses. Ph.D. dissertation, East Tennessee State University, Johnson City, TN, USA. [Google Scholar]

- Friedman, Hershey H. 2017. Cognitive Biases That Interfere with Critical Thinking and Scientific Reasoning: A Course Module. SSRN Electronic Journal, 1–60. [Google Scholar] [CrossRef]

- Fryer-Edwards, Kelly, Robert M. Arnold, Walter Baile, James A. Tulsky, Frances Petracca, and Anthony Back. 2006. Reflective Teaching Practices: An Approach to Teaching Communication Skills in a Small-Group Setting. Academic Medicine: Journal of the Association of American Medical Colleges 81: 638–44. [Google Scholar] [CrossRef]

- Glăveanu, Vlad Petre. 2013. Rewriting the Language of Creativity: The Five A’s Framework. Review of General Psychology: Journal of Division 1, of the American Psychological Association 17: 69–81. [Google Scholar] [CrossRef]

- Glăveanu, Vlad Petre. 2014. The Psychology of Creativity: A Critical Reading. Creativity Theories Research Applications 1: 10–32. [Google Scholar] [CrossRef]

- Goldenberg, Olga, and Jennifer Wiley. 2011. Quality, Conformity, and Conflict: Questioning the Assumptions of Osborn’s Brainstorming Technique. The Journal of Problem Solving 3: 96–118. [Google Scholar] [CrossRef]

- Graesser, Arthur C., John P. Sabatini, and Haiying Li. 2022. Educational Psychology Is Evolving to Accommodate Technology, Multiple Disciplines, and Twenty-First-Century Skills. Annual Review of Psychology 73: 547–74. [Google Scholar] [CrossRef]

- Graesser, Arthur C., Stephen M. Fiore, Samuel Greiff, Jessica Andrews-Todd, Peter W. Foltz, and Friedrich W. Hesse. 2018. Advancing the Science of Collaborative Problem Solving. Psychological Science in the Public Interest 19: 59–92. [Google Scholar] [CrossRef] [PubMed]

- Grassmann, Susanne. 2014. The pragmatics of word learning. In Pragmatic Development in First Language Acquisition. Edited by Danielle Matthews. Amsterdam: John Benjamins Publishing Company, pp. 139–60. [Google Scholar] [CrossRef]

- Hager, Keri, Catherine St Hill, Jacob Prunuske, Michael Swanoski, Grant Anderson, and May Nawal Lutfiyya. 2016. Development of an Interprofessional and Interdisciplinary Collaborative Research Practice for Clinical Faculty. Journal of Interprofessional Care 30: 265–67. [Google Scholar] [CrossRef] [PubMed]

- Halpern, Diane F. 1998. Teaching Critical Thinking for Transfer across Domains: Disposition, Skills, Structure Training, and Metacognitive Monitoring. The American Psychologist 53: 449–55. [Google Scholar] [CrossRef] [PubMed]

- Halpern, Diane F., and Dana S. Dunn. 2021. Critical Thinking: A Model of Intelligence for Solving Real-World Problems. Journal of Intelligence 9: 22. [Google Scholar] [CrossRef]

- Hanover Research. 2012. A Crosswalk of 21st Century Skills. Available online: http://www.hanoverresearch.com/wp-content/uploads/2011/12/A-Crosswalk-of-21st-Century-Skills-Membership.pdf (accessed on 15 August 2022).

- Hathaway, Julia R., Beth A. Tarini, Sushmita Banerjee, Caroline O. Smolkin, Jessica A. Koos, and Susmita Pati. 2022. Healthcare Team Communication Training in the United States: A Scoping Review. Health Communication, 1–26. [Google Scholar] [CrossRef]

- Hesse, Friedrich, Esther Care, Juergen Buder, Kai Sassenberg, and Patrick Griffin. 2015. A Framework for Teachable Collaborative Problem Solving Skills. In Assessment and Teaching of 21st Century Skills. Edited by Patrick Griffin and Esther Care. Dordrecht: Springer Netherlands, pp. 37–56. [Google Scholar]

- Hitchcock, David. 2020. Critical Thinking. In The Stanford Encyclopedia of Philosophy (Fall 2020 Edition). Edited by Nouri Zalta Edward. Stanford: Stanford University. [Google Scholar]

- Houdé, Olivier. 2000. Inhibition and cognitive development: Object, number, categorization, and reasoning. Cognitive Development 15: 63–73. [Google Scholar] [CrossRef]

- Houdé, Olivier, and Grégoire Borst. 2014. Measuring inhibitory control in children and adults: Brain imaging and mental chronometry. Frontiers in Psychology 5: 616. [Google Scholar] [CrossRef]

- Huber, Christopher R., and Nathan R. Kuncel. 2016. Does College Teach Critical Thinking? A Meta-Analysis. Review of Educational Research 86: 431–68. [Google Scholar] [CrossRef]

- Huizinga, Johan. 1949. Homo Ludens: A Study of the Play-Elements in Culture. London: Routledge. [Google Scholar]

- Humphrey, Neil, Andrew Curran, Elisabeth Morris, Peter Farrell, and Kevin Woods. 2007. Emotional Intelligence and Education: A Critical Review. Educational Psychology 27: 235–54. [Google Scholar] [CrossRef]

- International Institute for Competency Development. 2021. 21st Century Skills 4Cs Labelization. Available online: https://icd-hr21.org/offers/21st-century-competencies/ (accessed on 2 November 2022).

- Jackson, Denise. 2014. Business Graduate Performance in Oral Communication Skills and Strategies for Improvement. The International Journal of Management Education 12: 22–34. [Google Scholar] [CrossRef]

- Jahn, Gabriele, Matthias Schramm, and Achim Spiller. 2005. The Reliability of Certification: Quality Labels as a Consumer Policy Tool. Journal of Consumer Policy 28: 53–73. [Google Scholar] [CrossRef]

- Jauk, Emanuel, Mathias Benedek, and Aljoscha C. Neubauer. 2014. The Road to Creative Achievement: A Latent Variable Model of Ability and Personality Predictors. European Journal of Personality 28: 95–105. [Google Scholar] [CrossRef]

- Joie-La Marle, Chantal, François Parmentier, Morgane Coltel, Todd Lubart, and Xavier Borteyrou. 2022. A Systematic Review of Soft Skills Taxonomies: Descriptive and Conceptual Work. Available online: https://doi.org/10.31234/osf.io/mszgj (accessed on 2 November 2022).

- Jones, Stanley E., and Curtis D. LeBaron. 2002. Research on the Relationship between Verbal and Nonverbal Communication: Emerging Integrations. The Journal of Communication 52: 499–521. [Google Scholar] [CrossRef]

- Kaendler, Celia, Michael Wiedmann, Timo Leuders, Nikol Rummel, and Hans Spada. 2016. Monitoring Student Interaction during Collaborative Learning: Design and Evaluation of a Training Program for Pre-Service Teachers. Psychology Learning & Teaching 15: 44–64. [Google Scholar] [CrossRef]

- Kahneman, Daniel. 2003. A Perspective on Judgment and Choice: Mapping Bounded Rationality. The American Psychologist 58: 697–720. [Google Scholar] [CrossRef]

- Kahneman, Daniel. 2011. Thinking, Fast and Slow. New York: Macmillan. [Google Scholar]

- Karl, Katherine A., Joy V. Peluchette, and Navid Aghakhani. 2022. Virtual Work Meetings during the COVID-19 Pandemic: The Good, Bad, and Ugly. Small Group Research 53: 343–65. [Google Scholar] [CrossRef]

- Keefer, Kateryna V., James D. A. Parker, and Donald H. Saklofske. 2018. Three Decades of Emotional Intelligence Research: Perennial Issues, Emerging Trends, and Lessons Learned in Education: Introduction to Emotional Intelligence in Education. In The Springer Series on Human Exceptionality. Cham: Springer International Publishing, pp. 1–19. [Google Scholar]

- Kemp, Nenagh, and Rachel Grieve. 2014. Face-to-Face or Face-to-Screen? Undergraduates’ Opinions and Test Performance in Classroom vs. Online Learning. Frontiers in Psychology 5: 1278. [Google Scholar] [CrossRef]

- Kimery, Kathryn, and Mary McCord. 2002. Third-Party Assurances: Mapping the Road to Trust in E-retailing. The Journal of Information Technology Theory and Application 4: 63–82. [Google Scholar]

- Kohn, Nicholas W., and Steven M. Smith. 2011. Collaborative Fixation: Effects of Others’ Ideas on Brainstorming. Applied Cognitive Psychology 25: 359–71. [Google Scholar] [CrossRef]

- Kowaltowski, Doris C. C. K., Giovana Bianchi, and Valéria Teixeira de Paiva. 2010. Methods That May Stimulate Creativity and Their Use in Architectural Design Education. International Journal of Technology and Design Education 20: 453–76. [Google Scholar] [CrossRef]

- Kruijver, Irma P. M., Ada Kerkstra, Anneke L. Francke, Jozien M. Bensing, and Harry B. M. van de Wiel. 2000. Evaluation of Communication Training Programs in Nursing Care: A Review of the Literature. Patient Education and Counseling 39: 129–45. [Google Scholar] [CrossRef] [PubMed]

- Lai, Emily R. 2011. Critical thinking: A literature review. Pearson’s Research Reports 6: 40–41. [Google Scholar] [CrossRef]

- Lamri, Jérémy, and Todd Lubart. 2021. Creativity and Its’ Relationships with 21st Century Skills in Job Performance. Kindai Management Review 9: 75–91. [Google Scholar]

- Lamri, Jérémy, Michel Barabel, Olivier Meier, and Todd Lubart. 2022. Le Défi Des Soft Skills: Comment les Développer au XXIe Siècle? Paris: Dunod. [Google Scholar]

- Landa, Rebecca J. 2005. Assessment of Social Communication Skills in Preschoolers: Assessing Social Communication Skills in Children. Mental Retardation and Developmental Disabilities Research Reviews 11: 247–52. [Google Scholar] [CrossRef]

- Lee, Sang M., Jeongil Choi, and Sang-Gun Lee. 2004. The impact of a third-party assurance seal in customer purchasing intention. Journal of Internet Commerce 3: 33–51. [Google Scholar] [CrossRef]

- Lewis, Arthur, and David Smith. 1993. Defining Higher Order Thinking. Theory into Practice 32: 131–37. [Google Scholar] [CrossRef]

- Liu, Ou Lydia, Lois Frankel, and Katrina Crotts Roohr. 2014. Assessing Critical Thinking in Higher Education: Current State and Directions for next-Generation Assessment: Assessing Critical Thinking in Higher Education. ETS Research Report Series 2014: 1–23. [Google Scholar] [CrossRef]

- Lubart, Todd. 2017. The 7 C’s of Creativity. The Journal of Creative Behavior 51: 293–96. [Google Scholar] [CrossRef]

- Lubart, Todd, and Branden Thornhill-Miller. 2019. Creativity: An Overview of the 7C’s of Creative Thought. Heidelberg: Heidelberg University Publishing. [Google Scholar] [CrossRef]

- Lubart, Todd, Baptiste Barbot, and Maud Besançon. 2019. Creative Potential: Assessment Issues and the EPoC Battery/Potencial Creativo: Temas de Evaluación y Batería EPoC. Estudios de Psicologia 40: 540–62. [Google Scholar] [CrossRef]

- Lubart, Todd, Franck Zenasni, and Baptiste Barbot. 2013. Creative potential and its measurement. International Journal of Talent Development and Creativity 1: 41–51. [Google Scholar]

- Lubart, Tubart, and Branden Thornhill-Miller. 2021. Creativity in Law: Legal Professions and the Creative Profiler Approach. In Mapping Legal Innovation: Trends and Perspectives. Edited by Antoine Masson and Gavin Robinson. Cham: Springer International Publishing, pp. 1–19. [Google Scholar] [CrossRef]

- Lubin, Jeffrey, Stephan Hendrick, Branden Thornhill-Miller, Maxence Mercier, and Todd Lubart. Forthcoming. Creativity in Solution-Focused Brief Therapy.

- Lucas, Bill. 2019. Why We Need to Stop Talking about Twenty-First Century Skills. Melbourne: Centre for Strategic Education. [Google Scholar]

- Lucas, Bill. 2022. Creative Thinking in Schools across the World. London: The Global Institute of Creative Thinking. [Google Scholar]

- Lucas, Bill, and Guy Claxton. 2009. Wider Skills for Learning: What Are They, How Can They Be Cultivated, How Could They Be Measured and Why Are They Important for Innovation? London: NESTA. [Google Scholar]

- Malaby, Thomas M. 2007. Beyond Play: A New Approach to Games. Games and Culture 2: 95–113. [Google Scholar] [CrossRef]

- Marin, Lisa M., and Diane F. Halpern. 2011. Pedagogy for developing critical thinking in adolescents: Explicit instruction produces greatest gains. Thinking Skills and Creativity 6: 1–13. [Google Scholar] [CrossRef]

- Mathieu, John E., John R. Hollenbeck, Daan van Knippenberg, and Daniel R. Ilgen. 2017. A Century of Work Teams in the Journal of Applied Psychology. The Journal of Applied Psychology 102: 452–67. [Google Scholar] [CrossRef]

- Matthews, Danielle. 2014. Pragmatic Development in First Language Acquisition. Amsterdam: John Benjamins Publishing Company. [Google Scholar] [CrossRef]

- McDonald, Skye, Alison Gowland, Rebekah Randall, Alana Fisher, Katie Osborne-Crowley, and Cynthia Honan. 2014. Cognitive Factors Underpinning Poor Expressive Communication Skills after Traumatic Brain Injury: Theory of Mind or Executive Function? Neuropsychology 28: 801–11. [Google Scholar] [CrossRef]

- Moore, Brooke Noel, and Richard Parker. 2016. Critical Thinking, 20th ed. New York: McGraw-Hill Education. [Google Scholar]

- Morreale, Sherwyn P., Joseph M. Valenzano, and Janessa A. Bauer. 2017. Why Communication Education Is Important: A Third Study on the Centrality of the Discipline’s Content and Pedagogy. Communication Education 66: 402–22. [Google Scholar] [CrossRef]

- Mourad, Maha. 2017. Quality Assurance as a Driver of Information Management Strategy: Stakeholders’ Perspectives in Higher Education. Journal of Enterprise Information Management 30: 779–94. [Google Scholar] [CrossRef]

- National Education Association. 2011. Preparing 21st Century Students for a Global Society: An Educator’s Guide to the “Four Cs”. Alexandria: National Education Association. [Google Scholar]

- Nouri, Jalal, Anna Åkerfeldt, Uno Fors, and Staffan Selander. 2017. Assessing Collaborative Problem Solving Skills in Technology-Enhanced Learning Environments—The PISA Framework and Modes of Communication. International Journal of Emerging Technologies in Learning (IJET) 12: 163. [Google Scholar] [CrossRef]

- O’Carroll, Veronica, Melissa Owens, Michael Sy, Alla El-Awaisi, Andreas Xyrichis, Jacqueline Leigh, Shobhana Nagraj, Marion Huber, Maggie Hutchings, and Angus McFadyen. 2021. Top Tips for Interprofessional Education and Collaborative Practice Research: A Guide for Students and Early Career Researchers. Journal of Interprofessional Care 35: 328–33. [Google Scholar] [CrossRef]

- OECD. 2017. PISA 2015 collaborative problem-solving framework. In PISA 2015 Assessment and Analytical Framework: Science, Reading, Mathematic, Financial Literacy and Collaborative Problem Solving. Paris: OECD Publishing. [Google Scholar] [CrossRef]

- OECD. 2019a. Framework for the Assessment of Creative Thinking in PISA 2021: Third Draft. Paris: OECD. Available online: https://www.oecd.org/pisa/publications/PISA-2021-creative-thinking-framework.pdf (accessed on 2 November 2022).

- OECD. 2019b. Future of Education and Skills 2030: A Series of Concept Notes. Paris: OECD Learning Compass. Available online: https://www.oecd.org/education/2030-project/teaching-and-learning/learning/learning-compass-2030/OECD_Learning_Compass_2030_Concept_Note_Series.pdf (accessed on 2 November 2022).

- Osborn, A. F. 1953. Applied Imagination. New York: Charles Scribner’s Sons. [Google Scholar]

- Parkinson, Thomas L. 1975. The Role of Seals and Certifications of Approval in Consumer Decision-Making. The Journal of Consumer Affairs 9: 1–14. [Google Scholar] [CrossRef]

- Partnership for 21st Century Skills. 2008. 21st Century Skills Education and Competitiveness: A Resource and Policy Guide. Tuscon: Partnership for 21st Century Skills. [Google Scholar]

- Pasquinelli, Elena, and Gérald Bronner. 2021. Éduquer à l’esprit critique. Bases théoriques et indications pratiques pour l’enseignement et la formation; Rapport du Conseil Scientifique de l’Éducation Nationale. Paris: Ministère de l’Éducation Nationale, de la JEUNESSE et des Sports.

- Pasquinelli, Elena, Mathieu Farina, Audrey Bedel, and Roberto Casati. 2021. Naturalizing Critical Thinking: Consequences for Education, Blueprint for Future Research in Cognitive Science. Mind, Brain and Education: The Official Journal of the International Mind, Brain, and Education Society 15: 168–76. [Google Scholar] [CrossRef]

- Paul, Richard, and Linda Elder. 2006. Critical thinking: The nature of critical and creative thought. Journal of Developmental Education 30: 34–35.

- Paulus, Paul B., and Huei-Chuan Yang. 2000. Idea Generation in Groups: A Basis for Creativity in Organizations. Organizational Behavior and Human Decision Processes 82: 76–87.

- Paulus, Paul B., and Jared B. Kenworthy. 2019. Effective brainstorming. In The Oxford Handbook of Group Creativity and Innovation. Edited by Paul B. Paulus and Bernard A. Nijstad. New York: Oxford University Press.

- Paulus, Paul B., and Mary T. Dzindolet. 1993. Social Influence Processes in Group Brainstorming. Journal of Personality and Social Psychology 64: 575–86.

- Paulus, Paul B., and Vincent R. Brown. 2007. Toward More Creative and Innovative Group Idea Generation: A Cognitive-Social-Motivational Perspective of Brainstorming: Cognitive-Social-Motivational View of Brainstorming. Social and Personality Psychology Compass 1: 248–65.

- Peddle, Monica, Margaret Bearman, Natalie Radomski, Lisa Mckenna, and Debra Nestel. 2018. What Non-Technical Skills Competencies Are Addressed by Australian Standards Documents for Health Professionals Who Work in Secondary and Tertiary Clinical Settings? A Qualitative Comparative Analysis. BMJ Open 8: e020799.

- Peña-López, Ismaël. 2017. PISA 2015 Results (Volume V): Collaborative Problem Solving. Paris: PISA, OECD Publishing.

- Popil, Inna. 2011. Promotion of Critical Thinking by Using Case Studies as Teaching Method. Nurse Education Today 31: 204–7.

- Pornpitakpan, Chanthika. 2004. The Persuasiveness of Source Credibility: A Critical Review of Five Decades’ Evidence. Journal of Applied Social Psychology 34: 243–81.

- Possin, Kevin. 2014. Critique of the Watson-Glaser Critical Thinking Appraisal Test: The More You Know, the Lower Your Score. Informal Logic 34: 393–416.

- Proctor, Robert W., and Addie Dutta. 1995. Skill Acquisition and Human Performance. Thousand Oaks: Sage Publications, Inc.

- Putman, Vicky L., and Paul B. Paulus. 2009. Brainstorming, Brainstorming Rules and Decision Making. The Journal of Creative Behavior 43: 29–40.

- Reiman, Joey. 1992. Success: The Original Handbook. Atlanta: Longstreet Press.

- Ren, Xuezhu, Yan Tong, Peng Peng, and Tengfei Wang. 2020. Critical Thinking Predicts Academic Performance beyond General Cognitive Ability: Evidence from Adults and Children. Intelligence 82: 101487.

- Renard, Marie-Christine. 2005. Quality Certification, Regulation and Power in Fair Trade. Journal of Rural Studies 21: 419–31.

- Restout, Emilie. 2020. Labels RSE: Un décryptage des entreprises labellisées en France. Goodwill Management. Available online: https://goodwill-management.com/labels-rse-decryptage-entreprises-labellisees/ (accessed on 2 November 2022).

- Rhodes, Mel. 1961. An Analysis of Creativity. The Phi Delta Kappan 42: 305–10.

- Rider, Elizabeth A., and Constance H. Keefer. 2006. Communication Skills Competencies: Definitions and a Teaching Toolbox: Communication. Medical Education 40: 624–29.

- Riemer, Marc J. 2007. Communication Skills for the 21st Century Engineer. Global Journal of Engineering Education 11: 89.

- Rietzschel, Eric F., Bernard A. Nijstad, and Wolfgang Stroebe. 2006. Productivity Is Not Enough: A Comparison of Interactive and Nominal Brainstorming Groups on Idea Generation and Selection. Journal of Experimental Social Psychology 42: 244–51.

- Ross, David. 2018. Why the Four Cs Will Become the Foundation of Human-AI Interface. Available online: https://www.gettingsmart.com/2018/03/04/why-the-4cs-will-become-the-foundation-of-human-ai-interface/ (accessed on 2 November 2022).

- Rothermich, Kathrin. 2020. Social Communication Across the Lifespan: The Influence of Empathy [Preprint]. SocArXiv.

- Rusdin, Norazlin Mohd, and Siti Rahaimah Ali. 2019. Practice of Fostering 4Cs Skills in Teaching and Learning. International Journal of Academic Research in Business and Social Sciences 9: 1021–35.

- Rychen, Dominique Simone, and Laura Hersh Salganik, eds. 2003. Key Competencies for a Successful Life and a Well-Functioning Society. Cambridge: Hogrefe and Huber.

- Sahin, Mehmet Can. 2009. Instructional Design Principles for 21st Century Learning Skills. Procedia, Social and Behavioral Sciences 1: 1464–68.

- Salas, Eduardo, Kevin C. Stagl, and C. Shawn Burke. 2004. 25 Years of Team Effectiveness in Organizations: Research Themes and Emerging Needs. In International Review of Industrial and Organizational Psychology. Chichester: John Wiley & Sons, Ltd., pp. 47–91.

- Salas, Eduardo, Marissa L. Shuffler, Amanda L. Thayer, Wendy L. Bedwell, and Elizabeth H. Lazzara. 2015. Understanding and Improving Teamwork in Organizations: A Scientifically Based Practical Guide. Human Resource Management 54: 599–622.

- Salmi, Jamil. 2017. The Tertiary Education Imperative: Knowledge, Skills and Values for Development. Cham: Springer.

- Samani, Sanaz Ahmadpoor, Siti Zaleha Binti Abdul Rasid, and Saudah bt Sofian. 2014. A Workplace to Support Creativity. Industrial Engineering & Management Systems 13: 414–20.

- Saroyan, Alenoush. 2022. Fostering Creativity and Critical Thinking in University Teaching and Learning: Considerations for Academics and Their Professional Learning. Paris: OECD.

- Sasmita, Jumiati, and Norazah Mohd Suki. 2015. Young consumers’ insights on brand equity: Effects of brand association, brand loyalty, brand awareness, and brand image. International Journal of Retail & Distribution Management 43: 276–92.

- Schlegel, Claudia, Ulrich Woermann, Maya Shaha, Jan-Joost Rethans, and Cees van der Vleuten. 2012. Effects of Communication Training on Real Practice Performance: A Role-Play Module versus a Standardized Patient Module. The Journal of Nursing Education 51: 16–22.

- Schleicher, Andreas. 2022. Why Creativity and Creative Teaching and Learning Matter Today and for Tomorrow’s World. Creativity in Education Summit 2022. Paris: GloCT in Collaboration with OECD CERI.

- Schneider, Bertrand, Kshitij Sharma, Sebastien Cuendet, Guillaume Zufferey, Pierre Dillenbourg, and Roy Pea. 2018. Leveraging Mobile Eye-Trackers to Capture Joint Visual Attention in Co-Located Collaborative Learning Groups. International Journal of Computer-Supported Collaborative Learning 13: 241–61.

- Schultz, David M. 2010. Eloquent Science: A course to improve scientific and communication skills. Paper presented at the 19th Symposium on Education, Atlanta, GA, USA, January 18–21.

- Scialabba, George. 1984. Mindplay. Harvard Magazine 16: 19.

- Scott, Ginamarie, Lyle E. Leritz, and Michael D. Mumford. 2004. The Effectiveness of Creativity Training: A Quantitative Review. Creativity Research Journal 16: 361–88.

- Sigafoos, Jeff, Ralf W. Schlosser, Vanessa A. Green, Mark O’Reilly, and Giulio E. Lancioni. 2008. Communication and Social Skills Assessment. In Clinical Assessment and Intervention for Autism Spectrum Disorders. Edited by Johnny L. Matson. Amsterdam: Elsevier, pp. 165–92.

- Simonton, Dean Keith. 1999. Creativity from a Historiometric Perspective. In Handbook of Creativity. Edited by Robert J. Sternberg. Cambridge: Cambridge University Press, pp. 116–34.

- Singh, Pallavi, Hillol Bala, Bidit Lal Dey, and Raffaele Filieri. 2022. Enforced Remote Working: The Impact of Digital Platform-Induced Stress and Remote Working Experience on Technology Exhaustion and Subjective Wellbeing. Journal of Business Research 151: 269–86.

- Spada, Hans, Anne Meier, Nikol Rummel, and Sabine Hauser. 2005. A New Method to Assess the Quality of Collaborative Process in CSCL. In Proceedings of the 2005 Conference on Computer Support for Collaborative Learning: Learning 2005: The Next 10 Years! (CSCL’05), Taipei, Taiwan, May 30–June 4. Morristown: Association for Computational Linguistics.

- Spitzberg, Brian H. 2003. Methods of interpersonal skill assessment. In The Handbook of Communication and Social Interaction Skills. Edited by John O. Greene and Brant R. Burleson. Mahwah: Lawrence Erlbaum Associates.

- Sternberg, Robert. 1986. Intelligence, Wisdom, and Creativity: Three Is Better than One. Educational Psychologist 21: 175–90.

- Sternberg, Robert J., and Joachim Funke. 2019. The Psychology of Human Thought: An Introduction. Heidelberg: Heidelberg University Publishing (heiUP).

- Sursock, Andrée. 2021. Quality assurance and rankings: Some European lessons. In Research Handbook on University Rankings. Edited by Ellen Hazelkorn and Georgiana Mihut. Cheltenham: Edward Elgar Publishing, pp. 185–96.

- Sursock, Andrée, and Oliver Vettori. 2017. Quo vadis, quality culture? Theses from different perspectives. In Qualitätskultur. Ein Blick in Die Gelebte Praxis der Hochschulen. Vienna: Agency for Quality Assurance and Accreditation, pp. 13–18. Available online: https://www.aq.ac.at/de/ueber-uns/publikationen/sonstige-publikationen.php (accessed on 2 November 2022).

- Sutter, Éric. 2005. Certification et Labellisation: Un Problème de Confiance. Bref Panorama de La Situation Actuelle. Documentaliste-Sciences de l’Information 42: 284–90.

- Taddei, François. 2009. Training Creative and Collaborative Knowledge-Builders: A Major Challenge for 21st Century Education. Paris: OCDE.

- Thomas, Keith, and Beatrice Lok. 2015. Teaching Critical Thinking: An Operational Framework. In The Palgrave Handbook of Critical Thinking in Higher Education. Edited by Martin Davies and Ronald Barnett. New York: Palgrave Macmillan US, pp. 93–105.

- Thompson, Jeri. 2020. Measuring Student Success Skills: A Review of the Literature on Complex Communication. Dover: National Center for the Improvement of Educational Assessment.

- Thorndahl, Kathrine L., and Diana Stentoft. 2020. Thinking Critically about Critical Thinking and Problem-Based Learning in Higher Education: A Scoping Review. Interdisciplinary Journal of Problem-Based Learning 14.

- Thornhill-Miller, Branden. 2021. ‘Crea-Critical-Collab-ication’: A Dynamic Interactionist Model of the 4Cs (Creativity, Critical Thinking, Collaboration and Communication). Available online: http://thornhill-miller.com/newWordpress/index.php/current-research/ (accessed on 2 November 2022).

- Thornhill-Miller, Branden, and Jean-Marc Dupont. 2016. Virtual Reality and the Enhancement of Creativity and Innovation: Underrecognized Potential Among Converging Technologies? Journal for Cognitive Education and Psychology 15: 102–21.

- Thornhill-Miller, Branden, and Peter Millican. 2015. The Common-Core/Diversity Dilemma: Revisions of Humean Thought, New Empirical Research, and the Limits of Rational Religious Belief. European Journal for Philosophy of Religion 7: 1–49.

- Tomasello, Michael. 2005. Constructing a Language: A Usage-Based Theory of Language Acquisition. Cambridge: Harvard University Press.

- Uribe-Enciso, Olga Lucía, Diana Sofía Uribe-Enciso, and María Del Pilar Vargas-Daza. 2017. Pensamiento Crítico y Su Importancia En La Educación: Algunas Reflexiones. Rastros Rostros 19.

- van der Vleuten, Cees, Valerie van den Eertwegh, and Esther Giroldi. 2019. Assessment of Communication Skills. Patient Education and Counseling 102: 2110–13.

- van Klink, Marcel R., and Jo Boon. 2003. Competencies: The triumph of a fuzzy concept. International Journal of Human Resources Development and Management 3: 125–37.

- van Laar, Ester, Alexander J. A. M. Van Deursen, Jan A. G. M. Van Dijk, and Jos de Haan. 2017. The Relation between 21st-Century Skills and Digital Skills: A Systematic Literature Review. Computers in Human Behavior 72: 577–88.

- van Rosmalen, Peter, Elizabeth A. Boyle, Rob Nadolski, John van der Baaren, Baltasar Fernández-Manjón, Ewan MacArthur, Tiina Pennanen, Madalina Manea, and Kam Star. 2014. Acquiring 21st Century Skills: Gaining Insight into the Design and Applicability of a Serious Game with 4C-ID. In Lecture Notes in Computer Science. Cham: Springer International Publishing, pp. 327–34.

- Vincent-Lancrin, Stéphan, Carlos González-Sancho, Mathias Bouckaert, Federico de Luca, Meritxell Fernández-Barrerra, Gwénaël Jacotin, Joaquin Urgel, and Quentin Vidal. 2019. Fostering Students’ Creativity and Critical Thinking: What It Means in School. Paris: OECD Publishing.

- Voogt, Joke, and Natalie Pareja Roblin. 2012. A Comparative Analysis of International Frameworks for 21st Century Competences: Implications for National Curriculum Policies. Journal of Curriculum Studies 44: 299–321.

- Waizenegger, Lena, Brad McKenna, Wenjie Cai, and Taino Bendz. 2020. An Affordance Perspective of Team Collaboration and Enforced Working from Home during COVID-19. European Journal of Information Systems: An Official Journal of the Operational Research Society 29: 429–42.

- Watson, Goodwin. 1980. Watson-Glaser Critical Thinking Appraisal. San Antonio: Psychological Corporation.

- Watson, Goodwin, and Edwin M. Glaser. 2010. Watson-Glaser™ II critical thinking appraisal. In Technical Manual and User’s Guide. Kansas City: Pearson.

- Weick, Karl E. 1993. The collapse of sensemaking in organizations: The Mann Gulch disaster. Administrative Science Quarterly 38: 628–52.

- West, Richard F., Maggie E. Toplak, and Keith E. Stanovich. 2008. Heuristics and Biases as Measures of Critical Thinking: Associations with Cognitive Ability and Thinking Dispositions. Journal of Educational Psychology 100: 930–41.

- Whitmore, Paul G. 1972. What are soft skills. Paper presented at the CONARC Soft Skills Conference, Fort Bliss, TX, USA, December 12–13; pp. 12–13.

- Willingham, Daniel T. 2008. Critical Thinking: Why Is It so Hard to Teach? Arts Education Policy Review 109: 21–32.

- Wilson, Sarah Beth, and Pratibha Varma-Nelson. 2016. Small Groups, Significant Impact: A Review of Peer-Led Team Learning Research with Implications for STEM Education Researchers and Faculty. Journal of Chemical Education 93: 1686–702.

- Winterton, Jonathan, Françoise Delamare-Le Deist, and Emma Stringfellow. 2006. Typology of Knowledge, Skills and Competences: Clarification of the Concept and Prototype. Luxembourg: Office for Official Publications of the European Communities.

- World Economic Forum. 2015. New Vision for Education: Unlocking the Potential of Technology. Geneva: World Economic Forum.

- World Economic Forum. 2020. The Future of Jobs Report 2020. Available online: https://www.weforum.org/reports/the-future-of-jobs-report-2020 (accessed on 2 November 2022).

- World Health Organization. 2010. Framework for Action on Interprofessional Education and Collaborative Practice. No. WHO/HRH/HPN/10.3. Geneva: World Health Organization.

- Yue, Meng, Meng Zhang, Chunmei Zhang, and Changde Jin. 2017. The Effectiveness of Concept Mapping on Development of Critical Thinking in Nursing Education: A Systematic Review and Meta-Analysis. Nurse Education Today 52: 87–94.

- Zielke, Stephan, and Thomas Dobbelstein. 2007. Customers’ Willingness to Purchase New Store Brands. Journal of Product & Brand Management 16: 112–21.

- Zlatić, Lidija, Dragana Bjekić, Snežana Marinković, and Milevica Bojović. 2014. Development of Teacher Communication Competence. Procedia, Social and Behavioral Sciences 116: 606–10.

| | | | |
|---|---|---|---|
| Creativity | Creative Process | Creative Environment | Creative Product |
| Critical Thinking | Critical thinking about the world | Critical thinking about oneself | Critical action and decision making |
| Collaboration | Engagement and participation | Perspective taking and openness | Social regulation |
| Communication | Message formulation | Message delivery | Message and communication feedback |

| | |
|---|---|
| Teaching Curriculum | Aspects of the overall educational program teaching, emphasizing, and promoting the 4Cs |
| Tools and Techniques | Availability and access to different means, materials, space, and expertise, digital technologies, mnemonic and heuristic methods, etc. to assist in the proper use and exercise of the 4Cs |
| Implementation | Actual student and program use of available resources promoting the 4Cs |
| Meta-reflection | Critical reflection and metacognition on the process being engaged in around the 4Cs |
| Competence of Actors | The formal and informal training, skills, and abilities of teachers/trainers and staff and their program of development as promoters of the 4Cs |
| Outside community contact | Use and integration of the full range of resources external to the institution available to enhance the 4Cs |
| User Initiative * | Availability of resources for students to create and actualize products, programs, events, etc. that require the exercise, promotion, or manifestation of the 4Cs |

| | | | | | |
|---|---|---|---|---|---|
| Creativity | Originality | Divergent Thinking | Convergent Thinking | Mental Flexibility | Creative Dispositions |
| Critical Thinking | Goal-adequate judgment/discernment | Objective thinking | Metacognition | Elaborate reasoning | Uncertainty management |
| Collaboration | Collaboration fluency | Well-argued deliberation and consensus-based decision | Balance of contribution | Organization and coordination | Cognitive syncing, input, and support |
| Communication | Social Interactions | Social cognition | Mastery of written and spoken language | Verbal communication | Non-verbal communication |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Thornhill-Miller, B.; Camarda, A.; Mercier, M.; Burkhardt, J.-M.; Morisseau, T.; Bourgeois-Bougrine, S.; Vinchon, F.; El Hayek, S.; Augereau-Landais, M.; Mourey, F.; et al. Creativity, Critical Thinking, Communication, and Collaboration: Assessment, Certification, and Promotion of 21st Century Skills for the Future of Work and Education. J. Intell. 2023, 11, 54. https://doi.org/10.3390/jintelligence11030054

Thornhill-Miller B, Camarda A, Mercier M, Burkhardt J-M, Morisseau T, Bourgeois-Bougrine S, Vinchon F, El Hayek S, Augereau-Landais M, Mourey F, et al. Creativity, Critical Thinking, Communication, and Collaboration: Assessment, Certification, and Promotion of 21st Century Skills for the Future of Work and Education. Journal of Intelligence. 2023; 11(3):54. https://doi.org/10.3390/jintelligence11030054

Chicago/Turabian Style: Thornhill-Miller, Branden, Anaëlle Camarda, Maxence Mercier, Jean-Marie Burkhardt, Tiffany Morisseau, Samira Bourgeois-Bougrine, Florent Vinchon, Stephanie El Hayek, Myriam Augereau-Landais, Florence Mourey, et al. 2023. "Creativity, Critical Thinking, Communication, and Collaboration: Assessment, Certification, and Promotion of 21st Century Skills for the Future of Work and Education" Journal of Intelligence 11, no. 3: 54. https://doi.org/10.3390/jintelligence11030054

APA Style: Thornhill-Miller, B., Camarda, A., Mercier, M., Burkhardt, J.-M., Morisseau, T., Bourgeois-Bougrine, S., Vinchon, F., El Hayek, S., Augereau-Landais, M., Mourey, F., Feybesse, C., Sundquist, D., & Lubart, T. (2023). Creativity, Critical Thinking, Communication, and Collaboration: Assessment, Certification, and Promotion of 21st Century Skills for the Future of Work and Education. Journal of Intelligence, 11(3), 54. https://doi.org/10.3390/jintelligence11030054