An Integrated Graph Model for Document Summarization

Abstract

1. Introduction

2. Related Work

2.1. Graph-Based Approaches

2.2. Word Embeddings

3. Integrated Graph Model

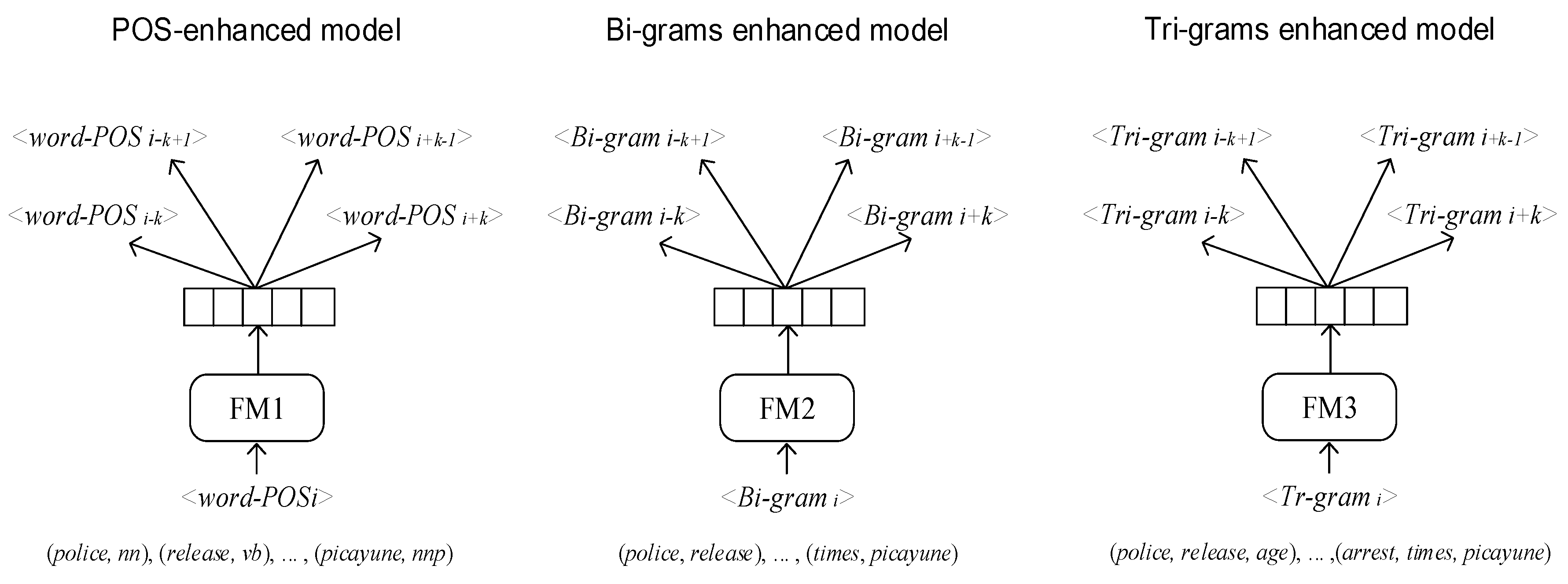

3.1. Enhanced Embedding Models

3.1.1. Part of Speech Feature

- (a) Please write your address on an envelope.

- (b) Now, I am hosting a summit with President Xi of China at the Southern White House to address the many critical issues affecting our two peoples.
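Sentence (a) uses *address* as a noun and sentence (b) uses it as a verb. The (address, nn) and (address, vb) rows in the similarity table suggest that the embedding vocabulary pairs each word with its part-of-speech tag, so the two senses get separate vectors. The minimal sketch below shows one way to build such tokens. It assumes the input has already been tagged by some external POS tagger using Penn Treebank tags (written by hand here). The helper name `pos_tokens` and the `word_tag` joining convention are hypothetical illustrations, not the paper's implementation.

```python
from typing import List, Tuple


def pos_tokens(tagged: List[Tuple[str, str]]) -> List[str]:
    """Join each word with its POS tag so homographs become distinct tokens.

    Hypothetical helper: `tagged` is assumed to be a list of
    (word, Penn-Treebank-tag) pairs produced by an external tagger.
    """
    # Lower-case both parts to mirror the "(address, nn)" style of the table.
    return [f"{word.lower()}_{tag.lower()}" for word, tag in tagged]


# Fragments of example sentences (a) and (b), tagged by hand for illustration.
sent_a = [("write", "VB"), ("your", "PRP$"), ("address", "NN")]
sent_b = [("to", "TO"), ("address", "VB"), ("the", "DT"),
          ("many", "JJ"), ("critical", "JJ"), ("issues", "NNS")]

print(pos_tokens(sent_a))
print(pos_tokens(sent_b))
```

The two occurrences of *address* become `address_nn` and `address_vb`, which a word2vec-style trainer would treat as unrelated vocabulary items.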

3.1.2. N-Gram Feature

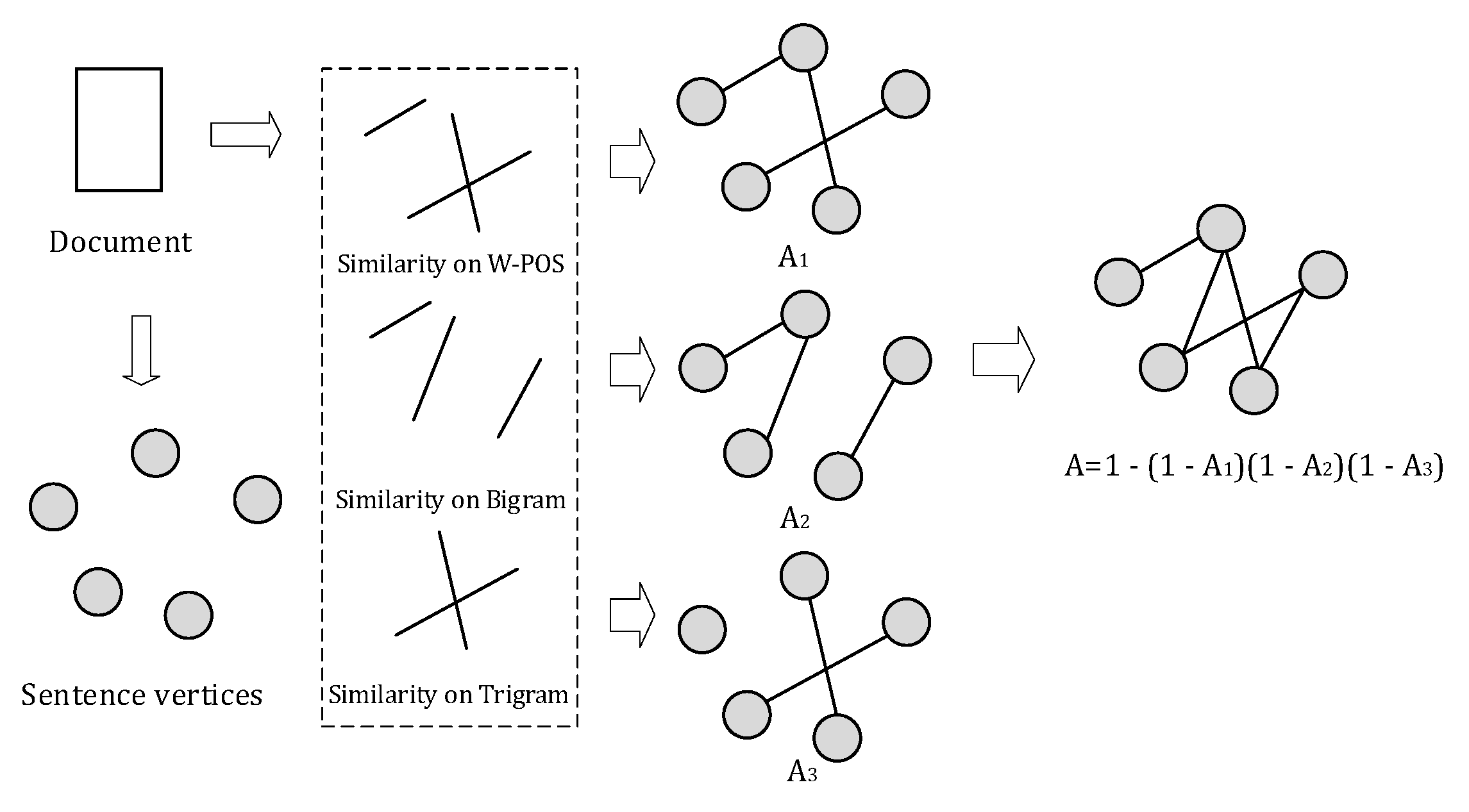
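The (come, true) row in the similarity table indicates that word pairs can also be embedded as single units, so that a phrase such as *come true* receives its own vector. The sketch below is an assumption about how such n-gram tokens might be produced, not the paper's exact procedure: the hypothetical helper `ngram_tokens` keeps the original unigrams and appends every contiguous n-gram joined by an underscore.

```python
from typing import List


def ngram_tokens(words: List[str], n: int = 2) -> List[str]:
    """Return the lower-cased unigrams followed by all contiguous n-grams.

    Hypothetical helper: joining with '_' is an illustrative convention,
    so the phrase 'come true' becomes the single token 'come_true'.
    """
    if n < 2:
        raise ValueError("n must be at least 2")
    words = [w.lower() for w in words]
    # Slide a window of width n over the sentence.
    grams = ["_".join(words[i:i + n]) for i in range(len(words) - n + 1)]
    return words + grams


print(ngram_tokens("Dreams do come true".split()))
print(ngram_tokens("Dreams do come true".split(), n=3))
```

Calling it with `n=3` yields trigram tokens instead, which is one plausible way to obtain inputs for variants like the Bigram and Trigram rows in the results tables.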

3.2. Integrated Graph Model for Summarization

3.3. Redundancy Removal

4. Experiments

4.1. Corpora and Baselines

4.2. Automatic Evaluation

4.3. Experiment Results and Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Liu, W.; Luo, X.; Zhang, J.; Xue, R.; Xu, R.Y.D. Semantic summary automatic generation in news event. Concurr. Comput. Pract. Exp. 2017, 29, e4287.

- Ferreira, R.; Cabral, L.D.S.; Freitas, F.; Lins, R.D.; Silva, G.D.F.; Simske, S.J.; Favaro, L. A multi-document summarization system based on statistics and linguistic treatment. Expert Syst. Appl. 2014, 41, 5780–5787.

- Radev, D.R.; Hovy, E.; McKeown, K. Introduction to the special issue on summarization. Comput. Linguist. 2002, 28, 399–408.

- Nallapati, R.; Zhai, F.; Zhou, B. SummaRuNNer: A recurrent neural network based sequence model for extractive summarization of documents. In Proceedings of the 31st AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017.

- Gambhir, M.; Gupta, V. Recent automatic text summarization techniques: A survey. Artif. Intell. Rev. 2017, 47, 1–66.

- Nenkova, A.; Maskey, S.; Liu, Y. Automatic summarization. Found. Trends Inf. Retr. 2011, 5, 103–233.

- Erkan, G.; Radev, D.R. LexRank: Graph-based lexical centrality as salience in text summarization. J. Artif. Intell. Res. 2004, 22, 457–479.

- Mihalcea, R.; Tarau, P. TextRank: Bringing Order into Texts. In Proceedings of the 2004 Conference on Empirical Methods in Natural Language Processing, Barcelona, Spain, 25–26 July 2004; pp. 404–411.

- Sornil, O.; Gree-Ut, K. An automatic text summarization approach using Content-Based and Graph-Based characteristics. In Proceedings of the IEEE Conference on Cybernetics and Intelligent Systems, Bangkok, Thailand, 7–9 June 2006; pp. 1–6.

- Fattah, M.A.; Ren, F. GA, MR, FFNN, PNN and GMM based models for automatic text summarization. Comput. Speech Lang. 2009, 23, 126–144.

- Wan, X. Towards a unified approach to simultaneous Single-Document and Multi-Document summarizations. In Proceedings of the 23rd International Conference on Computational Linguistics, Beijing, China, 23–27 August 2010; pp. 1137–1145.

- Page, L. The PageRank citation ranking: Bringing order to the web. Stanf. Digit. Libr. Work. Pap. 1998, 9, 1–14.

- Lin, H.; Bilmes, J.; Xie, S. Graph-based submodular selection for extractive summarization. In Proceedings of the IEEE Automatic Speech Recognition and Understanding (ASRU), Merano, Italy, 13–17 December 2009; pp. 381–386.

- Barrera, A.; Verma, R. Combining syntax and semantics for automatic extractive Single-Document summarization. In Proceedings of the International Conference on Intelligent Text Processing and Computational Linguistics, New Delhi, India, 11–17 March 2012; Lecture Notes in Computer Science. Volume 7182, pp. 366–377.

- Fang, C.; Mu, D.; Deng, Z.; Wu, Z. Word-Sentence Co-Ranking for automatic extractive text summarization. Expert Syst. Appl. 2016, 72, 189–195.

- Boom, C.D.; Canneyt, S.V.; Bohez, S.; Demeester, T.; Dhoedt, B. Learning semantic similarity for very short texts. In Proceedings of the 2015 International Conference on Data Mining Workshop, Atlantic City, NJ, USA, 14–17 November 2015; pp. 1229–1234.

- Mikolov, T.; Sutskever, I.; Chen, K.; Corrado, G.; Dean, J. Distributed representations of words and phrases and their compositionality. In Proceedings of the 27th Annual Conference on Neural Information Processing Systems, Lake Tahoe, NV, USA, 5–8 December 2013; pp. 3111–3119.

- Antiqueira, L.; Nunes, M.G.V. Complex Networks and Extractive Summarization. In The Extended Activities, Proceedings of the 9th International Conference on Computational Processing of the Portuguese Language–PROPOR, Porto Alegre, Brazil, 27–30 April 2010; Springer: Berlin/Heidelberg, Germany, 2010.

- Ge, S.S.; Zhang, Z.; He, H. Weighted graph model based sentence clustering and ranking for document summarization. In Proceedings of the 2011 4th International Conference on Interaction Sciences (ICIS), Busan, Korea, 16–18 August 2011; pp. 90–95.

- Baralis, E.; Cagliero, L.; Mahoto, N.; Fiori, A. GraphSum: Discovering correlations among multiple terms for graph-based summarization. Inf. Sci. 2013, 249, 96–109.

- Wan, X.; Yang, J. Improved affinity graph based multi-document summarization. In Proceedings of the Human Language Technology Conference of the NAACL, New York City, NY, USA, 4–9 June 2006; pp. 181–184.

- Sankarasubramaniam, Y.; Ramanathan, K.; Ghosh, S. Text summarization using Wikipedia. Inf. Process. Manag. 2014, 50, 443–461.

- Khan, A.; Salim, N.; Farman, H.; Khan, M.; Jan, B.; Ahmad, A.; Ahmed, I.; Paul, A. Abstractive Text Summarization based on Improved Semantic Graph Approach. Int. J. Parallel Program. 2018, 1–25.

- Kenter, T.; Rijke, M.D. Short text similarity with word embeddings. In Proceedings of the 24th ACM International on Conference on Information and Knowledge Management, Melbourne, Australia, 19–23 October 2015; pp. 1411–1420.

- Triantafillou, E.; Kiros, J.R.; Urtasun, R.; Zemel, R. Towards generalizable sentence embeddings. In Proceedings of the 1st Workshop on Representation Learning for NLP, Berlin, Germany, 11 August 2016; pp. 239–248.

- Kobayashi, H.; Noguchi, M.; Yatsuka, T. Summarization Based on Embedding Distributions. In Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, Lisbon, Portugal, 17–21 September 2015; pp. 1984–1989.

- Lai, S.; Liu, K.; He, S.; Zhao, J. How to generate a good word embedding. IEEE Intell. Syst. 2016, 31, 5–14.

- Huang, S.; Zheng, X.; Kang, H.; Chen, D. Word sense disambiguation based on positional weighted context. J. Inf. Sci. 2013, 39, 225–237.

- Mering, C.V.; Jensen, L.J.; Snel, B.; Hooper, S.D.; Krupp, M.; Foglierini, M.; Jouffre, N.; Huynen, M.A.; Bork, P. STRING: Known and predicted protein–protein associations, integrated and transferred across organisms. Nucl. Acids Res. 2005, 33, 433–437.

- Woodsend, K.; Lapata, M. Automatic generation of story highlights. In Proceedings of the 48th Annual Meeting of the Association for Computational Linguistics, Uppsala, Sweden, 11–16 July 2010; pp. 565–574.

- Parveen, D.; Ramsl, H.M.; Strube, M. Topical coherence for graph-based extractive summarization. In Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, Lisbon, Portugal, 17–21 September 2015; pp. 1949–1954.

- Cheng, J.; Lapata, M. Neural summarization by extracting sentences and words. In Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics, Berlin, Germany, 7–12 August 2016.

- Wang, R.; Stokes, N.; Doran, W.P.; Newman, E.; Carthy, J.; Dunnion, J. Comparing Topiary-Style approaches to headline generation. In Proceedings of the European Conference on Information Retrieval, Santiago de Compostela, Spain, 21–23 March 2005; Lecture Notes in Computer Science. Volume 3408, p. 11.

- Lin, C.Y.; Och, F.J. Looking for a few good metrics: ROUGE and its evaluation. In Proceedings of the Fourth NTCIR Workshop on Research in Information Access Technologies Information Retrieval, Question Answering and Summarization, NTCIR-4, Tokyo, Japan, 2–4 June 2004.

- Lin, C.Y. ROUGE: A Package for Automatic Evaluation of summaries. In Proceedings of the Workshop on Text Summarization Branches Out, Barcelona, Spain, 25–26 July 2004.

- Lee, J.H.; Sun, P.; Ahn, C.M.; Kim, D. Automatic generic document summarization based on non-negative matrix factorization. Inf. Process. Manag. 2009, 45, 20–34.

- Rossiello, G.; Basile, P.; Semeraro, G. Centroid-based text summarization through compositionality of word embeddings. In Proceedings of the MultiLing 2017 Workshop on Summarization and Summary Evaluation across Source Types and Genres, Valencia, Spain, 3 April 2017; pp. 12–21.

| Target | Model.most_similar("Target", topn = 10) |
|---|---|
| address | addresses, addressed, ip, email, telephone, login, sender, answered, disscuss, call |
| (address, nn) | (addresses, nns), (email, nn), (postcodes, nns), (ip, nn), (names, nns), (ip, nn), (databases, nns), (usernames, rb), (contacts, nns), (login, nn) |
| (address, vb) | (discuss, vb), (addressing, vbg), (addressed, vb), (repercussions, vb), (deal, vb), (answer, vb), (resolved, vb), (reconsider, vb), (focus, vb), (tackle, vb) |
| come | coming, go, bring, see, came, get, going, look, give, want |
| true | indeed, fact, truth, truly, certainly, real, love, dreams, genuine, never |
| (come, true) | (dream, come), (dream, come), (make, dreams), (wishs, come), (wish, come), (would, dream), (experience, dream), (biggest, dream), (true, play), (absolute, dream) |
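Each row of the table lists the ten nearest neighbours of a target token in embedding space. The query in the table header appears to correspond to a gensim-style `most_similar(..., topn=10)` call, which ranks vocabulary items by cosine similarity to the target vector and leaves out the target itself. A minimal pure-Python sketch of that ranking follows. It assumes the embeddings are available as a dictionary of equal-length float lists. The toy vectors are invented for illustration and are not taken from the paper.

```python
import math
from typing import Dict, List, Tuple


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def most_similar(vectors: Dict[str, List[float]], target: str,
                 topn: int = 10) -> List[Tuple[str, float]]:
    """Rank every other vocabulary item by cosine similarity to `target`."""
    query = vectors[target]
    # Exclude the query token itself, as nearest-neighbour queries usually do.
    scored = [(w, cosine(query, v)) for w, v in vectors.items() if w != target]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:topn]


# Toy 3-dimensional vectors, invented for illustration only.
toy = {
    "address_nn": [0.9, 0.1, 0.0],
    "email_nn":   [0.8, 0.2, 0.1],
    "address_vb": [0.1, 0.9, 0.2],
    "discuss_vb": [0.2, 0.8, 0.3],
}
print(most_similar(toy, "address_vb", topn=2))
```

With these toy vectors the verb token's closest neighbour is `discuss_vb`, while the noun token's closest neighbour is `email_nn`, the same kind of separation the (address, nn) and (address, vb) rows show.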

| Models | Rouge-1 | Rouge-2 | Rouge-L |
|---|---|---|---|
| Lead-3 | 43.6 | 21.0 | 40.2 |
| LReg | 43.8 | 20.7 | 40.3 |
| ILP | 45.4 | 21.3 | 42.8 |
| EmbAvg | 45.6 | 19.3 | 41.6 |
| Wikipedia-based summarizer | 46.0 | 23.0 | - |
| SummaRuNNer | 46.6 ± 0.8 | 23.1 ± 0.9 | 43.03 ± 0.8 |
| Cheng et al. (2016) | 47.4 | 23.0 | 43.5 |
| TGRAPH | 48.1 | 24.3 | - |
| URANK | 48.5 | 21.5 | - |
| W-POS | 47.4 | 22.4 | 43.0 |
| Bigram | 46.9 | 21.2 | 43.1 |
| Trigram | 43.1 | 18.6 | 40.5 |
| iGraph | 48.5 ± 0.4 | 22.0 ± 0.2 | 43.7 ± 0.3 |
| iGraph-R | 49.2 ± 0.3 | 23.1 ± 0.2 | 44.1 ± 0.2 |
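The tables report ROUGE-1, ROUGE-2 and ROUGE-L (Lin, 2004). ROUGE-N measures n-gram recall of a candidate summary against a reference summary, and ROUGE-L is based on the longest common subsequence (LCS) of the two. The sketch below computes simplified recall-only versions for a single reference. It omits stemming, stop-word removal, multiple references and the F-measure settings of the official ROUGE package, so its values are not directly comparable with the numbers in the tables.

```python
from collections import Counter
from typing import List


def ngrams(tokens: List[str], n: int) -> Counter:
    """Multiset of contiguous n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(candidate: List[str], reference: List[str], n: int) -> float:
    """Clipped n-gram overlap divided by the number of reference n-grams."""
    ref_grams = ngrams(reference, n)
    if not ref_grams:
        return 0.0
    # Counter '&' keeps the minimum count of each shared n-gram (clipping).
    overlap = sum((ngrams(candidate, n) & ref_grams).values())
    return overlap / sum(ref_grams.values())


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence, by dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_recall(candidate: List[str], reference: List[str]) -> float:
    """LCS length divided by reference length."""
    return lcs_length(candidate, reference) / len(reference) if reference else 0.0


ref = "the cat sat on the mat".split()
cand = "the cat lay on the mat".split()
print(rouge_n_recall(cand, ref, 1))  # 5 of 6 reference unigrams matched
print(rouge_n_recall(cand, ref, 2))  # 3 of 5 reference bigrams matched
print(rouge_l_recall(cand, ref))     # LCS "the cat on the mat" covers 5 of 6 tokens
```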

| Models | Rouge-1 | Rouge-2 | Rouge-L |
|---|---|---|---|
| PREFIX | 22.4 | 6.5 | 19.6 |
| TOPIARY | 25.1 | 6.5 | 20.1 |
| EmbAvg | 29.9 | 7.3 | 25.9 |
| W-POS | 30.6 | 7.0 | 27.3 |
| Bigram | 29.2 | 7.6 | 25.1 |
| Trigram | 24.3 | 6.4 | 19.5 |
| iGraph | 31.3 ± 0.4 | 7.3 ± 0.3 | 26.2 ± 0.3 |
| iGraph-R | 31.7 ± 0.2 | 7.3 ± 0.2 | 26.9 ± 0.2 |

| Models | Rouge-1 | Rouge-2 |
|---|---|---|
| LEAD | 32.4 | 6.4 |
| Peer65 | 38.2 | 9.2 |
| LexRank | 37.6 | 8.8 |
| NMF | 31.6 | 6.3 |
| C_SKIP | 38.8 | 9.97 |
| iGraph | 37.8 ± 0.3 | 8.2 ± 0.2 |
| iGraph-R | 38.0 ± 0.2 | 8.3 ± 0.4 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Yang, K.; Al-Sabahi, K.; Xiang, Y.; Zhang, Z. An Integrated Graph Model for Document Summarization. Information 2018, 9, 232. https://doi.org/10.3390/info9090232

Yang K, Al-Sabahi K, Xiang Y, Zhang Z. An Integrated Graph Model for Document Summarization. Information. 2018; 9(9):232. https://doi.org/10.3390/info9090232

Chicago/Turabian Style: Yang, Kang, Kamal Al-Sabahi, Yanmin Xiang, and Zuping Zhang. 2018. "An Integrated Graph Model for Document Summarization" Information 9, no. 9: 232. https://doi.org/10.3390/info9090232

APA Style: Yang, K., Al-Sabahi, K., Xiang, Y., & Zhang, Z. (2018). An Integrated Graph Model for Document Summarization. Information, 9(9), 232. https://doi.org/10.3390/info9090232