Abstract

(1) Aims: This review aims to investigate whether screen-based simulated learning experiences improve on traditional teaching strategies to bridge the theory–practice gap for biomedical scientists and enhance the readiness to practice of graduates. (2) Methods: This review adheres to the systematic–narrative hybrid literature review strategy with the scope of review defined according to Cochrane guidelines for systematic reviews. To identify the potentially relevant literature, the PUBMED, CINAHL, and Web of Science bibliographic databases were searched using the identified keywords from January 2020 to February 2025. Thematic analysis of the resultant literature was conducted in line with the Braun and Clarke framework. (3) Results: After duplicates were removed and records were screened against the inclusion and exclusion criteria, the database search yielded 45 papers. Collectively, these sources explore global perspectives on biomedical science education, training, and professional practice. These include the identification of core competencies that may contribute to the theory–practice gap for biomedical scientists, as well as educational interventions that aim to address them. The poor quality of existing research on simulation-based learning, mostly from academic settings, makes it challenging to apply the findings to professional practice. This limitation is primarily due to an overreliance on self-reported data and perceived learning gains rather than direct, objective evaluations of competence. Future studies should focus on objective, validated outcome measures and longitudinal follow-up to assess real-world impacts and learning transfer. (4) Conclusions: Simulation-based learning experiences have the potential to address aspects of the theory–practice gap for biomedical scientists, but the current evidence base reflects a lack of understanding regarding specific targets and strategies for its design, evaluation, and integration in this context. There is a need for more robust evidence that evaluates their impacts on readiness to practice. Meeting this need is hindered by a lack of research directly investigating the impact of simulation-based teaching and training interventions in clinical settings.

1. Introduction

Biomedical scientists play a critical role in healthcare and medical research. In the UK, the term biomedical scientist is the protected title awarded by the Health and Care Professions Council (HCPC) to those who carry out a range of laboratory investigations and scientific techniques on tissue samples and fluids to assist in the diagnosis and monitoring of disease, evaluation of the effectiveness of treatment, and delivery of expert advice for the treatment of patients and prevention of disease [1]. Investigations performed by biomedical scientists currently contribute to over 90% of patient care pathways [2]. A patient care pathway can be considered to be a road map that guides healthcare professionals in making the best decisions for a patient’s care. It includes details about appropriate tests, medications, and treatments that should be considered at each stage of a patient’s journey. The role of biomedical scientists encompasses scientific and technical expertise, in addition to a broad range of skills incorporating quality assurance, health and safety, equipment maintenance, managing contracts and budgets, interprofessional and interdisciplinary communication, education and training, and managing personnel [2].

There is currently a global shortage of biomedical scientists and their international equivalents [3,4]. A key factor contributing to this shortage is the scarcity of available clinical placements [4,5,6,7]. For example, in the UK, graduates must achieve a degree accredited by the Institute of Biomedical Science (IBMS), the professional body for biomedical scientists, and complete a period of clinical training to become qualified practitioners. However, only 20% of graduates from accredited courses secure the necessary clinical placement [6]. This means that 80% of graduates of IBMS-accredited programmes, which meet the HCPC Standards of Education and Training [8], are potentially lost to the profession, as they seek alternative careers [5].

This workforce shortage impacts training capacity and quality, as there is increased pressure on health systems to recruit fully qualified staff who are ready to practice from day one. The problem, therefore, results in a downward spiral, where workforce shortages lead to fewer training opportunities, which result in less prepared professionals, potentially further contributing to ongoing workforce shortages [4]. Ultimately, this means that even those students who do secure the necessary clinical training to qualify as practicing biomedical scientists are often poorly prepared [5], exacerbating the workforce shortage and highlighting the presence of a theory–practice gap.

The theory–practice gap refers to a disparity between theoretical concepts taught in academic settings and the practical application of those concepts in professional settings [7]. To understand how the theory–practice gap impacts the biomedical scientist profession, it is necessary to understand how the separate elements of the training pathways are integrated to produce qualified biomedical scientists.

The primary route to statutory registration as a biomedical scientist in the UK involves several stages. First, prospective practitioners must complete an IBMS-accredited degree. The IBMS accredits degrees on behalf of the HCPC to evidence that the degrees comply with the HCPC Standards for Education and Training [8]. IBMS accreditation criteria [9] are mapped against the QAA Subject Benchmark Statement for Biomedical Science and Biomedical Sciences [4]. Second, practitioners must complete the IBMS Registration Portfolio in an IBMS-approved training laboratory during a clinical placement or period of clinical training of a currently unspecified duration.

The clinical placement provides access to highly specialised automation, which is often the cornerstone of practice for clinical laboratory professionals, as well as a range of pathological specimens for investigation. It provides access to complex digital systems, as well as organisational structures and cultures, and it provides access to professional role models. In this regard, the clinical placement is generally accepted as being essential to the development of core competencies and for facilitating the application of theory to practice [10].

The IBMS Registration Portfolio is mapped against the HCPC Standards of Proficiency for Biomedical Scientists [11], evidencing that the practitioner meets the minimum standards to practice safely, while IBMS training approval denotes that the laboratory is equipped to deliver appropriate preregistration training. This complex regulatory framework and biphasic training approach has been implicated in the development of a theory–practice gap for biomedical scientists [7].

The theory–practice gap has been explored for a range of healthcare professions [5,7], but Dudley and Matheson [7] present compelling evidence for the long-alluded-to theory–practice gap for biomedical scientists in a pre-registration course in the UK. Although this study was relatively small, with only nineteen participants (consisting of five academics, seven final year biomedical science students, five practicing biomedical scientists, and two representatives of professional, statutory, and regulatory bodies), it used a comprehensive Delphi approach to examine how key stakeholders understand the role of a biomedical scientist. The results show that, on average, four out of five practicing biomedical scientists agree on the key characteristics of the role, while only four out of seven students were able to agree. Alarmingly, only two out of five academics were able to agree, suggesting a potentially poor understanding of the role of a biomedical scientist among academics teaching this pre-registration course.

Similarly, in their evaluation of a government-funded ‘upskilling’ programme for biomedical science graduates, Garden [5] alluded to the real-world implications of the theory–practice gap. In designing a programme, which was delivered to 68 biomedical science students from 11 universities by a partnership of academic and industry employers, Garden [5] noted an overreliance on clinical training. The study suggests the idea of a simulated placement, as simulation-based learning allows for controlled sequencing, scaffolding, and standardisation of training [5], while facilitating the practical application of theory [12,13,14,15,16].

Biomedical scientists are not alone in experiencing workforce shortages or difficulties in providing sufficient clinical placements to meet demand. Several healthcare professions (including some of those that are also regulated by the HCPC) have turned to simulation-based learning in a bid to increase capacity [12]. However, despite the growing recognition of simulation-based learning experiences in healthcare education more broadly, the HCPC has yet to issue official guidance on the role of simulation-based learning in the clinical training of biomedical scientists. This stands in contrast to other professional bodies, such as the Nursing and Midwifery Council (NMC), which have embraced simulation as a valuable component of preregistration training [17].

In its review of the Standards for Education and Training, which commenced last year, the HCPC noted the important opportunity that the use of simulation in clinical education offers learners in order to develop their knowledge and skills. The HCPC is actively seeking input for recommendations in this area [18].

Simulation-based learning is a pedagogical approach that allows learners to ‘experience a representation of a real event for the purpose of practising, learning, evaluating, or gaining understanding of procedures, systems, and/or human interaction’ [19]. This encompasses a broad range of activities, including virtual reality experiences, role-playing exercises, or the use of specialised equipment such as medical mannequins. However, given the global issue of workforce shortages in appropriately trained clinical laboratory personnel, including biomedical scientists, it is essential that proposed solutions are accessible in the broadest range of settings, and, therefore, digital inequity is an important consideration. To this end, this review focuses on screen-based simulated learning experiences only. These are activities that require only a computer, tablet, smartphone, monitor, keyboard, and/or mouse to participate.

In light of this regulatory context, it is essential to examine the current landscape of screen-based simulated learning experiences in the training of biomedical scientists and explore innovative ways to effectively integrate simulated learning into existing curricula.

Therefore, this review has the following aims:

- To identify factors that contribute to the theory–practice gap for biomedical scientists;

- To identify the specific skillset(s) that could be targeted most effectively by simulation-based learning in order to address this gap.

2. Materials and Methods

2.1. Research Questions

The scope of this review was defined according to the Cochrane guidelines for systematic reviews [20]. The PICO framework was applied to define the population as biomedical science students or educators and their international equivalents; the intervention was defined as screen-based simulated learning experiences; the comparison was defined as alternative teaching methods such as practical laboratory experience; and the outcome was defined as readiness to practice, incorporating technical proficiency and professional identity.

The primary research questions were as follows:

RQ1: To what extent can screen-based simulated learning experiences enhance the readiness to practice of biomedical scientists?

RQ2: What specific methods and/or approaches contribute to the effectiveness of screen-based simulated learning experiences in the context of enhancing the readiness to practice of biomedical scientists?

Associated sub-questions that were necessary to adequately address the primary research questions are as follows:

RQ2.1: What factors contribute to impaired readiness to practice (the theory–practice gap) for biomedical scientists?

RQ2.2: What specific skillsets should be targeted by screen-based simulated learning experiences to address the theory–practice gap for biomedical scientists most effectively?

2.2. Justification

While a PRISMA systematic literature review is the gold standard, the broad scope of the research questions meant that strict adherence to this approach was not feasible. Therefore, this review adopted a hybrid approach, combining the rigour of a systematic search with the flexibility of narrative synthesis. Following PRISMA principles [21], a comprehensive and transparent literature search was conducted, including predefined inclusion and exclusion criteria, multiple database searches, and the use of a PRISMA flow diagram to document the screening process. However, due to the heterogeneity of study designs, contexts, and outcomes, a narrative approach was employed to synthesise and interpret the findings. This method allowed for a nuanced, contextualised discussion of the literature while maintaining the transparency and replicability of a systematic review. This approach is particularly suited for areas where evidence is diverse or emerging, as well as where conceptual insights are as valuable as empirical generalisations. This hybrid review adheres to the systematic-narrative literature review strategy proposed by Turnbull et al. [22]. Recent examples of this methodology from the field of healthcare education include Manchester and Roberts’ review of bioscience teaching and learning in undergraduate nursing education [23] and Da Veiga et al.’s review of curricular evolution in nursing education in Brazil [24].

2.3. Literature Sources

To identify the potentially relevant literature, the PUBMED, CINAHL, and Web of Science bibliographic databases were searched from 1 January 2020 to 28 February 2025. This window ensured that the most recent publications were captured, while recognising the influence of the COVID-19 pandemic, which was a major factor in the increased uptake of simulation-based learning, leading to the growing body of research in this area. The databases were selected for their comprehensive coverage of the biomedical- and health-related literature, with a focus on education and evidence-based practice. Professional standards from the HCPC [11,18,25,26] and laboratory training approval guidance from the IBMS [27], which accredits undergraduate degrees and pre-registration laboratory training on behalf of the HCPC, were also incorporated, alongside the most recent QAA subject benchmark statement for biomedical science and biomedical sciences [1], to systematically assess the relevance of educational interventions to professional requirements for clinical practice. Upon reviewing the initial studies, a targeted follow-up investigation into the foundational research cited within them was conducted to ensure a comprehensive understanding of the subject matter.

2.4. Search Parameters

The following search strings were applied across the three databases:

Search string 1:

- (train* OR instruct* OR pedagog* OR teach* OR educat* OR competen* OR proficien*) AND (Effect* OR Efficac* OR impact*) AND (Biomedical* NEAR/3 Scien* OR “Medical laboratory*” OR “Clinical laboratory*” OR “Medical technolog*” OR “laboratory technician*” OR “laboratory professional*”) AND (Read* NEAR/3 practi* OR competen* OR “job read*” OR prepare* OR “professional identit*” OR proficien*)

Search string 2:

- (“Screen* simulat*” OR “Simulat* educat*” OR “simulat* train*” OR “computer* simulat*” OR “2D simulat*” OR “3D simulat*” OR “First person* simulat*” OR “360* video*” OR “virtual* realit*” OR “digital* simulat*”) AND (Biomedical* NEAR/3 Scien* OR “Medical laboratory*” OR “Clinical laboratory*” OR “Medical technolog*” OR “laboratory technician*” OR “laboratory professional*”) AND (Read* NEAR/3 practi* OR competen* OR “job read*” OR prepare* OR “professional identit*” OR proficien*)

Database-specific limits and filters were as follows:

PUBMED: No additional filters.

CINAHL: Limited to ‘English’ language ‘research article’ publication type.

Web of Science: Limited to ‘English’ language and ‘article’ document type.
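The two search strings above share a common structure: synonyms for one concept are joined with OR, and the concept blocks are then joined with AND. The following is a minimal sketch of that composition, not the procedure used in the review. The names `or_block` and `build_query` are illustrative assumptions, and straight quotes replace the typographic quotes of the printed strings.

```python
# Concept blocks transcribed from the two search strings above.
# Straight quotes replace the typographic quotes of the printed text.
EDUCATION = ["train*", "instruct*", "pedagog*", "teach*", "educat*",
             "competen*", "proficien*"]
EFFECT = ["Effect*", "Efficac*", "impact*"]
SIMULATION = ['"Screen* simulat*"', '"Simulat* educat*"', '"simulat* train*"',
              '"computer* simulat*"', '"2D simulat*"', '"3D simulat*"',
              '"First person* simulat*"', '"360* video*"',
              '"virtual* realit*"', '"digital* simulat*"']
PROFESSION = ["Biomedical* NEAR/3 Scien*", '"Medical laboratory*"',
              '"Clinical laboratory*"', '"Medical technolog*"',
              '"laboratory technician*"', '"laboratory professional*"']
READINESS = ["Read* NEAR/3 practi*", "competen*", '"job read*"', "prepare*",
             '"professional identit*"', "proficien*"]


def or_block(terms):
    """Join the synonyms for one concept with OR and wrap them in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_query(*blocks):
    """Join concept blocks with AND so that every concept must be matched."""
    return " AND ".join(or_block(block) for block in blocks)


# Hypothetical variable names; the block order follows the printed strings.
search_string_1 = build_query(EDUCATION, EFFECT, PROFESSION, READINESS)
search_string_2 = build_query(SIMULATION, PROFESSION, READINESS)

print(search_string_1)
print(search_string_2)
```

Keeping the concept blocks as separate lists makes it easier to see which concepts each string covers. Support for truncation and proximity operators varies between databases, so strings generated in this way may need adapting for each platform.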

2.5. Data Cleaning

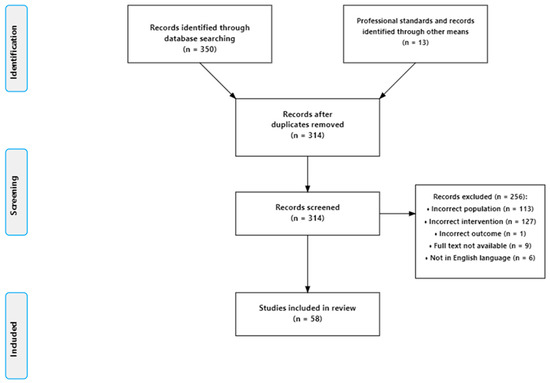

Duplicate studies were first removed using Zotero reference management software (version 7.0.13), and the remaining studies were then screened against the inclusion and exclusion criteria below (see Figure 1).

Figure 1. Search strategy and results.

To be included in this review, studies needed to satisfy the following inclusion criteria:

- Evaluate the effectiveness of teaching or training interventions or screen-based simulated learning experiences for addressing the theory–practice gap, developing a professional identity, or readiness to practice, incorporating technical proficiency, digital competence, and transferrable or key employability skills.

- Include participants who are biomedical scientist educators, students, or their international equivalents, where international equivalents were identified as medical laboratory scientists, clinical laboratory scientists, clinical laboratory professionals, medical laboratory professionals, or medical laboratory technologists.

Studies were excluded from the review if the following exclusion criteria were applicable:

- The study’s primary participants were medical doctors, nurses, technicians or laboratory assistants, or researchers in the field of biomedical science whose experience did not include clinical practice.

- Studies focused solely on student satisfaction or perceptions, without evaluating impacts on an element of readiness to practice.

- Studies focused on simulated experiences requiring additional hardware beyond a computer, monitor, keyboard, mouse, tablet, or smartphone.

- Studies that were published in languages other than English.

- Studies whose full text was not available.
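The inclusion and exclusion criteria above can be read as a sequence of checks applied to each record. The sketch below expresses them as a single function for illustration only. In the review, screening was a manual judgement made by a reviewer, and the `Record` fields, labels, and reason strings here are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical labels; the review does not define a machine-readable schema.
ELIGIBLE_PARTICIPANTS = {
    "biomedical scientist",
    "medical laboratory scientist",
    "clinical laboratory scientist",
    "clinical laboratory professional",
    "medical laboratory professional",
    "medical laboratory technologist",
}
ALLOWED_HARDWARE = {"computer", "monitor", "keyboard", "mouse",
                    "tablet", "smartphone"}


@dataclass
class Record:
    participants: str           # profession of the primary participants
    evaluates_readiness: bool   # reviewer judgement against inclusion criterion 1
    satisfaction_only: bool     # reports only satisfaction or perceptions
    hardware: set               # equipment needed to take part
    language: str = "English"
    full_text_available: bool = True


def screen(record):
    """Return (included, reason) for one record."""
    if record.participants not in ELIGIBLE_PARTICIPANTS:
        return False, "ineligible participants"
    if not record.evaluates_readiness or record.satisfaction_only:
        return False, "no evaluation of readiness to practice"
    if not record.hardware <= ALLOWED_HARDWARE:
        return False, "additional hardware required"
    if record.language != "English":
        return False, "not published in English"
    if not record.full_text_available:
        return False, "full text unavailable"
    return True, "included"


headset_study = Record("biomedical scientist", True, False,
                       {"computer", "vr headset"})
print(screen(headset_study))
```

Returning a reason alongside the decision mirrors the way exclusions are tallied by criterion in a flow diagram.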

2.6. Information Synthesis

Each record was reviewed by a single reviewer who conducted the screening, selection, and data extraction independently. The reviewer was an HCPC-registered biomedical scientist with experience as a clinical laboratory training officer and university lecturer. Their expertise and experience allowed for a comprehensive evaluation of the records. Due to the anticipated heterogeneity of the included studies, this review employed a narrative synthesis and thematic analysis approach to analyse the literature, explore emerging trends, and identify notable gaps in this body of research. This method allowed for the integration of findings across diverse studies to provide a comprehensive overview of the research landscape.

The included studies were assigned the following two core themes to address the research questions:

- Theme 1: The effectiveness of pre-registration teaching and training in preparing biomedical scientists for practice.

- Theme 2: The potential impact of the use of screen-based simulated learning experiences in preparing biomedical scientists.

Within these themes, data were extracted from the studies, including the type of study; the setting, sample size, and profile of participants; potential sources of bias and limitations; the interventions being studied; the skills the intervention intended to develop and barriers to their development; and evidence for the effectiveness of the intervention in developing these skills.

NotebookLM was used to identify the relevant sections of each record, and this was then confirmed by a single reviewer who worked independently. Where information was missing or unclear, this was noted in the report. Risk of bias was assessed by examining the methodology of each study, considering factors such as study design, sample size, and data collection methods, to identify potential sources of bias such as self-reported data, self-selection bias, social desirability bias, unequal cohort sizes, lack of control groups, low response rates, inherent subjectivity, use of non-validated survey instruments, or confounders. All studies meeting the eligibility criteria were included, and while this review did not involve a formal assessment of uncertainty or confidence in the body of evidence, several factors were considered when interpreting and synthesising the results. These included the risk of bias in individual studies, the consistency of findings across the studies, and the directness of the evidence in addressing the research questions. By addressing these factors directly in the report, we aim to provide a balanced and nuanced discussion of the available evidence, highlighting both the strengths and limitations of the existing research in this field.

The extracted data were recorded in a Microsoft Excel spreadsheet and manually coded according to the skillset identified. Prior to coding, broad skills domains were identified based on a preliminary reading of the literature. Based on this initial review, four domains were identified as being relevant to the research questions:

- Cognitive: Skills relating to knowledge acquisition, understanding and critical thinking;

- Practical: Skills associated with hands-on, technical, or laboratory-related tasks;

- Interpersonal: Skills related to communication, collaboration, and interaction with others;

- Professional: Skills involving ethical behaviour, professional identity, or career development.

Coding was then conducted based on the intervention identified in each study and the skills the intervention sought to develop or identified as deficient, as recognised by the study’s authors. These skillsets were then grouped into the four domains according to their primary focus.

Coding was conducted by a sole reviewer with expertise in the field, ensuring consistency throughout the process. The reviewer systematically analysed each study, assigning codes based on the identified skills and domains.

To ensure the reliability and validity of the coding process, the reviewer conducted multiple iterations of the analysis, revisiting and refining the codes as necessary to ensure that the codes identified were a true reflection of the literature.

The codes were then ranked according to the frequency with which they were addressed in the literature to provide an indication of the degree of consensus. This mode of thematic analysis aligns broadly with the steps recommended by Braun and Clarke [28]. To further enhance the clarity and accessibility of the results, tabular presentations were used to organise and display key data points. The tables below summarise the distribution of research approaches, the identified factors contributing to the theory–practice gap, and the skillsets affected across the included studies.
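The frequency ranking described above amounts to counting how many studies were assigned each code and sorting the codes by that count. A minimal sketch follows, using invented study identifiers and codes in place of the review's extraction spreadsheet. The `coded_studies` and `DOMAIN` mappings are hypothetical.

```python
from collections import Counter

# Hypothetical extraction table: study ID -> skill codes assigned by the reviewer.
coded_studies = {
    "study_A": {"technical proficiency", "communication"},
    "study_B": {"technical proficiency", "critical thinking"},
    "study_C": {"technical proficiency", "communication",
                "professional identity"},
}

# Hypothetical assignment of each code to one of the four skills domains.
DOMAIN = {
    "technical proficiency": "Practical",
    "communication": "Interpersonal",
    "critical thinking": "Cognitive",
    "professional identity": "Professional",
}

# Count each code once per study, then rank by frequency.
# Ties are broken alphabetically so the order is reproducible.
code_counts = Counter(code for codes in coded_studies.values() for code in codes)
ranked = sorted(code_counts.items(), key=lambda item: (-item[1], item[0]))

for code, n_studies in ranked:
    share = 100 * n_studies / len(coded_studies)
    print(f"{DOMAIN[code]:<14}{code:<24}{n_studies} ({share:.0f}%)")
```

Storing the codes for each study as a set ensures that a code mentioned several times within one paper is counted once, so the totals reflect the number of studies rather than the number of mentions.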

While this review does not involve formal sensitivity analyses, the narrative synthesis approach allowed the robustness of the synthesised results to be evaluated by comparing the findings across studies and exploring the impact of study quality.

This systematic review was registered on the Open Science Framework (OSF) on 14 August 2025, with the identifier https://osf.io/f4bz5, accessed on 18 August 2025.

3. Results

The original search of the online databases returned 350 studies. This was reduced to 301 once duplicates were removed and to 45 once the paper titles, abstracts, and full texts were screened against the inclusion and exclusion criteria, as shown in Figure 1. In total, 113 studies were excluded because their primary participants were student medics, nurses, or bio-scientists more broadly. The last of these groups reflects an issue in the literature whereby the healthcare profession of biomedical scientists is often poorly differentiated from medical researchers. A further 127 studies were excluded because they did not focus specifically on teaching or training as the intervention under investigation. Examples of interventions that did not meet this criterion included the integration of artificial intelligence into workstreams, embedding sustainable practice, and method validation. A detailed list of the included studies and their characteristics can be found in the Supplementary Materials.
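The screening figures reported above can be checked with simple arithmetic. The sketch below uses only the counts stated in the text. The variable names are illustrative, and the final value is derived rather than reported: it is the number of exclusions not attributed to either of the two stated reasons, which may be itemised in Figure 1.

```python
# Counts stated in the text.
identified = 350
after_deduplication = 301
included = 45
excluded_participants = 113   # ineligible primary participants
excluded_not_teaching = 127   # intervention was not teaching or training

# Derived values (not reported directly in the text).
duplicates_removed = identified - after_deduplication
excluded_at_screening = after_deduplication - included
excluded_other_reasons = (excluded_at_screening
                          - excluded_participants
                          - excluded_not_teaching)

print(f"Duplicates removed:      {duplicates_removed}")
print(f"Excluded at screening:   {excluded_at_screening}")
print(f"Excluded, other reasons: {excluded_other_reasons}")
```

On these figures, 49 duplicates were removed and 256 records were excluded at screening, of which 16 fall outside the two reasons itemised in the text.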

Collectively, these 45 papers explored the global perspectives of the inter-related aspects of biomedical science education, training, and professional practice. These included the identification of core competencies that may contribute to the theory–practice gaps for biomedical scientists, as well as the educational interventions that aim to address them.

The literature reviewed described screen-based simulations, encompassing a range of modalities that varied in scope, interactivity, and intended learning outcomes. Virtual laboratories were reported to replicate laboratory-based tasks in a controlled digital environment [6,29,30,31]. These included procedures such as microscopic examination of blood films [32] or fine-needle aspirates [13], or macroscopic examinations of anatomy or dissection [33,34], with the aim of familiarising students with techniques and workflows prior to hands-on sessions. In contrast, scenario-based simulations, often employing augmented reality or gamified platforms, were used to immerse learners in virtual workplace settings or clinical decision-making situations [35,36]. These allowed students to perform functions such as prioritising urgent cases [10,12,13,14], preventing pre-analytical errors, or working through case-based diagnostic reasoning without risk to patients. While virtual laboratories were described as focusing on procedural skills and technical understanding, scenario-based simulations tended to target situational judgement, problem-solving, and error management [15,16].

Other modalities identified in the literature sat between these extremes, offering focused media-rich content that aimed to support both skill acquisition and conceptual learning. For example, interactive e-books integrated multimedia resources for self-paced exploration of complex concepts [37]. Pure content-delivery platforms without interactivity (e.g., static readings) were generally excluded from the definition of simulation-based experiences.

The papers represent a mixture of quantitative, qualitative, and mixed-methods research, with a focus on empirical investigation, including surveys, focus groups, and intervention studies. Most employed a cross-sectional approach, collecting data at a single point in time, and there was limited availability of longitudinal data, as summarised in Table 1, although it should be noted that some studies referenced more than one study design.

Table 1. Summary of study designs included.

Table 1 highlights the heterogeneity of the included studies, whereby most studies used at least some quantitative approaches, but with a range of designs that prohibited the direct comparison of findings in a statistically valid way. Eight studies were identified as having an experimental or quasi-experimental design. However, given the primary focus of the research question on readiness to practice, it was not appropriate to include a meta-analysis of these collective findings. While these studies evaluated the impact of educational interventions on outcomes such as exam performance, they did not assess how exam performance relates to professional competence. Therefore, qualitative analysis was used to provide a more nuanced exploration of the impact of these interventions on readiness to practice.

The methodological quality of the studies included was generally low, with several recurring limitations affecting internal and external validity. These included the following:

- Reliance on self-reported data: This introduced potential biases such as social desirability, recall, and response shift.

- Absence of control groups and randomization: This limited causal inferences and increased the potential for confounding.

- Sampling methods and participant characteristics: Voluntary participation and convenience sampling were used frequently, leading to selection bias and self-selection bias. Many studies had small sample sizes, low response rates (often <30%), and unequal group distributions, often skewed towards female participants, limiting both statistical power and generalisability.

- Measurement tools and outcomes: Studies often utilised non-validated or inconsistently interpreted measurement tools, with limited use of objective or observed outcomes. Few studies assessed actual competence or skill transfer, focusing instead on perceived learning gains. The use of subjective evaluation methods, such as educator-assigned internship grades, introduced potential performance and rater bias.

- Contextual limitations: Several studies were impacted by contextual factors, particularly the COVID-19 pandemic, which disrupted placements, reduced participation, and led to increased use of virtual learning platforms.

In line with PRISMA guidance [21], these limitations indicated a high or unclear risk of bias across most domains, underscoring the need for more rigorous study designs.

No formal sensitivity analyses were conducted due to the narrative approach of the review. However, Tables 2 and 3 provide an indication of the degree of consensus and consistency of the findings in the studies included.

Table 2. Factors contributing to the theory–practice gap for biomedical scientists.

Table 3. Skillsets across the two themes.

Key factors contributing to the theory–practice gap, and therefore to impaired readiness to practice for biomedical scientists (RQ2.1), are shown in Table 2.

Table 2 shows that the lack of clinical exposure for students, which is in part due to the frequent separation of the academic and clinical phases of training for biomedical scientists, is an important contributing factor to the theory–practice gap, with nearly a quarter of the studies included addressing this theme. This may reflect an over-reliance on clinical training, alluded to by Garden [5], in that other factors in Table 2 are interconnected. For example, it could be argued that the root cause of limited authentic assessment, lack of professional role-modelling, and poor understanding of the role and professional standards pertaining to biomedical scientists is the limited clinical experience of university lecturers on biomedical science courses. This highlights a gap in the academic phase of the training of biomedical scientists, which must then be addressed on the clinical side. Moreover, these data highlight the importance of including clinical practitioners in aspects of course design, delivery, and assessment, as indicated by the IBMS degree assessment criteria [9]. However, collectively, the studies suggest that more research is needed on the specific levels of practitioner engagement needed to address these issues, as well as how best to incorporate this engagement. Additionally, the lack of standardisation of clinical training suggests that this aspect of training could benefit from the pedagogical input of the academic setting, highlighting the importance of effective collaboration between key stakeholders. More research is, therefore, also needed to investigate the frameworks that might best facilitate this collaboration.

Across the literature reviewed, ‘readiness to practice’ was conceptualised in varied but overlapping ways, reflecting differences in disciplinary focus, institutional context, and intended workforce outcomes. It was primarily defined in terms of professional competence, encompassing the measurable knowledge, skills, and behaviours aligned to standards such as ISO 15189 [38] or HCPC standards, and linked to the delivery of safe, accurate, and value-based patient care [15,16,39,40,41]. However, some authors framed the concept of readiness to practice more broadly, incorporating adaptability to evolving technologies and clinical needs and the ability to contribute effectively within multidisciplinary teams [16,40,42]. The assessment of readiness also varied. Some studies used validated tools to assess specific aspects, such as the Readiness for Interprofessional Learning Scale (RIPLS) [43] or the IPECC_SET 9 [44,45], to gauge confidence in collaborative attitudes, while others drew on stakeholder feedback from employers and educators [4,37,39,42] and reviews of reflective work products [40]. These differences illustrate that, while readiness to practice was central, its operationalisation ranged from narrowly defined technical competence to a multidimensional construct encompassing technical, cognitive, interpersonal, and adaptive capabilities, summarised in Table 3.

Table 3 expands on the scope and nature of the theory–practice gap to identify the skillsets most affected and which could, therefore, provide impactful targets for the future design of screen-based simulated learning experiences in this field (RQ2.2). In this regard, we intentionally used the term ‘skillsets’ as opposed to ‘competencies’, due to the specific definition of the latter term within biomedical science. As competencies in this field relate to the demonstration of proficiency in specific tasks under defined conditions, our focus on skillsets aimed to encompass a broader range of abilities relevant to professional practice.

Table 3 presents the total number of studies referencing each of the skillsets identified in the thematic analysis, broken down by core themes. Core themes were identified as follows: Theme 1—The effectiveness of pre-registration teaching and training in preparing biomedical scientists for practice; Theme 2—The potential impact of the use of screen-based simulated learning experiences in preparing biomedical scientists. Due to the unequal sizes of the groups, as shown in Table 2, comparing the percentages of studies referencing the different skillsets by theme provides a more meaningful and accurate representation of the data and illustrates how different stakeholders ranked their importance.

Hands-on practical experience, technical proficiency, and subject-specific knowledge were an important skillset, with 92% of simulation-based interventions primarily targeting this. Conversely, skills such as professional behaviours and knowledge of professional standards and regulations were targeted less frequently by non-simulation-based traditional methods (26% each) compared to simulation-based methods (58% each). This could indicate a trend towards expanding the scope of simulation-based interventions in the field of biomedical science to address aspects of the development of professional identity as an adjunct to traditional teaching methods. Knowledge of industry-specific standards and regulations and professional behaviours, such as patient-centred care, specifically differentiate biomedical scientists from bio-scientists more generally. The implication of this finding is noteworthy because it suggests a recognition that readiness to practice incorporates both technical proficiency and professional identity. However, this is based on a limited sample size of just 12 studies, which explicitly explore the use of screen-based simulated learning experiences in the context of biomedical science. This includes one review article that incorporated a broader range of simulation-based activities [19], demonstrating the need for further research in this area.

Additionally, of the studies that addressed the development of professional behaviours by traditional means, i.e., those that did not incorporate simulation-based learning, most skewed towards generic employability skills, with only 7 out of the 35 studies coded in this category specifically referencing patient-centred care [4,7,39,40,43,44]. Of these, most focused on the importance of interprofessional communication in improving patient outcomes, with only three studies exploring the broader remit of biomedical scientists regarding patient-centred care. Salazar et al. [39] examined the role of biomedical scientists in patient safety, which was also a theme used in the study by Dudley and Matheson [7]. They also highlight the role of biomedical scientists in advocating for patients, which was also discussed in the study by Bashir, Wilkins, and Pallett [40]. Hence, while generic professional behaviours may be addressed more frequently in the literature, the development of a professional identity for biomedical scientists as registered healthcare professionals, as reflected by statutory professional standards [11,25,26], is underrepresented in the literature.

4. Discussion

The main purpose of this study was to investigate the potential of screen-based simulated learning experiences to address the theory–practice gap in the teaching and training of biomedical scientists. To achieve this goal, it was necessary to explore the advantages and limitations of current pedagogical approaches to assess how these might be impacted by the integration of simulations. To this end, this paper sought to identify the factors contributing to the theory–practice gap and the skillsets most impacted by it, with the overarching goal of identifying potentially impactful targets for the future design of simulated learning experiences.

4.1. The Theory–Practice Gap: The Academic Perspective

4.1.1. Lack of Clinical Experience for Lecturers

In total, 29 studies were identified as addressing factors contributing to the theory–practice gap related to the academic setting (see Table 3). Four specifically referenced the limited clinical experience of academic staff [5,7,40,46]. For example, a study by Donkin and Gusset [46] of 31 academics in the field of medical laboratory science in Australia found that most academics teaching on biomedical science courses did not have any experience of clinical practice, in contrast to other healthcare professions, where clinical experience for academics is the norm. In the UK, for instance, the British Dietetic Association state that academics on pre-registration programmes should include one registered dietitian per twelve learners [47], while the Royal College of Occupational Therapists state that the programme leaders should be occupational therapists [48], and the Nursing and Midwifery Council state that most learning and academic input into programmes should be delivered by registered nurses [17]. However, the study by Donkin and Gusset [46] found that academic appointments to biomedical science courses were instead heavily impacted by the appointee’s research history, with limited consideration given to prior experience of clinical practice. While this study represents a relatively small sample of academics and is based in Australia, the studies by Garden [5] and Dudley and Matheson [7] echo its sentiments in the UK. This goes some way to explaining the low rate of consensus between British academics teaching on biomedical science courses, observed by Dudley and Matheson [7], with only 41% of statements meeting the 70% agreement rate for consensus.

4.1.2. Poor Understanding of Professional Standards

Notably, the study by Dudley and Matheson [7] found that the statements ‘Statutory registration with the HCPC ensures that biomedical scientists feel empowered to make autonomous decisions’ and ‘It is not within the biomedical scientist’s remit to question clinical decisions that put the patient at risk’ failed to reach consensus among the academic group.

This is concerning, because it contravenes statutory standards for the profession. The HCPC Standards of Proficiency for biomedical scientists [11] state that biomedical scientists must be able to ‘practice as an autonomous professional, exercising their own professional judgement’. Additionally, the HCPC Standards of Conduct, Performance and Ethics [20] state that registrants, such as biomedical scientists, must ‘promote and protect the interests of service users and carers’. However, when a representative of the HCPC was questioned as to the specific role of biomedical scientists in this aspect in Dudley and Matheson’s [7] study, their response suggested that it was ‘taken for granted’. This demonstrates how challenging it could be for academics with no clinical experience to contextualise HCPC standards in a meaningful way.

In a similar vein, in an action research project conducted by Millar et al. [49] exploring how communication and digital capabilities could be embedded into the undergraduate curriculum, fewer than half of the 15 academics surveyed ranked reflective writing as an important skill for biomedical science students to develop. Again, this contradicts the HCPC Standards of Proficiency for biomedical scientists [11], which state that, at the point of registration, biomedical scientists must be able to reflect and review practices.

While it is essential that accredited (pre-registration) degree programmes comply with professional standards for education and training [8], they must also prepare graduates to work in the wider context of standards relating to professional practice. However, the study by Bashir, Wilkins, and Pallett [40], which evaluated the integration of pathology service users and practitioners into workshops for undergraduates, revealed that securing practitioner participation was hindered by workforce shortages, presenting substantial logistical challenges.

Collectively, these studies suggest that, as well as lack of exposure to the clinical environment, a lack of exposure to practitioners could be a compounding factor, contributing to the theory–practice gap. Simulations that are co-designed by practitioners could have the potential to amplify the voices and impacts of limited laboratory-based trainers to integrate profession-specific learning outcomes in an accurate and authentic manner while facilitating reflective practice [12,13,50]. This could impact the teaching of biomedical scientists in multiple ways.

4.1.3. Lack of Professional Role-Modelling

Donkin and Gusset [46] suggest that the lack of professional role modelling available to students can negatively impact their confidence and the development of their professional identity. However, a survey-based evaluation by Miranda et al. [13] of a simulation-based training resource involving 30 laboratory medicine students in Australia showed how screen-based simulation has the potential to address some of these concerns. The authors noted how healthcare simulations offer the opportunity to rehearse technical thinking and communication skills in a guided ‘pseudo-clinical’ environment.

4.1.4. Lack of Authentic Assessment

Donkin and Gusset [46] also indicate that the lack of clinical experience among academics impairs their ability to contextualise theoretical concepts in real-world scenarios that are representative of clinical practice. Some educators have attempted to address this issue through the introduction of case-based learning [4,15,16]. In their study exploring the impact of incorporating case studies delivered by students into a redesigned workshop incorporating 48 students from four universities in the UK, Bashir et al. [4] noted that 100% of participants agreed that case studies helped them link theory to practice. However, as the students were all on placements in the clinical environment, it was unfeasible to differentiate the impact of the case studies from the overall influence of the clinical environment.

Similarly, a study by Feng, Wu, and Bi [15] examined 70 students in China on a haematology module in a non-randomised controlled trial (intervention group, n = 51; control group, n = 19). The intervention group was taught using the Bridge in, Outcomes, Pre-assessment, Participatory learning, Post-assessment, and Summary (BOPPPS) framework. This method was combined with problem-based learning focused on case studies. Students in the intervention group achieved statistically significantly higher summary evaluation scores than those in the control group (p < 0.05). Furthermore, the proportion of high-scoring students in the final evaluation significantly increased in the BOPPPS-PBL group. While this study suggests that case-based learning can support students to contextualise and situate their learning, it is challenging to isolate the impact of case-based learning from other elements of the intervention. It is also difficult to assess the authenticity of the assessment on which the outcome measures are based, which in turn makes it difficult to extrapolate how this learning would translate into the real world of clinical practice.

Li et al. [16] reported on a similar intervention involving a randomised controlled trial of 122 fourth-year clinical laboratory medicine students, also located in China, who were taught using a problem-based learning approach incorporating simulated case scenarios based on real-life clinical laboratory events developed by a teaching committee. This contrasts with the study by Feng, Wu, and Bi [15], which did not use screen-based simulations. The study by Feng, Wu and Bi did not comment on the clinical experience of the teaching committee. The control group consisted of 56 students, while the experimental group consisted of 66 students. Notably, in this study, students underwent both a theory assessment in the academic setting and a clinical performance assessment during their clinical placement. Students in the PBL group achieved significantly higher mean scores on theory tests compared to the control group (p < 0.01). This suggests that PBL fostered a deeper understanding of theoretical knowledge related to the curriculum. The PBL group also achieved significantly better clinical performance scores in their assessments conducted by laboratory supervisors during their placement (p < 0.01) compared to the control group. This suggests that the use of simulated case studies paired with problem-based learning was more effective in developing the practical skills and working competencies needed for real clinical laboratory work.

Additionally, both Li et al. [16] and Feng, Wu, and Bi [15] identified a lack of strong assessment methods in biomedical science education, which are needed to accurately reflect the wide range of skills required of a biomedical scientist. This suggests that a lack of clinical experience amongst academics [46] may make it difficult for academics to design realistic, valid, and authentic assessments. As a result, the assessments may not fully represent the experiences and requirements of practitioners. Specifically, Li et al. [16] noted that the lack of robust assessment methods makes it difficult to identify and address barriers to the effective development of professional skills, while Feng, Wu, and Bi [15] argued that a lack of sufficient heuristic instruction with assessment methods focused on theoretical and experimental results made it challenging to evaluate learning outcomes such as true problem solving and clinical reasoning. They concluded that traditional assessment methods no longer adequately address the training requirements of medical laboratory experts. The scoping review by França et al. [51] of 35 papers examining educational interventions in the biomedical science curriculum during the COVID-19 pandemic drew similar conclusions, referring to the ‘technicist culture’ of education. They explained that this approach prioritises the acquisition of knowledge, often at the expense of the skills needed to adapt to the complexities of a clinical laboratory.

Again, these findings represent an excellent opportunity to assess the potential of simulation-based learning to address these issues. Incorporating co-designed case studies and problem-based learning into simulated learning experiences could support the heuristic instruction advocated by Feng, Wu, and Bi [15]. Attempts to achieve this were seen in the study by Froland et al. [36], which highlighted the beneficial potential of using simulation to allow students to investigate and resolve an analyser flag through troubleshooting procedures, for example. Analyser flags are generated by automated equipment to alert biomedical scientists to abnormal test results, which may require further investigation or confirmation.

A similar approach was demonstrated by Han et al. [45], who detailed the development of a virtual learning platform using the Unity3D game engine. The paper presents a preliminary virtual experiment to demonstrate the platform’s capabilities. The simulation included laboratory procedures such as switching on instruments, navigating menus, setting reagents, handling samples, and observing results. Students could assume the role of a biomedical scientist by interacting with virtual instruments using keyboards and mice. However, the study did not describe any user evaluation procedures to assess the platform’s effectiveness or usability. A prospective cohort study by Sun et al. [14] of 48 medical laboratory science students, evaluating the integration of virtual laboratory experiments, offered more objective evidence to support its claims. The experimental group achieved an average of 82% and 92% on their theory and practical exams, respectively, compared to an average of 76% and 82% for the control group. Additionally, approximately 98% of students agreed that virtual laboratory experiments improved their understanding of concepts. However, because the simulated learning experiences in this study were introduced as a suite of changes, it remains challenging to assess the impact of the simulated learning experiences in isolation.

Simulation-based learning with integrated learning analytics also has the potential to support more robust and authentic assessments of a more holistic skillset. The study by Verkuyl et al. [12] found that participants of simulation-based learning identified improvements in clinical judgement, prioritisation, delegation, problem solving, communication, and teamwork. These sentiments were echoed in the study by Froland et al. [36] and the review by Webb, McGahee, and Brown [19].

In a similar vein, the study by Coskun et al. [33] provides an example of how simulated learning experiences can be integrated into the curriculum in a way that supports the development of a more holistic skillset. In a curriculum development project incorporating 51 students, participants used a virtual dissection table to develop digital learning material for their peers and evaluated its effectiveness, developing skills in collaboration, communication, reflective practice, metacognition, and spatial reasoning. Participants achieved a significantly higher mean percentage (approximately 92.9% on average) on exam questions related to gross skeletal structures compared to non-participants (approximately 85.5% on average) (p < 0.05). However, the study also noted a learning curve related to the use of technology, with varied levels of comfort regarding its use, as well as excessive loading times and poor response times.

While these studies provide evidence that the integration of simulated learning experiences may improve exam performance, there is little evidence of how this translates to clinical practice, and little detail is provided on the design and nature of the simulations themselves. However, the studies do lay the groundwork to suggest that simulation-based learning can be designed to observe and evaluate far more than technical proficiency. The data generated as a result, even if analysed to provide structured observation and qualitative feedback rather than automated analytics, could form the foundation of more robust and authentic assessments. Ideally, the design of such experiences and their associated assessments would involve input from both academic and clinical settings.

4.2. The Theory–Practice Gap: The Clinical Perspective

4.2.1. Lack of Standardisation in Clinical Training

Only six studies linked the theory–practice gap to challenges related to the clinical setting [10,12,13,33,35,52]. However, it is likely that this is reflective of the fact that 35 out of the 45 studies included were associated with the academic setting, highlighting the underrepresentation of practitioners in the literature. Of these studies, however, most emphasised the potential for simulations to replicate aspects of the clinical environment, while addressing some of its limitations. The unpredictable nature of the clinical environment introduces variation into the educational experience. In contrast, the controlled environment of simulated learning provides a standardised experience that can increase exposure to more diverse clinical scenarios, including rare but clinically important cases and technical situations that may occur less frequently in practice, with expanded opportunities for deliberate practice [12,13].

Moreover, studies such as the one by Tsai et al. [10] suggest that simulation-based learning also allows students to learn at their own pace and according to their own style, facilitating individualised learning. In their study, students were able to engage with simulations in different modes, such as demonstration mode or operation mode, catering to different ways of learning. The study by Wahab and Dubrowski [35] had similar implications. In the virtual platform used in this study, students could access computer-based video training to support self-directed learning, and the system incorporated peer-to-peer and expert-based feedback, offering personalised guidance. These studies demonstrate the potential for simulation-based learning to alleviate some of the time constraints associated with direct supervision in clinical environments, while still supporting individualised learning. However, this potential has yet to be confirmed empirically.

4.2.2. Lack of Clinical Exposure for Students

Access to pathological specimens is also a more challenging prospect for academic settings due to the constraints of the Human Tissue Act [53] and concerns regarding biosafety and security. Concerns regarding biosafety and security are compellingly illustrated by the cross-sectional survey study of 142 medical laboratory science students and 43 clinical laboratory personnel in Saudi Arabia conducted by Abu-Siniyeh and Al-Shehri [52]. The results show that only 57% of students had a good-to-moderate understanding of biohazard signage, and only 54% knew how to deal with a biological spill. Only 23% knew the location of emergency equipment, with only 12% knowing how to use it. In contrast, 100% of laboratory workers knew the location of emergency equipment, 93% knew how to use it, and 98% knew how to handle a biological spill. The authors identified barriers—such as minimal contact with health and safety equipment, and a focus on laboratory practical sessions, alongside a lack of active involvement in health and safety procedures, resulting in a general lack of awareness—as key factors contributing to this theory–practice gap. These results thus highlight the need for increased awareness and training for the implementation of effective safety measures in clinical laboratories.

The intervention study by Tsai et al. [10] demonstrates how simulations can be used to mitigate these risks. The study aimed to assess the effectiveness of novel virtual reality (VR) software designed for simulated and interactive high-level virological testing, based on an automated platform widely adopted in clinical practice. The researchers noted that students on placement are often not permitted to carry out complex virology testing due to its inherent biosafety risks. The evaluation used the Kirkpatrick model of training evaluation, which consists of four levels: learner reactions at level 1, learning attainment at level 2, behavioural change at level 3, and impact on organisational outcomes at level 4. In the study, which incorporated 31 medical laboratory science students, level 1 was operationalised as trainee satisfaction scores, level 2 as written test scores, and level 3 as self-reported survey data investigating the extent to which the students applied the knowledge and skills before and after the intervention. The validity of this experimental design in evaluating level 3 of the Kirkpatrick model is questionable, firstly because it relies on self-reported data rather than an objective assessment of student behaviour, and secondly because level 3 aims to measure the transfer of learning to on-the-job behaviour, but the students were assessed in an academic setting. This is important, because the 3D simulation used to assess the students’ behaviour for level 3 of the framework may not have accurately reflected the nuances and pressures of the clinical setting, such as dealing with real patient samples, time constraints, and the consequences associated with error. However, the evidence for level 2 is more convincing. The results show that the average post-training scores of participants exposed to 2D and 3D VR training were significantly higher than those who received only traditional demonstration teaching (p < 0.001).

Other attempts to address issues regarding sample handling using simulations include the technical report by Wahab and Dubrowski [35]. The report detailed the development and user-based evaluation of a virtual simulation-based microtomy training module, drawing on a small sample of nine students enrolled on a medical laboratory sciences programme. However, the scope of the evaluation was limited, primarily focusing on the usability and perceived educational value based on module feedback, rather than the objective assessment of skill development. Additionally, the authors noted that the lack of tactile feedback and demonstration of a real microtome in use were barriers to skill development, and that the simulation was unable to replicate the full range of complexity associated with using a real microtome. These sentiments were echoed by Coskun et al. [33], who also noted the lack of haptic feedback in their assessment of virtual dissection compared to real-world dissection.

Miranda et al. [13] conducted a slightly larger study incorporating 30 laboratory medicine students, evaluating the effectiveness of a virtual microscopy imager to simulate rapid on-site evaluation of fine-needle aspiration cytology samples. They found that 86% of participants rated the resource as very or extremely useful. In total, 90% of participants viewed 6–30 images during the study period and praised the virtual imager for its ability to support flexible learning as a resource available on and off campus and accessible via a range of devices, including laptops, tablets, and smartphones. However, there were difficulties around focusing the cytology images, and a minority of students commented on the speed of loading the images. The study also noted that more experienced students expressed a preference for light microscopy over the virtual imager. Interestingly, these more advanced students benefited from an unsupervised dedicated microscopy suite, and the authors posit that the community of practice this facilitated contributed to their preference for light microscopy—although students also felt that light microscopy was a valuable skill that could not be replaced by the virtual imager. However, the study authors highlighted how this intervention mirrors the trend in switching from light microscopy to digital microscopy seen in practice, enhancing the familiarity of the students with the digital analysis of cellular smears and tissue samples.

4.3. The Scope and Nature of the Theory–Practice Gap

The skills most targeted by interventions across the literature were identified and ranked according to frequency in order to give an indication of the scope and nature of the theory–practice gap (see Table 3). The three most frequently targeted skill domains in both the academic and clinical settings were the following:

- Hands-on practical experience, technical proficiency, and subject-specific knowledge;

- Critical thinking and problem-solving, including data analysis and interpretation;

- Self-directed learning, reflective practice, and continued professional development.

4.3.1. Hands-On Practical Skills, Technical Proficiency, and Subject-Specific Knowledge

Given the previously discussed challenges of exposing students to patient samples, specialised equipment, and automation, it is perhaps unsurprising that hands-on experience was the skillset most frequently identified as lacking in graduates and newly qualified biomedical scientists, referenced in 25 out of the 45 studies included; to some degree, this will be unavoidable [29,30,34,54,55].

In their retrospective study examining the impact of face-to-face teaching methods on academic performance, Albalushi et al. [54] identified a fall from an average of 80.4% to 75.7% in practical examination scores for the 88 biomedical science students in their cohort when practical teaching was suspended because of the pandemic. They cited challenges in developing necessary motor skills and interpersonal skills as key factors contributing to this shortfall, demonstrating the importance of hands-on experience.

However, there have been attempts to address this limitation of academic settings. In their evaluation of a novel educational intervention, Carriere et al. [29] implemented a student-led in vivo drug trial using an aquatic worm as a model organism. The intervention was designed to develop key laboratory skills such as dealing with dilutions, data recording, in vivo techniques, and experimental design. However, the authors noted digital constraints in the development of these skills, with limited access to computers. Students noted ‘real life’ insights into in vivo research, which they felt enabled the development of hands-on skills that would be applicable to their future careers. More objectively, lecturers also noted that the students were able to generate robust and reproducible data and actively reflect on their practice, considering the importance of experimental design. However, these findings represent a small sample size of 54 students and a low survey response rate of 15% (n = 8).

The study by Zhang et al. [31] also used a model organism to teach hands-on laboratory skills in an experimental study based in China. The authors modified a bacterium’s genetic makeup to disable its disease-causing capacity, making it safe for students to use in a teaching laboratory. This allowed students to practise real-world techniques with an organism that closely resembled those encountered in clinical settings, providing an authentic learning experience without the associated risk of working with potentially harmful pathogens. In a mock examination to assess practical skills such as bacterial culture, zoning and streak-plating, colony selection, and interpreting results, students in the experimental group achieved an average of approximately 97% compared to the control group’s average score of approximately 90%, noting that the control group used simulation-based learning only. However, the authors provide no explicit information on the sample size, stating only the inclusion of three cohorts.

In an equally novel approach to teaching hands-on skills, Diaz and Woolley [34] investigated the impact of body painting on learning anatomy. Their mixed-methods study included 311 students from healthcare programmes, including biomedical science, but did not explicitly state what proportion of the cohort were biomedical science students. The body painting sessions were designed as an alternative to the more traditional use of cadaver dissection which might be encountered in practice. In total, 72% of students agreed that the sessions improved their understanding of anatomy, and qualitative data from focus groups suggested that the activity promoted more meaningful engagement with lecturers and peers. However, questions were raised as to the cultural sensitivity of the experience, with some participants raising concerns about body image and self-consciousness. Notably, the authors did not collect data on how the intervention impacted objective measures of academic or professional performance.

In contrast, the experimental study by Younes et al. [56], involving a cohort including 108 medical laboratory science students, noted a significantly higher exam performance for topics using a live in front of students (LIST) approach to teaching practical anatomy skills. Additionally, 69% of students agreed that this approach to demonstrating practical anatomy enhanced their skills.

In summary, the literature offers some innovative non-simulation-based approaches to provide students with hands-on laboratory experience in academic settings. However, small sample sizes and limited details about study methods hinder the reproducibility of these studies. Additionally, the lack of objective assessments of academic or professional achievement restricts the strength of evidence for the effectiveness of these interventions. It is also important to note that there is little overlap between the skills developed in academic interventions and the discipline-specific competencies seen most frequently in clinical practice.

In contrast, several simulation-based learning experiences for biomedical scientists targeted technical proficiency across multiple competencies: transfusion, microbiology, histology, chemistry, haematology, laboratory information systems, molecular diagnostics, urinalysis [19], cytological examination [57], phlebotomy [36], coagulation testing [58], and virology testing [10]. In the qualitative study by Froland et al. [36], evaluating a gamified application designed for practising phlebotomy, the students noted a focus on real-world accuracy, which contributed to developing their familiarity with the practical aspects of the skill. Some commented on how this resource supported them to apply theory to practice, stating that it would have been a valuable asset when they first learned the procedure. They also commented that the tool allowed them to learn from their errors. However, the study’s authors noted that the initial simplicity of the app might not cater to the needs of more experienced students, and that the app did not cover the full spectrum of challenges that might be encountered in a real-world setting. They concluded that, at this stage of development, web and mobile applications might lack the level of realism needed for optimal skills transfer.

The studies by Miranda et al. [13], Froland et al. [59], and Tsai et al. [10] suggest that, in the domain of technical proficiency, simulated learning experiences may support scaffolding that enhances learner confidence, helping to bridge the application of theory to practice. However, they also highlight issues of fidelity and over-simplification as common complaints regarding simulation-based learning. They provide little robust evidence of their actual impact on practical skill acquisition, with most outcome measures reliant on self-reported student perception rather than objective competency assessments of technical proficiency. This is reflected in the study by Zhang et al. [31], who noted that students from the control group, who used only simulated learning experiences, had a statistically poorer exam performance than those who completed face-to-face teaching using a model organism in the laboratory. Similarly, in the study by Duzan et al. [55], students whose clinical placement was replaced solely by simulated learning felt unprepared for professional registration or clinical practice. The cumulative findings from these studies indicate that simulation-based learning has the potential to serve as a valuable adjunct to clinical training, offering synergistic benefits when integrated into the overall educational framework. However, while the introduction of simulation-based learning could help to optimise the duration of clinical training and so build capacity, it cannot entirely supplant the indispensable experience and hands-on practice gained through actual clinical training.

It must also be acknowledged that the use of simulated learning experiences in this context is still in its infancy, as reflected by studies such as Han et al. [58], who report mainly on the development of resources, rather than user evaluations. As noted in the study by Froland et al. [59], with further development, simulated learning experiences may help to address challenges in the education of biomedical scientists, such as limited resources, time constraints both on campus and on placement, and difficulties around replicating authentic work environments. However, current evidence for the effectiveness of simulations in developing technical skills is weak. Focusing on other skill areas might be more productive and could improve professional development outcomes for biomedical scientists.

4.3.2. Critical Thinking and Problem-Solving

In total, 24 out of 45 studies focused on critical thinking and problem-solving. Critical thinking and problem-solving skills are consistently identified as being deficient in biomedical science graduates, as indicated by a review of 112 sources by Pearse and Scott [6] examining the historical challenges around clinical laboratory education. The scoping review of 32 papers conducted by França et al. [51] concurs with this finding. The review, focusing on educational interventions for healthcare professionals, including laboratory personnel, identified the need for ongoing training to enhance critical reasoning.

Academic attempts to address this shortfall include the previously discussed studies by Feng, Wu, and Bi [15] and Li et al. [16]. However, in an alternative approach to developing critical analysis, a mixed-methods research project by Oates and Johnson [60] investigated the impact of students evaluating GenAI (generative artificial intelligence)-produced essays. A small sample of ten master’s students was tasked with evaluating the AI output, using the peer-reviewed literature to support their assessment. The cohort achieved marks for critical evaluation that were, on average, 9% higher than the overall course average, a statistically significant difference (p = 0.04).

These studies suggest that academia may have the tools to address deficiencies in critical thinking and problem-solving, but it will require a shift towards more student-centred approaches with more authentic and holistic assessments. Li et al. [16] noted that such student-centred approaches typically ask more of educators, requiring an investment in time and energy for lesson preparation, as well as rich practical experience. Previously discussed studies have alluded to how these challenges could potentially be mitigated by simulation-based learning [10,12,14,36,58,61]. Collaborating with practitioners to co-design simulations could amplify their impact by producing authentic scenarios and assessments that reflect professional standards and the real-world autonomy expected in practice. Simulations could present students with complex diagnostic scenarios in which they would have to analyse data, interpret results, make decisions, and evaluate the outcome of those decisions. They could practise this critical thinking repeatedly in a safe environment. Unlike the GenAI essay evaluation, which was a single assignment, simulations could provide ongoing practice.

4.3.3. Self-Directed Learning, Reflective Practice, and Continued Professional Development

In total, 23 out of the 45 studies focused on self-directed learning, reflective practice, and continued professional development. As noted by Millar et al. [37], reflective writing is an essential standard of proficiency required of all biomedical scientists. It is an essential element of continued professional development, which is mandated by the HCPC [25] for its registrants. Millar et al.’s [37] study suggests that embedding reflective practice earlier in the curriculum could assist students in becoming more independent learners by supporting them to develop more personalised approaches. These sentiments are echoed in the studies by Bashir, Wilkins, and Pallett [40] and Robertson, Hughes, and Rhind [62]. In Robertson, Hughes, and Rhind’s [62] mixed-methods study encompassing 159 biomedical science students, the authors adapted Miller’s Pyramid, a model for assessing levels of clinical competence [63], to support students to develop assessment literacy. This modified framework focused on enhancing the students’ understanding of the skills being assessed and how they related to professional roles in biomedical and biological sciences. The authors noted how this framework supported the application of theory, helping students to move beyond isolated subject-specific knowledge. Participants indicated enhanced confidence in independent learning and exercising judgement. The students also suggested that the intervention helped them to be more inquisitive and take responsibility for their own learning. The pyramid itself depicts how the acquisition and development of skills assessed advanced the students from undergraduates to practitioner graduates. However, the study does not offer any objective evidence that graduates had fully achieved a practitioner level of mastery.

It is worth noting that true mastery is usually based on repetition, in contrast to many traditional higher-education assessments. As discussed in the review by Winget and Persky [64], mastery-based learning focuses on iterative rounds of formative assessments and feedback, which continue until the student can demonstrate a pre-defined level of mastery. This mirrors the competency-based approach seen in clinical settings. The introduction of this approach formed part of a revised assessment strategy in the study by Feng, Wu, and Bi [15], which demonstrated improved assessment outcomes in conjunction with the implementation of problem-based learning. However, with an intervention group sample size of only 19 students across two-year cohorts, questions arise as to the scalability of this approach.

In contrast, the study by Sun et al. [14] noted how the flexibility of virtual simulation experiments promoted self-directed learning, while their repeatability fostered a deeper understanding of concepts and allowed students to react faster when they were working independently offline. Similarly, the study by Wahab and Dubrowski [35] describes how virtual simulation on gamified networks allowed students to demonstrate initiative and responsibility in their learning. Additionally, increased opportunities for deliberate practice and reflection are noted as key strengths of simulation-based learning compared to traditional teaching methods in several other studies [19]. However, in the study by Sun et al. [14], students also identified challenges around transitioning to the more active learning style associated with simulation-based learning, including significant increases in the time and energy required outside of the classroom.

The study by Miranda et al. [57] also emphasised the importance of debriefing (a planned session aimed at improving future performance) in simulation-based learning to encourage reflection. This suggests that how simulations are integrated into the curriculum is as important as the simulations themselves. However, several studies highlight the need for further research in this area of simulation-based education [12,19,36], indicating a gap in our understanding regarding the most effective methods for incorporating screen-based simulations into the curriculum.

4.3.4. Interprofessional Collaboration and Communication

In total, 19 out of the 45 studies addressed interprofessional collaboration and communication. The Centre for the Advancement of Interprofessional Education (CAIPE) defines interprofessional education as occasions when members or students of two or more professions learn with, from, and about each other to improve collaboration and the quality of care and services [65]. Studies suggest that interprofessional education prepares students to work effectively in interprofessional teams and promotes collaborative professional relationships [37,44,45,66]. This is reflected in the essential competency of effective communication addressed in a range of professional standards, including the HCPC Standards of Proficiency for biomedical scientists [11].

The survey-based quality-improvement project by Salazar et al. [39], examining the developing role of the Doctorate in clinical laboratory sciences, also emphasised the need to enhance communication between the laboratory and the broader healthcare setting. The programme focussed on collaborative skills in healthcare, leadership in diagnostic management teams, and development of diagnostic algorithms. Of the fifteen laboratory personnel who enrolled, nine accepted a new job position within 6 months of graduation, and a further five were promoted. In the accompanying survey of 55 laboratory managers, 82% agreed that the graduates of the programme were valuable as a laboratory resource and provided helpful consultation to service users, benefiting clinical performance, which underlines the demand for these skills.

In the study by Bashir et al. [41] incorporating 93 biomedical science students attending an interprofessional education-based workshop, 82% of students agreed that they needed to know more about the roles of other healthcare professionals, and 88% of students agreed that working with students from other healthcare programmes improved their awareness of other healthcare roles and responsibilities. Most importantly, 96% of students agreed that integrated working between different health professionals in practice benefits patients. However, this study did not use a validated instrument to objectively assess the impact of the intervention.

The study by Miyata et al. [43], incorporating 75 medical technology students, took a similar approach, but did incorporate the use of a validated instrument. The students, who attended a two-day case-based interprofessional digital workshop, were evaluated using the Readiness for Interprofessional Learning Scale (RIPLS) before and after the intervention. For the medical technology students, the mean difference in total RIPLS score was 2.19, with a medium effect size (r = 0.41). However, the authors identified a lack of effective digital learning and group-work tools for biophysical diagnoses.