Shedding Light on the Dark Web: Authorship Attribution in Radical Forums

Abstract

1. Introduction

2. Background and Related Work

2.1. Machine Learning Methods

2.2. Deep Learning Methods

2.3. Tor and the Dark Web

3. Dataset

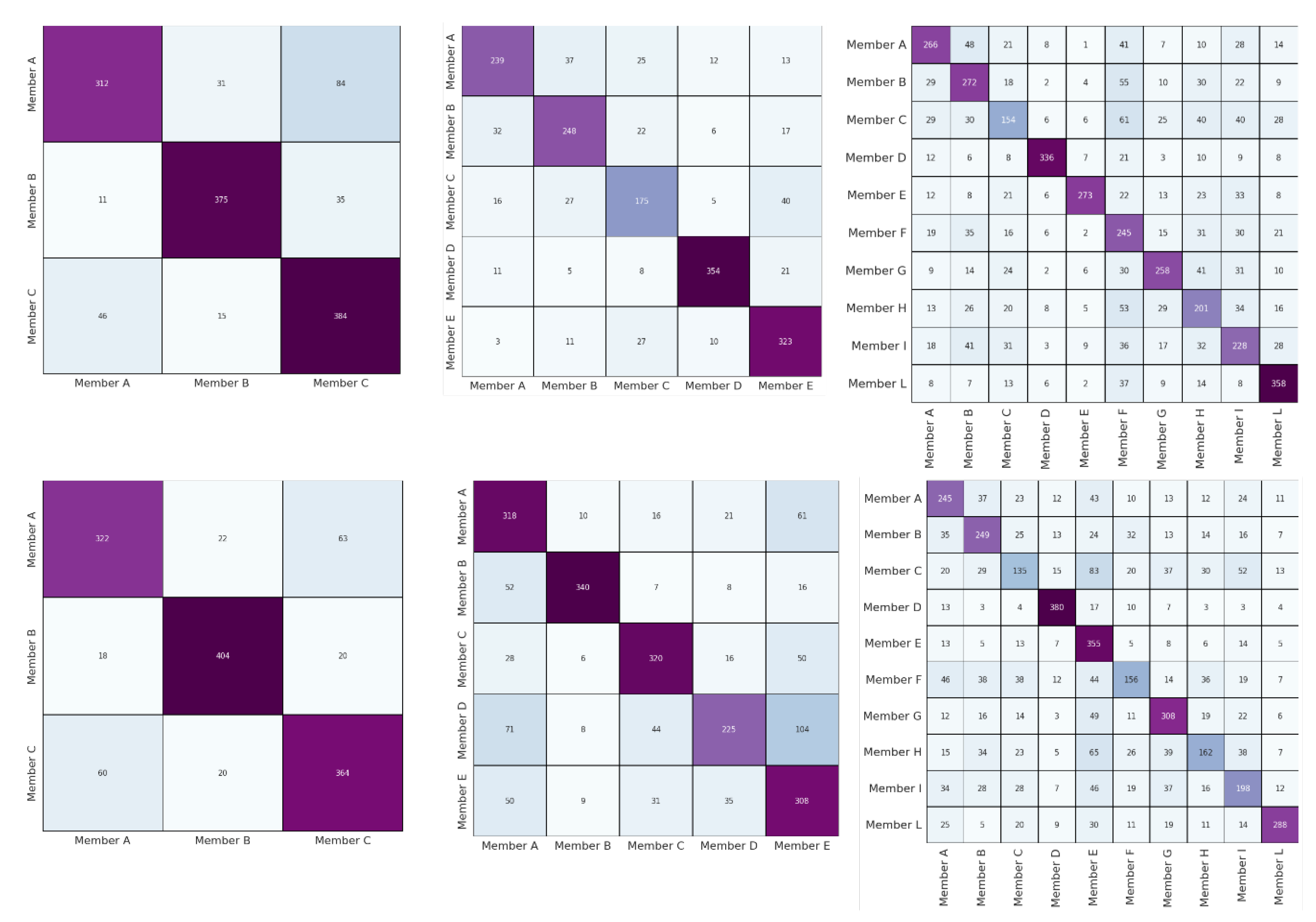

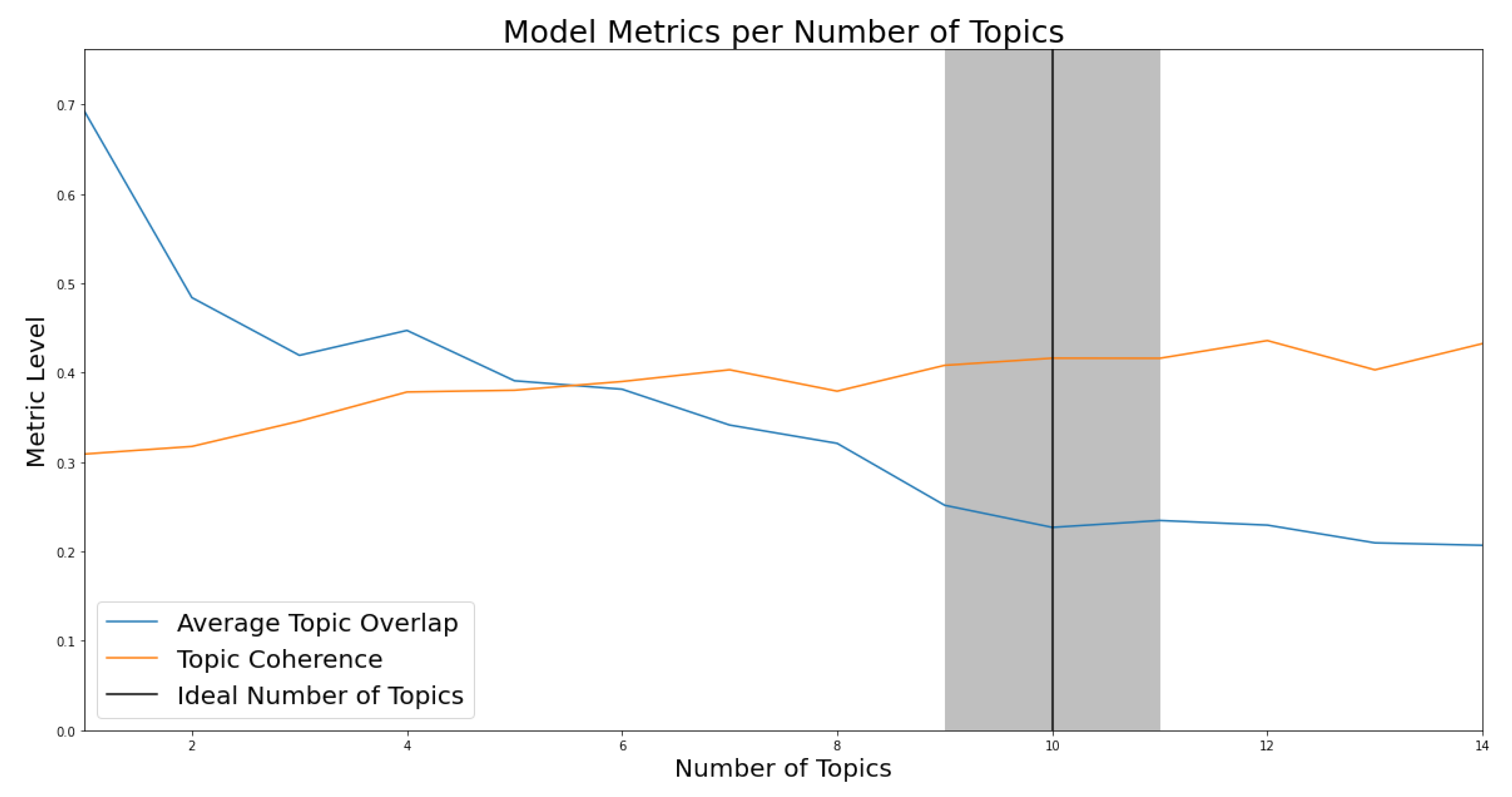

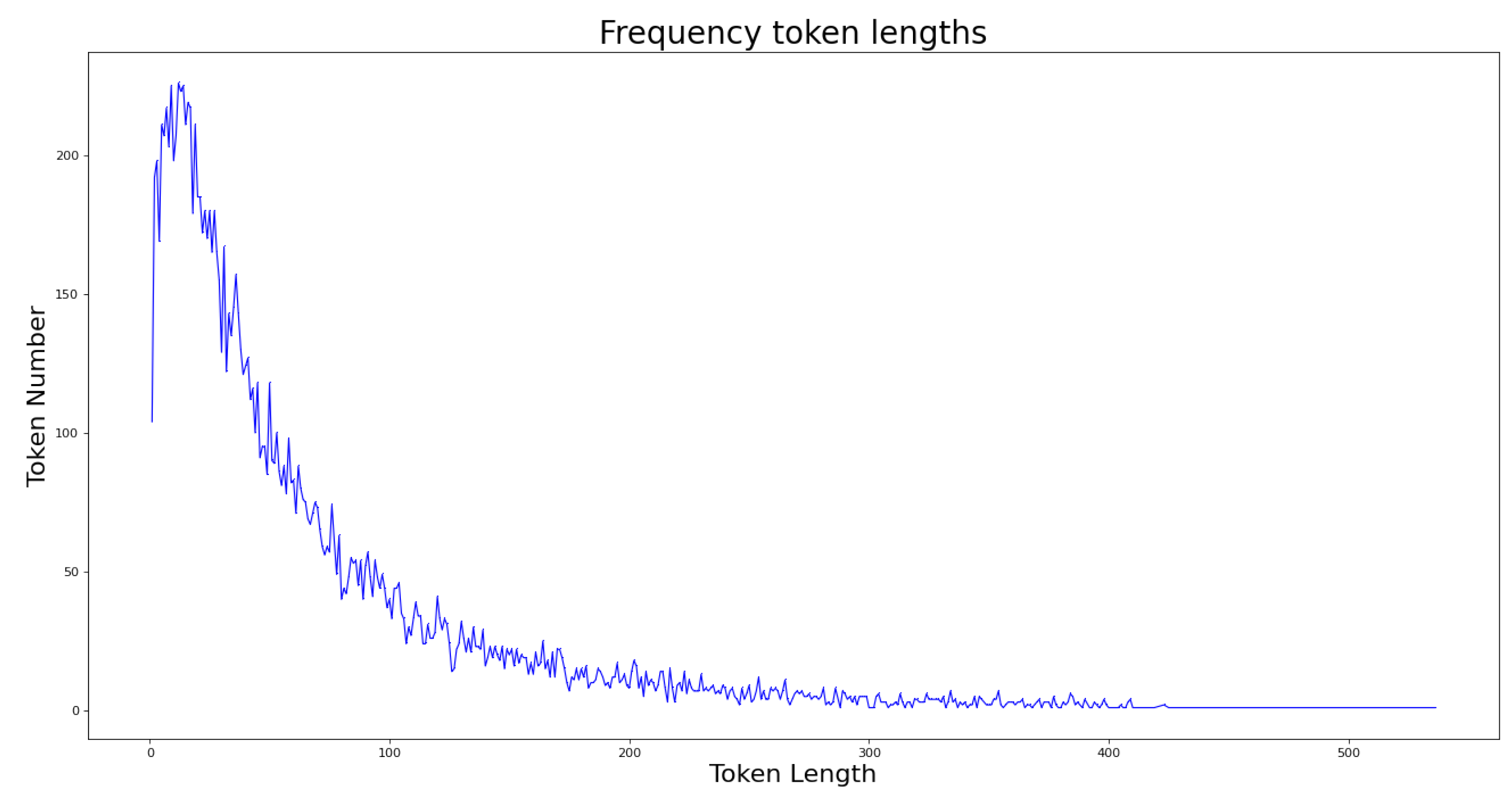

3.1. Topic Analysis

3.2. Dataset Building

4. Methods

4.1. Style- and Lexical-Based Features

- Plain pre-processing was done with NLTK: the text was tokenized, stopwords were removed, and surface cleaning was applied. BoW and TF-IDF representations were then built from the pre-processed input. The models based on these two inputs are referred to as plain BoW and TF-IDF.

- POS-based pre-processing relied on part-of-speech (POS) tags, labels assigned to each token (word) to indicate its grammatical category and other information, such as tense and number (plural/singular). POS tags were extracted using the method proposed by Toutanova et al. [54]. To distinguish these inputs from the plain ones, the BoW and TF-IDF models built on POS-tagged input are called bag of part-of-speech tags (BoPOS) and TF-IDFPOS.
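The two pre-processing pipelines above can be sketched in a few lines of Python. This is a minimal illustration and not the authors' implementation: it uses only the standard library, whereas the paper relies on NLTK and the tagger of [54]. The stopword set `STOPWORDS`, the lookup table `TOY_TAGS`, the example posts, and all function names are hypothetical. The TF-IDF weighting (relative term frequency times log(N/df)) is one common variant, chosen here as an assumption, and so is the choice to tag every token without removing stopwords in the POS pipeline.

```python
import math
import re
from collections import Counter

# Hypothetical stand-ins: the paper uses NLTK resources and the tagger of [54].
STOPWORDS = {"the", "a", "is", "of", "to", "and"}
TOY_TAGS = {"the": "DT", "a": "DT", "is": "VBZ", "forum": "NN", "post": "NN",
            "user": "NN", "writes": "VBZ", "long": "JJ", "short": "JJ"}


def tokenize(text):
    """Lowercase the text and keep runs of letters/apostrophes (surface cleaning)."""
    return re.findall(r"[a-z']+", text.lower())


def lexical_tokens(text):
    """Plain pipeline: tokenize, then drop stopwords."""
    return [t for t in tokenize(text) if t not in STOPWORDS]


def pos_tokens(text):
    """POS pipeline: map every token to its tag (unknown words default to 'NN')."""
    return [TOY_TAGS.get(t, "NN") for t in tokenize(text)]


def bag_of_words(docs):
    """Return one Counter of raw counts per tokenized document."""
    return [Counter(d) for d in docs]


def tf_idf(docs):
    """Return one {term: weight} dict per document, with weight = tf * log(N / df)."""
    n = len(docs)
    df = Counter(term for d in docs for term in set(d))
    weighted = []
    for d in docs:
        counts = Counter(d)
        weighted.append({t: (c / len(d)) * math.log(n / df[t])
                         for t, c in counts.items()})
    return weighted


posts = ["The user writes a long post",
         "The user writes a short post",
         "A forum is a forum"]

bow = bag_of_words([lexical_tokens(p) for p in posts])    # plain BoW
tfidf = tf_idf([lexical_tokens(p) for p in posts])        # plain TF-IDF
bopos = bag_of_words([pos_tokens(p) for p in posts])      # BoPOS
tfidf_pos = tf_idf([pos_tokens(p) for p in posts])        # TF-IDFPOS
```

In this toy corpus the tag `DT` occurs in every post, so its TF-IDFPOS weight is zero, while the plain features keep the topical words (`forum`, `post`) that the POS-based features deliberately discard.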

4.2. KERMIT

4.3. Transformer Models

- Multilingual BERT [58], which is similar to BERT but is trained on Wikipedia dumps covering 100 languages.

- XLNet [17], which, unlike the previous models, is based on a generalized autoregressive pre-training technique that learns bidirectional contexts by maximizing the expected likelihood over all permutations of the factorization order. XLNet is trained on Wikipedia, BookCorpus [57], Giga5 [59], ClueWeb [60], and Common Crawl [61].

- ERNIE [18], a language representation model that addresses a limitation of BERT by using an external knowledge graph for named entities. ERNIE is pre-trained on the Wikipedia corpus and the Wikidata knowledge base.

- ELECTRA [19], which "corrupts" the input by replacing tokens with alternatives that plausibly fit the same position. The model is trained to predict, for each token, whether it is a corrupted input or not. To make its performance comparable to BERT, its authors trained it on the same dataset that BERT was trained on.

- DistilBERT [62], a smaller, general-purpose language representation model derived from BERT. Knowledge distillation is applied during the pre-training phase, reducing the size of BERT by 40% while retaining 97% of its language comprehension capabilities and running 60% faster.
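Of the objectives listed above, the token-corruption task of ELECTRA is the easiest to illustrate in isolation. The sketch below is a toy under stated assumptions, not the procedure of [19]: the real model samples replacements from a small trained generator network, whereas here the "generator" is a uniform random draw from a hypothetical vocabulary. The function name `corrupt_tokens`, the example sentence, and the corruption rate are illustrative only.

```python
import random


def corrupt_tokens(tokens, vocabulary, rate, rng):
    """Replace some tokens and return (corrupted_tokens, labels).

    labels[i] is 1 if position i now holds a token different from the
    original and 0 otherwise; this is the target a discriminator predicts.
    """
    corrupted, labels = [], []
    for token in tokens:
        # Toy generator (assumption): a uniform draw from the vocabulary.
        candidate = rng.choice(vocabulary) if rng.random() < rate else token
        corrupted.append(candidate)
        # A sampled token identical to the original counts as "original".
        labels.append(int(candidate != token))
    return corrupted, labels


rng = random.Random(0)  # fixed seed so the run is repeatable
sentence = ["the", "user", "wrote", "a", "post"]
vocabulary = ["forum", "thread", "reply", "read", "member"]
corrupted, labels = corrupt_tokens(sentence, vocabulary, rate=0.3, rng=rng)
print(list(zip(corrupted, labels)))
```

Because every position receives a label, the training signal covers the whole input rather than only a masked subset, which is the contrast with BERT-style masking that the description above points to.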

4.4. Experimental Setup

4.5. Results

4.6. Discussion

4.7. Future Works

4.8. Limitations

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

References

1. Pillay, S.R.; Solorio, T. Authorship attribution of web forum posts. In Proceedings of the 2010 eCrime Researchers Summit, Dallas, TX, USA, 18–20 October 2010; pp. 1–7.

2. Ranaldi, L.; Zanzotto, F.M. Hiding Your Face Is Not Enough: User identity linkage with image recognition. Soc. Netw. Anal. Min. 2020, 10, 56.

3. Wagemakers, J. The Concept of Bay’a in the Islamic State’s Ideology. Perspect. Terror. 2015, 9, 98–106.

4. Johansson, F.; Kaati, L.; Shrestha, A. Detecting Multiple Aliases in Social Media. In Proceedings of the 2013 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM ’13), Niagara, ON, Canada, 25–29 August 2013; Association for Computing Machinery: New York, NY, USA, 2013; pp. 1004–1011.

5. Reilly, B.C. Doing More with More: The Efficacy of Big Data in the Intelligence Community. Am. Intell. J. 2015, 32, 18–24.

6. Europol. Operation Onymous. Available online: https://www.europol.europa.eu/operations-services-and-innovation/operations/operation-onymous (accessed on 14 May 2022).

7. Sistema di Informazione per la Sicurezza della Repubblica. Relazione al Parlamento 2021. Available online: https://www.sicurezzanazionale.gov.it/sisr.nsf/relazione-annuale/relazione-al-parlamento-2021.html (accessed on 14 May 2022).

8. Park, A.J.; Beck, B.; Fletche, D.; Lam, P.; Tsang, H.H. Temporal analysis of radical dark web forum users. In Proceedings of the 2016 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM), San Francisco, CA, USA, 18–21 August 2016; pp. 880–883.

9. Arabnezhad, E.; La Morgia, M.; Mei, A.; Nemmi, E.N.; Stefa, J. A Light in the Dark Web: Linking Dark Web Aliases to Real Internet Identities. In Proceedings of the IEEE 40th International Conference on Distributed Computing Systems (ICDCS), Singapore, 29 November–1 December 2020; pp. 311–321.

10. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

11. Zanzotto, F.M.; Santilli, A.; Ranaldi, L.; Onorati, D.; Tommasino, P.; Fallucchi, F. KERMIT: Complementing Transformer Architectures with Encoders of Explicit Syntactic Interpretations. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), Online, 16–20 November 2020; Association for Computational Linguistics: Stroudsburg, PA, USA, 2020; pp. 256–267.

12. Madigan, D.; Genkin, A.; Lewis, D.; Fradkin, D. Bayesian Multinomial Logistic Regression for Author Identification. AIP Conf. Proc. 2005, 803, 509.

13. Aborisade, O.; Anwar, M. Classification for Authorship of Tweets by Comparing Logistic Regression and Naive Bayes Classifiers. In Proceedings of the 2018 IEEE International Conference on Information Reuse and Integration (IRI), Salt Lake City, UT, USA, 7–9 July 2018; pp. 269–276.

14. Sari, Y.; Stevenson, M.; Vlachos, A. Topic or Style? Exploring the Most Useful Features for Authorship Attribution. In Proceedings of the 27th International Conference on Computational Linguistics, Santa Fe, NM, USA, 20–26 August 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; pp. 343–353.

15. AZSecure-data.org. Available online: https://www.azsecure-data.org (accessed on 14 May 2022).

16. Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Minneapolis, MN, USA, 2–7 June 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; Volume 1, pp. 4171–4186.

17. Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.G.; Salakhutdinov, R.; Le, Q.V. XLNet: Generalized Autoregressive Pretraining for Language Understanding. In Proceedings of the NeurIPS, Vancouver, BC, Canada, 8–14 December 2019.

18. Sun, Y.; Wang, S.; Feng, S.; Ding, S.; Pang, C.; Shang, J.; Liu, J.; Chen, X.; Zhao, Y.; Lu, Y.; et al. ERNIE 3.0: Large-scale Knowledge Enhanced Pre-training for Language Understanding and Generation. arXiv 2021, arXiv:2107.02137.

19. Clark, K.; Luong, M.T.; Le, Q.V.; Manning, C.D. ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators. In Proceedings of the ICLR, Addis Ababa, Ethiopia, 30 April 2020.

20. van der Goot, R.; Ljubešić, N.; Matroos, I.; Nissim, M.; Plank, B. Bleaching Text: Abstract Features for Cross-lingual Gender Prediction. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics, Melbourne, Australia, 15–20 July 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; Volume 2, Short Papers, pp. 383–389.

21. Anwar, T.; Abulaish, M. A Social Graph Based Text Mining Framework for Chat Log Investigation. Digit. Investig. 2014, 11, 349–362.

22. Ho, T.N.; Ng, W.K. Application of Stylometry to DarkWeb Forum User Identification. In Proceedings of the ICICS, Singapore, 29 November–2 December 2016.

23. Grieve, J. Quantitative Authorship Attribution: An Evaluation of Techniques. Lit. Linguist. Comput. 2007, 22, 251–270.

24. Petrovic, S.; Petrovic, I.; Palesi, I.; Calise, A. Weighted Voting and Meta-Learning for Combining Authorship Attribution Methods. In Proceedings of the 19th International Conference, Madrid, Spain, 21–23 November 2018; pp. 328–335.

25. Stamatatos, E. A survey of modern authorship attribution methods. J. Am. Soc. Inf. Sci. Technol. 2009, 60, 538–556.

26. Potha, N.; Stamatatos, E. A Profile-Based Method for Authorship Verification. In Artificial Intelligence: Methods and Applications; Likas, A., Blekas, K., Kalles, D., Eds.; Springer International Publishing: Cham, Switzerland, 2014; pp. 313–326.

27. Allison, B.; Guthrie, L. Authorship Attribution of E-Mail: Comparing Classifiers over a New Corpus for Evaluation. In Proceedings of the Sixth International Conference on Language Resources and Evaluation (LREC2008), Marrakesh, Morocco, 28–30 May 2008; European Language Resources Association (ELRA): Marrakech, Morocco, 2008.

28. Seroussi, Y.; Zukerman, I.; Bohnert, F. Authorship Attribution with Topic Models. Comput. Linguist. 2014, 40, 269–310.

29. Soler-Company, J.; Wanner, L. On the Relevance of Syntactic and Discourse Features for Author Profiling and Identification. In Proceedings of the 15th Conference of the European Chapter of the Association for Computational Linguistics, Valencia, Spain, 3–7 April 2017; Association for Computational Linguistics: Valencia, Spain, 2017; Volume 2, Short Papers, pp. 681–687.

30. Bacciu, A.; Morgia, M.L.; Mei, A.; Nemmi, E.N.; Neri, V.; Stefa, J. Cross-Domain Authorship Attribution Combining Instance Based and Profile-Based Features. In Proceedings of the CLEF, Lugano, Switzerland, 9–12 September 2019.

31. Ruder, S.; Ghaffari, P.; Breslin, J.G. Character-level and Multi-channel Convolutional Neural Networks for Large-scale Authorship Attribution. arXiv 2016, arXiv:1609.06686v1.

32. Zhang, R.; Hu, Z.; Guo, H.; Mao, Y. Syntax Encoding with Application in Authorship Attribution. In Proceedings of the EMNLP, Brussels, Belgium, 31 October–4 November 2018; pp. 2742–2753.

33. Qian, C.; He, T.; Zhang, R. Deep Learning Based Authorship Identification; Stanford University: Stanford, CA, USA, 2017.

34. Bagnall, D. Author Identification using Multi-Headed Recurrent Neural Networks. arXiv 2015, arXiv:1506.04891v2.

35. Barlas, G.; Stamatatos, E. Cross-Domain Authorship Attribution Using Pre-trained Language Models. In Artificial Intelligence Applications and Innovations; Maglogiannis, I., Iliadis, L., Pimenidis, E., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 255–266.

36. Liu, Y.; Che, W.; Wang, Y.; Zheng, B.; Qin, B.; Liu, T. Deep Contextualized Word Embeddings for Universal Dependency Parsing. ACM Trans. Asian Low-Resour. Lang. Inf. Process. 2019, 19, 1–17.

37. Howard, J.; Ruder, S. Universal Language Model Fine-tuning for Text Classification. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics, Melbourne, Australia, 15–20 July 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; pp. 328–339.

38. Goldstein-Stewart, J.; Winder, R.; Sabin, R. Person Identification from Text and Speech Genre Samples. In Proceedings of the 12th Conference of the European Chapter of the ACL (EACL 2009); Association for Computational Linguistics: Stroudsburg, PA, USA, 2009; pp. 336–344.

39. Fabien, M.; Villatoro-Tello, E.; Motlicek, P.; Parida, S. BertAA: BERT fine-tuning for Authorship Attribution. In Proceedings of the 17th International Conference on Natural Language Processing (ICON), Patna, India, 18–21 December 2020; NLP Association of India (NLPAI), Indian Institute of Technology Patna: Patna, India, 2020; pp. 127–137.

40. Klimt, B.; Yang, Y. The Enron Corpus: A New Dataset for Email Classification Research. In Proceedings of the 15th European Conference on Machine Learning, ECML’04, Pisa, Italy, 20–24 September 2004; Springer: Berlin/Heidelberg, Germany, 2004; pp. 217–226.

41. Deutsch, C.; Paraboni, I. Authorship attribution using author profiling classifiers. In Natural Language Engineering; Cambridge University Press: Cambridge, UK, 2022; pp. 1–28.

42. Manolache, A.; Brad, F.; Burceanu, E.; Bărbălău, A.; Ionescu, R.C.; Popescu, M.C. Transferring BERT-like Transformers’ Knowledge for Authorship Verification. arXiv 2021, arXiv:2112.05125.

43. Dingledine, R.; Mathewson, N.; Syverson, P. Tor: The Second-Generation Onion Router. In Proceedings of the 13th Conference on USENIX Security Symposium, Volume 13, USENIX Association, SSYM’04, Anaheim, CA, USA, 9–11 August 2004; p. 21.

44. Spitters, M.; Klaver, F.; Koot, G.; van Staalduinen, M. Authorship Analysis on Dark Marketplace Forums. In Proceedings of the 2015 European Intelligence and Security Informatics Conference, Manchester, UK, 7–9 September 2015; pp. 1–8.

45. Swain, S.; Mishra, G.; Sindhu, C. Recent approaches on authorship attribution techniques: An overview. In Proceedings of the 2017 International Conference of Electronics, Communication and Aerospace Technology (ICECA), Coimbatore, India, 20–22 April 2017; Volume 1, pp. 557–566.

46. Ranaldi, L.; Nourbakhsh, A.; Patrizi, A.; Ruzzetti, E.S.; Onorati, D.; Fallucchi, F.; Zanzotto, F.M. The Dark Side of the Language: Pre-trained Transformers in the DarkNet. arXiv 2022, arXiv:2201.05613.

47. Scrivens, R.; Davies, G.; Frank, R.; Mei, J. Sentiment-Based Identification of Radical Authors (SIRA). In Proceedings of the 2015 IEEE International Conference on Data Mining Workshop (ICDMW), Atlantic City, NJ, USA, 14–17 November 2015; pp. 979–986.

48. Zhang, Y.; Zeng, S.; Huang, C.N.; Fan, L.; Yu, X.; Dang, Y.; Larson, C.A.; Denning, D.; Roberts, N.; Chen, H. Developing a Dark Web collection and infrastructure for computational and social sciences. In Proceedings of the 2010 IEEE International Conference on Intelligence and Security Informatics, Vancouver, BC, Canada, 23–26 May 2010; pp. 59–64.

49. Chen, H.; Chung, W.; Qin, J.; Reid, E.; Sageman, M.; Weimann, G. Uncovering the Dark Web: A case study of Jihad on the Web. J. Am. Soc. Inf. Sci. Technol. 2008, 59, 1347–1359.

50. Abbasi, A.; Chen, H.; Salem, A. Sentiment Analysis in Multiple Languages: Feature Selection for Opinion Classification in Web Forums. ACM Trans. Inf. Syst. 2008, 26, 1–34.

51. Blei, D.M.; Ng, A.Y.; Jordan, M.I. Latent Dirichlet Allocation. J. Mach. Learn. Res. 2003, 3, 993–1022.

52. Klema, V.; Laub, A. The singular value decomposition: Its computation and some applications. IEEE Trans. Autom. Control 1980, 25, 164–176.

53. Bird, S.; Klein, E.; Loper, E. Natural Language Processing with Python: Analyzing Text with the Natural Language Toolkit; O’Reilly: Beijing, China, 2009.

54. Toutanova, K.; Klein, D.; Manning, C.D.; Singer, Y. Feature-Rich Part-of-Speech Tagging with a Cyclic Dependency Network. In Proceedings of the 2003 Human Language Technology Conference of the North American Chapter of the Association for Computational Linguistics; 2003; pp. 252–259.

55. Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

56. Zhu, M.; Zhang, Y.; Chen, W.; Zhang, M.; Zhu, J. Fast and Accurate Shift-Reduce Constituent Parsing. In Proceedings of the 51st Annual Meeting of the Association for Computational Linguistics, Sofia, Bulgaria, 4–9 August 2013; Association for Computational Linguistics: Stroudsburg, PA, USA, 2013; Volume 1, Long Papers, pp. 434–443.

57. Zhu, Y.; Kiros, R.; Zemel, R.; Salakhutdinov, R.; Urtasun, R.; Torralba, A.; Fidler, S. Aligning Books and Movies: Towards Story-like Visual Explanations by Watching Movies and Reading Books. arXiv 2015, arXiv:1506.06724.

58. Pires, T.; Schlinger, E.; Garrette, D. How multilingual is Multilingual BERT? arXiv 2019, arXiv:1906.01502.

59. Parker, R.; Graff, D.; Kong, J.; Chen, K.; Maeda, K. English Gigaword Fifth Edition LDC2011T07; Technical Report; Linguistic Data Consortium: Philadelphia, PA, USA, 2011.

60. Callan, J.; Hoy, M.; Yoo, C.; Zhao, L. Clueweb09 Data Set. 2009. Available online: https://ir-datasets.com/clueweb09.html (accessed on 12 September 2022).

61. Common Crawl. 2019. Available online: http://commoncrawl.org (accessed on 12 September 2022).

62. Sanh, V.; Debut, L.; Chaumond, J.; Wolf, T. DistilBERT, a distilled version of BERT: Smaller, faster, cheaper and lighter. arXiv 2019, arXiv:1910.01108.

63. Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. HuggingFace’s Transformers: State-of-the-art Natural Language Processing. arXiv 2019, arXiv:1910.03771.

64. Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. CoRR 2015, arXiv:1412.6980.

65. Sun, C.; Qiu, X.; Xu, Y.; Huang, X. How to Fine-Tune BERT for Text Classification. In Chinese Computational Linguistics; Sun, M., Huang, X., Ji, H., Liu, Z., Liu, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2019; pp. 194–206.

66. Zhang, Z.; Wu, Y.; Zhao, H.; Li, Z.; Zhang, S.; Zhou, X.; Zhou, X. Semantics-aware BERT for language understanding. In Proceedings of the Thirty-Fourth AAAI Conference on Artificial Intelligence (AAAI-2020), New York, NY, USA, 7–12 February 2020.

67. Goldberg, Y. Assessing BERT’s Syntactic Abilities. arXiv 2019, arXiv:1901.05287.

68. Iyer, A.; Vosoughi, S. Style Change Detection Using BERT. In Proceedings of the CLEF, Thessaloniki, Greece, 22–25 September 2020.

69. Podkorytov, M.; Biś, D.; Liu, X. How Can the [MASK] Know? The Sources and Limitations of Knowledge in BERT. In Proceedings of the 2021 International Joint Conference on Neural Networks (IJCNN), Shenzhen, China, 18–22 July 2021; pp. 1–8.

70. Choshen, L.; Eldad, D.; Hershcovich, D.; Sulem, E.; Abend, O. The Language of Legal and Illegal Activity on the Darknet. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 28 July–2 August 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 4271–4279.

71. Ma, X.; Xu, P.; Wang, Z.; Nallapati, R.; Xiang, B. Domain Adaptation with BERT-based Domain Classification and Data Selection. In Proceedings of the 2nd Workshop on Deep Learning Approaches for Low-Resource NLP (DeepLo 2019), Hong Kong, China, 3 November 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 76–83.

72. Carlini, N.; Tramer, F.; Wallace, E.; Jagielski, M.; Herbert-Voss, A.; Lee, K.; Roberts, A.; Brown, T.; Song, D.; Erlingsson, U.; et al. Extracting Training Data from Large Language Models. In Proceedings of the USENIX Security Symposium, Online, 11–13 August 2021.

73. Ranaldi, L.; Fallucchi, F.; Santilli, A.; Zanzotto, F.M. KERMITviz: Visualizing Neural Network Activations on Syntactic Trees. In Metadata and Semantic Research; Garoufallou, E., Ovalle-Perandones, M.A., Vlachidis, A., Eds.; Springer International Publishing: Cham, Switzerland, 2022; pp. 139–147.

| Message | Member |
|---|---|
| So she wants to be widowed after few years? Jihad is a part of the deen so when she asks a husband to fulfil part of the deen then there’s nothing wrong with this. | Member A |
| It includes many things. Also going for Jihad doesn’t mean getting killed. if that’s what she means he has to be killed then this is forbidden since she’s asking something unknown. | Member A |
| If the companions and tabi’een had a consensus as is claimed, anyone who would go against the consensus would be a kaafir | Member B |
| It is just to many things for me personally to justify sistsers attending mixed universites of the kuffar. Looking up to and learning from a kaafir, should be done by strong capable muslim men who won’t begin to learn, love respect the kuffar. (you should not let the weaker women/children be in this setting especially YOUNG ones who have never been exposed to such things). | Member B |
| Salam, It is encouraging to see that there are women whom do appreciate the efforts of the good men of this ummah. Marriage is definitely from the tremendous ni’aam(favors, support, kindness) of Allah subhanahu wa ta’ala. | Member C |
| May Allah open the doors of al-jannah for the women whom strive to please their husbands and the men who do the things to make that an easy task, ameen. | Member C |

| Models | Methods | 3 Authors | 5 Authors | 10 Authors |
|---|---|---|---|---|
| Style- and lexical-based | BoW | 84.1 (± 1.5) | 73.2 (± 1.2) | 58.3 (± 0.8) |
| Style- and lexical-based | BoPOS | 84.9 (± 0.85) | 74.5 (± 1.4) | 57.6 (± 0.9) |
| Style- and lexical-based | TF-IDF | 83.7 (± 0.6) | 74.1 (± 1.7) | 57.8 (± 1.2) |
| Style- and lexical-based | TF-IDFPOS | 74.6 (± 0.55) | 72.5 (± 0.86) | 58.9 (± 1.21) |
| Style- and lexical-based | Bleaching text | 70.3 (± 0.5) | 70.8 (± 0.5) | 56.9 (± 0.5) |
| Syntax-based | KERMIT | 83.95 (± 1.84) | 70.94 (± 1.35) | 56.34 (± 1.52) |
| Transformer-based | | 62.1 (± 1.45) | 47.6 (± 1.62) | 32.7 (± 0.94) |
| Transformer-based | | 40.2 (± 1.14) | 29.1 (± 0.92) | 23.3 (± 1.23) |
| Transformer-based | | 45.7 (± 1.32) | 31.7 (± 2.23) | 19.9 (± 1.53) |
| Transformer-based | | 61.4 (± 1.15) | 45.7 (± 1.22) | 32.1 (± 0.85) |
| Transformer-based | | 62.1 (± 1.37) | 42.4 (± 1.43) | 29.4 (± 0.93) |
| Transformer-based | | 59.8 (± 1.55) | 46.9 (± 1.62) | 32.5 (± 1.23) |
| Transformer-based | BERT | 80.7 (± 1.33) | 69.8 (± 1.27) | 58.2 (± 1.24) |
| Transformer-based | | 76.2 (± 2.12) | 63.3 (± 1.23) | 50.8 (± 1.46) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ranaldi, L.; Ranaldi, F.; Fallucchi, F.; Zanzotto, F.M. Shedding Light on the Dark Web: Authorship Attribution in Radical Forums. Information 2022, 13, 435. https://doi.org/10.3390/info13090435
