Introducing the Architecture of FASTER: A Digital Ecosystem for First Responder Teams

Abstract

1. Introduction

- Highlighting the details of FASTER’s architecture and presenting the outcomes of the implementation experience;

- Sharing the results from the evaluation events that have been completed and presenting a list of events that are scheduled until the end of the project;

- Discussing possible next steps beyond the delivered architecture of FASTER, drawing on the experience gained from its design and implementation, and suggesting future extensions that can address more sophisticated hazards (e.g., fake news detection regarding ongoing disasters).

2. FASTER Architecture: Requirements and Goals

- Category 1 - Augmented Reality (AR) for Operational Situational Awareness: the tools in this category aim to offer more efficient situational awareness and decision making to practitioners in critical conditions that require full attention and focus from the involved FRs. To achieve this, AR technology is used to deliver, in real time, information gathered from the other components of the ecosystem (e.g., alerts, team status and location, sensor values), filtering the information and providing targeted content to the AR user. AR is supplied through both mobile phones and AR glasses (e.g., HoloLens) and enables FRs to access previously unreachable information in a contextual fashion, by superimposing the data onto the real world. The specific modules/tools in the architecture that are included in this category are:

  - Mobile Augmented Reality for Operational Support;

  - Extended Vision Technologies using Commercial Light-Weight UAVs.

- Category 2 - Mobile and Wearable Technologies: mobile and wearable technologies are developed in FASTER to enable communication to and between FRs. FRs will be able to receive and send information in an easy way that does not hinder their work, enabled by the technologies’ recognition of gestures and voice commands. Control centers can oversee the location and personal information of FRs in the field and relay messages and alerts. Such technologies are being developed in FASTER not only for humans but for animals (e.g., dogs/K9s) as well, in order to collect valuable information about the animals’ activities. The specific modules/tools in the architecture that are included in this category are:

  - Smart wearables and textiles;

  - Animal Wearables for Behavior Recognition;

  - Sensory data fusion.

- Category 3 - Body and Gesture-based User Interfaces: FASTER provides a framework for wearable devices that capture and identify arm/body movements using artificial intelligence (AI). It provides non-visual/non-audible communication capabilities, translating movements or critical readings from paired wearable devices into coded messages, which can be communicated to cooperating agents in the field through vibrations on wearable devices, following Morse code. The specific modules/tools in the architecture that are included in this category are:

  - Hand gesture recognition for remote FRs communication;

  - Gesture-based UxV.

- Category 4 - Autonomous Vehicles: the FASTER robotic vehicle includes a robotic platform able to integrate different sensors (i.e., optical and thermal cameras, environmental, nuclear, biological, chemical, radiological and explosives). Moreover, an array of drones of different sizes, equipped with different payloads and capable of providing different services to first responder operators, can also be deployed. The specific modules/tools in the architecture that are included in this category are:

  - Robotic Platform;

  - Swarm operational capabilities to allow complex tasks.

- Category 5 - Resilient Communications Support: FASTER provides a resilient communication infrastructure allowing FRs to easily communicate during a disaster. In particular, a novel, low-cost device called ResCuE is developed, which is capable of delivering, through broadcasting, critical information to first responders or instructions to a crowd of civilians. The specific modules/tools in the architecture that are included in this category are:

  - Emergency communication box (ResCuE);

  - 5G-enabled communication infrastructure;

  - Communication mesh through opportunistic relay services;

  - Blockchain distributed network (distributed ledger technology, AIngle).

- Category 6 - Common Operational Picture: in disaster scenarios, a portable control center (PCC) collectively visualizes all the information needed for a better understanding of the emergency, such as event location and people involved, enabling immediate reactions and supporting decision making. In particular, the PCC provides the emergency management teams with a portable common operational picture (PCOP) that gives a clear perception of the scene, highlighting the relations between the actors involved (first responders, victims, NGOs, etc.) and environmental factors (risks, hazards, points of interest) with respect to time and space. The PCOP constitutes an information integration and visualization medium for data coming from heterogeneous information sources (e.g., sensors, robots, UAVs and mobile devices). The specific modules/tools in the architecture that are included in this category are:

  - Portable control center;

  - Social media analysis;

  - Mission management and progress monitoring.
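
Category 3 describes coded messages delivered as vibrations that follow Morse code. The sketch below shows one way such a message could be turned into an on/off vibration schedule. It is an illustration only, not the FASTER implementation: the function name `to_vibration_pattern`, the 100 ms time unit and the `("on" | "off", duration)` output format are assumptions, while the dot/dash table and the 1/3/7-unit timing are those of standard International Morse code.

```python
# Standard International Morse code for letters and digits.
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}


def to_vibration_pattern(message: str, unit_ms: int = 100) -> list:
    """Return a list of ("on" | "off", duration_ms) steps for a message.

    Timing follows standard Morse conventions: dot = 1 unit, dash = 3 units,
    gap inside a character = 1 unit, gap between characters = 3 units,
    gap between words = 7 units. The unit length is a hypothetical default.
    """
    pattern = []
    for word_index, word in enumerate(message.upper().split()):
        if word_index > 0:
            pattern.append(("off", 7 * unit_ms))
        for char_index, char in enumerate(word):
            if char not in MORSE:
                raise ValueError(f"unsupported character: {char!r}")
            if char_index > 0:
                pattern.append(("off", 3 * unit_ms))
            for symbol_index, symbol in enumerate(MORSE[char]):
                if symbol_index > 0:
                    pattern.append(("off", unit_ms))
                duration = unit_ms if symbol == "." else 3 * unit_ms
                pattern.append(("on", duration))
    return pattern
```

A wearable driver could then walk through the returned steps, switching the vibration motor on or off for each duration.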

- The IFAFRI (https://www.internationalresponderforum.org/, accessed on 20 February 2022) guidelines: IFAFRI is the International Forum to Advance First Responder Innovation, an organization created by international government leaders that gives a greater voice to FRs. IFAFRI has sought to identify potential areas of research and development where industry and academia may have opportunities to propose and develop innovative solutions. The forum focuses on the technologies needed to help FRs conduct their missions safely, effectively and efficiently, and provides an overall umbrella for the requirements that should be met. FASTER was aligned with IFAFRI’s main aims.

- Members of the consortium: the FASTER consortium includes (among others) eight first responder teams from seven European countries. These teams were shown the potential of each tool and provided a list of user requirements specific to it.

- Innovative and efficient tools covering real-time gathering and processing of heterogeneous physiological and critical environmental data from smart textiles, wearables, sensors, and social media;

- Extended inspection capabilities and physical mitigation;

- Tools for individual health assessment and disaster scene analysis for early warnings and risk mitigation;

- Improved ergonomics providing augmented reality tools for enhanced information streaming;

- Resilient communication at the field level and at the infrastructure level;

- Tactical situational awareness providing innovative visualization services for a portable common operational picture (COP) for both indoor and outdoor scenario representation;

- Efficient cooperation and interoperability among first responders, law enforcement agencies (LEAs), community members and other resource providers.

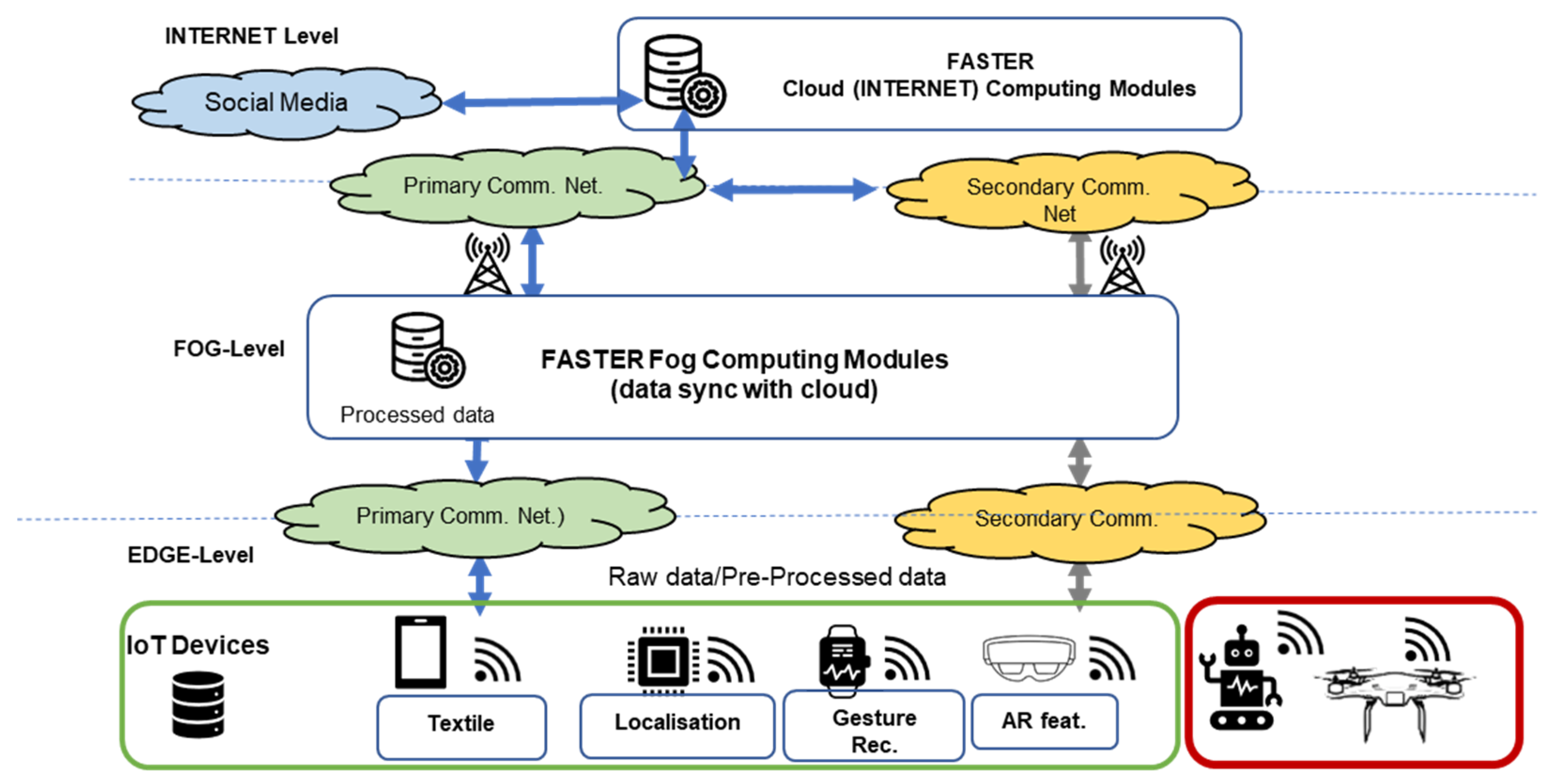

3. FASTER Architecture Design

- The bottom layer is called the “EDGE-Layer” and consists of IoT devices, ranging from data aggregators to monitoring tools. Data aggregators can be smartphone applications, single-board computer applications and unmanned aerial vehicles (UAVs) that share information with the layer above (i.e., the FOG-Layer). The monitoring tools are applications that assist FRs in the field by providing them with crucial information (e.g., biometrics, environmental data, video streaming and area mapping).

- The next layer, in the middle of the three-layer approach, is called the “FOG-Layer”, and its architecture is based on event-driven microservices. The core service of this layer is a pub/sub system (e.g., a Kafka implementation), and all the other services react to updates on the pub/sub system’s topics. The FOG layer supports the EDGE layer even without connectivity to the INTERNET layer. It is deployed locally and ensures that the FASTER tools operate and cooperate as defined; when an Internet connection to the backbone communication network is established, it synchronizes with the INTERNET layer to gain the capabilities that layer supports.

- The top layer is called the “INTERNET-Layer”, and its purpose is to provide more in-depth analysis and central processing of several emergency scenes. Similar to the FOG-Layer, the INTERNET-Layer has an event-driven microservices architecture. The core part, once more, is a pub/sub system, and any changes to it trigger the rest of the services.
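
The interplay between the FOG and INTERNET layers can be sketched with a tiny in-memory broker. This is a minimal illustration under stated assumptions, not the FASTER code: `Broker` stands in for a real pub/sub system such as Kafka, and `FogToInternetBridge`, the topic name `biometrics` and the event fields are hypothetical. It shows services reacting to topic updates, the FOG layer working while offline, and buffered events being forwarded once the uplink returns.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Handler = Callable[[dict], None]


class Broker:
    """In-memory topic broker standing in for a real pub/sub system."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Every service subscribed to the topic reacts to the new event.
        for handler in list(self._subscribers[topic]):
            handler(event)


class FogToInternetBridge:
    """Forwards FOG events upstream, buffering them while the uplink is down."""

    def __init__(self, fog: Broker, internet: Broker, topics: List[str]) -> None:
        self._internet = internet
        self._online = False
        self._backlog: List[Tuple[str, dict]] = []
        for topic in topics:
            # Bind the topic name now so each handler forwards to its own topic.
            fog.subscribe(topic, lambda event, t=topic: self._forward(t, event))

    def _forward(self, topic: str, event: dict) -> None:
        if self._online:
            self._internet.publish(topic, event)
        else:
            self._backlog.append((topic, event))

    def set_online(self, online: bool) -> None:
        self._online = online
        if online:
            # Flush, in arrival order, everything collected while offline.
            backlog, self._backlog = self._backlog, []
            for topic, event in backlog:
                self._internet.publish(topic, event)


def demo() -> Tuple[List[dict], List[dict], List[dict]]:
    fog, internet = Broker(), Broker()
    local_seen: List[dict] = []
    cloud_seen: List[dict] = []
    fog.subscribe("biometrics", local_seen.append)
    internet.subscribe("biometrics", cloud_seen.append)
    bridge = FogToInternetBridge(fog, internet, ["biometrics"])

    fog.publish("biometrics", {"fr_id": "fr-1", "heart_rate": 150})
    cloud_before_sync = list(cloud_seen)  # still empty: no uplink yet
    bridge.set_online(True)
    return local_seen, cloud_before_sync, cloud_seen
```

In this sketch the local FOG subscriber sees the event immediately, while the INTERNET-side subscriber only receives it after `set_online(True)` flushes the backlog.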

3.1. INTERNET Layer

- Social Media Analysis gathers information from Twitter streams and analyzes them in order to extract further information that will enhance the operation in the field.

- QoS Monitoring Framework monitors all systems in the FASTER ecosystem by collecting information such as latency, bandwidth and computation efficiency, as well as other application metrics. Based on these data, the team that manages the ecosystem makes decisions about service replacement, configuration, scaling-up, etc.

- Scene Analysis from Building Sensor Data analyzes data from sensors in a building to trigger events such as high levels of CO2 and high temperature.

- Mission Management service provides functionality that supports the operation in the field, using a chatbot application for in-field responders and processing and monitoring the gathered information.

- Portable Command and Control Center is a visualization system and has a crucial role in the ecosystem because it is the service that monitors the operation in the field.
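
As an illustration of how building sensor readings could trigger events such as high CO2 or high temperature, the following sketch applies simple threshold rules. It is not the FASTER scene analysis service: the `Reading` fields, the event names and both threshold values are hypothetical placeholders chosen for the example, and a real deployment would take its limits from the responders’ own safety guidance.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical limits for illustration only; not taken from the article.
CO2_LIMIT_PPM = 1500.0
TEMPERATURE_LIMIT_C = 50.0


@dataclass(frozen=True)
class Reading:
    sensor_id: str
    co2_ppm: float
    temperature_c: float


def detect_events(reading: Reading) -> List[dict]:
    """Return one event per threshold that the reading exceeds."""
    events = []
    if reading.co2_ppm > CO2_LIMIT_PPM:
        events.append({
            "type": "HIGH_CO2",
            "sensor_id": reading.sensor_id,
            "value": reading.co2_ppm,
        })
    if reading.temperature_c > TEMPERATURE_LIMIT_C:
        events.append({
            "type": "HIGH_TEMPERATURE",
            "sensor_id": reading.sensor_id,
            "value": reading.temperature_c,
        })
    return events
```

Each returned event could then be published to a pub/sub topic so that downstream services, such as a visualization component, react to it.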

3.2. FOG Layer

- AR for operational support uses augmented reality to let first responders work hands-free, displaying useful information (commands, information from the PCOP, alerts, etc.) and sharing annotations.

- Portable common operational picture (PCOP) is the eye of the team that manages the operation. It visualizes all the incoming data and processes information from the EDGE layer.

- Building sensor data visualization service creates all the appropriate visualizations with building sensor data gathered from sources in the EDGE layer.

- Scene analysis from video identifies potential risks on the field by using video data (AR devices, UxVs) and a trained AI algorithm.

- Information processing for enhanced COP assists the PCOP by analyzing the data produced by the other components of the FASTER ecosystem, in order to extract useful knowledge and events.

- Scene analysis from IoT sensor data generates local awareness alerts by analyzing alerts produced by wearables and textiles in the EDGE layer.

- UxVs gesture control component lets first responders control UxVs intuitively, without using controllers.

- Extended vision extends the field of view of first responders, using UAVs to gather video from the field and visualizing it for first responders on an AR device such as Microsoft’s HoloLens (https://www.microsoft.com/en-us/hololens, accessed on 20 February 2022).

- 2D/3D mapping provides first responders with 2D and 3D mapping of the affected area of the mission. This service uses images gathered from UAVs to provide updated maps along with location information.

- The Secure IoT Middleware (SIM) provides a set of services that secure the operation of the FOG layer: authentication, authorization, and accounting (AAA), which protect all the services in this layer; traffic analysis, which protects the infrastructure from malicious users; mechanisms that detect personal information in incoming packets and anonymize it; and a mechanism that validates data before publishing them to the appropriate topic.
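
Two of the SIM functions described above, validating data before it is published and hiding personal information, can be sketched as a small pre-publish step. This is an assumption-laden illustration rather than the SIM design: the required fields, the set of personal fields and the salted SHA-256 replacement are all hypothetical, and the hashing shown is pseudonymization, a weaker property than full anonymization.

```python
import hashlib
from typing import Any, Dict

# Hypothetical message schema and personal fields, for illustration only.
REQUIRED_FIELDS = {
    "device_id": str,
    "timestamp": (int, float),
    "value": (int, float),
}
PERSONAL_FIELDS = ("name", "phone")


def validate(message: Dict[str, Any]) -> None:
    """Raise ValueError if a required field is missing or has the wrong type."""
    for field, expected in REQUIRED_FIELDS.items():
        if field not in message:
            raise ValueError(f"missing field: {field}")
        value = message[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"invalid type for field: {field}")


def pseudonymize(message: Dict[str, Any], salt: bytes) -> Dict[str, Any]:
    """Return a copy with personal fields replaced by a salted hash prefix."""
    cleaned = dict(message)
    for field in PERSONAL_FIELDS:
        if field in cleaned:
            raw = salt + str(cleaned[field]).encode("utf-8")
            cleaned[field] = hashlib.sha256(raw).hexdigest()[:16]
    return cleaned


def prepare_for_topic(message: Dict[str, Any], salt: bytes) -> Dict[str, Any]:
    """Validate first, then strip personal data, before the message is published."""
    validate(message)
    return pseudonymize(message, salt)
```

Because the same salt maps the same input to the same token, readings from one responder can still be correlated downstream without exposing the original value.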

3.3. EDGE Layer

- Building sensors on the EDGE layer publish information to the pub/sub system, and the building sensor data module forwards it to the INTERNET layer for analysis.

- Smart Textiles Framework (STF) consists of sensors integrated in wearable textiles, providing biometric and environmental data for each FR to the FOG layer. Smart textiles are connected to smartphones, which publish the generated data to the FOG layer.

- Behavioral recognition Animal Wearable is a custom wearable solution that uses AI algorithms to provide notifications when the animal barks or repeats specific movements that it performs when a victim is located.

- Movement recognition tool (MORSE) provides a novel mechanism for translating critical arm moves and gestures to messages using AI techniques.

- Mini-UAVs capabilities provide video streaming of the surrounding areas.

- AR device and mobile application let the FR see the needed information on their glasses and act on it while moving freely in their surroundings.

- Chatbot client helps share mission updates and critical information.

- ResCuE is a low-cost resilient communication device that supports the broadcasting of short text messages when no communication infrastructure is available.

- Weather station delivers local weather information to the FR deployed in the area.

- UGV with navigation capabilities allows the FR to use the drones with their hands.

- Swarm of UAVs capabilities can be deployed when communications are damaged and used to deliver messages, forming an ad hoc communication network.
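
The animal wearable is described as notifying when the animal barks or when specific movements are repeated. The sketch below shows one simple way to turn a stream of per-window classifier labels into such notifications. It is an assumption, not the method used in FASTER: the label names (`"bark"`, `"dig"`), the sliding-window size and the repeat count are hypothetical, and the AI model that would produce the labels is out of scope here.

```python
from collections import deque
from typing import Deque


class RepeatedBehaviorNotifier:
    """Signals on a bark, or when a target movement recurs within a window."""

    def __init__(self, target: str, min_repeats: int = 3, window: int = 5) -> None:
        if min_repeats > window:
            raise ValueError("min_repeats cannot exceed window")
        self._target = target
        self._min_repeats = min_repeats
        # Keeps only the most recent `window` labels.
        self._recent: Deque[str] = deque(maxlen=window)

    def update(self, label: str) -> bool:
        """Add one classifier output; return True if a notification is due."""
        self._recent.append(label)
        if label == "bark":
            return True
        return self._recent.count(self._target) >= self._min_repeats
```

With the defaults, a handler fed the labels `walk, dig, walk, dig, dig` would receive its first notification on the fifth label, when the third `dig` enters the window; it keeps signalling while the condition holds, so a caller may want to debounce.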

4. Evaluation Results

4.1. A Use Case Scenario

4.2. Scenario Description

5. Expanding FASTER’s Architecture

6. Conclusions



| No. | Requirement/Capability Gap | Categories of Tools Used to Meet the Requirement | FASTER Solution/Tool |
|---|---|---|---|
| 1 | The ability to know the location of responders and their proximity to threats and hazards in real time | Categories 1 (Situational awareness), 2 (Mobile and Wearable Technologies), 6 (Common Operational Picture) | |
| 2 | The ability to detect, monitor, and analyze passive and active threats and hazards at incident scenes in real time | | |
| 3 | The ability to rapidly identify hazardous agents and contaminants | | |
| 4 | The ability to incorporate information from multiple and non-traditional sources into incident command operations | | |
| 5 | The ability to maintain interoperable communications with responders in any environmental conditions | | |
| 6 | The ability to obtain critical information remotely about the extent, perimeter, or interior of the incident | | |
| 7 | The ability to conduct on-scene operations remotely without endangering responders | | |
| 8 | The ability to monitor the physiological signs of emergency responders | | |
| 9 | The ability to create actionable intelligence based on data and information from multiple sources | | |
| 10 | The ability to provide appropriate and advanced personal protective equipment | | |

Evaluation events: PT Pilot, JP Pilot 1, ES Pilot, GR Pilot, FR Pilot, JP Pilot 2, PL Pilot, IT Final, FI Final, ES Final.

| Module Name | Total (events, out of 10) |
|---|---|
| Portable command and control center | 10 |
| Smart textiles framework | 8 |
| Animal harness for behavior recognition | 5 |
| MORSE - gesture communication | 7 |
| RESCUE - communication box | 5 |
| Extended vision using mini-UAVs + gesture control | 8 |
| 2D/3D mapping and AI scene analysis (aerial) | 10 |
| Ground autonomous vehicles and 3D mapping | 6 |
| Swarm of drones for complex tasks | 6 |
| Augmented reality for operational support | 6 |
| Mission management tool | 8 |
| Social media analysis | 3 |
| Building Situation Tool (BUST) | 1 |
| Local weather station | 6 |
| UAV relay for extended communication capabilities | 5 |
| 5G-enabled network | 2 |

| First Testing/Piloting Round | Second Testing/Piloting Round |
|---|---|
| Communication, Interoperability, Security | Communication, Interoperability, Security, Authentication, Encryption |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Katsadouros, E.; Kogias, D.G.; Patrikakis, C.Z.; Giunta, G.; Dimou, A.; Daras, P. Introducing the Architecture of FASTER: A Digital Ecosystem for First Responder Teams. Information 2022, 13, 115. https://doi.org/10.3390/info13030115
