A Survey on Sentiment Analysis and Opinion Mining in Greek Social Media

Abstract

1. Introduction

- A comprehensive survey of methods, tools, linguistic corpora and models that can be employed for sentiment analysis and opinion mining in Greek texts.

- A comparative evaluation of state-of-the-art sentiment classification methods that employ pre-trained language models, in both the binary setting and the three-class setting, where the latter also includes a highly represented neutral class.

- A new pre-trained language model for Greek social media texts, trained on a large corpus collected from Greek-speaking social media accounts.

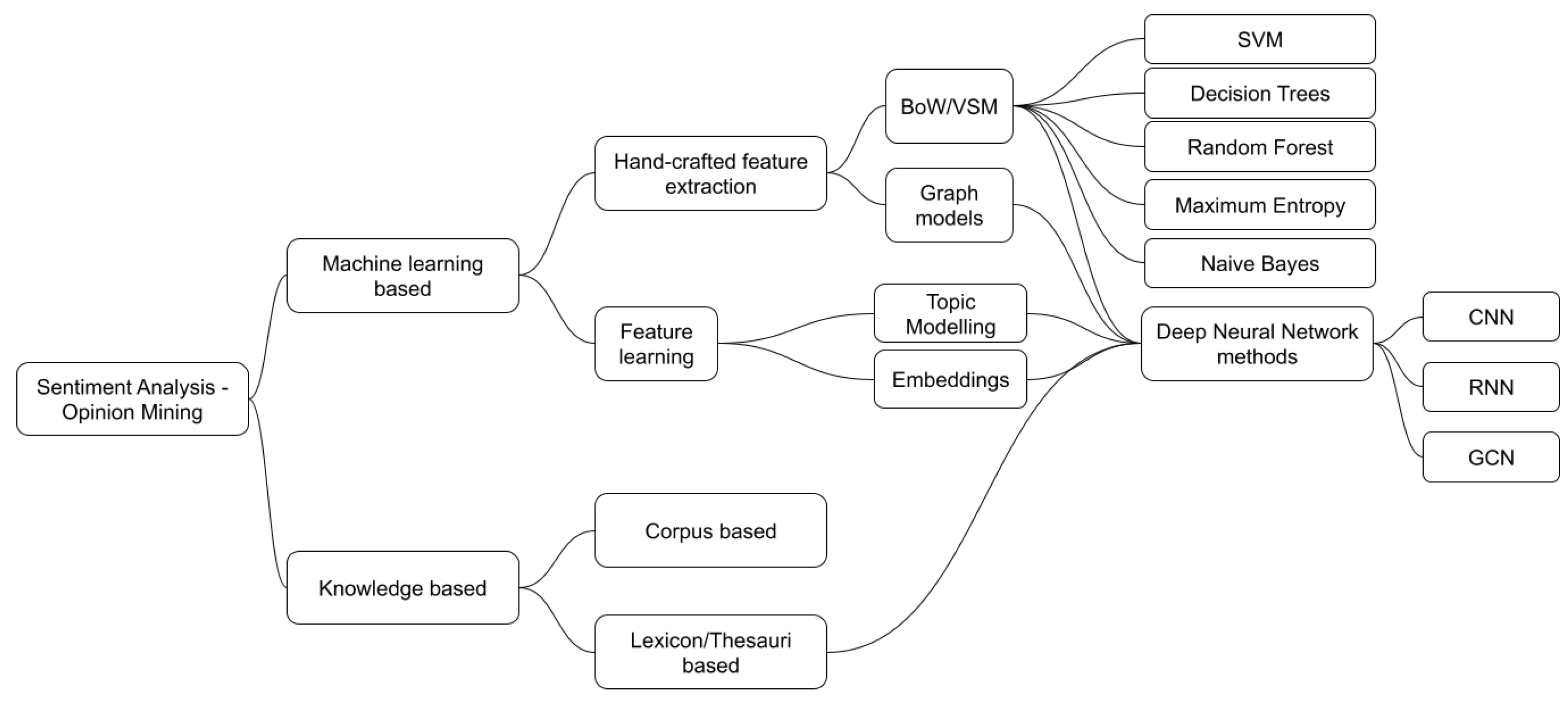

2. Related Work

2.1. NLP Models and Software

2.2. Annotated Corpora

2.3. Greek Embeddings

3. Proposed Method

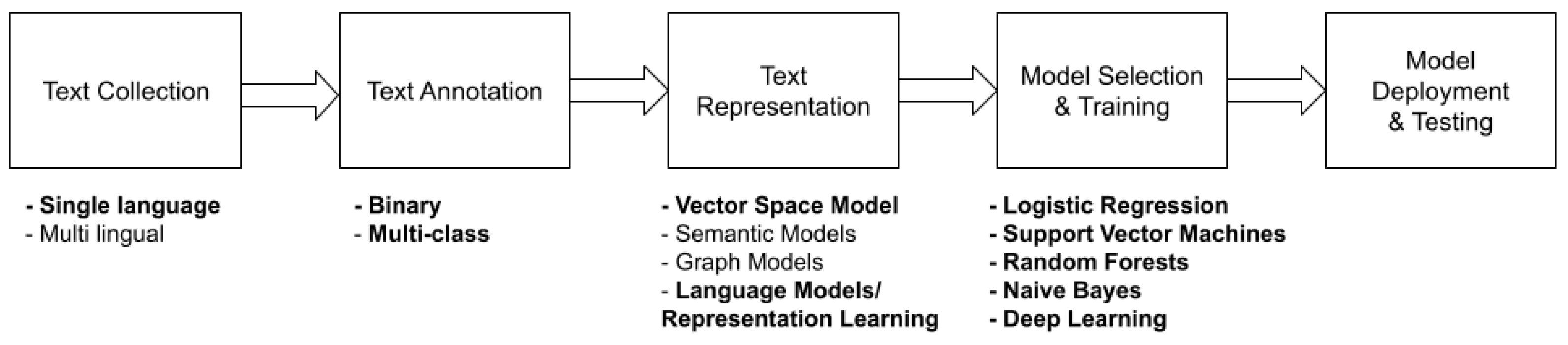

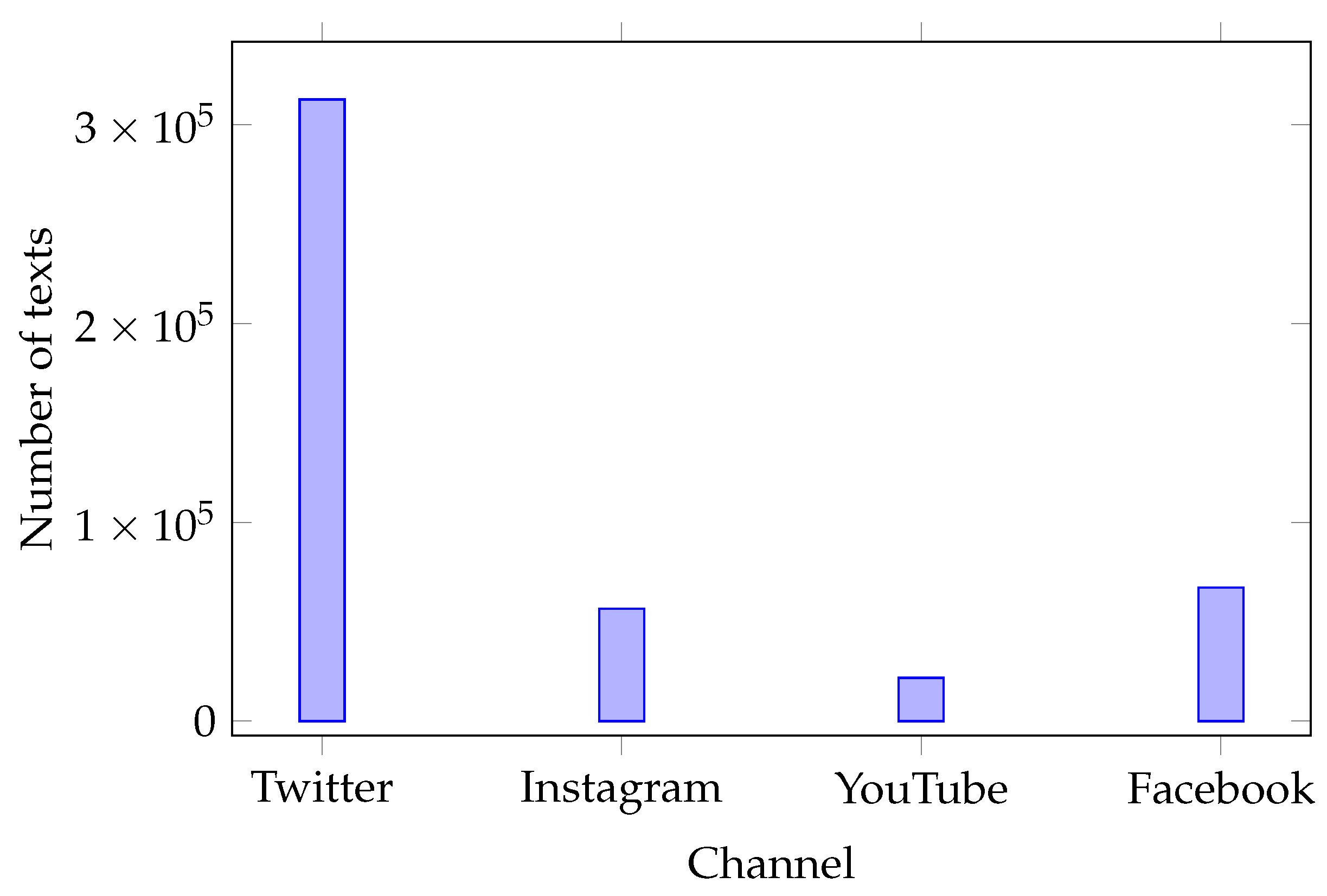

3.1. Datasets, Algorithms, and Models Employed

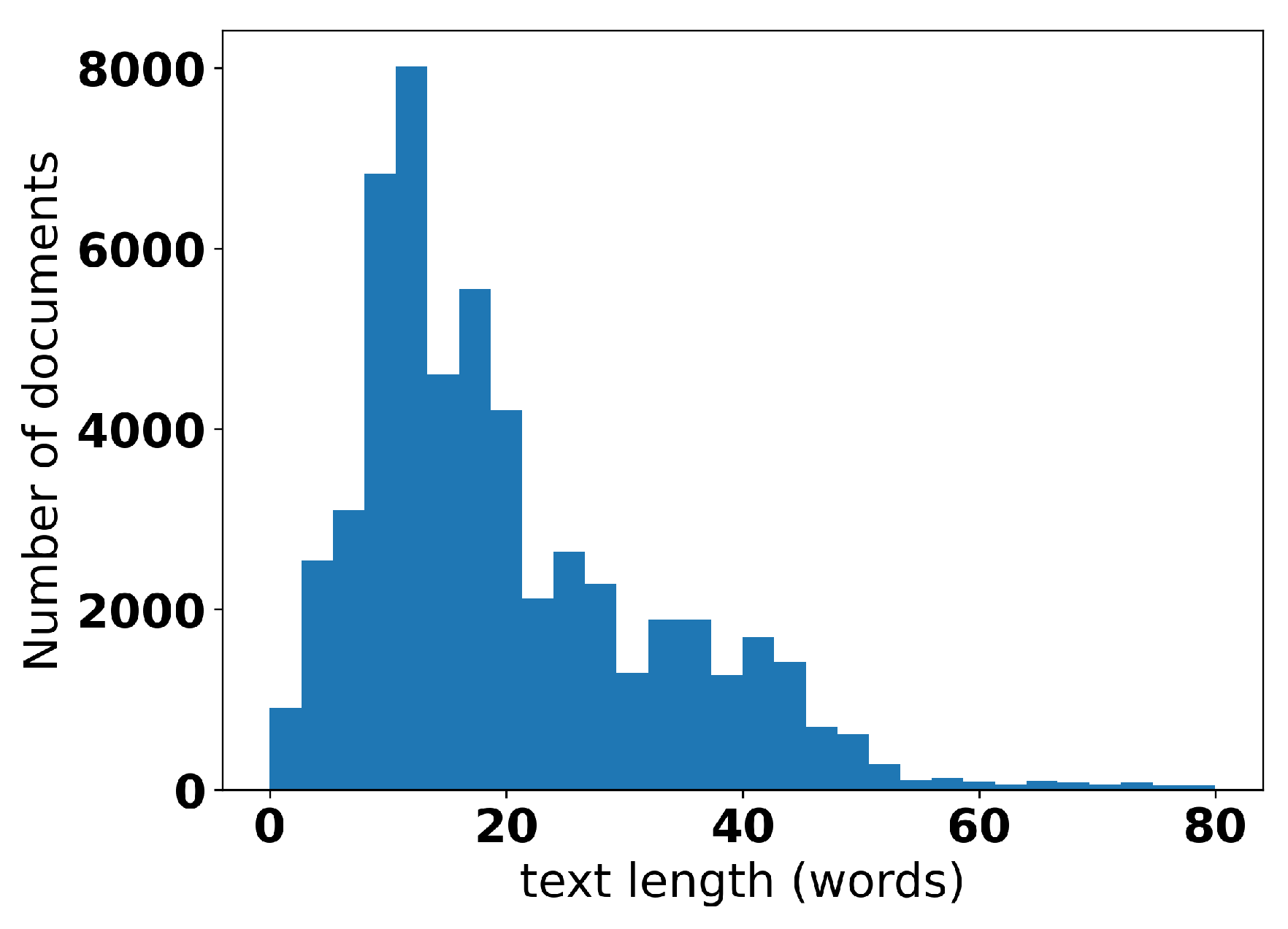

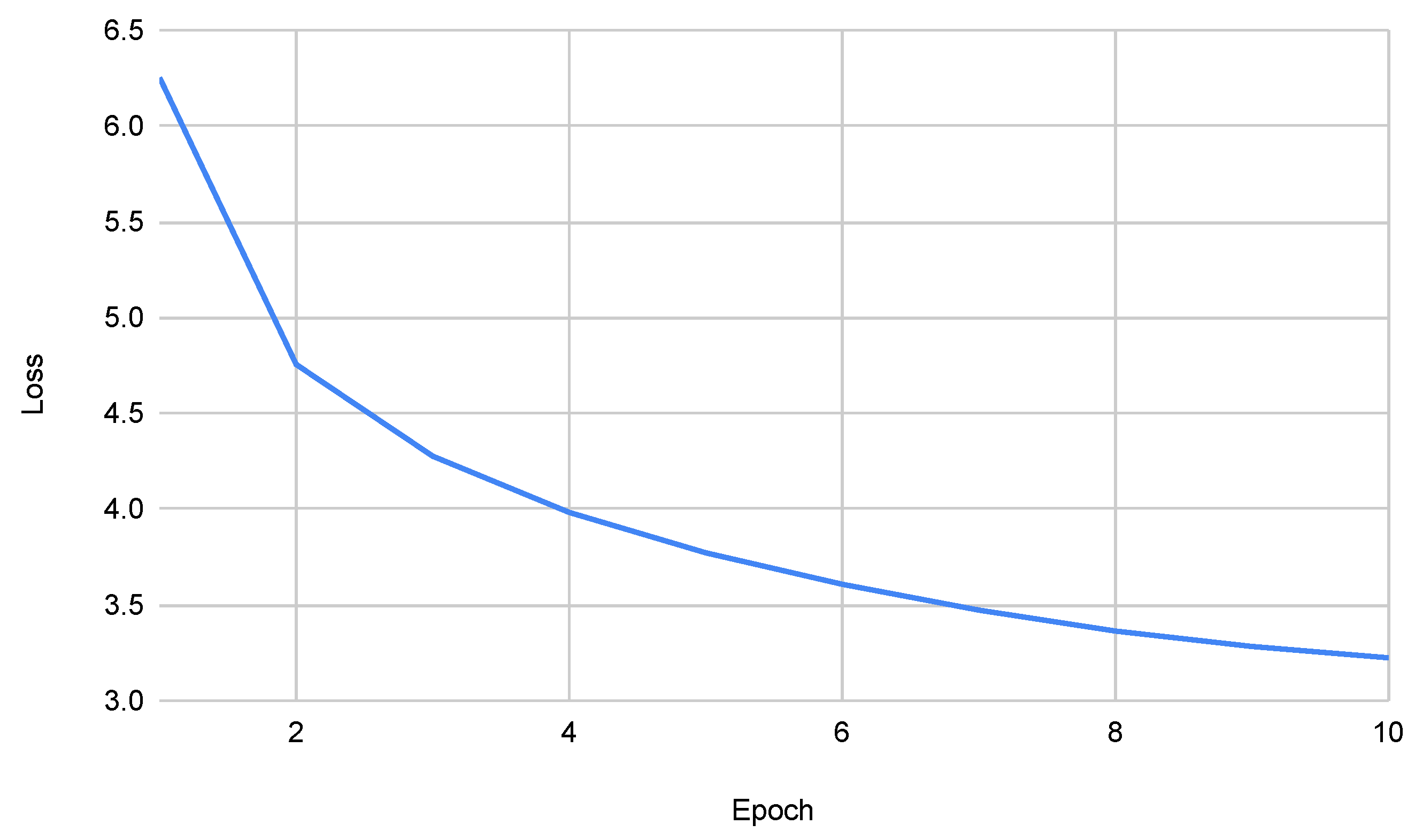

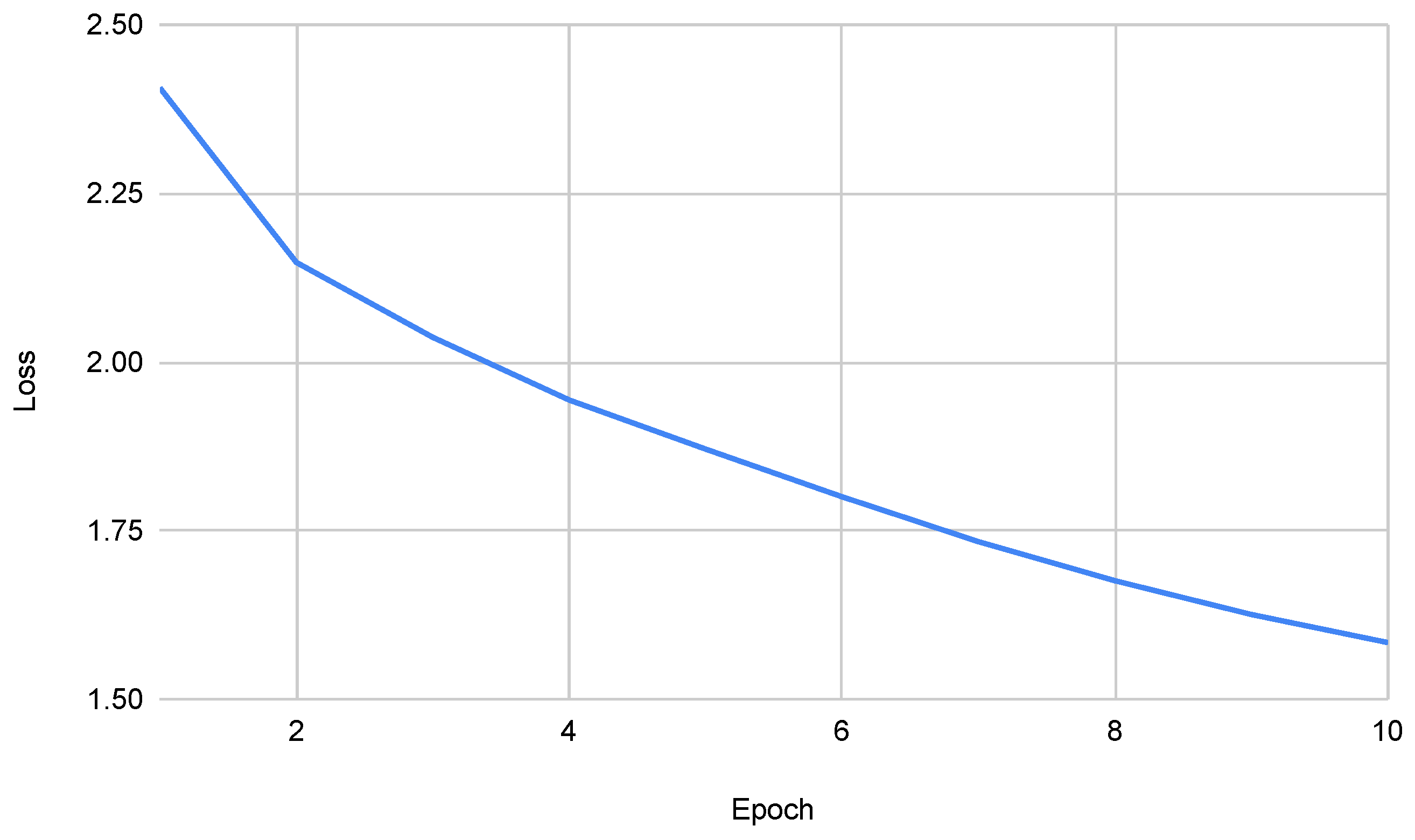

3.2. Training a Language Model for the Greek Social Media

4. Results

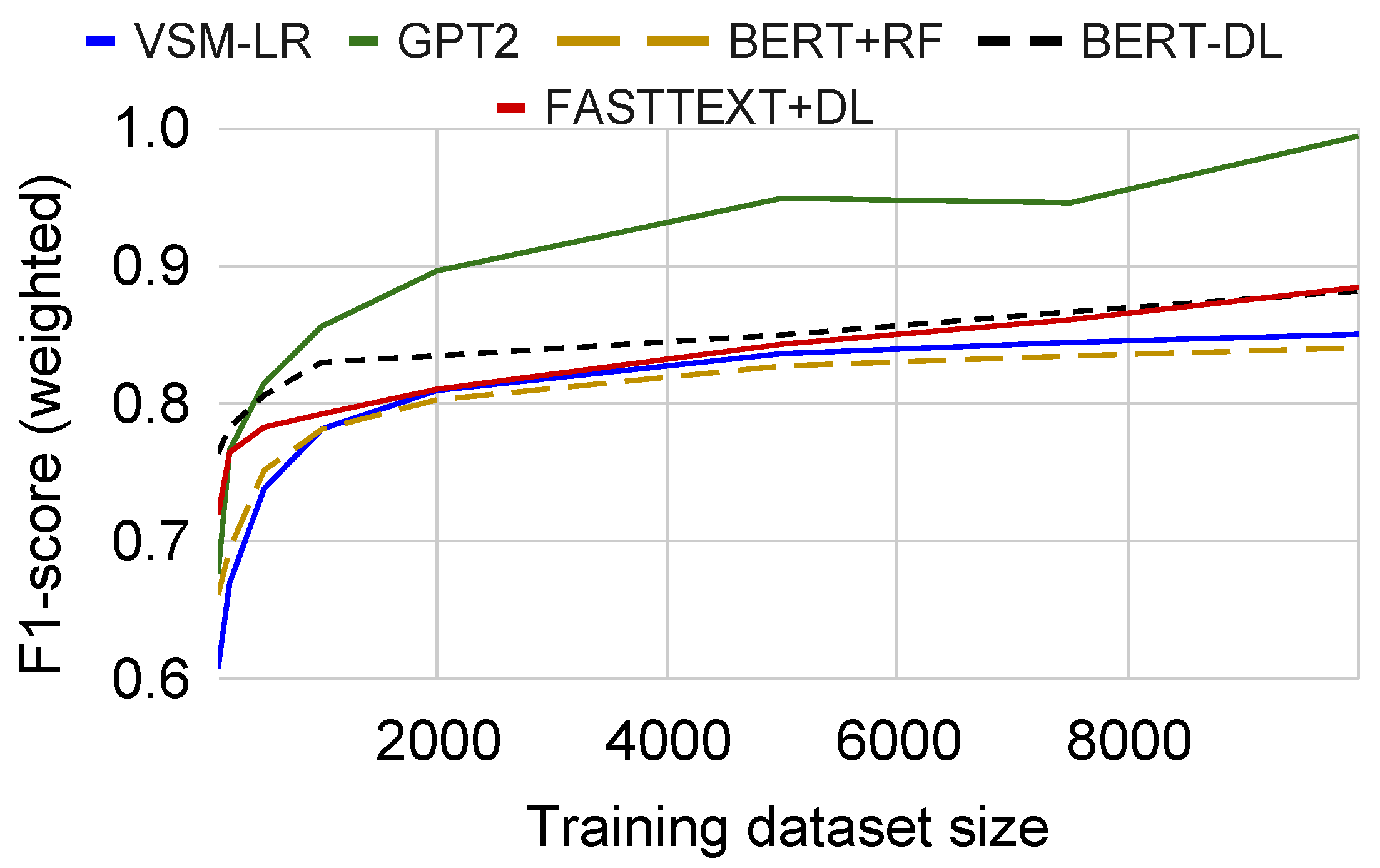

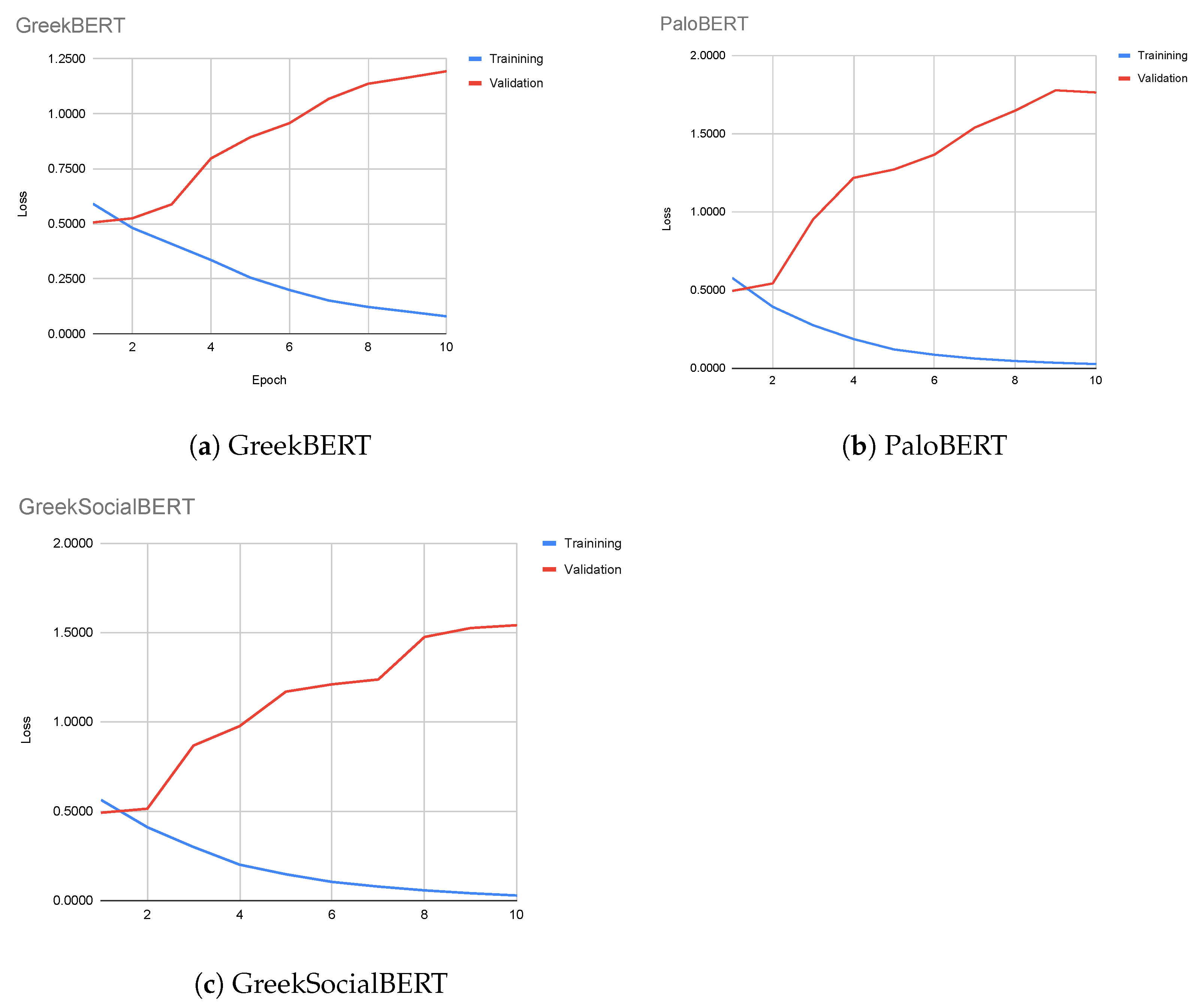

4.1. Binary Sentiment Prediction with Pre-Trained Language Models

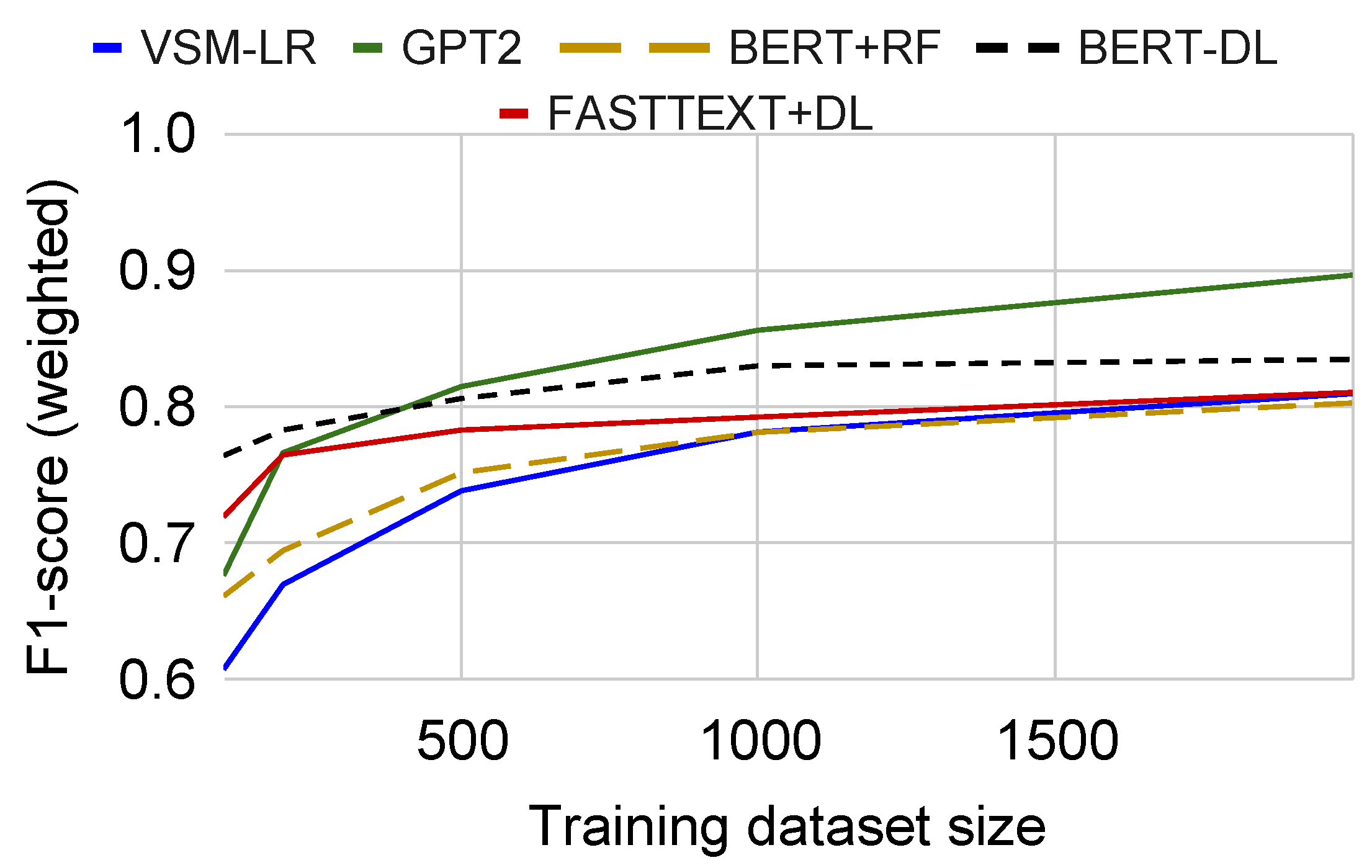

4.2. Three-Class Sentiment Prediction with Custom Language Models

5. Discussion & Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| BERT | Bidirectional Encoder Representations from Transformers |
| BoW | Bag of Words |
| DL | Deep Learning |
| ELMo | Embeddings from Language Models |
| GPT-2 | Generative Pre-trained Transformer 2 |
| GPT-3 | Generative Pre-trained Transformer 3 |
| LDA | Linear Discriminant Analysis |
| LR | Logistic Regression |
| LSA | Latent Semantic Analysis |
| LSI | Latent Semantic Indexing |
| LSTM | Long Short-Term Memory |
| NB | Naive Bayesian Classifier |
| NER | Named-Entity Recognition |
| NLP | Natural Language Processing |
| NN | Neural Network |
| OOV | Out of Vocabulary |
| PLSA | Probabilistic Latent Semantic Analysis |
| PLSI | Probabilistic Latent Semantic Indexing |
| POS | Part of Speech |
| RBF | Radial Basis Function |
| RF | Random Forest |
| SVM | Support Vector Machines |
| TF-IDF | Term Frequency, Inverse Document Frequency |
| VSM | Vector Space Model |


| Source | Positive | Negative | Neutral |
|---|---|---|---|
| Blogs | 79 | 99 | 433 |
| (source name missing) | 1271 | 553 | 4575 |
| Forums | 1 | 3 | 14 |
| (source name missing) | 257 | 23 | 2128 |
| News | 697 | 372 | 3201 |
| (source name missing) | 4295 | 6869 | 33,297 |
| YouTube | 442 | 58 | 1143 |
| Total | 7042 | 7977 | 44,791 |
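The class imbalance visible in the totals above can be quantified with a few lines of Python. This is a minimal sketch rather than part of the original study: the counts are copied from the "Total" row, while the names `totals`, `class_shares`, and `majority_baseline` are introduced here purely for illustration.

```python
# Class totals copied from the "Total" row of the table above.
totals = {"positive": 7042, "negative": 7977, "neutral": 44791}


def class_shares(counts):
    """Return each class's share of all documents as a fraction in [0, 1]."""
    n = sum(counts.values())
    return {label: count / n for label, count in counts.items()}


shares = class_shares(totals)
for label, share in shares.items():
    print(f"{label:>8}: {share:.1%}")

# Accuracy a trivial classifier would reach on data with this distribution
# by always predicting the most frequent class.
majority_label = max(totals, key=totals.get)
majority_baseline = shares[majority_label]
print(f"always-{majority_label} baseline accuracy: {majority_baseline:.1%}")
```

Roughly three quarters of the documents are neutral, so plain accuracy would reward a classifier that ignores the two minority classes, which is one reason to inspect per-class precision, recall, and F1.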

| Language Model | Negative P | Negative R | Negative F1 | Positive P | Positive R | Positive F1 | Neutral P | Neutral R | Neutral F1 | Avg. F1 |
|---|---|---|---|---|---|---|---|---|---|---|
| GreekBERT | 67.88 | 26.10 | 37.70 | 72.04 | 38.86 | 50.49 | 81.46 | 96.02 | 88.14 | 77.14 |
| PaloBERT | 62.70 | 42.58 | 50.72 | 76.39 | 35.56 | 48.53 | 82.66 | 94.13 | 88.03 | 78.53 |
| GreekSocialBERT | 61.35 | 41.63 | 49.60 | 62.82 | 55.10 | 58.71 | 84.73 | 91.10 | 87.80 | 79.40 |
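As a sanity check on how the P, R, and F1 columns relate, the F1 values can be recomputed from precision and recall as their harmonic mean. The sketch below uses the negative-class figures from the table above; the helper name `f1_score` and the dictionary `negative_rows` are illustrative, not taken from the original work.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both on the same scale, e.g. percent)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) for the negative class, copied from the table above.
negative_rows = {
    "GreekBERT": (67.88, 26.10, 37.70),
    "PaloBERT": (62.70, 42.58, 50.72),
    "GreekSocialBERT": (61.35, 41.63, 49.60),
}

for model, (p, r, reported) in negative_rows.items():
    recomputed = f1_score(p, r)
    print(f"{model:<16} recomputed F1 = {recomputed:.2f}, reported = {reported:.2f}")
```

Small differences in the second decimal place are expected, since the table reports precision and recall already rounded.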

| Language Model | Negative P | Negative R | Negative F1 | Positive P | Positive R | Positive F1 | Neutral P | Neutral R | Neutral F1 | Avg. F1 |
|---|---|---|---|---|---|---|---|---|---|---|
| GreekBERT | 61.93 | 38.83 | 47.73 | 70.25 | 41.74 | 52.37 | 82.88 | 93.53 | 87.88 | 78.45 |
| PaloBERT | 89.86 | 13.11 | 22.89 | 71.27 | 42.24 | 53.04 | 80.23 | 97.32 | 87.95 | 79.80 |
| GreekSocialBERT | 71.43 | 23.55 | 35.42 | 67.77 | 45.76 | 54.63 | 81.71 | 95.44 | 88.04 | 80.17 |
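The table does not state how the Avg. F1 column is aggregated, so the following sketch only contrasts two common options, the unweighted (macro) mean and a support-weighted mean, for the GreekBERT row directly above. It rests on a strong assumption: the whole-corpus class totals from the dataset table stand in for the unknown test-set supports. The function names are illustrative.

```python
# Per-class F1 for the GreekBERT row of the table directly above.
f1 = {"negative": 47.73, "positive": 52.37, "neutral": 87.88}

# ASSUMPTION: whole-corpus class totals are used as stand-ins for the
# test-set supports, which are not given in this section.
support = {"negative": 7977, "positive": 7042, "neutral": 44791}


def macro_average(scores):
    """Unweighted mean over classes; every class counts equally."""
    return sum(scores.values()) / len(scores)


def weighted_average(scores, weights):
    """Mean over classes, each weighted by its number of examples."""
    total = sum(weights[label] for label in scores)
    return sum(scores[label] * weights[label] for label in scores) / total


macro_f1 = macro_average(f1)
weighted_f1 = weighted_average(f1, support)
print(f"macro F1    = {macro_f1:.2f}")
print(f"weighted F1 = {weighted_f1:.2f}")
print("reported Avg. F1 = 78.45")
```

Under these assumptions the support-weighted mean lands much closer to the reported value than the macro mean does, which suggests, without confirming, that the reported average is dominated by the neutral class.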

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Alexandridis, G.; Varlamis, I.; Korovesis, K.; Caridakis, G.; Tsantilas, P. A Survey on Sentiment Analysis and Opinion Mining in Greek Social Media. Information 2021, 12, 331. https://doi.org/10.3390/info12080331
