SOPHIA: An Event-Based IoT and Machine Learning Architecture for Predictive Maintenance in Industry 4.0

Abstract

1. Introduction

2. Related Work

3. Materials and Methods

3.1. Log File Data Collection

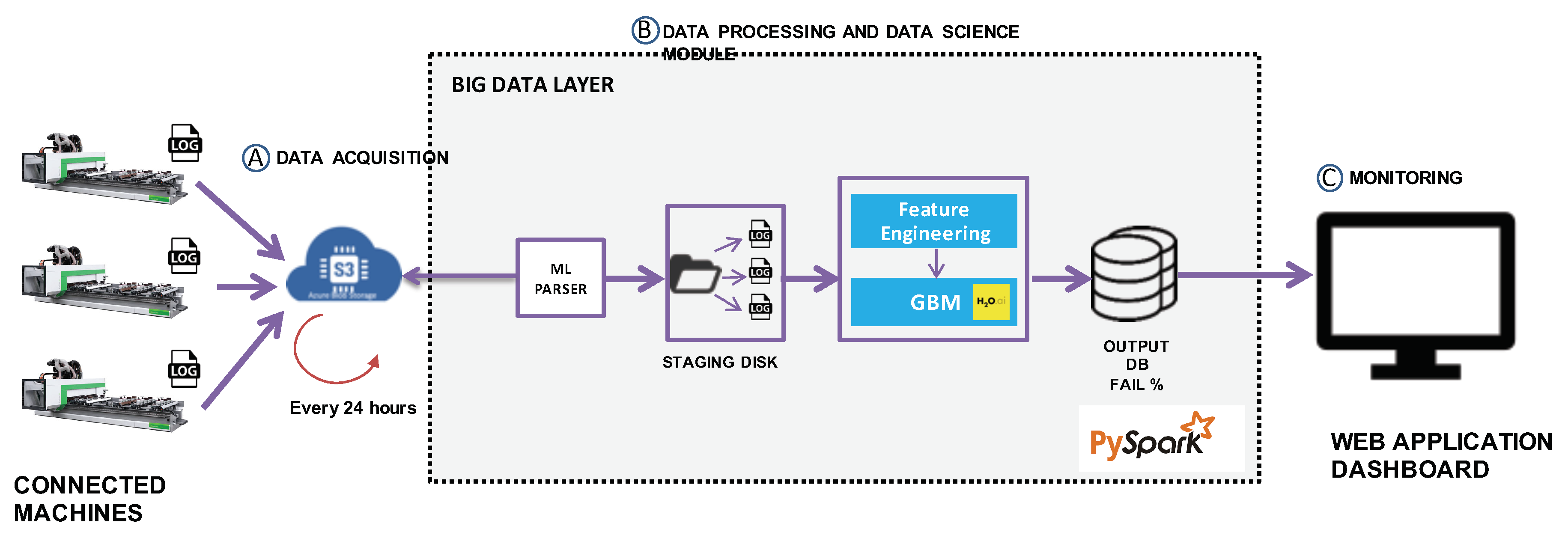

3.2. Big Data Architecture

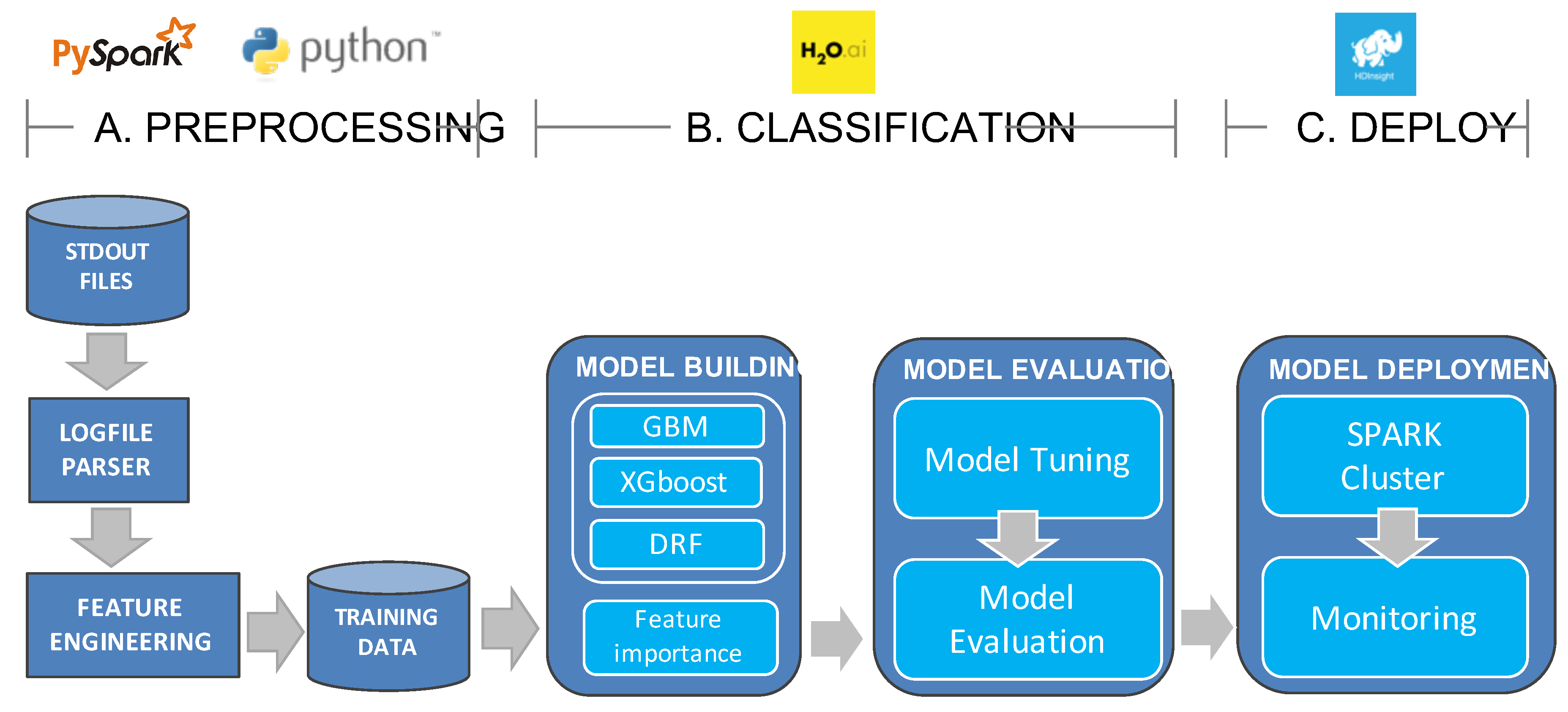

3.3. Data Science Module

3.3.1. Log-File Parsing Module

3.3.2. Feature Engineering

3.3.3. Model Optimization

3.3.4. Machine Learning Model Building

3.4. Experimental Procedures and Metrics

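- Accuracy, Recall and Precision:
  $$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}, \qquad \mathrm{Recall} = \frac{TP}{TP + FN}, \qquad \mathrm{Precision} = \frac{TP}{TP + FP},$$
  where $TP$ = True Positives, $TN$ = True Negatives, $FP$ = False Positives, and $FN$ = False Negatives.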

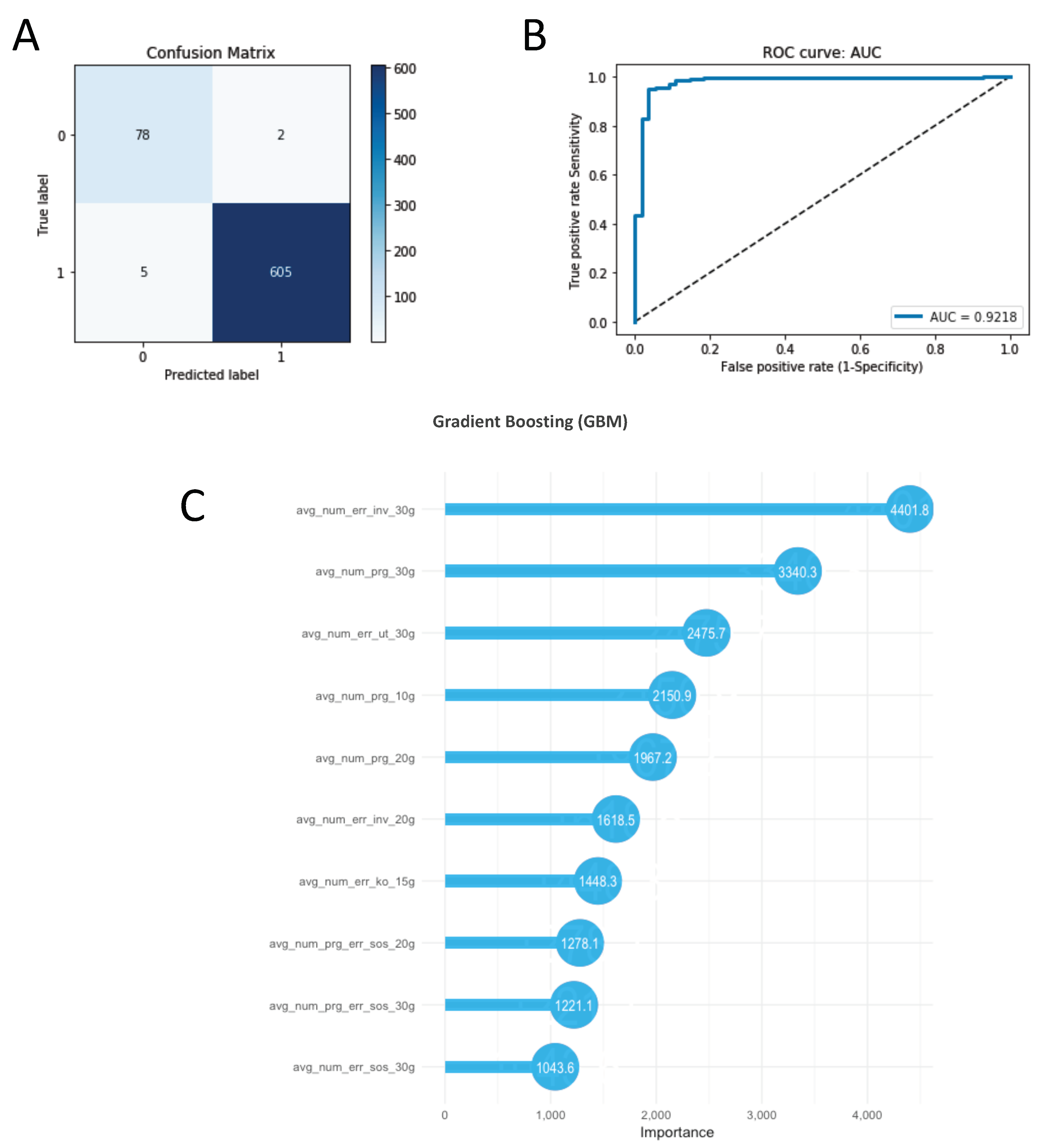

- Receiver operating characteristic (ROC): the ROC curve is obtained by plotting the true positive rate (TPR) against the false positive rate (FPR) at various threshold settings. It illustrates the performance of a binary classifier as its discrimination threshold is varied. We used the area under the ROC curve (AUC) to compare the performance of the classifiers.

- Confusion matrix: the square matrix that shows the types of error made in a supervised task. For the considered binary task, we report the numbers of true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN).
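The metrics listed above can be computed directly from binary labels. The following is a minimal, dependency-free sketch for illustration only (libraries such as scikit-learn provide equivalent functions); the function names are hypothetical. The AUC uses the pairwise-ranking formulation, which equals the area under the ROC curve (ties count one half) but costs O(n_pos × n_neg), so it only suits small examples.

```python
from typing import List, Sequence, Tuple


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (TP, TN, FP, FN) for binary labels where 1 is the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy_recall_precision(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float, float]:
    """Accuracy, recall and precision as defined above (0.0 when a denominator is empty)."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    return accuracy, recall, precision


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the probability that a random positive outscores a random negative."""
    pos: List[float] = [s for t, s in zip(y_true, scores) if t == 1]
    neg: List[float] = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties contribute half a win
    return wins / (len(pos) * len(neg))
```

For example, with `y_true = [1, 1, 0, 0, 1]` and `y_pred = [1, 0, 0, 1, 1]` the counts are TP = 2, TN = 1, FP = 1, FN = 1, giving an accuracy of 0.6 and a recall and precision of 2/3.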

4. Experimental Results

4.1. Log File Parser

4.2. Feature Engineering

4.3. Classification Results

4.4. Model Feature Importance

5. Platform Integration: PdM Application and Machine Down Monitoring

6. Discussions

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| StdOut Flag | Regular Expression | Format TXT | Description |
|---|---|---|---|
| START | START.* | START (18/10/2018 10:00:08)=BdriveIso/Step13 _strettaDX campo5 650x334x19 _20181018100008547,0,1,0,-1,1 | Indicates the timestamp of program execution START |
| Start - BBOX_35 | BBOX_35 | PLC: 277545608678.051 BBOX_35(1 1)[6137216] | Indicates the start point of an execution center |
| BBOX | BBOX_.* | PLC: 15401013.151 BBOX_22(0 1)[4598] | All BBOX events |
| PLC errors (Errori PLC) | ERRORE ?=(PLC.*?) ?>.* | ERRORE (0-27/09/2018 08:47:25)=PLC 9314 >I/O device missing or incorrectly defined. ybn.10.0.10.PE75 (PE71-IN) | All PLC errors |
| End - BBOX_35 | BBOX_35 | PLC: 277545608678.051 BBOX_35(0 0)[6136089] | Indicates the end point of an execution center |
| ENDP | ENDP.* | ENDP (18/10/2018 09:59:57)=0,0 | Indicates the timestamp of program execution END |
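The StdOut flags above can be turned into a small line classifier. The sketch below rests on several assumptions: the regular expressions are tightened versions of the table's patterns built only from the sample lines shown; the ERRORE pattern is a hypothetical, loosened variant, because as printed `ERRORE ?=` would not match the parenthesised timestamp in the sample line; and the (1 1)/(0 0) reading of BBOX_35 follows the Start/End rows. The names `parse_line`, `START_RE`, and so on are illustrative.

```python
import re
from datetime import datetime
from typing import Optional

# Timestamp format seen in the sample lines, e.g. "(18/10/2018 10:00:08)".
_TS = r"(\d{2}/\d{2}/\d{4} \d{2}:\d{2}:\d{2})"

START_RE = re.compile(r"^START \(" + _TS + r"\)")
ENDP_RE = re.compile(r"^ENDP \(" + _TS + r"\)")
# Hypothetical variant of the ERRORE pattern: skips the "(0-dd/mm/yyyy hh:mm:ss)"
# prefix and captures the numeric PLC code that precedes ">".
ERROR_RE = re.compile(r"^ERRORE \(\d+-" + _TS + r"\)=PLC ?(\d+) ?>")
# "PLC: <counter> BBOX_<n>(<a> <b>)[<value>]"
BBOX_RE = re.compile(r"^PLC: [\d.]+ (BBOX_\d+)\((\d) (\d)\)")


def _ts(text: str) -> datetime:
    """Parse a day-first timestamp such as '18/10/2018 10:00:08'."""
    return datetime.strptime(text, "%d/%m/%Y %H:%M:%S")


def parse_line(line: str) -> Optional[dict]:
    """Map one StdOut line to an event dict, or None if no flag matches."""
    line = line.strip()
    m = START_RE.match(line)
    if m:
        return {"flag": "START", "time": _ts(m.group(1))}
    m = ENDP_RE.match(line)
    if m:
        return {"flag": "ENDP", "time": _ts(m.group(1))}
    m = ERROR_RE.match(line)
    if m:
        # "PLC" + digits, the same spelling used in the error-group table
        return {"flag": "PLC_ERROR", "time": _ts(m.group(1)), "code": "PLC" + m.group(2)}
    m = BBOX_RE.match(line)
    if m:
        box, state = m.group(1), (m.group(2), m.group(3))
        flag = "BBOX"
        if box == "BBOX_35" and state == ("1", "1"):
            flag = "BBOX_35_START"
        elif box == "BBOX_35" and state == ("0", "0"):
            flag = "BBOX_35_END"
        return {"flag": flag, "box": box}
    return None
```

Lines that match no flag return `None` and can simply be skipped when building the event stream.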

| Error Classification Group | PLC Code |
|---|---|
| Emergency/inverter | PLC9004, PLC9705 |
| Security control/inverter | PLC90455 |
| KO | PLC9001, PLC9024, PLC9096, PLC9103, PLC9224, PLC9282, PLC9284, PLC9366, PLC9532, PLC9763, PLC9766, PLC9952, PLC9955, PLC90177, PLC90208, PLC90223, PLC90226, PLC90429, PLC90712, PLC90713, PLC90714, PLC90715, PLC90716, PLC90717, PLC90718, PLC91219, PLC91220 |
| KO / Emergency | PLC9690 |
| KO / Inverter | PLC9038 |
| KO / Overheating | PLC9054, PLC9530, PLC9531, PLC90609 |
| Emergency | PLC9005, PLC9035, PLC9068, PLC9191, PLC9448, PLC9517, PLC9643, PLC9689, PLC9954, PLC9996, PLC90062, PLC90467, PLC90473, PLC90607 |
| Emergency/Security control | PLC90454, PLC90456, PLC90465, PLC90485, PLC90620, PLC90623, PLC90625, PLC90627, PLC90628 |
| Inverter | PLC9029, PLC9093, PLC9514, PLC9644, PLC9746, PLC9044, PLC9915 |
| Overheating | PLC90445, PLC90446, PLC90448, PLC90449, PLC9012, PLC90489, PLC90490 |
| Overheating / inverter | PLC90500 |
| Security control | PLC90457, PLC90458, PLC90459, PLC90461, PLC90469, PLC90470, PLC90477, PLC90478, PLC90481, PLC90482, PLC90483, PLC90621, PLC90622, PLC90624, PLC90626 |
| Security control / inverter | PLC90462 |
| Tool change | PLC9116, PLC9118, PLC9145, PLC9146, PLC9147, PLC9148, PLC9149, PLC9159, PLC9167, PLC9172, PLC9338, PLC9364, PLC9740, PLC9742 |
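A minimal sketch of how the grouping above could be applied to parsed PLC codes. Only an excerpt of the table is transcribed, and the rule that a composite group such as "KO / Overheating" counts toward each of its parts is our assumption; the table itself does not say how composite groups are counted. `PLC_GROUPS`, `categories`, and `count_by_category` are hypothetical names.

```python
from collections import Counter
from typing import Dict, Iterable, Set

# Excerpt of the error-group table (not the full mapping); extend as needed.
PLC_GROUPS: Dict[str, str] = {
    "PLC9004": "Emergency/inverter",
    "PLC9690": "KO / Emergency",
    "PLC9038": "KO / Inverter",
    "PLC9054": "KO / Overheating",
    "PLC9001": "KO",
    "PLC9005": "Emergency",
    "PLC9029": "Inverter",
    "PLC90445": "Overheating",
    "PLC90457": "Security control",
    "PLC9116": "Tool change",
}


def categories(code: str) -> Set[str]:
    """Split a (possibly composite) group into lower-cased category names.

    Assumption: an error in a composite group counts toward each of its parts.
    """
    group = PLC_GROUPS.get(code)
    if group is None:
        return {"unknown"}
    return {part.strip().lower() for part in group.split("/")}


def count_by_category(codes: Iterable[str]) -> Counter:
    """Count error occurrences per category over a sequence of PLC codes."""
    counts: Counter = Counter()
    for code in codes:
        counts.update(categories(code))
    return counts
```

Lower-casing the parts makes "inverter" and "Inverter" fall into the same bucket, since the table spells the group names inconsistently.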

| Model | Hyperparameter Ranges | Optimal Hyperparameters |
|---|---|---|
| Distributed Random Forest (DRF) | Max depth = [5, 6, 7, 8, 9]; Number of trees = [10, 20, 30, 40, 50]; Sample rate = [0.5, 0.6, 0.7, 0.8, 1.0]; Column sample rate per tree = [0.2, 0.4, 0.5, 0.6, 0.7, 0.8, 1.0] | Max depth = 9; Number of trees = 30; Sample rate = 0.8; Column sample rate per tree = 0.5 |
| Gradient Boosting Machine (GBM) | Learning rate = [0.05, 0.1, 0.2]; Max depth = [5, 6, 7, 8, 9]; Number of trees = [50, 100, 150]; Sample rate = [0.7, 0.8, 0.9] | Learning rate = 0.2; Max depth = 9; Number of trees = 150; Sample rate = 0.7 |
| Extreme Gradient Boosting (XGBoost) | Learning rate = [0.05, 0.1, 0.2]; Max depth = [4, 5, 6, 7, 8, 9]; Number of trees = [50, 100, 150, 200, 250, 300]; Sample rate = [0.7, 0.8, 0.9]; Column sample rate per tree = [0.2, 0.4, 0.5, 0.6, 0.7, 0.8, 1.0] | Learning rate = 0.05; Max depth = 9; Number of trees = 300; Sample rate = 0.7; Column sample rate per tree = 0.7 |
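The ranges in the table define a full Cartesian grid for each model. The sketch below transcribes them and enumerates every combination, which makes the size of each search explicit (875 combinations for DRF, 135 for GBM, 2268 for XGBoost). The dictionary keys use H2O-3 parameter names (`max_depth`, `ntrees`, `sample_rate`, `col_sample_rate_per_tree`, `learn_rate`); that mapping is our assumption, and `score` in `grid_search` is a hypothetical placeholder for whatever validation metric drives the selection.

```python
from itertools import product
from typing import Any, Callable, Dict, List

Grid = Dict[str, List[Any]]

# Ranges transcribed from the table above; key names assume H2O-3 conventions.
DRF_GRID: Grid = {
    "max_depth": [5, 6, 7, 8, 9],
    "ntrees": [10, 20, 30, 40, 50],
    "sample_rate": [0.5, 0.6, 0.7, 0.8, 1.0],
    "col_sample_rate_per_tree": [0.2, 0.4, 0.5, 0.6, 0.7, 0.8, 1.0],
}
GBM_GRID: Grid = {
    "learn_rate": [0.05, 0.1, 0.2],
    "max_depth": [5, 6, 7, 8, 9],
    "ntrees": [50, 100, 150],
    "sample_rate": [0.7, 0.8, 0.9],
}
XGB_GRID: Grid = {
    "learn_rate": [0.05, 0.1, 0.2],
    "max_depth": [4, 5, 6, 7, 8, 9],
    "ntrees": [50, 100, 150, 200, 250, 300],
    "sample_rate": [0.7, 0.8, 0.9],
    "col_sample_rate_per_tree": [0.2, 0.4, 0.5, 0.6, 0.7, 0.8, 1.0],
}


def expand(grid: Grid) -> List[Dict[str, Any]]:
    """Cartesian product of the grid: one dict per hyperparameter combination."""
    keys = list(grid)
    return [dict(zip(keys, values)) for values in product(*(grid[k] for k in keys))]


def grid_search(grid: Grid, score: Callable[[Dict[str, Any]], float]) -> Dict[str, Any]:
    """Exhaustive search: return the combination with the highest score.

    `score` is a hypothetical callback, e.g. a cross-validated metric.
    """
    return max(expand(grid), key=score)
```

Each optimal setting reported in the table is one of the enumerated combinations, so an exhaustive search over these grids could in principle return it.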


| Lag Feature (W = {30, 20, 15, 10} days) | Description |
|---|---|
| Programs | Reports the average frequency of program aggregates of size W |
| Security control errors | Reports the average frequency of security control error aggregates of size W |
| Inverter errors | Reports the average frequency of inverter error aggregates of size W |
| KO error | Reports the average frequency of KO error aggregates of size W |
| SOS error | Reports the average frequency of SOS error aggregates of size W |
| Emergency errors | Reports the average frequency of emergency error aggregates of size W |
| Overheating error | Reports the average frequency of overheating error aggregates of size W |
| Tool change | Reports the average frequency of tool change aggregates of size W |
| Global errors | Reports the average frequency of all error (security control, inverter, KO, SOS, overheating, tool change) aggregates of size W |
| SOS program error | Reports the average number of programs within aggregates of size W |
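One possible reading of "average frequency of ... aggregates of size W" is a trailing mean of daily event counts over the last W days. The sketch below assumes daily counts per event type as input and emits one column per event type and window; the daily granularity, the column naming (`<type>_w<W>`), and the choice to leave incomplete windows empty (`None`) are our assumptions, not details given in the table.

```python
from typing import Dict, List, Optional, Sequence

WINDOWS = (30, 20, 15, 10)  # W values from the table, in days


def rolling_mean(daily_counts: Sequence[float], w: int) -> List[Optional[float]]:
    """Trailing mean over the last w days; None until a full window is available."""
    out: List[Optional[float]] = []
    running = 0.0
    for i, count in enumerate(daily_counts):
        running += count
        if i >= w:
            running -= daily_counts[i - w]  # drop the day that left the window
        out.append(running / w if i + 1 >= w else None)
    return out


def lag_features(
    counts: Dict[str, Sequence[float]], windows: Sequence[int] = WINDOWS
) -> Dict[str, List[Optional[float]]]:
    """One feature column per (event type, window), e.g. 'inverter_w10'."""
    return {
        f"{name}_w{w}": rolling_mean(series, w)
        for name, series in counts.items()
        for w in windows
    }
```

Keeping a running sum makes each window update O(1), so all four windows can be computed in a single pass per event type.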

| Model | RUL | Accuracy, training (%) | Accuracy, testing (%) | Recall, training (%) | Recall, testing (%) | Precision, training (%) | Precision, testing (%) |
|---|---|---|---|---|---|---|---|
| Distributed Random Forest (DRF) | 30 | 97.4 (0.4) | 96.8 | 98.8 (0.4) | 96.5 | 98.3 (0.3) | 100 |
| | 20 | 97.8 (0.2) | 96.5 | 99.3 (0.2) | 96.5 | 98.4 (0.4) | 99.3 |
| | 10 | 98.2 (0.5) | 96.5 | 99.6 (0.2) | 96.9 | 98.5 (0.4) | 99.3 |
| Gradient Boosting Machine (GBM) | 30 | 98.2 (0.2) | 98.9 | 99.6 (0.1) | 99.6 | 98.3 (0.3) | 99.1 |
| | 20 | 98.7 (0.4) | 97.8 | 99.5 (0.2) | 98.7 | 99.1 (0.2) | 98.8 |
| | 10 | 98.5 (0.8) | 97.8 | 93.3 (0.2) | 97.9 | 99.2 (0.6) | 99.8 |
| Extreme Gradient Boosting (XGBoost) | 30 | 98.1 (0.5) | 98.8 | 99.5 (0.1) | 100 | 98.4 (0.7) | 98.7 |
| | 20 | 98.3 (0.7) | 96.3 | 99.7 (0.01) | 100 | 98.4 (0.7) | 96.2 |
| | 10 | 98.4 (0.6) | 98.8 | 99.6 (0.1) | 99.8 | 98.8 (0.7) | 99.8 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Calabrese, M.; Cimmino, M.; Fiume, F.; Manfrin, M.; Romeo, L.; Ceccacci, S.; Paolanti, M.; Toscano, G.; Ciandrini, G.; Carrotta, A.; et al. SOPHIA: An Event-Based IoT and Machine Learning Architecture for Predictive Maintenance in Industry 4.0. Information 2020, 11, 202. https://doi.org/10.3390/info11040202

Calabrese M, Cimmino M, Fiume F, Manfrin M, Romeo L, Ceccacci S, Paolanti M, Toscano G, Ciandrini G, Carrotta A, et al. SOPHIA: An Event-Based IoT and Machine Learning Architecture for Predictive Maintenance in Industry 4.0. Information. 2020; 11(4):202. https://doi.org/10.3390/info11040202

Chicago/Turabian Style: Calabrese, Matteo, Martin Cimmino, Francesca Fiume, Martina Manfrin, Luca Romeo, Silvia Ceccacci, Marina Paolanti, Giuseppe Toscano, Giovanni Ciandrini, Alberto Carrotta, et al. 2020. "SOPHIA: An Event-Based IoT and Machine Learning Architecture for Predictive Maintenance in Industry 4.0" Information 11, no. 4: 202. https://doi.org/10.3390/info11040202

APA Style: Calabrese, M., Cimmino, M., Fiume, F., Manfrin, M., Romeo, L., Ceccacci, S., Paolanti, M., Toscano, G., Ciandrini, G., Carrotta, A., Mengoni, M., Frontoni, E., & Kapetis, D. (2020). SOPHIA: An Event-Based IoT and Machine Learning Architecture for Predictive Maintenance in Industry 4.0. Information, 11(4), 202. https://doi.org/10.3390/info11040202