Design of Distributed Discrete-Event Simulation Systems Using Deep Belief Networks

Abstract

1. Introduction and Background

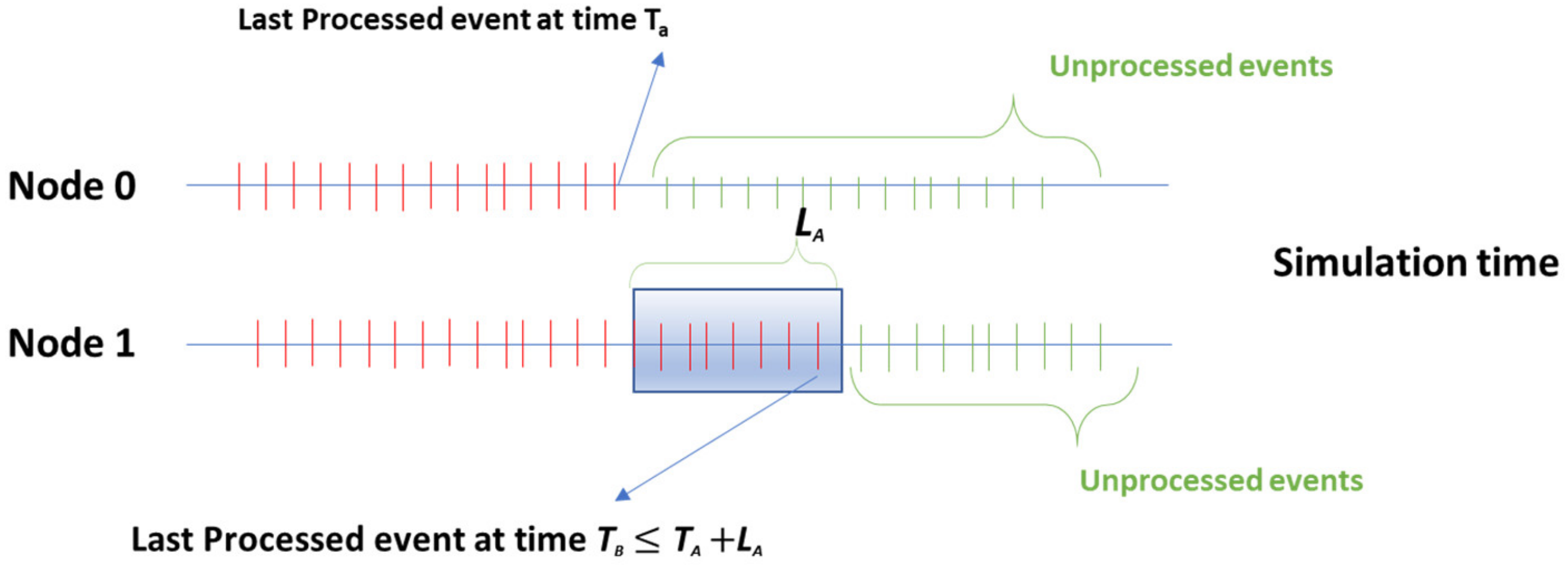

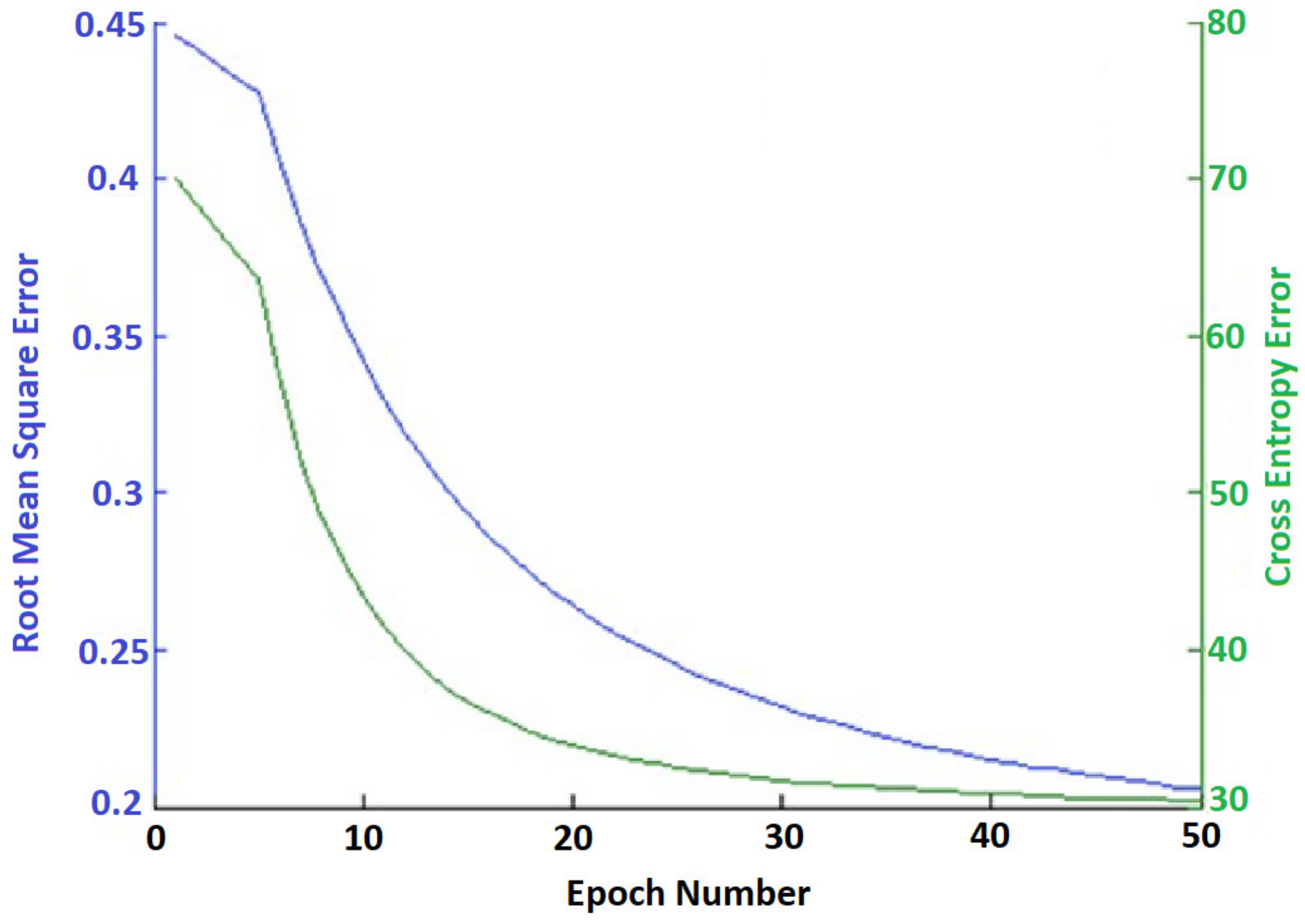

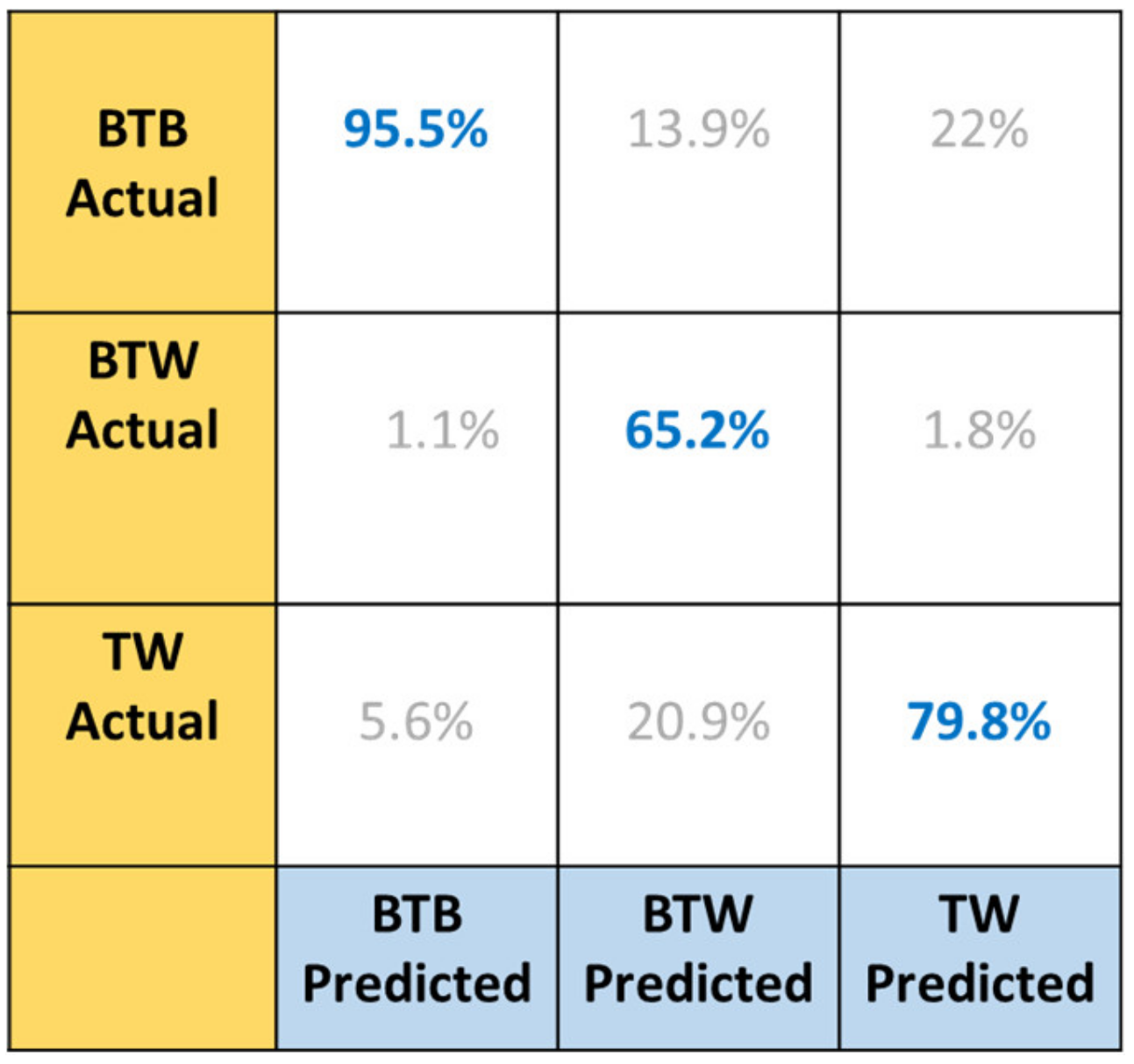

1.1. Conservative and Optimistic Schemes

1.1.1. Conservative Viewpoint

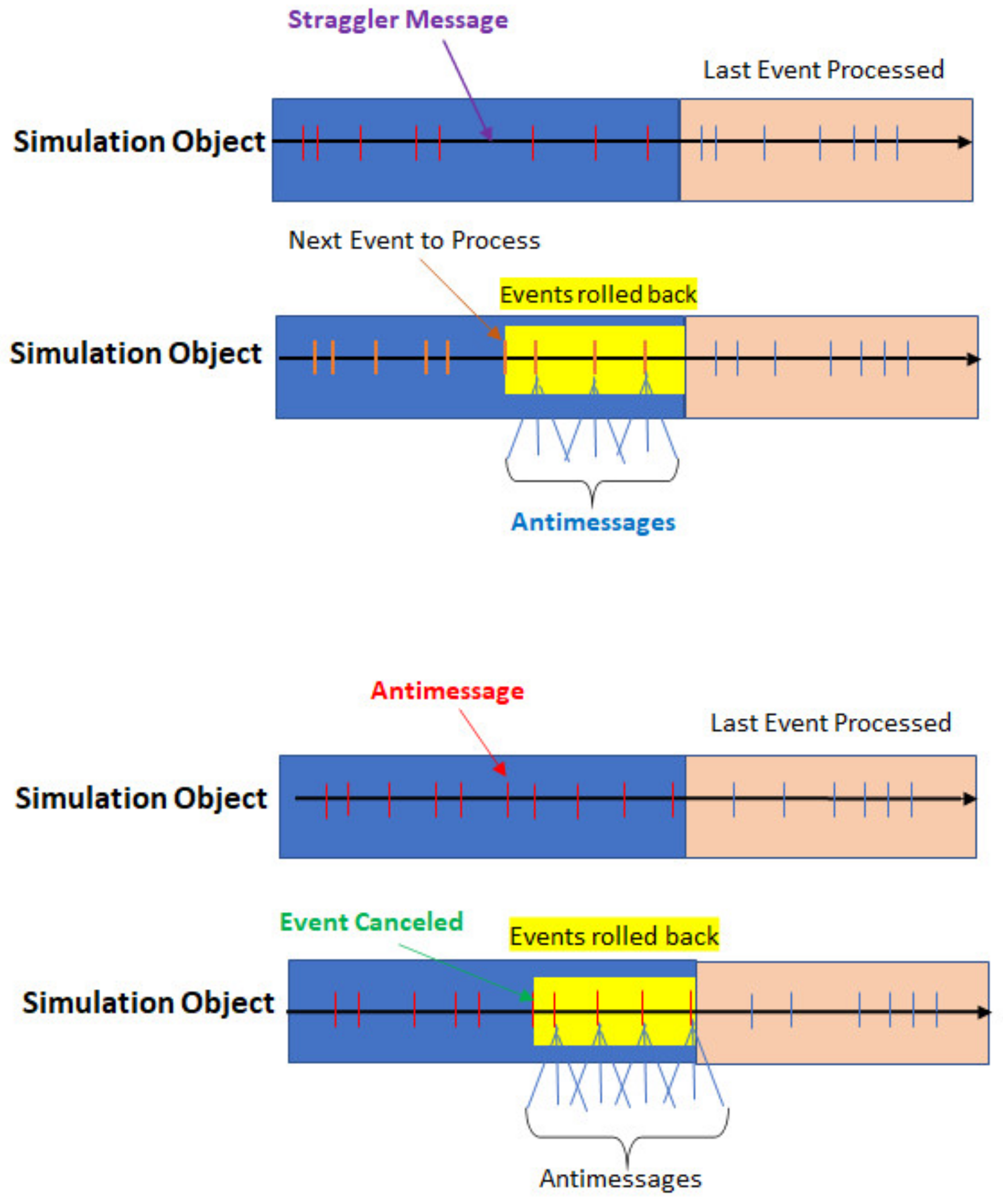

1.1.2. Optimistic Viewpoint

Time Warp (TW)

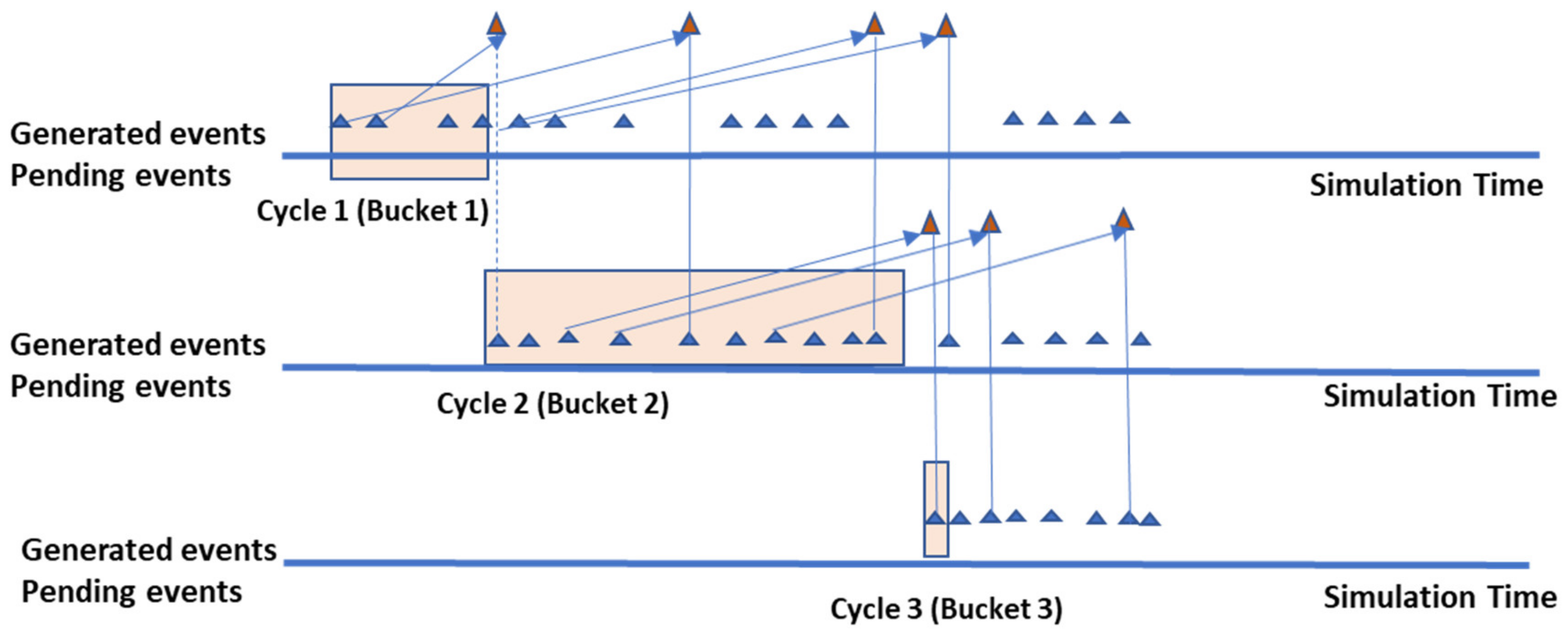

Breathing Time Buckets (BTB)

- BTB is TW without antimessages.

- BTB deals with events in the same style as fixed time buckets. The difference is that the size of the cycles is not predetermined.

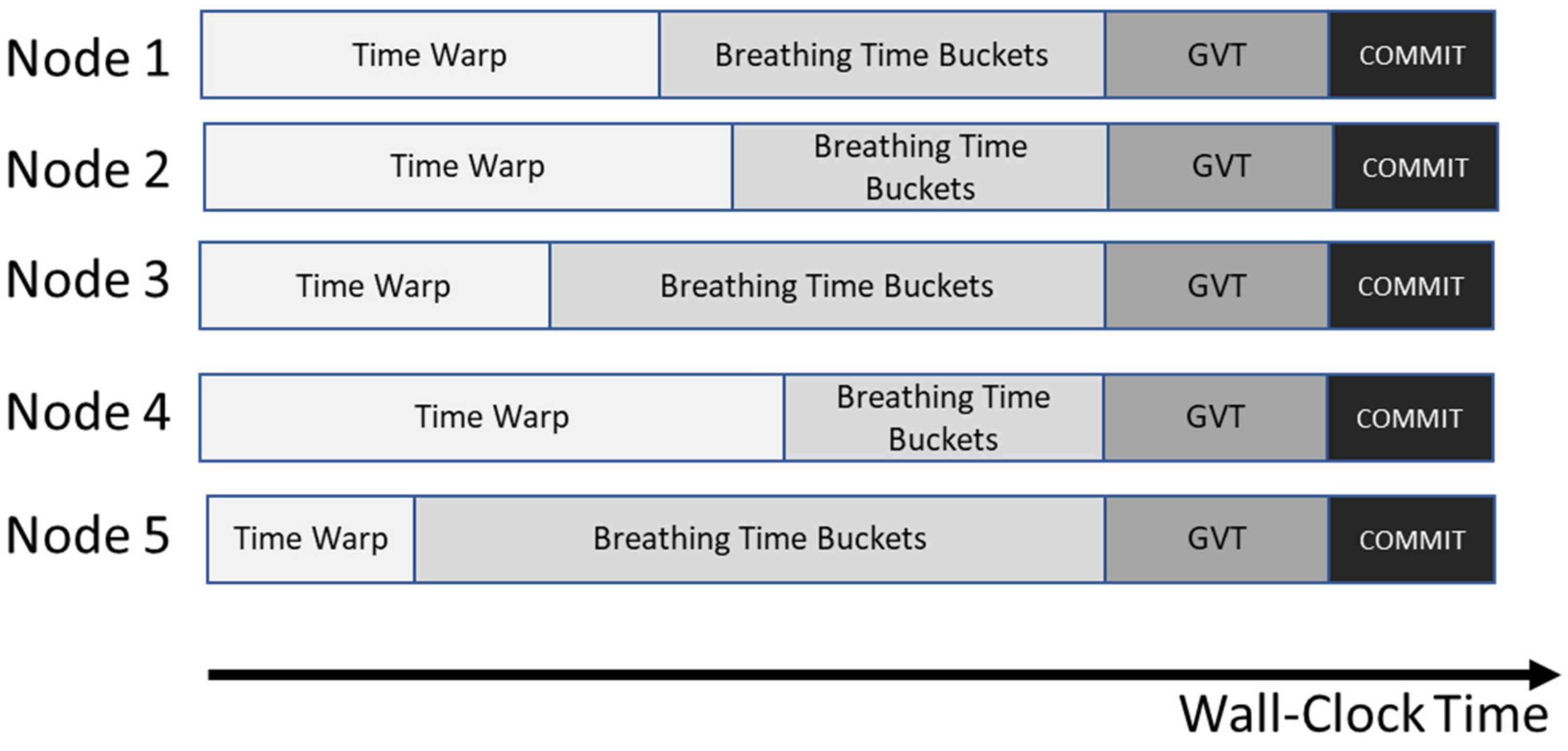

Breathing Time Warp (BTW)

- TW phase: Each cycle starts in TW mode. The key flow-control parameter to tune here is Nrisk: “Nrisk is the number of events processed beyond GVT by each node” (locally) “that are allowed to send their messages with risk” [21].

- BTB phase: At the end of the TW phase, messages are held back, and the BTB phase starts execution.

- Computing GVT: GVT is computed at the end of the BTB phase. Two further flow-control parameters need tuning, Ngvt and Nopt. “Ngvt is the number of messages received by each node before requesting a GVT update” [21], whereas “Nopt is the number of events allowed to be processed on each node beyond GVT” [21]. Together, Ngvt and Nopt control when GVT is calculated.

- Committed events: Events that were executed with timestamps before GVT are committed.
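As a rough illustration of how the three flow parameters interact, the sketch below classifies the next event on a node by how many events that node has already processed beyond GVT. It is a simplified reading of the quoted definitions, not WarpIV code: the names `BTWParams`, `phase_for_event`, and `should_request_gvt`, and the parameter values in the usage note, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BTWParams:
    """Flow-control parameters named in the text (values are chosen by the user)."""
    n_risk: int  # events beyond GVT allowed to send their messages with risk
    n_gvt: int   # messages received by a node before it requests a GVT update
    n_opt: int   # events a node may process beyond GVT


def phase_for_event(events_beyond_gvt: int, p: BTWParams) -> str:
    """Classify the next event by how far the node already runs ahead of GVT."""
    if events_beyond_gvt < p.n_risk:
        return "TW"    # messages are released immediately (with risk)
    if events_beyond_gvt < p.n_opt:
        return "BTB"   # event is processed, but its messages are held back
    return "WAIT"      # optimistic budget is used up until GVT advances


def should_request_gvt(messages_received: int, events_beyond_gvt: int,
                       p: BTWParams) -> bool:
    """Assumed trigger: request a GVT update once either budget is exhausted."""
    return messages_received >= p.n_gvt or events_beyond_gvt >= p.n_opt
```

With the made-up setting `BTWParams(n_risk=2, n_gvt=5, n_opt=4)`, the first two events beyond GVT run in TW mode, the next two in BTB mode, and the node then waits for a new GVT.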

1.2. Problem Statement

2. Deep Belief Networks

3. Validation and Variations of the Implementation of Deep Belief Networks (DBNs)

4. Selection of a Parallel and Distributed Discrete-Event Simulation (PDDES) Platform

4.1. WarpIV Engine

4.2. Advantages of WarpIV

- It features state-of-the-art conservative, optimistic, and sequential time management modes.

- It distributes models and simulation objects automatically across multiple processors (even using the Internet) while handling event processing in logical time.

- It offers an excellent interface.

- It is kept up to date with the latest operating systems, network connections, and extensions built to enhance functionality.

- It supports interoperability and reusability.

- There are training courses and support available.

5. Programming in WarpIV

- It is a discrete-event simulation program (with capabilities to be executed in parallel/distributed computing environments). WarpIV provides a rollbackable version of the standard template library (STL) to accommodate mainstream C++ programmers [16,41]. Therefore, the programming is built using C/C++.

- The simulation clock is measured in seconds.

- There are two types of simulation objects (SOs):

  - Aircraft.

  - Ground radars.

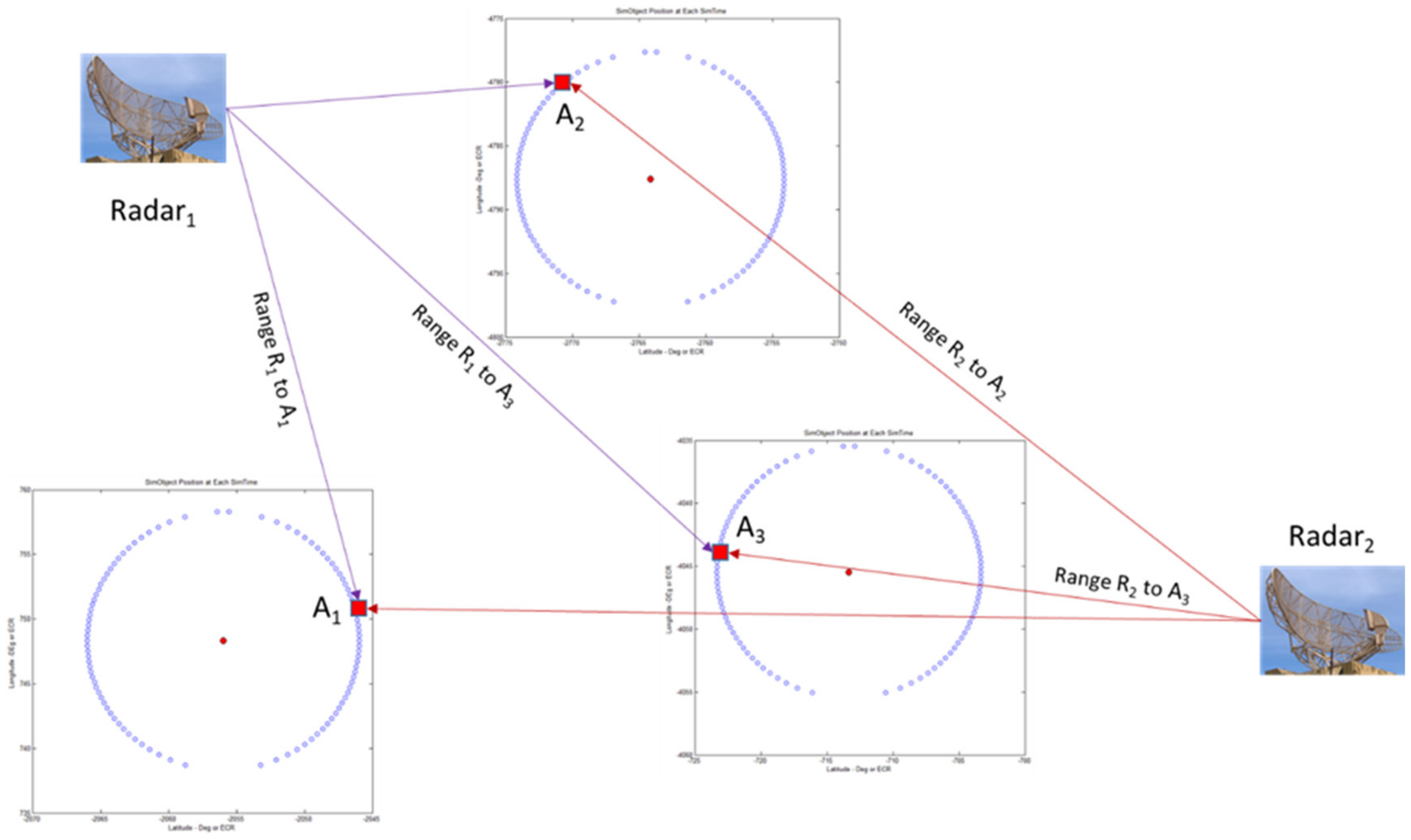

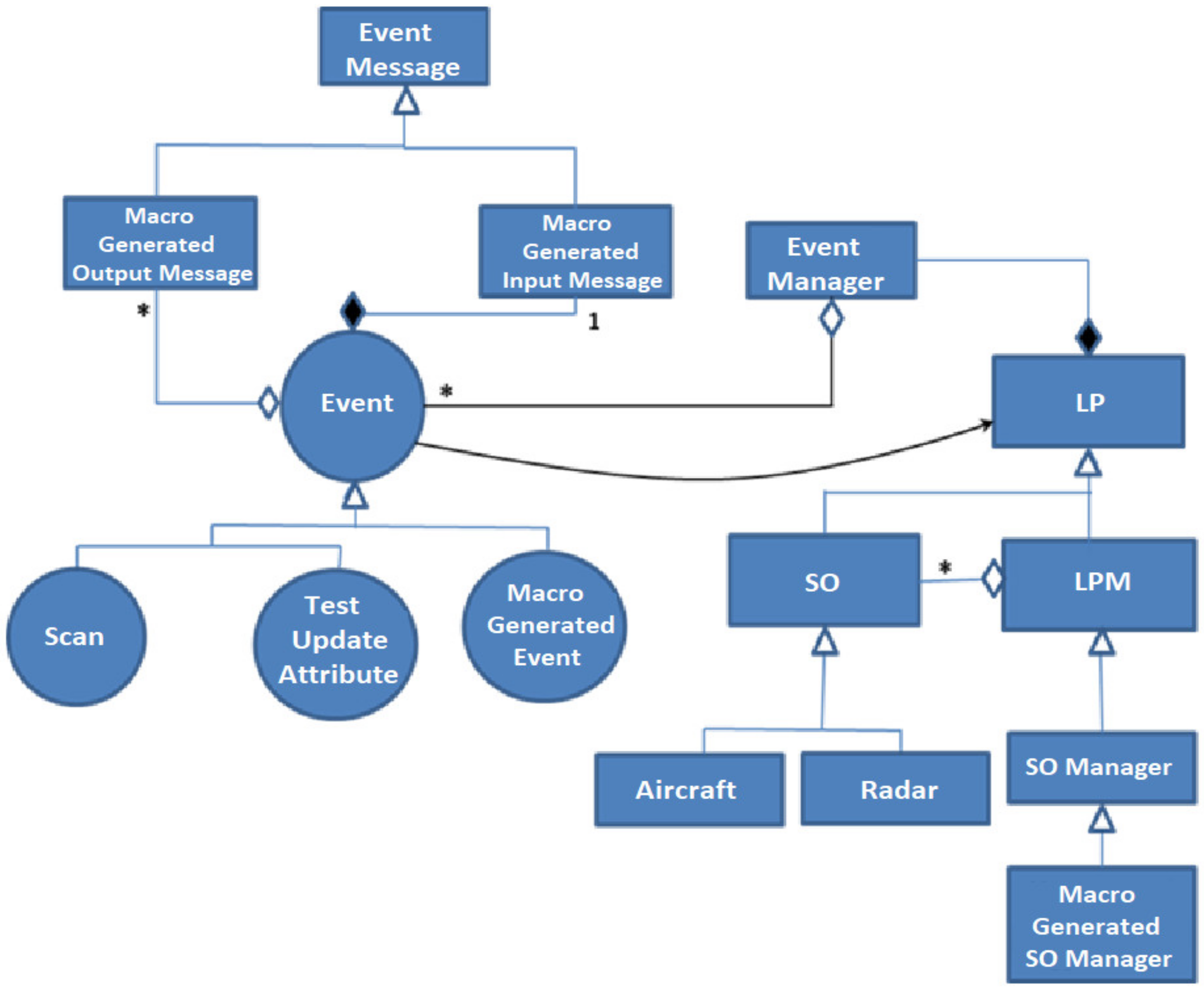

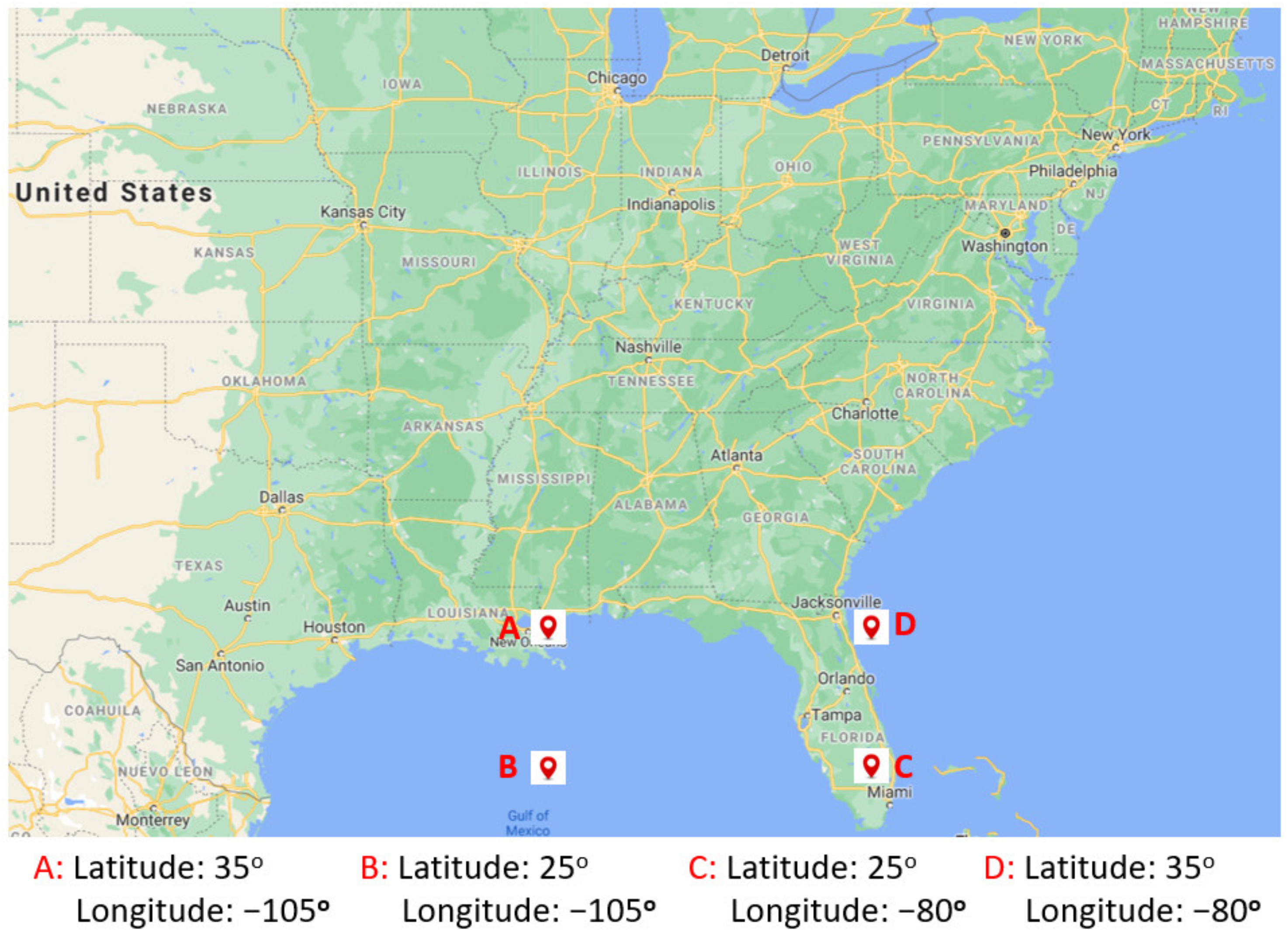

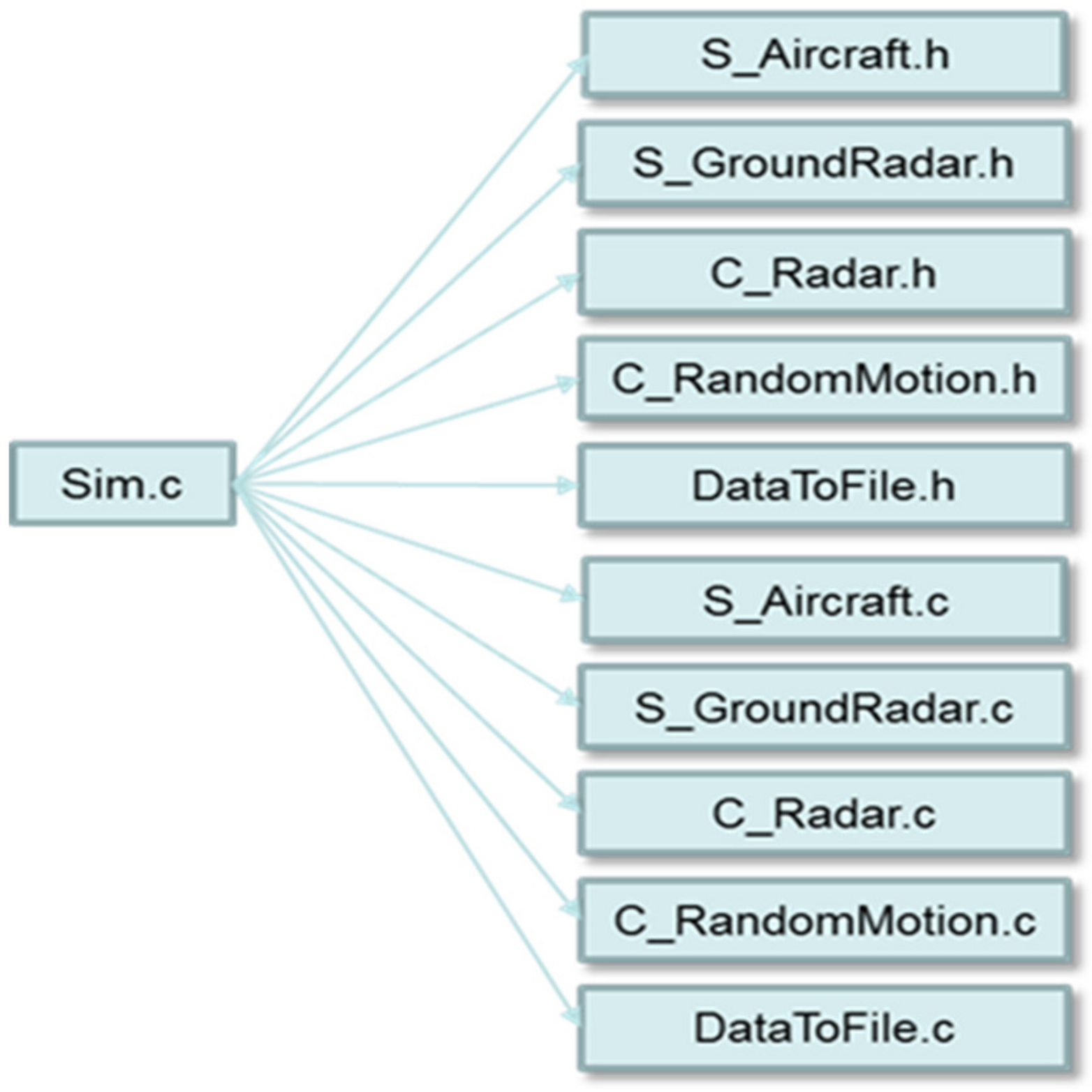

The TestUpdateAttribute event updates the trajectory of the aircraft at specific times. The event for the radars is Scan: it is scheduled at the initial simulation time and then recurs at regular intervals that depend on the technical specifications of the radars.

The WarpIV engine provides a logical process (LP) class (Figure 8). A simulation object (SO) is a regular LP and inherits from the LP class. A logical process manager (LPM) can hold several SOs, but an SO can belong to only one LPM. For each user-defined SO type (Aircraft and Radar in this case study), a macro automatically generates an SO manager class that inherits from the LPM class. Events always have one input message and zero or more outgoing messages, which are generated and sent to create new events. Events inherit from the event class (Scan and TestUpdateAttribute in this case study).

The simulation randomly initializes the position of each aircraft and radar in the selected theater of operations. Positions (X, Y, Z) are represented in earth-centered rotational (ECR) Cartesian coordinates. After initialization, the simulation begins with the aircraft flying along their trajectories and the radars scanning the airspace to detect them according to pre-established technical specifications.

- The theater of operations is read from a file that gives the corresponding longitude and latitude. The maximum and minimum aircraft speeds (m/s) are also read from a file. The scanning range of the radar can be read from a file or hardcoded in the program. See Figure 9 for an example of a theater of operations.

- After the initialization routines, the simulation senses an aircraft’s proximity to a radar utilizing the predefined technical specifications.

- The TestUpdateAttribute event points to the method TestUpdateAttribute(). The event’s framework scheduler kicks off this method at simulation time = zero. At each simulation time, each parallel instance (one for each aircraft) of the TestUpdateAttribute() method in C_RandomMotion.C (Figure 9) computes the path position of each aircraft.

- The Scan event points to the Scan() method of each radar. The event’s framework scheduler kicks off this method at simulation time = zero. At each discrete simulation time, each parallel instance of the Scan() method computes the proximity of an aircraft to each ground radar. Proximity (range) is calculated in parallel from the radar and moving-entity position vectors via $\text{Range} = \sqrt{\Delta X^2 + \Delta Y^2 + \Delta Z^2}$, where $\Delta$ represents the difference between radar and aircraft positions (∆latitude, ∆longitude, and ∆altitude) in earth-centered rotational coordinates (ECR).

- The aircraft are unaware of the radars, but the radars can determine the aircraft's positions.

- T (wall-clock time) is the actual elapsed time from start to finish, including time spent on scheduled interruptions or waiting for computational resources.

- Relative speedup is the wall-clock time for a single node (sequential) divided by T, the wall-clock time when all of the nodes used for that synchronization scheme are considered (that is, the wall-clock time of the node with the maximum value).
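The range test performed by each Scan() instance can be sketched as a Euclidean distance between two ECR position vectors. This is a minimal Python illustration rather than the WarpIV C++ code; the function names `ecr_range` and `detected` are hypothetical, and the third squared term is taken to be the Z difference, since positions are given as (X, Y, Z).

```python
import math


def ecr_range(radar_xyz, aircraft_xyz):
    """Euclidean distance between two (X, Y, Z) positions in the same length unit."""
    dx, dy, dz = (a - r for r, a in zip(radar_xyz, aircraft_xyz))
    return math.sqrt(dx * dx + dy * dy + dz * dz)


def detected(radar_xyz, aircraft_xyz, scan_range):
    """True when the aircraft lies within the radar's scanning range."""
    return ecr_range(radar_xyz, aircraft_xyz) <= scan_range
```

For example, a radar at the origin and an aircraft at (3, 4, 12) are 13 units apart, so the aircraft is detected with a scanning range of 15 but not with a range of 10.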

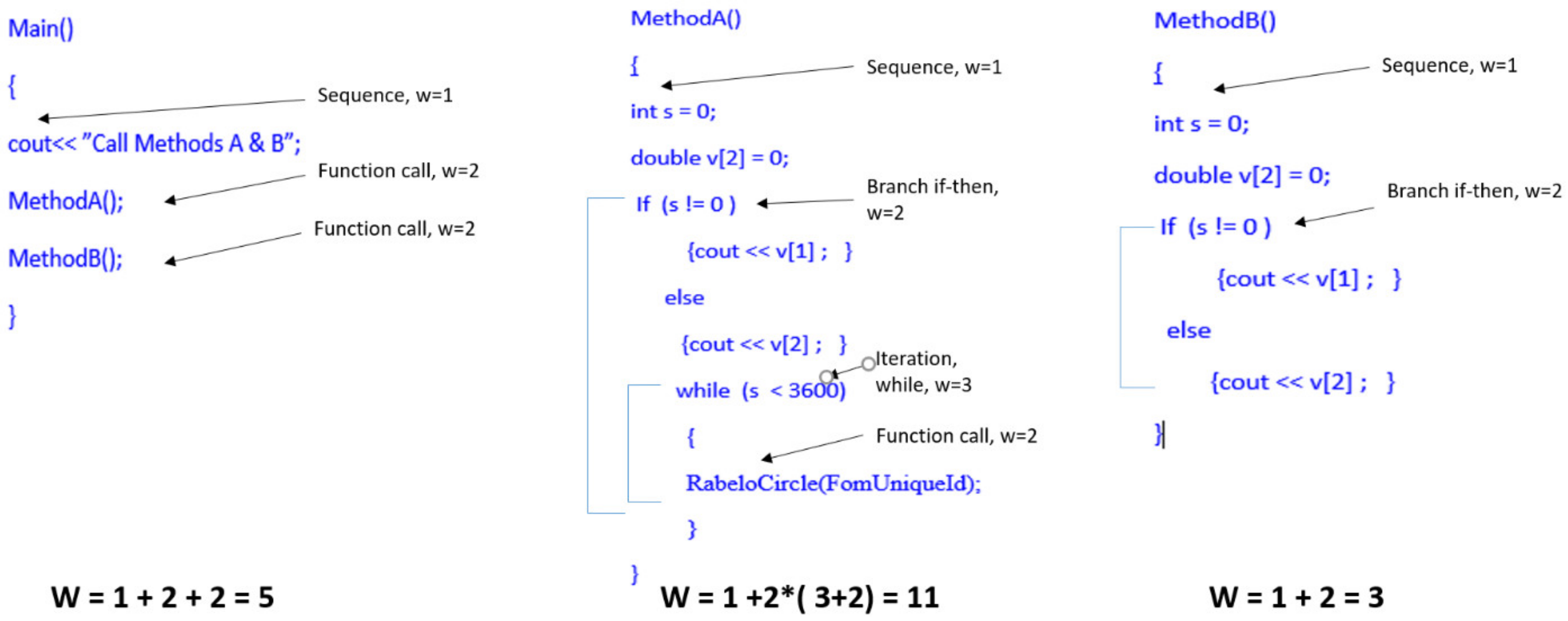

6. Measuring the Complexity of a Parallel Distributed Discrete-Event Simulation Implementation

- Cognitive weights of the software;

- Simulation objects;

- Classes of simulation objects;

- Mean of events;

- Standard deviation of events;

- Mean of cognitive weights used by simulation objects;

- Standard deviation of the cognitive weights;

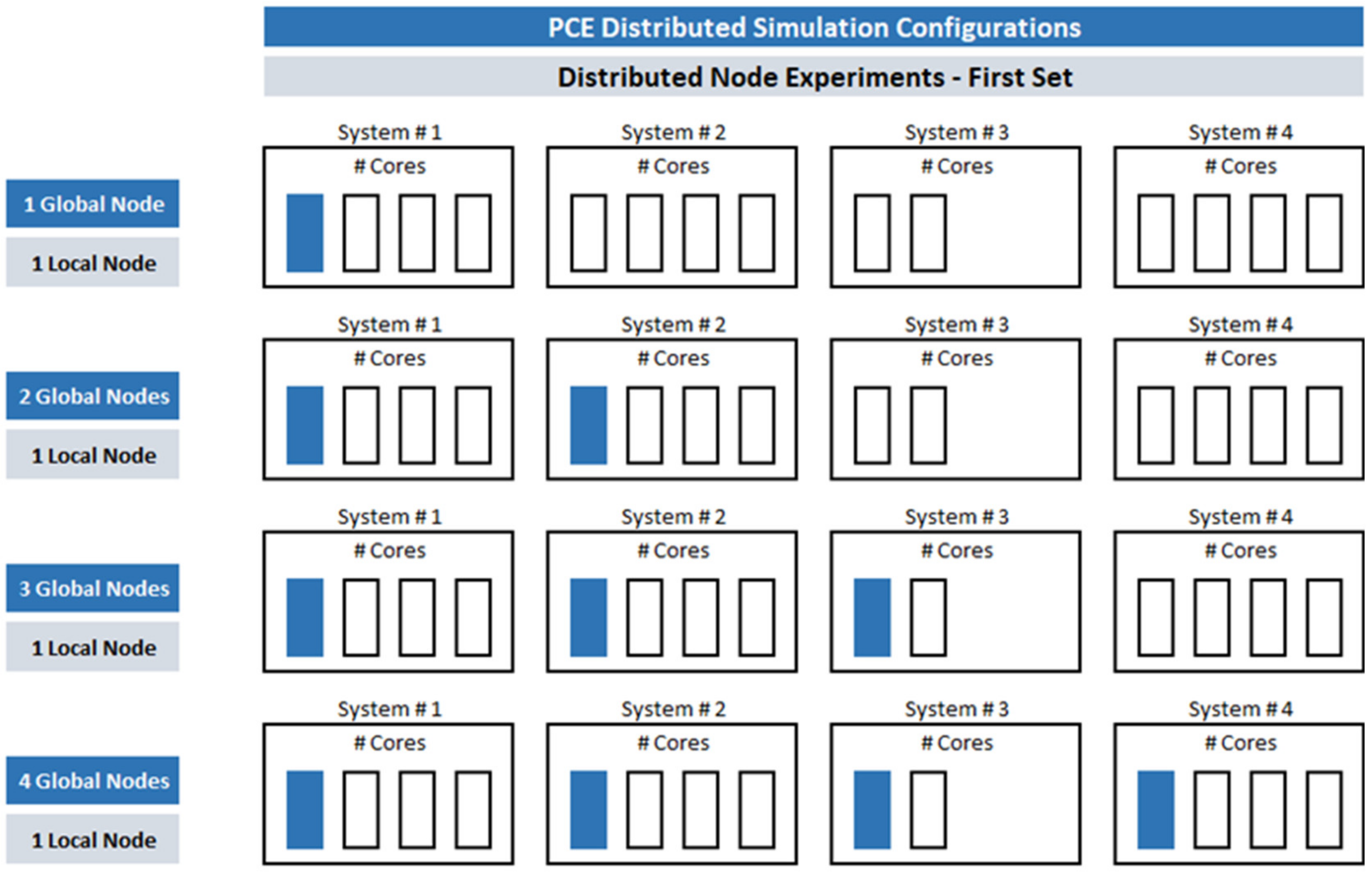

- Global nodes;

- Mean of the local nodes per global node;

- Standard deviation of the local nodes;

- Mean number of cores/threads utilized;

- Standard deviation of the number of cores/threads utilized;

- Mean of the CPUs’ speed;

- Standard deviation of the CPUs’ speed;

- Mean of the memory size;

- Standard deviation of the memory size;

- Critical path;

- Theoretical (maximum) speedup;

- Ratio of local events divided by the local and global events;

- Ratio of the number of subscriber objects divided by publishers and subscribers;

- Scatter or block distribution.
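Several of the complexity measures listed above are means, standard deviations, or simple ratios over raw counts. The sketch below shows one way such features could be derived. The helper names `spread` and `share` are hypothetical, and the use of the population standard deviation (`pstdev`) is an assumption, because the text does not say which estimator was used.

```python
from statistics import mean, pstdev


def spread(values):
    """Return (mean, population standard deviation) of a list of measurements."""
    return mean(values), pstdev(values)


def share(part, rest):
    """Ratio part / (part + rest), e.g. local events over local plus global events."""
    total = part + rest
    return part / total if total else 0.0
```

For instance, four computers with one local node each give a mean of 1 and a standard deviation of 0, and an equal number of subscribers and publishers gives a ratio of 0.5.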

7. Results

8. Conclusions and Further Research

Author Contributions

Funding

Conflicts of Interest

References

1. Borshchev, A. The Big Book of Simulation Modeling: Multimethod Modeling with AnyLogic 6; AnyLogic North America: Chicago, IL, USA, 2013.

2. Fujimoto, R. Parallel and Distributed Simulation, 1st ed.; John Wiley & Sons: New York, NY, USA, 2000.

3. Page, E.; Buss, A.; Fishwick, P.; Healy, K.; Nance, R.; Paul, R. Web-based Simulation: Revolution or Evolution? ACM Trans. Modeling Comput. Simul. (TOMACS) 2000, 10, 3–17.

4. Fujimoto, R.; Malik, A.; Park, A. Parallel and distributed Simulation in the cloud. SCS Modeling Simul. Mag. 2010, 3, 1–10.

5. Jávor, A.; Fur, A. Simulation on the Web with distributed models and intelligent agents. Simulation 2012, 88, 1080–1092.

6. Amoretti, M.; Zanichelli, F.; Conte, G. Efficient autonomic cloud computing using online discrete event simulation. J. Parallel Distrib. Comput. 2013, 73, 767–776.

7. Jafer, S.; Liu, Q.; Wainer, G. Synchronization methods in Parallel and distributed discrete-event Simulation. Simul. Model. Pract. Theory 2013, 30, 54–73.

8. Padilla, J.; Diallo, S.; Barraco, A.; Lynch, C.; Kavak, H. Cloud-based simulators: Making simulations accessible to non-experts and experts alike. In Proceedings of the 2014 Winter Simulation Conference 2014, Savannah, GA, USA, 7–10 December 2014; pp. 3630–3639.

9. Yoginath, S.; Perumalla, K. Efficient Parallel Discrete Event Simulation on cloud/virtual machine platforms. ACM Trans. Modeling Comput. Simul. (TOMACS) 2015, 26, 1–26.

10. Padilla, J.; Lynch, C.; Diallo, S.; Gore, R.; Barraco, A.; Kavak, H.; Jenkins, B. Using simulation games for teaching and learning discrete-event simulation. In Proceedings of the 2016 Winter Simulation Conference (WSC), Washington, DC, USA, 11–14 December 2016; pp. 3375–3384.

11. Liu, D.; De Grande, R.; Boukerche, A. Towards the Design of an Interoperable Multi-cloud Distributed Simulation System. In Proceedings of the 2017 Spring Simulation Multi-Conference—Annual Simulation Symposium, Virginia Beach, VA, USA, 23–26 April 2017; pp. 1–12.

12. Diallo, S.; Gore, R.; Padilla, J.; Kavak, H.; Lynch, C. Towards a World Wide Web of Simulation. J. Def. Modeling Simul. Appl. Methodol. Technol. 2017, 14, 159–170.

13. Shchur, L.; Shchur, L. Parallel Discrete Event Simulation as a Paradigm for Large Scale Modeling Experiments. In Proceedings of the XVII International Conference “Data Analytics and Management in Data Intensive Domains” (DAMDID/RCDL’2015), Obninsk, Russia, 13–16 October 2015.

14. Tang, Y.; Perumalla, K.; Fujimoto, R.; Karimabadi, H.; Driscoll, J.; Omelchenko, Y. Optimistic parallel discrete event simulations of physical systems using reverse computation. In Proceedings of the Workshop on Principles of Advanced and Distributed Simulation (PADS’05), Monterey, CA, USA, 1–3 June 2005.

15. Ziganurova, L.; Novotny, M.; Shchur, L. Model for the evolution of the time profile in optimistic parallel discrete event simulations. In Proceedings of the International Conference on Computer Simulation in Physics and Beyond, Moscow, Russia, 6–10 September 2015.

16. Steinman, J. The WarpIV Simulation Kernel. In Proceedings of the Workshop on Principles of Advanced and Distributed Simulation (PADS 2005), Monterey, CA, USA, 1–3 June 2005.

17. Steinman, J. Breathing Time Warp. In Proceedings of the 7th Workshop on Parallel and Distributed Simulation (PADS93), San Diego, CA, USA, 16–19 May 1993.

18. Cortes, E.; Rabelo, L.; Lee, G. Using Deep Learning to Configure Parallel Distributed Discrete-Event Simulators. In Artificial Intelligence: Advances in Research and Applications, 1st ed.; Rabelo, L., Bhide, S., Gutierrez, E., Eds.; Nova Science Publishers: Hauppauge, NY, USA, 2018.

19. Steinman, J. Discrete-Event Simulation, and the Event Horizon. ACM SIGSIM Simul. Dig. 1994, 24, 39–49.

20. Steinman, J. Discrete-Event Simulation and the Event Horizon Part 2: Event List Management. ACM SIGSIM Simul. Dig. 1996, 26, 170–178.

21. Steinman, J.; Nicol, D.; Wilson, L.; Lee, C. Global Virtual Time and Distributed Synchronization. In Proceedings of the 1995 Parallel and Distributed Simulation Conference, Lake Placid, NY, USA, 14–16 June 1995.

22. Hinton, G.; Salakhutdinov, R. Reducing the dimensionality of data with neural networks. Science 2006, 313, 504–507.

23. Yu, K.; Jia, L.; Chen, Y.; Xu, W. Deep learning: Yesterday, today, and tomorrow. J. Comput. Res. Dev. 2013, 50, 1799–1804.

24. Jiang, L.; Zhou, Z.; Leung, T.; Li, T.; Fei-Fei, L. Mentornet: Learning data-driven curriculum for very deep neural networks on corrupted labels. In Proceedings of the Thirty-Fifth International Conference on Machine Learning, Stockholmsmässan, Stockholm, Sweden, 10–15 July 2018.

25. Hinton, G. A practical guide to training restricted Boltzmann machines. Momentum 2010, 9, 926.

26. Mohamed, A.; Sainath, T.; Dahl, G.; Ramabhadran, B.; Hinton, G.; Picheny, M. Deep belief networks using discriminative features for phone recognition. In Proceedings of the Acoustics, Speech and Signal Processing (ICASSP), 2011 IEEE International Conference, Prague, Czech Republic, 22–27 May 2011.

27. Mohamed, A.; Dahl, G.; Hinton, G. Acoustic modeling using deep belief networks. IEEE Trans. Audio Speech Lang. Process. 2012, 20, 14–22.

28. Huang, W.; Song, G.; Hong, G. Deep architecture for traffic flow prediction: Deep belief networks with multitask learning. IEEE Trans. Intell. Transp. Syst. 2014, 15, 2191–2201.

29. Sarikaya, R.; Hinton, G.; Deoras, A. Application of deep belief networks for natural language understanding. IEEE/ACM Trans. Audio Speech Lang. Process. 2014, 22, 778–784.

30. Movahedi, F.; Coyle, J.; Sejdić, E. Deep belief networks for electroencephalography: A review of recent contributions and future outlooks. IEEE J. Biomed. Health Inform. 2018, 22, 642–652.

31. Hinton, G.; Osindero, S.; Yee-Whye, T. A Fast Learning Algorithm for Deep Belief Nets. Neural Comput. 2006, 18, 1527–1554.

32. Cho, K.; Ilin, A.; Raiko, T. Improved learning of Gaussian-Bernoulli restricted Boltzmann machines. In Artificial Neural Networks and Machine Learning—ICANN 2011; Springer: Berlin/Heidelberg, Germany, 2011; Volume 6791, pp. 10–17.

33. LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 1998, 86, 2278–2324.

34. LeCun, Y.; Corinna, C. THE MNIST DATABASE of Handwritten Digits. Available online: http://yann.lecun.com/exdb/mnist/ (accessed on 22 September 2020).

35. Wu, M.; Chen, L. Image Recognition Based on Deep Learning. In Proceedings of the 2015 Chinese Automation Congress (CAC), Wuhan, China, 27–29 November 2015; pp. 542–546.

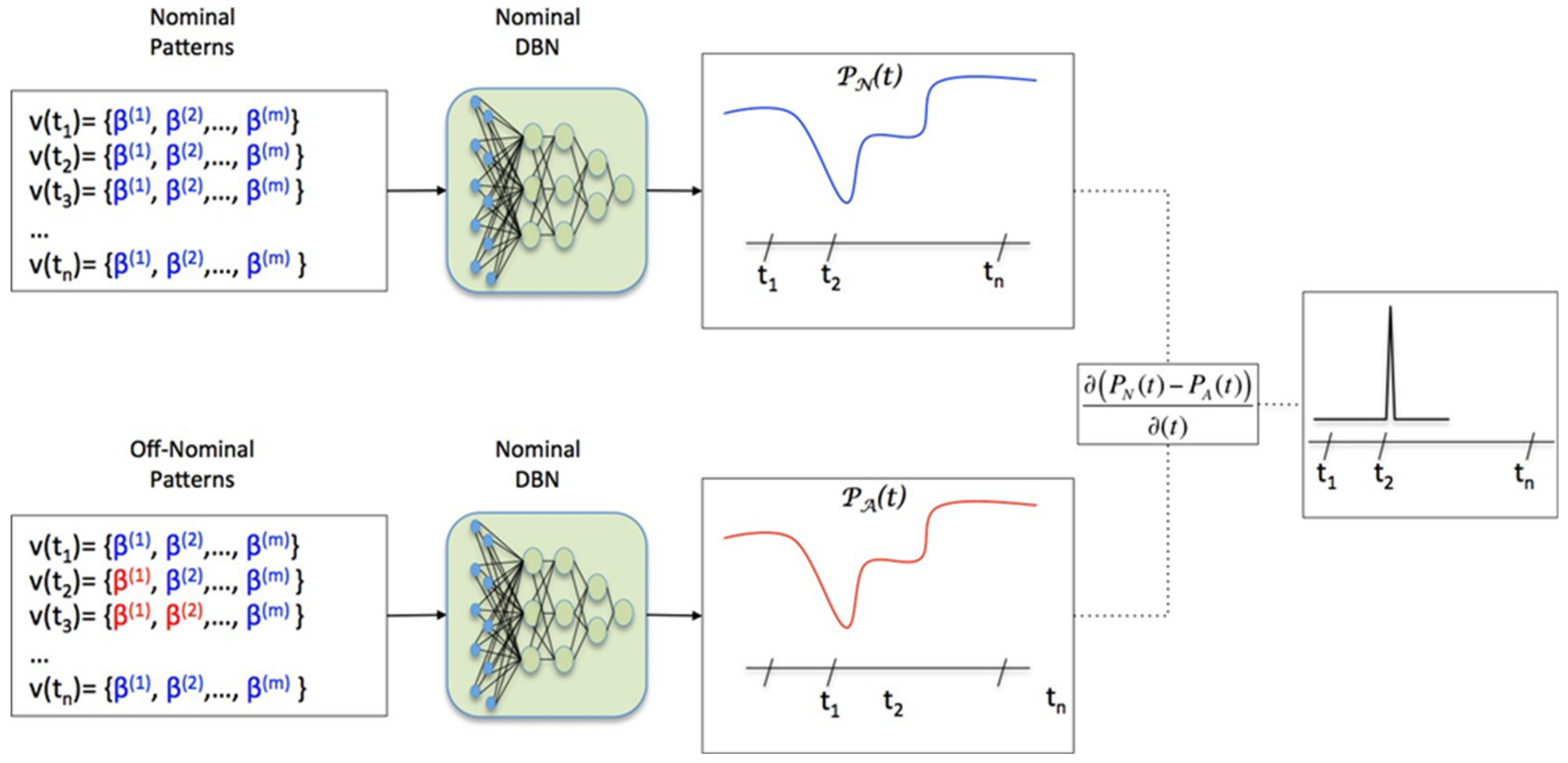

36. Cortes, E.; Rabelo, L. An architecture for monitoring and anomaly detection for space systems. SAE Int. J. Aerosp. 2013, 6, 81–86.

37. Carothers, C.; Bauer, D.; Pearce, S. ROSS: A high-performance, low memory modular time warp system. J. Parallel Distrib. Comput. 2002, 62, 1648–1669.

38. Mubarak, M.; Carothers, C.; Ross, R.; Carns, P. Using massively parallel Simulation for MPI collective communication modeling in extreme-scale networks. In Proceedings of the 2014 Winter Simulation Conference, Savannah, GA, USA, 7–10 December 2014; pp. 3107–3118.

39. Steinman, J.; Lammers, C.; Valinski, M. A Proposed Open Cognitive Architecture Framework (OpenCAF). In Proceedings of the 2009 Winter Simulation Conference, Austin, TX, USA, 13–16 December 2009.

40. Steinman, J.; Lammers, C.; Valinski, M.; Steinman, W. External Modeling Framework and the OpenUTF. Report of WarpIV Technologies. Available online: http://www.warpiv.com/Documents/Papers/EMF.pdf (accessed on 30 September 2020).

41. Plauger, P.; Stepanov, A.; Lee, M.; Musser, D. The C++ Standard Template Library; Prentice-Hall PTR, Prentice-Hall Inc.: Upper Saddle River, NJ, USA, 2001.

42. Shao, J.; Wang, Y. A new measure of software complexity based on cognitive weights. Can. J. Electr. Comput. Eng. 2003, 28, 69–74.

43. Misra, S. A Complexity Measure based on Cognitive Weights. Int. J. Theor. Appl. Comput. Sci. 2006, 1, 1–10.

44. Kent, E.; Hoops, S.; Mendes, P. Condor-COPASI: High-throughput computing for biochemical networks. BMC Syst. Biol. 2012, 6, 91.

45. Wang, Y.; Jung, Y.; Supinie, T.; Xue, M. A Hybrid MPI–OpenMP Parallel Algorithm and Performance Analysis for an Ensemble Square Root Filter Designed for Multiscale Observations. J. Atmos. Ocean. Technol. 2013, 30, 1382–1397.

46. Zhan, D.; Qian, J.; Cheng, Y. Balancing global and local search in parallel efficient global optimization algorithms. J. Glob. Optim. 2017, 67, 873–892.

47. Grandison, A.; Cavanagh, Y.; Lawrence, P.; Galea, E. Increasing the Simulation Performance of Large-Scale Evacuations Using Parallel Computing Techniques Based on Domain Decomposition. Fire Technol. 2017, 53, 1399–1438.

48. Rumelhart, D.; Hinton, G.; Williams, R. Learning representations by back-propagating errors. Nature 1986, 323, 533–536.

49. Wang, X.; Zhao, Y.; Pourpanah, F. Recent advances in deep learning. Int. J. Mach. Learn. Cybern. 2020, 11, 747–750.

50. Ren, K.; Zheng, T.; Qin, Z.; Liu, X. Adversarial Attacks and Defenses in Deep Learning. Engineering 2020, 6, 346–360.

| Flight Number | Flight Date | Start GMT | End GMT | TCID |
|---|---|---|---|---|
| 133 | 24 February 2011 | 124700 | 150000 | SA133B |
| 132 | 14 May 2010 | 090000 | 111000 | SA132B |
| 131 | 4 May 2010 | 010627 | 045800 | SA131A |
| 128 | 28 August 2009 | 185712 | 210000 | SA128B |
| 126 | 14 October 2008 | 154210 | 190000 | SA126A |

Space Shuttle Main Engine main fuel valve telemetry retrieved: Engine 3: E41T3153A1, E41T3154A1 (β(1), β(2)). Engine 2: E41T2153A1, E41T2154A1 (β(3), β(4)). Engine 1: E41T1153A1, E41T1154A1 (β(5), β(6)).

| Learning Rate | Hidden Layer 1 Neurons | Hidden Layer 2 Neurons | Hidden Layer 3 Neurons | Number of Output Neurons | RBM Mini-Batch Size | RBM Epochs | DBN Mini-Batch Size | DBN Epochs | Weight Cost | Momentum |
|---|---|---|---|---|---|---|---|---|---|---|
| $10^{-6}$ | 30 | 20 | 10 | 1 | 50 | 50 | 50 | 1 | 0.01 | 0.5 |

| Scheme | Local Nodes | Global Nodes | Wall-Clock Time (s) | Relative Speedup | Theoretical Speedup | Server |
|---|---|---|---|---|---|---|
| BTW | 1 | 1 | 16.5 | 1 | 3 | PC1 |
| BTW | 1 | 2 | 14.1 | 1.2 | 3 | PC1 |
| BTW | 1 | 3 | 12.4 | 1.3 | 3 | PC1 |
| BTW | 1 | 4 | 11.4 | 1.4 | 3 | PC1 |
| BTW | 2 to 4 | 14 | 6.1 | 2.7 | 3 | PC1 |
| BTW | 4 | 8 | 6.5 | 2.6 | 3 | PC1 |
| BTW | 4 | 4 | 9.4 | 1.8 | 3 | |
| BTW | 3 | 3 | 10.5 | 1.6 | 3 | |
| BTB | 1 | 1 | 16.1 | 1 | 3 | PC1 |
| BTB | 1 | 2 | 62.1 | 0.3 | 3 | PC1 |
| BTB | 1 | 3 | 148 | 0.1 | 3 | PC1 |
| BTB | 1 | 4 | 162.6 | 0.1 | 3 | PC1 |
| BTB | 2 to 4 | 14 | 7.7 | 2.1 | 3 | PC1 |
| BTB | 4 | 8 | 6.2 | 2.6 | 3 | PC1 |
| BTB | 4 | 4 | 9.4 | 1.7 | 3 | |
| BTB | 3 | 3 | 10.2 | 1.6 | 3 | |
| TW | 1 | 1 | 17.2 | 1 | 3 | PC1 |
| TW | 1 | 2 | 13.8 | 1.2 | 3 | PC1 |
| TW | 1 | 3 | 12.6 | 1.4 | 3 | PC1 |
| TW | 1 | 4 | 10.9 | 1.6 | 3 | PC1 |
| TW | 2 to 4 | 14 | 5.9 | 2.9 | 3 | PC1 |
| TW | 4 | 8 | 6.2 | 2.8 | 3 | PC1 |
| TW | 4 | 4 | 10 | 1.7 | 3 | |
| TW | 3 | 3 | 11.4 | 1.5 | 3 | |
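The relative speedup values in the table above follow from the definition given in Section 5: the sequential wall-clock time divided by the wall-clock time of the slowest node. A minimal sketch is shown below; the function name `relative_speedup` is hypothetical, and because the table reports a single wall-clock time per configuration, the per-node list holds one value in each example.

```python
def relative_speedup(t_sequential, node_times):
    """Sequential wall-clock time divided by the slowest node's wall-clock time."""
    return t_sequential / max(node_times)


# Wall-clock times (s) taken from the table above.
btw_two_global = relative_speedup(16.5, [14.1])   # BTW, 1 local / 2 global
tw_fourteen = relative_speedup(17.2, [5.9])       # TW, 2 to 4 local / 14 global
btb_four_global = relative_speedup(16.1, [162.6]) # BTB, 1 local / 4 global
```

Rounded to one decimal place, these give 1.2, 2.9, and 0.1, in agreement with the corresponding rows. A value below 1, as in the BTB case, means the distributed run was slower than the sequential one.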

| File / Method | Cognitive Weight | Note |
|---|---|---|
| C_Radar.C | 3 | |
| C_Radar::Init() | 41 | |
| C_Radar::Terminate() | 13 | |
| C_Radar::DiscoverFo | 5 | |
| C_Radar::RemoveFo | 5 | |
| C_Radar::UpdateFoAttributes | 5 | |
| C_Radar::ReflectFoAttributes | 5 | |
| C_Radar::Scan() | 2547 | Event |
| C_RandomMotion.C | 5 | |
| C_RandomMotion::Init | 83 | |
| C_RandomMotion::Terminate | 7 | |
| C_RandomMotion::TestUpdateAttribute | 144 | Event |
| C_RandomMotion::RabeloCircle | 16 | |
| S_AirCraft.C | 0 | |
| S_AirCraft::Init() | 9 | |
| S_AirCraft::Terminate() | 7 | |
| S_GroundRadar.C | 0 | |
| S_GroundRadar::Init() | 0 | |
| S_GroundRadar::Terminate() | 7 | |
| Sim.c | | |
| main | 17 | |
| Total Program Weights | 2919 | |
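The total program weight is the sum of the per-file and per-method cognitive weights listed above. The short sketch below reproduces that sum in Python; the dictionary is transcribed from the table, and the variable names are illustrative only.

```python
# Cognitive weights transcribed from the table (Sim.c itself has no listed weight).
weights = {
    "C_Radar.C": 3,
    "C_Radar::Init()": 41,
    "C_Radar::Terminate()": 13,
    "C_Radar::DiscoverFo": 5,
    "C_Radar::RemoveFo": 5,
    "C_Radar::UpdateFoAttributes": 5,
    "C_Radar::ReflectFoAttributes": 5,
    "C_Radar::Scan()": 2547,                      # event
    "C_RandomMotion.C": 5,
    "C_RandomMotion::Init": 83,
    "C_RandomMotion::Terminate": 7,
    "C_RandomMotion::TestUpdateAttribute": 144,   # event
    "C_RandomMotion::RabeloCircle": 16,
    "S_AirCraft.C": 0,
    "S_AirCraft::Init()": 9,
    "S_AirCraft::Terminate()": 7,
    "S_GroundRadar.C": 0,
    "S_GroundRadar::Init()": 0,
    "S_GroundRadar::Terminate()": 7,
    "main": 17,
}

total_weight = sum(weights.values())
event_weight = (weights["C_Radar::Scan()"]
                + weights["C_RandomMotion::TestUpdateAttribute"])
```

The sum comes to 2919, matching the reported total, and the two event methods account for 2691 of it, most of which is the Scan() event.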

| Inputs (21 Input Neurons) | Value |
|---|---|
| Total Simulation Program Cognitive Weights | 2919 |
| Number of Sim objects | 6 |
| Types of Sim objects | 3 |
| Mean Events per Object | 1 |
| STD Events per Simulation Object | 0 |
| Mean Cog Weights of All objects | 1345 |
| STD Cog Weights of All objects | 1317 |
| Number of Global Nodes | 4 |
| Mean Local Nodes per Computer | 1 |
| STD Local Nodes per Computer | 0 |
| Mean number of cores | 1 |
| STD Number of cores | 0 |
| Mean processor Speed | 2.1 |
| STD processor Speed | 0.5 |
| Mean RAM | 6.5 |
| STD RAM | 1.9 |
| Critical Path% | 0.32 |
| Theoretical Speedup | 3 |
| Local Events/(Local Events + External Events) | 1 |
| Subscribers/(Publishers + Subscribers) | 0.5 |
| Block or Scatter? | 1 |

| Outputs (3 Output Neurons) | Value |
|---|---|
| BTB | 0 |
| BTW | 0 |
| TW | 1 |
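To make the mapping from this table to a training example concrete, the sketch below packs the 21 input values into a feature vector and decodes a 3-neuron output into a scheme name. It only illustrates the data layout, not the authors' DBN code; the names `SCHEMES`, `features`, `target`, and `decode` are hypothetical, and any input scaling applied before training is not covered.

```python
# Output neuron order as listed in the table.
SCHEMES = ("BTB", "BTW", "TW")

# The 21 input values, in table order.
features = [
    2919, 6, 3, 1, 0, 1345, 1317,   # software and simulation-object measures
    4, 1, 0, 1, 0,                  # global nodes, local nodes, cores
    2.1, 0.5, 6.5, 1.9,             # processor speed and RAM (mean, STD)
    0.32, 3, 1, 0.5, 1,             # critical path, speedup, ratios, block/scatter
]

# One-hot target: TW is the labelled scheme for this configuration.
target = [0, 0, 1]


def decode(outputs):
    """Return the scheme whose output neuron has the largest activation."""
    best = max(range(len(outputs)), key=lambda i: outputs[i])
    return SCHEMES[best]
```

Here `decode(target)` yields "TW", and a hypothetical network output such as `[0.1, 0.7, 0.2]` would be read as "BTW".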

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cortes, E.; Rabelo, L.; Sarmiento, A.T.; Gutierrez, E. Design of Distributed Discrete-Event Simulation Systems Using Deep Belief Networks. Information 2020, 11, 467. https://doi.org/10.3390/info11100467
