A Systematic Mapping Study of MMOG Backend Architectures

Abstract

1. Introduction

2. Motivation

3. Methodology

3.1. Research Questions

- What are the main challenges in developing MMOG backends?

- Which criteria are the most relevant to categorize studies into groups?

- What are the research trends over time, in terms of the approaches used for MMOG backends?

- Are there indications of alternative, promising research directions for realizing MMOG backends?

3.2. Search Strategy

3.3. Selection Criteria

Review Process

3.4. Data Collection

- Do the authors identify any challenges in their methodologies? Are these relevant to the study?

- Is the paper focused on a certain area of MMOG development/deployment? How are the described methods evaluated?

- What is the approach utilized by the authors to implement the backend of their MMOG? Do they use any specific tools? In what context was their study conducted? When was it published?

- When data from all studies are collected and analyzed, is there any emerging correlation between time and the methods used? Are there any gaps that have not been explored so far?

4. Selection of Criteria

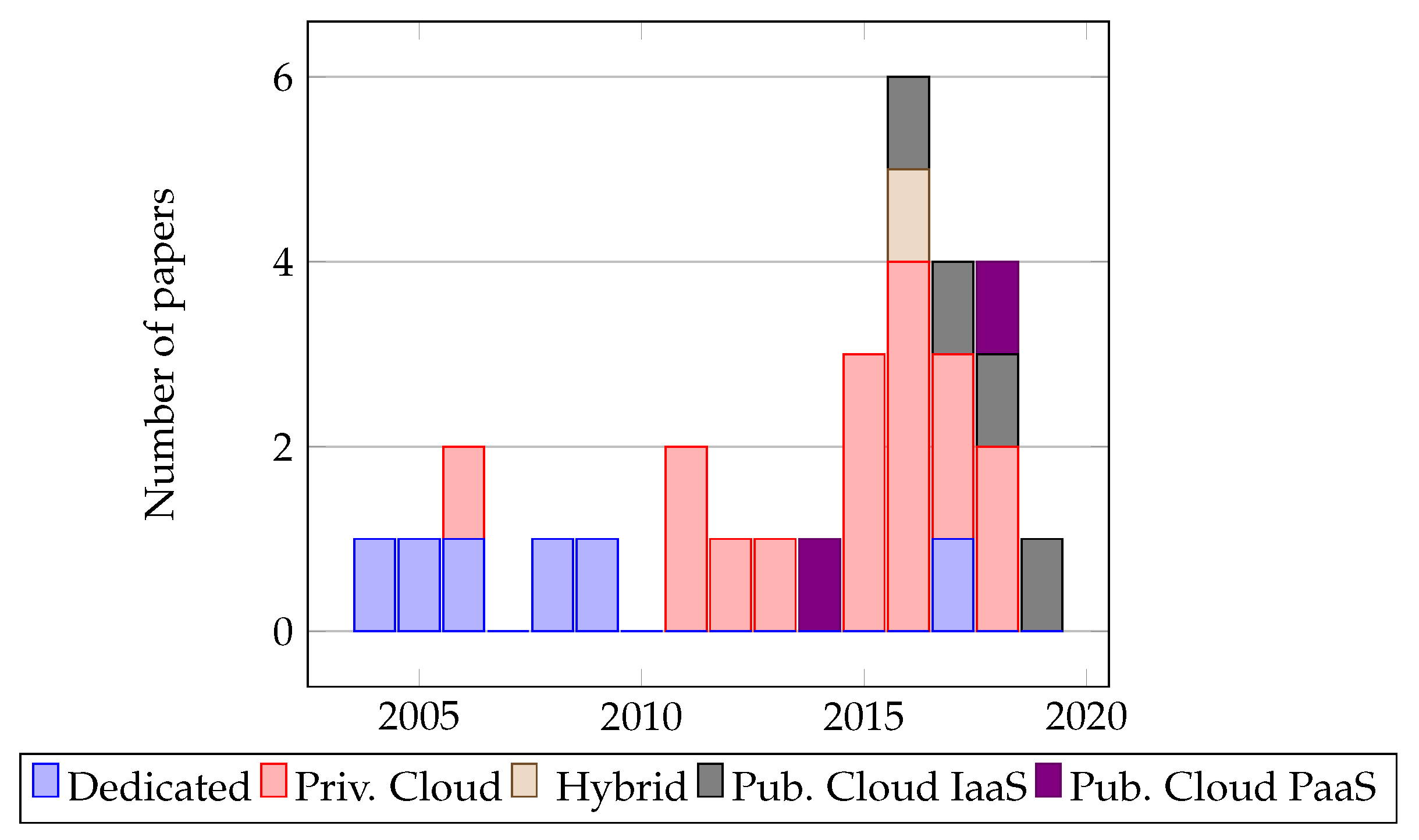

4.1. Infrastructure

- Dedicated (D): Use of network facilities that are specifically purposed to enable one type of application and require direct management at the hardware level.

- Private clouds (PrC): Use of proprietary clouds that are built and maintained privately and can offer high availability, scalability, and similar benefits.

- Public clouds (PuC): Use of public clouds that are owned by a third party. Services are leased to the game provider under a given pricing model. These are further categorized as IaaS and PaaS.

- Hybrid clouds (HC): In the context of this paper we define a hybrid cloud as a combination of private and public clouds to enable MMOGs.

- Unknown (U): No information could be identified.

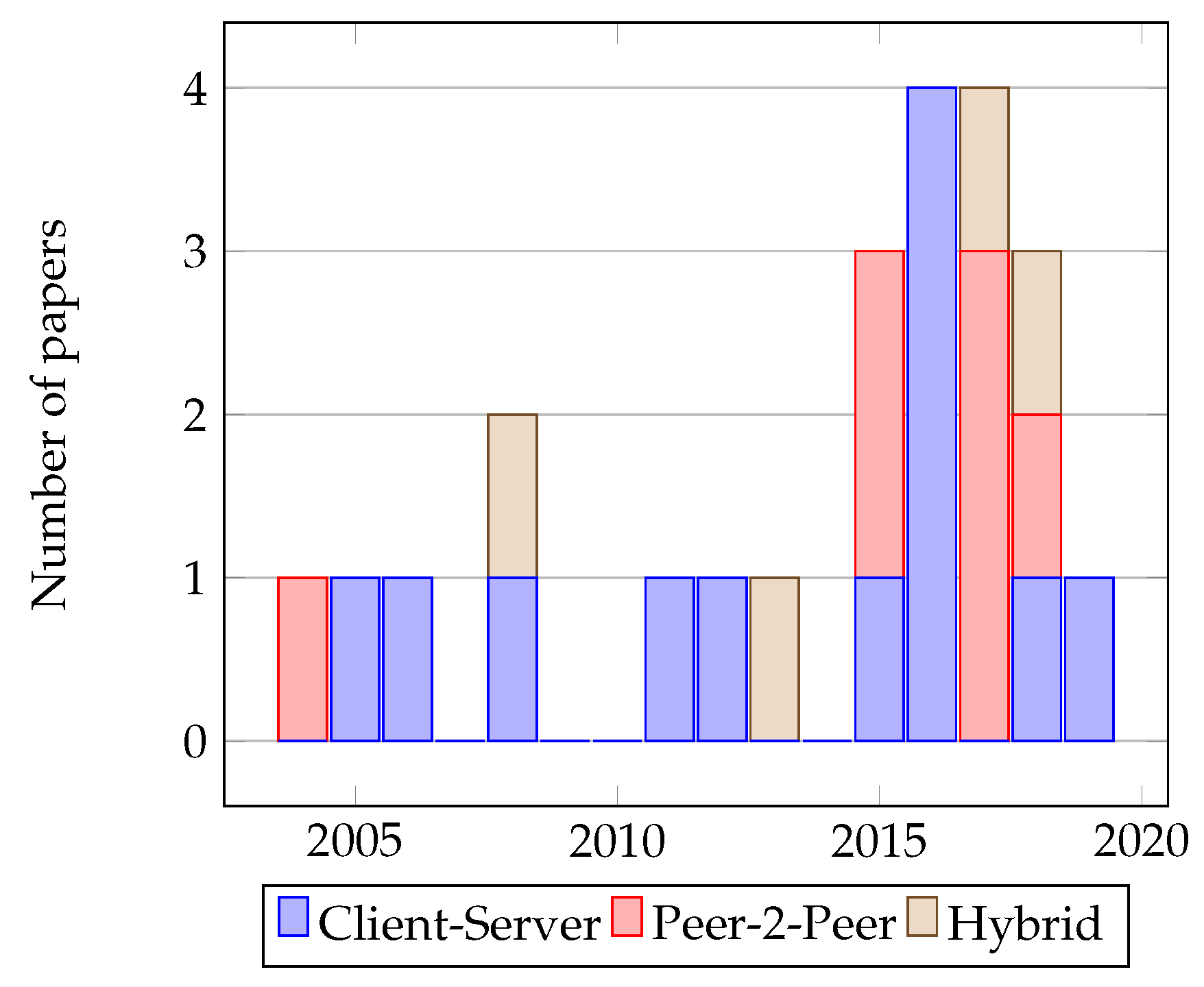

4.2. Architecture

- Client-Server (CS): This approach places most of the workload on a central server, which performs processing and provides data to requesters called clients.

- Peer-to-peer (P2P): P2P splits the workload among equipotent peers in a network and uses algorithms to synchronize processing. Each peer performs a part of the workload and can send data to other peers.

- Hybrid (H): A hybrid approach utilizes both the client-server and peer-to-peer architectures at different levels in the architecture.

- Unknown (U): No information could be identified.

4.3. Performance

- Simulation (S): The authors have used computer simulations to conduct their experiments and evaluate the performance of their solutions.

- Modelling (M): The authors provide mathematical or computational models and utilize them to predict the performance of their solution.

- Unknown (U): No information could be identified.

- Pre-existing MMOGs (P): Studies that have been identified to use pre-existing MMOGs to carry out their evaluation.

- Other (O): Use of other types of applications or case-studies to carry out an evaluation.

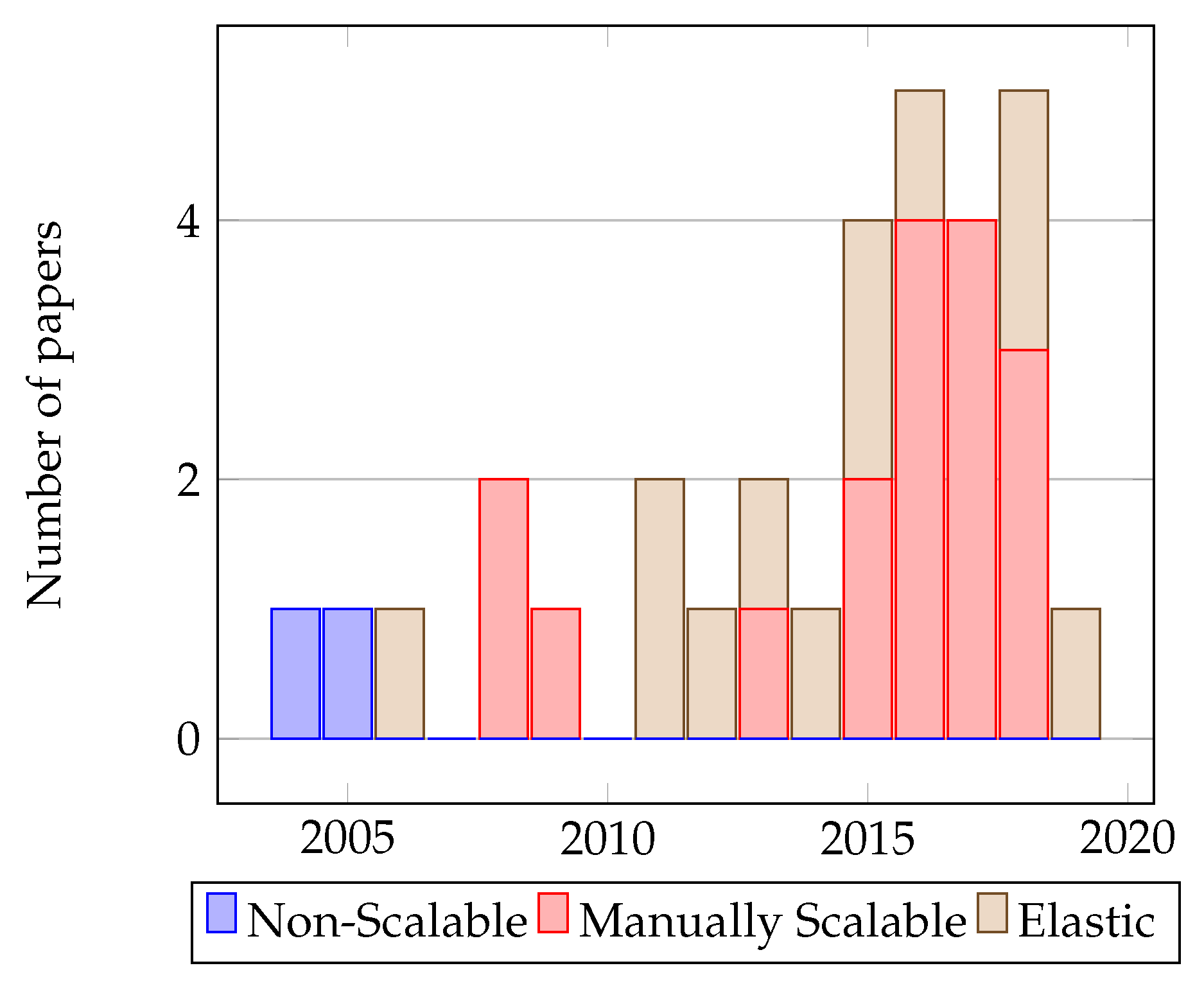

4.4. Scalability

- Not scalable (NS): This category describes a system that cannot support a varying number of users (and thus workload).

- Manually Scalable (MS): These systems can respond to changing workloads but depend on supervision from a system administrator, and usually on the manual procurement and installation of hardware.

- Elastic, or automatically scalable (E): Such solutions respond to changing workloads by automatically allocating resources without any supervision.

- Unknown (U): No information could be identified.

4.5. Persistence

- Relational Databases (R): Relational Database Management Systems (RDBMSs) store data as rows and columns in tables and use the Structured Query Language (SQL) to define, query, and relate them.

- NoSQL (N): Non-relational database systems that typically have no fixed schema and rely on collections of data instead of tables.

- Unknown (U): No information could be identified.

4.6. Security

- Loose security (L): Security practices that are applied to a solution but are not controlled at the architecture level.

- Tight security (T): Security practices that are controlled at the architecture level.

- Unknown (U): No information could be identified.

4.7. Other Criteria

5. Approaches for Developing and Deploying MMOG Backends

5.1. Infrastructure

5.1.1. Dedicated Infrastructure

5.1.2. Private/Proprietary Clouds

- High availability: Cloud systems offer high availability, usually 99% or higher.

- Elasticity: In cloud-based systems, there are enough resources and provisioning policies for the system to scale up or down, following the on-demand model.

- Virtualization: The infrastructure is accessed through a virtualization layer rather than physically or directly.

- Automation: A cloud system automates a number of administrative processes such as storage configuration, security policies and more.
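The elasticity property listed above can be illustrated with a simple proportional scaling rule. The following is only a minimal sketch: the function name `desired_servers` and all of its parameters (`players_per_server`, `headroom`, and the fleet bounds) are hypothetical values chosen for illustration, not figures taken from any of the surveyed studies or from a real cloud provider's API.

```python
import math


def desired_servers(active_players: int,
                    players_per_server: int = 500,
                    headroom: float = 0.2,
                    min_servers: int = 1,
                    max_servers: int = 50) -> int:
    """Return how many game servers to run for the current player count.

    All defaults are illustrative assumptions, not measured values.
    """
    if players_per_server <= 0:
        raise ValueError("players_per_server must be positive")
    # Reserve spare capacity so that short spikes do not overload servers.
    target = active_players * (1.0 + headroom) / players_per_server
    needed = math.ceil(target)
    # Clamp the result to the configured fleet bounds.
    return max(min_servers, min(max_servers, needed))
```

An elastic system would evaluate such a rule periodically and add or release virtual machines to match its result, whereas a manually scalable system would rely on an administrator to make the same decision.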

5.1.3. Public/Commodity Clouds

5.1.4. Hybrid Clouds

5.2. Architecture

5.2.1. Client-Server Architecture

- Players are not always interested in receiving updates about certain areas of the map—especially if these areas are far away.

- When two players are near the border between two parts, they still need to see and interact with each other; this is not trivial when the two parts are hosted on separate nodes.

- Regardless of the synchronization scheme, there is a possibility the game state will be invalid for events that occur near borders and affect players on both sides.

- A player standing near the border of two regions hosted by different servers needs to be able to receive event updates within their AoI from both servers.

- A player may suddenly move to an area handled by a different server (this is usually known as “teleporting” in games).

- An event originating in one server may end up in a region covered by another server. A typical example of this is shooting a rocket that travels from one area to another before exploding.

- An event that occurs near the border may affect multiple regions, hosted on different servers. An example of this is a bomb exploding at a border, affecting players in adjacent regions.

- Zoning: Partitions the game world into areas that are “handled independently by separate machines”. This technique is particularly useful in slow-paced games such as MMORPGs.

- Replication: Parallelizes game sessions with large numbers of players gathering in certain hot-spots. Each server computes the state of a number of “active entities” that are based on it, while it synchronizes the state of other “shadow entities” that are based on different machines. This technique is primarily used in fast-paced games such as FPS games.

- Instancing: “Distributes the session load by starting multiple parallel instances of highly populated zones”. These zones are independent from each other.
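The border problems and the zoning technique listed above can be made concrete with a small sketch. It assumes a world split into a uniform grid of square zones and a circular area of interest (AoI); the names `zone_of`, `zones_in_aoi`, and `zone_size` are hypothetical, and real systems may partition the world quite differently.

```python
from typing import Set, Tuple

Zone = Tuple[int, int]


def zone_of(x: float, y: float, zone_size: float) -> Zone:
    """Map a world position to the grid zone that owns it."""
    return (int(x // zone_size), int(y // zone_size))


def zones_in_aoi(x: float, y: float, radius: float,
                 zone_size: float) -> Set[Zone]:
    """Return every zone whose square overlaps the player's circular AoI."""
    min_zx, min_zy = zone_of(x - radius, y - radius, zone_size)
    max_zx, max_zy = zone_of(x + radius, y + radius, zone_size)
    zones: Set[Zone] = set()
    for zx in range(min_zx, max_zx + 1):
        for zy in range(min_zy, max_zy + 1):
            # Closest point of this zone's square to the player position.
            cx = min(max(x, zx * zone_size), (zx + 1) * zone_size)
            cy = min(max(y, zy * zone_size), (zy + 1) * zone_size)
            if (cx - x) ** 2 + (cy - y) ** 2 <= radius ** 2:
                zones.add((zx, zy))
    return zones
```

Under these assumptions, a player far from any border receives updates from a single zone, while a player near a border must subscribe to every zone returned by `zones_in_aoi`, which may be hosted on different servers. A change in the result of `zone_of` for a moving entity, such as a rocket crossing a border, marks the point where ownership would have to be handed over to another server.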

5.2.2. Peer-to-Peer Architecture

- Reduces delay for messages and eliminates localized congestion,

- Allows players to launch their own games without a lot of investment,

- Allows games to overcome bottlenecks of the server-only computation,

- Is more resilient and available because it does not have a single point of failure.

- Inherent scalability, as the available resources grow with the number of users,

- Robustness as systems using this architecture can self-repair when a peer fails,

- Avoids bottlenecks as network traffic is distributed among the users.

5.2.3. Hybrid Architecture

5.3. Scalability

5.3.1. Consistency

- Geographic: The world is divided into regions at initialization. For example, a room inside a building can be considered a separate geographic region. This is similar to the zoning mentioned in Reference [16].

5.3.2. Load Balancing

5.3.3. Load Prediction and Provision

5.4. Persistence

- The applications must be highly scalable to accommodate a potentially large audience of users,

- Rapid development of features and fast time-to-market is essential for competitiveness,

- Services must be responsive, so the system must have low latency,

- The system should provide a consistent view of data, so updates need to be made visible immediately,

- Services must be highly available and resilient to multiple types of failure.

5.5. Performance

5.6. Security

- Fixed-delay cheat: where a fixed amount of delay is added into each packet.

- Timestamp cheat: where timestamps are changed to alter when events occur.

- Suppressed update cheat: where updates are purposely not sent to other players.

- Inconsistency cheat: where different updates are sent to different players.
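Some of the cheats listed above can, in principle, be spotted by comparing what different receivers observed. The sketch below is an illustrative assumption rather than a method from the cited works: the function names, the per-tick audit model, and the 250 ms tolerance are all hypothetical, and the timestamp check presumes roughly synchronized clocks. Ordinary packet loss can look like suppression, so a real system would need to tolerate it.

```python
import hashlib
from typing import Dict, Optional


def digest(update: bytes) -> str:
    """Short fingerprint of an update payload that peers can exchange cheaply."""
    return hashlib.sha256(update).hexdigest()


def audit_sender(received: Dict[str, Optional[bytes]]) -> str:
    """Classify one sender's behaviour for a single game tick.

    `received` maps each receiving peer to the payload it got from the
    sender, or None if nothing arrived before the tick deadline.
    """
    payloads = [p for p in received.values() if p is not None]
    if not payloads:
        return "suppressed"            # no peer heard from the sender
    if len(payloads) < len(received):
        return "partially-suppressed"  # some peers were skipped
    if len({digest(p) for p in payloads}) > 1:
        return "inconsistent"          # peers were sent different updates
    return "ok"


def timestamp_plausible(claimed_ms: int, received_ms: int,
                        max_delay_ms: int = 250) -> bool:
    """Reject events dated after receipt or implausibly long before it."""
    return 0 <= received_ms - claimed_ms <= max_delay_ms
```

This sketch does not address the fixed-delay cheat, since a constant added delay is hard to distinguish from genuine network latency with per-tick checks alone.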

5.7. Other Trends in Cloud-Based Games

6. Analysis and Future Trends

6.1. Infrastructure

6.2. Architecture

6.3. Performance

6.4. Scalability

6.5. Persistence

6.6. Security

6.7. Directions for Cloud-Based MMOG Backends

7. Conclusions

Funding

Conflicts of Interest

References

- Armbrust, M.; Fox, A.; Griffith, R.; Joseph, A.D.; Katz, R.; Konwinski, A.; Lee, G.; Patterson, D.; Rabkin, A.; Stoica, I.; et al. A view of cloud computing. Commun. ACM 2010, 53, 50–58.

- Regalado, A. Who Coined ’Cloud Computing’? MIT Technology Review. 2011. Available online: https://www.technologyreview.com/s/425970/who-coined-cloud-computing (accessed on 23 April 2019).

- Shabani, I.; Kovaçi, A.; Dika, A. Possibilities offered by Google App Engine for developing distributed applications using datastore. In Proceedings of the 2014 Sixth International Conference on Computational Intelligence, Communication Systems and Networks (CICSyN), Tetova, Macedonia, 27–29 May 2014; pp. 113–118.

- Bergsten, H. JavaServer Pages; O’Reilly: Tokyo, Japan, 2003; pp. 34–37.

- Ducheneaut, N.; Yee, N.; Nickell, E.; Moore, R.J. Building an MMO With Mass Appeal: A Look at Gameplay in World of Warcraft. Games Cult. 2006, 1, 281–317.

- Krause, S. A case for mutual notification: a survey of P2P protocols for massively multiplayer online games. In Proceedings of the 7th ACM SIGCOMM Workshop on Network and System Support for Games, Worcester, MA, USA, 21–22 October 2008; pp. 28–33.

- Carter, C.; El Rhalibi, A.; Merabti, M. A survey of aoim, distribution and communication in peer-to-peer online games. In Proceedings of the 2012 21st International Conference on Computer Communications and Networks (ICCCN), Munich, Germany, 30 July–2 August 2012; pp. 1–5.

- Webb, S.; Soh, S. A survey on network game cheats and P2P solutions. Aust. J. Intell. Inf. Process. Syst. 2008, 9, 34–43.

- Gilmore, J.S.; Engelbrecht, H.A. A survey of state persistency in peer-to-peer massively multiplayer online games. IEEE Trans. Parallel Distrib. Syst. 2011, 23, 818–834.

- Abdulazeez, S.A.; El Rhalibi, A.; Merabti, M.; Al-Jumeily, D. Survey of solutions for Peer-to-Peer MMOGs. In Proceedings of the 2015 International Conference on Computing, Networking and Communications (ICNC), Anaheim, CA, USA, 16–19 February 2015; pp. 1106–1110.

- Cai, W.; Shea, R.; Huang, C.Y.; Chen, K.T.; Liu, J.; Leung, V.C.; Hsu, C.H. A survey on cloud gaming: Future of computer games. IEEE Access 2016, 4, 7605–7620.

- Kitchenham, B.; Charters, S.; Budgen, D.; Brereton, P.; Turner, M.; Linkman, S.; Jorgensen, M.; Mendes, E.; Visaggio, G. Guidelines for Performing Systematic Literature Reviews in Software Engineering; EBSE Technical Report Version 2.3; EBSE: Goyang, Korea, 2007.

- Chu, H.S. Building a Simple Yet Powerful MMO Game Architecture. Verkkoarkkitehtuuri Part. 2008. Available online: https://www.ibm.com/developerworks/library/ar-powerup1/ (accessed on 23 April 2019).

- Google. Overview of Cloud Game Infrastructure. 2018. Available online: https://cloud.google.com/solutions/gaming/cloud-game-infrastructure (accessed on 5 March 2019).

- Shaikh, A.; Sahu, S.; Rosu, M.C.; Shea, M.; Saha, D. On demand platform for online games. IBM Syst. J. 2006, 45, 7–19.

- Nae, V.; Prodan, R.; Iosup, A. Massively multiplayer online game hosting on cloud resources. Cloud Comput. Princ. Paradig. 2011, 19, 491–509.

- Shaikh, A.; Sahu, S.; Rosu, M.; Shea, M.; Saha, D. Implementation of a service platform for online games. In Proceedings of the 3rd ACM SIGCOMM Workshop on Network and System Support for Games, Portland, OR, USA, 30 August–3 September 2004; pp. 106–110.

- Barri, I.; Roig, C.; Giné, F. Distributing game instances in a hybrid client-server/P2P system to support MMORPG playability. Multimed. Tools Appl. 2016, 75, 2005–2029.

- LeadingEdgeTech.co.uk. How Is Cloud Computing Different from Traditional IT Infrastructure? 2019. Available online: https://www.leadingedgetech.co.uk/it-services/it-consultancy-services/cloud-computing/how-is-cloud-computing-different-from-traditional-it-infrastructure/ (accessed on 17 March 2019).

- Nae, V.; Iosup, A.; Prodan, R. Dynamic resource provisioning in massively multiplayer online games. IEEE Trans. Parallel Distrib. Syst. 2011, 22, 380–395.

- Dhib, E.; Boussetta, K.; Zangar, N.; Tabbane, N. Modeling Cloud gaming experience for Massively Multiplayer Online Games. In Proceedings of the 13th IEEE Consumer Communications & Networking Conference (CCNC), Las Vegas, NV, USA, 9–12 January 2016; pp. 381–386.

- Mishra, D.; El Zarki, M.; Erbad, A.; Hsu, C.H.; Venkatasubramanian, N. Clouds+ games: A multifaceted approach. IEEE Internet Comput. 2014, 18, 20–27.

- Satyanarayanan, M.; Bahl, V.; Caceres, R.; Davies, N. The case for vm-based cloudlets in mobile computing. IEEE Pervasive Comput. 2009, 8, 14–23.

- Najaran, M.T.; Krasic, C. Scaling online games with adaptive interest management in the cloud. In Proceedings of the 2010 9th Annual Workshop on Network and Systems Support for Games, Taipei, Taiwan, 16–17 November 2010; pp. 1–6.

- Dhib, E.; Zangar, N.; Tabbane, N.; Boussetta, K. Resources allocation trade-off between cost and delay over a distributed Cloud infrastructure. In Proceedings of the 2016 7th International Conference on Sciences of Electronics, Technologies of Information and Telecommunications (SETIT), Hammamet, Tunisia, 18–20 December 2016; pp. 486–490.

- Dhib, E.; Boussetta, K.; Zangar, N.; Tabbane, N. Cost-aware virtual machines placement problem under constraints over a distributed cloud infrastructure. In Proceedings of the 2017 Sixth International Conference on Communications and Networking (ComNet), Hammamet, Tunisia, 29 March–1 April 2017; pp. 1–5.

- Zahariev, A. Google App Engine; Helsinki University of Technology: Espoo, Finland, 2009; pp. 1–5.

- Nae, V.; Prodan, R.; Fahringer, T.; Iosup, A. The impact of virtualization on the performance of massively multiplayer online games. In Proceedings of the 8th Annual Workshop on Network and Systems Support for Games, Paris, France, 23–24 November 2009; p. 9.

- Negrão, A.P.; Veiga, L.; Ferreira, P. Task based load balancing for cloud aware massively Multiplayer Online Games. In Proceedings of the 2016 IEEE 15th International Symposium on Network Computing and Applications (NCA), Cambridge, MA, USA, 24–27 October 2016; pp. 48–51.

- Assiotis, M.; Tzanov, V. A distributed architecture for massive multiplayer online role-playing games. In Proceedings of the 5th ACM SIGCOMM Workshop on Network and System Support for Games (NetGames’ 06), Hawthorne, NY, USA, 10–11 October 2005.

- GauthierDickey, C.; Zappala, D.; Lo, V. Distributed Architectures for massively multiplayer online games. In Proceedings of the ACM NetGames Workshop, Portland, OR, USA, 30 August 2004.

- Kavalionak, H.; Carlini, E.; Ricci, L.; Montresor, A.; Coppola, M. Integrating peer-to-peer and cloud computing for massively multiuser online games. Peer-to-Peer Netw. Appl. 2015, 8, 301–319.

- Mildner, P.; Triebel, T.; Kopf, S.; Effelsberg, W. Scaling online games with NetConnectors: A peer-to-peer overlay for fast-paced massively multiplayer online games. Comput. Entertain. (CIE) 2017, 15, 3.

- Jardine, J.; Zappala, D. A hybrid architecture for massively multiplayer online games. In Proceedings of the 7th ACM SIGCOMM Workshop on Network and System Support for Games, Worcester, MA, USA, 21–22 October 2008; pp. 60–65.

- Matsumoto, K.; Okabe, Y. A Collusion-Resilient Hybrid P2P Framework for Massively Multiplayer Online Games. In Proceedings of the 2017 IEEE 41st Annual Computer Software and Applications Conference (COMPSAC), Turin, Italy, 4–8 July 2017; Volume 2, pp. 342–347.

- Zhang, W.; Chen, J.; Zhang, Y.; Raychaudhuri, D. Towards efficient edge cloud augmentation for virtual reality mmogs. In Proceedings of the Second ACM/IEEE Symposium on Edge Computing, San Jose, CA, USA, 12–14 October 2017; p. 8.

- Plumb, J.N.; Kasera, S.K.; Stutsman, R. Hybrid network clusters using common gameplay for massively multiplayer online games. In Proceedings of the 13th International Conference on the Foundations of Digital Games, Malmo, Sweden, 7–10 August 2018; p. 2.

- Blackman, T.; Waldo, J. Scalable Data Storage in Project Darkstar; Technical Report; Sun Microsystems: Menlo Park, CA, USA, 2009.

- Lu, F.; Parkin, S.; Morgan, G. Load balancing for massively multiplayer online games. In Proceedings of the 5th ACM SIGCOMM Workshop on Network and System Support for Games, Singapore, 30–31 October 2006; p. 1.

- Chuang, W.C.; Sang, B.; Yoo, S.; Gu, R.; Kulkarni, M.; Killian, C. Eventwave: Programming model and runtime support for tightly-coupled elastic cloud applications. In Proceedings of the 4th Annual Symposium on Cloud Computing, Santa Clara, CA, USA, 1–3 October 2013; p. 21.

- Nae, V.; Prodan, R.; Fahringer, T. Cost-efficient hosting and load balancing of massively multiplayer online games. In Proceedings of the 2010 11th IEEE/ACM International Conference on Grid Computing, Brussels, Belgium, 25–28 October 2010; pp. 9–16.

- Meiländer, D.; Gorlatch, S. Modeling the scalability of real-time online interactive applications on clouds. Future Gener. Comput. Syst. 2018, 86, 1019–1031.

- Farlow, S.; Trahan, J.L. Periodic load balancing heuristics in massively multiplayer online games. In Proceedings of the 13th International Conference on the Foundations of Digital Games, Malmo, Sweden, 7–10 August 2018; p. 29.

- Weng, C.F.; Wang, K. Dynamic resource allocation for MMOGs in cloud computing environments. In Proceedings of the 2012 8th International Wireless Communications and Mobile Computing Conference (IWCMC), Limassol, Cyprus, 27–31 August 2012; pp. 142–146.

- Ghobaei-Arani, M.; Khorsand, R.; Ramezanpour, M. An autonomous resource provisioning framework for massively multiplayer online games in cloud environment. J. Netw. Comput. Appl. 2019, 142, 76–97.

- Brewer, E. Spanner, Truetime and the Cap Theorem. 2017. Available online: https://ai.google/research/pubs/pub45855 (accessed on 20 August 2019).

- Vogels, W. Eventually consistent. Commun. ACM 2009, 52, 40–44.

- Agrawal, D.; Das, S.; El Abbadi, A. Big data and cloud computing: current state and future opportunities. In Proceedings of the 14th International Conference on Extending Database Technology, Uppsala, Sweden, 21–24 March 2011; pp. 530–533.

- Chang, F.; Dean, J.; Ghemawat, S.; Hsieh, W.C.; Wallach, D.A.; Burrows, M.; Chandra, T.; Fikes, A.; Gruber, R.E. Bigtable: A distributed storage system for structured data. ACM Trans. Comput. Syst. (TOCS) 2008, 26, 4.

- Baker, J.; Bond, C.; Corbett, J.C.; Furman, J.; Khorlin, A.; Larson, J.; Leon, J.M.; Li, Y.; Lloyd, A.; Yushprakh, V. Megastore: Providing Scalable, Highly Available Storage for Interactive Services. In Proceedings of the Conference on Innovative Data System Research (CIDR), Asilomar, CA, USA, 9–12 January 2011; pp. 223–234.

- Diao, Z.; Zhao, P.; Schallehn, E.; Mohammad, S. Achieving Consistent Storage for Scalable MMORPG Environments. In Proceedings of the 19th International Database Engineering & Applications Symposium, Yokohama, Japan, 13–15 July 2015; pp. 33–40.

- Diao, Z. Cloud-based Support for Massively Multiplayer Online Role-Playing Games. Ph.D. Thesis, University of Magdeburg, Magdeburg, Germany, 2017.

- Shea, R.; Liu, J.; Ngai, E.C.H.; Cui, Y. Cloud gaming: architecture and performance. IEEE Netw. 2013, 27, 16–21.

- Lin, Y.; Shen, H. Cloud fog: Towards high quality of experience in cloud gaming. In Proceedings of the 2015 44th International Conference on Parallel Processing, Beijing, China, 1–4 September 2015; pp. 500–509.

- Gascon-Samson, J.; Kienzle, J.; Kemme, B. Dynfilter: Limiting bandwidth of online games using adaptive pub/sub message filtering. In Proceedings of the 2015 International Workshop on Network and Systems Support for Games, Zagreb, Croatia, 3–4 December 2015; p. 2.

- Yusen, L.; Deng, Y.; Cai, W.; Tang, X. Fairness-aware Update Schedules for Improving Consistency in Multi-server Distributed Virtual Environments. In Proceedings of the 9th EAI International Conference on Simulation Tools and Techniques, Prague, Czech Republic, 22–23 August 2016; pp. 1–8.

- El Rhalibi, A.; Al-Jumeily, D. Dynamic Area of Interest Management for Massively Multiplayer Online Games Using OPNET. In Proceedings of the 2017 10th International Conference on Developments in eSystems Engineering (DeSE), Paris, France, 14–16 June 2017; pp. 50–55.

- Baughman, N.E.; Levine, B.N. Cheat-proof playout for centralized and distributed online games. In Proceedings of the IEEE INFOCOM 2001, Conference on Computer Communications, Twentieth Annual Joint Conference of the IEEE Computer and Communications Society (Cat. No. 01CH37213), Anchorage, AK, USA, 22–26 April 2001; Volume 1, pp. 104–113.

- Jamin, S.; Cronin, E.; Filstrup, B. Cheat-proofing dead reckoned multiplayer games. In Proceedings of the 2nd International Conference on Application and Development of Computer Games, Hong Kong, China, 6–7 January 2003; Volume 67.

- Lin, Y.; Shen, H. Leveraging fog to extend cloud gaming for thin-client MMOG with high quality of experience. In Proceedings of the IEEE 35th International Conference on Distributed Computing Systems (ICDCS), Columbus, OH, USA, 29 June–2 July 2015; pp. 734–735.

- Burger, V.; Pajo, J.F.; Sanchez, O.R.; Seufert, M.; Schwartz, C.; Wamser, F.; Davoli, F.; Tran-Gia, P. Load dynamics of a multiplayer online battle arena and simulative assessment of edge server placements. In Proceedings of the 7th International Conference on Multimedia Systems, Klagenfurt, Austria, 10–13 May 2016; p. 17.

- Plumb, J.N.; Stutsman, R. Exploiting Google’s Edge Network for Massively Multiplayer Online Games. In Proceedings of the 2018 IEEE 2nd International Conference on Fog and Edge Computing (ICFEC), Washington, DC, USA, 3 May 2018; pp. 1–8.

- Google. Stadia: Take Game Development Further Than You Thought Possible. 2019. Available online: https://stadia.dev/about (accessed on 23 April 2019).

- Apple. Arcade: Games That Redefine Games. 2019. Available online: https://www.apple.com/lae/apple-arcade (accessed on 23 April 2019).

- Carter, C.J.; El Rhalibi, A.; Merabti, M. A novel scalable hybrid architecture for MMOG. In Proceedings of the 2013 IEEE International Conference on Multimedia and Expo Workshops (ICMEW), San Jose, CA, USA, 15–19 July 2013; pp. 1–6.

- Basiri, M.; Rasoolzadegan, A. Delay-aware resource provisioning for cost-efficient cloud gaming. IEEE Trans. Circuits Syst. Video Technol. 2016, 28, 972–983.

| Inclusion Criteria | Exclusion Criteria |
|---|---|
| The paper relates to MMOGs or similar large distributed systems. | The paper does not relate to software engineering or software architecture or software technology. |
| The paper relates to cloud technology which could be applied to developing MMOG backends. | The paper does not provide details for any of the areas of interest in this study. |
| The paper touches at least one of the identified research questions, either directly or indirectly. | The paper is not relevant to any of the targeted research questions. |
| | The paper was not published in a peer-reviewed journal or conference proceedings. |
| | The full paper is not available for download. |

| Area of Study | Frequency of Papers |
|---|---|
| Infrastructure | 11 |
| Architecture | 10 |
| Performance | 7 |
| Scalability | 6 |
| Persistence | 4 |
| Security | 3 |

| Criterion | Categories |
|---|---|
| Infrastructure | D = Dedicated |
| | PrC = Private Cloud |
| | HC = Hybrid Cloud |
| | PuC = Public Cloud |
| | U = Unknown |
| Architecture | CS = Client-Server |
| | P2P = Peer-to-peer |
| | H = Hybrid architecture |
| | U = Unknown |
| Performance | Types of simulation: |
| | S = Simulation |
| | M = Modelling |
| | U = Unknown |
| | Testing software: |
| | P = Pre-existing MMOGs |
| | O = Other types of applications |
| Scalability | NS = Not scalable |
| | MS = Manually scalable |
| | E = Elastic (automatically scalable) |
| | U = Unknown |
| Persistence | R = Relational Databases |
| | N = NoSQL |
| | U = Unknown |
| Security | L = Loose security (not controlled by architecture) |
| | T = Tight security (controlled by architecture) |
| | U = Unknown |

| Approach | Infrastructure | Architecture | Scalability | Persistence | Performance | Security |
|---|---|---|---|---|---|---|
| GauthierDickey et al. [31] | D | P2P | NS | U | U | L |
| Assiotis and Tzanov [30] | D | CS | NS | U | M & P | L |
| Shaikh et al. [15], Shaikh et al. [17] | PrC | U | E | R | M, S & P | U |
| Lu et al. [39] | D | CS | MS | U | S & M | U |
| Jardine and Zappala [34] | U | H | MS | U | S & P | T |
| Chu [13] | D | CS | MS | R | U | T |
| Blackman and Waldo [38] | D | U | MS | R | U | U |
| Nae et al. [16], Nae et al. [20] | PrC | CS | E | U | S, M & P | U |
| Chang et al. [49], Baker et al. [50] | PrC | U | E | N | S & O | U |
| Weng and Wang [44] | PrC | CS | E | U | M & P | U |
| Chuang et al. [40] | PrC | U | E | N | S & P, O | U |
| Carter et al. [65] | U | H | MS | U | S | U |
| Shabani et al. [3] | PuC-PaaS | U | E | N | U | U |
| Kavalionak et al. [32] | PrC | P2P | E | U | U | U |
| Lin and Shen [54], Lin and Shen [60] | PrC | P2P | MS | U | S, M & O | U |
| Diao et al. [51] | U | U | E | N | S | U |
| Gascon-Samson et al. [55] | PrC | CS | MS | U | S, P | U |
| Dhib et al. [21] | PrC | CS | E | U | S | U |
| Negrão et al. [29] | HC | CS | MS | U | S | U |
| Burger et al. [61] | PrC | U | MS | U | S, P & O | U |
| Dhib et al. [25] | PuC-IaaS | CS | MS | U | P | U |
| Yusen et al. [56] | U | CS | MS | U | S | U |
| Basiri and Rasoolzadegan [66] | PrC | U | U | U | S | U |
| Matsumoto and Okabe [35] | U | P2P | MS | U | M | L |
| Diao [52] | PrC | P2P | MS | N | S, P | U |
| Dhib et al. [26] | PuC-IaaS | U | MS | U | S | U |
| Mildner et al. [33] | D | P2P | MS | U | S, P | U |
| Zhang et al. [36] | PrC | H | U | U | S | U |
| Google [14] | PuC-IaaS, PuC-PaaS | U | E | U | U | U |
| Plumb and Stutsman [62] | PrC | P2P | MS | U | S | L |
| Meiländer and Gorlatch [42] | PrC | U | E | U | S & M, P | U |
| Plumb et al. [37] | U | H | MS | U | S | U |
| Farlow and Trahan [43] | U | CS | MS | U | S | U |
| Ghobaei-Arani et al. [45] | PuC-IaaS | CS | E | U | M & P | U |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Kasenides, N.; Paspallis, N. A Systematic Mapping Study of MMOG Backend Architectures. Information 2019, 10, 264. https://doi.org/10.3390/info10090264
