Driving Style: How Should an Automated Vehicle Behave?

Abstract

1. Introduction

2. Literature Review

2.1. AVs vs. Pedestrians

2.2. Human Driver vs. Human Driver

2.3. AV vs. Human-Driven Vehicles

2.4. AV vs. AV

2.5. AV’s Driving Style

2.6. Anthropomorphism

3. Aims

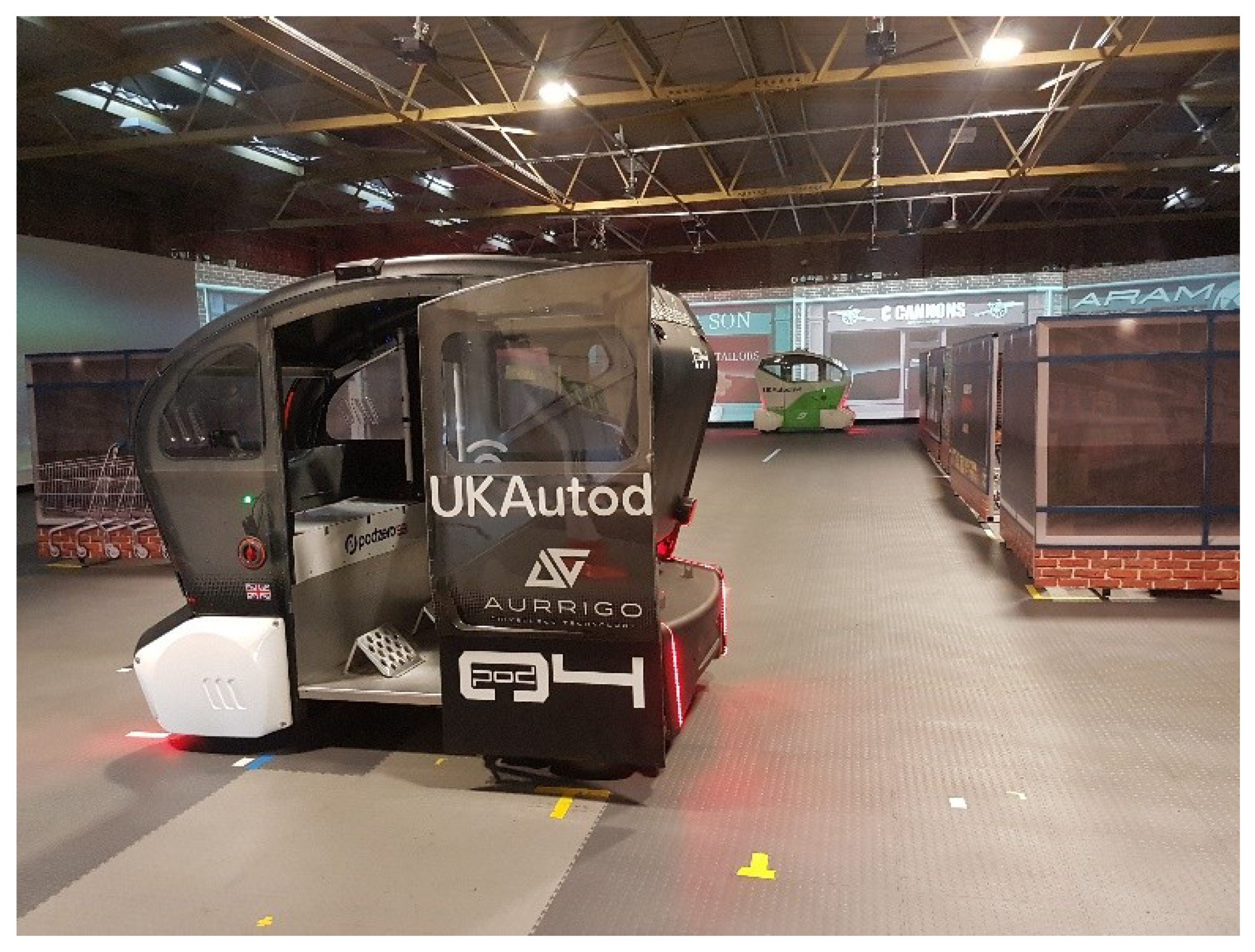

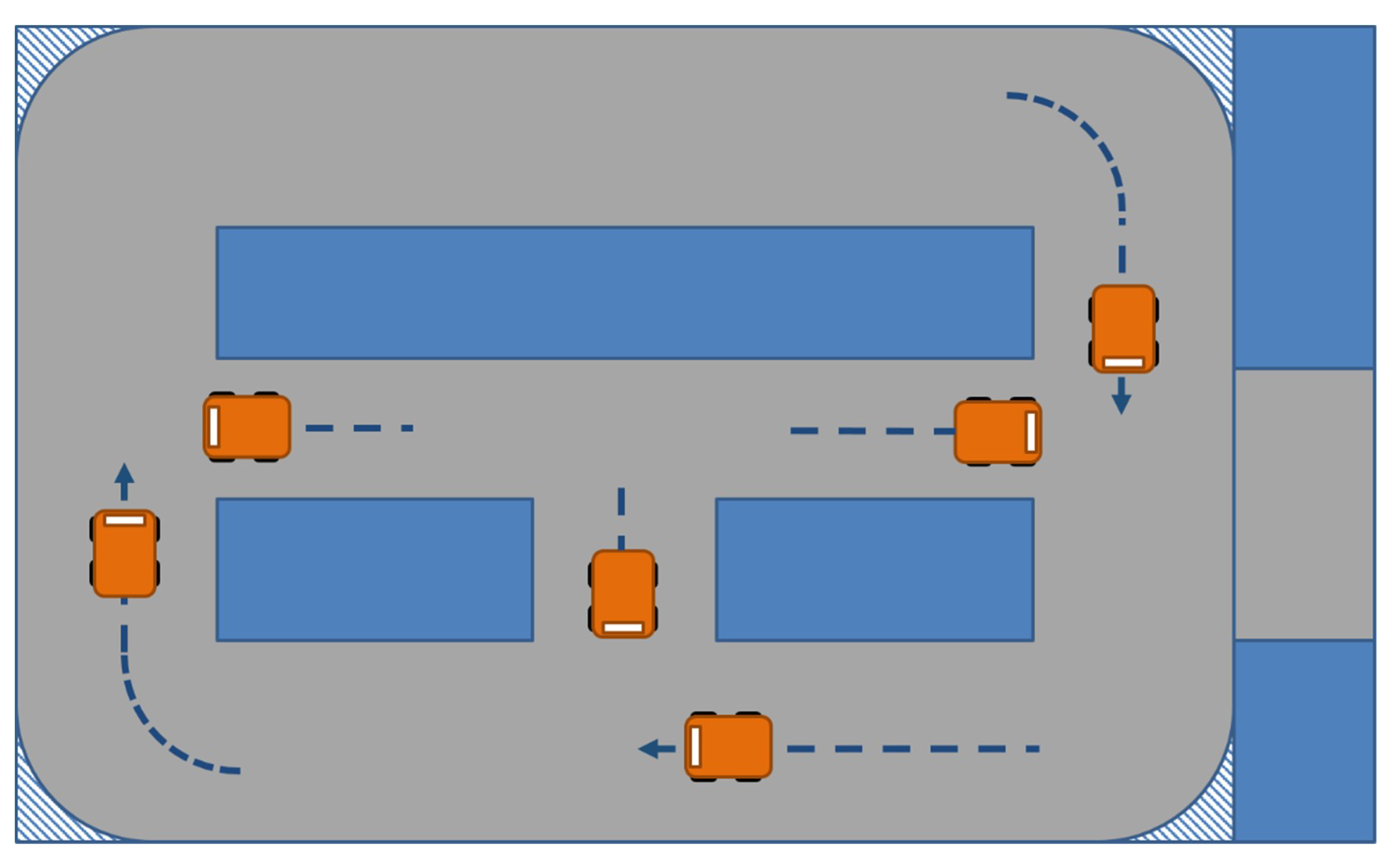

4. Methods

4.1. Vehicle Programming

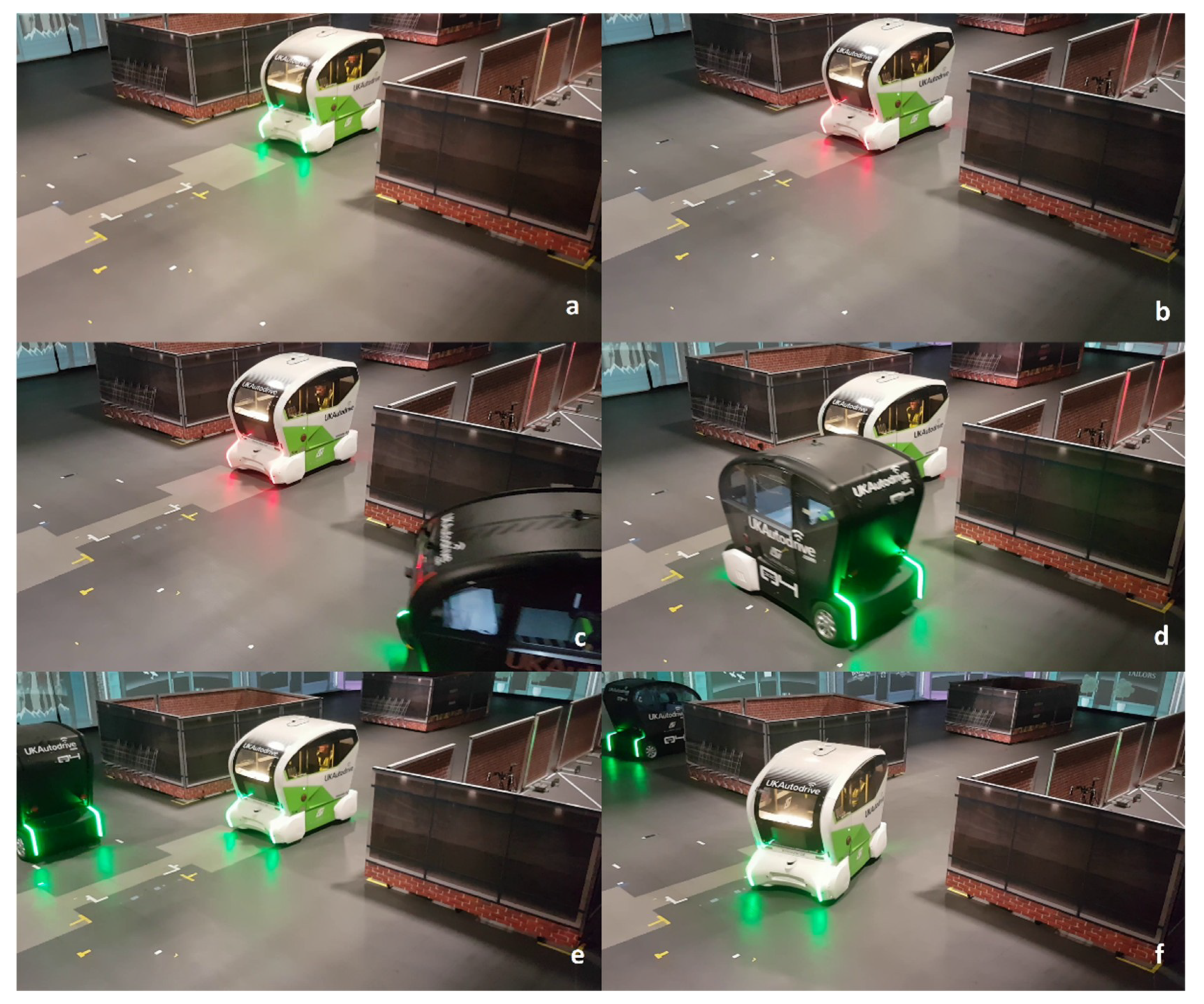

4.1.1. Human-Like Behaviour

4.1.2. Machine-Like Behaviour

4.2. Activities

4.2.1. Trust

4.2.2. Acceptance

5. Results

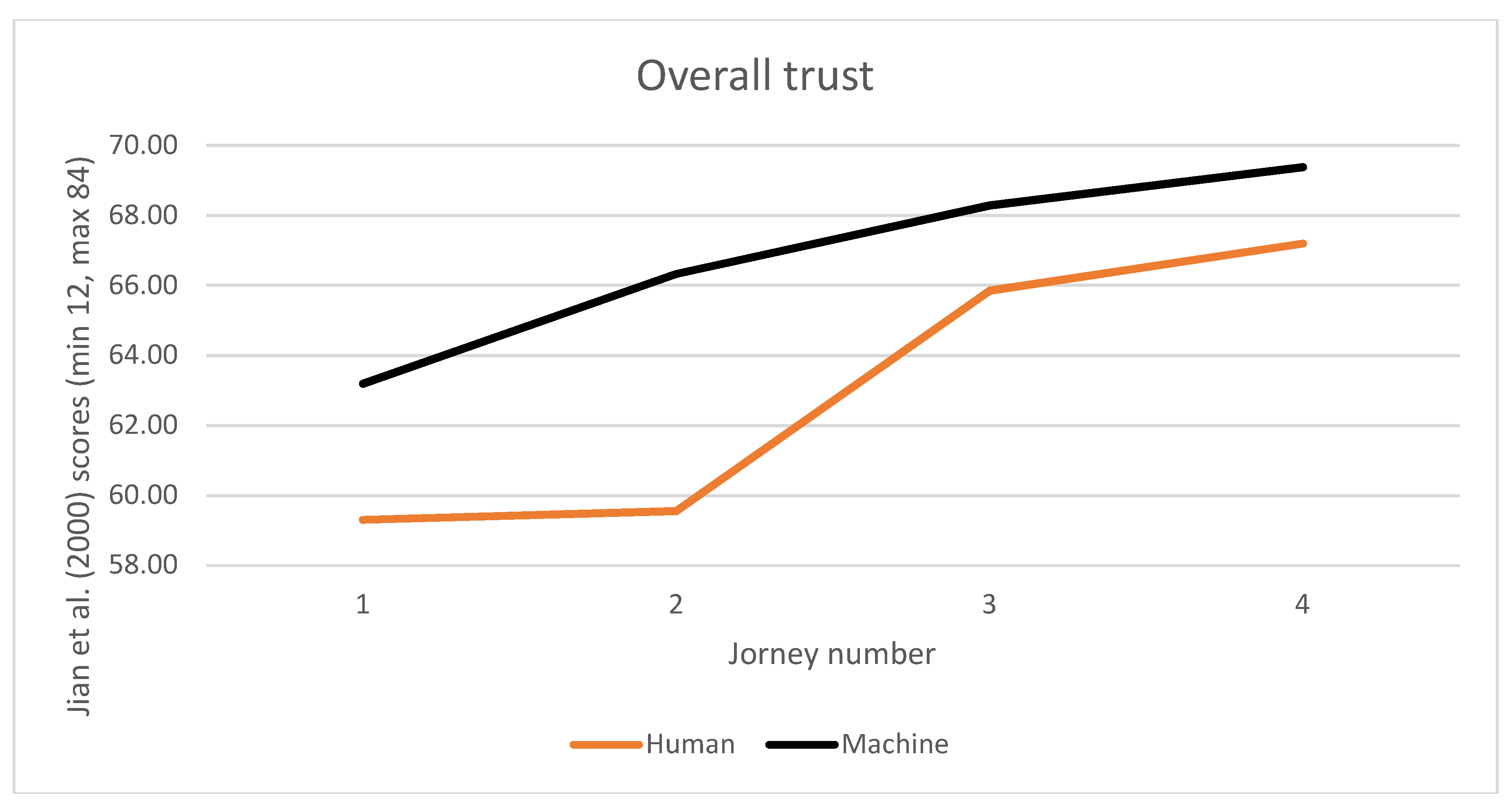

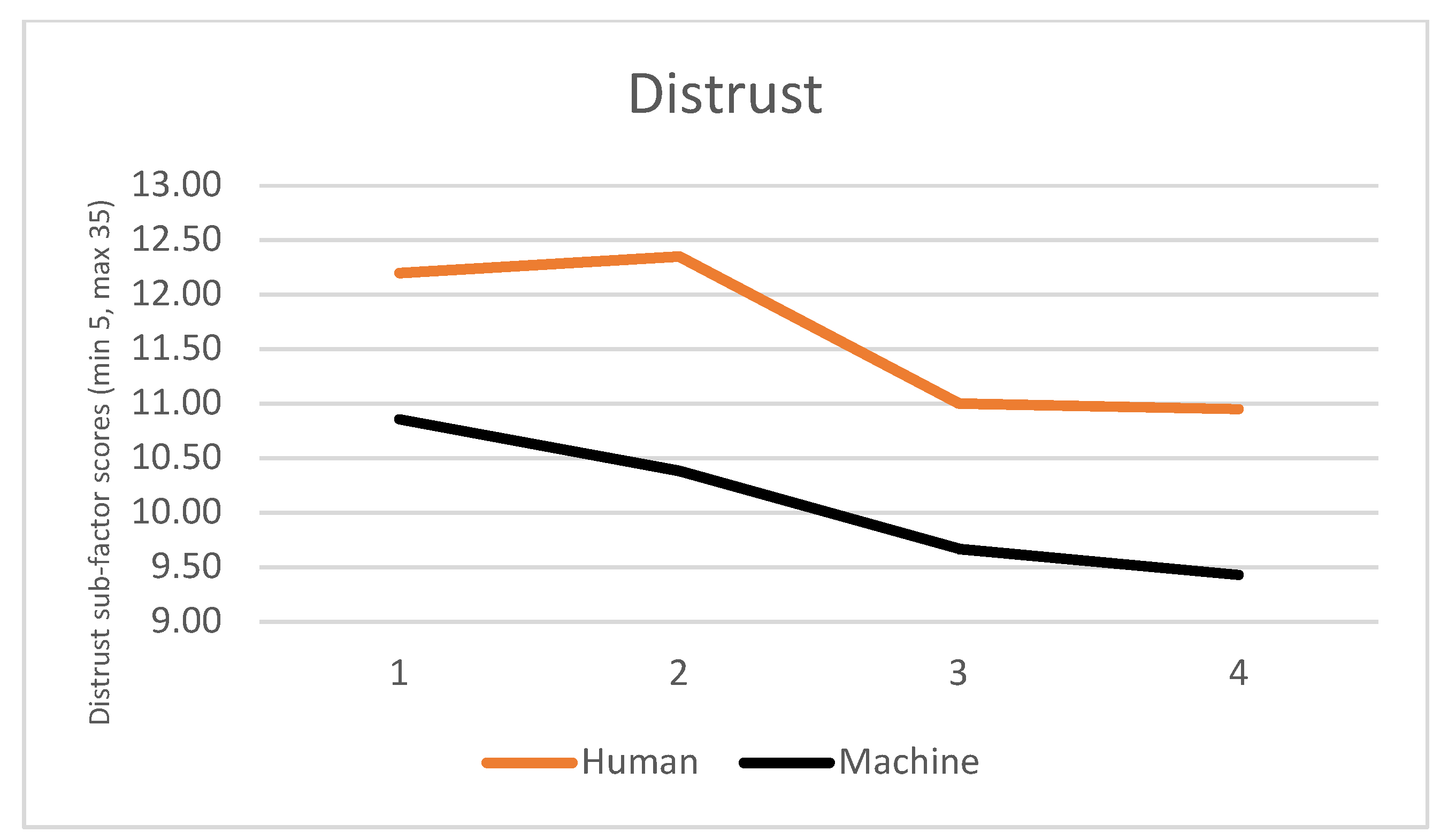

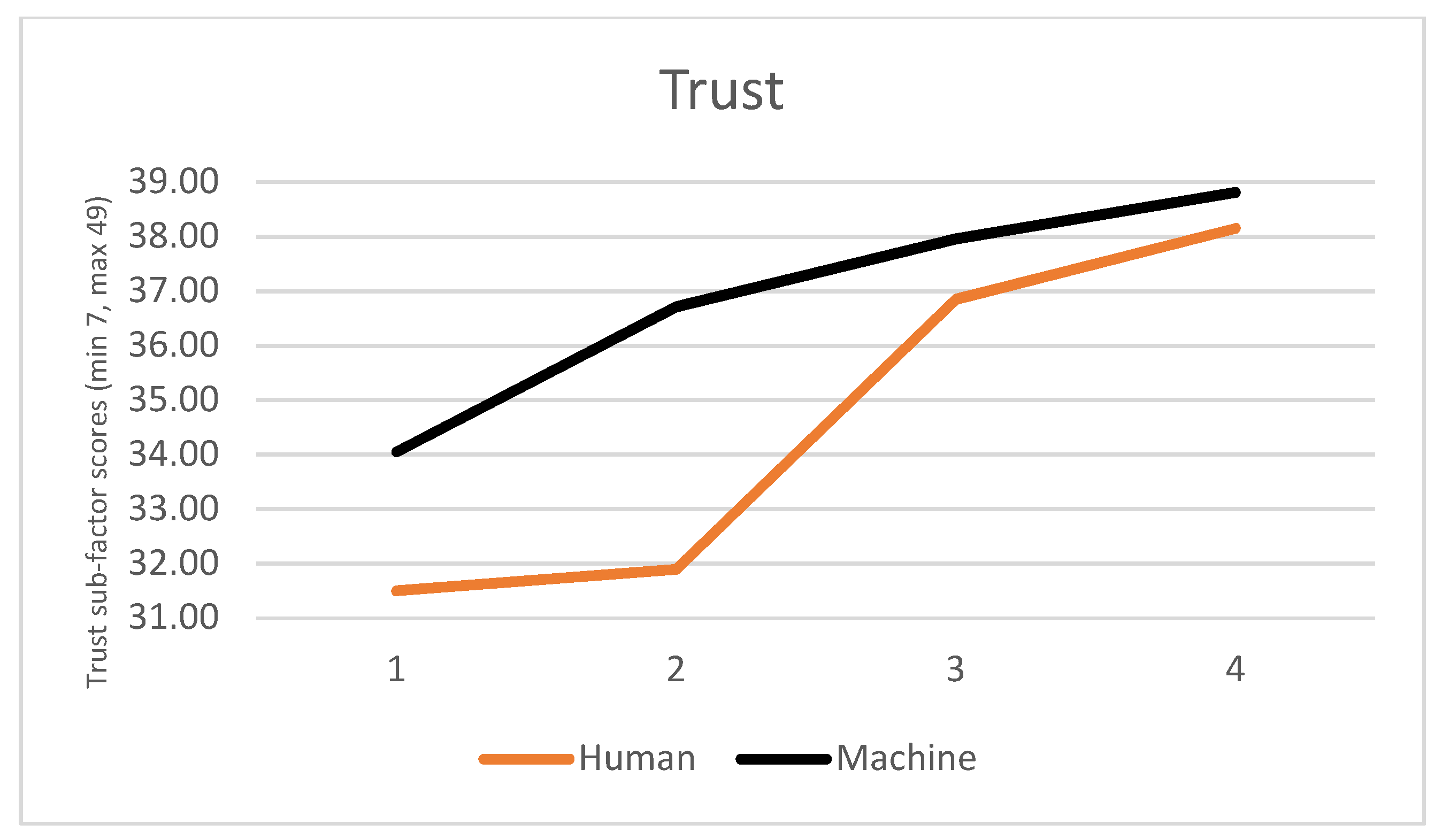

5.1. Quantitative Data

5.2. Qualitative Data

5.2.1. Reassuring Human

5.2.2. Assertive Machine

5.2.3. Incomplete Mental Model

Normally, when you drive, you stop at the junction and check if there’s another car coming or another driver and then will go, but here it didn’t stop, it just went. I did, in my mind, I knew there wasn’t anything coming, but if it would be the real, in real life, I would be a bit cautious, I’d be feeling a bit, ‘why it didn’t stop?’ it was ok at this time, but I wouldn’t feel safe, because it may be other vehicles coming.

6. Discussion

6.1. Suggestions

6.2. Limitations and Future Work

7. Conclusions

- Explain to the general public the details of the driving systems, for example, recent technological features such as vehicle-to-vehicle (V2V) and vehicle-to-infrastructure (V2I) communication

- Help create realistic mental models of the complex interactions between the vehicle, its sensors, other road users and infrastructure

- Present the features progressively, so occupants can build this knowledge with time

- Convey to occupants the sensed hazards and the shared knowledge received from other vehicles or infrastructure, so users can acknowledge that the system is aware of hazards beyond the field of view

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Mean Trust Scores per Run | Human | SD | Machine | SD |
|---|---|---|---|---|
| 1 | 59.30 | 8.163 | 63.19 | 11.197 |
| 2 | 59.55 | 8.470 | 66.33 | 10.910 |
| 3 | 65.85 | 8.506 | 68.29 | 11.645 |
| 4 | 67.20 | 9.622 | 69.38 | 10.929 |

| Pair | Paired Differences | Mean | Std. Dev. | Std. Error Mean | 95% CI of the Difference (Lower) | 95% CI of the Difference (Upper) | t | df | Sig. (2-tailed) |
|---|---|---|---|---|---|---|---|---|---|
| Pair 1 | Trust score run 1 - Trust score run 2 | −1.732 | 5.599 | 0.874 | −3.499 | 0.036 | −1.980 | 40 | 0.055 |
| Pair 2 | Trust score run 1 - Trust score run 3 | −5.805 | 5.904 | 0.922 | −7.669 | −3.941 | −6.295 | 40 | 0.000 |
| Pair 3 | Trust score run 1 - Trust score run 4 | −7.024 | 7.206 | 1.125 | −9.299 | −4.750 | −6.242 | 40 | 0.000 |
| Pair 4 | Trust score run 2 - Trust score run 3 | −4.073 | 6.509 | 1.017 | −6.128 | −2.019 | −4.007 | 40 | 0.000 |
| Pair 5 | Trust score run 2 - Trust score run 4 | −5.293 | 7.128 | 1.113 | −7.543 | −3.043 | −4.754 | 40 | 0.000 |
| Pair 6 | Trust score run 3 - Trust score run 4 | −1.220 | 3.525 | 0.551 | −2.332 | −0.107 | −2.215 | 40 | 0.033 |
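The columns of a paired-samples table like the one above are linked by simple arithmetic: the standard error is the standard deviation of the differences divided by the square root of the sample size, the t statistic is the mean difference divided by the standard error, and the confidence interval is the mean difference plus or minus the critical t value times the standard error. The following is a minimal sketch of that relationship, not the authors' analysis script. It assumes n = df + 1 = 41 paired observations, and the function name `paired_t_summary` and the hard-coded critical value are illustrative choices.

```python
import math

# Two-sided 95% critical value of Student's t for df = 40.
# Assumption: taken from a standard t table rather than computed here.
T_CRIT_DF40 = 2.0211


def paired_t_summary(mean_diff, sd_diff, n, t_crit):
    """Return (standard error, t statistic, CI lower, CI upper) for paired differences."""
    se = sd_diff / math.sqrt(n)      # standard error of the mean difference
    t_stat = mean_diff / se          # paired-samples t statistic
    half_width = t_crit * se         # half-width of the confidence interval
    return se, t_stat, mean_diff - half_width, mean_diff + half_width


# Pair 2 (trust score run 1 minus run 3): mean and SD as reported in the table;
# n = 41 is inferred from df = 40.
se, t_stat, lower, upper = paired_t_summary(-5.805, 5.904, 41, T_CRIT_DF40)
print(f"SE={se:.3f}  t={t_stat:.3f}  95% CI=[{lower:.3f}, {upper:.3f}]")
```

Because the inputs are themselves rounded to three decimals, the recomputed values should agree with the reported Pair 2 row only to within rounding in the last digit.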

| Pair | Paired Differences, distrust sub-factor | Mean | Std. Dev. | Std. Error Mean | 95% CI of the Difference (Lower) | 95% CI of the Difference (Upper) | t | df | Sig. (2-tailed) |
|---|---|---|---|---|---|---|---|---|---|
| Pair 1 | Distrust sub-factor 1 - Distrust sub-factor 2 | 0.171 | 3.201 | 0.500 | −0.840 | 1.181 | 0.342 | 40 | 0.734 |
| Pair 2 | Distrust sub-factor 1 - Distrust sub-factor 3 | 1.195 | 3.723 | 0.581 | 0.020 | 2.370 | 2.055 | 40 | 0.046 |
| Pair 3 | Distrust sub-factor 1 - Distrust sub-factor 4 | 1.341 | 3.947 | 0.616 | 0.096 | 2.587 | 2.176 | 40 | 0.036 |
| Pair 4 | Distrust sub-factor 2 - Distrust sub-factor 3 | 1.024 | 2.318 | 0.362 | 0.293 | 1.756 | 2.829 | 40 | 0.007 |
| Pair 5 | Distrust sub-factor 2 - Distrust sub-factor 4 | 1.171 | 2.587 | 0.404 | 0.354 | 1.987 | 2.897 | 40 | 0.006 |
| Pair 6 | Distrust sub-factor 3 - Distrust sub-factor 4 | 0.146 | 1.459 | 0.228 | −0.314 | 0.607 | 0.642 | 40 | 0.524 |

| Pair | Paired Differences, trust sub-factor | Mean | Std. Dev. | Std. Error Mean | 95% CI of the Difference (Lower) | 95% CI of the Difference (Upper) | t | df | Sig. (2-tailed) |
|---|---|---|---|---|---|---|---|---|---|
| Pair 1 | Trust sub-factor 1 - Trust sub-factor 2 | −1.561 | 4.399 | 0.687 | −2.950 | −0.172 | −2.272 | 40 | 0.029 |
| Pair 2 | Trust sub-factor 1 - Trust sub-factor 3 | −4.610 | 3.625 | 0.566 | −5.754 | −3.465 | −8.142 | 40 | 0.000 |
| Pair 3 | Trust sub-factor 1 - Trust sub-factor 4 | −5.683 | 4.618 | 0.721 | −7.140 | −4.225 | −7.880 | 40 | 0.000 |
| Pair 4 | Trust sub-factor 2 - Trust sub-factor 3 | −3.049 | 4.944 | 0.772 | −4.609 | −1.488 | −3.948 | 40 | 0.000 |
| Pair 5 | Trust sub-factor 2 - Trust sub-factor 4 | −4.122 | 5.386 | 0.841 | −5.822 | −2.422 | −4.900 | 40 | 0.000 |
| Pair 6 | Trust sub-factor 3 - Trust sub-factor 4 | −1.073 | 2.936 | 0.459 | −2.000 | −0.146 | −2.341 | 40 | 0.024 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Oliveira, L.; Proctor, K.; Burns, C.G.; Birrell, S. Driving Style: How Should an Automated Vehicle Behave? Information 2019, 10, 219. https://doi.org/10.3390/info10060219