Research on the Quantitative Method of Cognitive Loading in a Virtual Reality System

Abstract

1. Introduction

2. Related Work

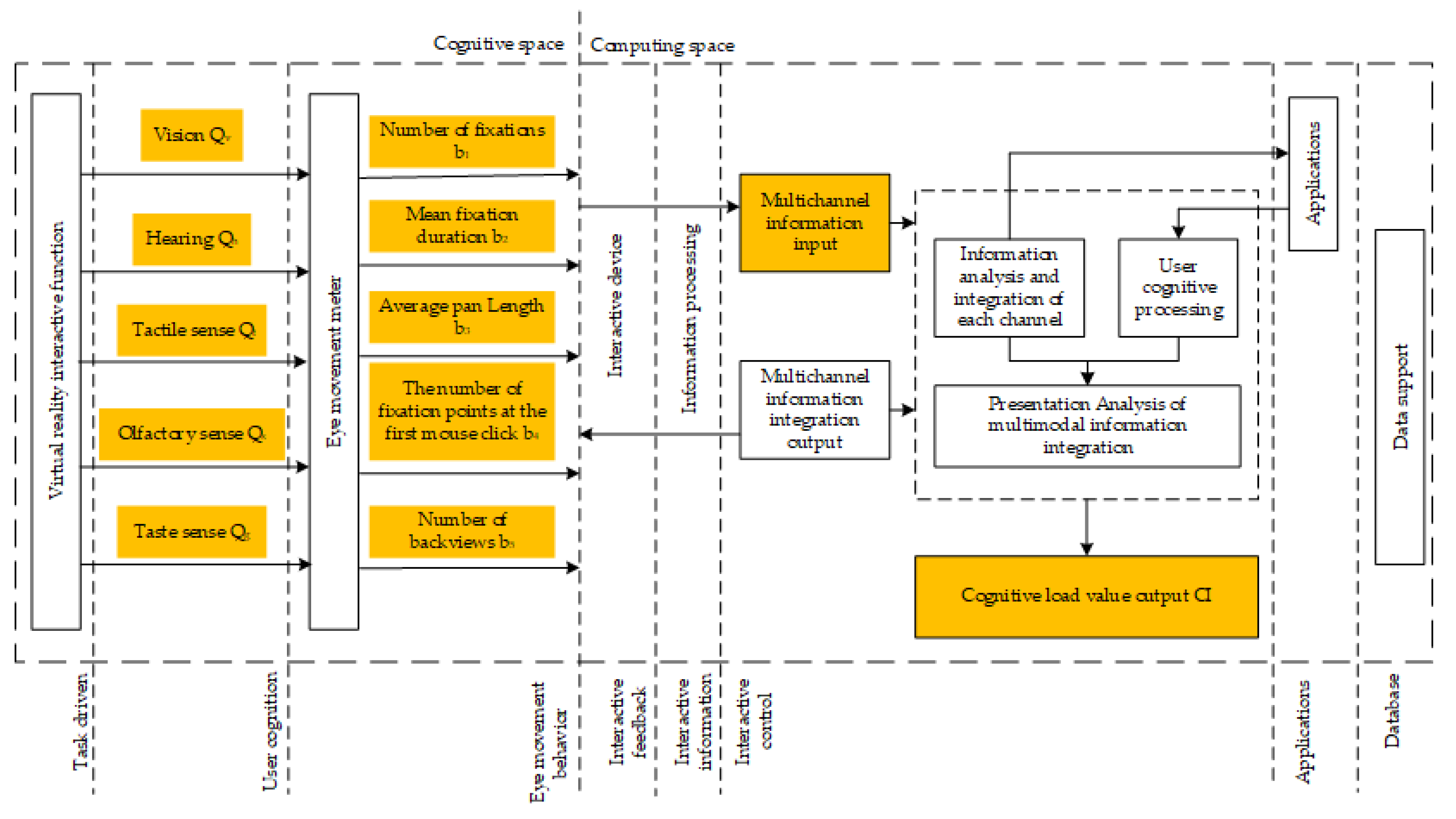

2.1. Multi-Channel Interactive Information Integration in the VR System

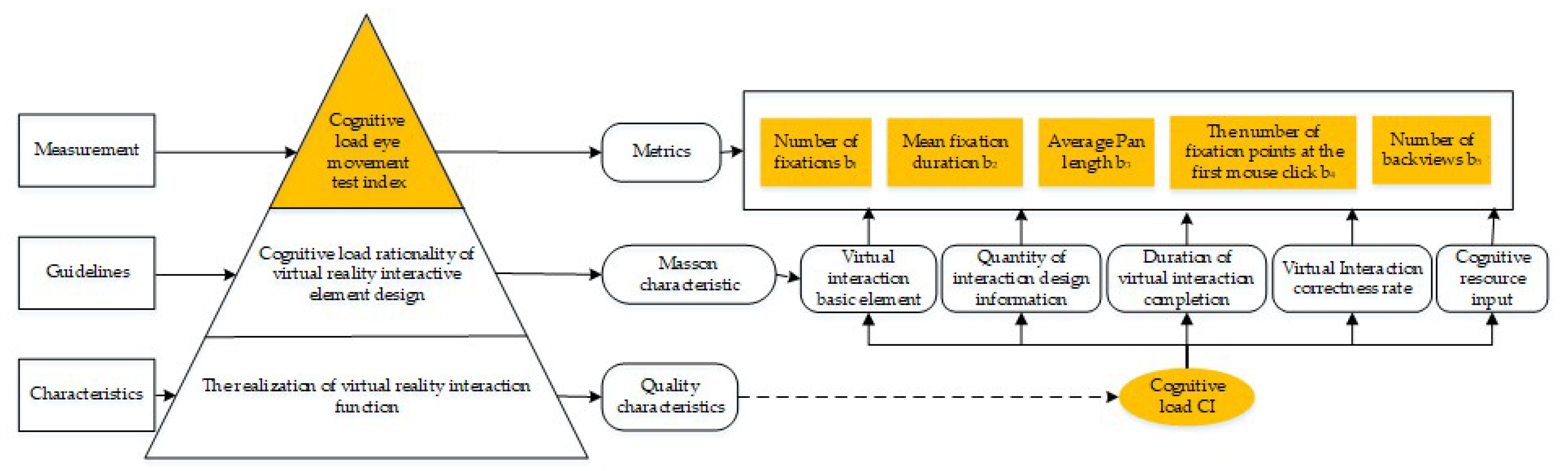

2.2. Construction of Cognitive Load Quality Evaluation Model

2.3. Physiological Index of Cognitive Load Based on an Eye Movement Experiment

3. Methods

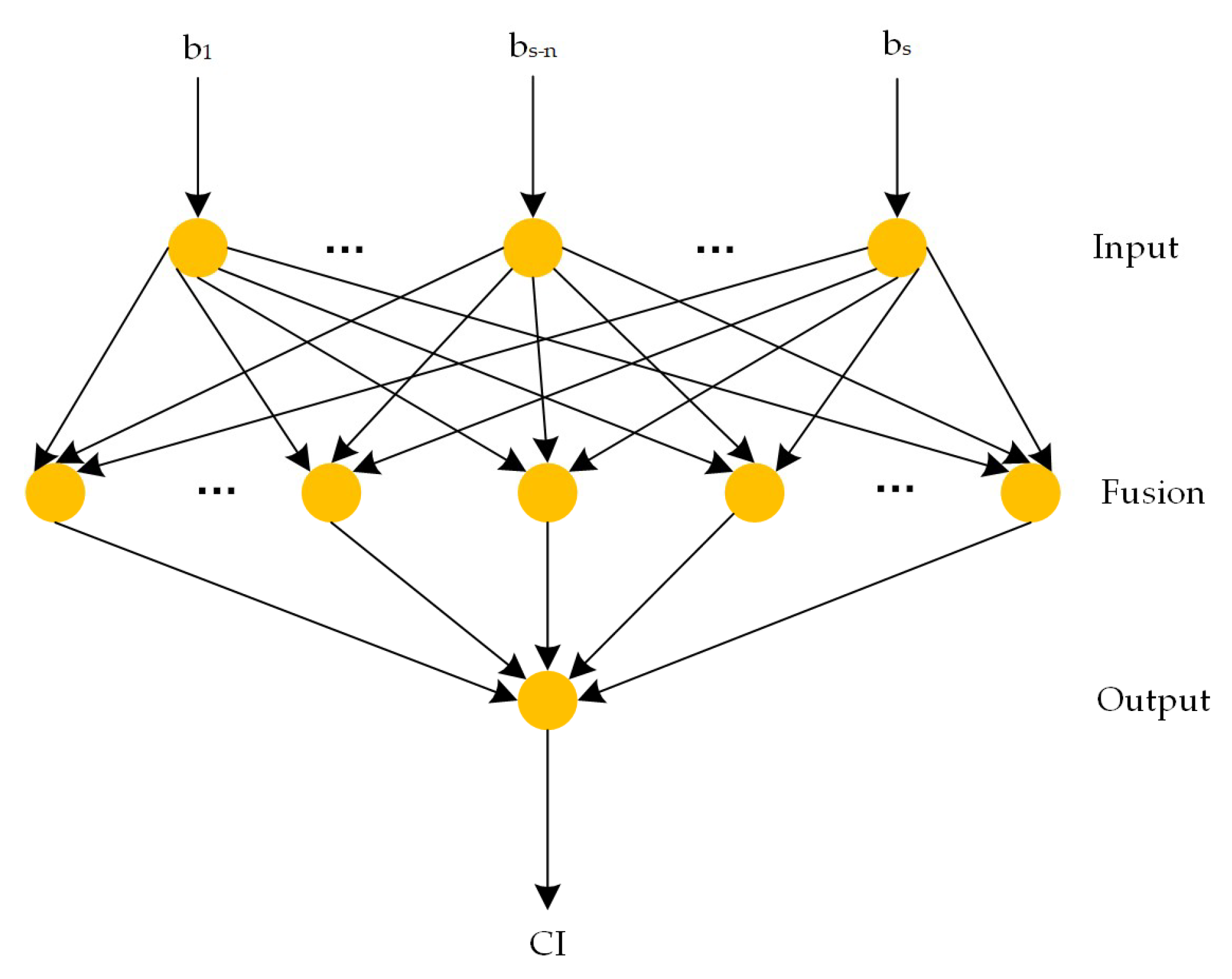

3.1. Cognitive Load Evaluation Model Based on the Probabilistic Neural Network

- Input layer: This refers to the eye movement data recorded over the entire virtual reality tunnel rescue mission under the single visual channel, the dual visual-audio channel, the dual visual-tactile channel, and the triple visual-audio-tactile channel. The data include the number of fixation points, mean fixation duration, average pan length, number of fixation points up to the first mouse click, number of back views, etc.

- Fusion layer: This refers to feeding the acquired data into the cognitive load quantification model based on the probabilistic neural network, where the data are fused.

- Output layer: This refers to the final value produced by the data fusion processing, which is the quantified cognitive load of the tester under a given channel condition.
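The three layers above can be illustrated with a minimal probabilistic neural network (a Parzen-window classifier). This is only a sketch under stated assumptions, not the authors' implementation: the function name `pnn_classify`, the smoothing parameter `sigma`, the query vector, and the choice to treat each load rating as a class are all hypothetical. The stored patterns are the four normalized eye movement rows and load ratings tabulated later in this article for one subject per channel.

```python
import math

def pnn_classify(patterns, labels, query, sigma=0.1):
    """Minimal probabilistic neural network (Parzen-window) classifier."""
    # Pattern layer: one Gaussian kernel per stored training pattern.
    activations = []
    for p in patterns:
        sq_dist = sum((a - b) ** 2 for a, b in zip(p, query))
        activations.append(math.exp(-sq_dist / (2.0 * sigma ** 2)))
    # Summation layer: average the kernel activations of each class.
    sums, counts = {}, {}
    for act, label in zip(activations, labels):
        sums[label] = sums.get(label, 0.0) + act
        counts[label] = counts.get(label, 0) + 1
    scores = {label: sums[label] / counts[label] for label in sums}
    # Output layer: pick the class with the highest estimated density.
    best = max(scores, key=scores.get)
    return best, scores

# Five normalized eye movement indices per row (values from the article's tables).
patterns = [
    [0.2812, 0.7555, 0.9492, 0.5556, 0.6000],  # vision
    [0.3438, 0.6823, 0.5024, 0.3333, 0.5000],  # visual-auditory
    [0.2812, 0.5115, 0.6378, 0.0000, 0.4000],  # visual-tactile
    [0.2500, 0.2537, 0.0030, 0.0000, 0.2000],  # visual-auditory-tactile
]
labels = [0.7, 0.5, 0.2, 0.1]  # load ratings reported for the same rows

# Hypothetical new measurement, chosen close to the vision-only pattern.
query = [0.30, 0.70, 0.90, 0.50, 0.60]
label, scores = pnn_classify(patterns, labels, query)
print(label)
```

A smaller `sigma` makes the network behave like a nearest-neighbour lookup, while a larger one blends neighbouring patterns; a suitable value would have to be tuned on real data.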

3.2. Evaluation Index

4. Application Instance

4.1. Experimental Design

- Visual channel: The sound equipment and handle of the VR tunnel emergency rescue system were switched off, and the tester obtained the rescue mission information only through the visual channel to complete the rescue mission.

- Visual-auditory: The handle of the VR tunnel emergency rescue system was switched off, and the tester obtained the rescue mission information through the visual and auditory channels to complete the rescue mission.

- Visual-tactile: The sound equipment of the VR tunnel emergency rescue system was switched off, and the tester obtained the rescue mission information through the visual and tactile channels to complete the rescue mission.

- Visual-auditory-tactile: The tester obtained the rescue information by reading it visually, by hearing tunnel sounds such as wind and dripping water, and by touching the handle to obtain the selected rescue information, and then completed the rescue task.
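The four conditions above differ only in which devices are switched on, so they can be written down as a small configuration. The sketch below is illustrative: the class `ChannelCondition`, its field names, and the helper `active_channels` are hypothetical and do not come from the experimental software.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ChannelCondition:
    name: str
    sound_on: bool   # sound equipment of the VR system
    handle_on: bool  # handheld controller ("handle") providing tactile input

CONDITIONS = [
    ChannelCondition("visual", sound_on=False, handle_on=False),
    ChannelCondition("visual-auditory", sound_on=True, handle_on=False),
    ChannelCondition("visual-tactile", sound_on=False, handle_on=True),
    ChannelCondition("visual-auditory-tactile", sound_on=True, handle_on=True),
]

def active_channels(cond):
    """List the cognitive channels available to the tester in a condition."""
    channels = ["visual"]  # the visual channel is available in every condition
    if cond.sound_on:
        channels.append("auditory")
    if cond.handle_on:
        channels.append("tactile")
    return channels

for cond in CONDITIONS:
    print(cond.name, active_channels(cond))
```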

4.2. Subject Selection

4.3. Experimental Device

4.4. Experimental Variables

4.4.1. Independent Variable

4.4.2. Dependent Variable

4.4.3. Controlled Disturbance Variables

4.5. Experimental Results

5. Discussion

5.1. Correlation Analysis of Eye Movement Parameters and Cognitive Load of Users

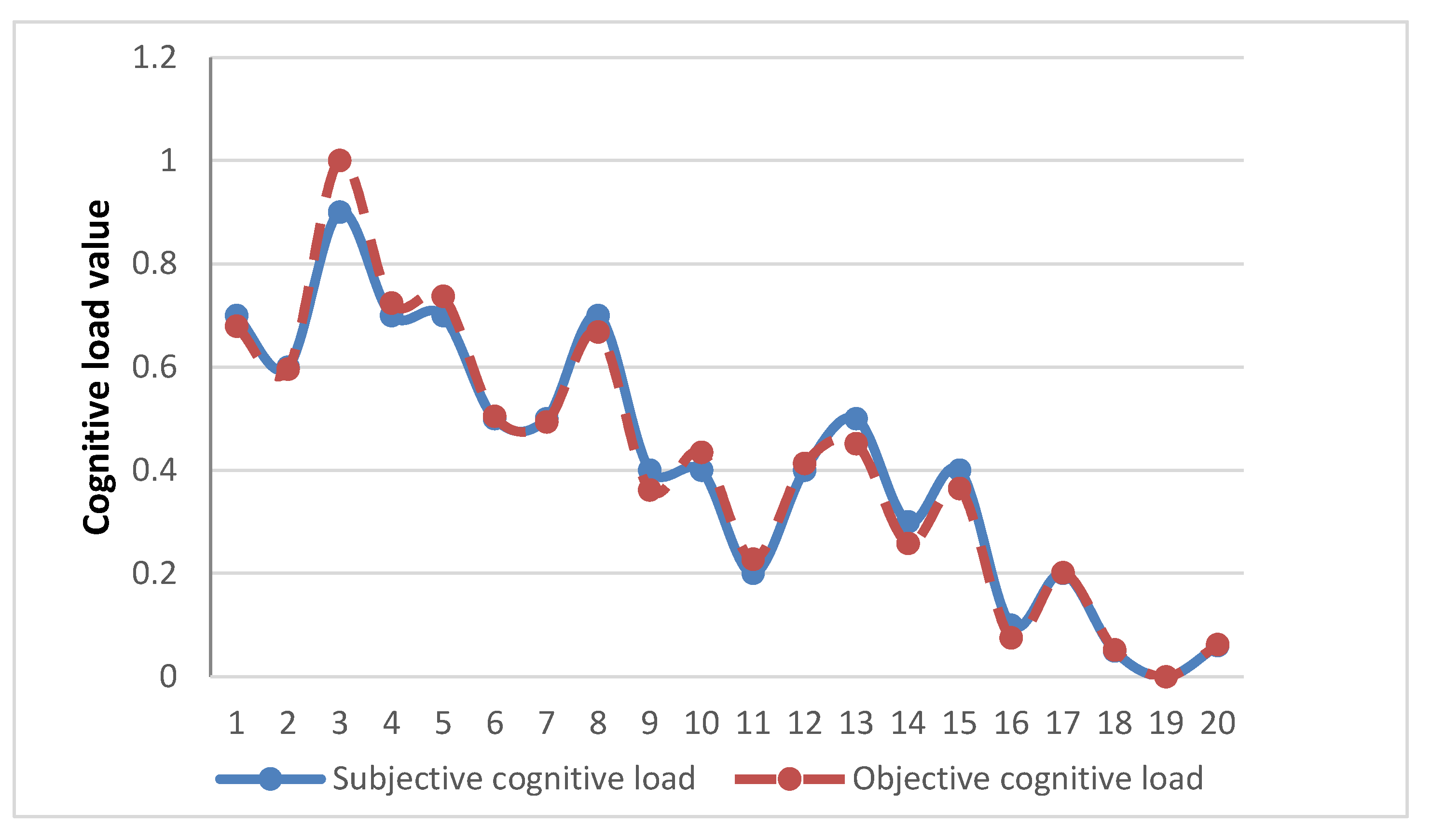

5.2. Model Output Analysis

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Number of Cognitive Channels | Cognitive Channel | Subject Serial Number |
|---|---|---|
| Single-channel | Vision | 1, 2, 3, 4, 5 |
| Dual-channel | Visual-auditory | 6, 7, 8, 9, 10 |
| | Visual-tactile | 11, 12, 13, 14, 15 |
| Three channels | Visual-auditory-tactile | 16, 17, 18, 19, 20 |

| Number of Cognitive Channels | Classes |
|---|---|
| Single-channel | Vision |
| Dual-channel | Visual-auditory; Visual-tactile |
| Three channels | Visual-auditory-tactile |

| Cognitive Load Level | 0 | 0.2 | 0.4 | 0.6 | 0.8 | 1 | 0.1, 0.3, 0.5, 0.7, 0.9 |
|---|---|---|---|---|---|---|---|
| Meaning | Extremely low cognitive load | Intensely low cognitive load | Significantly low cognitive load | Significantly high cognitive load | Intensely high cognitive load | Extremely high cognitive load | Intermediate value between adjacent judgments |

| Number of Cognitive Channels | Cognitive Channel | Cognitive Load |
|---|---|---|
| Single-channel | Vision | 0.7 |
| Dual-channel | Visual-auditory | 0.5 |
| | Visual-tactile | 0.2 |
| Three channels | Visual-auditory-tactile | 0.1 |

| Number of Cognitive Channels | Cognitive Channel | Number of Fixation Points | Mean Fixation Duration | Average Pan Length | Number of Fixation Points at the First Mouse Click | Number of Back Views |
|---|---|---|---|---|---|---|
| Single-channel | Vision | 0.2812 | 0.7555 | 0.9492 | 0.5556 | 0.6000 |
| Dual-channel | Visual-auditory | 0.3438 | 0.6823 | 0.5024 | 0.3333 | 0.5000 |
| | Visual-tactile | 0.2812 | 0.5115 | 0.6378 | 0.0000 | 0.4000 |
| Three channels | Visual-auditory-tactile | 0.2500 | 0.2537 | 0.0030 | 0.0000 | 0.2000 |

| Cognitive Channel Category | Single Channel: Vision | Dual Channel: Visual-Auditory | Dual Channel: Visual-Tactile | Three Channel: Visual-Auditory-Tactile |
|---|---|---|---|---|
| Cognitive load | 0.7 | 0.5 | 0.2 | 0.1 |
| | 0.6 | 0.5 | 0.4 | 0.2 |
| | 0.9 | 0.7 | 0.5 | 0.05 |
| | 0.7 | 0.4 | 0.3 | 0 |
| | 0.7 | 0.4 | 0.4 | 0.06 |

| Number of Cognitive Channels | Cognitive Channel | Number of Fixation Points | Mean Fixation Duration | Average Pan Length | Number of Fixation Points at the First Mouse Click | Number of Back Views | Cognitive Load |
|---|---|---|---|---|---|---|---|
| Single-channel | Vision | 0.2812 | 0.7555 | 0.9492 | 0.5556 | 0.6000 | 0.6788 |
| | | 0.8438 | 0.4468 | 0.7731 | 1.0000 | 0.5000 | 0.5962 |
| | | 1.0000 | 0.6051 | 1.0000 | 0.6667 | 0.6000 | 1.0000 |
| | | 0.6250 | 0.5862 | 0.9176 | 0.5556 | 1.0000 | 0.7241 |
| | | 0.5000 | 0.5016 | 0.9902 | 0.6667 | 0.8000 | 0.7370 |
| Dual-channel | Visual-auditory | 0.3438 | 0.6823 | 0.5024 | 0.3333 | 0.5000 | 0.5043 |
| | | 0.6250 | 0.5288 | 0.6810 | 0.4444 | 0.3000 | 0.4934 |
| | | 0.3750 | 1.0000 | 0.6631 | 0.3333 | 0.3000 | 0.6684 |
| | | 0.4375 | 0.2424 | 0.8045 | 0.1111 | 0.4000 | 0.3620 |
| | | 0.7188 | 0.0000 | 0.5541 | 0.6667 | 0.7000 | 0.4344 |
| | Visual-tactile | 0.2812 | 0.5115 | 0.6378 | 0.0000 | 0.4000 | 0.2279 |
| | | 0.3125 | 0.2702 | 0.4161 | 0.3333 | 0.3000 | 0.4133 |
| | | 0.0938 | 0.5154 | 0.3611 | 0.3333 | 0.3000 | 0.4516 |
| | | 0.5625 | 0.4137 | 0.3623 | 0.1111 | 0.4000 | 0.2586 |
| | | 0.0625 | 0.3982 | 0.4966 | 0.6667 | 0.5000 | 0.3646 |
| Three channels | Visual-auditory-tactile | 0.2500 | 0.2537 | 0.0030 | 0.0000 | 0.2000 | 0.0751 |
| | | 0.0312 | 0.0051 | 0.0000 | 0.1111 | 0.2000 | 0.2011 |
| | | 0.1250 | 0.0935 | 0.2113 | 0.0000 | 0.0000 | 0.0523 |
| | | 0.0000 | 0.2675 | 0.1987 | 0.1111 | 0.1000 | 0.0000 |
| | | 0.2500 | 0.5775 | 0.1449 | 0.1111 | 0.1000 | 0.0621 |
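Every numeric column in the table above contains both 0.0000 and 1.0000, which suggests, although the text here does not state it, that each index was min-max scaled across the 20 subjects before being fed to the model. A minimal sketch of that scaling follows; the function name and the raw fixation durations are hypothetical and serve only as an illustration.

```python
def min_max_normalize(values):
    """Scale a list of numbers linearly so the minimum maps to 0 and the maximum to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

# Hypothetical raw mean fixation durations in milliseconds (not from the article).
raw_fixation_ms = [210.0, 390.0, 440.0, 320.0, 280.0]
normalized = [round(v, 4) for v in min_max_normalize(raw_fixation_ms)]
print(normalized)
```

Scaling each index to the same range keeps one index with large raw units from dominating the distance computations inside the network.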

| Eye Movement Characteristic Parameter | Number of Fixation Points | Mean Fixation Duration | Average Pan Length | Number of Fixation Points at the First Mouse Click | Number of Back Views |
|---|---|---|---|---|---|
| | 0.679878252 | 0.559834694 | 0.863182783 | 0.754462615 | 0.754440400 |
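Section 5.1 concerns the correlation between eye movement parameters and cognitive load. One common way to quantify such a relationship is Pearson's correlation coefficient; whether the authors used this particular coefficient is not stated here, so the sketch below is an illustration rather than a reproduction of their analysis. It takes the third eye movement index column of the 20-subject table (labelled Average Pan Length in the per-channel table) together with the cognitive load column. The function name `pearson` is an assumption.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Third eye movement index column, subjects 1 to 20 (values from the article's table).
pan_length = [
    0.9492, 0.7731, 1.0000, 0.9176, 0.9902,
    0.5024, 0.6810, 0.6631, 0.8045, 0.5541,
    0.6378, 0.4161, 0.3611, 0.3623, 0.4966,
    0.0030, 0.0000, 0.2113, 0.1987, 0.1449,
]
# Cognitive load column of the same table, same subject order.
cognitive_load = [
    0.6788, 0.5962, 1.0000, 0.7241, 0.7370,
    0.5043, 0.4934, 0.6684, 0.3620, 0.4344,
    0.2279, 0.4133, 0.4516, 0.2586, 0.3646,
    0.0751, 0.2011, 0.0523, 0.0000, 0.0621,
]

r = pearson(pan_length, cognitive_load)
print(f"Pearson r between the third index and cognitive load: {r:.4f}")
```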

| Cognitive Channel | Subjective Cognitive Load | Cognitive Load Quantified Value | Absolute Error | Average Absolute Error | Mean of Mean Absolute Error | Relative Mean Square Error | Mean Relative Mean Square Error |
|---|---|---|---|---|---|---|---|
| Vision | 0.6788 | 0.7 | 0.0312 | 0.1 | 0.107575 | 0.0989 | 0.127675 |
| | 0.5962 | 0.6 | 0.0064 | | | | |
| | 1 | 0.9 | 0.1 | | | | |
| | 0.7241 | 0.7 | 0.0333 | | | | |
| | 0.737 | 0.7 | 0.0502 | | | | |
| Visual-auditory | 0.5043 | 0.5 | 0.0085 | 0.105 | | 0.1133 | |
| | 0.4934 | 0.5 | 0.0134 | | | | |
| | 0.6684 | 0.7 | 0.0473 | | | | |
| | 0.362 | 0.4 | 0.105 | | | | |
| | 0.4344 | 0.4 | 0.0792 | | | | |
| Visual-tactile | 0.2279 | 0.2 | 0.1224 | 0.1601 | | 0.2321 | |
| | 0.4133 | 0.4 | 0.0322 | | | | |
| | 0.4516 | 0.5 | 0.1072 | | | | |
| | 0.2586 | 0.3 | 0.1601 | | | | |
| | 0.3646 | 0.4 | 0.0971 | | | | |
| Visual-auditory-tactile | 0.0751 | 0.08 | 0.0652 | 0.0652 | | 0.0664 | |
| | 0.2011 | 0.2 | 0.0055 | | | | |
| | 0.0523 | 0.05 | 0.044 | | | | |
| | 0 | 0 | 0 | | | | |
| | 0.0621 | 0.06 | 0.0338 | | | | |
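The error columns of the table above can be checked numerically. The text here gives no formulas, so the following is an observation from the numbers rather than the authors' stated method: each Absolute Error entry appears to equal the absolute difference of the two load columns divided by the first one, the per-channel Average Absolute Error entries coincide with the largest such value in each channel, and their mean gives 0.107575. The names `relative_error`, `groups`, and `per_channel` are hypothetical.

```python
def relative_error(reference, estimate):
    """Absolute difference divided by the reference value (0 when both are 0)."""
    if reference == 0:
        return 0.0 if estimate == 0 else float("inf")
    return abs(reference - estimate) / reference

# (first load column, second load column) pairs, copied from the table.
groups = {
    "Vision": [(0.6788, 0.7), (0.5962, 0.6), (1.0, 0.9), (0.7241, 0.7), (0.737, 0.7)],
    "Visual-auditory": [(0.5043, 0.5), (0.4934, 0.5), (0.6684, 0.7), (0.362, 0.4), (0.4344, 0.4)],
    "Visual-tactile": [(0.2279, 0.2), (0.4133, 0.4), (0.4516, 0.5), (0.2586, 0.3), (0.3646, 0.4)],
    "Visual-auditory-tactile": [(0.0751, 0.08), (0.2011, 0.2), (0.0523, 0.05), (0.0, 0.0), (0.0621, 0.06)],
}

# Largest per-row error in each channel, rounded to four decimals as in the table.
per_channel = {
    name: round(max(relative_error(a, b) for a, b in rows), 4)
    for name, rows in groups.items()
}
overall = sum(per_channel.values()) / len(per_channel)

print(per_channel)
print(round(overall, 6))
```

Under this reading, the largest deviation occurs in the visual-tactile condition, which matches the 0.1601 entry in the table.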

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lv, J.; Xu, X.; Ding, N. Research on the Quantitative Method of Cognitive Loading in a Virtual Reality System. Information 2019, 10, 170. https://doi.org/10.3390/info10050170
