A Unified Framework for Enhanced 3D Spatial Localization of Weeds via Keypoint Detection and Depth Estimation

Abstract

1. Introduction

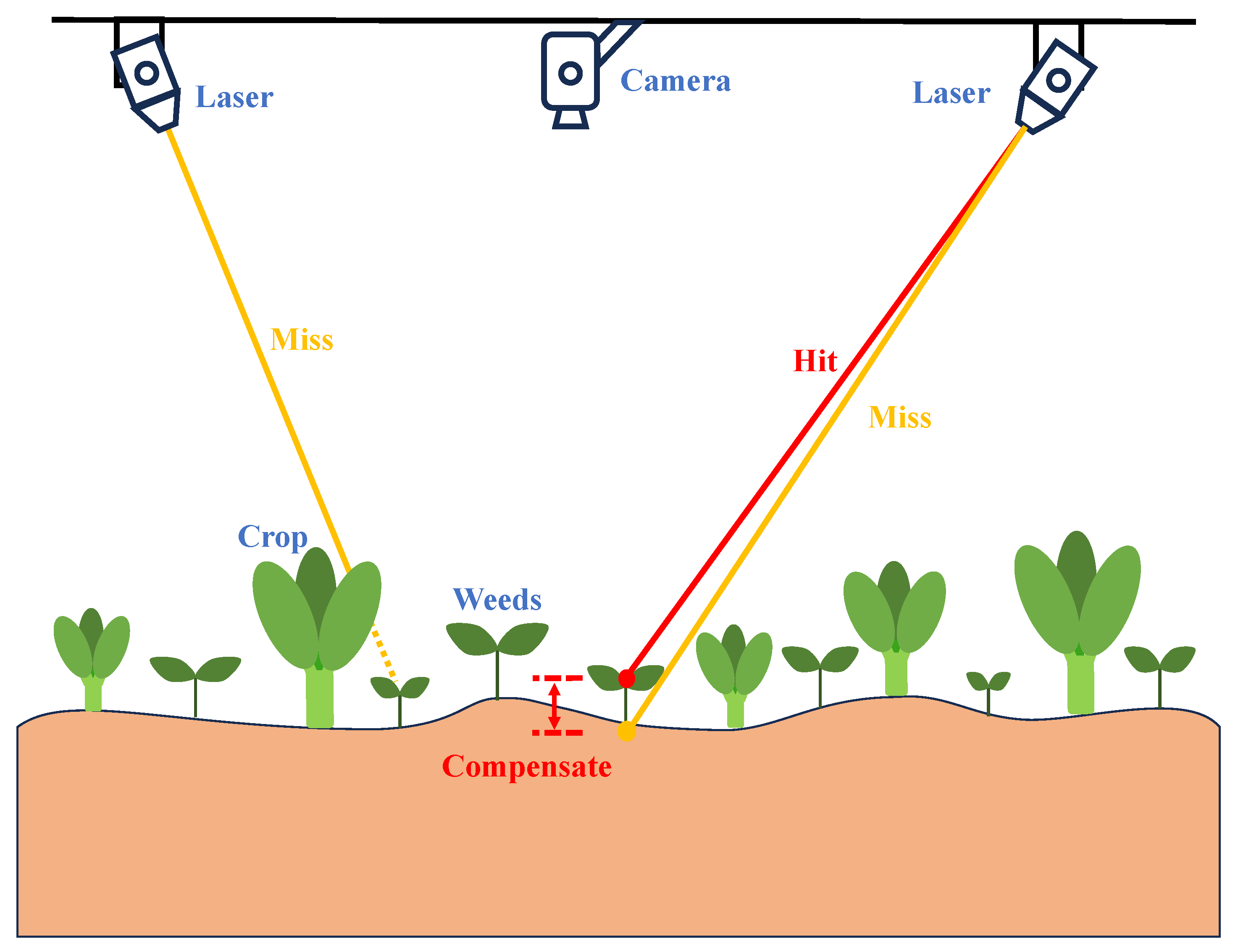

- Proposing the first unified framework for 3D keypoint localization in laser weeding applications, achieving end-to-end learning for 2D planar localization and depth compensation.

- Designing a multi-task optimization loss function to effectively balance the joint training of keypoint detection and depth estimation.

- Validating the proposed method’s reliability and precision in real operating environments through experiments on datasets from real agricultural fields.

2. Related Work

2.1. Weed Detection

2.2. Depth Estimation

2.3. Multi-Task Learning

3. Methods

3.1. Network Architecture

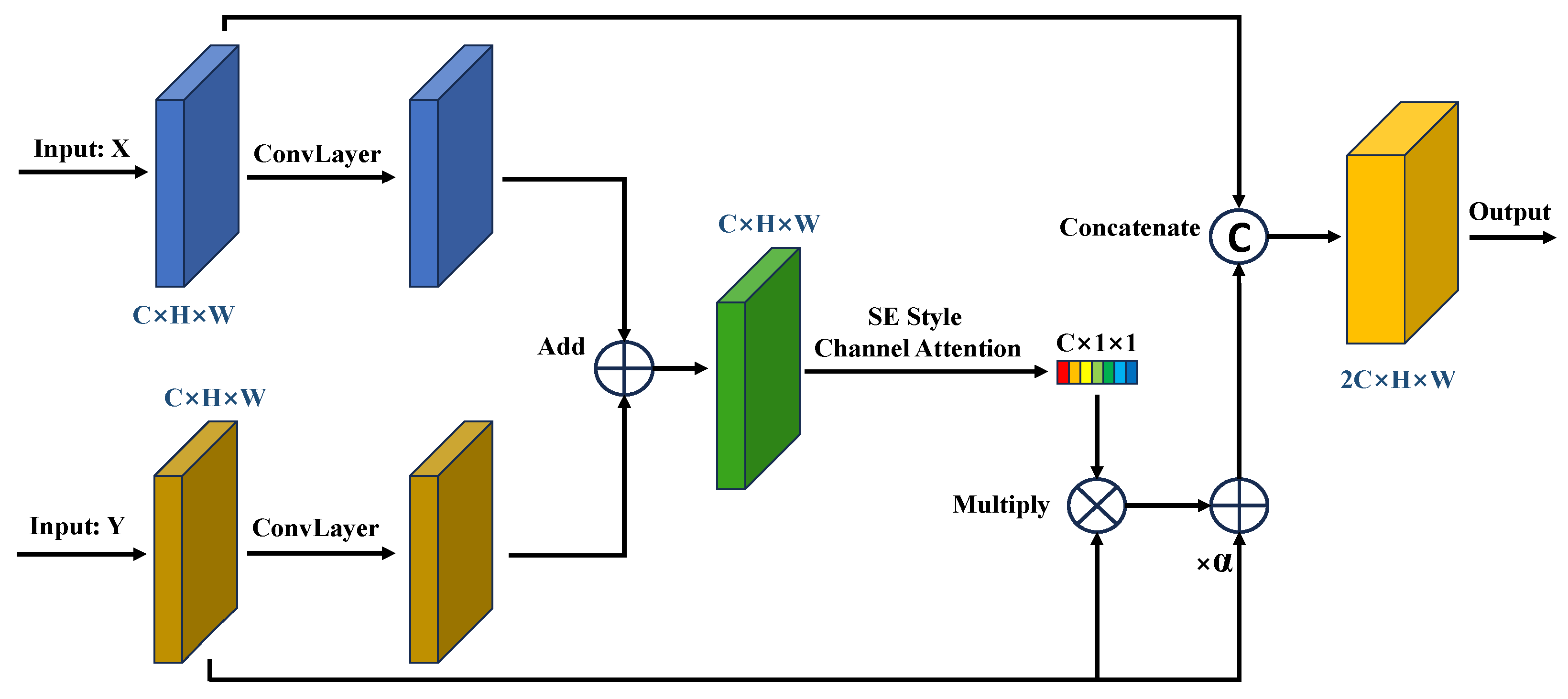

3.2. Gated Feature Fusion

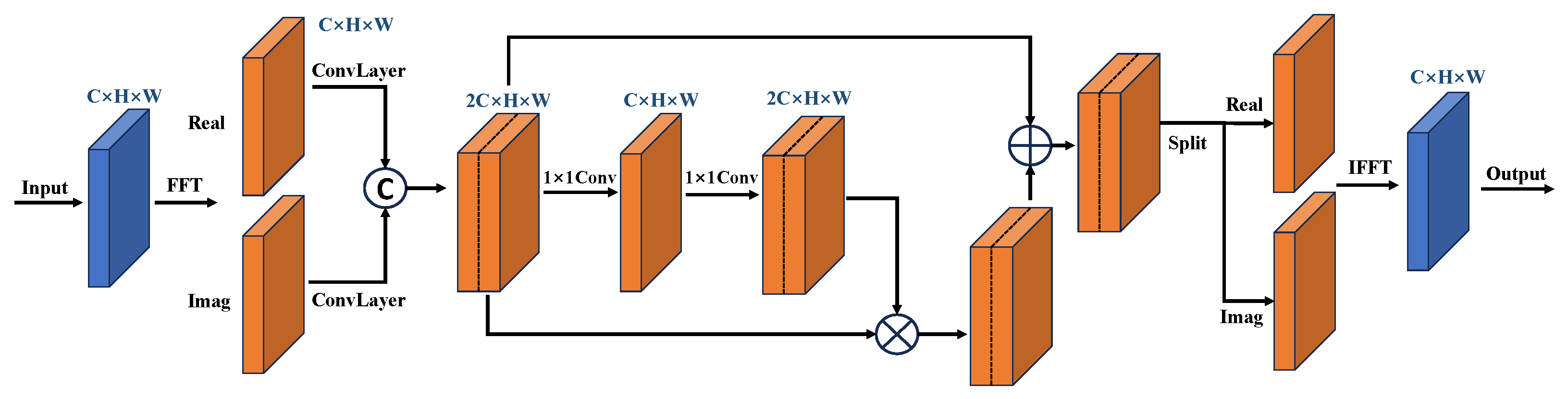

3.3. Hybrid Domain Block

3.3.1. Frequency Unit

3.3.2. Spatial Unit

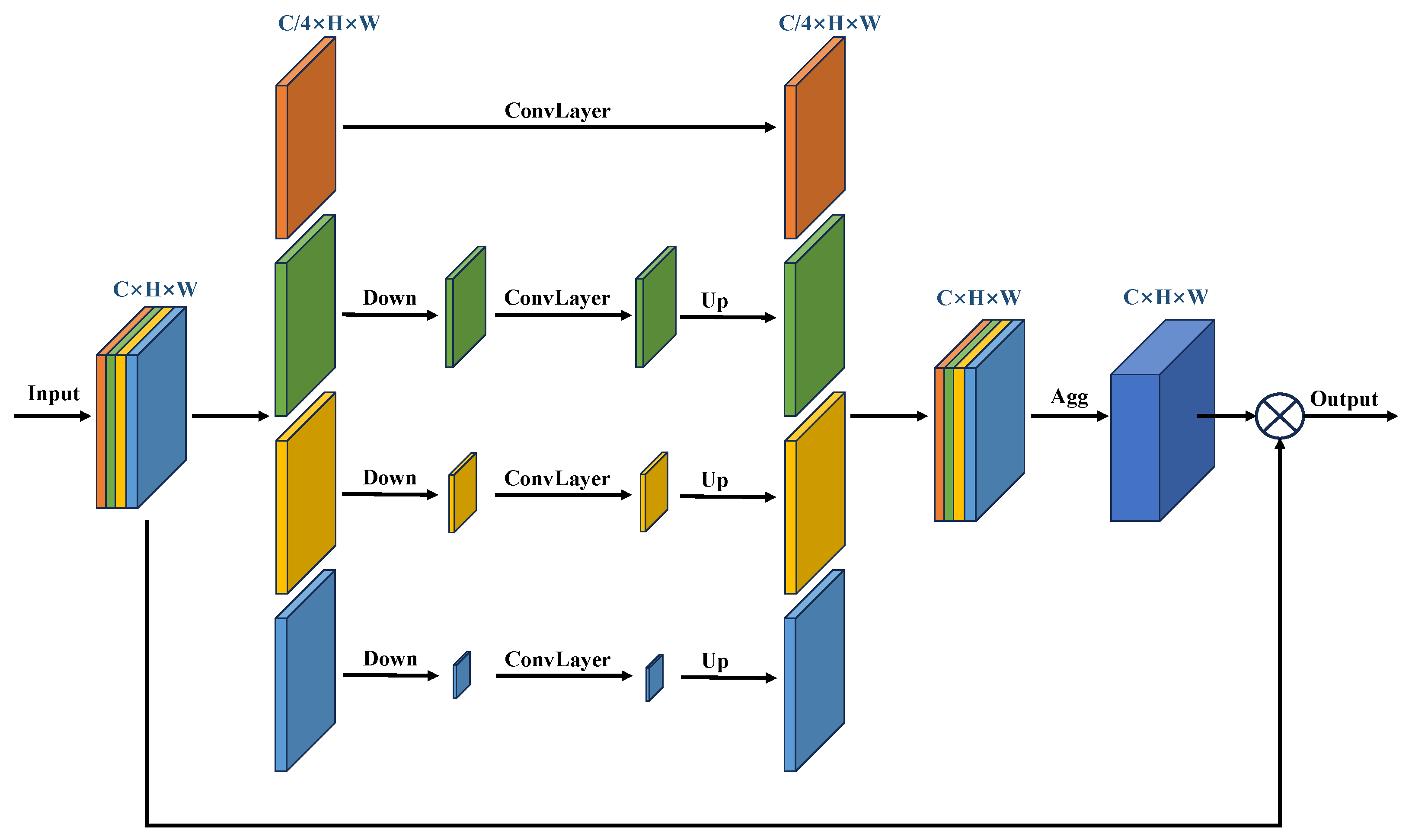

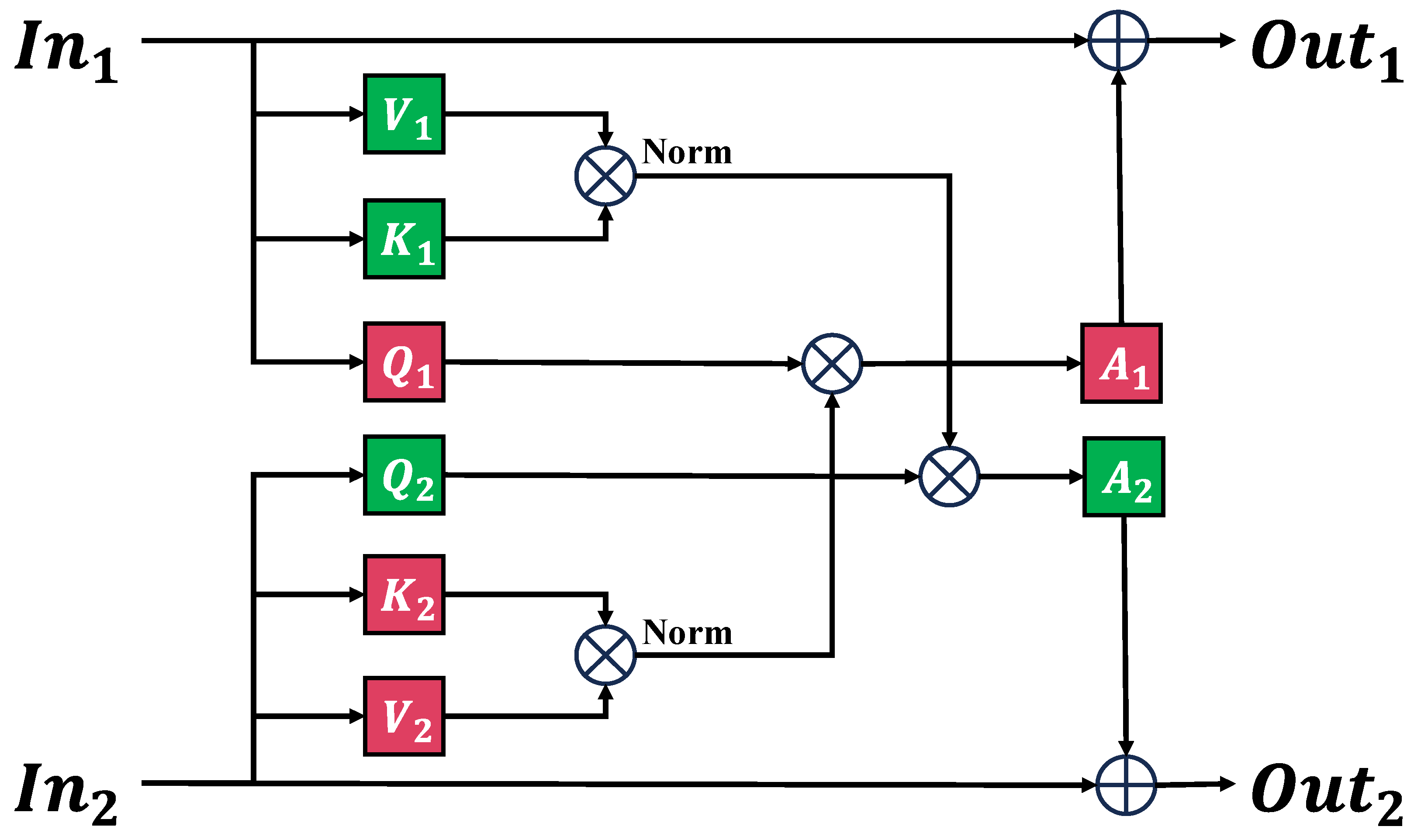

3.4. Cross-Branch Attention

3.5. Loss Function

3.5.1. Keypoints Detection Loss

3.5.2. Depth Estimation Loss

3.5.3. Total Loss

3.6. Three-Dimensional Coordinate Reconstruction of Keypoints

4. Experiments

4.1. Datasets

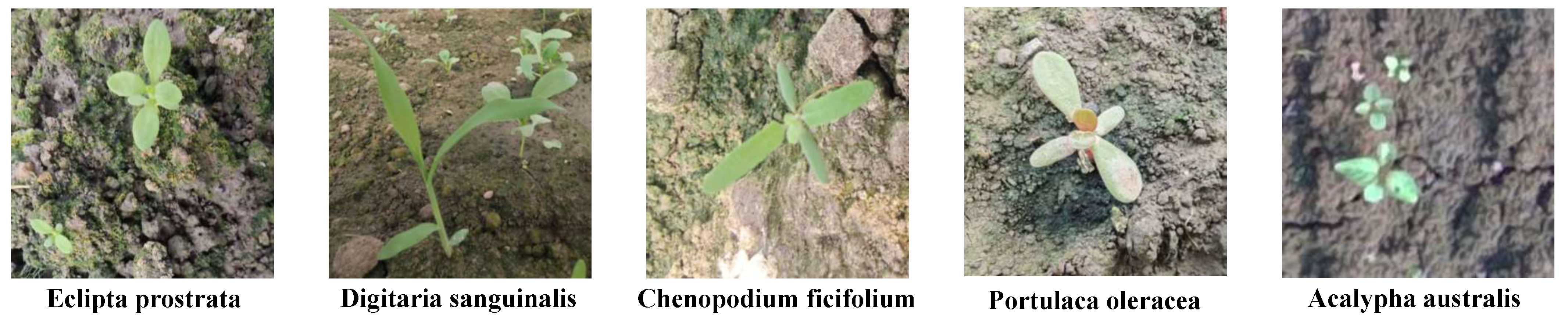

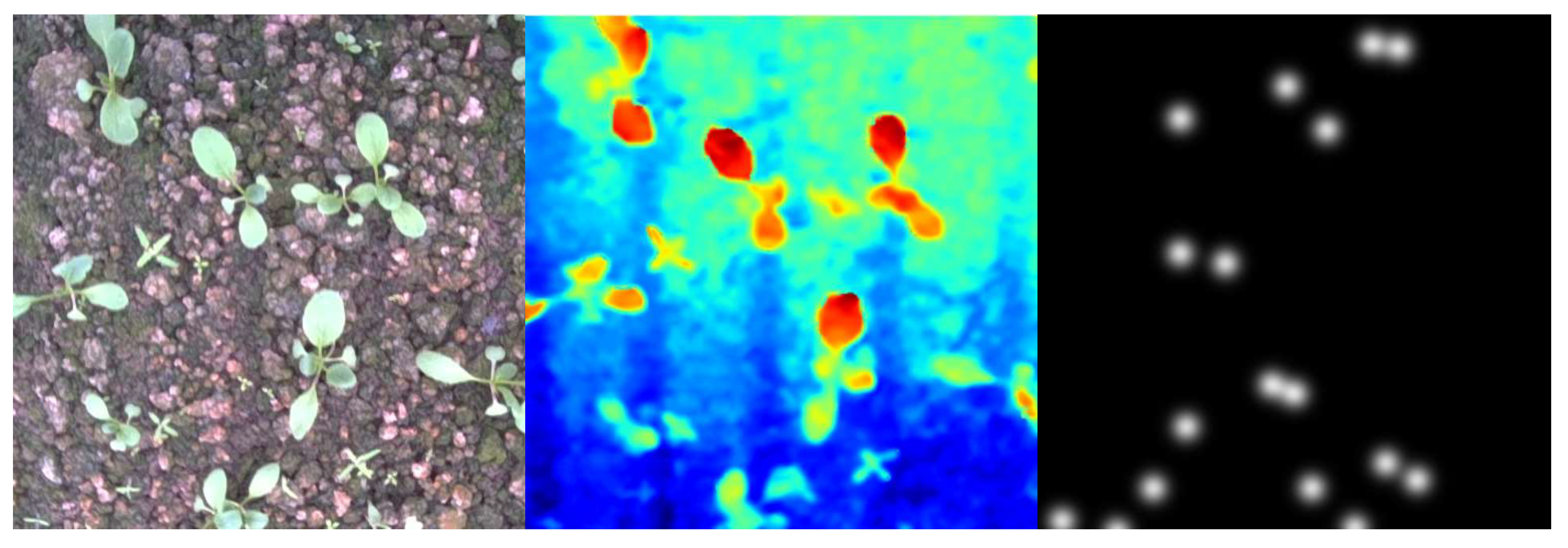

4.1.1. Data Collection

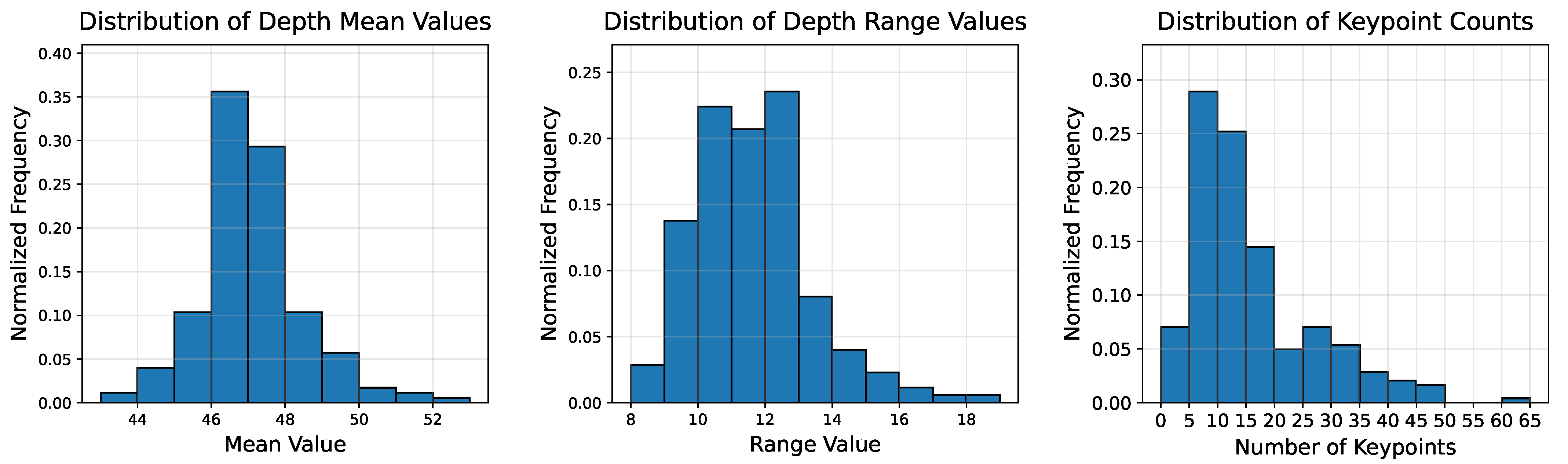

4.1.2. Data Annotation and Splitting

4.1.3. Data Preprocessing and Augmentation

4.2. Training Environment and Hyperparameters

4.3. Performance Metrics

4.3.1. Keypoint Detection Metrics

- True Positives (TP): The number of successfully matched predicted keypoints.

- False Positives (FP): The number of unmatched predicted keypoints, representing incorrect detections.

- False Negatives (FN): The number of unmatched ground truth keypoints, representing missed detections.
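
The three counts above translate into precision and recall in the usual way, P = TP / (TP + FP) and R = TP / (TP + FN). The sketch below is a minimal illustration, not the evaluation code used in this work: the greedy nearest-neighbour matching rule, the `max_dist` pixel threshold, and the function names are all assumptions introduced for the example.

```python
import math


def match_keypoints(pred, gt, max_dist):
    """Count TP, FP, FN for one image.

    Assumed rule (hypothetical): each predicted keypoint is greedily matched
    to the nearest still-unmatched ground-truth keypoint, provided the pixel
    distance does not exceed max_dist.
    """
    unmatched_gt = list(range(len(gt)))
    tp = 0
    for px, py in pred:
        best, best_d = None, max_dist
        for j in unmatched_gt:
            d = math.hypot(px - gt[j][0], py - gt[j][1])
            if d <= best_d:
                best, best_d = j, d
        if best is not None:
            unmatched_gt.remove(best)  # each ground-truth point is used once
            tp += 1
    fp = len(pred) - tp        # predictions left without a match
    fn = len(unmatched_gt)     # ground-truth points left without a match
    return tp, fp, fn


def precision_recall(tp, fp, fn):
    """Precision and recall from the three counts; 0.0 when undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Toy coordinates, invented for illustration only.
pred = [(10, 10), (50, 50), (200, 200)]
gt = [(12, 11), (52, 49), (100, 100), (300, 300)]
tp, fp, fn = match_keypoints(pred, gt, max_dist=5.0)
p, r = precision_recall(tp, fp, fn)
```

With these toy inputs, two predictions fall within the threshold of a ground-truth point, one prediction is unmatched, and two ground-truth points are missed.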

4.3.2. Depth Estimation Metrics

4.4. Experimental Results

4.4.1. Keypoint Detection Performance

4.4.2. Depth Estimation Performance

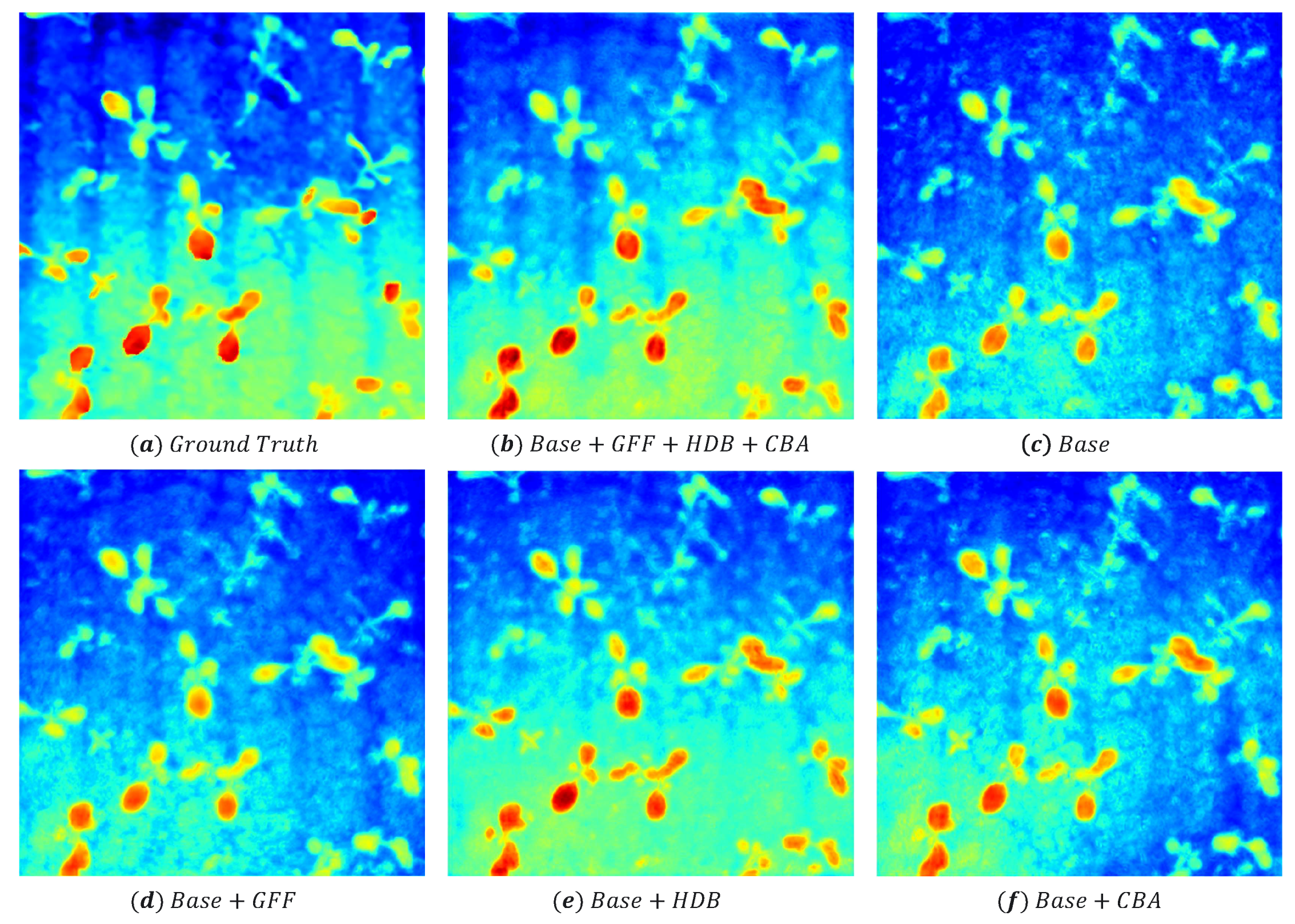

4.5. Ablation Study

4.6. Backbone Comparison and Computational Analysis

5. Conclusions

- Proposing an end-to-end unified architecture that efficiently integrates keypoint detection and depth estimation tasks.

- Designing a series of innovative structural modules, including Gated Feature Fusion (GFF), Hybrid Domain Block (HDB), and Cross-Branch Attention (CBA).

- Enhance Generalization and Robustness: We will build larger datasets covering more weed species and multiple growth stages, and will validate and optimize the model across diverse crop types and challenging field conditions (e.g., varying lighting and weather). We also plan to explore domain adaptation techniques to improve cross-scene generalization.

- Reduce Annotation Burden: To mitigate the high cost of manual data annotation, we will investigate weakly supervised, semi-supervised, and self-supervised learning paradigms. This includes leveraging multi-view geometric constraints or time-series information for pseudo-label generation, with the aim of significantly reducing reliance on human labeling.

- Robust Absolute Depth Estimation: While our current approach focuses on depth compensation leveraging a fixed operating height, future work will explore methods for robustly acquiring absolute depth measurements. This includes integrating low-cost auxiliary sensors (e.g., ultrasonic sensors, structured light) or advanced monocular depth estimation techniques that can reduce the inherent error in absolute depth prediction, thus broadening applicability to scenarios with variable robot heights.

- Final Deployment Optimization: We aim to make the model substantially lighter through techniques such as knowledge distillation, network pruning, and quantization. This will enable real-time inference on resource-constrained embedded platforms and support software-hardware integration for practical deployment.

- Perception-to-Action Closed-Loop Autonomy: Utilizing the high-precision 3D coordinates from our model, we envision constructing a complete 3D scene reconstruction and task planning module. This includes optimizing multi-target striking sequences and dynamically adjusting laser parameters based on energy models, ultimately forming a closed-loop system from environmental perception to intelligent decision making and precise execution, thereby endowing intelligent weeding robots with a higher level of autonomy.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Metric Category | P (%) | R (%) | PMAE (pix) | HIoU (%) |
|---|---|---|---|---|
| Average | | | | |

| Method | DMAE-Comp | AWT-Comp | DMAE-Final | AWT-Final | MAE-3D |
|---|---|---|---|---|---|
| WeedLoc3D | 0.8358 cm | 83.46% | 1.2243 cm | 67.84% | 1.238 cm |
| Traditional Method | 1.4547 cm | 62.28% | 1.5048 cm | 51.22% | 1.516 cm |
| Improvement | 42.54% | 33.99% | 18.64% | 32.45% | 18.33% |
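
The Improvement row appears consistent with a relative change measured against the traditional method. A minimal sketch under that assumption follows; the function name `relative_improvement` and its `lower_is_better` flag are hypothetical. It reproduces the DMAE-Comp, DMAE-Final, and AWT-Final entries to two decimals, and the remaining two entries to within about 0.02 percentage points, presumably because the published figures were computed from unrounded values.

```python
def relative_improvement(baseline, proposed, lower_is_better):
    """Relative change of `proposed` versus `baseline`, in percent.

    Assumption: improvement is normalised by the baseline value. For error
    metrics (lower is better) the gain is baseline - proposed; for rates
    (higher is better) it is proposed - baseline.
    """
    gain = baseline - proposed if lower_is_better else proposed - baseline
    return 100.0 * gain / baseline


# Values copied from the table above: (traditional, WeedLoc3D, lower_is_better).
rows = {
    "DMAE-Comp": (1.4547, 0.8358, True),
    "AWT-Comp": (62.28, 83.46, False),
    "DMAE-Final": (1.5048, 1.2243, True),
    "AWT-Final": (51.22, 67.84, False),
    "MAE-3D": (1.516, 1.238, True),
}

improvements = {
    name: relative_improvement(base, ours, lower)
    for name, (base, ours, lower) in rows.items()
}

for name, value in improvements.items():
    print(f"{name}: {value:.2f}%")
```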

| Coupled | GFF | HDB | CBA | P (%) ↑ | R (%) ↑ | PMAE (pix) ↓ |
|---|---|---|---|---|---|---|
| √ | √ | × | × | | | 2.63 |
| √ | × | √ | × | | | 2.37 |
| √ | × | × | √ | | | 2.60 |
| √ | √ | √ | √ | | | 2.37 |
| √ | × | × | × | | | 2.75 |
| × | √ | √ | × | | | 2.51 |

| Coupled | GFF | HDB | CBA | HIoU (%) ↑ | DMAE (cm) ↓ | AWT (%) ↑ |
|---|---|---|---|---|---|---|
| √ | √ | × | × | | 0.9295 | |
| √ | × | √ | × | | 0.9007 | |
| √ | × | × | √ | | 0.9090 | |
| √ | √ | √ | √ | | 0.8358 | |
| √ | × | × | × | | 1.0577 | |
| × | √ | √ | × | | 0.9475 | |

| Backbone | Parameters (M) | FLOPs (G) | FPS |
|---|---|---|---|
| MobileNetV3 | 3.20 | 1.66 | 186.88 |
| WeedLoc3D | 6.09 | 5.85 | 98.24 |
| RexNet | 8.54 | 5.98 | 133.92 |
| ShuffleNetV2 | 7.60 | 6.57 | 177.16 |
| GhostNet | 14.34 | 6.00 | 38.64 |
| RegNet | 15.37 | 19.62 | 125.57 |
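
Frame rate can also be read as per-frame latency, which is often the more convenient figure when budgeting a control loop. The following is a small sketch of that conversion; `latency_ms` is a hypothetical helper, and the FPS values are copied from the table above.

```python
def latency_ms(fps):
    """Average time per frame in milliseconds for a given frames-per-second rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# FPS values copied from the table above.
fps_by_backbone = {
    "MobileNetV3": 186.88,
    "WeedLoc3D": 98.24,
    "RexNet": 133.92,
    "ShuffleNetV2": 177.16,
    "GhostNet": 38.64,
    "RegNet": 125.57,
}

latencies = {name: latency_ms(fps) for name, fps in fps_by_backbone.items()}

for name, ms in latencies.items():
    print(f"{name}: {ms:.2f} ms/frame")
```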

| Backbone | P (%) ↑ | R (%) ↑ | PMAE (pix) ↓ | HIoU (%) ↑ | DMAE (cm) ↓ | AWT (%) ↑ |
|---|---|---|---|---|---|---|
| MobileNetV3 | | | 3.46 | | 1.0703 | |
| WeedLoc3D | | | 2.37 | | | |
| RexNet | | | 2.76 | | 0.9532 | |
| ShuffleNetV2 | | | 3.45 | | 1.1414 | |
| GhostNet | | | 3.07 | | 0.9287 | |
| RegNet | | | 2.84 | | 1.0823 | |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Xie, S.; Quan, T.; Luo, J.; Ren, X.; Miao, Y. A Unified Framework for Enhanced 3D Spatial Localization of Weeds via Keypoint Detection and Depth Estimation. Agriculture 2025, 15, 1854. https://doi.org/10.3390/agriculture15171854