1. Introduction

At a time when the general public has concerns about how livestock are managed and about their welfare, tools are required that can improve animal welfare standards and increase public acceptance of farming. In recent years, each stockperson has been expected to look after more animals, because input costs (including labor) have increased, skilled farm workers have become harder to find, and the average dairy herd has grown. Alongside these challenges, high-quality digital camera systems have become available that provide 24 h video surveillance. These systems let farmers monitor their livestock remotely and while carrying out other farm tasks. Cameras have been used to monitor animals and their behaviors manually for decades, with animal behavior and welfare concerns commonly directed at housed livestock production, such as dairy cows [1,2]. The monitoring of animals is essential for their welfare and survival [3].

Automated image analysis techniques have been developed that allow continuous monitoring during the day and night and require no prior training of the user beyond interpreting the output. Such continuous monitoring is not possible for a stockperson. Recent advances in computer vision based on deep learning [4,5] now make automated monitoring of video feeds feasible. Computer vision combined with artificial intelligence (neural networks) can be used for a number of animal monitoring tasks. These include recognizing the type of animal (recognition), detecting where the animals (and any other objects of interest) are located in the image (detection), localizing their body parts, and even segmenting their exact shape (silhouette) from the image. Furthermore, neural networks adapted for video analysis can be used to recognize specific animal behaviors (e.g., standing, lying, walking, eating, and drinking) [6]. A major benefit of image analysis is that it does not rely on human interpretation or intervention, attached transponders, or invasive or body-worn equipment (e.g., boluses and collars). It may also provide more information than other monitoring systems at a relatively low cost. However, the technology does rely on obtaining a large number of high-quality images. Other researchers have also recognized the need for high-quality image datasets for agricultural applications [7]. Vision-based monitoring can detect and track not only individuals but also groups of animals (i.e., a herd, a flock, or a mother with offspring). Vision technology that continuously monitors individual animals could provide an objective assessment of abnormal behavioral states. This would allow early intervention and improve the stockperson's awareness.

The objective of this study was to investigate the use of existing image recognition techniques to predict the behavior of dairy cows. A suitable dataset was lacking, so this study collected a large number of high-quality video recordings covering a range of cow behaviors to train a computer vision model.

2. Materials and Methods

Approval for this study was obtained from the University of Nottingham animal ethics committee before commencement (approval number 151, 2017).

2.1. Data

Video cameras (5 MP, 30 m IR; Hikvision HD Bullet, Hangzhou, China) were used to record Holstein–Friesian dairy cows at the Nottingham University Dairy Centre (Sutton Bonington, Leicestershire, UK) prior to calving. The cameras recorded at 20 frames per second, with a frame width of 640 pixels and a height of 360 pixels. Three calving pens, each viewed by two surveillance cameras, were used to obtain 24 h video footage of 46 individual cows between April and June 2018. Together, the two cameras on each pen gave full coverage of the pen area (10 m × 7 m). They were mounted at a height of approximately 4 m and angled at approximately 45 degrees into the pen. Each calving pen holds a maximum of eight cows. Several days before calving, each cow was moved into one of the three calving pens so that the entire calving process could be monitored.

2.2. Image Annotation

The video recording for each cow was annotated by three observers, starting 10 h before calving. The observers used custom-made scripts in the PyTorch 1.5 framework to label video clips. PyTorch was chosen because several image-processing steps can be carried out with it in the Python programming language, including behavioral annotation, video segmentation, and model development, as discussed below. The start of the observation period was set at 10 h before the calf was fully expelled at birth, as determined from the video recording. Seven behaviors were recorded (Table 1).
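At 20 frames per second, the 10 h observation window corresponds to 10 × 3600 × 20 = 720,000 frames per camera. The minimal sketch below converts that window into frame indices. It assumes that the frame in which the calf is fully expelled has already been identified. The function name `observation_window` is hypothetical and is not part of the authors' scripts.

```python
FPS = 20                # frame rate reported in Section 2.1
OBSERVATION_HOURS = 10  # annotation window before calving (Section 2.2)


def observation_window(calving_frame: int, fps: int = FPS,
                       hours: float = OBSERVATION_HOURS) -> tuple[int, int]:
    """Return (start_frame, end_frame) of the pre-calving observation window.

    calving_frame is the (hypothetical) index of the frame in which the calf
    is fully expelled; the window is clamped so it never starts before frame 0.
    """
    window_frames = round(hours * 3600 * fps)  # 10 h at 20 fps = 720,000 frames
    start = max(0, calving_frame - window_frames)
    return start, calving_frame


print(observation_window(1_000_000))  # (280000, 1000000)
```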

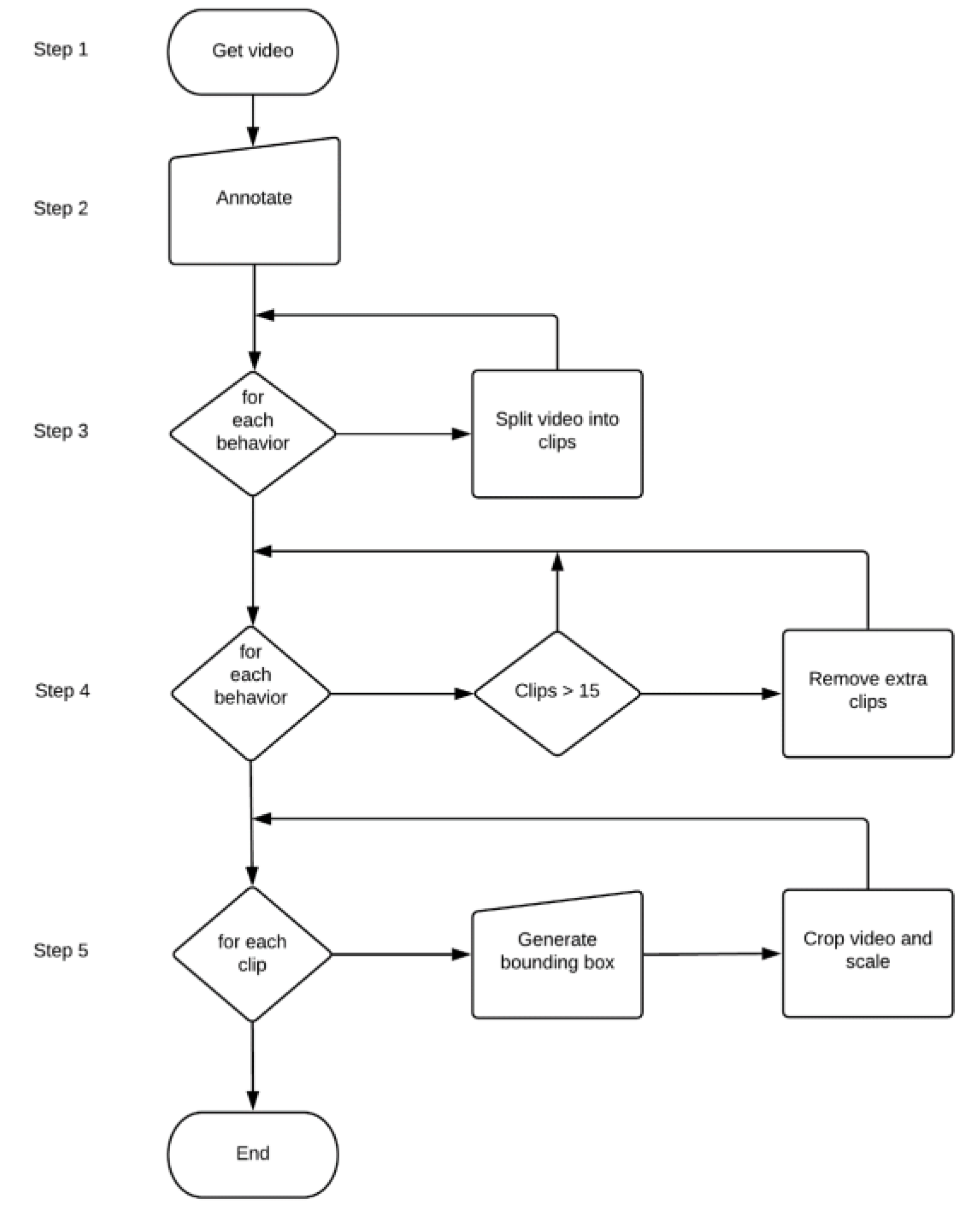

A total of 19,191 individual behavioral observations were obtained from all 46 cows. For the analysis, up to 15 video clips of each behavior, each 3 to 10 s long, were extracted from each cow's footage, giving a total of 3969 video clips (Table 2). Where more than 15 clips were available, the 15 were sampled evenly from the available data. Between 248 and 686 video clips per behavior were available for training and validation. A single trained observer checked the label of every extracted behavioral video clip and corrected any errors. This step ensured that both the annotations and the extracted clips were accurate.
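The clip-selection rule above can be sketched as follows. This illustrates the rule under stated assumptions and is not the authors' script. When more than 15 clips are available, `evenly_sample` picks the clip at the centre of each of 15 equal-width bins. `clip_in_range` checks the 3 to 10 s length limit at 20 frames per second and treats the end frame as exclusive. Both names are hypothetical.

```python
MAX_CLIPS = 15   # clips kept per behavior per cow
FPS = 20         # recording frame rate (Section 2.1)


def evenly_sample(items: list, k: int = MAX_CLIPS) -> list:
    """Keep every item if there are at most k; otherwise pick k evenly spread ones."""
    n = len(items)
    if n <= k:
        return list(items)
    # Take the item at the centre of each of k equal-width bins over [0, n).
    return [items[int((i + 0.5) * n / k)] for i in range(k)]


def clip_in_range(start_frame: int, end_frame: int, fps: int = FPS,
                  min_s: float = 3.0, max_s: float = 10.0) -> bool:
    """True if a clip covering frames [start_frame, end_frame) lasts min_s..max_s seconds."""
    duration = (end_frame - start_frame) / fps
    return min_s <= duration <= max_s


candidates = list(range(40))  # e.g. 40 candidate clips for one behavior of one cow
print(evenly_sample(candidates))
```

Centring each pick within its bin avoids always selecting the very first clip, and the picks stay distinct because the bin width exceeds one whenever more than 15 clips are available.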

The behavior annotations for each video were stored in an N × 3 matrix, where N is the total number of behaviors in the video (Table 3). The start and end frames of each annotated behavior were recorded. Each retained video clip was cropped to remove excess background and to focus on a single cow (Figure 1).
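Table 3 is not reproduced here, so the column layout below is an assumption. Each row of the N × 3 matrix is taken to hold a behavior code, a start frame, and an inclusive end frame. The hypothetical helpers parse and validate the rows and total the annotated frames per behavior.

```python
from collections import Counter
from typing import NamedTuple


class Annotation(NamedTuple):
    behavior: int  # behavior code (hypothetical integer encoding of Table 1)
    start: int     # first frame of the behavior
    end: int       # last frame of the behavior, assumed inclusive


def parse_annotations(matrix: list[list[int]]) -> list[Annotation]:
    """Convert an N x 3 annotation matrix into validated Annotation records."""
    records = []
    for row in matrix:
        if len(row) != 3:
            raise ValueError(f"expected 3 columns, got {len(row)}")
        record = Annotation(*row)
        if record.end < record.start:
            raise ValueError(f"end frame precedes start frame: {record}")
        records.append(record)
    return records


def frames_per_behavior(records: list[Annotation]) -> Counter:
    """Total number of annotated frames for each behavior code."""
    totals = Counter()
    for r in records:
        totals[r.behavior] += r.end - r.start + 1
    return totals


matrix = [[0, 0, 59], [2, 60, 259], [0, 300, 399]]  # toy example with N = 3
print(frames_per_behavior(parse_annotations(matrix)))
```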

For compatibility with the non-local network [8], we used a fixed-size bounding box that fully covered the cow across all frames. This emulates [8], who used the entire frame. The bounding boxes were generated with the video annotation tool ViTBAT [9]. The steps taken to process images for model development are illustrated in Figure 2.
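A fixed-size box that covers the cow in every frame can be obtained as the union of per-frame boxes. The sketch below assumes boxes given as `(x_min, y_min, x_max, y_max)` pixel tuples and does not assume any particular ViTBAT export format. It adds an optional margin and clamps the result to the 640 × 360 frame. Frames are represented as plain lists of pixel rows so the example has no dependencies. All names are hypothetical.

```python
Box = tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels

FRAME_W, FRAME_H = 640, 360  # frame size reported in Section 2.1


def covering_box(per_frame_boxes: list[Box], margin: int = 0,
                 frame_w: int = FRAME_W, frame_h: int = FRAME_H) -> Box:
    """Smallest box containing every per-frame box, padded and clamped to the frame."""
    if not per_frame_boxes:
        raise ValueError("need at least one box")
    x1 = min(b[0] for b in per_frame_boxes) - margin
    y1 = min(b[1] for b in per_frame_boxes) - margin
    x2 = max(b[2] for b in per_frame_boxes) + margin
    y2 = max(b[3] for b in per_frame_boxes) + margin
    return (max(0, x1), max(0, y1), min(frame_w, x2), min(frame_h, y2))


def crop(frame: list[list], box: Box) -> list[list]:
    """Apply the same box to a frame stored as a list of pixel rows."""
    x1, y1, x2, y2 = box
    return [row[x1:x2] for row in frame[y1:y2]]


boxes = [(100, 50, 300, 200), (120, 40, 320, 210), (90, 60, 310, 190)]
print(covering_box(boxes))  # (90, 40, 320, 210)
```

Every frame of a clip is cropped with the same box, so the cow stays fully visible and the crop size stays constant throughout the clip.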

2.3. Computer Vision Model Used for Behavior Recognition

A custom-made script in the PyTorch 1.5 framework was used to combine the behavioral matrix with the cropped video images. The model was a non-local network [8] built on the ResNet-50 architecture [10]. Further details can be found in prior research [8,10]. As shown in Equation (1), the non-local block computes the response at a position as a weighted sum of the features at all positions in the input feature maps. It is defined as follows:

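$$
y_i = \frac{1}{\mathcal{C}(x)} \sum_{\forall j} f(x_i, x_j)\, g(x_j) \tag{1}
$$

The terms in Equation (1) are defined as follows. $x$ denotes the input features and $y$ the output features (same size as $x$). $i$ is the current position of interest, and $j$ enumerates over all possible positions. $\mathcal{C}(x) = \sum_{\forall j} f(x_i, x_j)$ is the normalization factor. $g(x_j) = W_g x_j$ is a linear embedding, where $W_g$ is a learned weight matrix. $f$ is a pairwise function that computes the correlation between the feature at location $i$ and those at all possible positions $j$.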
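To make Equation (1) concrete, the following sketch applies it to a short list of feature vectors in plain Python. It uses the Gaussian choice f(x_i, x_j) = exp(x_i · x_j), which [8] describes as one of several options. The pairwise function used in the study is not stated here, so this choice is an assumption. In the real network, each position j would be a space-time location in a ResNet-50 feature map and W_g would be learned; here both are toy values.

```python
import math


def matvec(w: list[list[float]], x: list[float]) -> list[float]:
    """Matrix-vector product W x."""
    return [sum(wk * xk for wk, xk in zip(row, x)) for row in w]


def non_local(xs: list[list[float]], w_g: list[list[float]]) -> list[list[float]]:
    """Eq. (1): y_i = (1 / C(x)) * sum_j f(x_i, x_j) g(x_j), one output per position.

    f is the Gaussian pairwise function exp(x_i . x_j) (an assumed choice),
    g(x_j) = W_g x_j, and C(x) = sum_j f(x_i, x_j).
    """
    gs = [matvec(w_g, xj) for xj in xs]
    out_dim = len(gs[0])
    ys = []
    for xi in xs:
        dots = [sum(a * b for a, b in zip(xi, xj)) for xj in xs]
        m = max(dots)  # shift for numerical stability; it cancels in f / C(x)
        fs = [math.exp(d - m) for d in dots]
        c = sum(fs)
        ys.append([sum(f * g[k] for f, g in zip(fs, gs)) / c for k in range(out_dim)])
    return ys


features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy positions, 2 channels
w_g = [[1.0, 0.0], [0.0, 1.0]]                    # toy (identity) embedding
print(non_local(features, w_g))
```

Because the weights f(x_i, x_j) / C(x) sum to one, each output y_i is a weighted average of the embedded features g(x_j) over all positions. This is how the block lets every position draw on information from the whole clip.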

The non-local network [

8] is initialized using weights that are pre-trained on the Kinetics image dataset [

11], which includes 400 behaviors for humans. This approach has been shown by [

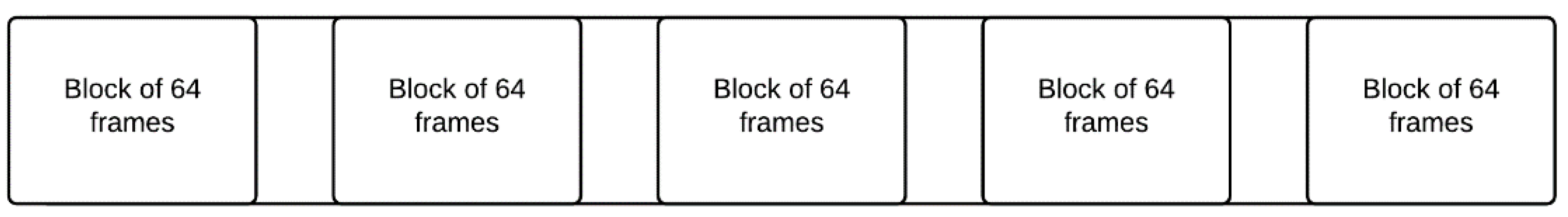

12] to improve action recognition accuracy by using a pre-trained initialization starting point for modelling. To decrease training and testing times, the current study used 8-frame input clips. The 8-frame clips were generated by randomly cropping out 64 consecutive frames from the training video and then keeping 8 frames that are evenly separated by a stride of 8 frames (

Figure 3). Additionally, while training, the spatial size is fixed to 224 pixels squared, which is randomly cropped from a video or its horizontal flip, whose shorter side is randomly scaled between 256 and 320 pixels.
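The sampling scheme above can be sketched as follows. The function names are hypothetical, and Python's `random` module stands in for whatever data loader the study used. With 8 frames at a stride of 8, the sampled frames span only 57 frames. A 3 s clip (60 frames at 20 fps) therefore still yields 8 distinct frames, even though it is shorter than the 64-frame window. Clamping indices to the last frame for even shorter clips is an assumption.

```python
import random
from typing import Optional


def sample_training_indices(num_frames: int, window: int = 64, stride: int = 8,
                            rng: Optional[random.Random] = None) -> list[int]:
    """Pick window // stride frame indices from a random window of consecutive frames."""
    if rng is None:
        rng = random.Random()
    start = rng.randint(0, max(0, num_frames - window))
    # Assumption: indices past the end of a very short clip repeat the last frame.
    return [min(start + k * stride, num_frames - 1) for k in range(window // stride)]


def sample_spatial_params(height: int, width: int, crop: int = 224,
                          min_short: int = 256, max_short: int = 320,
                          rng: Optional[random.Random] = None):
    """Random shorter-side rescale, random crop position, and random horizontal flip."""
    if rng is None:
        rng = random.Random()
    short = rng.randint(min_short, max_short)
    scale = short / min(height, width)
    new_h, new_w = round(height * scale), round(width * scale)
    top = rng.randint(0, new_h - crop)
    left = rng.randint(0, new_w - crop)
    flip = rng.random() < 0.5
    return new_h, new_w, top, left, flip


rng = random.Random(0)
print(sample_training_indices(200, rng=rng))
print(sample_spatial_params(360, 640, rng=rng))
```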

2.4. Computer Vision Model Validation

To validate the performance of the model, we performed spatially fully convolutional inference as described in [8]. Briefly, the shorter side of each video was resized to 256 pixels, and 3 crops of 256 × 256 pixels were used to cover the entire spatial domain. The final prediction was the average score over 10 evenly spaced 8-frame clips sampled along the temporal dimension of the full-length video (Figure 4).
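The test-time protocol can be sketched as follows. The helpers are hypothetical, and two details are assumptions because they are not given here: scores are averaged over all 10 clips × 3 crops = 30 views, and clip starts are spaced evenly using the 64-frame training window. The example dimensions use an uncropped 640 × 360 frame purely for illustration.

```python
def temporal_clip_starts(num_frames: int, num_clips: int = 10, window: int = 64) -> list[int]:
    """Start frames of num_clips clips spaced evenly along a full-length video."""
    last_start = max(0, num_frames - window)
    if num_clips == 1:
        return [last_start // 2]
    return [round(i * last_start / (num_clips - 1)) for i in range(num_clips)]


def spatial_crop_offsets(height: int, width: int, size: int = 256):
    """Resize the shorter side to `size`; return the new size and 3 offsets along the longer side."""
    scale = size / min(height, width)
    new_h, new_w = round(height * scale), round(width * scale)
    long_side = max(new_h, new_w)
    return new_h, new_w, [0, (long_side - size) // 2, long_side - size]


def average_scores(view_scores: list[list[float]]) -> list[float]:
    """Mean per-class score over all views (e.g. 10 clips x 3 crops = 30 views)."""
    n = len(view_scores)
    return [sum(scores[c] for scores in view_scores) / n for c in range(len(view_scores[0]))]


print(temporal_clip_starts(200))
print(spatial_crop_offsets(360, 640))  # (256, 455, [0, 99, 199])
```

The three crops sit at the start, centre, and end of the longer side, so together they cover the whole resized frame.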

3. Results and Discussion

Annotated video datasets of dairy cow behavior have scientific value, are urgently needed, and have a direct impact on animal health and welfare. Despite this, very little attention has been paid to developing them. Most research to date has relied on wearable accelerometer-based activity monitoring sensors [13,14,15]. We introduce a new large-scale video dataset for cow behavior classification. As other studies have suggested [7], image banks containing a large number of high-quality (i.e., accurate and high-resolution) images are needed to develop vision-based technologies for different applications, such as behavior recognition in animals. This study showed that automated monitoring of cows during parturition is possible. For a high-value animal, such monitoring can assist the stockperson and enhance animal welfare.

Our dataset consisted of almost 4000 video clips of individual animal behaviors, each between 3 and 10 s in length, recorded from pregnant dairy cows prior to calving. In total, just over 9 h 42 min of video was captured, split into approximately 7 h 48 min for training and 1 h 54 min for validation. In the field of computer vision, action recognition has been applied to humans with a high degree of success [8]. We show that the same model, pre-trained on a dataset devised for human action recognition (Kinetics [11]), can be successfully adapted to detect the behavior of dairy cows. As shown in Table 4, contractions while lying were identified with an accuracy of 83%. This alone may be sufficient to predict the birth of a calf, because a cow will generally start contractions approximately 1 to 2 h before giving birth. Standing, lying, eating, and drinking behaviors all scored greater than 84% and can also help with monitoring animal well-being. Furthermore, changes in the duration or frequency of the behaviors studied may reveal abnormal behavior patterns, which can assist in animal management. For example, eating and drinking were detected with high accuracy (over 90%), and these behaviors can be used to identify health problems [16].
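The sketch below runs a quick consistency check on the durations reported above. It confirms that 7 h 48 min of training video and 1 h 54 min of validation video add up to 9 h 42 min, a split of roughly 80:20. It also shows that 9 h 42 min spread over 3969 clips implies a mean clip length within the stated 3 to 10 s range. The inputs come from the text, and because the total is "just over" 9 h 42 min, the derived figures are approximate.

```python
def to_minutes(hours: int, minutes: int) -> int:
    """Convert an h/min duration to whole minutes."""
    return hours * 60 + minutes


total = to_minutes(9, 42)   # all captured video
train = to_minutes(7, 48)   # training portion
val = to_minutes(1, 54)     # validation portion
num_clips = 3969            # clips reported in Section 2.2

assert train + val == total
print(f"training share: {train / total:.1%}")               # training share: 80.4%
print(f"mean clip length: {total * 60 / num_clips:.1f} s")  # mean clip length: 8.8 s
```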

Beyond cows, the proposed computer vision approach could be adapted for other livestock species, such as pigs, poultry, sheep, and horses. It could be used to predict birth and to identify behavior patterns, or behaviors that occur over many hours, that may be missed by subjective observational sampling. Furthermore, because the calving pen is continuously monitored, it should also be possible to detect and track the behaviors of the mother and her newborn offspring. This is not feasible with the standard predictive animal monitoring applications currently used by the livestock industry.

Developing behavior recognition based on continuous camera surveillance in the farm environment is challenging. The current study identified several potential causes of error in the model's predictions, which reflect limitations of current vision-based monitoring (Table 5).

4. Conclusions

We show that computer vision can be successfully applied to predict individual dairy cow behaviors, with an accuracy of 80% or more for the behaviors studied. This approach could be used for early detection of abnormal behavior, impending birth, and the need for assistance. Computer vision technology may help a stockperson make more timely decisions based on the continuous tracking of individuals within groups of animals.

Author Contributions

Conceptualization, M.J.B. and G.T.; methodology, M.J.B. and G.T.; software, J.M.; validation, J.M.; formal analysis, J.M.; investigation, J.M.; resources, M.J.B.; data curation, M.J.B., K.R.S., Z.J.H. and J.M.; writing—original draft preparation, J.M. and M.J.B.; writing—review and editing, J.M., M.J.B., G.T. and P.M.D.; visualization, J.M.; supervision, M.J.B. and G.T.; project administration, M.J.B.; funding acquisition, M.J.B. and G.T. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Douglas Bomford Trust, the Engineering and Physical Sciences Research Council and the Biotechnology and Biological Sciences Research Council.

Institutional Review Board Statement

The study was conducted according to the guidelines of the Declaration of Helsinki, and approved by the Animal Ethics Committee at the University of Nottingham (approval number 151, 2017).

Informed Consent Statement

Not applicable.

Data Availability Statement

The analyzed datasets are available from the corresponding author on request.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Lawrence, A.; Stott, A. Profiting from animal welfare: An animal-based perspective. J. R. Agric. Soc. Engl. 2009, 170, 40–47.

- Barkema, H.W.; von Keyserlingk, M.A.G.; Kastelic, J.P.; Lam, T.J.G.M.; Luby, C.; Roy, J.P.; LeBlanc, S.J.; Keefe, G.P.; Kelton, D.F. Invited review: Changes in the dairy industry affecting dairy cattle health and welfare. J. Dairy Sci. 2015, 98, 7426–7445.

- Wathes, C.M.; Kristensen, H.H.; Aerts, J.-M.; Berckmans, D. Is precision livestock farming an engineer’s daydream or nightmare, an animal’s friend or foe, and a farmer’s panacea or pitfall? Comput. Electron. Agric. 2008, 64, 2–10.

- Krizhevsky, A.; Sutskever, I.; Hinton, G. ImageNet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 2012, 25, 1097–1105.

- Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 24–27 June 2014.

- Cangar, Ö.; Leroy, T.; Guarino, M.; Vranken, E.; Fallon, R.; Lenehan, J.; Mee, J.; Berckmans, D. Automatic real-time monitoring of locomotion and posture behavior of pregnant cows prior to calving using online image analysis. Comput. Electron. Agric. 2008, 64, 53–60.

- Tian, H.; Wang, T.; Liu, Y.; Qiao, X.; Li, Y. Computer vision technology in agricultural automation—A review. Inf. Process. Agric. 2020, 7, 1–19.

- Wang, X.; Girshick, R.; Gupta, A.; He, K. Non-Local Neural Networks. arXiv 2018, arXiv:1711.07971v3.

- Biresaw, T.; Nawaz, T.; Ferryman, J.; Dell, A. ViTBAT: Video Tracking and Behavior Annotation Tool. In Proceedings of the 13th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Colorado Springs, CO, USA, 23–26 August 2016; pp. 295–301.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. arXiv 2015, arXiv:1512.03385v1.

- Kay, W.; Carreira, J.; Simonyan, K.; Zhang, B.; Hillier, C.; Vijayanarasimhan, S.; Viola, F.; Green, T.; Back, T.; Natsev, P.; et al. The Kinetics human action video dataset. arXiv 2017, arXiv:1705.06950.

- Carreira, J.; Zisserman, A. Quo Vadis, Action Recognition? A New Model and the Kinetics Dataset. arXiv 2017, arXiv:1705.07750v3.

- Diosdado, J.; Barker, Z.; Hodges, H.; Amory, J.; Croft, D.; Bell, N.; Codling, E. Classification of behavior in housed dairy cows using an accelerometer-based activity monitoring system. Anim. Biotelem. 2015, 3, 15.

- Rahman, A.; Smith, D.; Little, B.; Ingham, A.; Greenwood, P.; Bishop-Hurley, G. Cattle behavior classification from collar, halter, and ear tag sensors. Inf. Process. Agric. 2018, 5, 124–133.

- Benaissa, S.; Tuyttens, F.; Plets, D.; Pessemier, T.; Trogh, J.; Tanghe, E.; Martens, L.; Vandaele, L.; van Nuffel, A.; Joseph, W.; et al. On the use of on-cow accelerometers for the classification of behaviors in dairy barns. Res. Vet. Sci. 2019, 125, 425–433.

- Weary, D.M.; Huzzey, J.M.; von Keyserlingk, M.A.G. Board-Invited review: Using behavior to predict and identify ill health in animals. J. Anim. Sci. 2009, 87, 770–777.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).