Classification of Drowsiness Levels Based on a Deep Spatio-Temporal Convolutional Bidirectional LSTM Network Using Electroencephalography Signals

Abstract

1. Introduction

2. Methods

2.1. Subjects

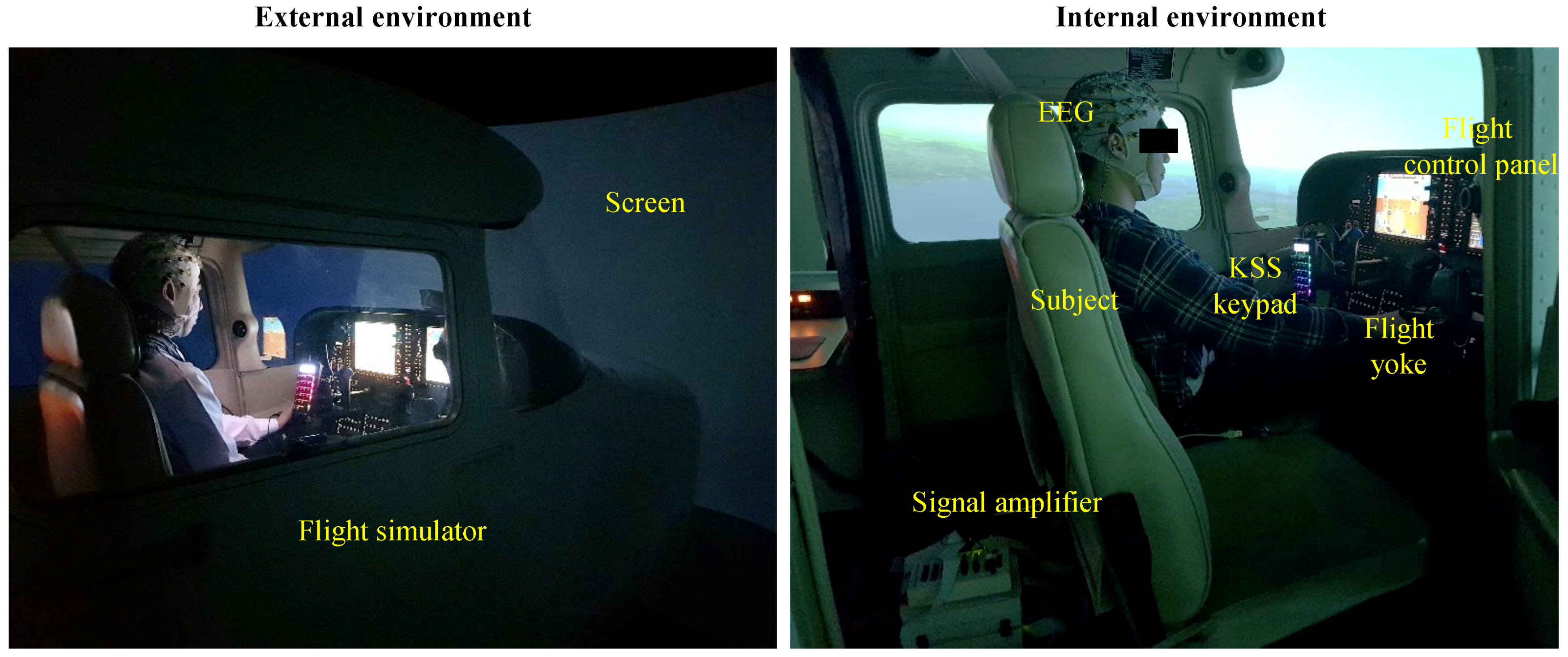

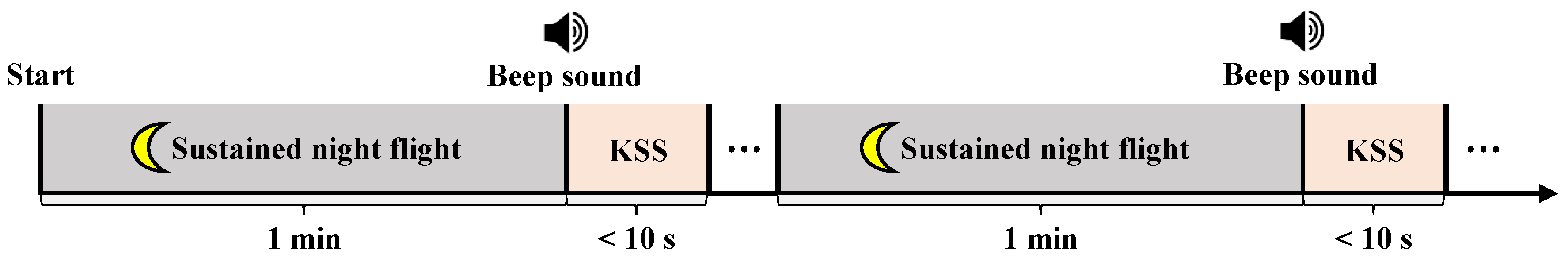

2.2. Experimental Protocols and Paradigm

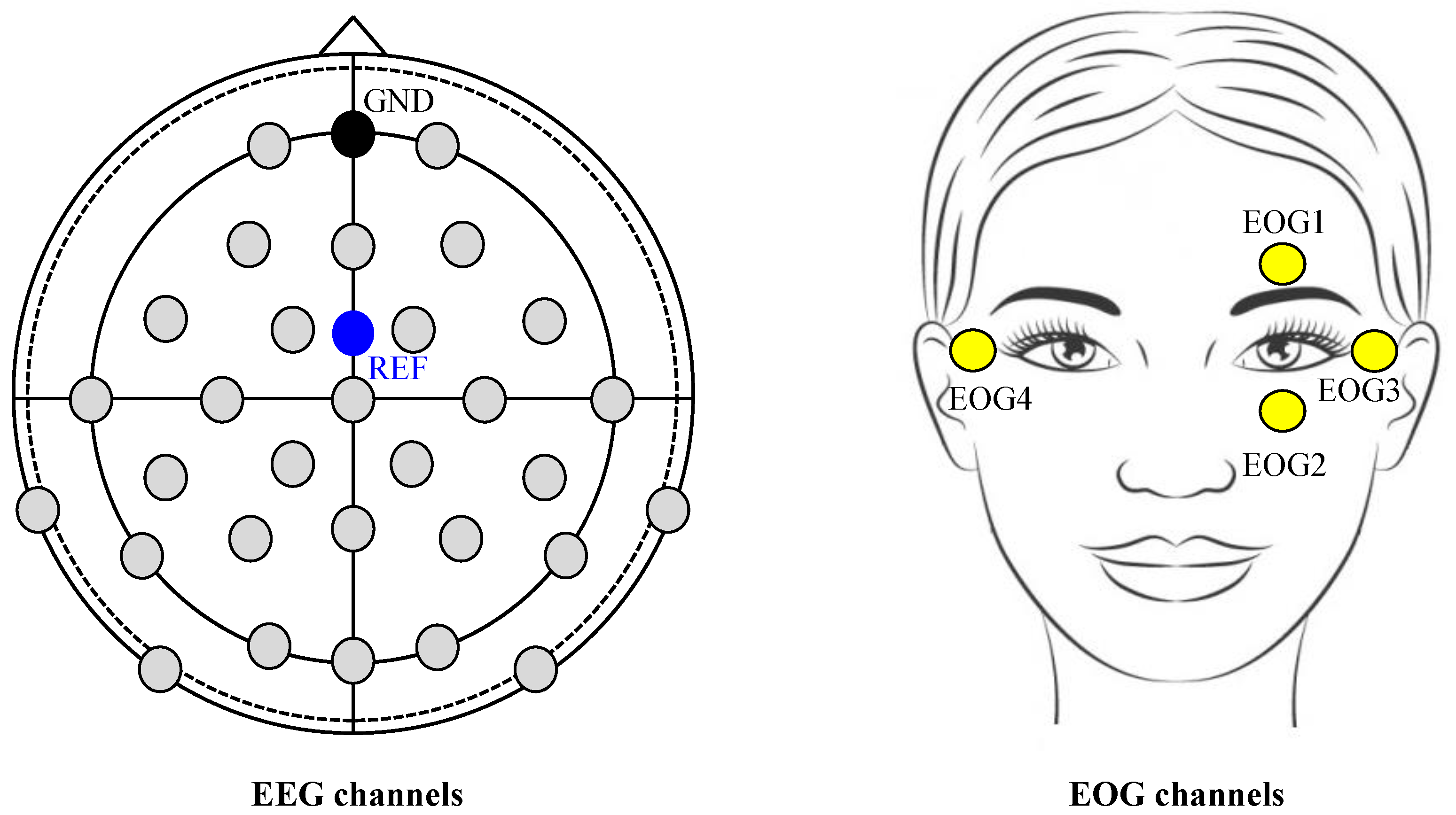

2.3. Data Acquisition

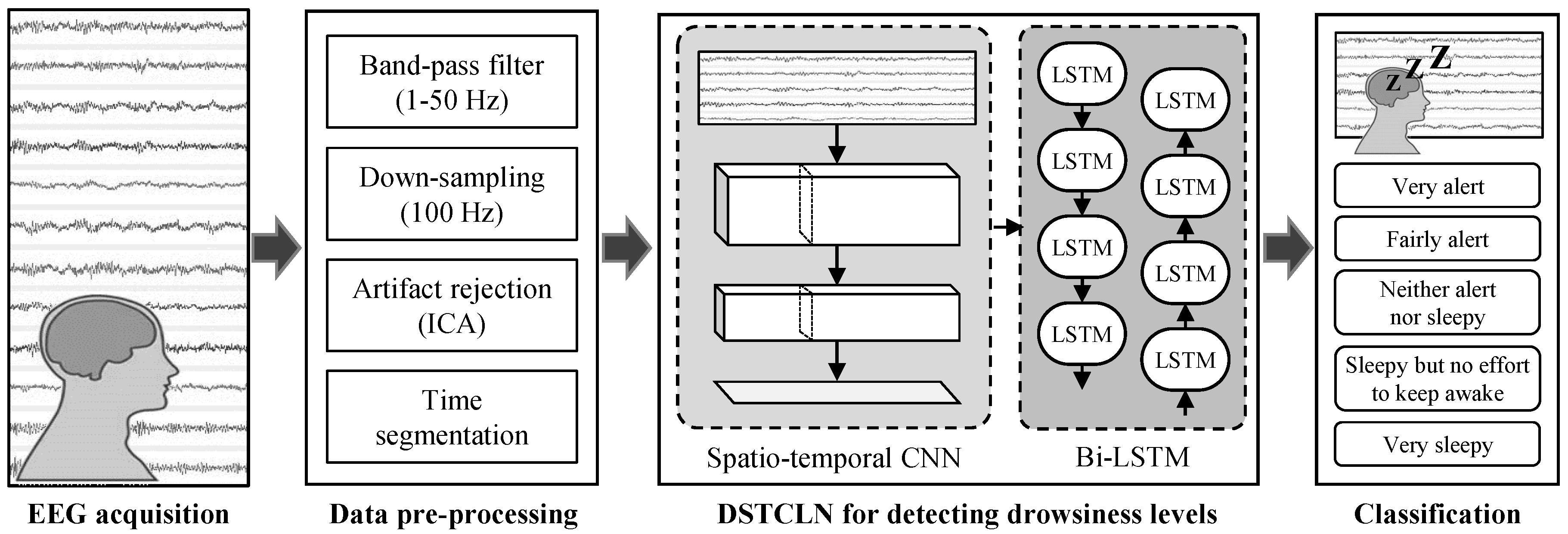

2.4. EEG Pre-Processing

2.5. DSTCLN

3. Results

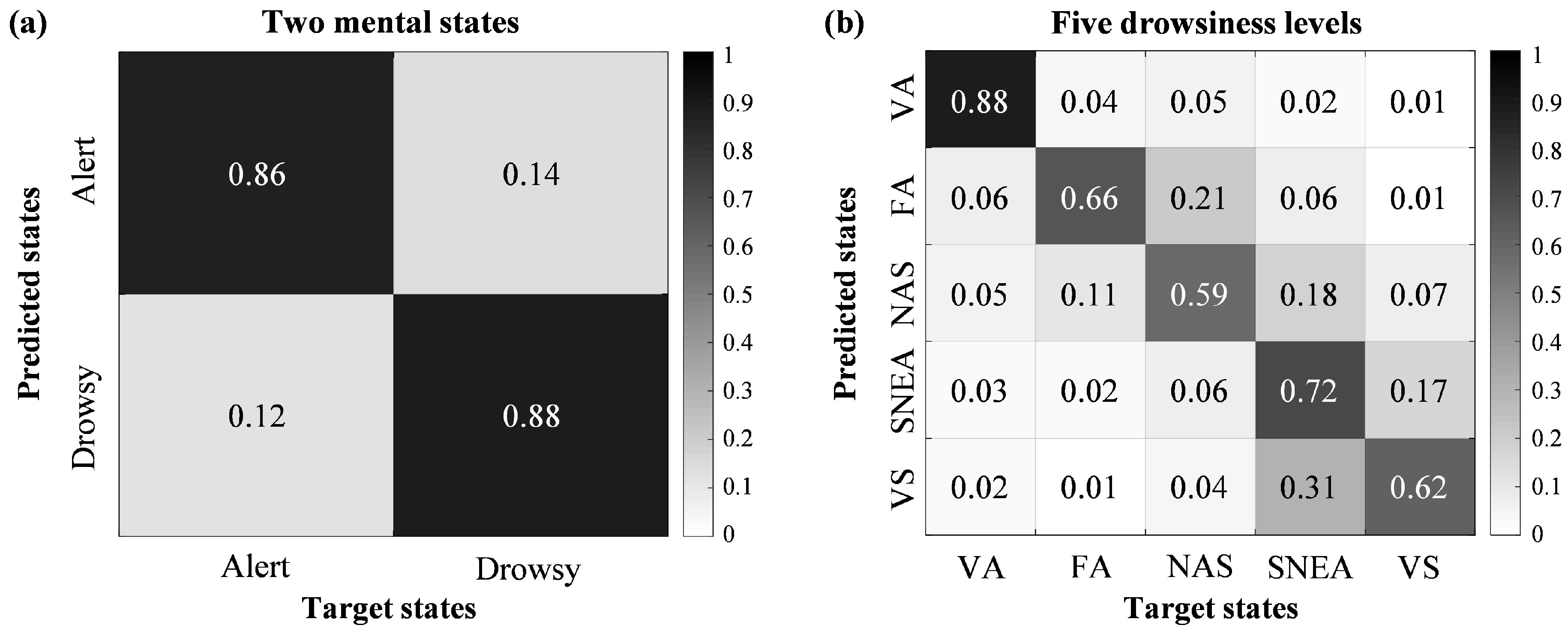

3.1. Classification Performance for Drowsiness Levels

3.2. Comparison of Classification Performance with Conventional Methods

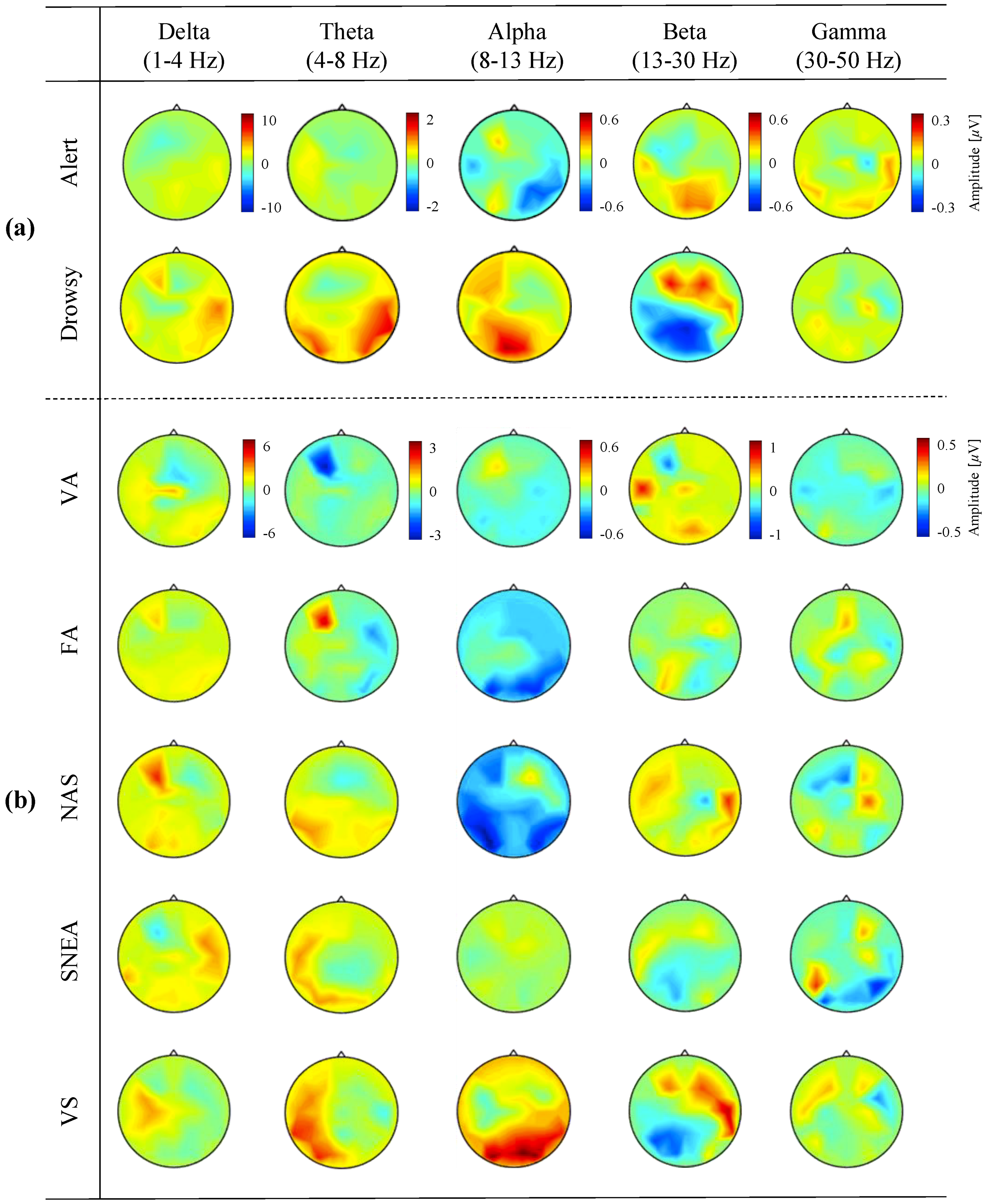

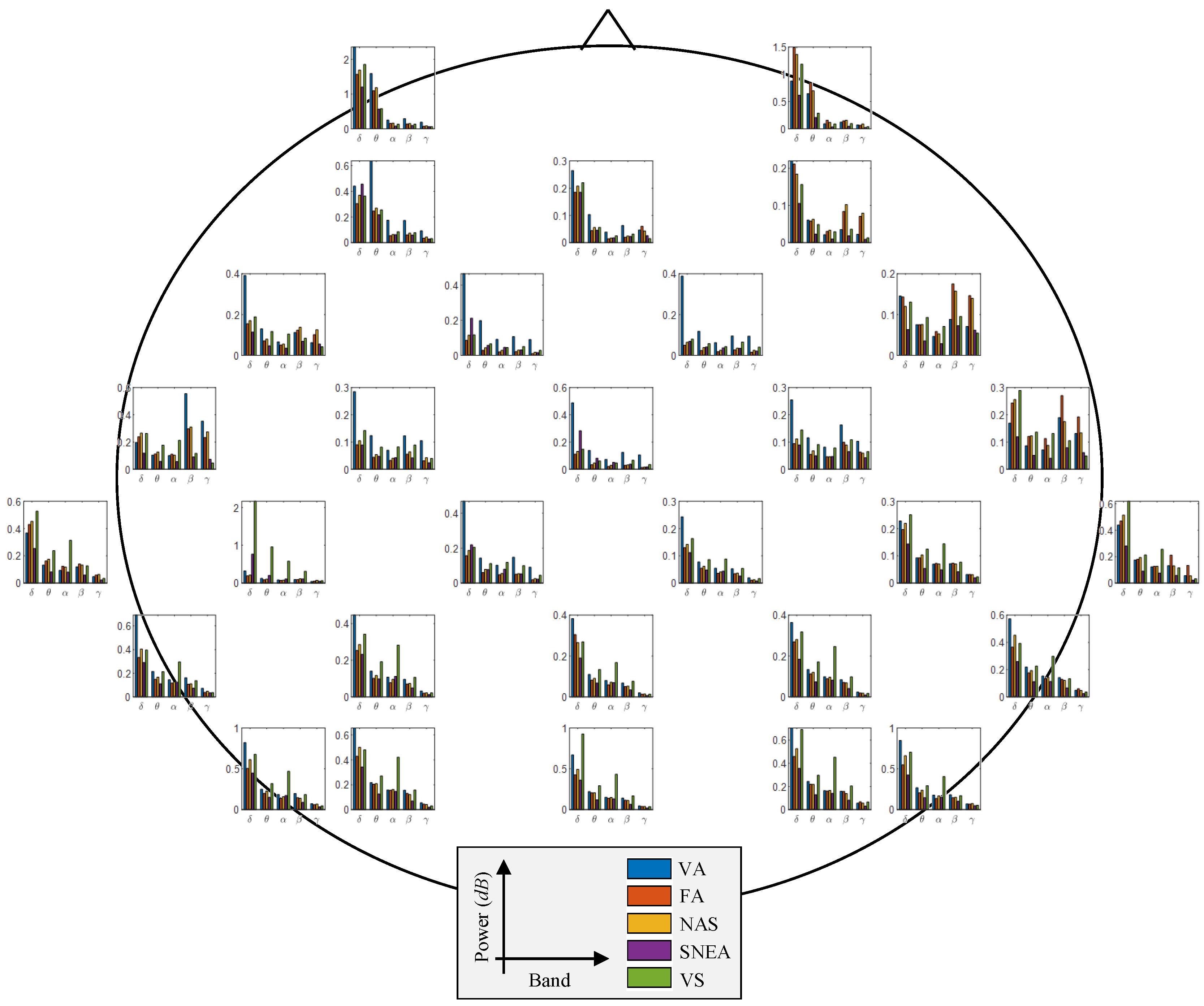

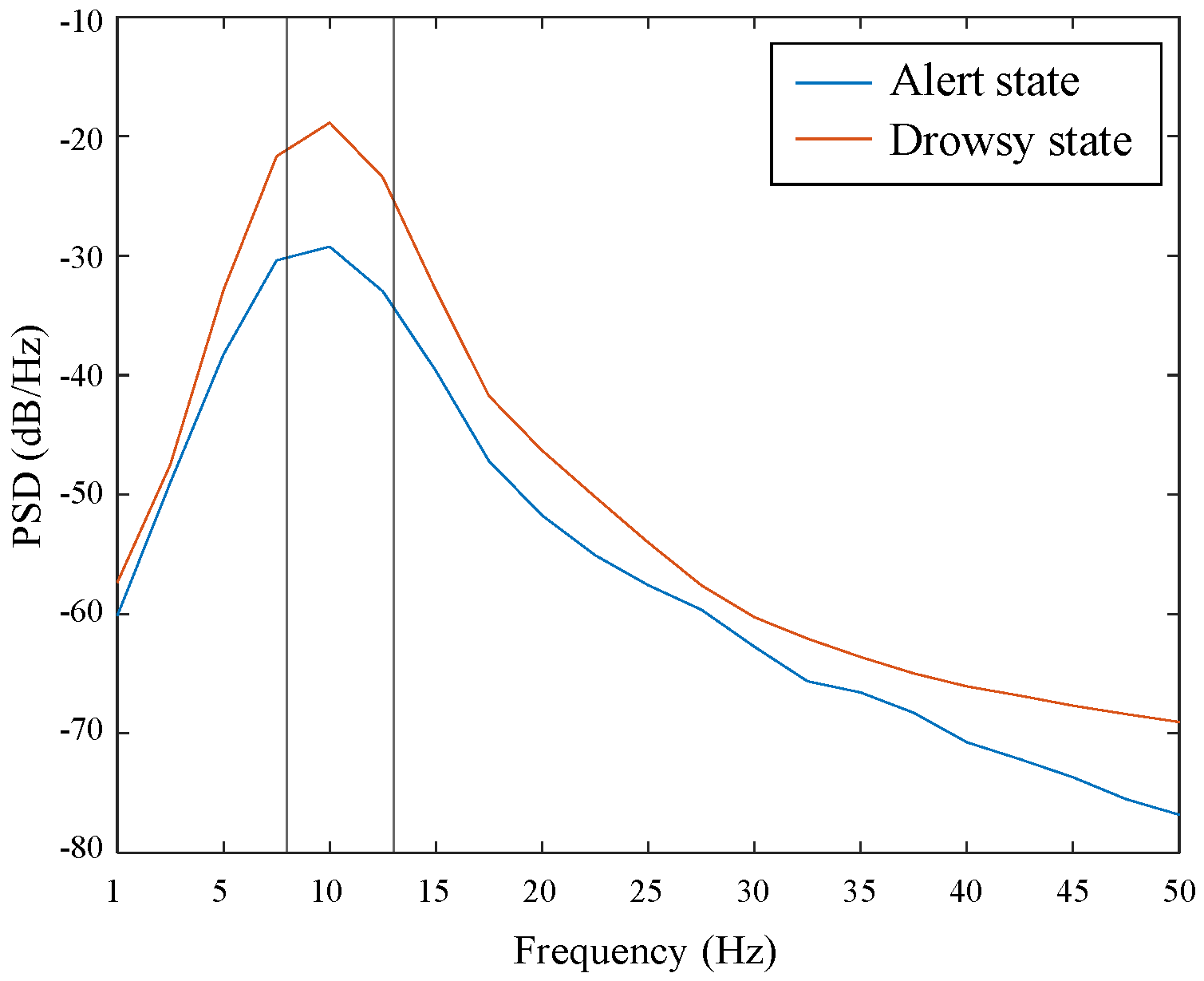

3.3. Neurophysiological Analysis from EEG Signals

4. Discussion

5. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| BCI | Brain-Computer Interface |
| EEG | Electroencephalogram |
| DSTCLN | Deep Spatio-Temporal Convolutional Bidirectional Long Short-Term Memory Network |
| KSS | Karolinska Sleepiness Scale |
| AI | Artificial Intelligence |
| ECG | Electrocardiogram |
| EOG | Electrooculogram |
| NIRS | Near-Infrared Spectroscopy |
| HRV | Heart Rate Variability |
| PPG | Photoplethysmography |
| AdaBoost | Adaptive Boosting |
| SVM | Support Vector Machine |
| CNN | Convolutional Neural Network |
| AUC | Area Under the Curve |
| PPMs | Peripheral Physiological Measures |
| VA | Very Alert |
| FA | Fairly Alert |
| NAS | Neither Alert nor Sleepy |
| SNEA | Sleepy but No Effort to keep Awake |
| VS | Very Sleepy |
| ICA | Independent Component Analysis |
| IC | Independent Component |
| Bi-LSTM | Bidirectional Long Short-Term Memory |
| ELUs | Exponential Linear Units |
| LSTM | Long Short-Term Memory |
| Std | Standard Deviation |
| PSD | Power Spectral Density |
| CCA | Canonical Correlation Analysis |
| CCNN | Channel-Wise Convolutional Neural Network |
| ESTCNN | EEG-based Spatio-Temporal Convolutional Neural Network |
| LSTM-D | Deep Long Short-Term Memory |

References

1. Vaughan, T.M.; Heetderks, W.; Trejo, L.; Rymer, W.; Weinrich, M.; Moore, M.; Kübler, A.; Dobkin, B.; Birbaumer, N.; Donchin, E.; et al. Brain–computer interface technology: A review of the second international meeting. IEEE Trans. Neural Syst. Rehabil. Eng. 2003, 11, 94–109.
2. Wolpaw, J.R.; Birbaumer, N.; McFarland, D.J.; Pfurtscheller, G.; Vaughan, T.M. Brain–computer interfaces for communication and control. Clin. Neurophysiol. 2002, 113, 767–791.
3. Abiri, R.; Borhani, S.; Sellers, E.W.; Jiang, Y.; Zhao, X. A comprehensive review of EEG-based brain–computer interface paradigms. J. Neural Eng. 2019, 16, 011001.
4. Kam, T.-E.; Suk, H.-I.; Lee, S.-W. Non-homogeneous spatial filter optimization for Electroencephalogram (EEG)-based motor imagery classification. Neurocomputing 2013, 108, 58–68.
5. Lee, M.-H.; Fazli, S.; Mehnert, J.; Lee, S.-W. Subject-dependent classification for robust idle state detection using multi-modal neuroimaging and data-fusion techniques in BCI. Pattern Recognit. 2015, 48, 2725–2737.
6. Suk, H.-I.; Lee, S.-W. Subject and class specific frequency bands selection for multiclass motor imagery classification. Int. J. Imaging Syst. Technol. 2011, 21, 123–130.
7. Jochumsen, M.; Navid, M.S.; Nedergaard, R.W.; Signal, N.; Rashid, U.; Hassan, A.; Haavik, H.; Taylor, D.; Niazi, I.K. Self-paced online vs. cue-based offline brain–computer interfaces for inducing neural plasticity. Brain Sci. 2019, 9, 127.
8. Ramele, R.; Villar, A.J.; Santos, J.M. EEG waveform analysis of P300 ERP with applications to brain computer interfaces. Brain Sci. 2018, 8, 199.
9. Jeong, J.-H.; Lee, M.-H.; Kwak, N.-S.; Lee, S.-W. Single-trial analysis of readiness potentials for lower limb exoskeleton control. In Proceedings of the 5th International Winter Conference on Brain-Computer Interface (BCI), Gangwon, Korea, 9–11 January 2017.
10. Kim, I.-H.; Kim, J.-W.; Haufe, S.; Lee, S.-W. Detection of braking intention in diverse situations during simulated driving based on EEG feature combination. J. Neural Eng. 2014, 12, 016001.
11. Chen, Y.; Atnafu, A.D.; Schlattner, I.; Weldtsadik, W.T.; Roh, M.C.; Kim, H.J.; Lee, S.-W.; Blankertz, B.; Fazli, S. A high-security EEG-based login system with RSVP stimuli and dry electrodes. IEEE Trans. Inf. Forensics Secur. 2016, 11, 2635–2647.
12. Meng, J.; Zhang, S.; Bekyo, A.; Olsoe, J.; Baxter, B.; He, B. Noninvasive electroencephalogram based control of a robotic arm for reach and grasp tasks. Sci. Rep. 2016, 6, 38565.
13. Jeong, J.-H.; Shim, K.-H.; Cho, J.-H.; Lee, S.-W. Trajectory decoding of arm reaching movement imageries for brain-controlled robot arm system. In Proceedings of the IEEE Engineering in Medicine and Biology Society (EMBC), Berlin, Germany, 23–27 July 2019.
14. Jeong, J.-H.; Kim, K.-T.; Yun, Y.-D.; Lee, S.-W. Design of a brain-controlled robot arm system based on upper-limb movement imagery. In Proceedings of the 6th International Winter Conference on Brain-Computer Interface (BCI), Gangwon, Korea, 15–17 January 2018.
15. Kim, K.-T.; Suk, H.-I.; Lee, S.-W. Commanding a brain-controlled wheelchair using steady-state somatosensory evoked potentials. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 654–665.
16. Lee, M.-H.; Williamson, J.; Won, D.-O.; Fazli, S.; Lee, S.-W. A high performance spelling system based on EEG–EOG signals with visual feedback. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 1443–1459.
17. Won, D.-O.; Hwang, H.-J.; Dähne, S.; Müller, K.R.; Lee, S.-W. Effect of higher frequency on the classification of steady-state visual evoked potentials. J. Neural Eng. 2016, 13, 016014.
18. Stawicki, P.; Gembler, F.; Rezeika, A.; Volosyak, I. A novel hybrid mental spelling application based on eye tracking and SSVEP-based BCI. Brain Sci. 2017, 7, 35.
19. Kleih, S.C.; Herweg, A.; Kaufmann, T.; Staiger-Sälzer, P.; Gerstner, N.; Kübler, A. The WIN-speller: A new intuitive auditory brain-computer interface spelling application. Front. Neurosci. 2015, 9, 346.
20. Trejo, L.J.; Kubitz, K.; Rosipal, R.; Kochav, R.L.; Montgomery, L.D. EEG-based estimation and classification of mental fatigue. Psychology 2015, 6, 572–589.
21. Jap, B.T.; Lal, S.; Fischer, P.; Bekiaris, E. Using EEG spectral components to assess algorithms for detecting fatigue. Expert Syst. Appl. 2009, 36, 2352–2359.
22. Borghini, G.; Astolfi, L.; Vecchiato, G.; Mattia, D.; Babiloni, F. Measuring neurophysiological signals in aircraft pilots and car drivers for the assessment of mental workload, fatigue, and drowsiness. Neurosci. Biobehav. Rev. 2016, 44, 58–75.
23. Gao, Z.; Wang, X.; Yang, Y.; Mu, C.; Cai, Q.; Dang, W.; Zuo, S. EEG-based spatio-temporal convolutional neural network for driver fatigue evaluation. IEEE Trans. Neural Netw. Learn. Syst. 2019.
24. Naurois, C.J.D.; Bourdin, C.; Stratulat, A.; Diaz, E.; Vercher, J.-L. Detection and prediction of driver drowsiness using artificial neural network models. Accid. Anal. Prev. 2019, 126, 95–104.
25. Chen, J.; Wang, H.; Hua, C. Assessment of driver drowsiness using electroencephalogram signals based on multiple functional brain networks. Int. J. Psychophysiol. 2018, 133, 120–130.
26. Lal, S.K.L.; Craig, A.; Boord, P.; Kirkup, L.; Nguyen, H. Development of an algorithm for an EEG-based driver fatigue countermeasure. J. Saf. Res. 2003, 34, 321–328.
27. Ahn, S.; Nguyen, T.; Jang, H.; Kim, J.G.; Jun, S.C. Exploring neuro-physiological correlates of drivers’ mental fatigue caused by sleep deprivation using simultaneous EEG, ECG, and fNIRS data. Front. Hum. Neurosci. 2016, 10, 219.
28. Wu, E.Q.; Peng, X.Y.; Zhang, C.Z.; Lin, J.X.; Sheng, R.S.F. Pilot’s fatigue status recognition using deep contractive autoencoder network. IEEE Trans. Instrum. Meas. 2019.
29. Liu, Y.; Ayaz, H.; Shewokis, P.A. Multisubject “learning” for mental workload classification using concurrent EEG, fNIRS, and physiological measures. Front. Hum. Neurosci. 2017, 11.
30. Sonnleitner, A.; Sonnleitner, M.S.; Simon, M.; Willmann, S.; Ewald, A.; Buchner, A.; Schrauf, M. EEG alpha spindles and prolonged brake reaction times during auditory distraction in an on-road driving study. Accid. Anal. Prev. 2014, 62, 110–118.
31. Choi, I.-H.; Kim, Y.-G. Head pose and gaze direction tracking for detecting a drowsy driver. Appl. Math. Inf. Sci. 2015, 9, 505–512.
32. Rumagit, A.M.; Akbar, I.A.; Igasaki, T. Gazing time analysis for drowsiness assessment using eye gaze tracker. Telkomnika 2017, 15, 919–925.
33. Balandong, R.P.; Ahmad, R.F.; Saad, M.N.M.; Malik, A.S. A review on EEG-based automatic sleepiness detection systems for driver. IEEE Access 2018, 6, 22908–22919.
34. Fujiwara, K.; Abe, E.; Kamata, K.; Nakayama, C.; Suzuki, Y.; Yamakawa, T.; Hiraoka, T.; Kano, M.; Sumi, Y.; Masuda, F.; et al. Heart rate variability-based driver drowsiness detection and its validation with EEG. IEEE Trans. Biomed. Eng. 2018, 66, 1769–1778.
35. Hong, S.; Kwon, H.; Choi, S.H.; Park, K.S. Intelligent system for drowsiness recognition based on ear canal EEG with PPG and ECG. Inf. Sci. 2018, 453, 302–322.
36. Mårtensson, H.; Keelan, O.; Ahlström, C. Driver sleepiness classification based on physiological data and driving performance from real road driving. IEEE Trans. Intell. Transp. Syst. 2018, 20, 421–430.
37. Awais, M.; Badruddin, N.; Drieberg, M. A hybrid approach to detect driver drowsiness utilizing physiological signals to improve system performance and wearability. Sensors 2017, 17, 1991.
38. Nguyen, T.; Ahn, S.; Jang, H.; Jun, S.C.; Kim, J.G. Utilization of a combined EEG/NIRS system to predict driver drowsiness. Sci. Rep. 2017, 7, 43933.
39. Wei, C.-S.; Wang, Y.-T.; Lin, C.-T.; Jung, T.-P. Toward drowsiness detection using non-hair-bearing EEG-based BCI. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 400–406.
40. Min, J.; Wang, P.; Hu, J. Driver fatigue detection through multiple entropy fusion analysis in an EEG-based system. PLoS ONE 2017, 12, e0188756.
41. Dimitrakopoulos, G.N.; Kakkos, I.; Dai, Z.; Wang, H.; Sgarbas, K.; Thakor, N.; Bezerianos, A.; Sun, Y. Functional connectivity analysis of fatigue reveals different network topological alterations. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 740–749.
42. Liang, Y.; Horrey, W.J.; Howard, M.E.; Lee, M.L.; Anderson, C.; Shreeve, M.S.; O’Brien, C.S.; Czeisler, C.A. Prediction of drowsiness events in night shift workers during morning driving. Accid. Anal. Prev. 2017, 126, 105–114.
43. Padfield, N.; Zabalza, J.; Zhao, H.; Masero, V.; Ren, J. EEG-based brain-computer interfaces using motor-imagery: Techniques and challenges. Sensors 2019, 19, 1423.
44. Nicolas-Alonso, L.F.; Gomez-Gil, J. Brain computer interfaces, a review. Sensors 2012, 12, 1211–1279.
45. Åkerstedt, T.; Gillberg, M. Subjective and objective sleepiness in the active individual. Int. J. Neurosci. 1990, 52, 29–37.
46. Han, S.-Y.; Kim, J.-W.; Lee, S.-W. Recognition of pilot’s cognitive states based on combination of physiological signals. In Proceedings of the 7th International Winter Conference on Brain-Computer Interface (BCI), Gangwon, Korea, 18–20 February 2019.
47. Blankertz, B.; Tangermann, M.; Vidaurre, C.; Fazli, S.; Sannelli, C.; Haufe, S.; Maeder, C.; Ramsey, L.E.; Sturm, I.; Curio, G.; et al. The Berlin brain–computer interface: Non-medical uses of BCI technology. Front. Neurosci. 2010, 4, 198.
48. Lee, M.-H.; Kwon, O.-Y.; Kim, Y.-J.; Kim, H.-K.; Lee, Y.-E.; Williamson, J.; Fazli, S.; Lee, S.-W. EEG dataset and OpenBMI Toolbox for three BCI paradigms: An investigation into BCI illiteracy. Gigascience 2019, 8, giz002.
49. Jung, T.P.; Humphries, C.; Lee, T.W.; Makeig, S.; McKeown, M.J.; Iragui, V.; Sejnowski, T.J. Extended ICA removes artifacts from electroencephalographic recordings. Adv. Neural Inf. Process. Syst. 1998, 894–900.
50. Sakhavi, S.; Guan, C.; Yan, S. Learning temporal information for brain–computer interface using convolutional neural networks. IEEE Trans. Neural Netw. Learn. Syst. 2018, 29, 5619–5629.
51. Lawhern, V.J.; Solon, A.J.; Waytowich, N.R.; Gordon, S.M.; Hung, C.P.; Lance, B.J. EEGNet: A compact convolutional neural network for EEG-based brain–computer interfaces. J. Neural Eng. 2018, 15, 1–17.
52. Li, J.; Zhang, Z.; He, H. Hierarchical convolutional neural networks for EEG-based emotion recognition. Cogn. Comput. 2018, 10, 368–380.
53. Graves, A.; Schmidhuber, J. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Netw. 2005, 18, 602–610.
54. Supratak, A.; Dong, H.; Wu, C.; Guo, Y. DeepSleepNet: A model for automatic sleep stage scoring based on raw single-channel EEG. IEEE Trans. Neural Syst. Rehabil. Eng. 2017, 25, 1998–2008.
55. Hefron, R.G.; Borghetti, B.J.; Christensen, J.C.; Kabban, C.M.S. Deep long short-term memory structures model temporal dependencies improving cognitive workload estimation. Pattern Recognit. Lett. 2017, 94, 96–104.
56. Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 2012, 1097–1105.
57. Zhang, X.; Li, J.; Liu, Y.; Zhang, Z.; Wang, Z.; Luo, D.; Zhou, X.; Zhu, M.; Salman, W.; Hu, G.; et al. Design of a fatigue detection system for high speed trains based on driver vigilance using a wireless wearable EEG. Sensors 2017, 17, 486.
58. Hajinoroozi, M.; Mao, Z.; Jung, T.-P.; Lin, C.-T.; Huang, Y. EEG based prediction of driver’s cognitive performance by deep convolutional neural network. Signal Process. Image Commun. 2016, 47, 549–555.
59. Boonstra, T.W.; Nikolin, S.; Meisener, A.C.; Martin, D.M.; Loo, C.K. Change in mean frequency of resting-state electroencephalography after transcranial direct current stimulation. Front. Hum. Neurosci. 2016, 10, 270.
60. Rahma, O.N.; Rahmatillah, A. Drowsiness analysis using common spatial pattern and extreme learning machine based on electroencephalogram signal. J. Med. Signals Sens. 2019, 9, 130–136.
61. Zeng, H.; Yang, C.; Dai, G.; Qin, F.; Zhang, J.; Kong, W. EEG classification of driver mental states by deep learning. Cogn. Neurodyn. 2018, 12, 597–606.
62. Zander, T.O.; Kothe, C. Towards passive brain-computer interfaces: Applying brain-computer interface technology to human-machine systems in general. J. Neural Eng. 2011, 8, 025005.
63. Roy, Y.; Banville, H.; Albuquerque, I.; Gramfort, A.; Falk, T.H.; Faubert, J. Deep learning-based electroencephalography analysis: A systematic review. J. Neural Eng. 2019, 16, 051001.
64. Chai, R.; Ling, S.H.; San, P.P.; Naik, G.R.; Nguyen, T.N.; Tran, Y.; Craig, A.; Nguyen, H.T. Improving EEG-based driver fatigue classification using sparse-deep belief networks. Front. Neurosci. 2017, 11, 103.
65. Ma, Y.; Chen, B.; Li, R.; Wang, C.; Wang, J.; She, Q.; Luo, Z.; Zhang, Y. Driving fatigue detection from EEG using a modified PCANet method. Comput. Intell. Neurosci. 2019, 2019, 867–876.
66. Wiegmann, D.A.; Shappell, S.A. A Human Error Approach to Aviation Accident Analysis: The Human Factors Analysis and Classification System; Ashgate: Aldershot, UK, 2003; pp. 45–71.
67. Yen, J.-R.; Hsu, C.-C.; Yang, H.; Ho, H. An investigation of fatigue issues on different flight operations. J. Air Transp. Manag. 2009, 15, 236–240.
68. Regan, M.A.; Hallett, C.; Gordon, C.P. Driver distraction and driver inattention: Definition, relationship and taxonomy. Accid. Anal. Prev. 2011, 43, 1771–1781.

| Input Size | Block | Layer | Parameter | Output Size |
|---|---|---|---|---|
| - | - | Input | - | 30 × 100 |
| 30 × 100 | Convolutional block I | Convolution | Layer number: 2; Filter size: 1 × 5; Feature map: 32; Stride size: 1 × 1 | 30 × 32 × 92 |
| | | BatchNorm | - | |
| 30 × 32 × 92 | Convolutional block II | Convolution | Layer number: 2; Filter size: 1 × 5; Feature map: 64; Stride size: 1 × 1 | 30 × 64 × 84 |
| | | BatchNorm | - | |
| 30 × 64 × 84 | Convolutional block III | Convolution | Layer number: 2; Filter size: 1 × 5; Feature map: 128; Stride size: 1 × 1 | 30 × 128 × 76 |
| | | BatchNorm | - | |
| 30 × 128 × 76 | Convolutional block IV | Convolution | Layer number: 3; Filter size: 5 × 1; Feature map: 128; Stride size: 1 × 1 | 18 × 128 × 76 |
| 18 × 128 × 76 | | Maxpool | Filter size: 2 × 1; Stride size: 2 × 1 | 9 × 128 × 76 |
| | | BatchNorm | - | |
| 9 × 128 × 76 | Convolutional block V | Convolution | Layer number: 3; Filter size: 1 × 3; Feature map: 256; Stride size: 1 × 1 | 3 × 256 × 76 |
| 3 × 256 × 76 | | Avgpool | Filter size: 3 × 1 | 1 × 256 × 76 |
| | | BatchNorm | - | |
| | | Activation (ELU) | - | |
| | | Dropout (0.5) | - | |
| 1 × 256 × 76 | Bi-LSTM block | Bi-LSTM | Hidden units: 256 | 512 × 76 |
| 512 × 76 | | Bi-LSTM | Hidden units: 256 | 512 × 76 |
| 512 × 76 | | Bi-LSTM | Hidden units: 128 | 256 × 76 |
| 256 × 76 | | Bi-LSTM | Hidden units: 128 | 256 × 1 |
| | | Dropout (0.5) | - | |
| 256 × 1 | Classification | Fully connected | Hidden units: 128 | 128 × 1 |
| 128 × 1 | | Fully connected | Hidden units: 64 | 64 × 1 |
| 64 × 1 | | Fully connected | Hidden units: 5 | 5 × 1 |
| | | Softmax | - | |
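The output sizes in the layer table follow from plain size arithmetic, which makes them easy to check. The sketch below is a hypothetical helper, not the authors' implementation. It assumes unpadded ("valid") convolutions and pooling. The table lists a 1 × 3 filter for block V, yet that block shrinks the electrode axis from 9 to 3 while the time axis stays at 76, so the sketch assumes a 3 × 1 (spatial) kernel there. The names `conv_out` and `trace_dstcln` are illustrative.

```python
# Hypothetical shape tracer for the DSTCLN layer table (illustrative only).
# ASSUMPTIONS: no padding, stride 1 unless stated, and a 3 x 1 kernel in block V.


def conv_out(length, kernel, stride=1):
    """Output length of an unpadded ("valid") convolution or pooling window."""
    return (length - kernel) // stride + 1


def trace_dstcln(channels=30, samples=100, n_classes=5):
    """Return (stage, shape) pairs; 3-D shapes are (electrodes, feature maps, time)."""
    stages = []
    h, w = channels, samples
    # Blocks I-III: two temporal 1 x 5 convolutions each, so time shrinks by 8 per block.
    for name, maps in (("I", 32), ("II", 64), ("III", 128)):
        for _ in range(2):
            w = conv_out(w, 5)
        stages.append((f"Conv block {name}", (h, maps, w)))
    # Block IV: three spatial 5 x 1 convolutions, then 2 x 1 max pooling with stride 2.
    for _ in range(3):
        h = conv_out(h, 5)
    stages.append(("Conv block IV", (h, 128, w)))
    h = conv_out(h, 2, stride=2)
    stages.append(("Maxpool", (h, 128, w)))
    # Block V: three convolutions along the electrode axis (3 x 1 kernel ASSUMED).
    for _ in range(3):
        h = conv_out(h, 3)
    stages.append(("Conv block V", (h, 256, w)))
    h = conv_out(h, 3)  # 3 x 1 average pooling collapses the electrode axis to 1
    stages.append(("Avgpool", (h, 256, w)))
    # Bi-LSTM block: forward and backward states are concatenated (2 x hidden units);
    # only the last layer is reduced to a single output step.
    lstm_layers = ((256, False), (256, False), (128, False), (128, True))
    for i, (hidden, last_step_only) in enumerate(lstm_layers, start=1):
        stages.append((f"Bi-LSTM {i}", (2 * hidden, 1 if last_step_only else w)))
    # Fully connected classifier; the softmax does not change the shape.
    for units in (128, 64, n_classes):
        stages.append((f"FC {units}", (units, 1)))
    return stages


if __name__ == "__main__":
    for stage, shape in trace_dstcln():
        print(f"{stage:14s} {' x '.join(map(str, shape))}")
```

Running it prints one line per stage. Under the stated assumptions the sizes agree with the table, from 30 × 32 × 92 after block I down to 5 × 1 at the classifier output.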

| Task | 1-Fold | 2-Fold | 3-Fold | 4-Fold | Classification Accuracy (Mean) | Std |
|---|---|---|---|---|---|---|
| 2-class (Alert state / Drowsy state) | 0.86 | 0.87 | 0.88 | 0.87 | 0.87 | ±0.01 |
| 5-class (VA / FA / NAS / SNEA / VS) | 0.67 | 0.69 | 0.70 | 0.71 | 0.69 | ±0.02 |
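The summary columns can be reproduced from the per-fold values. This section does not say which estimator the Std column uses, so the sketch below assumes the sample standard deviation across the four folds. Under that assumption both rows round to the reported values; the population estimator (`statistics.pstdev`) would instead give 0.01 for the 5-class row.

```python
import statistics

# Per-fold accuracies copied from the 4-fold cross-validation table.
FOLD_ACCURACY = {
    "2-class": [0.86, 0.87, 0.88, 0.87],
    "5-class": [0.67, 0.69, 0.70, 0.71],
}


def summarize(folds):
    """Return (mean, sample standard deviation) of per-fold accuracies."""
    return statistics.mean(folds), statistics.stdev(folds)


if __name__ == "__main__":
    for task, folds in FOLD_ACCURACY.items():
        mean, std = summarize(folds)
        print(f"{task}: {mean:.2f} ± {std:.2f}")
```

It prints `0.87 ± 0.01` for the 2-class task and `0.69 ± 0.02` for the 5-class task, matching the table.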

| Methods | Accuracy (2-Class: Alert/Drowsy) | Std (2-Class) | Sensitivity (2-Class) | Specificity (2-Class) | Accuracy (5-Class: Drowsiness Levels) | Std (5-Class) |
|---|---|---|---|---|---|---|
| PSD-SVM [57] | 0.64 | 0.03 | 0.77 | 0.50 | 0.31 | 0.04 |
| CCA-SVM [46] | 0.78 | 0.02 | 0.73 | 0.84 | 0.47 | 0.05 |
| CCNN [58] | 0.52 | 0.01 | 0.68 | 0.34 | 0.33 | 0.03 |
| ESTCNN [23] | 0.78 | 0.01 | 0.73 | 0.85 | 0.56 | 0.01 |
| LSTM-D [55] | 0.74 | 0.01 | 0.55 | 0.92 | 0.45 | 0.02 |
| Proposed DSTCLN | 0.87 | 0.01 | 0.86 | 0.88 | 0.69 | 0.02 |
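Sensitivity and specificity are reported only for the 2-class task, and this section does not state which class is treated as positive. The sketch below assumes drowsy is the positive class, so sensitivity is the fraction of drowsy epochs detected and specificity is the fraction of alert epochs correctly labeled alert. The function name `binary_metrics` and the label strings are illustrative.

```python
from collections import Counter


def binary_metrics(y_true, y_pred, positive="drowsy"):
    """Accuracy, sensitivity and specificity for two equal-length label sequences.

    ASSUMPTION: `positive` (default "drowsy") is the class counted as positive.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("label sequences must have the same length")
    # Count (is_true_positive_class, is_predicted_positive_class) pairs.
    counts = Counter((t == positive, p == positive) for t, p in zip(y_true, y_pred))
    tp, fn = counts[(True, True)], counts[(True, False)]
    tn, fp = counts[(False, False)], counts[(False, True)]
    accuracy = (tp + tn) / len(y_true)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return accuracy, sensitivity, specificity


if __name__ == "__main__":
    truth = ["drowsy"] * 4 + ["alert"] * 4
    preds = ["drowsy", "drowsy", "drowsy", "alert", "drowsy", "drowsy", "alert", "alert"]
    print(binary_metrics(truth, preds))  # (0.625, 0.75, 0.5)
```

When both classes contribute the same number of test epochs, accuracy equals the mean of sensitivity and specificity. For the proposed DSTCLN, (0.86 + 0.88) / 2 = 0.87, which agrees with its reported 2-class accuracy.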

| Task | State | Delta (1–4 Hz) | Theta (4–8 Hz) | Alpha (8–13 Hz) | Beta (13–30 Hz) | Gamma (30–50 Hz) |
|---|---|---|---|---|---|---|
| 2-class | Alert | 1.99 Hz | 5.98 Hz | 9.86 Hz | 20.74 Hz | 36.96 Hz |
| | Drowsy | 1.98 Hz | 6.22 Hz | 9.68 Hz | 19.87 Hz | 36.77 Hz |
| | Difference (Alert − Drowsy) | 0.01 Hz | −0.24 Hz | 0.18 Hz | 0.87 Hz | 0.19 Hz |
| 5-class | VA | 2.19 Hz | 5.82 Hz | 9.92 Hz | 20.68 Hz | 36.59 Hz |
| | FA | 2.22 Hz | 5.92 Hz | 10.01 Hz | 20.82 Hz | 37.12 Hz |
| | NAS | 2.15 Hz | 6.05 Hz | 9.84 Hz | 20.80 Hz | 37.13 Hz |
| | SNEA | 2.04 Hz | 6.17 Hz | 9.69 Hz | 20.21 Hz | 36.92 Hz |
| | VS | 2.24 Hz | 6.29 Hz | 9.70 Hz | 19.38 Hz | 36.00 Hz |
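Every value in this table lies inside its band, which suggests a per-band mean (spectral centroid) frequency, although this section does not define the quantity. Assuming that definition, the band mean frequency is Σ f·P(f) / Σ P(f) over the spectral bins of one band. The minimal pure-Python sketch below uses a direct DFT periodogram for clarity; a Welch estimate would be the more usual choice on real EEG. The half-open band edges, the function names and the 100 Hz sampling rate in the example are all assumptions, not values taken from the study.

```python
import cmath
import math

# Frequency bands as listed in the table (Hz); half-open intervals are an ASSUMPTION.
BANDS = {
    "delta": (1, 4),
    "theta": (4, 8),
    "alpha": (8, 13),
    "beta": (13, 30),
    "gamma": (30, 50),
}


def periodogram(signal, fs):
    """One-sided power spectrum via a direct DFT (O(N^2); fine for short epochs).

    Power is left unscaled because the scale cancels in the mean-frequency ratio.
    """
    n = len(signal)
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(signal))
        freqs.append(k * fs / n)
        power.append(abs(coeff) ** 2)
    return freqs, power


def band_mean_frequency(signal, fs, low, high):
    """Power-weighted mean frequency of the bins with low <= f < high."""
    freqs, power = periodogram(signal, fs)
    in_band = [(f, p) for f, p in zip(freqs, power) if low <= f < high]
    total = sum(p for _, p in in_band)
    if total == 0:
        raise ValueError("no spectral power in the requested band")
    return sum(f * p for f, p in in_band) / total


if __name__ == "__main__":
    fs = 100  # hypothetical sampling rate in Hz, not taken from the study
    # One second of a synthetic signal: 10 Hz (amplitude 1) plus 11 Hz (amplitude 0.5).
    x = [math.sin(2 * math.pi * 10 * i / fs) + 0.5 * math.sin(2 * math.pi * 11 * i / fs)
         for i in range(fs)]
    print(round(band_mean_frequency(x, fs, *BANDS["alpha"]), 3))  # 10.2
```

In the example the 10 Hz component carries four times the power of the 11 Hz one, so the alpha-band mean is pulled toward 10 Hz: (10 × 4 + 11 × 1) / 5 = 10.2. With real recordings the per-epoch values would still have to be aggregated over channels and epochs of each state; how the study did this is not described in this section.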

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Jeong, J.-H.; Yu, B.-W.; Lee, D.-H.; Lee, S.-W. Classification of Drowsiness Levels Based on a Deep Spatio-Temporal Convolutional Bidirectional LSTM Network Using Electroencephalography Signals. Brain Sci. 2019, 9, 348. https://doi.org/10.3390/brainsci9120348
