Attention Modulates Electrophysiological Responses to Simultaneous Music and Language Syntax Processing

Abstract

1. Introduction

2. Materials and Methods

2.1. Subjects

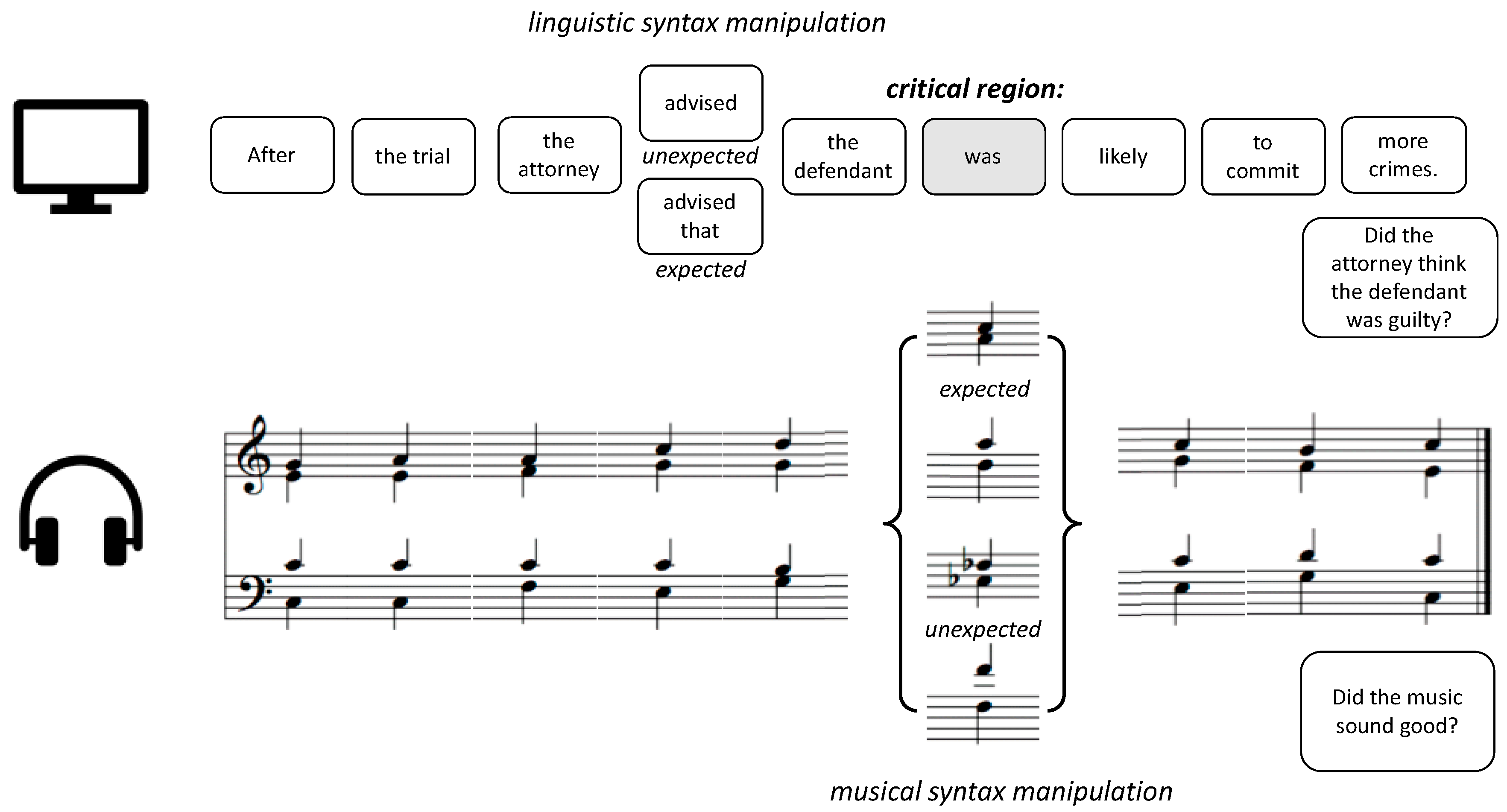

2.2. Stimuli

2.3. Procedure

2.4. Behavioral Data Analysis

2.5. EEG Preprocessing

2.6. Event-Related Potential Analysis

3. Results

3.1. Behavioral Results

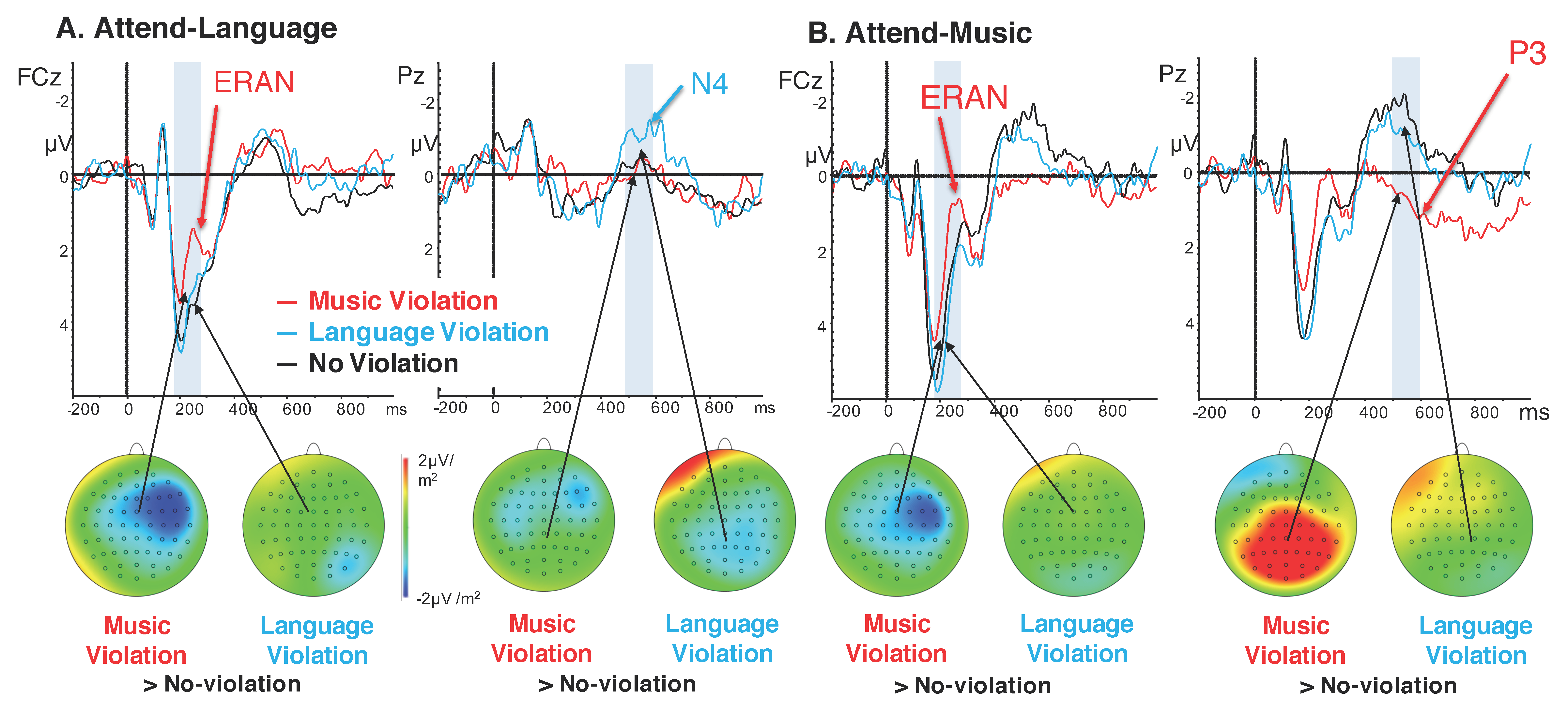

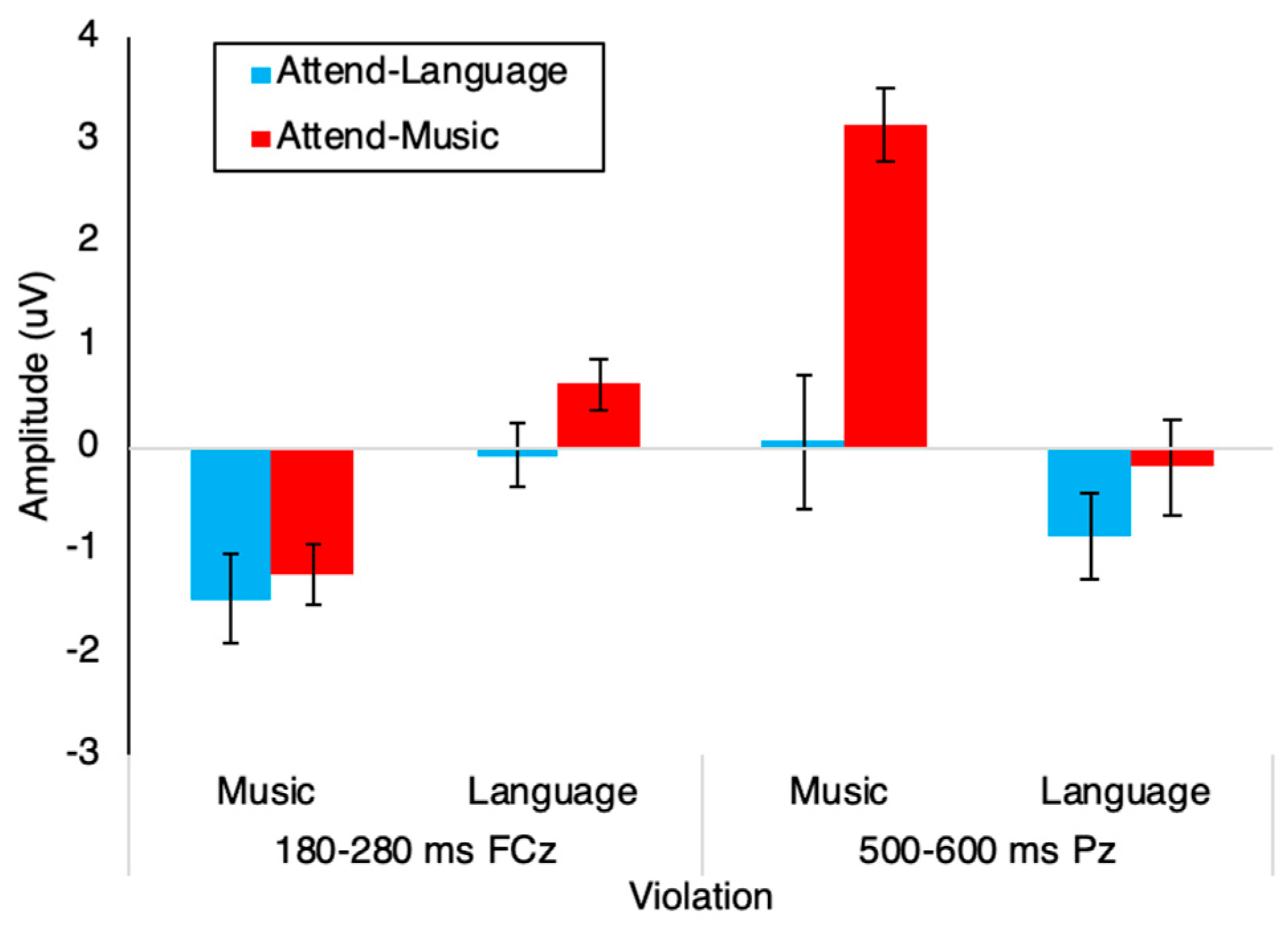

3.2. Event-Related Potentials

4. Discussion

5. Limitations

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Variable | Attend-Language (N = 16) | Attend-Music (N = 19) |
|---|---|---|
| Age, years, M (SD) | 19.625 (2.029) | 19.389 (2.033) |
| Male, n | 11/16 | 8/19 |
| Music training, years, M (SD) | 2.233 (3.422) | 3.105 (4.012) |
| Musically trained, n | 9/16 | 11/18 |
| Full-scale IQ (estimated from the Shipley-Hartford scale), M (SD) | 100 (10) | 101 (7) |
| MBEA (Montreal Battery of Evaluation of Amusia), M (SD) | 23.375 (3.828) | 25.11 (2.685) |
| Pitch discrimination, ΔHz/500 Hz, M (SD) | 12.469 (11.895) | 11.087 (7.174) |
| Normal hearing, % | 100% | 100% |
| English as first language, n | 15/16 | 12/18 |

Tests of Within-Subjects Contrasts

| Source | Time Window | df | F | p | Partial η² |
|---|---|---|---|---|---|
| Violation | 180–280 ms | 1 | 33.198 | < 0.001 | 0.501 |
| | 500–600 ms | 1 | 31.317 | < 0.001 | 0.487 |
| Violation × Attend | 180–280 ms | 1 | 0.64 | 0.43 | 0.019 |
| | 500–600 ms | 1 | 9.951 | 0.003 | 0.232 |

Tests of Between-Subjects Effects

| Source | Time Window | df | F | p | Partial η² |
|---|---|---|---|---|---|
| Attend | 180–280 ms | 1 | 1.381 | 0.248 | 0.040 |
| | 500–600 ms | 1 | 9.763 | 0.004 | 0.228 |
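The partial η² column can be checked against the F ratios with the standard identity η²p = (F × df_effect) / (F × df_effect + df_error). The tables report only the numerator df, so the sketch below assumes an error df of 33 for the all-participants analysis (35 participants in two attention groups, minus 2); that value is an inference, not a figure stated by the authors. The function name `partial_eta_squared` and the `reported` dictionary are illustrative choices, not part of any published analysis code.

```python
# Minimal sketch: recompute partial eta squared from the reported F ratios.
# ASSUMPTION: error df = 33 is inferred, not reported in the tables:
# 35 participants (16 Attend-Language + 19 Attend-Music) minus 2 groups.


def partial_eta_squared(f_stat: float, df_effect: int, df_error: int) -> float:
    """Return partial eta squared = F*df_effect / (F*df_effect + df_error)."""
    return (f_stat * df_effect) / (f_stat * df_effect + df_error)


DF_EFFECT = 1           # every effect in the tables has 1 numerator df
DF_ERROR = 16 + 19 - 2  # assumed error df for the all-participants analysis

# (source, time window) -> (F, reported partial eta squared), copied from the tables
reported = {
    ("Violation", "180-280 ms"): (33.198, 0.501),
    ("Violation", "500-600 ms"): (31.317, 0.487),
    ("Violation x Attend", "180-280 ms"): (0.64, 0.019),
    ("Violation x Attend", "500-600 ms"): (9.951, 0.232),
    ("Attend", "180-280 ms"): (1.381, 0.040),
    ("Attend", "500-600 ms"): (9.763, 0.228),
}

for (source, window), (f_stat, eta_reported) in reported.items():
    eta = partial_eta_squared(f_stat, DF_EFFECT, DF_ERROR)
    print(f"{source:<18} {window:<10} F = {f_stat:6.3f}  "
          f"eta_p^2 = {eta:.3f} (reported {eta_reported:.3f})")
```

Under this assumption every recomputed value rounds to the reported one at three decimals, which is consistent with (though it does not prove) an error df of 33.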

First-Language English Speakers Only

Tests of Within-Subjects Contrasts

| Source | Time Window | df | F | p | Partial η² |
|---|---|---|---|---|---|
| Violation | 180–280 ms | 1 | 14.216 | < 0.001 | 0.428 |
| | 500–600 ms | 1 | 30.722 | < 0.001 | 0.618 |
| Violation × Attend | 180–280 ms | 1 | 0.195 | 0.664 | 0.01 |
| | 500–600 ms | 1 | 11.075 | 0.004 | 0.368 |

Tests of Between-Subjects Effects

| Source | Time Window | df | F | p | Partial η² |
|---|---|---|---|---|---|
| Attend | 180–280 ms | 1 | 0.971 | 0.337 | 0.049 |
| | 500–600 ms | 1 | 13.99 | < 0.001 | 0.424 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Lee, D.J.; Jung, H.; Loui, P. Attention Modulates Electrophysiological Responses to Simultaneous Music and Language Syntax Processing. Brain Sci. 2019, 9, 305. https://doi.org/10.3390/brainsci9110305
